Abstract

The fifth industrial revolution (Industry 5.0) shifts the focus away from technology alone and relies more on collaboration between humans and AI-powered robots. This approach emphasizes a more human-centric perspective, enhanced resilience, optimized workplace processes, and a stronger commitment to sustainability. The humanoid robot market has experienced substantial growth, fueled by technological advancements and the increasing need for automation in sectors such as service, customer support, and education. However, challenges like high costs, complex maintenance, and societal concerns about job displacement remain. Despite these issues, the market is expected to continue expanding, supported by innovations that enhance both accessibility and performance. Therefore, this article proposes the design and implementation of a low-cost humanoid robot for home-assistant applications that is remotely controlled via a mobile application. The humanoid robot combines an advanced mechanical structure, high-performance actuators, and an array of sensors that enable it to execute a wide range of tasks with human-like dexterity and mobility. Sophisticated control algorithms and a user-friendly Graphical User Interface (GUI) provide precise and stable robot operation and control. Through in-house developed code, our research contributes to the growing field of humanoid robotics and underscores the significance of advanced control systems in fully harnessing the capabilities of these human-like machines. The implications of our findings extend to the future development and deployment of humanoid robots across various industries and societal contexts, making this an ideal area for students and researchers to explore innovative solutions.

1. Introduction

The next phase of industrial evolution (Industry 5.0) emphasizes a human-centric approach based on the partnership between humans and advanced technologies, such as AI and robotics, to enhance creativity, productivity, and decision-making. This new phase aims to balance technological innovation and human well-being in different applications [1,2,3]. Humanoid robots have therefore recently gained great importance in a variety of areas and have the potential to revolutionize society. First, humanoid robots can be invaluable in tasks that are dangerous, challenging, or physically demanding. They can provide aid in healthcare settings, helping with patient care, monitoring, and companionship. In industries like manufacturing, humanoid robots can collaborate with humans, performing repetitive or strenuous tasks and enhancing productivity. Moreover, in emergency situations, humanoid robots can assist in search and rescue missions, accessing hazardous areas and potentially saving lives. Second, humanoid robots excel in enhancing human–robot interaction. With their human-like appearance and natural movements, they can facilitate seamless communication and collaboration with humans [4,5,6,7,8,9]. This aspect is particularly valuable in customer service roles, where humanoid robots can greet and assist people in a more engaging and interactive manner. In educational settings, they can act as tutors, engaging students and providing personalized instruction. By improving human–robot interaction, these robots have the potential to create positive and meaningful experiences for individuals. Third, humanoid robots contribute to research and development. They serve as physical embodiments for studying various aspects of robotics, AI, and cognitive science. Researchers can leverage humanoid robots to gain insights into human locomotion, perception, and cognition, leading to advancements in these fields. 
Additionally, humanoid robots act as testbeds for refining algorithms and control systems that can be applied to other robotic systems. By pushing the boundaries of technology, humanoid robots pave the way for innovations and progress in the broader field of robotics [10,11,12,13,14,15,16,17].

Several factors make humanoid robots expensive, including their complex design, advanced technology, and research and development costs. In general, the price of a humanoid robot ranges from tens of thousands of dollars to a few hundred thousand dollars, depending on its capabilities and intended application. For example, robots used for research or for performing complex tasks involve sophisticated AI, sensors, and actuators, which inflates the price considerably. The maintenance and support these robots require also add to the cost, making them significant investments for businesses and research establishments. As technology advances, the cost should go down; for now, humanoid robots remain very expensive [18,19,20,21,22,23,24].

1.1. Literature Review

Several studies have characterized existing humanoid robots, and the development of humanoid robots is on the agenda of many of them [25,26,27,28,29,30]. This development generally involves making robots capable of walking in different environments, so each robot has features that vary according to its requirements. Walking in all directions is one step toward making humanoid robots autonomous. Motion, robot vision, and behavior control are among the most important and difficult software development efforts. In the field of modeling and simulation of robots, kinematics is a thoroughly researched subject. Iqbal et al. [31] proposed a geometric model to solve for the unknown joint angles required to determine the independent positions of the robotic system. Potkonjak et al. [32] developed a strategy to analyze the movement of the upper arm limb, while Wang et al. presented the full-body kinematics of a six-legged symmetrical radial robot. The works in [33,34] proposed an inverse kinematics (IK) model to solve for all the joint variables of a serial arm manipulator of any type. This model is based on the forward kinematic solution. Biomechanics and robotics researchers have made significant strides in studying various aspects of human and humanoid robot motion. They have explored topics such as bipedal gait, jumping, running, and even somersaults on trampolines. A comprehensive overview of advanced subjects in humanoid dynamics can be found in [35,36]. Notably, separate models have been developed for specific problems like gymnastic exercises, soccer, and tennis. However, creating individual models for each distinct problem poses a significant challenge when attempting to generalize findings [37,38,39,40]. To address this, researchers derive a general model that encompasses all the specific motions as special cases. 
This approach heavily relies on contact analysis, which is guided by the theory elucidated in [41]. By adopting this deductive approach, researchers aim to establish a unified framework that can account for a wide range of motions and facilitate a deeper understanding of human and humanoid robot movement [42,43,44]. This classification serves as a valuable tool for researchers in the field of humanoid robots, as it offers a comprehensive overview of the characteristics and technologies associated with existing humanoid robots. The primary purpose of this classification is to expedite and improve understanding by providing a broad comparative perspective on the various humanoid robots that have been developed. By categorizing these robots, including those with different compositions, researchers gain insights into the essential aspects of humanoid robot development [45]. Moreover, this classification facilitates an analysis of the development aspects of humanoid robots. By examining the construction goals of each available humanoid robot based on the defined criteria, researchers can identify commonalities and differences among them. This analysis also highlights the shortcomings and limitations of existing humanoid robots, which can guide future research and development efforts. To construct this classification, the relevant information is extracted from the existing literature, ensuring that the roadmap of humanoid robots is grounded in the knowledge and findings already documented. By studying the literature, researchers can identify trends, advancements, and challenges in the field, which aids in shaping the general roadmap for the future development of humanoid robots. This classification and analysis offer researchers a comprehensive understanding of existing humanoid robots by examining their construction goals, characteristics, and technological aspects. 
It provides a comparative perspective, identifies shortcomings, and contributes to the creation of a general roadmap for the development of humanoid robots [46,47,48,49].

The references collectively highlight significant advancements in humanoid robotics and motion planning, yet several gaps persist. Many studies focus primarily on single-arm movements and basic kinematics, neglecting the complexities of dual-arm coordination and sophisticated manipulation tasks. Additionally, while there is a strong emphasis on biologically inspired techniques for generating human-like motions, these approaches often fail to fully capture the intricacies of human behavior and neurological features. Furthermore, the application of robotic manipulators in specific fields, such as sports training, remains limited, with few innovations addressing the dynamic challenges encountered in real-world scenarios. Table 1 shows the summary of the literature review.

Table 1.

The literature review summary.

1.2. Contributions

Humanoid robots are often considered expensive due to several factors, including their complex design, advanced technology, and extensive research and development costs. Typically, the price of humanoid robots can range from tens of thousands to several hundred thousand dollars, depending on their capabilities and intended applications. For instance, robots designed for research or intricate tasks may incorporate sophisticated artificial intelligence, sensors, and actuators, driving up the cost significantly. Additionally, the ongoing maintenance and support needed for these robots can add to their overall expense, making them a significant investment for businesses and research institutions.

This article proposes a low-cost, remotely controlled humanoid robot for home-assistant applications, aligning with the human-centric focus of Industry 5.0. It features advanced mechanics, high-performance actuators, and sensors for human-like dexterity. A user-friendly Graphical User Interface (GUI) and precise control algorithms enhance usability and stability. The research addresses societal concerns about cost and job displacement, facilitating broader adoption of humanoid robots across industries. Ultimately, it emphasizes collaboration between humans and AI-powered robots, aiming to improve workplace processes and promote sustainability. The main contributions of the proposed design are as follows:

- The proposed low-cost humanoid robot serves as an accessible entry point for students and researchers interested in robotics and AI, encouraging exploration in this innovative field.

- Design and implementation of a humanoid robot for remotely controlling home appliances, providing practical applications that can be explored in academic projects.

- Development of a mobile application with a friendly Graphical User Interface (GUI), allowing students to engage with user experience design and interface development.

- Utilization of polylactic acid (PLA) for mechanical parts, demonstrating a sustainable and low-cost approach to robotics that can inspire future research and prototypes.

1.3. Paper Organization

The rest of the paper is organized as follows: The mathematical model is illustrated in Section 2. The design process is illustrated in Section 3. The proposed design of the robot in terms of the mechanical, electrical, and software aspects is presented in Section 4. The results and discussion are presented in Section 5. Finally, the conclusion and future work are presented in Section 6.

2. Mathematical Model for Robot Kinematics

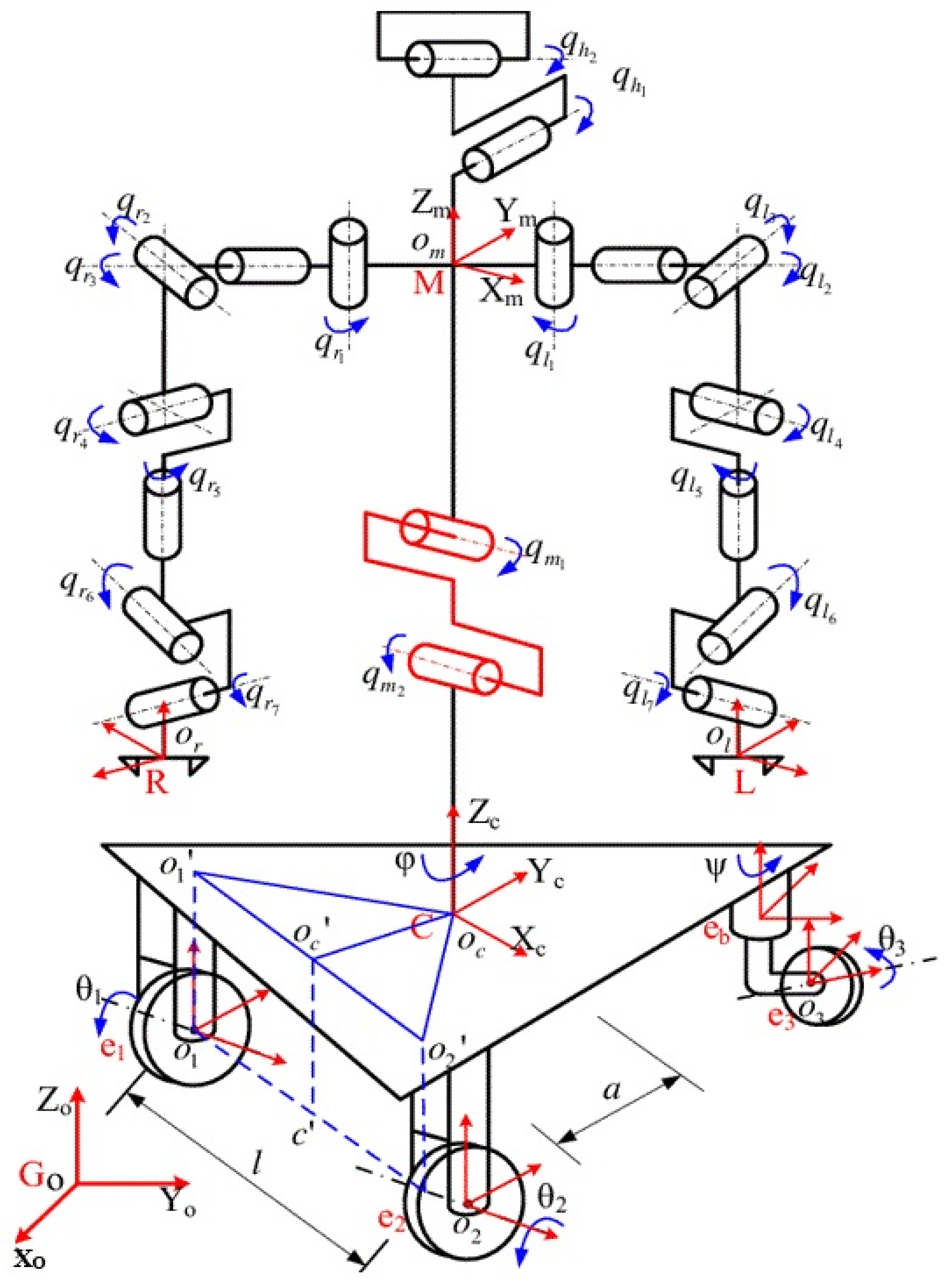

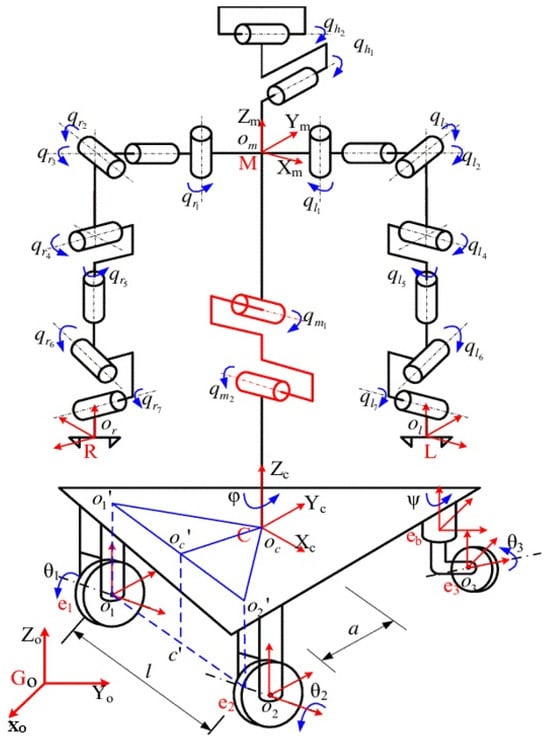

A robot kinematic chain is an articulated manipulator consisting of links connected by rotary joints, which control the relative angular positioning of the links. The number of joints, or degrees of freedom (DoF), determines the flexibility of the robot’s motion. Robot kinematics applies geometry to study kinematic chains with multiple DoF, focusing on transforming movements between joint space (where the chain is defined) and Cartesian space (where the robot moves). This transformation is used for movement planning, execution, and calculating forces and torques for the robot’s actuators [50,51,52]. The humanoid robot’s upper-body kinematics is modeled joint by joint, as illustrated in Figure 1. The head has 2 DoF (pan and tilt), enabling gaze control. The shoulder features 2 DoF (flexion/extension and abduction/adduction), allowing arm elevation and lateral movement. The elbow has 1 DoF (flexion/extension and pronation/supination), providing forearm rotation and bending. Finally, the hand is designed with 1 DoF (wrist flexion/extension and radial/ulnar deviation), ensuring precise orientation for grasping. This configuration ensures naturalistic motion while maintaining computational tractability for inverse kinematics and dynamic control.

Figure 1.

The kinematics model of a humanoid robot.

2.1. Forward Kinematic Model for Arm

The joint space provides limited insight into the position and orientation of the kinematic chain’s end effector. Forward kinematics establishes a mapping that translates the joint space into the three-dimensional Cartesian space. It is a domain-independent problem that can be solved for both simple and complex kinematic chains, resulting in a closed-form, analytical solution. Given a kinematic chain with m joints and a set of joint values, forward kinematics yields the position and orientation of the end effector in three-dimensional space [52].
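
As a minimal illustration of this mapping, the sketch below computes forward kinematics for a planar serial chain, which is a simplification of the three-dimensional arm and not the implementation used on the robot. The names `PlanarPose` and `forwardKinematics`, and any link lengths passed in, are hypothetical and chosen only for this example.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Pose of the end effector in the plane: position (x, y) and heading phi (rad).
struct PlanarPose {
    double x;
    double y;
    double phi;
};

// Forward kinematics of a planar serial chain with m revolute joints.
// linkLengths[i] is the length of link i (hypothetical values; x and y are
// returned in the same unit). jointAngles[i] is the angle of joint i measured
// relative to the previous link, in radians.
PlanarPose forwardKinematics(const std::vector<double>& linkLengths,
                             const std::vector<double>& jointAngles) {
    assert(linkLengths.size() == jointAngles.size());
    PlanarPose pose{0.0, 0.0, 0.0};
    for (std::size_t i = 0; i < linkLengths.size(); ++i) {
        pose.phi += jointAngles[i];                     // accumulate joint rotation
        pose.x += linkLengths[i] * std::cos(pose.phi);  // advance along link i
        pose.y += linkLengths[i] * std::sin(pose.phi);
    }
    return pose;
}
```

Calling `forwardKinematics({10.0, 10.0}, {0.0, 0.0})` describes a fully stretched two-link arm lying along the x-axis. Every set of joint values produces exactly one pose, which is why the forward problem always has a closed-form answer.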

2.2. Inverse Kinematic Model for Arm

Robot manipulators usually make the end effector reach a target point or follow a trajectory in three-dimensional space by specifying appropriate values for the joints of the kinematic chain. Inverse kinematics defines the relation between a target position and orientation in three-dimensional space and the joint values of a kinematic chain with m joints. Inverse kinematics is a domain-dependent problem, with each kinematic chain having its own solution. The solution can be either an analytical, closed-form equation or a numerical, iterative approximation (e.g., using the Jacobian method). As the number of degrees of freedom (DoF) increases, a point in three-dimensional space may correspond to multiple solutions in joint space, making inverse kinematics a relation rather than a one-to-one mapping [18].
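
The multiplicity of solutions can be seen even in the simplest case. The sketch below gives the standard analytical inverse kinematics of a planar two-link arm, used here as an illustrative stand-in and not as the robot's own solver. The names `JointSolution` and `inverseKinematics` and the link lengths `l1` and `l2` are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// One joint-space solution for a planar two-link arm (angles in radians).
struct JointSolution {
    double shoulder;
    double elbow;
};

// Analytical inverse kinematics for a planar two-link arm with link lengths
// l1 and l2 (hypothetical values). Returns every joint configuration that
// places the end effector at (x, y): none if the target is out of reach,
// one if the arm is fully stretched or folded, otherwise two.
std::vector<JointSolution> inverseKinematics(double l1, double l2,
                                             double x, double y) {
    assert(l1 > 0.0 && l2 > 0.0);
    std::vector<JointSolution> solutions;
    // Law of cosines gives the cosine of the elbow angle.
    const double c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2);
    if (c2 < -1.0 || c2 > 1.0) {
        return solutions;  // target is outside the reachable workspace
    }
    const double s2 = std::sqrt(1.0 - c2 * c2);
    for (double sign : {1.0, -1.0}) {  // the two mirrored elbow configurations
        const double elbow = std::atan2(sign * s2, c2);
        const double shoulder =
            std::atan2(y, x) -
            std::atan2(l2 * std::sin(elbow), l1 + l2 * std::cos(elbow));
        solutions.push_back({shoulder, elbow});
        if (s2 == 0.0) {
            break;  // both configurations coincide
        }
    }
    return solutions;
}
```

For a reachable target that is not on the workspace boundary, the function returns two mirrored elbow configurations that reach the same point. This is the sense in which inverse kinematics is a relation and not a one-to-one mapping.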

2.3. Mathematical Model

Affine transformations are adopted to model transformations between coordinate systems and robot movement in space. These transformations are suitable tools for manipulating and analyzing objects in 2D and 3D spaces, with applications across a variety of fields. An affine transformation preserves points, straight lines, and planes, but it may alter distances and angles. In mathematical terms, affine transformations are a combination of linear transformations (such as rotation, scaling, and shearing) and translations (shifting points by a constant vector). The model presented in this article focuses exclusively on rotation and translation. It also considers a three-dimensional Cartesian coordinate system, so all definitions are based on this 3D space. Equation (1) shows the affine transformation matrix for the n-dimensional space.

$$
T = \begin{bmatrix} X & Y \\ 0 \,\cdots\, 0 & 1 \end{bmatrix} \tag{1}
$$

where $X$ is an $(n \times n)$ matrix, $Y$ is an $(n \times 1)$ vector, and the last row of the matrix contains a vector of zeros followed by a 1. For a given point $p = (p_x, p_y, p_z)$ in three-dimensional space, the transformation is applied by multiplying the affine transformation matrix by the column vector $v = (p_x, p_y, p_z, 1)^{\top}$, as shown in Equation (2).

$$
v' = T\,v = \begin{bmatrix} X & Y \\ 0 \;\; 0 \;\; 0 & 1 \end{bmatrix} \begin{bmatrix} p_x \\ p_y \\ p_z \\ 1 \end{bmatrix} \tag{2}
$$

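Translation in a Cartesian space is a function that moves every point by a fixed distance in a specified direction. In three-dimensional space, it can be described by a (4 × 4) matrix of the form presented in Equation (3):

$$
A = \begin{bmatrix} 1 & 0 & 0 & d_x \\ 0 & 1 & 0 & d_y \\ 0 & 0 & 1 & d_z \\ 0 & 0 & 0 & 1 \end{bmatrix} \tag{3}
$$

where $d_x$, $d_y$, and $d_z$ represent the distance of translation along the $x$, $y$, and $z$ axes, respectively. To move a point $p = (p_x, p_y, p_z)$ in three-dimensional space by distances $(d_x, d_y, d_z)$, the translation is performed by applying the transformation shown in Equation (4):

$$
p' = A \begin{bmatrix} p_x \\ p_y \\ p_z \\ 1 \end{bmatrix} = \begin{bmatrix} p_x + d_x \\ p_y + d_y \\ p_z + d_z \\ 1 \end{bmatrix} \tag{4}
$$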

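Rotation in a Cartesian space is a function that rotates vectors by a fixed angle about a specified direction. A rotation matrix $R$ in the n-dimensional space is an orthogonal matrix with determinant 1, as shown below:

$$
R^{\top} = R^{-1}, \qquad \det(R) = 1
$$

In the three-dimensional Cartesian space, there are three different rotation matrices, each of which performs a rotation by $\theta_x$, $\theta_y$, or $\theta_z$ about the $x$, $y$, or $z$ axis, respectively, assuming a right-handed coordinate system, as presented in Equation (5):

$$
R_x = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \cos\theta_x & -\sin\theta_x \\ 0 & \sin\theta_x & \cos\theta_x \end{bmatrix}, \quad
R_y = \begin{bmatrix} \cos\theta_y & 0 & \sin\theta_y \\ 0 & 1 & 0 \\ -\sin\theta_y & 0 & \cos\theta_y \end{bmatrix}, \quad
R_z = \begin{bmatrix} \cos\theta_z & -\sin\theta_z & 0 \\ \sin\theta_z & \cos\theta_z & 0 \\ 0 & 0 & 1 \end{bmatrix} \tag{5}
$$

Rotating a vector $v$ defined by the end point $p = (p_x, p_y, p_z)$ about a specific axis is performed by multiplying it by the corresponding rotation matrix. Rotating the vector first about the $x$-axis, then about the $y$-axis, and finally about the $z$-axis is performed by applying the corresponding rotation matrices in that order, $v' = R_z R_y R_x v$, so that $R_x$ acts first. The analytical form of the rotation matrix $R' = R_z R_y R_x$ is shown in Equation (6), where $c_i = \cos\theta_i$ and $s_i = \sin\theta_i$:

$$
R' = R_z R_y R_x = \begin{bmatrix}
c_y c_z & -c_x s_z + s_x s_y c_z & s_x s_z + c_x s_y c_z \\
c_y s_z & c_x c_z + s_x s_y s_z & -s_x c_z + c_x s_y s_z \\
-s_y & s_x c_y & c_x c_y
\end{bmatrix} \tag{6}
$$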

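Therefore, any rotation matrix $R$ can be transformed into an affine transformation matrix by padding the last row and the last column with $(0, \cdots, 0, 1)$, as shown in Equation (7):

$$
\tilde{R} = \begin{bmatrix} R & \begin{matrix} 0 \\ \vdots \\ 0 \end{matrix} \\ 0 \,\cdots\, 0 & 1 \end{bmatrix} \tag{7}
$$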

For the purpose of kinematics, both rotation and translation matrices are used to transform points in three-dimensional space. Equation (8) represents the affine transformation matrix considered in the presented model, which combines translation and rotation: the rotation is defined by the $(3 \times 3)$ block $X$ and the translation is defined by the $(3 \times 1)$ block $Y$.

$$
T = \begin{bmatrix} X & Y \\ 0 \;\; 0 \;\; 0 & 1 \end{bmatrix} \tag{8}
$$
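
The transformations of this section can be sketched in code as follows. This is an illustrative implementation of (4 × 4) homogeneous matrices under the right-handed convention stated above, not the code running on the robot. The type aliases `Mat4` and `Vec4` and the function names are assumptions made for this example.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat4 = std::array<std::array<double, 4>, 4>;
using Vec4 = std::array<double, 4>;

// 4x4 identity matrix.
Mat4 identity() {
    Mat4 m{};  // value-initialised to zeros
    for (int i = 0; i < 4; ++i) m[i][i] = 1.0;
    return m;
}

// Matrix product a * b; when applied to a vector, b acts first.
Mat4 multiply(const Mat4& a, const Mat4& b) {
    Mat4 c{};
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k) c[i][j] += a[i][k] * b[k][j];
    return c;
}

// Applies the affine transformation t to the homogeneous column vector v.
Vec4 apply(const Mat4& t, const Vec4& v) {
    Vec4 r{};
    for (int i = 0; i < 4; ++i)
        for (int k = 0; k < 4; ++k) r[i] += t[i][k] * v[k];
    return r;
}

// Translation by (dx, dy, dz): identity rotation block, offsets in the last column.
Mat4 translation(double dx, double dy, double dz) {
    Mat4 m = identity();
    m[0][3] = dx;
    m[1][3] = dy;
    m[2][3] = dz;
    return m;
}

// Rotation by theta (rad) about axis 'x', 'y' or 'z', padded to 4x4.
Mat4 rotation(char axis, double theta) {
    assert(axis == 'x' || axis == 'y' || axis == 'z');
    const double c = std::cos(theta);
    const double s = std::sin(theta);
    Mat4 m = identity();
    if (axis == 'x') {
        m[1][1] = c; m[1][2] = -s; m[2][1] = s; m[2][2] = c;
    } else if (axis == 'y') {
        m[0][0] = c; m[0][2] = s; m[2][0] = -s; m[2][2] = c;
    } else {
        m[0][0] = c; m[0][1] = -s; m[1][0] = s; m[1][1] = c;
    }
    return m;
}
```

For example, `multiply(translation(1, 2, 3), rotation('z', theta))` builds a matrix whose upper-left (3 × 3) block is the rotation and whose last column holds the translation. Applied to a point, it rotates first and translates second.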

3. Design Process

The home-assistant humanoid robot was designed to balance cost-effectiveness with practical functionality, particularly the ability to lift a weight of 2 kg. This capability is essential for controlling various home appliances remotely, making the robot a valuable tool in modern households. To facilitate user interaction, a mobile application featuring a user-friendly graphical user interface (GUI) was developed, enabling precise command over the robot’s tasks through its installed camera. This integration ensures that users can easily manage and monitor home operations from their smartphones, enhancing the overall usability of the robot. Material selection played an important role in the robot’s design, with polylactic acid (PLA) chosen for its advantageous properties. PLA is not only cost-effective but also lightweight and strong enough to meet the functional demands of the robot. This choice of material supports the structural integrity of the robot while keeping the project within budget constraints.

By prioritizing these factors, the design enhances accessibility for a wider range of users, ensuring that the robot can efficiently perform its tasks in a domestic environment without compromising performance or durability. Table 2 shows the specifications of the robot design.

Table 2.

Robot link and joint specification.

4. The Proposed Robot Design

The head, torso, and legs are the three main structural components of any human-like robot. This method of construction offers several advantages, such as easy manufacturing, assembly, and maintenance of the components. Since each of the three sections can move independently while simulating human movements, the three-section design provides extra degrees of freedom and mobility for the robot. With more freedom to maneuver and explore, the robot can interact with its surroundings in many ways.

The electrical system powers and controls the humanoid robot. At its core is a rechargeable battery that provides energy for long operating periods. The motor control system, which incorporates advanced electronic drivers and microcontrollers, precisely governs the movements of the joints and limbs, producing smooth and well-coordinated actions. The system integrates several sensors, ranging from encoders and force sensors to inertial measurement units, which supply the feedback required for stable and precise control. The electrical system also includes a communication interface through which command data can be sent to the robot and the robot can transmit information to a central control unit or other external devices, allowing integration with other systems in the environment and with systems for remote monitoring and operation.

The software and control systems of a humanoid robot can be described as its brain. These systems process sensor information, generate motion commands, and coordinate the overall behavior of the robot. The control system is built with a modular and scalable architecture, making it possible to integrate several functionalities, including navigation, object manipulation, and human–robot interaction. Advanced algorithms operate within the system, including kinematics calculations, path planning, and dynamic balance control, to ensure that all motions appear smooth, stable, and natural. Machine learning and AI modules constitute another important part of the system: by adapting to the environment and learning from experience, they allow the robot to interact with humans more naturally and intuitively. Such a flexible and extensible control system can support further development efforts and the integration of new functionalities as the underlying technology evolves.

A highly competent and adaptable humanoid robot has been created by combining the three-section design, the sturdy electrical system, and the sophisticated software and control system. The robot can carry out a variety of tasks and engage with people in a natural and intuitive way thanks to its modular design, precise control, and flexible software. As robotics continues to advance, this humanoid robot is a major step toward creating human-like machines that can work alongside and support people in a variety of contexts, including healthcare and industrial settings.

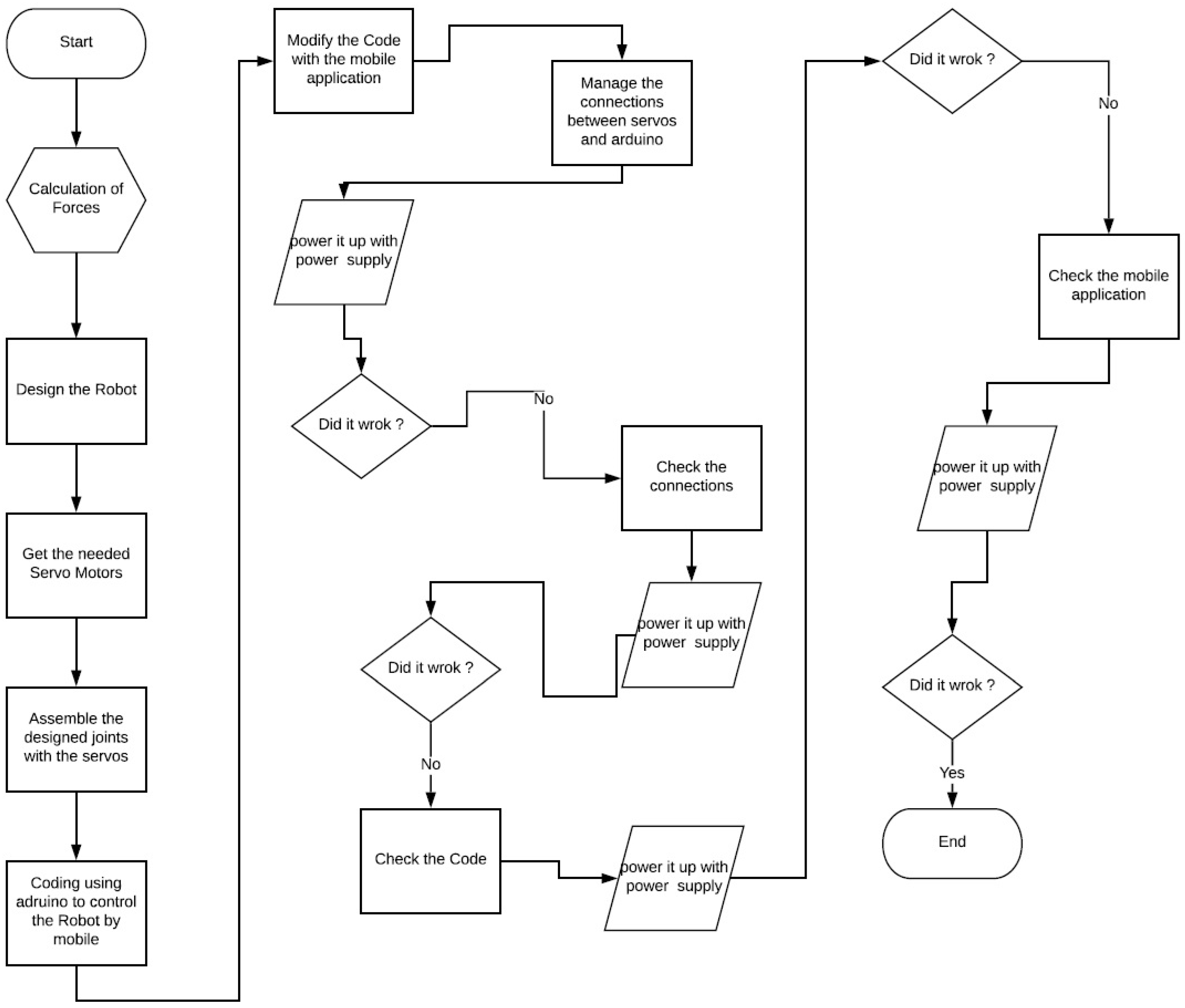

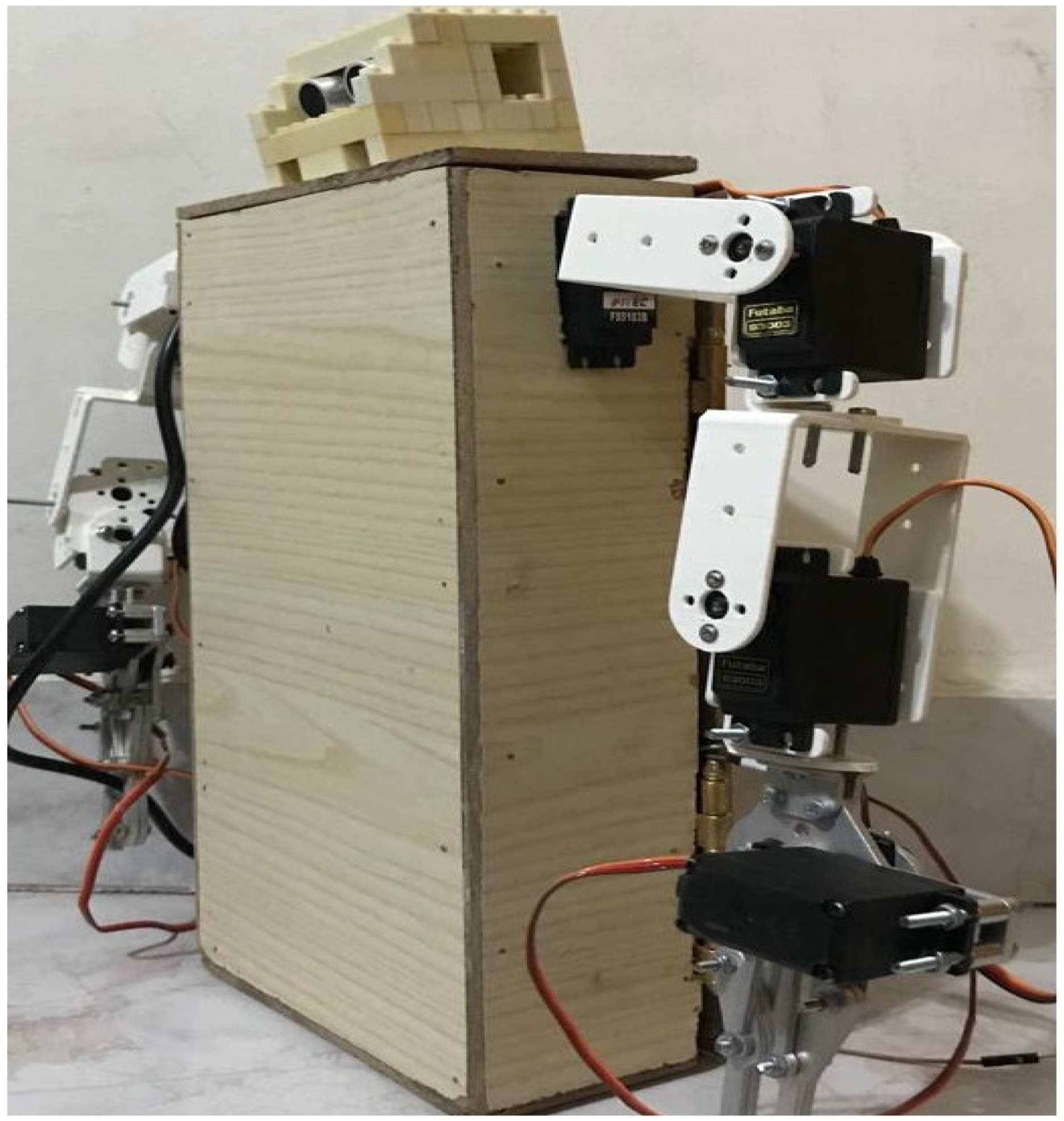

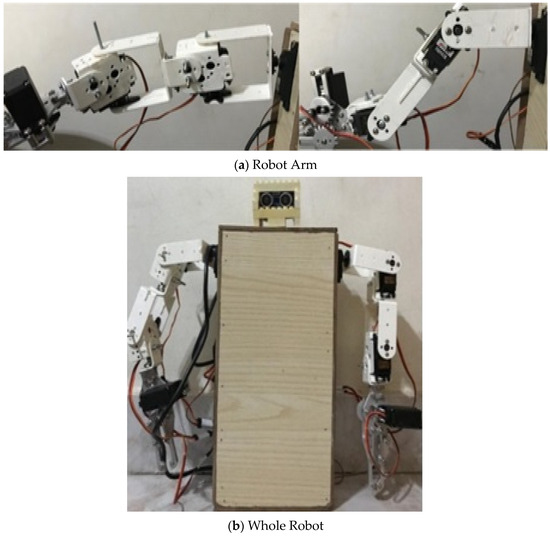

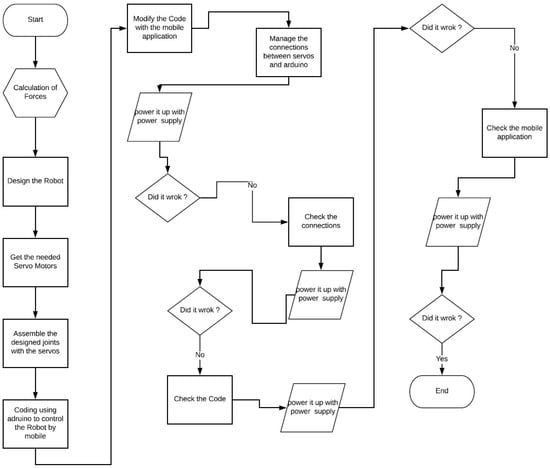

SolidWorks software (version 2021) is used to design the hinges and robotic arms. A flow chart is then made to cover each stage of the coding process and to handle any possible problems the robot might run into while operating. After that, MIT App Inventor is used to create the mobile application needed to operate the robot. In testing, the robot lifted and moved a load of two kilograms with a satisfactory outcome. Additionally, the robot’s head is equipped with ultrasonic sensors for object detection.

The proposed robot design is centered around functionality and affordability, aiming to serve as an effective home assistant. This initial prototype showcases a streamlined construction that emphasizes mechanical reliability and software integration, allowing it to perform essential tasks efficiently. By utilizing cost-effective materials and a straightforward design, we have created a platform that not only validates core functionalities but also lays the groundwork for future enhancements. This focus on performance ensures that the robot can adapt to user needs, making it a practical solution for everyday challenges.

4.1. Mechanical System Design and Implementation

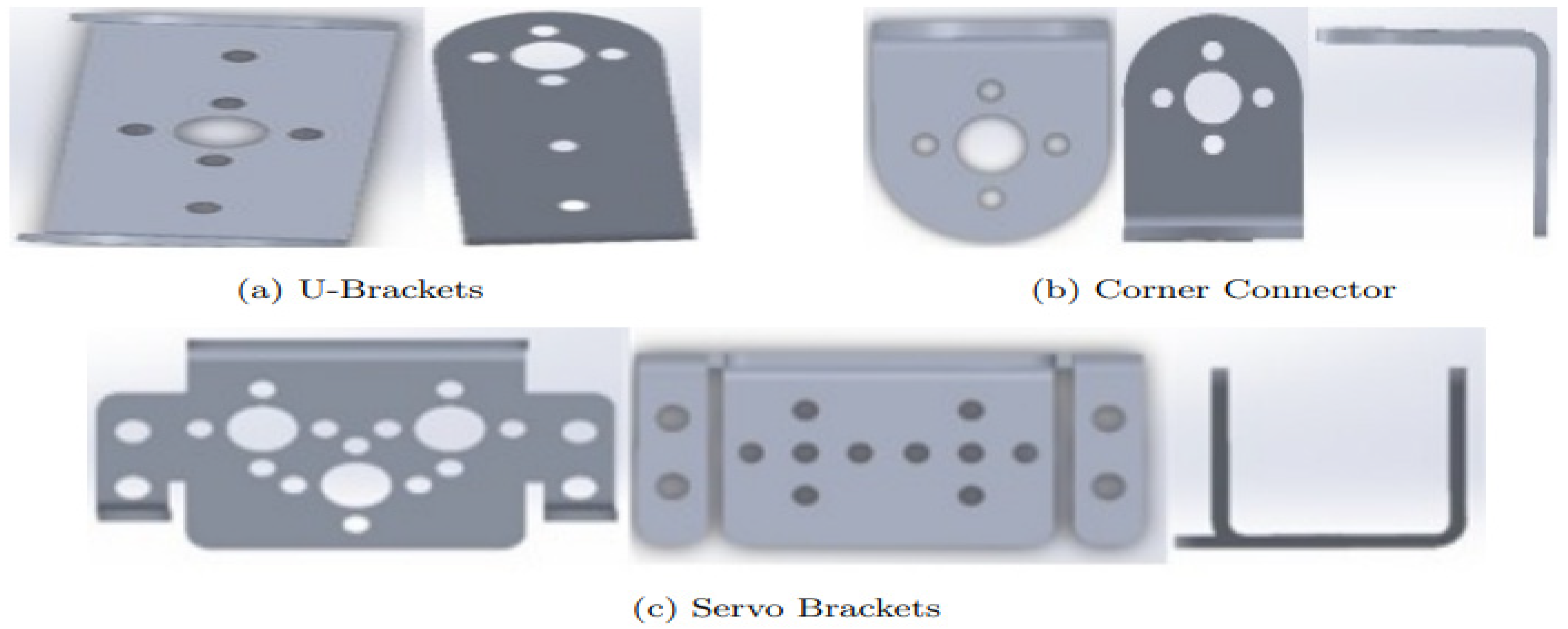

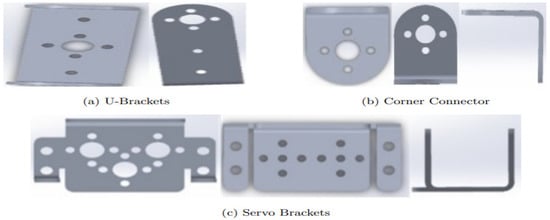

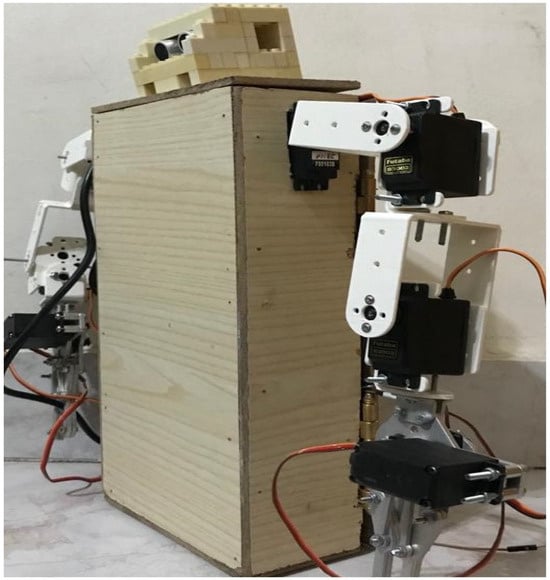

The humanoid robot features a design that includes two arms, a head, and a chest. Each arm is constructed from two U-brackets, two corner connectors, two servo brackets, and one MK II Gripper. These components are connected to a wooden chest using screws, providing a sturdy and reliable framework for the robot’s movements and tasks. The head of the robot is uniquely crafted from Lego, adding an element of modularity and ease of modification. This design choice allows for future enhancements and adjustments to be made with relative simplicity. The combination of materials used in constructing the head and chest ensures that the robot maintains a balance between durability and flexibility.

All these parts were designed using SolidWorks software, a powerful tool for creating precise and detailed 3D models. The components were then fabricated using a 3D printer with PLA plastic, chosen for its strength and lightweight properties. This method of construction not only ensures high accuracy and consistency in the parts but also allows for rapid prototyping and iterative improvements in the robot’s design. Figure 2 shows the robotic arm with different shapes and different views.

Figure 2.

Robot arm connecting parts.

One of the most important parts of the humanoid robot’s overall construction is the robotic arm assembly. The arm’s modular design makes it simple to integrate the different sub-components, including the wrist, elbow, and shoulder joints. The arm can achieve a wide range of motion and dexterity because each joint is outfitted with high-precision motors and gearboxes. The arm’s structure uses cutting-edge materials and manufacturing processes to balance strength and weight, making it both lightweight and sturdy.

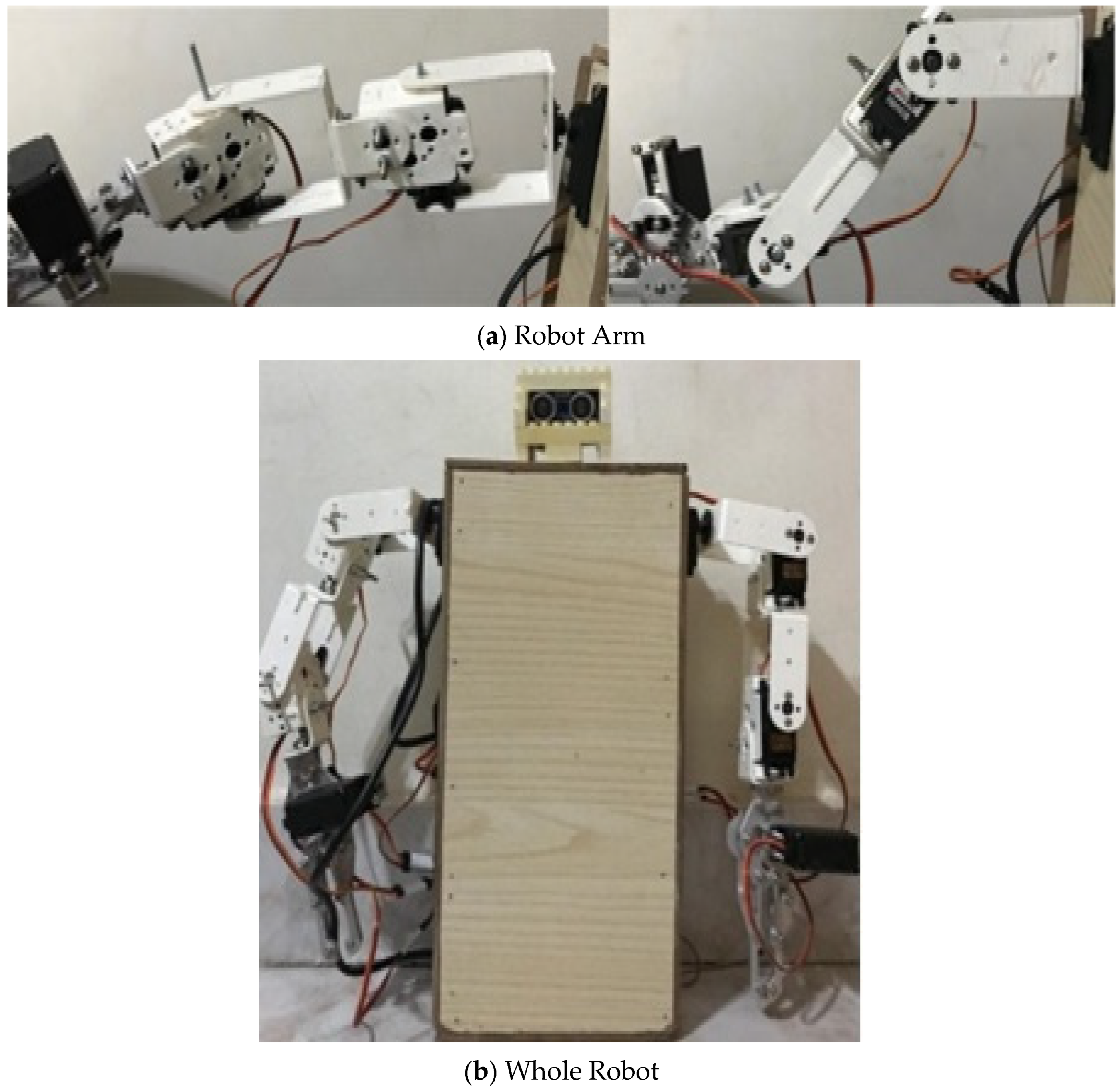

The arm’s joints are carefully aligned and calibrated during assembly to guarantee fluid and well-coordinated movements. The incorporation of sensors, like force and position encoders, improves the arm’s control and feedback capabilities even more, enabling accurate object manipulation and gestures that appear natural. The robotic arm’s modular design streamlines the humanoid robot’s overall assembly, facilitating future maintenance and enhancements. Figure 3 depicts the entire suggested body as well as the robot arm after assembly.

Figure 3.

Assembled robot.

4.2. Electrical System Design and Implementation

The humanoid robot’s electrical system serves as its backbone, coordinating and powering all of its parts to ensure smooth operation. A high-capacity, rechargeable battery at the heart of the electrical system supplies the energy required to run the robot for prolonged periods of time. The robot’s motor control system, which makes use of sophisticated electronic drivers and microcontrollers to precisely regulate the movement of the joints and limbs, is carefully integrated with this power source.

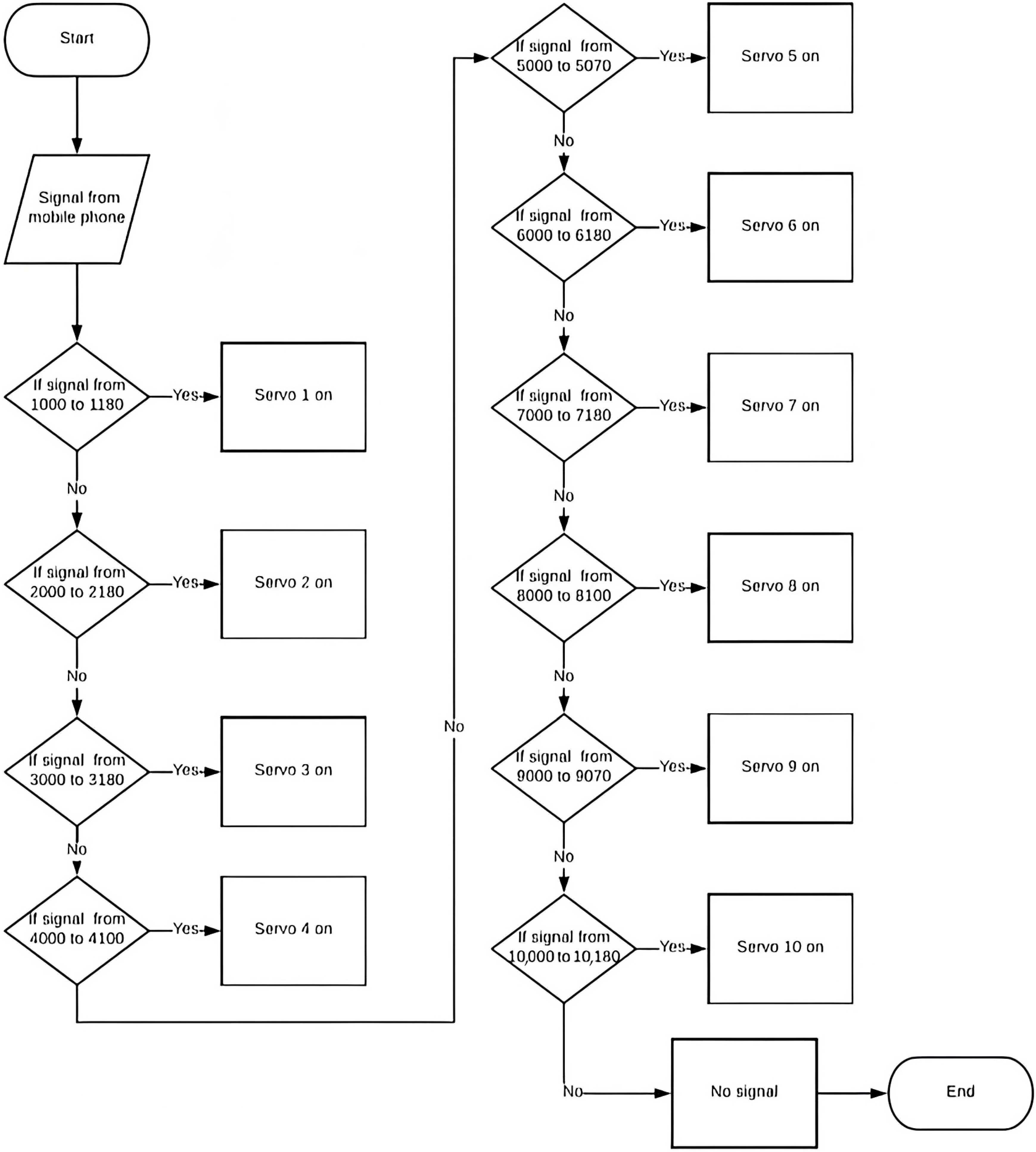

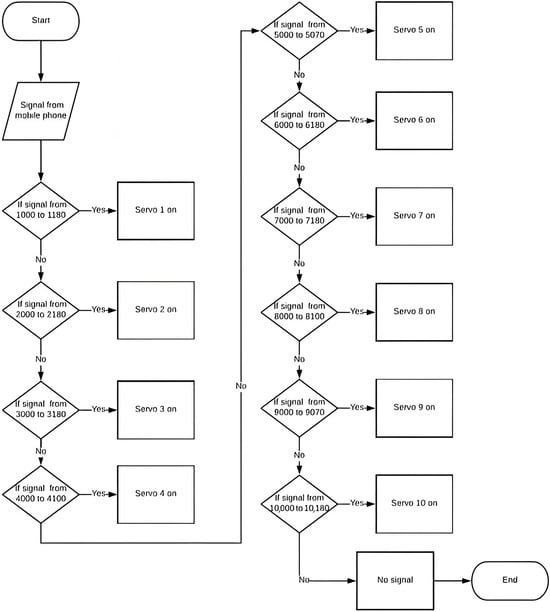

The flow chart shows the steps that must be taken to design, build, code, and troubleshoot a humanoid robot. These steps include managing connections, modifying code, and confirming functionality at each stage to guarantee successful operation. The robotic arm operation flow chart is displayed in Figure 4:

Figure 4.

Robotic operation flow chart.

The FS5103B servo motor was chosen as the main actuator for the humanoid robot because it offers the best combination of affordability, power efficiency, and performance when compared to the MG996R and SG90. According to Table 3, the FS5103B has a stall torque of 5 kg·cm at a 6 V operating voltage, which is more than enough to actuate the robot’s wrist, elbow, and shoulder joints. It is appropriate for low-to-medium torque applications without taxing the power supply because it draws a moderate current of 350 mA and uses 2.1 W of power. The FS5103B retains a lightweight, compact form that suits the robot’s small frame and intended tasks, in contrast to the MG996R, which offers higher torque but at the expense of greater power consumption and bulk. The SG90, although more energy-efficient, has a lower torque rating of 2.5 kg·cm and is therefore limited to lightweight tasks such as finger movement, which the current design does not include.

Table 3.

Torque and power comparison between servo models.
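
The electrical and torque figures quoted above for the FS5103B can be cross-checked with a few lines of arithmetic. The sketch below is a hedged helper and not part of the robot's firmware: the function names are hypothetical, and `holdingTorqueKgCm` is a static point-load approximation that ignores the weight of the links and any dynamic effects.

```cpp
#include <cassert>

const double kStandardGravity = 9.80665;  // m/s^2, used to convert kgf to N

// Electrical power in watts drawn at a given voltage (V) and current (A).
double electricalPowerW(double voltageV, double currentA) {
    return voltageV * currentA;
}

// Converts a torque in kg·cm (the unit used on servo datasheets) to N·m.
double kgCmToNm(double torqueKgCm) {
    return torqueKgCm * kStandardGravity * 0.01;
}

// Static torque in kg·cm needed to hold a point mass (kg) at the end of a
// horizontal lever arm (cm). Simplifying assumption: link weight is ignored.
double holdingTorqueKgCm(double massKg, double leverArmCm) {
    assert(massKg >= 0.0 && leverArmCm >= 0.0);
    return massKg * leverArmCm;
}
```

With the values quoted in the text, `electricalPowerW(6.0, 0.35)` reproduces the stated 2.1 W, and `kgCmToNm(5.0)` expresses the 5 kg·cm stall torque as roughly 0.49 N·m. Comparing `holdingTorqueKgCm` for a given payload and joint-to-load distance against a servo's stall torque gives a first estimate of whether that joint can hold the load.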

In addition to comparative advantages, the FS5103B also offers specific technical specifications, as can be seen in Table 4, that enhance its suitability for the robot’s mechanical and control needs. Operating within a voltage range of 4.8 to 6.8 V, it delivers a stall torque of up to 13 kg·cm at 6.0 V, sufficient to support basic object manipulation and arm movement. Its rotation speed is 0.16 s/60° under no load—fast enough for smooth and responsive joint motion. The servo operates reliably across a wide temperature range (−10 °C to 60 °C), which broadens its applicability in different ambient conditions. Weighing just 55 g, it helps keep the overall robot weight low. Furthermore, its 180° mechanical rotation limit aligns with the intended joint motion range, and its metal gear construction ensures durability under repeated use. These combined features affirm the FS5103B as a reliable, efficient, and cost-effective solution for humanoid robotic applications.

Table 4.

Servo performance specifications (FS5103B model).

Each arm of the humanoid robot is equipped with four servo motors. The first servo, located in the shoulder, rotates the arm, while the second servo moves the arm vertically. The third servo, positioned in the elbow, also provides up-and-down motion, and the fourth servo is integrated into the MK II Gripper to open and close it. Communication with the robot is established through a Bluetooth module (HC-05), which receives signals from a mobile phone, and the control logic is implemented on an Arduino Mega 2560. The components used in this design include eight servo motors, the Bluetooth module, connectors, a breadboard, and a power supply.

The servo motor control system is a critical component of the humanoid robot’s electrical architecture, responsible for precisely managing the movement of the various joints and limbs. This control system is represented in a detailed flow chart that outlines the step-by-step process for actuating the servo motors and coordinating the robot’s movements. At the top of the flow chart, the central control unit receives input commands, either from an external interface or from the robot’s internal control algorithms. These commands are then parsed and translated into specific motor control signals, which are sent to the corresponding servo drivers. The flow chart also illustrates the feedback loop, in which encoders and other sensors provide real-time position and torque data back to the control system. For commands received from the mobile phone, the flow chart checks each predefined signal range in sequence to determine which servo to activate, and it ends without action if the signal matches none of the ranges. Figure 5 shows the flow chart for servo motor control.

Figure 5.

Servo motor control flow chart.
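
The range-checking logic described for the flow chart can be sketched as follows. The article does not list the actual signal ranges, so the encoding used here is purely an assumption for illustration: servo k (counted from 1) is given the range from k × 1000 to k × 1000 + 180, with the offset inside the range interpreted as the target angle in degrees. The code is plain C++ so that it can be tested on a desktop; the angle table stands in for the servo outputs.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

constexpr int kServoCount = 8;      // four servos per arm, two arms
constexpr int kRangeStride = 1000;  // hypothetical spacing between signal ranges
constexpr int kMaxAngleDeg = 180;   // rotation limit assumed for each servo

struct ServoCommand {
    int servoIndex;  // 0-based servo index, or -1 if no range matched
    int angleDeg;    // target angle in degrees
};

// Checks each servo's signal range in sequence, as in the flow chart, and
// returns the matching servo and angle, or servoIndex = -1 if none matches.
ServoCommand decodeSignal(int signal) {
    for (int servo = 1; servo <= kServoCount; ++servo) {
        const int base = servo * kRangeStride;
        if (signal >= base && signal <= base + kMaxAngleDeg) {
            return ServoCommand{servo - 1, signal - base};
        }
    }
    return ServoCommand{-1, 0};
}

// Stores the commanded angle in a table that stands in for the servo outputs.
// Returns false and leaves the table unchanged when no range matched.
bool applyCommand(std::array<int, kServoCount>& angles, const ServoCommand& cmd) {
    if (cmd.servoIndex < 0) {
        return false;
    }
    assert(cmd.servoIndex < kServoCount);
    assert(cmd.angleDeg >= 0 && cmd.angleDeg <= kMaxAngleDeg);
    angles[static_cast<std::size_t>(cmd.servoIndex)] = cmd.angleDeg;
    return true;
}
```

Under this assumed encoding, a received value of 3090 selects the third servo and requests 90°, while a value such as 500 matches no range and is ignored. On the Arduino, the integer would typically be read from the serial port connected to the Bluetooth module, and the table write would be replaced by a call that drives the corresponding servo.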

4.3. Software System Design and Implementation

Arduino was selected to program the robot due to its user-friendly coding environment and simplicity in controlling multiple servo motors, using the C++ programming language. The controlling process of the robot’s operations is managed via an application developed using MIT App Inventor. The first stage of the mobile application was used to test the control algorithm of the robot tasks and movements. To further enhance the robot’s capabilities, a robust mobile application was developed, providing users with a comprehensive interface to control and monitor the robotic arm. Through this app, users can issue commands, adjust parameters, and even program custom movements, all from the convenience of their mobile devices. The tight integration between the robot’s software and the mobile application has enabled a level of flexibility and user-friendliness that expands the potential applications of this humanoid platform, from industrial automation to assistive living and beyond.

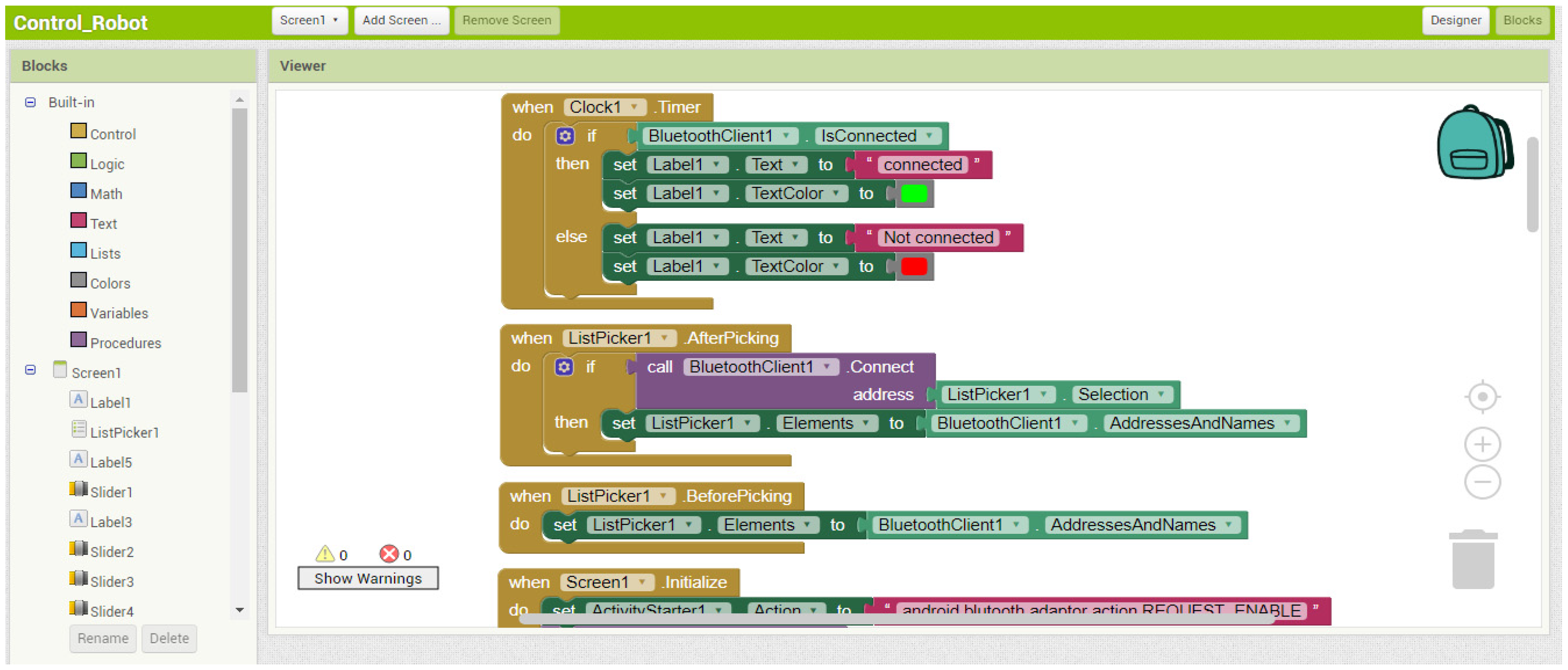

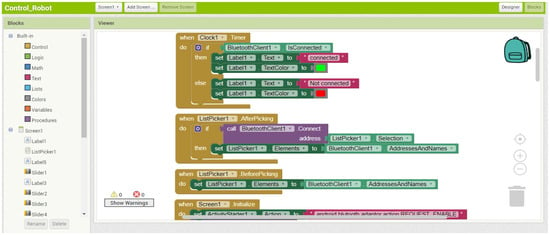

4.3.1. Testing Mobile Application

The mobile application developed for the humanoid robot’s operation control offers a wide range of advanced features that integrate with the robotic arm. The app’s intuitive user interface allows operators to easily visualize the current state of the arm, with real-time feedback on joint positions, torque levels, and overall system status. Users can issue high-level commands to the arm, such as instructing it to grasp an object, reach for a target location, or perform a predefined sequence of movements. The app also provides granular control, enabling users to manually adjust the position and orientation of individual joints, facilitating precise object manipulation and dexterous maneuvers. The application was implemented on the MIT App Inventor platform to control the servo motors from an Android phone, as shown in Figure 6.

Figure 6.

MIT App Inventor.

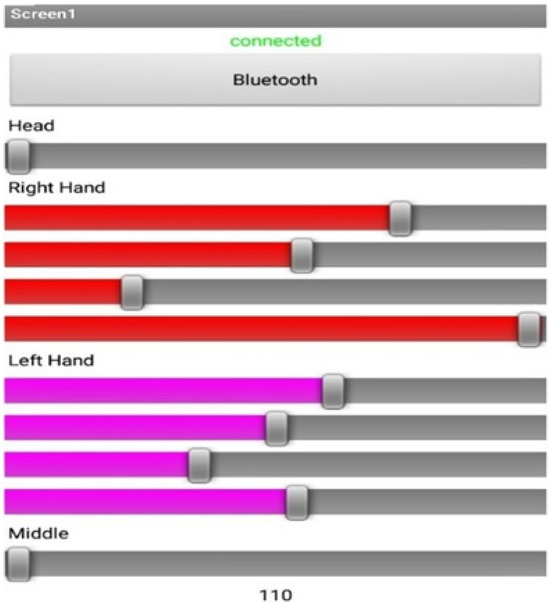

Figure 7 shows the mobile application interface developed on the MIT App Inventor platform, which enables the control of the robot’s servo motors via an Android phone.

Figure 7.

Testing mobile application interface.

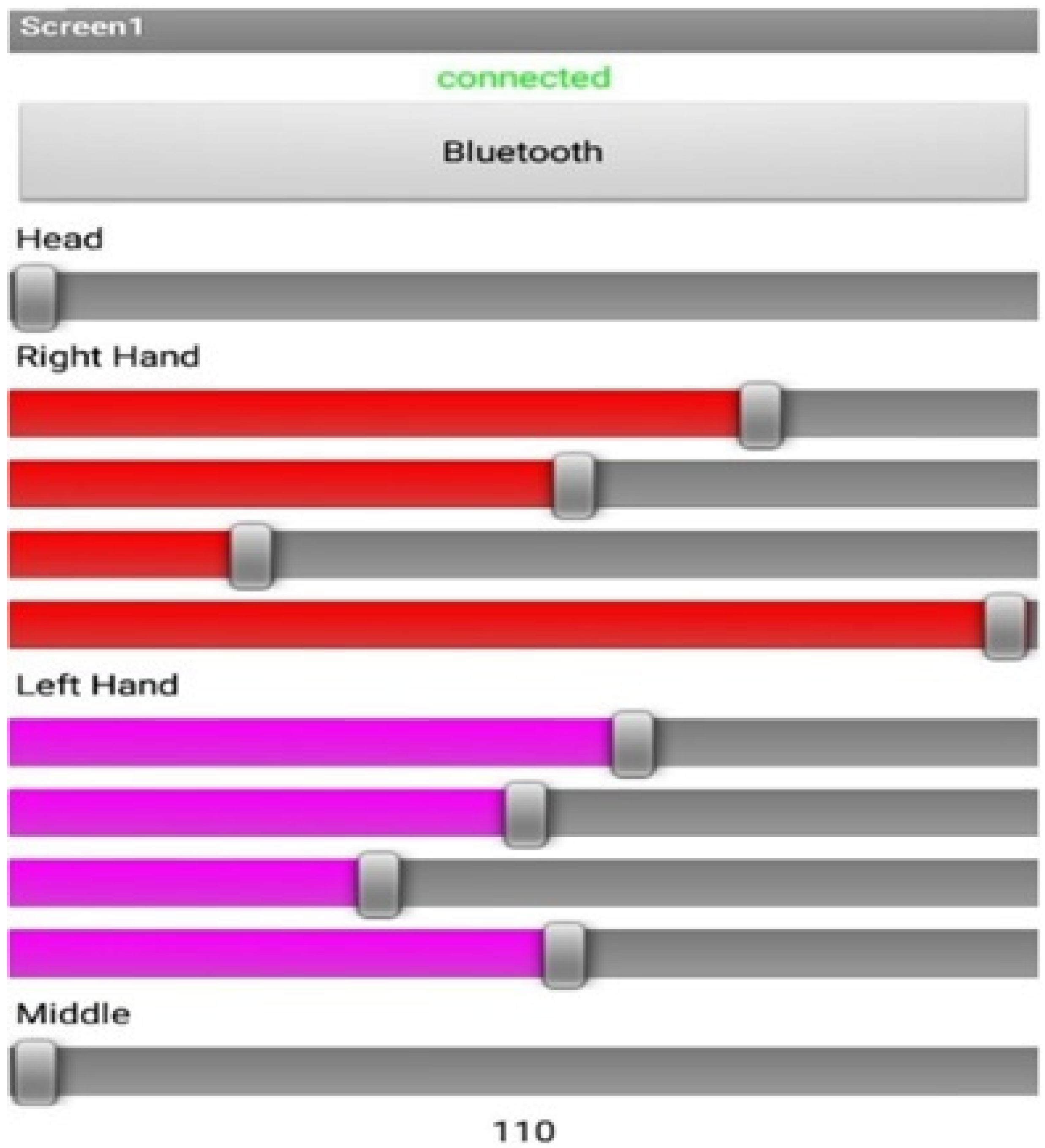

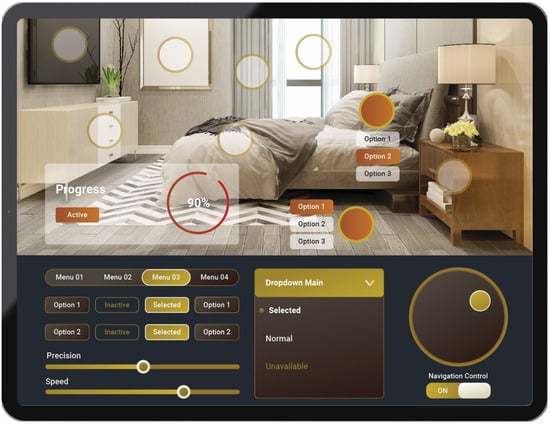

4.3.2. User Interaction and Visual Design for a Humanoid Robot Mobile Application

After the robot control operations were verified using the test mobile application, an enhanced, user-friendly GUI mobile application was designed, taking into consideration different aspects of the robot and its potential for interaction with humans. Because designing graphical interfaces for humanoid robots poses particular challenges, the user interaction and visual design of the interface address the following aspects:

- Multimodality: The robot GUI should be accessible by means of a touchscreen for accurate control and selection of tasks, as well as for the graphical representation of data. For other activities, also consider other touch interfaces, like a virtual joystick to be used for movement control and a multi-touch surface for data handling.

- Speech Control: This feature is designed for hands-free interaction in commanding and making inquiries. Couple speech recognition with a natural language processing engine to enable a far more conversational interaction.

- Gesture Recognition: It will enable natural commands, mostly for navigation and basic actions. Have the robot learn the specific set of gestures for common commands, such as “stop,” “go here,” “faster,” and so on.

- Contextual Adaptation: The GUI adapts to context in the following respects:

  - Activity: Relevant controls and information are highlighted based on the activity currently being performed.

  - Ambient: The display adapts to ambient light and screen size.

  - User Profile: The layout, color scheme, and level of access can be personalized according to the requirements or preferences of each user.

- Visual Aids: The status of the commands and activities must be displayed by using the facilities of animation, color, and highlighting.

- Audio Cues: Successes, errors, warnings, etc., shall be accompanied by voice feedback, sound effects, and variations in tone.

- Haptic Feedback: This is realized with the use of haptic actuators on a touchscreen, which provide the user with a sensation of tactility when navigating and selecting.

Figure 8 shows the GUI of the mobile application developed for the control of the humanoid robot.

Figure 8. Enhanced GUI of mobile application.

4.4. Experimental Setup

An experimental investigation was conducted to test the humanoid robot’s applicability to various missions. Prior to initiating the experiments, we ran extensive tests to ensure that the robot’s systems were fully operational and safe for use, conducting a series of diagnostics to verify the functionality of the sensors and actuators and adjusting as necessary to optimize performance. We designed essential components using AutoCAD, including U-brackets, corner connectors, and servo brackets, ensuring structural integrity and optimal performance.

An electrical flow chart was developed to visualize the system’s components, encompassing the power source, main controller, sensors, actuators, and feedback loops. Coding was implemented in Python (version 3.8), featuring a continuous operational loop that reads sensor data, processes it for decision-making, and controls the actuators, alongside safety checks. Next, we placed the humanoid robot in a controlled environment, simulating various scenarios it may encounter during missions.
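The read, decide, and actuate cycle described above can be sketched as follows. This is only an illustrative outline: the `Reading` fields, the two safety thresholds, and the hold-position behavior are assumptions rather than details of the authors' code, and a finite list of readings stands in for the continuous loop so that the example terminates.

```python
from dataclasses import dataclass
from typing import Iterable, List

MIN_BATTERY_V = 6.0       # assumed low-voltage cutoff (hypothetical)
MIN_CLEARANCE_CM = 10.0   # assumed minimum obstacle clearance (hypothetical)


@dataclass
class Reading:
    distance_cm: float  # hypothetical range-sensor reading
    battery_v: float    # hypothetical supply-voltage reading


def safe_to_move(reading: Reading) -> bool:
    """Safety check: enough supply voltage and no obstacle too close."""
    return reading.battery_v >= MIN_BATTERY_V and reading.distance_cm >= MIN_CLEARANCE_CM


def control_step(reading: Reading, requested_angle: int, current_angle: int) -> int:
    """One loop iteration: hold position if unsafe, otherwise clamp the command to 0..180."""
    if not safe_to_move(reading):
        return current_angle
    return max(0, min(180, requested_angle))


def run(readings: Iterable[Reading], commands: Iterable[int], start_angle: int = 90) -> List[int]:
    """Apply control_step to each (reading, command) pair and record the servo angle."""
    angle = start_angle
    history = []
    for reading, command in zip(readings, commands):
        angle = control_step(reading, command, angle)
        history.append(angle)
    return history
```

In this sketch an unsafe reading causes the joint to keep its previous angle, which is one simple way to realize the safety checks mentioned in the text; other policies (for example, returning to a rest pose) would fit the same structure.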

The dimensions of the testing area (laboratory) are 25 × 20 × 7 m (Figure 9). Subsequently, the pilot activated the robot’s systems and used a mobile interface to control its movements through a Graphical User Interface (GUI) platform. During the experiment, cameras installed on the robot allowed real-time display of the surrounding environment on a mobile device, enabling the pilot to effectively navigate and interact with the designated objects.

Figure 9.

Experimental setup.

4.5. Cost Breakdown of Components

One of the most attractive aspects of the humanoid robot design is its affordability, especially for academic institutions, researchers, and enthusiasts looking for reasonably priced solutions. The robot is substantially less expensive than the majority of commercial or research-grade humanoid platforms, with a total hardware cost of about USD 312. Important mechanical and structural parts like servo brackets, corner connectors, and U-connectors are all priced at USD 10 each, making the total USD 120.

Production costs are further decreased by using 3D-printed brackets and a simple wooden plank for the robot’s framework. Furthermore, building modular heads with a Lego set increases design flexibility while also saving money.

Table 5 shows that, at almost 45% of the total cost, the servo motors receive the lion’s share of the budget. The robot’s head, shoulders, elbows, and wrists cannot move without the help of these actuators. A camera for visual feedback and a Bluetooth module for wireless control are two more essential electronic parts that enable remote interaction and real-time operation. This robot maintains its affordability while providing strong capabilities by using widely accessible, reasonably priced parts and open-source software platforms. Without the need for substantial funding or sophisticated manufacturing resources, this affordability creates opportunities for wider adoption in educational settings and grassroots research projects.

Table 5.

Summarizing the components, their quantities, and prices.

4.6. Comparative Analysis of Mechanical and Control Innovations in Low-Cost Humanoid Robots

The field of humanoid robotics is developing rapidly, but balancing mechanical capability, affordability, and accessibility remains a challenge. Although many humanoid platforms are being developed for educational, research, and assistive applications, few combine structural flexibility, sufficient payload capacity, and easy control while remaining simple to set up and use on a limited budget. This section presents a systematic comparison of the current humanoid robot design with eight leading low-cost or mid-range humanoid platforms, namely, InMoov [53,54], Poppy Humanoid [55,56], Robotis Mini [57,58,59,60], TAMIYA Biped Kit [61,62,63], PLEN2 [64,65,66], Bioloid GP [67,68], Teto Humanoid [69], and QD Robot Kit [70,71]. The comparison is based on four dimensions: mechanical design, actuation method, control method, and application.

4.6.1. Mechanical Architecture and Design Innovation

Mechanical architecture directly impacts the robot’s range of motion, stability, payload capacity, and modularity. The robot at the center of this project uses PLA 3D-printed joints, a wooden torso, and LEGO head components to show an economical and fast robot fabrication process. This combination provides a lower material cost and a very fast assembly time with minimal complexity and similar human-like articulation.

- InMoov has a 3D-printed upper and lower body made up of many parts that must be assembled and aligned, which leads to a longer build time and a more rigid structure.

- Poppy Humanoid has bio-inspired and modular limbs, though the standard templates rely on precision printing and close-tolerance joints.

- Robotis Mini, PLEN2, and TAMIYA Biped Kit are based on lightweight molded plastic components, which reduce weight but limit customization.

- Bioloid GP and QD Robot Kit use aluminum alloy and high-grade nylon-reinforced plastics, which provide strength but result in a heavier and more expensive platform.

- Teto Humanoid is maker-friendly and typically relies on community-defined 3D-printed parts and custom frames.

4.6.2. Actuation System and Joint Capabilities

The proposed robot makes use of FS5103B servos, which deliver 13 kg·cm torque—sufficient for upper-body handling. The robot possesses 12 DOF in the upper body, used in arm and head motion required for interaction, object manipulation, and basic home-assistant tasks.

- InMoov employs MG996R/MG995 servos across 16–22 DOF, which are cost-effective but leave the system vulnerable to overheating and mechanical jitter.

- Poppy, Robotis Mini, and Bioloid GP utilize Dynamixel actuators, which are highly precise and provide real-time feedback at the cost of significantly increasing system expenses.

- PLEN2 uses 18 proprietary actuators designed for walking and smooth motion, but their low torque output limits payload capacity.

- TAMIYA Biped Kit provides only 6 DOF and specializes in legged motion, with no manipulation capability.

- Teto and QD Robot Kit typically supply 14–18 DOF, employing regular hobby servos or Dynamixel-type smart actuators.
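The relationship between a servo's rated torque and the load a joint can hold can be made concrete with a simple static estimate. The sketch below is a single-joint approximation that ignores link mass, friction, gearing, and load sharing between servos; the lever-arm length is a hypothetical input, since the arm dimensions are not stated in this section.

```python
def required_torque_kg_cm(payload_kg: float, lever_arm_cm: float) -> float:
    """Static torque needed to hold a payload at the end of a horizontal lever arm."""
    if payload_kg < 0 or lever_arm_cm < 0:
        raise ValueError("payload and lever arm must be non-negative")
    return payload_kg * lever_arm_cm


def max_payload_kg(rated_torque_kg_cm: float, lever_arm_cm: float) -> float:
    """Largest payload a single joint can hold statically at the given lever arm."""
    if lever_arm_cm <= 0:
        raise ValueError("lever arm must be positive")
    return rated_torque_kg_cm / lever_arm_cm
```

A call such as `max_payload_kg(13.0, lever_arm_cm)` with a measured lever arm gives a first-order bound that can be compared with the loads used in manipulation tests.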

4.6.3. Control Architecture and User Interface Integration

One of the main influences on usability is the robot’s control system and the method of interaction with it. The subject robot is centered on an Arduino Mega 2560 platform, interfaced with a Bluetooth module (HC-05) and an Android-based GUI created using MIT App Inventor. This offers the following:

- Manual control by touchscreen;

- Integration of voice command functionality using Google Speech API;

- Gesture-based mapping of control.

In contrast,

- InMoov is controlled using MyRobotLab, requiring a PC interface and a more advanced setup.

- Poppy and Bioloid GP use ROS or custom desktop software, offering high flexibility for advanced users.

- Robotis Mini, PLEN2, and QD Robot Kit offer app-based interfaces with pre-programmed gesture scripts.

- TAMIYA Biped Kit is completely manual with no electronic interface.

- Teto Humanoid uses Arduino or community-developed GUIs, offering varying degrees of sophistication.

4.6.4. Use-Case Suitability and Modularity

A humanoid robot’s utility is not only a function of its structural and control sophistication but also of its usability in the real world across a range of applications. The proposed robot was designed with specific emphasis on accessibility, task usefulness, and versatility, with target primary applications being home automation, human–robot interaction research, and STEM-focused robotics education.

In this section, we compare the respective platforms along four significant dimensions:

- Home-Assistance Capability: Ability to perform household activities such as picking-and-placing, object manipulation, and general environmental interaction.

- HRI Features: Multimodal interaction capability via voice, touch, visual feedback, and gesture control.

- Educational Suitability: Applicability for use in K–12 through university robotics classes.

- Modularity and Expandability: Possibility of structural or software-based modification, customization, or extension for novel applications.

As Table 6 shows, the proposed humanoid robot achieves a good balance across every aspect evaluated. Its mobile app-based UI, reliable payload capacity, and modularity make it particularly well suited to domestic use and education in resource-constrained environments. While more advanced systems like Poppy and Bioloid GP offer improved control and maneuverability, they tend to require substantial financial and technical resources. Conversely, entry-level kits like TAMIYA or Robotis Mini are less costly but offer limited practical value.

Table 6.

Use-case suitability comparison.

By delivering this functionality at a fraction of the cost, the proposed design is a suitable mid-range option for environments that call for reliable task execution, expandability, and instructional use without complicated setup or advanced hardware.

4.6.5. Comparative Summary

Table 7 shows how the proposed humanoid robot bridges the gap between low-capability educational kits and more capable research-grade humanoid platforms. It combines essential mechanical and control features, including 12 degrees of freedom, a payload capacity of 2 kg, and a Bluetooth-based mobile GUI, in an economical and accessible design. At approximately USD 312, the system is priced well below higher-end platforms like Poppy (~USD 2500) or Bioloid GP (~USD 1200) but offers substantially greater task utility and adaptability than low-end kits like the TAMIYA Biped Kit or Robotis Mini.

Table 7.

Comparative summary table.

In terms of ease of use, the robot’s low assembly complexity and compatibility with Arduino-based programming platforms make it well suited to students, educators, and hobbyists. Its modular nature, achieved through 3D-printed joints and LEGO-based head modules, facilitates customization and experimentation, options that commercial kits do not normally offer. In addition, support for voice input in the mobile interface provides a level of human–robot interaction not typically available in this price range.

While more expensive systems like Poppy, Bioloid GP, and QD Robot Kit have better motion control and ROS support, they demand much higher technical skills, expense, and facilities for effective operation. On the other hand, while platforms like TAMIYA and PLEN2 are cheap and easy to start with, they fall short in mechanical robustness and control extensibility required for assistive or interactive use.

Overall, the proposed robot design offers a fair tradeoff among mechanical performance, ease of control, and modularity, making it well suited to STEM classrooms, home automation experiments, and low-cost HRI research laboratories. The design emphasizes usability alongside function, enabling a wide range of users to experiment with humanoid robotics beyond theory-only or simulation-only settings.

5. Results and Discussion

A comprehensive set of tests was carried out to verify the efficacy and performance of the proposed low-cost humanoid robot. These tests focused on servo performance, task execution success rate, energy efficiency, joint motion comparison, and human–robot similarity in degrees of freedom (DOF). Together, movement accuracy, human-likeness, energy use, and task success rate provide a suitable basis for evaluating the robot’s practicality in home-assistant scenarios. In-depth analyses, backed by structured tables of empirical data, are presented in the following subsections.

5.1. Anatomical Comparison: Degrees of Freedom and Motion Range

A comparison of joint degrees of freedom (DOF) is shown in Table 8, and an assessment of the robot’s joint range of motion in relation to human capabilities is shown in Table 9. By contrasting the humanoid robot’s joint degrees of freedom (DOF) with those of the human body, Table 8 illustrates both the robot’s limitations and its capacity to mimic human motion. The human neck’s articulation is very similar to the two DOFs the robot uses to replicate full head motion. The robot’s wrist and shoulder joints are simplified compared to human anatomy, allowing only one-axis movement, where humans have multi-axis articulation. The robot can still manipulate and position objects in basic ways, but its capacity to perform complex or highly dexterous tasks is significantly diminished by its limited shoulder rotation and lack of wrist rotation (pronation/supination).

Table 8.

Degrees of freedom (DOF)—robot vs. human.

Table 9.

Robot vs. human motion comparison.

Table 9 was developed to better evaluate the humanoid robot’s anatomical fidelity in comparison to the human upper body. These contrasts are essential for assessing the robot’s capacity to carry out tasks that resemble those of a human.

Table 9 shows that the humanoid robot closely mimics human movement patterns in the majority of joints, especially the elbow and head. The robot reaches over 89% of human capacity in shoulder and elbow flexion, and its head covers 100% of the human range. The slight reductions in wrist and shoulder range are mainly due to servo and mechanical limitations. Although these results indicate areas for future improvement in reproducing more natural, human-like fluidity of motion, the robot retains enough mobility for standard home-assistant tasks.
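The percentages quoted for Table 9 express each robot joint range as a fraction of the corresponding human range. A minimal sketch of that calculation is given below; the function name and the cap at 100% are assumptions, and any angle values passed to it should come from the measured data rather than from this example.

```python
def range_fidelity(robot_deg: float, human_deg: float) -> float:
    """Robot joint range as a percentage of the human range, capped at 100%."""
    if human_deg <= 0:
        raise ValueError("human range must be positive")
    if robot_deg < 0:
        raise ValueError("robot range must be non-negative")
    return min(100.0, 100.0 * robot_deg / human_deg)
```

Capping at 100% reflects the way the comparison is phrased in the text, where a joint that matches or exceeds the human range is reported as full coverage.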

5.2. Manipulation and Scenario-Based Performance Analysis

To evaluate the practical functionality and manipulation skills of the humanoid robot, performance testing was conducted in two distinct stages. The first stage involved executing simple, structured household tasks—such as object retrieval, button pressing, and interaction with lightweight doors—emulating real-world smart-home-assistance scenarios. These tasks focused on evaluating the robot’s gross motor control and environmental responsiveness. The second stage examined object handling and manipulation efficiency with various shapes and weights. This phase specifically assessed the robot’s fine motor control, grip precision, and adaptation to different geometries, which are critical for handling unpredictable or irregular items in home environments.

The robot’s operational success in realistic environments is summarized in Table 10. The robot excels at tasks requiring accuracy and repeatable motion patterns, such as button pressing and pick-and-place. Lightweight door operation and shelf-based object retrieval, which require more subtle joint articulation and variable force application, show somewhat lower performance. Because of current hardware limitations, especially regarding fluid handling and wrist control, pouring water was not attempted. Overall, the results demonstrate the robot’s strong performance in structured household environments, with room for improvement in dynamic scenarios.

Table 10.

Task execution accuracy across scenarios.

In this second phase of testing, Table 11 shows that the robot’s object manipulation skills were challenged using items of varying shapes and weights. It performed best when handling cubic and lightweight objects due to their consistent geometry and balance, achieving success rates of 94% and 98%, respectively. The robot struggled more with irregular shapes, where unpredictable contours compromised grip stability. The cylindrical object posed moderate difficulty due to potential slippage and alignment issues. These outcomes affirm the robot’s capacity for handling standardized household items but suggest that further enhancements in end-effector design and adaptive control algorithms could expand its effectiveness in more complex or unstructured object manipulation scenarios.

Table 11.

Object manipulation success rate.
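Success rates such as those reported in Tables 10 and 11 are the ratio of successful attempts to total attempts. The sketch below shows the calculation; the number of trials per task is not stated in this section, so any trial counts supplied to the function are assumptions of the reader.

```python
def success_rate(successes: int, trials: int) -> float:
    """Percentage of successful attempts out of the total number of trials."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must lie between 0 and trials")
    return 100.0 * successes / trials
```

Reporting the trial count alongside each percentage makes it easier to judge how much a difference between two rates can be trusted.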

5.3. Servo Performance and Power Efficiency Analysis

This section provides a detailed analysis of the robot’s servo responsiveness and its overall power consumption under various operational modes. Servo responsiveness is a key metric because it determines the precision and speed of robot movement, which directly affects task performance, especially in real-time control scenarios. Similarly, power efficiency plays a significant role in determining the robot’s operating time on a single charge, influencing its practicality for continuous use in domestic environments.

Table 12 outlines the servo actuation characteristics for each joint, all of which use the FS5103B model. Response times generally increase from the head joints to the wrist joints, reflecting the additional mechanical load and range involved in moving the arms. Head movement is the fastest, which is advantageous for tasks requiring quick attention redirection, while the wrists exhibit the slowest response time due to greater lever arm effects and servo workload.

Table 12.

Average servo response time by joint.

Maximum deviation remains within ±12 to ±20 milliseconds, indicating a reasonable level of consistency and stability in the servo’s performance. Although deviations slightly increase in distal joints, this performance is still acceptable for non-industrial applications like smart-home assistance. The FS5103B offers a balance between cost and responsiveness, making it a suitable choice for low-cost humanoid robotics, albeit with potential limitations in tasks requiring ultra-precise timing.
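The two quantities discussed here, average response time and maximum deviation, can be derived from repeated timing measurements of a joint. The sketch below assumes that deviation is measured from the mean of the samples, which is one common convention and not necessarily the one used for Table 12; the sample values in any call are the reader's own measurements.

```python
from statistics import mean
from typing import Sequence, Tuple


def response_stats(samples_ms: Sequence[float]) -> Tuple[float, float]:
    """Return (average response time, maximum absolute deviation from the average) in ms."""
    if not samples_ms:
        raise ValueError("at least one sample is required")
    average = mean(samples_ms)
    max_deviation = max(abs(sample - average) for sample in samples_ms)
    return average, max_deviation
```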

Table 13 presents the robot’s energy usage patterns across various functional scenarios, ranging from idle mode to full dual-arm operation with visual feedback. Power consumption increases proportionally with task complexity, with the lowest draw observed during idle mode (1.2 W) and the highest during full engagement of motion and vision systems (9.0 W). This gradient in power use underscores the importance of energy-aware task scheduling in practical deployments.

Table 13.

Energy consumption across tasks.

The corresponding operational times highlight that the robot can run continuously for up to 12 h when idle or for approximately 2 h under full load. These values are suitable for most domestic tasks if recharging intervals are planned appropriately. The system demonstrates commendable energy efficiency given its mechanical workload, and the modular power consumption profile supports flexible deployment depending on task intensity and battery availability.
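Operating time and power draw are linked through the usable energy of the battery. The sketch below shows the relationship in both directions; the battery capacity is not stated in this section, so `battery_wh` is a hypothetical input, and the calculation assumes a constant draw and ignores converter losses and battery aging.

```python
def runtime_hours(battery_wh: float, draw_w: float) -> float:
    """Estimated operating time for a given usable battery energy and constant power draw."""
    if draw_w <= 0:
        raise ValueError("power draw must be positive")
    return battery_wh / draw_w


def implied_capacity_wh(draw_w: float, hours: float) -> float:
    """Usable energy implied by a measured power draw sustained for a given time."""
    return draw_w * hours
```

For example, `implied_capacity_wh(9.0, 2.0)` gives the energy consumed by two hours at the full-load draw reported above, which can then be passed to `runtime_hours` to estimate endurance at other draw levels.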

5.4. Task-Based Performance Benchmarking with Low-Cost Humanoid Robots

To validate the practical effectiveness of the proposed humanoid robot design and to position it more concretely within the field, we present a side-by-side performance evaluation with nine other popular low-cost platforms. The benchmarking covers task-level comparisons in five common activities of general importance to assistive humanoid systems:

- Pick-and-place from flat surfaces;

- Pick up from elevated shelf heights;

- Open light doors;

- Pour water from a cup;

- Press push buttons.

These tasks reflect the robot’s usability at home, in basic HRI scenarios, and in teaching demonstrations, and they represent basic functional requirements in assistive, smart-home, and educational robotics. The robots selected for comparison span a wide range of affordability, popularity, and availability. Rather than comparing mechanical capabilities alone, the comparison benchmarks performance on these five household tasks. The performance measures shown in Table 14 for the proposed system are drawn from the controlled experiments in Section 5.2. Measures for the other robots are estimated from published benchmarks, developer manuals, or reported experimental studies.

Table 14.

Task-based performance benchmarking.

The benchmarking outcomes in Table 14 provide a relative evaluation of the proposed humanoid robot against several popular low-cost platforms on task-based criteria. The selected tasks (pick-and-place, shelf interaction, door opening, water pouring, and button pressing) are typical of core real-world application domains in service robotics, human–robot interaction (HRI), and education. The proposed robot demonstrates high reliability in high-frequency tasks such as flat-surface object handling (96%) and push-button use (98%), indicating good repeatability and actuator coordination for its category.

Compared with more advanced systems such as Bioloid GP and Poppy Humanoid, which also score highly in these tasks, the proposed system performs comparably at a fraction of the cost and complexity. Although its door opening (76%) and shelf interaction (90%) scores are modestly lower, they remain competitive given the absence of sensor-integrated or high-torque end effectors. The robot currently does not support tasks requiring fine wrist control (e.g., pouring), an identified design deficiency summarized in Section 5.

One main differentiator is setup complexity. Platforms such as InMoov, Poppy, and Bioloid GP require special software (e.g., ROS and MyRobotLab), offboard computing resources, or technical assembly that may discourage non-technical users. In contrast, the robot in this work is a plug-and-play system with Arduino-based control, Bluetooth, and a mobile GUI that allows rapid deployment in classroom, prototyping, or demonstration environments without the use of a host PC or special programming expertise.

Low-end kits such as Robotis Mini, PLEN2, and TAMIYA are easy to set up but offer limited functional performance. These systems are usually not adequate for manipulation-centric tasks, because of either limited articulation or insufficient torque, which restricts their use in assistive or dynamic settings. The proposed system fills this gap by pairing low setup overhead with reliable task execution, particularly in object manipulation and user-specified tasks.

Overall, the proposed humanoid robot offers a compelling combination of task reliability, power efficiency, usability, and affordability. Its performance supports its adoption in schools, HRI demonstrations, and homes, especially where affordability and rapid setup are vital. The results-based benchmarking not only validates the system’s key capabilities but also indicates its potential for broader deployment in applied robotics with minimal infrastructure requirements.

5.5. AI Integration

To achieve realistic and dependable obstacle detection and avoidance, we employ low-cost infrared and ultrasonic sensors, which are commonly included in Arduino kits. These sensors enable the robot to detect objects in its path and steer clear of them to prevent collisions. Integrating these affordable and dependable sensor technologies helps the robot operate safely in a variety of settings, which is a step toward relying on robotic help around the house. This capability is critical for robots to cooperate with humans in everyday scenarios, and it is consistent with Industry 5.0 concepts such as human safety and adaptability in intelligent systems.
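For the ultrasonic sensor, obstacle distance is typically obtained from the round-trip time of the echo pulse. The sketch below illustrates that conversion and a simple stop decision; the 20 cm threshold and the function names are assumptions for illustration, and the speed of sound is taken as roughly 343 m/s (about 0.0343 cm/µs) at room temperature.

```python
SPEED_OF_SOUND_CM_PER_US = 0.0343  # approximate value in air at about 20 °C


def echo_to_distance_cm(echo_us: float) -> float:
    """Convert a round-trip echo time in microseconds to a one-way distance in cm."""
    if echo_us < 0:
        raise ValueError("echo time must be non-negative")
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2.0


def should_stop(echo_us: float, threshold_cm: float = 20.0) -> bool:
    """Return True when the measured obstacle is closer than the (assumed) threshold."""
    return echo_to_distance_cm(echo_us) < threshold_cm
```

The division by two accounts for the pulse travelling to the obstacle and back; the threshold would in practice be tuned to the robot's speed and stopping distance.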

The robot’s camera system, combined with real-time image processing and machine learning (ML), provides a rich understanding of the surrounding environment through two essential functions: intelligent navigation and preventive monitoring. While the robot navigates its environment, the camera system classifies obstacles as static (e.g., walls), dynamic (e.g., humans or pets), or hazardous, while also scanning for objects of interest. Navigation decisions are then made based on context (e.g., turning to avoid a pet or carefully avoiding fragile items).

Beyond navigation, the system actively detects emergencies or user-specified objects (liquid spills, fallen people, misplaced tools, and suspicious packages). When one is detected, the robot captures an image, evaluates the situation using its ML model, and generates a real-time alert with visual evidence that is sent to the user’s smartphone. This dual capability is extremely useful for home security or eldercare, where the robot functions as both a mobile safety monitor and an autonomous assistant. By combining real-time analysis of the environment with user alerts, the system moves beyond basic obstacle detection and avoidance toward AI-driven autonomy that supports meaningful human–robot collaboration.

5.6. Practical Applications

The humanoid robot’s thorough performance evaluation attests to its suitability for a variety of real-world uses, especially in the fields of education, research, and assistance. The robot has shown a realistic balance between cost, functionality, and dependability in everything from servo response evaluations to power consumption tests. Despite having simplified degrees of freedom, it can execute human-like motions with high precision, which makes it a great model for teaching the basics of robotics. Particularly in STEM and robotics curricula, students can use this reasonably priced platform to investigate sensor integration, kinematic modeling, motion planning, and mobile control interfaces. With success rates between 90% and 98%, scenario-based task results further confirm the robot’s capacity to perform basic domestic tasks like object pick up, shelf retrieval, and button activation. These results justify its use in smart-home environments to help with everyday tasks, especially for elderly or mobility-impaired people.

The robot is currently capable of performing tasks like navigating through home layouts, pressing control buttons, and retrieving objects. The systematic two-phase testing methodology, which covers both complex object handling and real-world actions, further illustrates the robot’s versatility across a variety of settings and demands. Power efficiency and modular design further extend the robot’s utility across longer durations and varied operational settings. The robot can function effectively for 5 to 12 h depending on load intensity, making it viable for daily use in homes, hospitals, or research labs without frequent recharging. The 3D-printed components and open-source software architecture (e.g., Arduino and MIT App Inventor) allow for easy replication and customization, supporting research initiatives that explore AI integration, swarm behavior, and machine learning. Altogether, the experimental outcomes show that this low-cost humanoid robot is not only a viable tool for academic training but also a valuable assistant in home automation, healthcare logistics, and interactive robotics research.

6. Conclusions

This study demonstrated the development, deployment, and assessment of an affordable, remote-controlled humanoid robot for use as a smart-home assistant. With a payload capacity of up to 2 kg, the robot showed that it could accomplish a range of useful tasks, such as manipulating objects and interacting with the home environment. With the help of FS5103B servo motors and a mobile application interface, the system was able to produce precise, stable movements at a low cost. Two phases of structured testing, namely task-based scenarios and object handling with different weights and shapes, were used to validate the robot’s performance. The effectiveness of the mechanical and control design was confirmed by the results, which consistently demonstrated high success rates for routine household tasks and dependable performance across multiple joints. The main conclusions drawn from this research emphasize the importance of power-conscious motion planning, modular hardware architecture, and effective software integration.

The entire building cost was only USD 312 thanks to the use of commercially available electronics, open-source programming tools (such as C++, Arduino, and MIT App Inventor), and 3D-printed components. The suggested robot is suitable for use in research, assistive technology, and education because of its low cost, robust performance metrics, and versatility. To allow for more autonomous operations, future improvements might involve integrating cutting-edge sensors, increasing the number of degrees of freedom, and enhancing gripper dexterity. The main points of the research can be summarized as follows:

- Efficient software integration using C++ for core functionality and MIT App Inventor for user-friendly mobile app control.

- Enhanced payload capacity through optimized mechanical design, enabling reliable object lifting (up to 2 kg).

- Cost-effective production using 3D-printed parts and off-the-shelf electronic components.

- Open-source foundation ideal for STEM education, hands-on learning, and robotics experimentation.
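The software integration summarized in the list above, in which the mobile app sends commands that C++ code turns into servo motion, can be sketched as follows. This is a minimal illustration, not the authors' firmware. The text command format `"<joint>:<angle>"`, the names `JointCommand`, `parseCommand`, and `angleToPulseUs`, and the 1000 to 2000 µs pulse range are all assumptions; the actual protocol and the correct pulse limits for the FS5103B should be taken from the project code and the servo datasheet.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <string>

// Hypothetical command from the mobile app: "<joint>:<angle>", e.g. "3:90".
struct JointCommand {
    int joint;     // joint index (assumed non-negative)
    int angleDeg;  // target angle in degrees, clamped to [0, 180]
};

// Restrict v to the closed interval [lo, hi].
int clampInt(int v, int lo, int hi) {
    return v < lo ? lo : (v > hi ? hi : v);
}

// Parse a command string into `out`. Returns false on malformed input
// and leaves `out` unchanged in that case.
bool parseCommand(const std::string& text, JointCommand& out) {
    const std::size_t sep = text.find(':');
    if (sep == std::string::npos || sep == 0 || sep + 1 >= text.size()) {
        return false;
    }
    const std::string jointPart = text.substr(0, sep);
    const std::string anglePart = text.substr(sep + 1);
    try {
        std::size_t usedJoint = 0;
        std::size_t usedAngle = 0;
        const int joint = std::stoi(jointPart, &usedJoint);
        const int angle = std::stoi(anglePart, &usedAngle);
        // Reject trailing characters and negative joint indices.
        if (usedJoint != jointPart.size() || usedAngle != anglePart.size() || joint < 0) {
            return false;
        }
        out = JointCommand{joint, clampInt(angle, 0, 180)};
        return true;
    } catch (const std::logic_error&) {
        // std::stoi throws invalid_argument or out_of_range, both logic_error.
        return false;
    }
}

// Map an angle to a servo pulse width in microseconds by linear interpolation.
// The default 1000-2000 us range is an assumed, commonly used hobby-servo range.
int angleToPulseUs(int angleDeg, int minUs = 1000, int maxUs = 2000) {
    assert(minUs < maxUs);
    return minUs + (clampInt(angleDeg, 0, 180) * (maxUs - minUs)) / 180;
}
```

Under these assumptions, `parseCommand("3:90", cmd)` fills `cmd` with joint 3 and 90 degrees, and `angleToPulseUs(90)` yields 1500 µs. Clamping the angle before computing the pulse width keeps out-of-range requests from the app from driving a servo past its mechanical limits.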

Future work will focus on enhancing the robot’s dexterity, improving battery life, and incorporating advanced sensory feedback mechanisms to enable more autonomous operation. Additionally, exploring machine learning algorithms for adaptive and intelligent behavior will be pivotal in advancing the capabilities of such humanoid robots. We also recognize the importance of esthetics in the overall user experience. While the current design prioritizes functionality, future iterations will place significant emphasis on improving the robot’s appearance. We aim to create a visually appealing product that resonates with users and is easier to use. By integrating thoughtful design elements, we aspire to transform the robot from a purely functional tool into an attractive and engaging companion that complements modern living.

Author Contributions

Conceptualization, K.M.S., A.O.E., M.H.E., A.E., H.E., and M.S.M.; methodology, K.M.S., A.O.E., M.H.E., A.E., H.E., and M.S.M.; software, K.M.S., A.E., and A.O.E.; validation, K.M.S., A.E., A.O.E., and M.S.M.; formal analysis, K.M.S., A.E., A.O.E., M.H.E., and M.S.M.; investigation, K.M.S., A.O.E., and M.S.M.; resources, K.M.S., A.O.E., M.H.E., and M.S.M.; data curation, K.M.S., A.O.E., H.E., and M.S.M.; writing—original draft preparation, K.M.S., M.H.E., H.E., and A.O.E.; writing—review and editing, K.M.S., A.E., A.O.E., M.H.E., and M.S.M.; visualization, K.M.S., A.E., A.O.E., and M.S.M.; supervision, K.M.S., A.E., A.O.E., M.H.E., H.E., and M.S.M. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Conflicts of Interest

The authors declare no conflicts of interest.
