1. Introduction

Human gait is a complex process that involves the entire locomotor system. As a consequence, it is affected by many factors, such as diseases, injuries, and age, to mention just a few [1]. Gait analysis is a discipline that studies the walking process of subjects in order to reveal these factors. Since gait is highly individual, medical professionals are not the only ones interested in its analysis. Security research has recognized the opportunity to use walking behavior as a biometric [2]. Advances in computer performance have made complex image processing tasks feasible. Gait analysis now offers person identification using a biometric that can be recorded from a distance, for example by surveillance cameras.

Not only can vision systems identify individuals by their gait, but humans themselves are able to recognize others, e.g., in their workplace, by means of visual and aural perception [3]. Even without visual information, humans are still able to identify a passerby in front of their office door by walking sound alone. Individual training may enhance recognition performance.

Person identification using only walking sound has some advantages. It requires less complex and less expensive hardware than visual systems. Limited coverage, illumination, and view angle are just a few of the difficulties associated with the use of cameras. Sound, on the other hand, propagates freely in the air, and the position of the microphone can be selected less strictly.

An acoustic gait analysis to extract parameters for medical purposes is presented in [4]. The authors are able to extract the quantitative characteristics of human gait by using audio signals from a recording room. Further studies are foreseen to apply this technique in clinical conditions. In contrast to the preceding non-intrusive measurement, the authors in [5] work with wearable microphones to record the walking sound of individuals. Data analysis is performed with an external laptop.

Nevertheless, walking sounds are complex audio signals. Simply recording and comparing time signals cannot reveal the identity of the walker, because the variations of walking sound from step to step are rather high. The human ear and brain, however, are able to distinguish individuals. Therefore, neural networks seem to be well suited to solve this issue and identify a walker. In fact, neural networks have been applied to this task in recent years [6,7,8,9,10]. The increasing capability of convolutional neural networks (CNN) makes them popular in machine learning applications. Deep learning facilitates the training of CNN with high numbers of layers and parameters. The origin of the CNN lies in image recognition tasks. Nets like VGG16/19 [11], AlexNet [12], or DenseNet [13] are able to classify images with human-like performance.

This performance led to a trend of reformulating non-image-based problems into image-based ones. Bird song recordings are transformed into spectrograms in [14,15]. The species are determined with high accuracy by using image recognition CNN on the spectrograms. Comparable approaches have been made to recognize speakers [16,17] and their emotion [17,18]. Auditory information allows humans to recognize their environment and react to events. The same functionality is transferred to machine learning using CNN. Numerous challenges, like Detection and Classification of Acoustic Scenes and Events (DCASE) (dcase.community), provide tasks for event and scene recognition based on audio recordings. In many cases, these tasks are solved by the use of CNN [17,19,20,21,22].

In this paper, the objective is to identify walking subjects based on single microphone recordings of airborne sound. The focus lies on the recognition of step sound. Here, step sound is treated as a subset of walking sound. Walking sound includes all sounds that are emitted by a walking person, with additional sources such as clothing, backpacks, handbags, etc. Furthermore, the selection of step sound for recognition makes it possible to neglect the walking speed or step frequency. Additionally, the process of running is excluded from analysis by requiring that at least one foot is in contact with the ground at all times [1].

In medicine, the human gait is defined as a gait cycle subdivided into seven events. It begins when the heel of a foot makes initial contact with the ground and it ends when the same foot touches the ground again. One cycle is divided into a stance and a swing phase. The stance phase begins with the initial contact of the heel and lasts as long as the foot is in contact with the ground. During the swing phase, the foot swings back and forward until the heel touches the ground again. From an acoustical point of view, the stance phase is of interest, since the footstep sound is emitted here. The human ear usually perceives only a single impact sound when the foot touches the ground. However, recordings of step sounds reveal that a step consists of three subsequent impacts [1,4]. The initial contact of the heel leads to the heelstrike transient. It is followed by the mid-stance peak when the metatarso-phalangeal (MTP) joints hit the ground. The terminal stance or so-called toe-off event concludes the step sound. The characteristic of these three acoustic events is very individual and it may serve as a kind of biometric for person identification. For practical implementations, additional filtering of the step sounds by, e.g., footwear, injuries, additional weight, or floor type has to be considered.

The first section of this article describes the recording of experimental data in the acoustic lab of DLR. The recordings are pre-processed and transformed into images for use in the input layer of a CNN. Afterwards, the setup and the training of the CNN are described in detail. In order to assess the performance of the proposed CNN, it is applied to the validation dataset. Because CNN behave like black boxes, a class activation mapping algorithm is used afterwards to gain insight into the functionality of the net. Two challenges in practical applications, noise and different footwear, are investigated in the final section.

3. Data Pre-Processing

The recorded raw audio signals have to be pre-processed before they are suitable as inputs for a CNN. The selected microphone has a frequency bandwidth of 20–20,000 Hz. Even in a semi-anechoic room, like the ATB, external low-frequency noise with wavelengths of several meters is measured. Sources of this noise are often construction sites or traffic on highways or at airports. To eliminate these disturbances, all of the time signals are high-pass filtered by a fourth-order Butterworth filter with a cut-off frequency of 40 Hz. Afterwards, the steps are visible in the time signal, as in Figure 1. Additionally, Figure 2 shows the spectrum of the signal from M13. It becomes apparent that the main frequency content is below 1 kHz. This holds for all of the measurements.
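The high-pass filtering step can be sketched with SciPy as follows. This is a minimal illustration rather than the original implementation: the sampling rate `FS` of 51.2 kHz is an assumption (it is consistent with the 4096-sample window and 12.5 Hz frequency resolution quoted later in this section), the function name `highpass_40hz` is hypothetical, and causal filtering with `sosfilt` is chosen here because the text does not state whether zero-phase filtering was used.

```python
import numpy as np
from scipy.signal import butter, sosfilt

FS = 51_200  # assumed sampling rate in Hz (not stated in this section)

def highpass_40hz(x, fs=FS, order=4, cutoff_hz=40.0):
    """Fourth-order Butterworth high-pass filter, applied causally."""
    # Second-order sections are numerically robust for low cut-off frequencies.
    sos = butter(order, cutoff_hz, btype="highpass", fs=fs, output="sos")
    return sosfilt(sos, x)

# Usage with synthetic tones: a 10 Hz rumble is strongly attenuated,
# while a 500 Hz tone passes almost unchanged.
t = np.arange(2 * FS) / FS
rumble = np.sin(2 * np.pi * 10 * t)
tone = np.sin(2 * np.pi * 500 * t)
rumble_out = highpass_40hz(rumble)
tone_out = highpass_40hz(tone)
```

The synthetic tones only demonstrate the filter behavior; they are not related to the recorded data.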

The objective of this work is to identify the subjects by their step sound. Therefore, the locations of single steps have to be determined in the time signal. The localization is performed with the find_peaks() method from the SciPy (www.scipy.org/scipylib, accessed on 1 December 2020) library in Python. The peak to find is the heelstrike transient, since it is usually higher than the mid-stance peak. Two additional parameters are given to the find_peaks() method to ensure correct identification. First, the distance parameter is set to the number of samples that correspond to 500 ms, which is the lower bound for the step distance observed in all measurements. Second, the parameter height is set to five times the root mean square (RMS) value of the entire signal. This minimum height leads to a stable identification of the heelstrike transients while neglecting disturbance peaks or weak steps. Starting from the position of a heelstrike transient, a frame of fixed length is placed around it to separate the step, see Figure 3 (top). The heelstrike is placed at the first quarter of the frame. The frame length corresponds to the mean step duration of 500 ms. The frame time signal is normalized to an RMS value of 1 to ensure constant signal energy.

The time signal is transformed into a spectrogram by means of a Fast Fourier Transform (FFT) with overlapping windows, see Figure 3 (mid). The window size is set to 4096 samples to achieve a frequency resolution of 12.5 Hz. Because the step sound peaks are rather short events with 5–10 ms duration, a time resolution of 2.5 ms is chosen for the spectrogram. This leads to a large overlap of the windows and, thus, a small hop size. The spectrogram is transformed to a mel-spectrogram by applying a mel filterbank [23]. The filterbank consists of 25 triangular filters within the bandwidth from 0 to 1 kHz, since the main frequency content is located here, see Figure 2. The center frequencies are evenly distributed in the mel frequency domain. A discrete cosine transform (DCT) is applied to the result after calculating the logarithm of the mel-spectrogram.
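The step localization and framing described above can be sketched as follows. The sampling rate `FS` of 51.2 kHz is an assumption, as are the function name `extract_step_frames`, the choice to search peaks in the raw signal rather than its magnitude, and the handling of steps that are cut off at the signal edges. The synthetic test signal is made up for illustration.

```python
import numpy as np
from scipy.signal import find_peaks

FS = 51_200            # assumed sampling rate in Hz
FRAME_LEN = FS // 2    # 500 ms frame, i.e. 25,600 samples at the assumed rate

def extract_step_frames(x, fs=FS, frame_len=FRAME_LEN):
    """Locate heelstrike transients and cut one RMS-normalized frame per step."""
    rms = np.sqrt(np.mean(x ** 2))
    # Peaks must be at least 500 ms apart and exceed five times the signal RMS.
    peaks, _ = find_peaks(x, distance=fs // 2, height=5 * rms)
    frames = []
    for p in peaks:
        start = p - frame_len // 4        # heelstrike sits at the first quarter
        stop = start + frame_len
        if start < 0 or stop > len(x):    # assumption: drop steps cut off at the edges
            continue
        frame = x[start:stop]
        frames.append(frame / np.sqrt(np.mean(frame ** 2)))  # RMS value of 1
    return peaks, np.array(frames)

# Synthetic example: weak background noise with three impulse-like "steps".
rng = np.random.default_rng(0)
x = 0.001 * rng.standard_normal(4 * FS)
for t0 in (1.0, 1.7, 2.4):
    x[round(t0 * FS)] += 1.0
peaks, frames = extract_step_frames(x)
```

Each row of `frames` then holds one step with the detected peak at sample `FRAME_LEN // 4`.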

As a result, each window in the frame is reduced to a vector of 25 so-called mel-frequency cepstral coefficients (MFCC). All 200 vectors of a frame are arranged in a matrix. This MFCC matrix is normalized to an interval of [0, 1]. Figure 3 (bottom) shows this matrix as a bitmap using a viridis colormap from Matplotlib (matplotlib.org, accessed on 1 December 2020).
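The chain from a framed step to the normalized MFCC matrix can be sketched with NumPy and SciPy. Several details are assumptions made for this illustration and are not given in the text: the sampling rate of 51.2 kHz (which yields the quoted 12.5 Hz resolution and a hop of 128 samples for 2.5 ms), the Hann window, zero padding of half a window at both ends to obtain exactly 200 windows, the use of the power spectrum, the common mel formula 2595·log10(1 + f/700), and min-max normalization over the whole matrix. The function names are hypothetical.

```python
import numpy as np
from scipy.fft import dct

FS = 51_200               # assumed sampling rate in Hz
N_FFT = 4096              # window size -> FS / N_FFT = 12.5 Hz resolution
HOP = round(0.0025 * FS)  # 2.5 ms time resolution -> 128 samples
FRAME_LEN = FS // 2       # 500 ms step frame

def mel_filterbank(n_filters, n_fft, fs, f_min, f_max):
    """Triangular filters with centers evenly spaced on the mel scale."""
    hz_to_mel = lambda f: 2595.0 * np.log10(1.0 + f / 700.0)
    mel_to_hz = lambda m: 700.0 * (10.0 ** (m / 2595.0) - 1.0)
    edges = mel_to_hz(np.linspace(hz_to_mel(f_min), hz_to_mel(f_max), n_filters + 2))
    freqs = np.fft.rfftfreq(n_fft, d=1.0 / fs)
    lower, center, upper = edges[:-2, None], edges[1:-1, None], edges[2:, None]
    rising = (freqs - lower) / (center - lower)
    falling = (upper - freqs) / (upper - center)
    return np.clip(np.minimum(rising, falling), 0.0, None)  # (n_filters, n_fft//2 + 1)

def mfcc_image(frame, n_frames=200, n_mels=25):
    """Return an (n_mels, n_frames) MFCC matrix scaled to [0, 1]."""
    padded = np.pad(frame, N_FFT // 2)                        # assumed zero padding
    idx = HOP * np.arange(n_frames)[:, None] + np.arange(N_FFT)[None, :]
    windows = padded[idx] * np.hanning(N_FFT)                 # assumed Hann window
    power = np.abs(np.fft.rfft(windows, axis=1)) ** 2         # spectrogram
    mel = power @ mel_filterbank(n_mels, N_FFT, FS, 0.0, 1000.0).T
    log_mel = np.log(mel + 1e-12)                             # small offset avoids log(0)
    coeffs = dct(log_mel, type=2, axis=1, norm="ortho")       # one MFCC vector per window
    coeffs = (coeffs - coeffs.min()) / (coeffs.max() - coeffs.min())
    return coeffs.T

# Usage with a random stand-in for a real step frame.
rng = np.random.default_rng(0)
image = mfcc_image(rng.standard_normal(FRAME_LEN))
```

With these settings the result has 25 rows and 200 columns, matching the matrix dimensions given above; the orientation of the matrix is a free choice in this sketch.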

Finally, 70 to 90 steps are identified in each measurement of Table 1. Each of these steps is transformed into an MFCC matrix and then exported as a bitmap without compression. It serves as input to the CNN described in the following section.

5. Results

The CNN designed in the preceding section is evaluated against the entire validation dataset, which consists of 288 steps. Each step is classified by the CNN and then checked for correctness. The results are summarized in the confusion matrix shown in Figure 6. The majority of the classifications are correct. Only a few false detections are located in the upper and lower triangles of the matrix. Additional metrics are calculated to quantify the quality of the results. For this purpose, the results are divided into true positive $TP$ (step is labelled correctly), false positive $FP$ (step is incorrectly labelled as belonging to class), and false negative $FN$ (step belongs to class, but it is labelled incorrectly) predictions for each class. Based on these values, three metrics, precision $P$, recall $R$, and $F_1$-score, which are widely used in machine learning, are calculated:

$$P = \frac{TP}{TP + FP}, \qquad R = \frac{TP}{TP + FN}, \qquad F_1 = \frac{2\,P\,R}{P + R}$$

Table 4 summarizes the metrics for each class. The precision and recall metrics range between 0.93 and 1.0, while the $F_1$-score is above 0.94 for all classes. The accuracy $A$, which is the ratio of correct predictions to all predictions, is 0.98 for this net. These results are excellent and, within this validation dataset, the CNN allows nearly perfect predictions. It is also noticeable that the classes C01 and C04, which contain the most steps, reach the highest $F_1$-score. When interpreting the metrics, it has to be considered that these results represent the upper bound of detection and cannot be reached in practical implementations, because the recordings are conducted in a semi-anechoic room without any background noise and echoes. The detection of the steps in the time signals is clear, apart from some time shifts where the mid-stance peak is detected instead of the heelstrike transient. This is discussed in more depth in the following section.
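The per-class metrics and the accuracy can be computed directly from a confusion matrix, as the following sketch shows. It uses the standard definitions of precision, recall, and F1-score. The convention that rows hold the true class and columns the predicted class is an assumption, and the example counts are made up; they are not the values of Figure 6 or Table 4.

```python
import numpy as np

def classification_metrics(cm):
    """Per-class precision, recall, F1-score and overall accuracy.

    cm[i, j] counts steps of true class i that were predicted as class j
    (assumed orientation).
    """
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)                 # correctly labelled steps per class
    fp = cm.sum(axis=0) - tp         # steps wrongly assigned to the class
    fn = cm.sum(axis=1) - tp         # steps of the class that were missed
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    accuracy = tp.sum() / cm.sum()
    return precision, recall, f1, accuracy

# Illustrative three-class matrix with made-up counts.
cm_example = [[48, 2, 0],
              [1, 45, 4],
              [0, 3, 47]]
precision, recall, f1, accuracy = classification_metrics(cm_example)
```

Note that a class without any predictions or without any samples would lead to a division by zero here; the sketch does not handle that case.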

Another topic shall be touched upon to conclude this section. The better the results of CNN are, the less understandable and interpretable they tend to be. CNN are a kind of black box and the question “…why they predict what they predict…” [25] always arises when the results have to be interpreted. In recent years, several approaches have been made to gain insight into CNN and visualize which region in an image is relevant for prediction. In this paper, the Gradient-weighted Class Activation Mapping (Grad-CAM) approach [25,26] is applied to the CNN above. The Grad-CAM method produces a heatmap that serves as an overlay to the original image. The heatmap highlights regions that lead to the prediction of the class. To create it, Grad-CAM uses the class-specific gradients flowing into the last convolutional layer of the CNN. No change or re-training of the CNN is necessary.
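The core of the Grad-CAM computation can be illustrated framework-independently with NumPy. The sketch assumes that the activations of the last convolutional layer and the gradients of the class score with respect to them have already been extracted from the network, which depends on the deep learning framework in use and is not shown. The function name `grad_cam` and the small example arrays are hypothetical, and the upsampling of the heatmap to the input image size is omitted.

```python
import numpy as np

def grad_cam(feature_maps, gradients):
    """Combine feature maps and class-score gradients into a heatmap.

    feature_maps, gradients: arrays of shape (K, H, W) for the K channels
    of the last convolutional layer.
    """
    # Channel weights: gradients averaged over the spatial dimensions.
    weights = gradients.mean(axis=(1, 2))
    # Weighted sum of the feature maps, followed by a ReLU.
    cam = np.maximum(np.tensordot(weights, feature_maps, axes=1), 0.0)
    if cam.max() > 0:
        cam = cam / cam.max()        # scale to [0, 1] for display
    return cam

# Toy example: channel 0 supports the class, channel 1 speaks against it.
maps = np.zeros((2, 3, 3))
maps[0, 0, 0] = 1.0
maps[1, 2, 2] = 1.0
grads = np.stack([np.ones((3, 3)), -np.ones((3, 3))])
heatmap = grad_cam(maps, grads)
```

In the toy example only the location activated by the supporting channel remains highlighted, since the ReLU suppresses negative contributions.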

A heatmap is calculated for each of the 288 MFCC images of the validation dataset. The objective is to compare the heatmaps with the regions that the designer considers important. Large differences would mean that the CNN “looks” everywhere but at the step event, which would be a sign of an improperly trained net. In Figure 7a, a selected MFCC image for each class is shown side-by-side with the corresponding heatmap. In all MFCC images, the location of the heelstrike transient is clearly identifiable in the first quarter, compare Figure 3 (bottom). A closer look also reveals the mid-stance peak. Looking at the heatmaps on the right-hand side, it becomes obvious that the highlighted regions map to the acoustic events in the MFCC images. Because this behavior holds for nearly all heatmaps of the validation dataset, it is assumed that the classification of the CNN is based on the step acoustics and that the net works as intended. The Grad-CAM method cannot prove safe operation of the CNN, but it is able to create confidence by providing insight into internal processes.

6. Challenges in Practical Applications

The CNN designed above is trained and validated with acoustic data recorded in a semi-anechoic environment. To roll out this design for practical applications, many boundary conditions have to be considered. Human gait, for example, is influenced by the footwear, the mood, injuries, etc., or even by extra weight that is carried. Furthermore, the environmental influences cannot be neglected. Long reverberation times in a corridor with a tile floor produce echoes and complicate classification, just as ambient noise does. Several subjects at the recording location produce a mixture of step sounds, which are usually paired with conversation. Unknown subjects may lead to incorrect or ambiguous identification results. Two practical challenges are addressed in the following sections. The objective is to provide an insight into the opportunities and limitations of person identification using CNN.

6.1. Different Footwear

Many factors influence the step sound of a walker. Apart from injuries and similar conditions, the footwear of the walker has the largest impact. The individual walking behavior is heavily “filtered” by the footwear. For practical implementations, the question arises as to whether a CNN trained on a subject with footwear A is able to identify the same subject with footwear B.

In the measurements for this paper, subjects 1 and 4 were recorded with different footwear, see Table 1. This makes it possible to address the question raised above in a different footwear test (DFT). For this purpose, the CNN is trained without M07, M08, M11, and M12, which represent the measurements of subjects 1 and 4 with a second set of footwear. Table 5 summarizes the mapping of classes and measurements for the training. All of the training parameters and settings of the CNN are kept fixed as in the preceding section. After 50 epochs, a maximum validation accuracy of 0.958 is achieved, which is comparable to that of the original CNN (0.976). Table 6 summarizes the validation metrics for the DFT. The validation dataset has a reduced size of 192 steps due to the missing measurements. The metrics only show small changes. The $F_1$-score is again over 0.94 for all classes.

Because the measurements left out of the training are not subdivided into training and validation data, all 323 steps of M07, M08, M11, and M12 are given to the CNN for classification. The test results are summarized in the confusion matrix of Figure 8. The differences from the confusion matrix in Figure 6 are obvious. Subject 1 is misclassified in 137 of 170 cases. The vast majority of these steps are classified as subject 3, which suggests a strong similarity between C03 and M07/M08. In contrast, subject 4 is correctly classified in 128 of 153 cases. Table 7 shows the corresponding test metrics. As expected, the recall value drops because of the misclassification of subject 1, which leads to a poor $F_1$-score. The metrics for subject 4 are sufficient for classification.

From this test it can be concluded that the footwear has a strong impact on the classification results. The results for unknown footwear have to be considered with caution. As presented, it may work properly (e.g., subject 4), but it could also be completely misleading (e.g., for subject 1).

6.2. Influence of Noise

Footsteps are rather quiet sound events when compared to urban or indoor sound scenarios. Except for some high heel shoes, the footstep sounds are often masked by environmental noise and cannot be easily identified in practical measurement setups. Calculations with extra noise are conducted in this section to explore the potential of the CNN footstep detection.

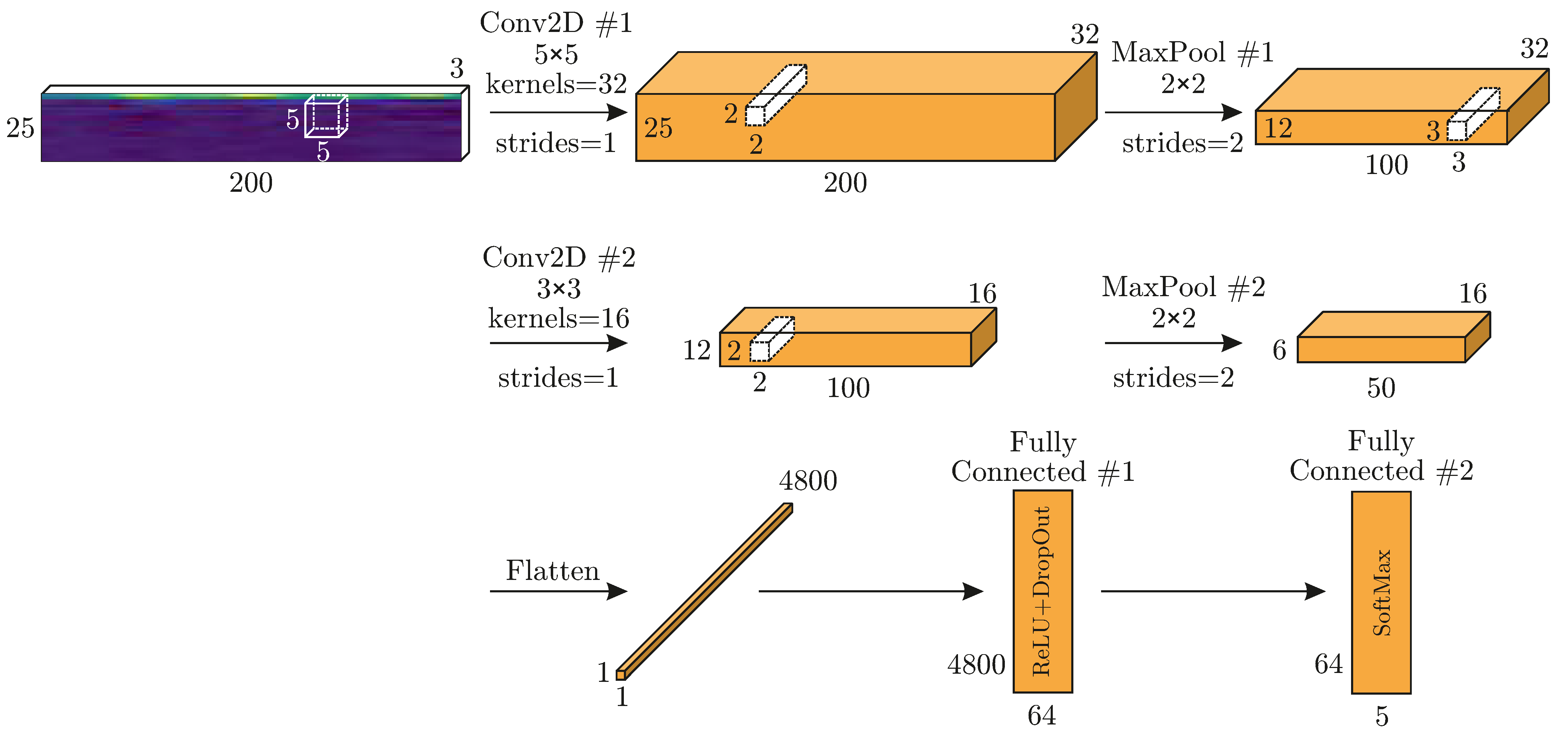

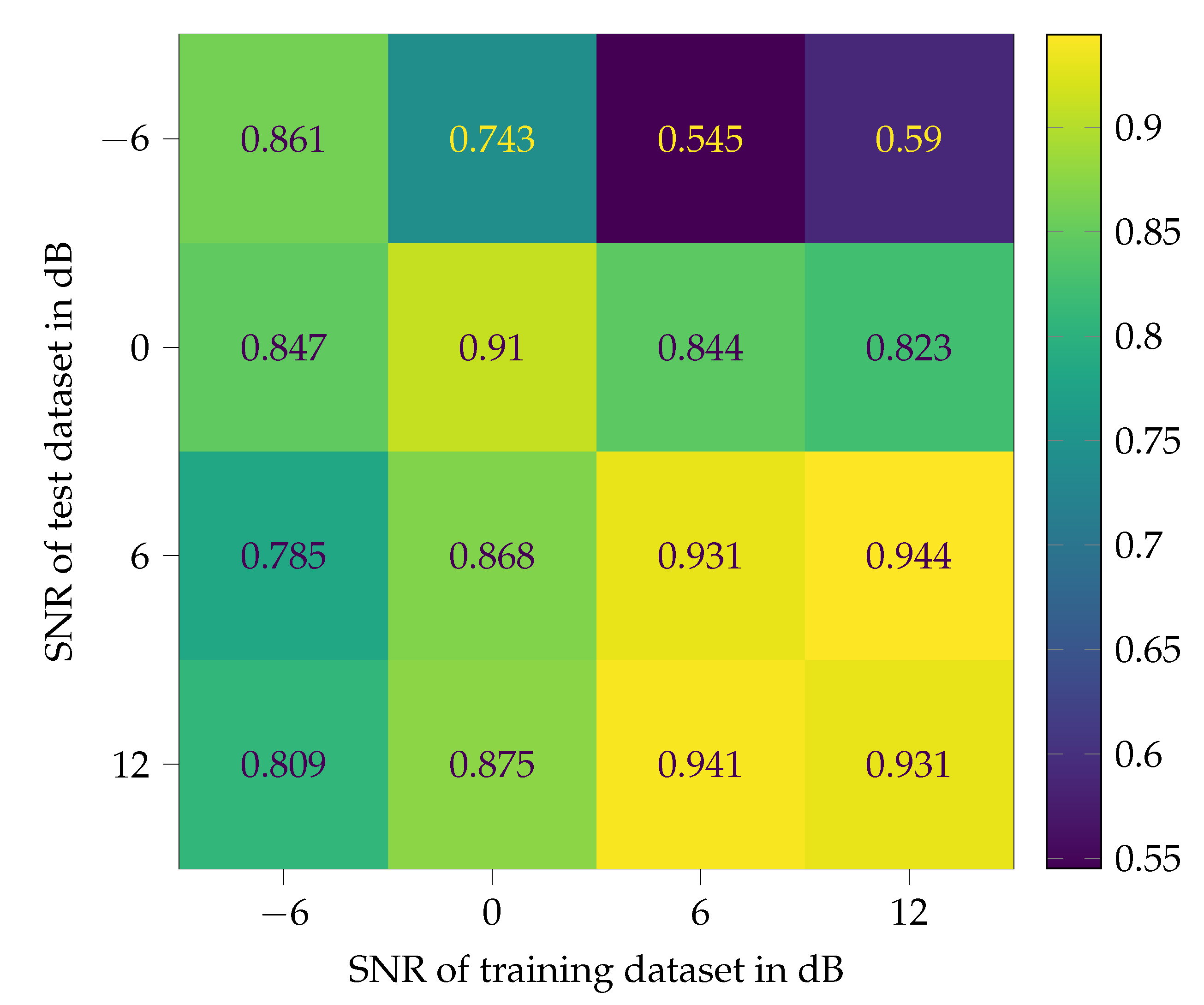

To explore the influence of noise in two ways, it is added to the training/validation data as well as to the test data. Four different signal-to-noise ratios (SNR) of −6, 0, 6, and 12 dB are defined for this study. For this purpose, the original footstep sounds measured in M01–M14 are treated as “pure” and noise-free signals, so that the SNR only refers to the additional noise. In common measurement setups, the SNR is much higher. These low values are selected to create representative conditions of environmental noise scenarios.

Figure 9 gives an impression of the influence of different SNR on a signal. With an SNR of 12 dB, the footstep is clearly recognizable by eye, while, with −6 dB SNR, it is not. Nevertheless, the ear is able to detect the step in both time signals when played through a loudspeaker system. The CNN of Figure 4 is trained and tested with all SNR, which leads to a matrix of 16 tests. Pink or $1/f$ noise is chosen as additional noise, since its spectrum matches very well with that of the footstep sounds, compare Figure 2. Before the noise is added, it is high-pass filtered, like the original footstep sounds, see Section 3. A dataset for each SNR is created from all measurements. These datasets are split again into training (75%) and validation (25%) data. For each SNR, a CNN is trained with the corresponding training dataset. The validation data of one SNR serve as test data for the CNN trained with the other SNR. The results are shown in confusion matrix style in Figure 10. The test accuracy is chosen for comparison of the different CNN. The validation accuracies of the CNN during training appear on the diagonal.
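Mixing a signal with pink noise at a prescribed SNR can be sketched as follows. This is an illustration under assumptions: the pink noise is approximated by spectrally shaping white noise, which is one of several common methods and not necessarily the one used for this study, the function names are hypothetical, and the high-pass filtering of the noise mentioned above is omitted for brevity. The SNR is taken as the ratio of mean signal power to mean noise power.

```python
import numpy as np

def pink_noise(n, rng):
    """Approximate 1/f noise by shaping the spectrum of white noise."""
    spectrum = np.fft.rfft(rng.standard_normal(n))
    freqs = np.fft.rfftfreq(n)
    freqs[0] = freqs[1]              # avoid division by zero at DC
    spectrum /= np.sqrt(freqs)       # power ~ 1/f  <=>  amplitude ~ 1/sqrt(f)
    return np.fft.irfft(spectrum, n)

def add_noise(signal, noise, snr_db):
    """Scale the noise so that the power ratio signal/noise equals snr_db."""
    p_signal = np.mean(signal ** 2)
    p_noise = np.mean(noise ** 2)
    gain = np.sqrt(p_signal / (p_noise * 10.0 ** (snr_db / 10.0)))
    return signal + gain * noise

# Usage with a synthetic tone standing in for a recorded footstep signal.
rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * 200 * np.arange(51_200) / 51_200)
noise = pink_noise(len(clean), rng)
noisy = {snr: add_noise(clean, noise, snr) for snr in (-6, 0, 6, 12)}
```

Scaling the noise instead of the signal keeps the level of the original recording unchanged across all four datasets.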

It becomes obvious that training and testing with an SNR of 6 dB and 12 dB lead to excellent classification results. The performance of these two CNN drops at an SNR of 0 dB and collapses at −6 dB. The CNN trained with 0 dB SNR raises the test accuracy at −6 dB SNR to 0.743, while its performance at 6 dB and 12 dB decreases significantly. Surprisingly good results are achieved with the CNN that was trained with −6 dB SNR data. Although its validation accuracy is only 0.861, test accuracies of around 0.8 are reached for all other datasets. It must be considered that the dataset with −6 dB SNR is really noisy, even for human ears.

The results of the test above can be summarized as follows. If quiet and clear measurements are expected in the application phase of a CNN, training with additional noise at an SNR of 12 dB or more is sufficient and leads to stable classification results. When a significant presence of noise is expected, an SNR of 0 dB is recommended for the training data. An SNR of −6 dB should not be aimed at for practical applications. If it occurs, it is not acceptable and the entire setup should be redesigned. This case is only used in this paper to demonstrate the limits of feasibility.

The results are comparable to many problems in control applications. The robustness and performance are two opposing objectives that cannot be simultaneously maximized.

7. Conclusions & Outlook

The approach presented in this article is able to identify persons based on their step sound. It shows excellent performance with an accuracy of 0.98 and a minimum $F_1$-score of 0.94 for all five classes. Only two convolutional and two fully connected layers with 314 k trainable parameters were needed to achieve these results. Furthermore, the confidence in the method was strengthened by the application of the Grad-CAM method. The depicted heatmaps revealed that the designed CNN uses areas of the MFCC bitmaps that are closely related to the step sound. Although the experiments were conducted in a laboratory environment, the results indicate that this method is suitable for real-world applications. Two challenges that may occur in practical applications, noise and different footwear, were addressed. Experimental data with different SNR were used for training and testing. Considering the low SNR values, the CNN showed astonishing performance. The influence of different footwear on the classification results showed the limitations of the CNN. One subject is identified with different shoes with an $F_1$-score of 0.90, which is nearly perfect. The identification of the other subject failed, with an $F_1$-score of 0.31. It can be concluded that, for correct classification, the CNN needs training samples from as many different types of footwear as possible. Finally, it could be shown that image recognition CNN are able to solve problems in classifying complex audio signals.

Future work will transfer these results to comparable audio classification tasks. This paper is a pre-study for the further development of recognition applications in aerospace and traffic research.