Prompt Optimization with Two Gradients for Classification in Large Language Models

Abstract

1. Introduction

1.1. Literature Review

1.1.1. Survey Paper

1.1.2. Foundation Inspiration

1.1.3. Related Work

1.2. Framework with Two Gradients

1.3. Legal LLM

1.4. Research Contributions

2. Methodology

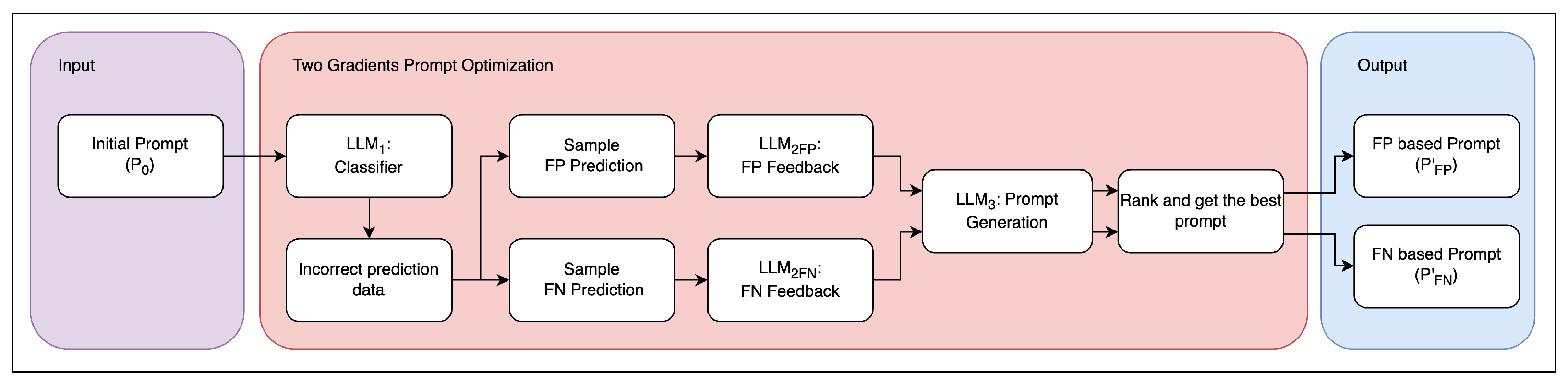

2.1. Hard Prompt Tuning in Textual Gradient Descent

2.2. Two Distinct Gradients

| Algorithm 1 Two gradients in the PO2G framework. |
|---|
| Require: : Initial prompt, : Training dataset (text, label), : LLM to classify, : LLM to generate FP feedback, : LLM to generate FN feedback, : LLM to generate new prompt |
| Ensure: |
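The inputs listed for Algorithm 1 suggest one optimization step of roughly the following shape. This is a minimal sketch under stated assumptions, not the authors' implementation: the function name `two_gradients`, the four callables, and the toy stand-ins are hypothetical, and the real framework would call LLM APIs where the stand-ins use string rules.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (text, label), where label is "Yes" or "No"


def two_gradients(
    prompt: str,
    data: List[Example],
    classify: Callable[[str, str], str],
    fp_feedback: Callable[[str, List[Example]], str],
    fn_feedback: Callable[[str, List[Example]], str],
    rewrite: Callable[[str, str], str],
) -> List[Tuple[str, str]]:
    """Return up to two candidate prompts, each tagged "FP" or "FN"."""
    false_pos: List[Example] = []
    false_neg: List[Example] = []
    for text, label in data:
        pred = classify(prompt, text)
        if pred == "Yes" and label == "No":
            false_pos.append((text, label))
        elif pred == "No" and label == "Yes":
            false_neg.append((text, label))

    candidates: List[Tuple[str, str]] = []
    # One textual "gradient" per error type, each turned into its own new prompt.
    if false_pos:
        candidates.append((rewrite(prompt, fp_feedback(prompt, false_pos)), "FP"))
    if false_neg:
        candidates.append((rewrite(prompt, fn_feedback(prompt, false_neg)), "FN"))
    return candidates


# Toy stand-ins for the four LLM calls (hypothetical; no API is involved).
def toy_classify(prompt: str, text: str) -> str:
    return "Yes" if "must" in text else "No"


def toy_fp_feedback(prompt: str, errors: List[Example]) -> str:
    return f"too broad on {len(errors)} example(s)"


def toy_fn_feedback(prompt: str, errors: List[Example]) -> str:
    return f"too narrow on {len(errors)} example(s)"


def toy_rewrite(prompt: str, feedback: str) -> str:
    return f"{prompt} [revised: {feedback}]"


toy_data = [
    ("Firms must report annually.", "Yes"),
    ("The word must is defined in the glossary.", "No"),  # false positive
    ("Firms are required to keep records.", "Yes"),       # false negative
]
candidates = two_gradients(
    "Is this text an obligation?", toy_data,
    toy_classify, toy_fp_feedback, toy_fn_feedback, toy_rewrite,
)
```

Keeping the FP and FN error sets apart means each feedback call sees only one kind of mistake, which is the separation the framework's name refers to.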

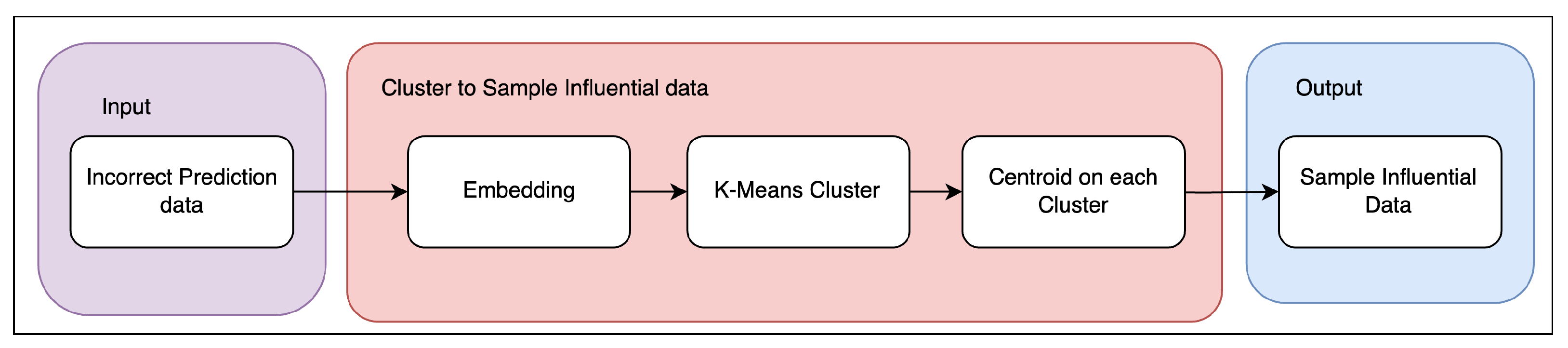

2.3. Loss Signal Selection: Cluster Sampling

| Algorithm 2 Clustering to find an influential sample. |
|---|
| Require: D: Input dataset (FN or FP data), num_clusters: Number of clusters to form, embed: Text embedding function, K-Means: K-means clustering function |
| Ensure: representative_data: Set of data points representing each cluster |
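The inputs and output of Algorithm 2 can be illustrated with a small, dependency-free sketch. Several points are assumptions rather than details from the paper: the representative of each cluster is taken to be the point nearest its centroid, k-means is a plain Lloyd iteration with a deterministic start, and `toy_vectors` stands in for a real text embedding function.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def _dist(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def _mean(points: List[Vector]) -> List[float]:
    return [sum(col) / len(points) for col in zip(*points)]


def representative_data(
    texts: List[str],
    num_clusters: int,
    embed: Callable[[str], Vector],
    iterations: int = 20,
) -> List[str]:
    """Pick one text per cluster: the one closest to its centroid (assumption)."""
    vectors = [embed(t) for t in texts]
    k = min(num_clusters, len(vectors))
    if k == 0:
        return []
    centroids = [list(v) for v in vectors[:k]]  # deterministic initialization
    assignment = [0] * len(vectors)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        assignment = [
            min(range(k), key=lambda c: _dist(v, centroids[c])) for v in vectors
        ]
        # Update step: recompute centroids; an empty cluster keeps its old one.
        for c in range(k):
            members = [v for v, a in zip(vectors, assignment) if a == c]
            if members:
                centroids[c] = _mean(members)
    chosen: List[str] = []
    for c in range(k):
        members = [i for i, a in enumerate(assignment) if a == c]
        if members:
            best = min(members, key=lambda i: _dist(vectors[i], centroids[c]))
            chosen.append(texts[best])
    return chosen


# Hypothetical 2-D "embeddings" for six false-positive texts.
toy_vectors = {
    "fp-a": [0.0, 0.0], "fp-b": [1.0, 0.0], "fp-c": [2.0, 0.0],
    "fp-d": [10.0, 10.0], "fp-e": [10.0, 11.0], "fp-f": [10.0, 12.0],
}
picked = representative_data(list(toy_vectors), 2, toy_vectors.__getitem__)
```

In practice a library k-means and a sentence-embedding model would replace the hand-rolled pieces; the sketch only shows how one representative per cluster is extracted from an FP or FN error set.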

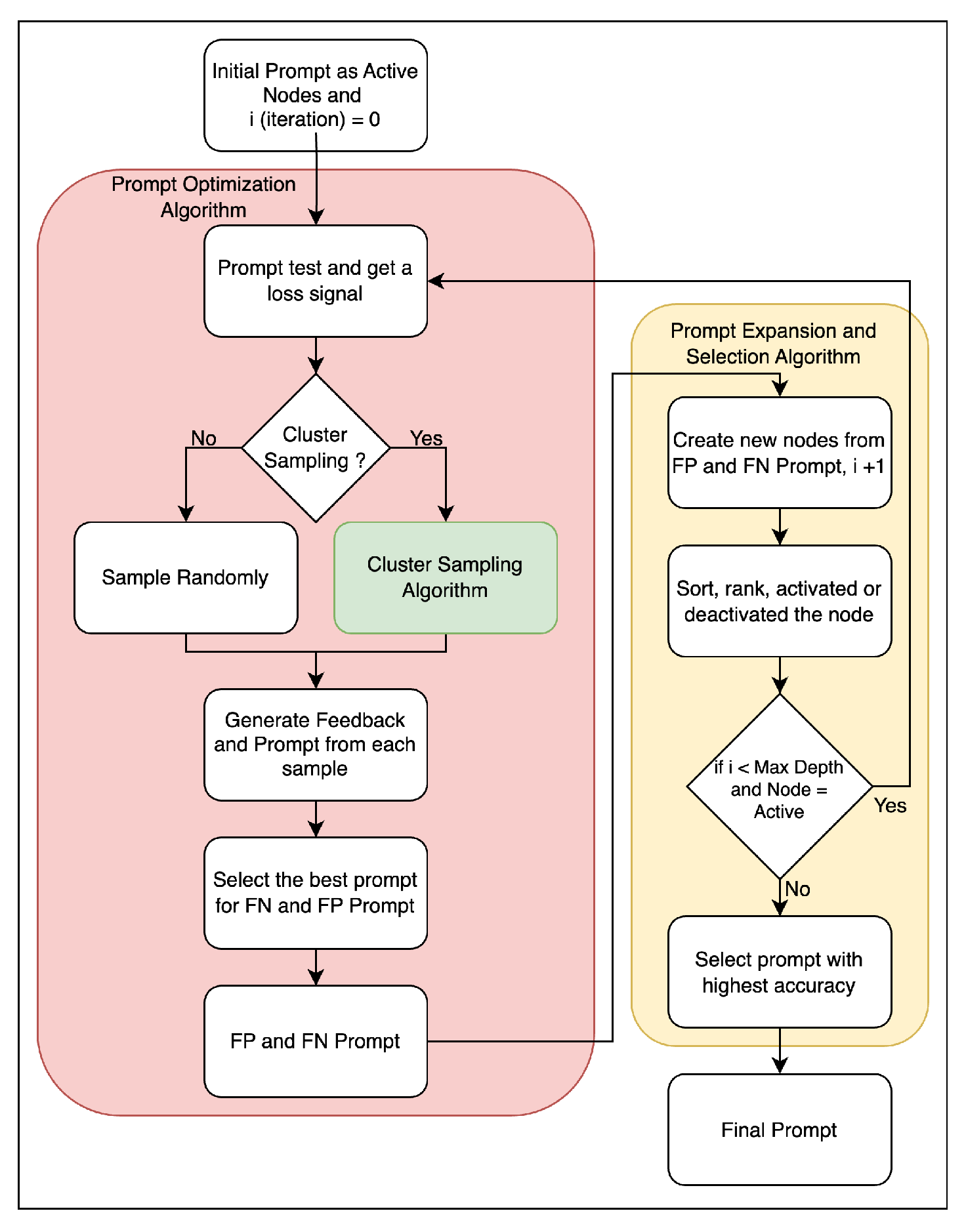

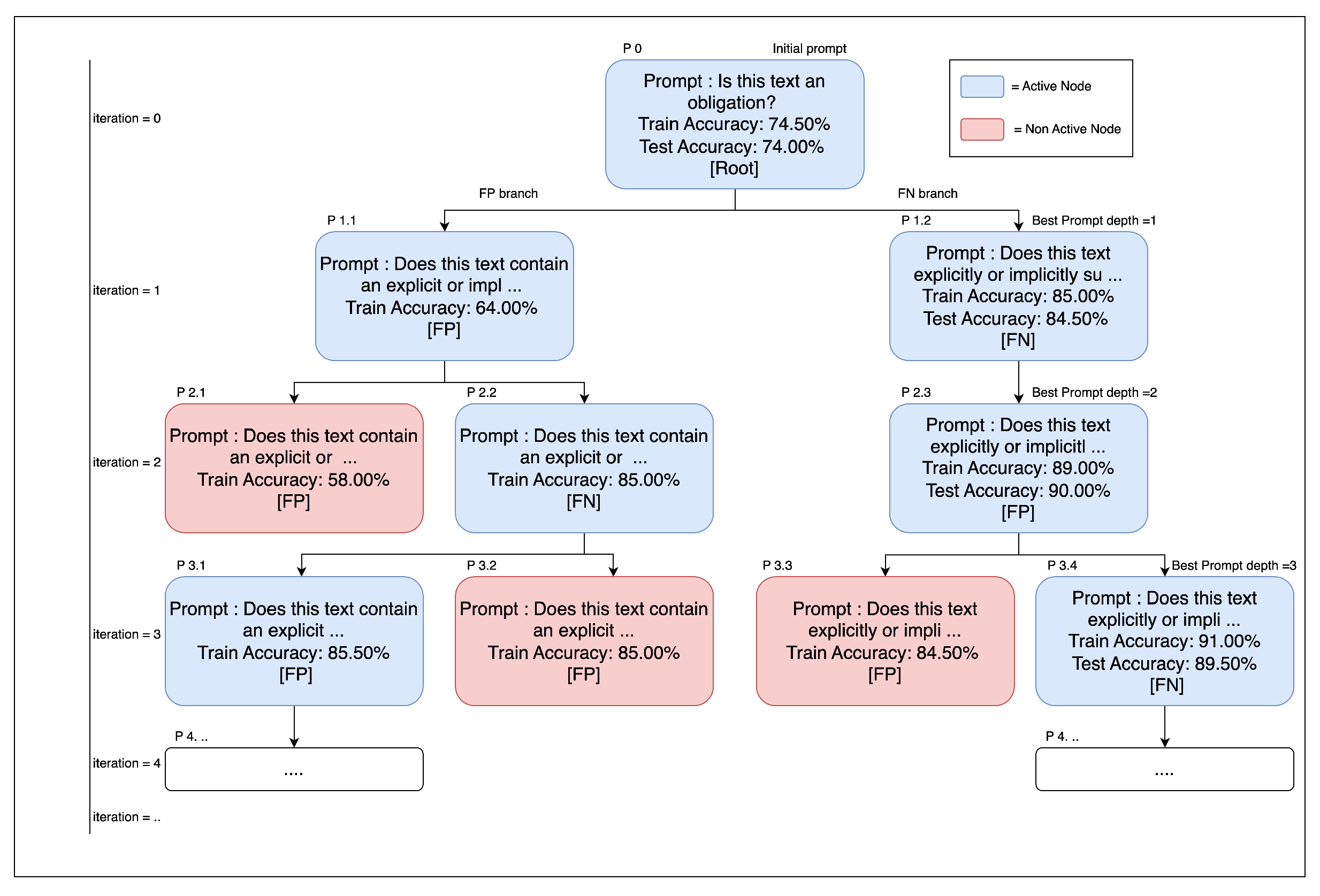

2.4. Prompt Expansion and Selection

| Algorithm 3 Prompt expansion and selection. |
|---|
| Require: : Initial prompt, : Training dataset (text, label), max_depth: Maximum iteration of expansion, max_nodes_per_level: Maximum expanded nodes per level (default = 2), : Two Gradients Function. |
| Ensure: Optimized prompt |
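A level-by-level search consistent with the parameters of Algorithm 3 could look like the sketch below. It assumes that candidates are ranked by training accuracy, that only the top `max_nodes_per_level` candidates of each level are expanded further, and that the best-scoring prompt seen so far is returned. The names `expand_and_select`, `expand`, and `score` are hypothetical, and the toy functions replace the two-gradients step and the accuracy evaluation.

```python
from typing import Callable, List, Tuple

Scored = Tuple[str, float]  # (prompt, score)


def expand_and_select(
    initial_prompt: str,
    expand: Callable[[str], List[str]],
    score: Callable[[str], float],
    max_depth: int,
    max_nodes_per_level: int = 2,
) -> Scored:
    """Expand prompts level by level and return the best-scoring one found."""
    best: Scored = (initial_prompt, score(initial_prompt))
    frontier: List[Scored] = [best]
    for _ in range(max_depth):
        children: List[Scored] = [
            (child, score(child))
            for parent, _parent_score in frontier
            for child in expand(parent)
        ]
        if not children:
            break  # nothing left to expand (e.g., no remaining errors)
        children.sort(key=lambda item: item[1], reverse=True)
        best = max([best] + children, key=lambda item: item[1])
        # Only the top candidates of this level are expanded at the next level.
        frontier = children[:max_nodes_per_level]
    return best


# Toy stand-ins: each expansion yields one FP-driven and one FN-driven child.
def toy_expand(prompt: str) -> List[str]:
    return [prompt + "+FP", prompt + "+FN"]


def toy_score(prompt: str) -> float:
    return 0.5 + 0.1 * prompt.count("+FN") - 0.05 * prompt.count("+FP")


best_prompt, best_score = expand_and_select("p", toy_expand, toy_score, max_depth=2)
```

Capping the frontier keeps the number of scored candidates per level bounded, which is what limits API calls as depth grows.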

2.5. Selecting the Final Prompt

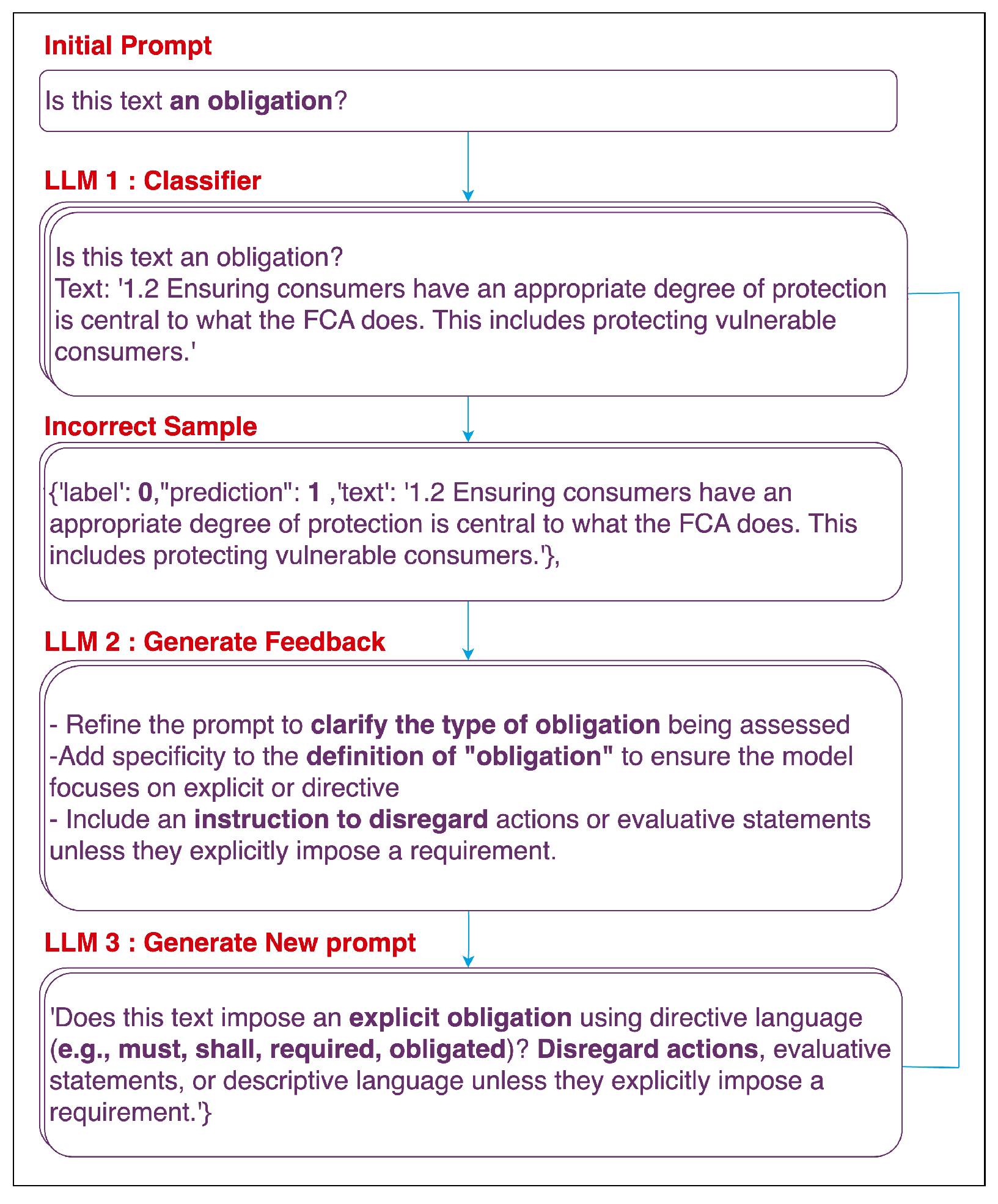

2.6. PO2G Framework Illustration

2.7. Comparing the PO2G Framework with ProTeGi

3. Experimental Setup

3.1. Tasks and Data

- Tasks used in ProTeGi research [13]:

- Publicly available data (Non-Domain-Specific Tasks):

- Legal document data (Domain-Specific Tasks):

  - Italian FTT documents (150 samples):

    - Tax Product

    - Tax Transaction

    - Tax Exemption

    - Tax Calculation

    - Tax Subject

    - Tax Process

  - FCA Regulation Document:

    - Obligation Extraction

3.2. Language Models and API Configuration

3.3. Prompt Initialization

3.4. Systems Compared

- ProTeGi (baseline): We reimplement ProTeGi [13] with identical algorithmic settings (e.g., beam size ) under GPT-4o Mini.

- PO2G+C (proposed with clustering): PO2G uses two loss signals, one from false positives (FP) and one from false negatives (FN), to propose and score edits during each iteration. We cluster the FP and FN error sets separately (default clusters per side) and select the most influential instances within each cluster to form the gradients. We then expand each gradient into candidate edits (max expansion ) and score them.

- PO2G (no clustering): This is identical to PO2G+C but without clustering. FP and FN examples are sampled randomly (default random samples per side), expanded (max expansion ), and scored.

3.5. Evaluation Organization

- Non-domain performance: Accuracy and cumulative API calls across nine tasks (Section 1.1); robustness checks on three tasks (LIAR, ArSarcasm, and Clickbait) with five independent runs each, reporting SE and a pairwise t-test.

- Legal (domain) behavioral analysis: Accuracy, API calls, and positive-class precision/recall/F1 across seven tasks, with the training set also used as the test set (train = test); robustness on Obligation with five runs, reporting SE and a pairwise t-test.

- Ablations: Clustering (PO2G+C vs. PO2G), empty vs. initial prompt, and LLM choice, compared mainly on accuracy and API calls.

3.6. Iteration Budget, Metrics, and Statistics

- Accuracy and cumulative API calls per iteration.

- Positive-class precision/recall/F1 for the imbalanced legal data.

- Robustness: five independent runs for selected tasks; we report the mean and standard error (SE = SD/√n, with n = 5 runs).

- Pairwise t-test: compares the frameworks’ accuracy under the conditions described in the results and discussion. Exact p-values are reported.
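The robustness statistics can be reproduced with the standard library alone. The sketch below takes the iteration-3 accuracies of the first five-run table in this document and computes the standard error from the sample standard deviation, plus the paired t statistic on the per-run differences. The helper names are illustrative, and the assumption that the reported test is a paired, two-sided t-test is ours.

```python
import math
from statistics import mean, stdev

# Iteration-3 accuracies over five runs (first five-run table in this document).
protegi = [0.5900, 0.5900, 0.6000, 0.5900, 0.5800]
po2g_c = [0.6250, 0.6250, 0.6300, 0.6050, 0.5800]


def standard_error(values):
    """SE = sample standard deviation / sqrt(n)."""
    return stdev(values) / math.sqrt(len(values))


def paired_t_statistic(a, b):
    """t statistic of the per-run differences b - a, with n - 1 degrees of freedom."""
    diffs = [y - x for x, y in zip(a, b)]
    return mean(diffs) / standard_error(diffs)


se_protegi = standard_error(protegi)           # rounds to 0.0032
se_po2g_c = standard_error(po2g_c)             # rounds to 0.0093
t_stat = paired_t_statistic(protegi, po2g_c)   # about 3.37 with 4 degrees of freedom
```

Both standard errors match the values reported in that table. Converting the t statistic into a p-value requires the t-distribution CDF, for which a statistics package such as SciPy (`scipy.stats.ttest_rel`) is one option.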

4. Results and Discussion

4.1. Main Performance on Non-Domain Tasks

4.1.1. Overview

Initial Prompt (Iteration 0)

Iteration 3

Iteration 6

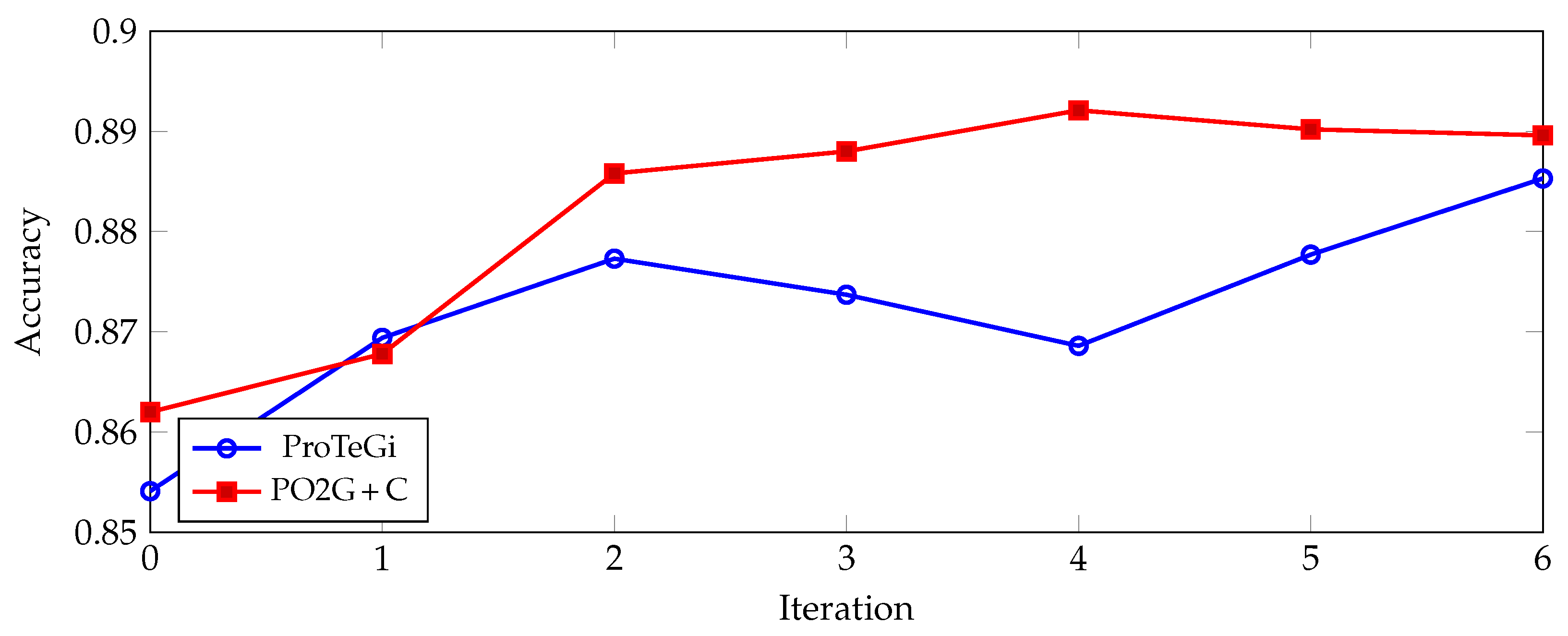

4.1.2. Accuracy

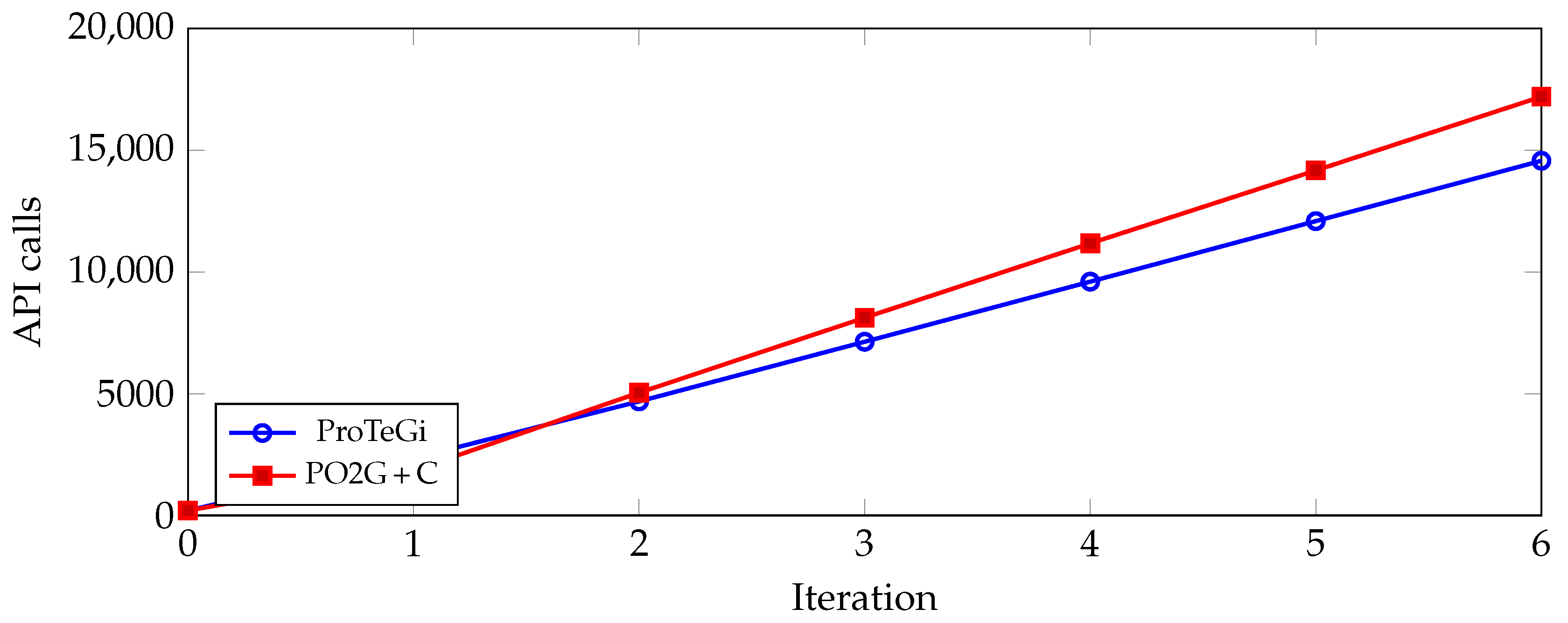

4.1.3. API Call Efficiency and Trade-Off

4.1.4. Statistical Significance on Three Public Datasets (5 runs)

4.2. Legal Data Behavioral Analysis

4.2.1. Overview

Initial Prompt (Iteration 0)

Iteration 3

Iteration 6

4.2.2. Accuracy

4.2.3. API Call Efficiency and Trade-Off

4.2.4. Precision, Recall, and F1 Score

4.2.5. Statistical Significance

4.3. Ablation Study

4.3.1. Clustering

4.3.2. Empty Prompt

4.3.3. LLM Comparison

5. Conclusions

- Clustering generally reduces long-run API calls with minimal accuracy change on non-domain data, and it improves accuracy and reduces cost on legal tasks; we hypothesize that larger training pools would yield larger gains, which we leave for future work.

- Initial prompt quality matters: a good seed can accelerate optimization, whereas a suboptimal seed can mislead the search; nevertheless, PO2G can generate a prompt from an empty prompt, albeit typically with more iterations.

- LLM choice matters: using GPT-4o for feedback/prompt generation produces longer, more detailed prompts and mixed accuracy changes when the classifier remains GPT-4o Mini, suggesting a mismatch effect; fully matched upgrades (generator and classifier) remain to be assessed in future work.

6. Limitations

- Legal domain

- LLM scope

- Data size

- Cost measurement

- Statistics and robustness

- Classification task

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LLM | Large Language Model |
| PO2G | Prompt Optimization with Two Gradients |
| PO2G+C | Prompt Optimization with Two Gradients with Clustering |
| NLP | Natural Language Processing |
| GPT | Generative Pre-trained Transformer |
| FP | False Positive |
| FN | False Negative |
| ProTeGi | Prompt Optimization with Textual Gradients |
| SE | Standard Error |
| CLAPS | Clustering and Pruning for Efficient Black-box Prompt Search |
| PACE | Prompt with Actor–Critic Editing |
| AMPO | Automatic Multi-Branched Prompt Optimization |
| STRAGO | Strategic-Guided Optimization |
| APO-CF | Automatic Prompt Optimization via Confusion Matrix Feedback |
| SCULPT | Systematic Tuning of Long Prompts |
| GREATER | Gradients over Reasoning Makes Smaller Language Models Strong Prompt Optimizers |
| LPO | Local Prompt Optimization |
| PROPEL | PRompt OPtimization with Expert priors for LLMs |
| p | p-Value |
| Δ | Delta |

Appendix A

Appendix A.1. Prompt in LLM

| LLM | Prompt |
|---|---|
| Classification | #Task {prompt} #Output format Answer Yes or No as the label # Prediction Text: {text} Classification: |
| FP feedback | The prompt is a modified version of the ProTeGi prompt [13]. It was built to generate false-positive feedback and optimized for a larger context window. |
| FN feedback | The prompt is a modified version of the ProTeGi prompt [13]. It was built to generate false-negative feedback and optimized for a larger context window. |
| Prompt generation | The prompt was built to generate a new prompt by incorporating the feedback generated by the FP and FN feedback LLMs. |
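As an illustration of how the classification template above might be filled and its answer read back, consider the following sketch. The line breaks inside `CLASSIFY_TEMPLATE` are an assumption (the extracted table flattens them), and `build_classification_prompt` and `parse_label` are hypothetical helpers, not part of the paper.

```python
CLASSIFY_TEMPLATE = (
    "#Task\n{prompt}\n"
    "#Output format\nAnswer Yes or No as the label\n"
    "# Prediction\nText: {text}\nClassification:"
)


def build_classification_prompt(prompt: str, text: str) -> str:
    """Insert the task prompt and the input text into the template."""
    return CLASSIFY_TEMPLATE.format(prompt=prompt, text=text)


def parse_label(completion: str) -> str:
    """Map a raw completion to "Yes" or "No"; anything else counts as "No" (assumption)."""
    words = completion.strip().split()
    first = words[0].strip(".,:;!").lower() if words else ""
    return "Yes" if first == "yes" else "No"


filled = build_classification_prompt(
    "Is this text an obligation?", "Firms must report annually."
)
```

Only the `{prompt}` slot changes during optimization; the output-format and prediction parts stay fixed, so candidate prompts remain comparable.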

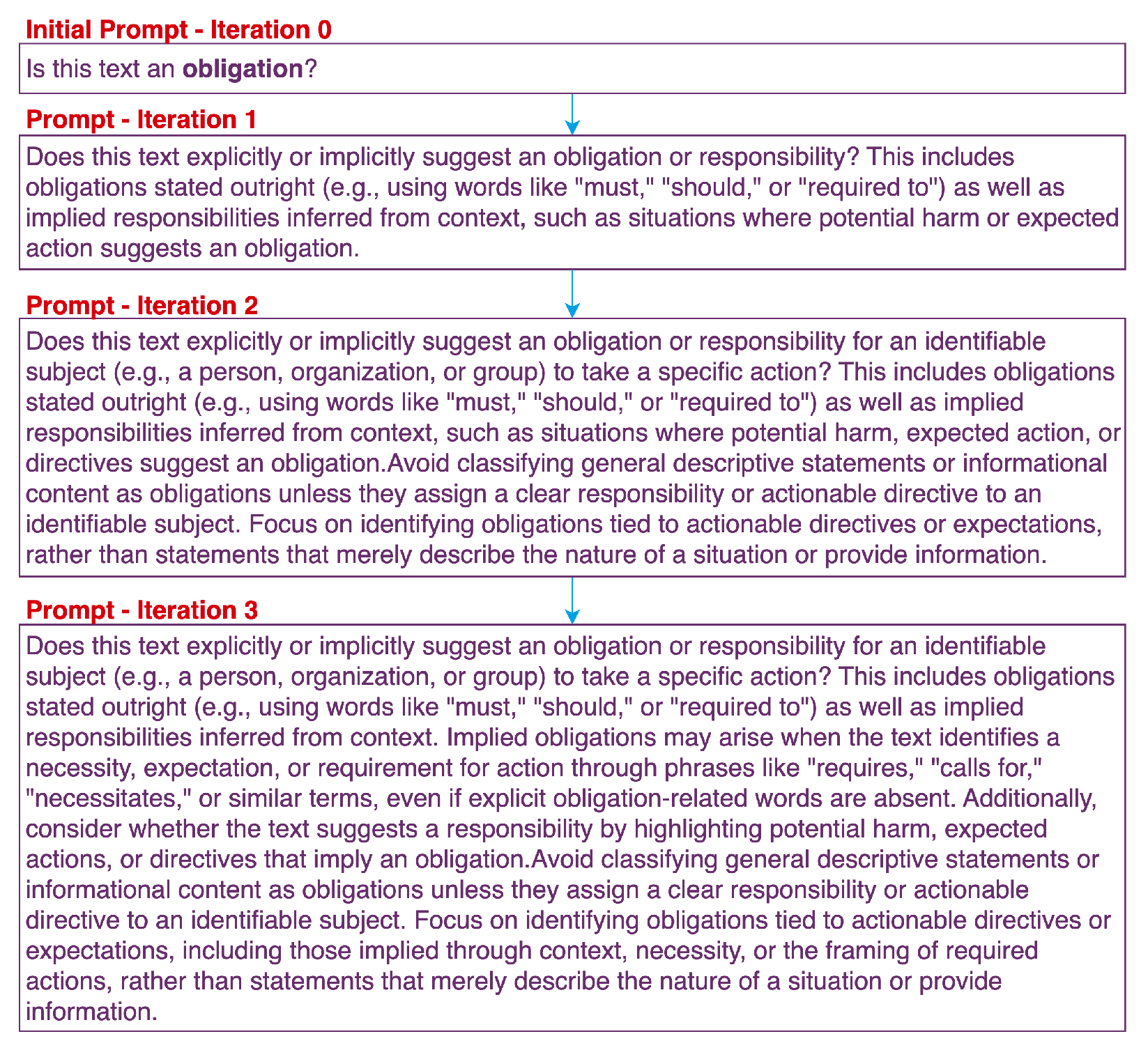

Appendix A.2. List Obligation Prompt from Prompt Expansion Sample

| Prompt ID | Prompt Text |

|---|---|

| 0 | Does the following text contain an obligation? (Train Accuracy: 74.50%) (Test Accuracy: 74.00%) [Root] |

| 1.1 | Does this text contain an explicit or implied reference to obligations, such as legal, regulatory, or procedural responsibilities? Consider both direct language (e.g., “must,” “required,” “obligations”) and indirect references (e.g., discussions of responsibilities, standards, or guidance relevant to obligations). (Train Accuracy: 64.00%) [FP] |

| 1.2 | Does this text explicitly or implicitly suggest an obligation or responsibility? This includes obligations stated outright (e.g., using words like “must,” “should,” or “required to”) as well as implied responsibilities inferred from context, such as situations where potential harm or expected action suggests an obligation. (Train Accuracy: 82.50%) (Test Accuracy: 82.50%) [FN] |

| 2.1 | Does this text contain an explicit or implied reference to *actionable* obligations, such as legal, regulatory, or procedural responsibilities? Focus on identifying texts that impose, discuss, or describe specific responsibilities that need to be fulfilled. Consider both direct language (e.g., “must,” “required,” “obligations”) and indirect references (e.g., discussions of standards, guidance, or responsibilities relevant to actionable obligations). Exclude texts that merely mention the existence of obligations without describing or imposing specific actionable responsibilities. (Train Accuracy: 58.50%) [FP] |

| 2.2 | Does this text contain an explicit or implied reference to obligations, such as legal, regulatory, or procedural responsibilities? Consider both: - **Direct language** that clearly indicates obligations (e.g., “must,” “required,” “obligations”). - **Indirect references** that imply expectations, responsibilities, or strong recommendations, such as guidance, suggestions, or calls to action (e.g., “should,” “expected to,” “encouraged to”). When analyzing the text, evaluate whether the context suggests an expectation of action that aligns with obligations, even if the language is not explicitly directive. (Train Accuracy: 85.00%) [FN] |

| 2.3 | Does this text explicitly or implicitly suggest an obligation or responsibility for an identifiable subject (e.g., a person, organization, or group) to take a specific action? This includes obligations stated outright (e.g., using words like “must,” “should,” or “required to”) as well as implied responsibilities inferred from context, such as situations where potential harm, expected action, or directives suggest an obligation.Avoid classifying general descriptive statements or informational content as obligations unless they assign a clear responsibility or actionable directive to an identifiable subject. Focus on identifying obligations tied to actionable directives or expectations, rather than statements that merely describe the nature of a situation or provide information. (Train Accuracy: 89.00%) (Test Accuracy: 90.00%) [FP] |

| 3.1 | Does this text contain an explicit or implied reference to obligations, such as legal, regulatory, or procedural responsibilities? When analyzing the text, consider the following: 1. **Direct Language**: Look for clear and explicit indicators of obligations, such as words like “must,” “required,” “obligations,” or other directive language that imposes a necessary action. 2. **Implied References**: Evaluate whether the text contains indirect suggestions of expectations, responsibilities, or strong recommendations using terms like “should,” “expected to,” “encouraged to,” or similar phrasing. Implied obligations often suggest an expectation of action but may not explicitly command it. 3. **Context of Action**: Assess whether the text actively directs or implies that the reader or audience must take action to fulfill an obligation. - **Instructive Context**: Texts that guide, recommend, or imply that a specific action should or must be taken are likely referencing obligations. - **Informational Context**: Exclude texts that solely describe or explain existing legal, regulatory, or procedural frameworks without suggesting or implying that the reader must take action. **Key Distinction**: A reference to legal, regulatory, or procedural terms (e.g., “Article 6 of the GDPR”) does not necessarily constitute an obligation unless the text ties it to an expectation or directive for the reader to act. **Examples**: - **Implied Obligation**: “Organizations are expected to demonstrate compliance with GDPR requirements.” - **Neutral Description**: “The GDPR outlines requirements for data processing under Article 6.” **Final Evaluation**: Determine whether the text is intended to inform the reader about obligations (neutral description) or actively direct them to fulfill an obligation (instructive context). (Train Accuracy: 85.50%) [FP] |

| 3.2 | Does this text contain an explicit or implied reference to obligations, such as legal, regulatory, or procedural responsibilities? Consider both: - **Direct language** that clearly indicates obligations (e.g., “must,” “required,” “obligations”). - **Indirect references** that imply expectations, responsibilities, or strong recommendations, such as guidance, suggestions, or calls to action (e.g., “should,” “expected to,” “encouraged to”). When analyzing the text: - Evaluate whether the context suggests an expectation of action that aligns with obligations, even if the language is not explicitly directive. - Pay attention to **soft guidance or recommendations** (e.g., “could,” “may”) if they are tied to formal entities, guidelines, or decision-making processes that suggest procedural or regulatory expectations. - Consider references to external authorities (e.g., “The ICO provides more information”) as potential indicators of implied obligations. - Analyze whether the text references resources, guidelines, or tools provided by formal entities, as these could imply procedural or regulatory responsibilities. **Example of Indirect Obligations**: - “Firms could use a legitimate interests assessment to help determine whether they should use it as a basis for processing. The ICO provides more information on legitimate interests in their guide to data protection.” In this example, the phrase “should use it” implies an expectation, and the mention of the ICO suggests a regulatory guideline, both of which are relevant to obligations. (Train Accuracy: 85.00%) [FN] |

| 3.3 | Does this text explicitly or implicitly suggest an obligation or responsibility for an identifiable subject (e.g., a person, organization, or group) to take a specific action? This includes obligations stated outright (e.g., using words like “must,” “should,” or “required to”) as well as implied responsibilities inferred from context, such as situations where potential harm, expected action, or directives suggest an obligation.Avoid classifying general descriptive statements, explanations of terms, or informational content as obligations unless they directly assign a clear responsibility or actionable directive to an identifiable subject within the context of the statement itself. For example: - Definitions or explanations of how terms like “Must” or “Should” are used in a document are *not* obligations unless the text actively imposes a duty or responsibility. - Statements that describe a situation or provide general information without assigning responsibility to an identifiable subject are *not* obligations. Focus on identifying obligations tied to actionable directives or expectations, rather than statements that merely describe the nature of a situation, explain terminology, or provide general information. Confirm that the text imposes or implies a responsibility directly, rather than referencing obligations indirectly or explaining their framework. (Train Accuracy: 84.50%) [FP] |

| 3.4 | Does this text explicitly or implicitly suggest an obligation or responsibility for an identifiable subject (e.g., a person, organization, or group) to take a specific action? This includes obligations stated outright (e.g., using words like “must,” “should,” or “required to”) as well as implied responsibilities inferred from context. Implied obligations may arise when the text identifies a necessity, expectation, or requirement for action through phrases like “requires,” “calls for,” “necessitates,” or similar terms, even if explicit obligation-related words are absent. Additionally, consider whether the text suggests a responsibility by highlighting potential harm, expected actions, or directives that imply an obligation.Avoid classifying general descriptive statements or informational content as obligations unless they assign a clear responsibility or actionable directive to an identifiable subject. Focus on identifying obligations tied to actionable directives or expectations, including those implied through context, necessity, or the framing of required actions, rather than statements that merely describe the nature of a situation or provide information. (Train Accuracy: 91.00%) (Test Accuracy: 89.50%) [FN] |

Appendix A.3. List Initial Prompt

| Task ID | Task | Initial Prompt |

|---|---|---|

| Category I | ||

| 9.1 | ethos | Is the following text hate speech? |

| 10.1 | liar | Determine whether the statement is a lie (Yes) or not (No) based on the context and other information. |

| 11.1 | ArSarcasm | Is this tweet sarcastic? |

| Category II | ||

| 3.1 | Financial Sentiment | Is this text expressing a positive overall sentiment? |

| 4.1 | Amazon_review | Is this text a positive review? |

| 5.1 | Tweet_airline | Is this text containing a positive meaning? |

| 6.1 | HateSpeech | Is this text hate speech? |

| 7.1 | Offensive_Language | Is this text offensive language? |

| 8.1 | ClickBait | Is this text clickbait? |

| Category III | ||

| 1.1 | tax_product | Is this text about a tax product that is taxed by the authority? |

| 1.2 | tax_transaction | Is this text about a tax transaction? |

| 1.3 | tax_exemption | Is this a tax exemption? |

| 1.4 | tax_calculation | Is this a method or formula for calculating tax? |

| 1.5 | tax_subject | Is this text about someone who is liable to pay or report tax? |

| 1.6 | tax_process | Is this a process of paying tax to the authority? |

| 2.1 | Obligation | Is this text an obligation? |

Appendix A.4. Performance Results

References

1. OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774.

2. Ling, C.; Zhao, X.; Lu, J.; Deng, C.; Zheng, C.; Wang, J.; Chowdhury, T.; Li, Y.; Cui, H.; Zhang, X.; et al. Domain Specialization as the Key to Make Large Language Models Disruptive: A Comprehensive Survey. arXiv 2024, arXiv:2305.18703.

3. Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.; Le, Q.; Zhou, D. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. arXiv 2022, arXiv:2201.11903.

4. Katz, D.M.; Bommarito, M.J.; Gao, S.; Arredondo, P. GPT-4 Passes the Bar Exam. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2024, 382, 20230254.

5. Savelka, J.; Ashley, K.D. The Unreasonable Effectiveness of Large Language Models in Zero-Shot Semantic Annotation of Legal Texts. Front. Artif. Intell. 2023, 6, 1279794.

6. Choi, J.H.; Hickman, K.E.; Monahan, A.B.; Schwarcz, D. ChatGPT Goes to Law School. J. Leg. Educ. 2022, 71, 387–400.

7. Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models Are Few-Shot Learners. In Proceedings of the Advances in Neural Information Processing Systems; Larochelle, H., Ranzato, M., Hadsell, R., Balcan, M., Lin, H., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2020; Volume 33, pp. 1877–1901.

8. White, J.; Fu, Q.; Hays, S.; Sandborn, M.; Olea, C.; Gilbert, H.; Elnashar, A.; Spencer-Smith, J.; Schmidt, D.C. A Prompt Pattern Catalog to Enhance Prompt Engineering with ChatGPT. arXiv 2023, arXiv:2302.11382.

9. Liu, P.; Yuan, W.; Fu, J.; Jiang, Z.; Hayashi, H.; Neubig, G. Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing. ACM Comput. Surv. 2023, 55, 1–35.

10. Li, W.; Wang, X.; Li, W.; Jin, B. A Survey of Automatic Prompt Engineering: An Optimization Perspective. arXiv 2025, arXiv:2502.11560.

11. Zhang, Z.; Zhang, A.; Li, M.; Smola, A. Automatic Chain of Thought Prompting in Large Language Models. In Proceedings of the 11th International Conference on Learning Representations (ICLR), Kigali, Rwanda, 1–5 May 2023.

12. Sun, H.; Li, X.; Xu, Y.; Homma, Y.; Cao, Q.; Wu, M.; Jiao, J.; Charles, D. AutoHint: Automatic Prompt Optimization with Hint Generation. arXiv 2023, arXiv:2307.07415.

13. Pryzant, R.; Iter, D.; Li, J.; Lee, Y.; Zhu, C.; Zeng, M. Automatic Prompt Optimization with “Gradient Descent” and Beam Search; Association for Computational Linguistics: Singapore, 2023.

14. Zhou, H.; Wan, X.; Vulić, I.; Korhonen, A. Survival of the Most Influential Prompts: Efficient Black-Box Prompt Search via Clustering and Pruning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, Singapore, 6–10 December 2023; Association for Computational Linguistics: Singapore, 2023; pp. 13064–13077.

15. Dong, Y.; Luo, K.; Jiang, X.; Jin, Z.; Li, G. PACE: Improving Prompt with Actor-Critic Editing for Large Language Model. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2024, Bangkok, Thailand, 11–16 August 2024; Ku, L.W., Martins, A., Srikumar, V., Eds.; Association for Computational Linguistics: Bangkok, Thailand, 2024; pp. 7304–7323.

16. Yuksekgonul, M.; Bianchi, F.; Boen, J.; Liu, S.; Huang, Z.; Guestrin, C.; Zou, J. TextGrad: Automatic “Differentiation” via Text. arXiv 2024, arXiv:2406.07496.

17. Yang, S.; Wu, Y.; Gao, Y.; Zhou, Z.; Zhu, B.B.; Sun, X.; Lou, J.G.; Ding, Z.; Hu, A.; Fang, Y.; et al. AMPO: Automatic Multi-Branched Prompt Optimization. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, Miami, FL, USA, 12–16 November 2024; Association for Computational Linguistics: Miami, FL, USA, 2024; pp. 20267–20279.

18. Wu, Y.; Gao, Y.; Zhu, B.B.; Zhou, Z.; Sun, X.; Yang, S.; Lou, J.G.; Ding, Z.; Yang, L. StraGo: Harnessing Strategic Guidance for Prompt Optimization. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024, Miami, FL, USA, 12–16 November 2024; Association for Computational Linguistics: Miami, FL, USA, 2024; pp. 10043–10061.

19. Choi, J. Efficient Prompt Optimization for Relevance Evaluation via LLM-Based Confusion Matrix Feedback. Appl. Sci. 2025, 15, 5198.

20. Kumar, S.; Venkata, A.Y.; Khandelwal, S.; Santra, B.; Agrawal, P.; Gupta, M. SCULPT: Systematic Tuning of Long Prompts. arXiv 2025, arXiv:2410.20788.

21. Das, S.S.S.; Kamoi, R.; Pang, B.; Zhang, Y.; Xiong, C.; Zhang, R. GReaTer: Gradients over Reasoning Makes Smaller Language Models Strong Prompt Optimizers. arXiv 2025, arXiv:2412.09722.

22. Jain, Y.; Chowdhary, V. Local Prompt Optimization. arXiv 2025, arXiv:2504.20355.

23. Mayilvaghanan, K.; Nathan, V.; Kumar, A. PROPEL: Prompt Optimization with Expert Priors for Small and Medium-sized LLMs. In Proceedings of the 4th International Workshop on Knowledge-Augmented Methods for Natural Language Processing, Albuquerque, NM, USA, 3 May 2025; Association for Computational Linguistics: Albuquerque, NM, USA, 2025; pp. 272–302.

24. Wu, S.; Koo, M.; Scalzo, F.; Kurtz, I. AutoMedPrompt: A New Framework for Optimizing LLM Medical Prompts Using Textual Gradients. arXiv 2025, arXiv:2502.15944.

25. Mollas, I.; Chrysopoulou, Z.; Karlos, S.; Tsoumakas, G. ETHOS: A multi-label hate speech detection dataset. Complex Intell. Syst. 2022, 8, 4663–4678.

26. Wang, W.Y. Liar, Liar Pants on Fire: A New Benchmark Dataset for Fake News Detection; Association for Computational Linguistics: Vancouver, BC, Canada, 2017.

27. Abu Farha, I.; Magdy, W. From Arabic Sentiment Analysis to Sarcasm Detection: The ArSarcasm Dataset. In Proceedings of the 4th Workshop on Open-Source Arabic Corpora and Processing Tools, with a Shared Task on Offensive Language Detection, Marseille, France, 11–16 May 2020; pp. 32–39.

28. Malo, P.; Sinha, A.; Korhonen, P.; Wallenius, J.; Takala, P. Good Debt or Bad Debt: Detecting Semantic Orientations in Economic Texts. J. Assoc. Inf. Sci. Technol. 2014, 65, 782–796.

29. Hou, Y.; Li, J.; He, Z.; Yan, A.; Chen, X.; McAuley, J. Bridging Language and Items for Retrieval and Recommendation. arXiv 2024, arXiv:2403.03952.

30. Crowdflower. Twitter Airline Sentiment. Kaggle Dataset. 2015. Available online: https://www.kaggle.com/datasets/crowdflower/twitter-airline-sentiment (accessed on 29 January 2025).

31. Davidson, T.; Warmsley, D.; Macy, M.; Weber, I. Automated Hate Speech Detection and the Problem of Offensive Language. In Proceedings of the 11th International AAAI Conference on Web and Social Media (ICWSM), Montreal, QC, Canada, 15–18 May 2017; pp. 512–515.

32. Chakraborty, A.; Paranjape, B.; Kakarla, S.; Ganguly, N. Stop Clickbait: Detecting and Preventing Clickbaits in Online News Media. In Proceedings of the 2016 IEEE/ACM International Conference on Advances in Social Networks Analysis and Mining (ASONAM), San Francisco, CA, USA, 18–21 August 2016; pp. 9–16.

| Reference | Method | Limitation |

|---|---|---|

| White et al. [8] | Prompt Pattern Catalog (survey) | — |

| Liu et al. [9] | Pretrain–prompt–predict survey | — |

| Li et al. [10] | Optimization-based prompting survey | — |

| Reference | Method | Limitation |

|---|---|---|

| Analysis-driven methods | ||

| Wei et al. [3] | Chain-of-Thought | Requires curated prompt; higher inference cost due to longer reasoning chains/tokens |

| Zhang et al. [11] | Auto-CoT | LLM-generated prompt; inconsistent across multiple runs; higher inference cost due to longer reasoning chains/tokens |

| Sun et al. [12] | AutoHint | Actor–critic loop over a single evolving prompt; hint may encourage prompt to analyze and reason |

| Direct-label methods | ||

| Pryzant et al. [13] | ProTeGi | Bandit/minibatch scoring; no explicit separation of FP vs. FN errors |

| Zhou et al. [14] | CLAPS | Evolutionary selection without explicit error feedback |

| Dong et al. [15] | PACE | Optimizes one candidate prompt per step, not tailored to classification; no explicit separation of FP vs. FN errors |

| Yuksekgonul et al. [16] | TextGrad | Complex framework; better suited to complex reasoning than to classification |

| Reference | Method | Limitation/Notes vs. Our Framework |

|---|---|---|

| Yang et al. [17] | AMPO | Expands and keeps a pool of candidates; evaluated mainly on instruction-following/reasoning tasks. |

| Wu et al. [18] | STRAGO | Generates guided edits with additional “thinking” tokens; analysis-oriented, increasing token cost. |

| Choi [19] | APO-CF | Uses aggregate confusion-matrix signals (not per-instance FP/FN); demonstrated mainly for relevance evaluation; limited search depth per round. |

| Kumar et al. [20] | SCULPT | Designed as a systematic way to edit long prompts; may miss structures it does not define. |

| Das et al. [21] | GREATER | Requires token-level logits/probabilities unavailable in closed LLMs. |

| Jain and Chowdhary [22] | LPO | Token-level/local edits can miss larger structural changes. |

| Mayilvaghanan et al. [23] | PROPEL | Agent-loop with cost overhead; evaluated mainly on small/medium LLMs. |

| Wu et al. [24] | AutoMedPrompt | Medical QA-centric; single-candidate refinement. |

| Task ID | Task | ProTeGi Accuracy | ProTeGi API Calls | PO2G+C Accuracy | PO2G+C API Calls |
|---|---|---|---|---|---|
| 9.1 | Ethos | 0.9350 | 200 | 0.9350 | 200 |
| 10.1 | Liar | 0.5020 | 200 | 0.5280 | 200 |
| 11.1 | ArSarcasm | 0.8270 | 200 | 0.8410 | 200 |
| Average_1 | | 0.7547 | 200 | 0.7680 | 200 |
| 3.1 | Sentiment | 0.9350 | 200 | 0.9400 | 200 |
| 4.1 | Amazon Review | 0.9300 | 200 | 0.9300 | 200 |
| 5.1 | Tweet Airline | 0.9400 | 200 | 0.9400 | 200 |
| 6.1 | Hate Speech | 0.9000 | 200 | 0.9000 | 200 |
| 7.1 | Offensive Language | 0.8550 | 200 | 0.8550 | 200 |
| 8.1 | Clickbait | 0.8630 | 200 | 0.8890 | 200 |
| Average_2 | | 0.9038 | 200 | 0.9090 | 200 |
| Average_all | | 0.8541 | 200 | 0.8620 | 200 |

| Task ID | Task | ProTeGi Accuracy | ProTeGi API Calls | PO2G+C Accuracy | PO2G+C API Calls |
|---|---|---|---|---|---|
| 9.1 | Ethos | 0.9350 | 7481 | 0.9300 | 6462 |
| 10.1 | Liar | 0.5820 | 7344 | 0.6130 | 10,300 |
| 11.1 | ArSarcasm | 0.8280 | 7510 | 0.8670 | 10,138 |
| Average_1 | | 0.7817 | 7445 | 0.8033 | 8967 |
| 3.1 | Sentiment | 0.9850 | 6989 | 0.9950 | 4240 |
| 4.1 | Amazon Review | 0.9450 | 7080 | 0.9400 | 3230 |
| 5.1 | Tweet Airline | 0.9450 | 7118 | 0.9550 | 8886 |
| 6.1 | Hate Speech | 0.8850 | 7055 | 0.9000 | 10,098 |
| 7.1 | Offensive Language | 0.8600 | 6822 | 0.8650 | 10,098 |
| 8.1 | Clickbait | 0.8980 | 6779 | 0.9270 | 9613 |
| Average_2 | | 0.9197 | 6974 | 0.9303 | 7694 |
| Average_all | | 0.8737 | 7131 | 0.8880 | 8118 |

| Task ID | Task | ProTeGi Accuracy | ProTeGi API Calls | PO2G+C Accuracy | PO2G+C API Calls |
|---|---|---|---|---|---|
| 9.1 | Ethos | 0.9500 | 15,070 | 0.9250 | 13,128 |
| 10.1 | Liar | 0.6020 | 14,998 | 0.6010 | 22,420 |
| 11.1 | ArSarcasm | 0.8440 | 15,029 | 0.8650 | 22,258 |
| Average_1 | | 0.7987 | 15,032 | 0.7970 | 19,269 |
| 3.1 | Sentiment | 0.9900 | 14,401 | 0.9400 | 7876 |
| 4.1 | Amazon Review | 0.9400 | 14,478 | 0.9500 | 6462 |
| 5.1 | Tweet Airline | 0.9250 | 14,469 | 0.9400 | 19,794 |
| 6.1 | Hate Speech | 0.9100 | 14,468 | 0.9150 | 22,016 |
| 7.1 | Offensive Language | 0.8650 | 14,012 | 0.9350 | 20,400 |
| 8.1 | Clickbait | 0.9420 | 14,144 | 0.9350 | 20,400 |
| Average_2 | | 0.9287 | 14,329 | 0.9358 | 16,158 |
| Average_all | | 0.8853 | 14,563 | 0.8896 | 17,195 |

| Iteration | ProTeGi Accuracy | ProTeGi API Calls | PO2G+C Accuracy | PO2G+C API Calls |
|---|---|---|---|---|
| 0 | 0.8541 | 200 | 0.8620 | 200 |
| 1 | 0.8694 | 2347 | 0.8678 | 1843 |
| 2 | 0.8773 | 4681 | 0.8858 | 5039 |
| 3 | 0.8737 | 7131 | 0.8880 | 8118 |
| 4 | 0.8686 | 9599 | 0.8921 | 11,171 |
| 5 | 0.8777 | 12,084 | 0.8902 | 14,165 |
| 6 | 0.8853 | 14,563 | 0.8896 | 17,195 |

| Run | ProTeGi (Iteration 3) | PO2G+C (Iteration 3) | ProTeGi (Iteration 6) | PO2G+C (Iteration 6) |
|---|---|---|---|---|
| 1 | 0.5900 | 0.6250 | 0.6150 | 0.6250 |
| 2 | 0.5900 | 0.6250 | 0.6150 | 0.6250 |
| 3 | 0.6000 | 0.6300 | 0.6200 | 0.6400 |
| 4 | 0.5900 | 0.6050 | 0.5900 | 0.5600 |
| 5 | 0.5800 | 0.5800 | 0.6100 | 0.5850 |
| Average | 0.5900 | 0.6130 | 0.6100 | 0.6070 |
| SE | 0.0032 | 0.0093 | 0.0052 | 0.0149 |
| Pairwise t-test (p) | 0.0280 | | 0.7833 | |

| Run | ProTeGi (Iteration 3) | PO2G+C (Iteration 3) | ProTeGi (Iteration 6) | PO2G+C (Iteration 6) |
|---|---|---|---|---|
| 1 | 0.8550 | 0.8550 | 0.8450 | 0.8650 |
| 2 | 0.8550 | 0.8550 | 0.8450 | 0.8650 |
| 3 | 0.7900 | 0.8800 | 0.8100 | 0.8500 |
| 4 | 0.8250 | 0.8650 | 0.8500 | 0.8550 |
| 5 | 0.8500 | 0.8800 | 0.8500 | 0.8750 |
| Average | 0.8350 | 0.8670 | 0.8400 | 0.8620 |
| SE | 0.0125 | 0.0056 | 0.0076 | 0.0044 |
| Pairwise t-test (p) | 0.1254 | | 0.0173 | |

| Run | ProTeGi (Iteration 3) | PO2G+C (Iteration 3) | ProTeGi (Iteration 6) | PO2G+C (Iteration 6) |
|---|---|---|---|---|
| 1 | 0.8250 | 0.9750 | 0.9400 | 0.9550 |
| 2 | 0.8250 | 0.9750 | 0.9400 | 0.9550 |
| 3 | 0.9200 | 0.9200 | 0.9500 | 0.9150 |
| 4 | 0.9550 | 0.9200 | 0.9450 | 0.9400 |
| 5 | 0.9300 | 0.9200 | 0.9550 | 0.9350 |
| Average | 0.8910 | 0.9420 | 0.9460 | 0.9400 |
| SE | 0.0275 | 0.0135 | 0.0029 | 0.0074 |
| Pairwise t-test (p) | 0.2796 | | 0.5734 | |

| Task ID | Task | ProTeGi Accuracy | ProTeGi API Calls | PO2G+C Accuracy | PO2G+C API Calls |
|---|---|---|---|---|---|
| 1.1 | Tax Product | 0.5200 | 150 | 0.5867 | 150 |
| 1.2 | Tax Transaction | 0.3600 | 150 | 0.3600 | 150 |
| 1.3 | Tax Exemption | 0.9600 | 150 | 0.9667 | 150 |
| 1.4 | Tax Calculation | 0.9667 | 150 | 0.9667 | 150 |
| 1.5 | Tax Subject | 0.5800 | 150 | 0.6000 | 150 |
| 1.6 | Tax Process | 0.5667 | 150 | 0.5800 | 150 |
| 2.1 | Obligation | 0.4670 | 200 | 0.4670 | 200 |
| Average | | 0.6315 | 157 | 0.6467 | 157 |

| Task ID | Task | ProTeGi | | PO2G+C | |
|---|---|---|---|---|---|
| | | Accuracy | API Calls | Accuracy | API Calls |
| 1.1 | Tax Product | 0.9400 | 6366 | 0.9800 | 6382 |
| 1.2 | Tax Transaction | 0.8533 | 6440 | 0.9267 | 5926 |
| 1.3 | Tax Exemption | 0.9733 | 6068 | 0.9800 | 1974 |
| 1.4 | Tax Calculation | 0.9933 | 6417 | 1.0000 | 3646 |
| 1.5 | Tax Subject | 0.8000 | 6438 | 0.8733 | 6838 |
| 1.6 | Tax Process | 0.8467 | 6383 | 0.8600 | 7598 |
| 2.1 | Obligation | 0.8690 | 7308 | 0.8950 | 9452 |
| Average | | 0.8965 | 6489 | 0.9307 | 5974 |

| Task ID | Task | ProTeGi | | PO2G+C | |
|---|---|---|---|---|---|
| | | Accuracy | API Calls | Accuracy | API Calls |
| 1.1 | Tax Product | 0.9533 | 13,575 | 0.9800 | 11,398 |
| 1.2 | Tax Transaction | 0.8933 | 13,640 | 0.9267 | 13,982 |
| 1.3 | Tax Exemption | 0.9867 | 13,201 | 0.9800 | 3950 |
| 1.4 | Tax Calculation | 0.9933 | 13,593 | 1.0000 | 7142 |
| 1.5 | Tax Subject | 0.8133 | 13,565 | 0.9000 | 15,958 |
| 1.6 | Tax Process | 0.8467 | 13,504 | 0.8733 | 18,300 |
| 2.1 | Obligation | 0.8740 | 14,688 | 0.9240 | 20,642 |
| Average | | 0.9087 | 13,681 | 0.9406 | 13,053 |

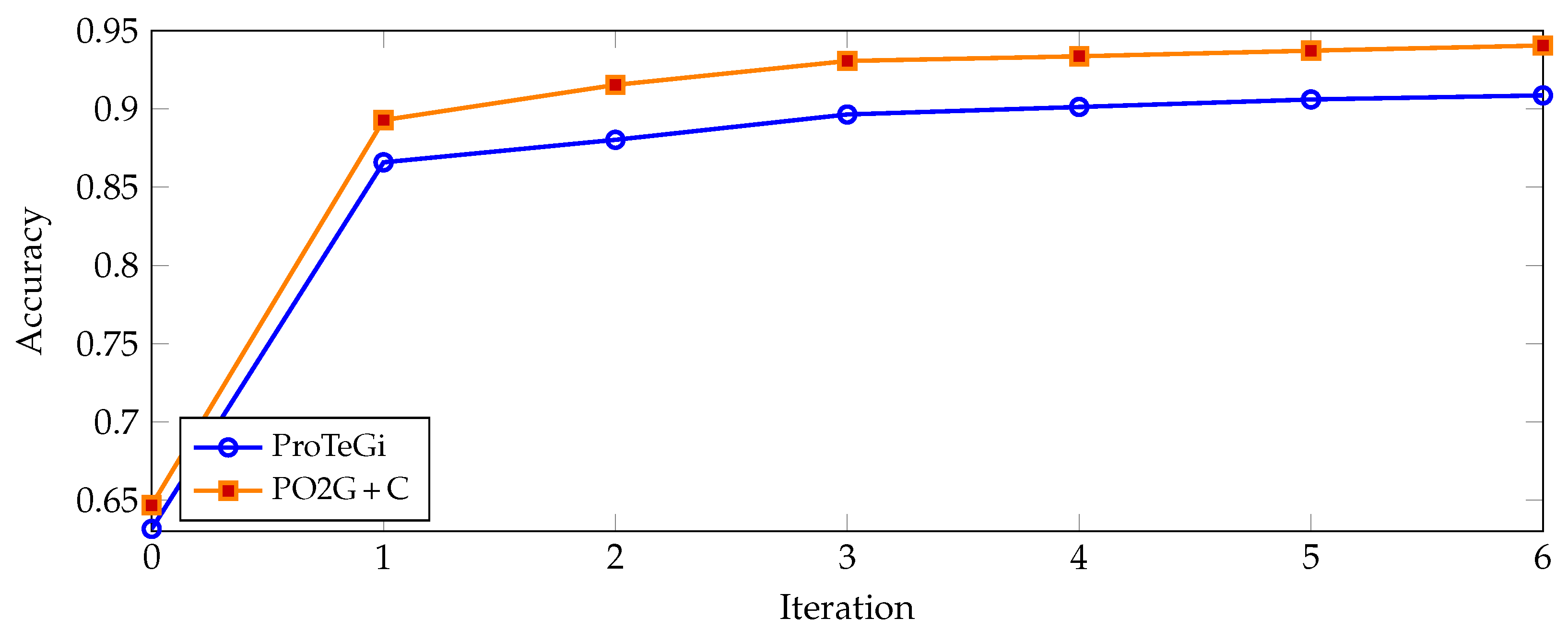

| Iteration | ProTeGi | | PO2G+C | |
|---|---|---|---|---|
| | Accuracy | API Calls | Accuracy | API Calls |
| 0 | 0.6315 | 157 | 0.6467 | 157 |
| 1 | 0.8659 | 1847 | 0.8929 | 1165 |
| 2 | 0.8803 | 4094 | 0.9154 | 3532 |
| 3 | 0.8965 | 6489 | 0.9307 | 5974 |
| 4 | 0.9013 | 8889 | 0.9337 | 8876 |
| 5 | 0.9061 | 11,283 | 0.9373 | 11,025 |
| 6 | 0.9087 | 13,681 | 0.9406 | 13,053 |

| Iter. | ProTeGi | | | PO2G+C | | |
|---|---|---|---|---|---|---|
| | Prec. | Rec. | F1 | Prec. | Rec. | F1 |
| 0 | 0.5248 | 0.8078 | 0.5389 | 0.5575 | 0.7747 | 0.5422 |
| 1 | 0.6353 | 0.5332 | 0.5612 | 0.7995 | 0.6379 | 0.6961 |
| 2 | 0.6595 | 0.6364 | 0.6439 | 0.8740 | 0.7217 | 0.7643 |
| 3 | 0.7771 | 0.7520 | 0.7516 | 0.9174 | 0.7950 | 0.8137 |
| 4 | 0.7998 | 0.7440 | 0.7693 | 0.8839 | 0.8172 | 0.8367 |
| 5 | 0.8039 | 0.7484 | 0.7587 | 0.8960 | 0.8194 | 0.8409 |
| 6 | 0.8265 | 0.7591 | 0.7754 | 0.9218 | 0.7945 | 0.8401 |

| Run | Iteration = 3 | | Iteration = 6 | |
|---|---|---|---|---|
| | ProTeGi | PO2G+C | ProTeGi | PO2G+C |
| 1 | 0.8600 | 0.8700 | 0.8750 | 0.9200 |
| 2 | 0.8600 | 0.8700 | 0.8750 | 0.9200 |
| 3 | 0.8900 | 0.9000 | 0.8850 | 0.9250 |
| 4 | 0.9050 | 0.9000 | 0.8500 | 0.9100 |
| 5 | 0.8350 | 0.9050 | 0.8850 | 0.9400 |
| Average | 0.8700 | 0.8890 | 0.8740 | 0.9230 |
| SE | 0.0123 | 0.0078 | 0.0064 | 0.0049 |
| Pairwise t-test | 0.2199 | | 0.0002 | |

| Iteration | PO2G | | (PO2G+C − PO2G) | |
|---|---|---|---|---|
| | Accuracy | API Calls | Accuracy | API Calls |
| 0 | 0.8552 | 200 | 0.0068 | 0 |
| 1 | 0.8712 | 1888 | −0.0034 | −45 |
| 2 | 0.8760 | 5035 | 0.0098 | 4 |
| 3 | 0.8892 | 8006 | −0.0012 | 112 |
| 4 | 0.8961 | 11,216 | −0.0040 | −45 |
| 5 | 0.8932 | 14,398 | −0.0030 | −233 |
| 6 | 0.8853 | 19,062 | 0.0042 | −1867 |

| Iteration | PO2G | | (PO2G+C − PO2G) | |
|---|---|---|---|---|
| | Accuracy | API Calls | Accuracy | API Calls |
| 0 | 0.6423 | 157 | 0.0044 | 0 |
| 1 | 0.8781 | 1199 | 0.0149 | −33 |
| 2 | 0.8957 | 3484 | 0.0197 | 48 |
| 3 | 0.8977 | 6073 | 0.0330 | −100 |
| 4 | 0.9033 | 8041 | 0.0304 | 835 |
| 5 | 0.9113 | 11,776 | 0.0259 | −751 |
| 6 | 0.9201 | 14,599 | 0.0205 | −1546 |
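Because the right-hand columns of the table above are differences rather than absolute values, it can help to add them back to the PO2G baseline. The sketch below does this, assuming the difference is PO2G+C minus PO2G as the column header states. The tuple layout and the `reconstruct` helper are illustrative, not part of the original work. Recovered values can differ in the last digit from absolute PO2G+C figures reported elsewhere, since the table entries are already rounded averages.

```python
# (iteration, PO2G accuracy, PO2G API calls, accuracy delta, API-call delta)
# Values copied from the table above; deltas are (PO2G+C - PO2G).
rows = [
    (0, 0.6423, 157, 0.0044, 0),
    (1, 0.8781, 1199, 0.0149, -33),
    (2, 0.8957, 3484, 0.0197, 48),
    (3, 0.8977, 6073, 0.0330, -100),
    (4, 0.9033, 8041, 0.0304, 835),
    (5, 0.9113, 11776, 0.0259, -751),
    (6, 0.9201, 14599, 0.0205, -1546),
]


def reconstruct(table):
    """Add each delta to its PO2G baseline to recover PO2G+C values."""
    return [
        (it, round(acc + d_acc, 4), calls + d_calls)
        for it, acc, calls, d_acc, d_calls in table
    ]


for it, acc, calls in reconstruct(rows):
    print(f"iteration {it}: accuracy={acc:.4f}, API calls={calls}")
```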

| Iteration | PO2G | | | (PO2G+C − PO2G) | | |
|---|---|---|---|---|---|---|
| | Precision | Recall | F1 | Prec. | Rec. | F1 |
| 0 | 0.5623 | 0.7725 | 0.5396 | | 0.0023 | 0.0026 |
| 1 | 0.7455 | 0.6541 | 0.6897 | 0.0541 | | 0.0064 |
| 2 | 0.7502 | 0.6576 | 0.6625 | 0.1239 | 0.0641 | 0.1018 |
| 3 | 0.7581 | 0.6492 | 0.6627 | 0.1593 | 0.1459 | 0.1511 |
| 4 | 0.8281 | 0.7225 | 0.7331 | 0.0557 | 0.0948 | 0.1036 |
| 5 | 0.8735 | 0.7192 | 0.7504 | 0.0225 | 0.1002 | 0.0905 |
| 6 | 0.8840 | 0.7373 | 0.7656 | 0.0379 | 0.0571 | 0.0744 |

| Dataset | Empty Prompt (Test Accuracy) | | | Accuracy (Initial − Empty) | | |
|---|---|---|---|---|---|---|
| | i = 0 | i = 3 | i = 6 | i = 0 | i = 3 | i = 6 |
| LIAR | 0.3650 | 0.6800 | 0.6200 | 0.1630 | −0.0670 | −0.0190 |
| AR_Sarcasm | 0.3800 | 0.8950 | 0.9000 | 0.4610 | −0.0280 | −0.0350 |
| Clickbait | 0.4250 | 0.6250 | 0.6600 | 0.4640 | 0.3020 | 0.2750 |
| Obligation | 0.6300 | 0.7900 | 0.8000 | −0.1630 | 0.1050 | 0.1240 |
| Average | 0.4500 | 0.7475 | 0.7450 | 0.2313 | 0.0780 | 0.0863 |

| Dataset | GPT-4o | | | 4o-mini − GPT-4o | | |
|---|---|---|---|---|---|---|
| | i = 0 | i = 3 | i = 6 | i = 0 | i = 3 | i = 6 |
| LIAR | 0.5050 | 0.6000 | 0.5800 | 0.0230 | 0.0130 | 0.0210 |
| AR_Sarcasm | 0.8500 | 0.8700 | 0.8650 | −0.0090 | −0.0030 | 0.0000 |
| Clickbait | 0.8850 | 0.9800 | 0.9900 | 0.0040 | −0.0530 | −0.0550 |
| Obligation | 0.4650 | 0.8750 | 0.8950 | 0.0020 | 0.0200 | 0.0290 |

| Dataset | GPT-4o | 4o-mini |
|---|---|---|
| LIAR | 360 | 222 |
| AR_Sarcasm | 466 | 67 |
| Clickbait | 459 | 302 |
| Obligation | 440 | 31 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Lieander, A.J.; Wang, H.; Rafferty, K. Prompt Optimization with Two Gradients for Classification in Large Language Models. AI 2025, 6, 182. https://doi.org/10.3390/ai6080182