1.2. Literature Survey

Many researchers have studied energy management for fuel cell hybrid vehicles. Good reviews can be found in [8,9], so a comprehensive review of energy management is not the goal of this work. Instead, a literature study combined with the authors’ research experience is given.

Fuel cell hybrid vehicles without an extra charger work in the charge-sustaining mode. In this case, the electrical energy provided by the fuel cell system covers the load energy and the ohmic losses in the battery system. According to the energy balance, the average fuel cell power equals the sum of the average load power and the average battery loss power. The driving cycle primarily determines the average load power, and energy management does not influence it. In contrast, energy management determines the power distribution between fuel cells and batteries and thereby directly influences the battery current and the state-of-charge (SoC) trajectory. Since the battery power losses depend on the battery current and the inner resistance, which in turn depends strongly on the SoC, energy management strongly influences the average battery losses. Therefore, two aspects must be considered when designing energy management strategies to reduce hydrogen consumption. On the one hand, the fuel cell system must be operated in its high-efficiency range; on the other hand, the battery losses must be reduced.
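The energy balance described above can be written compactly. The symbols are notation introduced here for illustration and are not taken from the original text: $\bar{P}_{\mathrm{fc}}$, $\bar{P}_{\mathrm{load}}$ and $\bar{P}_{\mathrm{loss}}$ denote the average fuel cell, load and battery loss power over a driving cycle of duration $T$, $I_{\mathrm{bat}}$ is the battery current, and $R_i$ is the SoC-dependent inner resistance. Only ohmic battery losses are considered, as in the paragraph above.

$$
\bar{P}_{\mathrm{fc}} = \bar{P}_{\mathrm{load}} + \bar{P}_{\mathrm{loss}},
\qquad
\bar{P}_{\mathrm{loss}} = \frac{1}{T}\int_{0}^{T} R_i\big(\mathrm{SoC}(t)\big)\, I_{\mathrm{bat}}^{2}(t)\,\mathrm{d}t
$$

In this form, energy management enters only through $\bar{P}_{\mathrm{loss}}$, since $\bar{P}_{\mathrm{load}}$ is fixed by the driving cycle.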

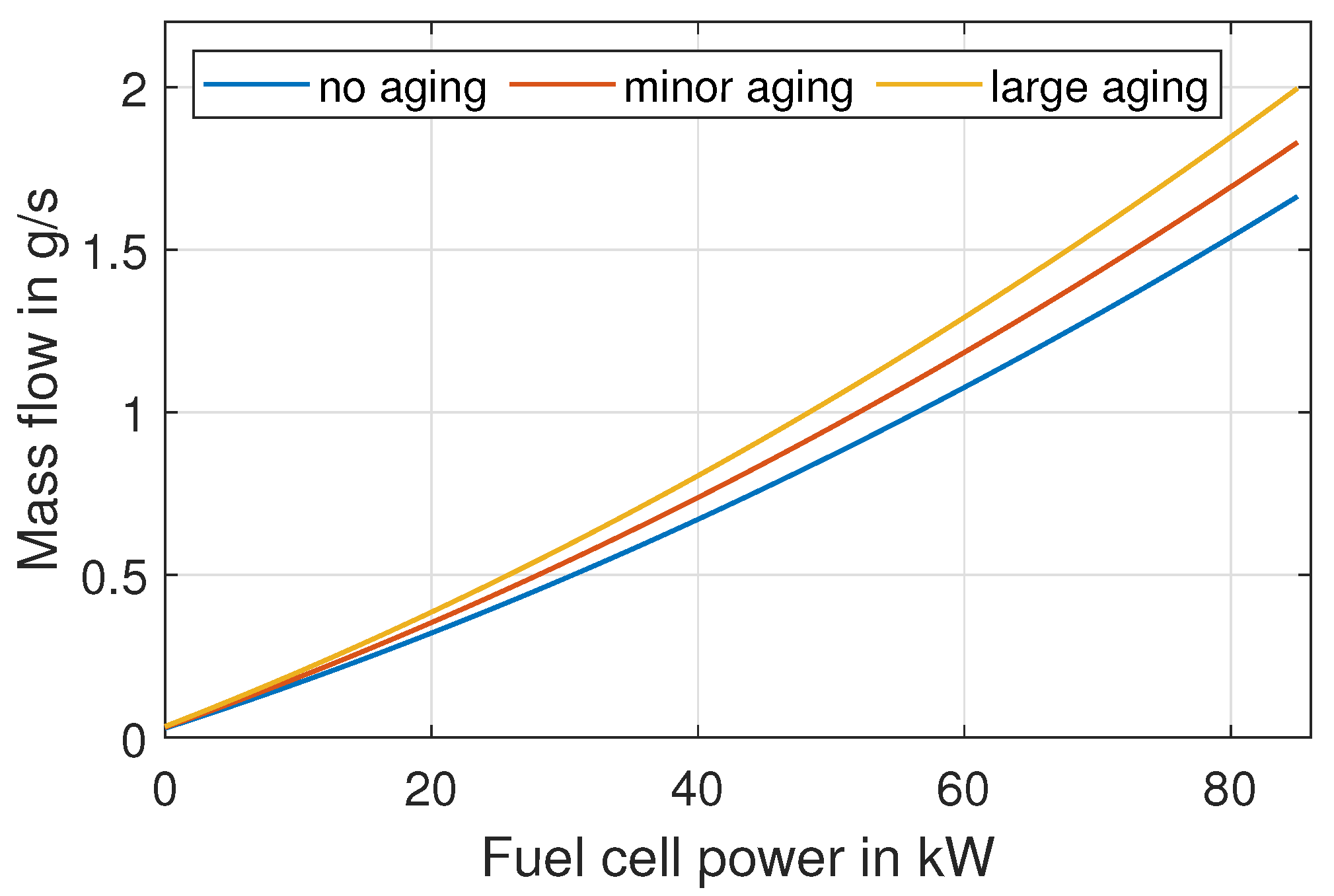

Regarding the first aspect, many works claim to have developed rules with hyper-parameters that keep the fuel cell system within its high-efficiency range. However, the high-efficiency range is not well understood in the literature because it is not fixed: it varies with the load conditions. For example, although the fuel cell system has its maximum efficiency in the part-load range, it is not reasonable to operate the fuel cells in the part-load range if the average load lies far above that range. The reason is the charge-sustaining constraint: over the driving cycle, the fuel cell system must supply the average load. Therefore, based on the concept that the high-efficiency range of a fuel cell system varies, the authors proposed a rule-based strategy utilizing the average fuel cell power in [10]. The concept of the average fuel cell power exploits the convexity of the specific consumption curve of fuel cell systems. Convexity in this case means that the efficiency at the average fuel cell power is higher than the average efficiency along the fuel cell power trajectory. Further information about utilizing convexity in energy management for fuel cell hybrid vehicles can be found in [10].
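The convexity argument can be illustrated numerically. The following minimal sketch assumes a hypothetical quadratic hydrogen consumption curve `h2_rate` with made-up coefficients; it is not the consumption map used in [10]. It compares a fluctuating fuel cell power trajectory with a constant trajectory of the same mean power.

```python
def h2_rate(p_kw):
    """Hypothetical convex hydrogen consumption rate in g/s for a net power in kW."""
    a, b, c = 0.05, 0.015, 0.0001  # assumed coefficients, for illustration only
    return a + b * p_kw + c * p_kw ** 2


def mean(values):
    return sum(values) / len(values)


# Fluctuating fuel cell power (kW) and a constant trajectory with the same mean.
fluctuating = [10.0, 50.0, 20.0, 40.0, 30.0]
constant = [mean(fluctuating)] * len(fluctuating)

rate_fluctuating = mean([h2_rate(p) for p in fluctuating])
rate_constant = mean([h2_rate(p) for p in constant])

# For a convex curve, operating at the mean power never consumes more
# than the fluctuating trajectory with the same mean (Jensen's inequality).
print(f"fluctuating: {rate_fluctuating:.3f} g/s, constant: {rate_constant:.3f} g/s")
```

For a quadratic curve, the gap between the two averages equals the quadratic coefficient times the variance of the power trajectory, so the benefit of operating near the average power grows with the size of the power fluctuations.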

Regarding the second aspect, reducing the ohmic battery losses, a compromise must be found in distributing the dynamic power during acceleration and regenerative braking between fuel cells and batteries. For example, suppose the fuel cell power is strictly kept at the average load power. Then, the battery system must supply the full dynamic power, which is the difference between the transient load power and the average load power. As a result, the battery charge and discharge currents are large, and the corresponding battery power losses increase. Therefore, to reduce the ohmic battery losses, the fuel cell system should take over part of the dynamic power as long as its power dynamic constraints are not violated. The task is then to distribute the dynamic power between fuel cells and batteries such that the battery losses are reduced while the fuel cell operation stays close to the average power mentioned before. The authors developed an adaptive Pontryagin’s minimum principle (APMP)-based strategy in [11,12] for a reasonable dynamic power distribution. An analytical formula for calculating the costate defined in optimal control theory is derived and validated there. It is worth mentioning that the equivalent consumption minimization strategy (ECMS) has the same mathematical structure as the APMP because the equivalence factor in ECMS is linked to the costate in the APMP. However, the authors suggest using the APMP instead of ECMS because ECMS is a simplified version of the APMP and performs worse. When the analytical formula is used to estimate the costate in the APMP, the fuel cell power is slightly higher than the average fuel cell power during acceleration phases and slightly lower during regenerative braking, while it is almost equal to the average power during the rest of the driving time. In summary, the fuel cell system under the APMP strategy is operated around its average power to enable high-efficiency operation. At the same time, the fuel cell power oscillates around its mean value during acceleration and regenerative braking to reduce the battery current and thus the battery ohmic losses. Therefore, the developed APMP strategy represents the state of the art in energy management for fuel cell hybrid vehicles.
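The effect of sharing dynamic power on the ohmic battery losses can be sketched with a simple battery model. The code below is an illustration under stated assumptions, not the APMP strategy from [11,12]: the battery is modeled as a constant open-circuit voltage `V_OC` with a constant inner resistance `R_INT` (both hypothetical values), the fuel cell covers the mean load plus a fixed fraction `fc_share` of the dynamic power, and fuel cell dynamic constraints are ignored.

```python
import math

V_OC = 350.0   # assumed open-circuit voltage in V (hypothetical)
R_INT = 0.10   # assumed inner resistance in ohm (hypothetical)


def battery_current(p_bat_w, v_oc=V_OC, r_int=R_INT):
    """Battery current in A for a terminal power in W (positive = discharge).

    Solves p_bat = v_oc * i - r_int * i**2 for the smaller-magnitude root.
    """
    disc = v_oc ** 2 - 4.0 * r_int * p_bat_w
    if disc < 0.0:
        raise ValueError("requested battery power exceeds the model limit")
    return (v_oc - math.sqrt(disc)) / (2.0 * r_int)


def mean_ohmic_loss(load_w, fc_share, v_oc=V_OC, r_int=R_INT):
    """Average ohmic loss in W when the fuel cell covers the mean load
    plus the fraction fc_share of the dynamic power."""
    p_mean = sum(load_w) / len(load_w)
    losses = []
    for p_load in load_w:
        p_fc = p_mean + fc_share * (p_load - p_mean)
        p_bat = p_load - p_fc  # the battery supplies the remaining power
        current = battery_current(p_bat, v_oc, r_int)
        losses.append(r_int * current ** 2)
    return sum(losses) / len(losses)


# Hypothetical load samples in W: acceleration, cruising, braking, cruising.
load_profile_w = [40e3, 10e3, -20e3, 10e3]

loss_battery_only = mean_ohmic_loss(load_profile_w, fc_share=0.0)
loss_shared = mean_ohmic_loss(load_profile_w, fc_share=0.3)
print(f"battery takes all dynamics: {loss_battery_only:.0f} W")
print(f"fuel cell shares 30 %:      {loss_shared:.0f} W")
```

Because the losses grow roughly with the square of the battery current, even a moderate share taken over by the fuel cell reduces them noticeably. The sketch does not model the accompanying efficiency penalty on the fuel cell side, which is the other half of the compromise.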

Nevertheless, there is still room for improvement regarding the estimation of the average fuel cell power. In [10], history information, including load power, battery ohmic losses and gradient, is used to estimate the average fuel cell power. The estimation error decreases as more history information is collected. However, there is not enough history information at the beginning of a driving cycle. Therefore, the method provided in [10] cannot estimate the average fuel cell power in the beginning phase of a driving cycle. To handle this problem, a feedforward neural network is utilized in this work to estimate the average fuel cell power based on features of driving cycles, such as the average speed along the route, the average gradient, the average number of stops along the trip and the average total weight of the hybrid vehicle. Since average feature values are used instead of time series data, the average power estimation is much less challenging to realize. Training the feedforward neural network requires many driving cycles with different values of average speed, gradient, number of stops and weight. One way to obtain training data is to record real driving cycles, but this is time-consuming, and collecting enough cycles is difficult because more than a thousand driving cycles are required for training. Therefore, data extension methods are applied in this work to generate enough driving cycles with different features based on a number of real driving cycles.
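To make the idea of average-value features concrete, the following sketch computes such features from one sampled driving cycle. The function name `cycle_features`, the stop detection threshold, and the normalization of stops per kilometer are assumptions made for illustration; the original text does not specify how the features are computed. The resulting values would form one input sample for the feedforward network, which is not shown here.

```python
def cycle_features(speed_mps, gradient, mass_kg, dt_s=1.0, stop_threshold=0.1):
    """Average-value features of one driving cycle.

    speed_mps: sampled vehicle speeds in m/s
    gradient:  sampled road gradients (rise over run), same length as speed_mps
    mass_kg:   total vehicle mass in kg, assumed constant over the cycle
    """
    if not speed_mps or len(speed_mps) != len(gradient):
        raise ValueError("speed and gradient must be non-empty and equally long")

    n = len(speed_mps)
    avg_speed = sum(speed_mps) / n
    avg_gradient = sum(gradient) / n

    # Count a stop each time the vehicle goes from moving to standstill.
    stops = 0
    for prev, cur in zip(speed_mps, speed_mps[1:]):
        if prev > stop_threshold and cur <= stop_threshold:
            stops += 1

    distance_km = sum(speed_mps) * dt_s / 1000.0
    stops_per_km = stops / distance_km if distance_km > 0.0 else 0.0

    return {
        "avg_speed_mps": avg_speed,
        "avg_gradient": avg_gradient,
        "stops": stops,
        "stops_per_km": stops_per_km,
        "mass_kg": mass_kg,
    }


# Hypothetical short cycle sampled at 1 s with two stops on a flat road.
speed = [0.0, 5.0, 10.0, 5.0, 0.0, 0.0, 5.0, 10.0, 0.0]
features = cycle_features(speed, [0.0] * len(speed), mass_kg=18000.0)
print(features)
```

Since every feature is a scalar per cycle, the network input has a fixed, small dimension regardless of the cycle length.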

Besides improving the estimation of the average fuel cell power, the battery ohmic losses can be reduced further to obtain a more energy-efficient strategy than the APMP-based strategy. Since the ohmic battery losses depend not only on the battery current but also on the varying inner resistance, both a small battery current and a low inner resistance are desirable. The battery current is already reduced under the APMP-based strategy because the fuel cell system takes over part of the dynamic power during vehicle acceleration. Keeping the battery inner resistance low is another way to improve the fuel economy, which can be understood as follows. As fuel cell vehicles work in the charge-sustaining mode, the start and end SoC of the battery system must be equal. However, infinitely many SoC trajectories have the same start and end SoC values. Among them, two typical types can be distinguished. In the first type, the SoC first goes down, then goes up, and finally returns to its initial value. In the second type, the SoC first goes up, then goes down, and finally returns to its initial value. The average SoC of the second type is higher than that of the first type, which leads to a lower inner battery resistance and lower ohmic battery losses. Furthermore, an SoC trajectory with a higher average value results in a higher average open-circuit voltage, which reduces the battery current for the same battery power. In this way, the ohmic battery losses are reduced because of both the lower inner resistance and the lower battery current for the same battery power. From this perspective, the fuel cell power should tend to decrease over the course of the driving cycle.
At the beginning of the driving cycles, the fuel cell power can be increased above the estimated average fuel cell power even in the phase of cruising, which leads to a tendential going up of the SoC trajectory. Then, along with the driving cycles, the fuel cell power is decreased below the estimated average value even in the phase of cruising to let the SoC trajectory come down, which is necessary for the charge-sustaining mode. This phenomenon of the SoC trajectory first going up and then coming down is also found in the offline PMP results. Thereby, the costate amplitude at the beginning of driving cycles is larger than that at the end. However, the formula in the so-far developed APMP-based strategy in [

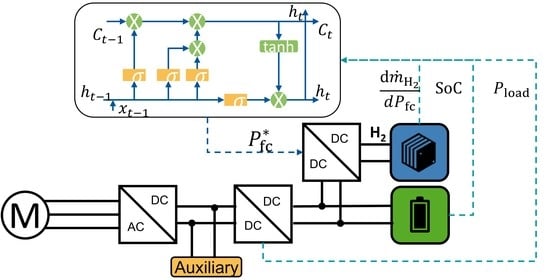

11] calculates the same costate values for the driving cycles’ initial and end time points. Therefore, reducing the battery inner resistance by increasing fuel cell power in the beginning phases of driving cycles to above-average values to improve fuel economy is not included in the APMP strategy. In this work, this kind of time effect of enlarging fuel cell power at the beginning of driving cycles and decreasing along the trip will be considered using an LSTM network. Since machine learning is used in this work, several works about using machine learning will be introduced in the following parts.
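The influence of the SoC level on the ohmic losses can be illustrated with a small numerical sketch. The linear functions `v_oc` and `r_int` below are hypothetical battery characteristics chosen only to reflect the qualitative trends stated above (open-circuit voltage rising and inner resistance falling with SoC). The SoC dynamics are not simulated: the code simply evaluates the loss that the same battery power request would cause at each SoC sample of two trajectories with equal start and end values.

```python
import math


def v_oc(soc):
    """Assumed open-circuit voltage in V, rising linearly with SoC (hypothetical)."""
    return 300.0 + 100.0 * soc


def r_int(soc):
    """Assumed inner resistance in ohm, falling linearly with SoC (hypothetical)."""
    return 0.15 - 0.10 * soc


def ohmic_loss(p_bat_w, soc):
    """Ohmic loss in W for a terminal power p_bat_w in W at the given SoC."""
    v, r = v_oc(soc), r_int(soc)
    current = (v - math.sqrt(v ** 2 - 4.0 * r * p_bat_w)) / (2.0 * r)
    return r * current ** 2


def mean_loss(soc_trajectory, p_bat_w=20e3):
    """Average loss of the same power request evaluated along an SoC trajectory."""
    return sum(ohmic_loss(p_bat_w, s) for s in soc_trajectory) / len(soc_trajectory)


# Two trajectories with identical start and end SoC (charge sustaining).
down_then_up = [0.60, 0.55, 0.50, 0.55, 0.60]
up_then_down = [0.60, 0.65, 0.70, 0.65, 0.60]

loss_low_soc = mean_loss(down_then_up)
loss_high_soc = mean_loss(up_then_down)
print(f"down-then-up: {loss_low_soc:.0f} W, up-then-down: {loss_high_soc:.0f} W")
```

Under these assumptions, the trajectory with the higher average SoC shows lower losses for two reasons acting together: the inner resistance is smaller, and the higher open-circuit voltage lowers the current needed for the same power.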

As machine learning technology has evolved, machine learning-based strategies have attracted more and more attention in energy management. They can be divided into unsupervised learning, supervised learning, and reinforcement learning-based strategies. Unsupervised learning is rarely used and mainly serves to classify driving profiles through clustering. Reinforcement learning learns a control policy from the interaction between an agent and its environment under a predefined reward function. Its advantage is that it can account for long-term accumulated rewards. However, for hybrid vehicles, its most significant drawback is the limited transferability of the trained control models, because the training data cannot cover all driving situations. Moreover, the control policy is updated during training after each time step based on maximizing the accumulated reward. Furthermore, various goals have to be combined in the reward function, which leads to a lack of physical meaning. For example, different factors, including fuel consumption, component aging, and charge sustaining, are integrated into the reward function using weighting coefficients. These coefficients lack physical meaning, and tuning them is challenging because no reasonable universal solution exists for all changing driving conditions. Due to these problems, reinforcement learning is not used in this work.

Supervised learning, including feedforward and recurrent neural networks, is an active research topic. A feedforward neural network aims to identify the nonlinear relationship between the inputs and the output. However, it cannot capture the sequential information contained in sequential input data, which a recurrent neural network can do. One type of recurrent neural network is the long short-term memory (LSTM) network, which is good at identifying the time effects included in the input variables. Owing to the advantages of the different neural networks, there are two common applications for hybrid vehicles. The first application is to use machine learning-based models to predict future driving information, e.g., velocity profiles [13,14,15], roadway types [16], traffic congestion levels [17] and the driver’s driving style [18]. In [13], the vehicle speed is first predicted through a Markov decision process, and model predictive control (MPC) then utilizes the predicted speed trajectory. In [19], the velocity profile of the next 10 s is predicted by an LSTM network, and the predicted velocity is then used as an input for the dynamic programming algorithm. In [18], the concept of driving pattern recognition is used: for each representative driving pattern, the control parameters of a parallel hybrid electric vehicle are optimized offline. In the real-time application, the driving pattern is first recognized, and the control algorithm is then switched to the corresponding one. In summary, to the best of our knowledge, an estimation of the average power based on machine learning has not been reported, and this work fills this gap.

The second application area of machine learning in fuel cell hybrid vehicles is to develop energy management strategies using data generated by an offline optimal control strategy. In [16], besides the roadway type and traffic congestion level predictor, another neural network is used to determine the battery power and the engine rotational speed with traffic congestion and driving trends as inputs. The training data are obtained offline by dynamic programming. In [20], a neural network is used to predict the equivalence factor in the ECMS. First, three input features are chosen: the load power, the battery SoC and the ratio of the distance traveled to the total distance. Then, the offline results of ECMS form the training data, and the neural network is trained to predict the current equivalence factor from the instantaneous load power, the battery SoC and the distance ratio. In [21], several neural networks are applied for driving environment prediction and optimal power control. In summary, supervised learning is promising for developing energy management strategies. However, the input variables of the neural networks found in the literature are not chosen on a physical basis, which limits the transferability of the trained networks to driving cycles that differ from the training environment. Therefore, to fill this gap, the input variables of the neural networks in this work are chosen on a physical basis, enabling the trained model to adapt well to different driving cycles.