1. Introduction

Erythrina edulis, commonly known as pajuro in Peru, is a leguminous, tree-like plant belonging to the Fabaceae family. Native to the Americas, it is widely distributed across South America, ranging from Venezuela to Bolivia [

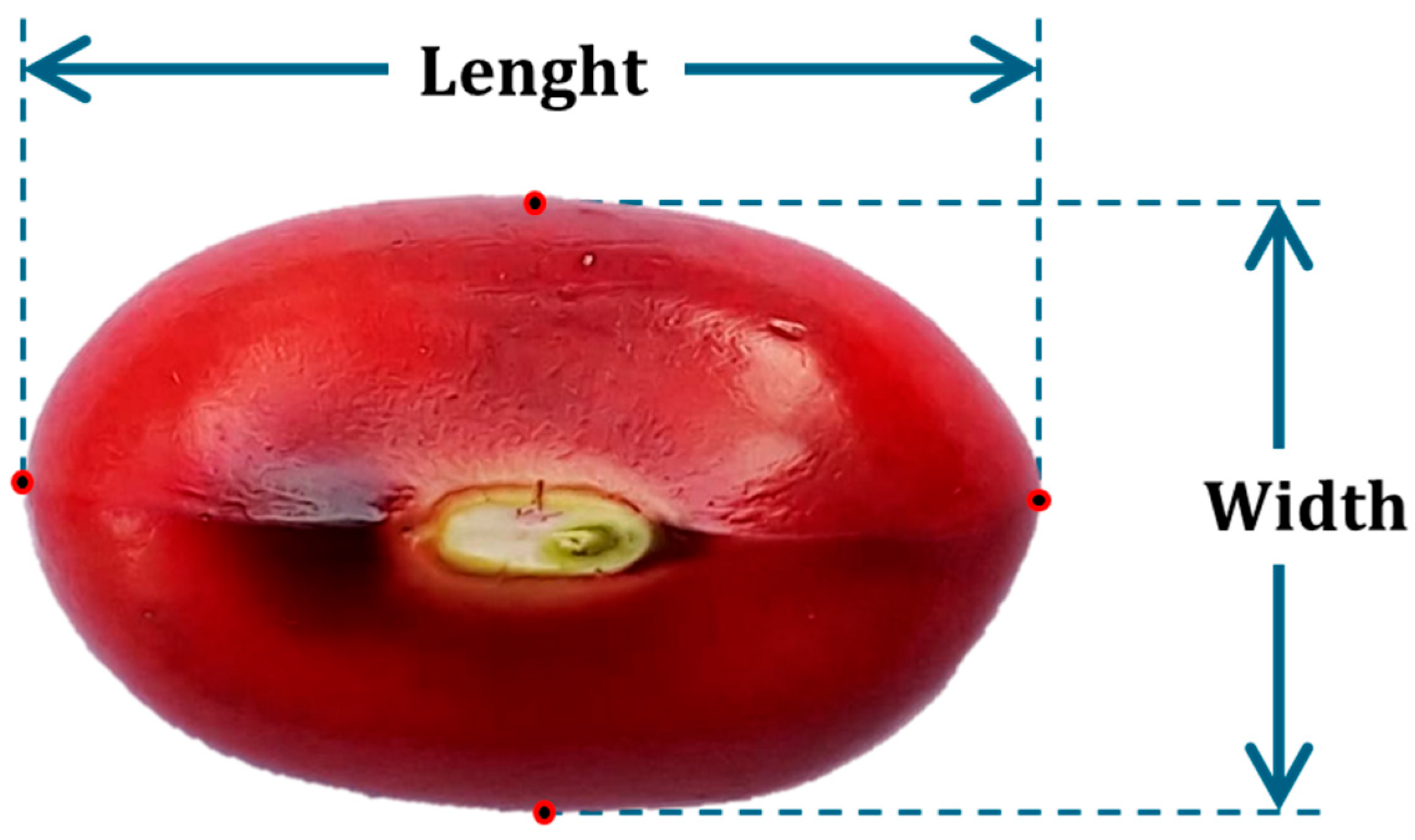

1]. The crop produces knobby pods approximately 20 to 25 cm in length, each containing between two and six edible seeds measuring 2 to 3.5 cm long and around 2 cm wide [

2]. These seeds must be extracted and classified according to their size and maturity stage, which is typically categorized as ripe, overripe, or unfit for consumption.

Pajuro is notable for its high protein content, which ranges between 18% and 24%, and the quality of its proteins is superior to that of many other legumes. Its amino acid profile is comparable to that of eggs, making it an important nutritional resource [3]. The biological value of pajuro protein reaches 70.9, surpassing other legumes such as lentils (44.6), beans (58), peas (63.7), and broad beans (54.8) [4]. Despite its nutritional potential, its consumption remains largely limited to local communities, and no dedicated industry has yet emerged to promote its use [5].

In large-scale harvesting, ensuring uniform seed size and optimal ripeness is essential for quality assurance. However, individual pods often contain seeds at varying maturity stages. Seeds develop a characteristic red color when ripe and turn dark brown if stored for more than eight days after harvest, reducing their sensory and nutritional properties [6]. Currently, the harvesting and classification processes are entirely manual. Workers use knives to extract seeds, a practice that is both hazardous and inefficient, especially when processing large volumes. Proper grading is rarely performed due to seed variability, which hinders standardization and lowers market value.

Field observations and interviews with farmers in Luya (Amazonas, Peru) indicate that a worker can sort approximately 3.5 kg of seeds per hour, earning USD 2.40. This translates to a labor cost of around USD 685.70 to manually process one metric ton of pajuro. For plantations with 156 trees per hectare and an average yield of 170 kg per tree, the annual production reaches 26,520 kg per hectare, resulting in an estimated labor cost of USD 18,181.10 per hectare per year, not accounting for other operational expenses. Furthermore, the absence of a defined harvest season demands continuous labor throughout the year [6].
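The labor-cost estimate can be retraced with a few lines of arithmetic. The sketch below uses only the figures quoted in the paragraph above; the function and variable names are illustrative. Computed without intermediate rounding, the results land within a few dollars of the quoted values, and the small gap is assumed here to come from rounding at an intermediate step.

```python
# Figures quoted in the text.
KG_PER_HOUR = 3.5           # seeds sorted by one worker per hour
WAGE_USD_PER_HOUR = 2.40    # pay for that hour
TREES_PER_HA = 156
YIELD_KG_PER_TREE = 170


def labor_cost_usd(mass_kg: float) -> float:
    """Manual sorting cost for a given seed mass at the quoted rate."""
    hours = mass_kg / KG_PER_HOUR
    return hours * WAGE_USD_PER_HOUR


annual_yield_kg = TREES_PER_HA * YIELD_KG_PER_TREE
cost_per_tonne = labor_cost_usd(1000)
cost_per_hectare = labor_cost_usd(annual_yield_kg)

print(f"annual yield: {annual_yield_kg} kg/ha")
print(f"cost per tonne: USD {cost_per_tonne:.2f}")
print(f"cost per hectare-year: USD {cost_per_hectare:.2f}")
```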

To address these limitations, computer vision and deep learning techniques have emerged as promising tools for agricultural automation. These technologies enable real-time inspection, defect detection, and classification across various crops, including tomatoes, strawberries, coffee, and legumes [7]. In particular, convolutional neural networks (CNNs) have demonstrated superior performance in identifying defects and classifying legume samples under diverse conditions [8].

Models such as YOLO, originally designed for real-time object detection, have been successfully adapted for agricultural applications due to their ability to segment images into discrete regions and analyze multiple items simultaneously. This approach has proven effective in avoiding overcounting in products like fruits and vegetables [9]. The use of attention mechanisms and multi-input architectures, such as the combination of fruit and leaf images, has further enhanced class discrimination in morphologically similar crops, including tropical and jujube fruits [9].

Recent adaptations of CNN-based models such as YOLO have been applied to agricultural tasks requiring real-time object detection and simultaneous analysis of multiple items [

9]. Models like PP-YOLO have incorporated spatial attention mechanisms and image preprocessing to detect fruit trees with high precision, achieving mAP50 values of up to 98.3% [

10]. Similarly, YOLOv8n-based models optimized for embedded hardware have enabled real-time fruit detection using lightweight architectures of less than 4 MB [

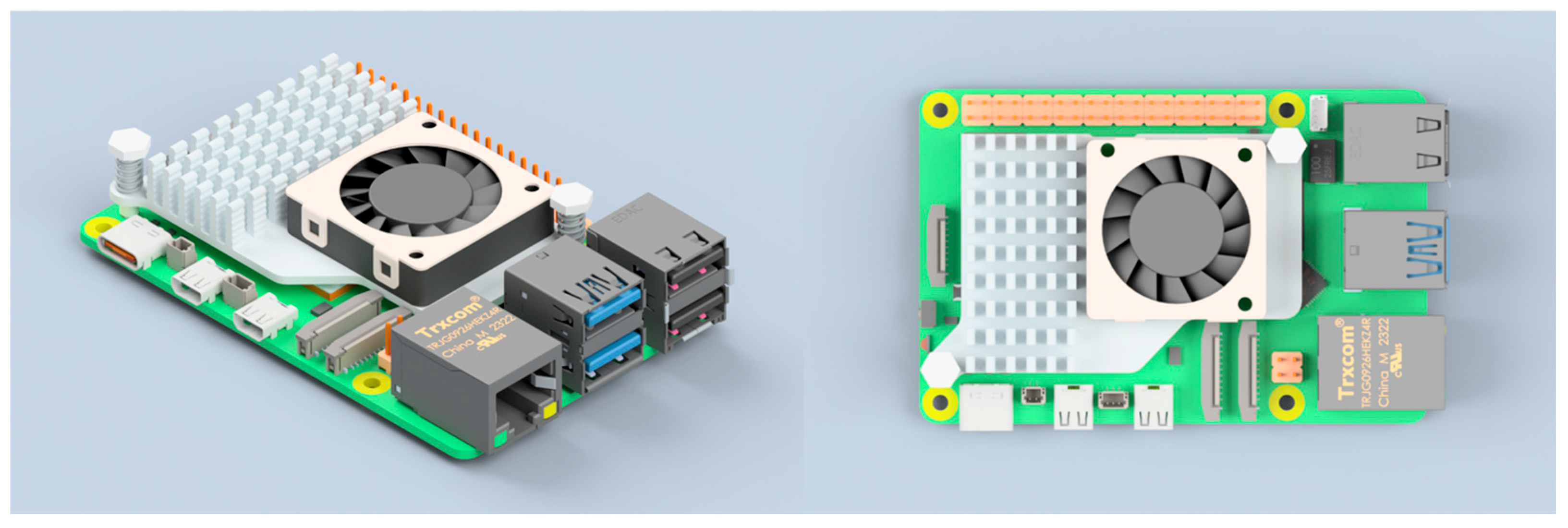

11]. These developments align with the objectives of the present study, which proposes a compact CNN architecture optimized for deployment on Raspberry Pi 5 (Raspberry Pi Ltd., Cambridge, UK; manufactured at Sony UK Technology Centre, Pencoed, South Wales, UK).

Several studies have validated the effectiveness of conventional image processing and machine learning techniques for the classification of legumes and grains. These methods are often low-cost and applicable in practical agricultural contexts. For example, a vision-based system using classifiers such as SVM, MLP, k-Nearest Neighbors (k-NN), and Decision Trees distinguished seven varieties of dry beans, with the SVM achieving 100% accuracy for some categories [12]. Similarly, coffee beans were classified by species and origin using Artificial Neural Networks (ANN) and k-NN, achieving up to 96.66% accuracy based on simple morphological features [13]. In another study, a vibratory conveyor system combined with image processing effectively rejected defective lima beans, achieving over 95% efficiency after 2200 trials [14]. Basic RGB extraction techniques were also applied to identify and remove black coffee beans with 100% accuracy on test images [15]. Additionally, RGB histograms were used to evaluate the “hard-to-cook” phenomenon in red beans, showing a strong correlation between color change and water absorption capacity, which enabled non-destructive ripeness evaluation [16].

Beyond traditional approaches, many researchers have employed ANN and spectral or optical sensors for non-invasive quality assessment. In one study, backscattering laser imaging combined with ANN accurately predicted firmness and total soluble solids in apricots, achieving R² values of 0.974 and 0.963, respectively [17]. Hyperspectral sensing has also been used to estimate water content and classify ripeness in strawberries, achieving over 98% classification accuracy with an RMSE of 0.0092 g/g [18]. CNNs have also been employed for real-time fruit spoilage detection, with the VGG16 model reaching 95% accuracy [19].

Further advancements in CNN-based models have introduced architectures such as TRA-CNN, which integrate spatial and channel attention mechanisms to enhance classification accuracy. In jujube fruit classification, TRA-CNN achieved 94.77% accuracy by incorporating features from fruits, leaves, and textures [9]. Other approaches have used MobileNet-v2 features in combination with machine learning classifiers to classify Indian mangoes, achieving 99.5% accuracy [20]. Hybrid architectures combining CNNs with Recurrent Neural Networks (RNN) and Long Short-Term Memory (LSTM) units have also outperformed SVM and Feedforward Neural Networks (FFNN) in fruit classification tasks [21]. In coffee classification, SqueezeNet models enhanced with Vision Transformers (ViT) reached 85.9% accuracy in identifying bean varieties and roast levels [22]. These findings underscore the importance of hybrid and deep architectures in precision agriculture [23].

The integration of real-time object detection with industrial vision systems has led to substantial gains in sorting efficiency. For instance, the Mask-RCNN algorithm, combined with ResNet50, detected coffee defects with a mean average precision (mAP) of 0.99 and an accuracy of 94% at a conveyor speed of 35 RPM [24]. In the table olive industry, a prototype system incorporating liquid transport and traceability mechanisms achieved 98% efficiency in removing small fruits and 89.3% accuracy in maturity classification, offering both logistical and quality improvements [25].

Despite these technological advancements, native crops like pajuro have received limited attention. The absence of specialized datasets and tailored computational models hinders the development of automated solutions suited to local agricultural conditions. This study addresses this gap by developing a computer vision-based classification system for pajuro seeds. The proposed system offers a methodological framework for assessing ripeness and size, incorporating visual descriptors informed by local practices.

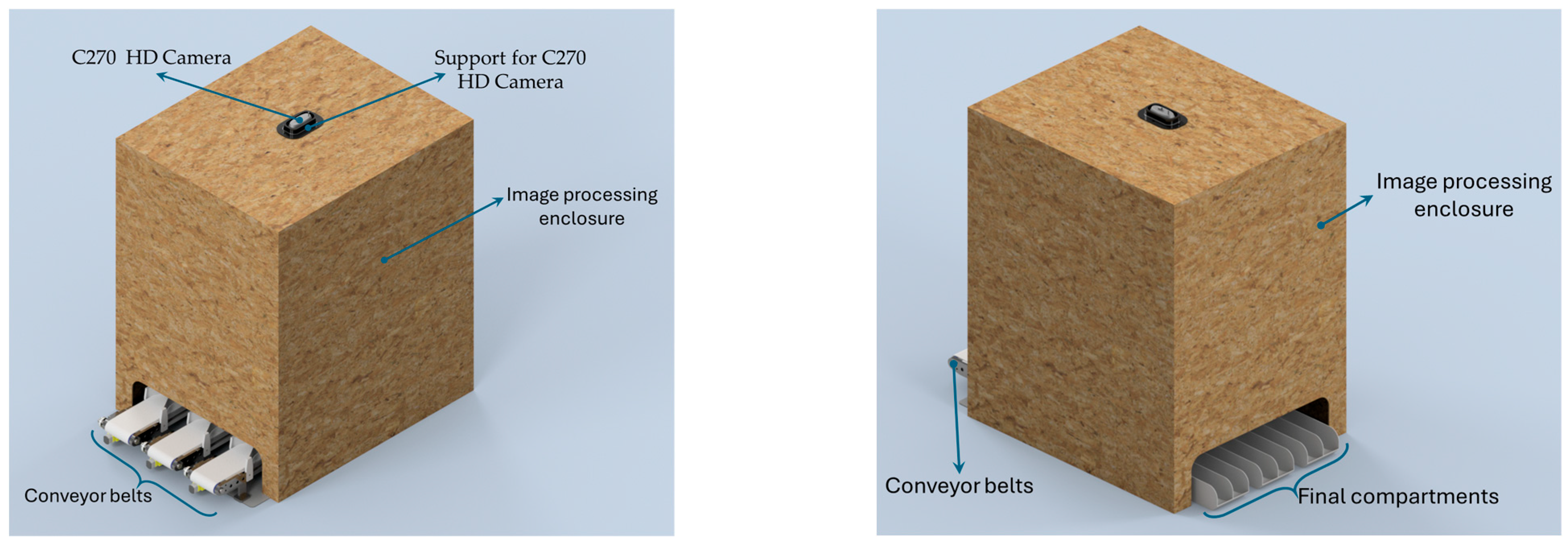

This work introduces methodological innovations that extend beyond engineering implementation. First, a lightweight convolutional neural network (CNN) was developed from scratch to enable real-time classification on the Raspberry Pi 5, a low-cost embedded device, without relying on external accelerators. The architecture uses the ReLU activation function in hidden layers, the Adam optimizer for training, and a SoftMax layer for final classification. Second, a fixed-region segmentation strategy was adopted to reduce inference time and prevent overcounting by restricting the analysis to predefined zones aligned with the conveyor layout. Third, the classification module was integrated with real-time actuator control through the GPIO interface of the Raspberry Pi, ensuring low-latency and deterministic sorting. Together, these contributions result in a compact, efficient, and scalable framework for AI-based agricultural classification in resource-constrained environments.
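The fixed-region strategy can be pictured as cropping the same predefined boxes out of every frame, so that no object localization step is needed. The sketch below is a minimal illustration under stated assumptions: the 140-pixel patch size is the one reported later in the Results, while the 640 × 480 frame size, the three region origins, and all function names are hypothetical and not taken from the study.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def fixed_regions(origins: List[Tuple[int, int]], size: int,
                  frame_w: int, frame_h: int) -> List[Box]:
    """Return one square crop box per predefined origin.

    Raises ValueError if a box would fall outside the frame.
    """
    boxes = []
    for left, top in origins:
        right, bottom = left + size, top + size
        if left < 0 or top < 0 or right > frame_w or bottom > frame_h:
            raise ValueError(f"region at {(left, top)} leaves the frame")
        boxes.append((left, top, right, bottom))
    return boxes


def crop(frame: List[List[int]], box: Box) -> List[List[int]]:
    """Cut one region out of a frame stored as rows of pixel values."""
    left, top, right, bottom = box
    return [row[left:right] for row in frame[top:bottom]]


# Hypothetical layout: three seed positions in a 640x480 frame.
ORIGINS = [(60, 170), (250, 170), (440, 170)]
boxes = fixed_regions(ORIGINS, size=140, frame_w=640, frame_h=480)
frame = [[0] * 640 for _ in range(480)]  # placeholder grayscale frame
patches = [crop(frame, b) for b in boxes]
print(len(patches), len(patches[0]), len(patches[0][0]))
```

Because the boxes never change, each seed position yields exactly one patch per frame, which is one way the overcounting mentioned above can be avoided.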

The remainder of this article is structured as follows. Section 2 details the materials and methods used in this study, including the classification criteria for pajuro seeds, the mechanical design of the sorting system, and the hardware components such as the Raspberry Pi 5 and the camera. This section also outlines the methodology for dataset preparation, image processing, model comparison, and the logic behind the selection system. Section 3 presents and discusses the experimental results, focusing on the training behavior and stability of the proposed CNN, an evaluation of alternative YOLO-based models, and a performance comparison against human classification. Finally, Section 4 provides the conclusions and highlights the main contributions and potential future improvements of the proposed system.

3. Results and Discussion

3.1. Training Behavior and Model Stability

The proposed convolutional neural network was trained using a five-fold stratified cross-validation protocol applied to a dataset of 2010 labeled images. Each image was segmented into regions of 140 × 140 pixels and categorized into two classes: good_state (optimally ripe) and bad_state (overripe). For each fold, the model was trained for 30 epochs using a batch size of 16, the Adam optimizer with a learning rate of 0.001, and the categorical cross entropy loss function.
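A stratified five-fold split of this kind can be sketched in plain Python as follows. This is an illustration of the protocol, not the authors' training script: the class split of 1200/810 is hypothetical (only the total of 2010 comes from the text), the names are illustrative, and the model-fitting step is left as a comment.

```python
import random
from collections import defaultdict
from typing import Dict, List


def stratified_folds(labels: List[str], k: int, seed: int = 0) -> List[List[int]]:
    """Assign sample indices to k folds, keeping class ratios similar."""
    rng = random.Random(seed)
    by_class: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal indices round-robin so each fold gets about 1/k of every class.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


# Training settings quoted in the text.
CONFIG = {"epochs": 30, "batch_size": 16, "optimizer": "adam",
          "learning_rate": 0.001, "loss": "categorical_crossentropy"}

# Hypothetical class split; only the total of 2010 comes from the text.
labels = ["good_state"] * 1200 + ["bad_state"] * 810
folds = stratified_folds(labels, k=5)

for i, val_idx in enumerate(folds):
    held_out = set(val_idx)
    train_idx = [j for j in range(len(labels)) if j not in held_out]
    good = sum(labels[j] == "good_state" for j in val_idx)
    print(f"fold {i}: train={len(train_idx)} val={len(val_idx)} good_in_val={good}")
    # A real run would build a fresh model here and fit it with CONFIG.
```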

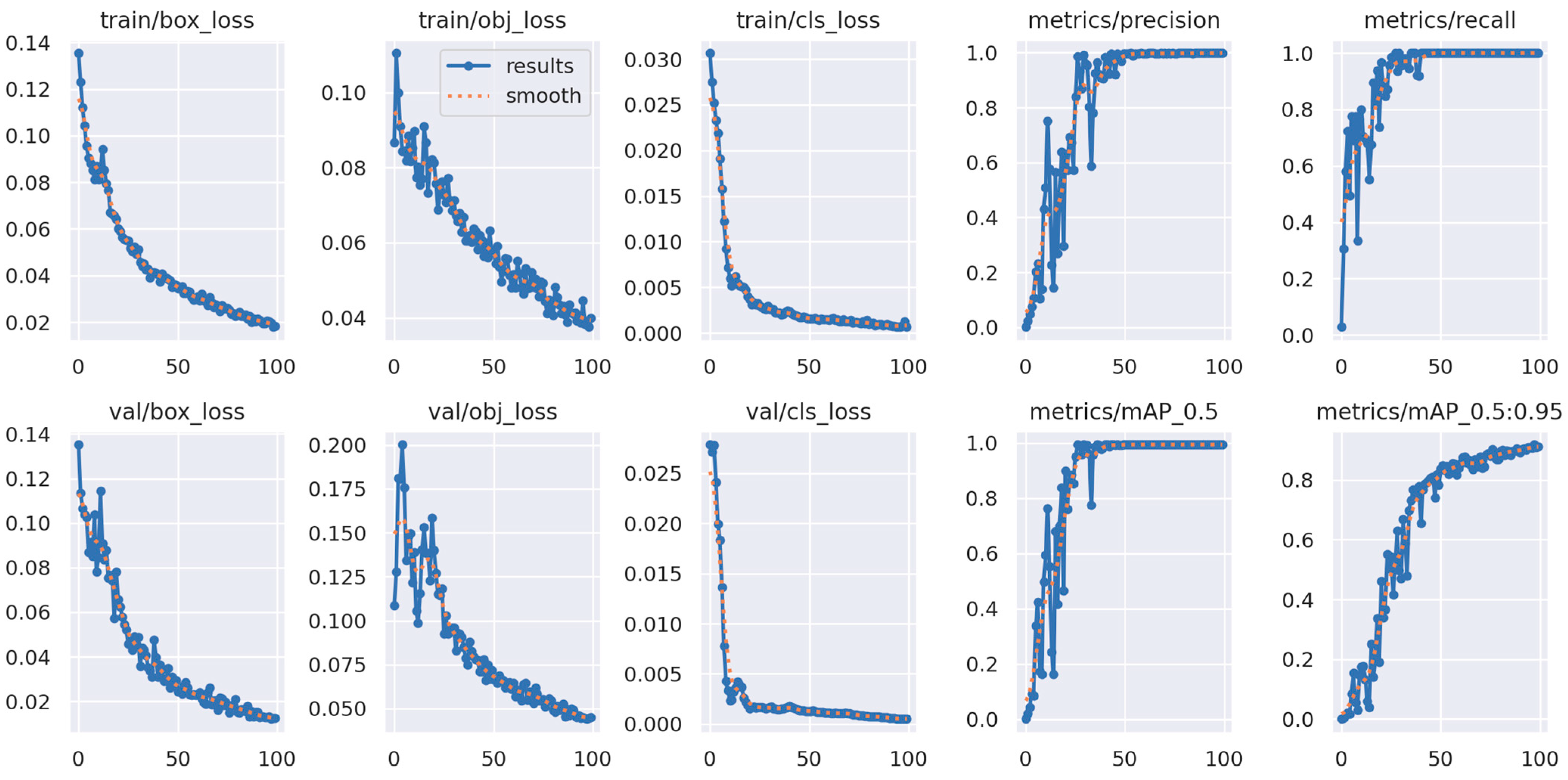

The average training and validation curves obtained from the cross-validation procedure are shown in Figure 12. The model converged early and remained stable across the five folds: classification accuracy consistently exceeded 98 percent after the first 10 epochs, and loss values approached zero before epoch 20. The averaged trends show minimal signs of overfitting, which is attributed to the inclusion of dropout layers and the fixed-region segmentation strategy applied during preprocessing. The final average validation accuracy reached 99.5 percent, confirming the model’s ability to reliably extract ripeness-related features from RGB input data under controlled conditions.

To evaluate the risk of overfitting in the proposed convolutional neural network, a five-fold cross-validation strategy was implemented during training. The classification metrics obtained across all folds were consistent, demonstrating strong generalization capability. Furthermore, the training and validation curves exhibited stable and parallel convergence, with no evidence of divergence or oscillatory behavior. These results indicate that the model successfully avoids overfitting, despite the moderate size of the dataset (2010 images), thanks to its compact design and the relatively low number of trainable parameters.

In contrast, the YOLOv5 model, although capable of processing entire images in real time, exhibited slightly lower classification accuracy when evaluated under the same conditions. While it achieved high mean average precision (mAP) values and provided integrated object localization, it showed greater sensitivity to background noise and object scale variation. These factors, combined with its higher computational demand, made it less efficient in scenarios with fixed and known object positions, such as the one addressed in this study. The training and validation loss curves of the YOLOv5 model, generated during the training phase on Google Colab, are presented in Figure 13. These curves illustrate the model’s convergence behavior and performance stability during the learning process.

Overall, the proposed CNN model outperformed YOLOv5 in terms of classification accuracy, training consistency, and suitability for deployment in structured environments. Its tailored architecture, specifically designed for the characteristics of the pajuro grain dataset, resulted in a more reliable and resource-efficient solution.

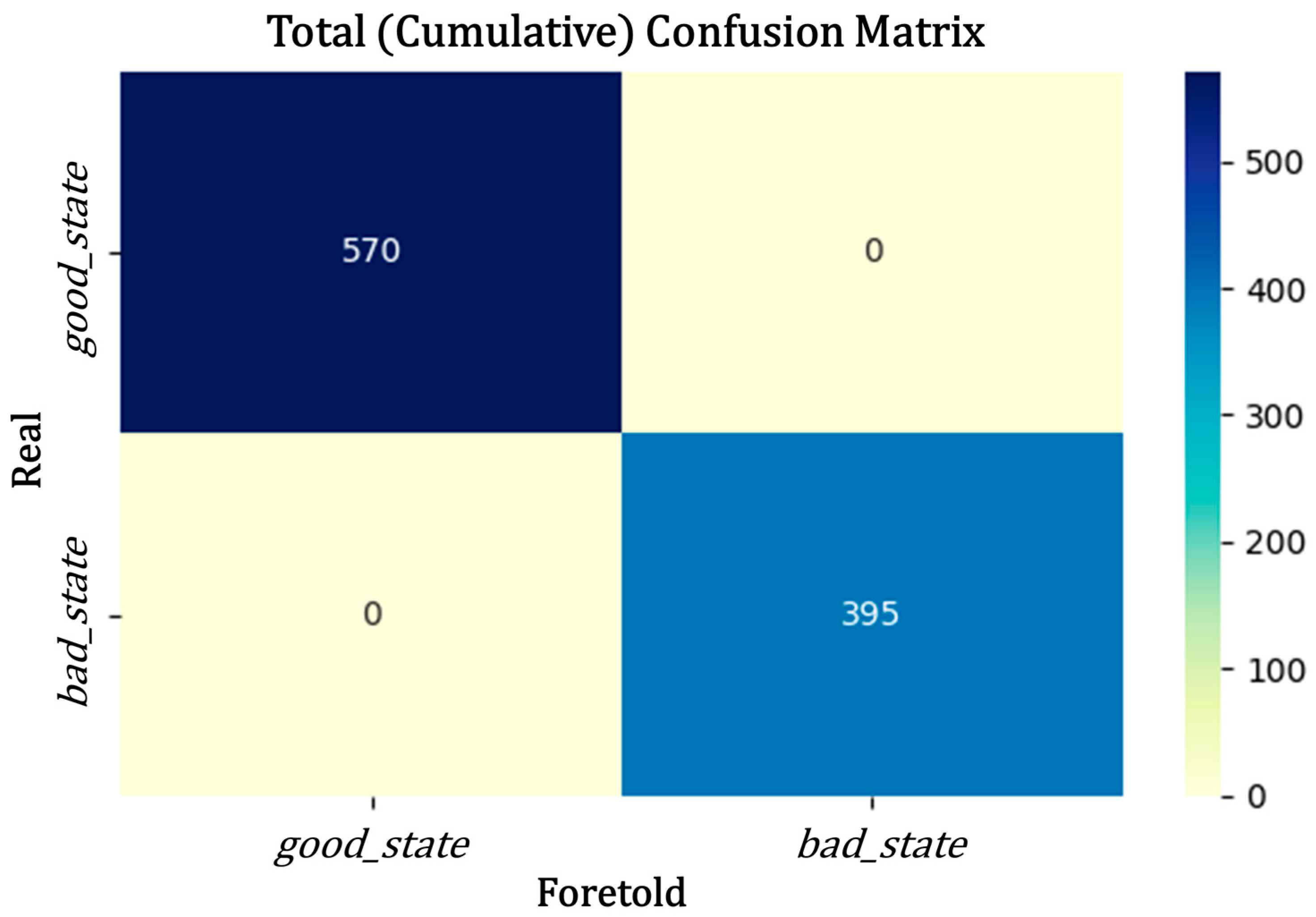

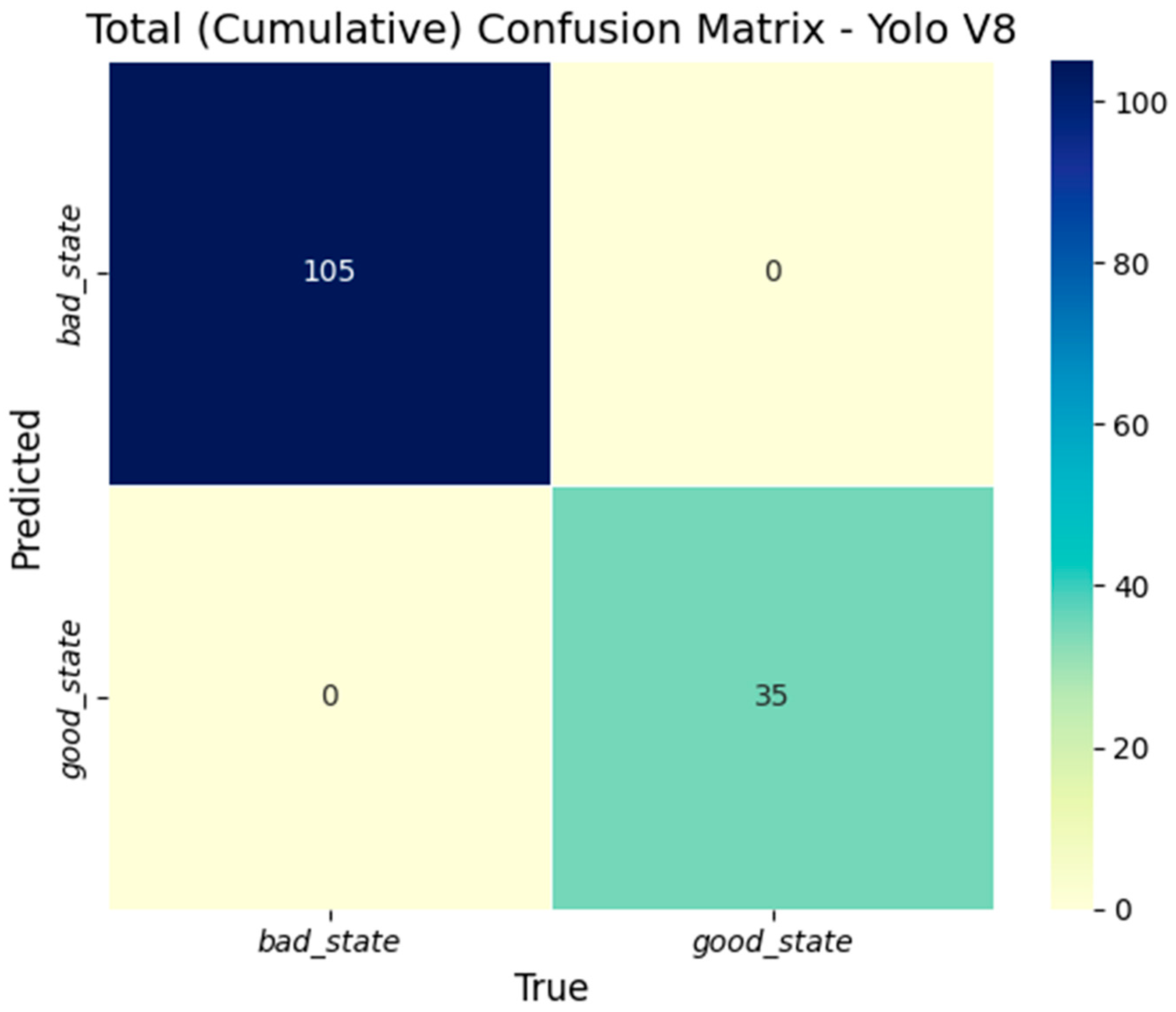

3.2. Cumulative Classification Results

The cumulative performance of the proposed model across all test sets demonstrated perfect classification: all 570 segments labeled as good_state and 395 labeled as bad_state were correctly identified. These labels, defined during the manual annotation phase of the dataset, represent the two quality categories used by the vision system: good_state corresponds to acceptable pajuro beans and bad_state to defective ones. The aggregated confusion matrix, shown in Figure 14, confirms the absence of misclassifications across both classes.

Such reliability is essential in real-time sorting environments, where even isolated errors can result in incorrect actuator responses, either by rejecting viable beans or by failing to remove defective ones.

The precision, recall, and F1 score values for both the proposed CNN and YOLOv5s models are presented in Table 7. While the YOLO model achieved strong performance, the CNN architecture demonstrated superior results in both classes, reaching perfect or near-perfect scores. These findings indicate that models specifically designed with domain-specific constraints, such as consistent seed morphology and fixed image patch sizes, can outperform general object detectors in highly structured and specialized classification tasks.

The discrepancy in performance is particularly evident in the recall values, where the proposed CNN model achieved 0.997 for both classes, indicating a lower rate of false negatives. This aspect is especially relevant in agricultural sorting tasks, where failing to detect a defective or overripe seed can compromise product quality or contaminate downstream processing stages. Similarly, the F1 scores confirm the model’s consistency across classes, showing no significant tradeoff between precision and recall.
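For reference, the three metrics discussed here follow directly from the confusion-matrix counts of one class treated as positive. The sketch below shows the standard definitions; the counts passed to it are hypothetical and are not the values behind Table 7.

```python
from typing import Dict


def binary_metrics(tp: int, fp: int, fn: int) -> Dict[str, float]:
    """Precision, recall and F1 for one class treated as positive."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts with bad_state as the positive class:
# fn counts defective seeds that slipped through as good_state.
m = binary_metrics(tp=390, fp=4, fn=5)
print({name: round(value, 3) for name, value in m.items()})
```

In a sorting context, a false negative for bad_state is a defective seed that stays on the line, which is why recall receives particular attention above.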

In terms of efficiency, the average inference time per image segment was 12.3 milliseconds for the CNN model, compared to 41.7 milliseconds for YOLO. This difference, representing more than a threefold increase in speed, is primarily attributed to the CNN’s simpler architecture, optimized input size, and the absence of a complete object localization pipeline. With an inference rate of approximately five frames per second, the proposed model supports real-time operation, even on low-power devices. In contrast, YOLO’s more general framework, although robust and widely adopted, imposes a higher computational load and is less suited for environments with limited hardware resources and energy availability.

This substantial gain in both speed and accuracy positions the proposed CNN model as a practical solution for embedded agricultural applications, such as seed sorting or quality control systems operating on platforms like the Raspberry Pi. Moreover, the fixed input configuration used during training ensures stable performance, as the model does not need to generalize across varying object scales, random positions, or complex backgrounds, which are often sources of error in task-specific scenarios using general detection frameworks.

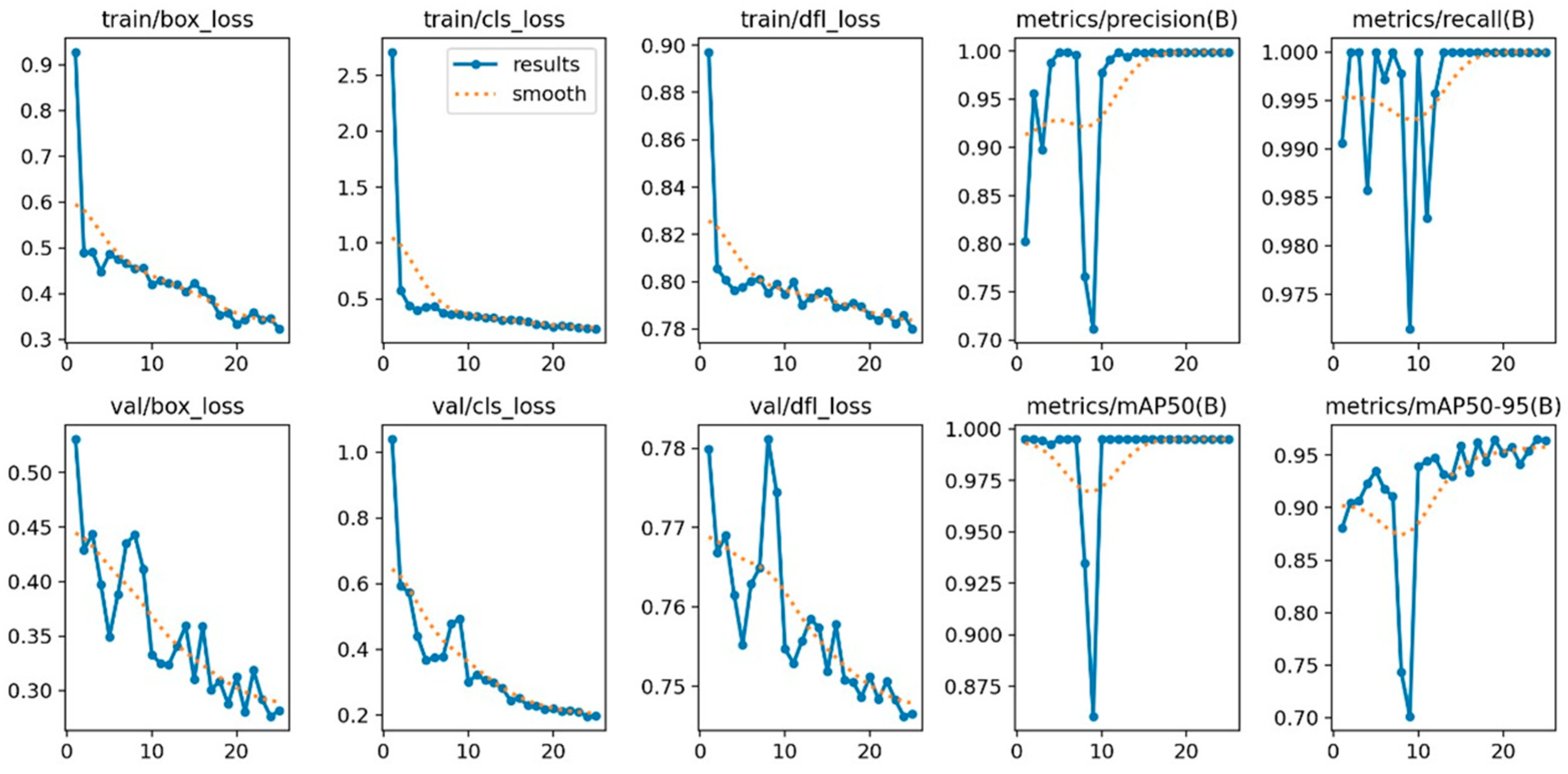

3.3. Evaluation of Alternative YOLO Models

To support the selection of YOLOv5s as the baseline model, additional experiments were conducted using two alternative object detection architectures: YOLOv5n and YOLOv8. These evaluations were performed under identical classification tasks and hardware constraints to ensure fair comparison.

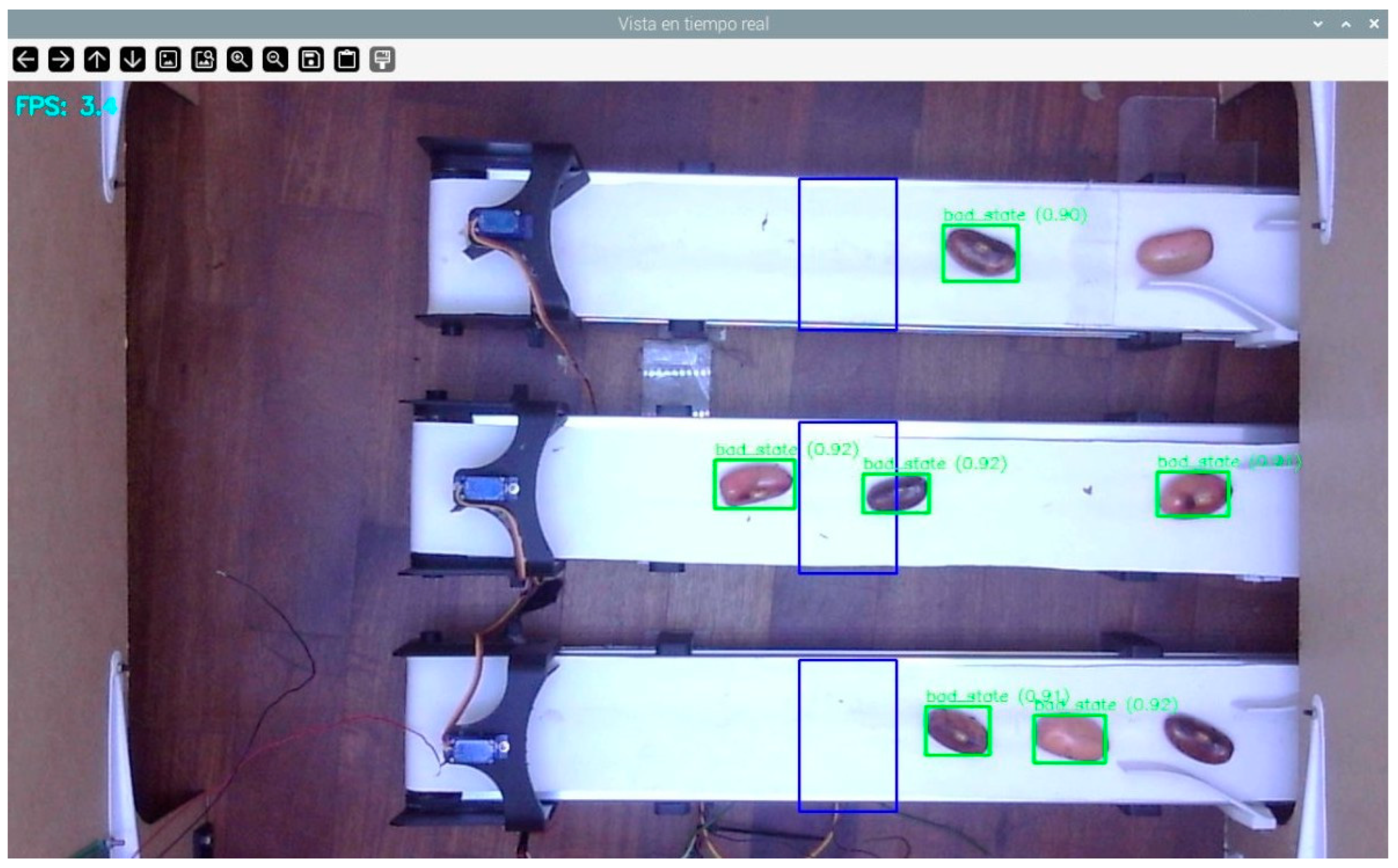

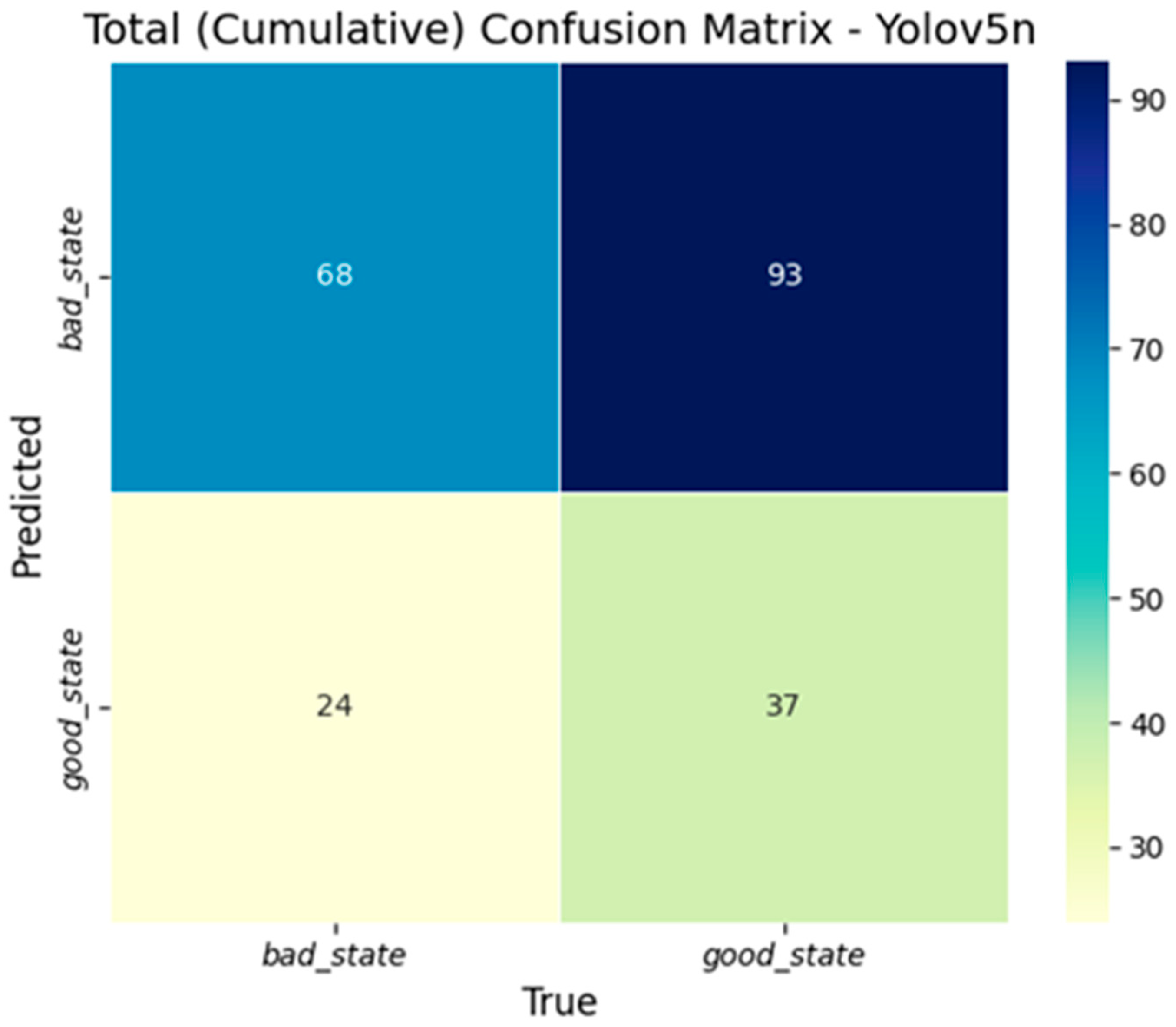

YOLOv5n was selected due to its lightweight design, which prioritizes inference speed and a reduced computational load. On the Raspberry Pi 5, it achieved an average processing speed of 3.5 frames per second. However, this gain in speed came at the expense of classification accuracy. As shown in Figure 15, the model systematically misclassified all detected regions as “bad_state”, regardless of actual seed maturity. This behavior is further confirmed by the confusion matrix in Figure 16, which reveals a complete absence of true positives for the “good_state” category. Despite its high speed, YOLOv5n fails to meet the accuracy requirements necessary for reliable real-time agricultural sorting, making it unsuitable for the intended application.

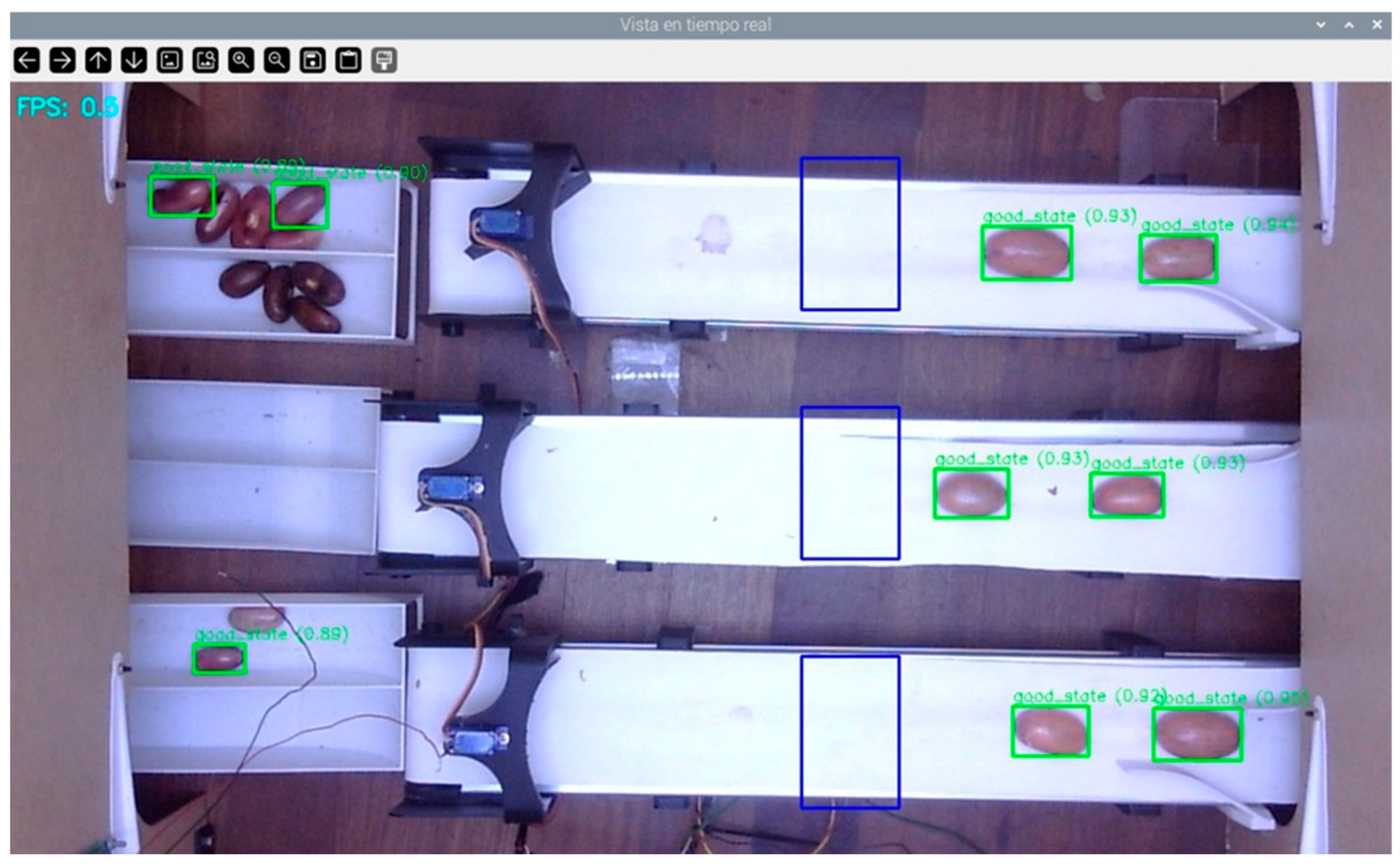

In contrast, YOLOv8 exhibited markedly superior classification performance. Validation metrics indicated a precision of 99.9%, a recall of 99.8%, and an mAP50 score of 96.9%. The confusion matrix presented in Figure 17 confirms complete separation between the “good_state” and “bad_state” classes, reflecting high predictive reliability. Furthermore, the training curves shown in Figure 18 demonstrate consistent loss minimization and stable evolution of performance metrics over 25 epochs, suggesting effective learning and generalization.

Despite these strengths, inference tests conducted on the Raspberry Pi 5 revealed a critical limitation: the model achieved an average frame rate of only 0.5 fps, as depicted in Figure 19. This low inference speed makes YOLOv8 unsuitable for real-time deployment in conveyor-based sorting systems, where rapid detection and actuation are essential for operational viability.

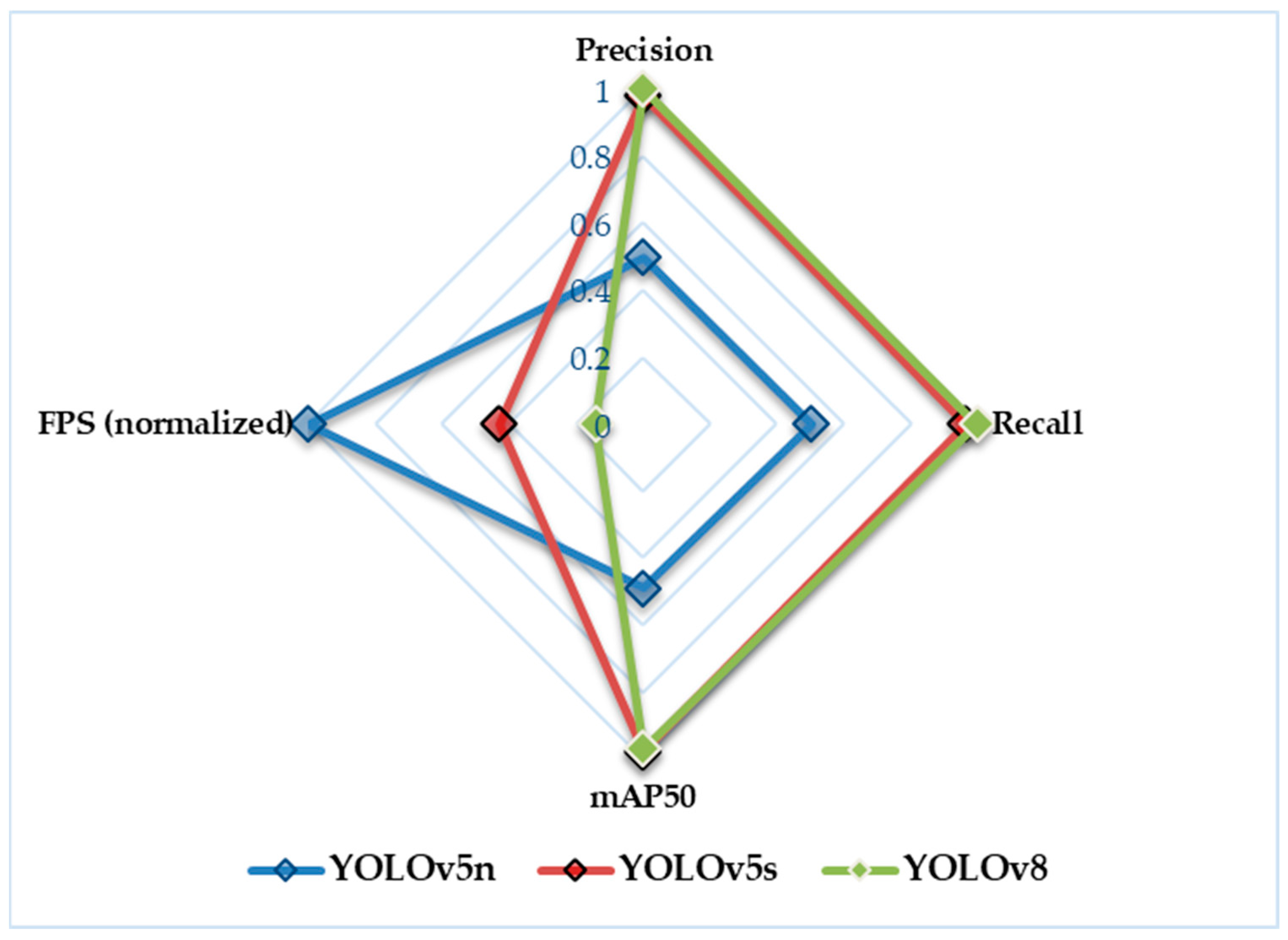

To summarize and compare the performance of all evaluated YOLO models, Table 8 presents the key classification metrics and inference speeds. Additionally, a radar plot is included in Figure 20 to visually illustrate the trade-offs between accuracy and processing speed across the three architectures.

The results confirm that YOLOv5s offers the most balanced solution for the embedded application requirements of this study. It achieves high classification accuracy while maintaining a processing speed compatible with real-time deployment on the Raspberry Pi 5. Although YOLOv8 demonstrated the highest accuracy, its low inference speed (0.5 fps) renders it unsuitable for time-sensitive sorting tasks. Conversely, YOLOv5n delivered a higher processing speed (3.5 fps) but failed to provide reliable classification, as it misclassified all samples.

The fps values reported in Table 8 were obtained using the same real-time evaluation methodology described in Section 2.2.4, ensuring consistent and comparable results. Based on this analysis, YOLOv5s was selected as the baseline architecture, as it provides the best trade-off between detection accuracy and inference latency under the hardware constraints of this study.

3.4. Comparison with Human Performance

To evaluate the effectiveness of the automated classification system relative to manual labor, a comparative study was carried out involving human operators. Each bean was individually assessed by three experienced local workers with practical knowledge of pajuro harvesting and manual sorting procedures in the Luya region (Amazonas, Peru). The classification results were then compared with the predictions produced by both neural network models.

For reference labeling, a consensus approach was adopted: the label assigned to each bean corresponded to the majority vote among the three evaluators, meaning that at least two operators agreed on the same class. This consensus served as the ground truth to assess both the accuracy of individual human performance and the reliability of the AI models under realistic classification scenarios.
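The consensus rule amounts to a majority vote over the three operator labels for each bean. A minimal sketch is given below; the four example votes and the function names are hypothetical and serve only to show the mechanics.

```python
from collections import Counter
from typing import List, Sequence


def consensus_label(votes: Sequence[str]) -> str:
    """Return the label chosen by a strict majority of the evaluators."""
    label, count = Counter(votes).most_common(1)[0]
    if count * 2 <= len(votes):
        raise ValueError("no strict majority")
    return label


def accuracy(predictions: List[str], reference: List[str]) -> float:
    """Fraction of predictions that match the reference labels."""
    hits = sum(p == r for p, r in zip(predictions, reference))
    return hits / len(reference)


# Hypothetical votes from three operators for four beans.
votes_per_bean = [
    ("good_state", "good_state", "bad_state"),
    ("bad_state", "bad_state", "bad_state"),
    ("good_state", "bad_state", "bad_state"),
    ("good_state", "good_state", "good_state"),
]
consensus = [consensus_label(v) for v in votes_per_bean]
operator_a = [v[0] for v in votes_per_bean]
print(consensus)
print(accuracy(operator_a, consensus))
```

With three evaluators and two classes a strict majority always exists, so the error branch only matters if the panel size or the number of classes changes.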

The three human operators, all of whom had previous experience in manual sorting of agricultural grains, showed variability in their classification decisions. This outcome is consistent with existing findings in the literature related to manual grading of agricultural products [25]. A total of 180 samples were independently evaluated by each operator. The average classification time per bean was 1.47 s, with noticeable variability attributed to subjective judgment and operator fatigue. A summary of the comparative results between the automated models and human operators, including accuracy, consistency, and average classification time, is provided in Table 9.

Although human performance remains reasonably high, the automated systems, particularly the proposed CNN model, demonstrate a clear advantage in both accuracy and consistency. The classification speed achieved by the CNN model, which operates in less than 13 milliseconds per segment, significantly exceeds what is feasible through manual inspection. This efficiency highlights its suitability for implementation in continuous agroindustrial processes that require real-time decision making.

Furthermore, the observed variation among human operators, with an average agreement rate of 89.5 percent, emphasizes the need for standardization in quality control tasks. Inconsistencies caused by fatigue, differences in experience, and cognitive bias can lead to unreliable sorting outcomes. In contrast, artificial intelligence models trained on well-annotated datasets apply consistent classification criteria, reduce subjectivity, and ensure reproducible results across evaluations.

Statistical Analysis of Model Disagreements

To evaluate whether the differences observed between classification methods were statistically significant rather than the result of random variation, McNemar’s test with Yates’ continuity correction was applied. This non-parametric test is specifically designed to compare the performance of two classifiers on the same dataset, focusing on asymmetries in their classification errors.

Unlike traditional accuracy-based comparisons, McNemar’s test does not rely on overall correct predictions but instead assesses whether one classifier tends to outperform the other in the cases where they disagree. This approach is particularly useful when both models achieve high overall accuracy, as it can reveal systematic performance differences that would otherwise remain undetected.

Two pairwise comparisons were conducted: (1) between the proposed CNN model and the YOLOv5s detector, and (2) between the CNN model and human operators. Each evaluation involved a balanced test set of 900 samples. The corresponding contingency tables are presented in Table 10 and Table 11, which show the number of instances where both classifiers agreed, as well as cases in which only one provided the correct prediction.

In the first comparison, between the CNN and YOLOv5s, McNemar’s test resulted in a chi-squared statistic of 9.33 and a p-value of 0.0022. This indicates that the likelihood of such a disagreement pattern occurring by chance, assuming both models had equal error distributions, is only 0.22%. Since this p-value is well below the standard significance threshold of 0.05, the null hypothesis of equivalent performance is rejected. Therefore, it can be concluded that the CNN significantly outperforms YOLOv5s in scenarios where their predictions differ.
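McNemar’s statistic depends only on the two discordant cells of the contingency table. The sketch below implements the continuity-corrected form using the standard library; the counts b = 18 and c = 3 are hypothetical, picked only so that the statistic comes out near the value quoted above, and should not be read as the entries of Table 10.

```python
import math
from typing import Tuple


def mcnemar_yates(b: int, c: int) -> Tuple[float, float]:
    """McNemar chi-squared with Yates' continuity correction.

    b: cases only classifier A got right.
    c: cases only classifier B got right.
    Returns (statistic, p_value) for one degree of freedom.
    """
    if b + c == 0:
        return 0.0, 1.0
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # With 1 degree of freedom, the chi-squared survival function
    # equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Hypothetical discordant counts (not taken from Table 10).
stat, p = mcnemar_yates(b=18, c=3)
print(f"chi2 = {stat:.2f}, p = {p:.4f}")
```

Cases where both classifiers are right or both are wrong do not enter the formula, which is why the test stays informative even when overall accuracies are close.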

In the second comparison, between the CNN model and human operators, McNemar’s test yielded a chi-squared statistic of 66.96 with a p-value lower than 0.0001. This extremely low probability strongly indicates that the disagreement pattern is not due to chance but rather reflects a systematic superiority of the CNN model over human judgment in classification tasks. The result confirms that the CNN consistently outperforms human operators, particularly in challenging or ambiguous cases.

Taken together, these findings provide robust statistical support for the reliability of the proposed CNN model. Not only does it achieve high overall accuracy, but it also demonstrates superior performance in instances where traditional classification methods, such as manual evaluation by human operators or general-purpose object detectors like YOLOv5s, tend to be less effective. The application of McNemar’s test confirms that the differences observed are statistically significant and reflect a genuine advantage of the proposed approach.

3.5. Discussion of Relative Performance

The results obtained demonstrate a clear superiority of the automated classification models over manual sorting by human operators, both in terms of accuracy and processing speed. Among the evaluated architectures, the custom-designed CNN model achieved perfect classification performance along with the shortest inference time, confirming the advantages of employing task-specific neural networks in agricultural applications.

These findings are consistent with previous studies in other crops, where artificial intelligence-based classifiers have outperformed human operators not only in precision but also in repeatability. For example, one study reported 94 percent accuracy using a YOLOv3 model for cherry coffee grading, resulting in significant reductions in manual processing time [28]. In comparison, the custom CNN model in this study achieved 100 percent accuracy on the pajuro test set and processed each sample in under 13 milliseconds, demonstrating both technical precision and operational viability in real-time conditions.

The slight performance gap between the custom CNN model and the YOLO architecture highlights the importance of model specialization. While YOLO is a general-purpose framework suitable for a wide range of real-time detection tasks, its structure is not optimized for binary classification problems with low visual variability, such as the maturity grading of pajuro beans. In contrast, the custom CNN model was specifically designed and trained for this task, incorporating tailored parameters and fixed input segmentation strategies that prevent overcounting and enhance classification accuracy.

Furthermore, human decision making is inherently variable and subject to factors such as fatigue, prior experience, visual perception, and cognitive bias. As discussed in Section 3.4, the average agreement among human operators remained below 90 percent, and their classification speed was significantly slower than that of the automated models. These limitations reinforce the need to adopt automated alternatives, particularly in regions where industrial-scale pajuro processing remains underdeveloped.

The successful deployment of the custom CNN model on a low-cost embedded platform such as the Raspberry Pi further strengthens its practical applicability. This hardware integration allows for scalable implementation in rural or remote environments, thereby promoting the technological development of underutilized crops like pajuro and contributing to improved postharvest quality control in local production chains.

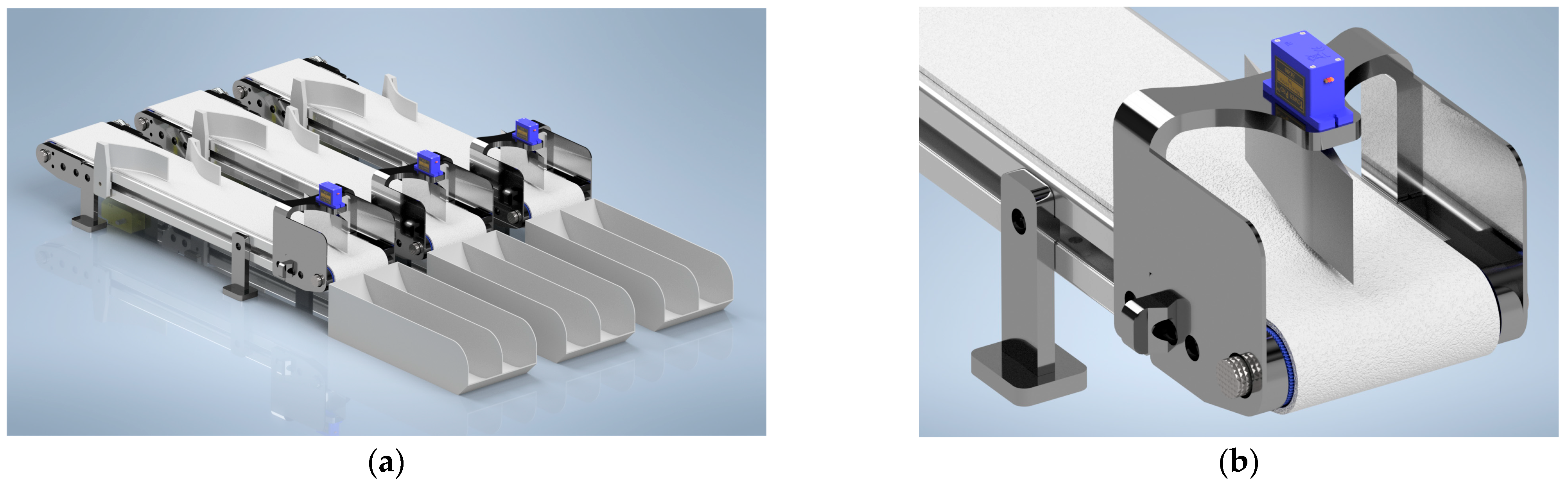

From a practical standpoint, the mechanical configuration of the proposed system enables a maximum processing capacity of approximately 81 kg of pajuro per hour per conveyor belt, assuming continuous operation and optimal grain flow. This estimate is based on experimental timing and spacing conditions: each grain requires approximately six seconds to travel from entry to sorting, and a minimum spacing of 50 mm is necessary to ensure accurate classification. With an effective conveyor length of 57 cm, the system can process up to nine grains per cycle, yielding a total of 5400 grains per hour. Considering an average grain weight of 15 g, this corresponds to an output of 81 kg per hour. Such performance represents a substantial improvement over manual methods and reinforces the potential of the system for deployment in agroindustrial contexts.
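The capacity estimate can be reproduced from the three quantities given in the paragraph. In the sketch below, one "cycle" is read as the six-second travel time, which is an interpretation of the text rather than a stated definition; the comparison with the manual rate of 3.5 kg per hour uses the figure from the Introduction.

```python
TRAVEL_TIME_S = 6       # entry-to-sorting time per grain (from the text)
GRAINS_PER_CYCLE = 9    # grains handled per cycle (from the text)
GRAIN_MASS_G = 15       # average grain weight (from the text)
MANUAL_KG_PER_HOUR = 3.5  # manual sorting rate (from the Introduction)


def throughput(grains_per_cycle: int, cycle_s: float, grain_mass_g: float):
    """Return (grains per hour, kilograms per hour) for one conveyor."""
    cycles_per_hour = 3600 / cycle_s
    grains_per_hour = grains_per_cycle * cycles_per_hour
    kg_per_hour = grains_per_hour * grain_mass_g / 1000
    return grains_per_hour, kg_per_hour


grains_h, kg_h = throughput(GRAINS_PER_CYCLE, TRAVEL_TIME_S, GRAIN_MASS_G)
print(f"{grains_h:.0f} grains/h, {kg_h:.1f} kg/h")
print(f"ratio to manual sorting: {kg_h / MANUAL_KG_PER_HOUR:.1f}x")
```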

3.6. Comparison with Related Studies

The results obtained in this study, which employed a custom convolutional neural network for pajuro grain classification, demonstrate an average accuracy of 99.7 percent with inference times of only 12.3 milliseconds per segment. These outcomes not only surpass traditional manual classification methods but also outperform general architectures such as YOLO. Despite its extensive validation in agricultural applications, YOLO exhibited longer processing times of 41.7 milliseconds and slightly lower classification accuracy.

When compared with recent research on Egyptian cotton fiber classification using deep learning, the advantages of the proposed system become evident. In that study, pre-trained models including VGG19, AlexNet, and GoogleNet were applied to classify fibers from five cotton cultivars, achieving accuracies ranging from 75.7 to 90.0 percent depending on the cultivar and architecture used [27]. Although that work highlights the effectiveness of transfer learning and the handling of a diverse range of fiber qualities, training times were significantly longer, reaching up to 487.345 s, and optimized inference times for embedded hardware were not reported. This limits the applicability of such models in real-time scenarios, particularly in rural or resource-constrained environments.

Additionally, while the aforementioned study explored model fusion strategies to slightly improve accuracy, achieving up to 92.9 percent in the best configuration, the system proposed in the present work attains high performance without the need for fusion techniques. This results in lower computational complexity and improved operational efficiency. The superior performance can be attributed to the specialized nature of the custom convolutional neural network, which was specifically designed for the morphological characteristics of pajuro grains and the operational constraints of the processing environment. This contrasts with the more generic and resource-intensive pre-trained architectures employed in other studies.

While this study focused on a specific native crop, Erythrina edulis (pajuro), and was conducted in a controlled testing environment, the system was developed with scalability and adaptability as fundamental design principles. The modular structure of the solution, which includes a conveyor belt mechanism, an overhead camera, and a real-time classification module executed on a low-cost embedded platform, can be replicated in multiple parallel lines to increase processing capacity in industrial contexts. As described in Section 3.5, each conveyor unit is capable of handling up to 81 kg of seeds per hour. Therefore, by deploying several synchronized modules, the system can be scaled to meet higher production demands without substantially increasing computational requirements or hardware complexity.

Although the convolutional neural network was trained specifically using visual features extracted from pajuro seeds, the methodology applied in this study is broadly transferable. It integrates color-based segmentation, classification restricted to predefined regions of interest, and actuation through a servo-driven sorting mechanism. This approach can be adapted to other agricultural products that exhibit visually distinguishable maturity or quality indicators. By retraining the model with appropriately labeled datasets, the system can be extended to crops such as beans, maize, or coffee, as long as they present identifiable visual characteristics relevant for classification.

One limitation of the current implementation lies in its evaluation under controlled lighting and environmental conditions. While this approach facilitated consistent training and testing, it may reduce the model’s robustness in variable real-world scenarios. Future work will address this by incorporating data collected under diverse lighting conditions, camera orientations, and sensor types, thereby improving the model’s generalization capacity without requiring changes to the existing system hardware.

In addition, the conveyor belt operated at a fixed speed during evaluation to maintain synchronization between detection and actuation. However, the system’s processing loop is already optimized for low-latency execution, making it feasible to adapt to variable conveyor speeds through minor modifications to the timing logic. This opens the door for further experimentation on the relationship between belt velocity, accuracy, and sorting response time.
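One way to picture the required change to the timing logic is to compute the actuation delay from the belt speed rather than fixing it. The sketch below is an assumption-laden illustration: the camera-to-actuator distance and the belt speeds are hypothetical values, the function names are not from the study, and the only figure taken from this work is the 12.3 ms inference time.

```python
def actuation_delay_s(distance_m: float, belt_speed_m_s: float,
                      processing_latency_s: float = 0.0) -> float:
    """Time to wait after classification before triggering the servo.

    distance_m: distance from the camera's region of interest to the
    sorting gate (hypothetical parameter).
    """
    if belt_speed_m_s <= 0:
        raise ValueError("belt speed must be positive")
    travel_time_s = distance_m / belt_speed_m_s
    delay_s = travel_time_s - processing_latency_s
    if delay_s < 0:
        raise ValueError("seed reaches the gate before classification finishes")
    return delay_s


def max_belt_speed_m_s(distance_m: float, processing_latency_s: float) -> float:
    """Fastest belt speed at which classification still finishes in time."""
    if processing_latency_s <= 0:
        raise ValueError("processing latency must be positive")
    return distance_m / processing_latency_s


if __name__ == "__main__":
    latency_s = 0.0123  # 12.3 ms inference time reported in this work
    for speed in (0.05, 0.10, 0.20):  # hypothetical belt speeds in m/s
        print(speed, round(actuation_delay_s(0.30, speed, latency_s), 4))
```

In practice the latency term would also need to include image capture, segmentation, and servo response time, which this sketch leaves out.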

Finally, although this work focused specifically on pajuro seeds, the modular design of both hardware and software components makes the system readily adaptable to other native legumes that share similar visual traits. Future developments will focus on validating the system’s robustness, flexibility, and scalability across a broader range of agricultural applications.

4. Conclusions

This study presented a lightweight convolutional neural network specifically designed for the classification of pajuro (Erythrina edulis) grains according to their ripeness stage. The proposed model was trained using stratified five-fold cross-validation and achieved an average validation accuracy of 99.7 percent, with near-perfect precision, recall, and F1 scores for both optimally ripe and overripe classes. These results confirm the model’s robustness and reliability under controlled imaging conditions.
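For readers unfamiliar with the validation scheme, the sketch below illustrates what a stratified five-fold split does: each fold preserves the class proportions of the full dataset. It is a pure-Python illustration, not the tooling used in this study; the function name, the class counts in the example, and the random seed are hypothetical.

```python
import random
from collections import defaultdict
from typing import Hashable, Iterator, List, Sequence, Tuple


def stratified_kfold(labels: Sequence[Hashable], k: int = 5,
                     seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, validation_indices) for k stratified folds."""
    # Group sample indices by class label.
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    # Shuffle within each class, then deal indices round-robin into folds
    # so every fold receives roughly the same share of each class.
    rng = random.Random(seed)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)

    # Each fold serves once as the validation set.
    for i in range(k):
        val = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i
                       for idx in fold)
        yield train, val


if __name__ == "__main__":
    # Hypothetical class counts, for illustration only.
    labels = ["ripe"] * 50 + ["overripe"] * 25
    for train, val in stratified_kfold(labels):
        n_ripe = sum(labels[i] == "ripe" for i in val)
        print(len(train), len(val), n_ripe)
```

The average validation accuracy reported above corresponds to the mean of the per-fold accuracies obtained on the five validation sets.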

In comparison with the YOLOv5s architecture, a widely used general-purpose object detector, the proposed CNN model consistently outperformed it across all evaluation metrics. While YOLOv5s achieved precision and recall values close to 97 and 98 percent, respectively, the custom network exceeded 99 percent in both categories. This improvement reflects a significant reduction in misclassifications and a more consistent performance, particularly under structured input conditions.

Beyond classification accuracy, the model also demonstrated a notable advantage in computational efficiency. With an average inference time of 12.3 milliseconds per segment, considerably faster than the 41.7 milliseconds observed for YOLOv5s, the system is capable of real-time operation at five frames per second, even when deployed on low-power platforms such as the Raspberry Pi. This level of performance highlights its suitability for embedded agricultural applications where energy efficiency, processing speed, and hardware limitations are critical factors.
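The reported inference times can be related to the five-frames-per-second operating point with a simple budget calculation. The sketch below is an upper-bound estimate under the assumption that inference is the only cost within a frame (capture, segmentation, and actuation overheads are ignored); the function names are hypothetical.

```python
def segments_per_frame(frame_rate_hz: float, inference_ms: float) -> int:
    """Upper bound on segments classified sequentially within one frame period."""
    if frame_rate_hz <= 0 or inference_ms <= 0:
        raise ValueError("frame rate and inference time must be positive")
    frame_budget_ms = 1000.0 / frame_rate_hz
    return int(frame_budget_ms // inference_ms)


def speedup(baseline_ms: float, proposed_ms: float) -> float:
    """Ratio of baseline to proposed inference time."""
    return baseline_ms / proposed_ms


if __name__ == "__main__":
    CNN_MS, YOLO_MS, FPS = 12.3, 41.7, 5  # values reported in this work
    print("custom CNN segments per frame:", segments_per_frame(FPS, CNN_MS))
    print("YOLOv5s segments per frame:", segments_per_frame(FPS, YOLO_MS))
    print("speedup:", round(speedup(YOLO_MS, CNN_MS), 2))
```

At five frames per second each frame leaves a 200 ms budget, so the shorter inference time allows several times as many segments to be classified per frame as the YOLOv5s baseline.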

In summary, the results validate the benefits of developing task-specific neural network architectures tailored to the morphological and operational characteristics of agricultural products. The proposed CNN model not only achieves high classification performance but also offers a scalable and cost-effective solution for real-time sorting in agro-industrial environments. Its successful implementation for the classification of pajuro grains demonstrates strong potential for broader application to other native or underutilized crops, contributing to the advancement of precision agriculture in regions with limited technological infrastructure.