A Review of Online Classification Performance in Motor Imagery-Based Brain–Computer Interfaces for Stroke Neurorehabilitation

Abstract

1. Introduction

2. Motor Imagery Classification Pipelines

2.1. Pre-Processing

2.2. Feature Extraction and Selection

2.3. Classification

3. Methods

3.1. Searching Criteria

3.2. Statistical Tests

4. Results

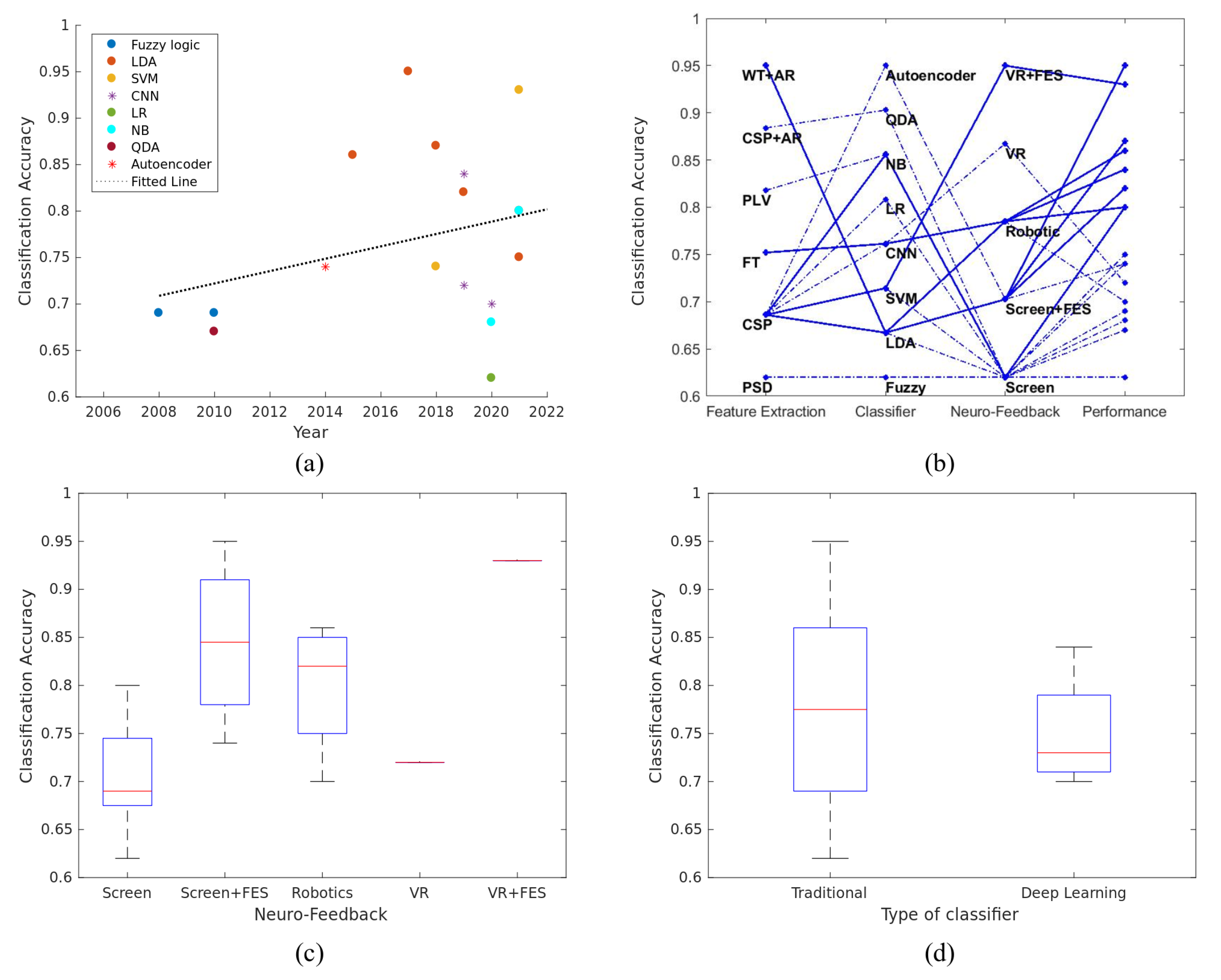

4.1. Comparison of the Algorithms Used for Classifying Motor Imagery

4.2. Influence of User Characteristics on BCI Performance

4.3. Correlation of Classification Accuracy with Various Parameters of BCI Framework

5. Discussion

6. Limitations

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Mozaffarian, D.; Benjamin, E.J.; Go, A.S.; Arnett, D.K.; Blaha, M.J.; Cushman, M.; De Ferranti, S.; Després, J.P.; Fullerton, H.J.; Howard, V.J.; et al. Heart disease and stroke statistics—2015 update: A report from the American Heart Association. Circulation 2015, 131, e29–e322.

- Butler, A.J.; Page, S.J. Mental practice with motor imagery: Evidence for motor recovery and cortical reorganization after stroke. Arch. Phys. Med. Rehabil. 2006, 87, 2–11.

- Thomas, L.H.; French, B.; Coupe, J.; McMahon, N.; Connell, L.; Harrison, J.; Sutton, C.J.; Tishkovskaya, S.; Watkins, C.L. Repetitive task training for improving functional ability after stroke: A major update of a Cochrane review. Stroke 2017, 48, e102–e103.

- Pérez-Cruzado, D.; Merchán-Baeza, J.A.; González-Sánchez, M.; Cuesta-Vargas, A.I. Systematic review of mirror therapy compared with conventional rehabilitation in upper extremity function in stroke survivors. Aust. Occup. Ther. J. 2017, 64, 91–112.

- Kho, A.Y.; Liu, K.P.; Chung, R.C. Meta-analysis on the effect of mental imagery on motor recovery of the hemiplegic upper extremity function. Aust. Occup. Ther. J. 2014, 61, 38–48.

- Celnik, P.; Webster, B.; Glasser, D.M.; Cohen, L.G. Effects of action observation on physical training after stroke. Stroke 2008, 39, 1814–1820.

- Hong, X.; Lu, Z.K.; Teh, I.; Nasrallah, F.A.; Teo, W.P.; Ang, K.K.; Phua, K.S.; Guan, C.; Chew, E.; Chuang, K.H. Brain plasticity following MI-BCI training combined with tDCS in a randomized trial in chronic subcortical stroke subjects: A preliminary study. Sci. Rep. 2017, 7, 9222.

- Ramos-Murguialday, A.; Curado, M.R.; Broetz, D.; Yilmaz, Ö.; Brasil, F.L.; Liberati, G.; Garcia-Cossio, E.; Cho, W.; Caria, A.; Cohen, L.G.; et al. Brain-machine interface in chronic stroke: Randomized trial long-term follow-up. Neurorehabilit. Neural Repair 2019, 33, 188–198.

- Cervera, M.A.; Soekadar, S.R.; Ushiba, J.; Millán, J.d.R.; Liu, M.; Birbaumer, N.; Garipelli, G. Brain-computer interfaces for post-stroke motor rehabilitation: A meta-analysis. Ann. Clin. Transl. Neurol. 2018, 5, 651–663.

- Jeannerod, M.; Decety, J. Mental motor imagery: A window into the representational stages of action. Curr. Opin. Neurobiol. 1995, 5, 727–732.

- Di Rienzo, F.; Collet, C.; Hoyek, N.; Guillot, A. Impact of neurologic deficits on motor imagery: A systematic review of clinical evaluations. Neuropsychol. Rev. 2014, 24, 116–147.

- Cramer, S.C.; Sur, M.; Dobkin, B.H.; O’Brien, C.; Sanger, T.D.; Trojanowski, J.Q.; Rumsey, J.M.; Hicks, R.; Cameron, J.; Chen, D.; et al. Harnessing neuroplasticity for clinical applications. Brain 2011, 134, 1591–1609.

- Nicolas-Alonso, L.F.; Gomez-Gil, J. Brain computer interfaces, a review. Sensors 2012, 12, 1211–1279.

- Pfurtscheller, G. EEG event-related desynchronization (ERD) and synchronization (ERS). Electroencephalogr. Clin. Neurophysiol. 1997, 1, 26.

- Lotte, F.; Congedo, M.; Lécuyer, A.; Lamarche, F.; Arnaldi, B. A review of classification algorithms for EEG-based brain-computer interfaces. J. Neural Eng. 2007, 4, R1–R13.

- Zhang, X.; Hou, W.; Wu, X.; Feng, S.; Chen, L. A Novel Online Action Observation-Based Brain-Computer Interface That Enhances Event-Related Desynchronization. IEEE Trans. Neural Syst. Rehabil. Eng. 2021, 29, 2605–2614.

- Myrden, A.; Chau, T. Effects of user mental state on EEG-BCI performance. Front. Hum. Neurosci. 2015, 9, 308.

- Juliano, J.M.; Spicer, R.P.; Vourvopoulos, A.; Lefebvre, S.; Jann, K.; Ard, T.; Santarnecchi, E.; Krum, D.M.; Liew, S.L. Embodiment Is Related to Better Performance on a Brain–Computer Interface in Immersive Virtual Reality: A Pilot Study. Sensors 2020, 20, 1204.

- Prasad, G.; Herman, P.; Coyle, D.; McDonough, S.; Crosbie, J. Applying a brain-computer interface to support motor imagery practice in people with stroke for upper limb recovery: A feasibility study. J. NeuroEng. Rehabil. 2010, 7, 60.

- Vourvopoulos, A.; Pardo, O.M.; Lefebvre, S.; Neureither, M.; Saldana, D.; Jahng, E.; Liew, S.L. Effects of a brain-computer interface with virtual reality (VR) neurofeedback: A pilot study in chronic stroke patients. Front. Hum. Neurosci. 2019, 13, 210.

- Achanccaray, D.; Izumi, S.I.; Hayashibe, M. Visual-Electrotactile Stimulation Feedback to Improve Immersive Brain-Computer Interface Based on Hand Motor Imagery. Comput. Intell. Neurosci. 2021, 2021, 8832686.

- Gaur, P.; Gupta, H.; Chowdhury, A.; McCreadie, K.; Pachori, R.B.; Wang, H. A Sliding Window Common Spatial Pattern for Enhancing Motor Imagery Classification in EEG-BCI. IEEE Trans. Instrum. Meas. 2021, 70, 4002709.

- Vourvopoulos, A.; Jorge, C.; Abreu, R.; Figueiredo, P.; Fernandes, J.C.; Bermúdez i Badia, S. Efficacy and Brain Imaging Correlates of an Immersive Motor Imagery BCI-Driven VR System for Upper Limb Motor Rehabilitation: A Clinical Case Report. Front. Hum. Neurosci. 2019, 13, 244.

- Vourvopoulos, A.; Blanco-Mora, D.A.; Aldridge, A.; Jorge, C.; Figueiredo, P.; Bermúdez i Badia, S. Enhancing Motor-Imagery Brain-Computer Interface Training with Embodied Virtual Reality: A Pilot Study with Older Adults. In Proceedings of the 2022 IEEE International Conference on Metrology for Extended Reality, Artificial Intelligence and Neural Engineering (MetroXRAINE), Rome, Italy, 26–28 October 2022; pp. 157–162.

- Fleury, M.; Lioi, G.; Barillot, C.; Lécuyer, A. A Survey on the Use of Haptic Feedback for Brain-Computer Interfaces and Neurofeedback. Front. Neurosci. 2020, 14, 528.

- Altaheri, H.; Muhammad, G.; Alsulaiman, M.; Amin, S.U.; Altuwaijri, G.A.; Abdul, W.; Bencherif, M.A.; Faisal, M. Deep learning techniques for classification of electroencephalogram (EEG) motor imagery (MI) signals: A review. Neural Comput. Appl. 2021, 1–42.

- Padfield, N.; Zabalza, J.; Zhao, H.; Masero, V.; Ren, J. EEG-Based Brain-Computer Interfaces Using Motor-Imagery: Techniques and Challenges. Sensors 2019, 19, 1423.

- Ahn, M.; Jun, S.C. Performance variation in motor imagery brain-computer interface: A brief review. J. Neurosci. Methods 2015, 243, 103–110.

- Jayaram, V.; Alamgir, M.; Altun, Y.; Schölkopf, B.; Grosse-Wentrup, M. Transfer learning in brain-computer interfaces. IEEE Comput. Intell. Mag. 2016, 11, 20–31.

- Wu, D.; Xu, Y.; Lu, B.L. Transfer Learning for EEG-Based Brain–Computer Interfaces: A Review of Progress Made Since 2016. IEEE Trans. Cogn. Dev. Syst. 2020, 14, 4–19.

- Lotte, F.; Bougrain, L.; Cichocki, A.; Clerc, M.; Congedo, M.; Rakotomamonjy, A.; Yger, F. A review of classification algorithms for EEG-based brain-computer interfaces: A 10 year update. J. Neural Eng. 2018, 15, 031005.

- Mladenović, J. A generic framework for adaptive EEG-based BCI training and operation. arXiv 2017, arXiv:1707.07935.

- Ang, K.K.; Chin, Z.Y.; Zhang, H.; Guan, C. Filter Bank Common Spatial Pattern (FBCSP) in brain-computer interface. In Proceedings of the 2008 IEEE International Joint Conference on Neural Networks (IEEE World Congress on Computational Intelligence), Hong Kong, China, 1–8 June 2008; pp. 2390–2397.

- Delorme, A.; Sejnowski, T.; Makeig, S. Enhanced detection of artifacts in EEG data using higher-order statistics and independent component analysis. NeuroImage 2007, 34, 1443–1449.

- Syam, S.H.F.; Lakany, H.; Ahmad, R.B.; Conway, B.A. Comparing Common Average Referencing to Laplacian Referencing in Detecting Imagination and Intention of Movement for Brain Computer Interface. MATEC Web Conf. 2017, 140, 01028.

- Lakshmi, M.R.; Prasad, T.; Prakash, D.V.C. Survey on EEG signal processing methods. Int. J. Adv. Res. Comput. Sci. Softw. Eng. 2014, 4, 84–91.

- Aggarwal, S.; Chugh, N. Signal processing techniques for motor imagery brain computer interface: A review. Array 2019, 1–2, 100003.

- Da Silva, F.L. EEG: Origin and measurement. In EEG-fMRI; Springer: Berlin/Heidelberg, Germany, 2022; pp. 23–48.

- Schlögl, A.; Lugger, K.; Pfurtscheller, G. Using adaptive autoregressive parameters for a brain-computer-interface experiment. In Proceedings of the 19th Annual International Conference of the IEEE Engineering in Medicine and Biology Society. ‘Magnificent Milestones and Emerging Opportunities in Medical Engineering’ (Cat. No. 97CH36136), Chicago, IL, USA, 30 October–2 November 1997; Volume 4, pp. 1533–1535.

- Darvishi, S.; Al-Ani, A. Brain-computer interface analysis using continuous wavelet transform and adaptive neuro-fuzzy classifier. In Proceedings of the 2007 29th Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Lyon, France, 22–26 August 2007; Volume 2007, pp. 3220–3223.

- McFarland, D.J.; Wolpaw, J.R. Sensorimotor rhythm-based brain-computer interface (BCI): Feature selection by regression improves performance. IEEE Trans. Neural Syst. Rehabil. Eng. 2005, 13, 372–379.

- Molla, M.K.I.; Al Shiam, A.; Islam, M.R.; Tanaka, T. Discriminative feature selection-based motor imagery classification using EEG signal. IEEE Access 2020, 8, 98255–98265.

- Ozer, D.J. Correlation and the coefficient of determination. Psychol. Bull. 1985, 97, 307.

- Wolpaw, J.R.; McFarland, D.J.; Vaughan, T.M.; Schalk, G. The Wadsworth Center brain-computer interface (BCI) research and development program. IEEE Trans. Neural Syst. Rehabil. Eng. 2003, 11, 1–4.

- Perdikis, S.; Tonin, L.; Saeedi, S.; Schneider, C.; Millán, J.d.R. The Cybathlon BCI race: Successful longitudinal mutual learning with two tetraplegic users. PLoS Biol. 2018, 16, e2003787.

- Müller-Gerking, J.; Pfurtscheller, G.; Flyvbjerg, H. Designing optimal spatial filters for single-trial EEG classification in a movement task. Clin. Neurophysiol. Off. J. Int. Fed. Clin. Neurophysiol. 1999, 110, 787–798.

- Blankertz, B.; Tomioka, R.; Lemm, S.; Kawanabe, M.; Muller, K.R. Optimizing spatial filters for robust EEG single-trial analysis. IEEE Signal Process. Mag. 2007, 25, 41–56.

- Gaur, P.; McCreadie, K.; Pachori, R.B.; Wang, H.; Prasad, G. An automatic subject specific channel selection method for enhancing motor imagery classification in EEG-BCI using correlation. Biomed. Signal Process. Control 2021, 68, 102574.

- Tiwari, A.; Chaturvedi, A. Automatic EEG channel selection for multiclass brain-computer interface classification using multiobjective improved firefly algorithm. Multimed. Tools Appl. 2022, 1–29.

- Mahamune, R.; Laskar, S.H. An automatic channel selection method based on the standard deviation of wavelet coefficients for motor imagery based brain–computer interfacing. Int. J. Imaging Syst. Technol. 2022, 1–15.

- James, G.; Witten, D.; Hastie, T.; Tibshirani, R. An Introduction to Statistical Learning; Springer: Berlin/Heidelberg, Germany, 2013; Volume 112.

- Bishop, C.M.; Nasrabadi, N.M. Pattern Recognition and Machine Learning; Springer: Berlin/Heidelberg, Germany, 2006; Volume 4.

- Chen, M.; Liu, Y.; Zhang, L. Classification of stroke patients’ motor imagery EEG with autoencoders in BCI-FES rehabilitation training system. In Proceedings of the 21st International Conference, ICONIP 2014, Kuching, Malaysia, 3–6 November 2014; Volume 8836, pp. 202–209.

- Rodríguez-Bermúdez, G.; García-Laencina, P.J. Automatic and adaptive classification of electroencephalographic signals for brain computer interfaces. J. Med. Syst. 2012, 36, S51–S63.

- Ortner, R.; Irimia, D.C.; Scharinger, J.; Guger, C. A motor imagery based brain-computer interface for stroke rehabilitation. Annu. Rev. CyberTherapy Telemed. 2012, 10, 319–323.

- Sebastián-Romagosa, M.; Cho, W.; Ortner, R.; Murovec, N.; Von Oertzen, T.; Kamada, K.; Allison, B.Z.; Guger, C. Brain Computer Interface Treatment for Motor Rehabilitation of Upper Extremity of Stroke Patients—A Feasibility Study. Front. Neurosci. 2020, 14, 591435.

- Vourvopoulos, A.; Bermúdez i Badia, S. Usability and cost-effectiveness in brain-computer interaction: Is it user throughput or technology related? In Proceedings of the 7th Augmented Human International Conference, Geneva, Switzerland, 25–27 February 2016.

- Vourvopoulos, A.; Niforatos, E.; Bermudez i Badia, S.; Liarokapis, F. Brain–Computer Interfacing with Interactive Systems—Case Study 2. In Intelligent Computing for Interactive System Design; ACM: New York, NY, USA, 2021; pp. 237–272.

- Shenoy, H.V.; Vinod, A.P. An iterative optimization technique for robust channel selection in motor imagery based brain computer interface. In Proceedings of the 2014 IEEE International Conference on Systems, Man, and Cybernetics (SMC), San Diego, CA, USA, 5–8 October 2014; Volume 2014, pp. 1858–1863.

- Garcia, G.N.; Ebrahimi, T.; Vesin, J.M. Correlative exploration of EEG signals for direct brain-computer communication. In Proceedings of the 2003 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP ’03), Hong Kong, China, 6–10 April 2003; Volume 5, pp. 816–819.

- Hamedi, M.; Salleh, S.H.; Noor, A.M.; Mohammad-Rezazadeh, I. Neural network-based three-class motor imagery classification using time-domain features for BCI applications. In Proceedings of the 2014 IEEE REGION 10 SYMPOSIUM, Kuala Lumpur, Malaysia, 14–16 April 2014; pp. 204–207.

- Millán, J.d.R.; Renkens, F.; Mouriño, J.; Gerstner, W. Noninvasive brain-actuated control of a mobile robot by human EEG. IEEE Trans. Biomed. Eng. 2004, 51, 1026–1033.

- Wang, H.; Zhang, Y. Detection of motor imagery EEG signals employing Naïve Bayes based learning process. Measurement 2016, 86, 148–158.

- Bhaduri, S.; Khasnobish, A.; Bose, R.; Tibarewala, D.N. Classification of lower limb motor imagery using K Nearest Neighbor and Naïve-Bayesian classifier. In Proceedings of the 2016 3rd International Conference on Recent Advances in Information Technology (RAIT), Dhanbad, India, 3–5 March 2016; pp. 499–504.

- Agarwal, S.K.; Shah, S.; Kumar, R. Classification of mental tasks from EEG data using backtracking search optimization based neural classifier. Neurocomputing 2015, 166, 397–403.

- Sagee, G.S.; Hema, S. EEG feature extraction and classification in multiclass multiuser motor imagery brain computer interface using Bayesian Network and ANN. In Proceedings of the 2017 International Conference on Intelligent Computing, Instrumentation and Control Technologies, ICICICT 2017, Kerala, India, 6–7 July 2018; Volume 2018, pp. 938–943.

- Sakhavi, S.; Guan, C.; Yan, S. Learning Temporal Information for Brain-Computer Interface Using Convolutional Neural Networks. IEEE Trans. Neural Netw. Learn. Syst. 2018, 29, 5619–5629.

- Lee, H.K.; Choi, Y.S. Application of continuous wavelet transform and convolutional neural network in decoding motor imagery brain-computer Interface. Entropy 2019, 21, 1199.

- Safitri, A.; Djamal, E.C.; Nugraha, F. Brain-Computer Interface of Motor Imagery Using ICA and Recurrent Neural Networks. In Proceedings of the 2020 3rd International Conference on Computer and Informatics Engineering (IC2IE), Yogyakarta, Indonesia, 15–16 September 2020; pp. 118–122.

- Luo, T.J.; Zhou, C.L.; Chao, F. Exploring spatial-frequency-sequential relationships for motor imagery classification with recurrent neural network. BMC Bioinform. 2018, 19, 344.

- Lawhern, V.J.; Solon, A.J.; Waytowich, N.R.; Gordon, S.M.; Hung, C.P.; Lance, B.J. EEGNet: A compact convolutional neural network for EEG-based brain–computer interfaces. J. Neural Eng. 2018, 15, 056013.

- Lu, N.; Li, T.; Ren, X.; Miao, H. A Deep Learning Scheme for Motor Imagery Classification based on Restricted Boltzmann Machines. IEEE Trans. Neural Syst. Rehabil. Eng. 2017, 25, 566–576.

- Zhang, J.; Yan, C.; Gong, X. Deep convolutional neural network for decoding motor imagery based brain computer interface. In Proceedings of the 2017 IEEE International Conference on Signal Processing, Communications and Computing, ICSPCC 2017, Xiamen, China, 22–25 October 2017; Volume 2017, pp. 1–5.

- Wang, P.; Jiang, A.; Liu, X.; Shang, J.; Zhang, L. LSTM-based EEG classification in motor imagery tasks. IEEE Trans. Neural Syst. Rehabil. Eng. 2018, 26, 2086–2095.

- Herman, P.; Prasad, G.; McGinnity, T.M. Design and on-line evaluation of type-2 fuzzy logic system-based framework for handling uncertainties in BCI classification. In Proceedings of the 2008 30th Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Vancouver, BC, Canada, 20–25 August 2008; pp. 4242–4245.

- Pan, Y.; Goh, Q.Z.; Ge, S.S.; Tee, K.P.; Hong, K.S. Mind robotic rehabilitation based on motor imagery brain computer interface. In Proceedings of the Second International Conference on Social Robotics, ICSR 2010, Singapore, 23–24 November 2010; pp. 161–171.

- Xu, B.; Song, A.; Zhao, G.; Xu, G.; Pan, L.; Yang, R.; Li, H.; Cui, J.; Zeng, H. Robotic neurorehabilitation system design for stroke patients. Adv. Mech. Eng. 2015, 7, 1–12.

- Irimia, D.C.; Cho, W.; Ortner, R.; Allison, B.Z.; Ignat, B.E.; Edlinger, G.; Guger, C. Brain-Computer Interfaces With Multi-Sensory Feedback for Stroke Rehabilitation: A Case Study. Artif. Organs 2017, 41, E178–E184.

- Zhao, L.; Li, X.; Bian, Y. Real time system design of motor imagery brain-computer interface based on multi band CSP and SVM. AIP Conf. Proc. 2018, 1955, 040053.

- Irimia, D.C.; Ortner, R.; Poboroniuc, M.S.; Ignat, B.E.; Guger, C. High classification accuracy of a motor imagery based brain-computer interface for stroke rehabilitation training. Front. Robot. AI 2018, 5, 130.

- Tayeb, Z.; Fedjaev, J.; Ghaboosi, N.; Richter, C.; Everding, L.; Qu, X.; Wu, Y.; Cheng, G.; Conradt, J. Validating deep neural networks for online decoding of motor imagery movements from EEG signals. Sensors 2019, 19, 210.

- Karácsony, T.; Hansen, J.P.; Iversen, H.K.; Puthusserypady, S. Brain computer interface for neuro-rehabilitation with deep learning classification and virtual reality feedback. In Proceedings of the 10th Augmented Human International Conference 2019, Reims, France, 11–12 March 2019.

- Vidaurre, C.; Ramos Murguialday, A.; Haufe, S.; Gómez, M.; Müller, K.R.; Nikulin, V.V. Enhancing sensorimotor BCI performance with assistive afferent activity: An online evaluation. NeuroImage 2019, 199, 375–386.

- Raza, H.; Chowdhury, A.; Bhattacharyya, S. Deep Learning based Prediction of EEG Motor Imagery of Stroke Patients’ for Neuro-Rehabilitation Application. In Proceedings of the International Joint Conference on Neural Networks, Glasgow, UK, 19–24 July 2020.

- Mousavi, M.; Krol, L.R.; De Sa, V.R. Hybrid brain-computer interface with motor imagery and error-related brain activity. J. Neural Eng. 2020, 17, 056041.

- Benzy, V.K.; Vinod, A.P.; Subasree, R.; Alladi, S.; Raghavendra, K. Motor Imagery Hand Movement Direction Decoding Using Brain Computer Interface to Aid Stroke Recovery and Rehabilitation. IEEE Trans. Neural Syst. Rehabil. Eng. 2020, 28, 3051–3062.

- Vasilyev, A.N.; Nuzhdin, Y.O.; Kaplan, A.Y. Does real-time feedback affect sensorimotor EEG patterns in routine motor imagery practice? Brain Sci. 2021, 11, 1234.

- Ron-Angevin, R.; Díaz-Estrella, A. Brain-computer interface: Changes in performance using virtual reality techniques. Neurosci. Lett. 2009, 449, 123–127.

- Bhattacharyya, S.; Clerc, M.; Hayashibe, M. A Study on the Effect of Electrical Stimulation as a User Stimuli for Motor Imagery Classification in Brain-Machine Interface. Eur. J. Transl. Myol. 2016, 26, 165–168.

- Höller, Y.; Thomschewski, A.; Uhl, A.; Bathke, A.C.; Nardone, R.; Leis, S.; Trinka, E.; Höller, P. HD-EEG Based Classification of Motor-Imagery Related Activity in Patients With Spinal Cord Injury. Front. Neurol. 2018, 9, 955.

- Blanco-Mora, D.A.; Aldridge, A.; Jorge, C.; Vourvopoulos, A.; Figueiredo, P.; Bermúdez i Badia, S. Impact of age, VR, immersion, and spatial resolution on classifier performance for a MI-based BCI. Brain-Comput. Interfaces 2022, 9, 169–178.

- Meng, J.; Edelman, B.J.; Olsoe, J.; Jacobs, G.; Zhang, S.; Beyko, A.; He, B. A study of the effects of electrode number and decoding algorithm on online EEG-based BCI Behavioral Performance. Front. Neurosci. 2018, 12, 227.

- Farquhar, J.; Hill, N.J. Interactions between pre-processing and classification methods for event-related-potential classification: Best-practice guidelines for brain-computer interfacing. Neuroinformatics 2013, 11, 175–192.

- Graimann, B.; Allison, B.; Pfurtscheller, G. Brain–Computer Interfaces: A Gentle Introduction. In Brain-Computer Interfaces. The Frontiers Collection; Springer: Berlin/Heidelberg, Germany, 2009; pp. 1–27.

| Author | Classifier | Performance | Feature Extraction | Number of Electrodes | Number of Subjects | Number of Sessions | Number of Trials | Feedback Modality | Participants | Age (Years) |
|---|---|---|---|---|---|---|---|---|---|---|
| Herman [75] | T2FLS | 69% | PSD | 2 | 6 | 7 | 160 | Screen | Healthy | - |
| Prasad [19] | T2FLS | 69% | PSD | 2 | 5 | 12 | 160 | Screen | Patients | 59 |
| Pan [76] | QDA | 67% | CSP+AR | 3 | 3 | 1 | 230 | Screen | Healthy | - |
| Chen [53] | Autoencoder | 74% | CSP | 16 | 4 | 144 | 15 | Screen+FES | Patients | 62 |
| Xu [77] | LDA | 86% | WT+AR | 2 | 8 | 3 | 40 | Robotic | Healthy | 27 |
| Irimia [78] | LDA | 95% | CSP | 45 | 2 | 10 | 240 | Screen+FES | Patients | 50 |
| Zhao [79] | SVM | 74% | CSP | 41 | 5 | 1 | 40 | Screen | Healthy | - |
| Irimia [80] | LDA | 87% | CSP | 64 | 5 | 18 | 160 | Screen+FES | Patients | 60 |
| Tayeb [81] | CNN | 84% | FT | 3 | 20 | 2 | 90 | Robotic | Healthy | 31 |
| Karácsony [82] | CNN | 72% | - | 16 | 10 | - | - | VR | Healthy | 25 |
| Vidaurre [83] | LDA | 82% | CSP | 64 | 15 | 1 | 300 | Robotic | Healthy | - |
| Raza [84] | CNN | 70% | CSP | 12 | 10 | 1 | 120 | Robotic | Patients | 41 |
| Mousavi [85] | LR | 62% | CSP | 64 | 12 | 1 | 180 | Screen | Healthy | 20 |
| Benzy [86] | NB | 68% | PLV | 64 | 16 | 2 | 50 | Screen | Patients | 50 |
| Achanccaray [21] | SVM | 93% | CSP | 16 | 20 | - | - | VR+FES | Healthy | 26 |
| Gaur [22] | LDA | 80% | CSP | 12 | 10 | 3 | 40 | Robotic | Patients | 41 |
| Vasilyev [87] | NB | 80% | CSP | 30 | 11 | 6 | - | Screen | Healthy | 26 |
| Zhang [16] | LDA | 75% | WT+AR | 16 | 7 | 3 | 200 | Screen | Patients | 60 |
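
As a rough illustration of the kind of comparison named in Sections 4.1 and 4.3, the rows of the table above can be summarised with a few lines of Python. This is only a sketch under stated assumptions, not the statistical procedure used in the review: the helper names `mean_accuracy_by` and `pearson` are hypothetical, the averages are unweighted (each study counts once, regardless of its number of subjects), and only the classifier, accuracy, electrode-count and participant columns are transcribed.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean

# (author, classifier, accuracy in %, number of electrodes, participant group),
# transcribed from the table above. Column choice is an illustrative assumption.
ROWS = [
    ("Herman", "T2FLS", 69, 2, "Healthy"),
    ("Prasad", "T2FLS", 69, 2, "Patients"),
    ("Pan", "QDA", 67, 3, "Healthy"),
    ("Chen", "Autoencoder", 74, 16, "Patients"),
    ("Xu", "LDA", 86, 2, "Healthy"),
    ("Irimia", "LDA", 95, 45, "Patients"),
    ("Zhao", "SVM", 74, 41, "Healthy"),
    ("Irimia", "LDA", 87, 64, "Patients"),
    ("Tayeb", "CNN", 84, 3, "Healthy"),
    ("Karacsony", "CNN", 72, 16, "Healthy"),
    ("Vidaurre", "LDA", 82, 64, "Healthy"),
    ("Raza", "CNN", 70, 12, "Patients"),
    ("Mousavi", "LR", 62, 64, "Healthy"),
    ("Benzy", "NB", 68, 64, "Patients"),
    ("Achanccaray", "SVM", 93, 16, "Healthy"),
    ("Gaur", "LDA", 80, 12, "Patients"),
    ("Vasilyev", "NB", 80, 30, "Healthy"),
    ("Zhang", "LDA", 75, 16, "Patients"),
]


def mean_accuracy_by(rows, column):
    """Unweighted mean accuracy, grouped by the value at index `column`."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[column]].append(row[2])
    return {key: mean(values) for key, values in groups.items()}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


by_classifier = mean_accuracy_by(ROWS, 1)   # group by classifier name
by_group = mean_accuracy_by(ROWS, 4)        # group by Healthy / Patients
r_electrodes = pearson([row[3] for row in ROWS], [row[2] for row in ROWS])

for name, value in sorted(by_classifier.items()):
    print(f"{name:12s} {value:5.1f}%")
for name, value in sorted(by_group.items()):
    print(f"{name:12s} {value:5.1f}%")
print(f"Pearson r (electrodes vs accuracy): {r_electrodes:.2f}")
```

With only 18 studies, several classifiers represented by one or two entries, and very different numbers of subjects and sessions behind each accuracy figure, such summaries are descriptive at best and should not be read as significance tests.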

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Vavoulis, A.; Figueiredo, P.; Vourvopoulos, A. A Review of Online Classification Performance in Motor Imagery-Based Brain–Computer Interfaces for Stroke Neurorehabilitation. Signals 2023, 4, 73-86. https://doi.org/10.3390/signals4010004
