Detection of Pitting Corrosion in Stainless-Steel Sheet Pile Walls Using Deep Learning

Abstract

1. Introduction

2. Analytical Procedures

2.1. Definition of Pitting Corrosion in Stainless-Steel Sheet Pile Walls

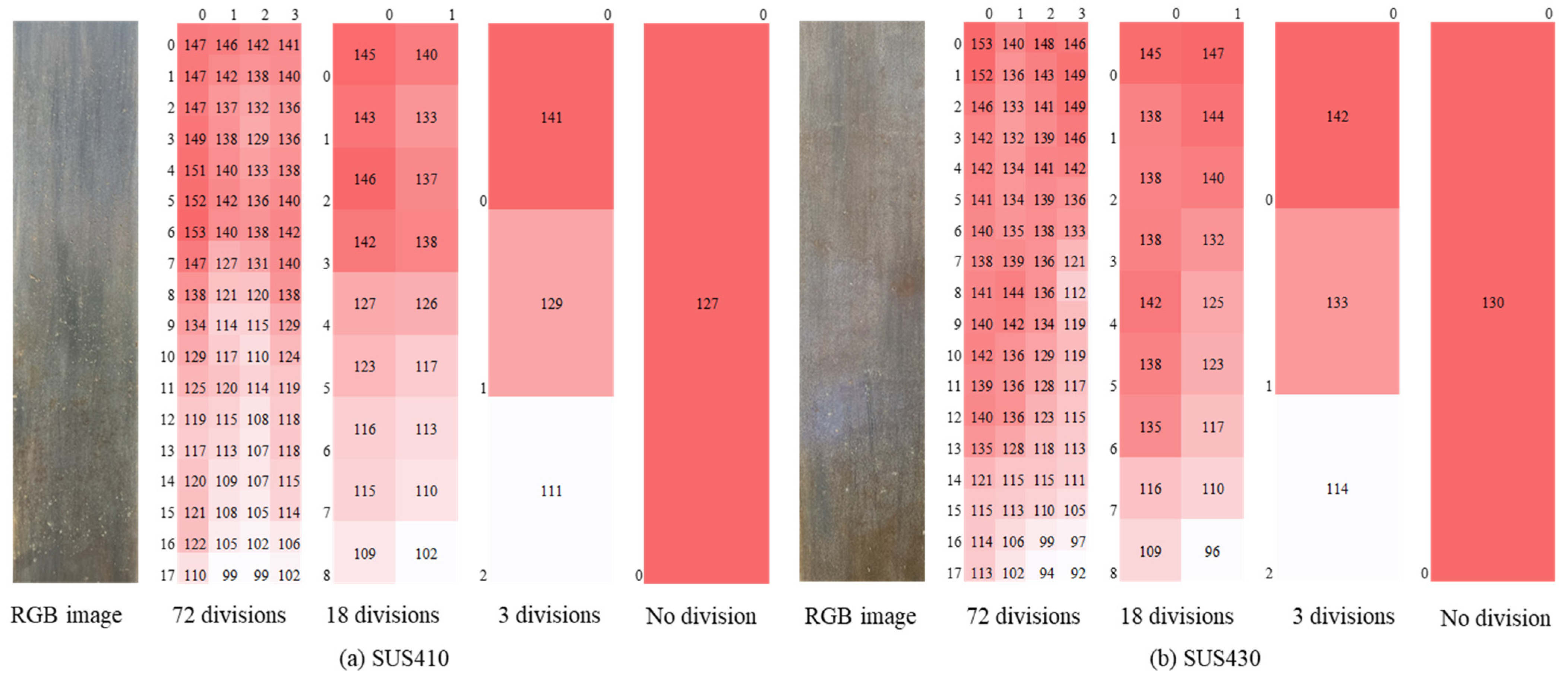

2.1.1. Definition of Pitting Corrosion in Image Information

2.1.2. Task Definition

2.2. Deep Learning Workflow

2.2.1. Annotation

2.2.2. Preprocessing (Data Splitting)

2.2.3. Model

2.2.4. Hyperparameter Tuning

2.2.5. Evaluation Metrics

3. Target Structure and Measurement Methods

3.1. Environmental Conditions and Steel Grades

3.1.1. Site Overview

3.1.2. Preliminary Investigation by Prior Measurements

3.1.3. Surface Cleaning Before Image Acquisition

3.1.4. Image Acquisition and Imaging Device

4. Results and Discussion

4.1. Dataset Configuration and Model Optimization

4.2. Effect of Data Augmentation on Detection Performance

4.3. Comparison with Binary Thresholding Approach

4.4. Summary and Practical Implications

5. Conclusions

- The U-net-based semantic segmentation approach achieved F1-scores above 0.80 for both steel grades (0.831 for SUS410 and 0.808 for SUS430), with precision of about 0.77 and recall above 0.84. This is an F1-score improvement of 0.42 to 0.48 over conventional binary thresholding (0.407 and 0.329), indicating a clear advantage of deep learning for this application.

- The method is robust to natural variations in field imaging conditions, relies on widely available smartphone cameras, and provides objective, quantitative assessments. The optimal input resolution of 128 × 128 pixels captures sub-millimeter pits while keeping computational requirements modest.

- The results confirmed the superior corrosion resistance of SUS430 (PI = 16) over SUS410 (PI = 11). SUS430 showed markedly fewer pits (314 vs. 1134) and a lower pitting area ratio (0.714% vs. 3.573%), consistent with the benefit of higher chromium content in aggressive brackish water environments.

- The primary limitation is the small training dataset (two images from a single site). This scarcity reflects the small number of existing stainless-steel sheet pile installations with a service history long enough to develop detectable pitting under real environmental conditions.
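The 128 × 128 pixel resolution noted above is presumably the size of the patches fed to the network. Under that assumption, a large field photograph and its annotation mask could be divided into non-overlapping tiles as in the minimal sketch below. The function name `tile_image`, the zero-padding of incomplete edge tiles, and the non-overlapping stride are illustrative assumptions rather than the authors' documented preprocessing.

```python
import numpy as np


def tile_image(image: np.ndarray, tile: int = 128) -> np.ndarray:
    """Split an (H, W) or (H, W, C) array into non-overlapping tile x tile patches.

    Assumption: edges are zero-padded up to the next multiple of `tile`;
    cropping or overlapping strides would be equally plausible choices.
    """
    h, w = image.shape[:2]
    pad_h = (-h) % tile  # rows needed to reach a multiple of `tile`
    pad_w = (-w) % tile  # columns needed to reach a multiple of `tile`
    pad_spec = [(0, pad_h), (0, pad_w)] + [(0, 0)] * (image.ndim - 2)
    padded = np.pad(image, pad_spec, mode="constant")
    rows, cols = padded.shape[0] // tile, padded.shape[1] // tile
    # View as (rows, tile, cols, tile, ...) and move the grid axes to the front.
    grid = padded.reshape(rows, tile, cols, tile, *padded.shape[2:])
    grid = np.swapaxes(grid, 1, 2)  # (rows, cols, tile, tile, ...)
    return grid.reshape(rows * cols, tile, tile, *padded.shape[2:])


photo = np.zeros((300, 500, 3), dtype=np.uint8)  # placeholder for a field image
print(tile_image(photo).shape)  # (12, 128, 128, 3)
```

Applying the same function to the annotation mask keeps each image tile aligned with its label tile, because both are cut on the same row-major grid.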

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Phull, B. Evaluating pitting corrosion. In ASM Handbook: Corrosion—Fundamentals, Testing, and Protection; ASM International: Materials Park, OH, USA, 2003; Volume 13A, pp. 545–548.

- ASTM G46-05; Standard Guide for Examination and Evaluation of Pitting Corrosion. ASTM International: West Conshohocken, PA, USA, 2005.

- Caines, S.; Khan, F.; Shirokoff, J. Analysis of pitting corrosion on steel under insulation in marine environments. J. Loss Prev. Process Ind. 2013, 26, 1466–1483.

- Sasidhar, K.N.; Ahuja, R.; Lukas, C.; Sridharan, K. Convolutional neural network for automated quantitative analysis of non-destructively acquired three-dimensional corrosion pit morphology data. Scr. Mater. 2025, 262, 116660.

- Qi, X.; Lian, Y.; Wang, Y.; Lu, Z. Simulation-driven end-to-end deep learning method for white-light interference topography reconstruction. Photonics 2025, 12, 702.

- Ghahari, S.M.; Davenport, A.J.; Rayment, T.; Suter, T.; Tinnes, J.P.; Padovani, C.; Hammons, J.; Stampanoni, M.; Marone, F.; Mokso, R. In situ synchrotron X-ray micro-tomography study of pitting corrosion in stainless steel. Corros. Sci. 2011, 53, 2684–2687.

- Khodabux, W.; Liao, C.; Brennan, F. Characterisation of pitting corrosion for inner sections of offshore wind foundations using laser scanning. Ocean Eng. 2021, 230, 109079.

- Zhang, W.; Wan, W.; Ren, Q.; Liu, Z.; Zhang, X.; Zhao, L.; Yang, L.; Chai, S.; Shi, M.; Wang, H.; et al. High-throughput quantitative characterization of pitting corrosion in 907A steel based on a multidimensional information strategy combined with deep learning image identification. Prog. Nat. Sci. Mater. Int. 2025, 35, 917–933.

- Bing, H.; Li, S. Point cloud data-driven modelling of high-strength steel wire corrosion pits considering orientation features. Constr. Build. Mater. 2024, 449, 138451.

- Hu, Z.; Hua, L.; Liu, J.; Min, S.; Li, C.; Wu, F. Numerical simulation and experimental verification of random pitting corrosion characteristics. Ocean Eng. 2021, 240, 110000.

- Wang, R. On the effect of pit shape on pitted plates, Part II: Compressive behavior due to random pitting corrosion. Ocean Eng. 2021, 236, 108737.

- Choi, K.Y.; Kim, S.S. Morphological analysis and classification of types of surface corrosion damage by digital image processing. Corros. Sci. 2005, 47, 1–15.

- Pidaparti, R.M.; Aghazadeh, B.S.; Whitfield, A.; Rao, A.S.; Mercier, G.P. Classification of corrosion defects in NiAl bronze through image analysis. Corros. Sci. 2010, 52, 3661–3666.

- Wang, Y.; Cheng, G. Application of gradient-based Hough transform to the detection of corrosion pits in optical images. Appl. Surf. Sci. 2016, 366, 9–18.

- Liu, C.; Tian, L.; Wang, P.; Yu, Q.Q.; Song, L.; Miao, J. Non-destructive detection and quantification of corrosion damage in coated steel components under different illumination conditions. Expert Syst. Appl. 2025, 282, 127854.

- Malashin, I.; Tynchenko, V.; Nelyub, V.; Borodulin, A.; Gantimurov, A.; Krysko, N.V.; Shchipakov, N.A.; Kozlov, D.M.; Kusyy, A.G.; Galinovsky, A. Deep learning approach for pitting corrosion detection in gas pipelines. Sensors 2024, 24, 3563.

- Chen, Y.; Tang, F.; Bao, Y.; Tang, Y.; Chen, G. A Fe-C coated long-period fiber grating sensor for corrosion-induced mass loss measurement. Opt. Lett. 2016, 41, 2306–2309.

- Xu, L.; Shi, S.; Huang, Y.; Yan, F.; Wang, X.; Wilson, R.; Zhang, D. Quantification and assessment of steel pitted corrosion using OFDR-based distributed fiber optic sensors. Measurement 2025, 256, 118519.

- Tan, X.; Fan, L.; Huang, Y.; Bao, Y. Detection, visualization, quantification, and warning of pipe corrosion using distributed fiber optic sensors. Autom. Constr. 2021, 132, 103953.

- ISO 8044:2015; Corrosion of Metals and Alloys—Basic Terms and Definitions. International Organization for Standardization: Geneva, Switzerland, 2015.

- Sugimoto, K. Fundamental aspects of localized corrosion. Met. Surf. Finish. 1981, 32, 355–365. (In Japanese)

- Roberge, P.R. Corrosion Engineering; McGraw-Hill: New York, NY, USA, 2008; p. 708.

- Xia, D.H.; Song, S.; Tao, L.; Qin, Z.; Wu, Z.; Gao, Z.; Luo, J.L. Material degradation assessed by digital image processing: Fundamentals, progress, and challenges. J. Mater. Sci. Technol. 2020, 53, 146–162.

- Halevy, A.; Norvig, P.; Pereira, F. The unreasonable effectiveness of data. IEEE Intell. Syst. 2009, 24, 8–12.

- Gulli, A.; Pal, S. Deep Learning with Keras; Packt Publishing: Birmingham, UK, 2017.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; IEEE: Boston, MA, USA, 2015; pp. 3431–3440.

- Badrinarayanan, V.; Kendall, A.; Cipolla, R. SegNet: A deep convolutional encoder-decoder architecture for image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 2481–2495.

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention—MICCAI 2015; Springer: Cham, Switzerland, 2015; pp. 234–241.

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848.

- FastLabel Inc. FastLabel: AI Data Platform for Annotation and MLOps. 2026. Available online: https://app.fastlabel.ai (accessed on 5 February 2026).

- Chollet, F. Deep Learning with Python, 2nd ed.; Manning Publications: Shelter Island, NY, USA, 2021.

- Albumentations Team. Albumentations: Fast and Flexible Image Augmentations. 2026. Available online: https://albumentations.ai (accessed on 5 February 2026).

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Sano, S.; Akiba, T.; Imamura, H.; Ohta, T.; Mizuno, N.; Yanase, T. Black-Box Optimization with Optuna; Ohmsha: Tokyo, Japan, 2023. (In Japanese)

- Akiba, T.; Sano, S.; Yanase, T.; Ohta, T.; Koyama, M. Optuna: A next-generation hyperparameter optimization framework. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; ACM: Anchorage, AK, USA, 2019; pp. 2623–2631.

- Otaka, N.; Fujimoto, Y.; Asano, I.; Kawabe, S.; Hagiwara, H.; Suzuki, T. Evaluation of Corrosion Characteristics of Stainless-Steel Sheet Piles in Agricultural Drainage Canals by Exposure Tests. Trans. JSIDRE 2023, 91, I_203–I_209.

- JIS Z 2355-1:2016; Non-Destructive Testing—Ultrasonic Thickness Measurement—Part 1: Measurement Method. JSA: Tokyo, Japan, 2016.

- Buslaev, A.; Iglovikov, V.I.; Khvedchenya, E.; Parinov, A.; Druzhinin, M.; Kalinin, A.A. Albumentations: Fast and flexible image augmentations. Information 2020, 11, 125.

- Bondar, D.; Basova, Y.; Vodka, O.; Machado, J. Mobile-focused spatial inspection of industrial parts using 2D image processing and LiDAR. Measurement 2026, 266, 120522.

- Kim, J.W.; Choi, H.W.; Kim, S.K.; Na, W.S. Review of image-processing-based technology for structural health monitoring of civil infrastructures. J. Imaging 2024, 10, 93.

- Kaur, R.; Karmakar, G.; Xia, F.; Imran, M. Deep learning: Survey of environmental and camera impacts on Internet of Things images. Artif. Intell. Rev. 2023, 56, 9605–9638.

- O’Byrne, M.; Pakrashi, V.; Schoefs, F.; Ghosh, B. Damage assessment of built infrastructure using smartphones. In Civil Engineering Research in Ireland; CERI: Dublin, Ireland, 2018.

- Chen, Z.; Chen, J. Mobile imaging and computing for intelligent structural damage inspection. Adv. Civ. Eng. 2014, 2014, 483729.

- Perez, H.; Tah, J.H.M. Deep learning smartphone application for real-time detection of defects in buildings. Struct. Control Health Monit. 2021, 28, e2751.

- Ozer, E.; Kromanis, R. Smartphone prospects in bridge structural health monitoring: A literature review. Sensors 2024, 24, 3287.

- Chen, X.; Wang, B.; Chen, J.; Zhang, X.; Liu, S.; Zhou, G.; Li, P.; Zhao, X. Innovative life-cycle inspection strategy of civil infrastructure: Smartphone-based public participation. Struct. Control Health Monit. 2023, 2023, 8715784.

- Coelho, L.B.; Zhang, D.; Van Ingelgem, Y.; Steckelmacher, D.; Nowé, A.; Terryn, H. Reviewing machine learning of corrosion prediction from a data-oriented perspective. npj Mater. Degrad. 2022, 6, 8; Correction in npj Mater. Degrad. 2022, 6, 72.

- Das, A.; Dorafshan, S.; Kaabouch, N. Autonomous image-based corrosion detection in steel structures using deep learning. Sensors 2024, 24, 3630.

- Otaka, N.; Fujimoto, Y.; Asano, I.; Yamauchi, Y.; Hagiwara, T.; Suzuki, T. Material Design and Life Cycle Cost Assessment for Extra Long-term Durability of Agricultural Canal Revetments. J. Jpn. Soc. Irrig. Drain. Rural Eng. 2023, 91, 801–804. (In Japanese)

- Ahmad, S.; Ahmad, S.; Akhtar, S.; Ahmad, F.; Ansari, M.A. Data-driven assessment of corrosion in reinforced concrete structures embedded in clay-dominated soils. Sci. Rep. 2025, 15, 22744.

- Franciosi, M.; Kasser, M.; Viviani, M. Digital twins in bridge engineering for streamlined maintenance and enhanced sustainability. Autom. Constr. 2024, 168, 105834.

| Author, Year | Target | Method | Purpose | Annotation |
|---|---|---|---|---|
| Liu et al., 2025 [15] | Corrosion of painted steel structural members | Semantic segmentation (YOLOv8-G) using smartphone images | | Images correspond to five stages of the corrosion process of painted steel structural members |
| Malashin et al., 2024 [16] | Pitting corrosion in gas pipelines | Binary classification using Gaussian process (GP) and Sequential Model-based Algorithm Configuration (SMAC) | | No description of classification |
| Wang and Cheng, 2016 [14] | X80 pipeline steel specimens immersed in NaCl solution | Circular detection using gradient-based Hough transform | | Diameter measured using image editing software (size depends on microscope magnification) |

| Term | Definition |
|---|---|
| Image Classification | Assigning one or more labels to an image. Example: does this image contain pitting corrosion? |
| Image Segmentation | Dividing an image into multiple regions by assigning a class to each pixel. Example: does a given pixel belong to pitting corrosion? |
| Object Detection | Drawing rectangles (bounding boxes) around target objects in an image and assigning a class to each rectangle. |
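The three task types in this table differ mainly in the form of their output. The minimal sketch below, built on a small hypothetical mask, shows that a per-pixel segmentation result also yields an image-level classification and a detection-style box, whereas neither of those outputs can be turned back into a pixel map. The function names `classify` and `enclosing_box` are illustrative, and returning a single box around all positive pixels is a simplification; a real detector would output one box per pit.

```python
from __future__ import annotations

import numpy as np

# Segmentation output: one decision per pixel (True = pitting), shape (H, W).
mask = np.zeros((8, 8), dtype=bool)
mask[2:4, 3:6] = True  # hypothetical pit covering rows 2-3, columns 3-5


def classify(mask: np.ndarray) -> bool:
    """Image classification view: does the image contain any pitting pixel?"""
    return bool(mask.any())


def enclosing_box(mask: np.ndarray) -> tuple[int, int, int, int] | None:
    """Detection-style view: inclusive (row_min, col_min, row_max, col_max)
    around all positive pixels, or None if there are none.

    Simplification: one box for the whole image, not one box per pit.
    """
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        return None
    return int(rows.min()), int(cols.min()), int(rows.max()), int(cols.max())


print(classify(mask))       # True
print(enclosing_box(mask))  # (2, 3, 3, 5)
print(int(mask.sum()))      # 6 (pixel area, available only from segmentation)
```

The pixel count in the last line is the kind of quantity, such as an area ratio, that only the segmentation formulation provides directly.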

| Item | Unit | Measured Value |
|---|---|---|
| Sulfate ion | mg/L | 21 |
| Nitrate ion | mg/L | 3.7 |
| Chloride ion | mg/L | 120 |
| pH | - | 6.9 |

| Steel Grade | Precision | Recall | F1-Score |
|---|---|---|---|
| SUS410 | 0.770 | 0.903 | 0.831 |
| SUS430 | 0.771 | 0.848 | 0.808 |
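The F1-score in these tables is the harmonic mean of precision and recall, F1 = 2PR / (P + R). A minimal sketch of scoring a predicted binary mask against an annotation mask follows. Counting true positives, false positives, and false negatives per pixel (rather than per pit) is an assumption, and `pixel_scores` and `f1` are hypothetical helper names.

```python
from __future__ import annotations

import numpy as np


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0 when both are 0)."""
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0


def pixel_scores(pred: np.ndarray, truth: np.ndarray) -> tuple[float, float, float]:
    """Pixel-wise precision, recall and F1 for binary masks (True = pitting)."""
    pred = pred.astype(bool)
    truth = truth.astype(bool)
    tp = int(np.count_nonzero(pred & truth))   # pitting pixels correctly detected
    fp = int(np.count_nonzero(pred & ~truth))  # false alarms on intact surface
    fn = int(np.count_nonzero(~pred & truth))  # annotated pitting pixels missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f1(precision, recall)


pred = np.array([[1, 1, 0, 0]])
truth = np.array([[1, 0, 1, 0]])
print(pixel_scores(pred, truth))   # (0.5, 0.5, 0.5)
print(round(f1(0.770, 0.903), 3))  # 0.831
```

As a consistency check, `f1(0.770, 0.903)` evaluates to about 0.831 and `f1(0.771, 0.848)` to about 0.808, matching the F1-scores tabulated for SUS410 and SUS430.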

| Steel Grade | Number of Pits | Area Ratio (%) |
|---|---|---|
| SUS410 | 1134 | 3.573 |
| SUS430 | 314 | 0.714 |
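Pit counts and area ratios of this kind can be derived from a predicted binary mask. The sketch below treats each 4-connected group of pitting pixels as one pit and expresses the area ratio as the percentage of pitting pixels in the image. The connectivity rule, the reference area (the whole image rather than only the steel surface), and the function name `count_pits` are assumptions for illustration.

```python
from __future__ import annotations

from collections import deque

import numpy as np


def count_pits(mask: np.ndarray) -> tuple[int, float]:
    """Return (number of pits, area ratio in %) for a binary pitting mask.

    Assumptions: a pit is a 4-connected group of positive pixels, and the
    area ratio is taken over the whole image.
    """
    mask = mask.astype(bool)
    seen = np.zeros_like(mask)
    h, w = mask.shape
    n_pits = 0
    for r0, c0 in zip(*np.nonzero(mask)):
        if seen[r0, c0]:
            continue  # pixel already belongs to a counted pit
        n_pits += 1
        # Breadth-first flood fill marks every pixel of this pit as seen.
        seen[r0, c0] = True
        queue = deque([(r0, c0)])
        while queue:
            r, c = queue.popleft()
            for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                if 0 <= nr < h and 0 <= nc < w and mask[nr, nc] and not seen[nr, nc]:
                    seen[nr, nc] = True
                    queue.append((nr, nc))
    area_ratio = 100.0 * np.count_nonzero(mask) / mask.size
    return n_pits, area_ratio


demo = np.zeros((5, 5), dtype=bool)
demo[0, 0:2] = True    # pit 1: 2 pixels
demo[3:5, 3:5] = True  # pit 2: 4 pixels
demo[2, 0] = True      # pit 3: 1 pixel, not 4-connected to pit 1
print(count_pits(demo))  # (3, 28.0)
```

With 8-connectivity, diagonally touching pixels would merge into one pit, so the reported count depends on this choice.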

| Steel Grade | Precision | Recall | F1-Score |
|---|---|---|---|
| SUS410 | 0.826 | 0.781 | 0.803 |
| SUS430 | 0.801 | 0.792 | 0.796 |

| Steel Grade | Precision | Recall | F1-Score |
|---|---|---|---|
| SUS410 | 0.318 | 0.565 | 0.407 |
| SUS430 | 0.258 | 0.456 | 0.329 |
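Binary thresholding classifies each pixel by comparing its grey level with a single cut-off. As one common way of choosing that cut-off (assumed here, not necessarily the rule used in the study), the sketch below applies Otsu's method, which selects the global threshold that maximizes the between-class variance. Pixels at or below the threshold are labeled as pitting, on the assumption that pits appear darker than the surrounding steel. The function names are hypothetical.

```python
import numpy as np


def otsu_threshold(gray: np.ndarray) -> int:
    """Otsu's global threshold for an 8-bit greyscale image (values 0-255).

    Class 0 is every pixel <= t; t maximizes the between-class variance.
    """
    hist = np.bincount(gray.astype(np.int64).ravel(), minlength=256).astype(float)
    prob = hist / hist.sum()
    omega = np.cumsum(prob)                # probability of class 0 for each t
    mu = np.cumsum(prob * np.arange(256))  # first moment of class 0 for each t
    mu_total = mu[-1]
    denom = omega * (1.0 - omega)
    # Thresholds that leave one class empty get a variance of 0.
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = np.where(denom > 0, (mu_total * omega - mu) ** 2 / denom, 0.0)
    return int(np.argmax(sigma_b))


def threshold_pits(gray: np.ndarray) -> np.ndarray:
    """Flag pixels at or below the Otsu threshold as pitting (dark-pit assumption)."""
    return gray <= otsu_threshold(gray)


gray = np.array([40] * 50 + [200] * 50, dtype=np.uint8).reshape(10, 10)
print(otsu_threshold(gray))             # 40
print(int(threshold_pits(gray).sum()))  # 50
```

Because one global cut-off is applied to the whole photograph, such a baseline has no means of separating dark pits from other dark features with similar grey levels, which the learned model can distinguish from context.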

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Suzuki, T.; Otaka, N.; Shibano, K.; Fujimoto, Y.; Hagiwara, T. Detection of Pitting Corrosion in Stainless-Steel Sheet Pile Walls Using Deep Learning. Corros. Mater. Degrad. 2026, 7, 23. https://doi.org/10.3390/cmd7020023

Suzuki T, Otaka N, Shibano K, Fujimoto Y, Hagiwara T. Detection of Pitting Corrosion in Stainless-Steel Sheet Pile Walls Using Deep Learning. Corrosion and Materials Degradation. 2026; 7(2):23. https://doi.org/10.3390/cmd7020023

Chicago/Turabian Style: Suzuki, Tetsuya, Norihiro Otaka, Kazuma Shibano, Yuji Fujimoto, and Taiki Hagiwara. 2026. "Detection of Pitting Corrosion in Stainless-Steel Sheet Pile Walls Using Deep Learning" Corrosion and Materials Degradation 7, no. 2: 23. https://doi.org/10.3390/cmd7020023

APA Style: Suzuki, T., Otaka, N., Shibano, K., Fujimoto, Y., & Hagiwara, T. (2026). Detection of Pitting Corrosion in Stainless-Steel Sheet Pile Walls Using Deep Learning. Corrosion and Materials Degradation, 7(2), 23. https://doi.org/10.3390/cmd7020023