1. Introduction

Metallic pipelines play a critical role across multiple industrial sectors by transporting fluids such as oil, gas, water, and chemical products. During operation, these systems are exposed to demanding conditions, including high pressures, temperature fluctuations, mechanical loads, and aggressive environments. Such conditions promote the development of degradation mechanisms such as erosion, internal and external corrosion, welding defects, structural cracking, and valve failures, which compromise operational reliability, reduce hydraulic efficiency, and increase the risk of failure with direct implications for industrial safety and process continuity [1].

Among these mechanisms, corrosion represents one of the most critical threats to pipeline integrity due to its direct impact on mechanical strength and long-term durability. This electrochemical process arises from interactions between the metallic material and its surrounding environment, either internal or external, leading to oxidation reactions and progressive material loss. As corrosion advances, pipe wall thickness decreases, structural resistance deteriorates, and the likelihood of operational failure increases significantly [2,3].

The oil and gas industry is particularly vulnerable to corrosion-related degradation, as pipelines often extend over long distances and operate under high pressure and variable environmental conditions. The presence of aggressive fluids, dissolved gases, salts, and complex chemical compounds accelerates corrosive processes and material deterioration. Corrosion-related costs in this sector have been reported to range from 2.42 to 4.84 billion USD, with operation and maintenance expenses estimated at 3100 to 6200 USD per kilometer of pipeline. Moreover, the National Association of Corrosion Engineers (NACE) estimates that annual expenditures for repairing or replacing corroded pipelines exceed 7 billion USD, highlighting the substantial economic impact of corrosion and the need for more effective inspection strategies [4]. Within this broader context, internal corrosion deserves particular attention, as it originates from direct interaction between the internal pipe surface and transported fluids containing water, dissolved gases, salts, microorganisms, or chemically aggressive compounds. This form of degradation often progresses without visible external signs, allowing damage to evolve unnoticed over extended periods.

Uniform corrosion is a prevalent form of internal pipeline degradation, characterized by continuous, homogeneous material loss across the internal surface. Unlike localized corrosion mechanisms, its lack of distinct point defects complicates early detection via conventional visual inspection. Consequently, this deterioration often progresses undetected until it reaches critical thresholds, potentially leading to leaks, catastrophic ruptures, environmental contamination, and significant operational disruptions [5].

Early detection and continuous monitoring of corrosion are therefore essential to ensure operational safety and extend the service life of infrastructure. However, internal pipeline inspection presents significant technical challenges due to restricted access, tubular geometry, limited illumination, and the presence of residues or surface irregularities that hinder direct observation [6,7].

Traditionally, internal inspection has relied on non-destructive testing techniques such as industrial radiography and ultrasonic testing. Radiography enables the detection of thickness loss using X-rays or gamma rays, but it exhibits reduced sensitivity to surface corrosion and thin material layers, requires strict safety protocols, and incurs high operational costs [8,9]. Ultrasonic testing employs acoustic waves to measure wall thickness and detect discontinuities; however, its effectiveness depends strongly on surface conditions, sensor coupling, and pipeline geometry [10,11,12].

These limitations have motivated the development of automated, safer, and more cost-effective inspection alternatives. In this context, computer vision has emerged as a promising solution for industrial surface inspection. Advances in embedded hardware, high-resolution imaging, and image-processing algorithms now enable automated visual analysis of defects, pattern recognition, and defect localization in complex environments [13].

Digital image processing techniques, including color space transformations, filtering, contrast enhancement, and segmentation, improve defect visibility and facilitate feature extraction. Furthermore, deep learning approaches based on convolutional neural networks have significantly advanced defect detection by enabling the learning of complex visual representations under challenging conditions [14,15]. Architectures such as YOLO and DeepLab have become widely adopted benchmarks for object detection and semantic segmentation tasks.

Several studies have explored integrating robotic platforms with computer vision for internal pipeline inspection, demonstrating the feasibility of corrosion detection via image analysis. Despite these advances, challenges related to illumination control, image quality, real-time processing, and operation in fully confined environments remain open research problems [16,17].

To address these challenges, this work proposes the design and development of a mobile embedded computer vision system for detecting uniform corrosion inside steel pipelines. The system integrates a high-resolution camera, adaptive illumination, and a mobile robotic platform controlled by a Raspberry Pi 4, which manages both navigation and real-time image processing. Image enhancement is achieved through RGB–HSV color space conversion, Gaussian filtering, and thresholding, while deep learning models are employed for the classification and segmentation of corroded regions. This study highlights four key contributions:

- (i) A custom dataset was constructed from real images acquired from a pipe exhibiting uniform corrosion in a controlled environment that simulates industrial pipeline conditions, ensuring the realism and operational relevance of the dataset.

- (ii) Three deep learning models (ResNet-18, YOLOv8-seg, and DeepLabV3) were trained and rigorously evaluated using standard performance metrics, including accuracy, sensitivity, F1-score, and mean Intersection over Union (mIoU).

- (iii) The integration of mobile robotics, embedded processing, and deep learning enables the development of an autonomous inspection system capable of operating with high precision under real industrial conditions.

- (iv) The proposed approach provides a non-invasive and scalable solution that significantly enhances the reliability of uniform corrosion detection in metallic pipelines.

Overall, the novelty of this work lies in integrating a mobile robotic inspection platform with deep-learning-based corrosion detection for internal steel pipeline monitoring. Unlike previous studies that mainly focus on offline image classification, the proposed approach combines a Raspberry Pi-based mobile robot with artificial vision algorithms to perform automated inspection inside pipelines.

In addition, the study evaluates different deep learning models, including ResNet-18, YOLOv8-seg, and DeepLabV3, allowing both classification and pixel-level segmentation of corrosion regions. In particular, the use of YOLOv8-seg for corrosion segmentation within a robotic inspection system provides a practical framework for real-time monitoring of pipeline surfaces [18,19].

2. Materials and Methods

Figure 1 illustrates the methodology adopted for the development of the mobile vision-based corrosion detection system, structured into five main phases: (1) mechanical and electronic design of the mobile platform; (2) image acquisition and preprocessing under real inspection conditions; (3) dataset construction through image selection and labeling; (4) model training and evaluation using deep learning techniques; and (5) system integration and visualization for autonomous corrosion inspection.

These phases combine principles from robotics, embedded systems, digital image processing, and deep learning.

2.1. Materials

Mechanical and Electronic Design

The robotic platform was engineered to autonomously navigate the interior of 60 cm-diameter steel pipelines, in accordance with the structural and dimensional criteria specified in ASME B36.10M for welded and seamless pipe systems [20]. The mobility system consists of DC gear motors selected for their high torque and stability during confined movement. Motors compliant with industrial specifications, such as the 6 V gearmotor series, were integrated to ensure continuous traction.

For camera positioning and angular adjustment, servomotors (MG996R and SG90; Tower Pro, Shenzhen, China) were used to ensure precise, repeatable camera orientation, owing to their robust construction and reliability in embedded robotic applications. These servos enable dynamic control of the camera’s vertical and horizontal alignment, thereby improving visual coverage of the pipe interior.

The processing and control backbone of the system is a Raspberry Pi 4 Model B (Raspberry Pi Foundation, Cambridge, UK), selected for its quad-core ARM Cortex-A72 processor, 8 GB of RAM, and full Linux compatibility, which make it suitable for embedded robotics and real-time processing tasks. A dedicated motor controller (Robot HAT) was employed to interface with motors and servomotors, ensuring safe electrical operation in accordance with the collaborative robotics requirements defined in ISO/TS 15066 [16].

The imaging module is based on the Raspberry Pi High Quality Camera (Raspberry Pi Foundation, Cambridge, UK), which incorporates a 12.3-megapixel Sony IMX477 sensor (Sony Semiconductor Solutions Corporation, Atsugi, Japan), delivering high sensitivity and low noise performance. A compatible CS-mount wide-angle lens with a focal length of approximately 6 mm (0.236 in) and manual focus was installed to increase the field of view in confined environments. Integrated Light-Emitting Diode (LED) lighting enhances image quality in low-light conditions typical of enclosed pipelines. Power was supplied by four 18650 lithium-ion batteries (3.7 V, 2500 mAh), with two allocated to the Raspberry Pi and two dedicated to the lighting system, ensuring an appropriate power balance for extended autonomous inspection. Several modifications were made to the original structure to integrate the camera module connected to the Raspberry Pi and the servomotors that control the camera orientation, as illustrated in Figure 2.

Given the pipeline’s approximate 60 cm diameter, a custom mobile robot was developed to capture internal images of the pipe walls. The prototype features approximate dimensions of 28 cm in length, 11 cm in height, and 14 cm in width. The mechanical platform is based on the Picar-X structure from SunFounder (SunFounder Inc., Wilmington, NC, USA), a company specializing in embedded system development kits for platforms such as Raspberry Pi, Arduino, and ESP32.

2.2. Methods

2.2.1. Image Acquisition and Pre-Processing

Starting from a flat metal plate measuring 1.60 m × 2.20 m, a cutting and bending process was carried out to fabricate a tubular structure with an approximate diameter of 60 cm, corresponding to a DN600 industrial pipeline. Once the cylindrical geometry was established, additional structural modifications were introduced to facilitate handling and transportation, given the pipe’s total weight of approximately 60 kg.

Subsequently, the inner surface of the pipe was uniformly conditioned and painted to standardize illumination and background texture during image acquisition. A corrosion layer was generated on the internal surface to reproduce the visual characteristics commonly observed in uniform corrosion of steel structures. Although uniform corrosion is characterized by relatively homogeneous material degradation, the resulting corrosion patterns may present irregular shapes and heterogeneous oxidation intensities due to natural variations in surface morphology and oxide formation. Such characteristics are commonly reported in corroded steel surfaces exposed to controlled environments [

21].

The corrosion regions used for training and evaluation were manually annotated using image labeling tools, focusing on visually identifiable features such as color variation, oxide formation, and surface texture degradation. This controlled pipeline setup was designed to simulate the visual inspection conditions of industrial pipelines while ensuring repeatability during image acquisition and experimental evaluation of computer vision and semantic segmentation models.

Figure 3 presents the experimental pipeline setup used for corrosion inspection.

As shown in Figure 3a, DN600 pipe sections were fabricated and conditioned by applying artificial corrosion patterns of varying sizes and distributions to the inner surface, thereby generating a representative dataset for subsequent analysis.

Figure 3b shows the mobile robotic platform operating inside the prepared pipeline during image acquisition, demonstrating the system’s capability to navigate the confined environment and capture visual data under controlled inspection conditions.

Images were acquired from the interior of corroded steel pipelines using controlled illumination provided by the incorporated lighting system.

The preprocessing stage consisted of the following steps:

RGB (red, green, and blue)–HSV (hue, saturation, and value) color space transformation, commonly used for segmentation because HSV isolates chromatic information and stabilizes color-based thresholding in variable lighting [22,23]. To apply this image analysis technique, the conversion is performed using Equations (1)–(3) as follows:

This calculation is performed for each pixel to transform the corroded image into HSV space, where the values of R, G, and B range from 0 to 255. The value of A is π/3 if H is represented in radians or 60° if it is expressed in degrees. Consequently, H varies from −π/3 to 5π/3 in radians, or from −60° to 300° in degrees. S and V, on the other hand, vary from 0 to 255 [24].
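Equations (1)–(3) are not reproduced in this text. As a reference, one standard formulation that is consistent with the ranges stated above can be sketched as follows. The shorthand M = max(R, G, B) and m = min(R, G, B) is introduced here for compactness, and the numbering or exact form used by the authors may differ.

```latex
\begin{align}
V &= M, \\
S &= \begin{cases}
  255\,\dfrac{M - m}{M}, & M \neq 0, \\[4pt]
  0, & M = 0,
\end{cases} \\
H &= \begin{cases}
  A\,\dfrac{G - B}{M - m}, & M = R, \\[4pt]
  A\left(2 + \dfrac{B - R}{M - m}\right), & M = G, \\[4pt]
  A\left(4 + \dfrac{R - G}{M - m}\right), & M = B.
\end{cases}
\end{align}
```

In this form the three branches of H span [−A, A], [A, 3A], and [3A, 5A], respectively, which gives the overall interval from −A to 5A quoted in the text. H is undefined for achromatic pixels (M = m) and is usually set to 0 by convention.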

These operations improved feature separability and served as the basis for subsequent deep learning-based segmentation processes. The resulting masks were used both for dataset generation and to validate the consistency of supervised models.
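The color conversion and thresholding steps can be illustrated with a minimal, standard-library-only sketch. It is not the authors' implementation: the hue, saturation, and value limits (`H_RANGE`, `S_MIN`, `V_MIN`) are assumed values chosen for illustration, the Gaussian filtering step is omitted, and Python's `colorsys` wraps hue into [0°, 360°) rather than the −60° to 300° interval described above.

```python
import colorsys

def rgb_to_hsv255(r, g, b):
    """Convert 8-bit RGB to (H in degrees, S and V on a 0-255 scale)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return h * 360.0, s * 255.0, v * 255.0

# Hypothetical rust-like HSV window (assumed values, not taken from the study).
H_RANGE = (0.0, 40.0)   # degrees: red-orange-brown hues
S_MIN = 80.0            # minimum saturation on the 0-255 scale
V_MIN = 40.0            # minimum brightness on the 0-255 scale

def corrosion_mask(image):
    """Return a binary mask (1 = candidate corrosion) for a nested-list RGB image."""
    mask = []
    for row in image:
        mask_row = []
        for (r, g, b) in row:
            h, s, v = rgb_to_hsv255(r, g, b)
            inside = H_RANGE[0] <= h <= H_RANGE[1] and s >= S_MIN and v >= V_MIN
            mask_row.append(1 if inside else 0)
        mask.append(mask_row)
    return mask

# Tiny 2x2 example: rust-brown, grey steel, white paint, dark orange.
image = [[(150, 75, 30), (128, 128, 128)],
         [(255, 255, 255), (200, 100, 20)]]
mask = corrosion_mask(image)
print(mask)
```

In this toy example only the two brownish-orange pixels fall inside the assumed window; grey and white pixels are rejected because their saturation is zero.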

Figure 4 illustrates the processing pipeline applied to each image.

2.2.2. Dataset Construction

A corrosion-focused dataset was created from 600 images of steel pipe surfaces affected by uniform corrosion, each originally captured at 640 × 640 pixels, a resolution selected to meet embedded inference requirements and to align with hardware constraints in Raspberry Pi-based vision systems [28]. Images presented variations in illumination, angle, and corrosion intensity to increase dataset diversity.

In addition, 600 images of non-corroded surfaces were acquired to balance the dataset and strengthen the robustness of the classification stage. Ground-truth segmentation masks for the corroded regions were manually annotated at the pixel level by visual inspection, following widely accepted practices in semantic segmentation research.

These annotations were used as reference labels for both training and quantitative evaluation of the segmentation models.

Data Augmentation

To enhance the robustness and generalization capability of the segmentation models, data augmentation was applied to the corrosion images. This process included systematic variations in color saturation from −29% to +29%, as well as geometric transformations including 90° and 180° rotations.
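The augmentation described above can be sketched in plain Python as follows. This is an illustrative sketch only: the tool actually used is not stated in the text, the two saturation endpoints (−29% and +29%) stand in for values that may have been sampled anywhere within that range, and all function names are hypothetical. The key point it shows is that geometric transforms must be applied identically to the image and its mask, whereas color changes leave the mask untouched.

```python
import colorsys

def adjust_saturation(image, percent):
    """Scale the HSV saturation of every pixel by (1 + percent/100), clamped to [0, 1]."""
    factor = 1.0 + percent / 100.0
    out = []
    for row in image:
        new_row = []
        for (r, g, b) in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            s = min(1.0, max(0.0, s * factor))
            nr, ng, nb = colorsys.hsv_to_rgb(h, s, v)
            new_row.append((round(nr * 255), round(ng * 255), round(nb * 255)))
        out.append(new_row)
    return out

def rotate90(grid):
    """Rotate a nested-list image or mask 90 degrees clockwise."""
    return [list(col) for col in zip(*grid[::-1])]

def rotate180(grid):
    """Rotate a nested-list image or mask 180 degrees."""
    return [row[::-1] for row in grid[::-1]]

def augment(image, mask, saturation_percents=(-29, 29)):
    """Yield (image, mask) pairs; geometric transforms are applied to both."""
    for p in saturation_percents:
        yield adjust_saturation(image, p), mask   # colour change leaves the mask as-is
    yield rotate90(image), rotate90(mask)
    yield rotate180(image), rotate180(mask)

# Toy 2x2 image with one "corroded" pixel in the top-left corner.
image = [[(200, 100, 20), (128, 128, 128)],
         [(128, 128, 128), (128, 128, 128)]]
mask = [[1, 0],
        [0, 0]]
samples = list(augment(image, mask))
```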

As a result, the corrosion dataset was expanded to 1195 samples, each paired with its corresponding binary segmentation mask. For the YOLOv8-seg framework, the binary masks were converted to polygon-based representations and encoded according to the annotation format required for instance segmentation, following the conventions of contemporary deep learning frameworks.
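For reference, the YOLO segmentation label format stores one object per line as a class index followed by the polygon vertices, with x and y normalized to [0, 1] by the image width and height. The sketch below shows only this encoding step. The contour extraction that turns a binary mask into polygon vertices is omitted, and the function name, the example vertices, and the single class index 0 are assumptions made for illustration.

```python
def polygon_to_yolo_seg(points, width, height, class_id=0):
    """Encode one polygon as a YOLO segmentation label line.

    points: list of (x, y) pixel coordinates of the polygon vertices.
    Returns "<class> x1 y1 x2 y2 ..." with coordinates normalised to [0, 1].
    """
    if len(points) < 3:
        raise ValueError("a polygon needs at least three vertices")
    coords = []
    for x, y in points:
        coords.append(f"{x / width:.6f}")
        coords.append(f"{y / height:.6f}")
    return f"{class_id} " + " ".join(coords)

# Hypothetical rectangular corrosion patch in a 512 x 512 image.
polygon = [(128, 128), (384, 128), (384, 256), (128, 256)]
line = polygon_to_yolo_seg(polygon, width=512, height=512)
print(line)
```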

Dataset Splitting and Normalization

The dataset was divided into:

All images were resized to 512 × 512 px, consistent with the input specifications of ResNet-18, YOLOv8-seg, and DeepLabV3 architectures.

2.2.3. Model Training and Evaluation

Training Environment

Training was conducted on a computer equipped with an Intel Core i7 processor (8th generation). Although the system lacked a GPU, CPU-based training was feasible owing to the moderate dataset size. This environment provided significantly faster training than the Raspberry Pi while remaining representative of the computing resources typically available for academic or industrial prototypes.

Evaluation Metrics

The performance of the proposed models was evaluated using multiple quantitative metrics commonly adopted in image analysis and classification tasks. These metrics include Accuracy, Precision, Recall, Specificity, and F-score (F1), which provide a comprehensive assessment of classification performance and robustness. In addition, the area under the receiver operating characteristic (ROC) curve (AUC) was computed to evaluate the models’ discriminative capability [31].

For segmentation performance, the mean Intersection over Union (mIoU) was used. The IoU of a class is the ratio of the area of overlap to the area of union between the predicted segmentation and the ground-truth regions, and the mIoU is the average of the per-class IoU values [32]. Furthermore, confusion matrices were generated and normalized according to the guidelines provided by the Scikit-learn library to facilitate an interpretable analysis of classification outcomes.
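As a concrete illustration of the segmentation metric, the following sketch computes the per-class IoU and the mIoU for a pair of binary masks in a two-class setting (corrosion and background). The masks are toy data and the function names are hypothetical; this is a minimal sketch rather than the evaluation code used in the study.

```python
def confusion_counts(pred, truth):
    """Count TP, FP, FN, TN over two binary masks of equal shape (1 = corrosion)."""
    tp = fp = fn = tn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1
            elif p and not t:
                fp += 1
            elif t:
                fn += 1
            else:
                tn += 1
    return tp, fp, fn, tn

def iou_scores(pred, truth):
    """Return (IoU corrosion, IoU background, mIoU) for binary masks."""
    tp, fp, fn, tn = confusion_counts(pred, truth)
    iou_corrosion = tp / (tp + fp + fn) if (tp + fp + fn) else 1.0
    iou_background = tn / (tn + fp + fn) if (tn + fp + fn) else 1.0
    return iou_corrosion, iou_background, (iou_corrosion + iou_background) / 2

truth = [[1, 1, 0, 0],
         [1, 1, 0, 0],
         [0, 0, 0, 0],
         [0, 0, 0, 0]]
pred = [[1, 1, 1, 0],
        [1, 0, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0]]
iou_c, iou_b, miou = iou_scores(pred, truth)
print(f"IoU corrosion={iou_c:.4f}, IoU background={iou_b:.4f}, mIoU={miou:.4f}")
```

Here three corroded pixels are matched, one is missed, and one background pixel is falsely marked, so the corrosion IoU is 3/5 and the background IoU is 11/13.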

Finally, Principal Component Analysis (PCA) was applied to explore feature space variance and class separability, following established methodologies that integrate dimensionality reduction techniques with deep learning model assessment [33].

Inference time was measured on the Raspberry Pi 4 to assess the feasibility of real-time deployment.

2.2.4. System Integration and Real-Time Visualization

To enable remote visualization of the inspection process, a lightweight MJPEG video server was implemented on the Raspberry Pi. This approach enables the sequential transmission of JPEG frames over HTTP, ensuring low latency and efficient operation on resource-constrained embedded systems [34,35]. Such architectures are widely adopted in Internet of Things (IoT) and edge computing applications, where minimizing transmission delay is critical for real-time monitoring and control [36].
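The MJPEG-over-HTTP mechanism can be sketched with Python's standard `http.server` module: the server answers a single GET request with a `multipart/x-mixed-replace` response and then writes one JPEG part after another. The sketch below is not the authors' server. The boundary token, port, and the `frame_source` callable (assumed to yield JPEG-encoded byte strings from the camera) are hypothetical.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

BOUNDARY = "frame"  # arbitrary multipart boundary token (assumed name)

def mjpeg_part(jpeg_bytes):
    """Wrap one JPEG image as a multipart/x-mixed-replace body part."""
    header = (
        f"--{BOUNDARY}\r\n"
        "Content-Type: image/jpeg\r\n"
        f"Content-Length: {len(jpeg_bytes)}\r\n\r\n"
    ).encode("ascii")
    return header + jpeg_bytes + b"\r\n"

def make_handler(frame_source):
    """Build a request handler that streams the frames yielded by frame_source().

    frame_source is a hypothetical callable returning an iterable of JPEG
    byte strings, for example a generator wrapping the camera capture loop.
    """
    class MJPEGHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            self.send_response(200)
            self.send_header("Content-Type",
                             f"multipart/x-mixed-replace; boundary={BOUNDARY}")
            self.send_header("Cache-Control", "no-cache")
            self.end_headers()
            try:
                for jpeg in frame_source():
                    self.wfile.write(mjpeg_part(jpeg))
            except (BrokenPipeError, ConnectionResetError):
                pass  # the client closed the stream

    return MJPEGHandler

def make_server(frame_source, host="0.0.0.0", port=8000):
    """Create (but do not start) the streaming server."""
    return ThreadingHTTPServer((host, port), make_handler(frame_source))
```

Under these assumptions, calling `make_server(camera_frames).serve_forever()` with a suitable `camera_frames` generator would expose the stream to any browser on the local network. Because each frame is an independent JPEG, a dropped frame does not affect the next one, which helps keep latency low.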

The video stream is accessible both through a standard web browser and a custom-developed mobile application, providing operational flexibility and cross-platform compatibility. To enhance the user experience and ensure smooth visualization, latency-optimization techniques were applied to minimize buffering and reduce end-to-end delay, thereby guaranteeing near-real-time video streaming. This capability is essential for robotic navigation and inspection tasks in confined environments [37].

The mobile application integrates an intuitive graphical user interface (GUI) that enables direct interaction with the inspection system. Through this interface, the operator can perform the following functions:

Live video monitoring.

Selection of the operating mode (classification, YOLOv8-seg segmentation, or DeepLabV3 segmentation).

Remote control of the robot’s movement.

Management of the inspection session.

Figure 5 illustrates the graphical user interface used to operate the robotic inspection system during the experiments. Through this interface, the operator can monitor the live video stream, select detection or segmentation mode, and control both the robot’s movement and the camera’s orientation. The figure thus clarifies the practical operation and integration of the proposed inspection system.

The GUI design follows principles commonly used in IoT-based embedded systems and robotic teleoperation platforms, where simplicity, fast response, and seamless integration with real-time video streams are essential for efficient operation [38,39]. Although the communication architecture is inspired by cloud-based platforms such as Firebase, the system was optimized to operate exclusively within a local network, eliminating dependency on external cloud services and significantly reducing communication latency.

Finally, the video transmission and visualization algorithms were optimized to minimize frame delivery delay, ensuring near-real-time supervision of the internal pipe surface. This feature is crucial for decision-making during robotic inspections and for accurately identifying areas affected by uniform corrosion.

3. Results and Discussion

This section presents the performance of the three implemented deep learning models (ResNet-18, YOLOv8-seg, and DeepLabV3), along with quantitative, qualitative, and operational analyses of the integrated system during real-world testing on the Raspberry Pi 4. The models were originally trained on a dataset of 600 corrosion and 600 non-corrosion images. For performance assessment, a separate, fully independent test dataset consisting of 200 images (100 with corrosion and 100 without) was used. These images were not used during the training or validation stages and have no overlap with the training dataset, ensuring an unbiased evaluation and preventing potential data leakage.

All test images were acquired under the same illumination conditions, resolution, and image acquisition format as the training data, to maintain a consistent experimental setup while ensuring the independence of the evaluation process.

The deep learning models evaluated in this study were trained using different configurations depending on the task. All models were trained using transfer learning with pretrained weights. The YOLOv8-seg model was initialized with weights pretrained on the COCO dataset, whereas DeepLabV3 and ResNet18 used ImageNet-pretrained weights. Training was performed for 100 epochs for the segmentation models and 50 epochs for the classification model. The YOLOv8-seg model was trained using stochastic gradient descent (SGD) with an initial learning rate of 0.001 and a batch size of 16. The optimization process included bounding-box regression, segmentation, classification, and distributional focal losses. For DeepLabV3, the Adam optimizer was used with a learning rate of 0.0001 and a batch size of 8. The loss function combined cross-entropy and Dice losses to improve segmentation accuracy. The ResNet18 classification model was trained using the Adam optimizer with a learning rate of 0.0001 and a batch size of 32, optimizing Binary Cross-Entropy loss.
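To make the combined cross-entropy and Dice objective mentioned for DeepLabV3 more concrete, the sketch below evaluates both terms on flattened per-pixel corrosion probabilities. It is a simplified binary formulation in plain Python, not the training code: the equal weighting of the two terms, the smoothing constant, and the function names are assumptions, since the text does not specify them.

```python
import math

def cross_entropy(probs, targets, eps=1e-7):
    """Mean binary cross-entropy over flattened pixel probabilities."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)   # clamp to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(probs)

def dice_loss(probs, targets, smooth=1.0):
    """Soft Dice loss: 1 - (2*overlap + smooth) / (sum(probs) + sum(targets) + smooth)."""
    overlap = sum(p * t for p, t in zip(probs, targets))
    return 1.0 - (2.0 * overlap + smooth) / (sum(probs) + sum(targets) + smooth)

def combined_loss(probs, targets, ce_weight=1.0, dice_weight=1.0):
    """Weighted sum of cross-entropy and Dice losses (weights are assumed)."""
    return (ce_weight * cross_entropy(probs, targets)
            + dice_weight * dice_loss(probs, targets))

targets = [1, 0, 1, 0]
confident = [1.0, 0.0, 1.0, 0.0]   # perfect prediction
uncertain = [0.9, 0.1, 0.8, 0.2]   # correct but less confident
print(combined_loss(confident, targets), combined_loss(uncertain, targets))
```

The cross-entropy term penalizes each pixel independently, whereas the Dice term depends on the overlap of the whole predicted region, which is why the two are often combined when the corroded area covers a small share of the image.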

Table 1 presents the configuration used for training.

Table 2 presents an ablation study in which each preprocessing stage (HSV color conversion, filtering, and thresholding) is progressively removed. The experiments were conducted with three models: YOLOv8-seg, DeepLabV3, and ResNet18. The results show that the complete process consistently achieved the highest accuracy for all models. For example, YOLOv8-seg achieved 99.33% accuracy when the full process was applied, whereas performance decreased to 79.52% when the model was used without preprocessing. Similar behavior was observed for DeepLabV3 and ResNet18. Removing individual preprocessing steps also resulted in a significant decrease in performance. In particular, the HSV conversion step significantly improved the separation of the corrosion region, while filtering reduced noise, and thresholding improved the contrast between the corrosion and the background. These findings demonstrate that the proposed preprocessing stages play a crucial role in improving model performance and that the improvements in accuracy are not solely due to the deep learning models, but also to the manually designed preprocessing process.

3.1. ResNet18 Model Results (Binary Classification)

Figure 6 shows the confusion matrix of the model trained to classify images as corroded or non-corroded. The model was evaluated on a dataset of 200 images, comprising 100 with corrosion and 100 without. From these results, the following observations were obtained.

95 images were correctly classified as corrosion, representing the true positives (TP), corresponding to 95%.

5 corroded images were incorrectly classified as non-corroded, representing the false negatives (FN) and accounting for 5% classification error for this class.

97 non-corroded images were correctly identified, representing the true negatives (TN) with 97% accuracy for this category.

3 non-corroded images were incorrectly classified as corroded, corresponding to the false positives (FP) and accounting for 3% error.

The classification metrics obtained were as follows:

Accuracy: 96%, indicating that the model correctly classified this percentage of the total images.

Precision: 97% for the corrosion class and 95% for the non-corrosion class.

Recall (Sensitivity) for corrosion: 95%, reflecting the model’s ability to identify positive cases correctly.

Specificity (Recall for non-corrosion): 97%, indicating the model’s ability to recognize negative cases.

F1-score: 96% for both classes, confirming a balanced relationship between precision and recall.

These metrics demonstrated the model’s solid, reliable performance in corrosion detection, with a well-balanced trade-off between false positives and false negatives.
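The reported classification metrics follow directly from the four confusion-matrix counts listed above (TP = 95, FN = 5, TN = 97, FP = 3). The short sketch below recomputes them; the function name and dictionary keys are illustrative choices.

```python
def binary_metrics(tp, fn, tn, fp):
    """Derive standard binary classification metrics from confusion-matrix counts."""
    total = tp + fn + tn + fp
    precision_pos = tp / (tp + fp)      # precision for the corrosion class
    precision_neg = tn / (tn + fn)      # precision for the non-corrosion class
    recall = tp / (tp + fn)             # sensitivity
    specificity = tn / (tn + fp)        # recall for the non-corrosion class
    return {
        "accuracy": (tp + tn) / total,
        "precision_corrosion": precision_pos,
        "precision_non_corrosion": precision_neg,
        "recall": recall,
        "specificity": specificity,
        "f1_corrosion": 2 * precision_pos * recall / (precision_pos + recall),
        "f1_non_corrosion": 2 * precision_neg * specificity / (precision_neg + specificity),
    }

metrics = binary_metrics(tp=95, fn=5, tn=97, fp=3)
for name, value in metrics.items():
    print(f"{name}: {value:.2%}")
```

With these counts the sketch gives 96.00% accuracy, 96.94% and 95.10% precision for the corrosion and non-corrosion classes, 95.00% recall, 97.00% specificity, and F1-scores of 95.96% and 96.04%, which round to the values reported above.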

Figure 7 presents the results of the PCA applied to the feature representations extracted from the internal layers of the convolutional model. The 2D projection (see Figure 7a) reveals a clear separation between the classes: blue points correspond to images with corrosion, whereas orange points correspond to non-corroded images. This separation indicates that the model successfully learned discriminative features that distinguish between the two categories.

The variance explained by the principal components is notably high, with PC1 accounting for 93.94% and PC2 for 5.77%, meaning that together they retain approximately 99.71% of the total variance in the extracted features. In the 3D space (see Figure 7b), the third principal component, PC3, explains only 0.11% of the variance, a negligible contribution that justifies considering only PC1 and PC2 for the analysis.

Visualization facilitates interpretation and reinforces the conclusion that the model has effectively learned meaningful patterns to differentiate between corroded and non-corroded surfaces.

Figure 8 illustrates the model’s performance as the decision threshold is varied. The ROC curve approaches the upper-left corner of the plot, indicating a strong discriminative capability between corroded and non-corroded classes. An AUC value of 0.99 further confirms the excellent overall classification performance of the proposed model.

3.2. YOLOv8-Seg Results (Instance Segmentation)

For the segmentation models, evaluation techniques similar to those used for the classification model were applied, with the main difference that performance was assessed at the pixel level rather than at the image level. The evaluation used 100 images containing corroded regions together with their 100 corresponding ground-truth binary masks (200 image files in total).

Figure 9a presents the pixel-level confusion matrix. Out of more than 29 million evaluated pixels, the model correctly classified approximately 23 million as non-corroded and 7 million as corroded. Only 60,810 pixels were incorrectly classified as corroded (false positives), while 140,886 pixels were incorrectly classified as non-corroded (false negatives).

The resulting performance metrics are as follows:

Accuracy: 99.33%

Precision: 99.14%

Recall (Sensitivity): 98.03%

F1-score: 98.58%

IoU for the “corrosion” class: 97.20%

mIoU (mean Intersection over Union): 98.17%
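As a consistency check on the values listed above, the F1-score and the IoU of the same class are linked by a fixed identity, because both depend only on TP, FP, and FN. The short derivation below uses the standard definitions and the reported F1-score; no additional data are assumed.

```latex
\begin{align}
F_1 &= \frac{2\,TP}{2\,TP + FP + FN}, \qquad
\mathrm{IoU} = \frac{TP}{TP + FP + FN}, \\
2 - F_1 &= \frac{2\,(TP + FP + FN)}{2\,TP + FP + FN}
\;\;\Longrightarrow\;\;
\mathrm{IoU} = \frac{F_1}{2 - F_1}, \\
\mathrm{IoU}_{\text{corrosion}} &\approx \frac{0.9858}{2 - 0.9858}
= \frac{0.9858}{1.0142} \approx 0.9720 .
\end{align}
```

The result agrees with the reported corrosion-class IoU of 97.20%.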

The normalized confusion matrix (see Figure 9b) further confirms the strong performance of the proposed model. Both classes exhibit near-perfect precision values when rounded, indicating a high level of agreement between the predicted labels and the ground-truth annotations. This result demonstrates the model’s effectiveness in accurately identifying and segmenting corrosion-related pixels while maintaining a low misclassification rate across classes. Moreover, the balanced distribution observed in the matrix suggests stable and reliable behavior, even in the presence of visually similar surface patterns.

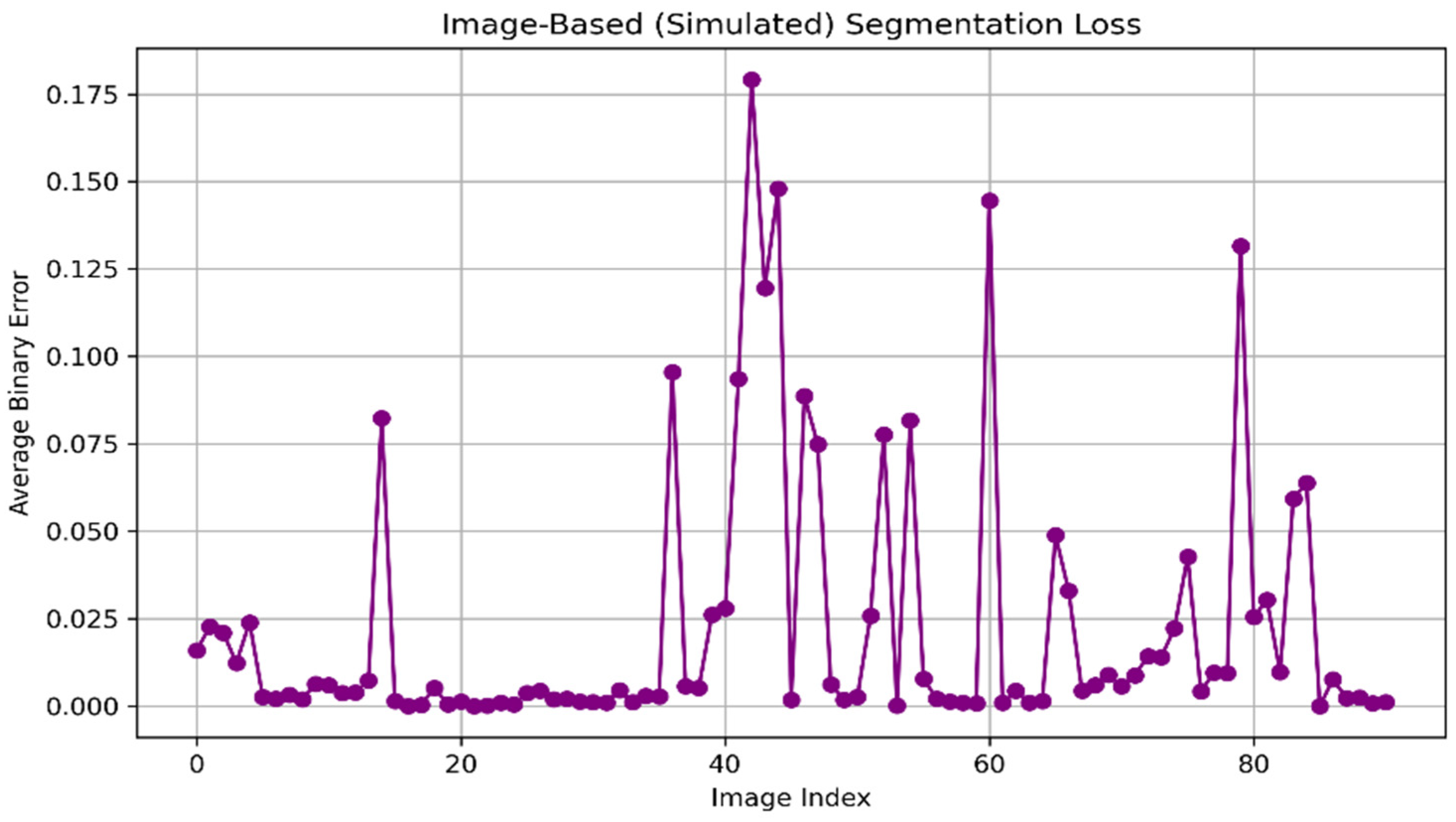

Figure 10 illustrates the average binary error per image during the segmentation evaluation. The x-axis represents the index of the evaluated images, while the y-axis corresponds to the average binary error computed for each image.

As shown in the figure, the binary error remains very close to zero for most evaluated images, typically below 0.01, indicating a high level of agreement between the predicted masks and the ground-truth annotations. This behavior reflects the segmentation model’s overall robustness and stability across most test samples.
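The text does not define the average binary error explicitly. One plausible reading, assumed here, is the fraction of pixels in an image whose predicted label differs from the ground truth. Under that assumption, the per-image error and a simple outlier flag can be sketched as follows; the 0.05 threshold and the function names are illustrative.

```python
def binary_error(pred, truth):
    """Fraction of pixels where the predicted label differs from the ground truth."""
    total = 0
    mismatches = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            total += 1
            mismatches += int(p != t)
    return mismatches / total

def flag_outliers(errors, threshold=0.05):
    """Return the indices of images whose error exceeds the (assumed) threshold."""
    return [i for i, e in enumerate(errors) if e > threshold]

truth = [[1, 1, 0, 0],
         [1, 1, 0, 0],
         [0, 0, 0, 0],
         [0, 0, 0, 0]]
good = [row[:] for row in truth]     # perfect prediction
poor = [[1, 1, 1, 0],                # one false positive, one false negative
        [1, 0, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0]]
errors = [binary_error(good, truth), binary_error(poor, truth)]
flagged = flag_outliers(errors)
print(errors, flagged)
```

Flagging images in this way makes it straightforward to inspect the few samples responsible for error peaks instead of relying on the dataset-level average alone.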

However, two significant error peaks are clearly visible. The first peak occurs at an early image index and reaches values close to 0.09, while a second peak appears around image indices 70–75, with binary error values approaching 0.07.

These peaks indicate that the model encountered localized difficulties when processing specific images, likely due to challenging visual conditions such as uneven illumination, low contrast, or complex corrosion patterns. Additionally, a moderate increase in binary error is observed for image indices of approximately 40–60, where values fluctuate between 0.01 and 0.03. Although these errors are substantially lower than those at the major peaks, they indicate regions where segmentation performance degrades slightly, possibly due to gradual transitions between corroded and non-corroded areas. Overall, the distribution of binary error values confirms that segmentation errors are sparse and image-dependent, while the consistently low error across most samples demonstrates the reliability of the proposed model for uniform corrosion detection.

The ROC curve in Figure 11 illustrates the model’s pixel-level performance, yielding an AUC of 0.9889, which is extremely close to 1. This value indicates an exceptional ability to distinguish between corrosion and non-corrosion pixels, confirming that the model is highly effective for segmentation tasks.

3.3. DeepLabV3 Results (Semantic Segmentation)

As shown in Figure 12a, the second segmentation model was evaluated on more than 29 million pixels from the evaluation dataset. The model correctly classified approximately 21 million pixels as non-corroded and around 5.9 million pixels as corroded.

Additionally, the results indicate that 553,325 pixels were incorrectly classified as corroded when they were non-corroded (false positives), and 75,516 pixels were misclassified as non-corroded when they were in fact corroded (false negatives).

The corresponding performance metrics are:

Accuracy: 97.72%

Precision: 91.42%

Recall (Sensitivity): 98.74%

F1-score: 94.97%

IoU for the “corrosion” class: 90.37%

mIoU (mean Intersection over Union): 93.73%

The normalized confusion matrix shown in Figure 12b illustrates the proportion of correctly classified pixels for each class relative to the total number of pixels belonging to that class. The results indicate that the non-corroded class achieves a correct classification rate of 97%, with only 3% of pixels misclassified as corroded. Similarly, the corroded class exhibits a correct prediction rate of approximately 99%, demonstrating the model’s high accuracy and reliability in pixel-level corrosion segmentation.

Figure 13 shows that, for a considerable part of the dataset, the binary error remains close to zero, generally below 0.01, indicating accurate model performance in segmenting corroded and non-corroded regions under normal visual conditions.

Nevertheless, several pronounced error peaks are observed throughout the evaluation sequence. The most significant peak occurs around image indices 42–45, reaching approximately 0.18, representing the maximum error observed in this evaluation. Additional notable peaks appear around indices 60 and 78–80, with error values of 0.13–0.15.

These elevated error rates indicate that the model encountered considerable difficulties in processing specific images, likely due to abrupt illumination changes, high surface reflectivity, occlusions, or complex corrosion textures that deviate from the dominant patterns in the training dataset.

Furthermore, moderate fluctuations in binary error, between 0.02 and 0.08, are observed across intermediate image ranges, indicating partial misclassification or boundary uncertainty in regions where corrosion transitions gradually into non-corroded areas.

Overall, the error distribution indicates that segmentation inaccuracies are concentrated in a small subset of images, whereas the consistently low error observed across most samples confirms the general robustness of the proposed segmentation approach.
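The per-image analysis above can be sketched as follows, assuming that the binary error of an image is the fraction of its pixels whose predicted label differs from the ground truth (this definition is an assumption here). The helper names and the 0.10 threshold used to flag peak images are hypothetical and chosen only for illustration.

```python
def binary_error(pred_mask, true_mask):
    """Fraction of pixels whose predicted label differs from the ground truth."""
    total = 0
    wrong = 0
    for pred_row, true_row in zip(pred_mask, true_mask):
        for pred, true in zip(pred_row, true_row):
            total += 1
            wrong += int(pred != true)
    return wrong / total


def flag_outliers(errors, threshold=0.10):
    """Indices of images whose binary error exceeds the (hypothetical) threshold."""
    return [index for index, error in enumerate(errors) if error > threshold]


# Toy 2 x 2 masks (1 = corroded, 0 = non-corroded): one of four pixels differs.
example_error = binary_error([[0, 1], [1, 1]], [[0, 1], [0, 1]])

# Toy error sequence with two peaks above the threshold.
peak_indices = flag_outliers([0.005, 0.18, 0.03, 0.13])
print(example_error, peak_indices)
```

Flagging the indices in this way makes it straightforward to pull out the few difficult images for visual review.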

Despite the presence of false positives, the model achieves strong segmentation performance, yielding an AUC of 0.9811. This value indicates highly robust behavior, reflecting the model’s capability to accurately identify corrosion regions. The ROC curve and AUC were computed using pixel-wise predictions, where each pixel’s probability from the segmentation model was compared with its corresponding ground-truth label. This pixel-level evaluation provides a detailed assessment of the model’s discriminative capacity in distinguishing corrosion and non-corrosion regions.
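A pixel-wise AUC of this kind can be sketched with the rank-sum (Mann-Whitney U) identity: the AUC equals the probability that a randomly chosen corroded pixel receives a higher score than a randomly chosen non-corroded pixel. The function below is a minimal standard-library sketch, not the evaluation code used in the study. It assumes the probability maps and ground-truth masks of all images have already been flattened into two equal-length lists, and `pixelwise_auc` is a hypothetical name.

```python
def pixelwise_auc(scores, labels):
    """ROC AUC from flattened pixel scores and 0/1 labels (rank-sum identity)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Group tied scores and assign them the average of their 1-based ranks.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    rank_sum_pos = sum(rank for rank, label in zip(ranks, labels) if label == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


# Toy example: four pixels, two of which are corroded (label 1).
toy_auc = pixelwise_auc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1])
print(toy_auc)
```

For millions of pixels, a vectorized library routine would be the practical choice; the explicit version is shown only to make the computation transparent.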

Figure 14 illustrates the results obtained.

Table 3 presents all the metrics obtained during the evaluation. From these results, it can be concluded that YOLO achieves the highest performance on segmentation tasks, with an mIoU of 0.9817 and an F1-score of 0.9858, outperforming DeepLabV3, which shows slightly lower precision and a higher tendency toward false positives.

The ResNet-18 model also demonstrated strong performance, achieving 97% accuracy; however, its intended application is image-level classification rather than pixel-level segmentation. Therefore, YOLO is recommended as the most effective model for detailed pixel-wise corrosion segmentation.

Table 4 presents the computational performance of the evaluated models deployed on the Raspberry Pi 4 platform. The results show that the YOLOv8-seg model achieved the highest segmentation accuracy (mIoU of 0.9817) while maintaining an inference speed of 5.5 FPS, with an average inference time of 181 ms for 512 × 512-pixel input images. DeepLabV3 also demonstrates strong segmentation performance with an mIoU of 0.9373; however, it incurs a higher computational cost, achieving only 3.68 FPS and an average inference time of 271 ms at the same input resolution. In contrast, the ResNet18 classification model achieved the fastest inference time of 37.59 ms and the highest frame rate of 26.6 FPS using an input size of 224 × 224 pixels, although it does not provide pixel-level segmentation. These results demonstrate the trade-off between segmentation accuracy and computational efficiency when deploying deep learning models on edge devices.
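The relationship between the latencies and frame rates quoted above can be checked with a short sketch. It assumes that images are processed one at a time, so that throughput is the reciprocal of the average inference time; `fps_from_latency` is a hypothetical helper name.

```python
def fps_from_latency(latency_ms):
    """Frames per second for sequential, single-image inference."""
    return 1000.0 / latency_ms


# Average inference times (ms) as quoted in the text for the Raspberry Pi 4.
latencies_ms = {"YOLOv8-seg": 181.0, "DeepLabV3": 271.0, "ResNet18": 37.59}
fps = {name: round(fps_from_latency(ms), 2) for name, ms in latencies_ms.items()}
for name, value in fps.items():
    print(f"{name}: {value} FPS")
```

The recomputed frame rates match the reported ones to within rounding of the quoted latencies.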

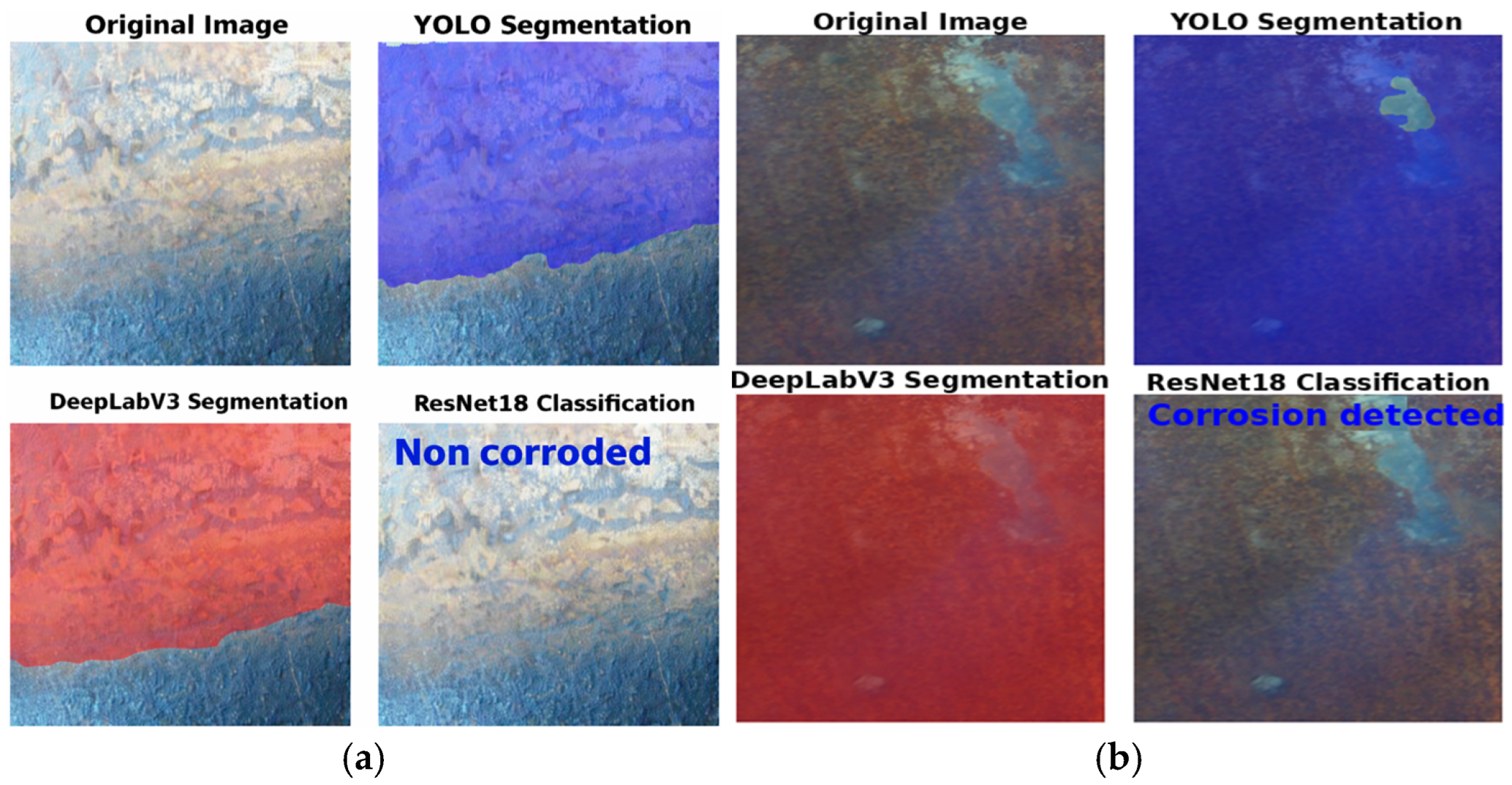

Figure 15 illustrates representative failure cases and model discrepancies. Panel (a) shows a false negative of the ResNet18 classifier, which fails to identify corrosion despite successful segmentation by YOLOv8-seg and DeepLabV3. Panel (b) shows an over-segmentation error (false positive) in which YOLOv8-seg and DeepLabV3 identify a small, non-corroded area as affected, likely due to chromatic similarities with actual oxidation. These discrepancies highlight that, while segmentation models offer greater spatial accuracy, they are susceptible to local texture noise, whereas classification models can overlook detailed features on complex backgrounds.

4. Discussion

The experimental results demonstrate that the proposed mobile vision-based system can accurately detect and segment uniform internal corrosion in steel pipelines. In particular, the YOLOv8-seg model achieved an mIoU greater than 0.98 and a precision close to 0.99, indicating a highly reliable delineation of corroded regions.

Recent advances in corrosion inspection have demonstrated the growing relevance of computer vision and deep learning techniques as alternatives to conventional non-destructive testing methods. In this context, the results obtained in the present study confirm that image-based learning approaches can provide highly reliable discrimination between corroded and non-corroded regions when supported by an appropriate acquisition and processing framework. The strong class separation observed in the PCA projections, together with the high accuracy values reflected in the confusion matrices and ROC curves, indicates that the visual features extracted from internal pipeline surfaces are highly informative for corrosion assessment.

Earlier studies approached corrosion detection mainly as an image-level classification problem. For example, Ahuja et al. [40] proposed a convolutional neural network to classify surface corrosion grades according to standardized visual categories. Their results demonstrated that CNNs are capable of learning discriminative corrosion-related features and outperforming traditional image processing techniques. However, their approach assigns a single corrosion grade to an entire image and does not provide information about the spatial distribution of corrosion. In contrast, although the present study also evaluates binary discrimination between corroded and non-corroded conditions, the analysis is supported by detailed performance metrics and feature-space visualization, offering a clearer understanding of the separability of corrosion patterns extracted from pipeline images.

More recently, explainable artificial intelligence has been introduced to enhance the interpretability of corrosion classification models. One such study employs Grad-CAM to visualize the regions contributing to binary corrosion classification decisions [41]. While this approach improves model transparency, it remains limited to image-level predictions and is primarily evaluated under offline conditions using curated datasets. In contrast, the present study emphasizes robustness and practical applicability, demonstrating that high discrimination performance can be achieved within a compact, reproducible processing pipeline suitable for integration into inspection systems. The reported ROC–AUC values close to unity indicate that the proposed feature representation and learning strategy provide stable performance across decision thresholds, even without relying on highly complex model architectures.

A different line of research has explored corrosion detection using supervised learning combined with image processing techniques. Jabbar et al. [42] proposed a CNN-based framework for detecting pitting corrosion on metallic surfaces using a large open-source image dataset of steel bridge corrosion. Their work focused on binary classification (corroded vs. non-corroded surfaces) and achieved high training accuracy (≈98%). While this study demonstrated the effectiveness of CNNs combined with preprocessing techniques, it relied exclusively on offline image analysis and did not address localization or deployment in confined environments such as pipelines. In contrast, the proposed system performs not only detection but also pixel-level semantic segmentation, allowing precise identification of corrosion-affected regions within pipes under realistic inspection conditions, and it is integrated with a mobile artificial vision system.

A further comparison can be made with [43], which employed EfficientNetV2 for corrosion image classification, achieving high classification accuracy using deep convolutional architectures. While that work confirms the effectiveness of modern CNN backbones for corrosion-related image analysis, it relies on complex models and transfer learning. The present study demonstrates that comparable discriminatory performance can be achieved using a streamlined methodology that prioritizes feature separability and robust evaluation over architectural complexity. This balance is particularly relevant for industrial inspection systems, where computational efficiency and ease of deployment are critical factors.

Taken together, the comparison with other studies indicates that the present work contributes to the field by reinforcing three key aspects: reliable discrimination between corroded and non-corroded conditions, methodological simplicity combined with strong quantitative validation, and alignment with practical inspection constraints highlighted in recent reviews. Rather than focusing exclusively on model novelty, the proposed approach emphasizes reproducibility and applicability, positioning it as a suitable candidate for integration into real-world corrosion monitoring workflows. These characteristics support the potential of image-based learning systems as complementary tools to conventional inspection techniques, particularly for early-stage corrosion detection in confined pipeline environments.

An additional and distinctive contribution of the present study is the integration of the YOLOv8 architecture into a mobile robotic inspection system for corrosion detection in steel pipelines. To the best of the authors’ knowledge, this is the first study to apply YOLOv8-based deep learning models in combination with a robotic platform for internal pipeline corrosion inspection. Furthermore, the proposed framework is inherently scalable and adaptable, enabling its application across a wide range of industrial scenarios. Potential deployment contexts include oil and gas transmission pipelines, water distribution networks, chemical processing facilities, and industrial heat exchangers, where internal corrosion poses significant safety and operational risks. Beyond pipelines, the same methodology could be extended to storage tanks, pressure vessels, and confined metallic structures, provided that visual access can be ensured. The combination of robotic mobility and YOLOv8-based detection also opens opportunities for preventive maintenance, early-stage corrosion monitoring, and condition-based inspection strategies, reducing reliance on costly and invasive non-destructive testing methods.

Despite the promising results obtained in this study, several limitations should be acknowledged. First, the dataset used for training and evaluation was collected under controlled laboratory conditions, where lighting, camera positioning, and background variability were relatively stable. In real industrial environments, additional factors such as illumination variability, reflections from metallic surfaces, environmental noise, and complex textures may affect image quality and segmentation performance.

Another limitation relates to the computational requirements of deep learning models when deployed on embedded platforms or edge devices. Although the models used in the study demonstrate strong performance, further optimization may be required for real-time deployment in resource-constrained environments.

Future work will focus on expanding the dataset with images captured in real industrial environments and exploring domain adaptation and model optimization techniques to improve robustness and generalization.

Classical image processing techniques such as global thresholding, edge detection, and color-based segmentation have been widely used for surface defect detection. However, these techniques strongly depend on stable illumination and well-defined object boundaries. In corrosion detection, the visual appearance of rust often exhibits irregular textures and variable color distribution, which limits the robustness of traditional methods. Recent studies have demonstrated that deep learning approaches significantly outperform classical image processing techniques for complex visual inspection tasks and defect detection problems [44].

5. Conclusions

This study demonstrates that artificial vision combined with deep learning provides an effective solution for detecting and segmenting uniform corrosion in steel pipelines. The proposed system achieved high performance across the evaluated models. The ResNet-18 classification model achieved an overall accuracy of 96%, an F1-score of 96%, and an AUC of 0.99, indicating strong discrimination between corroded and non-corroded images.

For pixel-level segmentation, the YOLOv8-seg model achieved the best performance, with an accuracy of 99.33%, a precision of 99.14%, an F1-score of 98.58%, and an mIoU of 98.17%, indicating highly accurate delineation of corrosion regions. The DeepLabV3 model also showed robust performance, with an accuracy of 97.72%, an F1-score of 94.97%, and an mIoU of 93.73%, though with a slightly higher tendency toward false positives than YOLOv8-seg.

The use of a low-cost embedded platform based on a Raspberry Pi proved sufficient to execute computer vision and deep learning algorithms in near-real time, highlighting the feasibility of deploying corrosion-monitoring systems without requiring high-performance computing infrastructure. Despite moderate inference latency, the system performance remains suitable for inspection tasks in preventive maintenance and condition-based monitoring scenarios.

From a materials degradation perspective, the proposed approach provides a practical alternative to conventional inspection techniques by enabling non-destructive visual assessment of corrosion progression on internal steel surfaces. The integration of image acquisition, preprocessing, deep-learning-based analysis, robotic mobility, and real-time visualization demonstrates the feasibility of automated internal pipeline inspection for the early detection of degradation mechanisms and the reduction of failure risk in steel infrastructure.

Future work will focus on improving computational efficiency through lightweight segmentation models and optimization strategies to reduce inference time and enhance system autonomy. Additionally, integrating complementary sensing technologies could enable a more comprehensive assessment of material degradation, including subsurface defects and structural deformations that cannot be detected by visual inspection alone.