A Study on a Complex Flame and Smoke Detection Method Using Computer Vision Detection and Convolutional Neural Network

Abstract

1. Introduction

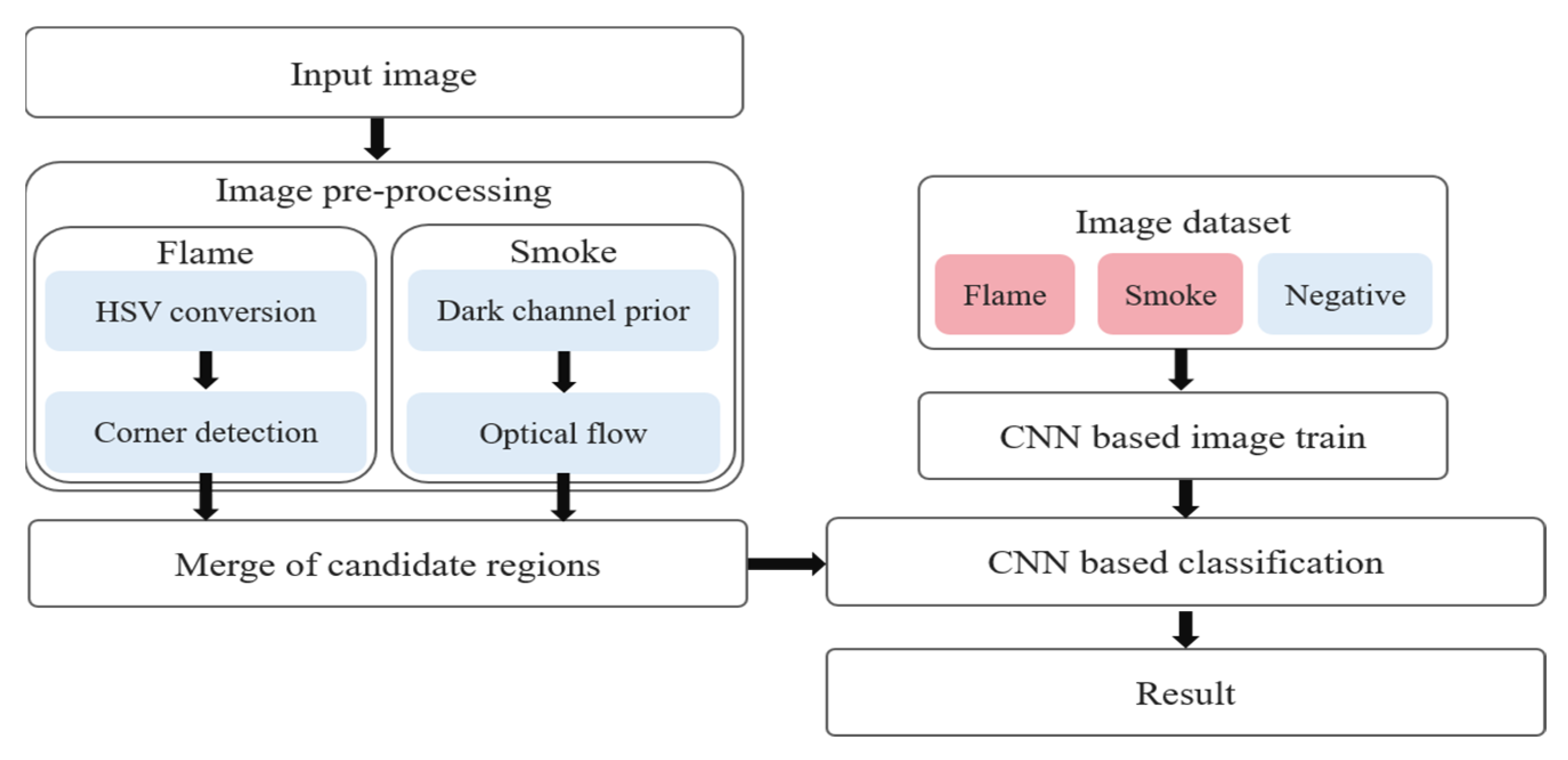

2. Image Pre-Processing for Fire Detection

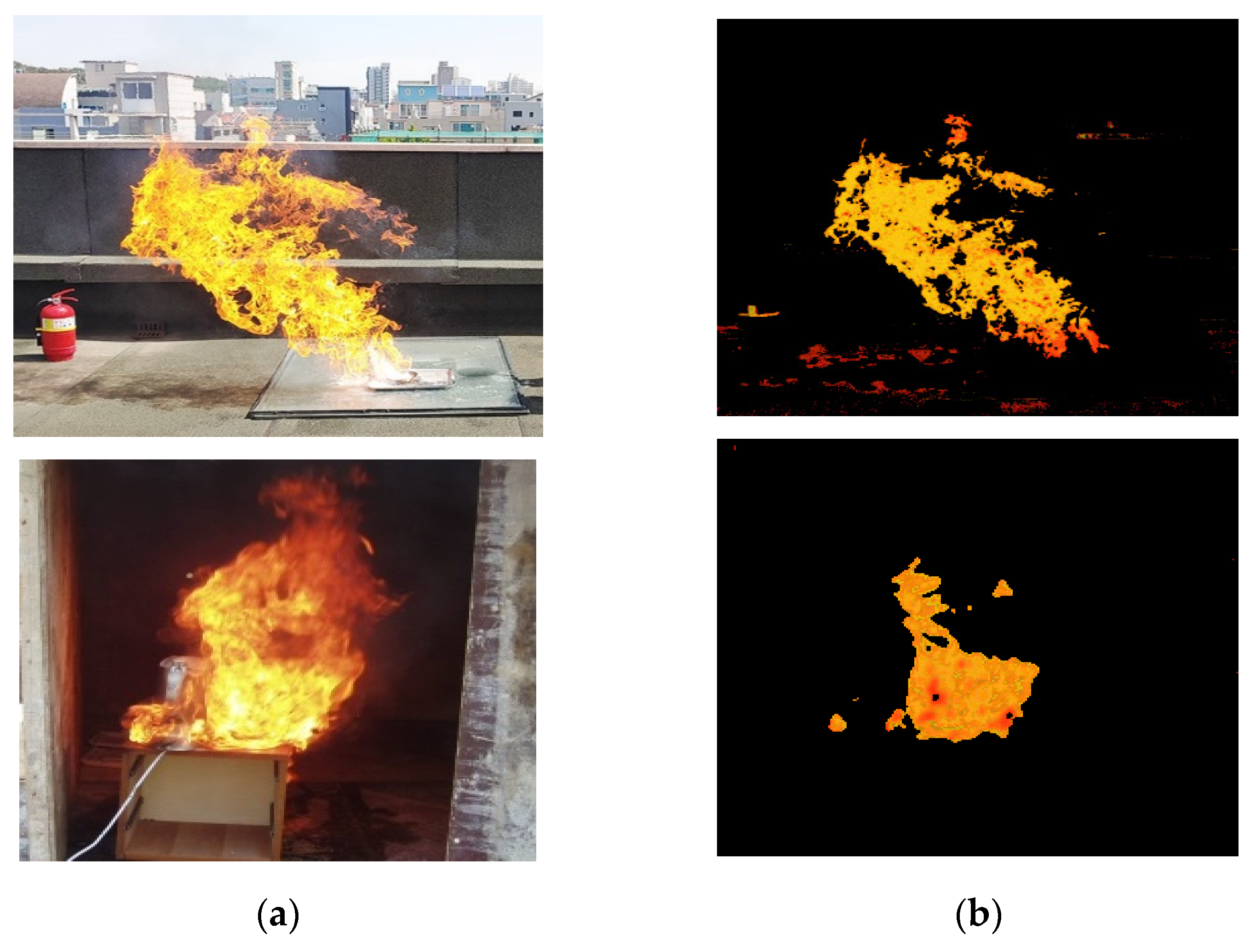

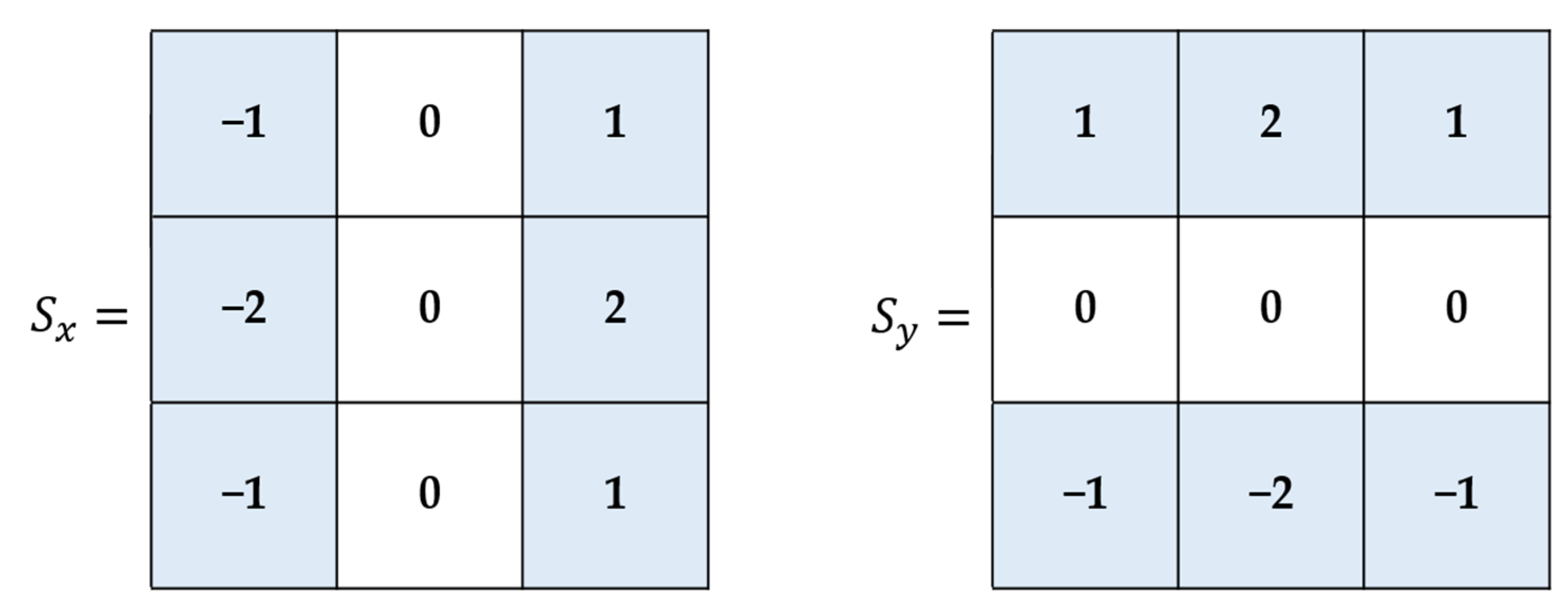

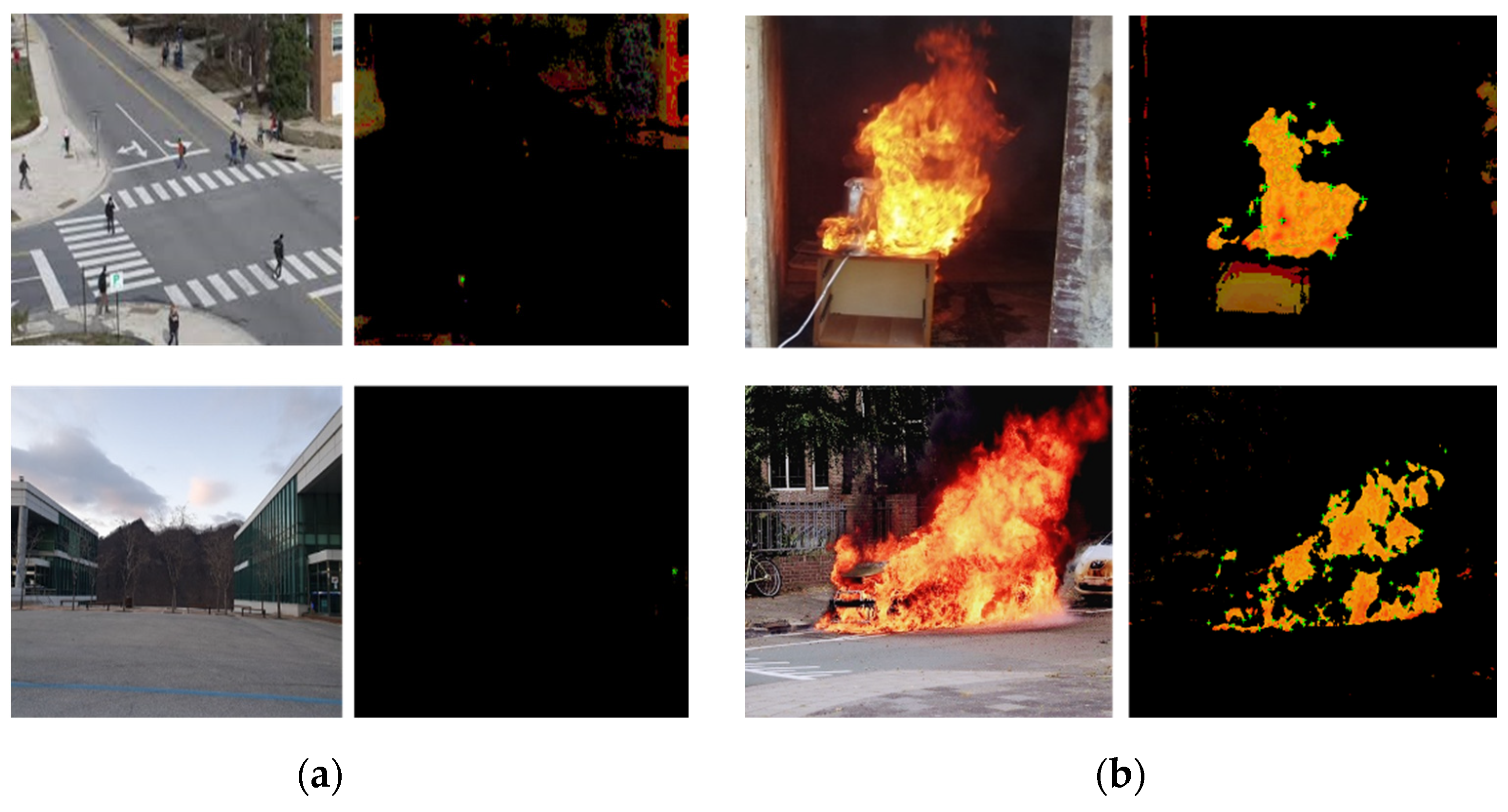

2.1. Flame Detection

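- When |R| is small, which happens when the eigenvalues $\lambda_1$ and $\lambda_2$ are both small, the points belong to a flat region;

- When R < 0, which happens when one of $\lambda_1$ and $\lambda_2$ is much larger than the other, the region belongs to an edge;

- When R is large, which happens when $\lambda_1$ and $\lambda_2$ are both large and of similar magnitude, the region is a corner.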
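The three cases above follow from the Harris response R = det(M) − k·trace(M)², where M is the image-gradient structure tensor summed over a small window, so that det(M) = λ1·λ2 and trace(M) = λ1 + λ2. The following is a minimal NumPy sketch, not the authors' implementation. It assumes a 3×3 box window in place of the usual Gaussian weighting, k = 0.04, and a hypothetical absolute tolerance `tol` that decides when |R| counts as "small". The helper names `box_sum`, `harris_response`, and `classify` are illustrative.

```python
import numpy as np


def box_sum(img: np.ndarray, radius: int) -> np.ndarray:
    """Sum of each (2r+1) x (2r+1) neighbourhood, with edge padding.

    Assumption: a box window stands in for the Gaussian window of the classic detector.
    """
    h, w = img.shape
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros((h, w), dtype=float)
    size = 2 * radius + 1
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + h, dx:dx + w]
    return out


def harris_response(gray: np.ndarray, k: float = 0.04, radius: int = 1) -> np.ndarray:
    """Per-pixel Harris response R = det(M) - k * trace(M)**2."""
    gray = gray.astype(float)
    iy, ix = np.gradient(gray)          # derivatives along rows (y) and columns (x)
    sxx = box_sum(ix * ix, radius)      # entries of the structure tensor M
    syy = box_sum(iy * iy, radius)
    sxy = box_sum(ix * iy, radius)
    det = sxx * syy - sxy * sxy         # equals lambda1 * lambda2
    trace = sxx + syy                   # equals lambda1 + lambda2
    return det - k * trace ** 2


def classify(r: np.ndarray, tol: float) -> np.ndarray:
    """Label each pixel using the three cases: flat, edge, corner."""
    labels = np.full(r.shape, "flat", dtype=object)  # |R| <= tol: flat region
    labels[r < -tol] = "edge"                         # R clearly negative: edge
    labels[r > tol] = "corner"                        # R clearly positive: corner
    return labels


# Usage: a bright 10x10 square on a dark 20x20 background.
img = np.zeros((20, 20))
img[5:15, 5:15] = 1.0
R = harris_response(img)
labels = classify(R, tol=0.01)
print(labels[5, 5], labels[5, 10], labels[10, 10])  # corner edge flat
```

On this synthetic square, the square's corner pixel gets a positive R. A pixel in the middle of its top edge gets a negative R because only the vertical gradient is non-zero there. The interior has no gradient at all and so is flat.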

2.2. Smoke Detection

2.3. Inference Using Inception-V3

3. Experimental Results

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Layer | Kernel Size | Input Size |
|---|---|---|
| Convolution | | |
| Convolution | | |
| Convolution | | |
| Max pooling | | |
| Convolution | | |
| Convolution | | |
| Max pooling | | |
| Inception module | - | |
| Reduction | - | |
| Inception module | - | |
| Reduction | - | |
| Inception module | - | |
| Average pooling | - | |
| Fully connected | - | |
| Softmax | - | 3 |
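The last three rows of the table (average pooling, fully connected, and a softmax with 3 outputs) form the classification head. This head maps the final Inception features to the three dataset classes: flame, smoke, and non-fire. The following is a minimal NumPy sketch of that head only. It assumes an 8×8×2048 final feature map, which is the standard Inception-V3 size for a 299×299 input but is not stated in the table above. It also uses random, untrained weights. `classification_head` is a hypothetical name, not the authors' code.

```python
import numpy as np

CLASSES = ("flame", "smoke", "non-fire")  # the three classes of the image dataset


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max()          # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def classification_head(feature_map: np.ndarray, weights: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """feature_map: (H, W, C) output of the last Inception module (assumed shape)."""
    pooled = feature_map.mean(axis=(0, 1))   # average pooling -> (C,)
    logits = pooled @ weights + bias         # fully connected layer -> (3,)
    return softmax(logits)                   # class probabilities summing to 1


rng = np.random.default_rng(0)
fmap = rng.standard_normal((8, 8, 2048))     # assumed final feature-map shape
w = rng.standard_normal((2048, 3)) * 0.01    # hypothetical untrained weights
b = np.zeros(3)
probs = classification_head(fmap, w, b)
print(dict(zip(CLASSES, probs.round(3))), "->", CLASSES[int(np.argmax(probs))])
```

In a trained model, the weights come from fine-tuning on the training set. The predicted class is the one with the largest softmax probability.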

| Classes of Image Datasets | Flame | Smoke | Non-Fire |
|---|---|---|---|
| Train set | 6200 | 6200 | 6200 |
| Test set | 1800 | 1800 | 1800 |

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1 Score (%) |
|---|---|---|---|---|
| Our proposed model | 96.0 | 94.2 | 98.0 | 96.1 |
| SSD | 89.0 | 86.8 | 92.0 | 89.3 |
| Faster R-CNN | 92.0 | 88.9 | 96.0 | 92.3 |

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1 Score (%) |
|---|---|---|---|---|
| Our proposed model | 93.0 | 93.9 | 92.0 | 92.9 |
| SSD | 85.0 | 84.3 | 86.0 | 85.1 |
| Faster R-CNN | 89.0 | 89.8 | 88.0 | 88.9 |
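The F1 score in both results tables is the harmonic mean of precision and recall, F1 = 2·P·R / (P + R). The short check below recomputes it from the reported precision and recall. It shows that every reported F1 value agrees to one decimal place. Accuracy cannot be recovered this way, because it also depends on the true negatives. The variable names are illustrative only.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both in percent; result in percent)."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the two results tables above
first_table = {
    "Our proposed model": (94.2, 98.0, 96.1),
    "SSD": (86.8, 92.0, 89.3),
    "Faster R-CNN": (88.9, 96.0, 92.3),
}
second_table = {
    "Our proposed model": (93.9, 92.0, 92.9),
    "SSD": (84.3, 86.0, 85.1),
    "Faster R-CNN": (89.8, 88.0, 88.9),
}

for name, table in (("first table", first_table), ("second table", second_table)):
    for model, (p, r, f1_reported) in table.items():
        print(f"{name:12s} {model:20s} computed F1 = {f1_score(p, r):.1f}, reported = {f1_reported}")
```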


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Ryu, J.; Kwak, D. A Study on a Complex Flame and Smoke Detection Method Using Computer Vision Detection and Convolutional Neural Network. Fire 2022, 5, 108. https://doi.org/10.3390/fire5040108
