6.1. Language Similarity and Cross-Lingual Transfer

In this study, we investigated the correlation between language similarity metrics and the performance of multilingual language models trained for cross-lingual transfer learning.

The results of fine-tuning the multilingual transformer models mBERT and XLM-R on abusive language detection, sentiment analysis (SA), named entity recognition (NER), and dependency parsing (DEP) show how their performance varies across languages and tasks. Across most tasks, XLM-R consistently outperformed mBERT, indicating a stronger capacity for cross-lingual transfer. Sentiment analysis was a notable exception: there, mBERT slightly surpassed XLM-R. This suggests that some tasks, particularly those that hinge on sentiment-related nuances, may benefit from characteristics specific to mBERT (e.g., its pretraining data or tokenization).

In abusive language detection and dependency parsing, XLM-R’s advantage over mBERT matched expectations, as these tasks often require deeper syntactic and semantic representations. XLM-R’s performance in NER was particularly noteworthy. Its high zero-shot transfer scores suggest that its robust multilingual pretraining may make it better at cross-lingual named entity recognition.

The results also show that cross-lingual transfer tends to be more effective between languages of the same family. For instance, Germanic and Slavic languages achieved higher transfer scores when both the source and the target belonged to the same family, which highlights the impact of linguistic similarity on model performance. This pattern was less clear in sentiment analysis, where transfer between languages from different families was also strong. One possible explanation is that sentiment analysis relies more on shared semantic or contextual features than on structural linguistic similarity.

Japanese and Korean were the only non-Indo-European languages included in the study. They performed comparably to the Indo-European languages on most tasks. The exception was dependency parsing, where their performance was significantly lower. This finding highlights the particular challenges posed by languages with different typological structures, such as Japanese (Japonic) and Korean (Koreanic). These challenges are greatest in tasks like dependency parsing that rely strongly on syntactic alignment.

Additionally, German, Croatian, and Russian performed strongly as source languages, particularly for mBERT. This suggests that some languages may serve as better general-purpose sources for cross-lingual transfer. It aligns with previous studies, such as that of Turc et al. [116], which observed similar trends for these languages. These findings imply that choosing specific languages as source data could improve model transferability in multilingual contexts.

In summary, the results demonstrate that while XLM-R generally offers stronger performance in cross-lingual tasks, there are exceptions, such as sentiment analysis, where mBERT holds a slight advantage. The impact of linguistic similarity is also evident, with better transfer between languages in the same family, although this effect is task-dependent. These insights contribute to our understanding of how multilingual models perform in diverse linguistic landscapes and can guide future improvements in cross-lingual NLP applications.

The optimized WALS feature subsets (Appendix A) offer additional interpretability beyond aggregate correlation scores. Because qWALS is computed directly from typological features, optimizing the feature set per task provides a data-driven indication of which linguistic properties are most predictive of successful transfer. For example, the dependency-parsing subset emphasizes features related to syntactic configuration (e.g., word order and clause structure), consistent with DEP being strongly syntax-driven. The sentiment-analysis subset, by contrast, is smaller and contains fewer clearly syntax-defining features, consistent with sentiment transfer being dominated by lexical and discourse cues. We therefore discuss these feature subsets as linguistic hypotheses about what matters most for transfer in each task.

The analysis of the impact of linguistic similarity on zero-shot cross-lingual transfer revealed several key patterns across the tasks and models. For both Pearson’s and Spearman’s correlation coefficients, a strong relationship was generally observed between linguistic similarity, as measured by WALS and eLinguistics, and transfer performance in abusive language identification, NER, and DEP tasks. On the other hand, the correlation with the EzGlot metric was somewhat weaker, except in sentiment analysis, where it showed slightly higher correlation values than WALS and eLinguistics, particularly for mBERT.

The strongest correlation was observed in the DEP task for XLM-R, with a Spearman’s correlation coefficient of 0.897 for the eLinguistics metric, followed by abusive language identification and NER. Sentiment analysis showed the weakest correlations overall, particularly for XLM-R, where the coefficients were only moderate across all metrics. mBERT showed slightly stronger correlations in abusive language identification and sentiment analysis. XLM-R showed stronger correlations in NER and DEP.

We then removed the anchor points formed by monolingual source-target pairs (i.e., pairs in which the source and target are the same language). After this step, the correlation results changed substantially for all tasks except DEP. The adjustment aimed to eliminate a bias: monolingual pairs have both the highest transfer scores and the highest linguistic similarity, which automatically inflates the correlation. In abusive language identification and NER, the correlations for both WALS (ordinal) and eLinguistics dropped from strong to moderate. EzGlot correlations in these tasks fell close to zero and lost statistical significance. Sentiment analysis showed the opposite pattern. There, WALS (ordinal) and eLinguistics correlations degraded, whereas EzGlot dropped only slightly and remained statistically significant.
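The effect of the monolingual anchor points can be illustrated with a minimal sketch. For illustration only, it assumes that linguistic distances and transfer scores are stored as square source × target matrices. The matrices below are invented toy values, not results from this study. Spearman’s ρ is computed as Pearson’s r on average ranks, so tied values are handled.

```python
from typing import List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho computed as Pearson's r on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def flatten_pairs(distance, transfer, drop_monolingual: bool) -> Tuple[List[float], List[float]]:
    """Turn source x target matrices into paired (distance, transfer score) lists."""
    xs, ys = [], []
    for s, row in enumerate(distance):
        for t, d in enumerate(row):
            if drop_monolingual and s == t:
                continue  # skip the same-language anchor on the diagonal
            xs.append(d)
            ys.append(transfer[s][t])
    return xs, ys


# Invented toy matrices for three languages (rows = source, columns = target).
distance = [[0.0, 0.2, 0.6],
            [0.2, 0.0, 0.5],
            [0.6, 0.5, 0.0]]
transfer = [[0.90, 0.70, 0.55],
            [0.72, 0.88, 0.60],
            [0.50, 0.62, 0.85]]

for drop in (False, True):
    xs, ys = flatten_pairs(distance, transfer, drop_monolingual=drop)
    print(f"drop_monolingual={drop}: n={len(xs)} "
          f"pearson={pearson(xs, ys):.3f} spearman={spearman(xs, ys):.3f}")
```

The diagonal pairs combine zero distance with the highest transfer scores. Including them therefore tends to push the correlation toward −1, and excluding them isolates the cross-lingual signal.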

Overall, the strongest correlations remained in DEP, followed by abusive language identification and NER, while sentiment analysis consistently showed the weakest correlations after removing monolingual anchor points. These findings suggest that linguistic similarity plays a more significant role in tasks like dependency parsing and abusive language identification, but its influence is less prominent in sentiment analysis, where other factors may have a greater impact on cross-lingual transfer performance.

We compared two commonly used representations of features from the World Atlas of Language Structures (WALS): ordinal and one-hot encoding. The ordinal representation retains the numeric order of feature values, reflecting underlying gradience where it exists. In contrast, one-hot encoding transforms categorical values into binary vectors, assuming no inherent structure among them.

Our evaluation revealed that ordinal encoding generally yields stronger correlations with transfer performance. Dependency parsing, which is particularly sensitive to grammatical structure, is a clear example. In this task, the ordinal WALS metric exhibited very strong negative correlations with model performance and outperformed the one-hot variant in all comparisons. One-hot features, on the other hand, performed better in simpler tasks such as sentiment analysis.

By treating all feature values as mutually unrelated, one-hot representations ignore the internal structure present in many WALS features. Consider, for example, the feature describing gender distinctions in pronouns. Languages range from having no gender system, to distinguishing masculine and feminine, to making further distinctions such as animate/inanimate or additional noun classes. Similarly, the number of grammatical cases has a clear ordinal structure, ranging from zero to more than ten. One-hot encoding collapses these gradations into discrete, unconnected dimensions and obscures meaningful typological similarities, whereas ordinal encoding preserves them. Splitting features with an inherent ordinal structure into binary variables is therefore conceptually inappropriate. It can also lose information, which reduces the metric’s capacity to capture meaningful similarities between languages.
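A small sketch makes the difference between the two encodings concrete. The five-level coding of a case-count feature used here is hypothetical and does not reproduce actual WALS value codes. The normalized absolute difference is an assumed ordinal distance, not necessarily the exact formula behind qWALS.

```python
from typing import List


def ordinal_distance(a: int, b: int, num_values: int) -> float:
    """Distance in [0, 1] that respects the order of feature values 1..num_values."""
    return abs(a - b) / (num_values - 1)


def one_hot(value: int, num_values: int) -> List[int]:
    """Binary vector with a single 1 at position value - 1."""
    vec = [0] * num_values
    vec[value - 1] = 1
    return vec


def one_hot_distance(a: int, b: int, num_values: int) -> float:
    """Normalized Hamming distance between one-hot vectors: 0 if equal, else 1."""
    va, vb = one_hot(a, num_values), one_hot(b, num_values)
    mismatches = sum(x != y for x, y in zip(va, vb))
    return mismatches / 2  # exactly two positions differ whenever a != b


# Hypothetical 5-level case feature: 1 = no case marking ... 5 = ten or more cases.
for other in (3, 5):
    print(f"2 vs {other}: ordinal={ordinal_distance(2, other, 5):.2f}, "
          f"one-hot={one_hot_distance(2, other, 5):.2f}")
```

Under one-hot encoding, value 2 is exactly as far from value 3 as from value 5. The ordinal distance keeps the first pair closer. For purely categorical features without a natural order, one-hot encoding remains the appropriate choice.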

We also introduced a composite metric that combines WALS-based similarity scores with a reliability factor derived from feature coverage. This score adjusts raw similarity by the amount of shared, non-missing data for each language pair, which down-weights comparisons based on sparse or incomplete information. The intuition is simple. Even if two languages appear similar, a similarity computed from only a handful of overlapping features is less reliable. By multiplying the WALS similarity by the corresponding coverage value, we give more weight to comparisons grounded in richer typological evidence. Despite this theoretical appeal, however, the combined similarity × reliability score did not improve correlations with zero-shot transfer performance. In several settings it even produced negative correlations.

We argue that this outcome is plausible for at least three reasons.

First, the reliability term is itself strongly tied to WALS coverage. When two languages share only a small fraction of features (around 30% overlap in the most extreme cases), the reliability becomes small and the product acts as a broad penalty. This can disproportionately down-weight precisely the typologically distant or under-described language pairs whose transfer performance is both informative and noisy. The resulting change in ranking can hurt correlation.

Second, a multiplicative adjustment can distort the scale of similarities by compressing mid-range values and amplifying small differences at the extremes. If the similarity–transfer relationship is non-linear and task-dependent, such rescaling can weaken both linear (Pearson) and rank (Spearman) associations.

Third, the reliability estimate is computed from the same sparse feature matrix, so it can be unstable when overlap is low. Multiplying by it may then inject additional noise rather than mitigate it.

These negative findings suggest that reliability should be incorporated more carefully than through a single global multiplicative weight.
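The sketch below shows how the composite score is formed and how it can change the ranking of language pairs. Several definitions are assumptions for illustration, not the study’s exact formulas or data:

- similarity is one minus the mean normalized ordinal distance over shared features;
- coverage is the share of all features observed for both languages;
- the toy profiles are invented.

```python
from typing import Dict, List, Tuple

# Hypothetical feature inventory: feature id -> number of possible (ordinal) values.
NUM_VALUES: Dict[str, int] = {"f1": 5, "f2": 3, "f3": 4, "f4": 2, "f5": 5}
# A language profile maps feature id -> value; missing features are simply absent.
Profile = Dict[str, int]


def shared_features(a: Profile, b: Profile) -> List[str]:
    """Features that are non-missing for both languages."""
    return sorted(set(a) & set(b))


def similarity(a: Profile, b: Profile) -> float:
    """1 - mean normalized ordinal distance over the shared features (assumed form)."""
    shared = shared_features(a, b)
    if not shared:
        return 0.0
    dists = [abs(a[f] - b[f]) / (NUM_VALUES[f] - 1) for f in shared]
    return 1.0 - sum(dists) / len(dists)


def coverage(a: Profile, b: Profile) -> float:
    """Fraction of all features observed for both languages (reliability proxy)."""
    return len(shared_features(a, b)) / len(NUM_VALUES)


def composite(a: Profile, b: Profile) -> Tuple[float, float, float]:
    """Return (raw similarity, coverage, similarity x coverage)."""
    sim, cov = similarity(a, b), coverage(a, b)
    return sim, cov, sim * cov


reference: Profile = {"f1": 2, "f2": 1, "f3": 3, "f4": 1, "f5": 4}
full_partner: Profile = {"f1": 3, "f2": 1, "f3": 3, "f4": 2, "f5": 4}
sparse_partner: Profile = {"f1": 2, "f3": 3}  # only 2 of 5 features documented

for name, partner in (("full", full_partner), ("sparse", sparse_partner)):
    sim, cov, score = composite(reference, partner)
    print(f"{name}: similarity={sim:.2f} coverage={cov:.2f} composite={score:.2f}")
```

Here the sparsely documented partner matches the reference language on both of its shared features. Its raw similarity therefore exceeds that of the fully documented partner, yet the coverage factor reverses the ranking. This is a toy instance of the broad-penalty effect described in the first reason above.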

Additionally, the leave-one-feature-out optimization method led to substantial improvements, as demonstrated by the refined Quantified WALS metric. The refined metric showed notable gains in Pearson correlation across all tasks. For abusive language identification, the Pearson correlation strengthened from −0.646 with 169 features to −0.8221 with just 53 features. This considerable increase highlights the refined metric’s effectiveness in capturing the linguistic similarities most relevant to this task.

In dependency parsing, the Pearson correlation surged from −0.771 (169 features) to a nearly ideal −0.9903 (75 features). This indicates that the refined metric more accurately reflects the linguistic similarities pertinent to DEP. Named entity recognition also improved substantially: the correlation strengthened from −0.561 to −0.808, while the feature count fell from 169 to 63. Lastly, for sentiment analysis, the correlation improved from −0.3609 (169 features) to −0.8027 (21 features). This suggests that the refined metric better accounts for the linguistic properties that affect sentiment transfer.

Overall, these improvements indicate that the leave-one-feature-out optimization did more than streamline the feature set: across tasks, it also made the metric a stronger predictor of cross-lingual transfer performance. The refined metric eliminated less informative and redundant features. As a result, it became more sensitive to the linguistic characteristics that appear to matter for transfer success, and it tracked observed transfer between language pairs more closely. The reduced computational cost, together with the stronger correlations, supports the effectiveness of the refinement process.
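One plausible reading of the leave-one-feature-out optimization is greedy backward elimination:

1. Start from all features.
2. Repeatedly drop the feature whose removal makes the correlation between distance and transfer score most negative.
3. Stop when no single removal helps.

The sketch below assumes this reading. It also assumes a fully observed per-feature distance table and uses a mean-of-features distance as a stand-in for qWALS. The exact procedure used in the study may differ, and the data are invented.

```python
from typing import Dict, List, Sequence, Set, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's r; returns 0.0 when either input has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5


def subset_distance(pair: Dict[str, float], features: Set[str]) -> float:
    """Mean per-feature distance over the selected features (hypothetical qWALS stand-in)."""
    return sum(pair[f] for f in features) / len(features)


def score(pairs: List[Dict[str, float]], transfer: Sequence[float], features: Set[str]) -> float:
    """Correlation between subset distance and transfer score (more negative = better)."""
    return pearson([subset_distance(p, features) for p in pairs], transfer)


def leave_one_feature_out(pairs, transfer, features: Sequence[str]) -> Tuple[Set[str], float]:
    """Greedy backward elimination: drop features while r keeps getting more negative."""
    selected = set(features)
    best = score(pairs, transfer, selected)
    improved = True
    while improved and len(selected) > 1:
        improved = False
        candidate, candidate_r = None, best
        for f in sorted(selected):  # try leaving out each remaining feature once
            r = score(pairs, transfer, selected - {f})
            if r < candidate_r:
                candidate, candidate_r = f, r
        if candidate is not None:
            selected.remove(candidate)
            best = candidate_r
            improved = True
    return selected, best


# Invented per-feature distances for four language pairs and their transfer scores.
pairs = [
    {"word_order": 0.0, "case": 0.1, "noise": 0.9},
    {"word_order": 0.3, "case": 0.2, "noise": 0.1},
    {"word_order": 0.6, "case": 0.7, "noise": 0.8},
    {"word_order": 1.0, "case": 0.9, "noise": 0.2},
]
transfer = [0.90, 0.75, 0.50, 0.30]

all_features = ["word_order", "case", "noise"]
selected, best = leave_one_feature_out(pairs, transfer, all_features)
print("start r =", round(score(pairs, transfer, set(all_features)), 3))
print("kept:", sorted(selected), "final r =", round(best, 3))
```

The features are selected to maximize correlation on the same language pairs that are then used to report it, so the resulting coefficients are optimistic. Evaluating the selected subset on held-out language pairs would give a less biased estimate.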

However, promising as these results are, correlation does not imply causation. The feature subsets were selected to maximize correlation on the same language pairs used to evaluate them. Part of the observed improvement may therefore reflect overfitting or chance rather than a genuinely better metric, and further validation is needed. Future work will involve additional tests and cross-validation to ensure that the enhanced correlations reflect improved metric performance rather than statistical artifacts. This will help establish the refined metric’s utility in both linguistic research and practical language technology applications. We also plan to test the influence of language similarity under other forms of model adaptation, such as continued pretraining or linear probing (updating only the last linear layer). It has been pointed out that fine-tuning can distort pretrained features and, as a consequence, underperform in out-of-distribution settings [122].

The comparison between the optimized qWALS features and the various Lang2vec feature groups revealed both complementary aspects and distinct strengths of each approach. It also clarified their respective roles in capturing linguistic similarity.

Both the optimized qWALS features and Lang2vec’s grammatical feature group cover a broad spectrum of syntactic, morphological, and phonological characteristics. They underscore the importance of grammatical structures in understanding linguistic nuances across languages. For instance, features such as word order, negation patterns, and syntactic constructions were central to both qWALS and Lang2vec, highlighting their shared emphasis on grammatical elements.

In terms of semantic and pragmatic features, both approaches aim to capture contextual meaning and language use. Lang2vec provides a broader overview of semantic and pragmatic categories, encompassing a wider range of generalizable features. qWALS, in contrast, focuses on specific semantic distinctions, modalities, and pragmatic markers that are particularly relevant to tasks like sentiment analysis and named entity recognition. This task-specific focus makes qWALS more directly informative about the properties that affect performance on a given task.

Alongside its typological feature groups, Lang2vec also includes geographical and genealogical features, such as geographical location and language-family membership, that account for regional variation and historical relatedness. qWALS features, on the other hand, concentrate on structural typological properties and their direct impact on specific tasks, with little emphasis on geographical or genealogical aspects. This focus makes qWALS particularly effective for analyzing linguistic traits and their relevance to targeted NLP tasks.

The comparison highlights the advantages of integrating both approaches for a more comprehensive understanding of linguistic similarity. Lang2vec provides a broad overview that incorporates geographical and genealogical variation alongside typology. qWALS offers detailed insights into specific linguistic traits and their task-specific relevance. Combining these perspectives can lead to more robust and interpretable linguistic similarity metrics.

An integrated approach that leverages the strengths of both qWALS and Lang2vec could significantly enhance the accuracy and adaptability of linguistic similarity metrics. Researchers could incorporate geographical and genealogical information from Lang2vec alongside the detailed linguistic features identified by qWALS. Models built on this combination could be both more accurate and more generalizable across languages and tasks. Such an integrated perspective could lead to improved natural language processing models and more effective cross-lingual applications, capturing both linguistic universals and language-specific variation.

Overall, the findings demonstrate strong correlations between language similarity and model performance across different tasks, supporting the proposed hypothesis that models benefit more from cross-lingual transfer when the source and target languages are similar. However, the variability in correlation across tasks indicates that certain tasks might depend more heavily on language similarity than others, which opens up an important avenue for further research.

6.2. Expert Survey

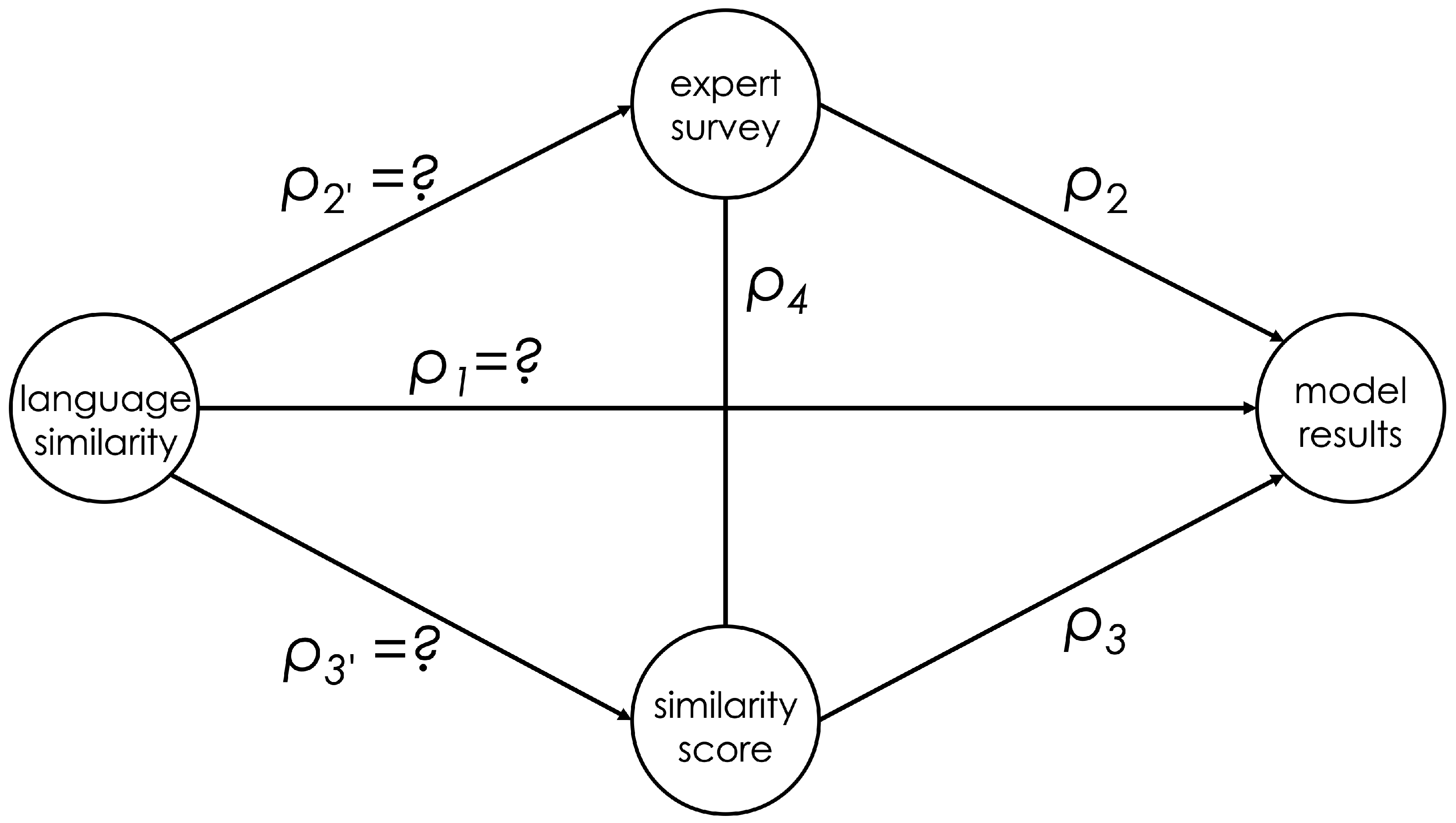

One of the key contributions of this work was the optimization of the qWALS language similarity score, which yielded correlations as strong as −0.99 in some cases. This suggests that language similarity can be a highly reliable predictor of model performance in cross-lingual tasks. The caveat remains, however, that correlation does not imply causation. The ideal language similarity is unknown and cannot be measured directly, so we must rely on proxy measurements such as qWALS and cross-lingual transfer results. Thus, although we presented evidence of strong correlations, establishing a direct causal link between language similarity and model performance requires additional verification.

To address this challenge, we designed an expert-based survey as a third, independent variable. Using methods from causal inference theory, the survey helped infer causal relations between the measured variables. This triangulation of evidence (qWALS scores, cross-lingual transfer performance, and expert survey results) provided a compelling case for the causal role of language similarity in cross-lingual transfer learning. Expert judgments aligned with both the computational similarity scores and model performance, particularly for high-agreement languages such as Serbian-Croatian and Russian. This bolstered our confidence in the hypothesis. It also revealed that some languages (e.g., Indonesian and Arabic) remain difficult to categorize consistently, reflecting the complexity of linguistic diversity.

The expert survey also highlighted the inherent difficulties in estimating language similarity, as evidenced by the variation in agreement rates among experts. This variation points to the subjectivity in human judgment, especially for languages with more complex historical and cultural relationships. Nevertheless, the moderate-to-high agreement rates between expert evaluations and computational methods reinforce the validity of using both human expertise and algorithmic measures to assess language similarity.

The survey results revealed informative correlations between linguistic similarity metrics, transfer performance, and expert evaluations. Agreement between the linguists and qWALS was high, indicating that qWALS’s assessments of linguistic similarity align closely with expert judgments. The linguists generally concurred with qWALS’s similarity scores for languages such as Serbian-Croatian, Russian, and French, where agreement rates were particularly high. This suggests that qWALS provides a robust representation of linguistic similarity as perceived by experts. In contrast, lang2vec showed lower agreement with the linguists’ choices. lang2vec therefore remains a useful similarity metric but may not align as closely with expert evaluations, particularly for languages such as Indonesian and Arabic, where agreement was weaker.
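Agreement rates of this kind can be computed as in the following sketch. It makes three hypothetical assumptions:

- each survey item asks which of two candidate languages is closer to a target language;
- a metric "answers" an item by picking the candidate at the smaller distance;
- the item format, language labels, and distances are placeholders, not the actual survey data.

```python
from typing import Dict, List, Tuple

# Hypothetical survey item: (target language, candidate A, candidate B).
Item = Tuple[str, str, str]


def metric_choice(item: Item, distance: Dict[Tuple[str, str], float]) -> str:
    """Candidate the metric places closer to the target; ties go to candidate A."""
    target, cand_a, cand_b = item
    return cand_a if distance[(target, cand_a)] <= distance[(target, cand_b)] else cand_b


def agreement_rate(choices_1: List[str], choices_2: List[str]) -> float:
    """Share of items on which two raters (experts or metrics) pick the same candidate."""
    if len(choices_1) != len(choices_2) or not choices_1:
        raise ValueError("need two non-empty choice lists of equal length")
    same = sum(c1 == c2 for c1, c2 in zip(choices_1, choices_2))
    return same / len(choices_1)


# Placeholder languages and invented distances, for illustration only.
items: List[Item] = [("T1", "A", "B"), ("T2", "C", "D"), ("T3", "E", "F")]
distance = {("T1", "A"): 0.2, ("T1", "B"): 0.6,
            ("T2", "C"): 0.5, ("T2", "D"): 0.4,
            ("T3", "E"): 0.3, ("T3", "F"): 0.35}
expert_choices = ["A", "C", "E"]

metric_choices = [metric_choice(item, distance) for item in items]
print(metric_choices, agreement_rate(metric_choices, expert_choices))
```

The same `agreement_rate` function applies to pairs of linguists. With two candidates per item, random guessing already agrees about half the time. A chance-corrected statistic such as Cohen’s kappa may therefore be more informative when comparing raters.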

Regarding transfer performance, agreement with expert judgments varied across models and tasks. The best-performing models, as measured by cross-lingual transfer scores, showed substantial agreement with Linguist 1 and Linguist 2. This suggests that models performing well in cross-lingual tasks often align with expert evaluations. Linguist 3, however, showed lower agreement with these models, indicating that transfer performance does not align equally well with every linguist’s judgments. Most disagreements arose in ambiguous cases, such as whether Japanese is closer to Hindi or to Portuguese. The survey also indicated that dependency parsing and abusive language detection showed the highest levels of agreement with the experts. Models excelling in these tasks may therefore better match expert evaluations. Conversely, sentiment analysis showed only moderate alignment with expert judgments. It is a relatively simpler task that often relies on specific keywords, even in transformer language models.

Overall, the survey shows that qWALS aligns strongly with expert evaluations of linguistic similarity. Moreover, language similarity as judged by the experts also predicts transfer performance. High-performing models in specific language tasks tend to align better with the experts, although this varies across linguists and tasks. This underscores the complexity of aligning computational metrics with human judgments. It also suggests that linguistic similarity metrics and transfer performance need to be considered together for a comprehensive understanding of language model effectiveness.

Including the survey as a third independent variable offers a clearer view of the causal relationship between language similarity and transfer performance. By comparing the correlations between expert evaluations, linguistic similarity metrics, and transfer performance, we can argue with greater confidence that language similarity causally influences transfer learning performance. Specifically, the survey results provide additional support for the proposed hypothesis that language similarity influences transfer performance. The strong alignment between expert evaluations and qWALS, combined with the observed correlation between qWALS scores and model performance, suggests that language similarity likely plays a causal role in transfer performance. This holds both for language similarity in general and as measured by qWALS. It is further supported by the observation that languages with high expert agreement also tend to show better transfer performance.

The consistency of the correlations between qWALS and expert evaluations strengthens the argument for causation. It shows that a similarity measure consistent with expert judgment also correlates strongly with model performance. The survey data help confirm that when the similarity between languages is accurately represented (as indicated by qWALS), it correlates with better performance in cross-lingual transfer learning tasks. However, agreement with lang2vec varied, and the linguists’ evaluations differed from one another. Together, these show that the influence may not be uniform across metrics, tasks, or contexts.

The survey data strengthen the evidence for a causal relationship between language similarity and transfer performance. At the same time, they highlight that this relationship is complex and influenced by various factors. The strong correlations and the consistency of qWALS with expert judgments support the causation hypothesis. However, the variability in other metrics and evaluations indicates that further investigation is necessary to fully understand the causal dynamics.

Thus, although qWALS can be considered an effective tool for predicting model performance in cross-lingual settings, there is still room for refinement, particularly for tasks in which language similarity plays a lesser role. Furthermore, the expert survey’s contribution to interpreting our findings opens up possibilities for hybrid approaches. Such approaches would combine computational and human insights to further optimize language models for cross-lingual transfer.

In short, our findings offer robust support for the influence of language similarity on cross-lingual transfer learning. However, the complexity of language relationships and task-specific dependencies highlights the need for further investigation. Future work should explore other factors, such as cultural factors or additional typological features, and their impact on transfer learning. It should also develop even more precise language similarity metrics. Additionally, the expert surveys could be expanded to cover more languages and their methodology refined to reduce subjectivity. This would further clarify the role language similarity plays in practical terms, for model performance, and more generally, for language itself as a tool for everyday communication.

6.4. Limitations and Ethical Considerations

This study has several limitations and ethical considerations that must be acknowledged. One significant limitation is incomplete WALS feature coverage, which affects low-resource languages most strongly. For some language pairs, only a small fraction of the available WALS features is shared (as little as roughly 30% overlap). In these cases, qWALS is computed from a limited and potentially unrepresentative subset of typological properties. Similarity estimates then become more sensitive to which features happen to be available (and to systematic missingness), which increases variance and reduces metric stability. Conclusions involving low-resource languages, and more generally any pairs with low WALS overlap, should therefore be interpreted cautiously. We expect stronger and more stable associations once typological coverage improves.

A related limitation is the absence of a dedicated Romance-language cluster in our experimental set. Without at least two Romance languages tested on the same task, we cannot directly verify that qWALS preserves fine-grained similarity within this well-studied family. This limits the strength of our universality claims. The current results demonstrate cross-lingual transfer trends across the included families. They do not yet establish that the metric is equally precise within Romance languages, or that similar within-family behavior would necessarily generalize.

Another inherent limitation is the reliance on existing linguistic similarity metrics, such as qWALS or lang2vec, which may not fully capture the complexity of language relationships. These metrics are based on predefined sets of linguistic features. Their ability to represent similarity accurately across diverse languages is therefore constrained by the choice of features and by the quality of the underlying data. To address these issues, future research should explore alternative resources such as the Grambank project (https://grambank.clld.org/, accessed on 4 March 2026). Grambank offers an updated, more comprehensive set of linguistic features and may provide a more accurate assessment of linguistic similarity, especially for low-resource languages.

Another limitation is the potential bias introduced by the choice of language models and tasks. The study focuses on specific models (mBERT and XLM-R) and specific tasks (such as abusive language detection and sentiment analysis). Its findings may therefore not generalize to other models or linguistic tasks. Different models may show different performance patterns, and the chosen tasks may not cover the full range of linguistic phenomena that could affect transfer performance.

Additionally, the study’s methodology involves a relatively small sample of languages, which may not be fully representative of the global linguistic landscape. This limited sample size can impact the generalizability of the findings and may overlook important nuances present in less-represented languages or language pairs.

More concretely, our main experiments cover only eight languages drawn from three language families. Therefore, the observed patterns may not transfer to typologically distant or underrepresented language families (e.g., Afro-Asiatic, Niger–Congo, Austronesian). Likewise, because we evaluate only two multilingual encoder models (mBERT and XLM-R), the results may not extend to newer model families and paradigms (e.g., large decoder-only LLMs such as GPT-4, LLaMA, or Claude), which differ substantially in pretraining data, objectives, and inference-time behavior.

The survey-based approach to evaluating linguistic similarity and transfer performance introduces another limitation. Human judgments, even those of experts, are inherently subjective, which can lead to variability and inconsistencies in assessments of language similarity. The survey provides valuable insights. However, the variability among experts highlights the difficulty of reaching consensus on linguistic relationships and may affect the reliability of conclusions drawn from these evaluations.

This research also raises ethical considerations. Ensuring the fair and equitable treatment of all languages, including those with fewer resources, is essential. Linguistic diversity extends well beyond the well-represented languages, and future research should make efforts to include and accurately represent currently under-represented languages. This includes being mindful of potential biases in language data and ensuring that models do not perpetuate or exacerbate linguistic inequalities. Researchers should also consider the broader implications of their work for the communities and cultures associated with the studied languages. The outcomes of their research should contribute positively to natural language processing and its applications.