Identification and Association of Multiple Visually Identical Targets for Air–Ground Cooperative Systems

Abstract

1. Introduction

- A markerless identification framework that correlates UGV sensor data with UAV visual detection results, enabling reliable distinction of visually identical UGVs without physical modifications.

- A decision-level fusion method that integrates the association results to improve accuracy and noise robustness, evaluated in comprehensive simulations covering diverse motion patterns and noise scenarios.

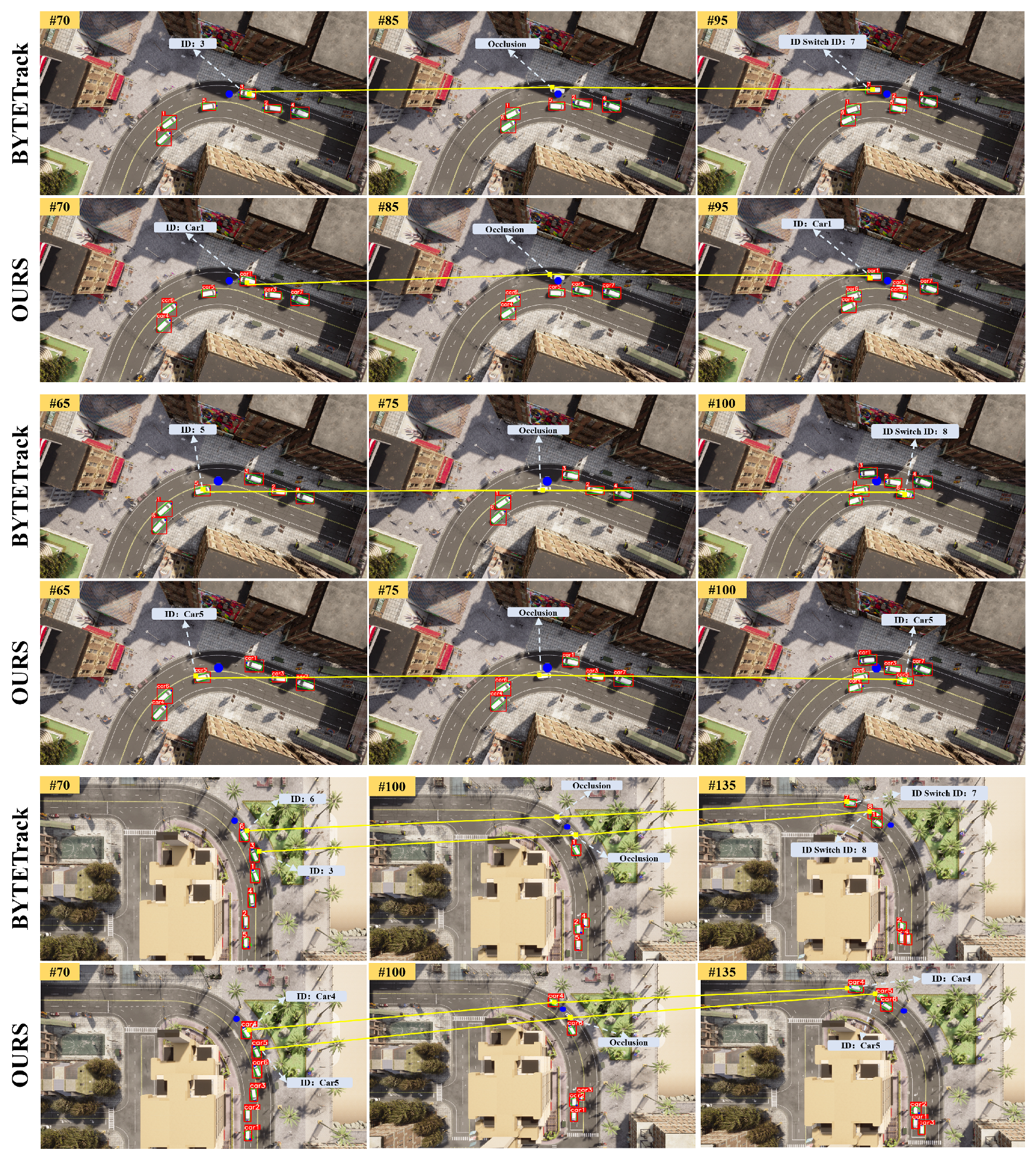

- An enhanced multi-object tracking architecture that uses the above sensor-visual association results to reduce ID-switch rates and remains effective in occlusion scenarios.
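
To make the idea of sensor-visual association concrete, the following is a minimal sketch and not the method developed in this paper. It assumes, purely for illustration, that each UGV reports a short sequence of odometry positions, that each UAV detection track has already been converted to ground-plane positions in the same units, and that matching per-step speed profiles is a sufficient cue. The names `speed_profile` and `associate` are hypothetical, and the exhaustive search over permutations stands in for a proper assignment solver such as the Hungarian method.

```python
from itertools import permutations
from math import hypot


def speed_profile(points):
    """Per-step displacement magnitudes from a list of (x, y) positions."""
    return [hypot(x1 - x0, y1 - y0)
            for (x0, y0), (x1, y1) in zip(points, points[1:])]


def associate(sensor_tracks, visual_tracks):
    """Return {ugv_id: detection_id} minimising the total speed-profile mismatch.

    Assumption: both inputs use the same units and sampling times, and there
    are at least as many visual tracks as UGVs (otherwise min() raises
    ValueError because no candidate assignment exists).
    """
    ugv_ids = list(sensor_tracks)
    det_ids = list(visual_tracks)
    s = {u: speed_profile(sensor_tracks[u]) for u in ugv_ids}
    v = {d: speed_profile(visual_tracks[d]) for d in det_ids}

    def cost(u, d):
        # Sum of absolute differences between the two speed profiles.
        return sum(abs(a - b) for a, b in zip(s[u], v[d]))

    # Brute force over all assignments; only practical for a handful of UGVs.
    best = min(
        permutations(det_ids, len(ugv_ids)),
        key=lambda perm: sum(cost(u, d) for u, d in zip(ugv_ids, perm)),
    )
    return dict(zip(ugv_ids, best))


sensor = {
    "ugv_a": [(0, 0), (1, 0), (3, 0), (6, 0)],   # accelerating
    "ugv_b": [(0, 0), (2, 0), (3, 0), (3, 0)],   # decelerating to a stop
}
visual = {
    0: [(5, 5), (5, 7), (5, 8), (5, 8)],
    1: [(1, 1), (1, 2), (1, 4), (1, 7)],
}
result = associate(sensor, visual)
```

Because the cue is motion rather than appearance, the two vehicles can look identical and still be told apart, provided their motion histories differ over the observation window.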

2. Related Work

2.1. Target Identification

2.2. Multi-Target Association

3. Methods

3.1. Overall Framework

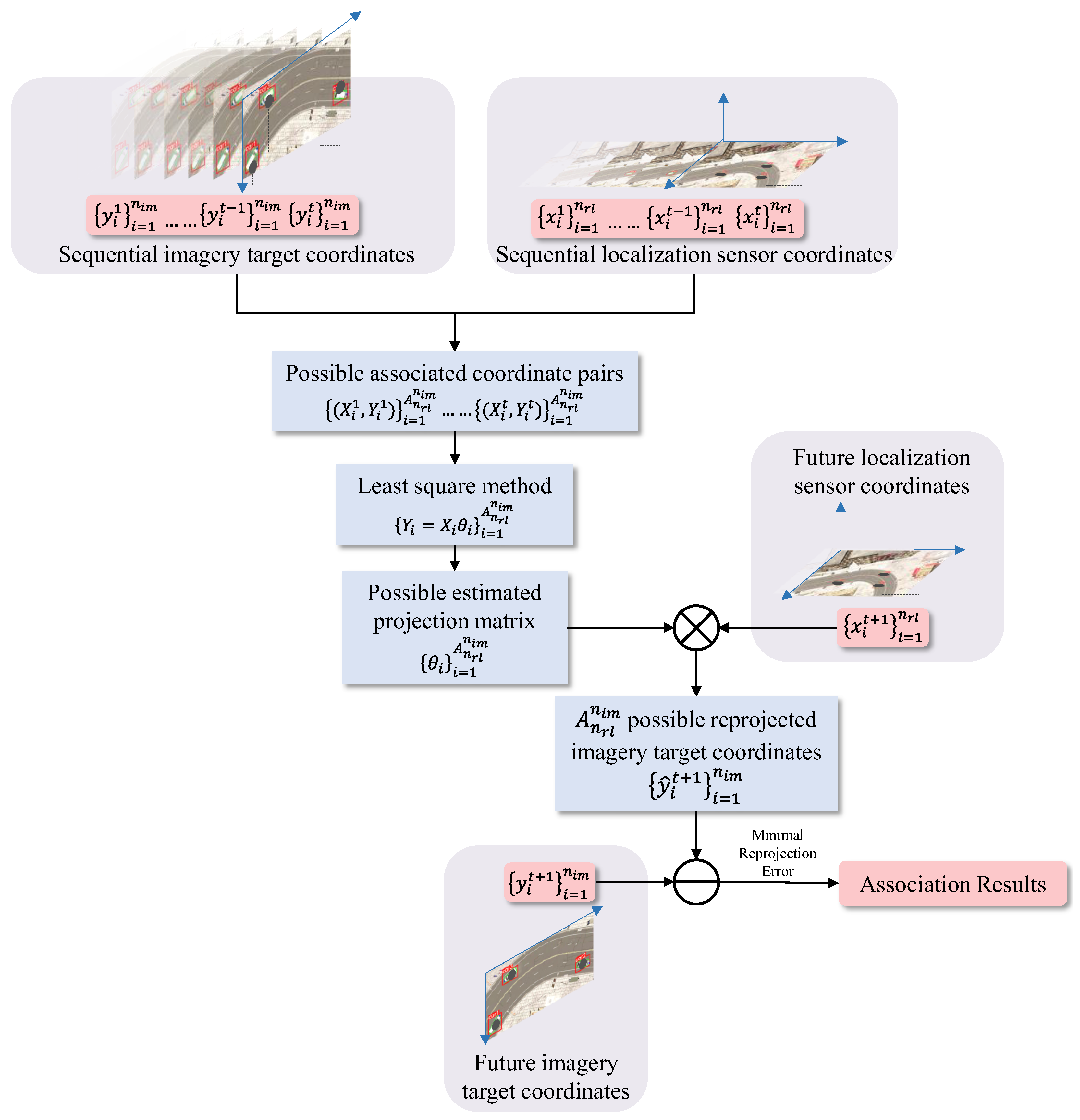

3.2. Projection-Based Association Method

3.3. Topology-Based Association Method

3.4. Decision-Level Fusion Based on Dempster–Shafer Method
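
As background for this section, here is a minimal sketch of the standard Dempster combination rule for two mass functions. It is not the paper's specific fusion design: the hypothesis labels `ugv1` and `ugv2`, the example masses, and the function name `dempster_combine` are all hypothetical, chosen only to show how two association sources could be merged at the decision level.

```python
from itertools import product


def dempster_combine(m1, m2):
    """Combine two mass functions with Dempster's rule.

    Each mass function maps a frozenset of hypotheses to a mass,
    and the masses of each function are assumed to sum to 1.
    """
    combined = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            # Agreeing evidence supports the intersection of the two sets.
            combined[inter] = combined.get(inter, 0.0) + wa * wb
        else:
            # Disjoint sets contribute to the conflict mass K.
            conflict += wa * wb
    if conflict >= 1.0:
        raise ValueError("total conflict: Dempster's rule is undefined")
    # Renormalise by 1 - K so the fused masses sum to 1 again.
    return {h: w / (1.0 - conflict) for h, w in combined.items()}


U1, U2 = frozenset({"ugv1"}), frozenset({"ugv2"})
THETA = U1 | U2  # full frame of discernment ("do not know")

# Hypothetical beliefs of two association methods about one detection.
m_projection = {U1: 0.7, U2: 0.1, THETA: 0.2}
m_topology = {U1: 0.6, U2: 0.2, THETA: 0.2}

fused = dempster_combine(m_projection, m_topology)
```

With these example masses the conflict is 0.20, and the fused mass on `ugv1` rises to about 0.85, higher than either source alone, which is the behaviour that makes the rule attractive when two partially reliable association results agree.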

3.5. Enhanced Multi-Object Tracking Process

| Algorithm 1: Enhanced multi-object tracking process based on ByteTrack. (Lines 7–12 and 15–22, shown in blue, are the newly proposed steps added to ByteTrack.) |

| Input: Video sequence V, oriented object detector, orientation classifier, sensor data |

| Output: Video tracks T with associated ID |

| 1. Initialize tracks and sensor buffer |

| 2. for each frame do |

| 3. Oriented object detection: |

| 4. Orientation classification: |

| 5. Store sequential sensor data: |

| 6. Split into by threshold |

| 7. |

| 8. |

| 9. |

| 10. for each track do |

| 11. |

| 12. end for |

| 13. |

| 14. Update matched tracks: |

| 15. for each do |

| 16. |

| 17. |

| 18. if with then |

| 19. Update unmatched track t with unmatched detection d |

| 20. , |

| 21. end if |

| 22. end for |

| 23. Prune lost tracks () |

| 24. Init new tracks for with |

| 25. end for |

| 26. return T with ID association results |
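
The ByteTrack baseline that Algorithm 1 builds on splits detections by confidence and matches them to tracks in two passes (steps 6 and 13–14). The sketch below illustrates only that baseline, under stated simplifications: boxes are axis-aligned rather than oriented, tracks are represented by their last box instead of a Kalman-filter prediction, and a greedy IoU matcher replaces the Hungarian assignment. The names `greedy_match` and `associate_frame` and the threshold values are hypothetical; the sensor-based steps proposed in this paper are not reproduced here.

```python
def iou(a, b):
    """Intersection over union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def greedy_match(tracks, dets, min_iou):
    """Pair track ids with detection indices in order of descending IoU."""
    pairs = sorted(
        ((iou(box, dets[j]["box"]), tid, j)
         for tid, box in tracks.items() for j in range(len(dets))),
        key=lambda p: p[0], reverse=True,
    )
    matches, used_tracks, used_dets = {}, set(), set()
    for score, tid, j in pairs:
        if score < min_iou:
            break  # remaining pairs overlap too little to be matched
        if tid in used_tracks or j in used_dets:
            continue
        matches[tid] = j
        used_tracks.add(tid)
        used_dets.add(j)
    return matches


def associate_frame(tracks, detections, score_thresh=0.5, min_iou=0.3):
    """Two-pass association: high-score detections first, then low-score ones."""
    high = [d for d in detections if d["score"] >= score_thresh]
    low = [d for d in detections if d["score"] < score_thresh]

    first = greedy_match(tracks, high, min_iou)
    remaining = {t: b for t, b in tracks.items() if t not in first}
    second = greedy_match(remaining, low, min_iou)

    matched = {t: high[j] for t, j in first.items()}
    matched.update({t: low[j] for t, j in second.items()})
    unmatched_tracks = [t for t in tracks if t not in matched]
    used_high = set(first.values())
    # Unmatched high-score detections are the candidates for new tracks.
    unmatched_high = [d for j, d in enumerate(high) if j not in used_high]
    return matched, unmatched_tracks, unmatched_high


tracks = {1: (0, 0, 10, 10), 2: (20, 20, 30, 30)}
detections = [
    {"box": (1, 1, 11, 11), "score": 0.9},
    {"box": (21, 21, 31, 31), "score": 0.2},   # e.g. a partly occluded vehicle
    {"box": (50, 50, 60, 60), "score": 0.8},
]
matched, unmatched_tracks, unmatched_high = associate_frame(tracks, detections)
```

In this example track 2 is recovered in the second pass from a low-confidence detection, while the high-confidence box that overlaps no track is left over as a candidate for a new track.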

4. Experiments

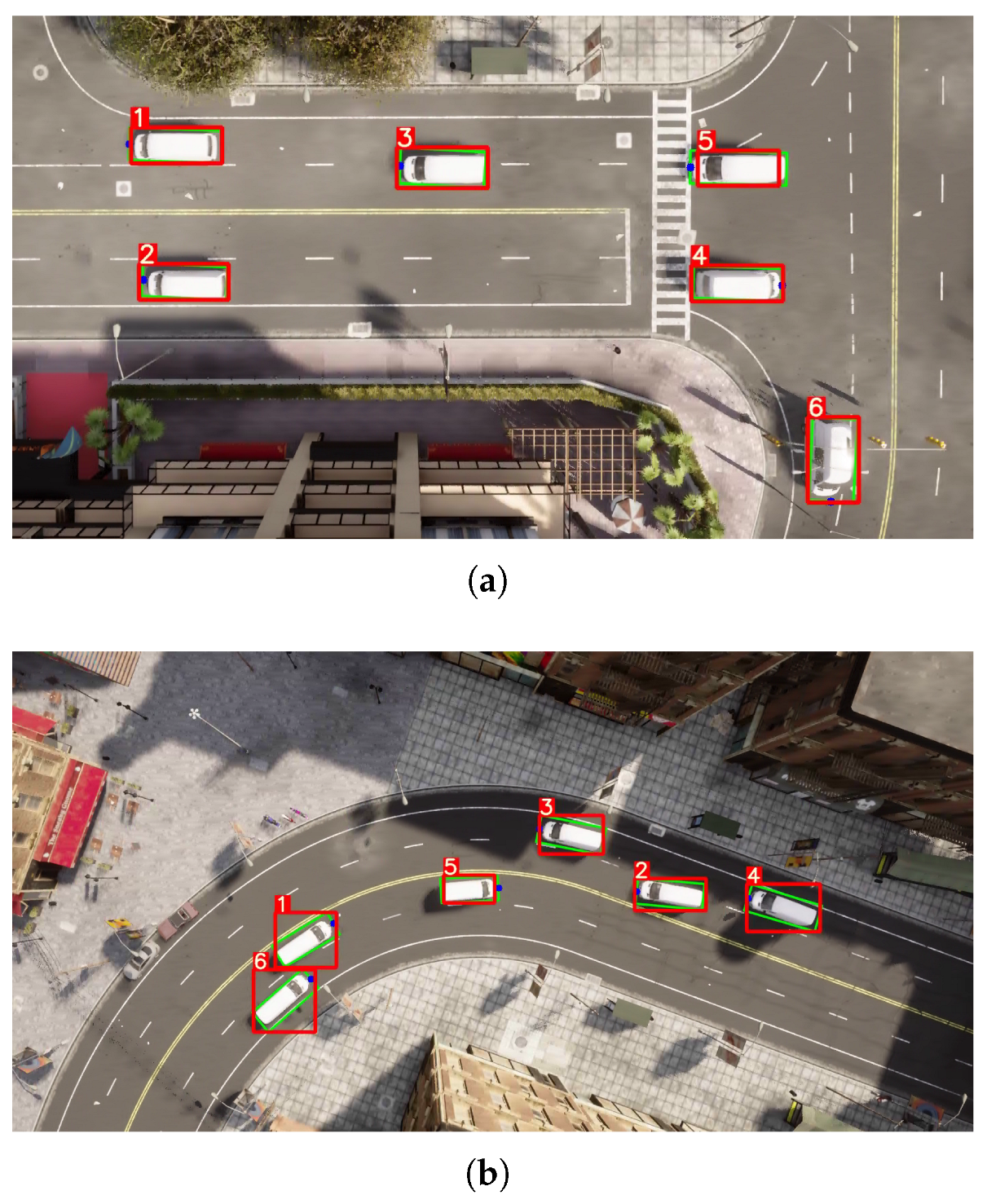

4.1. Simulation Experiment

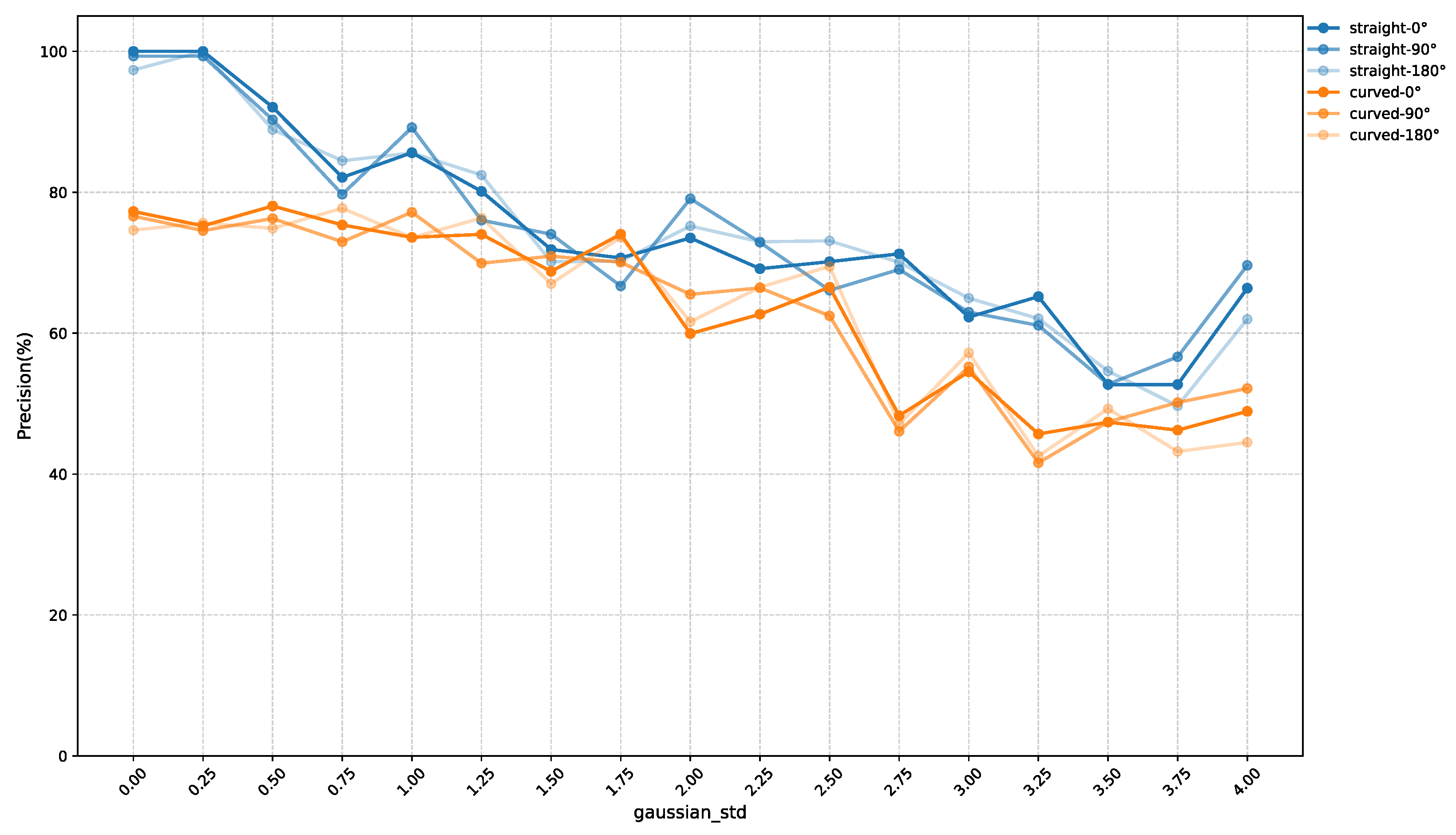

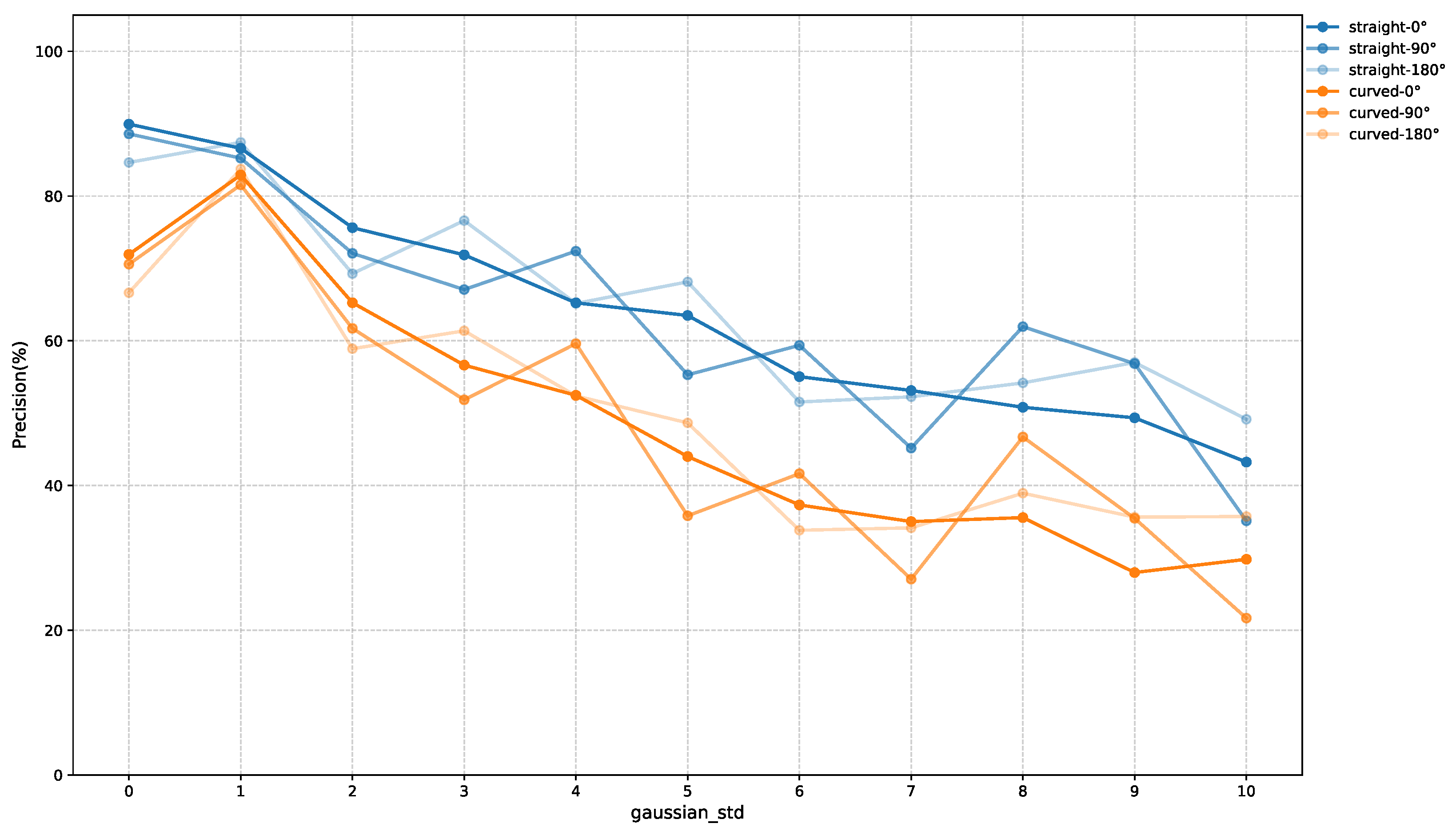

4.1.1. Association Experiments Under Different Conditions

4.1.2. Association Comparison with TTS Method

4.1.3. Association Experiments Under False Detection Condition

4.1.4. Enhanced Tracking Experiments Under Occlusion Conditions

4.2. Physical Experiments

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Method | Latency (ms) |
|---|---|
| Projection-based | 148.49 |
| Topology-based | 34.48 |
| TTS | 22.52 |
| Dempster–Shafer Fusion | 0.27 |

| Method | Precision | FPER |
|---|---|---|
| Projection-based | 99.07% | 100.00% |
| Topology-based | 96.53% | 98.53% |
| TTS | 88.43% | 45.71% |

| Method | Precision | FPER |
|---|---|---|
| Projection-based | 74.20% | 75.00% |
| TTS | 19.50% | 82.00% |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Chen, Y.; Du, B.; Wu, T. Identification and Association of Multiple Visually Identical Targets for Air–Ground Cooperative Systems. Drones 2025, 9, 612. https://doi.org/10.3390/drones9090612
