A Review on Drone-Based Data Solutions for Cereal Crops

Abstract

1. Introduction

2. Research Need

- The importance of drone-based data solutions for cereal crops in combating food insecurity,

- A historical overview of drone and sensor technologies,

- An inventory of methods and techniques used in the various phases of drone data acquisition and processing for cereal crop monitoring and yield estimation, and

- A series of research issues and recommendations for potential future research directions.

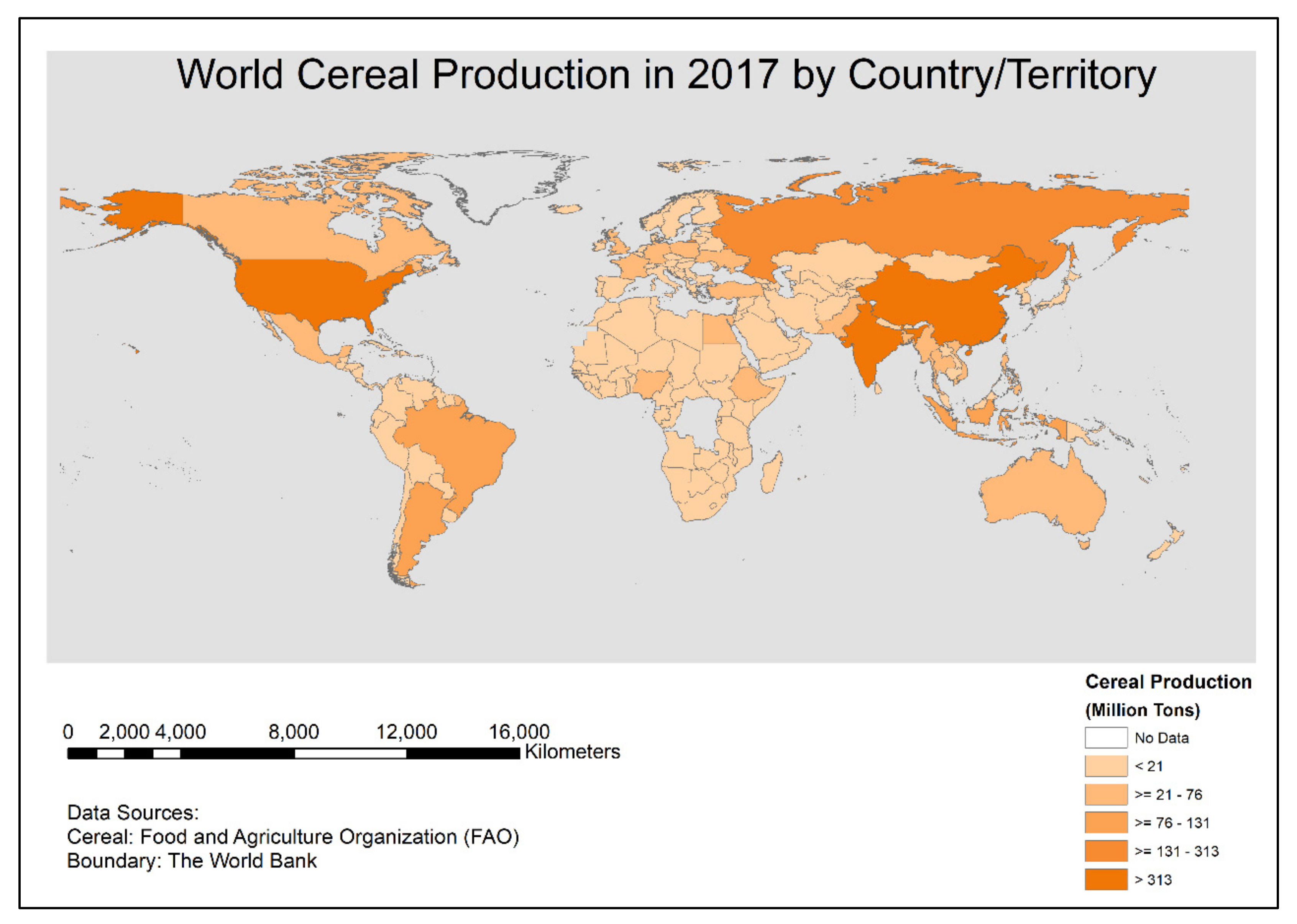

3. Importance of Cereal Crops for Ensuring Food Security

4. Impact of the COVID-19 Pandemic on Food Security

5. State-of-the-Art of Drone and Sensor Technologies

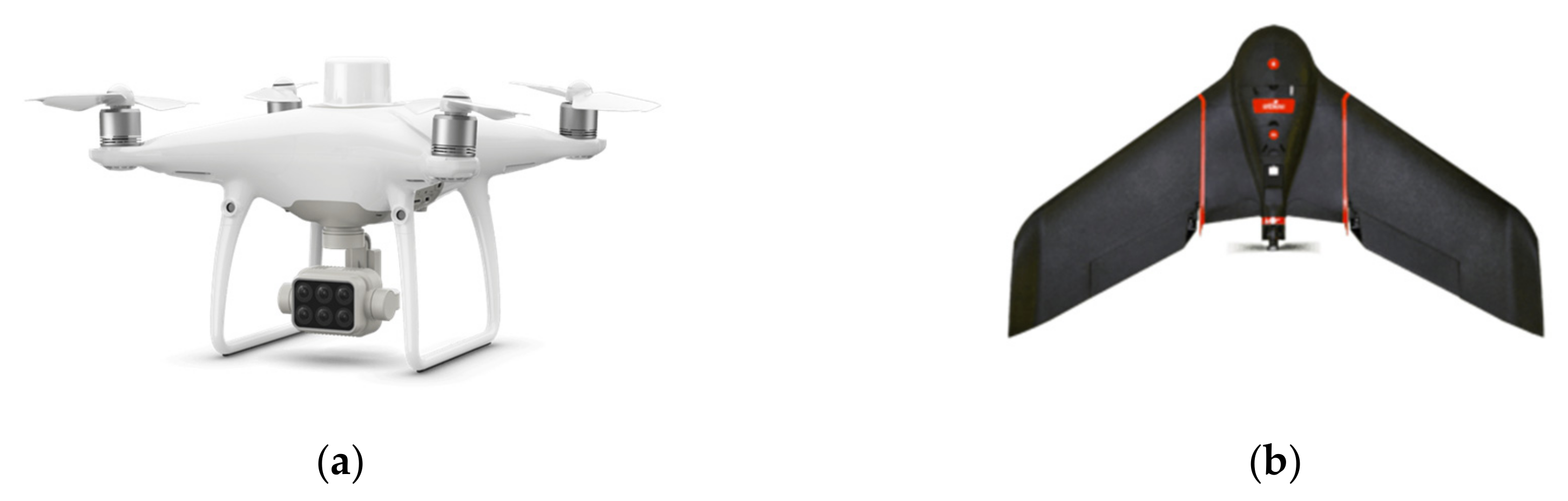

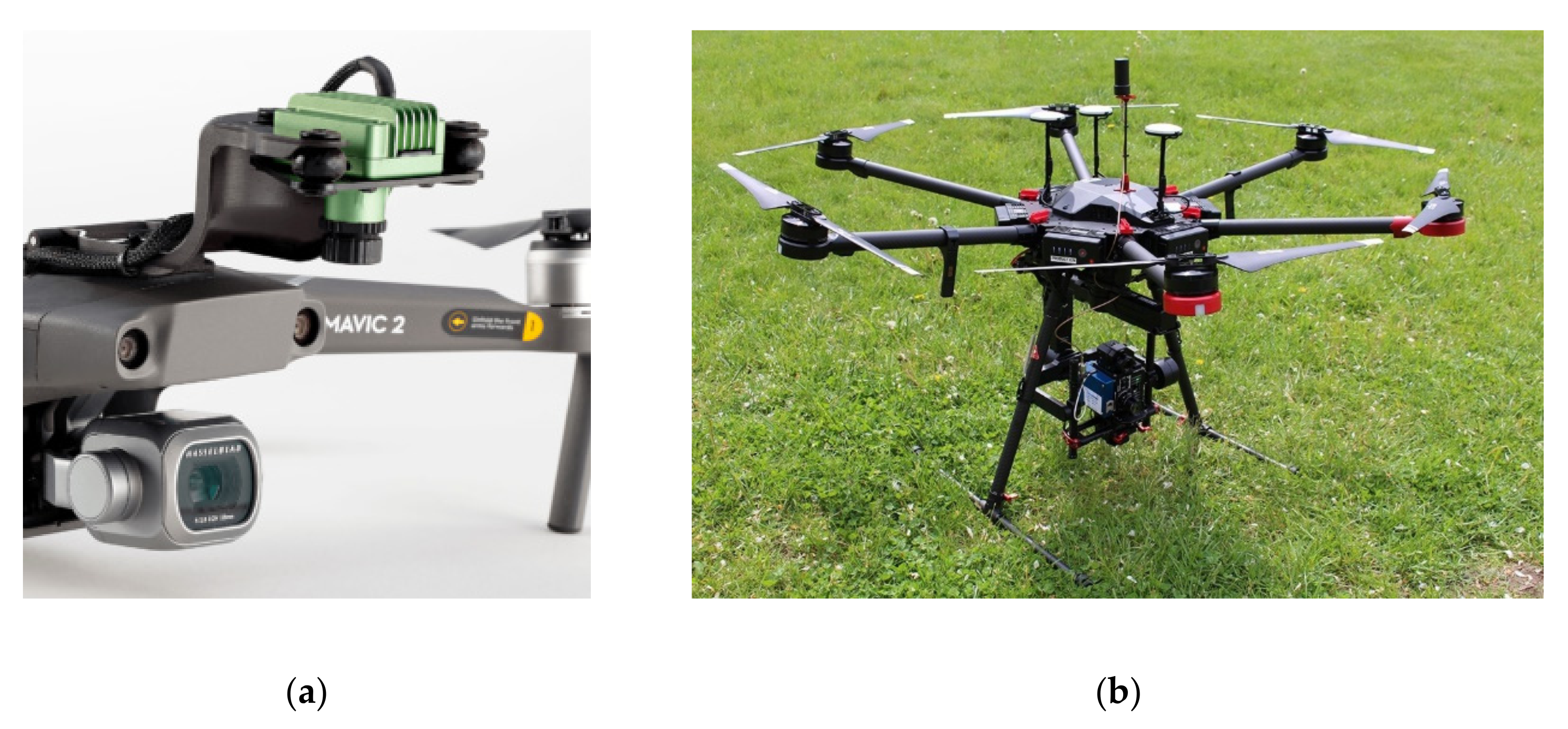

5.1. Drone Types and Categories

5.2. Applications of Drone Technologies in Geospatial Engineering

5.3. Suitable Sensors for Vegetation Scouting

6. Drone-Based Data Solutions for Cereal Crops

6.1. Use of Drones for Cereal Crop Scouting

6.1.1. Crop Monitoring

6.1.2. Biomass Estimation

6.1.3. Yield Estimation

6.1.4. Fertilizer, Weeds, Pest, and Water-Stress Assessment

6.2. Crop Characteristics and Modeling Methods

6.2.1. Correlating Crop Characteristics with Remote Sensing Data

6.2.2. Comparative Analysis of Cereal Crop Modeling with Machine Learning Methods

6.3. Opportunities and Challenges

6.3.1. Opportunities

- Ultra-high spatial resolution

- Extremely high temporal resolution

- Cloud-free data/images

- Potential for high-density 3D point cloud

- High potential for citizen participation

- Scalability with relatively low-cost operation

- The emergence of cloud-based data processing platforms

- Fair and accurate payouts for crop insurance

6.3.2. Challenges

- Limited payload

- Low spectral resolution for low-cost sensors and high cost of hyperspectral sensors

- Sensitivity to atmospheric conditions

- Limited flight endurance

- The high initial cost of ownership

- Need for customized training for farmers

- Lack of technical knowledge for repair and maintenance, and unavailability of parts

7. Conclusions and Outlook

- Drone-based data solutions have produced promising results in crop biomass and yield estimation. However, they are applicable at farm scale only, owing to logistics, cost, and the large volume of data that must be acquired. Furthermore, drone technologies are still considered high-tech in farmers’ communities, especially in low-income countries. Satellite remote sensing images are better suited to homogeneous agricultural practices where farm sizes are larger. Satellite remote sensing data cannot produce high-accuracy yield predictions in heterogeneous agricultural practices, whereas drone-based solutions apply to local scales only. Hence, there is a need for a robust system and method that can estimate crop yield in heterogeneous agricultural practices with smaller farm sizes and yet remain scalable to larger areas. A framework is required for integrating the information from drones at local scales with satellite-based data, which will make yield prediction at larger scales possible [182]. This framework could reduce the requirement for in-situ data. For biomass estimation of mangrove forest, a reduction of up to 37% in in-situ data was observed relative to what is normally required for direct calibration-validation using satellite data [55]. Sentinel-1 SAR (https://sentinel.esa.int/web/sentinel/user-guides/sentinel-1-sar/overview) and the upcoming NASA-ISRO SAR mission (NISAR) (https://nisar.jpl.nasa.gov/) can provide backscatter data at a spatial resolution of up to 10 m. This will also help to obtain weather-independent data (despite clouds during the season of monsoon crops such as rice and maize, and fog and haze during the season of winter crops) for upscaling the results.

- Citizen science has been considered an alternative and cost-effective way to acquire in-situ data [183]. Citizen science-based data can be used to calibrate and validate the models. The use of smartphones and low-cost sensors can be explored to widen acceptance of the system in low-income countries [184,185]. Furthermore, the operation of drones by local farmers themselves will reduce costs, enhance technical know-how, increase acceptance by farmers’ communities, and make the system sustainable. Nevertheless, data privacy, data governance, and the degree to which communities would require external support, training, and funding for drone operation also need to be considered carefully.

- It is usually not possible to handle multiple variables with linear regression models. Machine learning techniques can ingest multiple variables; however, most studies have used established vegetation indices only. Additional research could incorporate multiple variables (e.g., agro-environmental conditions) into the model. Internet of Things (IoT)-based low-cost sensors can be employed to automate the system and to reduce the cost of data acquisition.
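
The last point above, feeding a machine learning model with a vegetation index together with other variables, can be illustrated with a minimal sketch. It is not a method taken from any of the reviewed studies: the choice of k-nearest-neighbour regression, the feature set (NDVI, canopy height, seasonal rainfall), the function names, and all plot values are assumptions made purely for illustration.

```python
import math


def ndvi(nir, red):
    """Normalized difference vegetation index from NIR and red reflectance."""
    return (nir - red) / (nir + red)


def standardize(rows):
    """Scale every feature column to zero mean and unit variance."""
    columns = list(zip(*rows))
    means = [sum(c) / len(c) for c in columns]
    # Fall back to 1.0 when a column is constant to avoid division by zero.
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(columns, means)]
    scaled = [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]
    return scaled, means, stds


def knn_predict(train_x, train_y, query, k=3):
    """Average the yields of the k training plots closest to the query."""
    ranked = sorted(zip((math.dist(x, query) for x in train_x), train_y))
    nearest = ranked[:k]
    return sum(y for _, y in nearest) / len(nearest)


# Hypothetical plots: (NIR, red, canopy height [m], rainfall [mm], yield [t/ha]).
# These numbers are invented for the example and are not measurements.
PLOTS = [
    (0.52, 0.08, 0.95, 610.0, 6.1),
    (0.48, 0.10, 0.88, 580.0, 5.4),
    (0.40, 0.14, 0.70, 450.0, 3.9),
    (0.55, 0.07, 1.02, 640.0, 6.6),
    (0.35, 0.16, 0.62, 420.0, 3.2),
    (0.45, 0.11, 0.80, 530.0, 4.8),
]

# Combine the vegetation index with structural and agro-environmental variables.
features = [[ndvi(nir, red), height, rain] for nir, red, height, rain, _ in PLOTS]
yields = [row[-1] for row in PLOTS]
scaled, means, stds = standardize(features)

# Predict the yield of a new, unsampled plot using the same scaling.
new_plot = [ndvi(0.50, 0.09), 0.90, 600.0]
new_scaled = [(v - m) / s for v, m, s in zip(new_plot, means, stds)]
predicted = knn_predict(scaled, yields, new_scaled, k=3)
print(f"Predicted yield: {predicted:.2f} t/ha")
```

Standardizing the features first keeps rainfall, which is expressed in hundreds of millimetres, from dominating the distance calculation over NDVI, which lies between -1 and 1. A real study would need far more plots and a held-out validation set.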

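The drone-to-satellite integration called for in the first conclusion can be pictured as a two-stage calibration. The sketch below is only one possible reading of that idea, not the framework of [182] or the method of [55]: it assumes simple linear relationships at both stages, and the names `fit_line`, `drone_cells`, and `satellite_signal`, along with every number, are hypothetical.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


# Stage 1: a few in-situ plots calibrate drone NDVI against measured yield.
field_ndvi = [0.45, 0.60, 0.72, 0.80]   # invented drone NDVI per plot
field_yield = [3.0, 4.4, 5.6, 6.3]      # invented yield in t/ha
a_drone, b_drone = fit_line(field_ndvi, field_yield)

# Stage 2: drone pixels are grouped by the satellite grid cell they fall in,
# and the drone model turns each cell into a pseudo-reference yield.
drone_cells = {
    "c1": [0.48, 0.52, 0.50],
    "c2": [0.61, 0.66, 0.63],
    "c3": [0.70, 0.74, 0.72],
    "c4": [0.79, 0.83, 0.78],
}
# Satellite predictor per cell (for instance SAR backscatter in dB); invented.
satellite_signal = {"c1": -14.2, "c2": -12.9, "c3": -11.8, "c4": -10.9}

cell_yield = {c: a_drone + b_drone * mean(px) for c, px in drone_cells.items()}
cells = sorted(drone_cells)
a_sat, b_sat = fit_line(
    [satellite_signal[c] for c in cells],
    [cell_yield[c] for c in cells],
)

# Stage 3: the satellite model is applied to cells the drone never covered.
outside_signal = [-13.5, -12.0, -11.0]
regional_yield = [a_sat + b_sat * s for s in outside_signal]
for s, y in zip(outside_signal, regional_yield):
    print(f"signal {s:6.1f} -> estimated yield {y:.2f} t/ha")
```

Because the drone map supplies many pseudo-reference cells from only a handful of field plots, the satellite model can be calibrated with less in-situ sampling than a direct field-to-satellite calibration would need, which is the kind of saving the text describes.
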
Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Ehrlich, P.R.; Harte, J. To feed the world in 2050 will require a global revolution. Proc. Natl. Acad. Sci. USA 2015, 112, 14743–14744.

- UN. Sustainable Development Goals. Available online: https://www.un.org/sustainabledevelopment/sustainable-development-goals/ (accessed on 1 October 2019).

- FAO; IFAD; UNICEF; WFP; WHO. The State of Food Security and Nutrition in the World. Safeguarding against Economic Slowdowns and Downturns; FAO: Rome, Italy, 2019.

- Chakraborty, S.; Newton, A.C. Climate change, plant diseases and food security: An overview. Plant Pathol. 2011, 60, 2–14.

- Godfray, H.C.J.; Beddington, J.R.; Crute, I.R.; Haddad, L.; Lawrence, D.; Muir, J.F.; Pretty, J.; Robinson, S.; Thomas, S.M.; Toulmin, C. Food security: The challenge of feeding 9 billion people. Science 2010, 327, 812–818.

- Alexandratos, N.; Bruinsma, J. World Agriculture towards 2030/2050: The 2012 Revision ESA Working Paper No. 12-03; FAO: Rome, Italy, 2012.

- Goff, S.A.; Salmeron, J.M. Back to the future of cereals. Sci. Am. 2004, 291, 42–49.

- Gower, S.T.; Kucharik, C.J.; Norman, J.M. Direct and indirect estimation of leaf area index, f(APAR), and net primary production of terrestrial ecosystems. Remote Sens. Environ. 1999, 70, 29–51.

- Son, N.T.; Chen, C.F.; Chen, C.R.; Duc, H.N.; Chang, L.Y. A phenology-based classification of time-series MODIS data for rice crop monitoring in Mekong Delta, Vietnam. Remote Sens. 2013, 6, 135–156.

- Wijesingha, J.S.J.; Deshapriya, N.L.; Samarakoon, L. Rice crop monitoring and yield assessment with MODIS 250m gridded vegetation product: A case study in Sa Kaeo Province, Thailand. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2015, XL-7/W3.

- Torbick, N.; Chowdhury, D.; Salas, W.; Qi, J. Monitoring rice agriculture across Myanmar using time series Sentinel-1 assisted by Landsat-8 and PALSAR-2. Remote Sens. 2017, 9, 119.

- Pratihast, A.K.; DeVries, B.; Avitabile, V.; de Bruin, S.; Kooistra, L.; Tekle, M.; Herold, M. Combining satellite data and community-based observations for forest monitoring. Forests 2014, 5, 2464–2489.

- Family Farming Knowledge Platform—Smallholders Dataportrait. Available online: http://www.fao.org/family-farming/data-sources/dataportrait/farm-size/en/ (accessed on 3 April 2020).

- Park, S.; Im, J.; Park, S.; Yoo, C.; Han, H.; Rhee, J. Classification and mapping of paddy rice by combining Landsat and SAR time series data. Remote Sens. 2018, 10, 447.

- Vargas-Ramírez, N.; Paneque-Gálvez, J. The Global Emergence of Community Drones (2012–2017). Drones 2019, 3, 76.

- González-Jorge, H.; Martínez-Sánchez, J.; Bueno, M.; Arias, P. Unmanned Aerial Systems for Civil Applications: A Review. Drones 2017, 1, 2.

- Bendig, J.; Bolten, A.; Bennertz, S.; Broscheit, J.; Eichfuss, S.; Bareth, G. Estimating biomass of barley using crop surface models (CSMs) derived from UAV-based RGB imaging. Remote Sens. 2014, 6, 10395–10412.

- Swain, K.C.; Thomson, S.J.; Jayasuriya, H.P.W. Adoption of an unmanned helicopter for low-altitude remote sensing to estimate yield and total biomass of a rice crop. Trans. ASABE 2010, 53, 21–27.

- Stöcker, C.; Bennett, R.; Nex, F.; Gerke, M.; Zevenbergen, J. Review of the current state of UAV regulations. Remote Sens. 2017, 9, 459.

- Mulla, D.J. Twenty five years of remote sensing in precision agriculture: Key advances and remaining knowledge gaps. Biosyst. Eng. 2013, 114, 358–371.

- Ahmad, L.; Mahdi, S.S. Satellite Farming: An Information and Technology Based Agriculture; Springer International Publishing: Cham, Switzerland, 2018.

- Tsouros, D.C.; Bibi, S.; Sarigiannidis, P.G. A review on UAV-based applications for precision agriculture. Information 2019, 10, 349.

- Liakos, K.G.; Busato, P.; Moshou, D.; Pearson, S.; Bochtis, D. Machine learning in agriculture: A review. Sensors 2018, 18, 1–29.

- Elsevier Scopus Search. Available online: https://www.scopus.com/sources (accessed on 1 July 2020).

- Web of Science Group Master Journal List. Available online: https://mjl.clarivate.com/search-results (accessed on 1 July 2020).

- Colomina, I.; Molina, P. Unmanned aerial systems for photogrammetry and remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2014, 92, 79–97.

- Sharma, L.K.; Bali, S.K. A review of methods to improve nitrogen use efficiency in agriculture. Sustainability 2018, 10, 51.

- Messina, G.; Modica, G. Applications of UAV thermal imagery in precision agriculture: State of the art and future research outlook. Remote Sens. 2020, 12, 1491.

- Boursianis, A.D.; Papadopoulou, M.S.; Diamantoulakis, P.; Liopa-Tsakalidi, A.; Barouchas, P.; Salahas, G.; Karagiannidis, G.; Wan, S.; Goudos, S.K. Internet of Things (IoT) and Agricultural Unmanned Aerial Vehicles (UAVs) in smart farming: A comprehensive review. Internet Things 2020, 100187.

- Hassler, S.C.; Baysal-Gurel, F. Unmanned aircraft system (UAS) technology and applications in agriculture. Agronomy 2019, 9, 618.

- Barbedo, J.G.A. A Review on the Use of Unmanned Aerial Vehicles and Imaging Sensors for Monitoring and Assessing Plant Stresses. Drones 2019, 3, 1–27.

- Radoglou-Grammatikis, P.; Sarigiannidis, P.; Lagkas, T.; Moscholios, I. A compilation of UAV applications for precision agriculture. Comput. Netw. 2020, 172, 107148.

- Awika, J.M. Major cereal grains production and use around the world. Am. Chem. Soc. 2011, 1089, 1–13.

- McKevith, B. Nutritional aspects of cereals. Nutr. Bull. 2004, 29, 111–142.

- FAO. World Agriculture: Towards 2015/2030; FAO: Rome, Italy, 2002.

- FAO. FAOSTAT. Available online: http://www.fao.org/faostat/en/#data/QC (accessed on 7 August 2020).

- CIMMYT. The Cereals Imperative of Future Food Systems. Available online: https://www.cimmyt.org/news/the-cereals-imperative-of-future-food-systems/ (accessed on 7 August 2020).

- FAO. Novel Coronavirus (COVID-19). Available online: http://www.fao.org/2019-ncov/q-and-a/impact-on-food-and-agriculture/en/ (accessed on 17 June 2020).

- Jámbor, A.; Czine, P.; Balogh, P. The impact of the coronavirus on agriculture: First evidence based on global newspapers. Sustainability 2020, 12, 4535.

- Poudel, P.B.; Poudel, M.R.; Gautam, A.; Phuyal, S.; Tiwari, C.K. COVID-19 and its Global Impact on Food and Agriculture. J. Biol. Today’s World 2020, 9, 7–10.

- World Bank. Food Security and COVID-19. Available online: https://www.worldbank.org/en/topic/agriculture/brief/food-security-and-covid-19 (accessed on 17 June 2020).

- WFP. Risk of Hunger Pandemic as Coronavirus Set to Almost Double Acute Hunger by End of 2020. Available online: https://insight.wfp.org/covid-19-will-almost-double-people-in-acute-hunger-by-end-of-2020-59df0c4a8072 (accessed on 17 June 2020).

- Hobbs, J.E. Food supply chains during the COVID-19 pandemic. Can. J. Agric. Econ. 2020, 1–6.

- FSIN. 2020 Global Report on Food Crises: Joint Analysis for Better Decisions; FSIN: Saskatoon, SK, Canada, 2020.

- Samberg, L.H.; Gerber, J.S.; Ramankutty, N.; Herrero, M.; West, P.C. Subnational distribution of average farm size and smallholder contributions to global food production. Environ. Res. Lett. 2016, 11, 1–12.

- Cranfield, J.; Spencer, H.; Blandon, J. The Effect of Attitudinal and Sociodemographic Factors on the Likelihood of Buying Locally Produced Food. Agribusiness 2012, 28, 205–221.

- Béné, C. Resilience of local food systems and links to food security—A review of some important concepts in the context of COVID-19 and other shocks. Food Secur. 2020.

- Nonami, K. Prospect and Recent Research & Development for Civil Use Autonomous Unmanned Aircraft as UAV and MAV. J. Syst. Des. Dyn. 2007, 1, 120–128.

- Turner, D.; Lucieer, A.; Watson, C. An automated technique for generating georectified mosaics from ultra-high resolution Unmanned Aerial Vehicle (UAV) imagery, based on Structure from Motion (SFM) point clouds. Remote Sens. 2012, 4, 1392–1410.

- Koeva, M.; Bennett, R.; Gerke, M.; Crommelinck, S.; Stöcker, C.; Crompvoets, J.; Ho, S.; Schwering, A.; Chipofya, M.; Schultz, C.; et al. Towards innovative geospatial tools for fit-for-purpose land rights mapping. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, 42, 37–43.

- Kamarudin, S.S.; Tahar, K.N. Assessment on UAV onboard positioning in ground control point establishment. In Proceedings of the 2016 IEEE 12th International Colloquium on Signal Processing and its Applications, CSPA 2016, Melaka, Malaysia, 4–6 March 2016; pp. 210–215.

- Jhan, J.P.; Rau, J.Y.; Haala, N. Robust and adaptive band-to-band image transform of UAS miniature multi-lens multispectral camera. ISPRS J. Photogramm. Remote Sens. 2018, 137, 47–60.

- Nahon, A.; Molina, P.; Blázquez, M.; Simeon, J.; Capo, S.; Ferrero, C. Corridor mapping of sandy coastal foredunes with UAS photogrammetry and mobile laser scanning. Remote Sens. 2019, 11, 1352.

- Nex, F.; Duarte, D.; Steenbeek, A.; Kerle, N. Towards real-time building damage mapping with low-cost UAV solutions. Remote Sens. 2019, 11, 287.

- Wang, D.; Wan, B.; Liu, J.; Su, Y.; Guo, Q.; Qiu, P.; Wu, X. Estimating aboveground biomass of the mangrove forests on northeast Hainan Island in China using an upscaling method from field plots, UAV-LiDAR data and Sentinel-2 imagery. Int. J. Appl. Earth Obs. Geoinf. 2020, 85, 101986.

- Fujimoto, A.; Haga, C.; Matsui, T.; Machimura, T.; Hayashi, K.; Sugita, S.; Takagi, H. An end to end process development for UAV-SfM based forest monitoring: Individual tree detection, species classification and carbon dynamics simulation. Forests 2019, 10, 680.

- Sandino, J.; Pegg, G.; Gonzalez, F.; Smith, G. Aerial mapping of forests affected by pathogens using UAVs, hyperspectral sensors, and artificial intelligence. Sensors 2018, 18, 944.

- Shin, J.I.; Seo, W.W.; Kim, T.; Park, J.; Woo, C.S. Using UAV multispectral images for classification of forest burn severity-A case study of the 2019 Gangneung forest fire. Forests 2019, 10, 1025.

- Gonzalez, L.F.; Montes, G.A.; Puig, E.; Johnson, S.; Mengersen, K.; Gaston, K.J. Unmanned aerial vehicles (UAVs) and artificial intelligence revolutionizing wildlife monitoring and conservation. Sensors 2016, 16, 97.

- Muller, C.G.; Chilvers, B.L.; Barker, Z.; Barnsdale, K.P.; Battley, P.F.; French, R.K.; McCullough, J.; Samandari, F. Aerial VHF tracking of wildlife using an unmanned aerial vehicle (UAV): Comparing efficiency of yellow-eyed penguin (Megadyptes antipodes) nest location methods. Wildl. Res. 2019, 46, 145–153.

- Jiménez López, J.; Mulero-Pázmány, M. Drones for Conservation in Protected Areas: Present and Future. Drones 2019, 3, 10.

- Gašparović, M.; Zrinjski, M.; Barković, Đ.; Radočaj, D. An automatic method for weed mapping in oat fields based on UAV imagery. Comput. Electron. Agric. 2020, 173, 105385.

- Su, J.; Liu, C.; Coombes, M.; Hu, X.; Wang, C.; Xu, X.; Li, Q.; Guo, L.; Chen, W.H. Wheat yellow rust monitoring by learning from multispectral UAV aerial imagery. Comput. Electron. Agric. 2018, 155, 157–166.

- Zhang, X.; Han, L.; Dong, Y.; Shi, Y.; Huang, W.; Han, L.; González-Moreno, P.; Ma, H.; Ye, H.; Sobeih, T. A deep learning-based approach for automated yellow rust disease detection from high-resolution hyperspectral UAV images. Remote Sens. 2019, 11, 1554.

- Fernández, E.; Gorchs, G.; Serrano, L. Use of consumer-grade cameras to assess wheat N status and grain yield. PLoS ONE 2019, 14, 18.

- Niu, Y.; Zhang, L.; Zhang, H.; Han, W.; Peng, X. Estimating above-ground biomass of maize using features derived from UAV-based RGB imagery. Remote Sens. 2019, 11, 21.

- Matese, A.; Di Gennaro, S.F. Practical applications of a multisensor UAV platform based on multispectral, thermal and RGB high resolution images in precision viticulture. Agriculture 2018, 8, 116.

- Melville, B.; Lucieer, A.; Aryal, J. Classification of Lowland Native Grassland Communities Using Hyperspectral Unmanned Aircraft System (UAS) Imagery in the Tasmanian Midlands. Drones 2019, 3, 12.

- Moharana, S.; Dutta, S. Spatial variability of chlorophyll and nitrogen content of rice from hyperspectral imagery. ISPRS J. Photogramm. Remote Sens. 2016, 122, 17–29.

- López-Granados, F.; Torres-Sánchez, J.; De Castro, A.I.; Serrano-Pérez, A.; Mesas-Carrascosa, F.J.; Peña, J.M. Object-based early monitoring of a grass weed in a grass crop using high resolution UAV imagery. Agron. Sustain. Dev. 2016, 36.

- Maimaitijiang, M.; Ghulam, A.; Sidike, P.; Hartling, S.; Maimaitiyiming, M.; Peterson, K.; Shavers, E.; Fishman, J.; Peterson, J.; Kadam, S.; et al. Unmanned Aerial System (UAS)-based phenotyping of soybean using multi-sensor data fusion and extreme learning machine. ISPRS J. Photogramm. Remote Sens. 2017, 134, 43–58.

- Kalischuk, M.; Paret, M.L.; Freeman, J.H.; Raj, D.; Silva, S.D.; Eubanks, S.; Wiggins, D.J.; Lollar, M.; Marois, J.J.; Charles Mellinger, H.; et al. An improved crop scouting technique incorporating unmanned aerial vehicle-assisted multispectral crop imaging into conventional scouting practice for gummy stem blight in watermelon. Plant Dis. 2019, 103, 1642–1650.

- Deng, L.; Mao, Z.; Li, X.; Hu, Z.; Duan, F.; Yan, Y. UAV-based multispectral remote sensing for precision agriculture: A comparison between different cameras. ISPRS J. Photogramm. Remote Sens. 2018, 146, 124–136.

- Näsi, R.; Viljanen, N.; Kaivosoja, J.; Alhonoja, K.; Hakala, T.; Markelin, L.; Honkavaara, E. Estimating biomass and nitrogen amount of barley and grass using UAV and aircraft based spectral and photogrammetric 3D features. Remote Sens. 2018, 10, 1082.

- Herrmann, I.; Bdolach, E.; Montekyo, Y.; Rachmilevitch, S.; Townsend, P.A.; Karnieli, A. Assessment of maize yield and phenology by drone-mounted superspectral camera. Precis. Agric. 2020, 21, 51–76.

- Gil-Docampo, M.L.; Arza-García, M.; Ortiz-Sanz, J.; Martínez-Rodríguez, S.; Marcos-Robles, J.L.; Sánchez-Sastre, L.F. Above-ground biomass estimation of arable crops using UAV-based SfM photogrammetry. Geocarto Int. 2020, 35, 687–699.

- Fawcett, D.; Panigada, C.; Tagliabue, G.; Boschetti, M.; Celesti, M.; Evdokimov, A.; Biriukova, K.; Colombo, R.; Miglietta, F.; Rascher, U.; et al. Multi-scale evaluation of drone-based multispectral surface reflectance and vegetation indices in operational conditions. Remote Sens. 2020, 12, 514.

- Wang, H.; Mortensen, A.K.; Mao, P.; Boelt, B.; Gislum, R. Estimating the nitrogen nutrition index in grass seed crops using a UAV-mounted multispectral camera. Int. J. Remote Sens. 2019, 40, 2467–2482.

- Stavrakoudis, D.; Katsantonis, D.; Kadoglidou, K.; Kalaitzidis, A.; Gitas, I.Z. Estimating rice agronomic traits using drone-collected multispectral imagery. Remote Sens. 2019, 11, 545.

- Sofonia, J.; Shendryk, Y.; Phinn, S.; Roelfsema, C.; Kendoul, F.; Skocaj, D. Monitoring sugarcane growth response to varying nitrogen application rates: A comparison of UAV SLAM LiDAR and photogrammetry. Int. J. Appl. Earth Obs. Geoinf. 2019, 82, 101878.

- Olson, D.; Chatterjee, A.; Franzen, D.W.; Day, S.S. Relationship of drone-based vegetation indices with corn and sugarbeet yields. Agron. J. 2019, 111, 2545–2557.

- Guan, S.; Fukami, K.; Matsunaka, H.; Okami, M.; Tanaka, R.; Nakano, H.; Sakai, T.; Nakano, K.; Ohdan, H.; Takahashi, K. Assessing correlation of high-resolution NDVI with fertilizer application level and yield of rice and wheat crops using small UAVs. Remote Sens. 2019, 11, 112.

- Devia, C.A.; Rojas, J.P.; Petro, E.; Martinez, C.; Mondragon, I.F.; Patino, D.; Rebolledo, M.C.; Colorado, J. High-Throughput Biomass Estimation in Rice Crops Using UAV Multispectral Imagery. J. Intell. Robot. Syst. Theory Appl. 2019, 96, 573–589.

- Borra-Serrano, I.; De Swaef, T.; Muylle, H.; Nuyttens, D.; Vangeyte, J.; Mertens, K.; Saeys, W.; Somers, B.; Roldán-Ruiz, I.; Lootens, P. Canopy height measurements and non-destructive biomass estimation of Lolium perenne swards using UAV imagery. Grass Forage Sci. 2019, 74, 356–369.

- Viljanen, N.; Honkavaara, E.; Näsi, R.; Hakala, T.; Niemeläinen, O.; Kaivosoja, J. A novel machine learning method for estimating biomass of grass swards using a photogrammetric canopy height model, images and vegetation indices captured by a drone. Agriculture 2018, 8, 70.

- Gao, J.; Liao, W.; Nuyttens, D.; Lootens, P.; Vangeyte, J.; Pižurica, A.; He, Y.; Pieters, J.G. Fusion of pixel and object-based features for weed mapping using unmanned aerial vehicle imagery. Int. J. Appl. Earth Obs. Geoinf. 2018, 67, 43–53.

- Sanches, G.M.; Duft, D.G.; Kölln, O.T.; Luciano, A.C.D.S.; De Castro, S.G.Q.; Okuno, F.M.; Franco, H.C.J. The potential for RGB images obtained using unmanned aerial vehicle to assess and predict yield in sugarcane fields. Int. J. Remote Sens. 2018, 39, 5402–5414.

- Christiansen, M.P.; Laursen, M.S.; Jørgensen, R.N.; Skovsen, S.; Gislum, R. Designing and testing a UAV mapping system for agricultural field surveying. Sensors 2017, 17, 19.

- Gago, J.; Douthe, C.; Coopman, R.E.; Gallego, P.P.; Ribas-Carbo, M.; Flexas, J.; Escalona, J.; Medrano, H. UAVs challenge to assess water stress for sustainable agriculture. Agric. Water Manag. 2015, 153, 9–19.

- Ghorbanzadeh, O.; Meena, S.R.; Blaschke, T.; Aryal, J. UAV-based slope failure detection using deep-learning convolutional neural networks. Remote Sens. 2019, 11, 2046.

- Chaudhary, S.; Wang, Y.; Dixit, A.M.; Khanal, N.R.; Xu, P.; Fu, B.; Yan, K.; Liu, Q.; Lu, Y.; Li, M. Spatiotemporal degradation of abandoned farmland and associated eco-environmental risks in the high mountains of the Nepalese Himalayas. Land 2020, 9, 1.

- Piralilou, S.T.; Shahabi, H.; Jarihani, B.; Ghorbanzadeh, O.; Blaschke, T.; Gholamnia, K.; Meena, S.R.; Aryal, J. Landslide detection using multi-scale image segmentation and different machine learning models in the Higher Himalayas. Remote Sens. 2019, 11, 2575.

- Kakooei, M.; Baleghi, Y. Fusion of satellite, aircraft, and UAV data for automatic disaster damage assessment. Int. J. Remote Sens. 2017, 38, 2511–2534.

- Erdelj, M.; Natalizio, E.; Chowdhury, K.R.; Akyildiz, I.F. Help from the Sky: Leveraging UAVs for Disaster Management. IEEE Pervasive Comput. 2017, 16, 24–32.

- Jones, C.A.; Church, E. Photogrammetry is for everyone: Structure-from-motion software user experiences in archaeology. J. Archaeol. Sci. Rep. 2020, 30, 102261.

- Parisi, E.I.; Suma, M.; Güleç Korumaz, A.; Rosina, E.; Tucci, G. Aerial platforms (UAV) surveys in the VIS and TIR range. Applications on archaeology and agriculture. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, 945–952.

- Agudo, P.; Pajas, J.; Pérez-Cabello, F.; Redón, J.; Lebrón, B. The Potential of Drones and Sensors to Enhance Detection of Archaeological Cropmarks: A Comparative Study Between Multi-Spectral and Thermal Imagery. Drones 2018, 2, 29.

- Hess, M.; Petrovic, V.; Meyer, D.; Rissolo, D.; Kuester, F. Fusion of multimodal three-dimensional data for comprehensive digital documentation of cultural heritage sites. In Proceedings of the 2015 Digital Heritage International Congress, Granada, Spain, 28 September–2 October 2015.

- Oreni, D.; Brumana, R.; Della Torre, S.; Banfi, F.; Barazzetti, L.; Previtali, M. Survey turned into HBIM: The restoration and the work involved concerning the Basilica di Collemaggio after the earthquake (L’Aquila). ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2014, 2, 267–273.

- Raeva, P.; Pavelka, K.; Hanuš, J.; Gojda, M. Using of both hyperspectral aerial sensing and RPAS multispectral sensing for potential archaeological sites detection. In Multispectral, Hyperspectral, and Ultraspectral Remote Sensing Technology, Techniques and Applications VII; SPIE: Honolulu, HI, USA, 2018; 15p.

- Gonzalez-Aguilera, D.; Bitelli, G.; Rinaudo, F.; Grussenmeyer, P. (Eds.) Data Acquisition and Processing in Cultural Heritage; MDPI: Basel, Switzerland, 2020.

- Luo, L.; Wang, X.; Guo, H.; Lasaponara, R.; Zong, X.; Masini, N.; Wang, G.; Shi, P.; Khatteli, H.; Chen, F.; et al. Airborne and spaceborne remote sensing for archaeological and cultural heritage applications: A review of the century (1907–2017). Remote Sens. Environ. 2019, 232, 111280.

- Tucci, G.; Parisi, E.I.; Castelli, G.; Errico, A.; Corongiu, M.; Sona, G.; Viviani, E.; Bresci, E.; Preti, F. Multi-sensor UAV application for thermal analysis on a dry-stone terraced vineyard in rural Tuscany landscape. ISPRS Int. J. Geo-Inf. 2019, 8, 87.

- Wallace, L.; Lucieer, A.; Watson, C.; Turner, D. Development of a UAV-LiDAR system with application to forest inventory. Remote Sens. 2012, 4, 1519–1543.

- Lin, Q.; Huang, H.; Wang, J.; Huang, K.; Liu, Y. Detection of pine shoot beetle (PSB) stress on pine forests at individual tree level using UAV-based hyperspectral imagery and lidar. Remote Sens. 2019, 11, 2540.

- Zhou, L.; Gu, X.; Cheng, S.; Yang, G.; Shu, M.; Sun, Q. Analysis of plant height changes of lodged maize using UAV-LiDAR data. Agriculture 2020, 10, 146.

- SAL Engineering, E.; Fondazione, B.K. MAIA S2—the Multispectral Camera. Available online: https://www.spectralcam.com/maia-tech-2/ (accessed on 22 July 2020).

- Logie, G.S.J.; Coburn, C.A. An investigation of the spectral and radiometric characteristics of low-cost digital cameras for use in UAV remote sensing. Int. J. Remote Sens. 2018, 39, 4891–4909.

- Lebourgeois, V.; Bégué, A.; Labbé, S.; Mallavan, B.; Prévot, L.; Roux, B. Can commercial digital cameras be used as multispectral sensors? A crop monitoring test. Sensors 2008, 8, 7300–7322.

- Ghebregziabher, Y.T. Monitoring Growth Development and Yield Estimation of Maize Using Very High-Resolution UAV-Images in Gronau, Germany. Enschede Univ. Twente Fac. Geo-Inf. Earth Obs. (ITC) 2017, unpublished.

- Ashapure, A.; Jung, J.; Chang, A.; Oh, S.; Maeda, M.; Landivar, J. A comparative study of RGB and multispectral sensor-based cotton canopy cover modelling using multi-temporal UAS data. Remote Sens. 2019, 11, 2757.

- Cholula, U.; Da Silva, J.A.; Marconi, T.; Thomasson, J.A.; Solorzano, J.; Enciso, J. Forecasting yield and lignocellulosic composition of energy cane using unmanned aerial systems. Agronomy 2020, 10, 718.

- SlantRange Inc. Multispectral Drone Sensor Systems for Agriculture. Available online: https://slantrange.com/product-sensor/ (accessed on 24 July 2020).

- Doughty, C.L.; Cavanaugh, K.C. Mapping coastal wetland biomass from high resolution unmanned aerial vehicle (UAV) imagery. Remote Sens. 2019, 11, 540.

- MicaSense Inc. RedEdge.MX. Available online: https://micasense.com/rededge-mx/ (accessed on 25 July 2020).

- Vanegas, F.; Bratanov, D.; Powell, K.; Weiss, J.; Gonzalez, F. A novel methodology for improving plant pest surveillance in vineyards and crops using UAV-based hyperspectral and spatial data. Sensors 2018, 18, 260.

- Nocerino, E.; Dubbini, M.; Menna, F.; Remondino, F.; Gattelli, M.; Covi, D. Geometric calibration and radiometric correction of the MAIA multispectral camera. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, 42, 149–156.

- Horstrand, P.; Guerra, R.; Rodriguez, A.; Diaz, M.; Lopez, S.; Lopez, J.F. A UAV Platform Based on a Hyperspectral Sensor for Image Capturing and On-Board Processing. IEEE Access 2019, 7, 66919–66938.

- SpectraPartners BV SPECIM AFX10. Available online: https://www.hyperspectralimaging.nl/products/afx10/ (accessed on 25 July 2020).

- Headwall Photonics Inc. Hyperspectral Sensors. Available online: https://www.headwallphotonics.com/hyperspectral-sensors (accessed on 25 July 2020).

- Ge, X.; Wang, J.; Ding, J.; Cao, X.; Zhang, Z.; Liu, J.; Li, X. Combining UAV-based hyperspectral imagery and machine learning algorithms for soil moisture content monitoring. PeerJ 2019, 7, e6926.

- Zhang, H.; Zhang, B.; Wei, Z.; Wang, C.; Huang, Q. Lightweight integrated solution for a UAV-borne hyperspectral imaging system. Remote Sens. 2020, 12, 657.

- FLIR Systems Inc. HD Dual-Sensor Thermal Camera for Drones Flir Duo® Pro R. Available online: https://www.flir.com/products/duo-pro-r/ (accessed on 6 August 2020).

- Yang, Y.; Lee, X. Four-band thermal mosaicking: A new method to process infrared thermal imagery of urban landscapes from UAV flights. Remote Sens. 2019, 11, 1365.

- FLIR Systems Inc. Radiometric Drone Thermal Camera Flir Vue Pro R. Available online: https://www.flir.asia/products/vue-pro-r/?model=436-0019-00S (accessed on 6 August 2020).

- Sagan, V.; Maimaitijiang, M.; Sidike, P.; Eblimit, K.; Peterson, K.T.; Hartling, S.; Esposito, F.; Khanal, K.; Newcomb, M.; Pauli, D.; et al. UAV-based high resolution thermal imaging for vegetation monitoring, and plant phenotyping using ICI 8640 P, FLIR Vue Pro R 640, and thermomap cameras. Remote Sens. 2019, 11, 330. [Google Scholar] [CrossRef]

- Workswell. Thermal Imaging System WIRIS Agro R. Available online: https://www.drone-thermal-camera.com/products/workswell-cwsi-crop-water-stress-index-camera/ (accessed on 29 July 2020).

- Lin, Y.C.; Cheng, Y.T.; Zhou, T.; Ravi, R.; Hasheminasab, S.M.; Flatt, J.E.; Troy, C.; Habib, A. Evaluation of UAV LiDAR for mapping coastal environments. Remote Sens. 2019, 11, 2893. [Google Scholar] [CrossRef]

- Velodyne Lidar. Drone/UAV: Leading Lidar Technology in the Air. Available online: https://velodynelidar.com/industries/drone-uav/ (accessed on 26 July 2020).

- RIEGL Laser Measurement Systems GmbH. Unmanned Laser Scanning. Available online: http://www.riegl.com/products/unmanned-scanning/ (accessed on 26 July 2020).

- Schirrmann, M.; Giebel, A.; Gleiniger, F.; Pflanz, M.; Lentschke, J.; Dammer, K.H. Monitoring agronomic parameters of winter wheat crops with low-cost UAV imagery. Remote Sens. 2016, 8, 706. [Google Scholar] [CrossRef]

- Zhou, X.; Zheng, H.B.; Xu, X.Q.; He, J.Y.; Ge, X.K.; Yao, X.; Cheng, T.; Zhu, Y.; Cao, W.X.; Tian, Y.C. Predicting grain yield in rice using multi-temporal vegetation indices from UAV-based multispectral and digital imagery. ISPRS J. Photogramm. Remote Sens. 2017, 130, 246–255. [Google Scholar] [CrossRef]

- Acorsi, M.G.; Abati Miranda, F.D.D.; Martello, M.; Smaniotto, D.A.; Sartor, L.R. Estimating biomass of black oat using UAV-based RGB imaging. Agronomy 2019, 9, 14. [Google Scholar] [CrossRef]

- Zhang, L.; Zhang, H.; Niu, Y.; Han, W. Mapping maize water stress based on UAV multispectral remote sensing. Remote Sens. 2019, 11, 605. [Google Scholar] [CrossRef]

- Song, Y.; Wang, J. Winter wheat canopy height extraction from UAV-based point cloud data with a moving cuboid filter. Remote Sens. 2019, 11, 22. [Google Scholar] [CrossRef]

- Hunt, E.R.; Dean Hively, W.; Fujikawa, S.J.; Linden, D.S.; Daughtry, C.S.T.; McCarty, G.W. Acquisition of NIR-green-blue digital photographs from unmanned aircraft for crop monitoring. Remote Sens. 2010, 2, 290–305. [Google Scholar] [CrossRef]

- Panday, U.S.; Shrestha, N.; Maharjan, S.; Pratihast, A.K.; Shahnawaz; Shrestha, K.L.; Aryal, J. Correlating the Plant Height of Wheat with Above-Ground Biomass and Crop Yield Using Drone Imagery and Crop Surface Model, A Case Study from Nepal. Drones 2020, 4, 28. [Google Scholar] [CrossRef]

- Tao, H.; Feng, H.; Xu, L.; Miao, M.; Yang, G.; Yang, X.; Fan, L. Estimation of the yield and plant height of winter wheat using UAV-based hyperspectral images. Sensors 2020, 20, 1231. [Google Scholar] [CrossRef]

- Reza, M.N.; Na, I.S.; Baek, S.W.; Lee, K.H. Rice yield estimation based on K-means clustering with graph-cut segmentation using low-altitude UAV images. Biosyst. Eng. 2019, 177, 109–121. [Google Scholar] [CrossRef]

- Lakshmi, J.V.N.; Hemanth, K.S.; Bharath, J. Optimizing Quality and Outputs by Improving Variable Rate Prescriptions in Agriculture using UAVs. Procedia Comput. Sci. 2020, 167, 1981–1990. [Google Scholar] [CrossRef]

- FAO. Water for Sustainable Food and Agriculture; FAO: Rome, Italy, 2017. [Google Scholar]

- Su, J.; Coombes, M.; Liu, C.; Guo, L.; Chen, W.H. Wheat Drought Assessment by Remote Sensing Imagery Using Unmanned Aerial Vehicle. In Proceedings of the Chinese Control Conference, Wuhan, China, 25–27 July 2018. 5p. [Google Scholar]

- Tilly, N.; Hoffmeister, D.; Cao, Q.; Huang, S.; Lenz-Wiedemann, V.; Miao, Y.; Bareth, G. Multitemporal crop surface models: Accurate plant height measurement and biomass estimation with terrestrial laser scanning in paddy rice. J. Appl. Remote Sens. 2014, 8, 083671. [Google Scholar] [CrossRef]

- Tilly, N.; Hoffmeister, D.; Cao, Q.; Lenz-Wiedemann, V.; Miao, Y.; Bareth, G. Transferability of Models for Estimating Paddy Rice Biomass from Spatial Plant Height Data. Agriculture 2015, 5, 538–560. [Google Scholar] [CrossRef]

- Tilly, N.; Aasen, H.; Bareth, G. Fusion of plant height and vegetation indices for the estimation of barley biomass. Remote Sens. 2015, 7, 11449–11480. [Google Scholar] [CrossRef]

- Rouse, J.W. Monitoring the Vernal Advancement and Retrogradation (Greenwave Effect) of Natural Vegetation; NASA: Washington, DC, USA, 1974. [Google Scholar]

- Fitzgerald, G.J.; Rodriguez, D.; Christensen, L.K.; Belford, R.; Sadras, V.O.; Clarke, T.R. Spectral and thermal sensing for nitrogen and water status in rainfed and irrigated wheat environments. Precis. Agric. 2006, 7, 223–248. [Google Scholar] [CrossRef]

- Tucker, C.J. Red and photographic infrared linear combinations for monitoring vegetation. Remote Sens. Environ. 1979, 8, 127–150. [Google Scholar] [CrossRef]

- Gitelson, A.A.; Kaufman, Y.J.; Merzlyak, M.N. Use of a green channel in remote sensing of global vegetation from EOS-MODIS. Remote Sens. Environ. 1996, 58, 289–298. [Google Scholar] [CrossRef]

- Jordan, C.F. Derivation of Leaf-Area Index from Quality of Light on the Forest Floor. Ecology 1969, 50, 663–666. [Google Scholar] [CrossRef]

- Huete, A.R. A soil-adjusted vegetation index (SAVI). Remote Sens. Environ. 1988, 25, 295–309. [Google Scholar] [CrossRef]

- Rondeaux, G.; Steven, M.; Baret, F. Optimization of soil-adjusted vegetation indices. Remote Sens. Environ. 1996, 55, 95–107. [Google Scholar] [CrossRef]

- Sripada, R.P.; Heiniger, R.W.; White, J.G.; Meijer, A.D. Aerial color infrared photography for determining early in-season nitrogen requirements in corn. Agron. J. 2006, 98, 968. [Google Scholar] [CrossRef]

- Cao, Q.; Miao, Y.; Wang, H.; Huang, S.; Cheng, S.; Khosla, R.; Jiang, R. Non-destructive estimation of rice plant nitrogen status with Crop Circle multispectral active canopy sensor. Field Crop. Res. 2013, 154, 133–144. [Google Scholar] [CrossRef]

- Huete, A.R.; Liu, H.Q.; Batchily, K.; Van Leeuwen, W. A comparison of vegetation indices over a global set of TM images for EOS-MODIS. Remote Sens. Environ. 1997, 59, 440–451. [Google Scholar] [CrossRef]

- Li, F.; Mistele, B.; Hu, Y.; Yue, X.; Yue, S.; Miao, Y.; Chen, X.; Cui, Z.; Meng, Q.; Schmidhalter, U. Remotely estimating aerial N status of phenologically differing winter wheat cultivars grown in contrasting climatic and geographic zones in China and Germany. Field Crop. Res. 2012, 138, 21–32. [Google Scholar] [CrossRef]

- Gitelson, A.A.; Gritz, Y.; Merzlyak, M.N. Relationships between leaf chlorophyll content and spectral reflectance and algorithms for non-destructive chlorophyll assessment in higher plant leaves. J. Plant Physiol. 2003, 160, 271–282. [Google Scholar] [CrossRef]

- Roujean, J.L.; Breon, F.M. Estimating PAR absorbed by vegetation from bidirectional reflectance measurements. Remote Sens. Environ. 1995, 51, 375–384. [Google Scholar] [CrossRef]

- Hunt, E.R.; Cavigelli, M.; Daughtry, C.S.T.; McMurtrey, J.E.; Walthall, C.L. Evaluation of digital photography from model aircraft for remote sensing of crop biomass and nitrogen status. Precis. Agric. 2005, 6, 359–378. [Google Scholar] [CrossRef]

- Meyer, G.E.; Neto, J.C. Verification of color vegetation indices for automated crop imaging applications. Comput. Electron. Agric. 2008, 63, 282–293. [Google Scholar] [CrossRef]

- Gitelson, A.A.; Kaufman, Y.J.; Stark, R.; Rundquist, D. Novel algorithms for remote estimation of vegetation fraction. Remote Sens. Environ. 2002, 80, 76–87. [Google Scholar] [CrossRef]

- Louhaichi, M.; Borman, M.M.; Johnson, D.E. Spatially located platform and aerial photography for documentation of grazing impacts on wheat. Geocarto Int. 2001, 16, 65–70. [Google Scholar] [CrossRef]

- Kataoka, T.; Kaneko, T.; Okamoto, H.; Hata, S. Crop growth estimation system using machine vision. In Proceedings of the IEEE/ASME International Conference on Advanced Intelligent Mechatronics, Kobe, Japan, 20–24 July 2003. [Google Scholar]

- Hague, T.; Tillett, N.D.; Wheeler, H. Automated crop and weed monitoring in widely spaced cereals. Precis. Agric. 2006, 7, 21–32. [Google Scholar] [CrossRef]

- Karthikeyan, L.; Chawla, I.; Mishra, A.K. A review of remote sensing applications in agriculture for food security: Crop growth and yield, irrigation, and crop losses. J. Hydrol. 2020, 586, 124905. [Google Scholar] [CrossRef]

- Chew, R.; Rineer, J.; Beach, R.; O’Neil, M.; Ujeneza, N.; Lapidus, D.; Miano, T.; Hegarty-Craver, M.; Polly, J.; Temple, D.S. Deep Neural Networks and Transfer Learning for Food Crop Identification in UAV Images. Drones 2020, 4, 7. [Google Scholar] [CrossRef]

- Fu, Z.; Jiang, J.; Gao, Y.; Krienke, B.; Wang, M.; Zhong, K.; Cao, Q.; Tian, Y.; Zhu, Y.; Cao, W.; et al. Wheat growth monitoring and yield estimation based on multi-rotor unmanned aerial vehicle. Remote Sens. 2020, 12, 508. [Google Scholar] [CrossRef]

- Mountrakis, G.; Im, J.; Ogole, C. Support vector machines in remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2011, 66, 247–259. [Google Scholar] [CrossRef]

- Belgiu, M.; Drăguţ, L. Random forest in remote sensing: A review of applications and future directions. ISPRS J. Photogramm. Remote Sens. 2016, 114, 24–31. [Google Scholar] [CrossRef]

- Shi, L.; Duan, Q.; Ma, X.; Weng, M. The research of support vector machine in agricultural data classification. In Proceedings of the International Conference on Computer and Computing Technologies in Agriculture, Beijing, China, 29–31 October 2011; Daoliang, L., Chen, Y., Eds.; Springer: Beijing, China, 2012. 5p. [Google Scholar]

- Maimaitijiang, M.; Sagan, V.; Sidike, P.; Daloye, A.M. Crop Monitoring Using Satellite/UAV Data Fusion and Machine Learning. Remote Sens. 2020, 12, 1357. [Google Scholar] [CrossRef]

- Shrestha, R.; Zevenbergen, J.; Panday, U.S.; Awasthi, B.; Karki, S. Revisiting the current UAV regulations in Nepal: A step towards legal dimension for UAVs efficient application. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, 42, 107–114. [Google Scholar] [CrossRef]

- Hämmerle, M.; Höfle, B. Effects of reduced terrestrial LiDAR point density on high-resolution grain crop surface models in precision agriculture. Sensors 2014, 14, 24212–24230. [Google Scholar] [CrossRef]

- Mlambo, R.; Woodhouse, I.H.; Gerard, F.; Anderson, K. Structure from Motion (SfM) Photogrammetry with Drone Data: A Low Cost Method for Monitoring Greenhouse Gas Emissions from Forests in Developing Countries. Forests 2017, 8, 68. [Google Scholar] [CrossRef]

- ITU. Indicator 9.C.1: Proportion of Population Covered by a Mobile Network, by Technology. Available online: https://www.itu.int/en/ITU-D/Statistics/Pages/SDGs-ITU-ICT-indicators.aspx (accessed on 1 July 2020).

- Jin, X.; Kumar, L.; Li, Z.; Feng, H.; Xu, X.; Yang, G.; Wang, J. A review of data assimilation of remote sensing and crop models. Eur. J. Agron. 2018, 92, 141–152. [Google Scholar] [CrossRef]

- Neupane, H.; Adhikari, M.; Rauniyar, P.B. Farmers’ perception on role of cooperatives in agriculture practices of major cereal crops in Western Terai of Nepal. J. Inst. Agric. Anim. Sci. 2015, 33–34, 177–186. [Google Scholar] [CrossRef]

- Tamiminia, H.; Salehi, B.; Mahdianpari, M.; Quackenbush, L.; Adeli, S.; Brisco, B. Google Earth Engine for geo-big data applications: A meta-analysis and systematic review. ISPRS J. Photogramm. Remote Sens. 2020, 164, 152–170. [Google Scholar] [CrossRef]

- Kotas, C.; Naughton, T.; Imam, N. A comparison of Amazon Web Services and Microsoft Azure cloud platforms for high performance computing. In Proceedings of the 2018 IEEE International Conference on Consumer Electronics, Las Vegas, NV, USA, 12–14 January 2018. [Google Scholar]

- Joshi, E.; Sasode, D.S.; Chouhan, N. Revolution of Indian Agriculture Through Drone Technology. Biot. Res. Today 2020, 2, 174–176. [Google Scholar]

- Honkavaara, E.; Saari, H.; Kaivosoja, J.; Polonen, I.; Hakala, T.; Litkey, P.; Makynen, J.; Pesonen, L. Processing and Assessment of Spectrometric, Stereoscopic Imagery Collected Using a Lightweight UAV Spectral Camera for Precision Agriculture. Remote Sens. 2013, 5, 5006–5039. [Google Scholar] [CrossRef]

- Tang, Z.; Wang, H.; Li, X.; Li, X.; Cai, W.; Han, C. An object-based approach for mapping crop coverage using multiscale weighted and machine learning methods. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 1700–1713. [Google Scholar] [CrossRef]

- Brammer, J.R.; Brunet, N.D.; Burton, A.C.; Cuerrier, A.; Danielsen, F.; Dewan, K.; Herrmann, T.M.; Jackson, M.V.; Kennett, R.; Larocque, G.; et al. The role of digital data entry in participatory environmental monitoring. Conserv. Biol. 2016, 30, 1277–1287. [Google Scholar] [CrossRef]

- Pratihast, A.K.; Herold, M.; Avitabile, V.; De Bruin, S.; Bartholomeus, H.; Souza, C.M.; Ribbe, L. Mobile devices for community-based REDD+ monitoring: A case study for central Vietnam. Sensors (Switzerland) 2013, 13, 21–38. [Google Scholar] [CrossRef]

- Pratihast, A.K.; Herold, M.; De Sy, V.; Murdiyarso, D.; Skutsch, M. Linking community-based and national REDD+ monitoring: A review of the potential. Carbon Manag. 2013, 4, 91–104. [Google Scholar] [CrossRef]

| Type | Pros | Cons | Common Applications in Geospatial Domain | Indicative Price Range |
|---|---|---|---|---|
| Multi-rotor | | | | Low–Medium |
| Fixed-wing | | | | Medium–High |

| Application Area | Aims | Common Sensor Type on Drone | Ref. |
|---|---|---|---|
| Survey and mapping | | RGB and multispectral | [49,50,52,53,54] |
| Agriculture | | RGB, multispectral, thermal, and hyperspectral | [68,70,73,75,83,88,89] |
| Forest and wildlife conservation | | RGB, multispectral, hyperspectral, thermal, and LiDAR | [55,56,57,58,59] |
| Cultural heritage documentation | | RGB, multispectral, and thermal | [95,97,99] |
| Disaster management | | RGB | [90,91] |

| Spectral Category | Sensor Type | Sensor | Color Space/Spectral Band | Carrier Drone | Ref. |
|---|---|---|---|---|---|
| RGB | 1″ CMOS | DJI FC6310 | sRGB | DJI Phantoms | [66,110] |
| Multispectral | CMOS | SlantRange 3p/4p/4p+ | 470–850 nm (6 bands) | DJI M100 | [111,112,113] |
| Multispectral | CMOS | MicaSense RedEdge | 475–842 nm (5 bands) | DJI M100, S800 EVO | [114,115,116] |
| Multispectral | 9 CMOS monochromatic sensors | MAIA S2 | Same wavelength intervals as Sentinel-2 (9 bands) | - | [107,117] |
| Hyperspectral | - | SPECIM AFX10 | 400–1000 nm (224 bands) | DJI M600 | [118,119] |
| Hyperspectral | CMOS | Headwall Nano-Hyperspec VNIR | 400–1000 nm (270 bands) | S800 EVO | [105,116,120,121] |
| Hyperspectral | CCD/sCMOS | Headwall Micro-Hyperspec VNIR A/E-Series | 400–1000 nm (324/369 bands) | DJI M600 Pro | [120,122] |
| Thermal | CMOS | FLIR Tau 2 | 7.5–13.5 µm | S800 EVO, modified Hexacopter | [59,67] |
| Thermal | - | FLIR Duo Pro R | 7.5–13.5 µm | DJI Phantom 4 Pro | [123,124] |
| Thermal | - | FLIR Vue Pro R | 7.5–13.5 µm | DJI M600 Pro, DJI S1000+ | [125,126] |
| Thermal | 1/3″ sensor | WIRIS Agro R | Long-wavelength infrared | DJI M600 Pro, DJI S1000 | [127] |
| LiDAR | - | Velodyne VLP-32C | 32 channels | DJI M600 Pro | [128,129] |
| LiDAR | - | RIEGL VUX-1UAV | - | Hexacopter, RiCopter | [106,130] |
| LiDAR | - | Hesai Pandar40 | 40 channels | DJI M200 series, LiAir 200 | [105] |

| Application | Crop | Indices/Variable | Methods | R²/Accuracy | Ref. |
|---|---|---|---|---|---|
| Growth monitoring | Wheat | Coverage, ExG, RED, BG, RG, RB, and plant height | LR | 0.70 to 0.97 | [131] |
| Yield estimation | Rice | VARI, NDVI | LR, MLR, and Log | 0.71, 0.76 | [132] |
| Biomass estimation | Maize | NGRDI, ExG, ExGR, CIVE, and VEG | LR, MLR, and ER | 0.59–0.82 | [66] |
| Biomass estimation | Barley | Plant height | ER | 0.31–0.72 | [17] |
| Biomass estimation | Black oat | Plant height | LR and ER | 0.69–0.94 | [133] |
| Fertilizer management | Rice | 39 variables | LR | ≥ 0.8 (N uptake and biomass) | [79] |
| Water stress assessment | Maize | TCARI/SAVI and TCARI/RDVI | LR | 0.80 and 0.81 (with CWSI) | [134] |
| Weeds, pest, and disease management | Oat | - | RF | 87–89% | [62] |
| Weeds, pest, and disease management | Wheat | RVI, NDVI, and OSAVI | RF | 89% | [63] |
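
Most rows in the table above pair a linear regression (LR) with a coefficient of determination (R²). As a minimal sketch of how such a value could be obtained, the snippet below fits an ordinary least-squares line between plant height and above-ground biomass and reports R². The function names and the five sample values are hypothetical and purely illustrative; they are not data from any of the cited studies.

```python
from statistics import mean


def fit_linear(x, y):
    """Ordinary least-squares fit of y ≈ slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    y_bar = mean(y_true)
    ss_res = sum((yt - yp) ** 2 for yt, yp in zip(y_true, y_pred))
    ss_tot = sum((yt - y_bar) ** 2 for yt in y_true)
    return 1.0 - ss_res / ss_tot


# Synthetic values for illustration only (assumed, not measured).
plant_height_m = [0.20, 0.35, 0.50, 0.65, 0.80]
biomass_t_ha = [1.1, 2.0, 2.8, 4.1, 4.9]

slope, intercept = fit_linear(plant_height_m, biomass_t_ha)
predicted = [slope * h + intercept for h in plant_height_m]
r2 = r_squared(biomass_t_ha, predicted)
print(f"biomass ≈ {slope:.2f} * height + {intercept:.2f}, R² = {r2:.3f}")
```

In practice, R² would normally be reported on held-out plots rather than on the plots used for fitting, which is one reason values from different studies in the table are not directly comparable.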

| Category | Name | Abbr. | Formula | Ref. |
|---|---|---|---|---|
| Vegetation index | Normalized Difference Vegetation Index | NDVI | | [146] |
| Vegetation index | Normalized Difference Red Edge Index | NDRE | | [147] |
| Vegetation index | Transformed NDVI | TNDVI | | [148] |
| Vegetation index | Green NDVI | gNDVI | | [149] |
| Vegetation index | Ratio Vegetation Index | RVI (λ1, λ2) | | [150] |
| Vegetation index | Difference Vegetation Index | DVI (λ1, λ2) | | [150] |
| Vegetation index | Green DVI | gDVI | | [148] |
| Vegetation index | Soil Adjusted Vegetation Index | SAVI | | [151] |
| Vegetation index | Optimized Soil Adjusted Vegetation Index | OSAVI | | [152] |
| Vegetation index | Green SAVI | GSAVI | | [153] |
| Vegetation index | Red-Edge SAVI | RESAVI | | [154] |
| Vegetation index | Modified Enhanced VI | MEVI | | [154] |
| Vegetation index | Enhanced Vegetation Index | EVI | | [155] |
| Vegetation index | Canopy Chlorophyll Content Index | CCCI | | [156] |
| Vegetation index | Green Chlorophyll Index | CIgreen | | [157] |
| Vegetation index | Renormalized Difference Vegetation Index | RDVI | | [158] |
| Vegetation index | Modified GSAVI | MGSAVI | | [154] |
| Vegetation index | Red-Edge wide dynamic range VI | REWDRVI | | [154] |
| Vegetation index | Green RDVI | gRDVI | | |
| Vegetation index | Transformed Chlorophyll Absorption Index | TCARI | … (RE⁄R)] | [134] |
| Color index | Normalized Difference Green/Red Index | NGRDI | | [159] |
| Color index | Excess Green Vegetation Index | ExG | | [131] |
| Color index | Excess Red Vegetation Index | ExR | | [160] |
| Color index | Excess Green Minus Red Vegetation Index | ExGR | | [160] |
| Color index | Visual Atmospheric Resistance Index | VARI | | [161] |
| Color index | Green Leaf Index | GLI | | [162] |
| Color index | Color Index of Vegetation | CIVE | | [163] |
| Color index | Vegetation Index | VEG | …, a = 0.667 | [164] |
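
The formula column of the table above did not survive in this copy. As a hedged illustration, the sketch below computes a handful of the listed indices from per-band reflectance using the definitions most commonly given in the remote sensing literature; these are assumptions and may differ in detail from the exact forms the authors tabulated. Band names (`nir`, `red_edge`, `red`, `green`, `blue`), the function names, and the sample pixel values are all hypothetical.

```python
def normalized_difference(a, b):
    """Generic normalized difference (a - b) / (a + b)."""
    return (a - b) / (a + b)


def ndvi(nir, red):
    return normalized_difference(nir, red)


def ndre(nir, red_edge):
    return normalized_difference(nir, red_edge)


def gndvi(nir, green):
    return normalized_difference(nir, green)


def savi(nir, red, soil_factor=0.5):
    # soil_factor (often written L) = 0.5 is the commonly used default.
    return (1 + soil_factor) * (nir - red) / (nir + red + soil_factor)


def ngrdi(green, red):
    return normalized_difference(green, red)


def exg(red, green, blue):
    # Uses chromatic coordinates, i.e. each band divided by the RGB sum.
    total = red + green + blue
    r, g, b = red / total, green / total, blue / total
    return 2 * g - r - b


def vari(red, green, blue):
    return (green - red) / (green + red - blue)


def gli(red, green, blue):
    return (2 * green - red - blue) / (2 * green + red + blue)


# Illustrative reflectance values for one vegetated pixel (assumed).
pixel = {"nir": 0.50, "red_edge": 0.30, "red": 0.08, "green": 0.12, "blue": 0.05}

print("NDVI ", round(ndvi(pixel["nir"], pixel["red"]), 3))
print("NDRE ", round(ndre(pixel["nir"], pixel["red_edge"]), 3))
print("gNDVI", round(gndvi(pixel["nir"], pixel["green"]), 3))
print("SAVI ", round(savi(pixel["nir"], pixel["red"]), 3))
print("NGRDI", round(ngrdi(pixel["green"], pixel["red"]), 3))
print("ExG  ", round(exg(pixel["red"], pixel["green"], pixel["blue"]), 3))
print("VARI ", round(vari(pixel["red"], pixel["green"], pixel["blue"]), 3))
print("GLI  ", round(gli(pixel["red"], pixel["green"], pixel["blue"]), 3))
```

The color indices (NGRDI, ExG, VARI, GLI) need only RGB bands, which is why they suit the low-cost RGB sensors listed earlier, whereas NDVI, NDRE, gNDVI, and SAVI require a near-infrared or red-edge band.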

| Application | Inputs | Est. Param. | Method/Algorithm | Target Crop | Results | Ref. |
|---|---|---|---|---|---|---|
| Crop classification | Drone RGB images | - | DNN | Maize, Banana, and Legume | (Accuracy, Kappa) Maize: (0.93, 0.85); Banana: (0.98, 0.95); Legume: (0.95, 0.46) | [166] |
| Weeds identification | Spectral, textural, and geometric features | Soil, weeds, and maize | RF | Maize | Overall Accuracy: 0.945; Kappa: 0.912 | [86] |
| Disease detection | Spatial and spectral info. to DCNN; only spatial info. to RF | Yellow rust | DCNN, RF | Wheat | Overall Accuracy: DCNN: 0.85; RF: 0.77 | [64] |
| Water-stress assessment | DI, RI, NDI, and PI | Soil moisture content (SMC) | RF, ELM | Wheat | (R², RMSEP, RPD) RF: (0.907, 1.477, 3.396); ELM: (0.820, 1.984, 2.322) | [121] |
| Fertilizer estimation | 36 spectral features from hyperspectral sensor, 13 VIs/CIs, and 16 3D features | Nitrogen | RF | Barley | PCC: 0.97%; RMSE: 21.6% | [74] |
| Biomass and yield estimation | 36 spectral features from hyperspectral sensor, 13 VIs/CIs, and 16 3D features | Biomass | RF | Barley | PCC: 0.95%; RMSE: 33.3% | [74] |
| Biomass and yield estimation | NDVI, NDRE, OSAVI, and CCCI | Yield | PLSR, ANN, RF | Wheat | (R², RRMSE) PLSR: (0.7667, 0.1353); ANN: (0.7701, 0.1126); RF: (0.7800, 0.1030) | [167] |
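
The results column above mixes classification metrics (overall accuracy, Cohen's kappa) with regression metrics (R², RRMSE). The sketch below shows one way these could be computed, assuming a square confusion matrix with reference classes in rows and predicted classes in columns, and assuming RRMSE is defined as RMSE divided by the mean of the observed values (definitions vary between studies). All function names and numbers are hypothetical and illustrative only.

```python
from math import sqrt


def overall_accuracy(cm):
    """Share of samples on the diagonal of a square confusion matrix."""
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / total


def cohens_kappa(cm):
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    p_o = overall_accuracy(cm)
    # Chance agreement from the products of row and column totals.
    p_e = sum(
        sum(cm[i]) * sum(row[i] for row in cm) for i in range(n)
    ) / total ** 2
    return (p_o - p_e) / (1 - p_e)


def rrmse(observed, predicted):
    """RMSE divided by the mean of the observations (assumed definition)."""
    n = len(observed)
    rmse = sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / n)
    return rmse / (sum(observed) / n)


# Synthetic two-class confusion matrix (rows: reference, columns: predicted).
confusion = [[45, 5],
             [10, 40]]
print("overall accuracy:", overall_accuracy(confusion))
print("kappa:", round(cohens_kappa(confusion), 3))

# Synthetic yields in t/ha, for illustration only.
print("RRMSE:", round(rrmse([4.0, 6.0], [5.0, 5.0]), 3))
```

Kappa is worth reporting alongside accuracy because accuracy alone can look high when one class dominates; the legume entry in the table (accuracy 0.95, kappa 0.46) may reflect that kind of imbalance.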

| Opportunities | Challenges |
|---|---|
| | |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Panday, U.S.; Pratihast, A.K.; Aryal, J.; Kayastha, R.B. A Review on Drone-Based Data Solutions for Cereal Crops. Drones 2020, 4, 41. https://doi.org/10.3390/drones4030041
