UAVs in Support of Algal Bloom Research: A Review of Current Applications and Future Opportunities

Abstract

1. Introduction

Algal Blooms

2. Methods

3. Results

3.1. Applications of Unmanned Aerial Vehicles (UAVs) in Algal Bloom Research

3.1.1. Benefits of UAVs in Algal Bloom Research

3.1.2. Factors to Consider for UAV Algal Bloom Detection

3.2. UAV Platforms

3.3. Sensors and Cameras

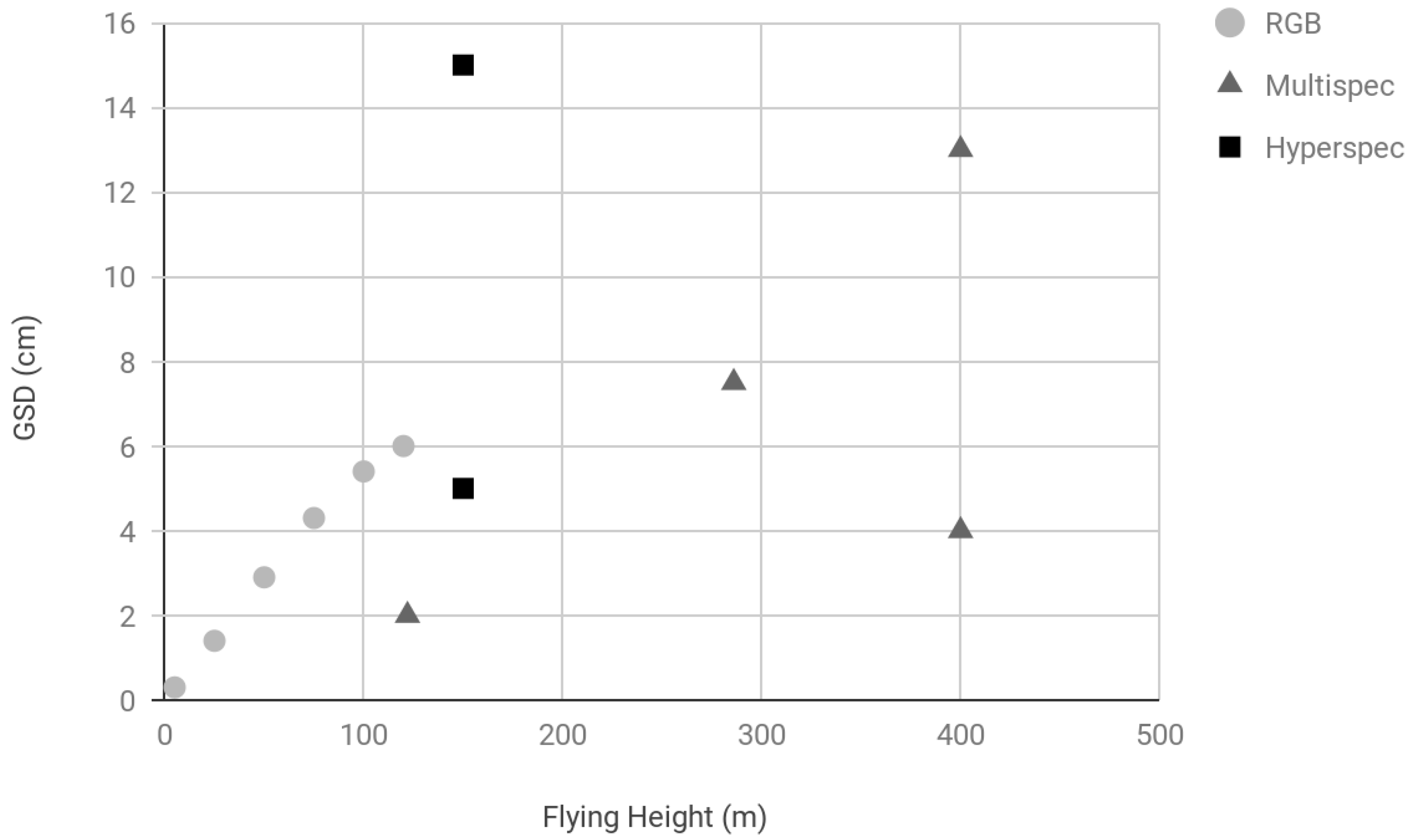

3.4. Ground Sampling Distance (GSD)

3.5. Algal Spectral Indices

3.6. Software

3.7. Calibration Techniques

3.8. Validation Techniques

3.9. Quantitative Analyses

3.10. Barriers and Challenges

4. Discussion

4.1. Future Opportunities

4.1.1. Hyperspectral UAVs

4.1.2. Integrated Methods for Algal Bloom Research

4.1.3. Standardization and Interoperability

4.1.4. UAV Regulation

4.1.5. Technical Challenges

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Van der Merwe, D.; Price, K.P. Harmful algal bloom characterization at ultra-high spatial and temporal resolution using small unmanned aircraft systems. Toxins 2015, 7, 1065–1078.

2. Klemas, V. Remote Sensing of Algal Blooms: An Overview with Case Studies. J. Coast. Res. 2012, 28, 34–43.

3. Lee, Z.; Marra, J.; Perry, M.J.; Kahru, M. Estimating oceanic primary productivity from ocean color remote sensing: A strategic assessment. J. Mar. Syst. 2015, 149, 50–59.

4. Blondeau-Patissier, D.; Gower, J.F.R.; Dekker, A.G.; Phinn, S.R.; Brando, V.E. A review of ocean color remote sensing methods and statistical techniques for the detection, mapping and analysis of phytoplankton blooms in coastal and open oceans. Prog. Oceanogr. 2014, 123, 123–144.

5. Hallegraeff, G.M. Harmful algal blooms: A global overview. In Manual on Harmful Marine Microalgae; Monographs on Oceanographic Methodology Series; Hallegraeff, G.M., Anderson, D.M., Cembella, A.D., Eds.; UNESCO Publishing: Paris, France, 2003; Volume 11, pp. 25–49.

6. Kirkpatrick, B.; Fleming, L.E.; Squicciarini, D.; Backer, L.C.; Clark, R.; Abraham, W.; Benson, J.; Cheng, Y.S.; Johnson, D.; Pierce, R.; et al. Literature Review of Florida Red Tide: Implications for Human Health Effects. Harmful Algae 2004, 3, 99–115.

7. Smayda, T.J. Bloom dynamics: Physiology, behavior, trophic effects. Limnol. Oceanogr. 1997, 42, 1132–1136.

8. Moore, S.K.; Trainer, V.L.; Mantua, N.J.; Parker, M.S.; Laws, E.A.; Backer, L.C.; Fleming, L.E. Impacts of climate variability and future climate change on harmful algal blooms and human health. Environ. Health 2008, 7 (Suppl. 2), S4.

9. Gobler, C.J.; Doherty, O.M.; Hattenrath-Lehmann, T.K.; Griffith, A.W.; Kang, Y.; Litaker, R.W. Ocean warming since 1982 has expanded the niche of toxic algal blooms in the North Atlantic and North Pacific oceans. Proc. Natl. Acad. Sci. USA 2017, 114, 4975–4980.

10. Jochens, A.E.; Malone, T.C.; Stumpf, R.P.; Hickey, B.M.; Carter, M.; Morrison, R.; Dyble, J.; Jones, B.; Trainer, V.L. Integrated Ocean Observing System in Support of Forecasting Harmful Algal Blooms. Mar. Technol. Soc. J. 2010, 44, 99–121.

11. Kutser, T. Passive optical remote sensing of cyanobacteria and other intense phytoplankton blooms in coastal and inland waters. Int. J. Remote Sens. 2009, 30, 4401–4425.

12. Longhurst, A. Seasonal cycles of pelagic production and consumption. Prog. Oceanogr. 1995, 36, 77–167.

13. Shen, L.; Xu, H.; Guo, X. Satellite remote sensing of harmful algal blooms (HABs) and a potential synthesized framework. Sensors 2012, 12, 7778–7803.

14. Hook, S.J.; Myers, J.J.; Thome, K.J.; Fitzgerald, M.; Kahle, A.B. The MODIS/ASTER airborne simulator (MASTER)—A new instrument for earth science studies. Remote Sens. Environ. 2001, 76, 93–102.

15. Shang, S.; Lee, Z.; Lin, G.; Hu, C.; Shi, L.; Zhang, Y.; Li, X.; Wu, J.; Yan, J. Sensing an intense phytoplankton bloom in the western Taiwan Strait from radiometric measurements on a UAV. Remote Sens. Environ. 2017, 198, 85–94.

16. Manfreda, S.; McCabe, M.F.; Miller, P.E.; Lucas, R.; Pajuelo Madrigal, V.; Mallinis, G.; Ben Dor, E.; Helman, D.; Estes, L.; Ciraolo, G.; et al. On the Use of Unmanned Aerial Systems for Environmental Monitoring. Remote Sens. 2018, 10, 641.

17. DeBell, L.; Anderson, K.; Brazier, R.E.; King, N.; Jones, L. Water resource management at catchment scales using lightweight UAVs: Current capabilities and future perspectives. J. Unmanned Veh. Syst. 2015, 4, 7–30.

18. Honkavaara, E.; Hakala, T.; Kirjasniemi, J.; Lindfors, A.; Mäkynen, J.; Nurminen, K.; Ruokokoski, P.; Saari, H.; Markelin, L. New light-weight stereosopic spectrometric airborne imaging technology for high-resolution environmental remote sensing case studies in water quality mapping. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2013, 1, W1.

19. Jang, S.W.; Yoon, H.J.; Kwak, S.N.; Sohn, B.Y.; Kim, S.G.; Kim, D.H. Algal Bloom Monitoring using UAVs Imagery. Adv. Sci. Technol. Lett. 2016, 138, 30–33.

20. Kim, H.-M.; Yoon, H.-J.; Jang, S.W.; Kwak, S.N.; Sohn, B.Y.; Kim, S.G.; Kim, D.H. Application of Unmanned Aerial Vehicle Imagery for Algal Bloom Monitoring in River Basin. Int. J. Control Autom. 2016, 9, 203–220.

21. Lyu, P.; Malang, Y.; Liu, H.H.T.; Lai, J.; Liu, J.; Jiang, B.; Qu, M.; Anderson, S.; Lefebvre, D.D.; Wang, Y. Autonomous cyanobacterial harmful algal blooms monitoring using multirotor UAS. Int. J. Remote Sens. 2017, 38, 2818–2843.

22. Pölönen, I.; Puupponen, H.H.; Honkavaara, E.; Lindfors, A.; Saari, H.; Markelin, L.; Hakala, T.; Nurminen, K. UAV-based hyperspectral monitoring of small freshwater area. Proc. SPIE 2014, 9239, 923912.

23. Goldberg, S.J.; Kirby, J.T.; Licht, S.C. Applications of Aerial Multi-Spectral Imagery for Algal Bloom Monitoring in Rhode Island; SURFO Technical Report No. 16-01; University of Rhode Island: South Kingstown, RI, USA, 2016; p. 28.

24. Aguirre-Gómez, R.; Salmerón-García, O.; Gómez-Rodríguez, G.; Peralta-Higuera, A. Use of unmanned aerial vehicles and remote sensors in urban lakes studies in Mexico. Int. J. Remote Sens. 2017, 38, 2771–2779.

25. Flynn, K.F.; Chapra, S.C. Remote Sensing of Submerged Aquatic Vegetation in a Shallow Non-Turbid River Using an Unmanned Aerial Vehicle. Remote Sens. 2014, 6, 12815–12836.

26. Su, T.-C.; Chou, H.-T. Application of Multispectral Sensors Carried on Unmanned Aerial Vehicle (UAV) to Trophic State Mapping of Small Reservoirs: A Case Study of Tain-Pu Reservoir in Kinmen, Taiwan. Remote Sens. 2015, 7, 10078–10097.

27. Xu, F.; Gao, Z.; Jiang, X.; Shang, W.; Ning, J.; Song, D.; Ai, J. A UAV and S2A data-based estimation of the initial biomass of green algae in the South Yellow Sea. Mar. Pollut. Bull. 2018, 128, 408–414.

28. Bollard-Breen, B.; Brooks, J.D.; Jones, M.R.L.; Robertson, J.; Betschart, S.; Kung, O.; Craig Cary, S.; Lee, C.K.; Pointing, S.B. Application of an unmanned aerial vehicle in spatial mapping of terrestrial biology and human disturbance in the McMurdo Dry Valleys, East Antarctica. Polar Biol. 2015, 38, 573–578.

29. Koparan, C.; Koc, A.; Privette, C.; Sawyer, C. In Situ Water Quality Measurements Using an Unmanned Aerial Vehicle (UAV) System. Water 2018, 10, 264.

30. Kutser, T.; Metsamaa, L.; Strömbeck, N.; Vahtmäe, E. Monitoring cyanobacterial blooms by satellite remote sensing. Estuar. Coast. Shelf Sci. 2006, 67, 303–312.

31. Berni, J.A.J.; Zarco-Tejada, P.J.; Suárez Barranco, M.D.; Fereres Castiel, E. Thermal and narrow-band multispectral remote sensing for vegetation monitoring from an unmanned aerial vehicle. IEEE Trans. Geosci. Remote Sens. 2009, 47, 722–738.

32. Liu, S.; Zhang, C.; Zhang, Y.; Wang, T.; Zhao, A.; Zhou, T.; Jia, X. Miniaturized spectral imaging for environment surveillance based on UAV platform. Proc. SPIE 2017, 10461, 104611K.

33. Chung, M.; Detweiler, C.; Hamilton, M.; Higgins, J.; Ore, J.-P.; Thompson, S. Obtaining the Thermal Structure of Lakes from the Air. Water 2015, 7, 6467–6482.

34. Rhee, D.S.; Kim, Y.D.; Kang, B.; Kim, D. Applications of unmanned aerial vehicles in fluvial remote sensing: An overview of recent achievements. KSCE J. Civ. Eng. 2018, 22, 588–602.

35. Agisoft PhotoScan. Available online: http://www.agisoft.com/ (accessed on 7 August 2018).

36. Mission Planner. Available online: http://ardupilot.org/planner/ (accessed on 7 August 2018).

37. Pix4D. Available online: https://pix4d.com/ (accessed on 7 August 2018).

38. DroneDeploy. Available online: https://www.dronedeploy.com/ (accessed on 7 August 2018).

39. ESRI Esri GIS Products. Available online: https://www.esri.com/en-us/arcgis/products/index (accessed on 7 August 2018).

40. ENVI. Available online: https://www.harrisgeospatial.com/SoftwareTechnology/ENVI.aspx (accessed on 7 August 2018).

41. MATLAB. Available online: https://www.mathworks.com/products/matlab.html (accessed on 7 August 2018).

42. Kolor|Autopano. Available online: http://www.kolor.com/fr/autopano/ (accessed on 7 August 2018).

43. Tetracam PixelWrench2. Available online: http://www.tetracam.com/Products_PixelWrench2.htm (accessed on 7 August 2018).

44. Sathyendranath, S.; Cota, G.; Stuart, V.; Maass, H.; Platt, T. Remote sensing of phytoplankton pigments: A comparison of empirical and theoretical approaches. Int. J. Remote Sens. 2001, 22, 249–273.

45. Anderson, K.; Gaston, K.J. Lightweight unmanned aerial vehicles will revolutionize spatial ecology. Front. Ecol. Environ. 2013, 11, 138–146.

46. Jung, S.; Cho, H.; Kim, D.; Kim, K.; Han, J.I.; Myung, H. Development of Algal Bloom Removal System Using Unmanned Aerial Vehicle and Surface Vehicle. IEEE Access 2017, 5, 22166–22176.

47. Zingone, A.; Oksfeldt Enevoldsen, H. The diversity of harmful algal blooms: A challenge for science and management. Ocean Coast. Manag. 2000, 43, 725–748.

48. Kudela, R.M.; Palacios, S.L.; Austerberry, D.C.; Accorsi, E.K.; Guild, L.S.; Torres-Perez, J. Application of hyperspectral remote sensing to cyanobacterial blooms in inland waters. Remote Sens. Environ. 2015, 167, 196–205.

49. Zomer, R.J.; Trabucco, A.; Ustin, S.L. Building spectral libraries for wetlands land cover classification and hyperspectral remote sensing. J. Environ. Manag. 2009, 90, 2170–2177.

50. Hallegraeff, G.M. Ocean climate change, phytoplankton community responses, and harmful algal blooms: A formidable predictive challenge. J. Phycol. 2010, 46, 220–235.

51. Hogan, S.D.; Kelly, M.; Stark, B.; Chen, Y. Unmanned aerial systems for agriculture and natural resources. Calif. Agric. 2017, 71, 5–14.

52. Duffy, J.P.; Cunliffe, A.M.; DeBell, L.; Sandbrook, C.; Wich, S.A.; Shutler, J.D.; Myers-Smith, I.H.; Varela, M.R.; Anderson, K. Location, location, location: Considerations when using lightweight drones in challenging environments. Remote Sens. Ecol. Conserv. 2018, 4, 7–19.

53. Chirayath, V.; Earle, S.A. Drones that see through waves—Preliminary results from airborne fluid lensing for centimetre-scale aquatic conservation. Aquat. Conserv. 2016, 26, 237–250.

54. Dietrich, J.T. Riverscape mapping with helicopter-based Structure-from-Motion photogrammetry. Geomorphology 2016, 252, 144–157.

55. Brown, A.; Carter, D. Geolocation of Unmanned Aerial Vehicles in GPS-Degraded Environments. In Proceedings of the AIAA Infotech@Aerospace Conference and Exhibit, Arlington, VA, USA, 26–29 September 2005.

56. Gerke, M.; Przybilla, H.-J. Accuracy Analysis of Photogrammetric UAV Image Blocks: Influence of Onboard RTK-GNSS and Cross Flight Patterns. Photogrammetrie Fernerkundung Geoinformation 2016, 2016, 17–30.

57. Levy, J.; Hunter, C.; Lukacazyk, T.; Franklin, E.C. Assessing the spatial distribution of coral bleaching using small unmanned aerial systems. Coral Reefs 2018, 37, 373–387.

58. Hu, C. A novel ocean color index to detect floating algae in the global oceans. Remote Sens. Environ. 2009, 113, 2118–2129.

59. Power, M.; Lowe, R.; Furey, P.; Welter, J.; Limm, M.; Finlay, J.; Bode, C.; Chang, S.; Goodrich, M.; Sculley, J. Algal mats and insect emergence in rivers under Mediterranean climates: Towards photogrammetric surveillance. Freshw. Biol. 2009, 54, 2101–2115.

| Target, Location | UAV Platform | Sensor(s) | Parameters and Indices | Validation | Challenges | Example Reference |
|---|---|---|---|---|---|---|
| Lake cyanobacteria, Chapultepec Park, Mexico | Multirotor: DJI Phantom 3 | 12-megapixel red green blue (RGB) camera | Chlorophyll a at 544 nm, phycocyanin absorption at 619 nm | In situ upwelling and downwelling irradiances with hyperspectral spectroradiometer (GER-1500), cyanobacteria sampling, meteorological data | Not specified | [24] |
| Cyanobacterial mats, Antarctica | Fixed-wing: Modified UAV (Kevlar fabric, Skycam UAV Ltd. airframe) “Polar fox” | Sony NEX 5 RGB and near-infrared (NIR) cameras | Not specified | In situ spectral reflectance using multi- and hyperspectral cameras; field microscopy of cyanobacterial mats | Resolution: fixed-wing UAV imagery too coarse to see small algal colonies | [28] |
| Shallow river algae (Cladophora glomerata) in Clark Fork River, Montana, USA | Multirotor: DJI (unspecified) | GoPro Hero 3 with 12-megapixel RGB camera | ACE, SAM | In situ data of total suspended sediment samples, light attenuation profiles, daily streamflow | Regulation: federal flight restrictions. Weather: wind turbulence. Hardware: off-nadir images | [25] |
| Cyanobacteria biomass, Rhode Island, USA | Multirotor: 3DR X8+; Multirotor: 3DR Iris+ | Tetracam Mini-MCA6 multispectral camera; 4 MAPIR Survey 1 cameras | NDVI, GNDVI | In situ water quality data (not specified) | Data processing: aligning images; mosaicking | [23] |
| Shallow lake algae, Finland | Helicopter UAV: not specified | Fabry-Perot interferometer (FPI) | 500–900 nm filter | In situ radiometric water measurements; signal-to-noise ratios | Data processing: low signal-to-noise ratio for narrow-band hyperspectral images | [18] |
| River algal blooms, Daecheong Dam, South Korea | Fixed-wing: Ltd’s eBee | Canon S110 RGB and NIR cameras | AI | In situ field spectral reflectance | Not specified | [19] |
| River algal blooms, Nakdong River, South Korea | Fixed-wing: Ltd’s eBee | Canon Powershot S110 RGB and NIR camera | AI | In situ water quality, microscopy analysis of phytoplankton species; field spectral reflectance | Laboratory analysis: identification of phytoplankton to species level | [20] |
| Pond cyanobacteria, Kingston, Canada | Octorotor: UAV not specified, with Pixhawk as autopilot | Sony ILCE-6000 mini SLR camera (RGB) with MTI-G-700 gimbal; Sony HX60v used for test flights | Not specified | Only technical validation performed | Hardware: gimbal errors | [21] |
| Lake blue-green algal distribution, water turbidity, Finland | Helicopter UAV: not specified | Fabry-Perot interferometer (FPI); CMV4000 4.2-megapixel CMOS camera | SAM, HFC, VCA, FNNLS | In situ water quality measurements (temperature, conductance, turbidity, chlorophyll a, blue-green algae distribution) | Data processing: geometric and radiometric water image processing | [22] |
| Estuarine phytoplankton bloom (Phaeocystis globosa), Western Taiwan Strait and Weitou Bay, China | Fixed-wing: LT-150, from TOPRS Technology Co., Ltd. | AvaSpec-dual spectroradiometer with 2 sensors that span 360–1000 nm | Not specified | In situ chlorophyll a sampling and spectroradiometer measurements via ship | Fundamental: accurate remote sensing reflectance values in coastal region. Environmental: tides, wind | [15] |
| Algal cover in Tain-Pu Reservoir, Taiwan, China | Fixed-wing: Ltd’s eBee | Canon Powershot S110 RGB and Canon Powershot S110 NIR cameras | Chlorophyll a regression model | In situ total phosphorus, chlorophyll a, Secchi disk depth | Environmental: reflection of water | [26] |
| Lake cyanobacterial (Microcystis) buoyant volume, Kansas, USA | Fixed-wing: Zephyr sUAS from RitewingRC (controller: Ardupilot Mega 2.6); Multirotor: DJI F550 (controller: NAZA V2) | Canon Powershot S100 NDVI camera; multirotor gimbal (Gaui Crane II) and real-time video (ReadymadeRC) | BNDVI | In situ microscopy identification of cyanobacterial genus | Hardware: multirotor flight limits, slow speed, small coverage. Airspace: use constraints | [1] |
| Green tide algae (on P. yezoensis rafts) biomass in aquaculture zones, Yellow Sea, China | Multirotor: DJI Inspire 1 | DJI X3 RGB camera | NGRDI, NGBDI, GLI, EXG | In situ samples of green algae attached to P. yezoensis in the aquaculture zone | Not specified | [27] |

| Index | Formula | Reference(s) |
|---|---|---|
| Normalized Difference Vegetation Index (NDVI) | (NIR − Red)/(NIR + Red) | [23] |
| Normalized Green Red Difference Index (NGRDI) | (Green − Red)/(Green + Red) | [27] |
| Blue Normalized Difference Vegetation Index (BNDVI) | (NIR − Blue)/(NIR + Blue) | [1] |
| Normalized Green Blue Difference Index (NGBDI) | (Green − Blue)/(Green + Blue) | [27] |
| Green Normalized Difference Vegetation Index (GNDVI) | (NIR − Green)/(NIR + Green) | [23] |
| Green Leaf Index (GLI) | (2 × Green − Red − Blue)/(2 × Green + Red + Blue) | [27] |
| Excess Green (EXG) | 2 × Green − Red − Blue | [27] |
| Algal Bloom Detection Index (AI) | ((R850nm − R660nm)/(R850nm + R660nm)) + ((R850nm − R625nm)/(R850nm + R625nm)) | [19,20] |
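
The index formulas tabulated above are simple band arithmetic, so they can be illustrated with a few lines of array code. The following is a minimal sketch, assuming that each band is already available as a co-registered NumPy array of reflectance values. The function names (`normalized_difference`, `spectral_indices`, `algal_bloom_index`) are hypothetical and are not taken from any of the reviewed studies, and returning NaN where a denominator is zero is a choice made here for illustration.

```python
import numpy as np


def normalized_difference(a, b):
    """Return (a - b) / (a + b) element-wise, with NaN where a + b == 0."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = a + b
    out = np.full(denom.shape, np.nan)
    # Only divide where the denominator is non-zero; other cells stay NaN.
    np.divide(a - b, denom, out=out, where=denom != 0)
    return out


def spectral_indices(red, green, blue, nir):
    """Compute the broadband indices from the table for co-registered bands."""
    red, green, blue, nir = (
        np.asarray(band, dtype=float) for band in (red, green, blue, nir)
    )
    return {
        "NDVI": normalized_difference(nir, red),
        "NGRDI": normalized_difference(green, red),
        "BNDVI": normalized_difference(nir, blue),
        "NGBDI": normalized_difference(green, blue),
        "GNDVI": normalized_difference(nir, green),
        # GLI = (2G - R - B) / (2G + R + B), a normalized difference
        # between 2G and (R + B).
        "GLI": normalized_difference(2 * green, red + blue),
        "EXG": 2 * green - red - blue,
    }


def algal_bloom_index(r850, r660, r625):
    """Algal Bloom Detection Index (AI) from reflectance at 850, 660 and 625 nm."""
    return normalized_difference(r850, r660) + normalized_difference(r850, r625)


if __name__ == "__main__":
    # Made-up reflectance values for a single pixel, for illustration only.
    idx = spectral_indices(red=[0.05], green=[0.10], blue=[0.04], nir=[0.30])
    for name, value in idx.items():
        print(f"{name}: {value[0]:.3f}")
```

Because every function operates element-wise, the same calls would apply unchanged to full two-dimensional orthomosaic bands, provided the bands share the same shape and have been radiometrically calibrated to comparable units.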

| Workflow | Processing Software | Uses | References |
|---|---|---|---|
| Mission and flight planning | Mission Planner | Flight planning | [1] |
| Image processing | Agisoft PhotoScan | Mosaicking, orthorectification and georeferencing | [1,23] |
| Image processing | BAE Systems SOCET SET and GXP | Mosaicking, radiometric processing | [18] |
| Image processing | MATLAB | Image processing | [23] |
| Image processing | MATLAB | Clipping rasters | [25] |
| Image processing | Pix4D | Digital surface models, orthomosaics | [27,28] |
| Image processing | PixelWrench2 | Image processing | [23] |
| Image processing | VisualSFM method | Image orientation, radiometric processing, mosaicking | [22] |
| Image processing | DroneDeploy | Mosaicking | [23] |
| Image processing | QGIS | Georeferencing | [24] |
| Image processing | ArcGIS | Mosaicking, georeferencing | [25,28] |
| Image processing | Menci Software | Sensor orientation, image processing | [26] |
| Spatial analysis | ArcGIS | Spatial analysis | [28] |
| Spatial analysis | ENVI | Spatial analysis | [28] |
| Spatial analysis | Opticks | Classification | [25] |
| Visualization | QGIS | Map production | [24] |
| Visualization | ArcGIS | Map production | [1] |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kislik, C.; Dronova, I.; Kelly, M. UAVs in Support of Algal Bloom Research: A Review of Current Applications and Future Opportunities. Drones 2018, 2, 35. https://doi.org/10.3390/drones2040035
