Monitoring Human Visual Behavior during the Observation of Unmanned Aerial Vehicles (UAVs) Videos

Abstract

1. Introduction

2. Methodology

2.1. Experimental Design

2.1.1. Visual Stimuli

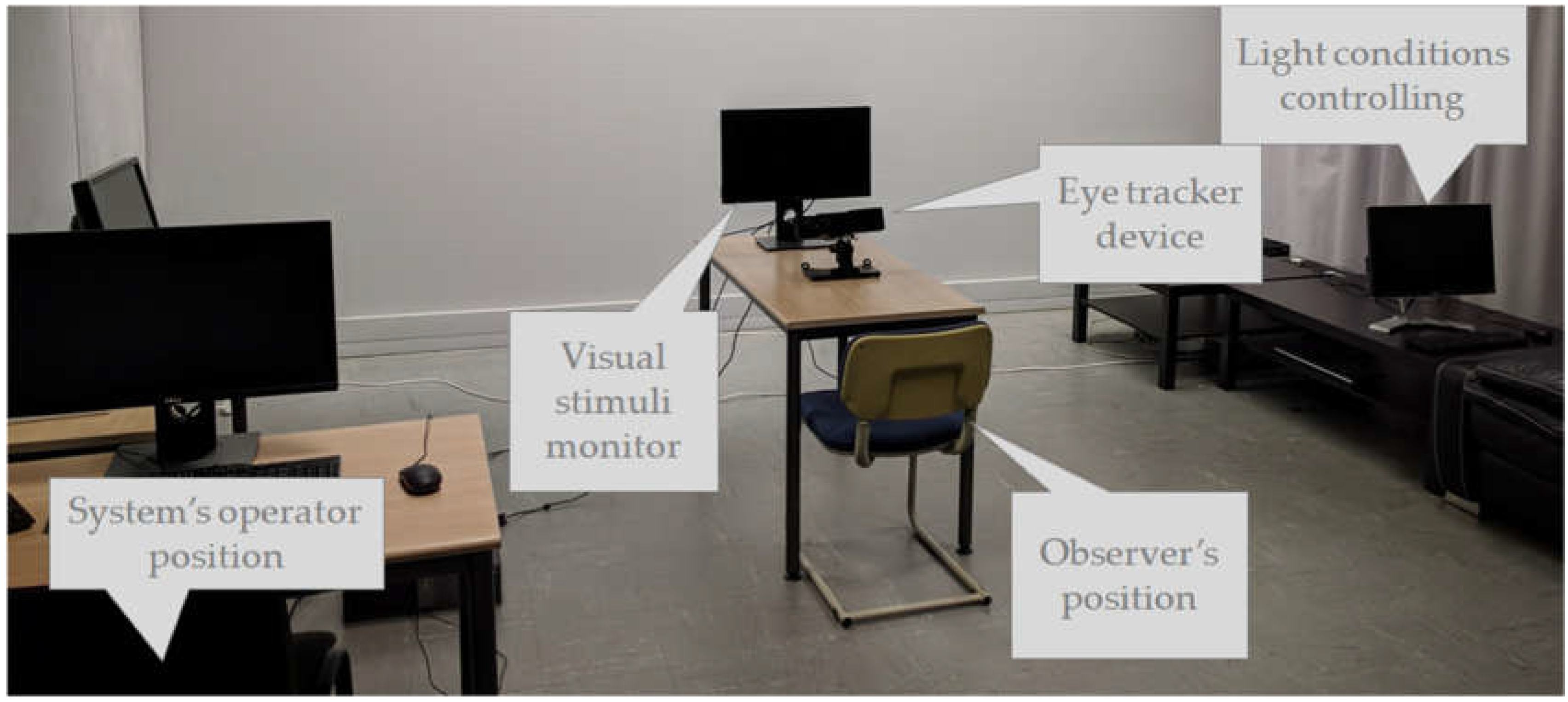

2.1.2. Equipment and Software

2.1.3. Observers

2.1.4. Experimental Process

2.2. Data Analysis

2.2.1. Fixation Detection

2.2.2. Eye Tracking Metrics

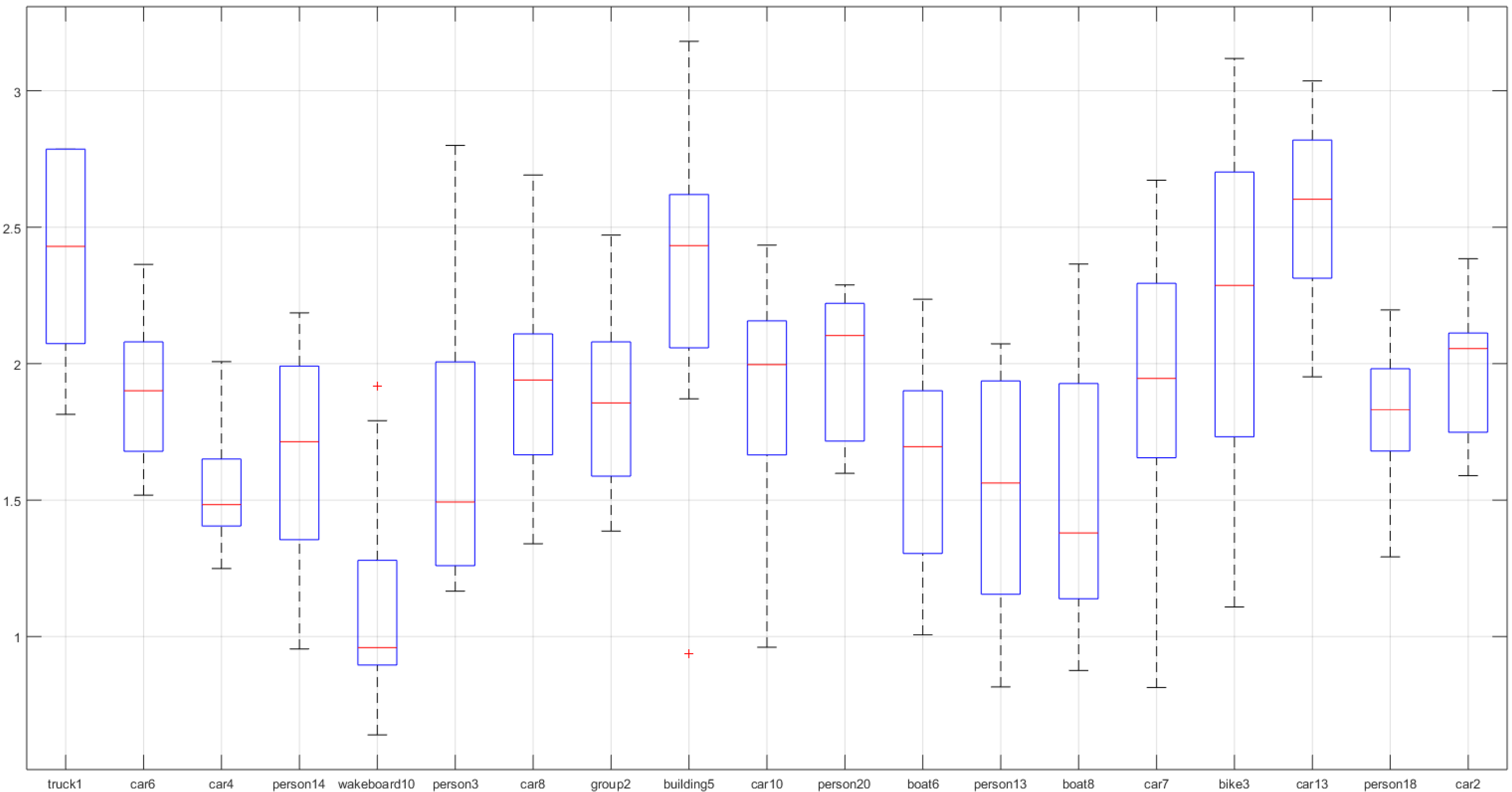

- Normalized Number of Fixations Per Second

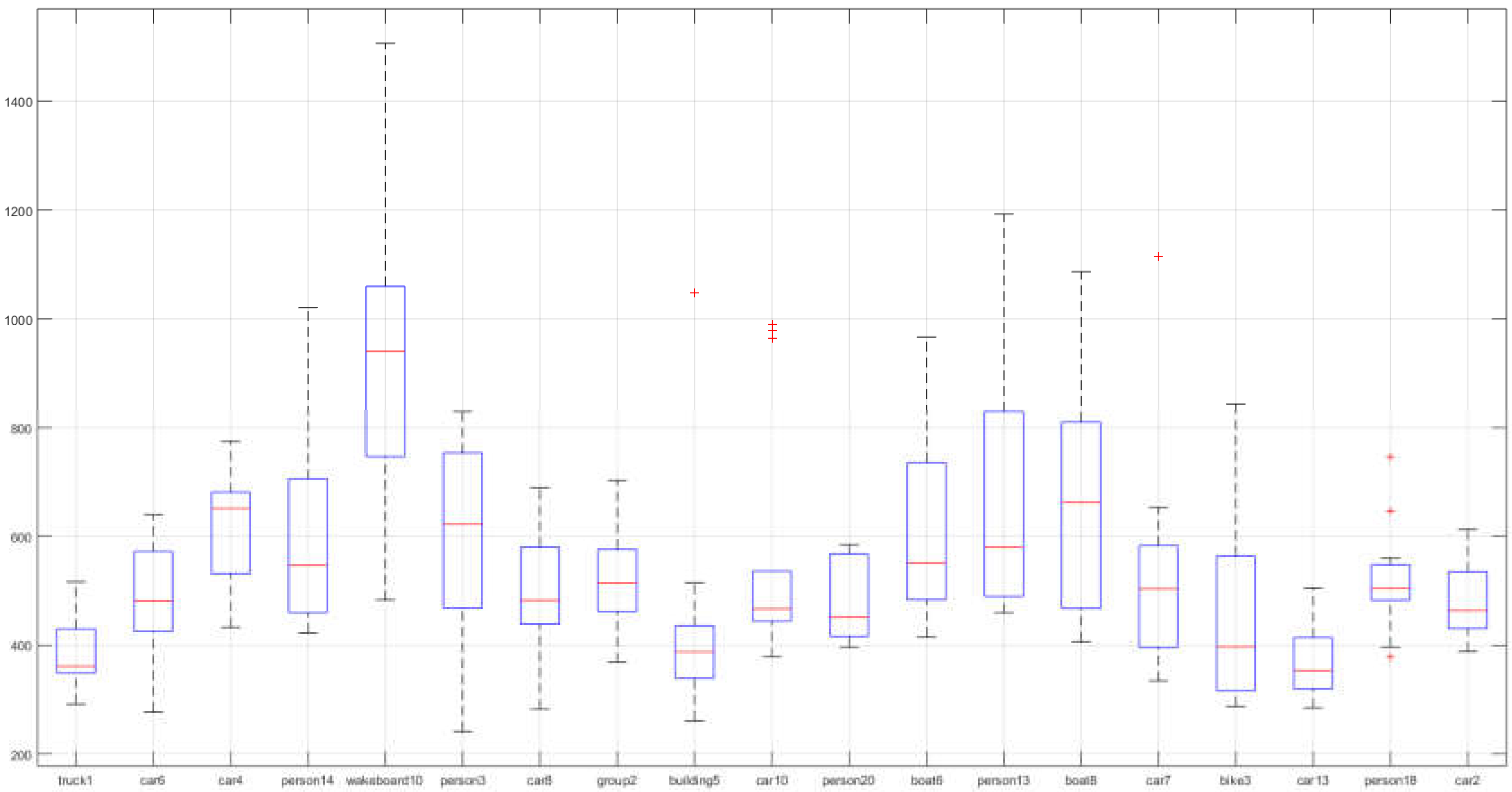

- Average Fixations Duration (ms)

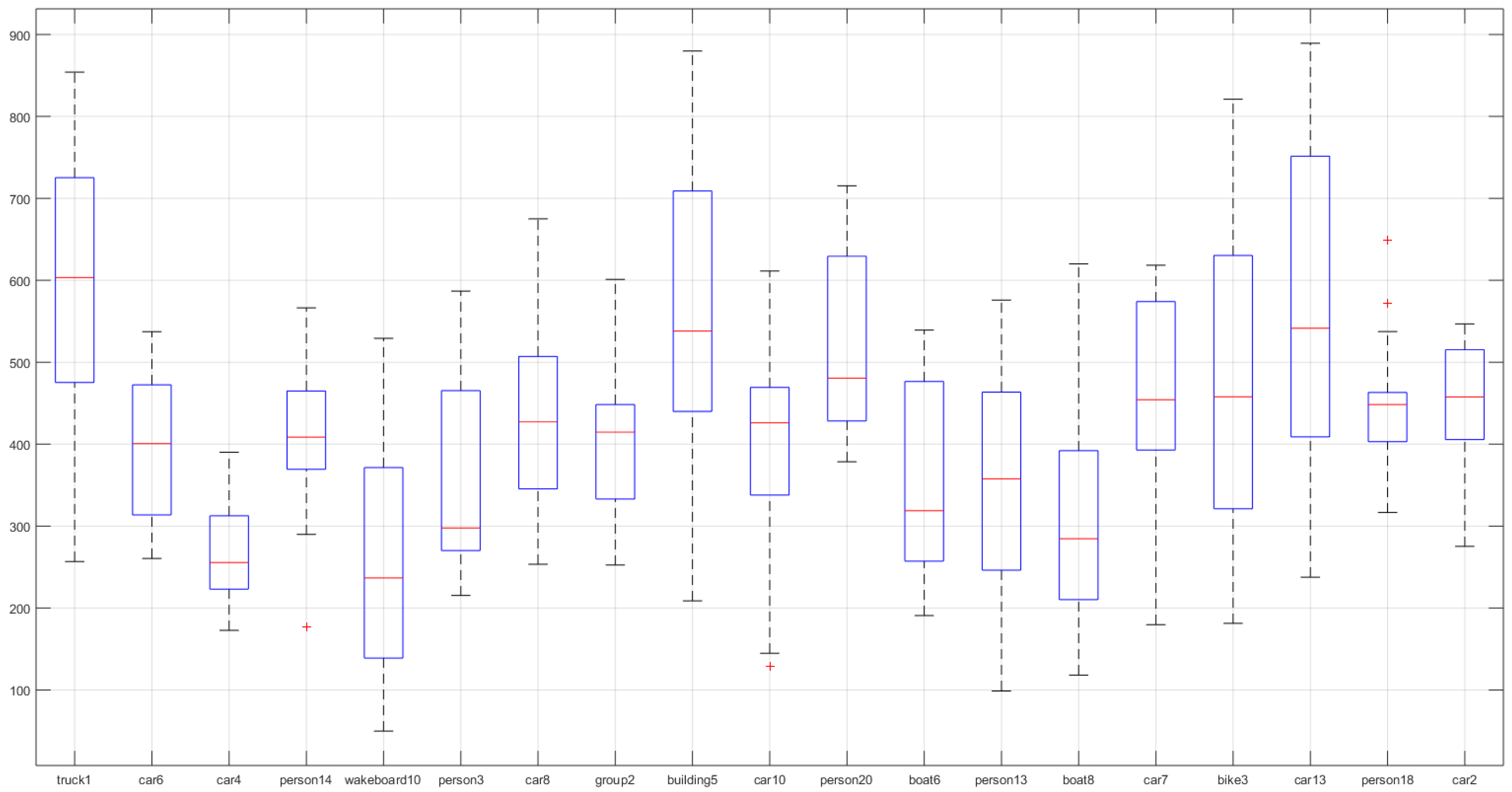

- Normalized Scanpath Length (px) Per Second
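In this version of the text, the formulas for the three metrics survive only as images. The following Python sketch therefore rests on an assumption. It treats "normalized ... per second" as division by the video duration, and it treats the scanpath length as the summed Euclidean distance between consecutive fixation centers. The `Fixation` class and the `eye_tracking_metrics` function are hypothetical names used for illustration. They compute the values for one observer watching one video.

```python
import math
from dataclasses import dataclass


@dataclass
class Fixation:
    x: float            # fixation center, horizontal screen coordinate (px)
    y: float            # fixation center, vertical screen coordinate (px)
    duration_ms: float  # fixation duration (ms)


def eye_tracking_metrics(fixations, video_duration_s):
    """Per-observer metrics for one video, under ASSUMED definitions.

    Returns (fixations per second, average fixation duration in ms,
    scanpath length in px per second).
    """
    if video_duration_s <= 0:
        raise ValueError("video duration must be positive")
    n = len(fixations)
    # Assumption: number of fixations normalized by the stimulus duration.
    fixations_per_s = n / video_duration_s
    # Arithmetic mean of fixation durations; 0 when no fixation was detected.
    avg_duration_ms = sum(f.duration_ms for f in fixations) / n if n else 0.0
    # Assumption: scanpath length is the summed Euclidean distance
    # between consecutive fixation centers, normalized by duration.
    scanpath_px = sum(math.hypot(b.x - a.x, b.y - a.y)
                      for a, b in zip(fixations, fixations[1:]))
    return fixations_per_s, avg_duration_ms, scanpath_px / video_duration_s


# Example: three fixations recorded during a 2-second clip.
example = [Fixation(100, 100, 300), Fixation(400, 500, 500), Fixation(400, 500, 400)]
print(eye_tracking_metrics(example, 2.0))  # -> (1.5, 400.0, 250.0)
```

The sketch does not show how the per-observer values are then aggregated across observers to give the per-video AVG and STD values in the results tables.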

2.2.3. Data Visualization

3. Results

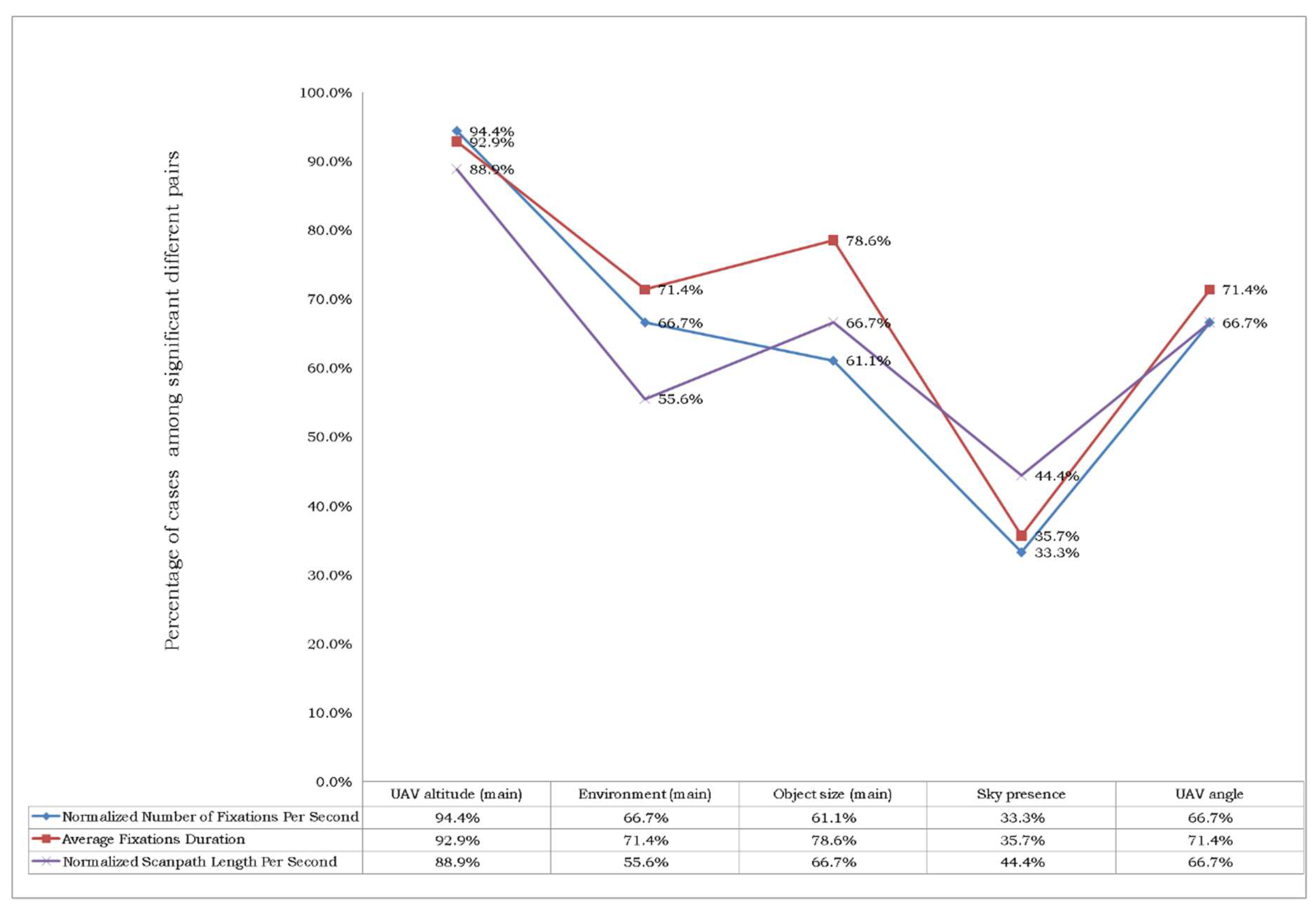

3.1. Quantitative Analysis

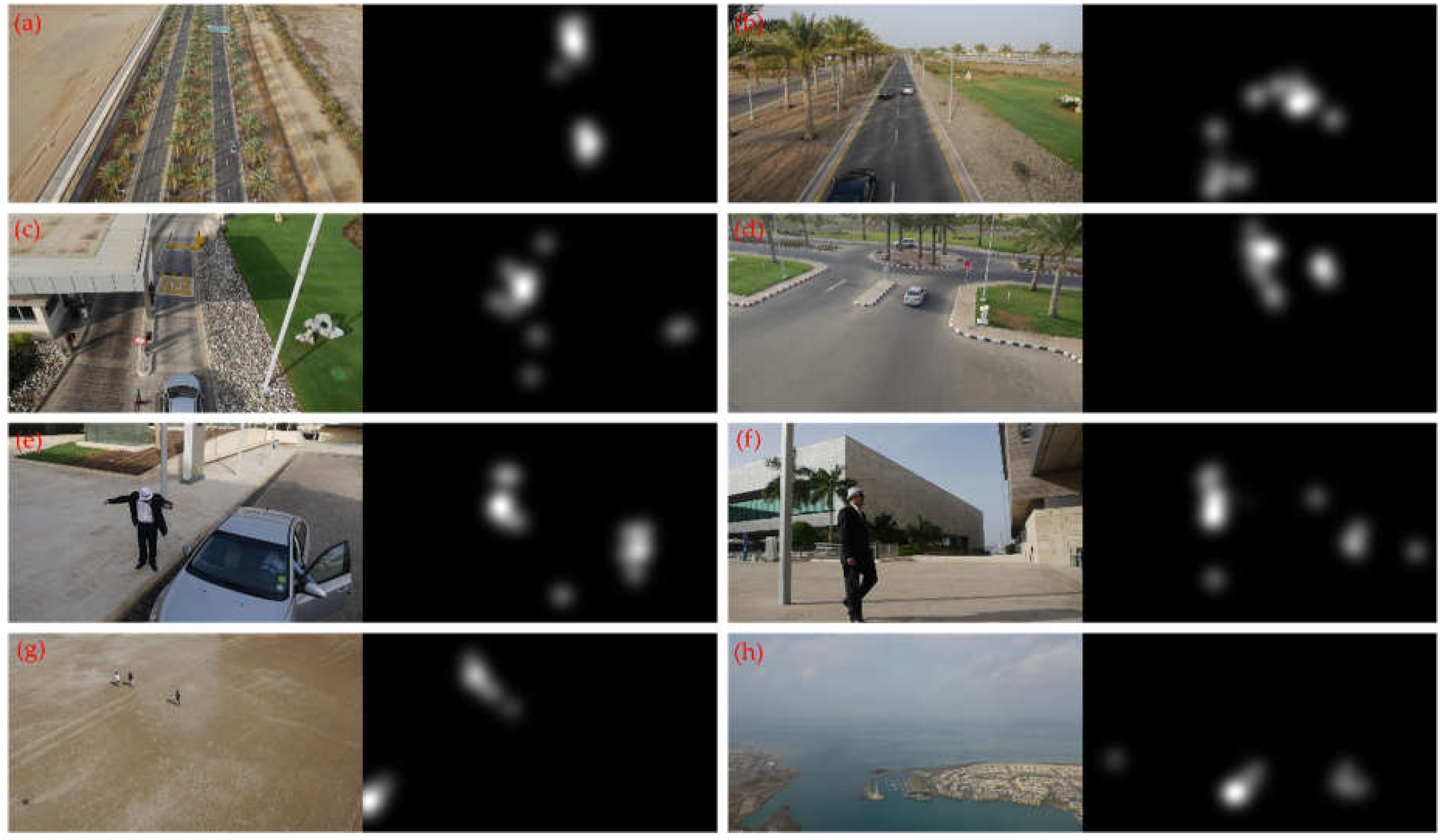

3.2. Qualitative Analysis

3.3. Dataset Distribution

4. Discussion and Conclusions

5. Future Outlook

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| ID | Video Name | No. of Frames | Duration (s, 30 FPS) | UAV Altitude (main) | Environment (main) | Object Size (main) | Sky Presence | Main Perceived Angle between UAV Flight Plane and Ground |
|---|---|---|---|---|---|---|---|---|
| 1 | truck1 | 463 | 15.43 | low, intermediate | road | big, medium | true | vertical-oblique |
| 2 | car6 | 4861 | 162.03 | low to high | roads, buildings area | big to small | true | vertical-oblique-horizontal |
| 3 | car4 | 1345 | 44.83 | high to intermediate | roads | small to medium | false | oblique-horizontal |
| 4 | person14 | 2923 | 97.43 | intermediate | beach | medium | false | oblique |
| 5 | wakeboard10 | 469 | 15.63 | intermediate to low | sea | medium to big | true | oblique |
| 6 | person3 | 643 | 21.43 | low to intermediate | green place (grass) | big to medium | false | oblique |
| 7 | car8 | 2575 | 85.83 | low, intermediate | parking, roundabout, roads, crossroads | big, medium | true | oblique |
| 8 | group2 | 2683 | 89.43 | intermediate | beach | medium (3 persons) | false | oblique |
| 9 | building5 | 481 | 16.03 | high (very high) | port, buildings area | unclear object (without considering the annotation) | true | oblique |
| 10 | car10 | 1405 | 46.83 | intermediate, high | roads | medium, small | true | oblique-vertical |
| 11 | person20 | 1783 | 59.43 | low to very low | building area | big to very big | true | oblique-vertical |
| 12 | boat6 | 805 | 26.83 | high to low | sea | small to big | true | vertical |
| 13 | person13 | 883 | 29.43 | low | square place (almost empty) | big | false | oblique |
| 14 | boat8 | 685 | 22.83 | high to low | sea, city | small, medium | true | oblique-vertical |
| 15 | car7 | 1033 | 34.43 | intermediate | roundabout | medium | false | oblique |
| 16 | bike3 | 433 | 14.43 | intermediate | building, road | small | true | oblique |
| 17 | car13 | 415 | 13.83 | very high | buildings area, road network, sea | very small | false | horizontal-oblique |
| 18 | person18 | 1393 | 46.43 | very low | building area | very big | true | vertical |
| 19 | car2 | 1321 | 44.03 | high | roads, roundabout | small | false | horizontal |

| Basic Statistics | Value |
|---|---|
| Percentage (duration) of the complete dataset | ~23% |
| Percentage (number) of the complete dataset | ~21% |
| Number of videos | 19 |
| Total number of frames | 26,599 |
| Total duration | ~887 s (14:47 min) |
| Average duration | ~47 s |
| Standard deviation (duration) | ~38 s |
| Min video duration | ~14 s |
| Max video duration | ~162 s |
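The duration rows of the basic statistics follow directly from the frame counts in the dataset table at 30 FPS. The Python sketch below recomputes them. `FRAMES` and `duration_stats` are illustrative names. The reported standard deviation of ~38 s is reproduced by the sample standard deviation (`statistics.stdev`), whereas the population form gives about 37 s. This choice is inferred from the numbers and is not stated in the text. The two percentage rows depend on the complete dataset, so the sketch does not recompute them.

```python
import statistics

FPS = 30  # frame rate given in the dataset table header

# Frame counts per video, in table order (IDs 1-19).
FRAMES = {
    "truck1": 463, "car6": 4861, "car4": 1345, "person14": 2923,
    "wakeboard10": 469, "person3": 643, "car8": 2575, "group2": 2683,
    "building5": 481, "car10": 1405, "person20": 1783, "boat6": 805,
    "person13": 883, "boat8": 685, "car7": 1033, "bike3": 433,
    "car13": 415, "person18": 1393, "car2": 1321,
}


def duration_stats(frames, fps=FPS):
    """Summary statistics of video durations (seconds) derived from frame counts."""
    durations = [n / fps for n in frames.values()]
    return {
        "videos": len(durations),
        "total_frames": sum(frames.values()),
        "total_s": sum(durations),
        "mean_s": statistics.mean(durations),
        "sd_s": statistics.stdev(durations),  # sample (n - 1) standard deviation
        "min_s": min(durations),
        "max_s": max(durations),
    }


stats = duration_stats(FRAMES)
minutes, seconds = divmod(round(stats["total_s"]), 60)
print(f"{stats['videos']} videos, {stats['total_frames']} frames, "
      f"{stats['total_s']:.0f} s ({minutes}:{seconds:02d} min)")
print(f"mean {stats['mean_s']:.0f} s, SD {stats['sd_s']:.0f} s, "
      f"min {stats['min_s']:.0f} s, max {stats['max_s']:.0f} s")
```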

| UAV Video | Normalized Number of Fixations Per Second: AVG | Normalized Number of Fixations Per Second: STD | Average Fixations Duration (ms): AVG | Average Fixations Duration (ms): STD | Normalized Scanpath Length (px) Per Second: AVG | Normalized Scanpath Length (px) Per Second: STD |
|---|---|---|---|---|---|---|
| truck1 | 2.41 | 0.33 | 386 | 64 | 594 | 167 |
| car6 | 1.91 | 0.27 | 489 | 100 | 401 | 94 |
| car4 | 1.55 | 0.23 | 624 | 99 | 273 | 64 |
| person14 | 1.68 | 0.38 | 583 | 169 | 403 | 100 |
| wakeboard10 | 1.14 | 0.40 | 922 | 300 | 256 | 152 |
| person3 | 1.67 | 0.48 | 593 | 178 | 367 | 127 |
| car8 | 1.92 | 0.36 | 495 | 113 | 433 | 118 |
| group2 | 1.85 | 0.32 | 524 | 93 | 405 | 98 |
| building5 | 2.35 | 0.56 | 431 | 192 | 556 | 177 |
| car10 | 1.83 | 0.49 | 566 | 226 | 381 | 147 |
| person20 | 2.00 | 0.26 | 477 | 74 | 516 | 107 |
| boat6 | 1.60 | 0.37 | 623 | 177 | 348 | 120 |
| person13 | 1.51 | 0.40 | 671 | 217 | 357 | 131 |
| boat8 | 1.49 | 0.48 | 691 | 237 | 322 | 151 |
| car7 | 1.93 | 0.49 | 531 | 197 | 453 | 127 |
| bike3 | 2.26 | 0.59 | 443 | 158 | 471 | 189 |
| car13 | 2.52 | 0.35 | 370 | 60 | 574 | 207 |
| person18 | 1.82 | 0.25 | 518 | 94 | 455 | 84 |
| car2 | 1.97 | 0.23 | 482 | 68 | 448 | 78 |

| Eye Tracking Metric | AVG | STD |
|---|---|---|
| Normalized Number of Fixations Per Second | 1.86 | 0.35 |
| Average Fixations Duration (ms) | 548 | 127 |
| Normalized Scanpath Length (px) Per Second | 422 | 94 |
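The overall AVG and STD values above match the mean and sample standard deviation of the 19 per-video AVG values reported above, as opposed to values pooled over all observers. This aggregation is inferred from the numbers and is not stated in the text. The Python check below uses hypothetical list names and a hypothetical `summarize` helper.

```python
import statistics

# Per-video AVG values from the per-video metrics table, IDs 1-19 in table order.
FIXATIONS_PER_S = [2.41, 1.91, 1.55, 1.68, 1.14, 1.67, 1.92, 1.85, 2.35, 1.83,
                   2.00, 1.60, 1.51, 1.49, 1.93, 2.26, 2.52, 1.82, 1.97]
FIXATION_DURATION_MS = [386, 489, 624, 583, 922, 593, 495, 524, 431, 566,
                        477, 623, 671, 691, 531, 443, 370, 518, 482]
SCANPATH_PX_PER_S = [594, 401, 273, 403, 256, 367, 433, 405, 556, 381,
                     516, 348, 357, 322, 453, 471, 574, 455, 448]


def summarize(values):
    """Mean and sample (n - 1) standard deviation across videos."""
    return statistics.mean(values), statistics.stdev(values)


for name, values in [("fixations/s", FIXATIONS_PER_S),
                     ("fixation duration (ms)", FIXATION_DURATION_MS),
                     ("scanpath (px/s)", SCANPATH_PX_PER_S)]:
    avg, sd = summarize(values)
    print(f"{name}: AVG {avg:.2f}, STD {sd:.2f}")
```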

| No. | Normalized Number of Fixations Per Second (video 1) | Normalized Number of Fixations Per Second (video 2) | Normalized Average Fixations Duration (video 1) | Normalized Average Fixations Duration (video 2) | Normalized Scanpath Length Per Second (video 1) | Normalized Scanpath Length Per Second (video 2) |
|---|---|---|---|---|---|---|
| 1 | [truck1] | [car4] | [truck1] | [car4] | [truck1] | [wakeboard10] |
| 2 | [truck1] | [wakeboard10] | [truck1] | [wakeboard10] | [truck1] | [boat8] |
| 3 | [truck1] | [boat6] | [truck1] | [person13] | [car4] | [building5] |
| 4 | [truck1] | [person13] | [truck1] | [boat8] | [car4] | [person20] |
| 5 | [truck1] | [boat8] | [car4] | [building5] | [car4] | [car13] |
| 6 | [car4] | [building5] | [car4] | [car13] | [wakeboard10] | [building5] |
| 7 | [car4] | [car13] | [wakeboard10] | [building5] | [wakeboard10] | [person20] |
| 8 | [person14] | [car13] | [wakeboard10] | [person20] | [wakeboard10] | [car13] |
| 9 | [wakeboard10] | [building5] | [wakeboard10] | [bike3] | | |
| 10 | [wakeboard10] | [person20] | [wakeboard10] | [car13] | | |
| 11 | [wakeboard10] | [bike3] | [person3] | [car13] | | |
| 12 | [wakeboard10] | [car13] | [boat6] | [car13] | | |
| 13 | [person3] | [car13] | [person13] | [car13] | | |
| 14 | [building5] | [person13] | [boat8] | [car13] | | |
| 15 | [building5] | [boat8] | | | | |
| 16 | [boat6] | [car13] | | | | |
| 17 | [person13] | [car13] | | | | |
| 18 | [boat8] | [car13] | | | | |

| | UAV Altitude (main) | Environment (main) | Object Size (main) | Sky Presence | Main Perceived Angle between UAV Flight Plane and Ground |
|---|---|---|---|---|---|
| Average percentage value | 92.1% | 64.6% | 68.8% | 37.8% | 68.3% |
| Standard deviation | 2.9% | 8.1% | 8.9% | 5.9% | 2.7% |

| Normalized Number of Fixations Per Second | Average Fixations Duration (ms) | Normalized Scanpath Length (px) Per Second |
|---|---|---|
| car13 | wakeboard10 | truck1 |
| truck1 | boat8 | car13 |
| building5 | person13 | building5 |
| bike3 | car4 | person20 |
| person20 | boat6 | bike3 |
| car2 | person3 | person18 |
| car7 | person14 | car7 |
| car8 | car10 | car2 |
| car6 | car7 | car8 |
| group2 | group2 | group2 |
| car10 | person18 | person14 |
| person18 | car8 | car6 |
| person14 | car6 | car10 |
| person3 | car2 | person3 |
| boat6 | person20 | person13 |
| car4 | bike3 | boat6 |
| person13 | building5 | boat8 |
| boat8 | truck1 | car4 |
| wakeboard10 | car13 | wakeboard10 |
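Each column of the table above lists the 19 videos in descending order of the per-video AVG value for one metric. The minimal Python sketch below reproduces that ordering from the AVG values. The `VIDEO_METRICS` dictionary and the `rank_videos` helper are illustrative and do not come from the paper. No metric contains two equal values, so the ordering does not depend on how ties are broken.

```python
# Per-video AVG values: (fixations per second, fixation duration in ms, scanpath px per second).
VIDEO_METRICS = {
    "truck1": (2.41, 386, 594), "car6": (1.91, 489, 401),
    "car4": (1.55, 624, 273), "person14": (1.68, 583, 403),
    "wakeboard10": (1.14, 922, 256), "person3": (1.67, 593, 367),
    "car8": (1.92, 495, 433), "group2": (1.85, 524, 405),
    "building5": (2.35, 431, 556), "car10": (1.83, 566, 381),
    "person20": (2.00, 477, 516), "boat6": (1.60, 623, 348),
    "person13": (1.51, 671, 357), "boat8": (1.49, 691, 322),
    "car7": (1.93, 531, 453), "bike3": (2.26, 443, 471),
    "car13": (2.52, 370, 574), "person18": (1.82, 518, 455),
    "car2": (1.97, 482, 448),
}
METRICS = ("Normalized Number of Fixations Per Second",
           "Average Fixations Duration (ms)",
           "Normalized Scanpath Length (px) Per Second")


def rank_videos(metrics, index):
    """Return video names ordered from the highest to the lowest value of one metric."""
    return sorted(metrics, key=lambda name: metrics[name][index], reverse=True)


for i, metric in enumerate(METRICS):
    print(metric, rank_videos(VIDEO_METRICS, i), sep=": ")
```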

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Krassanakis, V.; Perreira Da Silva, M.; Ricordel, V. Monitoring Human Visual Behavior during the Observation of Unmanned Aerial Vehicles (UAVs) Videos. Drones 2018, 2, 36. https://doi.org/10.3390/drones2040036
