Person Identification from Drones by Humans: Insights from Cognitive Psychology

Abstract

1. Background

2. Person Identification from the Face

3. Person Identification from the Body

4. Person Identification from Motion

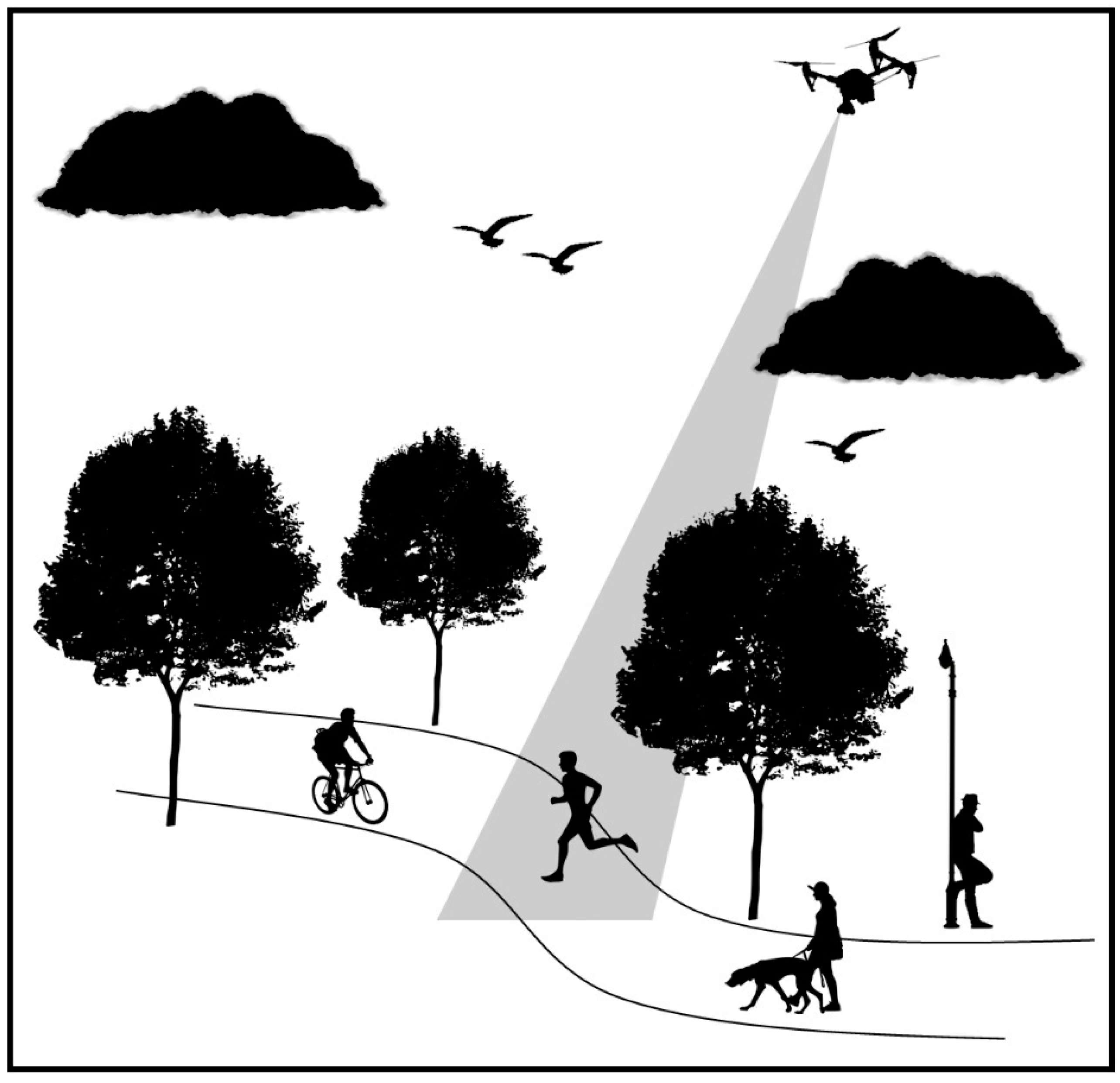

5. Person Identification from Aerial Footage Collected by a Drone

6. Possible Solutions

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Fysh, M.C.; Bindemann, M. Person Identification from Drones by Humans: Insights from Cognitive Psychology. Drones 2018, 2, 32. https://doi.org/10.3390/drones2040032
