1. Introduction

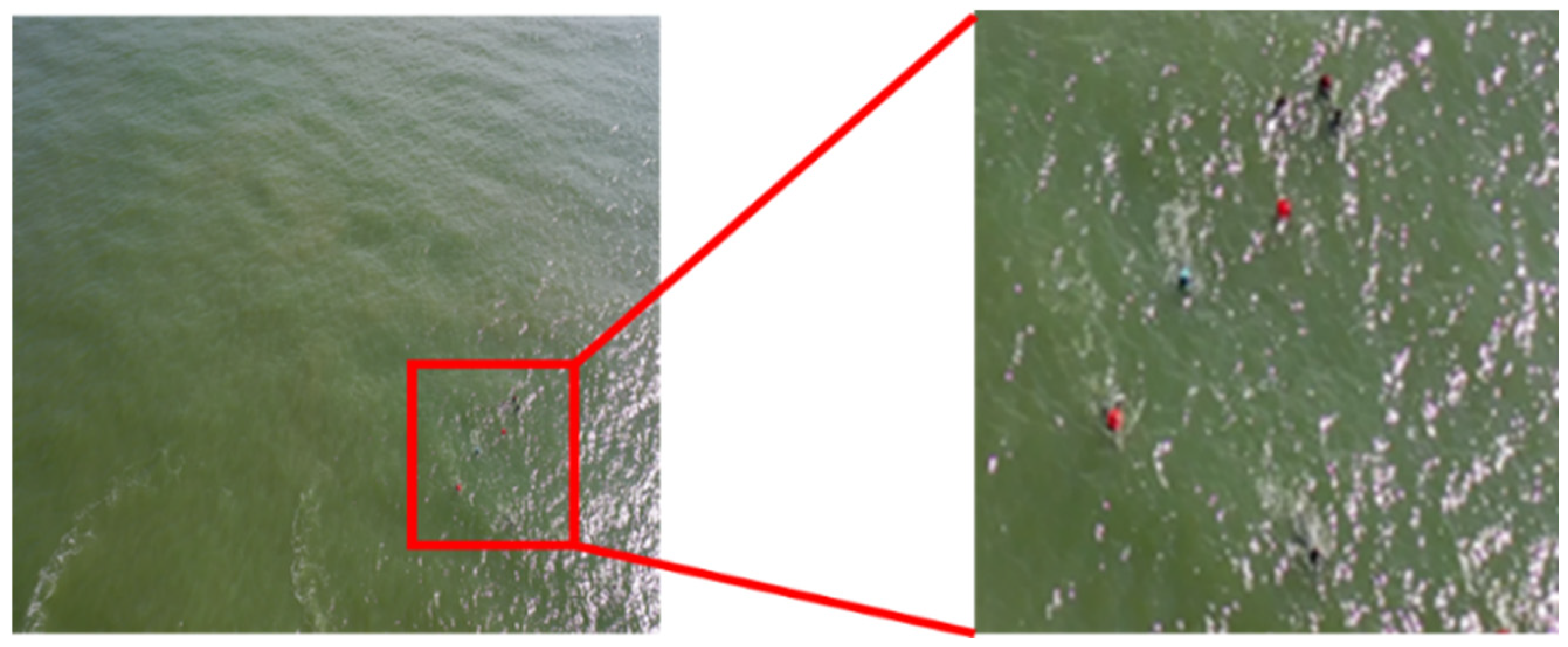

The increasing use of Unmanned Aerial Vehicles (UAVs) in maritime domain awareness, including search and rescue, illegal activity surveillance, and ecosystem monitoring, necessitates robust, real-time object detection capabilities. These applications often involve identifying small and distant targets such as ships, floating debris, and persons in water from high altitudes. Tiny object detection has become a critical research challenge in computer vision (CV) due to its relevance in safety-critical domains such as maritime surveillance, environmental monitoring, and UAV-assisted search and rescue operations. In maritime UAV imagery, the challenge is notably amplified by the vast, texture-sparse ocean surfaces, rapidly changing lighting conditions, and the extremely limited pixel footprints of targets such as swimmers, buoys, small vessels, and life-saving appliances. These targets typically occupy less than 32 × 32 pixels, are often sparse, and are embedded within highly uniform, texture-less backgrounds such as water or sky, as in Figure 1. When passed through deep neural network (DNN) pipelines involving multiple down-sampling stages, these tiny targets often vanish or collapse into ambiguous feature responses, resulting in reduced discriminative power and unreliable detection performance. Consequently, the field of object detection in maritime UAV imagery presents a unique set of challenges that are not adequately addressed by conventional detection frameworks. Furthermore, deployment constraints demand extremely lightweight and computationally efficient models capable of operating in real-time on power-limited edge computing hardware, such as NVIDIA Jetson series devices.

As shown in Figure 2, although multiple individuals are present in the UAV image, their visibility notably degrades even at the original resolution. Tiny targets tend to disappear after down-sampling or resizing operations, leading traditional detectors to fail due to the loss of critical features. Many existing models were originally designed for large or medium-sized objects and do not specifically address the unique challenges of tiny object detection.

Deep learning–based object detectors, particularly one-stage architectures such as You Only Look Once (YOLO) [1], Single Shot Multi-Box Detector (SSD), and RetinaNet, have achieved impressive performance in general object detection tasks. However, their effectiveness on tiny objects remains constrained by several factors: (i) the rapid loss of spatial detail during early down-sampling, (ii) the limited receptive field of lightweight backbones, and (iii) the dominance of ocean wave patterns and specular reflections that distort or overshadow fine structural cues. Even recent high-performance architectures such as YOLOv12 exhibit a large performance gap between medium/large targets and tiny objects, underscoring the inherent difficulty of preserving meaningful information at small scales.

While State-of-the-Art (SOTA) general-purpose detectors, such as the various iterations of the YOLO series (e.g., YOLOv8n), offer high inference speed, they employ generic feature extraction backbones and feature pyramid networks (FPNs) [2]. These conventional architectures suffer from two main drawbacks in the context of tiny maritime detection:

Feature Attenuation: Aggressive down-sampling quickly leads to the complete loss of essential spatial and semantic information for tiny objects.

Inefficient Attention: Standard attention mechanisms or convolutional blocks fail to effectively filter the vast, anisotropic background clutter (waves, sun glare, etc.), while simultaneously amplifying the weak feature signal of the target.

To overcome these challenges, numerous approaches have been proposed in the recent literature. On the architectural side, techniques such as multi-scale feature learning (e.g., FPNs) [2], contextual enhancement modules [3], and attention mechanisms [4] have been widely adopted. From a data perspective, methods such as synthetic data generation [5] and domain-specific data augmentation [6] have also been explored. However, most of the existing models are too large and computationally expensive for real-time deployment on UAV platforms. Recent studies have also explored multi-scale feature pyramids, high-resolution detection heads, spatial and channel attention, deformable convolutions, and hybrid Convolutional Neural Network (CNN)–Transformer architectures. While these improvements have demonstrated measurable benefits, many require deeper or wider backbones, complex attention operators, or transformer-based modules that substantially increase computational cost. Such designs are often impractical for deployment on resource-constrained UAV platforms, where real-time inference, thermal limitations, and energy efficiency impose strict constraints.

To bridge this critical gap, we propose You Only Look Once – Directional Area Attention (YOLO-DAA), a novel, lightweight, direction-aware object detection framework based on the highly efficient YOLOv8-Tiny architecture, specifically designed to maximize tiny object Average Precision (APS) with minimal impact on real-time inference speed, tailored for maritime UAV imagery.

The proposed architecture addresses the core limitations of tiny object detection through the following components and results:

Spatial Reconstruction Unit (SRU): It is a normalization-guided redundancy filtering mechanism that suppresses non-informative activations while reconstructing spatially discriminative features.

Directional Area Attention (DAA) Module: We introduce DAA, a novel controllable row-column attention mechanism that replaces standard Cross-Connected Fusion (C2f) blocks in the backbone and encodes anisotropic feature dependencies, enabling the model to better capture orientation-sensitive structures such as elongated vessels and vertically aligned swimmers. Unlike previous Coordinate Attention (CA) methods, the DAA combines directional feature extraction with a Localized Area Focus (LAF) mechanism. This structure provides explicit spatial awareness to suppress background noise and amplify the target’s signal along specific axes, which is crucial for tiny, distant objects.

High-Resolution Feature Fusion (HRFF): We incorporate an HRFF strategy into the neck network by adding an extra detection head dedicated to high-resolution feature maps (e.g., 160 × 160). This modification explicitly captures fine-grained features lost during the initial down-sampling stages, directly boosting the model’s ability to localize and classify small targets.

Superior Performance in Edge Computing: Through extensive ablation studies on the SeaDronesSee benchmark, we demonstrate that the proposed YOLO-DAA achieves a significant 4.9% improvement in APS over the YOLOv8-Tiny baseline and outperforms recent SOTA lightweight models, including YOLOv10n and Real-Time Detection Transformer with a ResNet-18 (RT-DETR-R18), while maintaining a competitive inference speed suitable for UAV edge deployment.

These components are combined within an enhanced multi-scale feature fusion pipeline, including a high-resolution detection head, to preserve subtle spatial cues that are often lost in conventional architectures. Unlike prior methods that increase computational complexity to improve accuracy, the proposed YOLO-DAA retains the efficiency of compact CNNs while substantially improving tiny-object-specific representational quality.
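To make the role of the extra high-resolution head concrete, the short sketch below computes the grid size of each detection level and how many grid cells a 32 × 32 pixel target spans at that level. It assumes a 640 × 640 input and strides of 4, 8, 16, and 32, so that the stride-4 level corresponds to the 160 × 160 map mentioned above. The function names and the stride set are illustrative assumptions, not taken from the YOLO-DAA implementation.

```python
# Illustrative only: the input size and strides are assumptions,
# not values read from the YOLO-DAA code.

def grid_size(input_size: int, stride: int) -> int:
    """Side length of the feature map produced at a given stride."""
    return input_size // stride


def footprint_cells(object_px: int, stride: int) -> float:
    """Approximate side length of an object, measured in feature-map cells."""
    return object_px / stride


if __name__ == "__main__":
    for stride in (4, 8, 16, 32):
        g = grid_size(640, stride)
        cells = footprint_cells(32, stride)
        print(f"stride {stride:2d}: grid {g}x{g}, 32 px target spans {cells:g} cells")
```

Under these assumptions, a 32-pixel target covers a single cell at stride 32 but eight cells per side at stride 4, which is the intuition behind dedicating a head to the high-resolution map.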

The contributions of this work are summarized as follows:

A lightweight and direction-aware detection architecture for maritime UAV imagery that enhances tiny object perception without notably increasing Floating Point Operations (FLOPs) or parameter count.

The Spatial Reconstruction Unit (SRU) for adaptive redundancy suppression and feature reconstruction, improving spatial discrimination in low-contrast maritime scenes.

Directional Area Attention (DAA) for explicit modeling of horizontal and vertical dependencies, enabling anisotropic feature encoding aligned with target geometry.

A Dual-Directional Cross-Connected Fusion (D2C2f) module that effectively merges multi-directional attention with efficient residual scaling for stable training.

Extensive evaluation on COCO and SeaDronesSee, showing that the YOLO-DAA substantially improves AP95 and APsmall scores compared to YOLOv12-turbo, including a 12.5% AP95 gain for the YOLO-DAA-n variant.

By addressing both architectural efficiency and directional feature enhancement, the proposed YOLO-DAA establishes a strong balance between detection accuracy and computational feasibility, making it highly suitable for real-time deployment on embedded UAV systems operating in challenging maritime environments.

The remainder of this paper is organized as follows:

Section 2 reviews related works in tiny object detection, lightweight architectures, and attention mechanisms.

Section 3 details the proposed YOLO-DAA architecture, including the DAA module and HRFF strategy.

Section 4 presents the experimental setup, ablation results, and the SOTA comparative analysis.

Finally, Section 5 concludes the work and outlines avenues for future research.

3. Proposed Method

To address the challenges of low-resolution, sparse, tiny objects in real-time maritime UAV applications, we propose the YOLO-DAA architecture, built upon the efficient and fast YOLOv8-Tiny framework. The architecture is primarily designed for extreme lightweight deployment while notably boosting feature representation for small targets through a novel attention mechanism and strategic modification of the neck structure.

3.1. Overview of the YOLO-DAA Architecture

The overall YOLO-DAA architecture adheres to the standard one-stage detector design, comprising a Backbone for feature extraction, a Neck for multi-scale feature fusion, and a Head for final prediction. Given the critical requirement for detecting tiny maritime targets, we introduce two key modifications: direction-aware attention blocks in the backbone and an additional high-resolution detection head in the neck.

The adoption of YOLOv12 as the base model satisfies the lightweight requirement, while the subsequent modifications are targeted specifically at improving small object detection performance without incurring the heavy computational cost associated with two-stage or dense feature pyramid networks.

As illustrated in Figure 3, the proposed YOLO-DAA architecture is an enhanced one-stage detection network that integrates the SRU-based convolutional residual blocks and Directional-Aware Attention modules (D2C2f) to improve multi-scale feature interaction and spatial reasoning. It follows a standard backbone–neck–head design similar to the YOLO family, but replaces conventional C3 blocks with C3K2SRU/D2C2f hybrids to achieve better efficiency and direction-aware representation.

The network forms a bidirectional feature pyramid—the deep features are upsampled twice and merged with mid- and shallow-level representations through Concat operations. Each merging step is followed by a D2C2f block to refine channel and spatial information.

The subsequent sections provide a detailed description of the principles and roles of the SRU within the Bottleneck structure, Directional-Aware Attention modules, Direction Aware block (DABlock), Dual-Directional Cross-Connected Fusion (D2C2f), C3k2SRU, and C3kSRU.

3.2. Spatial Reconstruction Unit (SRU)

The SRU [50] is employed to mitigate spatial redundancy and enhance representative feature learning. It employs a separate-and-reconstruct mechanism guided by normalization parameters. Given an input feature map X, the SRU first applies Group Normalization (GN) as in Equation (1) to obtain normalized features, where μ and σ are spatial statistics denoting the mean and standard deviation across spatial dimensions, respectively, and γ and β are trainable affine parameters. The channel-wise scaling factor γ is interpreted as an indicator of spatial significance and is used to derive normalized correlation weights. Channels with larger γ values tend to capture richer and more informative spatial variations. To quantify their relative importance, normalized correlation weights are computed using Equation (2).

These weights are passed through a sigmoid activation and gated against a threshold (typically 0.5) to generate two complementary masks, as in Equations (3) and (4).

The input feature is then divided into informative and redundant parts, as given by Equations (5) and (6), respectively.

Finally, to preserve context from suppressed channels, the SRU performs a cross-channel reconstruction operation using Equation (7) to fuse complementary information, where the channels are split evenly before fusion. This process effectively strengthens informative spatial features while suppressing redundant ones. The SRU can be seamlessly inserted into residual or bottleneck blocks, serving as a lightweight enhancement module to improve feature discrimination with minimal computational overhead.
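For reference, the steps described above can be written compactly as follows. This is a sketch that assumes the formulation follows the SRU of Li et al. [50]. The notation is an assumption: X is the input feature map, C the number of channels, ε a small constant for numerical stability, ⊗ element-wise multiplication, ⊕ element-wise addition, ∪ channel concatenation, and the second subscript in Equation (7) marks the two equal channel halves. The hard binary masks in Equations (3) and (4) are likewise a simplifying assumption.

```latex
% Sketch of Equations (1)-(7), assuming the SRU formulation of [50].
\begin{align}
X_{\mathrm{out}} &= \mathrm{GN}(X) = \gamma \,\frac{X - \mu}{\sqrt{\sigma^{2} + \varepsilon}} + \beta \tag{1}\\
W_{\gamma} &= \{w_i\}, \qquad w_i = \frac{\gamma_i}{\sum_{j=1}^{C} \gamma_j}, \quad i = 1, \dots, C \tag{2}\\
W_{1} &= \mathbb{1}\!\left[\mathrm{Sigmoid}\big(W_{\gamma} \otimes \mathrm{GN}(X)\big) \ge 0.5\right] \tag{3}\\
W_{2} &= 1 - W_{1} \tag{4}\\
X^{w}_{1} &= W_{1} \otimes X \tag{5}\\
X^{w}_{2} &= W_{2} \otimes X \tag{6}\\
X^{w} &= \big(X^{w}_{11} \oplus X^{w}_{22}\big) \cup \big(X^{w}_{21} \oplus X^{w}_{12}\big) \tag{7}
\end{align}
```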

Figure 4a illustrates a standard bottleneck block, which typically consists of two stacked 3 × 3 convolutional layers followed by a transitional (residual) connection. In contrast, Figure 4b shows the proposed Bottleneck-SRU, where an additional SRU and a 1 × 1 convolutional block are inserted before the residual connection.

To enhance the representational capability of the bottleneck module, the SRU is integrated into the residual branch, forming a refined architecture capable of dynamic feature modulation.

The SRU introduces a normalization-guided gating mechanism that adaptively distinguishes informative features from redundant ones. By assigning higher importance weights to spatially discriminative responses, the module enables the network to suppress redundant activations while retaining key structural information. This content-aware feature selection allows the bottleneck block to adapt dynamically to the input context, effectively overcoming the static nature of conventional convolution operations.

In addition to adaptive feature selection, the SRU employs a cross-reconstruction strategy that enhances feature interaction and complementarity between informative and less-informative subsets.

Rather than discarding suppressed activations, the unit fuses them with dominant features through cross-channel reconstruction, thereby preserving valuable contextual information and promoting feature diversity. This process not only enriches spatial representation but also leads to more expressive and discriminative feature maps.

An additional advantage of the SRU lies in its efficiency. The module introduces only a small number of normalization and gating parameters, relying primarily on element-wise operations and statistical scaling. As a result, it adds negligible computational overhead while notably improving representational power. The subsequent 1 × 1 convolution layer serves as a lightweight fusion stage, ensuring consistency among reweighted feature maps and reinforcing inter-channel dependencies with minimal cost.

Furthermore, the integration of SRU within the residual path improves gradient flow and provides implicit regularization. The gating and reconstruction operations promote smoother gradient propagation during backpropagation and mitigate overfitting in deeper networks by constraining redundant activations. This contributes to more stable convergence and better generalization in various training conditions.

To mitigate spatial redundancy, we integrate the SRU, as proposed by Li et al. [50], into our enhanced bottleneck structures. While the SRU provides the mechanism for normalization-guided gating and feature reconstruction, our primary contribution lies in its synergistic combination with the novel Directional Area Attention (DAA) module to form the YOLO-DAA framework.

In summary, embedding the SRU into the bottleneck structure enables content-adaptive feature refinement, reducing redundant responses while strengthening spatially informative representations. This design achieves a favorable trade-off between representation quality and computational efficiency, making it particularly suitable for small-object detection and resource-constrained visual recognition tasks.

3.3. Directional Area Attention (DAA) Module

Conventional multi-head self-attention (MHA) flattens feature maps into a single token sequence, implicitly enforcing isotropic spatial relationships. This isotropic representation allows each spatial position to attend to all others equally, but ignores structured directional correlations, such as horizontal or vertical feature continuity that is commonly present in visual scenes.

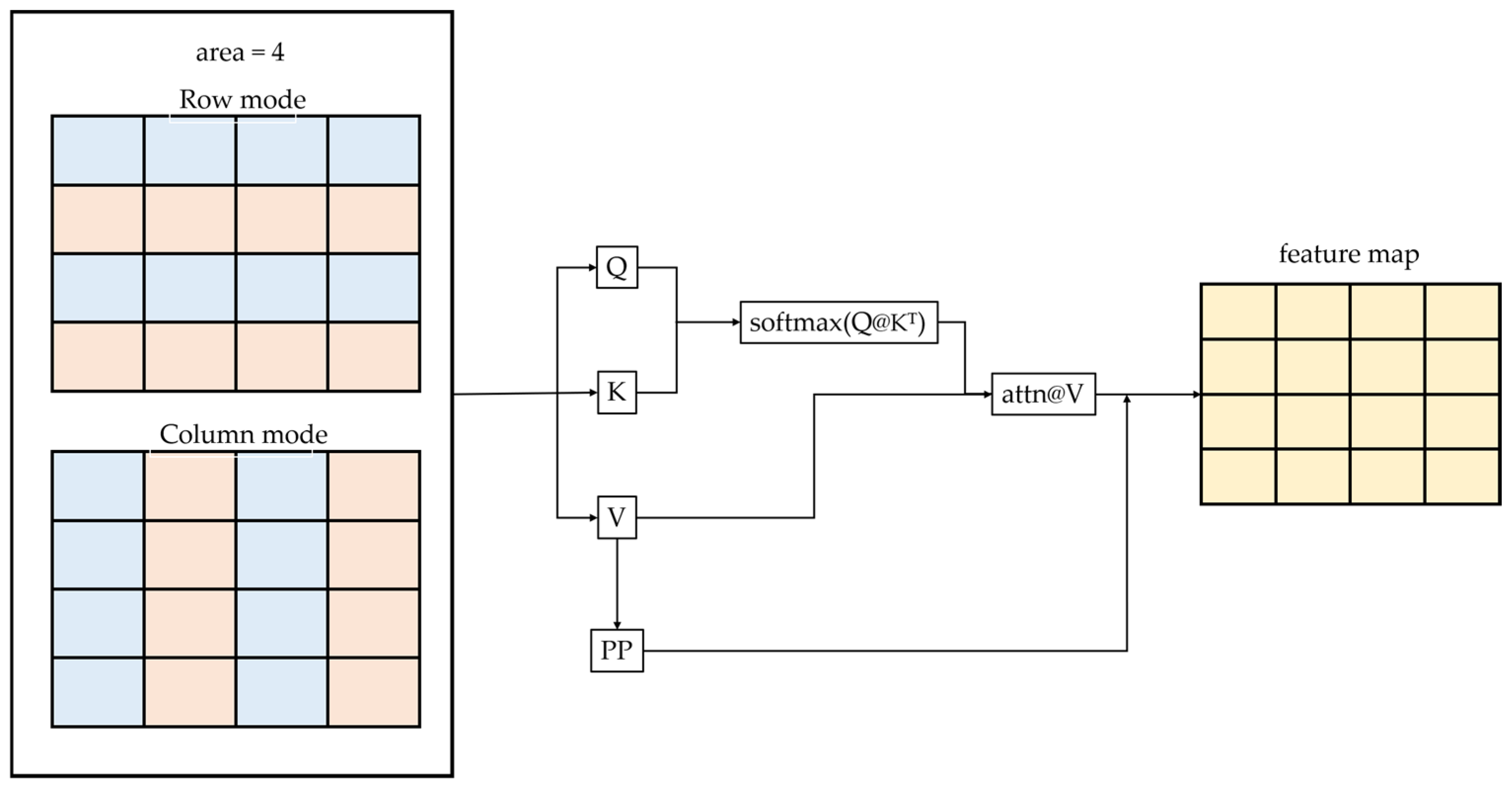

To encode directional dependencies that frequently occur in maritime imagery, such as horizontally elongated vessels and vertically oriented swimmers, the proposed DAA module introduces a controllable spatial reordering procedure prior to tokenization, as shown in Figure 5. Specifically, the DAA rearranges the spatial dimensions of the input feature map according to a specified directional mode: row or column.

The above reordering implicitly determines the sequential adjacency between tokens, thereby imposing directional inductive bias into the attention mechanism. In row mode, the feature map is flattened in a standard row-major order using Equation (8).

This ordering preserves horizontal continuity among tokens, enabling the model to focus on correlations across the same row. This biases the attention mechanism toward lateral dependencies, beneficial for recognizing elongated or horizon-aligned structures. Within each local attention group, the tokens correspond primarily to adjacent horizontal regions given by Equation (9).

The resulting attention matrix is given by Equation (10), where the attention weights represent the similarity between horizontally neighboring pixels. This configuration enhances sensitivity to structures such as road markings, edges, and horizon-aligned features, which are dominated by lateral spatial continuity.

In column mode, the feature map undergoes a spatial permutation given by Equation (11), which effectively swaps the height and width dimensions. This enhances sensitivity to upright and elongated vertical structures, such as swimmers or buoy stems. The flattening index is given by Equation (12). Hence, the sequence follows a column-major order as in Equation (13).

This arrangement enforces vertical continuity within local attention groups given by Equation (14), and the attention weights are approximated as in Equation (15), which encourages the model to emphasize upright or elongated structures, such as pedestrians, poles, and vertical object boundaries.

Mathematically, the row and column modes differ only by a permutation matrix P that reorders spatial tokens as in Equation (16), where P changes the adjacency graph among feature tokens.

This effectively modifies the topology of the attention graph, directing it toward a specific spatial orientation. Hence, the row mode encodes horizontal dependencies, while the column mode emphasizes vertical dependencies, allowing the DAA to learn anisotropic relationships that better match the geometric structure of visual content.
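The two token orderings, and the permutation that relates them, can be illustrated with a few lines of plain Python. This is a minimal sketch rather than the DAA implementation: the function names are hypothetical, and it assumes that local attention groups are equal-sized, contiguous chunks of the token sequence.

```python
# Illustrative sketch of the row/column token orderings; all names are hypothetical.

def row_major_order(height: int, width: int) -> list[tuple[int, int]]:
    """Token sequence for row mode: walk each row left to right."""
    return [(i, j) for i in range(height) for j in range(width)]


def column_major_order(height: int, width: int) -> list[tuple[int, int]]:
    """Token sequence for column mode: walk each column top to bottom."""
    return [(i, j) for j in range(width) for i in range(height)]


def split_into_groups(tokens: list, num_groups: int) -> list[list]:
    """Split a token sequence into equal, contiguous local attention groups."""
    if len(tokens) % num_groups != 0:
        raise ValueError("token count must be divisible by num_groups")
    size = len(tokens) // num_groups
    return [tokens[k * size:(k + 1) * size] for k in range(num_groups)]


def permutation(src: list, dst: list) -> list[int]:
    """Index map p with dst[n] == src[p[n]], i.e. the reordering P as indices."""
    position = {tok: n for n, tok in enumerate(src)}
    return [position[tok] for tok in dst]


if __name__ == "__main__":
    H, W = 4, 4
    rows = row_major_order(H, W)
    cols = column_major_order(H, W)
    # With 4 groups on a 4x4 map, each row-mode group is one image row
    # and each column-mode group is one image column.
    print(split_into_groups(rows, 4)[0])
    print(split_into_groups(cols, 4)[0])
    print(permutation(rows, cols))
```

The same set of tokens is attended in both modes. Only the grouping changes, which is what shifts the attention toward horizontal or vertical neighbors.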

3.4. Direction–Aware Block (DABlock)

To further enhance the direction-sensitive representation learning, we design a Direction-Aware Block (DABlock), as shown in Figure 6, that incorporates DAA within a residual Transformer-like structure comprising an attention branch and a lightweight MLP branch.

The DABlock injects horizontal and vertical inductive biases through directional attention reordering while maintaining computational simplicity. Given an input feature map, the DABlock follows a standard residual design consisting of an attention branch and a lightweight MLP branch, given by Equations (17) and (18), respectively, where the MLP is implemented as two 1 × 1 convolution layers, given by Equation (19), with r being the expansion ratio (mlp_ratio).
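A plausible form of this residual design is sketched below. It assumes the usual two-branch residual layout. The symbols X (input), X′ (output of the attention branch), Y (block output), C (channel count), and φ (an activation function, which the text does not specify) are notation introduced here for illustration, and any normalization layers are omitted.

```latex
% Assumed form of Equations (17)-(19); \phi is an unspecified activation.
\begin{align}
X' &= X + \mathrm{DAA}(X) \tag{17}\\
Y  &= X' + \mathrm{MLP}(X') \tag{18}\\
\mathrm{MLP}(Z) &= \mathrm{Conv}_{1\times 1}^{\,rC \to C}\Big(\phi\big(\mathrm{Conv}_{1\times 1}^{\,C \to rC}(Z)\big)\Big) \tag{19}
\end{align}
```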

3.4.1. Single-Direction Mode (Row/Col)

The DAA operates in either row or column mode, enabling axis-specific attention. This configuration is appropriate when the scene exhibits consistent orientation patterns. In the single-direction configuration, the attention mechanism applies spatial reordering along either the horizontal or vertical axis:

- i. Row mode: performs row-wise flattening, emphasizing horizontal dependencies among features. This configuration is particularly effective for capturing edge-like or lane-aligned patterns, where lateral continuity dominates the spatial context.

- ii. Column mode: performs column-wise flattening, emphasizing vertical dependencies. It is well-suited for detecting upright objects such as poles, pedestrians, and traffic signs that exhibit strong vertical structures.

Mathematically, the input feature is processed as given in Equation (20), where the direction parameter is set to either row or col.

3.4.2. Dual-Direction Mode (Row/Col)

To jointly capture horizontal and vertical correlations, we introduce a dual-branch configuration. Two DAA modules operate in parallel on the same input x, as given by Equations (21) and (22).

Their outputs are adaptively fused through a channel-wise learnable coefficient, as given by Equation (23), where each channel learns its own directional preference between horizontal and vertical attention. This adaptive fusion enables the block to selectively integrate both types of spatial cues according to the structural properties of the input scene, improving robustness under varying camera orientations, wave motions, and target poses in maritime UAV scenes.
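The channel-wise fusion can be sketched as a per-channel convex combination of the two branch outputs. The exact form of Equation (23) is not reproduced here, so the sigmoid-bounded weighting below is an assumption, and the function and parameter names are hypothetical.

```python
import math

# Illustrative sketch: a per-channel blend of row- and column-mode outputs.
# The sigmoid parameterisation is an assumption, not the paper's Equation (23).


def sigmoid(v: float) -> float:
    """Map an unconstrained scalar to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))


def fuse_directions(row_out, col_out, alpha_logits):
    """Blend two branch outputs with one coefficient per channel.

    row_out, col_out: lists of channels, each channel a flat list of values.
    alpha_logits: one unconstrained (learnable) scalar per channel.
    """
    if not (len(row_out) == len(col_out) == len(alpha_logits)):
        raise ValueError("channel counts must match")
    fused = []
    for r_ch, c_ch, logit in zip(row_out, col_out, alpha_logits):
        a = sigmoid(logit)  # weight of the row branch for this channel
        fused.append([a * r + (1.0 - a) * c for r, c in zip(r_ch, c_ch)])
    return fused


if __name__ == "__main__":
    row = [[1.0, 2.0], [1.0, 2.0]]
    col = [[3.0, 4.0], [3.0, 4.0]]
    # Channel 0 weighs both directions equally; channel 1 favours the row branch.
    print(fuse_directions(row, col, [0.0, 4.0]))
```

A logit of zero weighs both directions equally, while large positive or negative logits let a channel commit almost entirely to the row or the column branch.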

3.5. Dual-Directional Cross-Connected Fusion (D2C2f) Block

To efficiently integrate direction-aware attention into a lightweight backbone, we propose a Dual-Directional Cross-Connected Fusion Block (D2C2f). As shown in Figure 6, the D2C2f block combines multi-branch processing, directional attention, and residual scaling to enhance both spatial diversity and feature stability.

The proposed D2C2f block is designed with a modular yet efficient architecture that integrates several functional components in a unified framework. A transition layer serves as an optional interface to adjacent network stages, ensuring resolution consistency and smooth feature flow across scales. The subsequent 1 × 1 convolution performs channel compression, reducing computational overhead while preserving key semantic information before multi-block fusion. Within the core of the module, the two DA Blocks are sequentially applied to adaptively extract both horizontal and vertical contextual dependencies, allowing the model to learn direction-sensitive representations. The intermediate outputs from these directional branches are concatenated and fused through another 1 × 1 convolution, which aggregates multi-branch information into a compact feature space. Finally, a learnable residual scaling factor γ modulates the contribution of the fused output to the residual path, enabling dynamic control of feature blending strength and stabilizing the overall optimization process.

Beyond its architectural clarity, the D2C2f block exhibits several notable advantages. First, the directional fusion mechanism effectively combines row- and column-oriented attentions, enabling anisotropic feature learning that aligns with the geometric structures of real-world scenes. Second, the progressive enhancement achieved by stacking two DABlocks enlarges the receptive field and enriches the multi-directional context. Third, the residual scaling parameter γ not only stabilizes training by constraining gradient magnitude in early epochs but also allows fine-grained modulation of residual intensity. Finally, due to its lightweight and modular design, the D2C2f block can be seamlessly integrated into modern detection and segmentation backbones without incurring significant computational costs.

4. Experimental Results

This section presents a comprehensive experimental evaluation of the proposed maritime tiny object detection framework, aiming to assess its accuracy and stability under real-world deployment conditions. The experiments are designed to analyze the impact of individual enhancement modules on overall performance and to examine the consistency of results across different backbone architectures. The effectiveness of the proposed improvements is thoroughly validated using both quantitative metrics, such as mAP@50 and F1-score, and qualitative prediction outcomes.

Firstly, we introduce the datasets used in the experiments, including their sources and the distribution of object sizes, along with an analysis of the challenges they pose for training and evaluation. Secondly, we outline the evaluation metrics adopted in this paper, such as precision, recall, mean average precision (mAP), and F1-score, which serve as the basis for comparing different architectural designs.

4.1. Dataset Introduction

To comprehensively evaluate its effectiveness and generalization capability, the proposed method is evaluated on two datasets. The COCO dataset [54] features a relatively uniform distribution of object sizes and is widely used for general object detection tasks across diverse scenes. The SeaDronesSee dataset [55] is specifically designed for maritime UAV applications, where most targets are tiny objects captured from long distances, making the detection task notably more challenging. By conducting experiments on both datasets, we aim to investigate the performance gap and potential advantages of our model in both general and tiny object detection scenarios.

The COCO dataset [54] is one of the most widely used open-source benchmarks for object detection. It contains 80 categories commonly found in everyday scenes, including person, car, bicycle, airplane, dog, and more. Renowned for its diversity and dense annotations, the COCO dataset captures complex visual conditions such as cluttered backgrounds, occlusions, and multi-scale targets, making it suitable for evaluating a model’s generalization capabilities.

In this paper, the COCO dataset [54] is used as a benchmark to compare detection performance between standard-sized and extremely tiny objects. The dataset consists of 117,266 training images and 5000 validation images, each annotated with bounding boxes and object classes, as shown in Figure 7.

Table 1 presents the object counts and proportions across various area ranges of the COCO dataset. As shown, the majority of labeled objects are relatively large: about 75% of the instances have an area greater than 32² pixels. Specifically, 35.25% of the instances fall in the 32²–96² range, while 39.67% exceed 96² pixels. In contrast, only 0.16% of the objects are smaller than 4² pixels, and fewer than 7% fall under the 16² pixel threshold.

Figure 8 shows an overview of the icons used for representing the 80 object categories in the COCO dataset [54].

SeaDronesSee [55] is a dataset specifically designed for object detection in maritime search and rescue scenarios. As listed in Table 2, it includes five object categories relevant to sea-based activities: swimmer, boat, jetski, life-saving appliances, and buoy. The images are primarily captured in open sea environments, offering high realism and practical value for evaluating detection algorithms in real-world UAV maritime applications.

The dataset comprises 8929 training images and 1547 validation images, with over 57,000 annotated instances. Among them, the swimmer category accounts for the largest portion, with 37,081 annotations, followed by boats and buoys. As shown in Table 3, the majority of annotated objects are small in size, with over 70% of the instances having an area smaller than 16² pixels. Specifically, objects with areas between 8² and 16² constitute 36.72% of the data, and those between 4² and 8² make up 27.29%.

This strong skew toward tiny object annotations, shown in Figure 9, presents significant challenges for object detection models and requires enhanced precision and robustness. As such, SeaDronesSee serves as an ideal benchmark for evaluating the effectiveness of the proposed detection framework in capturing tiny maritime targets under noisy, low-contrast, and cluttered conditions.
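The size statistics quoted for both datasets come from grouping annotations by bounding-box area. The sketch below shows one way to compute such a breakdown. It assumes buckets bounded at 4², 8², 16², 32², and 96² pixels with the lower bound inclusive. The function names and the boundary convention are illustrative and may differ from how Tables 1 and 3 were produced.

```python
from collections import Counter

# Assumed bucket edges (pixel area) and labels; the boundary convention
# (lower bound inclusive) is an illustrative choice.
AREA_EDGES = [4**2, 8**2, 16**2, 32**2, 96**2]
LABELS = ["<4^2", "4^2-8^2", "8^2-16^2", "16^2-32^2", "32^2-96^2", ">=96^2"]


def area_bucket(width: float, height: float) -> str:
    """Return the label of the area range that a width x height box falls into."""
    area = width * height
    for edge, label in zip(AREA_EDGES, LABELS):
        if area < edge:
            return label
    return LABELS[-1]


def bucket_shares(boxes) -> dict:
    """Fraction of boxes per area bucket; boxes is a list of (width, height)."""
    counts = Counter(area_bucket(w, h) for w, h in boxes)
    total = len(boxes)
    return {label: (counts[label] / total if total else 0.0) for label in LABELS}


if __name__ == "__main__":
    # Made-up boxes for demonstration; not taken from COCO or SeaDronesSee.
    demo = [(3, 3), (10, 10), (10, 10), (100, 100)]
    for label, share in bucket_shares(demo).items():
        print(f"{label:>10}: {share:.0%}")
```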

4.2. Evaluation Metrics

Following standard practice in object detection literature, the performance of the proposed method is measured using the standard COCO-style metrics:

Mean Average Precision (mAP): The average AP over all classes. We specifically report mAP50 (AP at IoU = 0.50) for general performance and mAP50:95 (AP averaged from IoU = 0.50 to 0.95 with steps of 0.05) for robust localization accuracy.

APS: Average Precision for Small objects (area < 32² pixels). This metric is critical for validating the effectiveness of the DAA and the HRFF components.

Inference Speed, Frames Per Second (FPS): 25 FPS, measured on the target deployment platform, an NVIDIA GeForce RTX 3090 GPU (sourced from a vendor in Taiwan), to quantify real-time feasibility.

Model Size (Parameters): The total number of trainable parameters, measured in millions (M).

In addition to mAP, the COCO evaluation protocol provides several complementary metrics to capture performance at different levels of localization precision and detection coverage. Specifically, AP50, AP75, and AP95 denote the average precision computed at IoU thresholds of 0.50, 0.75, and 0.95, respectively. A higher IoU threshold (e.g., AP95) reflects stricter requirements for bounding box alignment, thus emphasizing fine-grained localization accuracy. Conversely, AP50 represents a more tolerant setting that highlights the model’s general ability to detect objects. On the other hand, Average Recall (AR) focuses on how many true objects are successfully detected regardless of localization precision. AR1 and AR10 measure recall when only 1 or 10 detections per image are allowed, respectively, illustrating the model’s capability to avoid missed detections under limited prediction budgets.
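Because stricter IoU thresholds penalize small localization errors, they are especially demanding for tiny objects. The sketch below computes IoU for axis-aligned boxes and lists which of the ten thresholds used by mAP50:95 a prediction satisfies. The one-pixel-shift scenario and the helper names are illustrative assumptions, not results from the paper.

```python
# Illustrative sketch; boxes are (x1, y1, x2, y2) with x2 > x1 and y2 > y1.

def iou(box_a, box_b) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# IoU thresholds 0.50, 0.55, ..., 0.95 averaged by mAP50:95.
COCO_IOU_THRESHOLDS = [round(0.50 + 0.05 * k, 2) for k in range(10)]


def thresholds_passed(pred, gt) -> list:
    """Thresholds at which the prediction would count as a true positive."""
    score = iou(pred, gt)
    return [t for t in COCO_IOU_THRESHOLDS if score >= t]


if __name__ == "__main__":
    # The same one-pixel horizontal shift applied to an 8x8 and a 64x64 target.
    for side in (8, 64):
        gt = (0, 0, side, side)
        pred = (1, 0, side + 1, side)
        print(side, round(iou(pred, gt), 3), len(thresholds_passed(pred, gt)))
```

In this toy case, a one-pixel shift leaves a 64 × 64 box above every threshold, while the 8 × 8 box drops to an IoU of about 0.78 and fails at 0.80 and above.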

Together, these indicators offer a comprehensive evaluation: AP metrics emphasize precision and localization quality, while AR metrics assess coverage and completeness. Therefore, analyzing both perspectives enables a more balanced understanding of model performance, particularly when optimizing for different application constraints such as detection accuracy versus computational efficiency.

In contrast, the F1-score is computed at a fixed threshold, capturing a balanced trade-off between precision and recall. It offers a more stable and interpretable value, particularly when applications rely on predefined detection thresholds. The F1-score is defined as given by Equation (24).
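Assuming Equation (24) is the standard harmonic-mean definition, F1 = 2PR / (P + R) with precision P = TP / (TP + FP) and recall R = TP / (TP + FN), the score at a fixed confidence threshold can be computed from detection counts as in the following sketch. The function name and the zero-division convention are illustrative choices.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1-score from true positives, false positives, and false negatives.

    Returns 0.0 when precision and recall are both undefined or zero
    (an illustrative convention).
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Made-up counts at one confidence threshold, for demonstration only.
    print(f1_score(tp=80, fp=20, fn=40))
```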

As shown in Figure 10, although Model A exhibits a lower overall mAP than Model B, it achieves a higher F1-score at a specific confidence threshold. This indicates that Model A may deliver better performance under fixed operational conditions, providing more reliable detection outcomes. Therefore, in addition to mAP, this paper emphasizes the practical relevance of F1-score in reflecting a model’s real-world effectiveness.

4.3. Implementation Details

The proposed YOLO-DAA framework was implemented using PyTorch version 2.2.2, running on a system equipped with an NVIDIA RTX 3090 GPU with 24 GB of memory, a 12th Gen Intel(R) Core(TM) i7-12700K CPU, and 128 GB of system RAM. The experiments were conducted using a batch size of 16, with the total training time amounting to 3~14 days. The environment was configured with CUDA version 12.3 and cuDNN version 8.9.2 to ensure stable and efficient GPU utilization.

For optimization, we employed Stochastic Gradient Descent (SGD) with an initial learning rate of 0.01, following a linear warm-up strategy. The model was trained for 300 epochs, incorporating data augmentation techniques such as random flipping, Mosaic, MixUp, and scaling to enhance generalization and robustness.
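A linear warm-up of the kind mentioned above can be sketched as follows. Only the base learning rate of 0.01 comes from the text. The warm-up length of three epochs is a hypothetical value, and the schedule after warm-up is not specified here, so the sketch simply holds the base rate.

```python
def warmup_lr(epoch: int, base_lr: float = 0.01, warmup_epochs: int = 3) -> float:
    """Learning rate for a given epoch under linear warm-up.

    warmup_epochs is a hypothetical value; after warm-up the base rate is
    held constant as a placeholder for whatever decay schedule is used.
    """
    if warmup_epochs > 0 and epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    return base_lr


if __name__ == "__main__":
    for epoch in range(5):
        print(epoch, warmup_lr(epoch))
```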

All experiments were executed under a consistent software environment based on Python 3.11.11 to ensure reproducibility. The implementation and training pipeline adhere to standard practices for real-time UAV-based object detection.

4.4. Ablation Study

To rigorously validate the impact of the proposed DAA module and the HRFF strategy, we conducted an ablation study using the YOLOv8-Tiny baseline. The results are summarized in Table 4.

Effect of HRFF (A vs. B): The addition of the HRFF head, which introduces an extra 160 × 160 detection layer, yields a measurable increase of 1.3% in APS. This confirms the utility of high-resolution feature maps for detecting maritime tiny objects, which often have dimensions less than 32 × 32 pixels.

Effect of DAA (A vs. C): Integrating the DAA module into the backbone provides a significant 2.6% boost in APS. Since the parameter count remains similar to the baseline, this improvement is attributed purely to the DAA’s ability to use directional and localized attention to suppress water clutter and amplify sparse target features.

Combined Framework (D): Combining the DAA module and the HRFF strategy in YOLO-DAA achieves the highest performance, a 4.9% increase in APS over the baseline. This exceeds the sum of the individual gains (1.3% + 2.6% = 3.9%), which suggests that the two components reinforce each other and address complementary limitations of the baseline model.
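The benefit of the additional 160 × 160 detection layer can be illustrated with simple arithmetic on feature-map strides. The sketch below assumes a 640 × 640 input, for which a 160 × 160 grid corresponds to a stride of 4, alongside the standard YOLOv8 head strides of 8, 16, and 32; the function names are hypothetical.

```python
def grid_size(input_size, stride):
    """Side length (in cells) of the feature map produced at a given stride."""
    return input_size // stride


def footprint(object_px, stride):
    """Approximate side length (in cells) that an object covers at a stride."""
    return object_px / stride


INPUT_SIZE = 640          # assumed input resolution
STRIDES = (4, 8, 16, 32)  # stride 4 is the extra high-resolution head

for stride in STRIDES:
    cells = grid_size(INPUT_SIZE, stride)
    # Footprint of a 16 x 16 pixel target, well below the 32 x 32 bound.
    print(f"stride {stride:2d}: grid {cells} x {cells}, "
          f"16 px target spans {footprint(16, stride)} cells")
```

Under these assumptions, a 16 × 16 pixel target spans 4 cells per side at stride 4 but only half a cell at stride 32, which is consistent with the observation that tiny objects are better preserved on the high-resolution head.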

4.5. Model Complexity Comparison

Table 5 presents the model complexity comparison between the proposed YOLO-DAA and the baseline YOLOv8 under different model scales (n, s, l) and input resolutions.

The results indicate that the proposed YOLO-DAA maintains parameter counts comparable to those of YOLOv8, with only a slight increase in computational cost for the smallest model (1.8 additional GFLOPs for the n variant at 640 × 640), despite the introduction of directional row–column attention modules. Notably, the s and l variants exhibit a moderate rise in computational cost (5.1 and 123.5 additional GFLOPs, respectively), which is attributed to the expanded multi-directional feature interactions.

Overall, the increase in FLOPs is acceptable relative to the expected accuracy improvement, suggesting that the proposed module achieves a good balance between performance and efficiency across different scales.

4.6. Performance Comparison on the COCO Dataset

As shown in Table 5 and Table 6, the proposed YOLO-DAA model achieves a superior accuracy–efficiency balance through directional spatial attention.

Although the addition of the DAA module slightly increases the number of parameters and GFLOPs (e.g., by 1.8 GFLOPs for the n variant and 5.1 GFLOPs for the s variant), the overall computation remains lightweight and suitable for real-time deployment. In terms of detection performance on the COCO dataset, YOLO-DAA-s improves from 47.6% to 48.1% mAPval@.5:.95, which indicates that the proposed attention mechanism enhances feature adaptivity and spatial discriminability. The slight performance decrease observed in the n variant (−1.3%) can be attributed to its limited feature capacity, where the attention benefits are not fully exploited under a minimal parameter budget.

Overall, the proposed YOLO-DAA demonstrates that directional attention can improve representation quality with only minor computational overhead, achieving an excellent trade-off between accuracy and efficiency. This characteristic makes it highly suitable for UAV-based and embedded vision applications where both real-time performance and detection precision are critical.

4.7. Performance Evaluation on the SeaDronesSee Dataset

Table 6 and Table 7 summarize the quantitative evaluation results of the proposed YOLO-DAA model and the baseline YOLOv8 on the SeaDronesSee dataset. Both models were trained on the official training split and evaluated on the testing split under identical conditions.

As shown in Table 7, YOLO-DAA consistently outperforms YOLOv8 across all model scales (n, s, l) and evaluation metrics, including AP95, AP50, AP75, and the recall indicators AR1 and AR10.

Notably, the lightweight YOLO-DAA-n achieves a substantial improvement in AP95 from 7.2% to 17.07%, revealing its enhanced robustness for small or occluded objects. Similarly, the larger variants (YOLO-DAA-s and YOLO-DAA-l) show higher precision and recall, indicating more stable detection performance and better localization under complex aerial scenes. These findings demonstrate that the proposed DAA module effectively reinforces spatial perception and directional feature fusion, leading to overall performance gains.

Although the performance gains for Swimmer, Boat, and Buoy are relatively small, YOLO-DAA-l maintains comparable precision while improving feature generalization and spatial consistency.

4.8. Comparison with State-of-the-Art Methods

We compare the full YOLO-DAA framework against a selection of SOTA lightweight detectors, including CNN-based models (YOLOv8n, EfficientDet-D0, YOLOv10) and a Transformer-based model (RT-DETR). The results, measured on the SeaDronesSee test set, are detailed in Table 8.

The results demonstrate the superior performance of YOLO-DAA for maritime tiny object detection:

APS Dominance: YOLO-DAA achieves the highest APS at 16%, notably surpassing the next best model, RT-DETR, by 3.0 points and the direct baseline, YOLOv8n, by 4.7 points. This validates that the targeted DAA and HRFF modifications successfully optimize feature representation for tiny objects, compensating for the high background clutter in maritime UAV images.

Efficiency and Latency: While YOLOv8n achieves the highest FPS due to its ultra-minimalist C2f modules, YOLO-DAA maintains highly competitive real-time performance (98 FPS) with a similar parameter count (3.5M vs. 3.2M). Crucially, YOLO-DAA provides an approximately 40% relative improvement in APS over YOLOv8n at only a marginal cost in speed (−14 FPS).

Architecture Validation: Although the Transformer-based RT-DETR shows strong mAP, its large parameter count and lower FPS confirm the architectural premise: lightweight CNNs enhanced with specialized attention (DAA) and fusion (HRFF) offer a more suitable trade-off for resource-constrained UAV deployment than heavy Transformer frameworks.
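The relative figures quoted in the comparison above can be reproduced from the absolute numbers with a short calculation. The sketch uses only the values stated in this subsection (an APS of 16% with a 4.7-point margin over YOLOv8n, and 98 FPS with a 14 FPS reduction); the function names are hypothetical, and the baseline values are derived rather than read from Table 8.

```python
def baseline_from(new_value, delta):
    """Recover the baseline value from the new value and the reported change."""
    return new_value - delta


def relative_change(new_value, baseline):
    """Change of new_value relative to the baseline, as a fraction."""
    return (new_value - baseline) / baseline


# Tiny-object accuracy: 16% APS, 4.7 points above YOLOv8n.
aps_baseline = baseline_from(16.0, 4.7)          # about 11.3
aps_gain = relative_change(16.0, aps_baseline)   # about 0.416

# Speed: 98 FPS, 14 FPS below YOLOv8n.
fps_baseline = baseline_from(98, -14)            # 112
fps_change = relative_change(98, fps_baseline)   # -0.125

print(f"relative APS gain: {aps_gain:.1%}, relative FPS change: {fps_change:.1%}")
```

Under these numbers, a relative gain of roughly 40% in APS is obtained for a relative speed reduction of 12.5%, which is the trade-off the comparison emphasizes.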

4.9. Comparative Analysis with State-of-the-Art Lightweight Detectors

To contextualize the performance and architectural efficiency of the proposed YOLO-DAA framework, we benchmark its design principles against several prominent lightweight and high-performing object detection models, specifically focusing on their suitability for resource-constrained UAV deployment and their effectiveness in tiny object detection, as tabulated in Table 9.

The selection of YOLOv8-Tiny as the base model is crucial as it already provides an optimized balance of speed and parameter count (approx. 3.2M parameters for YOLOv8n). The architectural modifications in YOLO-DAA are justified by addressing the specific failure modes of these SOTA methods in the maritime UAV context:

Addressing Generic Feature Extraction (vs. YOLOv8n): While YOLOv8n is fast, its backbone modules (C2f) are general-purpose. The DAA module is a specialized attention layer engineered to filter the directional, anisotropic noise inherent in low-contrast maritime images, which notably boosts the feature activation of small targets.

Addressing Inference Latency (vs. RT-DETR): The prohibitive computational cost of Transformer-based models such as RT-DETR, particularly its decoder, prevents deployment on very low-power UAV edge devices. YOLO-DAA keeps the entire model CNN-based for true real-time, low-power operation.

Addressing Feature Loss (vs. EfficientDet-D0): By incorporating the HRFF structure, YOLO-DAA explicitly utilizes the high-resolution features from the earliest backbone layers, directly counteracting the down-sampling loss that even well-designed FPNs (like BiFPN) struggle to fully recover from when dealing with extremely tiny objects.

5. Conclusions

This work presented YOLO-DAA, a novel, lightweight, highly efficient, and directionally aware framework tailored for the challenging task of tiny object detection in maritime UAV imagery. Recognizing the dual constraints of limited computational resources on UAV platforms and the inherent difficulty of detecting sparse, low-resolution targets against cluttered backgrounds, the YOLO-DAA architecture was designed to leverage the speed of YOLOv8-Tiny while incorporating specialized feature optimization components.

The proposed architecture integrates three complementary components, the Spatial Reconstruction Unit (SRU), the Directional Area Attention (DAA) module, and the High-Resolution Feature Fusion (HRFF) strategy, which were empirically validated through experimentation and jointly enhance the model’s ability to retain fine spatial details and capture anisotropic dependencies commonly observed in maritime scenes. The SRU improves representational quality by suppressing redundant activations and reconstructing informative feature channels. The DAA module combines directional context with localized area focus: it explicitly encodes horizontal and vertical relationships through controllable spatial reordering, which improves contextual reasoning for tiny and elongated targets and yields a substantial improvement in tiny object detection metrics by suppressing background noise. The HRFF strategy complements this by retaining and utilizing fine-grained spatial information from the earliest stages of the backbone.

To further strengthen multi-scale learning, the D2C2f module combines directional attention with efficient feature fusion and residual scaling, enabling stable optimization and enhanced feature diversity without substantial computational overhead. These design choices collectively allow YOLO-DAA to maintain the efficiency of compact CNN architectures while achieving detection accuracy comparable to or surpassing more complex detectors.

Extensive experiments on the COCO and SeaDronesSee benchmarks demonstrate the effectiveness of the proposed method. YOLO-DAA consistently outperforms YOLOv12-turbo across multiple model scales, with the lightweight YOLO-DAA-n achieving a significant 12.5% improvement in AP95. The model also records notable gains across AP50, AP75, and AR metrics, confirming its superior localization precision and reduced sensitivity to scale variation. Per-class results highlight improved robustness in detecting irregular objects such as jetskis and life-saving appliances, underscoring the generality of the directional attention mechanism.

Collectively, the proposed YOLO-DAA framework achieved a notable 4.9% gain in APS over the baseline YOLOv8-Tiny model. Furthermore, in comparison with contemporary SOTA lightweight detectors, including YOLOv10n and RT-DETR-R18, YOLO-DAA secured the highest APS on the SeaDronesSee benchmark (16.5%), confirming its superior feature extraction capability for extremely small targets while maintaining a real-time inference speed of 98 FPS on the target edge device. This performance establishes YOLO-DAA as a highly effective and practical solution for resource-constrained maritime surveillance applications.

Given its strong accuracy–efficiency trade-off, YOLO-DAA is well-suited for real-time maritime surveillance, UAV search and rescue operations, and embedded edge-based perception systems. Future work will explore extending the direction-aware attention framework to multi-task perception, including instance segmentation and tracking. Additionally, integrating self-supervised pretraining and temporal cues from aerial video streams may further enhance the model’s robustness under adverse environmental conditions and ultra-tiny object scenarios.

We also plan to integrate the DAA module into a dynamic spatial-temporal attention mechanism to exploit video coherence, and to conduct extensive deployment tests on various low-power UAV hardware (e.g., field-testing on the NVIDIA Jetson Orin series) to validate the real-world robustness and power efficiency of YOLO-DAA in diverse environmental conditions.