A Classification Algorithm of UAV and Bird Target Based on L/K Dual-Band Micro-Doppler and Mamba

Highlights

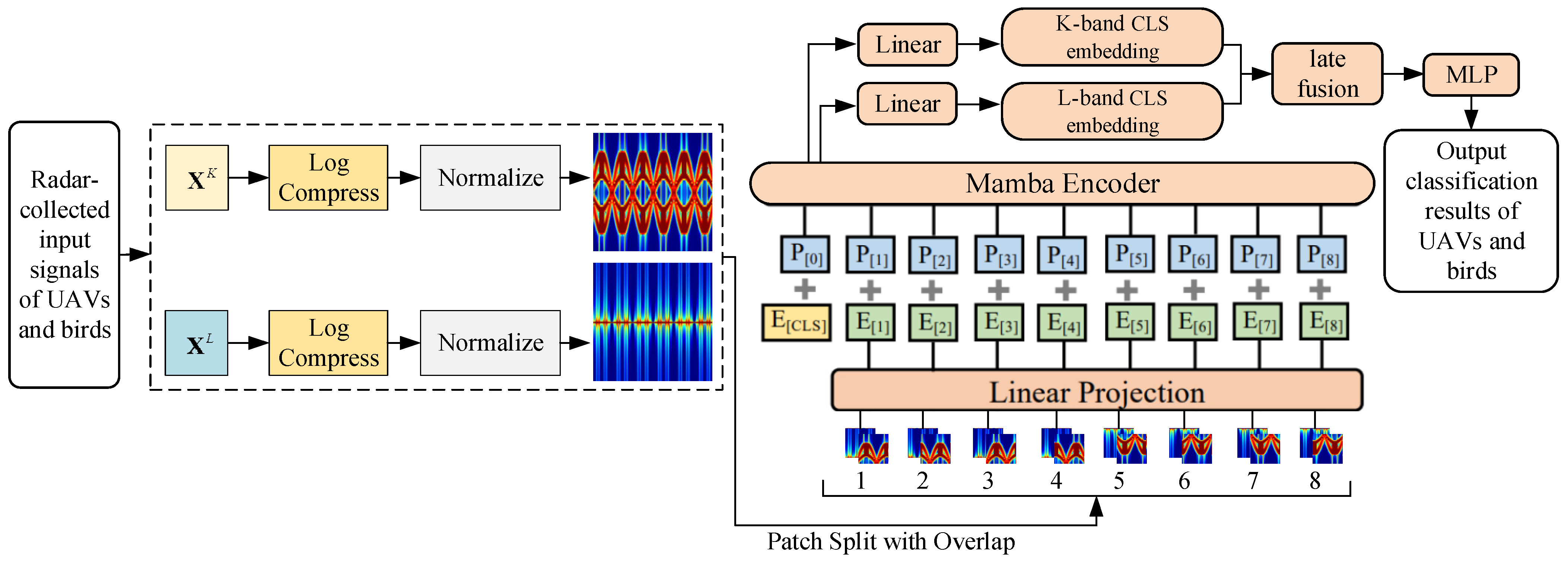

- We introduce a patch-tokenization mechanism for two-dimensional micro-Doppler spectrograms, which unifies the input representation under a sequence-modeling framework and benefits the processing of radar echo signals.

- We propose a micro-Doppler spectrogram classification architecture based on a state-space model, and construct a classification framework for unmanned aerial vehicles (UAVs) and birds that uses parallel dual-branch encoding of the L-band and K-band with late fusion (LF) to classify and recognize the target.

- The first major finding is that the proposed patch serialization mechanism for two-dimensional micro-Doppler spectrograms can effectively improve the processing of radar echo signals.

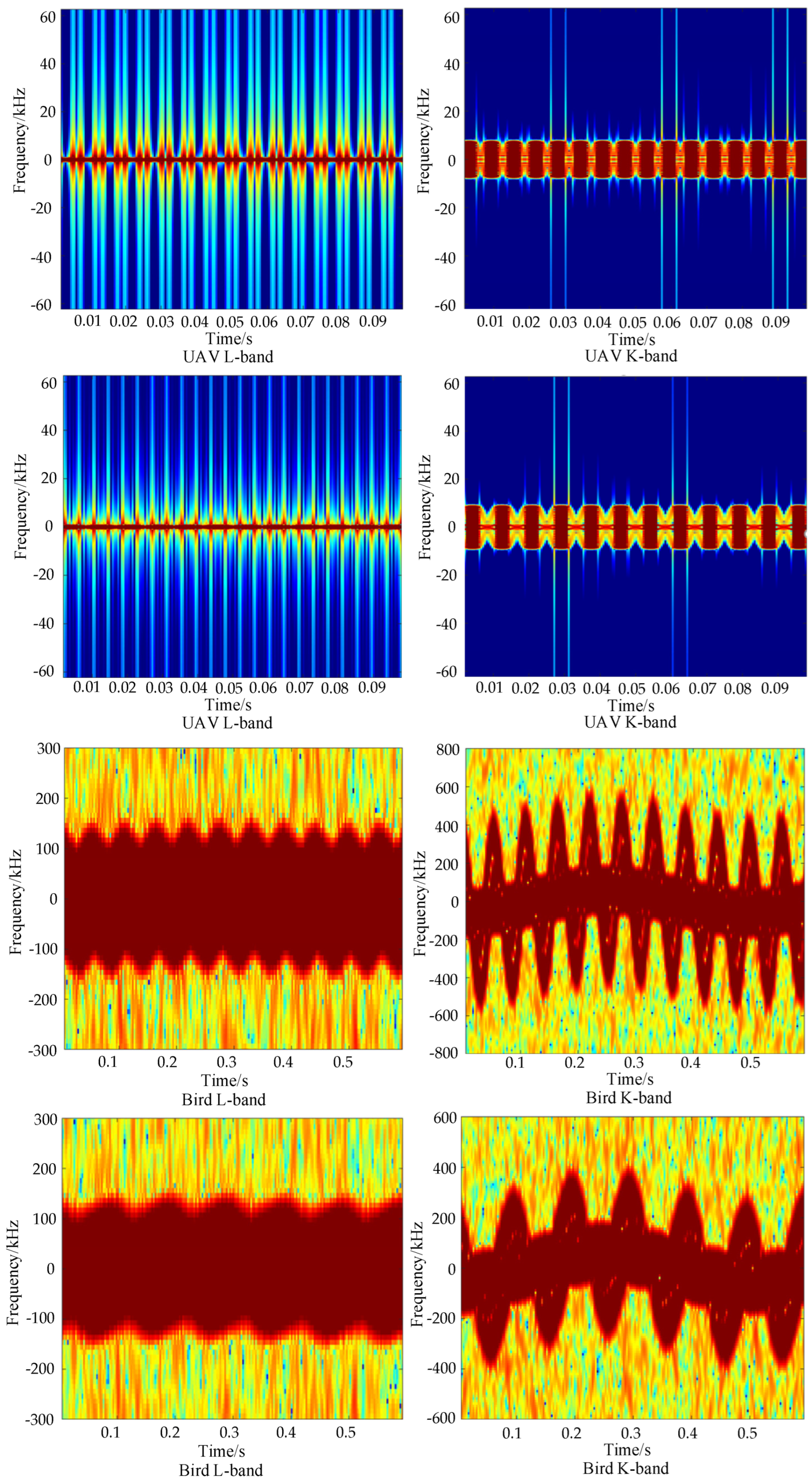

- The second major finding is the proposed algorithm for identifying UAV and bird targets from dual-band radar signals. At the level of physical mechanisms, the recognition network fully leverages the complementary information of the two bands in Doppler scale and micro-motion texture details, enabling highly accurate classification of radar echo signals.

Abstract

1. Introduction

- (1) A patch-tokenization mechanism is introduced to process two-dimensional micro-Doppler spectrograms. By mapping the time-frequency distribution into sequences of feature vectors, a unified input representation within the sequence modeling paradigm is achieved. This preserves local time-frequency correlations while providing a standardized input pipeline for isomorphic feature extraction and subsequent fusion of multi-band data.

- (2) A micro-Doppler spectrogram classification architecture based on a state-space model is proposed. The designed architecture utilizes the linear recurrence property of Mamba to replace the traditional self-attention (SA) mechanism. While maintaining the capability for modeling long-term micro-Doppler sequences, it effectively reduces computational complexity and memory overhead in large-scale time-frequency data processing.

- (3) A classification framework is constructed based on parallel L-band and K-band dual-branch encoding and late fusion (LF) to accomplish the classification and recognition of the target. From the perspective of physical mechanisms, the designed classification framework fully leverages the complementary information provided by the two bands regarding Doppler scale and micro-motion texture details. A combined loss function oriented towards dual-branch collaborative learning is introduced to jointly constrain the discriminative capability of individual branches and the consistency and complementarity of the fused representations, thereby enhancing the stability and generalization performance of cross-band joint discrimination.
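
The patch-tokenization step in contribution (1) can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the spectrogram shape (64 x 128), the 16 x 16 patch size, the embedding width of 32, and the helper names `patchify` and `embed` are all assumptions chosen for illustration, and positional encoding is omitted.

```python
import numpy as np


def patchify(spec: np.ndarray, patch_h: int, patch_w: int) -> np.ndarray:
    """Cut a (freq, time) spectrogram into non-overlapping patches, one flat token per patch."""
    n_freq, n_time = spec.shape
    if n_freq % patch_h or n_time % patch_w:
        raise ValueError("spectrogram shape must be divisible by the patch size")
    rows, cols = n_freq // patch_h, n_time // patch_w
    # (rows, patch_h, cols, patch_w) -> (rows, cols, patch_h, patch_w)
    blocks = spec.reshape(rows, patch_h, cols, patch_w).transpose(0, 2, 1, 3)
    # Row-major patch order: tokens sweep along time within each frequency band.
    return blocks.reshape(rows * cols, patch_h * patch_w)


def embed(tokens: np.ndarray, weight: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """Linear projection of flat patches to the model width."""
    return tokens @ weight + bias


rng = np.random.default_rng(0)
spec_l = rng.random((64, 128))  # hypothetical L-band spectrogram
spec_k = rng.random((64, 128))  # hypothetical K-band spectrogram, same shape
# Sharing one projection across bands is an assumption made only to keep the sketch short.
weight = rng.normal(size=(16 * 16, 32)) * 0.02
bias = np.zeros(32)

tokens_l = embed(patchify(spec_l, 16, 16), weight, bias)
tokens_k = embed(patchify(spec_k, 16, 16), weight, bias)
print(tokens_l.shape, tokens_k.shape)  # (32, 32) (32, 32)
```

Because both bands pass through the same tokenization, each branch receives a sequence of identical length and width, which is what makes the later fusion step straightforward.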

2. Related Work

3. The Overall Research Framework and Ideas

4. Radar Echo Modeling for UAVs and Birds Based on Micro-Doppler Signatures

4.1. UAV Echo Model and Micro-Doppler Parametric Representation

4.2. Flapping Bird Echo Model and Parametric Representation of Micro-Doppler

5. Classification Algorithm Based on L/K Dual-Band Micro-Doppler and Mamba

5.1. L/K Dual-Band Micro-Doppler Spectrogram Representation and Positional Encoding

5.2. Mamba-Based SSM Spectrogram Encoding
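
As a rough illustration of the linear recurrence that a Mamba-style state-space encoder relies on, the following NumPy sketch runs a sequential scan over a token sequence. It is a simplified stand-in, not the paper's encoder and not the official Mamba kernel: the function name `selective_scan`, the projection matrices, and all dimensions are assumptions, and gating, convolution, and normalization layers are left out.

```python
import numpy as np


def softplus(z: np.ndarray) -> np.ndarray:
    """Numerically stable log(1 + exp(z)); keeps the step size positive."""
    return np.logaddexp(0.0, z)


def selective_scan(x, A, W_delta, W_B, W_C):
    """Simplified selective SSM scan.

    x: (L, D) tokens; A: (D, N) negative diagonal state matrix;
    W_delta: (D, D), W_B: (D, N), W_C: (D, N) make the step size,
    input matrix, and output matrix depend on the current token.
    Returns y: (L, D).
    """
    L, D = x.shape
    N = A.shape[1]
    h = np.zeros((D, N))                    # hidden state, one N-vector per channel
    y = np.empty((L, D))
    for t in range(L):                      # one pass: cost grows linearly with L
        delta = softplus(x[t] @ W_delta)    # (D,) input-dependent step size
        B = x[t] @ W_B                      # (N,)
        C = x[t] @ W_C                      # (N,)
        A_bar = np.exp(delta[:, None] * A)  # zero-order-hold discretization of A
        B_bar = delta[:, None] * B[None, :] # first-order approximation for B
        h = A_bar * h + B_bar * x[t][:, None]
        y[t] = h @ C
    return y


rng = np.random.default_rng(0)
L, D, N = 10, 4, 3                          # hypothetical sizes
x = rng.normal(size=(L, D))
A = -np.exp(rng.normal(size=(D, N)))        # negative entries give a decaying state
W_delta = rng.normal(size=(D, D)) * 0.1
W_B = rng.normal(size=(D, N)) * 0.1
W_C = rng.normal(size=(D, N)) * 0.1

y = selective_scan(x, A, W_delta, W_B, W_C)
print(y.shape)  # (10, 4)
```

The loop touches each token once and carries a fixed-size state, so time and memory scale linearly with sequence length, whereas self-attention forms an L x L score matrix.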

5.3. L/K Dual-Branch Joint Classification Head
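
One common way to realize late fusion of two branch encoders is to pool each branch's token features, concatenate them, and apply a single linear classifier. The sketch below shows that variant under stated assumptions: the feature shapes, the mean pooling, the concatenation, and the name `late_fusion_head` are illustrative choices, and the paper's actual head may differ.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    """Row-wise softmax with max subtraction for numerical stability."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def late_fusion_head(feat_l, feat_k, weight, bias):
    """Fuse encoded L-band and K-band features and return class probabilities.

    feat_l, feat_k: (batch, tokens, dim) outputs of the two branch encoders.
    weight: (2 * dim, n_classes); bias: (n_classes,).
    """
    pooled_l = feat_l.mean(axis=1)          # (batch, dim) summary of the L-band branch
    pooled_k = feat_k.mean(axis=1)          # (batch, dim) summary of the K-band branch
    fused = np.concatenate([pooled_l, pooled_k], axis=-1)
    return softmax(fused @ weight + bias)


rng = np.random.default_rng(0)
batch, tokens, dim, n_classes = 5, 32, 16, 2  # hypothetical sizes; classes: UAV, bird
feat_l = rng.normal(size=(batch, tokens, dim))
feat_k = rng.normal(size=(batch, tokens, dim))
weight = rng.normal(size=(2 * dim, n_classes)) * 0.1
bias = np.zeros(n_classes)

probs = late_fusion_head(feat_l, feat_k, weight, bias)
print(probs.shape)  # (5, 2)
```

Fusing after the encoders lets each branch model its own band at its own Doppler scale before the classifier combines the two summaries.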

5.4. Joint Loss via Mutual Learning and Contrastive Learning

6. Experiments and Analysis

6.1. Dataset Construction

6.2. Experimental Setup

6.3. Analysis of Experimental Results

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Model | ACC | mAP | MF1 | KC | FLOPs (G) |
|---|---|---|---|---|---|
| VGG16 | 93.1 | 91.8 | 92.5 | 92.2 | 15.51 |
| ResNet50 | 94.9 | 93.7 | 94.1 | 93.2 | 6.25 |
| Swin Transformer | 95.8 | 95.1 | 94.8 | 94.3 | 23.64 |
| ConvNext | 94.3 | 93.6 | 93.9 | 93.3 | 15.41 |
| Proposed Algorithm | 97.5 | 96.2 | 95.6 | 95.2 | 18.95 |

| Experiment No. | Method | ACC | mAP | MF1 | KC |
|---|---|---|---|---|---|
| 1st | Swin Transformer | 95.5 | 94.7 | 94.6 | 94.1 |
| 1st | Ours | 97.2 | 95.9 | 95.2 | 94.8 |
| 2nd | Swin Transformer | 95.8 | 95.1 | 94.8 | 94.4 |
| 2nd | Ours | 97.4 | 96.2 | 95.6 | 95.2 |
| 3rd | Swin Transformer | 95.9 | 95.5 | 95.0 | 94.4 |
| 3rd | Ours | 97.7 | 96.4 | 95.9 | 95.5 |

| L/K Dual-Branch | Patching | Mamba-SSM | Late Fusion | Joint Loss | ACC | mAP | MF1 | KC |
|---|---|---|---|---|---|---|---|---|
| √ | × | × | × | × | 92.8 | 91.4 | 92.0 | 90.9 |
| √ | √ | × | × | × | 94.0 | 92.7 | 93.2 | 92.1 |
| √ | √ | √ | × | × | 95.4 | 94.1 | 94.6 | 93.6 |
| √ | √ | √ | √ | × | 96.8 | 95.5 | 95.0 | 94.3 |
| √ | √ | √ | √ | √ | 97.5 | 96.2 | 95.6 | 95.2 |
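
The step-by-step contribution of each component can be read off the ACC column of the ablation table with a few lines of arithmetic. The values below are copied from the table; the step labels are shorthand introduced here, and the gains are simple differences between consecutive rows, assuming ACC is reported on a 0 to 100 scale.

```python
# ACC values from the ablation table, in row order.
acc = [92.8, 94.0, 95.4, 96.8, 97.5]
steps = ["+ Patching", "+ Mamba-SSM", "+ Late Fusion", "+ Joint Loss"]

# Difference between each row and the one before it, rounded to one decimal.
gains = [round(after - before, 1) for before, after in zip(acc, acc[1:])]
total_gain = round(acc[-1] - acc[0], 1)

for step, gain in zip(steps, gains):
    print(f"{step}: +{gain}")
print(f"Total: +{total_gain}")
# + Patching: +1.2
# + Mamba-SSM: +1.4
# + Late Fusion: +1.4
# + Joint Loss: +0.7
# Total: +4.7
```

Read this way, the Mamba-SSM encoder and late fusion each add 1.4 points of ACC, patching adds 1.2, and the joint loss adds the final 0.7.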

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhang, T.; Song, X. A Classification Algorithm of UAV and Bird Target Based on L/K Dual-Band Micro-Doppler and Mamba. Drones 2026, 10, 265. https://doi.org/10.3390/drones10040265
