1. Introduction

When natural disasters such as earthquakes and flash floods strike, the communication infrastructure is often compromised. Therefore, establishing rapid emergency communication in disaster-affected areas is critical to the success of post-disaster rescue missions [1]. Providing flexible and responsive emergency communication services presents a significant challenge, primarily due to the highly dispersed and dynamic distribution of affected users and their random and unpredictable service demands. Unmanned aerial vehicles (UAVs), renowned for their flexible deployment and low cost, have demonstrated considerable potential in emergency communication [2]. Unlike conventional emergency communication technologies, UAV-assisted methods enable rapid and flexible deployment, unrestricted by ground traffic conditions [3,4]. However, optimizing UAV trajectories to provide reliable emergency communication for mobile users with stochastic service demands remains a challenging problem.

Significant research efforts have been undertaken in response to the aforementioned challenges, yielding a variety of targeted solutions with substantial theoretical and practical outcomes. Existing approaches can be broadly categorized into traditional optimization theory and machine learning-based methods. Specifically, traditional UAV trajectory optimization and resource allocation methods typically formulate the problem as a mathematical optimization program with objectives and constraints. Early studies often employed convex optimization [5,6], integer programming [7,8], or heuristic algorithms [9,10], solving the problem by decoupling its intertwined subproblems. For instance, building upon large-scale system analysis and the Dinkelbach method, the authors in [11] transformed the nonlinear fractional objective function into a sequence of difference-of-convex problems. These subproblems are solved within a block coordinate descent framework to update the UAV trajectory and IoT communication resources iteratively. The work in [12] decouples the complex nonlinear completion time minimization problem into a series of tractable convex subproblems. Employing a block coordinate descent framework, the algorithm alternately optimizes the UAV trajectory and communication resources. Non-convexity is resolved via the successive convex approximation method, thereby driving convergence to a feasible and efficient solution that yields significant system performance gains. To maximize the number of connected users for post-disaster data collection, Reference [13] proposed a particle swarm optimization (PSO) algorithm incorporating an adaptive inertia weight factor to determine the UAV’s optimal velocity and bandwidth allocation. To address the challenges of task assignment and path planning for multiple UAVs in dynamic environments, Reference [14] developed a receding horizon optimization framework based on an adaptive disturbance PSO algorithm. Furthermore, Reference [15] investigated the problem of deploying a minimum number of UAVs. It introduced a bio-inspired algorithm for UAV network link optimization, which significantly enhanced network transmission performance and service coverage. However, traditional optimization-based methods are often sensitive to initial conditions and algorithmic choices [16]. Inappropriate initial settings can severely degrade their performance. Furthermore, multi-dimensional coupled decision variables, such as user association and power control, render the joint UAV trajectory optimization and resource allocation problem highly nonlinear and non-convex [17]. This complexity makes it challenging to model the system accurately using mathematical formulations. Consequently, solutions derived from traditional optimization approaches generally struggle to guarantee a global optimum.

Traditional methods for UAV trajectory optimization and resource allocation primarily rely on static optimization or pre-defined trajectories, which struggle to adapt to the dynamic changes in user distribution and real-time fluctuations in service demands. In practical communication scenarios, the mobility of ground users, the randomness of service demands, and the time-varying nature of channel conditions collectively form a highly complex dynamic system. Although existing mathematically driven optimization methods can derive optimal solutions under specific constraints, they typically require complete system information and substantial computational resources, making it challenging to meet the demands of real-time decision-making. Furthermore, these conventional approaches face the dual challenges of the curse of dimensionality and local optima when dealing with high-dimensional continuous action spaces and non-convex optimization problems.

Machine learning algorithms enable systems to learn from accumulated data. Through iterative processes that imitate human learning, these systems continuously improve their knowledge and capabilities [18]. This capability allows UAVs to learn autonomously within their operational environments, offering a high degree of autonomy and strong real-time performance. Reference [19] introduced a Q-learning-based method for rapid UAV trajectory planning in unknown environments. This approach uses the received signal strength as a dynamic reward signal to guide the UAV toward the signal source. Reference [20] developed a UAV trajectory optimization algorithm based on the double deep Q-network (DDQN) to address the challenge of limited onboard computational power. However, in UAV trajectory optimization, the dynamic and complex flight environment often involves high-dimensional raw data. Using these data directly as the state input makes learning difficult and ultimately leads to the curse of dimensionality [21]. Deep reinforcement learning (DRL) [22] leverages the feature extraction capability of deep neural networks to process environmental state information layer by layer, resulting in stronger representation and generalization ability. By learning from interactions with the environment, DRL can learn effective policies in complex scenarios without requiring an explicit system model. This makes DRL a promising approach for achieving scalable, low-latency, and highly reliable spectrum decision-making [23]. Reference [24] proposed a reinforcement learning algorithm based on a dueling (competitive) architecture for real-time path planning of uncrewed vehicles. The algorithm employs a DDQN structure that decomposes the Q-value function into a state-value function and an action advantage function. Although this design improves value estimation accuracy, value-based methods are generally limited to discrete action spaces, making them less suitable for continuous UAV trajectory control problems. In UAV trajectory optimization and communication resource allocation, the control variables typically form a high-dimensional continuous action space. Deterministic policy gradient methods such as the deep deterministic policy gradient (DDPG) can address continuous control problems. However, they often suffer from training instability and sensitivity to hyperparameters, particularly in dynamic environments with stochastic user mobility.

In the UAV trajectory optimization and resource allocation field, Actor–Critic-based DRL has emerged as a research hotspot in recent years. The Actor–Critic algorithm [25] incorporates a value function to evaluate the policy function, enabling single-step updates for the policy learning method and thereby improving learning efficiency. Reference [26] applied trust region policy optimization (TRPO) to deep deterministic policy gradient to mitigate gradient instability. While this approach improves training stability, it requires second-order optimization and Hessian approximation, resulting in relatively high computational overhead. The proximal policy optimization (PPO) algorithm [27] simplifies the TRPO framework by introducing a clipped surrogate objective to constrain policy updates, thereby achieving improved training stability with lower computational complexity. However, conventional PPO implementations typically employ separate feature extraction networks for the actor and critic, which may lead to redundant representation learning and inefficient feature utilization when processing complex environmental states.

In addition to the algorithmic limitations discussed above, several technical challenges remain unresolved in the joint optimization of UAV trajectory and communication spectrum resource allocation for emergency communication services. Firstly, air-to-ground channel modelling is complex. Accurate channel modelling must account for the probabilistic switching between line-of-sight (LoS) and non-line-of-sight (NLoS) propagation conditions, distance-dependent path loss, and environmental factors, which makes it a foundational challenge for system design. Secondly, the random walk of user locations, the bursty nature of service demands, and the uncertainty of service durations demand a system capable of rapid response and adaptive adjustment. Finally, the continuous control of UAV trajectories requires efficient learning strategies: the algorithm must explore sufficiently to find good paths while avoiding policy instability.

Building upon the aforementioned research landscape and motivated by the need to address the multifaceted challenges in emergency communication, this paper capitalizes on the high training stability, strong sample efficiency, and implementation simplicity of the PPO algorithm. To this end, we propose the shared feature extraction (SFE)-enhanced PPO for trajectory optimization and resource allocation (SPOR) algorithm for the joint optimization of UAV trajectory and communication resources. In other words, SPOR is intended to address not only the stability–complexity trade-off in continuous-control learning, but also the representation redundancy caused by separately learning policy and value features from the same coupled emergency-network state. The main contributions of this work are summarized as follows:

A user service model is established where service demand arrivals follow a Poisson process and their durations obey a uniform distribution. This characterization effectively captures the bursty and time-varying nature of emergency communication traffic. For user mobility, a model based on the Maxwell–Boltzmann distribution is adopted. User speeds follow a two-dimensional Maxwell–Boltzmann distribution, with random movement directions incorporating a boundary reflection mechanism. This setup provides a tractable stochastic approximation for user mobility in emergency communication scenarios.

A UAV trajectory optimization and resource allocation algorithm is designed based on the Actor–Critic architecture. It leverages an SFE layer to reduce the computational complexity of the neural network. Furthermore, a comprehensive reward function integrating multi-dimensional performance metrics is constructed. This reward function combines data transmission rate rewards, service ratio rewards, and a distance penalty, thereby mitigating potential performance bias from single-objective optimization.

Comprehensive simulations are conducted to evaluate the performance of the proposed algorithm from the dimensions of user scale and service demand. The results demonstrate that our method outperforms the benchmark algorithms in key performance metrics, including training convergence speed, emergency communication service ratio, and average service distance.

The remainder of this paper is organized as follows. Section 2 introduces the system model, comprising the user mobility and service model and the UAV mobility and communication model. Section 3 performs problem transformation for UAV trajectory optimization and resource allocation, and presents the SPOR algorithm. Section 4 presents the simulation results. Finally, Section 5 concludes the paper.

2. System Model

The wireless communication system assisted by a UAV in a disaster area is shown in Figure 1. One UAV equipped with a radio frequency module serves as an aerial base station, providing radio frequency communication services to mobile ground users. The mobile users are indexed by $j$, and their service demands follow the Poisson model. The symbols and definitions of the relevant parameters are listed in Table 1.

2.1. User Mobility Model and Service Model

Consider the random motion characteristics of mobile users in disaster areas on a two-dimensional plane. In time slot $t$, the position coordinates and velocity vector of user $j$ are recorded as $(\mathbf{p}_j(t), \mathbf{v}_j(t))$, where $\mathbf{p}_j(t) = (x_j(t), y_j(t))$ is the position vector of user $j$ and $\mathbf{v}_j(t) = (v_{j,x}(t), v_{j,y}(t))$ is the velocity vector of user $j$. The user location update formula is

$$\mathbf{p}_j(t+1) = \mathbf{p}_j(t) + \mathbf{v}_j(t)\,\Delta t, \tag{1}$$

where $\Delta t$ denotes the time slot length. Due to the inherently random nature of user mobility, the velocity distribution of users shares similar statistical properties with the motion of gas molecules described by kinetic theory. This similarity to the Maxwell–Boltzmann velocity distribution was initially observed in experimental studies reported in [28]. Building on this observation, later works, such as [29], approximate individual movement speeds using a two-dimensional Maxwell–Boltzmann distribution to characterize mobility and contact behavior at the population level. Based on these insights, we adopt a probabilistic mobility model inspired by the Maxwell–Boltzmann distribution to describe user motion. The model provides a statistically grounded approximation of random mobility under disrupted emergency conditions. The probability density function for any velocity component

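$v \in \{v_x, v_y\}$ of a user is given by

$$f(v) = \frac{1}{\sqrt{\pi}\, v_{\mathrm{rms}}} \exp\!\left(-\frac{v^2}{v_{\mathrm{rms}}^2}\right), \tag{2}$$

where $v_{\mathrm{rms}}$ is the user’s root mean square speed, and the velocity components $v_x$ and $v_y$ are independent and identically distributed. The joint probability density function of the user velocity vector is given by

$$f(v_x, v_y) = \frac{1}{\pi v_{\mathrm{rms}}^2} \exp\!\left(-\frac{v_x^2 + v_y^2}{v_{\mathrm{rms}}^2}\right). \tag{3}$$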

In addition, this paper uses a binary indicator variable to characterize the dynamic service demands of ground users in UAV communication networks. The arrival process of total service demands in the system can be modeled as a discrete-time Poisson process [30]. All slots have the same length. Assuming a quasi-static environment, the conditions within each time slot $t$ remain unchanged, and users request spectrum resources at the beginning of each time slot $t$. As shown in Figure 2, for each user $j$ in the system, the service demand status is defined as $d_j(t) \in \{0, 1\}$. When $d_j(t) = 1$, user $j$ has a service demand in time slot $t$; when $d_j(t) = 0$, user $j$ has no service demand in slot $t$. In each time slot $t$, users with an ongoing service do not generate new services until the current one is finished, and users without service demands generate a new service demand with probability $p_{\mathrm{new}}$. The duration of services follows a continuous uniform distribution $U(T_{\min}, T_{\max})$, where $T_{\min}$ and $T_{\max}$ represent the minimum and maximum service durations in time slots, respectively.
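The user model of this subsection can be illustrated with a minimal NumPy sketch. It is not the authors' simulator: the function names (`sample_velocities`, `step_users`, `step_demands`), the square area, and all numeric values are hypothetical. It further assumes that the root mean square speed refers to the two-dimensional speed (so each Gaussian velocity component has standard deviation $v_{\mathrm{rms}}/\sqrt{2}$) and that service durations are rounded to whole slots.

```python
import numpy as np

def sample_velocities(rng, n_users, v_rms):
    """Draw 2D velocities with i.i.d. zero-mean Gaussian components.

    Assumption: v_rms is the RMS of the 2D speed, so each component has
    standard deviation v_rms / sqrt(2).
    """
    sigma = v_rms / np.sqrt(2.0)
    return rng.normal(0.0, sigma, size=(n_users, 2))

def step_users(pos, vel, dt, side):
    """Advance positions by one slot and reflect at the walls of [0, side]^2."""
    new_pos = pos + vel * dt
    low, high = new_pos < 0.0, new_pos > side
    new_pos = np.where(low, -new_pos, new_pos)
    new_pos = np.where(high, 2.0 * side - new_pos, new_pos)
    new_vel = np.where(low | high, -vel, vel)  # flip the reflected component
    # Clipping guards against steps longer than the area side.
    return np.clip(new_pos, 0.0, side), new_vel

def step_demands(rng, remaining, p_new, t_min, t_max):
    """Update the remaining service slots of every user.

    Busy users count down; idle users start a new demand with probability
    p_new and an integer duration drawn uniformly from [t_min, t_max].
    """
    remaining = np.maximum(remaining - 1, 0)
    idle = remaining == 0
    arrivals = idle & (rng.random(remaining.shape) < p_new)
    durations = rng.integers(t_min, t_max + 1, size=remaining.shape)
    return np.where(arrivals, durations, remaining)

# Illustrative run with hypothetical parameters.
rng = np.random.default_rng(0)
pos = rng.uniform(0.0, 500.0, size=(20, 2))
vel = sample_velocities(rng, 20, v_rms=1.5)
remaining = np.zeros(20, dtype=int)
for _ in range(100):
    pos, vel = step_users(pos, vel, dt=1.0, side=500.0)
    remaining = step_demands(rng, remaining, p_new=0.1, t_min=5, t_max=20)
demand = (remaining > 0).astype(int)  # binary demand indicator per user
```

Tracking the remaining duration per user, rather than only the binary indicator, is one simple way to ensure that a busy user cannot generate a new demand before the current one ends.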

2.2. UAV Mobility Model and Communication Model

The UAV operates as an aerial base station with controllable 3D mobility. In time slot $t$, the UAV position is denoted as $\mathbf{q}(t) = (x(t), y(t), h(t))$, where $x(t)$ and $y(t)$ represent the horizontal coordinates, and $h(t)$ represents the flight altitude.

The motion of the UAV follows a first-order kinematic model. The velocity vector is defined as $\mathbf{v}(t) = (v_x(t), v_y(t), v_z(t))$, where $v_x(t)$, $v_y(t)$, and $v_z(t)$ represent the UAV’s velocity components along the three coordinate axes, respectively. The UAV’s position is updated according to

$$\mathbf{q}(t+1) = \mathbf{q}(t) + \mathbf{v}(t)\,\Delta t. \tag{4}$$

To ensure flight safety and mission area coverage, the UAV’s trajectory is subject to spatial boundary constraints: horizontal position constraints $x_{\min} \le x(t) \le x_{\max}$ and $y_{\min} \le y(t) \le y_{\max}$, and an altitude constraint $h_{\min} \le h(t) \le h_{\max}$. These constraints enforce operation within the designated operational airspace while maintaining a suitable flight altitude for adequate communication coverage.

The radio frequency channel between the UAV and ground users adopts a probabilistic LoS channel model. The path loss between the UAV and user

j under LoS and NLoS conditions can be respectively expressed as [

31]

where

and

denote the path loss at the reference distance

,

is the distance between the UAV and user

j, and

and

represent the path loss exponents for LoS and NLoS transmissions, respectively.

G is a Gaussian random variable modeling the random shadowing effect, with its fluctuation measured by the standard deviation. The LoS probability depends on the elevation angle

, where

h denotes the UAV’s altitude. The LoS and NLoS probabilities are

where

and

are environment-dependent parameters. Therefore, the average path loss is calculated as follows

The system employs the signal-to-noise ratio (SNR) as the key physical-layer metric for the transmission quality and reliability of communication links. The SNR is defined as the ratio of the received useful signal power to the noise power at the receiver. The SNR of the radio frequency channel between the UAV and user $j$ is given by

$$\gamma_j(t) = \frac{P_{\mathrm{t}} \, 10^{-\overline{PL}_j(t)/10}}{\sigma^2},$$

where $P_{\mathrm{t}}$ is the UAV transmission power, $\overline{PL}_j(t)$ is the average path loss in dB, and $\sigma^2$ is the noise power. In time slot $t$, the coverage of a ground user by the UAV is determined by an SNR threshold criterion. The coverage indicator function $c_j(t)$ is defined as:

$$c_j(t) = \begin{cases} 1, & \gamma_j(t) \ge \gamma_{\mathrm{th}}, \\ 0, & \text{otherwise}. \end{cases}$$

When the SNR of the signal received by the user is at least the threshold $\gamma_{\mathrm{th}}$, the user is deemed to be within the UAV’s effective coverage range. Conversely, if the user’s SNR falls below $\gamma_{\mathrm{th}}$, the user is considered disconnected, indicating a failed communication connection [32]. Therefore, the SNR constraint is $\gamma_j(t) \ge \gamma_{\mathrm{th}}$.
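To make the chain from UAV position to coverage indicator concrete, the following sketch computes an average path loss, the resulting SNR, and the coverage decision. It rests on several assumptions that the text above does not confirm: a log-distance path loss in dB, the widely used sigmoid LoS probability with environment parameters `a` and `b`, and zero-mean shadowing that is omitted from the average. All function names and numeric values are hypothetical.

```python
import numpy as np

def average_path_loss_db(uav_pos, user_xy, a=9.61, b=0.16,
                         pl0_los=30.0, pl0_nlos=40.0,
                         alpha_los=2.0, alpha_nlos=3.0, d0=1.0):
    """LoS-probability-weighted path loss in dB for each user.

    Assumed forms (not taken from the paper):
      PL = PL0 + 10 * alpha * log10(d / d0)
      P_LoS = 1 / (1 + a * exp(-b * (theta_deg - a)))
    """
    horiz = np.linalg.norm(user_xy - uav_pos[:2], axis=1)
    h = uav_pos[2]
    dist = np.sqrt(horiz ** 2 + h ** 2)
    theta_deg = np.degrees(np.arctan2(h, horiz))  # elevation angle
    p_los = 1.0 / (1.0 + a * np.exp(-b * (theta_deg - a)))
    pl_los = pl0_los + 10.0 * alpha_los * np.log10(dist / d0)
    pl_nlos = pl0_nlos + 10.0 * alpha_nlos * np.log10(dist / d0)
    return p_los * pl_los + (1.0 - p_los) * pl_nlos

def snr_and_coverage(path_loss_db, tx_power_dbm, noise_dbm, snr_th_db):
    """SNR in dB and the binary coverage indicator (1 if SNR >= threshold)."""
    snr_db = tx_power_dbm - path_loss_db - noise_dbm
    return snr_db, (snr_db >= snr_th_db).astype(int)

# One user directly below the UAV, one user 2 km away (hypothetical values).
uav = np.array([0.0, 0.0, 100.0])
users = np.array([[0.0, 0.0], [2000.0, 0.0]])
pl = average_path_loss_db(uav, users)
snr_db, covered = snr_and_coverage(pl, tx_power_dbm=30.0,
                                   noise_dbm=-100.0, snr_th_db=10.0)
```

With these illustrative parameters the nearby user is covered and the distant one is not, which is the behaviour that drives the UAV toward users with active demands.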

Considering the dynamic characteristics of users’ service demands, this paper defines the service user set

of time slot

t as the set of all users with service demands, represented as

;

is the set of users who meet both coverage conditions and service demands, represented as

. The set of effective users successfully connected to the UAV in time slot

t is defined as

where

represents whether the UAV serves the user

j in time slot

t. Specifically, at

, the UAV serves user

j, and at

, the UAV does not serve user

j. To quantify the effectiveness of UAV emergency communication services, this paper defines the service ratio as the key performance indicator. The service ratio reflects the UAV’s ability to successfully provide communication services to users with service demands within a specific time slot. In time slot

t, the service ratio of the system is defined as

In UAV-assisted emergency communication systems, efficient use of spectrum resources is essential to service quality. This paper mainly considers the downlink data transmission from the UAV to ground users. Functioning as an aerial base station, the UAV is tasked with the efficient and reliable distribution of critical information, including high-definition images, real-time videos, and environmental sensor data gathered over disaster areas, to the ground users. The transmission scheme is based on orthogonal frequency division multiple access (OFDMA), allowing the UAV to allocate bandwidth to users with service demands in time slot $t$. We define the bandwidth allocation in time slot $t$ as $\mathbf{b}(t) = \{b_j(t)\}$, where $b_j(t) \in [0, 1]$ represents the normalized bandwidth proportion allocated to user $j$ in time slot $t$. The bandwidth allocated to user $j$ is

$$B_j(t) = b_j(t)\, B,$$

where $B$ is the total channel bandwidth of the UAV. Therefore, according to the Shannon formula, the data transmission rate of user $j$ can be expressed as

$$R_j(t) = B_j(t) \log_2\!\left(1 + \gamma_j(t)\right),$$

where $\gamma_j(t)$ is the SNR of user $j$ in time slot $t$.

To enable online decision-making, we assume that a lightweight feedback link is available between ground users and the UAV. Through this control link, each user reports its location update and service-demand indicator to the UAV, either periodically or on an event-triggered basis. Therefore, at each time slot, the UAV can obtain the user positions and demand states required for state construction and subsequently perform trajectory control, user association, and bandwidth allocation. Since this paper focuses on downlink emergency service performance, the uplink signaling overhead is assumed to be negligible and is not explicitly optimized. Moreover, the communication process is modeled under an OFDMA-based downlink abstraction.
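As a small worked example of the OFDMA abstraction, the sketch below evaluates the Shannon rate of each user from its normalized bandwidth share and its SNR. The function name `downlink_rates` and the numbers are hypothetical, and the SNR is assumed to be supplied in dB.

```python
import numpy as np

def downlink_rates(share, snr_db, total_bw_hz):
    """Per-user Shannon rate in bit/s: share * B * log2(1 + SNR)."""
    share = np.asarray(share, dtype=float)
    if share.sum() > 1.0 + 1e-9:
        raise ValueError("bandwidth shares exceed the total bandwidth")
    snr_lin = 10.0 ** (np.asarray(snr_db, dtype=float) / 10.0)
    return share * total_bw_hz * np.log2(1.0 + snr_lin)

# Three served users sharing 1 MHz (illustrative values).
rates = downlink_rates([0.5, 0.3, 0.2], [0.0, 10.0, 20.0], 1e6)
```

At 0 dB the spectral efficiency is exactly 1 bit/s/Hz, so the first user, holding half of the 1 MHz band, obtains 0.5 Mbit/s.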

2.3. Problem Formulation

Considering the constraints of UAV trajectory control and communication system bandwidth, the system optimizes the UAV flight trajectory based on user locations and dynamic service demands, aiming to serve as many users with service demands as possible. The optimization problem is formulated to maximize the data transmission rate of UAV-assisted emergency communication services. A binary variable

indicates whether user

j has a service demand in time slot

t:

if user

j has a service demand in time slot

t, and

otherwise. Another binary variable

indicates whether the UAV is connected to user

j in time slot

t:

if the UAV serves user

j, and

otherwise. The variable

represents the normalized bandwidth proportion allocated to user

j in time slot

t. The optimization problem constructed in this paper is as follows

where constraint

indicates whether user

j has service demands in time slot

t. Constraint

indicates whether user

j is covered in time slot

t. Constraint

indicates whether the UAV serves user

j in time slot

t. Constraint

is a demand-aware service constraint, and the UAV is allowed to connect users only at

.

is an SNR constraint.

is the total bandwidth constraint, ensuring that the total bandwidth allocated to all users does not exceed the total system bandwidth. In addition, constraints

,

, and

are flight range constraints for the UAV.

3. UAV Trajectory Optimization and Resource Allocation Algorithm

The UAV faces significant challenges in trajectory optimization and resource allocation in dynamic environments. In post-disaster communication scenarios, the random movement of ground users leads to a continuously changing spatial distribution, making it difficult for the UAV to maintain stable service coverage. Additionally, the bursty and unpredictable nature of user service demands necessitates resource allocation strategies that adapt in real time. Consequently, the UAV must rapidly adjust its flight trajectory and optimize resource allocation during operation to adapt to environmental dynamics and evolving user demands. Limited in response speed and adaptability, traditional optimization algorithms struggle to meet the demands of UAV trajectory optimization and resource allocation in such highly dynamic settings.

3.1. Problem Transformation

Considering the above challenges, this paper models the dynamic optimization problem as a Markov decision process (MDP). In the emergency communication scenario, the UAV is the agent that executes a flight policy based on local observations. The agent’s objective is to maximize communication service quality by optimizing its trajectory, guided by user locations and their service demands. Specifically, the UAV inputs its observations into a policy function approximated by a neural network. The network outputs an action, and the environment returns a reward once the action has been executed. The state space, action space, state transition probability, and reward function of the MDP in time slot $t$ are denoted by $S$, $A$, $P$, and $R$, respectively. The MDP elements are defined as follows.

State $s_t$: The system state includes the position of the UAV, the positions of mobile users, and the status of service demands. Therefore, the state in time slot $t$ can be defined as

$$s_t = \left\{ \mathbf{q}(t),\ \{\mathbf{p}_j(t)\},\ \{d_j(t)\} \right\},$$

where $\mathbf{q}(t)$ represents the position of the UAV in time slot $t$, $\mathbf{p}_j(t)$ indicates the geographical location of user $j$ in time slot $t$, and $d_j(t)$ indicates the service demand status of user $j$.

Action $a_t$: The action corresponds to the decision made by the UAV agent and determines the UAV’s behavior. The UAV agent needs to jointly make decisions on trajectory control, service connection, and bandwidth allocation. In time slot $t$, the action contains three coupled decision variables: $a_t = \{\mathbf{u}(t), \boldsymbol{\kappa}(t), \mathbf{b}(t)\}$, where $\mathbf{u}(t) = (u_x(t), u_y(t), u_z(t))$ is the normalized speed control vector. Each component represents the normalized speed in the corresponding direction, and the actual flight speed is obtained through the linear mapping $\mathbf{v}(t) = v_{\max}\, \mathbf{u}(t)$, where $v_{\max}$ represents the maximum flight speed of the UAV. Here, $\kappa_j(t) \in \{0, 1\}$ represents whether the UAV serves user $j$ in time slot $t$, and $b_j(t)$ represents the bandwidth proportion allocated to user $j$ in time slot $t$. In our implementation, the actor outputs a continuous latent action vector under a Gaussian policy, which is then mapped to the executable hybrid action. Specifically, the velocity-related outputs are linearly scaled to the physical UAV velocity range, the user-association-related outputs are converted into discrete service decisions through threshold-based binarization with feasibility constraints, and the bandwidth-allocation-related outputs are normalized to satisfy the bandwidth constraint.

In the considered emergency communication scenario, these action components are strongly coupled. UAV trajectory control affects user accessibility, service distance, and channel conditions, while bandwidth allocation determines how the available communication resources are distributed among the currently served users.
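The mapping from a continuous latent vector to the executable hybrid action can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: the latent layout (three velocity entries, then one association entry and one bandwidth entry per user), the clipping of the velocity outputs to $[-1, 1]$, the zero threshold, the interpretation of feasibility as "has a demand and is covered", and the softmax-style bandwidth normalization are all hypothetical choices, as is the name `map_action`.

```python
import numpy as np

def map_action(latent, demand, covered, v_max, threshold=0.0):
    """Convert a latent vector into (velocity, association, bandwidth share).

    Assumed latent layout: [3 velocity | n association | n bandwidth].
    """
    latent = np.asarray(latent, dtype=float)
    n = len(demand)
    vel_raw = latent[:3]
    assoc_raw = latent[3:3 + n]
    bw_raw = latent[3 + n:3 + 2 * n]

    # Velocity: clip to the normalized range, then scale linearly by v_max.
    velocity = np.clip(vel_raw, -1.0, 1.0) * v_max

    # Association: threshold-based binarization, restricted to feasible users
    # (here: users that have a demand and are within coverage).
    feasible = (np.asarray(demand) == 1) & (np.asarray(covered) == 1)
    serve = ((assoc_raw > threshold) & feasible).astype(int)

    # Bandwidth: positive weights for served users, normalized to sum to one.
    weights = np.exp(bw_raw - bw_raw.max()) * serve
    total = weights.sum()
    share = weights / total if total > 0 else np.zeros(n)
    return velocity, serve, share

# Three users; user 1 has no demand, user 2 is rejected by the threshold.
latent = [2.0, -0.5, 0.0, 1.0, 1.0, -1.0, 0.0, 0.0, 0.0]
velocity, serve, share = map_action(latent, demand=[1, 0, 1],
                                    covered=[1, 1, 1], v_max=20.0)
```

Because unserved users receive zero weight before normalization, the bandwidth constraint holds by construction and no bandwidth is wasted on users without a connection.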

State transition probability $P$: The state transition is determined by the deterministic UAV dynamics model, the stochastic user mobility model, and the probability distribution of user service demands. The position of user $j$ is updated via Equation (1), with its velocity distributed according to (3). The UAV’s position is updated by (4). The service demand status $d_j(t)$ is updated according to the Poisson arrival process, with idle users generating new demands with probability $p_{\mathrm{new}}$.

Reward

: In the context of UAV communication in the disaster area environment, this paper aims to serve as many users as possible with service demands, and maximize the data transmission rate of UAV emergency communication services. Given the service demand, the reward of UAV agent in time slot

t is expressed as

in which

,

, and

are weight coefficients, and

,

,

,

. The coefficients

,

and

are introduced as task-preference parameters in the multi-objective reward to balance the relative importance of transmission rate, service ratio, and distance penalty in the overall objective. Since the three reward components are normalized to comparable ranges, these coefficients are empirically chosen to reflect the desired trade-off among communication quality, service coverage, and spatial proximity to users with active demands. The data transmission rate reward

is

The service rate reward

is

The distance penalty

is

where

is the average horizontal distance from the UAV to all users with service demands, and

is the maximum communication distance of the UAV. In this paper, the average service distance is used as an efficiency-related metric to characterize how closely the UAV can approach users with active service demands during service provision. A smaller value indicates that the UAV can serve demanding users with better spatial proximity, which is generally beneficial for reducing path loss and improving communication efficiency.

3.2. SPOR Algorithm for UAV Trajectory Optimization and Resource Allocation

To solve the MDP formulated above, we propose the SPOR algorithm, a policy-gradient-based reinforcement learning method that balances training stability and sample efficiency by constraining the magnitude of policy updates.

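The objective of the policy gradient is to maximize the expected cumulative reward

$$J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\!\left[\sum_{t} \gamma^{t} r_t\right],$$

where $\theta$ denotes the parameters of the policy network, $\tau$ is a trajectory sampled from the policy $\pi_\theta$, and $\gamma$ is the discount factor. To reduce the variance of the gradient estimate, we introduce the advantage function

$$A(s_t, a_t) = Q(s_t, a_t) - V(s_t),$$

where $Q(s_t, a_t)$ and $V(s_t)$ are the state-action value function and the state-value function, respectively. To balance bias and variance, we adopt the generalized advantage estimation (GAE) technique

$$\hat{A}_t = \sum_{l=0}^{\infty} (\gamma\lambda)^{l}\, \delta_{t+l}, \tag{24}$$

where $\delta_t = r_t + \gamma V(s_{t+1}) - V(s_t)$ is the temporal difference (TD) error, and $\lambda$ is the GAE parameter controlling the bias–variance trade-off.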
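The GAE sum is usually evaluated with a backward recursion over a finite rollout. The sketch below shows that recursion for a single trajectory segment without episode terminations; the function name `gae` and the default values of `gamma` and `lam` are illustrative assumptions.

```python
import numpy as np

def gae(rewards, values, last_value, gamma=0.99, lam=0.95):
    """Generalized advantage estimation for one rollout segment.

    rewards[t] and values[t] = V(s_t) for t = 0..T-1; last_value = V(s_T)
    bootstraps the tail. Returns the advantages and the value targets.
    """
    T = len(rewards)
    adv = np.zeros(T)
    next_value, running = last_value, 0.0
    for t in reversed(range(T)):
        delta = rewards[t] + gamma * next_value - values[t]  # TD error
        running = delta + gamma * lam * running              # discounted sum
        adv[t] = running
        next_value = values[t]
    returns = adv + np.asarray(values, dtype=float)
    return adv, returns

# Sanity check with easy numbers: all TD errors equal 1.
adv, returns = gae([1.0, 1.0, 1.0], [0.0, 0.0, 0.0], 0.0, gamma=0.5, lam=1.0)
# adv is [1.75, 1.5, 1.0]
```

Setting `lam=0` reduces the estimator to the one-step TD error (low variance, higher bias), while `lam=1` recovers the discounted Monte Carlo return minus the baseline.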

The SPOR algorithm limits the magnitude of updates by clipping the policy ratio, which is defined as

$$\rho_t(\theta) = \frac{\pi_\theta(a_t \mid s_t)}{\pi_{\theta_{\mathrm{old}}}(a_t \mid s_t)},$$

where $\theta_{\mathrm{old}}$ denotes the parameters of the behavior policy used to collect the trajectories.

The SPOR algorithm adopts an Actor–Critic architecture with an SFE module. As illustrated in

Figure 3, the Actor–Critic network consists of three core components: an SFE layer, an Actor network, and a Critic network. The SFE module in this work is introduced as a practical shared representation module for the considered joint UAV trajectory-control and resource-allocation task, where the system state contains strongly coupled mobility, service-demand, user-association, and bandwidth-related information. By allowing both networks to build upon the same encoded state representation, the model is intended to promote feature reuse between policy learning and value estimation, and may reduce redundant processing in practice.

Specifically, the SFE layer is responsible for mapping the raw state input into higher-order feature representations for use by both the actor and critic networks. Its structure includes two fully connected layers $\mathbf{h}_1 = f(\mathbf{W}_1 s_t + \mathbf{b}_1)$ and $\mathbf{h}_2 = f(\mathbf{W}_2 \mathbf{h}_1 + \mathbf{b}_2)$, where $\mathbf{W}_1$ and $\mathbf{W}_2$ are weight matrices, $f(\cdot)$ is a nonlinear activation function, $\mathbf{h}_1, \mathbf{h}_2 \in \mathbb{R}^{H}$, and $H$ denotes the hidden layer dimension. By sharing the underlying feature extractor, the network reduces computational redundancy and ensures that both the policy and value functions are based on the same state representation, improving training efficiency and consistency. The Actor network selects actions according to the policy, while the Critic network evaluates the value of the chosen actions. This design facilitates efficient and stable policy learning by sharing the underlying state features.
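A forward pass through a shared two-layer feature extractor with separate actor and critic heads might look like the sketch below. It only illustrates the wiring: the class name `SharedActorCritic`, the `tanh` activation, the hidden width, and the initialization are assumptions, and no training logic is included.

```python
import numpy as np

class SharedActorCritic:
    """Two shared fully connected layers feeding an actor and a critic head."""

    def __init__(self, state_dim, action_dim, hidden=64, seed=0):
        rng = np.random.default_rng(seed)

        def init(m, n):
            # Scaled Gaussian initialization (an illustrative choice).
            return rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))

        self.W1, self.b1 = init(state_dim, hidden), np.zeros(hidden)
        self.W2, self.b2 = init(hidden, hidden), np.zeros(hidden)
        self.Wa, self.ba = init(hidden, action_dim), np.zeros(action_dim)
        self.Wc, self.bc = init(hidden, 1), np.zeros(1)
        # State-independent log standard deviation of the Gaussian policy.
        self.log_std = np.zeros(action_dim)

    def features(self, s):
        """Shared feature extractor: state -> hidden representation."""
        h1 = np.tanh(s @ self.W1 + self.b1)
        return np.tanh(h1 @ self.W2 + self.b2)

    def forward(self, s):
        """Return the policy mean, policy std, and state value."""
        z = self.features(s)  # computed once, used by both heads
        mean = z @ self.Wa + self.ba
        value = (z @ self.Wc + self.bc)[..., 0]
        return mean, np.exp(self.log_std), value

net = SharedActorCritic(state_dim=10, action_dim=4)
mean, std, value = net.forward(np.zeros((5, 10)))
```

The shared features are computed once per state and reused by both heads, which is where the saving relative to two separate extractors comes from; during training, gradients from both losses would flow into `W1` and `W2`.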

The policy of the Actor network is modeled as a Gaussian distribution over a continuous latent action space:

$$\pi_\theta(\tilde{a}_t \mid s_t) = \mathcal{N}\!\left(\boldsymbol{\mu}_\theta(s_t),\ \mathrm{diag}(\boldsymbol{\sigma}^2)\right),$$

where $\boldsymbol{\mu}_\theta(s_t) \in \mathbb{R}^{D}$ is the mean of the latent action and $D$ is the dimensionality of the action space, while $\boldsymbol{\sigma} \in \mathbb{R}^{D}$ is a state-independent standard deviation vector. The sampled latent action $\tilde{a}_t$ is then converted into the final hybrid action through deterministic post-processing. The latent action vector is partitioned into three parts corresponding to UAV velocity control, user association, and bandwidth allocation. The velocity-related outputs are linearly scaled to the physical UAV velocity range, the user-association-related outputs are converted into discrete service decisions through threshold-based binarization with feasibility constraints, and the bandwidth-allocation-related outputs are normalized to satisfy the bandwidth allocation constraint.

The Critic network, parameterized by $\phi$, estimates the state-value function $V_\phi(s_t)$. It serves as an approximation of the value function $V^{\pi}(s_t)$ and is used to compute the TD error $\delta_t$ and the generalized advantage estimator $\hat{A}_t$ in (24) to guide the Actor in updating the policy. The Critic is trained by minimizing the TD-error-based value loss, providing a low-variance policy gradient signal that accelerates convergence and improves stability.

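The clipped surrogate objective of the policy network is defined as

$$L^{\mathrm{CLIP}}(\theta) = \mathbb{E}_t\!\left[\min\!\left(\rho_t(\theta)\hat{A}_t,\ \mathrm{clip}\!\left(\rho_t(\theta),\, 1-\epsilon,\, 1+\epsilon\right)\hat{A}_t\right)\right],$$

where $\rho_t(\theta)$ is the policy ratio defined above, $\hat{A}_t$ is the estimated advantage, and $\epsilon$ is the clipping factor. This objective function ensures that the new policy does not deviate too far from the old policy.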
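The effect of clipping is easiest to see numerically. The sketch below evaluates the clipped surrogate for a batch of samples given log-probabilities under the new and old policies; the function name `clipped_surrogate` and the default `eps=0.2` are illustrative assumptions.

```python
import numpy as np

def clipped_surrogate(logp_new, logp_old, adv, eps=0.2):
    """Batch mean of min(ratio * A, clip(ratio, 1 - eps, 1 + eps) * A)."""
    ratio = np.exp(np.asarray(logp_new) - np.asarray(logp_old))
    adv = np.asarray(adv, dtype=float)
    unclipped = ratio * adv
    clipped = np.clip(ratio, 1.0 - eps, 1.0 + eps) * adv
    return np.mean(np.minimum(unclipped, clipped))

# Ratio 1.5 with a positive advantage: the gain is capped at 1.2 * A.
capped = clipped_surrogate([np.log(1.5)], [0.0], [1.0])
# Ratio 1.5 with a negative advantage: the penalty is not capped.
uncapped = clipped_surrogate([np.log(1.5)], [0.0], [-1.0])
```

The asymmetry is deliberate: the objective removes the incentive to push the ratio beyond the clipping range when doing so would look beneficial, but keeps the full penalty when the move makes things worse.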

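$L^{\mathrm{VF}}(\phi)$ is the value function loss, which is used to train the Critic network to approximate the true state-value function. The expression is

$$L^{\mathrm{VF}}(\phi) = \mathbb{E}_t\!\left[\left(V_\phi(s_t) - V_t^{\mathrm{targ}}\right)^2\right],$$

where $V_t^{\mathrm{targ}}$ is the value target. The total loss minimized by SPOR is

$$L(\theta, \phi) = -L^{\mathrm{CLIP}}(\theta) + c_1 L^{\mathrm{VF}}(\phi) - c_2\, \mathbb{E}_t\!\left[S[\pi_\theta](s_t)\right],$$

where $c_1$ and $c_2$ are loss coefficients, and $S[\pi_\theta](s_t)$ is an entropy regularization term given by

$$S[\pi_\theta](s_t) = -\int \pi_\theta(a \mid s_t) \ln \pi_\theta(a \mid s_t)\, \mathrm{d}a.$$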

To improve the stability of training, the advantage function is standardized as

$$\hat{A}_t \leftarrow \frac{\hat{A}_t - \mu_A}{\sigma_A + \xi},$$

where $\mu_A$ and $\sigma_A$ are, respectively, the mean and standard deviation of the advantage function within the batch, and $\xi$ is a small numerical stability constant.

In addition, this paper employs the stochastic gradient descent with warm restarts (SGDR) schedule to dynamically adjust the learning rate, thereby improving the training stability and convergence speed of the SPOR algorithm. The core idea is to decrease the learning rate from its initial value to a minimum value along a cosine curve within each cycle, and then reset it to its initial value at the start of the next cycle. The learning rate in cycle $i$ is updated as

$$\eta_t = \eta_{\min} + \frac{1}{2}\left(\eta_0 - \eta_{\min}\right)\left(1 + \cos\left(\frac{T_{\mathrm{cur}}}{T_i}\pi\right)\right),$$

where $\eta_t$ is the current learning rate, $\eta_0$ is the initial learning rate, $\eta_{\min}$ is the minimum learning rate, $T_{\mathrm{cur}}$ is the number of iterations elapsed in the current cycle, and $T_i$ is the total number of iterations in cycle $i$.
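The cosine schedule with warm restarts can be illustrated with the short sketch below. It assumes a fixed cycle length for every cycle, whereas SGDR also permits cycles that grow over time; the learning-rate values and the function names are placeholders rather than the settings used in this paper.

```python
import math


def sgdr_lr(t_cur, t_i, lr_init, lr_min):
    """Cosine-annealed learning rate after t_cur iterations of a cycle of length t_i."""
    return lr_min + 0.5 * (lr_init - lr_min) * (1.0 + math.cos(math.pi * t_cur / t_i))


def sgdr_schedule(n_steps, cycle_len, lr_init=3e-4, lr_min=1e-5):
    """Learning rates for n_steps iterations with a restart every cycle_len steps."""
    return [sgdr_lr(step % cycle_len, cycle_len, lr_init, lr_min)
            for step in range(n_steps)]


schedule = sgdr_schedule(n_steps=20, cycle_len=10, lr_init=1.0, lr_min=0.0)
```

Within each cycle the rate decays monotonically from `lr_init` towards `lr_min`, and at step 10 it jumps back to `lr_init`, which is the warm restart.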

Algorithm 1 outlines the training procedure for the proposed SPOR algorithm. In each episode, the current policy interacts with the environment to generate on-policy experiences, which are stored in the replay buffer. These experiences are then used to compute the GAE-based advantage function, which is normalized to ensure stable training. Subsequently, the shared feature extractor, actor, and critic networks are updated jointly for $K$ epochs, guided by the clipped surrogate objective, the value function loss, and the entropy regularization term. During the update, the gradients of both the actor and critic losses are backpropagated through the shared feature extractor, so its parameters are updated together with those of the actor and critic networks via gradient descent and all networks benefit from the same feature representation. After the parameter updates, the old policy parameters are replaced with the new ones, and the learning rate is updated for the next episode.
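The per-batch quantities that enter each update can be sketched as follows: the advantages are standardized within the batch, and the clipped objective, value loss, and entropy bonus are combined into one scalar to be minimized. This is an illustrative sketch only; the coefficients `c1` and `c2`, the use of the population standard deviation, and all function names are assumptions. The entropy uses the closed form for a diagonal Gaussian policy.

```python
import math
import statistics


def standardize(advantages, eps=1e-8):
    """Zero-mean, unit-variance advantages within one batch."""
    mean = statistics.fmean(advantages)
    std = statistics.pstdev(advantages)  # population std (assumption)
    return [(a - mean) / (std + eps) for a in advantages]


def value_loss(values, targets):
    """Mean squared error between critic outputs and value targets."""
    return sum((v - t) ** 2 for v, t in zip(values, targets)) / len(values)


def gaussian_entropy(std):
    """Entropy of a diagonal Gaussian with per-dimension standard deviations."""
    return sum(0.5 * math.log(2.0 * math.pi * math.e * s * s) for s in std)


def total_loss(clip_objective, v_loss, entropy, c1=0.5, c2=0.01):
    """Loss to minimize: maximize the clipped objective and the entropy,
    minimize the value error. c1 and c2 are placeholder coefficients."""
    return -clip_objective + c1 * v_loss - c2 * entropy


normalized = standardize([1.0, 2.0, 3.0])
loss = total_loss(clip_objective=0.2,
                  v_loss=value_loss([1.0, 2.0], [0.0, 0.0]),
                  entropy=1.0)
```

The stability constant `eps` keeps the standardization well defined even when every advantage in the batch is identical.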

Algorithm 1: SPOR Algorithm

4. Simulation and Results

In this section, we present the simulation scenario along with three baseline methods. The experimental results are then described and analyzed.

4.1. Parameter Setting

The simulation environment is set to a three-dimensional area of 2000 m × 2000 m × 200 m with 30 users, served by a single UAV. The UAV operates within an altitude range of 50 to 100 m, with a maximum speed of 15 m/s. Its transmission power is set to 0.5 W, and the total channel bandwidth $B$ is 10 MHz. The system noise power is configured to −105 dBm, while the SNR threshold is set at 15 dB. The service demand probability for each user is 0.5. The neural network architecture employed in the SPOR algorithm consists of an SFE layer as well as actor and critic networks. The SFE layer consists of two fully connected layers, each with 256 neurons. The Actor and Critic networks each contain a single hidden layer with 128 neurons. The simulation parameters used in this work, including the environment-dependent parameters, are summarized in Table 2, with some settings chosen based on [33,34].
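For reproducibility, the settings stated in this subsection can be collected in a single configuration object, as in the hedged sketch below. The numerical values are those given in the text; the class name, field names, and helper functions are hypothetical, and the environment-dependent parameters are left out because their values are not stated here. The helpers convert the logarithmic quantities to linear scale, which is the form usually needed when evaluating SNR.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SimConfig:
    """Simulation settings as stated in the text (field names are illustrative)."""
    area_m: tuple = (2000.0, 2000.0, 200.0)
    n_users: int = 30
    altitude_range_m: tuple = (50.0, 100.0)
    v_max_mps: float = 15.0
    tx_power_w: float = 0.5
    bandwidth_hz: float = 10e6
    noise_dbm: float = -105.0
    snr_threshold_db: float = 15.0
    demand_prob: float = 0.5
    sfe_hidden: int = 256
    head_hidden: int = 128


def dbm_to_watt(dbm):
    """Convert a power level in dBm to watts."""
    return 10.0 ** ((dbm - 30.0) / 10.0)


def db_to_linear(db):
    """Convert a ratio in dB to linear scale."""
    return 10.0 ** (db / 10.0)


cfg = SimConfig()
noise_w = dbm_to_watt(cfg.noise_dbm)
snr_min = db_to_linear(cfg.snr_threshold_db)
# Received power needed to meet the threshold, assuming the threshold is
# applied against the full stated noise power.
min_rx_power_w = noise_w * snr_min
```

Under that assumption, the required received power is −105 dBm + 15 dB = −90 dBm, that is, about 1 pW.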

The simulation environment was developed in Python 3.10 with the PyTorch 2.0 deep learning framework. All experiments were performed on a workstation running Windows 10, configured with an Intel i7 CPU, 32 GB RAM, and an NVIDIA RTX 3060 GPU. The UAV-enabled emergency communication scenario evolves in discrete time slots. In each slot, user locations are updated according to the Maxwell–Boltzmann mobility model, while service-demand states evolve based on the Poisson-arrival demand model. Given the observed system state, the UAV agent determines the trajectory control, user association, and bandwidth allocation actions, after which the environment computes the corresponding reward and transitions to the next state. Unless otherwise specified, each algorithm was trained for 3000 episodes, and all reported results were obtained under identical simulation settings and random initialization conditions to ensure fairness.

4.2. Performance Benchmark

To evaluate the proposed SPOR-based UAV trajectory optimization and resource allocation algorithm, three benchmark methods are considered: the PPO algorithm without the SFE layer, the deep deterministic policy gradient (DDPG) algorithm, and the fixed-position (FP) strategy. PPO serves as a direct reference for evaluating the contribution of the proposed improvements over the standard PPO framework, especially the shared feature extraction mechanism. The FP strategy is a simple reference baseline that highlights the importance of UAV mobility and adaptive trajectory control. DDPG is a representative off-policy DRL baseline for continuous control and provides a useful comparison with the on-policy, PPO-based SPOR framework. Together, these methods provide complementary comparisons from the perspectives of algorithmic improvement, mobility adaptation, and DRL learning mechanism, all under a unified task formulation.

(1) PPO: This baseline is implemented based on the standard PPO framework with the SFE module removed, so the actor and critic networks take the original observations directly as inputs. The observation space, action space, reward design, and training procedure are kept identical to those of the proposed SPOR method, so that the comparison isolates the effect of the feature extraction mechanism within the same learning framework and under matched task settings.

(2) DDPG: The DDPG algorithm is adopted as a deterministic policy gradient-based baseline. It consists of an Actor network that generates continuous actions and a Critic network that estimates the action–value function. To ensure a fair comparison, DDPG uses the same observation space, action space, constraints, and reward function as the proposed SPOR method and the PPO baseline. Moreover, target networks and an experience replay buffer are employed following the standard DDPG training procedure.

(3) FP: In the FP strategy, the UAV remains at a predetermined location throughout the entire episode, and no trajectory optimization is performed. This benchmark serves as a lower-bound reference, highlighting the performance gain achieved by enabling UAV mobility and trajectory control.

4.3. Performance Evaluation

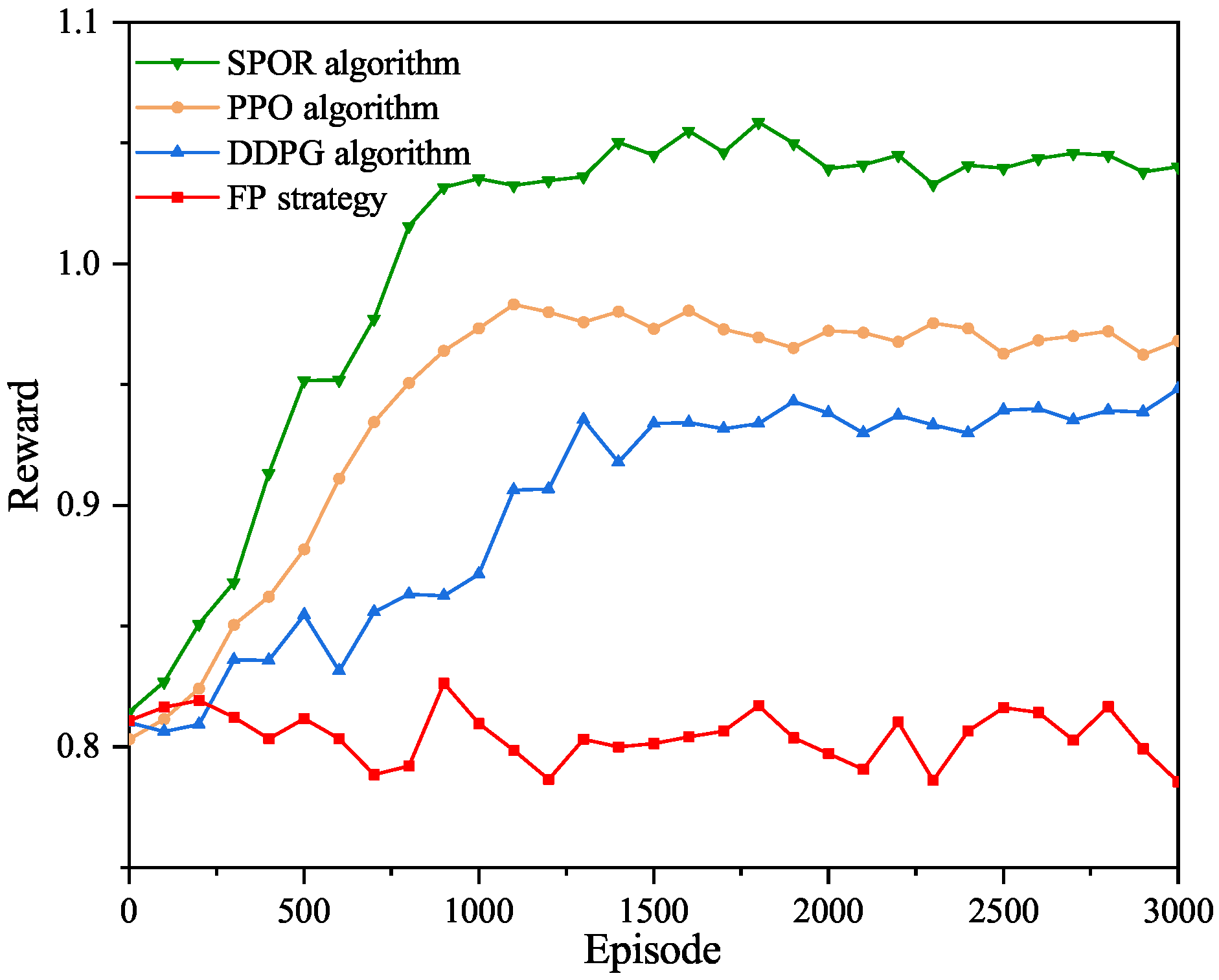

Figure 4 illustrates a comparison of training convergence rates under four different algorithms. In the same scenario, the UAV provides communication services to an identical number of users. As shown in the figure, the proposed SPOR algorithm achieves the fastest and most stable convergence, reaching a high reward level of approximately 1.04 after about 1000 training episodes. The PPO algorithm converges to a slightly lower reward level of around 0.97, while the DDPG algorithm stabilizes at approximately 0.93 after about 1500 episodes. In contrast, the FP strategy exhibits significantly inferior performance, with its reward remaining around 0.8 throughout the training process. These results demonstrate the superiority of the proposed SPOR algorithm in both convergence speed and achievable reward.

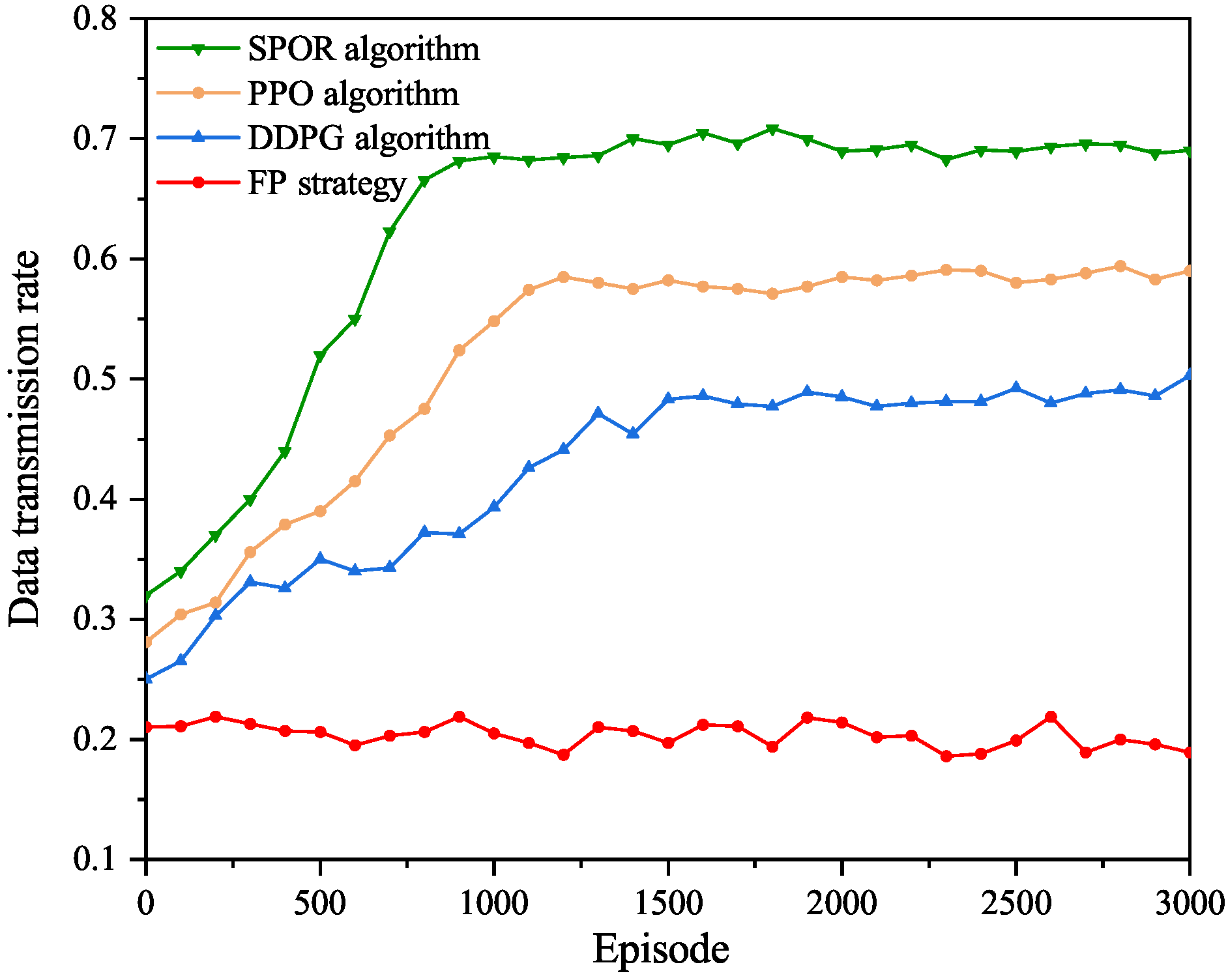

Figure 5 compares the data transmission rates achieved by four different algorithms. As shown in the figure, the proposed SPOR algorithm consistently outperforms the other approaches, converging to a stable data transmission rate of approximately 0.7 after around 1000 training episodes. The PPO algorithm achieves a lower stable rate of about 0.58, while the DDPG algorithm converges to approximately 0.47. The proposed algorithm achieves a data transmission rate improvement of over 48% compared with the DDPG baseline, and about 20% compared with PPO. In contrast, the FP strategy exhibits significantly inferior performance, with its data transmission rate remaining at a low level of around 0.2 throughout the training process. These results demonstrate the effectiveness of the proposed algorithm in improving data transmission performance.

Figure 6 illustrates the evolution of the service ratio over training episodes under different algorithms. As shown in the figure, the proposed SPOR algorithm consistently achieves the highest service ratio and converges to a stable level of approximately 0.82 after approximately 1200 training episodes, demonstrating its effectiveness in resource allocation and task scheduling. The PPO algorithm stabilizes at a lower service ratio of about 0.64, while the DDPG algorithm converges to approximately 0.55. The proposed algorithm achieves a service ratio improvement of over 49% compared with the DDPG baseline, and about 28% compared with PPO. In contrast, the FP strategy exhibits significantly inferior performance, with its service ratio remaining at a low level of around 0.28 throughout the training process.

Figure 7 depicts the performance of each algorithm in optimizing the average service distance between the UAV and users with service demands. As shown in the figure, the proposed SPOR algorithm demonstrates superior distance optimization capability, reducing the initial average distance of approximately 1000 m to around 650 m after convergence. The PPO algorithm converges to an average distance of about 740 m, while the DDPG algorithm stabilizes at approximately 800 m. In contrast, the FP strategy fails to achieve effective distance optimization, with the average service distance remaining at a high level of around 1240 m throughout the training process. Compared with the DDPG and PPO baselines, the proposed algorithm reduces the average service distance by about 19% and 12%, respectively. It should be noted that the average service distance is not intended to measure coverage robustness in this paper. Instead, it reflects the spatial efficiency of the UAV in approaching demanding users during dynamic service provision.
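The relative improvements quoted for Figures 5–7 can be reproduced from the approximate converged values given in the text, as the short calculation below shows. The dictionary simply restates those values; gains are computed for the rate and service ratio, and reductions for the service distance. The function and variable names are illustrative.

```python
def relative_gain(ours, baseline):
    """Percentage increase of `ours` over `baseline`."""
    return (ours - baseline) / baseline * 100.0


def relative_reduction(ours, baseline):
    """Percentage decrease of `ours` relative to `baseline`."""
    return (baseline - ours) / baseline * 100.0


# Approximate converged values read from the text for Figures 5-7.
converged = {
    "rate": {"SPOR": 0.70, "PPO": 0.58, "DDPG": 0.47},
    "service_ratio": {"SPOR": 0.82, "PPO": 0.64, "DDPG": 0.55},
    "distance_m": {"SPOR": 650.0, "PPO": 740.0, "DDPG": 800.0},
}

summary = {}
for metric, vals in converged.items():
    # Lower is better for distance, higher is better otherwise.
    fn = relative_reduction if metric == "distance_m" else relative_gain
    summary[metric] = {name: fn(vals["SPOR"], vals[name])
                       for name in ("PPO", "DDPG")}
```

The results are roughly 20.7% and 48.9% for the transmission rate, 28.1% and 49.1% for the service ratio, and 12.2% and 18.75% for the service distance (relative to PPO and DDPG, respectively), consistent with the rounded figures reported in the text.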

Figure 8 demonstrates the service ratio performance of four algorithms under scenarios with varying numbers of users. As shown in the figure, the proposed SPOR algorithm achieves the highest service ratio across all user population sizes. It attains a service ratio of about 0.82 when serving 20 users and maintains a relatively high level of around 0.77 when the number of users increases to 80, indicating strong scalability under increasing user demand. The PPO and DDPG algorithms achieve lower service ratios and exhibit a gradual decline as the user population grows. In contrast, the FP strategy provides severely limited service capability, with its service ratio remaining at a low level of about 0.26–0.30 and slightly decreasing as the number of users increases.

Figure 9 illustrates the service ratio performance of four algorithms under different user service demand probabilities. As shown in the figure, the proposed SPOR algorithm consistently achieves the highest service ratio across all demand levels. When the service demand probability is 0.2, it attains a service ratio of about 0.85, and it remains around 0.8 as the demand probability increases to 0.8, indicating strong robustness under heavier service loads. The PPO and DDPG algorithms exhibit lower service ratios and suffer more pronounced degradation as the demand probability increases. In contrast, the FP strategy shows limited service capability, and its service ratio decreases significantly under high service demand probabilities. These results demonstrate that the proposed algorithm can effectively adapt to varying service loads and provide reliable service assurance for post-disaster emergency communication.

Figure 10 illustrates a scenario where a UAV provides services to users with service demands under the proposed method. It is generated from a representative snapshot of one simulation episode after policy convergence, where the UAV position, user locations, service-demand states, and established communication links are extracted from the simulation environment and then visualized. In the figure, the green triangle represents the position of the UAV, red squares denote users with service demands, and purple circles indicate users without service demands. The solid lines indicate communication links established between the UAV and the users. It can be observed that the proposed method enables the UAV to autonomously optimize its flight trajectory and dynamically adjust its position to achieve effective coverage for users with service demands. In this representative simulation snapshot, the system achieves a service ratio of 80 percent, indicating that the UAV can effectively respond to users with service demands under the learned policy.

The superior performance of SPOR over PPO and DDPG can be attributed to several factors. First, the SFE layer enables the actor and critic networks to learn from a unified high-level state representation, which reduces redundant computation and improves the consistency between policy learning and value estimation. This is particularly beneficial in our problem, where the state space jointly includes the UAV position, user positions, and dynamic service-demand states. Second, SPOR inherits the clipped surrogate objective from PPO, which effectively constrains policy updates and improves training stability in a dynamic and stochastic environment. Third, the joint reward design simultaneously considers transmission rate, service ratio, and service-distance penalty, enabling the UAV to better balance communication quality, service coverage, and spatial proximity. Since SPOR, PPO, and DDPG are evaluated under the same reward formulation, the comparison between SPOR and PPO more directly reflects the contribution of the SFE-based shared representation under the same learning framework, whereas the comparison with DDPG is additionally influenced by differences in optimization mechanism and learning paradigm. Finally, the use of advantage estimation, normalization, and learning rate scheduling further improves convergence efficiency and robustness. As a result, SPOR is able to learn a more effective and stable policy than PPO and DDPG, leading to better overall performance.