1. Introduction

With the rapid development of UAV technology, UAVs have been widely adopted to assist humans in performing mundane, repetitive, and hazardous tasks, owing to their low cost, zero crew risk, high flexibility, and ease of upgrading [1]. For example, UAVs have been widely applied in aerial surveillance, aerial mapping, agricultural monitoring, environmental monitoring, logistics, delivery, search and rescue, communication relay, and other missions across diverse engineering fields [2,3,4]. Among these applications, a critical engineering challenge for UAVs, termed long-range UAV Guidance in Dynamic Environments (LRGDEs), demands urgent resolution as it plays a pivotal role in enabling efficient task execution. During LRGDE missions, a UAV is required to depart from a starting point, navigate to a target destination, and avoid obstacles or threats using airborne sensor data. These obstacles and threats include both mobile and stationary objects. Furthermore, the problem becomes significantly more complex when the target point is rigidly attached to a moving vehicle.

Figure 1 illustrates the process of a UAV performing an LRGDE mission. Researchers have made notable progress toward solving this problem.

The traditional solution for LRGDEs is to employ path-planning algorithms to obtain the optimal path from the start position to the target position [5] and to design a controller using trajectory-tracking algorithms to guide the UAV along the predefined path. To date, various path-planning algorithms have been proposed to generate optimal paths, such as visibility graphs [6]; random sampling search algorithms, including rapidly exploring random trees [7] and probabilistic roadmaps [8]; heuristic algorithms, including A-star [9], sparse A-star [10], and D-star [11]; and biologically inspired optimization algorithms, including genetic algorithms [12] and sand cat swarm optimization [13]. Subsequently, various trajectory-tracking algorithms have been developed to design controllers that guide UAVs to track the optimal path [14]. Although such solutions can effectively address the aforementioned problem, they suffer from inherent drawbacks and practical limitations. First, obtaining detailed environmental obstacle information (e.g., mountains, no-fly zones, and other threats) is highly challenging, which inherently limits the applicability of path-planning algorithms. Second, the above scheme lacks sufficient flexibility to adjust paths in real time when unexpected threats arise. Third, traditional path-planning algorithms struggle to generate feasible trajectories in the presence of moving targets. Fourth, conventional path-planning algorithms typically require excessive computation time to derive optimal solutions, making them unsuitable for real-time applications. Therefore, to achieve high-quality LRGDEs, it is essential to endow UAVs with fully autonomous flight capability, in which the UAV’s control variables are computed in real time by the algorithm, and the decision-making foundation consists of the UAV’s flight state, observed environmental information from airborne sensors, and predefined mission objectives. To realize autonomous flight for UAVs, a critical engineering problem must be solved, namely UAV-maneuvering decision-making in LRGDEs, where the UAV’s control variables must be determined periodically based on all available information and the scheduled mission objective. Due to the Markovian nature of UAV-maneuvering decision-making in LRGDEs, some researchers have modeled this problem using Markov Decision Processes (MDPs) and proposed various algorithms based on reinforcement learning (RL) [15,16,17,18]. When applied to the UAV-maneuvering decision-making problem in LRGDEs, RL algorithms can reduce reliance on prior environmental information due to their model-free nature. Meanwhile, since deep reinforcement learning (DRL) algorithms inherently possess end-to-end characteristics, policies can be generated rapidly based on real-time observations of the surrounding environment, enabling the algorithms to address challenges posed by unknown or unexpected threats and satisfy strict real-time decision-making requirements.

When applying RL to solve the UAV-maneuvering decision-making problem in LRGDEs, a key challenge remains that limits RL performance in LRGDE tasks. During policy training for LRGDEs, the decision-making basis at each step mainly relies on observations from airborne sensors, such as radar, electro-optical sensors, and other active or passive sensors. For passive sensors such as optical sensors, only high-precision relative angular information of the target can be obtained, which leads to severe information dimensionality reduction. Even if the relative distance of the target can be inferred through algorithms, data at such a precision level is hardly sufficient to support decision-making. For active sensors such as radar, the relative distance of the target can be acquired, but high-precision velocity information cannot be obtained simultaneously due to the inherent Doppler ambiguity of radar. Thus, owing to the incomplete state information from airborne sensors, applying RL to LRGDEs requires modeling the UAV-maneuvering decision-making problem based on Partially Observable Markov Decision Processes (POMDPs) rather than MDPs. Among planning-based approaches, one of the most well-known algorithms is the Monte Carlo Tree Search (MCTS) algorithm, which serves as the core technique of AlphaZero developed by Google DeepMind [19]. Based on MCTS, several researchers have proposed various MCTS variants for POMDPs, such as POMCP [20], DESPOT [21], and other improved MCTS-based algorithms. Although these modified MCTS-based algorithms show effectiveness in solving POMDPs, their excessive computational resource consumption and high time intensity significantly hinder practical engineering applications, since the belief state distribution is estimated using massive samples via particle filters, and the optimal solution is computed through planning methods. In addition, another category of modified algorithms based on DRL leverages the advantages of Recurrent Neural Networks (RNNs) in processing time-series data and estimates the missing dimensions in observation information. When the problem to be solved is not highly complex, observations such as images, signals, and other forms of information are simply stacked along the time dimension, and temporal sequences are used to support policy decision-making; this approach is adopted in the input processing of DQN [22], DDPG [23], TD3 [24], PPO [25], SAC [26], and other DRL algorithms. As the number of missing dimensions increases, problems must be modeled as POMDPs, and such simple tricks can no longer solve the problem effectively. Therefore, several researchers have proposed various modified DRL algorithms based on RNNs, such as G-IPOMDP-PPO [27], CBC-TP Net [28], Fast-RDPG [29], and FRSVG(0) [30], among others. All the aforementioned methods introduce RNNs to improve traditional DRL algorithms for solving POMDPs, where RNNs are used to eliminate the impact caused by insufficient state information dimensions. The modified DRL algorithms can overcome the drawbacks of MCTS-based algorithms and satisfy real-time decision-making requirements. Therefore, to better address the UAV-maneuvering decision-making problem in LRGDEs, it is crucial to model LRGDEs based on POMDPs and improve policy networks using RNNs.

Aside from the partial observability in LRGDEs, two additional challenges arise: limited experience and sparse reward. Owing to the complex dynamics of LRGDEs, each simulation episode is time-consuming. Compared with standard MDPs, fewer state transitions are generated, resulting in limited experience that impedes policy convergence. Meanwhile, obtaining effective transitions with clear rewards requires extensive interactions between the agent and the environment, leading to slow convergence and unsatisfactory performance. This well-known challenge in policy training is referred to as sparse reward. To address these issues, previous studies have achieved promising results using methods such as uniform experience replay (UER) [31] and prioritized experience replay (PER) [32]. When applying UER and PER, before policy training using historical data, it is necessary to determine the sampling probability of each transition and sample a batch of transitions accordingly. This procedure is known as transition selection, in which transitions are evaluated from multiple perspectives. Based on UER and PER, various improved experience replay (ER) methods have been proposed, such as HER [33], DCRL [34], ERO [35], CHER [36], and ACER [37], among others. These methods differ from traditional ER in their transition evaluation strategies. Although such modified algorithms enhance the sampling efficiency and overall performance of agents during policy training, relying on only a single criterion to evaluate sample priority, such as TD-error, mission goal, cumulative reward, or sample diversity, is insufficient. To improve the sampling efficiency of RL, it is essential to evaluate transitions using multiple features. In addition to transition selection, adjusting the agent’s training process is critical for mitigating limited experience and sparse reward in MDPs. Operating at a higher level than transition selection, reshaping the transitions generated during policy training is beneficial; this is referred to as transition modification. Instead of directly modifying transitions, the timing of experience collection can be adjusted by designing intermediate task milestones, i.e., learning task objectives from simple to difficult. Accordingly, several researchers have structured the policy training process using task curricula and proposed corresponding algorithms, such as PCCL [38], NavACL [39], CURROT [40], CDRL [41], SCG [42], and other curriculum-based DRL algorithms [43]. These studies demonstrate that deliberate scheduling of the training process can significantly improve the sampling efficiency of RL and accelerate policy convergence. For both ER and the training process of RL algorithms, curriculum learning (CL), which has been widely discussed above, provides valuable guidance for our work. Inspired by human education and animal training, researchers in deep learning (DL) initially proposed CL to accelerate neural network training [44]. Subsequently, CL has been adopted to enhance traditional RL algorithms with the goal of accelerating policy convergence [45]. Although many algorithms have been developed to improve transition modification, manually adjusting task goals remains inadequate. Focusing on LRGDEs, this study aims to overcome the limitations of traditional methods caused by limited experience and sparse reward: (1) reliance on a single transition evaluation criterion, and (2) inflexible and manually designed training processes. By integrating advances in both transition selection and transition modification, we seek to improve the ER mechanism while reshaping the agent’s training process based on curriculum learning.

To effectively execute LRGDE missions, this work addresses the aforementioned challenges and focuses on UAV-maneuvering decision-making for LRGDEs. The main contributions of this paper are summarized as follows:

- (a)

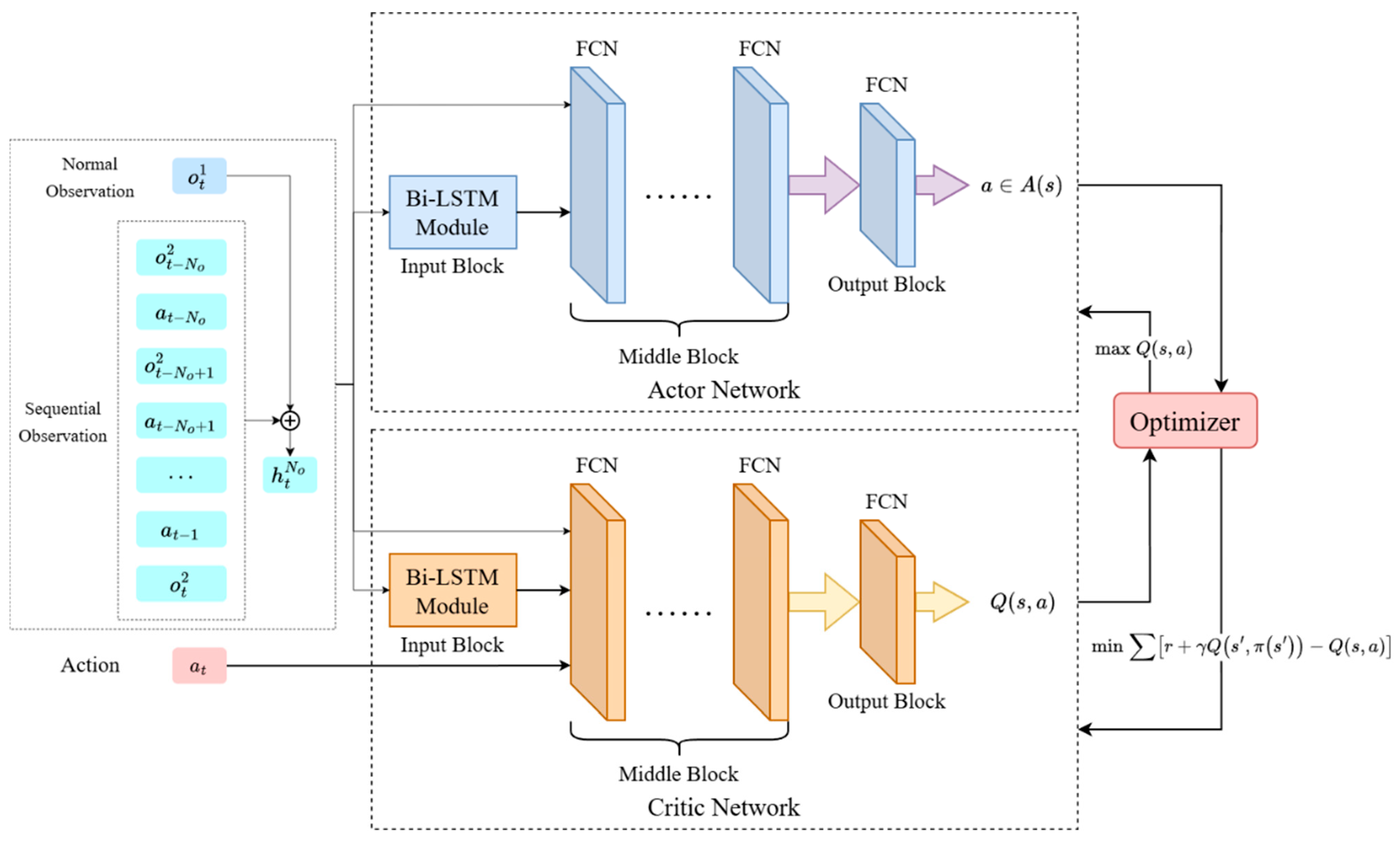

To tackle the partial observability challenge in LRGDEs, we redesign the structure of traditional actor–critic networks and construct a Bi-LSTM-based Policy Network (BLPN). During decision-making, historical observation sequences are vectorized and fed into the BLPN to mitigate the curse of dimensionality in LRGDEs.

- (b)

To fully exploit the latent value of limited transitions under sparse rewards, we propose an Adaptive Multi-Feature Evaluation Experience Replay (AMFER) method. This method integrates an adaptive dynamic termination (ADT) mechanism and a multi-feature transition evaluation (MFTE) model to reshape the policy training process, fully unlocking the potential value of data via a “from easy to difficult” learning paradigm.

- (c)

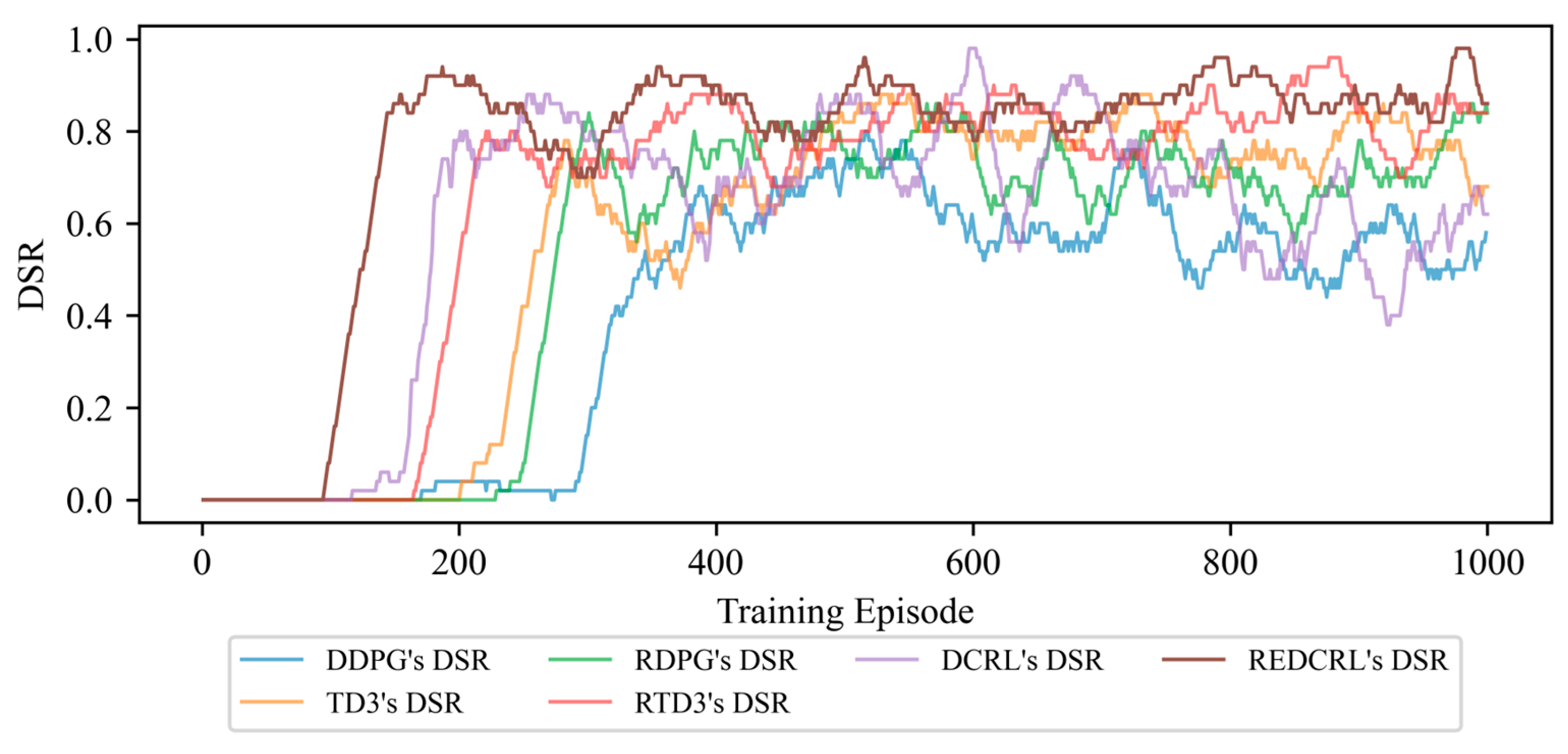

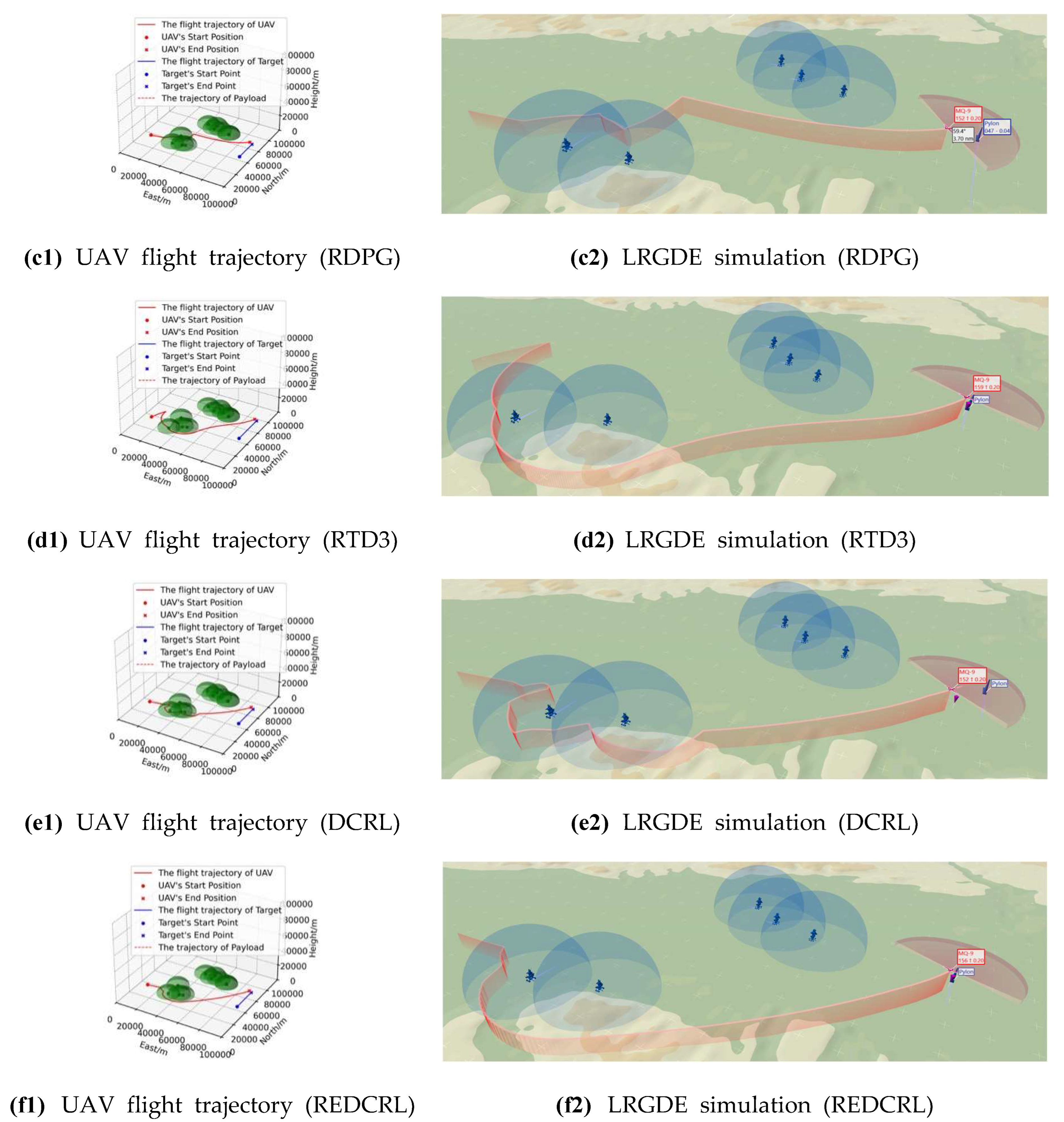

We further propose the REDCRL algorithm, which integrates the proposed BLPN and AMFER. The effectiveness of REDCRL is verified through extensive simulation experiments and comparisons with conventional DRL algorithms. Experimental results and analyses demonstrate that REDCRL significantly accelerates policy convergence and improves the performance of the trained policies.

4. RNN-Enhanced Diverse Curriculum-Driven Learning Algorithm

In this section, we present the structure of REDCRL. First, we propose the BLPN to address the UAV-maneuvering decision-making problem for LRGDEs. Second, we propose AMFER, which integrates an ADT mechanism and an MFTE model, to fully exploit the latent value of limited transitions under sparse reward conditions.

4.1. Structure of Algorithm

It is well known that DRL has been applied to solve numerous problems across diverse research fields, including Atari games, chess, robotics, and other decision-making and control problems. In this paper, we adopt the actor–critic architecture as the foundation of REDCRL, which features model-free and end-to-end characteristics and is well suited for UAV-maneuvering decision-making in LRGDEs. In particular, we employ Bi-LSTM to construct both the actor network and the critic network within the actor–critic architecture, leveraging Bi-LSTM’s strengths in processing temporal sequential data to mitigate the partial state observability problem in LRGDEs. On the other hand, to address the challenges of limited experience and sparse reward encountered when applying traditional DRL algorithms to LRGDEs, we introduce CL to reshape the policy training process. This includes a curriculum-based dynamic task objective and a comprehensive transition-evaluation experience replay method.

Figure 4 illustrates the overall framework of REDCRL, which consists of the BLPN, AMFER (incorporating an ADT mechanism and an MFTE model), and other necessary modules. While employing REDCRL to solve LRGDEs, the BLPN determines the current action of the UAV, leveraging observations and rewards derived from the LRGDE environment. During each episode, the ADT mechanism in AMFER decides whether to terminate the episode using a dynamic task objective, which acts as the criterion for judging LRGDE termination conditions. Furthermore, each transition generated via interactions between the BLPN policy and the LRGDE environment is stored in the experience memory. The MFTE model in AMFER is then adopted to evaluate the priority of each stored transition. Accordingly, a batch of transitions is sampled from the experience memory based on the sampling probabilities assigned according to their priorities. Finally, these sampled transitions are used to update and optimize the BLPN. In the subsequent sections, we elaborate on the design of the BLPN and AMFER (including the ADT mechanism and MFTE model).

4.2. Bi-LSTM-Modified Policy Networks

To address the sequential decision-making problem posed by partial observability in LRGDEs, a policy network that can effectively process historical observation–action sequences is required. Although various neural architectures are available for time-series data modeling, we select the Bi-LSTM for its proven effectiveness in capturing mid-range temporal dependencies and its computational efficiency for real-time embedded system applications. Compared with recent alternatives such as the Transformer architecture, which excels in modeling long-range contextual dependencies, Bi-LSTM imposes a much lower computational cost.

Figure 5 illustrates the structure of the BLPN, which consists of an actor network and a critic network. Both the actor network and the critic network follow a three-module structure: an input block, a middle block, and an output block. Since the input information includes the current action and the historical trajectory (a time-series vector consisting of recent states and actions), the input block is built upon Bi-LSTM, a variant of RNNs that processes sequential data in both forward and backward directions. The middle block and the output block are constructed using fully connected networks (FCNs), consistent with the policy networks used in traditional DRL algorithms.

Specifically, the input to the actor network consists of observations from the LRGDE environment, which are categorized into normal observation and sequential observation. At each decision step, the agent receives an observation that includes the normal observation of the UAV’s flight state and the relative observation of the target and threats. In this work, we consider that the normal observation represents fully observable information about the UAV’s flight state and does not need to be included in sequential observations, which helps to reduce network size and conserve computational resources. In contrast, the relative observation represents partially observable information about the target and threats relative to the UAV, which can be obtained via various airborne sensors.

Subsequently, the observations and actions over a period of time are used to construct the sequential observation, as illustrated in Figure 5. During interactions between the agent and the LRGDE environment, the sequential observation is input into the actor network, which outputs the corresponding action. In contrast to the actor network, the current action is incorporated into the input of the critic network. The critic network then outputs the Q-value to guide the optimization of the actor network.
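As a minimal sketch of how the sequential observation described above might be assembled, the following Python snippet keeps a fixed-length window of recent relative observations and actions. The class name `HistoryWindow`, the window length, the zero padding at the start of an episode, and the per-step concatenation order are assumptions made for illustration; the source does not specify them.

```python
from collections import deque
from typing import Deque, List, Sequence


class HistoryWindow:
    """Fixed-length window of (relative observation, action) steps.

    Hypothetical helper: window length, zero padding, and the
    concatenation order are illustrative assumptions.
    """

    def __init__(self, length: int, obs_dim: int, act_dim: int) -> None:
        self.length = length
        self.step_dim = obs_dim + act_dim
        # deque(maxlen=...) silently drops the oldest step when full.
        self._steps: Deque[List[float]] = deque(maxlen=length)

    def push(self, rel_obs: Sequence[float], action: Sequence[float]) -> None:
        """Append one time step: relative observation followed by action."""
        step = list(rel_obs) + list(action)
        if len(step) != self.step_dim:
            raise ValueError("unexpected observation/action size")
        self._steps.append(step)

    def as_sequence(self) -> List[List[float]]:
        """Return `length` rows, oldest first, zero-padded at the front."""
        missing = self.length - len(self._steps)
        pad = [[0.0] * self.step_dim for _ in range(missing)]
        return pad + [list(step) for step in self._steps]
```

The resulting list of rows has the shape (window length, step size) that a recurrent input block would typically consume, while the fully observable flight state can be passed separately.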

The critic networks are optimized following TD3 [24]: the optimization target is computed from each sampled transition using the target critic networks, and the parameters of the critic networks are then updated by minimizing a loss function between the outputs of the critic networks and the corresponding target.

The policy gradient of the actor network is calculated according to the RDPG theorem [47].
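For orientation, the standard TD3 critic update can be sketched as follows. This is the generic clipped double-Q form from TD3, not necessarily the exact formulation used here: the function names, the scalar interface, the `done` flag, and the default discount factor are assumptions, and the target Q-values are assumed to have already been evaluated by the two target critics at the smoothed target action.

```python
from typing import Sequence


def td3_target(reward: float, done: bool, next_q1: float, next_q2: float,
               gamma: float = 0.99) -> float:
    """Clipped double-Q target: r + gamma * min(Q1', Q2').

    next_q1 / next_q2 are the two target-critic estimates for the next
    step; terminal transitions do not bootstrap.
    """
    bootstrap = 0.0 if done else gamma * min(next_q1, next_q2)
    return reward + bootstrap


def critic_loss(q_values: Sequence[float], targets: Sequence[float]) -> float:
    """Mean squared error between critic outputs and their targets."""
    if len(q_values) != len(targets) or not q_values:
        raise ValueError("q_values and targets must be non-empty and aligned")
    return sum((q - y) ** 2 for q, y in zip(q_values, targets)) / len(q_values)
```

Taking the minimum of the two target estimates is what limits Q-value overestimation in TD3; each critic is regressed toward the same target.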

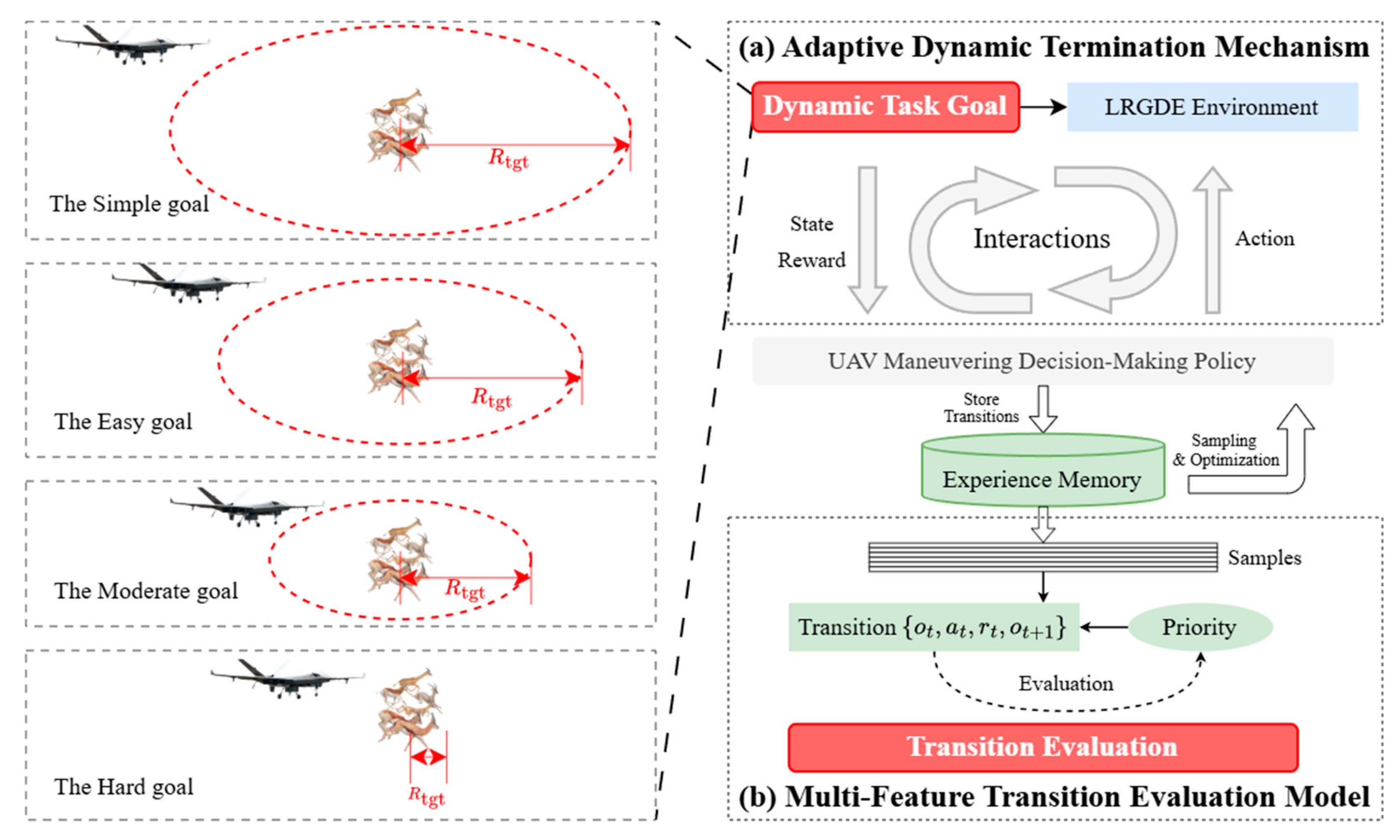

4.3. Adaptive Multi-Feature Evaluation Experience Replay

Based on the structure of REDCRL, it is critical to restructure the policy training process for effective policy learning. Reasonable restructuring of the policy training process helps to address the challenges of limited experience and sparse reward when applying DRL to agent training in LRGDEs. As illustrated in Figure 6, we design the structure of AMFER, which comprises an ADT mechanism and an MFTE model.

During the policy training process, to reduce the computational burden of algorithm training, we design an ADT mechanism following the “from easy to difficult” principle, which is analogous to the sequential learning processes in human education and animal training. The ADT mechanism generates a dynamic task objective based on the state of the LRGDE environment to determine whether the mission in the current episode is successful or unsuccessful. When determining the current task objective, we select the most appropriate goal for the current policy from a predesigned curriculum comprising a sequence of tasks arranged in ascending order of difficulty.

Furthermore, during interactions between the UAV-maneuvering decision-making policy and the LRGDE environment, transitions are stored in the experience memory, where a batch of transitions is sampled and utilized to optimize the policy. Once a transition is stored in the experience memory, the priority of each transition is evaluated by the MFTE model, which is developed based on the traditional ER method and integrates a comprehensive evaluation function. Prior to policy training, these transitions are sampled based on the sampling probabilities associated with the priorities of the transitions.

4.3.1. Adaptive Dynamic Termination Mechanism

For LRGDEs, the successful termination of the mission depends on the distance between the UAV and the target, as well as the UAV’s detection range. If the current distance between the UAV and the target satisfies Equation (2), the simulation episode is terminated successfully. Accordingly, the UAV’s detection range defines the mission difficulty for the current episode. In the ADT mechanism, we define a dynamic task objective that can automatically adjust itself based on the performance of the current policy.

First, we define a curriculum, which consists of a sequence of subtasks, each associated with its own goal.

In this paper, considering the definition of LRGDEs, the subtask goal is related to Equation (2), and the key parameter of the dynamic task goal, i.e., its control variable, is the UAV’s detection range. Therefore, we can construct a curriculum for LRGDEs in terms of the detection range. Within this curriculum, every subtask is defined by a detection range value, and all of the subtasks are sorted in ascending order of the difficulty of the subtask goal, as measured by a difficulty evaluation function.

According to the function defined above, we can obtain a curriculum arranged in ascending order of difficulty. In addition, during the policy training process, we design a module to update the current task objective, which in turn determines the termination condition of the current episode.

In this work, we consider that the difficulty perceived by the current policy is associated with the current task objective of LRGDEs. Therefore, after constructing the curriculum, we adopt the Dynamic Success Rate (DSR) metric to evaluate the performance of the current policy and determine whether to switch the current subtask objective. The DSR is computed as the ratio of the number of successfully completed episodes to the total number of simulation episodes used for DSR calculation. Moreover, during policy training, the simulation results used for DSR calculation are obtained from the most recent episodes. Accordingly, we can determine whether to switch the current subtask goal by comparing the DSR with the Converged Successful Rate (CSR), which acts as the threshold for judging whether the current policy has converged.

Figure 7 illustrates the structure of the ADT mechanism designed in this section. During the operation of the ADT mechanism, we first construct a curriculum according to the definition of the target LRGDE. During policy training, the DSR metric is evaluated to quantify the policy performance based on feedback from the LRGDE environment. The DSR is then used to determine whether to update the current termination condition. After the termination condition is switched to a more difficult subtask objective, the old transitions collected under the previous subtask objective are retained in the experience memory. Although the policy is now learning the new subtask, these old transitions from the previous subtask still supply the policy with valuable prior knowledge.

The pseudocode of the ADT mechanism is shown in Algorithm 1.

| | Algorithm 1. The ADT Mechanism in AMFER |
| --- | --- |
| 1: | Initialize a curriculum including a set of subtasks and select its first subtask as the current subtask. |
| 2: | while the training experiment is not terminated do |
| 3: | Obtain the state from the LRGDE environment; |
| 4: | Calculate the current DSR according to the current subtask; |
| 5: | if the DSR reaches the CSR threshold then |
| 6: | Switch the current goal to the next subtask; |
| 7: | end if |
| 8: | end while |
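The ADT logic above can be sketched in a few lines of Python. This is an illustrative reading under stated assumptions, not the authors' implementation: the class name `AdaptiveTermination`, the assumption that a larger detection range means an easier subtask, the requirement that the DSR window be full before switching, and the clearing of the window after a switch are all hypothetical choices.

```python
from collections import deque
from typing import Deque, List


class AdaptiveTermination:
    """Curriculum over detection ranges with DSR-based switching (sketch)."""

    def __init__(self, detection_ranges: List[float], window: int,
                 csr: float) -> None:
        # Assumption: larger detection range = easier subtask, so sorting
        # in descending range gives ascending difficulty.
        self.curriculum = sorted(detection_ranges, reverse=True)
        self.index = 0
        self.csr = csr
        self.results: Deque[bool] = deque(maxlen=window)

    @property
    def detection_range(self) -> float:
        return self.curriculum[self.index]

    def is_success(self, distance_to_target: float) -> bool:
        """Success if the target lies within the current detection range."""
        return distance_to_target <= self.detection_range

    def dsr(self) -> float:
        """Successful episodes divided by episodes in the recent window."""
        return sum(self.results) / len(self.results) if self.results else 0.0

    def record_episode(self, success: bool) -> None:
        """Store an episode outcome and switch subtask once DSR >= CSR."""
        self.results.append(success)
        window_full = len(self.results) == self.results.maxlen
        has_next = self.index + 1 < len(self.curriculum)
        if window_full and has_next and self.dsr() >= self.csr:
            self.index += 1
            self.results.clear()  # assumption: re-measure DSR on new subtask
```

Only the episode outcomes are reset on a switch in this sketch; stored transitions would stay in the experience memory, in line with the retention of old transitions described above.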

4.3.2. Multi-Feature Transition Evaluation Model

In addition to the ADT mechanism, reshaping the sampling process from the experience memory is critical for effective policy training. Traditional ER methods, such as UER and PER, can facilitate online learning for DRL algorithms within the training environment. However, they face limitations when applied to UAV-maneuvering decision-making problems in LRGDEs, since relying solely on TD-error to evaluate transition priorities is inadequate. In addition to UER and PER, various CL-based algorithms with improved transition selection strategies have been proposed in recent years, including HER, DCRL, ERO, CHER, CER, LSER, and ACER. However, evaluating sample priority from only a single factor (e.g., TD-error, mission objective, cumulative reward, or sample diversity) remains insufficient. Accordingly, we design the MFTE model to comprehensively evaluate the priorities of transitions.

Figure 8 illustrates the structure of the MFTE model, whose core component is a comprehensive transition evaluation function composed of three evaluation factor terms: learning value, diversity, and intrinsic value. After each sampling and policy training iteration, the priorities of the sampled transitions are re-evaluated according to the current policy, and the updated priorities are used to compute the sampling probabilities for the next iteration. The comprehensive transition evaluation function (Equation (20)) combines the learning value factor, the diversity factor, and the intrinsic value factor.

Within the MFTE model, the learning value of a transition characterizes the degree to which the transition contributes to policy optimization, and the learning value factor is computed based on the TD-error of the transition. In DL, transitions with large TD-error magnitudes require a smaller learning step to adapt to the curvature of the objective function. The larger the TD-error, the greater the impact of the transition on the current policy, and thus the more the transition should be utilized for learning. In CL, the learning value factor must satisfy specific constraints [34,37]. In this work, we design the learning value factor function (Equation (21)), which depends on the loss of the i-th transition for the current network, on two coefficients used to adjust the slope of the function, and on a curriculum factor that indicates the learning stage.

Apart from the learning value, maintaining data diversity is also critical for effective policy training. In ER, excessive reuse of redundant transitions can lead to severe overtraining, and the policy is highly prone to becoming trapped in a local optimum. Therefore, maintaining the diversity of transitions sampled from the experience memory is one of the key issues to prevent the policy from becoming trapped in a local optimum. In this work, to achieve the exploration–exploitation tradeoff and maintain sufficient exploration in the state and action spaces, the diversity factor is adopted to enhance the diversity of sampled transitions. The diversity factor (Equation (22)) is computed from the number of times the i-th transition has been sampled and the maximum sample count among all transitions in the experience memory.

Finally, to facilitate the policy’s faster convergence to a near-optimal solution, we incorporate the reward of each transition into the comprehensive evaluation value. In DRL algorithms, rewards can guide the policy to learn effective behaviors. In other words, rewards serve as the specified learning guidance for the mission. Therefore, from a non-generalizability perspective, prioritizing transitions with higher rewards for learning can accelerate the policy’s convergence. Accordingly, we specifically integrate the intrinsic value factor into the comprehensive transition evaluation function. The intrinsic value factor (Equation (23)) is computed from the reward of the i-th transition and the maximum reward across all transitions in the experience memory.
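To make the three-factor evaluation concrete, the sketch below combines a learning value, a diversity, and an intrinsic value term into one score per transition. The functional forms are assumptions chosen only for illustration (a `tanh` of the absolute TD-error, one minus the relative sample count, a min-max normalized reward, and an equal-weight sum); they are not the formulas of Equations (20) to (23), and the curriculum factor is omitted. The names `Transition` and `evaluate` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Transition:
    td_error: float
    reward: float
    sample_count: int = 0


def evaluate(transitions: List[Transition],
             weights: Tuple[float, float, float] = (1.0, 1.0, 1.0)) -> List[float]:
    """Score each transition from three assumed factor terms."""
    if not transitions:
        return []
    n_max = max(t.sample_count for t in transitions)
    r_max = max(t.reward for t in transitions)
    r_min = min(t.reward for t in transitions)
    w_learn, w_div, w_val = weights
    scores = []
    for t in transitions:
        # Learning value: grows with |TD-error|, saturating at 1 (assumed form).
        learning = math.tanh(abs(t.td_error))
        # Diversity: rarely sampled transitions score higher (assumed form).
        diversity = 1.0 - t.sample_count / n_max if n_max > 0 else 1.0
        # Intrinsic value: reward rescaled to [0, 1] (assumed form).
        intrinsic = (t.reward - r_min) / (r_max - r_min) if r_max > r_min else 1.0
        scores.append(w_learn * learning + w_div * diversity + w_val * intrinsic)
    return scores
```

With such a score, a transition that has a large TD-error, has rarely been replayed, and carries a high reward is ranked above one that is strong on only a single criterion, which is the motivation given for multi-feature evaluation.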

Based on the comprehensive transition evaluation function, the priority of each transition can be obtained. Accordingly, we can compute the sampling probability of each transition from its priority, where a hyperparameter is used to control the influence of transition priority on the sampling probability. Moreover, since the transition distribution from the environment is altered, Importance Sampling (IS) weights [48] are adopted to correct the distribution bias caused by AMFER. The cumulative gradient of the critic network is then calculated according to Equation (25) from the TD-errors of the sampled transitions.
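The sampling step can be illustrated with the standard prioritized-replay formulas, which the description above appears to follow: probabilities proportional to priority raised to an exponent, and IS weights proportional to the inverse of the scaled probability. The exponent names `alpha` and `beta`, their default values, the normalization of weights by the batch maximum, and the function names are assumptions taken from common PER practice rather than from this paper; priorities are assumed strictly positive.

```python
import random
from typing import List, Optional, Sequence, Tuple


def sampling_probabilities(priorities: Sequence[float], alpha: float) -> List[float]:
    """P(i) = p_i**alpha / sum_k p_k**alpha; alpha = 0 gives uniform sampling."""
    scaled = [p ** alpha for p in priorities]
    total = sum(scaled)
    return [s / total for s in scaled]


def importance_weights(probs: Sequence[float], indices: Sequence[int],
                       beta: float) -> List[float]:
    """w_i = (N * P(i))**(-beta), normalized by the largest weight in the batch."""
    n = len(probs)
    raw = [(n * probs[i]) ** (-beta) for i in indices]
    largest = max(raw)
    return [w / largest for w in raw]


def sample_batch(priorities: Sequence[float], batch_size: int,
                 alpha: float = 0.6, beta: float = 0.4,
                 rng: Optional[random.Random] = None) -> Tuple[List[int], List[float]]:
    """Draw indices by priority and return them with their IS weights."""
    rng = rng or random.Random()
    probs = sampling_probabilities(priorities, alpha)
    indices = rng.choices(range(len(probs)), weights=probs, k=batch_size)
    return indices, importance_weights(probs, indices, beta)
```

In a prioritized-replay update, each sampled transition's TD-error is typically multiplied by its IS weight before the gradients are accumulated, so frequently sampled transitions contribute proportionally less per draw.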

The pseudocode of the MFTE model is shown in Algorithm 2.

| | Algorithm 2. The MFTE Model in AMFER |
| --- | --- |
| 1: | for each transition in the sampled batch do |
| 2: | Calculate the TD-error of the transition according to the current critic and target critic networks; |
| 3: | Calculate the learning value factor according to Equation (21); |
| 4: | Count the number of times the transition has been sampled and the maximum sample count, and calculate the diversity factor according to Equation (22); |
| 5: | Search for the maximum reward among all transitions in the experience memory and calculate the intrinsic value factor according to Equation (23); |
| 6: | Calculate the comprehensive transition evaluation value according to Equation (20); |
| 7: | Update the priority of the transition based on the comprehensive evaluation value and calculate the sampling probability of the transition. |
| 8: | end for |

4.4. Policy Training Process of REDCRL

As illustrated in Figure 4, during REDCRL training in LRGDEs, the BLPN generates the action based on the observation and the reward obtained from the LRGDE environment. Subsequently, transitions generated during training are stored in the experience memory. After each training epoch, the MFTE model integrated into AMFER is adopted to evaluate the priorities of sampled transitions. Meanwhile, the ADT mechanism integrated into AMFER is employed to determine whether to terminate the current simulation episode and update the task objective of the LRGDE environment.

Based on the implementations of the BLPN and AMFER modules, the integrated REDCRL algorithm is presented in Algorithm 3.

| | Algorithm 3. The REDCRL algorithm for UAV-maneuvering decision-making in LRGDEs |
| --- | --- |
| 1: | Initialize the actor and critic networks and their target networks. |
| 2: | for each training episode do |
| 3: | Reset the environment and obtain the initial observation; |
| 4: | Construct the history trajectory; |
| 5: | for each time step do |
| 6: | Observe the current observation and calculate the current action; |
| 7: | Observe the next observation, receive the reward from the environment, and store the transition; |
| 8: | if the policy update condition is met then |
| 9: | Reset the gradient of the critic networks with IS; |
| 10: | Sample a batch of transitions according to the sampling probabilities of the transitions; |
| 11: | Accumulate the parameter gradient of the critic networks according to Equation (25); |
| 12: | Update the parameters of the critic networks according to the accumulated gradient with the learning rate; |
| 13: | Update the parameters of the actor network according to Equation (16); |
| 14: | Update the priorities of the transitions used for training according to the MFTE model defined in Algorithm 2; |
| 15: | end if |
| 16: | if the state meets Equation (2) then |
| 17: | Start the next episode; |
| 18: | Update the task goal according to the ADT mechanism defined in Algorithm 1; |
| 19: | else if the state satisfies Equation (1) then |
| 20: | Start the next episode; |
| 21: | end if |
| 22: | end for |
| 23: | end for |