Figure 1.

Overview of the proposed CASA-RCNN framework and the quality–scale collaborative loss. The input image is processed by a ResNet-50 backbone to produce multi-level features. ConvSwinMerge is inserted into shallow, high-resolution stages to enhance fine-grained localization features, while MambaBlock is introduced in mid-to-deep features to model global contextual dependencies. The enhanced features are aggregated by an FPN (P2–P6), followed by the RPN to generate proposals and the RoI head for classification and bounding-box regression. During training, the RPN uses focal loss and GIoU loss, whereas the RoI head adopts a quality–scale objective that combines Varifocal loss (classification), EIoU loss (regression), and an auxiliary ScaleAdaptive term.

Figure 2.

Structure of the proposed ConvSwinMerge module. Given an input feature map, the module first applies CoordAtt to inject position-sensitive attention and adds a residual connection. A convolution with a second residual path then refines local detail. Finally, SaE performs channel excitation to produce the enhanced output feature. Note that ConvSwinMerge is Swin-inspired in motivation but uses Coordinate Attention instead of shifted-window self-attention for efficiency.

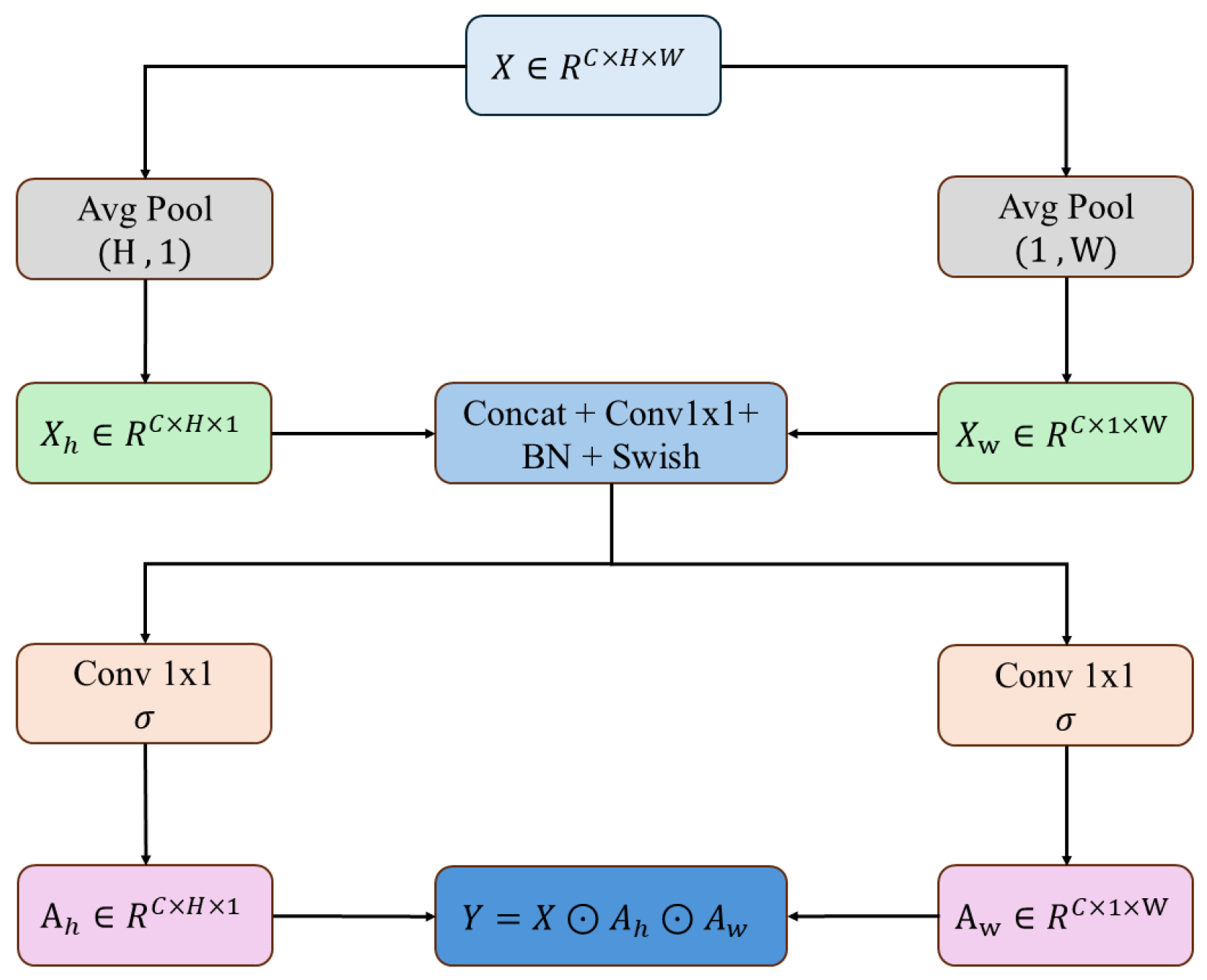

Figure 3.

Architecture of the CoordAtt block used in ConvSwinMerge. Given an input feature map, average pooling is performed along the width and height directions to obtain two direction-aware descriptors. The two descriptors are concatenated and transformed by a convolution followed by BN and Swish. Two parallel convolutions with sigmoid activation then generate the height-wise and width-wise attention maps. The output is obtained by reweighting the input feature with both attention maps via element-wise multiplication (⊙).
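
The pooling and reweighting steps described in this caption can be sketched for a single channel as follows. This is only an illustration under a strong simplifying assumption: the learned convolution, BN, and Swish stages are replaced by the identity, so only the directional pooling, the sigmoid gating, and the element-wise reweighting remain. The function name `coord_reweight` is hypothetical.

```python
import math


def sigmoid(v):
    """Logistic function used to squash descriptors into (0, 1) gates."""
    return 1.0 / (1.0 + math.exp(-v))


def coord_reweight(x):
    """Reweight one H x W channel (list of lists) with direction-aware gates."""
    h, w = len(x), len(x[0])
    # Average over the width: one descriptor value per row.
    z_h = [sum(row) / w for row in x]
    # Average over the height: one descriptor value per column.
    z_w = [sum(x[i][j] for i in range(h)) / h for j in range(w)]
    # ASSUMPTION: the learned conv/BN/Swish transforms are omitted (identity),
    # so the gates are simply the sigmoid of the pooled descriptors.
    a_h = [sigmoid(v) for v in z_h]
    a_w = [sigmoid(v) for v in z_w]
    # Element-wise reweighting: every location is scaled by its row and column gate.
    return [[x[i][j] * a_h[i] * a_w[j] for j in range(w)] for i in range(h)]


example = coord_reweight([[1.0, -1.0], [1.0, -1.0]])
```

Because each gate depends on only one spatial axis, the block keeps positional information that a single global average pool would discard.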

Figure 4.

Design of the proposed MambaBlock and its selective spatial aggregation operator MambaT. (Left): MambaBlock adopts a dual-branch fusion strategy, where the enhancement branch (MambaT) performs context aggregation and the fidelity branch (identity/linear) preserves semantic details. The two branches are concatenated and fused by a convolution to obtain the output feature. (Right): MambaT computes a local pedestal via group convolution and generates selection weights A through a softmax gating function. The value tensor V is produced by a convolution and is selectively aggregated by A to form the context term. The final enhanced representation is obtained by combining the local pedestal and the context term. MambaT scales linearly with the number of spatial tokens (i.e., it avoids constructing a full token-to-token attention map); the detailed complexity derivation is provided in Section 3.3.
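
To make the linear-scaling claim concrete, the sketch below shows one way a softmax-gated aggregation can avoid a token-to-token map. It is not the paper's MambaT operator, whose exact form is given in Section 3.3 and is not reproduced here. The assumptions are that a single softmax is taken over all tokens and that the context term is added to every token's pedestal. The name `gated_aggregate` is hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a flat list of gating logits."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gated_aggregate(pedestal, scores, values):
    """pedestal, values: N tokens x C channels; scores: N gating logits."""
    weights = softmax(scores)  # selection weights A: one scalar per token
    channels = len(values[0])
    # Context term: one weighted sum over tokens, i.e. O(N * C) work and
    # no N x N matrix is ever built.
    context = [
        sum(a * v[c] for a, v in zip(weights, values)) for c in range(channels)
    ]
    # ASSUMPTION: the context is broadcast and added to each token's pedestal.
    return [[p[c] + context[c] for c in range(channels)] for p in pedestal]


out = gated_aggregate(
    pedestal=[[0.0, 0.0], [0.0, 0.0]],
    scores=[0.0, 0.0],
    values=[[2.0, 4.0], [4.0, 8.0]],
)
```

Doubling the number of tokens doubles the work in both the softmax and the weighted sum, which is the behaviour the caption refers to as linear-time scaling.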

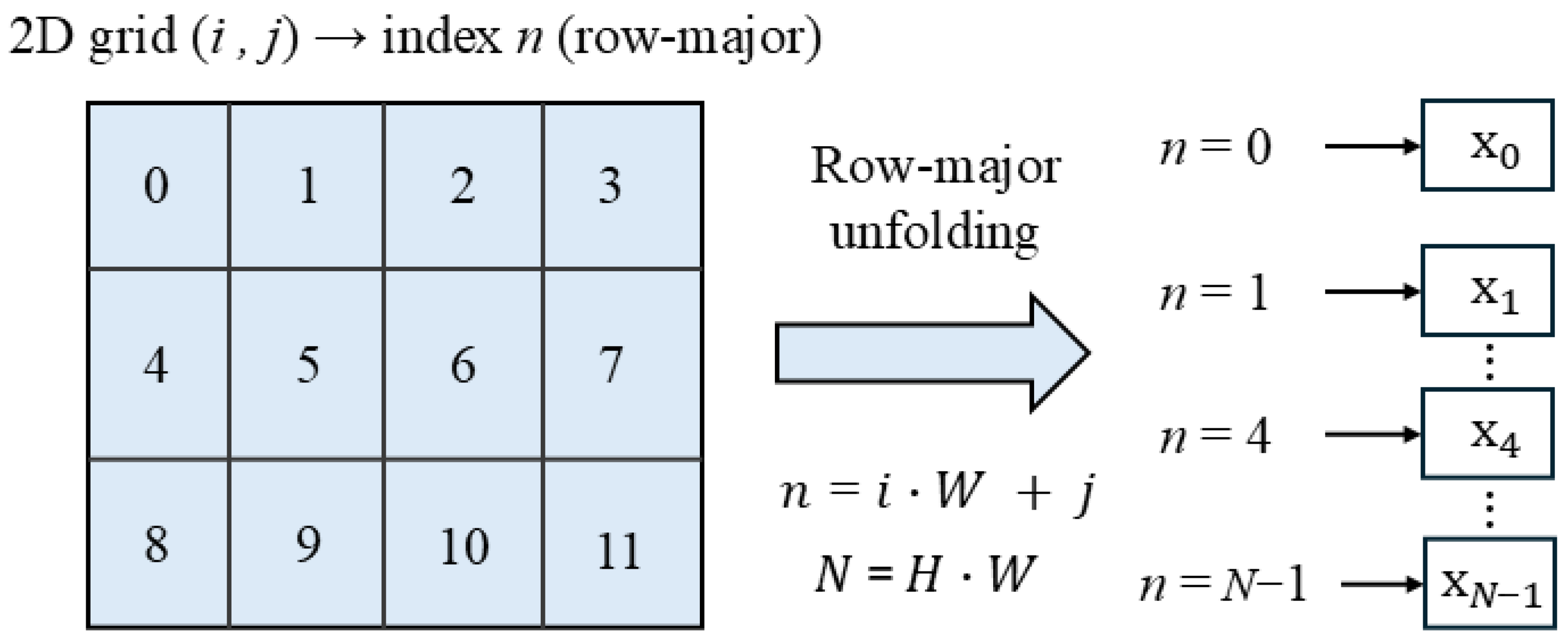

Figure 5.

Row-major 2D-to-1D mapping before MambaT. The spatial grid is serialized into a 1D token sequence in row-major order. Each spatial location is mapped to its row-major sequence index, and the resulting tokens are fed into the MambaT operator.
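
A minimal sketch of the row-major serialization, assuming zero-based row and column indices (the caption's exact index expression did not survive extraction, so the convention here is an assumption). The helper names are hypothetical.

```python
def to_index(i, j, width):
    """Map grid location (row i, column j) to its row-major sequence index."""
    return i * width + j


def to_location(n, width):
    """Inverse mapping: recover (row, column) from a sequence index."""
    return divmod(n, width)


def serialize(grid):
    """Flatten an H x W grid (list of rows) into a 1D token list, row by row."""
    return [value for row in grid for value in row]


grid = [[10, 11, 12],
        [20, 21, 22]]
tokens = serialize(grid)
```

With this convention, neighbours in the same row stay adjacent in the sequence, while vertical neighbours end up `width` positions apart.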

Figure 6.

Overall detection performance comparison on the VisDrone2021 validation set. CASA-RCNN achieves the highest mAP (22.9%) and mAP75 (25.7%), demonstrating superior detection accuracy and localization quality compared to both classical detectors and recent YOLO variants.

Figure 7.

Per-category AP comparison between Faster R-CNN (baseline) and CASA-RCNN. The values above the bars indicate the absolute AP improvement. CASA-RCNN yields consistent gains across all 11 categories, with particularly substantial improvements on truck (+22.7%), bus (+20.4%), and van (+15.3%), demonstrating enhanced discriminability for medium and large vehicle categories under complex backgrounds.

Figure 8.

Precision–recall (PR) curves on VisDrone2021-DET under three IoU thresholds (0.3/0.5/0.7). The star denotes the operating point that maximizes the F1 score for each method. CASA-RCNN consistently yields a higher PR envelope than the baseline across all IoU settings, indicating superior detection quality under both loose and strict matching criteria. Note that the absolute recall saturates at a relatively low level (around 0.45), which is typical for VisDrone due to the prevalence of extremely small objects, heavy crowding/occlusion, and class imbalance.

Figure 9.

Cumulative contribution of each proposed component to detection performance. Starting from the Faster R-CNN baseline (13.9% mAP), ConvSwinMerge contributes +4.8%, MambaBlock adds +1.8%, and the quality–scale collaborative loss (Q–S Loss) further improves by +2.4%, yielding a total gain of +9.0% mAP.
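
The cumulative figures in this caption can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the function name `cumulative` is hypothetical.

```python
def cumulative(baseline, gains):
    """Return (component, running mAP) pairs after adding each gain in order."""
    running = baseline
    steps = []
    for name, gain in gains:
        # Round to one decimal to match the precision reported in the paper.
        running = round(running + gain, 1)
        steps.append((name, running))
    return steps


steps = cumulative(
    13.9,
    [("ConvSwinMerge", 4.8), ("MambaBlock", 1.8), ("Q-S Loss", 2.4)],
)
total_gain = round(steps[-1][1] - 13.9, 1)
```

The running totals are 18.7, 20.5, and 22.9, which match the corresponding rows of the module ablation in Table 10.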

Figure 10.

Ablation study on loss function combinations (on top of ConvSwinMerge + MambaBlock). CE: CrossEntropy loss; VFL: Varifocal Loss; EIoU: EIoU regression loss; SA: ScaleAdaptiveLoss. Replacing CrossEntropy with Varifocal Loss improves the alignment between classification confidence and localization quality. Combining it with EIoU further enhances high-precision localization (mAP75: 20.4%→24.2%). The proposed ScaleAdaptiveLoss yields the most substantial gain on small objects (mAPs: 10.1%→12.5%, +2.4%), leading to the best overall performance (22.9% mAP).

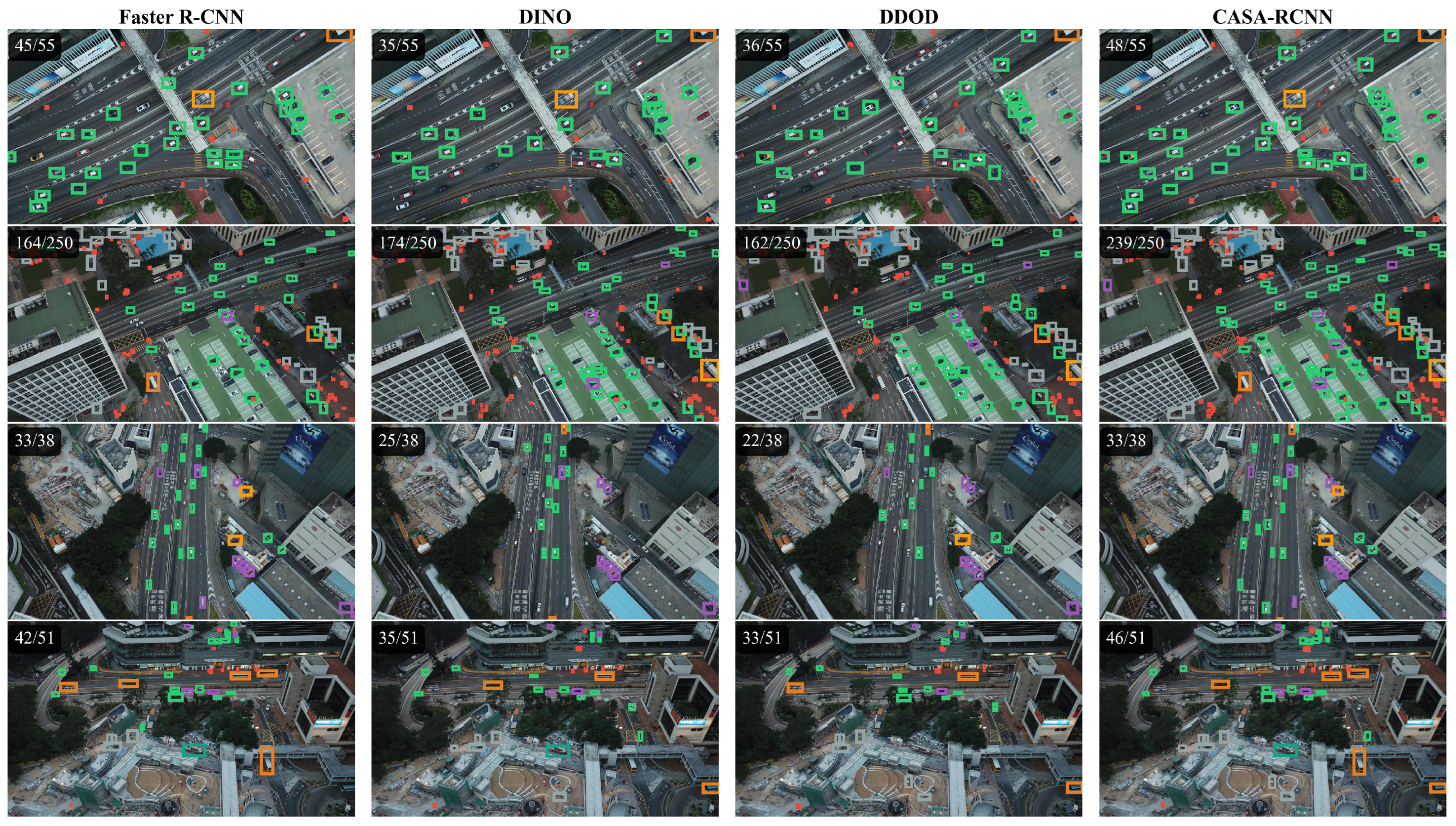

Figure 11.

Qualitative comparison of detection results across different methods. Boxes of different colors represent different detection results. Each column represents a detection algorithm (Faster R-CNN, DINO, DDOD, CASA-RCNN from left to right), and each row shows a different aerial scenario. The numbers in the top-left corner indicate detected/total objects. CASA-RCNN consistently achieves the highest detection rates across all scenarios, demonstrating superior performance in detecting small and densely distributed objects.

Figure 12.

Comparison of detection results from different algorithms across four representative aerial scenarios. Columns from left to right: Faster R-CNN, DINO, DDOD, and CASA-RCNN. Rows from top to bottom: (1) dense traffic scene with multi-class vehicles, (2) complex intersection scene with severe occlusions, (3) parking-lot scene with scale variations, and (4) urban area scene with mixed object distributions. The detection ratios (detected/ground-truth) in each sub-figure show that CASA-RCNN consistently achieves the highest recall across all scenarios.

Table 1.

Conceptual correspondence between Mamba SSM and MambaBlock.

| Mamba SSM | Our Instantiation |
|---|---|
| Hidden state | Local pedestal (GroupConv) |
| Selective gating | Content-driven weights |
| State update | Weighted aggregation |

Table 2.

Statistics of the VisDrone2021-DET dataset.

| Subset | # Images | # Objects | Avg. Objects/Image | Image Resolution |
|---|---|---|---|---|
| Train (train) | 6471 | 343,205 | 53.0 | |
| Val (val) | 548 | 38,759 | 70.7 | |
| Test (test-dev) | 1610 | – | – | |

Table 3.

Network architecture configuration of CASA-RCNN.

| Component | Configuration Details |
|---|---|
| **Feature Extraction** | |
| Backbone | ResNet-50, ImageNet pretraining |
| Frozen stage | Stage 0 (conv1 + bn1) |
| ConvSwinMerge | Applied to Stage 0 (256 channels) and Stage 1 (512 channels) |
| MambaBlock | Applied to Stage 2 (1024 channels) |
| **Feature Fusion** | |
| FPN output channels | 256 |
| Number of FPN outputs | 5 (P2–P6) |
| **Region Proposal Network (RPN)** | |
| Anchor scales | |
| Anchor ratios | |
| Anchor strides | |
| Positive IoU threshold | 0.7 |
| Negative IoU threshold | 0.3 |
| Training samples | 256/image (pos:neg = 1:1) |
| **Detection Head (RoI Head)** | |
| RoI feature size | |
| FC layer dimension | 1024 |
| Positive IoU threshold | 0.5 |
| Training samples | 512/image (pos:neg = 1:3) |
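
The RPN thresholds in Table 3 imply a three-way labeling of anchors. A minimal sketch follows, assuming boxes in `(x1, y1, x2, y2)` format and the common convention that anchors between the two thresholds are ignored. The usual extra rule that promotes the best anchor for each ground-truth box is omitted, and the function names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def rpn_label(anchor, gt_boxes, pos_thr=0.7, neg_thr=0.3):
    """Return 1 (positive), 0 (negative), or -1 (ignored) for one anchor."""
    best = max((iou(anchor, gt) for gt in gt_boxes), default=0.0)
    if best >= pos_thr:
        return 1
    if best < neg_thr:
        return 0
    return -1  # ASSUMPTION: anchors between the thresholds do not contribute


anchor = (0.0, 0.0, 10.0, 10.0)
```

From the labeled anchors, the RPN then samples 256 per image at a 1:1 positive-to-negative ratio, as listed in the table.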

Table 4.

Training hyperparameter configuration.

| Hyperparameter | Value |
|---|---|
| Optimizer | AdamW |
| Initial learning rate | |
| Weight decay | |
| Gradient clipping | max_norm = 0.1 |
| Batch size | 6 (single GPU) |
| Total iterations | 20,000 |
| Validation interval | every 5000 iterations |
| Learning rate schedule | MultiStepLR |
| LR decay milestone | 15,000 iterations |
| LR decay factor | |
| Backbone LR multiplier | 0.1 |
| **Data Augmentation** | |
| Input size | |
| Random horizontal flip | probability 0.5 |
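
The schedule in this table can be sketched as a step function of the iteration count. The initial learning rate and decay factor did not survive extraction, so the values `1.0` and `0.5` below are placeholders chosen only to make the example run; they are not the paper's settings. Only the 15,000-iteration milestone and the 0.1 backbone multiplier come from the table.

```python
def multistep_lr(iteration, base_lr, gamma, milestones=(15000,)):
    """Learning rate after applying one decay per milestone already reached."""
    drops = sum(1 for m in milestones if iteration >= m)
    return base_lr * gamma ** drops


def backbone_lr(iteration, base_lr, gamma, multiplier=0.1):
    """Backbone parameters use a scaled-down copy of the same schedule."""
    return multiplier * multistep_lr(iteration, base_lr, gamma)


# PLACEHOLDER values, not taken from the paper:
BASE_LR = 1.0
GAMMA = 0.5

before = multistep_lr(14999, BASE_LR, GAMMA)
after = multistep_lr(15000, BASE_LR, GAMMA)
```

With 20,000 total iterations, the final quarter of training runs at the decayed rate.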

Table 5.

Loss function configuration.

| Stage | Loss Type | Implementation | Weight |
|---|---|---|---|
| RPN | Classification loss | Focal Loss | 1.0 |
| RPN | Regression loss | GIoU Loss | 2.0 |
| RoI Head | Classification loss | Varifocal Loss | 1.0 |
| RoI Head | Primary regression loss | EIoU Loss | 2.5 |
| RoI Head | Auxiliary regression loss | ScaleAdaptiveLoss | 1.5 |
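
A minimal sketch of how the weights in Table 5 would enter the training objective, assuming the five terms are combined by a plain weighted sum (the table lists the weights but not the combination rule, so the summation is an assumption). The dictionary keys and function name are hypothetical.

```python
# Weights copied from Table 5; the key names are illustrative only.
LOSS_WEIGHTS = {
    "rpn_cls": 1.0,    # Focal Loss
    "rpn_reg": 2.0,    # GIoU Loss
    "roi_cls": 1.0,    # Varifocal Loss
    "roi_eiou": 2.5,   # EIoU Loss
    "roi_scale": 1.5,  # ScaleAdaptiveLoss
}


def total_loss(terms, weights=LOSS_WEIGHTS):
    """ASSUMPTION: the overall objective is the weighted sum of all terms."""
    return sum(weights[name] * value for name, value in terms.items())


unit_terms = {name: 1.0 for name in LOSS_WEIGHTS}
combined = total_loss(unit_terms)
```

Under this assumption the two RoI regression terms carry half of the total weight (4.0 of 8.0), which reflects the emphasis on localization quality.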

Table 6.

Performance comparison on the VisDrone2021 validation set (%) under the budget-matched setting (BM, 20,000 iterations), including classical two-stage and one-stage detectors, recent YOLO variants for UAV detection, and Transformer-based end-to-end detectors. Bold indicates the best result.

| Method | Type | mAP | mAP50 | mAP75 | mAPs | mAPm | mAPl |
|---|---|---|---|---|---|---|---|
| SSD | One-stage | 3.6 | 8.4 | 2.6 | 0.5 | 5.5 | 12.3 |
| Deformable DETR | Transformer | 7.1 | 15.0 | 6.0 | 3.3 | 11.4 | 15.2 |
| Fast R-CNN | Two-stage | 12.8 | 23.4 | 12.9 | 6.4 | 20.0 | 25.6 |
| DINO | Transformer | 13.0 | 24.7 | 12.5 | 7.7 | 20.2 | 25.5 |
| Faster R-CNN | Two-stage | 13.9 | 24.7 | 14.4 | 6.9 | 21.9 | 23.1 |
| RetinaNet | One-stage | 14.5 | 25.8 | 14.8 | 5.9 | 23.7 | 32.4 |
| DDOD | One-stage | 14.7 | 26.2 | 14.7 | 6.9 | 23.1 | 30.9 |
| LUD-YOLO | One-stage | 19.3 | 35.2 | 18.5 | **13.7** | 34.9 | 37.1 |
| SAD-YOLO | One-stage | 19.1 | 34.1 | 17.9 | 12.8 | 33.7 | 36.2 |
| KL-YOLO | One-stage | 20.5 | **37.5** | 19.1 | 13.5 | **35.8** | 37.8 |
| CASA-RCNN | Two-stage | **22.9** | 36.6 | **25.7** | 12.5 | 35.7 | **37.9** |

Table 7.

Per-class detection performance comparison (AP, %). Bold indicates the best result.

| Method | ped. | peo. | bic. | Car | Van | Truck | tri. | a-tri. | Bus | Motor | Others | mAP |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Faster R-CNN | 9.3 | 3.3 | 3.3 | 42.4 | 24.6 | 17.0 | 7.7 | 5.1 | 31.6 | 8.2 | 0.7 | 13.9 |
| RetinaNet | 8.8 | 4.1 | 4.7 | 41.2 | 22.0 | 19.6 | 8.3 | 5.9 | 34.3 | 7.7 | 3.5 | 14.5 |
| DDOD | 10.0 | 3.6 | 4.3 | 43.1 | 23.5 | 17.8 | 6.9 | 6.2 | 34.8 | 9.0 | 2.2 | 14.7 |
| DINO | 10.2 | **6.5** | 3.7 | 40.2 | 19.4 | 13.1 | 7.4 | 6.4 | 24.3 | 11.3 | 1.6 | 13.0 |
| CASA-RCNN | **11.5** | 4.6 | **7.2** | **48.1** | **39.9** | **39.7** | **13.8** | **13.2** | **52.0** | **13.4** | **8.7** | **22.9** |
| Improvement over Faster R-CNN | +2.2 | +1.3 | +3.9 | +5.7 | +15.3 | +22.7 | +6.1 | +8.1 | +20.4 | +5.2 | +8.0 | +9.0 |

Table 8.

Scale-wise performance improvement analysis.

| Scale | Area Range | Faster R-CNN | CASA-RCNN | Δ | Relative Gain |
|---|---|---|---|---|---|
| Small (S) | | 6.9% | 12.5% | +5.6% | 81.2% |
| Medium (M) | | 21.9% | 35.7% | +13.8% | 63.0% |
| Large (L) | | 23.1% | 37.9% | +14.8% | 64.1% |
| Overall | – | 13.9% | 22.9% | +9.0% | 64.7% |
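
The Relative Gain column of Table 8 is the absolute gain divided by the baseline value. A short sketch that reproduces it from the two mAP columns (the function name is hypothetical):

```python
def relative_gain(baseline, improved):
    """Relative improvement over the baseline, in percent, to one decimal."""
    return round(100.0 * (improved - baseline) / baseline, 1)


rows = {
    "Small": (6.9, 12.5),
    "Medium": (21.9, 35.7),
    "Large": (23.1, 37.9),
    "Overall": (13.9, 22.9),
}
gains = {scale: relative_gain(b, c) for scale, (b, c) in rows.items()}
```

Small objects show the largest relative gain even though their absolute gain (+5.6) is the smallest, because their baseline AP is so low.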

Table 9.

Stratified recall analysis by crowding and occlusion on VisDrone2021-DET. Bold indicates the best result.

Crowding bins (instances per image)

| Model | Sparse (0–5) | Normal (5–20) | Dense (20–50) | Crowded (50+) |
|---|---|---|---|---|
| Baseline | 0.803 | 0.642 | 0.508 | 0.355 |
| CASA-RCNN | **0.901** | **0.768** | **0.612** | **0.393** |

Occlusion bins (occlusion ratio)

| Model | None (<0.1) | Slight (0.1–0.3) | Moderate (0.3–0.5) | Heavy (>0.5) |
|---|---|---|---|---|
| Baseline | 0.458 | 0.364 | 0.258 | 0.218 |
| CASA-RCNN | **0.519** | **0.444** | **0.328** | **0.258** |

Table 10.

Ablation study on core modules. Bold indicates the best result.

| ConvSwin | Mamba | Q–S Loss | mAP | mAP50 | mAP75 | mAPs | mAPm | mAPl |
|---|---|---|---|---|---|---|---|---|
| – | – | – | 13.9 | 24.7 | 14.4 | 6.9 | 21.9 | 23.1 |
| ✓ | – | – | 18.7 | 31.7 | 19.9 | 9.8 | 29.3 | 36.9 |
| – | ✓ | – | 19.1 | 32.2 | 20.6 | 10.1 | 29.7 | 35.7 |
| ✓ | ✓ | – | 20.5 | 33.6 | 22.1 | 11.5 | 31.2 | 36.1 |
| ✓ | ✓ | ✓ | **22.9** | **36.6** | **25.7** | **12.5** | **35.7** | **37.9** |

Table 11.

Error analysis: FP/FN distribution.

| Model | FP: Cls Conf | FP: BG | FP: Loc | FP: Dup | FN: Small | FN: Boundary | FN: Occluded |
|---|---|---|---|---|---|---|---|
| Baseline | 3213 | 1126 | 1082 | 50 | 12,146 | 545 | 118 |
| +ConvSwinMerge | 2550 | 3222 | 1001 | 51 | 11,564 | 431 | 91 |
| +MambaBlock | 2537 | 1506 | 983 | 55 | 11,576 | 413 | 80 |
| CASA-RCNN | 2014 | 10,222 | 1131 | 54 | 11,429 | 321 | 77 |

Table 12.

Threshold sensitivity analysis.

| Model | Threshold | Precision | Recall | F1 |
|---|---|---|---|---|
| Baseline | 0.5 | 0.754 | 0.376 | 0.502 |
| Baseline | 0.7 | 0.834 | 0.321 | 0.464 |
| CASA-RCNN | 0.5 | 0.769 | 0.449 | 0.567 |
| CASA-RCNN | 0.7 | 0.883 | 0.404 | 0.554 |
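
The F1 column of Table 12 is the harmonic mean of the precision and recall columns. The sketch below recomputes it from the reported values; the function name is hypothetical.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


# (model, threshold) -> (precision, recall), copied from Table 12.
table_12 = {
    ("Baseline", 0.5): (0.754, 0.376),
    ("Baseline", 0.7): (0.834, 0.321),
    ("CASA-RCNN", 0.5): (0.769, 0.449),
    ("CASA-RCNN", 0.7): (0.883, 0.404),
}
f1 = {key: round(f1_score(p, r), 3) for key, (p, r) in table_12.items()}
```

Raising the threshold from 0.5 to 0.7 costs the baseline 0.038 in F1 but CASA-RCNN only 0.013, since its precision rises more while its recall falls by a similar amount.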

Table 13.

Efficiency comparison of the baseline and CASA-RCNN variants.

| Model | Params (M) | FLOPs (G) | FPS | Latency (ms/img) | mAP (%) |
|---|---|---|---|---|---|
| Baseline | 41.40 | 216.08 | 72.4 | 13.8 | 13.9 |
| +ConvSwinMerge | 46.16 | 295.59 | 57.5 | 17.4 | 18.7 |
| +MambaBlock | 46.06 | 235.65 | 64.9 | 15.4 | 19.1 |
| CASA-RCNN (full) | 50.82 | 315.16 | 54.7 | 18.3 | 22.9 |
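
Two columns of Table 13 can be cross-checked against each other: latency is the reciprocal of FPS, and the parameter count of the full model equals the baseline plus the overhead of each module measured separately. A short sketch using the table's values (function names are hypothetical):

```python
def latency_ms(fps):
    """Per-image latency in milliseconds implied by a frames-per-second rate."""
    return round(1000.0 / fps, 1)


def combined_params(baseline, *variants):
    """Baseline parameters plus the overhead each single-module variant adds."""
    return round(baseline + sum(v - baseline for v in variants), 2)


latencies = [latency_ms(fps) for fps in (72.4, 57.5, 64.9, 54.7)]
full_params = combined_params(41.40, 46.16, 46.06)
```

The full model therefore adds about 4.5 ms per image over the baseline in exchange for the +9.0 mAP gain.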

Table 14.

Ablation study on ConvSwinMerge submodules.

| CoordAtt | Conv | SaE | mAP | mAPs | mAPm | ΔmAP |
|---|---|---|---|---|---|---|
| – | – | – | 13.9 | 6.9 | 21.9 | – |
| ✓ | – | – | 15.8 | 8.2 | 24.3 | +1.9 |
| – | ✓ | – | 14.6 | 7.3 | 22.5 | +0.7 |
| – | – | ✓ | 14.9 | 7.5 | 23.1 | +1.0 |
| ✓ | ✓ | – | 17.1 | 8.9 | 26.8 | +3.2 |
| ✓ | – | ✓ | 16.8 | 8.7 | 26.2 | +2.9 |
| ✓ | ✓ | ✓ | 18.7 | 9.8 | 29.3 | +4.8 |

Table 15.

Ablation study on combinations of loss functions (on top of ConvSwinMerge + MambaBlock).

| Classification Loss | Regression Loss | mAP | mAP75 | mAPs | ΔmAP |
|---|---|---|---|---|---|
| CrossEntropy | L1 Loss | 20.0 | 20.4 | 8.3 | – |
| CrossEntropy | EIoU Loss | 20.6 | 21.9 | 8.9 | +0.6 |
| Varifocal | L1 Loss | 20.9 | 22.8 | 9.3 | +0.9 |
| Varifocal | EIoU Loss | 21.4 | 24.2 | 10.1 | +1.4 |
| Varifocal | EIoU + ScaleAdaptive | 22.9 | 25.7 | 12.5 | +2.9 |
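
For readers unfamiliar with the EIoU regression loss compared in Table 15, the sketch below follows its commonly published definition: an IoU term plus separate penalties for center distance, width difference, and height difference, each normalized by the enclosing box. The paper's own formulation is not shown in this section, so this should be read as an assumption about the standard form, with boxes in `(x1, y1, x2, y2)` format.

```python
def eiou_loss(pred, target, eps=1e-9):
    """EIoU loss for one box pair, following the commonly published form."""
    px1, py1, px2, py2 = pred
    tx1, ty1, tx2, ty2 = target
    pw, ph = px2 - px1, py2 - py1
    tw, th = tx2 - tx1, ty2 - ty1

    # Overlap term.
    inter_w = max(0.0, min(px2, tx2) - max(px1, tx1))
    inter_h = max(0.0, min(py2, ty2) - max(py1, ty1))
    inter = inter_w * inter_h
    union = pw * ph + tw * th - inter
    iou = inter / (union + eps)

    # Width and height of the smallest box enclosing both inputs.
    cw = max(px2, tx2) - min(px1, tx1)
    ch = max(py2, ty2) - min(py1, ty1)

    # Squared distance between the two box centers.
    center = ((px1 + px2) - (tx1 + tx2)) ** 2 / 4.0 \
        + ((py1 + py2) - (ty1 + ty2)) ** 2 / 4.0

    return (
        1.0 - iou
        + center / (cw ** 2 + ch ** 2 + eps)  # center-distance penalty
        + (pw - tw) ** 2 / (cw ** 2 + eps)    # width penalty
        + (ph - th) ** 2 / (ch ** 2 + eps)    # height penalty
    )


same = eiou_loss((0.0, 0.0, 10.0, 10.0), (0.0, 0.0, 10.0, 10.0))
apart = eiou_loss((0.0, 0.0, 10.0, 10.0), (20.0, 0.0, 30.0, 10.0))
```

Unlike an L1 loss on coordinates, the extra terms still give a useful signal when the boxes do not overlap, which is one plausible reason for the mAP75 gains in the table.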

Table 16.

Fine-grained scale-stratified recall comparison around the ScaleAdaptiveLoss thresholds.

| Model | XS (0–16) | S (16–32) | SM (32–48) | M (48–64) | ML (64–96) | L (96+) |
|---|---|---|---|---|---|---|
| Baseline | 0.039 | 0.525 | 0.701 | 0.740 | 0.774 | 0.778 |
| CASA-RCNN | 0.050 | 0.610 | 0.799 | 0.858 | 0.896 | 0.881 |