1. Introduction

Advances in our understanding of the nature of cognition in its myriad forms (embodied, embedded, extended and enactive) [1, 2] displayed in all living beings (cellular organisms, plants, animals and humans) and new theories of information, info-computation and knowledge [3, 4, 5, 6] are throwing light on how we should build software systems (in the digital universe) that mimic and interact with intelligent, sentient and resilient beings in the physical universe. Info-computational constructivism asserts that living organisms are cognizing agent structures who construct knowledge through interactions with their environment. They process information through their own cognitive apparatus and information exchange with other cognitive agents. Computing processes, message communication networks and cognitive apparatus are essentially the building blocks for sentient beings.

Meanwhile, agent technology has progressed with mathematical models going beyond the boundaries of the Church-Turing thesis [7, 8]. Their utility is demonstrated in the novel distributed intelligent managed element network architecture, which enables self-managing information processing structures and improves computational efficiency and resiliency well beyond the boundaries of the Church-Turing thesis [9, 10]. In addition, cognition as a learning mechanism is associated with the neural network computing model and has successfully been used to discern heretofore hidden correlations and insights from large data sets. We present the impact of these advances on conventional software engineering practice, which so far has focused mainly on deterministic computing processes expressed through programming languages. We address ways to design intelligent, sentient and resilient information processing structures that manage themselves and their environment in the face of non-deterministic interactions without stopping. We use the new mathematics of named sets, knowledge structures, theory of oracles and structural machines with a hierarchy of controllers managing computing structures (from browsers to databases) to design and implement a new class of digital sentient, resilient and intelligent systems.

Sentience comes from the Latin word sentiens, “feeling,” and it describes physical structures that are alive, able to feel and perceive, and show awareness or responsiveness to external influences. They are aware of their inner structure (self) and their interactions with external structures, both sentient and non-sentient. They have a degree of resilience, with the capacity to recover quickly from non-deterministic difficulties by rearranging their own structures (and also the structures they interact with) without requiring a reboot or losing self-awareness. Intelligence is the ability to acquire, model and apply knowledge and skills using the cognitive apparatuses the organism has developed.

As von Neumann [11] pointed out, “It is very likely that on the basis of philosophy that every error has to be caught, explained, and corrected, a system of the complexity of the living organism would not last for a millisecond.” Cognition [12] is the ability to process information, apply knowledge and change the circumstance. Cognition is associated with intent and its accomplishment through various processes that monitor and control a system and its environment. Cognition is associated with a sense of “self” (the observer) and the systems with which it interacts (the environment or the “observed”). Cognition extensively uses knowledge of time and history in executing and regulating tasks that constitute a cognitive process.

According to Dodig-Crnkovic [3, 4, 5, 6], nature computes using information processing going on in networks of agents, hierarchically organized in layers. Informational structures self-organize through processes of natural/physical/embodied computation. “This new concept of computation allows for nondeterministic complex computational systems with self-* properties. Here self-* stands for self-organization, self-configuration, self-optimization, self-healing, self-protection, self-explanation, and self (context)-awareness.” Dodig-Crnkovic further argues that natural computation (understood as processes acting on informational structures) provides a basis within the info-computational framework for a unified understanding of the phenomena of embodied cognition, intelligence and knowledge generation. “Life and intelligence are the phenomena especially characterized by info-computational structures and processes. Living systems have the ability to act autonomously and store information, retrieve information (remember), anticipate future behavior in the environment with help of information stored (learn), adapt to the environment (in order to survive). She presents a model which helps understanding mechanisms of information processing and knowledge generation in an organism. Thinking of us and the universe as a network of computational devices/processes allows easier approach of questions about boundaries between living and non-living beings.”

The hierarchical network of computational devices and processes in nature, which exhibits sentience, resilience and intelligence in some form or other, has developed a way to encode information, store it and use it to execute the “life-processes” that define the best practices for sustenance and survival. The result is the emergence of two computing models in the form of the “gene” and the “neural network.” These two computing models, along with a cognizing-agent-based control architecture, provide the means to capture knowledge about the system itself and its environment, processes to define the intent, and ways to find the required resources, execute the processes with the available resources, monitor their progress, identify deviations and take corrective actions.

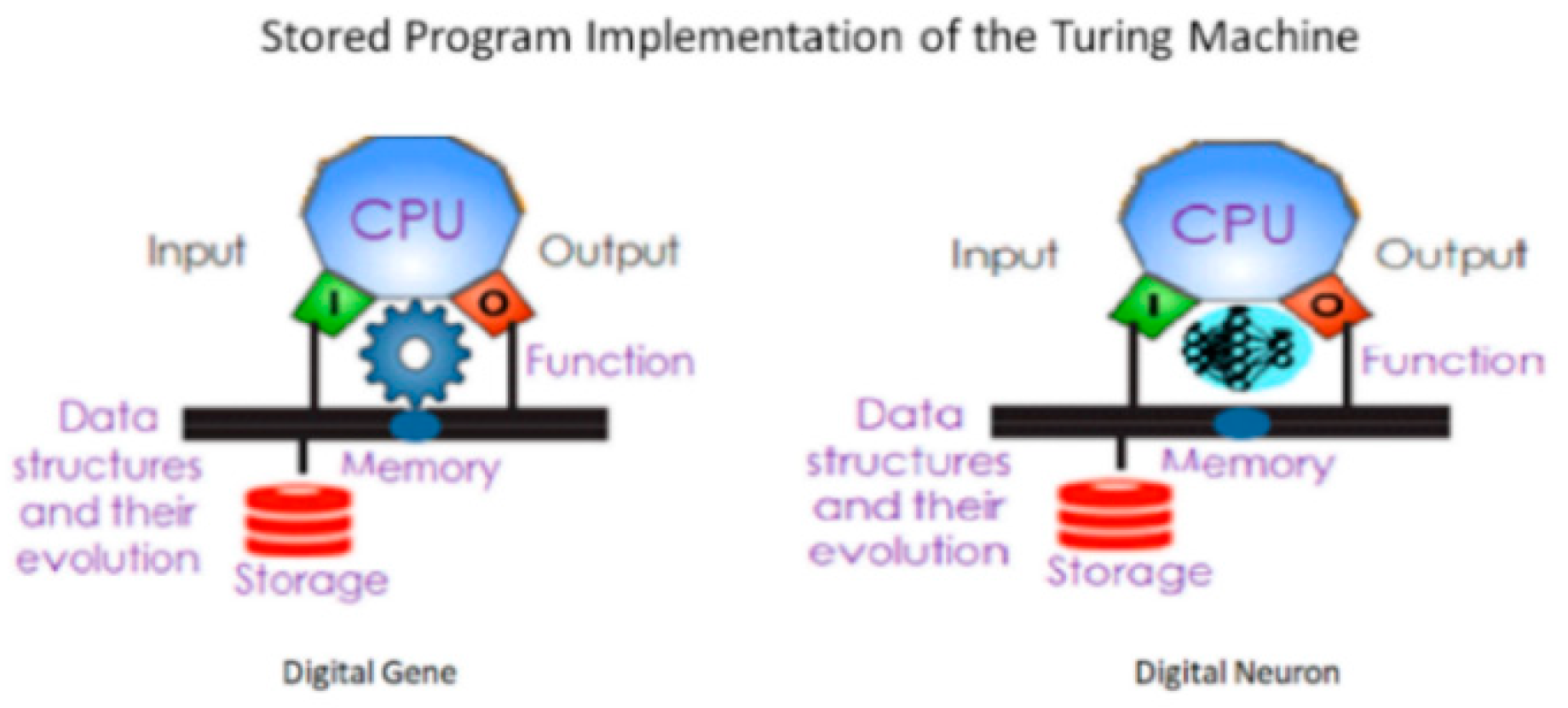

The thesis of this abstract is that the ingenuity of von Neumann’s stored program control implementation of the Turing machine provides a physical implementation of a cognitive apparatus to represent and transform knowledge structures that are created by physical or mental worlds in the form of data structures representing the domain under consideration.

Figure 1 represents the implementation of Turing Machines as a cognitive apparatus with locality and the ability to form information processing structures where information flows from one apparatus to another with a velocity defined by the medium. These implementations have allowed us to develop the current state of the art of information processing structures using digital computing machines.

2. Digital Genes, Digital Neurons and Digital Information Processing Structures

The foundation for the current state of the art is the Church-Turing thesis. All algorithms that are Turing computable fall within the boundaries of the Church-Turing thesis, which can be stated as “a function on the natural numbers is computable by a human being following an algorithm, ignoring resource limitations, if and only if it is computable by a Turing machine.” As long as there is no resource limitation (such as central processing unit (CPU) speed and memory), information processing continues.
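The role that resource limits play in this statement can be illustrated with a minimal sketch of a Turing machine simulator. The function name `run_turing_machine`, the rule table `SUCCESSOR` and the `max_steps` budget are illustrative assumptions and do not come from the referenced work; the budget stands in for the finite CPU and memory of a physical implementation.

```python
from typing import Dict, Tuple

# Transition table: (state, symbol read) -> (next state, symbol to write, head move)
Rules = Dict[Tuple[str, str], Tuple[str, str, int]]


def run_turing_machine(rules: Rules, tape: str, state: str = "start",
                       blank: str = "_", max_steps: int = 10_000) -> str:
    """Run the machine until it reaches 'halt' or the step budget is spent."""
    cells = dict(enumerate(tape))  # sparse tape, unbounded in both directions
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            # Hypothetical stand-in for a physical resource limitation.
            raise RuntimeError("step budget exhausted before the machine halted")
        symbol = cells.get(head, blank)
        state, cells[head], move = rules[(state, symbol)]
        head += move
        steps += 1
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)


# Unary successor: skip over the existing 1s, append one more, then halt.
SUCCESSOR: Rules = {
    ("start", "1"): ("start", "1", +1),
    ("start", "_"): ("halt", "1", 0),
}

print(run_turing_machine(SUCCESSOR, "111"))  # 1111
```

With an unlimited budget the computation depends only on the rule table and the tape; once the budget is finite, whether the computation completes also depends on something the algorithm itself does not describe.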

Information processing structures are dynamic systems where software working with hardware delivers information processing services. The intent of the software components is to execute specific computational workflows implementing well-specified functional requirements. The software components contain the algorithms (à la the Turing machine) that specify the information processing tasks of each component contributing to the execution of the common intent. On the other hand, the intent of the hardware components is to provide the computing resources such as CPU speed, memory, network bandwidth, latency, storage input and output operations per second (IOPS), throughput and capacity so that the software components pursue their intent without interruption.

The meta-knowledge of the overall intent of the information processing system involving the global context, communication between components, constraints and control abstractions of the software components, the association of specific software component execution to a specific hardware device and the temporal evolution of information processing and exception handling when the computation deviates from the intent (be it because of software behavior or the hardware behavior or their interaction with the environment) is outside the software design and is expressed in non-functional requirements.

Mark Burgin observes [13] that an information processing system (IPS) “has two structures—static and dynamic. The static structure reflects the mechanisms and devices that realize information processing, while the dynamic structure shows how this processing goes on and how these mechanisms and devices function and interact.” The software contains the algorithms (à la the Turing machine) that specify information processing tasks while the hardware provides the required resources to execute the algorithms. The static structure is defined by the association of software and hardware devices (function and structure) and the dynamic structure (influenced by the evolution and fluctuations in the structure) is defined by the execution of the algorithms. The meta-knowledge of the intent of the algorithm, the association of specific algorithm execution to a specific device and the temporal evolution of information processing and exception handling when the computation deviates from the intent (be it because of software behavior or the hardware behavior or their interaction with the environment) is outside the purview of software and hardware design and is expressed in non-functional requirements.

Church-Turing thesis boundaries are challenged when rapid non-deterministic fluctuations drive the demand for resource readjustment in real-time without interrupting the service transactions in progress. The information processing structures must become autonomous and predictive by extending their cognitive apparatus to include themselves and their behavior along with the information processing tasks at hand. True intelligence involves generalizations from observations, creating models, deriving new insights from the models through reasoning. In addition, human intelligence also creates history and uses past behaviors and experience in making the decision. In this abstract, we discuss extensions of these models using the learnings from new mathematics of named sets, knowledge structures, theory of oracles and structural machines which have been extensively discussed in the references given here [14, 15, 16, 17, 18, 19, 20, 21]. The application of structural machines and theory of oracles to implement a self-managing application in an edge cloud is described in [8].

According to Mark Burgin [19, 20] “there is no knowledge per se but we always have knowledge about something. In other words, knowledge always involves some object.” Objects are distinguished by their “names.” A name may be a label, number, idea, text, even another object of a relevant nature. Objects are related to each other and the relationships can be described by algorithms or procedures. One type of relationship is provided by object recognition, construction or acquisition algorithms. Another type of relationship is provided by algorithms/procedures of measurement, evaluation or prediction. When an event occurs, the algorithms define the resulting behavior also captured in the knowledge structures.
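The description above (named objects, relationships between them, and behaviors triggered by events) can be sketched as a small data structure. This is a minimal illustration under our own assumptions, not the formal definition of knowledge structures in [19, 20]; the class names `NamedObject` and `KnowledgeStructure`, the `account` example and the `apply_deposit` behavior are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class NamedObject:
    """An object of knowledge, distinguished by its name (hypothetical class)."""
    name: str
    attributes: Dict[str, float] = field(default_factory=dict)


# A behavior is a procedure that updates the structure when an event occurs.
Behavior = Callable[["KnowledgeStructure", Dict[str, float]], None]


@dataclass
class KnowledgeStructure:
    """Named objects, named relations between them, and event behaviors."""
    objects: Dict[str, NamedObject] = field(default_factory=dict)
    relations: List[Tuple[str, str, str]] = field(default_factory=list)
    behaviors: Dict[str, List[Behavior]] = field(default_factory=dict)

    def add_object(self, name: str, **attributes: float) -> NamedObject:
        obj = NamedObject(name, dict(attributes))
        self.objects[name] = obj
        return obj

    def relate(self, source: str, relation: str, target: str) -> None:
        if source not in self.objects or target not in self.objects:
            raise KeyError("both ends of a relation must be named objects")
        self.relations.append((source, relation, target))

    def on_event(self, event: str, behavior: Behavior) -> None:
        self.behaviors.setdefault(event, []).append(behavior)

    def handle(self, event: str, **payload: float) -> None:
        # The registered procedures define the resulting behavior.
        for behavior in self.behaviors.get(event, []):
            behavior(self, payload)


def apply_deposit(ks: KnowledgeStructure, payload: Dict[str, float]) -> None:
    ks.objects["account"].attributes["balance"] += payload["amount"]


ks = KnowledgeStructure()
ks.add_object("customer")
ks.add_object("account", balance=100.0)
ks.relate("customer", "owns", "account")
ks.on_event("deposit", apply_deposit)
ks.handle("deposit", amount=25.0)
print(ks.objects["account"].attributes["balance"])  # 125.0
```

Keeping the behaviors inside the structure, rather than in the calling program, mirrors the statement that the resulting behavior is itself captured in the knowledge structure.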

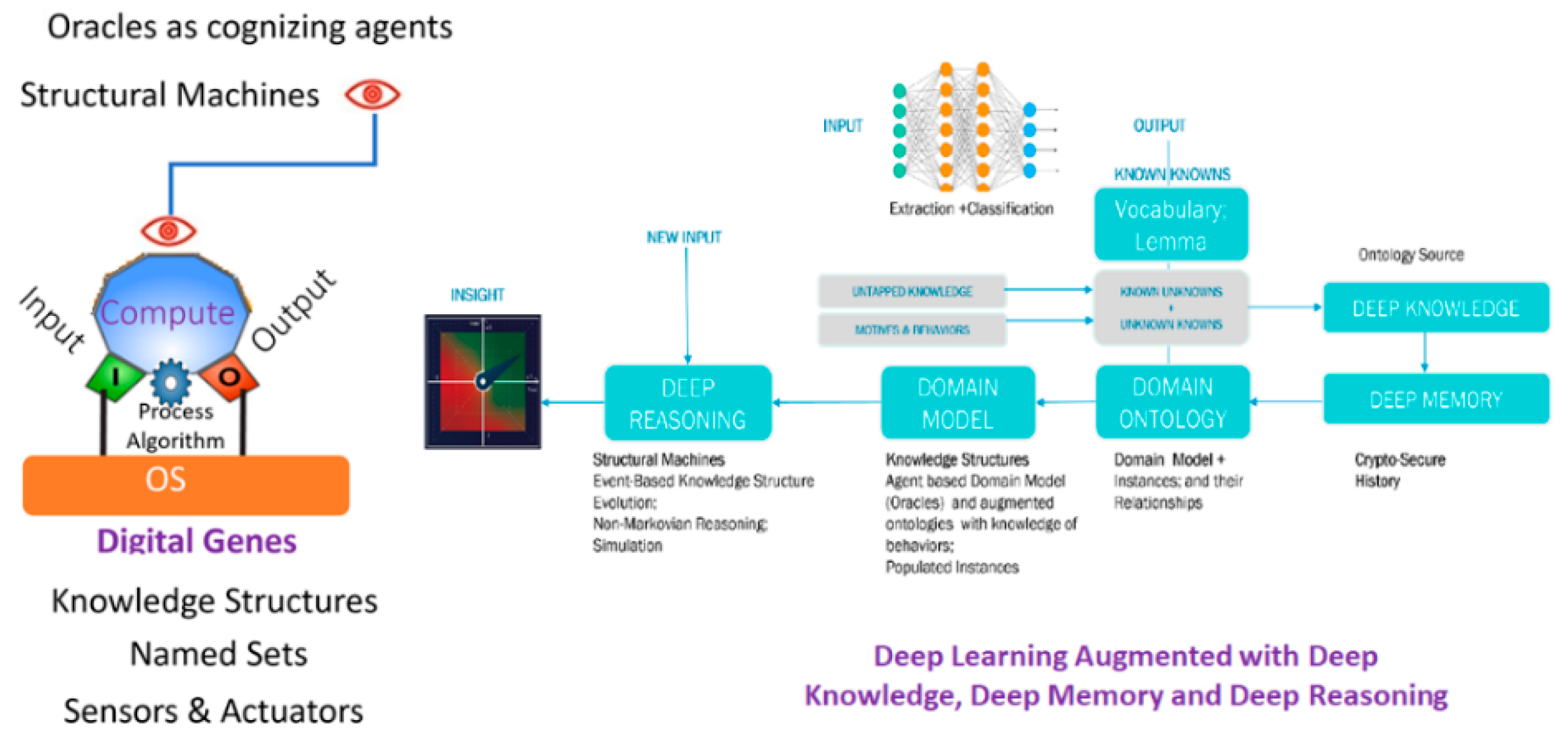

We describe here their application to implement information processing structures that are sentient, resilient and intelligent by integrating digital genes and augmenting deep learning with deep memory, deep knowledge, and deep reasoning as shown in Figure 2.

Sentience is implemented by using cognizing agents that provision, monitor and manage downstream processes executed [7, 10] in an information processing structure (shown on the left side of Figure 2). On the right side we depict the process to augment deep learning with model-based reasoning. The domain ontology is used to create knowledge structures with inter-object and intra-object relationships and behaviors. The knowledge structures are evolved based on new events using the hierarchical structural machines with cognizing agents configuring, monitoring and reasoning about their evolution. An implementation of this architecture is applied to address fraud detection using financial statements, the details of which are presented in [22].
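The monitor-and-manage role of a cognizing agent can be sketched as a simple control loop. The sketch below is a toy model under stated assumptions and is not the architecture of [7, 10] or [22]: the classes `ManagedProcess` and `CognizingAgent`, the linear latency model and the replica-scaling policy are all hypothetical and chosen only to make the loop concrete.

```python
from dataclasses import dataclass


@dataclass
class ManagedProcess:
    """Hypothetical downstream process whose latency grows with load per replica."""
    replicas: int = 1

    def latency_ms(self, load: float) -> float:
        return 10.0 * load / self.replicas


@dataclass
class CognizingAgent:
    """Hypothetical agent that holds the intent (a latency bound) for one process."""
    process: ManagedProcess
    max_latency_ms: float

    def observe_and_correct(self, load: float) -> float:
        # Monitor: compare the observed behavior with the intent.
        # Manage: add replicas until the intent is met. The process object is
        # adjusted in place rather than stopped and restarted.
        while self.process.latency_ms(load) > self.max_latency_ms:
            self.process.replicas += 1
        return self.process.latency_ms(load)


agent = CognizingAgent(ManagedProcess(), max_latency_ms=50.0)
history = [agent.observe_and_correct(load) for load in (2.0, 12.0, 4.0)]
print(agent.process.replicas)  # 3
```

The intent (the latency bound) lives in the agent rather than in the managed process, which reflects the separation between the computation and the meta-knowledge about how it should behave.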