A Review of Facial Landmark Extraction in 2D Images and Videos Using Deep Learning

Abstract

1. Introduction

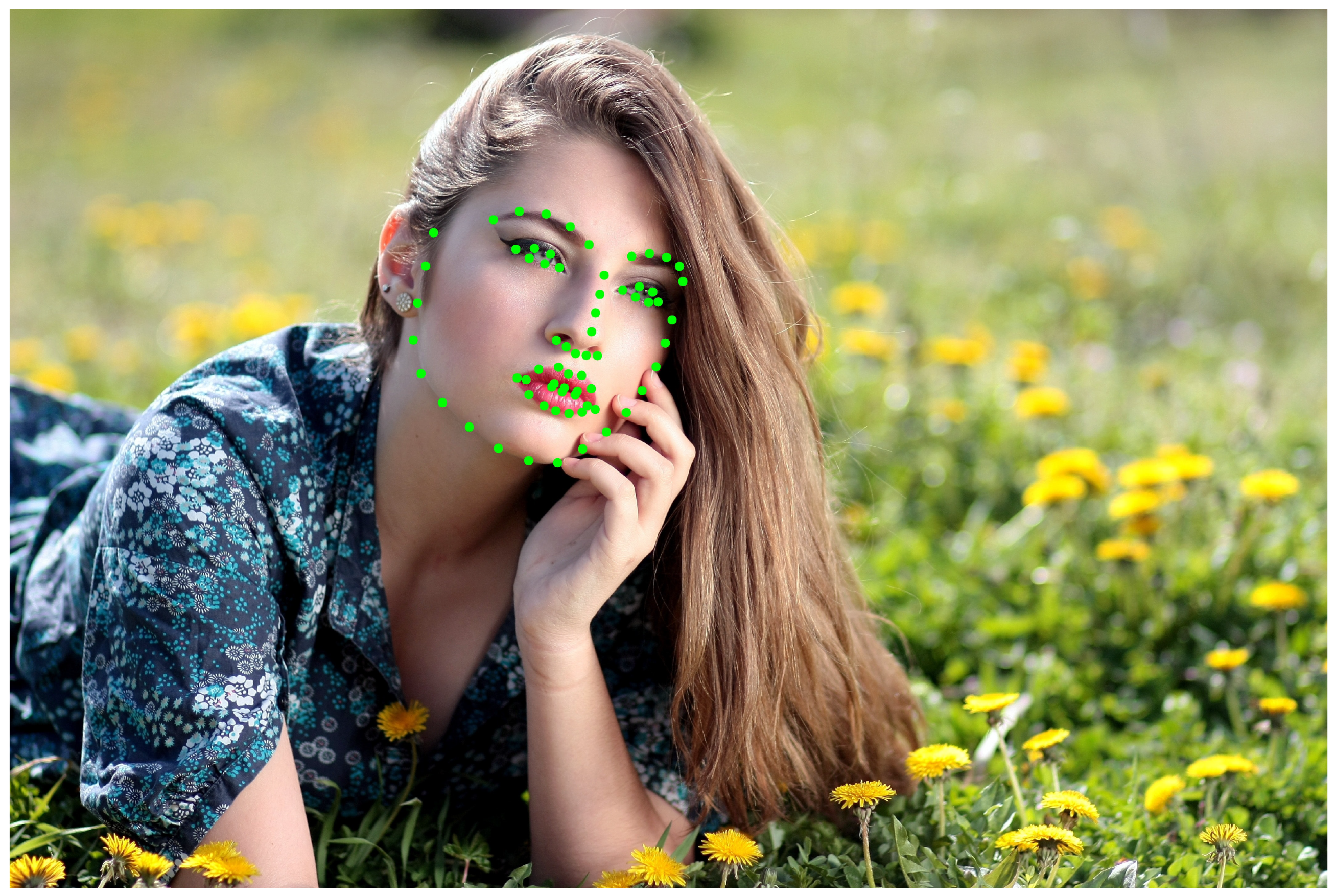

2. Landmarks Extraction

- Sparse facial landmark detection;

- Dense facial landmark detection;

- Landmark detection with RNN;

- Landmark detection with likelihood maps;

- Landmark detection with multi-task learning.

3. Landmarks Tracking

4. Datasets

- Multi-PIE [75] is one of the largest datasets. It was collected under constrained conditions and contains 337 subjects in 15 views, with 19 illumination conditions and six different expressions. The facial landmarks are labeled with either 39 or 68 points.

- The 300-W [77] dataset (300 Faces in-the-Wild Challenge). Among the in-the-wild datasets, this has been the most popular in recent years. It combines several datasets, such as Helen [78], LFPW [79], and AFW [80], with a newly introduced challenging dataset, iBug. In total, it contains 3837 images plus a further test set of 300 indoor and 300 outdoor images. All the images are annotated with 68 points. The dataset is commonly divided into two parts: the usual subset, including LFPW and Helen, and the challenging subset, including AFW and iBug.

- The Menpo [81] dataset. It is the largest in-the-wild facial landmark dataset. Its training set contains 6679 semi-frontal face images, annotated with 68 points, and 5335 profile face images, annotated with 39 points. The test set is composed of 12006 frontal images and 4253 profile images. It was introduced for the Menpo challenge in 2017 to pose an even more difficult test of the robustness of facial landmark extraction algorithms, since it involves a wide variation of poses, lighting conditions, and occlusions.

5. Evaluation Metrics and Comparison
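
The comparison tables in this review report the normalized mean error (NME). As a minimal sketch of how such a score is typically computed for one face, the snippet below averages the point-to-point Euclidean error and divides it by a reference distance measured on the ground truth. The function name, the choice of reference points, and the percentage scaling are assumptions made for illustration; published methods differ in the normalizing distance they use (for example inter-pupil distance, outer eye corner distance, or bounding-box size), so values are only comparable when the same convention is applied.

```python
import math


def normalized_mean_error(pred, gt, norm_idx_a, norm_idx_b):
    """Return the NME (in percent) between predicted and ground-truth landmarks.

    pred, gt   : sequences of (x, y) pairs with the same length and ordering.
    norm_idx_a,
    norm_idx_b : indices of the two ground-truth points whose distance is used
                 for normalization (an assumption; conventions vary by paper).
    """
    if len(pred) != len(gt) or len(gt) == 0:
        raise ValueError("pred and gt must be non-empty and of equal length")

    # Reference distance measured on the ground-truth shape.
    norm = math.dist(gt[norm_idx_a], gt[norm_idx_b])
    if norm == 0:
        raise ValueError("normalizing distance is zero")

    # Mean point-to-point Euclidean error over all landmarks.
    mean_err = sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(gt)
    return 100.0 * mean_err / norm


# Toy example: three landmarks, every prediction off by (3, 4) pixels.
gt_points = [(0.0, 0.0), (100.0, 0.0), (50.0, 50.0)]
pred_points = [(x + 3.0, y + 4.0) for x, y in gt_points]
print(f"{normalized_mean_error(pred_points, gt_points, 0, 1):.2f}")  # prints 5.00
```

Dividing by a face-dependent distance makes the error independent of the face size in pixels, which is what allows scores from images of different resolutions to be averaged over a dataset.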

6. Main Challenges

- Variability: Landmark appearances differ due to intrinsic factors, such as face variability between individuals, but also due to extrinsic factors, such as partial occlusion, illumination, expression, pose, and camera resolution. Facial landmarks can sometimes be only partially observed due to occlusions by hair or hand movements, or to self-occlusion caused by extensive head rotations. The other two major variations that compromise the success of landmark detection are illumination artifacts and facial expressions. A face landmarking algorithm that works well under and across all intrinsic variations of faces, and that delivers the target points in a time-efficient manner, has not yet proven feasible.

- Acquisition conditions: Much as in the case of face recognition, acquisition conditions, such as illumination, resolution, and background clutter, can affect landmark localization performance. This is attested by the fact that landmark localizers trained on one database usually perform worse when tested on another database.

- The number of landmarks and their accuracy requirements: The accuracy requirements and the number of landmark points vary with the intended application. For example, coarser detection of only the primary landmarks, e.g., nose tip, four eye corners, and two mouth corners, or even the bounding box enclosing these landmarks, may be adequate for face detection or face recognition tasks. On the other hand, higher-level tasks, such as facial expression understanding or facial animation, require a greater number of landmarks, ranging from 20–30 up to 60–80, as well as greater spatial accuracy. As for the accuracy requirement, fiducial landmarks, such as those on the eyes and nose, need to be determined more accurately, as they often guide the search for secondary landmarks with less prominent or less reliable image evidence. It has been observed, however, that landmarks on the rim of the face, such as the chin, cannot be accurately localized in either manual annotation or automatic detection. Shape-guided algorithms can benefit from the richer information coming from a larger set of landmarks. For example, Milborrow and Nicolls [88] have shown that the accuracy of landmark localization increases proportionally to the number of landmarks considered, and have recorded a 50% improvement as the ensemble increases from 3 to 68 landmarks.
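
To make the distinction between a full annotation and the coarser set of primary landmarks concrete, the following sketch picks the seven primary points (nose tip, four eye corners, two mouth corners) out of a 68-point annotation and computes the bounding box that encloses them. The index table assumes the common 0-based ordering of the 68-point markup used by 300-W style annotations, and the left/right names refer to the side of the image rather than of the subject; both the indices and the function names are illustrative assumptions and should be checked against the annotation scheme actually in use.

```python
# Assumed 0-based indices of the primary landmarks in a 68-point markup.
# "left"/"right" refer to the image side, not the subject's side.
PRIMARY_68 = {
    "left_eye_outer": 36,
    "left_eye_inner": 39,
    "right_eye_inner": 42,
    "right_eye_outer": 45,
    "nose_tip": 30,
    "mouth_left": 48,
    "mouth_right": 54,
}


def select_primary(landmarks):
    """Return a dict mapping each primary landmark name to its (x, y) point."""
    if len(landmarks) != 68:
        raise ValueError("expected a 68-point annotation")
    return {name: landmarks[idx] for name, idx in PRIMARY_68.items()}


def bounding_box(points):
    """Return (x_min, y_min, x_max, y_max) enclosing the given (x, y) points."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)


# Toy annotation: point i sits at (i, 2 * i), so indices are easy to read back.
annotation = [(i, 2 * i) for i in range(68)]
primary = select_primary(annotation)
print(primary["nose_tip"])                 # prints (30, 60)
print(bounding_box(primary.values()))      # prints (30, 60, 54, 108)
```

A detector that only needs to feed a recognition pipeline could be evaluated on this reduced set, whereas expression analysis or animation would use the full annotation.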

7. Conclusions

Funding

Conflicts of Interest

References

- Murphy-Chutorian, E.; Trivedi, M.M. Head pose estimation in computer vision: A survey. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 607–626.

- Pantic, M.; Rothkrantz, L.J.M. Automatic analysis of facial expressions: The state of the art. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 1424–1445.

- Hansen, D.W.; Ji, Q. In the eye of the beholder: A survey of models for eyes and gaze. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 478–500.

- Taigman, Y.; Yang, M.; Ranzato, M.; Wolf, L. Deepface: Closing the gap to human-level performance in face verification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1701–1708.

- Chrysos, G.G.; Antonakos, E.; Snape, P.; Asthana, A.; Zafeiriou, S. A comprehensive performance evaluation of deformable face tracking “in-the-wild”. Int. J. Comput. Vis. 2018, 126, 198–232.

- Wu, Y.; Hassner, T.; Kim, K.; Medioni, G.; Natarajan, P. Facial landmark detection with tweaked convolutional neural networks. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 3067–3074.

- Zhang, H.; Li, Q.; Sun, Z.; Liu, Y. Combining data-driven and model-driven methods for robust facial landmark detection. IEEE Trans. Inf. Forensics Secur. 2018, 13, 2409–2422.

- Labati, R.D.; Genovese, A.; Muñoz, E.; Piuri, V.; Scotti, F.; Sforza, G. Computational intelligence for biometric applications: A survey. Int. J. Comput. 2016, 15, 40–49.

- Kazemi, V.; Sullivan, J. One millisecond face alignment with an ensemble of regression trees. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1867–1874.

- Cootes, T.F.; Taylor, C.J.; Cooper, D.H.; Graham, J. Active shape models-their training and application. Comput. Vis. Image Underst. 1995, 61, 38–59.

- Cristinacce, D.; Cootes, T.F. Boosted regression active shape models. In Proceedings of the British Machine Vision Conference, Warwick, UK, 10–13 September 2007; Rajpoot, N.M., Bhalerao, A.H., Eds.; BMVA Press: San Francisco, CA, USA, 2007; pp. 1–10.

- Cootes, T.F.; Edwards, G.J.; Taylor, C.J. Active appearance models. IEEE Trans. Pattern Anal. Mach. Intell. 2001, 23, 681–685.

- Edwards, G.J.; Taylor, C.J.; Cootes, T.F. Interpreting face images using active appearance models. In Proceedings of the Third IEEE International Conference on Automatic Face and Gesture Recognition, Nara, Japan, 14–16 April 1998; pp. 300–305.

- Tzimiropoulos, G.; Pantic, M. Optimization problems for fast aam fitting in-the-wild. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013; pp. 593–600.

- Alabort-i Medina, J.; Zafeiriou, S. Bayesian active appearance models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 3438–3445.

- Boccignone, G.; Bodini, M.; Cuculo, V.; Grossi, G. Predictive sampling of facial expression dynamics driven by a latent action space. In Proceedings of the 14th International Conference on Signal-Image Technology Internet-Based Systems (SITIS), Las Palmas de Gran Canaria, Spain, 26–29 November 2018; pp. 143–150.

- Xiong, X.; De la Torre, F. Supervised descent method and its applications to face alignment. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 532–539.

- Cao, X.; Wei, Y.; Wen, F.; Sun, J. Face alignment by explicit shape regression. Int. J. Comput. Vis. 2014, 107, 177–190.

- Asthana, A.; Zafeiriou, S.; Cheng, S.; Pantic, M. Incremental face alignment in the wild. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1859–1866.

- Bodini, M. Can We Automatically Assess the Aesthetic value of an Image? In Proceedings of the International Conference on ISMAC in Computational Vision and Bio-Engineering 2019 (ISMAC-CVB), Elayampalayam, India, 13–14 March 2019; Springer International Publishing: Cham, Switzerland, 2019; in press.

- Ren, S.; Cao, X.; Wei, Y.; Sun, J. Face alignment at 3000 fps via regressing local binary features. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1685–1692.

- Burgos-Artizzu, X.P.; Perona, P.; Dollár, P. Robust face landmark estimation under occlusion. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, Australia, 1–8 December 2013; pp. 1513–1520.

- Zhu, S.; Li, C.; Change Loy, C.; Tang, X. Face alignment by coarse-to-fine shape searching. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 4998–5006.

- Celiktutan, O.; Ulukaya, S.; Sankur, B. A comparative study of face landmarking techniques. EURASIP J. Image Video Process. 2013, 2013, 13.

- Yang, H.; Jia, X.; Loy, C.C.; Robinson, P. An empirical study of recent face alignment methods. arXiv, 2015; arXiv:1511.05049.

- Wang, N.; Gao, X.; Tao, D.; Yang, H.; Li, X. Facial feature point detection: A comprehensive survey. Neurocomputing 2018, 275, 50–65.

- Jin, X.; Tan, X. Face alignment in-the-wild: A survey. Comput. Vis. Image Underst. 2017, 162, 1–22.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems, Lake Tahoe, CA, USA, 3–6 December 2012; Pereira, F., Burges, C.J.C., Bottou, L., Weinberger, K.Q., Eds.; Curran Associates Inc.: New York, NY, USA, 2012; pp. 1097–1105.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436.

- Duffner, S.; Garcia, C. A connexionist approach for robust and precise facial feature detection in complex scenes. In Proceedings of the 4th International Symposium on Image and Signal Processing and Analysis, ISPA 2005, Zagreb, Croatia, 15–17 September 2005; pp. 316–321.

- Luo, P.; Wang, X.; Tang, X. Hierarchical face parsing via deep learning. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 2480–2487.

- Sun, Y.; Wang, X.; Tang, X. Deep convolutional network cascade for facial point detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 3476–3483.

- Zhang, Z.; Luo, P.; Loy, C.C.; Tang, X. Facial landmark detection by deep multi-task learning. In Proceedings of the European Conference on Computer Vision, Zurich, Switzerland, 6–12 September 2014; Springer: Berlin, Germany, 2014; pp. 94–108.

- Kumar, A.; Ranjan, R.; Patel, V.; Chellappa, R. Face alignment by local deep descriptor regression. arXiv, 2016; arXiv:1601.07950.

- Zhang, S.; Yang, H.; Yin, Z.P. Transferred deep convolutional neural network features for extensive facial landmark localization. IEEE Signal Process. Lett. 2016, 23, 478–482.

- Zhang, J.; Shan, S.; Kan, M.; Chen, X. Coarse-to-fine auto-encoder networks (cfan) for real-time face alignment. In Proceedings of the European Conference on Computer Vision, Zurich, Switzerland, 6–12 September 2014; Springer: Berlin, Germany, 2014; pp. 1–16.

- Zhang, J.; Kan, M.; Shan, S.; Chen, X. Occlusion-free face alignment: Deep regression networks coupled with de-corrupt autoencoders. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 3428–3437.

- Sun, P.; Min, J.K.; Xiong, G. Globally tuned cascade pose regression via back propagation with application in 2D face pose estimation and heart segmentation in 3D CT images. arXiv, 2015; arXiv:1503.08843.

- Liu, H.; Lu, J.; Feng, J.; Zhou, J. Two-stream transformer networks for video-based face alignment. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 2546–2554.

- Wu, Y.; Ji, Q. Discriminative deep face shape model for facial point detection. Int. J. Comput. Vis. 2015, 113, 37–53.

- Hinton, G.E. Training products of experts by minimizing contrastive divergence. Neural Comput. 2002, 14, 1771–1800.

- Fan, H.; Zhou, E. Approaching human level facial landmark localization by deep learning. Image Vis. Comput. 2016, 47, 27–35.

- Huang, Z.; Zhou, E.; Cao, Z. Coarse-to-fine Face Alignment with Multi-Scale Local Patch Regression. arXiv, 2015; arXiv:1511.04901.

- Lv, J.J.; Shao, X.; Xing, J.; Cheng, C.; Zhou, X. A Deep Regression Architecture with Two-Stage Re-initialization for High Performance Facial Landmark Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; Volume 1, p. 4.

- Shao, Z.; Ding, S.; Zhao, Y.; Zhang, Q.; Ma, L. Learning deep representation from coarse to fine for face alignment. arXiv, 2016; arXiv:1608.00207.

- Trigeorgis, G.; Snape, P.; Nicolaou, M.A.; Antonakos, E.; Zafeiriou, S. Mnemonic descent method: A recurrent process applied for end-to-end face alignment. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 4177–4187.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Duffner, S.; Garcia, C. A hierarchical approach for precise facial feature detection. In Compression et Représentation des Signaux Audiovisuels; CORESA: Rennes, France, 2005; pp. 29–34.

- Zadeh, A.; Baltrušaitis, T.; Morency, L.P. Deep constrained local models for facial landmark detection. arXiv, 2016; arXiv:1611.08657.

- Lai, H.; Xiao, S.; Pan, Y.; Cui, Z.; Feng, J.; Xu, C.; Yin, J.; Yan, S. Deep recurrent regression for facial landmark detection. IEEE Trans. Circuits Syst. Video Technol. 2018, 28, 1144–1157.

- Xiao, S.; Feng, J.; Xing, J.; Lai, H.; Yan, S.; Kassim, A. Robust facial landmark detection via recurrent attentive-refinement networks. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; Springer: Berlin, Germany, 2016; pp. 57–72.

- Bodini, M. Sound Classification and Localization in Service Robots with Attention Mechanisms. In Proceedings of the First Annual Conference on Computer-aided Developments in Electronics and Communication (CADEC-2019), Amaravati, India, 2–3 March 2019; in press.

- Bodini, M. Probabilistic Nonlinear Dimensionality Reduction through Gaussian Process Latent Variable Models: An Overview. In Proceedings of the First Annual Conference on Computer-aided Developments in Electronics and Communication (CADEC-2019), Amaravati, India, 2–3 March 2019; in press.

- Wang, L.; Yu, X.; Bourlai, T.; Metaxas, D.N. A coupled encoder-decoder network for joint face detection and landmark localization. Image Vis. Comput. 2018.

- Gkioxari, G.; Girshick, R.; Malik, J. Contextual action recognition with r* cnn. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 1080–1088.

- Kowalski, M.; Naruniec, J.; Trzcinski, T. Deep alignment network: A convolutional neural network for robust face alignment. In Proceedings of the International Conference on Computer Vision & Pattern Recognition (CVPR), Faces-in-the-wild Workshop/Challenge, Honolulu, HI, USA, 21–27 July 2017; pp. 2034–2043.

- Zhang, K.; Zhang, Z.; Li, Z.; Qiao, Y. Joint face detection and alignment using multitask cascaded convolutional networks. IEEE Signal Process. Lett. 2016, 23, 1499–1503.

- Ranjan, R.; Sankaranarayanan, S.; Castillo, C.D.; Chellappa, R. An all-in-one convolutional neural network for face analysis. In Proceedings of the 12th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2017), Washington, DC, USA, 30 May–3 June 2017; pp. 17–24.

- Belharbi, S.; Chatelain, C.; Herault, R.; Adam, S. Facial landmark detection using structured output deep neural networks. arXiv, 2015; arXiv:1504.07550v3.

- Güler, R.A.; Trigeorgis, G.; Antonakos, E.; Snape, P.; Zafeiriou, S.; Kokkinos, I. DenseReg: Fully Convolutional Dense Shape Regression In-the-Wild. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; Volume 2, p. 5.

- Chrysos, G.G.; Antonakos, E.; Snape, P.; Asthana, A.; Zafeiriou, S. A Comprehensive Performance Evaluation of Deformable Face Tracking In-the-Wild. Int. J. Comput. Vis. 2016, 126, 198–232.

- Felzenszwalb, P.; McAllester, D.; Ramanan, D. A discriminatively trained, multiscale, deformable part model. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, CVPR, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

- Chrysos, G.G.; Zafeiriou, S. PD²T: Person-Specific Detection, Deformable Tracking. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 2555–2568.

- Shen, J.; Zafeiriou, S.; Chrysos, G.G.; Kossaifi, J.; Tzimiropoulos, G.; Pantic, M. The first facial landmark tracking in-the-wild challenge: Benchmark and results. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Santiago, Chile, 7–13 December 2015; pp. 50–58.

- Sánchez-Lozano, E.; Martinez, B.; Tzimiropoulos, G.; Valstar, M. Cascaded continuous regression for real-time incremental face tracking. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; Springer: Berlin, Germany, 2016; pp. 645–661.

- Sánchez-Lozano, E.; Tzimiropoulos, G.; Martinez, B.; De la Torre, F.; Valstar, M. A functional regression approach to facial landmark tracking. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 2037–2050.

- Prabhu, U.; Seshadri, K.; Savvides, M. Automatic facial landmark tracking in video sequences using kalman filter assisted active shape models. In Proceedings of the European Conference on Computer Vision, Crete, Greece, 5–11 September 2010; Springer: Berlin, Germany, 2010; pp. 86–99.

- Yang, J.; Deng, J.; Zhang, K.; Liu, Q. Facial shape tracking via spatio-temporal cascade shape regression. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Santiago, Chile, 7–13 December 2015; pp. 41–49.

- Xiao, S.; Yan, S.; Kassim, A.A. Facial landmark detection via progressive initialization. In Proceedings of the IEEE International Conference on Computer Vision Workshops, Santiago, Chile, 7–13 December 2015; pp. 33–40.

- Peng, X.; Huang, J.; Metaxas, D.N. Sequential Face Alignment via Person-Specific Modeling in the Wild. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Las Vegas, NV, USA, 26 June–1 July 2016; pp. 107–116.

- Peng, X.; Hu, Q.; Huang, J.; Metaxas, D.N. Track facial points in unconstrained videos. arXiv, 2016; arXiv:1609.02825.

- Peng, X.; Feris, R.S.; Wang, X.; Metaxas, D.N. A recurrent encoder-decoder network for sequential face alignment. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; Springer: Berlin, Germany, 2016; pp. 38–56.

- Gu, J.; Yang, X.; De Mello, S.; Kautz, J. Dynamic facial analysis: From Bayesian filtering to recurrent neural network. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017.

- Hou, Q.; Wang, J.; Bai, R.; Zhou, S.; Gong, Y. Face alignment recurrent network. Pattern Recognit. 2018, 74, 448–458.

- Gross, R.; Matthews, I.; Cohn, J.; Kanade, T.; Baker, S. Multi-pie. Image Vis. Comput. 2010, 28, 807–813.

- Messer, K.; Matas, J.; Kittler, J.; Luettin, J.; Maitre, G. XM2VTSDB: The extended M2VTS database. In Proceedings of the Second International Conference on Audio and Video-Based Biometric Person Authentication, Washington, DC, USA, 22–24 March 1999; Volume 964, pp. 965–966.

- Sagonas, C.; Antonakos, E.; Tzimiropoulos, G.; Zafeiriou, S.; Pantic, M. 300 faces in-the-wild challenge. Image Vis. Comput. 2016, 47, 3–18.

- Le, V.; Brandt, J.; Lin, Z.; Bourdev, L.; Huang, T.S. Interactive facial feature localization. In Proceedings of the European Conference on Computer Vision, Florence, Italy, 7–13 October 2012; Springer: Berlin, Germany, 2012; pp. 679–692.

- Belhumeur, P.N.; Jacobs, D.W.; Kriegman, D.J.; Kumar, N. Localizing parts of faces using a consensus of exemplars. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 2930–2940.

- Zhu, X.; Ramanan, D. Face detection, pose estimation, and landmark localization in the wild. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 2879–2886.

- Zafeiriou, S.; Trigeorgis, G.; Chrysos, G.; Deng, J.; Shen, J. The menpo facial landmark localisation challenge: A step towards the solution. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, Honolulu, HI, USA, 21–26 July 2017; Volume 1, p. 2.

- Zafeiriou, S.; Chrysos, G.; Roussos, A.; Ververas, E.; Deng, J.; Trigeorgis, G. The 3d Menpo Facial Landmark Tracking Challenge. In Proceedings of the IEEE International Conference on Computer Vision Workshops (ICCVW), Venice, Italy, 22–29 October 2017; pp. 2503–2511.

- Booth, J.; Antonakos, E.; Ploumpis, S.; Trigeorgis, G.; Panagakis, Y.; Zafeiriou, S. 3D face morphable models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 48–57.

- Bulat, A.; Tzimiropoulos, G. How far are we from solving the 2d & 3d face alignment problem? (and a dataset of 230,000 3d facial landmarks). In Proceedings of the International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 1021–1030.

- Asthana, A.; Zafeiriou, S.; Cheng, S.; Pantic, M. Robust discriminative response map fitting with constrained local models. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 3444–3451.

- Peng, X.; Zhang, S.; Yang, Y.; Metaxas, D.N. Piefa: Personalized incremental and ensemble face alignment. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 3880–3888.

- Peng, X.; Feris, R.S.; Wang, X.; Metaxas, D.N. RED-Net: A Recurrent Encoder–Decoder Network for Video-Based Face Alignment. Int. J. Comput. Vis. 2018, 126, 1–17.

- Milborrow, S.; Nicolls, F. Locating facial features with an extended active shape model. In Proceedings of the European Conference on Computer Vision, Marseille, France, 12–18 October 2008; Springer: Berlin, Germany, 2008; pp. 504–513.

- Zhang, X.; Zhou, X.; Lin, M.; Sun, J. Shufflenet: An extremely efficient convolutional neural network for mobile devices. arXiv, 2017; arXiv:1707.01083.

- Dong, X.; Yu, S.I.; Weng, X.; Wei, S.E.; Yang, Y.; Sheikh, Y. Supervision-by-Registration: An unsupervised approach to improve the precision of facial landmark detectors. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 360–368.

- Mairal, J.; Bach, F.; Ponce, J.; Sapiro, G.; Zisserman, A. Discriminative Learned Dictionaries for Local Image Analysis; Technical Report; Minnesota Univ Minneapolis Inst for Mathematics and Its Applications: Minneapolis, MN, USA, 2008.

- Bodini, M.; D’Amelio, A.; Grossi, G.; Lanzarotti, R.; Lin, J. Single Sample Face Recognition by Sparse Recovery of Deep-Learned LDA Features. In Advanced Concepts for Intelligent Vision Systems; Blanc-Talon, J., Helbert, D., Philips, W., Popescu, D., Scheunders, P., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 297–308.

- Salakhutdinov, R.; Torralba, A.; Tenenbaum, J. Learning to share visual appearance for multiclass object detection. In Proceedings of the 2011 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Colorado Springs, CO, USA, 20–25 June 2011; pp. 1481–1488.

- Torralba, A.; Murphy, K.P.; Freeman, W.T. Sharing visual features for multiclass and multiview object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2007, 29, 854–869.

- Wang, H.; Ullah, M.M.; Klaser, A.; Laptev, I.; Schmid, C. Evaluation of local spatio-temporal features for action recognition. In Proceedings of the British Machine Vision Conference, London, UK, 7–10 September 2009; Cavallaro, A., Prince, S., Alexander, D., Eds.; BMVA Press: San Francisco, CA, USA, 2009; pp. 1–11.

| Non Deep Learning Method | Year | Database | NME | | FPS on Video |
|---|---|---|---|---|---|
| DRMF [85] | 2013 | 300W | 9.22 | - | 0.5 |
| RCPR [22] | 2013 | 300W | 8.35 | - | 80 |
| ESR [18] | 2014 | 300W | 7.58 | 43.12 | - |
| SDM [17] | 2013 | 300W | 7.52 | 42.94 | 40 |
| ERT [9] | 2014 | 300W | 6.40 | - | 25 |
| LBF [21] | 2014 | 300W | 6.32 | - | 3000 |
| CFSS [23] | 2015 | 300W | 5.76 | 55.9 * | 10 |

| Deep Learning Method | Year | Database | NME | | FPS on Video |
|---|---|---|---|---|---|
| CFAN [36] | 2014 | 300W | 7.69 | - | 20 |
| TCDCN [33] | 2014 | 300W | 5.54 | 41.7 * | 58 |
| TSR [44] | 2017 | 300W | 4.99 | - | 111 |
| RAR [51] | 2016 | 300W | 4.94 | - | - |
| DRR [50] | 2018 | 300W | 4.90 | - | - |
| MDM [46] | 2016 | 300W | 4.05 | 52.12 | - |
| DAN [56] | 2017 | 300W | 3.59 | 55.33 | 73 |
| 2DFAN [84] | 2017 | 300W | - | 66.90 * | 30 |
| DenseReg + MDM [60] | 2017 | 300W | - | 52.19 | 8 |

Video Facial Landmark Extraction Method Comparison on the 300VW Dataset

| Method | NME | FPS |
|---|---|---|
| ESR [18] | 7.09 | 67 |
| SDM [17] | 7.25 | 40 |
| CFSS [23] | 6.13 | 10 |
| PIEFA [86] | 6.37 | - |
| CFAN * [36] | 6.64 | 20 |
| TCDCN * [33] | 7.59 | 59 |
| RED * [72] | 6.25 | 33 |
| RED-Res * [87] | 4.75 | 18 |
| RNN * [73] | 6.16 | - |
| TSTN * [39] | 5.59 | 30 |

© 2019 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Bodini, M. A Review of Facial Landmark Extraction in 2D Images and Videos Using Deep Learning. Big Data Cogn. Comput. 2019, 3, 14. https://doi.org/10.3390/bdcc3010014
