Challenges and Trends in User Trust Discourse in AI Popularity

Abstract

1. Introduction

1.1. AI Popularity and the Discourse on Users’ Trust

1.2. TAI Conceptual Challenges

2. Discussion

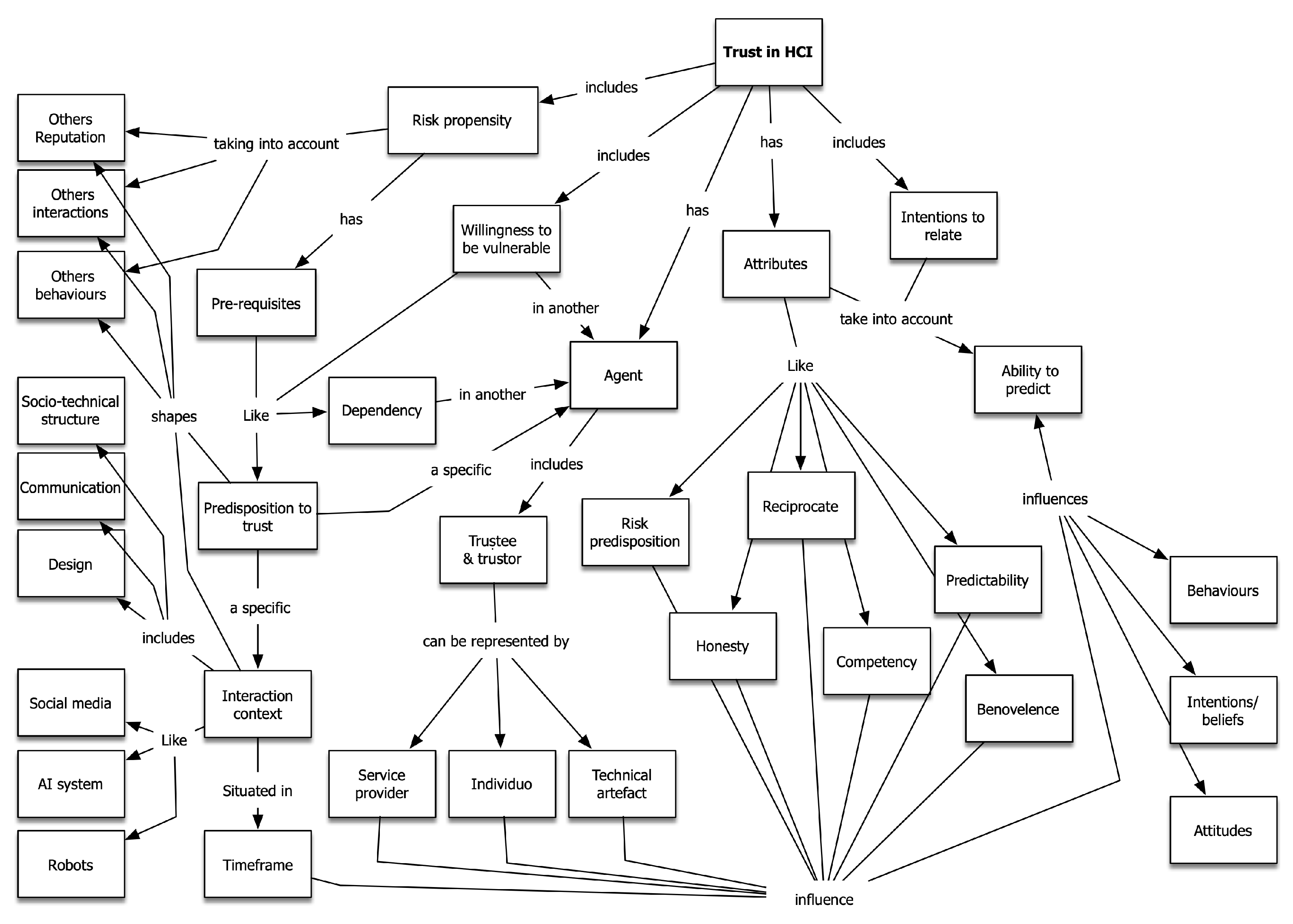

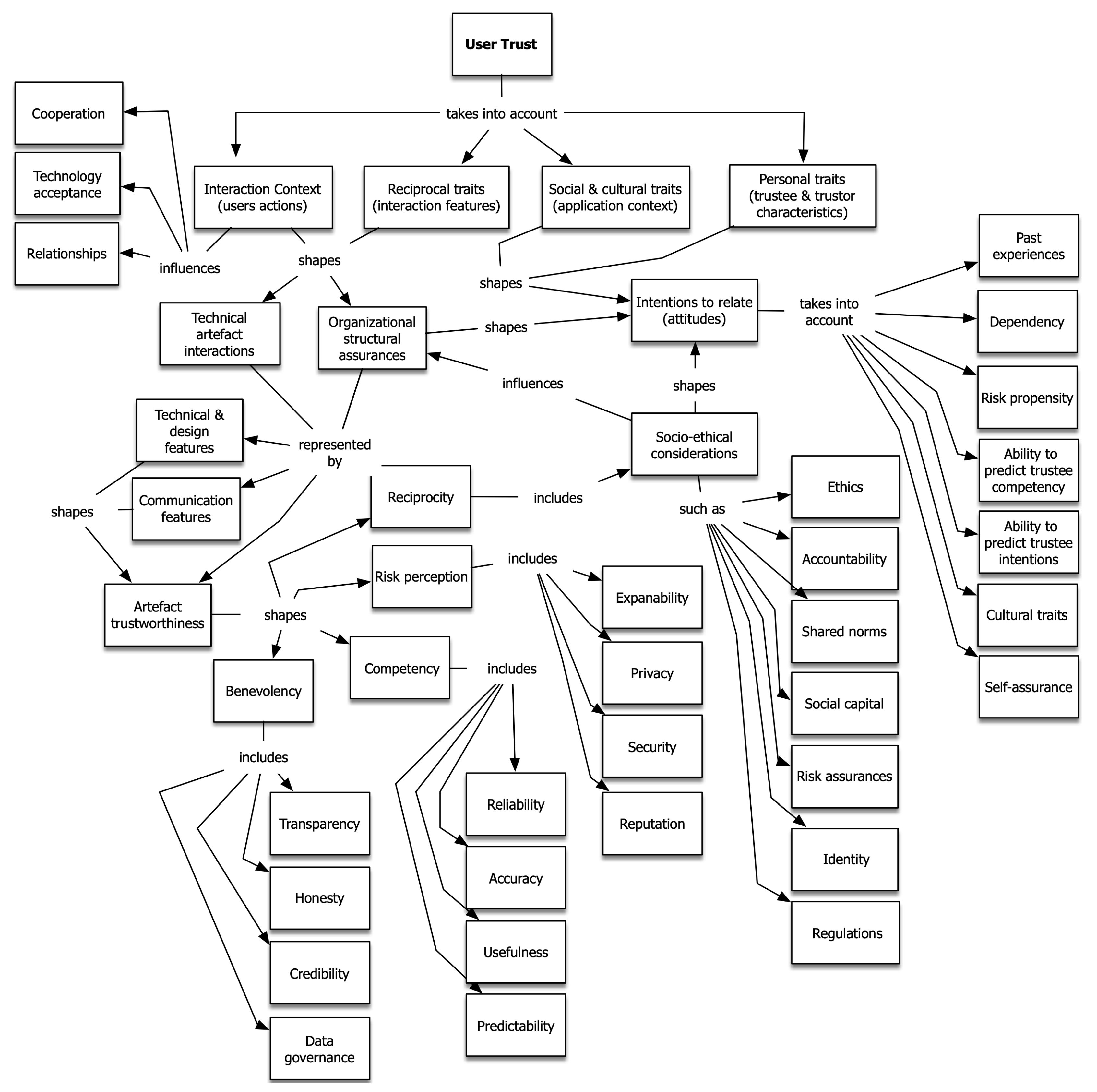

The Nature of Trust Research in HCI

3. Conclusions

Funding

Data Availability Statement

Conflicts of Interest


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Sousa, S.; Cravino, J.; Martins, P. Challenges and Trends in User Trust Discourse in AI Popularity. Multimodal Technol. Interact. 2023, 7, 13. https://doi.org/10.3390/mti7020013
