Design for an Art Therapy Robot: An Explorative Review of the Theoretical Foundations for Engaging in Emotional and Creative Painting with a Robot

Abstract

1. Introduction

1.1. Terms

1.2. Motivation

2. Theoretical Foundations

2.1. Therapy Robots

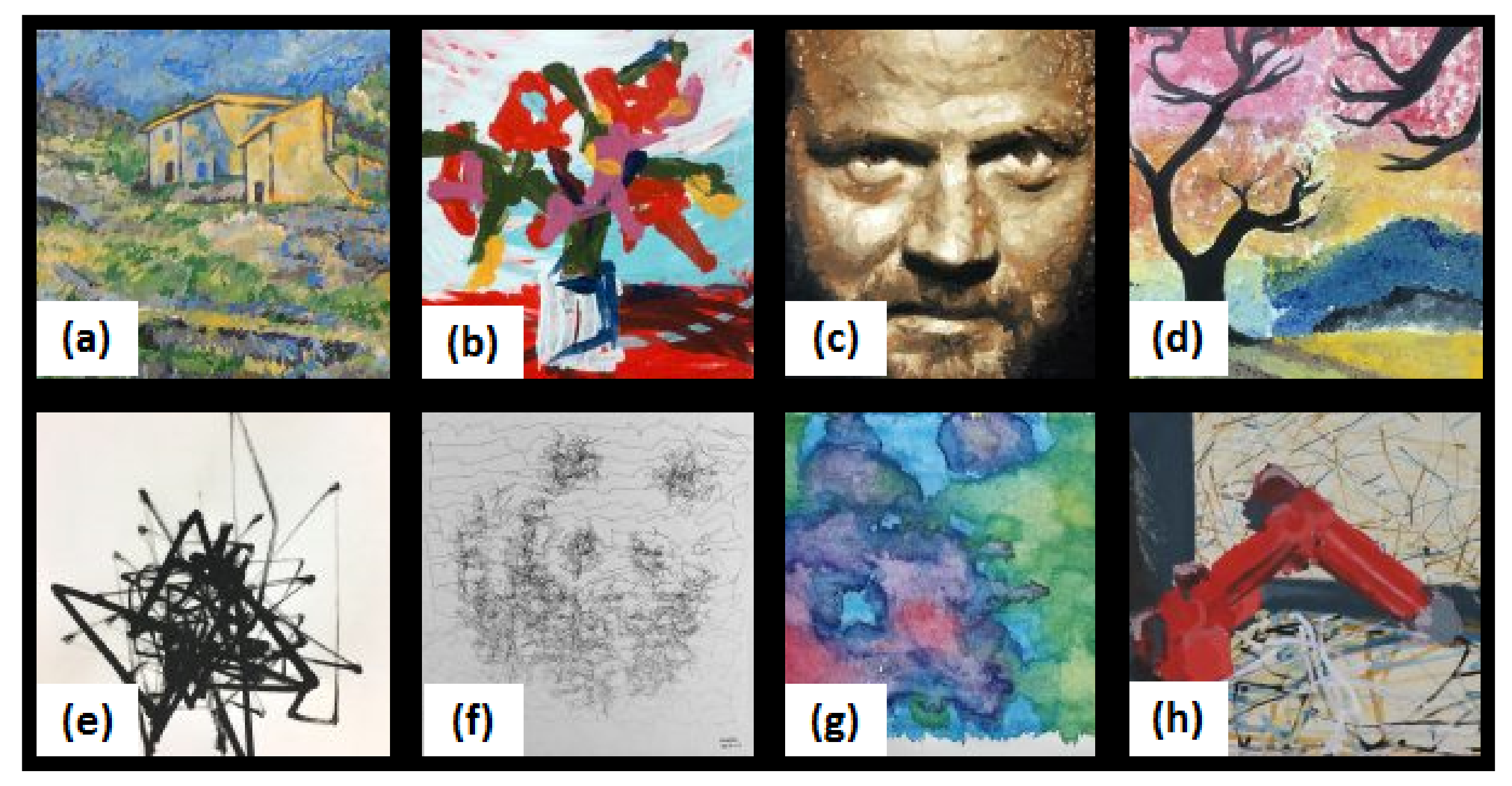

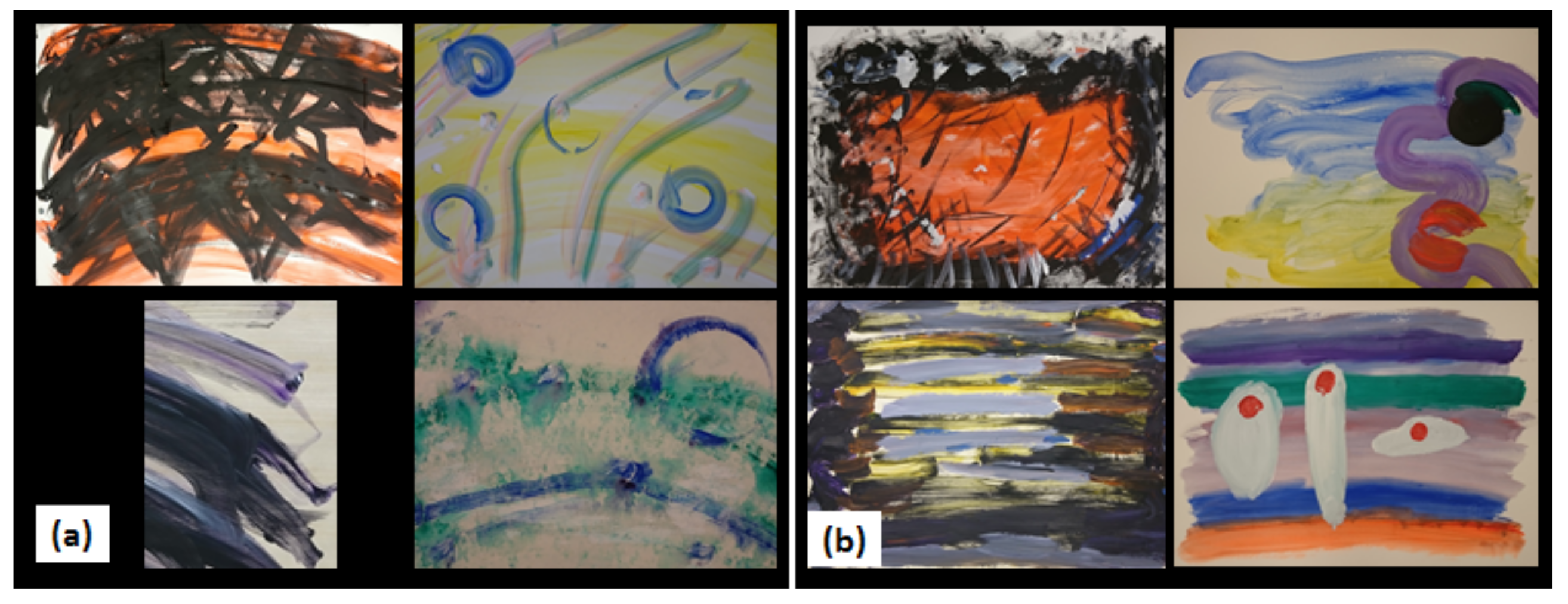

2.2. Robot Art

2.3. Interaction Strategies for Art Therapy

2.3.1. Humanistic Art Therapy: Robot as Partner

2.3.2. General Interactions

2.4. Ethical Pitfalls

2.4.1. Identifying Pitfalls

2.4.2. Proposed Solutions to Ethical Pitfalls

2.5. Emotions

2.6. Creativity

3. Design for an Art Therapy Robot

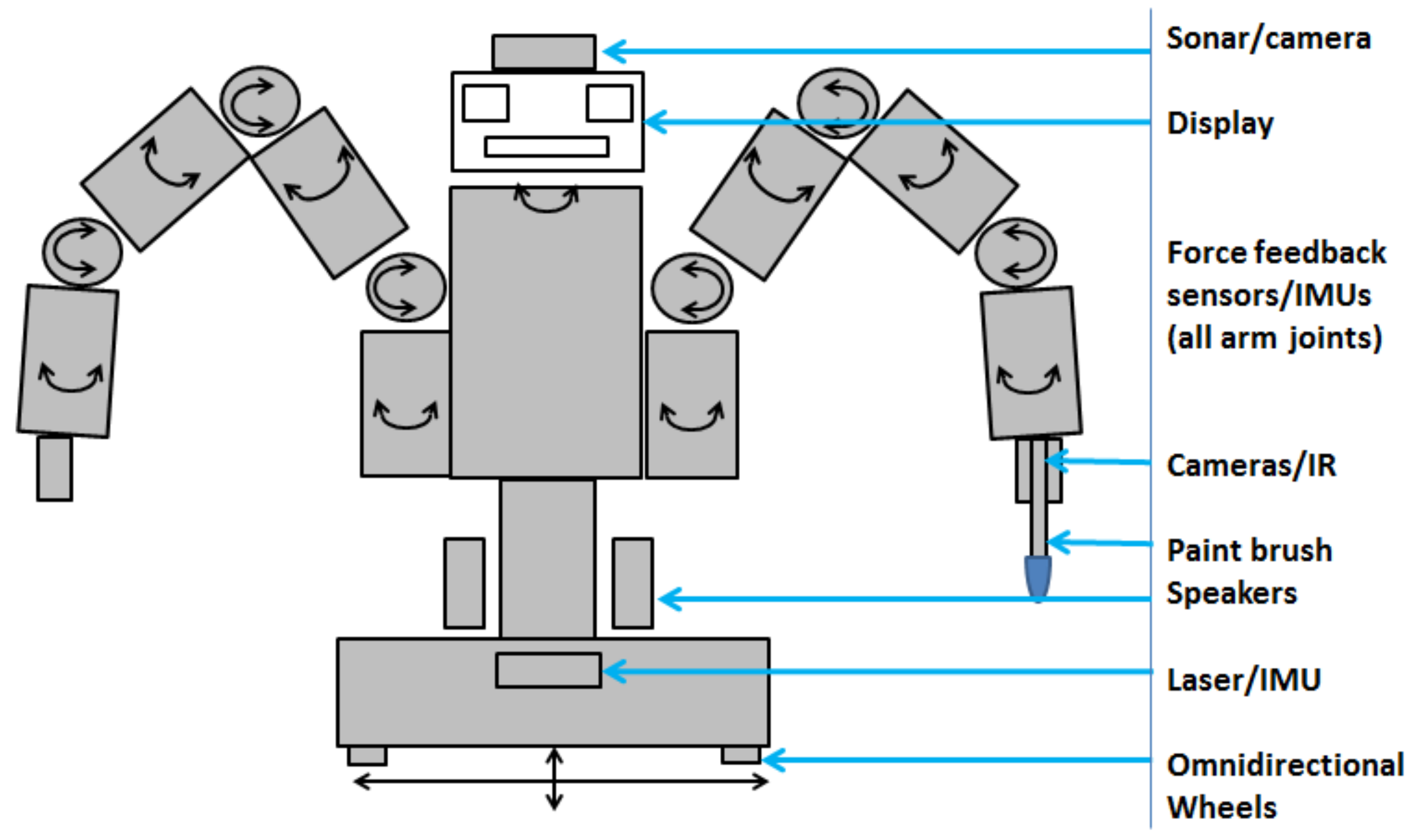

3.1. Requirements and Capabilities

- R1 Co-explore. The robot should investigate the meaning of the person’s art with the person, showing attention: expressing inferences about the person or their artwork in painting and verbally, and asking questions to confirm and to encourage the person to reflect. The robot can also track the state of the person’s artworks over time.

- R2 Enhance self-image. The robot should be positive, accepting and encouraging the communication of emotions. If sharing a substrate, the robot can also leave the most important parts of the painting for the person to paint, providing opportunities to feel independence, control, purpose, and growth, while possibly scaffolding and adjusting the challenge to the person’s skill level. The robot can seek to include positive personalized content tailored to the person in its painting.

- R3 Improve social interactions. The robot can suggest including others in paintings, mention others who have painted similar paintings, or seek to include interesting content in paintings which could lead to conversation.

- R4 Please. To promote a general perception of hedonic well-being, the robot should help the person to feel good about engaging in art therapy with the robot, by behaving in an enjoyable and likeable way. To ease communication, the robot can offer a familiar interface, such as humanoid behavior, and be capable of multimodal interaction. To be liked by the person, the robot can show empathy and match a person’s emotions, although it should not show negative emotions toward a person or their art. It should also be positive and show sincere liking; praise can be given for a person’s creativity and skill, even for negative depictions. Emotional expression can be made large and meaningful, and can convey sincerity by being clear, e.g., by ensuring that referents are clearly conveyed. Creativity can show interesting variation within a stable core. Suggestions, delivered proactively, can invite interaction and clearly convey interactive affordances and a robot’s intentions. The robot can also infer when the person wants to end the interaction.

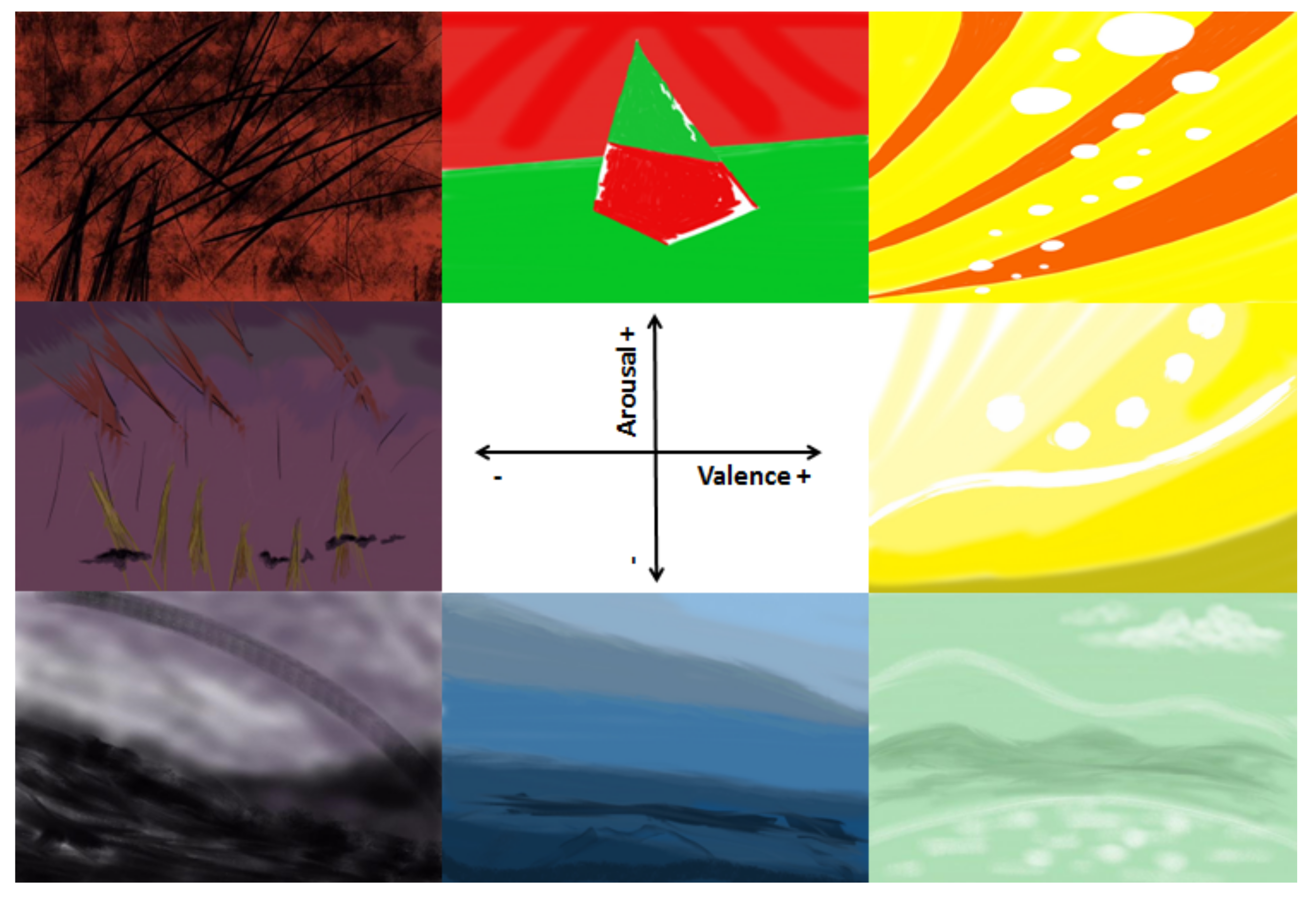

- R5 Engage Emotionally. The robot should seek to infer emotions embedded in a person’s artwork, and can also seek to infer a person’s emotions directly. Basic emotions can be conveyed abstractly based on heuristics, or via symbols such as a person’s face. The robot can also seek to infer and convey complex phenomena such as mixed emotions, referents, and progressions.

- R6 Engage Creatively. The robot should be able to make basic creative choices and discuss these with a person through conversation.

- R7 Avoid Pitfalls. The robot should avoid pitfalls including physical harm, psychological harm, mistakes, and deception.

- C1 Infer. C1.1 Emotions from painting or C1.2 emotions directly from a person. C1.3 Speech: when the person wants to end the interaction, asks a question, or answers. C1.4 Problems.

- C2 Paint. Express C2.1 Matching emotions. C2.2 Positive emotions. C2.3 Creativity. C2.4 Complex Emotions.

- C3 Talk. C3.1 Ask. About the person’s painting and permissions. C3.2 Greet. C3.3 Explain. C3.4 Suggest. Social interactions. C3.5 Be Positive. C3.6 Alert.

- C4 Track State. (If permitted) Store a record for a caregiver.

- C5 Offer Familiar Interface.

- C6 Be Safe.
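
As a minimal sketch, the capability list above could be held in a small data structure so that a prototype can be checked against it. The class name `Capability`, the field names, and the helper `missing_capabilities` are hypothetical and introduced only for illustration; the codes and labels are taken from the list.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Capability:
    """One top-level capability (C1..C6) with its optional sub-capabilities."""
    code: str
    name: str
    parts: Tuple[str, ...] = ()


# Codes and labels follow the list in Section 3.1; the structure is an assumption.
CAPABILITIES = (
    Capability("C1", "Infer", (
        "C1.1 Emotions from painting",
        "C1.2 Emotions directly from a person",
        "C1.3 Speech",
        "C1.4 Problems",
    )),
    Capability("C2", "Paint", (
        "C2.1 Matching emotions",
        "C2.2 Positive emotions",
        "C2.3 Creativity",
        "C2.4 Complex emotions",
    )),
    Capability("C3", "Talk", (
        "C3.1 Ask",
        "C3.2 Greet",
        "C3.3 Explain",
        "C3.4 Suggest",
        "C3.5 Be positive",
        "C3.6 Alert",
    )),
    Capability("C4", "Track State"),
    Capability("C5", "Offer Familiar Interface"),
    Capability("C6", "Be Safe"),
)


def missing_capabilities(implemented: Iterable[str]) -> List[str]:
    """Return the top-level capability codes that a prototype does not yet cover."""
    done = set(implemented)
    return [c.code for c in CAPABILITIES if c.code not in done]
```

For example, `missing_capabilities(["C1", "C2", "C3", "C5", "C6"])` returns `["C4"]`, flagging that state tracking has not been implemented.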

3.2. Simplified Implementation Example

3.2.1. Safe, Familiar Interface

3.2.2. Inference

3.2.3. Painting

3.2.4. Speech

- “Hello, welcome to art therapy.”

- “My name is Baxter and I am learning how to interact with humans. Please don’t be disappointed if I don’t understand what you say.”

- “Do you feel like doing some painting today? It’s fine to say ‘no’.”

- “Is it okay if I let… know that you have painted with me today, and take a photo of your painting to show them?”

- “Also, do you know that you can stop my arm at any time, or push the emergency button to make me turn off? I’m completely safe.”

- “Let’s get started! Please paint whatever you like, and I will do the same.”

- “What are you feeling right now?”

- “Are you feeling…?”

- “Thank you for asking about my painting. I decided to…”

- “You’re done? Okay, then I’m done, too.”

- “Thank you for painting with me, and showing me your wonderful artwork, goodbye!”
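
The opening of such a session can be sketched as a short consent-gated script. This is only an illustration under stated assumptions: the ordering follows the utterance list above, the names `SessionPlan` and `plan_opening` are hypothetical, and how the person's answers are recognized (C1.3) is outside the sketch. The elided name in the permission question is left exactly as in the list.

```python
from dataclasses import dataclass
from typing import List

# Utterances copied from the list above (ASCII quotes used in code).
GREETING = "Hello, welcome to art therapy."
INTRO = ("My name is Baxter and I am learning how to interact with humans. "
         "Please don't be disappointed if I don't understand what you say.")
ASK_TO_PAINT = "Do you feel like doing some painting today? It's fine to say 'no'."
# The "…" is an elided name in the source and is deliberately not filled in.
ASK_RECORD = ("Is it okay if I let… know that you have painted with me today, "
              "and take a photo of your painting to show them?")
SAFETY = ("Also, do you know that you can stop my arm at any time, or push the "
          "emergency button to make me turn off? I'm completely safe.")
START = "Let's get started! Please paint whatever you like, and I will do the same."


@dataclass
class SessionPlan:
    paint: bool          # whether the painting session goes ahead
    store_record: bool   # whether a record may be kept (C4, only if permitted)
    spoken: List[str]    # utterances the robot says, in order


def plan_opening(wants_to_paint: bool, allows_record: bool) -> SessionPlan:
    """Build the opening script; nothing is stored unless the person agrees."""
    spoken = [GREETING, INTRO, ASK_TO_PAINT]
    if not wants_to_paint:
        # The person declined, so no permission question is asked and nothing is stored.
        return SessionPlan(paint=False, store_record=False, spoken=spoken)
    spoken += [ASK_RECORD, SAFETY, START]
    return SessionPlan(paint=True, store_record=allows_record, spoken=spoken)
```

The design choice illustrated here is that declining to paint ends the script early, and that record keeping defaults to off unless permission is given.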

4. Discussion

- Art therapy robots. We have motivated why art therapy robots would be useful, and described an apparent gap in the literature in regard to drama, writing, and gardening therapy for robots.

- Therapeutic interactions. We have suggested the usefulness of a humanistic, “responsive art” approach as a starting point for an interaction strategy for art therapy robots, comprising concepts such as “matching” and “distracting”.

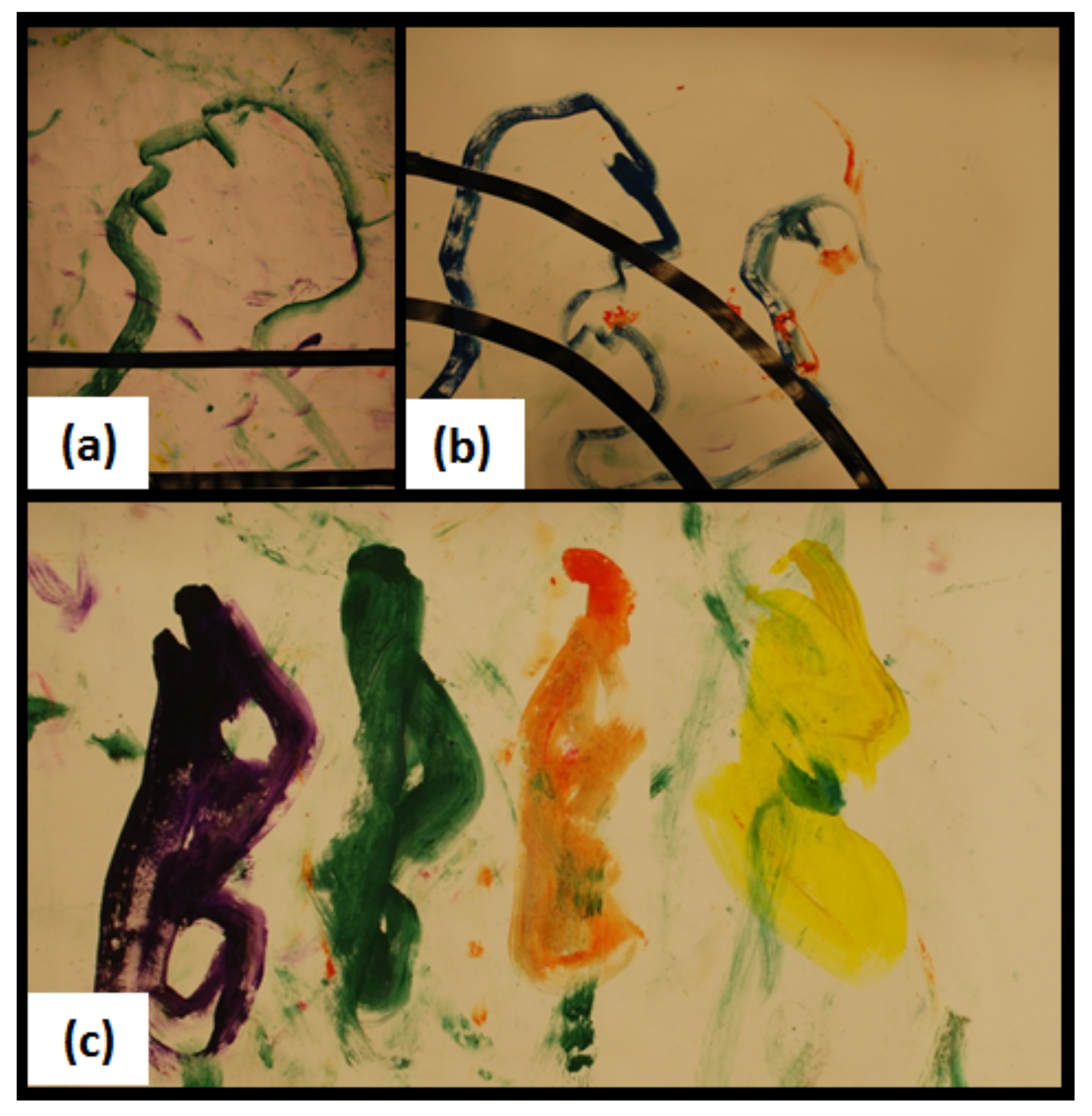

- Emotions. We have compiled, with the help of the artists, a list of heuristics for autonomously generating abstract emotional art based on simplified properties of color, lines, and composition. We have reported on some symbols which appear to strongly convey emotions, proposing the importance of one symbol in particular, a painted human face with various expressions, as a familiar and powerful symbol. Furthermore, we have highlighted a perceived gap between our understanding of emotions in human science and what is currently typically being addressed in engineering studies, in terms of mixed emotions, referents, timing, and polysemy, and suggested how such emotional characteristics can be considered or conveyed in art therapy.

- Creativity. We have discussed some issues in artificial creativity, and proposed that an art therapy robot should be able to discuss creative choices with a person through conversation.

- Ethics. We have identified some potential ethical pitfalls for an art therapy robot and proposed solutions for avoiding them.

- Art therapy robots. Theoretical work will extend our design to work with other forms of therapy such as music therapy.

- Therapeutic interactions. Interaction strategies will be refined, e.g., by clarifying how art should be used to provide therapeutic feedback, possibly through preparing datasets of patient art and responsive art from human therapists.

- Emotions. We will get a better understanding of how to convey complex emotions, also using symbols, and we will tackle some questions which emerged in our work such as: Will abstracted symbols be perceived more as conveying emotions, whereas realistic symbols will be perceived more as semantic or referential?

- Creativity. Various questions can be tackled, such as: How could an art therapy robot personalize creative artwork generated for a person?

- Ethics. Concerns for other forms of art or therapy should be addressed, as well as legal questions. For example, if an art therapy robot errs, who is at fault: the robot, the makers, or the entity offering its services? Moreover, who will own the copyright for the robot’s generated artwork if it is generated in a therapy session for a human?

Author Contributions

Acknowledgments

Conflicts of Interest

Appendix A. Emotions

- Co-occurrence. Humans can feel multiple, sometimes opposite, emotions simultaneously [177,178]. For example, conflicting emotions might include enjoying a scary movie, a “dumb” joke, or cacophonous music; feeling happy and sad at experiencing a small win or loss in gambling; feeling hopeful at new prospects but sad to lose contact with friends, when moving; feeling happy but sad when a child leaves home; or feeling happy for students who excel and sad for those who do not, after a test. Some examples of complementary emotions are feeling relaxed and happy, or sad and angry. Humans appear to be adept at recognizing such emotions; it has even been suggested that blended emotions are displayed more often in the face than single emotions, and that people are better able to process this mixed information [179].

- Referents. Another property we note is that emotions are typically directed toward some context (here, a “referent”), and not random phenomena disconnected from the world; this can be seen in some typical patterns of thought occurring during the experiencing of emotions described by appraisal theory [180]. For instance, appraisal of an event can involve assessing its relevance, how difficult it is to handle, the causes, and norms for how people typically react [181]. Referents are also generally important for phenomena related to emotions such as “sentiments”, attitudes, preferences and opinions, which are typically directed toward something or someone. We believe that identifying the referent of an emotion is vital for human interactions; for example, a robot might need to know if a person is angry at the robot or at someone else. However, referents can be quite complex. A person cut off while driving might feel angry toward another driver, their car, or even everyone in the vicinity, or the situation in general. Moreover, in some cases, the referent might be obvious, but in others, even humans can have difficulty identifying why others feel the way they do, for example when someone is angry with them. Furthermore, it has been suggested that sometimes emotions are not directed at anything in particular, in the case of “core affect”, in which a person might feel excited, depressed, or relaxed [182].

- Timing. Additionally, emotions change over time. In general, there is an internal homeostatic tendency for strong emotions to gradually fade over time, and emotions can be more easily regulated as they start than when they are in progress [183]. The role of timing in “emotion episodes” has also been examined in affective events theory, in which it is claimed that the patterns with which emotions fluctuate over time are highly predictable [184]. In addition, some typical progressions of emotions have been described. For example, a grief response can proceed from denial, to anger, bargaining, depression, and finally acceptance [185]; in terms of basic emotions, this could be described as a progression from surprise to anger, sadness, and finally a neutral state. In addition, progressions of emotions from fear to anger are predicted in General Strain Theory [120,186]. We believe that humans have some intuitive understanding of such processes, which could be beneficial for a robot art therapist; for example, if it is known that scared people can become angry, predictions can be made about how a scared person might feel in the future.

- Polysemy. Additionally, emotional signals can be ambiguous. For example, nodding can be positive, indicating agreement, greeting, or thanks; neutral, expressing confirmation; or negative, conveying irony or emphatic insistence [124]. Robots should be aware of such nuances in order to communicate well with humans. In the current article, we seek to take into account such considerations, in exploring the complex phenomenon of the visual communication of emotions.
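
To make the properties above more concrete, the following minimal sketch shows one way a robot could represent co-occurring emotions with an optional referent, and use the two progressions mentioned under "Timing" to anticipate a possible next emotion. The names `EmotionEstimate`, `PROGRESSIONS`, and `predict_next`, the intensity scale, and the threshold are all assumptions made for illustration; only the progressions themselves (surprise to anger to sadness to neutral, and fear to anger) come from the text.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class EmotionEstimate:
    """Several emotions at once (co-occurrence), optionally tied to a referent."""
    intensities: Dict[str, float]   # e.g. {"happy": 0.6, "sad": 0.5}; scale is assumed
    referent: Optional[str] = None  # None stands for undirected "core affect"

    def is_mixed(self, threshold: float = 0.3) -> bool:
        """True if at least two emotions reach the (assumed) threshold."""
        strong = [e for e, v in self.intensities.items() if v >= threshold]
        return len(strong) >= 2


# Progressions described in the text, expressed in terms of basic emotions.
PROGRESSIONS = {
    "grief": ("surprise", "anger", "sadness", "neutral"),
    "strain": ("fear", "anger"),
}


def predict_next(current: str) -> List[str]:
    """List emotions that may follow `current` according to the known progressions."""
    candidates = set()
    for steps in PROGRESSIONS.values():
        for now, later in zip(steps, steps[1:]):
            if now == current:
                candidates.add(later)
    return sorted(candidates)
```

For instance, `predict_next("fear")` returns `["anger"]`, mirroring the observation that scared people can become angry. Such a lookup is only a heuristic; real emotional progressions will vary between people and situations.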

Appendix B. Creativity

Appendix C. Basic Forms of Art Therapy

Appendix D. The Emotional Meaning of Symbols

References

- Gray, C. Art therapy: When pictures speak louder than words. Can. Med. Assoc. J. 1978, 119, 488. [Google Scholar] [PubMed]

- Merback, M.B. Perfection’s Therapy: An Essay on Albrecht Dürer’s Melencolia I; MIT Press: Cambridge, MA, USA, 2018. [Google Scholar]

- Toussaint, L.; Friedman, P. Forgiveness, Gratitude, and Well-Being: The Mediating Role of Affect and Beliefs. J. Happiness Stud. 2008. [Google Scholar] [CrossRef]

- Sperling, C.; Holst, K. Do Muddy Waters Shift Burdens. Md. L. Rev. 2016, 76, 629. [Google Scholar]

- Jacobs, J. Dynamic Drawing: Broadening Practice and Participation in Procedural Art. Ph.D. Thesis, Massachusetts Institute of Technology, Cambridge, MA, USA, 2017. [Google Scholar]

- Malchiodi, C. The Art Therapy Sourcebook; McGraw-Hill: New York, NY, USA, 2006. [Google Scholar]

- Scherer, K.R. What are emotions? And how can they be measured? Soc. Sci. Inf. 2005, 44, 693–727. [Google Scholar] [CrossRef]

- Gershgorn, D. Can we Make a Computer Make Art? In Popular Science Special Edition: The New Artificial Intelligence; Time Inc. Books: New York, NY, USA, 2016; pp. 64–67. [Google Scholar]

- Holmén, K.; Ericsson, K.; Winblad, B. Social and emotional loneliness among non-demented and demented elderly people. Arch. Gerontol. Geriatr. 2000, 31, 177–192. [Google Scholar] [CrossRef]

- Seppala, E.; King, M. Burnout at work isn’t just about exhaustion. It’s also about loneliness. Harv. Bus. Rev. 2017, 29. Available online: https://hbr.org/2017/06/burnout-at-work-isnt-just-about-exhaustion-its-also-about-loneliness (accessed on 30 August 2018).

- Stuckey, H.L.; Nobel, J. The connection between art, healing, and public health: A review of current literature. Am. J. Public Health 2010, 100, 254–263. [Google Scholar] [CrossRef] [PubMed]

- Rusted, J.; Sheppard, L.; Waller, D. A Multi-centre Randomized Control Group Trial on the Use of Art Therapy for Older People with Dementia. Group Anal. 2006, 39, 517–536. [Google Scholar] [CrossRef]

- Bharatharaj, J.; Huang, L.; Mohan, R.E.; Al-Jumaily, A.; Krägeloh, C. Robot-assisted therapy for learning and social interaction of children with autism spectrum disorder. Robotics 2017, 6, 4. [Google Scholar] [CrossRef]

- Wada, K.; Shibata, T. Robot therapy in a care house-its sociopsychological and physiological effects on the residents. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Orlando, FL, USA, 15–19 May 2006; pp. 3966–3971. [Google Scholar]

- Wada, K.; Shibata, T. Living with seal robots—Its sociopsychological and physiological influences on the elderly at a care house. IEEE Trans. Robot. 2007, 23, 972–980. [Google Scholar] [CrossRef]

- Bitonte, R.A.; De Santo, M. Art therapy: An underutilized, yet effective tool. Ment. Illn. 2014, 6, 5354. [Google Scholar] [CrossRef] [PubMed]

- Evans, K.; Dubowski, J. Art Therapy with Children on the Autistic Spectrum: Beyond Words; Jessica Kingsley Publishers: London, UK, 2001. [Google Scholar]

- Rivera, R.A. Art Therapy for Individuals with Severe Mental Illness. Master’s Thesis, University of Southern California, Los Angeles, CA, USA, 2008. [Google Scholar]

- Uttley, L.; Scope, A.; Stevenson, M.; Rawdin, A.; Buck, E.T.; Sutton, A.; Stevens, J.; Kaltenthaler, E.; Dent-Brown, K.; Wood, C. Systematic review and economic modelling of the clinical effectiveness and cost-effectiveness of art therapy among people with non-psychotic mental health disorders. Health Technol. Assess. 2015, 19, 1–120. [Google Scholar] [CrossRef] [PubMed]

- Mihailidis, A.; Blunsden, S.; Boger, J.; Richards, B.; Zutis, K.; Young, L.; Hoey, J. Towards the development of a technology for art therapy and dementia: Definition of needs and design constraints. Arts Psychother. 2010, 37, 293–300. [Google Scholar] [CrossRef]

- Kanamori, M.; Suzuki, M.; Oshiro, H.; Tanaka, M.; Inoguchi, T.; Takasugi, H.; Saito, Y.; Yokoyama, T. Pilot study on improvement of quality of life among elderly using a pet-type robot. In Proceedings of the IEEE International Symposium on Computational Intelligence in Robotics and Automation, Kobe, Japan, 16–20 July 2003. [Google Scholar]

- Robinson, H.; MacDonald, B.; Kerse, N.; Broadbent, E. The Psychosocial Effects of a Companion Robot: A Randomized Controlled Trial. J. Am. Med. Dir. Assoc. 2013, 14, 661–667. [Google Scholar] [CrossRef] [PubMed]

- Bartneck, C. Interacting with an embodied emotional character. In Proceedings of the 2003 International Conference on Designing Pleasurable Products and Interfaces, Pittsburgh, PA, USA, 23–26 June 2003; pp. 55–60. [Google Scholar] [CrossRef]

- Leyzberg, D.; Spaulding, S.; Toneva, M.; Scassellati, B. The Physical Presence of a Robot Tutor Increases Cognitive Learning Gains. In Proceedings of the Annual Meeting of the Cognitive Science Society, Sapporo, Japan, 1–4 August 2012. [Google Scholar]

- Powers, A.; Kiesler, S.; Fussell, S.; Torrey, C. Comparing a Computer Agent with a Humanoid Robot. In Proceedings of the 2007 2nd ACM/IEEE International Conference on Human-Robot Interaction (HRI’07), Arlington, VA, USA, 9–11 March 2007. [Google Scholar]

- Shinozawa, K.; Naya, F.; Yamato, J.; Kogure, K. Differences in effect of robot and screen agent recommendations on human decision-making. Int. J. Hum. Comput. Stud. 2005, 62, 267–279. [Google Scholar] [CrossRef]

- Cave, S.; ÓhÉigeartaigh, S.S. An AI Race for Strategic Advantage: Rhetoric and Risks. In Proceedings of the AAAI/ACM Conference on Artificial Intelligence, Ethics and Society, New Orleans, LA, USA, 2–3 February 2018. [Google Scholar]

- Colton, S.; Wiggins, G.A. Computational Creativity: The Final Frontier? In Proceedings of the 20th European Conference on Artificial Intelligence ECAI, Montpellier, France, 27–31 August 2012; Volume 12, pp. 21–26. [Google Scholar]

- Picard, R.W. Affective Computing; M.I.T Media Laboratory Perceptual Computing Section Technical Report No. 321; MIT Press: Cambridge, MA, USA, 1995. [Google Scholar]

- Wang, Z.; Xie, L.; Lu, T. Research progress of artificial psychology and artificial emotion in China. CAAI Trans. Intell. Technol. 2016, 1, 355–365. [Google Scholar] [CrossRef]

- Cooney, M.; Pashami, S.; Sant’Anna, A.; Fan, Y.; Nowaczyk, S. Pitfalls of Affective Computing: How can the automatic visual communication of emotions lead to harm, and what can be done to mitigate such risks? In Proceedings of the WWW’18 Companion: The 2018 Web Conference Companion, Lyon, France, 23–27 April 2018; ACM: New York, NY, USA, 2018. [Google Scholar] [CrossRef]

- Narasimman, S.V.; Westerlund, D. Robot Artwork Using Emotion Recognition. Master’s Thesis, Halmstad University, Halmstad, Sweden, 2017. [Google Scholar]

- Badnjević, A.; Gurbeta, L. Development and perspectives of biomedical engineering in South East European countries. In Proceedings of the 39th IEEE International Convention on Information and Communication Technology, Electronics and Microelectronics (MIPRO), Opatija, Croatia, 30 May–3 June 2016; pp. 457–460. [Google Scholar]

- Guan, X.; Ji, L.; Wang, R. Development of Exoskeletons and Applications on Rehabilitation. In Proceedings of the 2015 International Conference on Mechanical Engineering and Electrical Systems (ICMES 2015), Singapore, 16–18 December 2015; EDP Sciences: Les Ulis, France, 2016; Volume 40, p. 02004. [Google Scholar]

- Fasola, J.; Mataric, M.J. Using socially assistive human–robot interaction to motivate physical exercise for older adults. Proc. IEEE 2012, 100, 2512–2526. [Google Scholar] [CrossRef]

- Hansen, S.T.; Bak, T.; Risager, C. An adaptive game algorithm for an autonomous, mobile robot—A real world study with elderly users. In Proceedings of the 21st IEEE International Symposium on Robot and Human Interactive Communication, Paris, France, 9–13 September 2012; pp. 892–897. [Google Scholar]

- Hebesberger, D.V.; Dondrup, C.; Gisinger, C.; Hanheide, M. Patterns of use: How older adults with progressed dementia interact with a robot. In Proceedings of the Companion of the 2017 ACM/IEEE International Conference on Human-Robot Interaction, Vienna, Austria, 6–9 March 2017; pp. 131–132. [Google Scholar]

- Moyle, W.; Jones, C.; Sung, B.; Bramble, M.; O’Dwyer, S.; Blumenstein, M.; Estivill-Castro, V. What effect does an animal robot called CuDDler have on the engagement and emotional response of older people with dementia? A pilot feasibility study. Int. J. Soc. Robot. 2016, 8, 145–156. [Google Scholar] [CrossRef]

- Plaisant, C.; Druin, A.; Lathan, C.; Dakhane, K.; Edwards, K.; Vice, J.M.; Montemayor, J. A storytelling robot for pediatric rehabilitation. In Proceedings of the Fourth International ACM Conference on Assistive Technologies, Arlington, VA, USA, 13–15 November 2000; pp. 50–55. [Google Scholar]

- Dehkordi, P.S.; Moradi, H.; Mahmoudi, M.; Pouretemad, H.R. The design, development, and deployment of RoboParrot for screening autistic children. Int. J. Soc. Robot. 2015, 7, 513–522. [Google Scholar] [CrossRef]

- Gonzalez-Pacheco, V.; Ramey, A.; Alonso-Martín, F.; Castro-Gonzalez, A.; Salichs, M.A. Maggie: A social robot as a gaming platform. Int. J. Soc. Robot. 2011, 3, 371–381. [Google Scholar] [CrossRef]

- Pop, C.A.; Simut, R.; Pintea, S.; Saldien, J.; Rusu, A.; David, D.; Vanderfaeillie, J.; Lefeber, D.; Vanderborght, B. Can the social robot Probo help children with autism to identify situation-based emotions? A series of single case experiments. Int. J. Hum. Robot. 2013, 10, 1350025. [Google Scholar] [CrossRef]

- Salvador, M.J.; Silver, S.; Mahoor, M.H. An emotion recognition comparative study of autistic and typically-developing children using the zeno robot. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 6128–6133. [Google Scholar]

- Greczek, J.; Kaszubski, E.; Atrash, A.; Matarić, M. Graded cueing feedback in robot-mediated imitation practice for children with autism spectrum disorders. In Proceedings of the 23rd IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), Edinburgh, UK, 25–29 August 2014; pp. 561–566. [Google Scholar]

- Srinivasan, S.M.; Kaur, M.; Park, I.K.; Gifford, T.D.; Marsh, K.L.; Bhat, A.N. The effects of rhythm and robotic interventions on the imitation/praxis, interpersonal synchrony, and motor performance of children with autism spectrum disorder (ASD): A pilot randomized controlled trial. Autism Res. Treat. 2015, 2015, 736516. [Google Scholar] [CrossRef] [PubMed]

- Kosuge, K.; Hayashi, T.; Hirata, Y.; Tobiyama, R. Dance Partner Robot-Ms DanceR. In Proceedings of the IROS 2003, Las Vegas, NV, USA, 27–31 October 2003. [Google Scholar]

- Kozima, H.; Michalowski, M.P.; Nakagawa, C. Keepon. Int. J. Soc. Robot. 2009, 1, 3–18. [Google Scholar] [CrossRef]

- Tapus, A.; Mataric, M.J. Socially assistive robotic music therapist for maintaining attention of older adults with cognitive impairments. In Proceedings of the AAAI Fall Symposium AI in Eldercare: New Solutions to Old Problems, Washington, DC, USA, 7–9 November 2008. [Google Scholar]

- Lim, A.; Mizumoto, T.; Cahier, L.K.; Otsuka, T.; Takahashi, T.; Komatani, K.; Ogata, T.; Okuno, H.G. Robot musical accompaniment: Integrating audio and visual cues for real-time synchronization with a human flutist. In Proceedings of the 2010 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Taipei, Taiwan, 18–22 October 2010; pp. 1964–1969. [Google Scholar]

- Martín, F.; Agüero, C.E.; Cañas, J.M.; Valenti, M.; Martínez-Martín, P. Robotherapy with dementia patients. Int. J. Adv. Robot. Syst. 2013, 10, 10. [Google Scholar] [CrossRef]

- Graf, B.; Reiser, U.; Hägele, M.; Mauz, K.; Klein, P. Robotic Home Assistant Care-O-bot®3-Product Vision and Innovation Platform. In Proceedings of the 2009 IEEE Workshop on Advanced Robotics and Its Social Impacts (ARSO 2009), Tokyo, Japan, 23–25 November 2009; pp. 139–144. [Google Scholar]

- Odashima, T.; Onishi, M.; Tahara, K.; Takagi, K.; Asano, F.; Kato, Y.; Nakashima, H.; Kobayashi, Y.; Mukai, T.; Luo, Z.; et al. A Soft Human-Interactive Robot RIMAN. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Beijing, China, 9–15 October 2006. [Google Scholar]

- Pollack, M.; Engberg, S.; Thrun, S.; Brown, L.; Colbry, D.; Orosz, C.; Peintner, B.; Ramakrishnan, S.; Dunbar-Jacob, J.; McCarthy, C. Pearl: A Mobile Robotic Assistant for the Elderly. In Proceedings of the AAAI Workshop on Automation as Caregiver, Edmonton, AB, Canada, 28–29 July 2002. [Google Scholar]

- Richardson, K.; Coeckelbergh, M.; Wakunuma, K.; Billing, E.; Ziemke, T.; Gomez, P.; Vanderborght, B.; Belpaeme, T. Robot Enhanced Therapy for Children with Autism (DREAM): A Social Model of Autism. IEEE Technol. Soc. Mag. 2018, 37, 30–39. [Google Scholar] [CrossRef]

- McClelland, R.T. Robotic Alloparenting: A New Solution to an Old Problem? J. Mind Behav. 2016, 37. [Google Scholar]

- Liu, X.; Wu, Q.; Zhao, W.; Luo, X. Technology-Facilitated Diagnosis and Treatment of Individuals with Autism Spectrum Disorder: An Engineering Perspective. Appl. Sci. 2017, 7, 1051. [Google Scholar] [CrossRef]

- Mordoch, E.; Osterreicher, A.; Guse, L.; Roger, K.; Thompson, G. Use of social commitment robots in the care of elderly people with dementia: A literature review. Maturitas 2013, 74, 14–20. [Google Scholar] [CrossRef] [PubMed]

- Edwards, B. The Never-Before-Told Story of the World’s First Computer Art (It’s a Sexy Dame). The Atlantic, 24 January 2013. [Google Scholar]

- Brown, P. From systems art to artificial life: Early generative art at the Slade School of Fine Art. In White Heat Cold Logic: British Computer Art; MIT Press: Cambridge, MA, USA, 2008; pp. 275–290. [Google Scholar]

- Srikaew, A.; Cambron, M.E.; Northrup, S.; Peters, R.A., II; Wilkes, M.; Kawamura, K. Humanoid drawing robot. In Proceedings of the IASTED International Conference on Robotics and Manufacturing, Banff, AB, Canada, 1–4 July 1998. [Google Scholar]

- Kudoh, S.; Ogawara, K.; Ruchanurucks, M.; Ikeuchi, K. Painting robot with multi-fingered hands and stereo vision. Robot. Auton. Syst. 2009, 57, 279–288. [Google Scholar] [CrossRef]

- Calinon, S.; Epiney, J.; Billard, A. A humanoid robot drawing human portraits. In Proceedings of the 2005 5th IEEE RAS International Conference on Humanoid Robots, Tsukuba, Japan, 5 December 2005; pp. 161–166. [Google Scholar]

- Lee, Y.J.; Zitnick, C.L.; Cohen, M.F. Shadowdraw: Real-time user guidance for freehand drawing. In Proceedings of the ACM SIGGRAPH 2011 Papers (SIGGRAPH’11), Vancouver, BC, Canada, 7–11 August 2011; pp. 27:1–27:10. [Google Scholar]

- Ha, D.; Eck, D. A neural representation of sketch drawings. arXiv, 2017; arXiv:1704.03477. [Google Scholar]

- Khan, F.S.; Beigpour, S.; van de Weijer, J.; Felsberg, M. Painting-91: A large scale database for computational painting categorization. Mach. Vis. Appl. 2014, 25, 1385–1397. [Google Scholar] [CrossRef]

- Crick, C.; Scassellati, B. Inferring narrative and intention from playground games. In Proceedings of the 12th IEEE Conference on Development and Learning, Monterey, CA, USA, 9–12 August 2008. [Google Scholar]

- Huang, C.; Mutlu, B. Anticipatory Robot Control for Efficient Human-Robot Collaboration. In Proceedings of the ACM/IEEE International Conference on Human Robot Interaction, Christchurch, New Zealand, 7–10 March 2016; IEEE Press: Piscataway, NJ, USA, 2016; pp. 83–90. [Google Scholar]

- Montebelli, A.; Tykal, M. Intention Disambiguation: When does action reveal its underlying intention? In Proceedings of the HRI 2017, Vienna, Austria, 6–9 March 2017. [Google Scholar]

- Breazeal, C.; Buchsbaum, D.; Gray, J.; Gatenby, D.; Blumberg, B. Learning from and about others: Towards using imitation to bootstrap the social understanding of others by robots. Artif. Life 2005, 11, 31–62. [Google Scholar] [CrossRef] [PubMed]

- Broekens, J. Emotion and reinforcement: Affective facial expressions facilitate robot learning. In Artifical Intelligence for Human Computing, LNAI 4451; Huang, T.S., Nijholt, A., Pantic, M., Pentland, A., Eds.; Springer: Berlin/Heidelberg, Germany, 2007; pp. 113–132. [Google Scholar]

- Rubin, J.A. Art Therapy: An Introduction; Psychology Press: Wellington, New Zealand, 1999. [Google Scholar]

- Hogan, S. (Ed.) Feminist Approaches to Art Therapy; Psychology Press: Wellington, New Zealand, 1997. [Google Scholar]

- Talwar, S.; Iyer, J.; Doby-Copeland, C. The invisible veil: Changing paradigms in the art therapy profession. Art Ther. 2004, 21, 44–48. [Google Scholar] [CrossRef]

- Tapus, A.; Mataric, M.J. Emulating Empathy in Socially Assistive Robotics. In Proceedings of the AAAI Spring Symposium: Multidisciplinary Collaboration for Socially Assistive Robotics, Palo Alto, CA, USA, 26–28 March 2007; pp. 93–96. [Google Scholar]

- Kahn, P.H., Jr.; Ishiguro, H.; Friedman, B.; Kanda, T.; Freier, N.G.; Severson, R.L.; Miller, J. What is a Human? Toward psychological benchmarks in the field of human–robot interaction. Interact. Stud. 2007, 8, 363–390. [Google Scholar]

- Hart, G.J. The five W’s: An old tool for the new task of audience analysis. Tech. Commun. 1996, 43, 139–145. [Google Scholar]

- Kugel, P. How Professors Develop as Teachers. Stud. High. Educ. 1993, 18, 315–328. [Google Scholar] [CrossRef]

- Mills, A. The diagnostic drawing series. In Handbook of Art Therapy; Guilford Press: New York, NY, USA, 2003; pp. 401–409. [Google Scholar]

- Muri, S.A. Beyond the face: Art therapy and self-portraiture. Arts Psychother. 2007, 34, 331–339. [Google Scholar] [CrossRef]

- Riether, N.; Hegel, F.; Wrede, B.; Horstmann, G. Social facilitation with social robots? In Proceedings of the Seventh Annual ACM/IEEE International Conference on Human-Robot Interaction, Boston, MA, USA, 5–8 March 2012; pp. 41–48. [Google Scholar]

- Killeen, J.P.; Evans, G.W.; Danko, S. The role of permanent student artwork in students’ sense of ownership in an elementary school. Environ. Behav. 2003, 35, 250–263. [Google Scholar] [CrossRef]

- Turk, D.J.; van Bussel, K.; Waiter, G.; Macrae, C.N. Mine and me: Exploring the neural basis of object ownership. J. Cognit. Neurosci. 2011, 23, 3657–3668. [Google Scholar] [CrossRef] [PubMed]

| Question | Proposal |
|---|---|
| Who | One robot engages with one human (dyadic) |
| What | In nondirective painting |
| Where | Using different canvases |
| When | During a single session (1 h; comprising a warm-up, a main activity, and reflection) |

| Requirement | Solution |
|---|---|
| **Art therapy** | |
| Co-explore. | Infer, Paint, Ask, Track State. |
| Enhance self-image. | Be Positive. |
| Improve social interactions. | Suggest. |
| Please. | Offer familiar interface, Paint (Match, Creativity), Suggest, Be Positive, Infer, Greeting |
| Engage Emotionally. | Infer/Paint |
| Engage Creatively. | Paint creatively, Explain. |
| **Avoid Pitfalls** | |
| Avoid Physical harm | Infer problems, Alert |
| (from robot) | Be Safe (safe design, self-diagnostics, transparency about activities and intentions; physics model, capability to detect paints, water, substrates, etc.) |
| (from art-making) | Explain (disclosure of potential dangers), Infer (checking for risks) |
| (from others) | Infer (detect negative human interactions), Alert (try to prevent/defuse) |
| Avoid Psychological harm | |
| (intimidating) | Explain (disclose safety) |
| (making people feel bad) | Praise (positivity toward the person) |
| Avoid Mistakes | |
| (Indiscriminately revealing emotions) | Be Safe (patient confidentiality, safe storage of paintings, secure system) |
| (Recognizing/showing the wrong emotions) | Paint Complex Emotions (use rich expressive model (e.g., mixed emotions, referents, timing)), Ask (give feedback and confirm), Be Safe (secure system) |
| (Mistaken judgements) | Explain (transparency, collaborative), Track State (not taking the place of humans) |
| Avoid Deception | |
| (Taking the place of humans) | Explain (prioritize/assist human therapists, transparency), Suggest (promote social ties) |
| (Manipulating emotions) | Be Safe (confidentiality, no financial incentive) |
| (Disappointing) | Explain (ensure expectations are clear) |
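
The requirement-to-solution mapping in the table above can be read as a small lookup structure. The following is a minimal sketch, not an implementation from the paper: it transcribes only the rows under "Art therapy", and it assumes that "Paint (Match, Creativity)" and "Paint creatively" can both be collapsed into a single "Paint" capability. The names `REQUIREMENTS`, `capabilities_to_requirements`, and `unmet_requirements` are hypothetical and chosen for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Requirement -> capabilities, transcribed from the "Art therapy" rows.
# Assumption: variants of "Paint" are normalized to one capability name.
REQUIREMENTS: Dict[str, List[str]] = {
    "Co-explore": ["Infer", "Paint", "Ask", "Track State"],
    "Enhance self-image": ["Be Positive"],
    "Improve social interactions": ["Suggest"],
    "Please": ["Offer familiar interface", "Paint", "Suggest",
               "Be Positive", "Infer", "Greeting"],
    "Engage Emotionally": ["Infer", "Paint"],
    "Engage Creatively": ["Paint", "Explain"],
}


def capabilities_to_requirements(
    requirements: Dict[str, List[str]]
) -> Dict[str, List[str]]:
    """Invert the table: for each capability, list the requirements it serves."""
    index: Dict[str, List[str]] = defaultdict(list)
    for requirement, capabilities in requirements.items():
        for capability in capabilities:
            index[capability].append(requirement)
    return dict(index)


def unmet_requirements(
    requirements: Dict[str, List[str]], implemented: Set[str]
) -> List[str]:
    """Return requirements with at least one capability not yet implemented."""
    return [
        requirement
        for requirement, capabilities in requirements.items()
        if not set(capabilities) <= implemented
    ]


if __name__ == "__main__":
    index = capabilities_to_requirements(REQUIREMENTS)
    # List capabilities by how many requirements they serve, most shared first.
    for capability, served in sorted(index.items(), key=lambda kv: -len(kv[1])):
        print(f"{capability}: {', '.join(served)}")
```

Inverting the table in this way makes it easy to see which capabilities are shared across several requirements (here, "Paint" and "Infer"), which may help in deciding what to prototype first.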

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Cooney, M.D.; Menezes, M.L.R. Design for an Art Therapy Robot: An Explorative Review of the Theoretical Foundations for Engaging in Emotional and Creative Painting with a Robot. Multimodal Technol. Interact. 2018, 2, 52. https://doi.org/10.3390/mti2030052