1. Introduction

Most agent-based research concerning teamwork has focused on agent–agent interaction. However, as interest in the use of intelligent virtual agents (IVAs) as companions and/or members of IVA–human teams grows, there is increased interest in understanding the ways in which a team-based bond is built and fostered, as this is likely to improve relationships and team outcomes [1]. Research concerning human teams has identified that a team is not just a gathering of people; team members should have a shared goal, effort coordination [2], shared mental model (SMM) [3], high level of trust [4], high commitment [5], and effective communication [6]. Furthermore, conventional human–human teamwork is known to require the development of trust, which is essential for the quality of the teamwork [7]. Besides trust, teamwork requires the members to have shared understanding and commitment to the joint activity [8]. There is a paucity of research studies considering these factors (i.e., communication, trust, commitment, shared mental model) in the context of human–IVA teams.

The increasing interest in human–IVA teamwork has led researchers to study the variables that may influence such teams [9,10,11]. However, few studies go beyond finding a relationship between two variables and are thus unable to build a more holistic picture of the factors that could affect these teams. Moreover, to the best of our knowledge, there is no study that has investigated the impact of IVA–human multimodal communication (i.e., verbal and nonverbal communication) on certain factors that have been found to impact on the performance of human teams, such as SMM, commitment, and trust. This study investigates the impact of the behavioural aspect of human–agent teamwork (i.e., multimodal communication) on the development of the cognitive aspect of teamwork (i.e., SMM), the effect of the latter on the establishment of the social aspect of teamwork (i.e., a human’s trust in the IVA’s decision and the human’s commitment to honour his/her promises), and the collective effect of all of these aspects on human–agent team performance.

In the next section on background literature, the research model, research questions, and the research variables are presented. Section 3 introduces the agent architecture. In Section 3, the studies conducted to answer the five research questions are presented. The results are shown in Section 4, followed by discussion in Section 5. Finally, conclusions and future work are presented in Section 6.

2. Background and Research Model

Studying the factors that impact teams is not new to human teamwork [12]. However, when it comes to heterogeneous teams that include humans and IVAs, the mission becomes difficult, as humans and IVAs have different beliefs and different intentions that drive them while participating in teamwork. Research work studying human–IVA teams focuses on one of three aspects of teamwork: behavioural aspects (i.e., communication), cognitive aspects (e.g., SMM), or social aspects (e.g., trust and commitment). Several studies have explored the effect of each of these aspects on team performance; yet, the relationship between these aspects is unknown.

2.1. Behavioural Aspects of Teamwork

This section describes some research work that studies communication between a human and an agent. Horvitz [13] identified a number of challenges in human–machine interaction, including seeking mutual understanding or grounding of joint activity, recognising problem solving opportunities, decomposing problems into sub-problems, solving sub-problems, combining solutions found by humans and machines, and maintaining natural communication and coordination during these processes. Ferguson and Allen [14] stated that true human–agent collaborative behaviour requires an agent to possess a number of capabilities including reasoning, communication, planning, execution, and learning. Allwood [15] mentioned four requirements that characterise agent cooperation: considering each other cognitively in the interaction, having a joint target, considering each other ethically in the interaction, and trusting each other.

Team communication includes two or more individuals and a meaningful message that a sender attempts to send to the receiver (teammate) either to influence his attitude, discuss tactics, or coordinate teamwork. Many research works target designing systems and models that involve human–agent communication. Some of these works focus only on verbal communication, for example, Luin and Akker [16,17] presented a natural language accessible navigation agent for a virtual theatre environment where the user can navigate into the environment, and the agents inside the system can answer the user’s questions. Other scholars are concerned with nonverbal communication, such as facial expressions, gesture, and body movement. For example, Miao et al. [18,19] presented a system to train learners to handle abnormal situations while driving cars by interacting nonverbally with the agent. A few studies have focused on multimodal communication that requires the interlocutors to express their intentions using different channels, that is, verbal and nonverbal. Multimodal communication includes acts such as observing the listener’s behaviour, expressing the speaker’s beliefs and intention, monitoring the listener’s reaction, and providing/responding to feedback [20].

Although the previously presented works studied multimodal communication and its effect on team workflow, these studies did not investigate how communication influences the dynamics of teamwork, that is to say how communication elevates team performance. This missing link between communication and team performance was investigated in our current study in research questions 1–5 (RQ1–RQ5, see below).

2.2. Cognitive Aspects of Teamwork and SMMs

The cognitive aspect, the second aspect of teamwork, has, perhaps, gained the most interest of researchers. The impact of SMM on teamwork is identified in many research studies (e.g., [21,22]). A number of researchers have found that human team performance while achieving a shared goal will be effectively improved if team members have adequate shared understanding of the situation [23,24], task-based knowledge of SMM [25], and team-based knowledge of SMM [3]. Moreover, researchers have found that SMM plays a crucial role in collaborative activity [26]. A number of studies have argued that the development of a SMM between interacting team members has a significant positive effect on team effectiveness [27].

In recent years, researchers have been interested in studying the role of agents in human teamwork (e.g., [28]). Special interest has been directed to teamwork between humans and IVAs [29,30]. The key issues to be addressed in designing agents as teammates to collaborate with humans include communicating their intent and making results intelligible to them [31] and identifying shared understanding for human–agent coordination [32]. As SMM has proven its positive impact on human teams, this notion has found its way into agent studies. Sycara and Sukthankar [33] stated that the biggest challenge in human–agent teamwork is to establish a SMM. In recent years, extending the concept of SMM to include teams of agents or situations that combine the human and the agent in one team has inspired research effort [34].

Most research into SMMs concerns human–human teamwork and communication (e.g., [35]). Some research exists that considers a SMM in the context of agent–agent teamwork. In this work (e.g., [36]), agents were designed to use SMM knowledge of the task to communicate information with other agents in a team. However, this work focused on a team of agents. In order to design an agent’s cognitive structure specifically for human–agent teamwork, Fan and Yen [34] developed a system called shared mental models for all, SMMall. SMMall implements a hidden Markov model (HMM) to help the agent to predict its human partner’s cognitive load status. However, SMMall does not support communication. Our proposed model enables agents, on the one hand, to deduce the human’s intention and, on the other hand, to communicate their internal states. In other work, Hodhod et al. [37] proposed a formal approach to construct SMMs between computational improvisational agents and human interactors. The authors used some socio-cognitive studies from human improvisers, in addition to fuzzy rules and confidence factors, to allow agents to reason about uncertainty. This approach was presented theoretically, and no result was provided to validate the approach. A few studies have investigated the relationship between multimodal communication and the degree of coordinated performance attained by teammates, which in turn fosters the development of a SMM [35].

Some agent-based research in the area of teamwork has pursued goals similar to ours without the explicit use of a SMM [38]. For example, the Alelo language and cultural training system [39] allows a human trainee to observe, via feedback bars and icons, whether they are communicating appropriately within the context of a specific cultural scenario. SMM concepts resemble Traum’s use of grounding models [40] or mutual beliefs between humans and an IVA. Traum’s work focused on studying a human’s dialogue and creating a conversation system that mimics human verbal communication to establish mutual understanding with a conversational virtual human [41]. However, collaborative activities need more than grounding based only on verbal conversation. Indeed, verbal communication alone is partial communication. Grosz’s work covered many aspects of agent teamwork including various techniques for collaborative planning [42] and communication [43]. However, the work was concerned more with agent–agent collaboration where planning and communication are built on shared beliefs and intentions between agents. The work does not use communication to build, maintain, and monitor the shared beliefs. Another noteworthy body of research on agent communication and collaboration is Dignum’s work [44]. In [45], a model was presented for realising believable human-like interaction between virtual agents in a multi-agent system (MAS). In another research study, Jonker et al. [46] introduced a framework that engineers agents on the basis of the notion of shared mental model. The framework aimed to incorporate the concept of SMMs in agent reasoning while in a teamwork situation with a human. The Blocks World for Team (BW4T) was used as a testing platform for the proposed framework.

An interesting stream of work was Pelachaud’s work on IVA’s multimodal communication in situations with a designated goal. For example, Pelachaud and Poggi [47] presented a system to automatically select the appropriate facial and gaze behaviours corresponding to a communicative act for a given speaker and listener. Their system focused on adapting the nonverbal behaviour of agents and specifically facial expression during communication. The agent’s facial expressions were selected based on Ekman’s description of facial expressions. However, this stream of work focused more on the impact of the IVA’s multimodal communication (particularly nonverbal) on the human’s performance without investigating the interrelationships of the communication with other factors that make communication influential in human–IVA teams. In a recent study, Singh et al. [48] investigated the influence of sharing intentions (e.g., goals) and world knowledge (e.g., beliefs) on agent team performance. The study used BW4T to explore which component(s) contribute most to artificial team performance across different forms of interdependent tasks. The results showed that with high levels of interdependence in tasks, communicating intentions contributes most to team performance, while for low levels of interdependence, communicating world knowledge contributes more. Additionally, SMM was found to correlate with improved team performance for the artificial agent teams.

These studies (in Section 2.2) demonstrated the importance of multimodal communication and its positive influence on developing taskwork and teamwork SMMs between teammates. Nevertheless, these studies do not consider which components of multimodal communication (i.e., verbal and nonverbal) contributed more to which SMM components (i.e., taskwork and teamwork). Additionally, these studies did not explain how SMMs affect team performance. In the current study, we aimed to investigate which multimodal communication components affect which SMM components (RQ1 and RQ2, see below). Additionally, the study aimed to explain the mechanism that makes SMMs between teammates influence team performance (RQ3–RQ5, see below).

2.3. Social Aspects of Teamwork

The third aspect of teamwork is the social aspect. Research works have investigated how to foster trust in human teams [49] and strengthen commitment between teammates [8]. A number of studies have investigated the commitment between a team of agents [50,51] or in multi-agent systems [52]. These studies have taken advantage of being able to design agents that have a shared understanding of the common goal, as agents share the same world. The social relationship between humans and IVAs has drawn the interest of agent researchers. There has been particular interest in the development of human friendship with emotionally intelligent IVAs that can intentionally establish and strengthen social relations with other agents and humans [53]. However, very few studies have considered commitment to fulfil a joint task executed by a team of humans and IVAs. In one of these few studies, van Wissen et al. [54] found that humans tend to be unfair and less committed to agent teammates. In their study, there was a simplified means of communication between humans and agents via exchanging text messages with requests/replies. The human’s commitment was evaluated by calculating the ratio of fulfilled promises to give an agent a reward agreed on beforehand. Given the importance of commitment in human teams, commitment between agents and humans in heterogeneous teams has been understudied.

The third direction of studies has focused on the social aspects between teammates, such as trust and commitment. Although the social aspects determine team mechanics, they are not isolated from the cognitive and behavioural aspects. The link between these different aspects while collaborating with a team was studied in this paper (in RQ3 and RQ4, see below).

2.4. Research Contribution and Model

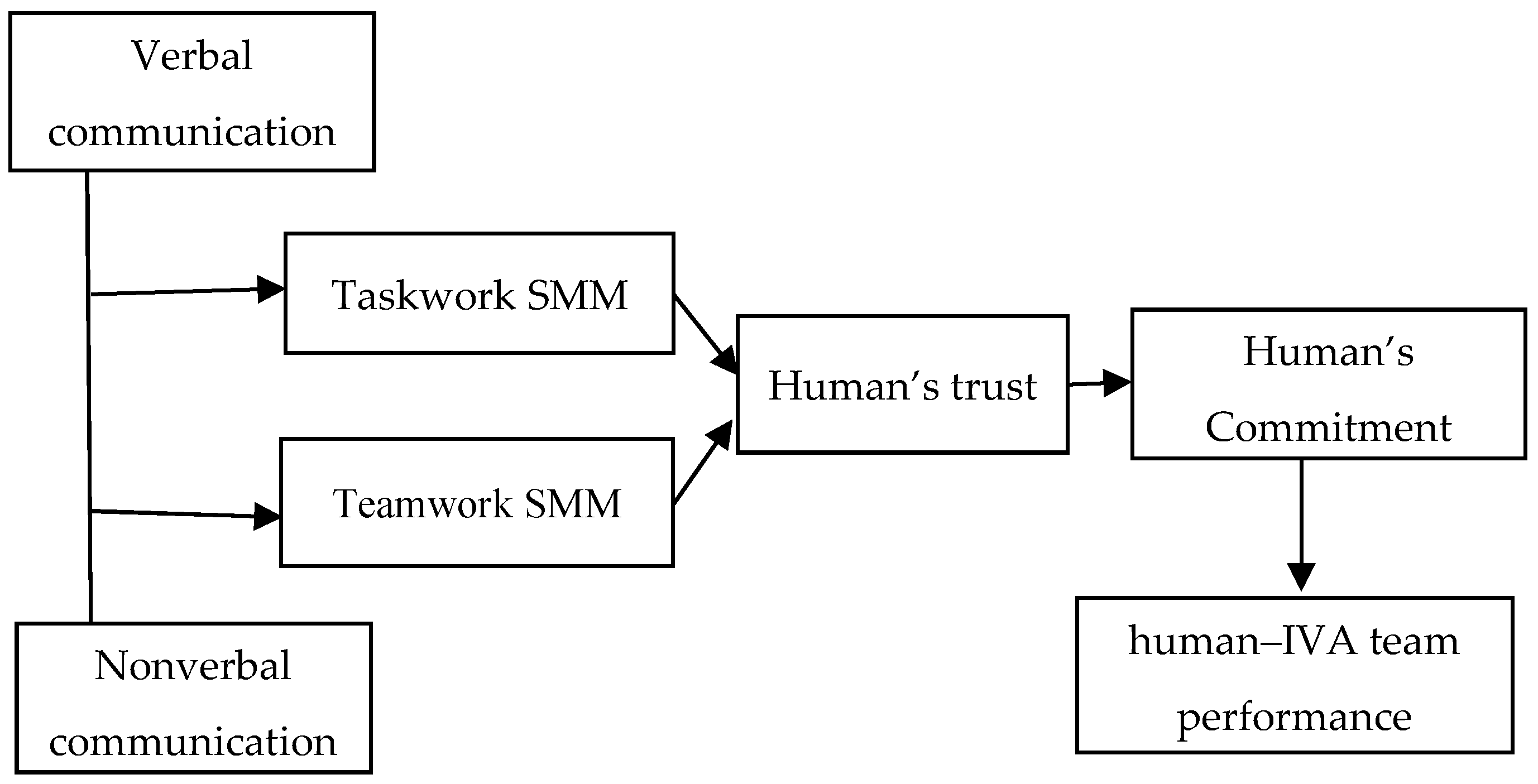

This paper addresses a missing link in human–agent interaction studies. To contribute to our understanding of human–IVA teamwork, this paper aims to investigate the influence of IVA multimodal communication on the development of a SMM between humans and IVAs and on the establishment of human trust in the IVA’s decision. In addition, this paper aims to study the influence of human trust in IVAs on human commitment to honour their promises to their IVA teammates. Moreover, the paper aims to explore the impact of the human commitment level on team performance. This work seeks to provide a holistic model of IVA–human teamwork, depicted in Figure 1, to explore the research questions (RQ1–RQ5) defined at the end of this subsection. The seven components of our model correspond to the research variables under investigation, while the arrows in Figure 1 show the direction of influence between the variables; for example, this study investigates the impact of verbal communication on taskwork SMMs (and not the other way). An introduction to each of the concepts follows.

Verbal and nonverbal communication. Psycholinguistic studies [55,56] have affirmed the complementary nature of verbal and nonverbal aspects in human expressions of communication. In the light of these results, several research studies sought to integrate verbal and nonverbal aspects in IVA communication. However, such studies focused mainly on the nonverbal communication of IVAs [47] or on implementing synchronisation between speech, facial, and body movements in a manner similar to humans [57,58]. Multimodal communication was found to play a crucial role in teamwork [59]. Furthermore, other studies report that computer-mediated communication does not differ from face-to-face communication in terms of the capability of social-information exchange [60].

Taskwork and Teamwork SMM. The notion of a SMM has been studied widely in coordinating human teamwork [3,61]. The idea behind a SMM is that the overall performance of teams improves if team members have a shared understanding of the targeted task and of the capabilities of the other members. Many researchers studying SMMs have classified shared knowledge into two categories: knowledge about the team and knowledge about the task [61]. Given the importance of a SMM on human team performance, this concept is discussed further in the following section.

Trust. There are many definitions of the term trust [62]. A commonly cited definition was given by Mayer et al. [63], “The willingness of a party to be vulnerable to the actions of another party based on the expectation that the other will perform a particular action important to the trustor, irrespective of the ability to monitor or control that other party” (p. 712). Although trust is considered a major challenge for human teams [64], minimal research has studied the development of human–IVA trust and commitment.

Commitment. The notion of commitment expresses a mental state of obligation to behave in a specific manner. There are different levels of commitment from personal to collective commitment [65]. Collective commitment is the most effective motivation to be considered in teamwork [66], as this level of commitment stimulates individual social behaviour towards the welfare of a group to which the individual belongs. Commitment in the context of teamwork could be classified in three levels: task-based commitment, individual team-based commitment, and collective team-based commitment. Task-based commitment refers to a pledge to oneself to complete a shared mission regardless of the involved participants, while individual team-based commitment denotes the long-term desire to maintain a valued partnership with one or more members from the whole team; this desire goes beyond the designated task. Collective team-based commitment indicates the social willingness to belong to a team. One of the earliest theories that modelled the relationship between trust and commitment was the commitment-trust theory of relationships in business teamwork [67]. This interest in the principles of trust and commitment was later extended to other types of teamwork.

Performance. A number of measures could be used to evaluate team performance in a virtual environment (VE) including productivity, quality, timeliness, and efficiency. Productivity means the value of teamwork output divided by the cost to achieve the task [68]. Quality means the degree to which the output of teamwork meets the expectations. Timeliness measures whether a unit of work is done correctly on time or in less time than estimated. Efficiency measures whether the teamwork produces the required output at the minimum resource cost, thus taking into account productivity and timeliness.

To test the model in Figure 1, we have proposed the following five research questions:

Research Question 1 (RQ1): Do multimodal, verbal and nonverbal, communication methods between humans and IVAs impact humans’ perceptions of a taskwork SMM between humans and IVAs? Moreover, which method contributes more in taskwork SMM prediction?

Research Question 2 (RQ2): Do multimodal, verbal and nonverbal, communication methods between humans and IVAs impact humans’ perceptions of teamwork SMM between humans and IVAs? Moreover, which method contributes more in teamwork SMM prediction?

Research Question 3 (RQ3): Do taskwork and teamwork SMMs impact the human’s trust in the IVA’s decision? Moreover, which one contributes more in trust prediction?

Research Question 4 (RQ4): Does the human’s trust in the IVA’s decision impact a human’s commitment to honour his/her promises towards achieving the shared task?

Research Question 5 (RQ5): Does the human’s commitment to honour his/her promises impact the human–IVA team performance?

3. Agent Architecture

A number of approaches to designing an agent architecture have been proposed. Each approach takes a point of view on how an agent can mentally plan and make decisions. Among these, Shoham [69] proposed an approach that specialises object-oriented programming (OOP). OOP views a computational system as composed of modules/units/objects. Each object has its own way to receive and send messages to other objects. As a specialisation of OOP for agent architecture, Shoham presented the agent-oriented programming (AOP) approach, in which each module (the agent in this case) has components, such as beliefs about the world, capabilities, and decisions, forming the mental state of an agent. An agent has different ways and constraints to send and receive messages that express its mental states.

Taking another agent architecture perspective, Wooldridge and Jennings [70] distinguished between a weak and a strong notion of agency. The weak notion of agency may denote a software-based system with the following properties: autonomy, social ability, reactivity, and pro-activeness. A stronger notion of agency extends the properties of weak agency to include the concepts of artificial intelligence (AI) that aim to design a human-like agent that mimics the human mental state.

To manage agent reasoning and human–IVA collaboration and communication, we have designed and implemented an agent architecture and supporting communication module.

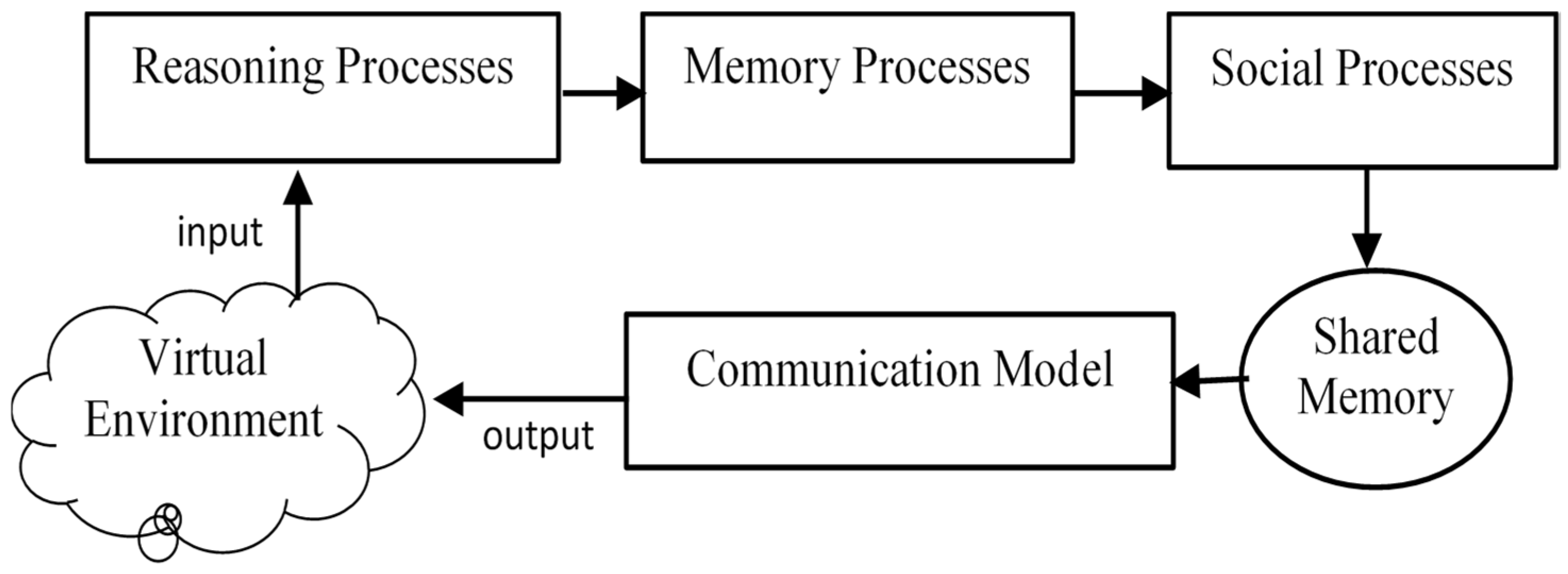

Figure 2 shows a high-level integration of key components of our agent architecture, including reasoning, memory, and social processes and the communication model. We used a pipes-and-filters architecture [71] to design our agent’s cognitive and social capabilities. This architecture is suitable in a situation where a human and an IVA take turns to achieve a target where the agent has to take known steps, but the input and the output of each step is non-deterministic and unpredictable. Additionally, the pipes-and-filters approach supports our communication model that requires as input the output of agent cognition. This output should be shared on a blackboard with the communication model to express verbally and nonverbally the intention of the agent.

A blackboard is suitable for sharing memory between the agent architecture and the communication module because it is feasible for the agent to have access to the stored states and knowledge. However, we note that use of a blackboard is not suitable for sharing knowledge and state between the human and the IVA, as it is not possible to directly access the human’s mind. It is for this reason that our work focuses on the development and maintenance of a shared mental model for determining what the human may be thinking and if it is consistent with the agent’s reasoning. The architecture is compatible with the belief–desires–intentions model [72].

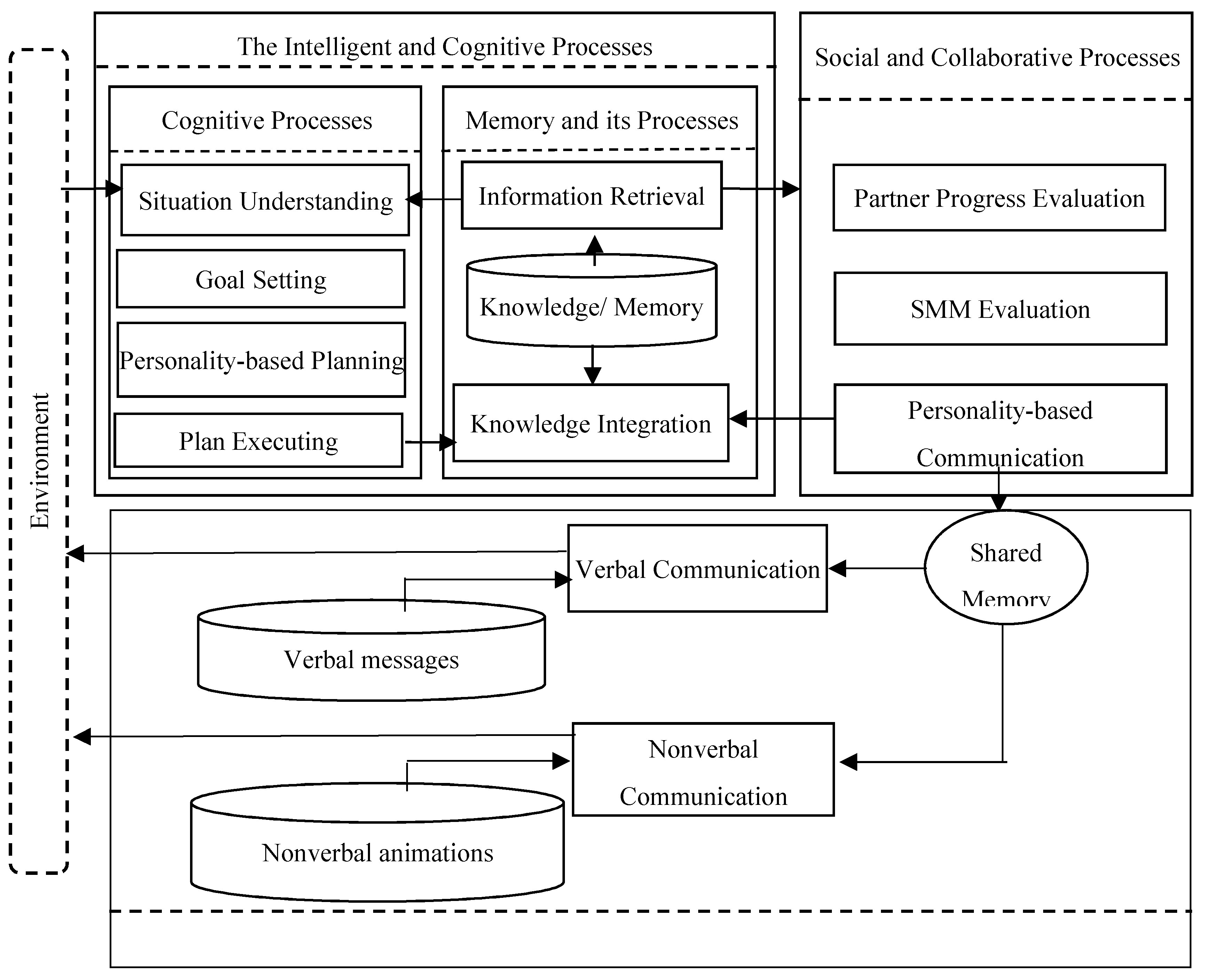

Agent architecture related to the communication model is presented is

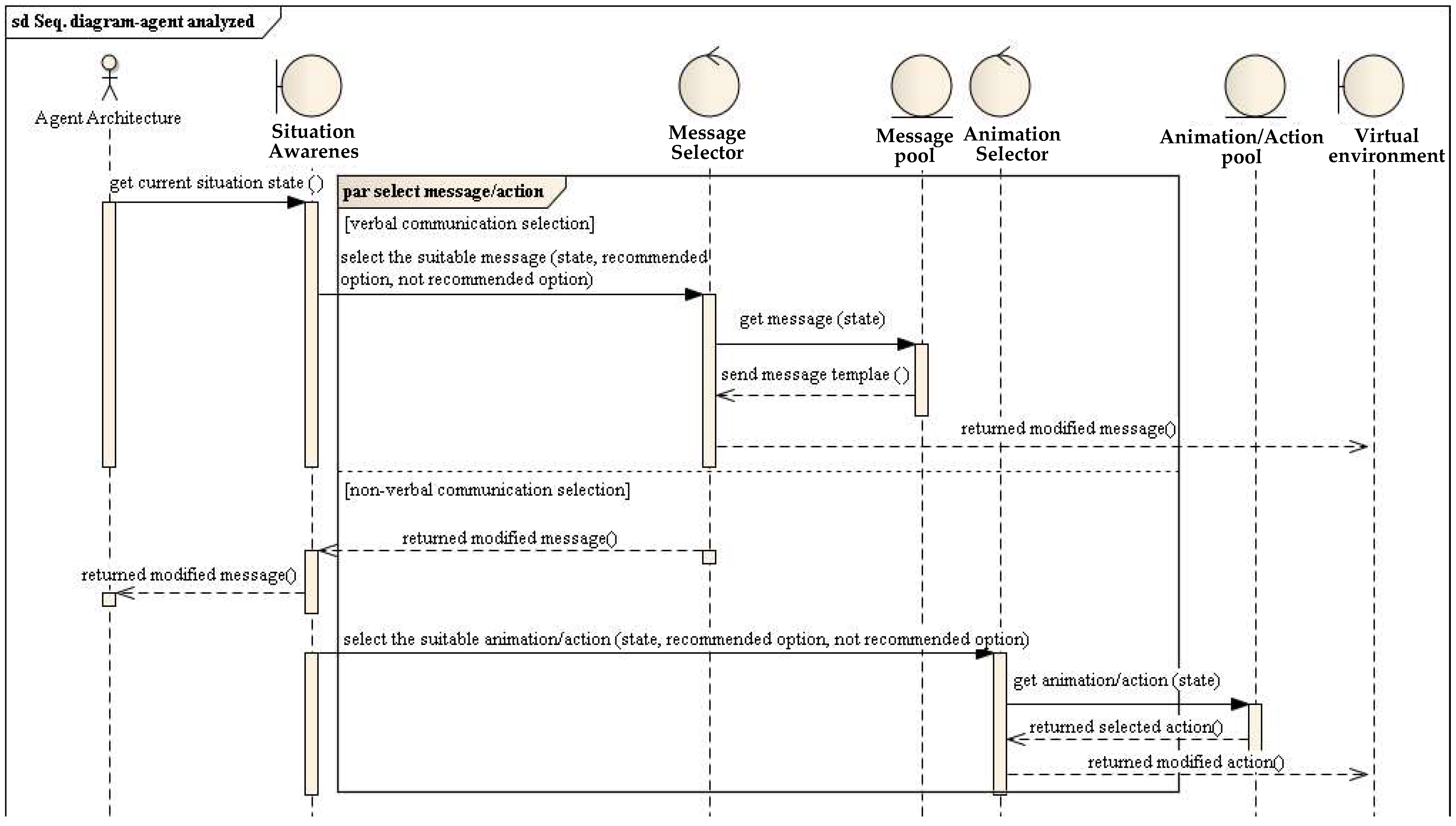

Figure 3. Agent architecture comprises number of modules. These modules are responsible for receiving the details about the current collaborative situation from the virtual environment and managing the decisions required.

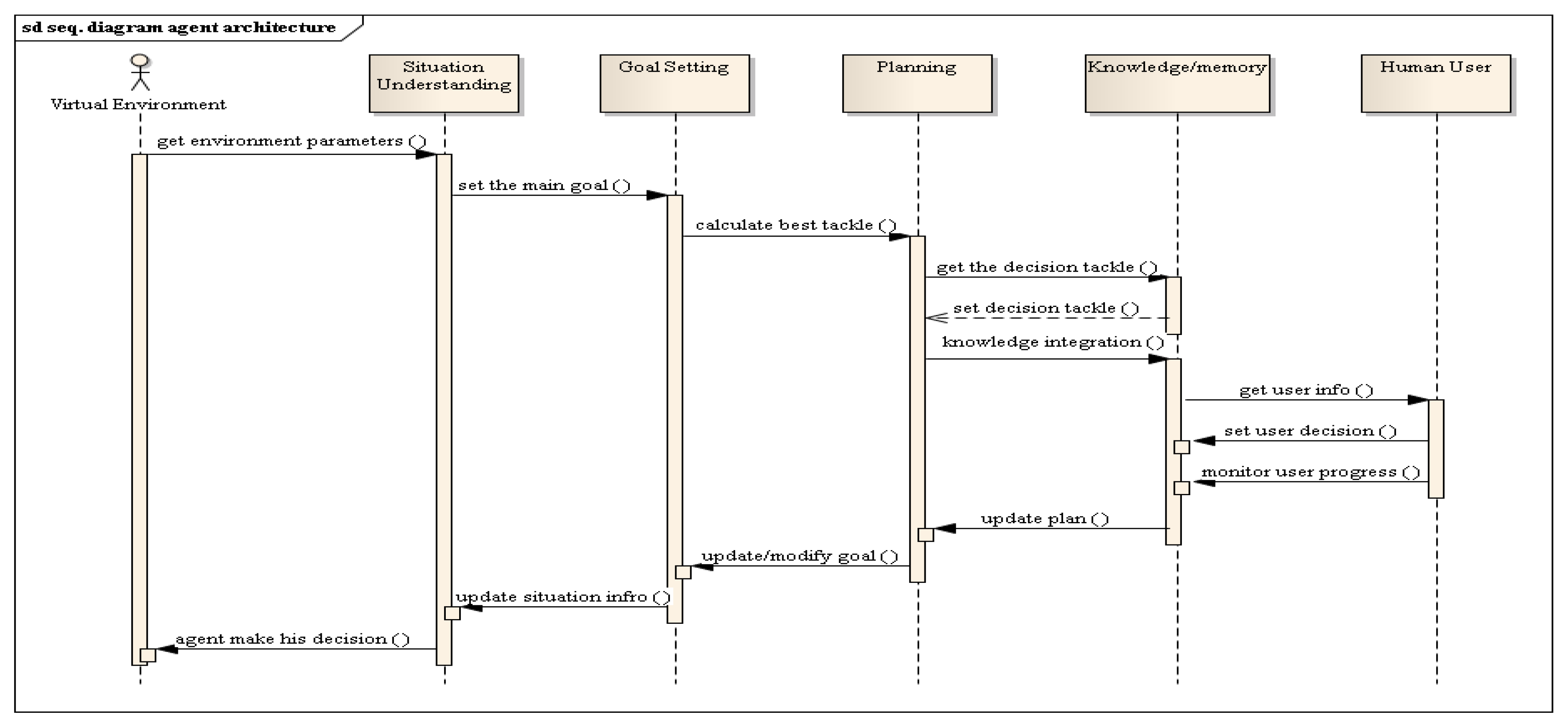

The agent architecture comprises several modules, represented as classes/objects in Figure 3. These modules are responsible for receiving the details about the current collaborative situation from the virtual environment and managing the decisions required. A sequence diagram is presented in Figure 4 to demonstrate how these modules interact and the tasks they perform. Virtual environment parameters are perceived by the situation awareness module, where these parameters are collected and stored in a data structure. These data structures in turn are passed to the goal setting module. The goal setting class contains the main goal of the task to be achieved in the virtual environment and the sub-goals to be achieved to reach the main goal. However, as the virtual environment is dynamic, the sub-goals need to be dynamically planned each time there is a change in the environment. The task of continuous planning is achieved by the planning module. Planning receives the updated sub-goals along with the current parameters of the virtual situation and calculates the best way to achieve the sub-goals. The generated plan telling the agent what sub-goals to achieve is stored in the knowledge repository of the agent in a knowledge/memory class together with the results of calculating how best to achieve the sub-goals. More detail of our implemented agent architecture was presented in our previous publication [73].
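The flow from situation awareness through goal setting and planning to the knowledge store can be sketched as a small pipes-and-filters pipeline. This is only an illustrative sketch, not the implementation reported in [73]: the class names `Situation` and `Knowledge`, the function names, the environment fields, and the alphabetical "planning" rule are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Situation:
    """Snapshot of the virtual environment (fields are hypothetical)."""
    agent_position: tuple
    remaining_items: list


@dataclass
class Knowledge:
    """Blackboard-style memory that a communication module could also read."""
    sub_goals: list = field(default_factory=list)
    plan: list = field(default_factory=list)


def perceive(raw_parameters: dict) -> Situation:
    """Situation awareness filter: collect parameters into one structure."""
    return Situation(
        agent_position=tuple(raw_parameters["agent_position"]),
        remaining_items=list(raw_parameters["remaining_items"]),
    )


def set_goals(situation: Situation) -> list:
    """Goal setting filter: derive one sub-goal per remaining item."""
    return [f"reach:{item}" for item in situation.remaining_items]


def make_plan(sub_goals: list, situation: Situation) -> list:
    """Planning filter: order the sub-goals (placeholder: alphabetical order)."""
    return sorted(sub_goals)


def run_cycle(raw_parameters: dict, memory: Knowledge) -> Knowledge:
    """One pass through the filters; rerun whenever the environment changes."""
    situation = perceive(raw_parameters)
    memory.sub_goals = set_goals(situation)
    memory.plan = make_plan(memory.sub_goals, situation)
    return memory


memory = run_cycle(
    {"agent_position": (0, 0), "remaining_items": ["item_b", "item_a"]},
    Knowledge(),
)
print(memory.plan)
```

Because each filter only consumes the output of the previous one, re-planning after a change in the environment amounts to calling `run_cycle` again with the new parameters.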

3.1. Agent Behaviour

As shown in Figure 5, the initial state of the agent is to wait for the user’s decision/selection. The initial state is followed by continuous verbal and nonverbal prompting to the user to make his/her decision. When the human user makes his/her decision, the agent will update its knowledge about the current virtual environment and will make/select a plan to make its own decision. To investigate whether the human user shares the same understanding of the collaborative situation, the agent will invite the user to give recommendations to help the agent make its decision. The agent then calculates the match between its plan and the human teammate’s recommendation. When the human offers/selects a recommendation to the agent, the agent selects and modifies an appropriate response (e.g., supportive/confirming statement or statement of disappointment depending on the match with the agent’s plan). Additionally, the agent will give a reason for the response it has provided. After providing the explanation, the agent will take its turn and begin a new round of achieving sub-goals.
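The round described above can be read as a small state machine. The sketch below is one possible encoding under stated assumptions: the state and event names are invented for illustration, the match is reduced to an equality test, and skipping straight to the agent's turn when no recommendation is given is our assumption (the text only says the recommendation is optional).

```python
from enum import Enum, auto


class State(Enum):
    WAITING = auto()      # waiting for (and prompting) the user's decision
    PLANNING = auto()     # updating knowledge and selecting a plan
    ASKING = auto()       # inviting an optional recommendation
    RESPONDING = auto()   # confirming or expressing disappointment
    EXPLAINING = auto()   # giving the reason for the response
    ACTING = auto()       # the agent takes its turn


# (state, event) -> next state; event names are hypothetical.
TRANSITIONS = {
    (State.WAITING, "no_decision"): State.WAITING,   # keep prompting
    (State.WAITING, "user_decided"): State.PLANNING,
    (State.PLANNING, "plan_ready"): State.ASKING,
    (State.ASKING, "recommendation"): State.RESPONDING,
    (State.ASKING, "no_recommendation"): State.ACTING,  # assumption
    (State.RESPONDING, "responded"): State.EXPLAINING,
    (State.EXPLAINING, "explained"): State.ACTING,
    (State.ACTING, "turn_done"): State.WAITING,      # new round begins
}


def step(state: State, event: str) -> State:
    """Advance the agent by one event; unknown pairs raise KeyError."""
    return TRANSITIONS[(state, event)]


def choose_response(agent_plan: str, recommendation: str) -> str:
    """Pick the response type from the match with the agent's plan."""
    return "confirming" if recommendation == agent_plan else "disappointed"


state = State.WAITING
for event in ["no_decision", "user_decided", "plan_ready",
              "recommendation", "responded", "explained", "turn_done"]:
    state = step(state, event)
print(state.name, choose_response("option_a", "option_b"))
```

A table-driven encoding keeps the turn-taking order explicit and makes it easy to check that every round returns to the waiting state.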

3.2. HAT-CoM

The human–agent communication model (HAT-CoM) is a communication model that translates the agent’s plans into multimodal communication acts. HAT-CoM receives information about the current collaboration situation from the agent architecture via the situation awareness module. The situation awareness module is modelled as an interface/boundary/view/presentation layer class. As shown in the sequence diagram in Figure 4, the information passed includes the tasks achieved (array tasks), whether the human user accepts or rejects the agent recommendation (Boolean 0 or 1) and the possible planned tasks (array tasks). As shown in Figure 6, situation awareness organises in parallel the agent’s verbal and nonverbal communication.

To manage verbal communication, the situation awareness interface will pass information to the message selector, modelled as a control class. The information passed includes the state of the current situation such as the coordinates of the agent, how many sub-tasks are left, the recommended choice/decision to achieve the task, and what decisions are not helpful toward task completion. The message selector will retrieve the suitable message template from the message pool, which is modelled as an entity class in Figure 5. After the process of message retrieval, the message selector will modify the selected message to be suitable to the current situation and send it to the virtual environment interface.

Regarding nonverbal communication, the situation awareness interface will pass information to the animation selector. The same parameters are passed as for verbal communication. The animation selector will retrieve the suitable animation/action template from the animation/action pool. After the process of animation retrieval, the animation selector will modify the selected animation/action to be suitable to the current situation and send it to the virtual environment interface. The multimodal communication model was previously presented [74] and evaluated [75].
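The retrieve-then-adapt pattern shared by the message selector and the animation selector can be illustrated with a short sketch. It is not the HAT-CoM implementation from [74]: the pool contents, the intent keys, the clip names, and the situation fields are hypothetical, and the two selectors are called one after the other here rather than in parallel.

```python
from string import Template

# Hypothetical message pool: one template per communicative intent.
MESSAGE_POOL = {
    "recommend": Template(
        "I think we should choose $choice; $remaining sub-tasks are left."
    ),
    "discourage": Template("Choosing $choice will not help us finish the task."),
}

# Hypothetical animation/action pool keyed by the same intents.
ANIMATION_POOL = {
    "recommend": "point_at",
    "discourage": "shake_head",
}


def select_message(intent: str, situation: dict) -> str:
    """Retrieve the template for the intent and fill in the situation."""
    return MESSAGE_POOL[intent].substitute(situation)


def select_animation(intent: str, situation: dict) -> dict:
    """Retrieve the animation clip and aim it at the current choice."""
    return {"clip": ANIMATION_POOL[intent], "target": situation["choice"]}


def communicate(intent: str, situation: dict) -> tuple:
    """Produce the verbal and nonverbal acts for one situation."""
    return select_message(intent, situation), select_animation(intent, situation)


message, animation = communicate(
    "recommend", {"choice": "the left path", "remaining": 3}
)
print(message)
print(animation)
```

Both selectors receive the same situation parameters, which mirrors the description above and keeps the verbal and nonverbal acts consistent with each other.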

4. Evaluation

4.1. Design and Procedure

The main aim of the current work is to investigate the impact of agent multimodal communication on the development of SMMs between a human and an agent. A previous study [75] by the authors investigated how an implausible communication by the agent, such as an unrelated request to the human, could negatively impact the development of SMMs.

Two ethics committee-approved studies, requiring parental consent for participants under 18, were conducted to investigate the five research questions. In both of the studies, humans had to collaborate with an IVA teammate to complete a task within a limited time. The task required humans and IVAs to communicate, make requests to one another, commit to fulfil/reject the requests, and execute the requests or select another option. The two studies used the same scenario, as described in Table 1.

In the first study, 73 undergraduate students volunteered to participate. The goal of the first study was to evaluate the comprehensibility of the IVA’s verbal and nonverbal communication in addition to the evaluation of the plausibility of the scenario. To achieve this goal, the human participants’ interactions with the IVA and the virtual system were continuously and automatically recorded. A post-session survey was used to collect the humans’ impressions and perceptions of the comprehensibility of the IVA’s communication. The questions of relevance to this paper are presented as part of the results section.

Using descriptive analysis, the results of the first study, presented in detail below, showed that the participants’ perceptions of mutual understanding with the IVA tended to increase with an increase in their satisfaction with the communication with the IVA. However, the initial study did not investigate the correlation between verbal and nonverbal communication and the components of SMM (i.e., taskwork SMM and teamwork SMM). Furthermore, the study did not investigate the impact of the development of the SMM on the overall team performance. To address these gaps, we designed a second study to probe more into the relationship between verbal and nonverbal communication, on the one hand, and taskwork and teamwork, on the other hand. In the second study, 20 school students volunteered to participate.

Each study required the participant to do the following: first, complete a biographical survey (e.g., age, gender, frequency of playing video games); second, participate in the collaborative activity with the IVA; and finally, answer survey questions related to the study variables. The duration of each study was around 25 min.

4.2. Measurement of Study Variables and Data Collection

Correlation analysis measures the association between two variables; a correlation matrix reports the resulting coefficient for every pair of variables. A correlation indicates whether the value of one variable changes reliably as the value of the other variable changes. In order to demonstrate whether any relationships existed between the study’s variables, correlation analysis was used. However, an additional statistical method was required to answer research questions 4 and 5, which ask whether human trust impacts and is likely to predict human commitment and whether human commitment to the goal and to honour their promises impacts human–IVA team performance.

While correlation analysis quantifies the degree to which two variables are related, regression analysis aims to learn more about the relationship between an independent or predictor variable and a dependent or criterion variable. Linear regression was used to model the relationship between trust and commitment and the relationship between commitment and team performance. Multiple regression was used to investigate the predictive relationship of both verbal and nonverbal communication on humans’ perceptions of a taskwork and teamwork SMM, and the predictive relationship of taskwork and teamwork SMM on humans’ trust in the IVA. IBM SPSS v.20 was used for the statistical analysis, while Microsoft Excel was the tool used to plot the diagram (

Figure 7).
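For reference, the two kinds of regression model described above can be written in a generic form. The notation is ours and is offered only as a sketch: the symbols $\beta_j$, $\varepsilon_i$, $b_j$, $s_{x_j}$, and $s_y$ do not appear in the original analysis and are assumptions introduced for illustration.

```latex
\begin{align}
  % Simple linear regression (e.g., trust predicting commitment)
  \text{Commitment}_i &= \beta_0 + \beta_1\,\text{Trust}_i + \varepsilon_i, \\
  % Multiple regression (e.g., verbal and nonverbal communication predicting an SMM score)
  \text{SMM}_i &= \beta_0 + \beta_1\,\text{Verbal}_i + \beta_2\,\text{Nonverbal}_i + \varepsilon_i, \\
  % Proportion of variance in the criterion y accounted for by the model
  R^2 &= 1 - \frac{\sum_i (y_i - \hat{y}_i)^2}{\sum_i (y_i - \bar{y})^2}, \\
  % Standardised coefficient for predictor x_j with unstandardised slope b_j
  \beta_j^{\ast} &= b_j \,\frac{s_{x_j}}{s_y}.
\end{align}
```

Under this reading, $R^2$ is the "variance accounted for" figure, and the standardised coefficients allow the relative contribution of two predictors measured on the same Likert scale to be compared.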

To further analyse the relationship between trust, commitment, and team performance over time, human behaviours regarding their trust in the IVA, commitment, and team performance were monitored in each interaction cycle required to complete the task (described further in the scenario description in

Section 4.3).

Each of the seven research variables was measured using two means, one subjective involving self-reporting and the other objective involving capture of user actions. The first means was a post-session survey asking participants about their perceptions of their interaction with the agent. The survey included 5 items for each of the seven variables. The participants were required to indicate their level of agreement with each statement using a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). An example of a survey item to elicit the human’s satisfaction with the verbal communication of the IVA (in the scenario, the IVA is called Charlie) is the statement “Charlie’s requests and replies were helpful to complete the task” and for the IVA’s nonverbal communication “Charlie’s actions were suitable to the situation”. As an example of a survey item about taskwork SMM, we asked for the participant’s level of agreement with the statement “Charlie and I had a shared understanding about how best to ensure we meet our goal”. As an example of a survey item about teamwork SMM, we asked for the level of agreement with the statement “Charlie and I worked well together”. An example of the survey items used to elicit the level of trust in the IVA’s decision is the following: “Over time, my trust in Charlie’s selections increased”. As an example of a survey item about team performance, we asked for the level of agreement with the statement “I am satisfied with the performance of the teamwork of Charlie and I”.

The second means tracked the participant’s behaviour while using the VE. In both studies, all inputs from the user were logged to allow recreation of the participants’ navigation paths and record inputs such as responses and keystrokes. These inputs included selected regions in the scenario (see

Section 4.3), exchanged messages between humans and IVAs, human’s promises to the IVA, and the actual decisions after making the promises. The data in the log files were used to describe the relationships between SMM, trust, commitment, and performance during the collaborative task, while the survey responses were used to show the possible relationships between study variables.

Trust was measured by the ratio of the IVA’s requests that the human accepted. This ratio represents the extent to which the human believed the IVA’s requests were the better option to achieve the shared task. A higher acceptance ratio was taken to indicate more trust in the IVA’s decisions. Commitment was measured by the ratio of (mis)match between the human’s acceptance of the IVA’s recommendations and the human’s actual decision/action that carries out the acceptance.
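The two log-based measures can be illustrated with a minimal sketch. The record layout (`Turn` and its field names), the region labels, and the sample data are hypothetical and are not taken from the study’s log files; commitment is read here as the share of accepted recommendations that were actually carried out, which is one plausible interpretation of the match ratio.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    """One human turn reconstructed from a session log (hypothetical layout)."""
    recommended: str  # region the IVA recommended
    accepted: bool    # True if the human promised to follow the recommendation
    selected: str     # region the human actually selected


def trust_ratio(turns):
    """Share of the IVA's recommendations that the human accepted."""
    return sum(t.accepted for t in turns) / len(turns)


def commitment_ratio(turns):
    """Share of accepted recommendations that the human then carried out."""
    promised = [t for t in turns if t.accepted]
    if not promised:
        return None  # no promise was made, so commitment is undefined
    kept = sum(t.selected == t.recommended for t in promised)
    return kept / len(promised)


# Invented example: three acceptances, one of which was not honoured.
turns = [
    Turn("R2", True, "R2"),
    Turn("R3", True, "R7"),
    Turn("R4", False, "R5"),
    Turn("R6", True, "R6"),
]
print(trust_ratio(turns), commitment_ratio(turns))
```

Computing the same ratios per cycle rather than per session would give the kind of over-time series reported later in the results.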

The main aim of studying teamwork is to improve overall team performance. Human–IVA team performance was measured by the time needed to complete a single cycle. The time taken to complete each cycle was used as a reference to measure the improvement in team performance. The ratio of the time for each cycle to the total time was calculated. Cycles that took less time than the average cycle time were taken to indicate better performance.
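The time-based performance measure can be sketched as follows. The function names and the cycle durations are invented for illustration and do not come from the study’s data.

```python
def cycle_time_ratios(cycle_seconds):
    """Ratio of each cycle's duration to the total task duration."""
    total = sum(cycle_seconds)
    return [t / total for t in cycle_seconds]


def faster_than_average(cycle_seconds):
    """Flag cycles that took less time than the mean cycle duration."""
    mean = sum(cycle_seconds) / len(cycle_seconds)
    return [t < mean for t in cycle_seconds]


# Invented durations (in seconds) for the four cycles of one session.
times = [120.0, 90.0, 60.0, 30.0]
ratios = cycle_time_ratios(times)
flags = faster_than_average(times)
print(ratios, flags)
```

A falling ratio across cycles is then read as an improvement in team performance.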

4.3. The Features of Collaborative Scenarios

A number of attempts have been made to define the elements of collaborative activity. In a series of studies, Dillenbourg et al. [

76] identified the features of collaborative tasks that serve to test developing a shared understanding as follows:

Sharing of the basic facts about the task: sharing the beliefs about the task between collaborators. Dillenbourg et al. [

76] stressed that it is important to share the basic information not only in an indirect way, such as using a whiteboard, but also in intrusive ways, such as via dialogues or invitations to perform actions.

Inferences about the task: the requirement is directly connected to the goal of the collaborative task. The inferences are explicitly negotiated through verbal discussion.

Problem-solving strategy: as the collaborative activity includes a task to achieve, partners need to have a strategy to accomplish this task. This strategy is individual to each team member, but it should also take into account the role of the other partner.

Sharing information about positions: this element is related to sharing information about the position and progress of each party while achieving the collaborative task. The current position of the partner could be deduced through the partner’s actions, while his/her future position could be communicated through discussion.

Knowledge representation codes: it is important to use clear notations that represent the required knowledge in the collaborative task. For example, using a red label to demonstrate crucial or critical knowledge.

Interaction rules: the rules the partners agree on to manage the interactions while achieving the task.

In line with these requirements, we proposed a scenario where a human and an IVA should collaborate to achieve a shared task.

4.4. The Proposed Scenario

To evaluate the impact of communication on the development of the SMM while achieving a collaborative task, we have designed and developed a three-dimensional (3D) virtual collaborative activity with Unity3D (

http://unity3d.com). In addition to the features presented in

Section 4.3 (and discussed further below), there were a number of other aspects that we took into consideration in the design of the scenario in order to answer our research questions. These aspects included the following:

First, the actions of both humans and IVAs must be dependent or interleaved; that is to say, none of them can do the task alone, and the contribution of the other teammate is crucial for the success of the task.

Second, the task should be divided into stages or sequences in order to observe the progress in team behaviour and performance.

Third, humans must have the option either to conform to the IVA’s requests or select a different decision.

Fourth, the verbal and nonverbal communication should be bidirectional; that is, the human and agent can send and receive messages.

Finally, communication must be task-oriented. That is not to say that social-oriented communication would not be beneficial; however, it was beyond the scope of this study.

The aim of the scenario. In the scenario-based activity, the human and the agent (a virtual scientist called Charlie) needed to collaborate to trap a virtual animal (called Yernt) for scientific research, see

Figure 7.

The human–IVA interaction procedure. The animal is surrounded by eight regions (four pairs of regions). To achieve the collaborative goal, the human and the agent take consecutive turns to select one region at a time to build a fence around the animal and then observe each other’s action (i.e., nonverbal behaviour). Choosing the region requires individual situation awareness and planning as well as collective negotiation and communication. During the activity, there is two-way communication; both parties exchange verbal messages to convey their intention and to request a recommended selection (that is, where to build the fence next) from their counterpart. A round or cycle is complete each time both the human and the IVA have finished their turn to select a region. Hence, the scenario consists of four cycles until the animal is surrounded by the fence. Dividing the collaborative scenario into cycles or rounds is useful for understanding the progress of the study variables. This idea was also used in some other human–agent studies (e.g., [

77]).

In the scenario, the human and the agent should be able to select only neighbouring regions. Neighbouring regions are those adjacent to already selected regions. Any cycle, except the first one, will include exactly two available neighbouring regions. Log files are used to track whether the human demonstrates commitment to their promise of acceptance by actually performing the action.

Supporting the features of collaborative activities. Our scenario met the requirements presented in [

76] and introduced above in the following ways:

Sharing of the basic facts about the task: At the beginning of the scenario the agent stated the aim of the task to make sure the human partner is aware of what to do.

Inferences about the task: After each cycle, the agent proposes a recommendation to the human to consider before making the decision about which region to select. The IVA’s recommendation is accompanied by a justification to explain why the IVA believes that this selection is the best option. The human has the option either to reject the IVA’s recommendation or to accept it and promise to honour this acceptance.

Problem-solving strategy: the agent uses a particular strategy to select the target region. In our scenario, the human and the IVA need to trap the animal under a given time constraint, and so, the agent should always select the neighbouring regions with the shortest path to where the agent stands. Regarding the recommendation to the human teammate, the agent calculates the shortest path to the human’s last selection.

Sharing information about positions: after the completion of each cycle, the agent gives verbal/textual feedback about the human’s selection and the next target. During the scenario, the human is able to observe the IVA’s nonverbal communication, as represented in the actions taken and/or gestures, and the IVA’s intention can be deduced from these actions. Furthermore, both the IVA and the human communicate by exchanging messages. These messages are selected from a pool of messages. In each cycle, at the beginning of the human’s turn, the IVA recommends one neighbouring region for the human to select. The recommended region is nominated so that the other remaining neighbouring region would be close to where the IVA is currently standing.

Knowledge representation codes: the selected regions have a different colour to the unselected and the neighbouring regions. To make this clear for human users, each region is identified by a coloured marker. Markers can be red, green, or grey. Red means a region is unselected and cannot be selected, because it is not yet a neighbouring region. Green means a region is unselected and can be selected, because it is a neighbouring region. Grey means a region has already been selected and cannot be selected again.

Interaction rules: Turn-taking was managed so that the human and the IVA alternated. At the beginning of the agent’s turn, the agent asks the human partner to propose a recommendation for the agent to consider. The agent has the right to accept or reject this recommendation. When it comes to the human’s turn, the agent proposes a recommended region to select. The human may ask the agent to give a reason for the recommendation. It is the human’s right to accept or reject the recommendation.
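The marker colours and the agent’s selection strategy described in the list above can be sketched in a few lines. This is an illustration under stated assumptions rather than the Unity3D implementation: the eight regions are assumed to form a ring indexed 0 to 7, the first pick is assumed to be unconstrained, and the "shortest path" is approximated by the distance around the ring. All function names are hypothetical.

```python
N_REGIONS = 8  # the scenario has eight regions; the ring layout is an assumption


def neighbours(selected):
    """Unselected regions adjacent to an already selected region."""
    if not selected:
        return set(range(N_REGIONS))  # assumption: the first pick is unconstrained
    adjacent = set()
    for r in selected:
        adjacent.add((r - 1) % N_REGIONS)
        adjacent.add((r + 1) % N_REGIONS)
    return adjacent - set(selected)


def marker_colour(region, selected):
    """Grey = already selected, green = selectable neighbour, red = not yet selectable."""
    if region in selected:
        return "grey"
    if region in neighbours(selected):
        return "green"
    return "red"


def ring_distance(a, b):
    """Number of steps between two regions around the ring (stand-in for path length)."""
    d = abs(a - b) % N_REGIONS
    return min(d, N_REGIONS - d)


def agent_choice(selected, agent_at):
    """Neighbouring region closest to where the agent stands (lowest index on ties)."""
    return min(sorted(neighbours(selected)), key=lambda r: ring_distance(r, agent_at))


selected = {0, 1, 7}
colours = [marker_colour(r, selected) for r in range(N_REGIONS)]
print(colours, agent_choice(selected, agent_at=1))
```

With a contiguous block of selected regions on a ring, exactly two regions are green at any time, which matches the statement that every cycle after the first offers exactly two neighbouring regions.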

The goal, rules, sequences, and possible actions in our scenario are specific to the collaborative task we have designed. However, that is true of most tasks. The user is briefed at the start regarding the task’s goals and rules of engagement. The scenario encompasses all of the elements in the human–agent multimodal communication model we have developed, including negotiation, planning, decision-making, and situation awareness by the IVA.

4.5. Participants

Human–IVA teamwork is not confined to certain ages. Thus, the studies were conducted using two different age groups: secondary school children and undergraduate university students.

The first study involved the voluntary participation of 73 second-year undergraduate students enrolled in a biology unit. A total of 66 out of 73 completed the collaborative task. Seven students were excluded, because they chose not to complete the task in the virtual scenario or did not complete the post-session survey. The data of these seven students were excluded from the evaluation except in calculating the ratio of those who did not complete the task to the total number. The participants were aged between 18 and 49 years (mean = 21.9; SD = 5.12). Concerning the participants’ linguistic skills, 92.42% were native English speakers. The non-native English speakers had been speaking English on a daily basis for 14.4 years on average. Regarding computer skills, 21.21% of the participants described themselves as having basic computer skills and 16.67% as having advanced skills, while 62.12% said they had proficient computer skills. Concerning their experience in using games and other 3D applications, the participants answered the question “How many hours a week do you play computer games?” with times ranging between 0 and 30 h weekly (mean = 4.24, SD = 6.66).

In the second study, 20 secondary school students volunteered to participate in the study. Five students did not complete the post-session survey. The participants were in a grade/year 8 class and aged between 13 and 14 years (mean = 13.5). We were not able to ask about their English language competency. All of the children were familiar with using computers and playing computer games. The participants’ linguistic and computer skills were surveyed to explore if any struggle in the communication with the IVA was because of the lack of linguistic or computer skills.

5. Results

Our first data analysis involved a number of tests for normality (

Section 5.1). In order to explore whether the study variables were interrelated, a correlation matrix was utilised (

Section 5.2). To answer the first three research questions, multiple regression analysis was used (

Section 5.3,

Section 5.4 and

Section 5.5). The fourth research question aimed to study the impact of trust on the development of commitment, while the fifth research question aimed to investigate the impact of commitment on human–IVA team performance. Linear regression was used to analyse the data and answer these questions (

Section 5.6 and

Section 5.7).

Section 5.8 looks at the development of taskwork SMM, teamwork SMM, trust, commitment, and team performance over time.

5.1. Normality Test for Study Variables

Normality tests are statistical tests used to check if study variables are approximately normally distributed. In this study, because the number of participants was not large, there was a need to test if the results of their responses were normally distributed. The normality of the data determined whether parametric or non-parametric statistical tests should be used in analysing the collected data. We used three normality tests as follows:

(1) Skewness and kurtosis z-values (for a normally distributed variable, these should lie in the span of −1.96 to +1.96).

(2) The Shapiro–Wilk test p-value (for a normally distributed variable, this should be above 0.05).

(3) Histograms, normal Q–Q plots, and box plots (these should visually indicate that the data were normally distributed).

The first normality test measured skewness and kurtosis (see

Table 2). The result showed that there was little kurtosis for all the variables, but they did not differ significantly from normality. However, for the skewness measurement the results showed that the

z-values of two variables, teamwork SMM and verbal communication (−2.331 and −2.070, respectively), fell outside the span of −1.96 to +1.96.

The second normality test was the Shapiro–Wilk test. The null hypothesis of this test is that the variable is normally distributed. The null hypothesis is rejected if the

p-value was below 0.05. The results of Shapiro–Wilk normality test (see

Table 2) showed that for teamwork SMM and verbal communication the

p-values (0.23 and 0.045, respectively) were less than 0.05.

Based on the results of skewness and kurtosis, as well as the Shapiro–Wilk test, we concluded that the two variables teamwork SMM and verbal communication were not normally distributed. Hence, the statistical tests used to answer the research questions should be non-parametric.
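The skewness z-value check used in the first normality test can be sketched as follows. The sketch assumes the bias-adjusted sample skewness and its usual standard error, as commonly reported by statistical packages; the exact formulas used by the software in this study are not stated in the text, so this is an assumption. The function names are hypothetical, and the sample needs at least three non-identical values.

```python
import math


def skewness_z(values):
    """z-value of skewness: adjusted sample skewness divided by its standard error."""
    n = len(values)
    mean = sum(values) / n
    s = math.sqrt(sum((x - mean) ** 2 for x in values) / (n - 1))  # sample SD
    g1 = n / ((n - 1) * (n - 2)) * sum(((x - mean) / s) ** 3 for x in values)
    se = math.sqrt(6 * n * (n - 1) / ((n - 2) * (n + 1) * (n + 3)))
    return g1 / se


def within_normal_span(z, limit=1.96):
    """True if the z-value lies in the span -1.96 to +1.96."""
    return abs(z) <= limit


# The two skewness z-values reported above both fall outside the span.
print(within_normal_span(-2.331), within_normal_span(-2.070))
```

The same ratio of statistic to standard error applies to kurtosis, with its own statistic and standard error.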

5.2. Correlation Results

To measure the strength and direction of the association between the seven variables, Spearman’s rho correlation method was used. Spearman’s rho correlation was selected as it is more appropriate for small samples or non-normally distributed responses. A correlation test evaluates the null hypothesis that the population correlation is zero against the alternative hypothesis that the population correlation does not equal zero. A correlation of −1 indicates a perfect descending linear relationship, while a correlation of 1 indicates a perfect ascending linear relationship.
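As an illustration of the method, Spearman’s rho can be computed as the Pearson correlation of the ranked values, with tied values sharing their average rank. The sketch below uses only the standard library; the function names and the sample values are ours and are not taken from the study. Because it operates on ranks, the coefficient strictly reflects monotonic rather than strictly linear association.

```python
import math


def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Invented scores: a monotonic but non-linear relation still gives rho = 1.
print(spearman_rho([1, 2, 3, 4, 5], [1, 4, 9, 16, 25]))
```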

Table 3 presents the means, standard deviations, and correlations for all measures. A correlation (

r) that differs significantly from zero rejects the null hypothesis and supports the alternative hypothesis that there was a linear relationship. As shown in

Table 3, verbal and nonverbal communication methods between humans and IVAs were significantly positively related to human–IVA taskwork SMM (

r = 0.901,

p < 0.01 and

r = 0.871,

p < 0.01, respectively). This result supports the alternative hypothesis that verbal and nonverbal communication are linearly related to taskwork SMMs.

In addition, the results showed that verbal and nonverbal communication methods between humans and IVAs were significantly positively related to human–IVA teamwork SMM (

r = 0.877,

p < 0.01 and

r = 0.860,

p < 0.01, respectively). This result supports the alternative hypothesis that verbal and nonverbal communication are linearly related to teamwork SMMs. Moreover, the results showed that taskwork SMM and teamwork SMM were significantly positively related to the human’s trust in the IVA (

r = 0.825,

p < 0.01 and

r = 0.691,

p < 0.01, respectively). The result showed that teammate trust was significantly positively related to task commitment (

r = 0.971,

p < 0.01), suggesting a positive association between the human’s trust in the IVA teammate and the human’s commitment to complete the shared task. Furthermore, the results in

Table 3 show that the human’s commitment to accomplish the shared task had a significant positive relationship with human–IVA team performance (

r = 0.941,

p < 0.01), suggesting a positive association between the human’s commitment to complete the task with the IVA and his/her perception of accomplishment.

5.3. Effect of Verbal and Nonverbal Communication on Taskwork SMM

Table 4 shows that 81.4% of the variance in taskwork SMM is accounted for by verbal and nonverbal communication between the human and the IVA. To assess the overall statistical significance of this relationship, the result of multiple regression indicated that both verbal and nonverbal communication were significant

R² = 0.814,

F (2, 13) = 33.80,

p < 0.01. The results thus answer the first research question in the affirmative. Furthermore, to evaluate which one of the two factors (i.e., IVA’s verbal or nonverbal communication) contributes more to taskwork SMM, regression results, as shown in

Table 4, indicated that the standardised coefficient

β of IVA’s nonverbal communication (0.839) was greater than the standardised coefficient

β of the verbal communication (0.082), suggesting a stronger effect for nonverbal over verbal communication.

5.4. Effect of Verbal and Nonverbal Communication on Teamwork SMM

Regression analysis showed that 89.1% of the variance in teamwork SMM could be accounted for by verbal and nonverbal communication between the human and IVA. To assess the overall statistical significance of the relationship, the result of multiple regression showed that both verbal and nonverbal communication were significant, R² = 0.891, F (2, 13) = 62.07, p < 0.01. The results thus answer the second research question in the affirmative.

Furthermore, to evaluate which one of the two factors (i.e., IVA’s verbal or nonverbal communication) contributes more to teamwork SMM, the results, as shown in

Table 4, indicated that the standardised coefficient

β of IVA’s verbal communication (0.752) was greater than the standardised coefficient

β of the nonverbal communication (0.210), suggesting a stronger effect for verbal over nonverbal communication.

5.5. Impact of Taskwork and Teamwork SMM on Human Trust

The results showed that 66.6% of the variance in the human’s trust could be accounted for by a taskwork and teamwork SMM between the human and the IVA. To assess the overall statistical significance of this relationship, the results indicated that both a taskwork and teamwork SMM were significant, R² = 0.666, F (2, 13) = 15.98, p < 0.01. The results thus answer the third research question in the affirmative.

Furthermore, to evaluate which one of the two factors (i.e., taskwork and teamwork SMM) contributes more to a human’s trust, regression results, as shown in

Table 4, indicated that the standardised coefficient

β of taskwork SMM (0.842) was greater than the standardised coefficient

β of teamwork SMM (0.001), suggesting a stronger effect for taskwork SMM over teamwork SMM with regard to human trust.

5.6. Relationship between Trust and Commitment

The simple linear regression result, see

Table 4, showed that 97.3% of the variance in task commitment could be accounted for by teammate trust. To assess the overall statistical significance of this relationship, the result indicated that teammate trust significantly affected task commitment

R² = 0.973,

F (2, 13) = 834.86,

p < 0.01.

5.7. Relationship between Commitment and Team Performance

The results, see

Table 4, showed that 92.5% of the variance in team performance could be accounted for by task commitment. To assess the overall statistical significance of this relationship, the result indicated that task commitment significantly impacted team performance

R² = 0.925,

F (2, 13) = 185.99,

p < 0.01.

5.8. The Development of Taskwork SMM, Teamwork SMM, Trust, Commitment, and Team Performance over Time

To study how the dependent variables evolved over time, data collected from the session log file were used to explore the human’s behaviour with respect to these variables during four consecutive cycles of interaction. Descriptive analysis results, as can be seen in

Figure 8, showed that taskwork SMM progressively increased from 42.51% in the first cycle to 83.33% in the last cycle. Meanwhile, teamwork SMM increased from 66.67% to 88.40%. Additionally, the humans’ trust in the IVA’s decision was 33.33% in the first cycle and continued to increase to 57.14% in the last cycle. Meanwhile, the human’s commitment to execute a promised decision also progressively increased from 38.15% in the first cycle to 71.43% in the last one. Concerning team performance, the results showed a decline in the ratio of the time needed to complete each cycle to the total time needed to complete the task, from 42.51% in the first cycle to 9.57% in the last one. This decline in time ratio indicates an improvement in team performance.

6. Discussion

Integrating agents and humans to perform a dynamic task remains challenging due to many reasons including ensuring robust performance in the face of a dynamic environment, providing abstract task specifications without all the low-level organisation details, and finding appropriate agents to be included in the overall system [

78]. Performance robustness and agent inclusion appear to rely on the agent behaving like a team companion to gain the trust of the human. Many studies in human–IVA teamwork have concluded that the trust between humans and IVAs relies on the information exchanged while achieving the target task [

79]. Harbers et al. [

80] argued that explaining the behaviour of the agent in human–agent teams can contribute to team performance in two ways. First, from a team dynamic point of view, when IVAs communicate the state of progress of the activities, they can adjust their actions to meet coordination needs. Second, from an interaction point of view, explanations can increase trust in a team member. Nevertheless, these studies did not show how the communication influenced trust.

According to Rousseau et al. [

81], there are three types of behaviours related to collaborative tasks: (1) information exchange or communication behaviour, (2) cooperation or contribution behaviour, and (3) integrating or coordination behaviour. Information exchange refers to the ease of sending and receiving information related to the interdependent task. Cooperation is defined as the contribution of team members toward the completion of the interdependent task. Coordination refers to integrating and synchronising individual activities to ensure task accomplishment. Although communication behaviour is considered a separate activity in a collaborative task, communication is well documented as a requirement for cooperation and coordination. Communication allows team members to exchange information about their knowledge, expertise, and thoughts that are crucial to the success of the shared task [

82].

Ferguson and Allen [

14] stated that communication is a requirement for IVAs that need to collaborate with humans. While exchanging verbal information is important in collaborative situations, an intelligent agent that could handle nonverbal information properly would improve the quality of interactions between the agent and the human users [

83]. The study of [

48] investigated the influence of communicating shared goals and beliefs on the performance of a team of agents involved in an interdependent task. The results showed that, generally, communicating goals and beliefs positively influenced the performance of a team of agents. This research did not investigate a human–agent team and so did not consider knowledge about the teammate as a factor to study, because the teammates were agents only.

Currently, while a vast number of studies address the multimodal cues between humans and IVAs in collaborative contexts, the majority of them are devoted to analysing how humans perceive these cues. Several studies have explored verbal communication [

41]. Nonverbal communication cues have given rise to an entire field of study [

84] including facial expression [

85], eye-gaze [

86], head pose [

87], gesture [

88], and smile [

89].

This paper aimed to investigate crucial factors in human–IVA heterogeneous teamwork. These factors are communication, SMM, trust, and commitment and their impact on team performance. To achieve this aim, five research questions were posed. In answer to the first research question, the result showed a significant positive association between the IVA’s communication (i.e., verbal and nonverbal) and the human teammate’s perception of a taskwork SMM. To answer the second part of the research question about which method is more effective in building a taskwork SMM, the results demonstrated that the IVA’s nonverbal communication tends to contribute more towards the prediction of taskwork SMM than verbal communication. This finding suggests that humans are likely to build their understanding of a situation and the nature of the problem based on the actual actions (nonverbal behaviour) of their teammates rather than their teammates’ expressed thoughts (verbal behaviour).

A number of research studies have stressed the role of nonverbal communication on the development of a taskwork SMM. In their study about human–robot interaction, Breazeal et al. [

90] found that people tend to develop a task-based SMM with robots that interact with humans from both explicit and implicit nonverbal communication. Explicit nonverbal communication is used when there is an intention to communicate information to the human via actions, such as nods of the head and deictic gestures, while implicit nonverbal communication includes how the robot behaves as it carries out the task. Using these descriptions, our experiment used implicit nonverbal communication. Consistent with Breazeal et al.’s finding, Eccles and Tenenbaum [

91] studied the relationship between communication and SMMs in human teams and suggested that the task and context characteristics depend on communication and particularly nonverbal communication. Although the relationship between nonverbal communication and the perception of taskwork SMM was studied in the human–human context, this was the first time this relationship has been identified in human–IVA teamwork.

In answer to the second research question, the result demonstrated a significant positive association between both the IVA’s verbal and nonverbal communication and the perception of teamwork SMM as perceived by the human. This result is consistent with the findings of other researchers, who found that human involvement with IVAs was likely to increase the possibilities of communication with IVAs [

92]. Moreover, to answer the second part of the research question about which method is more effective in building teamwork SMM, the result showed that the IVA’s verbal communication tends to contribute more to the human’s perception of teamwork SMM. This finding suggested that the exchanged messages give a better understanding of the teammate’s thoughts and capabilities. Previous studies in human teams suggested that the establishment of shared knowledge about the teammate is more likely to occur through verbal communication [

93]. Yet, this claim was not tested in human–IVA teams. Our results support this finding and extend it to human–IVA teams.

The third research question inquired whether the existence of taskwork and teamwork SMMs between humans and IVAs are likely to influence the human’s trust in an IVA. Some research work (e.g., [

7]) has demonstrated a direct relationship between teammate communication and trust, positing that effective communication improves the feeling of trust. However, this work did not explain how communication influences the development of trust between team members. Some studies took a step further to demonstrate the indirect effect of communication on creating a state of trust [

64,

94]. These studies identified that the development of trust between team members is a matter of exchanging norms, experience, and common knowledge. In their study with 35 human teams, Wu and Wu [

95] found that intra-communication between team members was positively related to the existence of an SMM. In addition, their results showed that SMM was positively related to the feeling of satisfaction and trust between team members. Our finding is in line with the work reporting that SMM appears to strengthen and unify teams in VE. This affirmative relationship between SMM and building trust is consistent with previous work that indicated SMM between teammates in the workplace tends to foster trust between team members [

96].

The fourth research question inquired whether human trust in the IVA’s decision and recommendation is likely to affect the human’s commitment to accomplish the shared task with the IVA. The results of the correlation matrix, as well as the linear regression analysis, showed a positive association between the human’s trust in IVAs and the fulfilment of their pledges to IVAs. These results affirmatively answer the fourth research question. This finding appears to be inconsistent with the result in [

54], which indicated that human commitment was likely to stay low during a collaborative activity with IVAs. This inconsistency may be explained by two features of the study in [

54]: the agent was not visible to the humans, and the communication was very simple, so it may not have engendered a sense of a shared goal. On the other hand, our result is consistent with other studies, which reported that satisfaction with the communication provided in a virtual team system tends to significantly increase the level of involvement, trust, and commitment [

97]. This finding sheds light on the importance of an IVA’s multimodal communication, and especially its nonverbal elements, in increasing the human’s feeling of believability.

The last research question asked whether human commitment to accomplish the task affects human–IVA team performance. The results showed that an increase in human commitment is positively associated with team performance. This result is consistent with other studies in virtual teams that found that team commitment influences team satisfaction and performance [

98,

99]. This finding indicates that human commitment to a team including IVA team members has the same positive influence on team satisfaction and performance as has been found for virtual teams of humans.

The results of continuous monitoring of taskwork SMM, teamwork SMM, trust, commitment, and human–IVA team performance showed a synchronous increase in these variables over time. Several projects have proposed IVA architectures to increase IVA believability. These architectures incorporate social and collaborative capabilities [

73], appraisal/emotion [

100], adaptiveness [

101], and personality [

102]. Nevertheless, with the increasing interest in designing and creating an IVA that is able to work with humans in teamwork, these architectures could be further extended to include teamwork skills. The results of this study suggest that the inclusion and monitoring of human–IVA communication and the development of taskwork and teamwork SMMs are important to foster human trust in and commitment to their IVA teammate. This commitment would effectively increase team performance.

7. Conclusions and Future Work

This paper investigated the impact of an IVA’s multimodal communication on building a bridge consisting of SMM, trust, and commitment from the human’s side to the IVA’s. Moreover, the paper indicated the positive influence of both trust and commitment on human–IVA team performance. Our main finding was that high-performing heterogeneous human–IVA teams use a combination of cognitive (i.e., a SMM), behavioural (i.e., communication), and social (i.e., trust and commitment) aspects to produce the desired outcomes and performance. We argued that SMM, which is a fundamental element in human teams, is an important notion to consider when developing an IVA to be a teammate with a human. However, SMM is a very complex concept, which makes its direct effect on final team performance difficult to demonstrate. Hence, this study aimed to analyse the indirect relationship between SMM and human–IVA team performance. Although many studies have investigated some factors that foster human–IVA teamwork, this study aimed to go beyond finding the relation between two variables and to collect some of the pieces that make up the puzzle of human–IVA teams.

While this study was limited to a single task-based scenario, the findings from our two studies with different populations suggest that a human–IVA SMM can help to develop human trust in an IVA teammate and improve the results of human–IVA team performance. Therefore, we believe that measuring the existence and development of a SMM over time should be considered when creating or extending existing agent architectures. Furthermore, as there is a higher demand for IVAs capable of interacting with humans in a team context, SMM and trust need to be considered in existing agent architectures (e.g., FAtiMA (FearNot! Affective Mind Architecture) agent architecture [

103]). In the FAtiMA agent architecture, a new submodule could be included in the deliberative level in both the appraisal and coping modules. The deliberative level in the appraisal module needs to continuously sense/monitor the development of a SMM between humans and agents while achieving the collaborative task. In the situation where the agent senses a fluctuation in the development or maintenance of a SMM with humans, the agent needs to employ the deliberative level of the coping module, which will consequently manipulate the agent’s effectors to employ multimodal communication to foster a SMM.
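
The monitoring and coping behaviour described above can be illustrated with a minimal Python sketch. It is not part of FAtiMA or of the architecture used in this study: the class `SMMMonitor`, the function `choose_coping_actions`, the action labels, the window size, and the drop threshold are all hypothetical, and the sketch assumes that the SMM can be summarised as a single score in [0, 1] sampled repeatedly during the task.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class SMMMonitor:
    """Hypothetical appraisal-side submodule that tracks SMM scores over time."""
    window: int = 3               # assumed number of past samples used as a baseline
    drop_threshold: float = 0.1   # assumed drop that counts as a fluctuation
    history: List[float] = field(default_factory=list)

    def observe(self, smm_score: float) -> None:
        # Record the latest SMM estimate (assumed to lie in [0, 1]).
        self.history.append(smm_score)

    def fluctuation_detected(self) -> bool:
        # Compare the latest score with the mean of the preceding window.
        if len(self.history) <= self.window:
            return False
        baseline = mean(self.history[-self.window - 1:-1])
        return baseline - self.history[-1] > self.drop_threshold


def choose_coping_actions(monitor: SMMMonitor) -> List[str]:
    """Hypothetical coping-side rule: request multimodal communication on a drop."""
    if monitor.fluctuation_detected():
        return ["verbal_explanation", "nonverbal_cue"]
    return []


if __name__ == "__main__":
    monitor = SMMMonitor()
    for score in [0.8, 0.8, 0.8, 0.8, 0.5]:
        monitor.observe(score)
        print(score, choose_coping_actions(monitor))
```

In this sketch the appraisal side only detects a drop relative to a recent baseline, and the coping side only selects communication acts; how the SMM score is estimated and how the effectors realise the acts are left open.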

Since the work reported in this paper, we have created a new scenario with different tasks that implements an extended version of our agent architecture and HAT-CoM to incorporate IVA personality [

104]. As future work, more scenarios and tasks, including socially oriented tasks, should be designed and implemented to further validate and refine our architecture and communication model. Studies comparing the human–agent interaction modelled with human–human or agent–agent interaction using the same platform could reveal what interaction differences may exist. Moving beyond a single agent and single human would be another avenue to explore which would draw on the wider literature on norms and emergent behaviours of crowds, organisations, and societies (e.g., [

105]). Another possible future study is to incorporate the Unity ML-Agents (Machine Learning Agents) toolkit available for Unity 3D. Agents built with this tool can be trained using deep reinforcement learning and other learning methods through a Python application programming interface (API).

Future studies should investigate the effect of trust on the preference for certain methods of communication (i.e., verbal or nonverbal). A number of studies of human teamwork indicated that there is an association between an agent’s trust and the preferred communication technique [

106]. In addition, a study is required to examine how the quantity and the quality of communication distinguish better-performing from worse-performing teams.