On the Use of ROMOT—A RObotized 3D-MOvie Theatre—To Enhance Romantic Movie Scenes

Abstract

1. Introduction

2. Materials and Methods

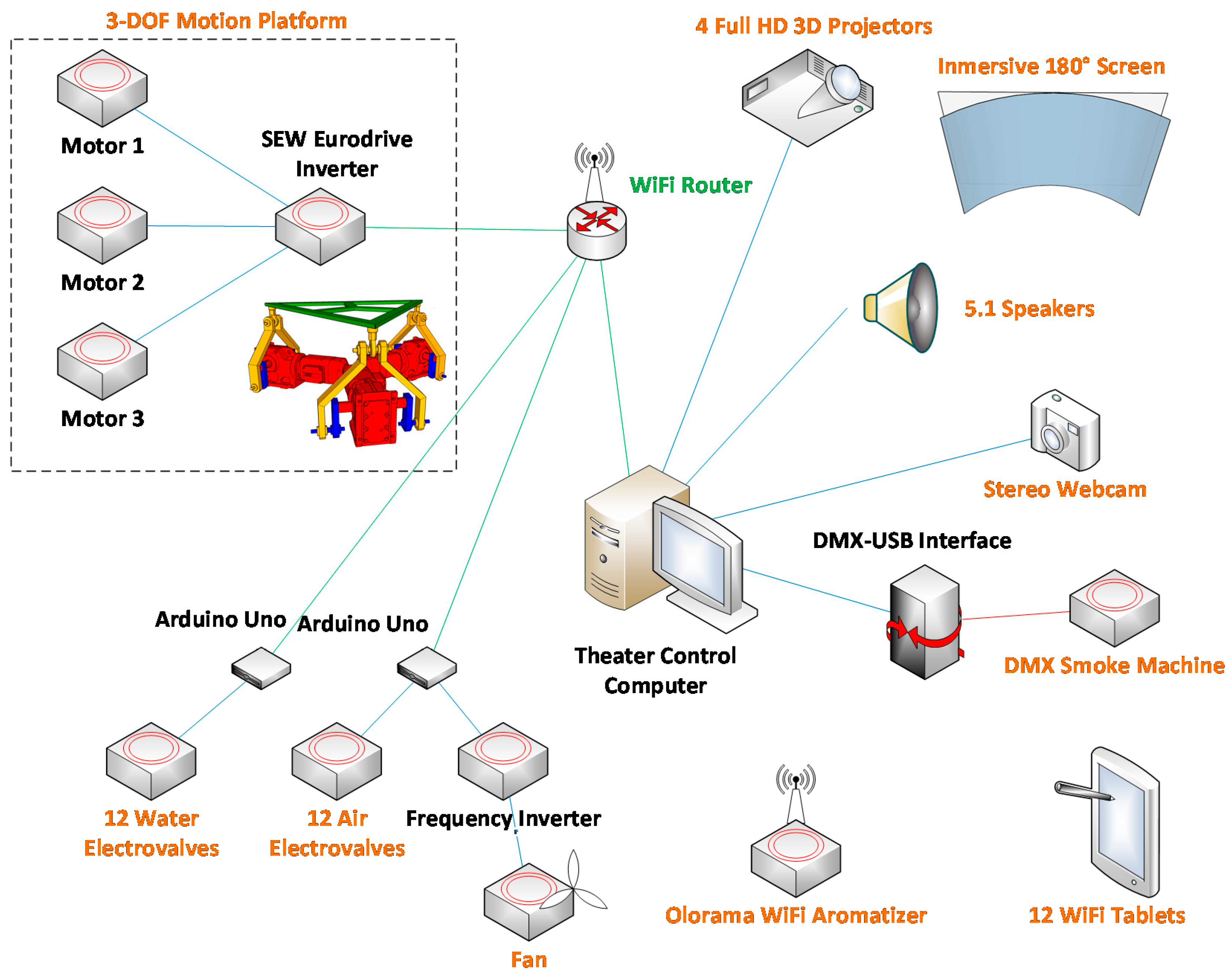

2.1. Hardware

- An olfactory display. We used the Olorama (Valencia, Spain) wireless aromatizer [20]. It features 12 scents arranged in 12 pre-charged channels that can be chosen and triggered by means of a UDP packet. The device is equipped with a programmable fan that spreads the scent around. Both the intensity of the chosen scent (amount of time the scent valve is open) and the amount of fan time can be programmed.

- A smoke generator. We used a Quarkpro QF-1200 (Madrid, Spain). It is equipped with a DMX interface, so it is possible to control and synchronize the amount of smoke from a computer, by using a DMX-USB interface such as the Enttec Open DMX USB [21] (Melbourne, Australia).

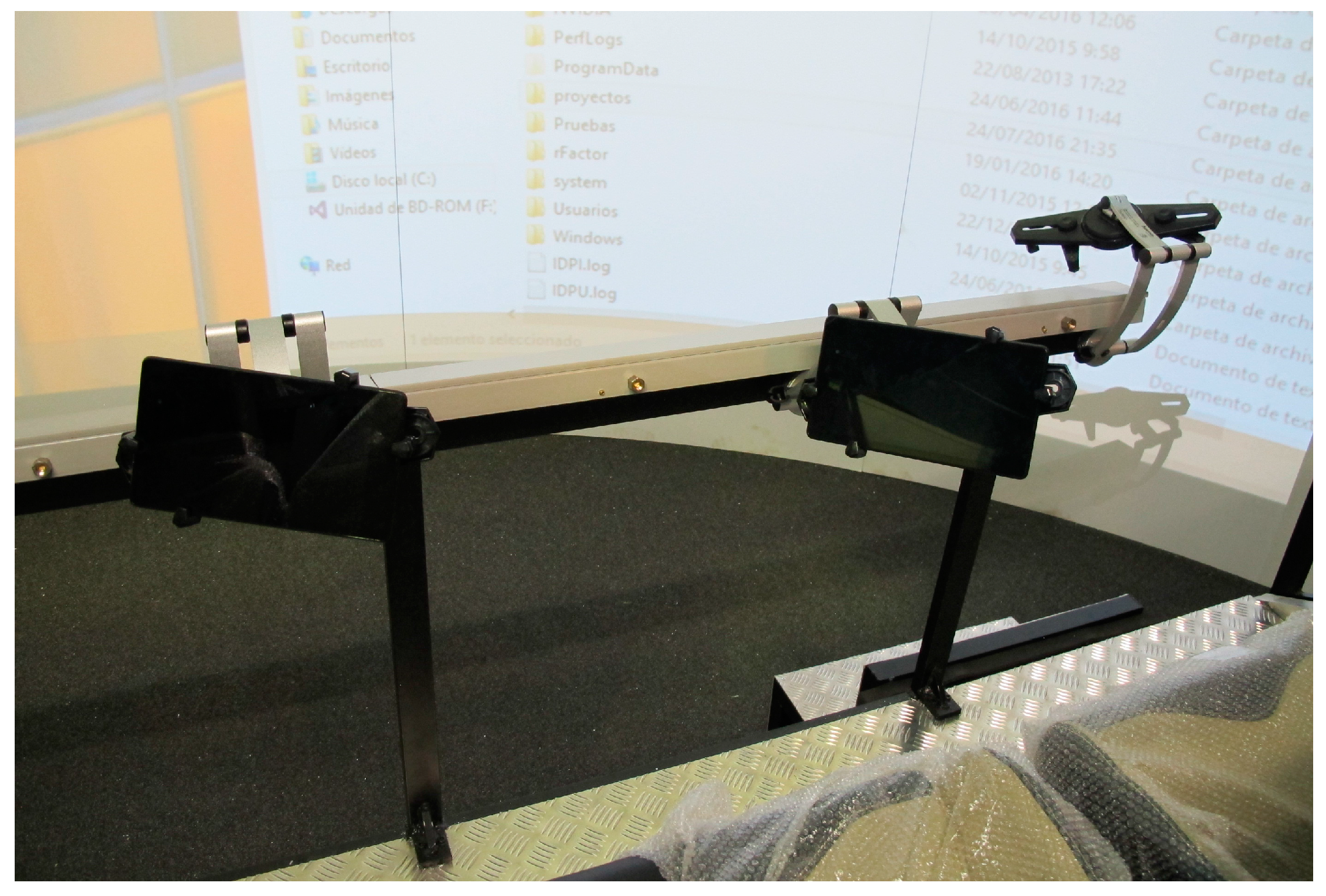

- Air and water dispensers. A total of 12 air and water dispensers (one for each seat) (Figure 3). The water and air system was built using an air compressor, a water tank, 12 air electro-valves, 12 water electro-valves, 24 electric relays and two Arduino Uno boards (Ivrea, Italy), which allow the PC to control the relays and open the electro-valves to spray water or release air.

- An electric fan. This fan is controllable by means of a frequency inverter connected to one of the previous Arduino Uno devices.

- Projectors. A total of four full HD 3D projectors. Blending techniques are required to merge their corresponding images.

- Glasses. A total of 12 pairs of 3D glasses (one for each person).

- Loudspeaker. A 5.0-channel loudspeaker system to produce binaural sound.

- Tablets. A total of 12 individual tablets (one for each person).

- Webcam. A stereoscopic webcam, used to build an augmented reality mirror-based environment.

- Sight: they can see a 3D representation of the scenes on the curved screen and through the 3D glasses; they can see additional interactive content on the tablets; they can see the smoke.

- Hearing: they can hear the sound synchronized with the 3D content.

- Smell: they can smell essences at certain events. For instance, when a car crashes, they can smell the smoke. In fact, they can even feel the smoke around them.

- Touch: they can feel the touch of air and water on their bodies; they can touch the tablets.

- Kinesthetic: they can feel the movement of the 3-DOF platform.
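As a rough illustration of how a PC could address two of the devices listed above, the following sketch builds a UDP payload for a scent trigger and a DMX512 frame for the smoke generator. The text payload layout (`SCENT <channel> <valve_ms> <fan_ms>`), the function names, and any host, port, or DMX channel number a caller would pass in are hypothetical: the actual Olorama packet format and the QF-1200 channel map are not described here. Only the general DMX512 frame layout (a zero start code followed by 512 one-byte channel levels) is standard.

```python
import socket
from typing import Dict

DMX_CHANNELS = 512  # size of one DMX512 universe


def build_scent_packet(channel: int, valve_ms: int, fan_ms: int) -> bytes:
    """Encode a scent trigger as a UDP payload.

    HYPOTHETICAL format, chosen only for illustration:
    "SCENT <channel> <valve_ms> <fan_ms>" as ASCII text.
    """
    if not 1 <= channel <= 12:
        raise ValueError("channel must be in 1..12 (12 scent channels)")
    if valve_ms < 0 or fan_ms < 0:
        raise ValueError("durations must be non-negative")
    return f"SCENT {channel} {valve_ms} {fan_ms}".encode("ascii")


def send_udp(packet: bytes, host: str, port: int) -> None:
    """Send one datagram; host and port are supplied by the caller."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


def build_dmx_frame(levels: Dict[int, int]) -> bytes:
    """Build a DMX512 frame: start code 0x00 plus 512 channel bytes.

    `levels` maps 1-based DMX channel numbers to values in 0..255.
    Channels that are not listed stay at 0.
    """
    frame = bytearray(1 + DMX_CHANNELS)  # index 0 is the start code
    for channel, level in levels.items():
        if not 1 <= channel <= DMX_CHANNELS:
            raise ValueError("DMX channel must be in 1..512")
        if not 0 <= level <= 255:
            raise ValueError("DMX level must be in 0..255")
        frame[channel] = level
    return bytes(frame)
```

Keeping packet construction separate from transmission means the encoding can be checked without hardware attached. Writing the DMX frame to a DMX-USB interface additionally requires the serial break and timing that the DMX512 standard specifies, which this sketch does not cover.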

2.2. Software

- Traditional movies: Traditional movies can be displayed in ROMOT, and they can also be viewed stereoscopically, provided that the videos have been recorded with a pair of cameras. First-person movies can be displayed with this setup as well, which broadens the ways to enhance users’ experiences, as viewers can feel like the protagonists of the movie. As a first example, and to prove the concept, a set of videos was recorded using two GoPro cameras to create a 3D movie set in the streets of a city. Most of the videos were filmed by attaching the GoPro cameras to a car’s hood in order to place the audience at the centre of the view, as if they were the protagonists of the journey. At every moment there is audio consisting of ambient sounds and/or a voice-over that reinforces the images the user is watching. The synchronized soft platform movements and effects, such as a pleasant smell or a gentle breeze, help to create a suitable ambience for each part of the movie, making the experience more enjoyable for the audience. Romantic and sexual content can also be recorded and displayed.

- Mixed reality environment: This setup combines recorded videos with virtual content, creating a mixed reality movie that helps the audience perceive the virtual content as if it were real and eases the transition from a real movie to a virtual situation. In this setup, the 3D virtual content (a 3D virtual character) interacts with parts of the video: its animation is authored so that it is synchronized with the content of the recorded real scene. Virtual shadows are also included to make the whole scene more realistic. This could have interesting applications for romantic scenes, mixing real filmed characters with virtual content.

- Virtual Reality Interactive Environment: This setup consists of a purely virtual environment that is also interactive, as the audience can give feedback to the system by means of the tablets integrated into each seat. As a first trial, we recreated a city where users could walk and drive, going through its streets and encountering other people and situations. Each situation was created using a storyboard that contains all of the content, camera movements, special effects, voice-overs, etc., so that, in the end, a set of situations was derived that could be part of a movie. In this case, we do not want the audience simply to look at the screen and enjoy a movie, but to feel each situation, to be part of it and to react to it. That is why platform movements and all of the other multimodal displays are so important. When each situation takes place, the audience can feel that they are driving inside the car or walking through the city, thanks to the platform movements that simulate the real movements. In some of the scenes, the virtual situation pauses and asks the audience for their collaboration. At that moment, the tablets vibrate and a question appears, giving the individual users some time to answer it by selecting one of the possible answers (related to driving, in this case). When the time is up, the system reports whether each answer was correct (in this case, there are right and wrong answers), and the virtual situation resumes, showing the consequences of a right or a wrong decision. When the situation finishes, the audience can see the final score on the large curved screen. The users with the highest score are the winners, and the system rewards them with a special visit: a 3D virtual character that congratulates them on their safe driving (see the next paragraph). The application to romantic/sexual content is straightforward, allowing, for instance, the creation of point of view (POV) immersive scenes with fictional characters.

- Augmented Reality Mirror-Based Scene: This setup consists of a video-based augmented reality mirror (ARM) [22,23] scene, which is also seen stereoscopically. ARMs can take user immersion a step further, as the audience can actually see a real-time image of themselves and feel that they are part of the created environment. This ARM environment is used in the final scenes of the aforementioned virtual reality interactive environment (previous sub-section), where the user(s) with the highest score get(s) rewarded by a virtual 3D character that walks towards him/her/them. Together with this action, virtual confetti and coloured stars appear in the environment, accompanied by winning music that includes applause. The application of ARM to romantic and sexual content is more controversial in terms of ethical issues, yet completely feasible from a technological standpoint.
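The timed question rounds described for the virtual reality interactive environment can be sketched as a small scoring routine: each seat answers within a time limit, correct answers add to that seat's score, and the seat or seats with the highest total are selected for the reward scene. The names `Question`, `score_round`, and `winners`, the rule of one point per correct answer, and the treatment of a late or missing answer as wrong are illustrative assumptions, not the ROMOT implementation.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Question:
    text: str
    options: Tuple[str, ...]
    correct: int         # index of the right option
    time_limit_s: float  # answers after this many seconds do not count


# seat number -> (chosen option index, seconds taken), or None if unanswered
Answers = Dict[int, Optional[Tuple[int, float]]]


def score_round(question: Question, answers: Answers,
                scores: Dict[int, int]) -> Dict[int, int]:
    """Return a new score table after one question round.

    Assumed rule: one point for a correct answer given in time.
    Seats that answer late, wrongly, or not at all keep their score.
    """
    updated = dict(scores)
    for seat, answer in answers.items():
        updated.setdefault(seat, 0)
        if answer is None:
            continue
        choice, elapsed = answer
        if elapsed <= question.time_limit_s and choice == question.correct:
            updated[seat] += 1
    return updated


def winners(scores: Dict[int, int]) -> List[int]:
    """Return the seats sharing the highest score, in ascending order."""
    if not scores:
        return []
    best = max(scores.values())
    return sorted(seat for seat, total in scores.items() if total == best)
```

Returning every seat that shares the top score, rather than a single seat, mirrors the possibility that more than one user is rewarded at the end of the session.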

2.3. Usability and Satisfaction Tests

3. Results

3.1. Description of the Selected Scenes

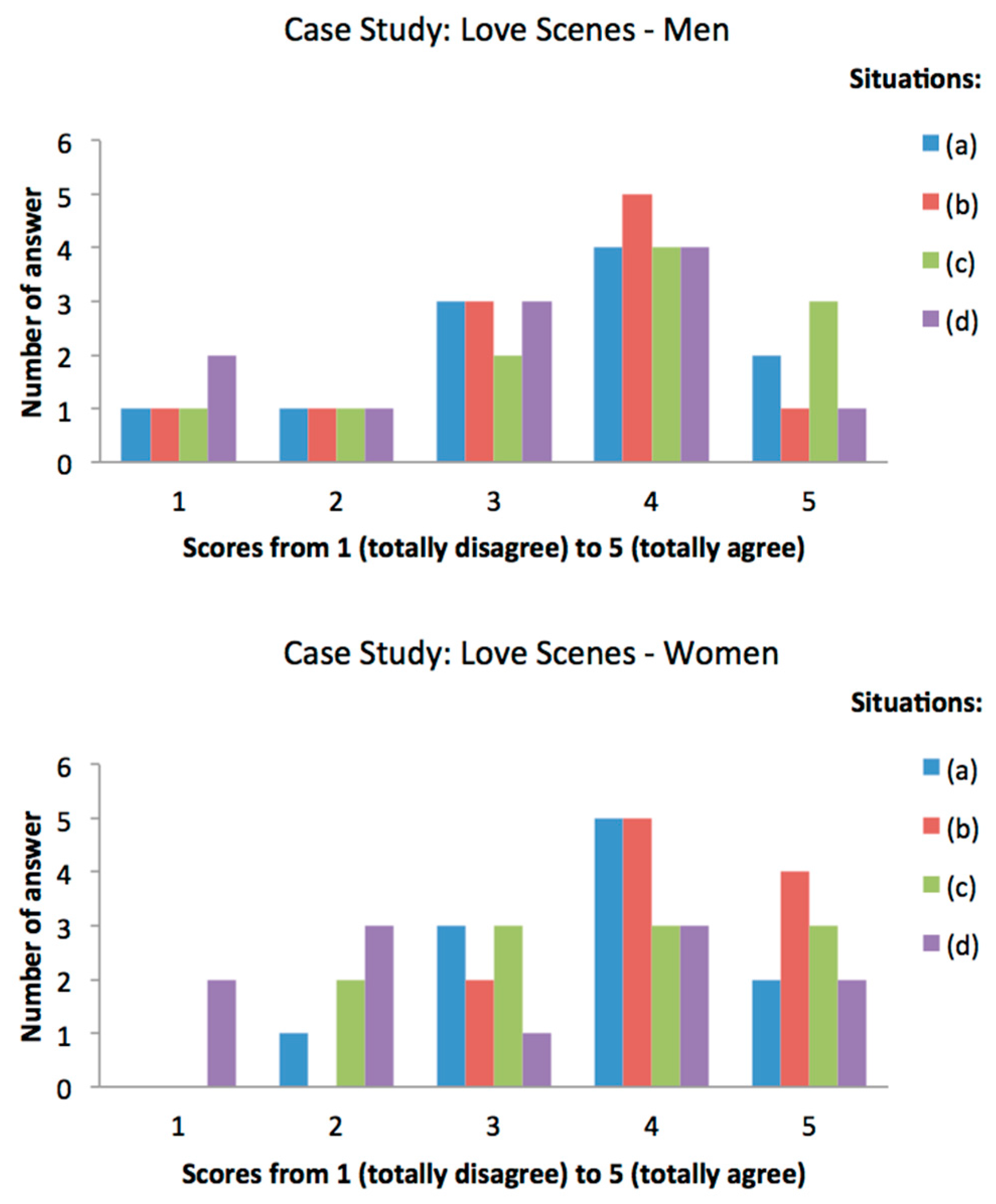

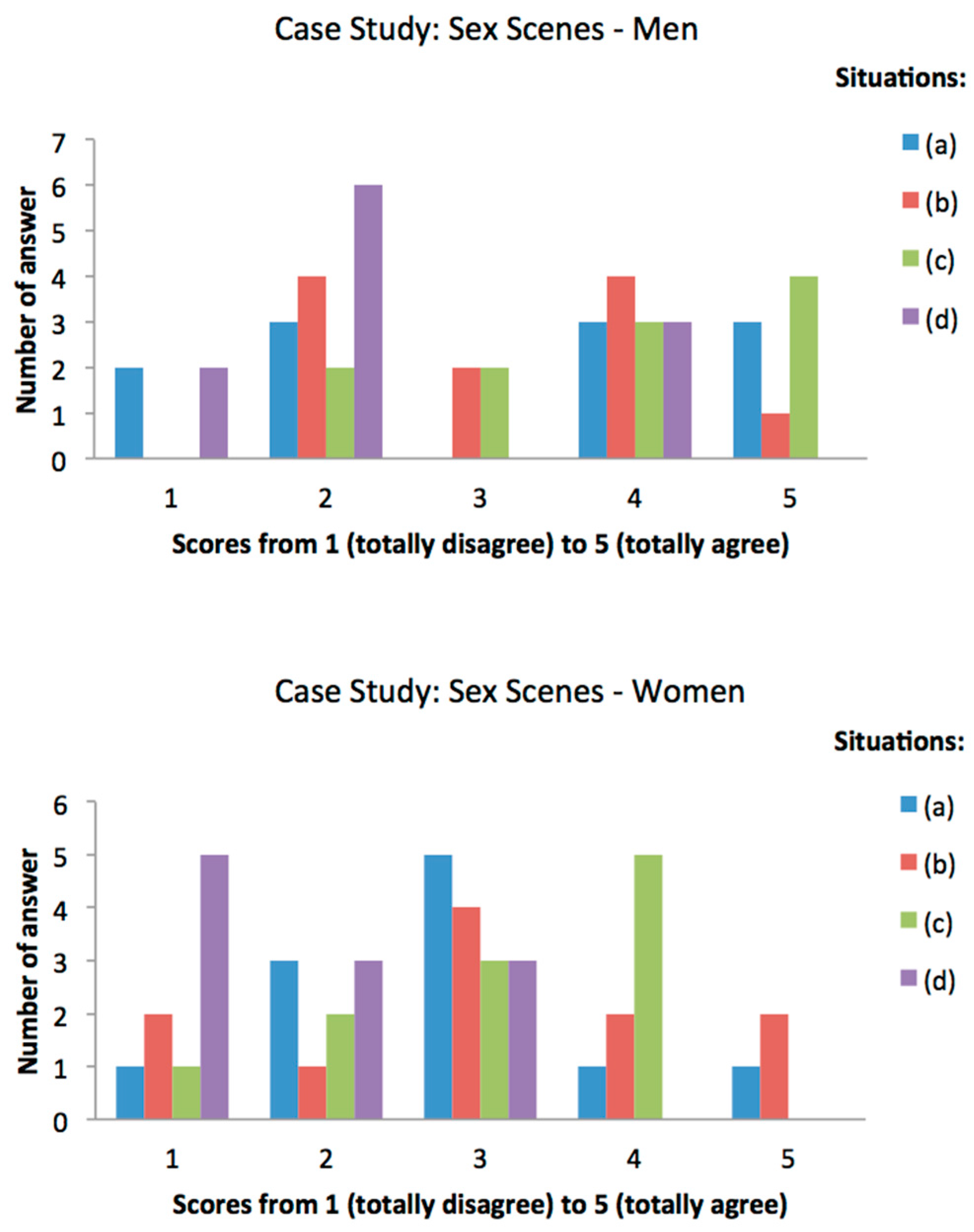

3.2. Results of the Questionnaire

3.3. Integrating Other Display Technologies

4. Conclusions

Author Contributions

Conflicts of Interest

References

- Heilig, M.L. Sensorama Simulator. U.S. Patent 3,050,870, 28 August 1962.

- Heilig, M.L. El cine del futuro: The cinema of the future. Presence Teleoper. Virtual Environ. 1992, 1, 279–294.

- Robinett, W. Interactivity and individual viewpoint in shared virtual worlds: The big screen vs. networked personal displays. SIGGRAPH Comput. Graph. 1994, 28, 127–130.

- Ikei, Y.; Okuya, Y.; Shimabukuro, S.; Abe, K.; Amemiya, T.; Hirota, K. To relive a valuable experience of the world at the digital museum. In Human Interface and the Management of Information. Information and Knowledge in Applications and Services: 16th International Conference, HCI International 2014, Heraklion, Crete, Greece, June 22–27, 2014. Proceedings, Part II; Yamamoto, S., Ed.; Springer International Publishing: Cham, Switzerland, 2014; pp. 501–510.

- Matsukura, H.; Yoneda, T.; Ishida, H. Smelling screen: Development and evaluation of an olfactory display system for presenting a virtual odor source. IEEE Trans. Vis. Comput. Graph. 2013, 19, 606–615.

- CJ 4DPLEX. 4Dx. Get into the Action. Available online: http://www.cj4dx.com/about/about.asp (accessed on 9 January 2017).

- Express Avenue. Pix 5D Cinema. Available online: http://expressavenue.in/?q=store/pix-5d-cinema (accessed on 9 January 2017).

- 5D Cinema Extreme. Fedezze Fel Most a Mozi új Dimenzióját! Available online: http://www.5dcinema.hu/ (accessed on 13 February 2017).

- Yecies, B. Transnational collaboration of the multisensory kind: Exploiting Korean 4D cinema in China. Media Int. Aust. 2016, 159, 22–31.

- Tryon, C. Reboot cinema. Converg. Int. J. Res. New Media Technol. 2013, 19, 432–437.

- Zhuoyuan, G. The Difference between 4D, 5D, 6D, 7D, 8D, 9D, xD Cinema. Available online: http://www.xd-cinema.com/the-difference-between-4d-5d-6d-7d-8d-9d-xd-cinema/ (accessed on 9 January 2017).

- Casas, S.; Portalés, C.; García-Pereira, I.; Fernández, M. On a first evaluation of ROMOT—A RObotic 3D MOvie Theatre—for driving safety awareness. Multimodal Technol. Interact. 2017, 1, 6.

- Groen, E.L.; Bles, W. How to use body tilt for the simulation of linear self motion. J. Vestib. Res. 2004, 14, 375–385.

- Stewart, D. A Platform with Six Degrees of Freedom. Proc. Inst. Mech. Eng. 1965, 180, 371–386.

- Casas, S.; Coma, I.; Riera, J.V.; Fernández, M. Motion-cuing algorithms: Characterization of users’ perception. Hum. Factors J. Hum. Factors Ergon. Soc. 2015, 57, 144–162.

- Nahon, M.A.; Reid, L.D. Simulator motion-drive algorithms—A designer’s perspective. J. Guid. Control Dyn. 1990, 13, 356–362.

- Casas, S.; Coma, I.; Portalés, C.; Fernández, M. Towards a simulation-based tuning of motion cueing algorithms. Simul. Model. Pract. Theory 2016, 67, 137–154.

- Küçük, S. Serial and Parallel Robot Manipulators—Kinematics, Dynamics, Control and Optimization; InTech: Vienna, Austria, 2012; p. 468.

- Sinacori, J.B. The Determination of Some Requirements for a Helicopter Flight Research Simulation Facility; Moffett Field: Mountain View, CA, USA, 1977.

- Olorama Technology. Olorama. Available online: http://www.olorama.com/en/ (accessed on 9 January 2017).

- Enttec. Controls, Lights, Solutions. Available online: http://www.enttec.com/ (accessed on 9 January 2017).

- Portalés, C.; Gimeno, J.; Casas, S.; Olanda, R.; Giner, F. Interacting with augmented reality mirrors. In Handbook of Research on Human-Computer Interfaces, Developments, and Applications; Rodrigues, J., Cardoso, P., Monteiro, J., Figueiredo, M., Eds.; IGI-Global: Hershey, PA, USA, 2016; pp. 216–244.

- Giner Martínez, F.; Portalés Ricart, C. The Augmented User: A Wearable Augmented Reality Interface. In Proceedings of the International Conference on Virtual Systems and Multimedia (VSMM’05), Ghent, Belgium, 3–7 October 2005; pp. 417–426.

- Brooke, J. SUS—A quick and dirty usability scale. In Usability Evaluation in Industry; Jordan, P.W., Thomas, B., Weerdmeester, B.A., McClelland, I.L., Eds.; Taylor & Francis: New York, NY, USA, 1996; pp. 189–194.

- Díaz, D.; Boj, C.; Portalés, C. Hybridplay: A new technology to foster outdoors physical activity, verbal communication and teamwork. Sensors 2016, 16, 586.

- Peruri, A.; Borchert, O.; Cox, K.; Hokanson, G.; Slator, B.M. Using the system usability scale in a classification learning environment. In Interactive Collaborative Learning: Proceedings of the 19th ICL Conference—Volume 1; Auer, M.E., Guralnick, D., Uhomoibhi, J., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 167–176.

- Kortum, P.T.; Bangor, A. Usability ratings for everyday products measured with the system usability scale. Int. J. Hum. Comput. Interact. 2013, 29, 67–76.

- Bangor, A.; Kortum, P.; Miller, J. Determining what individual SUS scores mean: Adding an adjective rating scale. J. Usability Stud. 2009, 4, 114–123.

- Brooke, J. SUS: A retrospective. J. Usability Stud. 2013, 8, 29–40.

- Cameron, J. IMSDB. Titanic, a Screenplay by James Cameron. Available online: http://www.imsdb.com/scripts/Titanic.html (accessed on 9 January 2017).

- Sims, A. Hypable. Detailed Description of “Breaking Dawn” Honeymoon Scene. Available online: http://www.hypable.com/detailed-description-of-breaking-dawn-honeymoon-scene (accessed on 9 January 2017).

- Lovotics. Kissenger. Kiss Messenger, a Lovotics Application. Available online: http://kissenger.lovotics.com/ (accessed on 9 January 2017).

- Teh, J.K.S.; Cheok, A.D.; Peiris, R.L.; Choi, Y.; Thuong, V.; Lai, S. Huggy Pajama: A mobile parent and child hugging communication system. In Proceedings of the 7th International Conference on Interaction Design and Children, Chicago, IL, USA, 11–13 June 2008; pp. 250–257.

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Portalés, C.; Casas, S.; Vidal-González, M.; Fernández, M. On the Use of ROMOT—A RObotized 3D-MOvie Theatre—To Enhance Romantic Movie Scenes. Multimodal Technol. Interact. 2017, 1, 7. https://doi.org/10.3390/mti1020007
