1. Introduction

Until the 16th century, the world was relatively simple. Aristotle’s worldview and his physical laws formed the basis of reality. It was the wisdom of a ‘Golden Age’. The Earth is the centre of the universe and a material object falls to the Earth because all objects are attracted to the centre of the cosmos. A heavy object has a greater tendency to be attracted by it than a lighter object and therefore unquestionably falls faster. All heavenly bodies move around the Earth in orbits of the most perfect shape: the circle. These circles are located on spheres around the cosmic centre, the Earth. Experimental testing of scientific conclusions is unnecessary and scientifically irrelevant. Only pure reason is pure science and is regarded as an exact description of reality.

On the basis of this worldview, Ptolemy was even able to develop a mathematical description of the movement of the celestial bodies in the 2nd century AD. Although complex, this model, which is described in Almagest (‘The Greatest’), worked so well that it served as the basis for establishing calendars and made accurate navigation possible.

Until, that is, Nicolaus Copernicus published De revolutionibus orbium coelestium (‘On the Revolutions of the Celestial Spheres’) in 1543, just before his death. The prevailing worldview of the Earth as the centre of the universe was turned upside down; the Earth, as well as the other planets, revolved around the Sun. This assertion was very difficult to grasp at the time, for if we were moving, surely, we would feel it; the rapid motion would generate an unimaginable wind force. Intuitively, everything felt as though we were in fact standing still.

In the 17th century, Johannes Kepler came along with a detailed calculation of planetary motion. He laid the foundations of celestial mechanics with what are now known as Kepler’s laws. He nonetheless believed strongly in astrology and thought that angels pushed the planets along in their orbits, which to his regret had to be imperfect circles, namely, elliptical orbits. But he accepted what he observed and because of it, got into hot water with the inquisition.

In 1592, at 28 years of age, Galileo Galilei was appointed professor at the University of Padua, which he left in 1610 for a post in Florence. He strongly supported and defended the Copernican worldview and laid the foundation of experimental physics. He rejected the Aristotelian worldview and stated that science should only be concerned with what can be demonstrated. He rejected intuition and authority in science: the only criterion for judgment in science is experimental demonstration.

He was the first person to distinguish between speed and acceleration in order to corroborate the ideas of Copernicus, thus providing the basis for the later thinking of Albert Einstein in his theory of general relativity. The inquisition put a spoke in the wheel of Galileo’s work, too, for which the church ultimately apologised in 1992.

Galileo’s experimental approach to physics caused it to gain momentum. Newton achieved a description of reality in mathematical laws. Numerous other scientists contributed, and an increasingly accurate description of the world was elaborated, to such an extent that Lord Kelvin, at the end of the 19th century, asserted that we knew everything there was to know and that all that remained was more and more precise measurement.

However, then came Max Planck with his description of black-body radiation and the introduction of quanta, packets of energy. J.J. Thomson discovered the first elementary particle, the electron. Albert Einstein had his memorable year, 1905, in which he furnished indirect proof of the existence of atoms in his paper on Brownian motion. With the photoelectric effect explained and wave–particle duality introduced, he cemented his status as ‘a man apart’, and continued to do so even after receiving the Nobel Prize for this work in 1921. In that same year, 1905, he also relativised time and established the equivalence of mass and energy. In 1915, he introduced his general theory of relativity, in which gravity and acceleration are equivalent and where the fabric of space and time is bent by objects with mass, producing gravity. While Newton’s laws generated artefacts in describing the greatest object of all, the cosmos, general relativity provided a much more accurate representation. In 1917, Einstein published his quantum theory of radiation and introduced the concept of stimulated emission, which later became the basis for the development of lasers.

At the same time, there was an intense quest for a description of the infinitesimal. Ernest Rutherford attempted to unravel the atomic model, but it was Niels Bohr and his followers who perfected the theory. Quantum mechanics was born. Niels Bohr described the atom as consisting of a positively charged atomic nucleus with negatively charged electrons orbiting around it, like a minuscule solar system. Wolfgang Pauli’s exclusion principle assigned the electrons to well-defined orbitals or energy states around the nucleus. Werner Heisenberg’s matrix mechanics and Erwin Schrödinger’s famous equation described a model for the movement of electrons around the nucleus as a probability function. Heisenberg introduced his uncertainty principle and Louis de Broglie demonstrated that if an electromagnetic wave can have a particle nature, each particle or object can also have a wave nature.

The world of the smallest appeared to be very strange and confusing. Here, not only concepts like wave–particle duality, but also entanglement, nonlocality, superposition, quantum tunnelling and nuclear spin resonance are regarded as perfectly normal. Nonrealism is the concept that a particle or object has no physical identity, and a property no value, unless it is measured or observed, and that the outcome depends on how it is measured. This all felt completely counterintuitive. The Aristotelian worldview was more comprehensible, but the laws and equations of relativity theory and quantum mechanics led to very real applications. However incomprehensible, these theories give a much more accurate description of reality than the first, more intuitive concepts. Without a relativistic treatment of time, GPS would be impossible. Quantum mechanics has proven to be one of the most successful theories of physics, and today, around 30% of the global economy is based on it.

The most bizarre aspect of quantum physics appears to be the significance of observation, of perception and measurement, and of interaction with the environment: the ‘conscious’ observer interacting with the environment experiences a macroscopic reality that differs from the microscopic reality that lies behind it (an incomplete list of references includes [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15]).

2. Energons

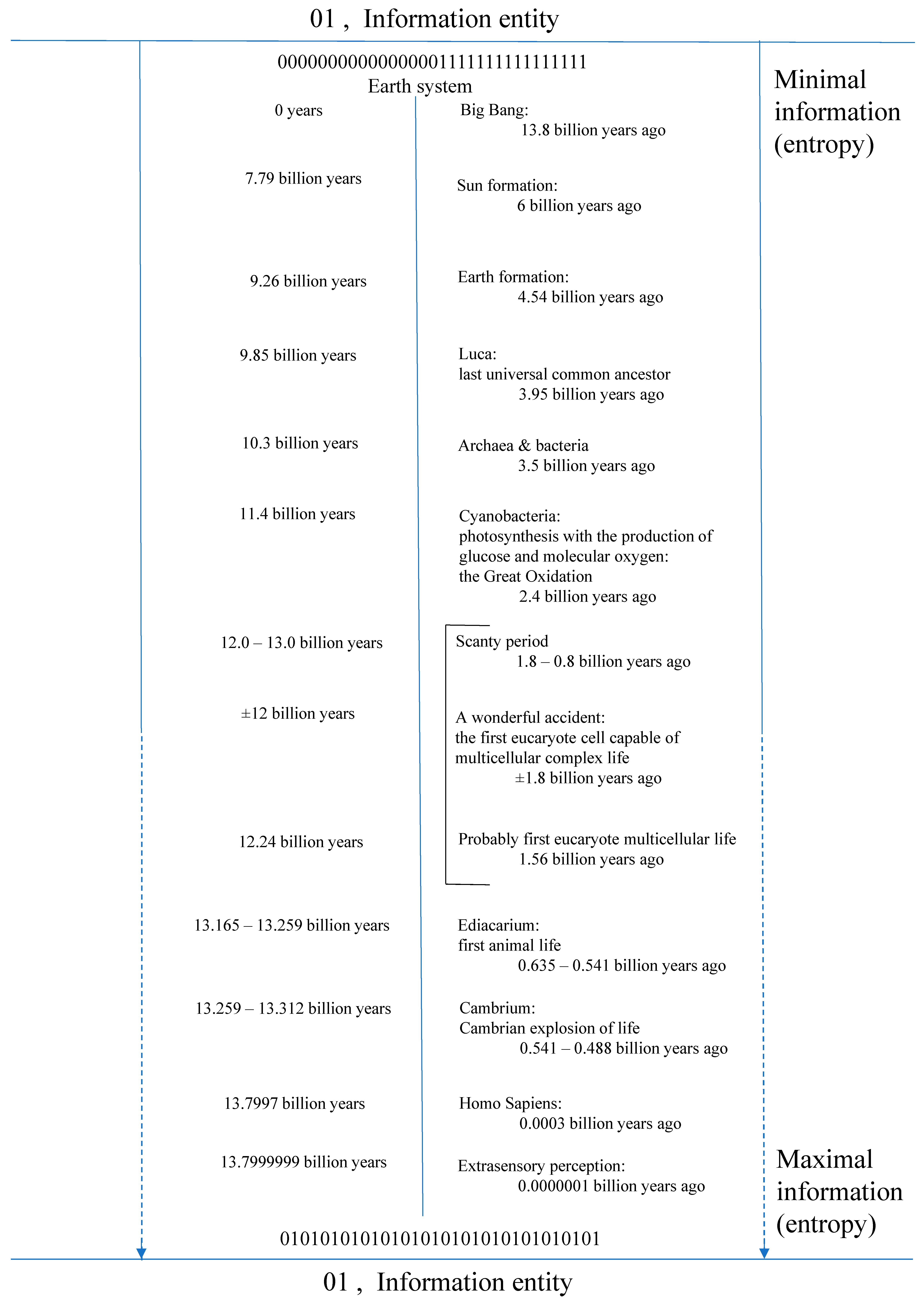

Aristotle’s worldview gave humanity an intelligible and static universe. Science has since discovered that the universe arose some 13.8 billion years ago. The general consensus is that this occurred in a ‘Big Bang,’ in which everything suddenly emerged from nothing.

Some scientists, such as Erik Verlinde, have a problem with this. It is intuitively very difficult to imagine that everything can arise from nothing. Galileo Galilei reduced the role of intuition in science and argued for the criterion of experimental verification, but assumptions that are impossible to verify or falsify experimentally and are contrary to sound intuition must be properly challenged.

In his 1959 lecture ‘There’s Plenty of Room at the Bottom’, Richard Feynman laid the foundation for the development of nanotechnology, a term popularised by Eric Drexler in 1986.

In nanoscience we learn that when material particles become smaller than a limit of around 100 nanometres, the surface area of the particle becomes more important than the volume. This is in contrast to the situation in which the bigger an object (and, in cosmological terms, a space) is, the bigger and more significant the volume becomes relative to the surface area. This is an important aspect of Erik Verlinde’s idea of gravity as an emergent entropic force in an elastic universe [16,17,18,19,20].

Returning to the microworld, we find that material particles of 100 nanometres or less start to show strange properties. When the surface area becomes more significant than the volume, even familiar macroscopic properties appear to change. Gold nanoparticles have a different colour and melting point. When they interact with the right wavelength of electromagnetic radiation, they are capable of plasmon resonance. A familiar substance like gold appears to show completely different physical behaviour and obeys different physical laws when it is split into dimensions where the surface area dominates over the volume [21].

What is more, 100 nanometres in the microworld is still enormous. The diameter of atoms ranges between 0.6 and 6 Ångström, or 0.06 and 0.6 nanometres. Quantum dots, nanoparticles that consist of a number of semiconductor atoms and whose size is comparable to or smaller than the width of the wave function of an electron, enter a state where quantum effects predominate. They then behave like a single molecule and their energy bands shift accordingly. This is the effect of quantum confinement. They interact with electromagnetism, allowing them to radiate light of very specific wavelengths or, when they absorb it, to supply high-energy electrons capable of starting photochemical reactions [4].

From 100 nanometres down to the dimensions that the LHC, CERN’s Large Hadron Collider, can currently penetrate is a further leap of many orders of magnitude. This allows scientists to see on the scale of elementary particles and to observe the interactions of the carriers of fundamental natural forces.

With the most advanced measuring equipment available today, LIGO, the Laser Interferometer Gravitational-Wave Observatory, it is possible to detect a change of less than the diameter of an atomic nucleus at a distance of 4 km. This has allowed the gravitational waves predicted by Einstein to be detected. The changes measured are on the improbable order of 1 attometre: 10⁻¹⁸ m.

An attometre is an almost incomprehensible distance. It is far removed from 100 nanometres, the distance at which surface area begins to predominate over volume. If we then note that the distance between 1 m and 1 attometre is, relatively speaking, about the same as the distance between 1 attometre and 1 Planck length, we may be entering a completely different reality. Most physicists consider a Planck length, lp ≈ 1.616199 × 10⁻³⁵ m, to be the smallest possible distance, because it is the limit to which Heisenberg’s uncertainty principle applies. At such small distances, we may be able to assert that volume becomes insignificant or even disappears completely, and only a one-dimensional surface remains.
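The comparison of scales above can be checked with a few lines of arithmetic. The following is a minimal sketch that counts the powers of ten between the lengths quoted in the text; the function and variable names are illustrative choices, not part of the original argument.

```python
import math

# Length scales quoted in the text, in metres.
METRE = 1.0
NANO_SCALE = 100e-9           # 100 nanometres, where surface effects start to dominate
ATTOMETRE = 1e-18             # order of the displacement resolved by LIGO
PLANCK_LENGTH = 1.616199e-35  # value quoted in the text


def orders_of_magnitude(larger: float, smaller: float) -> float:
    """Return how many powers of ten separate two lengths."""
    return math.log10(larger / smaller)


metre_to_atto = orders_of_magnitude(METRE, ATTOMETRE)
atto_to_planck = orders_of_magnitude(ATTOMETRE, PLANCK_LENGTH)
nano_to_atto = orders_of_magnitude(NANO_SCALE, ATTOMETRE)

print(f"1 m    -> 1 am          : {metre_to_atto:.1f} orders of magnitude")
print(f"1 am   -> Planck length : {atto_to_planck:.1f} orders of magnitude")
print(f"100 nm -> 1 am          : {nano_to_atto:.1f} orders of magnitude")
```

The two spans come out at 18 and roughly 17 powers of ten, which is the sense in which the step from a metre to an attometre is "about the same" as the step from an attometre to a Planck length.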

Calculations of how much information empty space contains show that precisely a single bit, a qubit or quantum bit, fits into such a Planck area or Planck surface [20]. This has led some scientists to suggest that information is a fundamental building block of the universe.

Using their theory of the ‘holographic principle’, Gerard ’t Hooft, Leonard Susskind and, later, Juan Maldacena have demonstrated the law of ‘conservation of information’. That is why Erik Verlinde has trouble with the concept of the Big Bang, in which all information suddenly appears from nothing. According to him, it is impossible for the information from the very beginning of the universe to be equivalent to the rich collection of information found in the cosmos today.

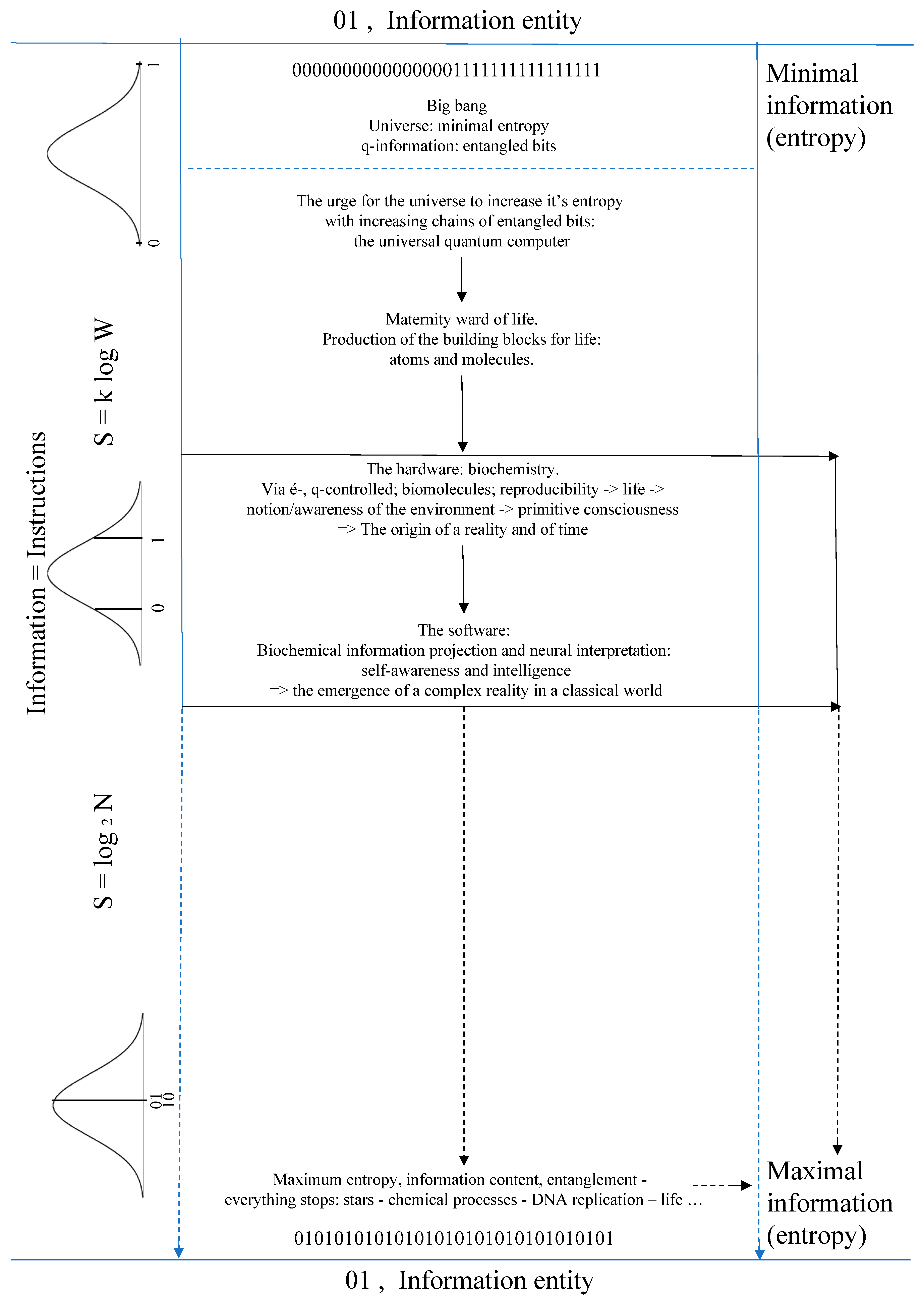

We can imagine a more fundamental entity than the universe: an ‘information entity’ that consists only of qubits, that is, of information, of energons as one-dimensional Planck surfaces, out of which information can bubble up like vapour bubbles in boiling water, comprising all the information for the birth, evolution and end of a universe. This end would then be that the system goes back to its state of maximum entropy, back into its pure information state.

3. Information

Nicolas Léonard Sadi Carnot was an officer in the French army at the time of Napoleon, but also a physicist and mathematician. To help France win its wars, he did research on heat exchange and ways of making steam engines more efficient. Previously, James Watt had been able to increase their efficiency from 1% to 19%. Carnot’s sole publication, Réflexions sur la puissance motrice du feu (‘Reflections on the motive power of fire’), which appeared in 1824, received little attention during his lifetime, but he nevertheless laid the foundation for the science of thermodynamics. His work was later used by Rudolf Clausius and Lord Kelvin to formalise the second law of thermodynamics and to introduce the concept of entropy, defined as a measure of the disorder in a system. According to the second law of thermodynamics, the entropy of an isolated physical system can never decrease. This law is regarded as the physical law with the greatest impact outside the realm of physics itself.

Ludwig Boltzmann refined the concept in 1877 and characterised entropy in terms of the total number of distinct microscopic states in which particles composing a parcel of matter can exist without altering the external appearance of the parcel.

Based on this, Claude Shannon introduced in 1948 the concept of entropy as a measure of information content: the Shannon entropy. The number of states calculated from the Boltzmann entropy reflects the amount of Shannon information required to bring about any specified arrangement of particles.
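The link between the two entropies can be illustrated numerically. The sketch below assumes the standard textbook forms, S = k_B ln W for Boltzmann and H = −Σ p log₂ p for Shannon, neither of which is written out in the text; the toy system of ten two-state particles is a hypothetical example chosen only for illustration.

```python
import math

BOLTZMANN_CONSTANT = 1.380649e-23  # J/K (SI defining value; not given in the text)


def shannon_entropy_bits(probabilities):
    """Shannon entropy H = -sum(p * log2(p)), in bits."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def boltzmann_entropy(microstates: int) -> float:
    """Boltzmann entropy S = k_B * ln(W), in J/K, for W equally likely microstates."""
    return BOLTZMANN_CONSTANT * math.log(microstates)


# Hypothetical toy system: 10 two-state particles, hence W = 2**10 microstates.
W = 2 ** 10
uniform_distribution = [1 / W] * W

bits_needed = shannon_entropy_bits(uniform_distribution)
thermodynamic_entropy = boltzmann_entropy(W)

print(f"Shannon entropy  : {bits_needed:.1f} bits")
print(f"Boltzmann entropy: {thermodynamic_entropy:.3e} J/K")

# For equally likely microstates the two measures differ only by the
# constant factor k_B * ln(2) per bit.
print(math.isclose(thermodynamic_entropy,
                   BOLTZMANN_CONSTANT * math.log(2) * bits_needed))
```

For equally likely microstates, specifying one particular arrangement takes log₂ W bits, and the thermodynamic entropy is that number of bits multiplied by k_B ln 2.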

Rolf Landauer showed that for information storage and transfer, physical systems are always needed: information has to obey physical laws. He demonstrated that destruction of information costs energy, because discarding information has fundamental physical consequences [22]. Later on, Lucas Céleri demonstrated that the ‘Landauer Principle’ also holds in a system obeying the mysterious laws of quantum physics [23].
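To give a sense of scale, Landauer’s principle is usually stated as a lower bound of k_B T ln 2 on the energy dissipated when one bit is erased. That formula and the room temperature of 300 K used below are assumptions brought in for illustration; neither appears in the text.

```python
import math

BOLTZMANN_CONSTANT = 1.380649e-23      # J/K (SI defining value)
ELECTRON_VOLT = 1.602176634e-19        # J (SI defining value)


def landauer_limit_joules(temperature_kelvin: float, bits: float = 1.0) -> float:
    """Minimum energy dissipated when erasing `bits` bits at a given temperature."""
    return bits * BOLTZMANN_CONSTANT * temperature_kelvin * math.log(2)


ROOM_TEMPERATURE = 300.0  # K, an assumed illustrative value

energy_per_bit = landauer_limit_joules(ROOM_TEMPERATURE)
energy_per_gigabyte = landauer_limit_joules(ROOM_TEMPERATURE, bits=8e9)

print(f"Erasing 1 bit at 300 K : {energy_per_bit:.3e} J "
      f"({energy_per_bit / ELECTRON_VOLT:.4f} eV)")
print(f"Erasing 1 GB at 300 K  : {energy_per_gigabyte:.3e} J")
```

The bound is tiny compared with what present-day electronics dissipate per bit, but it is strictly greater than zero, which is the sense in which discarding information has physical consequences.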

Gerard ’t Hooft and Leonard Susskind showed that even in black holes information cannot be lost. In 1986, Rafael Sorkin proved that the entropy of a black hole is precisely one quarter of the area of the event horizon of the black hole as measured in Planck surfaces. Jacob Bekenstein introduced the generalised second law of thermodynamics: the sum of the entropies of black holes and the ordinary entropy of the universe never decreases.

Gerard ’t Hooft and Leonard Susskind postulated the holographic principle (L. Susskind, Journal of Mathematical Physics 36, 6377 (1995)), according to which the information content of a region of space and time is given by a quarter of the area of its horizon. It ensues from this that all the information describing the visible universe is encoded on a quarter of the de Sitter horizon.
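The ‘quarter of the horizon area’ rule can be turned into a number. The sketch below assumes a non-rotating (Schwarzschild) black hole of one solar mass, reads the rule as an entropy of A / (4 lp²) in natural units, and divides by ln 2 to express it in bits. The values of G, c and the solar mass are standard reference values, not taken from the text, and the function names are illustrative.

```python
import math

GRAVITATIONAL_CONSTANT = 6.67430e-11   # m^3 kg^-1 s^-2 (assumed reference value)
SPEED_OF_LIGHT = 299_792_458.0         # m/s
PLANCK_LENGTH = 1.616199e-35           # m, value quoted earlier in the text
SOLAR_MASS = 1.98847e30                # kg (assumed reference value)


def schwarzschild_radius(mass_kg: float) -> float:
    """Horizon radius r_s = 2GM / c^2 of a non-rotating black hole."""
    return 2 * GRAVITATIONAL_CONSTANT * mass_kg / SPEED_OF_LIGHT ** 2


def horizon_area(mass_kg: float) -> float:
    """Area of the event horizon, A = 4 * pi * r_s^2."""
    return 4 * math.pi * schwarzschild_radius(mass_kg) ** 2


def horizon_information_bits(area_m2: float) -> float:
    """Entropy A / (4 * l_p^2) in natural units, converted to bits."""
    return area_m2 / (4 * PLANCK_LENGTH ** 2) / math.log(2)


radius = schwarzschild_radius(SOLAR_MASS)
area = horizon_area(SOLAR_MASS)
bits = horizon_information_bits(area)

print(f"Horizon radius      : {radius:.0f} m")
print(f"Horizon area        : {area:.3e} m^2")
print(f"Information content : {bits:.2e} bits")
```

Under these assumptions a horizon only about 3 km in radius corresponds to roughly 10⁷⁷ bits, which shows how small a Planck area is compared with everyday surfaces.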

The second law of thermodynamics could be redefined as: entropy, a measure of the amount of information in a system, must always increase.

Erik Verlinde puts information at the centre of his new theory of gravity, an interesting and innovative concept, although the theory still has many issues to solve. He describes the universe in terms of information, or more exactly, in terms of quantum mechanical entanglement entropy. Gravity arises from microscopic quantum information and quantum entanglement, with the associated entanglement entropy playing a central role. One qubit consists of two entangled particles and fits in the smallest possible volume: a Planck volume. For every bit of information you throw into a black hole, the surface of its horizon expands by about one Planck area, suggesting that information is a fundamental building block of the universe. Changes in the density of information play the same role in the emergence of gravity as molecules do in the rising of temperature; entropy equals the total amount of information and energy is the speed at which information is processed. In this picture, temperature equals the energy per amount of information. Spacetime and matter are essentially the same: each is made of the same building blocks. Information is located not only on the surface bounding a specific space but within the space itself as well, so that entangled quantum information is not only responsible for the emergence of spacetime, but also leads directly to the appearance of ordinary matter, dark matter and dark energy. The larger the region of space, the more important the volume relative to the surface. For very large regions of space, where relativity theory requires dark matter and dark energy to ensure that calculations agree with observations, Verlinde’s model describes the universe in terms of the distribution of information without the need for dark matter or dark energy.

Erik Verlinde: “And from a theoretical perspective, insights from black hole physics and string theory indicate that our macroscopic notions of spacetime and gravity are emergent from an underlying microscopic description in which they have no a priori meaning” [16,17,18,19,20].

John Wheeler was the first to start using information to describe reality. He argued that the universe is characterised by ‘it from bit.’ In other words, a physical object, it, always consists of information, bits.

For scientists such as John Wheeler, Gerard ’t Hooft, Leonard Susskind, Juan Maldacena and Erik Verlinde, bits of information or qubits are a more fundamental building block of reality than quarks and electrons, although a qubit is not a physical object but contains information about the physical object. They argue that this deeper layer of information in all probability exists and that this insight alone has significant consequences for our understanding of reality [19].

4. Information and Consciousness: Biochemical Information Projection

Our understanding of reality has had to be radically and drastically revised a number of times. The Earth of the ancient Greeks was the centre of the universe and most certainly flat, or else you would fall off. Logically it was dangerous to approach the edge, until it became apparent that the Earth was demonstrably round and, remarkably, we did not appear to fall off after all. Then it transpired that the Earth also rotated and moved around the Sun, which in those days was contrary to all sense and reason, for movement, after all, was something you could feel.

Later it became apparent that space as we thought we knew it, and time, which is so familiar and which we thought we could measure so precisely with our accurate clocks, were relative as well.

However, things became completely baffling when the world that had always been hidden from us, the world of the infinitesimal, gradually began to reveal itself. The microscopic world proved to be fundamentally different from the world as it was normally perceived and described by physical laws. This world obeyed very different laws, and nothing was certain anymore. However, all the calculations of quantum mechanics are so amazingly accurate that there must be a reality behind them, but one that is hidden from our direct perception. There proved to be a bizarre but clear correlation between measurement or observation and the behaviour of the smallest. Niels Bohr and the Copenhagen School expressed it as follows: a microscopic property has no value unless it is a measured value, and the outcome depends on the measurement procedure. Perception, observation and measurement lead to quantum decoherence with the leaking of information and to quantum collapse with the release of all the information contained in the system [4]. This nonrealism, which Albert Einstein found so difficult to accept, has recently been unequivocally demonstrated, like nonlocality, which he called ‘spooky action at a distance’ [24].

Descartes had already feared it in the 17th century: “My senses deceive me.” How can the strange, bizarre world of the smallest, of which everything is made up, be so fundamentally different from the perception of reality we experience every day?

4.1. A Maternity Ward of Life

The universe began around 13.8 billion years ago. The natural elements or atoms were formed from hydrogen in the various generations of stars and supernovas. The triple-alpha process, which produces the element carbon (C) that is indispensable to organic chemistry, appears to be so remarkably fine-tuned that if the half-life of one of the components differed by only a fraction there would be too much or too little carbon in the universe, making organic chemistry, and hence life, impossible [6].

And if the binding energy, the strong nuclear force, mediated by gluons, were slightly smaller than the energy of the difference in mass between a proton and a neutron, then all the neutrons in an atomic nucleus would decay and the fundamental building block of life, the atom, would be impossible [25].

In a recent publication in Nature, Japanese researchers report that they found graphite in rock in Labrador that was 3.95 billion years old and that their isotope analysis indicates it must have originated from life [26].

Earlier findings suggest that life on Earth arose around 3.7 billion years ago. The oldest fossils on Earth were discovered in rocks from the Isua Greenstone Belt in Greenland: the remains of a bacterial layer that grew there when the Earth was only 0.8 billion years old [27]. Life therefore developed relatively quickly after the Earth was formed, but then took a substantially longer period, another 3.6997 billion years or so, before complex life forms, conscious of their origins and their place in ‘reality,’ could develop.

As far as we know and can assume now, the universe took about 10 billion years to develop, by trial and error, the first primitive life.

Life is apparently so incredibly difficult to make that you need a whole universe and a whole lot of time to do it.
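The time spans quoted in this section follow from simple subtraction. The sketch below uses only figures stated in the text (all in billions of years); the variable names are illustrative.

```python
# All values in billions of years (Gyr), as quoted in the text.
UNIVERSE_AGE = 13.8
OLDEST_LIFE_TRACES = 3.95        # Labrador graphite
ISUA_FOSSILS = 3.7               # Isua Greenstone Belt fossils
EARTH_AGE_AT_ISUA = 0.8          # age of the Earth when that layer grew
WAIT_FOR_CONSCIOUS_LIFE = 3.6997

# Time the universe needed before the first traces of life appeared.
time_to_first_life = round(UNIVERSE_AGE - OLDEST_LIFE_TRACES, 2)

# Age of the Earth implied by the two Isua figures.
implied_earth_age = round(ISUA_FOSSILS + EARTH_AGE_AT_ISUA, 2)

# How long ago self-aware life appeared, converted from Gyr to years.
conscious_life_years_ago = round((ISUA_FOSSILS - WAIT_FOR_CONSCIOUS_LIFE) * 1e9)

print(f"Universe to first life : {time_to_first_life} Gyr")
print(f"Implied age of Earth   : {implied_earth_age} Gyr")
print(f"Conscious life appeared: {conscious_life_years_ago:,} years ago")
```

The first figure, 9.85 billion years, is the ‘about 10 billion years’ of the text, and the last corresponds to a few hundred thousand years, the order of magnitude of the age of our own species.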

4.2. From Nonrealism to Physical Objects

The laws of quantum physics have proved to be a more solid basis for describing reality than classical physics, because they are able to describe the world of the smallest, of which the macroscopic classical world is made up.

Quantum physics states that no quantum state has physical reality unless it collapses and that the corresponding collapse of the wave function can only be brought about by observation or measurement, that is, by interaction with the environment [1,2,4,7,11,14,24,28,29]. Without this, a closed system remains in its quantum state. At the Big Bang (where it is difficult, or rather impossible, to define ‘at’) the universe was in a quantum state and therefore in a superposition of all allowable possibilities. However, we experience a physical universe and not its quantum state. There must therefore have been something that terminated the original quantum coherence and superposition.

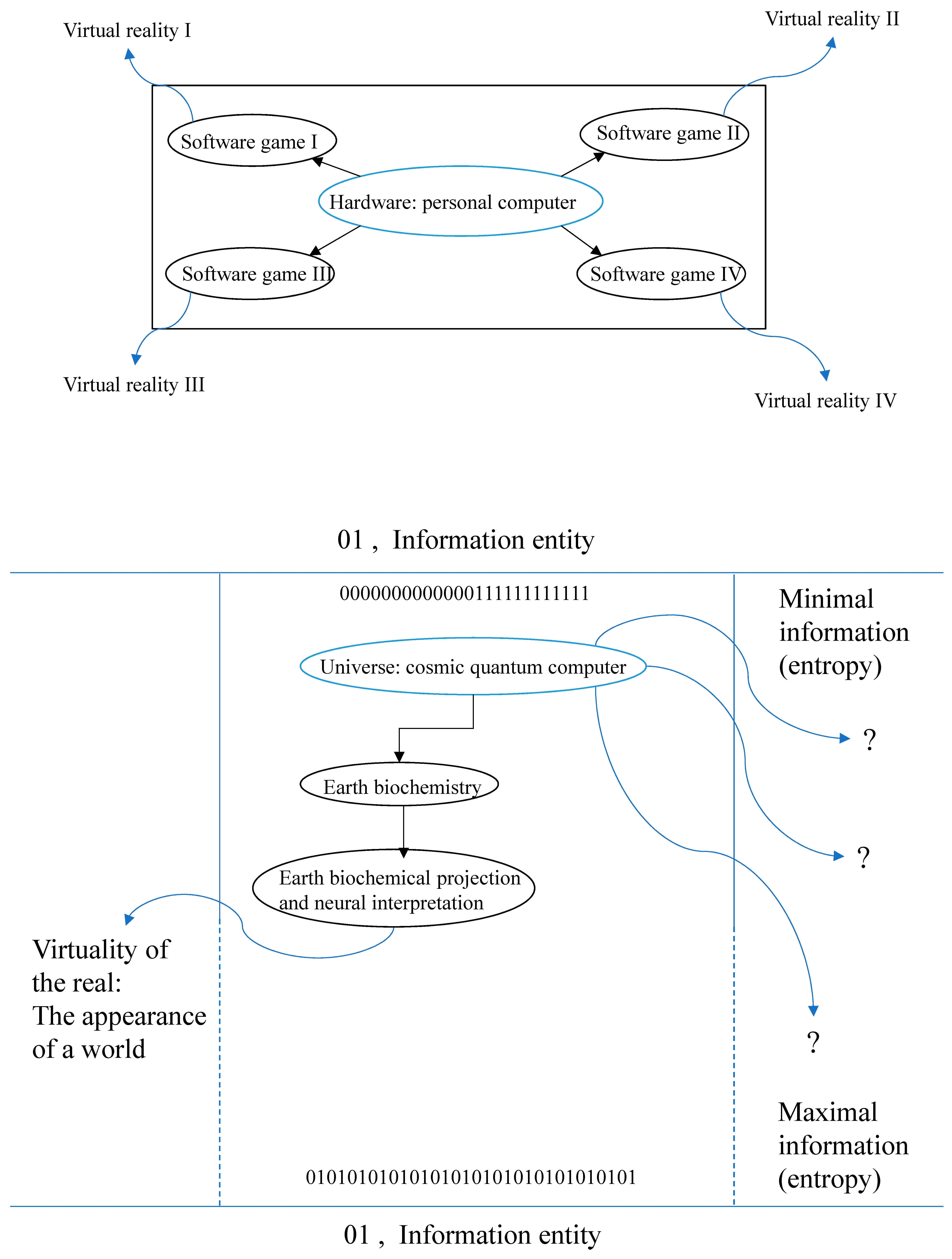

John Wheeler was the first to bring information to the fore—‘it from bit’—but to transform a bit into, or interpret it as an it, a transformer is needed in the same manner as a screen that is linked to a computer, laptop or smartphone.

Together with Martin Rees, John Wheeler is one of the pioneers of the participatory anthropic principle, which states that the universe could only come out of its quantum state through perception and observation by conscious observers (Appendix A and Appendix B). It is an extension of the anthropic principle, which states that all natural laws in the universe and their parameters and constants must be precisely the way they are because otherwise there would be nothing or no one to observe them.

In a recent theory, Markus Müller describes the emergence of reality itself through perception and observation. The world is not governed by physical laws but by subjective experiences: a universe directly created by perception through first-person experiences, in which the information-theoretic contents of observers’ brains run on algorithmic information processing, with the creation of reality as an outcome [30,31,32,33]. Where John von Neumann and Eugene Wigner placed human consciousness at the forefront, Markus Müller is more reserved, as he refers to brain contents and to the mind, which he places in a more fundamental position, in some specific sense, than the world itself.

However, again, this raises the question of ‘conscious observer’ and ‘consciousness’. Various interpretations of quantum physics juggle with consciousness and its interaction with quanta, wave functions and entanglement. Physicist Roger Penrose and anaesthetist Stuart Hameroff have given it an almost metaphysical explanation.

Where, then, lies the apparently demonstrable but invisible link between the tiny quanta with their information and what is described as consciousness?

4.3. Consciousness

Consciousness is an extremely difficult concept. A widely held thesis in neuroscience states that consciousness arises from biological information processing [34]. In its purest form, it can subsequently be defined as ‘notion or awareness of the environment’. Under this definition, consciousness must have developed together with the very first life. Notion and awareness of the environment are essential to survival. The earliest self-reproducing systems some 3.95 billion years ago must have had a notion and awareness of their surroundings, because they had to be able to distinguish food sources, energy and construction, from toxic substances and destruction. Today’s single-celled organisms also move towards food sources and turn away from poisonous substances, and even show individual and collective ‘behaviour’ [35,36]. The information transfer required for this interaction takes place chemically. The most primitive forms of consciousness must therefore have involved the transfer of chemical signals. And when single-celled organisms began to organise themselves into colonies, they also exchanged information via chemical signals.

As life forms evolved from unicellular to multicellular complex organisms, this chemical signal transfer remained indispensable as a means of communication between all distinct units or cells, as well as a means of interaction with the environment.

At some point in the course of evolution, nature had to develop another way to register, forward, store and exchange information in order to organise increasingly complex life forms and allow them to interact with the environment. The method of signal transfer familiar to us, electrical signal transfer, involves electrons, but their unpredictable behaviour made them impractical and unusable in complex life forms. Life developed a signal transfer, or information management, system involving action potentials and ion channels: a flow of positively charged sodium and potassium ions and a Na–K–ATPase pump. This apparently allowed information to be exchanged more efficiently and, especially, more reliably in the warmth and humidity of biochemical systems; the signals carrying the information could be efficiently channelled. This can be called biochemically encrypted signal or information transfer. Recent insights reveal the existence of a ‘bioelectrical code’. Information could be transferred and saved by means of ions and ion channels, enabling cells not only to communicate but also to store information for dynamic control of growth and form [37]. The intricate electrical circuits in the brain may have evolved from this much simpler and slower primary signal transfer between cells in an organism, and likewise, the nerve cells, with the unique characteristic that they do not divide.

With the development of these specialised structures—the nerves—having the sole function of signal transfer and information management, life entered a new phase of evolution. Not only could information be registered, forwarded, stored and exchanged, but with the appearance of the first knots of nerve cells, information could also be processed. This made possible the appearance of the senses—biochemical measuring instruments that could detect the environment with high precision and send on the information via nerve pathways for processing. The more complex these knots became, the more information from the environment they could process and the better and more efficient they became at it. The first primitive brain structures developed. This marked the start of an evolution towards complex brain structures that could also interpret incoming information, where interpretation signifies ‘to give meaning to.’ Sensory information that could be interpreted made it possible to interact actively with the environment. It was no longer necessary to merely experience the environment passively—active interaction made it possible to influence and to adapt it as well.

The increasingly complex organisation of this interpretation ability ultimately led to self-awareness—the notion or awareness of one’s ‘own’ environment. Mirror recognition is often used to determine self-awareness. A few animals other than human beings have this ability, but animal species for whom smell is more important than sight or seeing in their interaction with the environment have developed a form of self-awareness through the nose.

The most complex forms of neural organisation led to awareness of the ability to interact with the environment, to self-aware interpretation of information, to intelligence. Intelligence created the possibility not only of influencing the environment and actively interacting with and adapting it, but also of trying to understand it.

The role of information appears to be crucial in the emergence of complex awareness and self-awareness. A new-born baby has no self-awareness. This only develops from the first year of life onwards following a constant stream of information through sensory perception. Experiments in which volunteers are isolated as much as technically possible from all sensory information have to be terminated after only a short time. The test subjects start to hallucinate and are unable to continue. People who suddenly become blind start to see things and people who suddenly become deaf or experience hearing loss start to hear songs or voices. The brain apparently needs to compensate for a lack or total absence of information.

Sensory information is essential for a well-functioning brain and for complex awareness and self-awareness. Self-awareness is not a metaphysical concept or construct. The brain constantly constructs a ‘sense of self’ through sensory information [34,38,39,40,41,42].

Consciousness as we know it has its basis in the exchange of information via chemical and electrical signals.

4.4. Interpretation of Information

Until the 1950s, the human being was divided into two components, one physical and the other mental. Consciousness as a biochemical creation was considered taboo. The behaviourists, who were an influential movement back then, thought everything was deterministic—humans reacted to stimuli and produced the expected responses. Like Lord Kelvin, they thought that once we knew all the stimuli and the resulting responses, we would be able to describe and predict all behaviour.

Progressive brain research has made these ideas obsolete. Consciousness has been shown to be a distinctly biochemical concept [34], and it appears to be an emergent property of life: the constituent parts acting in concert create a new property. The brain is characterised not only by signal transfer via action potentials but also by chemical information transfer via synapses and neurotransmitters. The glial cells and astrocytes, which were assumed to play no more than a supportive role, now appear to be involved in generating crucial activities as well. This all leads to an organisation of incredible complexity, the complexity from which awareness and self-awareness emerge. As an analogy, we can imagine a clock. Materials are first needed to manufacture all the component parts. These must then be meticulously put together and assembled. But once this precision work is completed, a force is required to make the component parts interact. Only then, when the component parts start working together, is this construction of inert material able to show the time. So too, the components of the brain must work faultlessly together for awareness and self-awareness to emerge.

Via the senses, information is sent along the nerve pathways as action potentials to the thalamus. The thalamus is the initial processing unit of the brain. From here, information is relayed via the corpus callosum or white matter to the prefrontal cortex, where it is sifted and sorted and sent on to the relevant centre of the cerebral cortex, ready for any further processing and interpretation needed. Visual information is sent to the visual centre (the part of the cerebral cortex that processes and interprets visual information), sound information is sent to the auditory centre and spatial information to the centre for proprioceptive processing. Thus, all incoming information has its own centre or centres for processing and interpretation. Of crucial importance here is the precise coordination of the thalamus, the prefrontal cortex and the two hemispheres of the cerebral cortex, as well as their connection via the corpus callosum. Good left–right communication and information exchange between the two hemispheres of the cerebral cortex are indispensable for awareness and self-awareness. Sensory information from the muscles and joints, from sight and from the environment is integrated and processed in the gyrus angularis, making it essential for self-awareness [34, 38, 39, 40, 41, 42].

Damage to the thalamus, the information collector of the brain, leads to impaired awareness.

Severing of the corpus callosum by surgery in an attempt to treat patients with extreme forms of epilepsy was later found to have resulted in two separate consciousnesses. Nobel Prize winner Roger Sperry reported that one cerebral hemisphere was no longer aware of what the other hemisphere saw.

In the case of hemispheric neglect, where one cerebral hemisphere is damaged, affected persons appear to no longer have any awareness or consciousness at all on the correlated side. Left-hemisphere neglect leads to total loss on the right side. Self-awareness on the right side is completely lost; it no longer exists. Such persons completely neglect the right half of their bodies: they do not recognise or acknowledge it. They eat only what is on the left side of the plate. And even in abstract space, the right side does not exist for them in the place where they are [38, 39].

Sensory information and its interpretation by our brain are our only link with the environment—with the reality around us. The way our brain ‘interprets’ incoming information forms our picture of reality.

4.4.1. Colours Do Not Exist

Everyone is convinced that we see colours. But what is a colour? In exact terms, it is electromagnetic radiation between roughly 380 and 800 nanometres. That precisely these wavelengths made it possible for sight to evolve is not surprising: they are the wavelengths at which electromagnetism can ‘play’ with electrons, wavelengths that can be absorbed and emitted by electrons. They are also the wavelengths of the photochemical process on which all life depends [14, 43, 44]. Via photochemical processes and photoisomerisation, ‘visible light’ can alter the spatial arrangement of the atoms in the retinal molecule and, via opsins, trigger the optic nerve. Shorter, higher-energy wavelengths knock out electrons to produce ions. Longer, lower-energy wavelengths do not interact with electrons. Infrared radiation interacts with the vibrational state of atoms and their bonds in a molecule, causing a warm body to emit it and a cool body to absorb it and become warmer. Microwaves interact with the rotational state of an atomic bond, and their absorption likewise causes a rise in temperature.
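The link between wavelength and energy mentioned above can be made concrete with the Planck relation E = hc/λ. The following is a minimal sketch, not part of the original argument: it converts the two band edges quoted in the text (380 and 800 nanometres) into photon energies in electronvolts. The function name `photon_energy_ev` is an illustrative choice; the constants are the exact SI values.

```python
# Photon energy from wavelength via E = h * c / wavelength.
# The constants are exact SI defining values; the function name is illustrative.
PLANCK_H = 6.62607015e-34        # Planck constant in J*s
LIGHT_C = 299_792_458.0          # speed of light in m/s
ELECTRON_VOLT = 1.602176634e-19  # joules per electronvolt


def photon_energy_ev(wavelength_nm: float) -> float:
    """Return the energy in eV of a photon with the given wavelength in nm."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_H * LIGHT_C / wavelength_m / ELECTRON_VOLT


# The two edges of the visible band as quoted in the text.
for label, nm in [("violet edge", 380.0), ("red edge", 800.0)]:
    print(f"{label}: {nm:.0f} nm -> {photon_energy_ev(nm):.2f} eV")
```

Under these assumptions the visible band spans roughly 1.5 to 3.3 eV per photon, which is the energy range of electronic transitions in molecules, consistent with the statement that these are the wavelengths electrons can absorb and emit.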

However, back to our brain, to seeing and to colours—it is in itself amazing that life has been able to organise itself in this way, that it is even able to detect and perceive one of the fundamental natural forces, electromagnetism, and, above all, to measure it with the utmost precision. Life is capable of very accurately measuring wavelengths of electromagnetic radiation that vary by less than a nanometre, and electromagnetism, as such, can be defined as information. For in interacting with electrons, the ejected photon provides information on the interaction that has taken place. Thus, electromagnetic radiation is the transfer of information.

The eye is a measuring instrument. It observes, measures, differentiates and sends information via action potentials to the thalamus and via the corpus callosum and the prefrontal cortex to the visual centre in the cerebral cortex, which is able to process and especially interpret the information it receives. For complex organisms, this was a great evolutionary advantage and of vital importance. How were photons (electromagnetism) containing information on the living environment to be interpreted so that they could be used to interact with that environment? The solution was simple but ingenious. Give each wavelength a ‘colour’, and every object in the environment can be clearly distinguished. It is possible that when vision first developed it was based on the brightness or contrast of electromagnetic radiation, but this was selected out because colours were superior for perception of the environment and for survival.

Colours are universal, even for animals. Conspicuous colours mean ‘keep away, danger.’ Camouflage colours are interpreted as edible. Life forms other than humans have the same colour perception without having learned it. The work of Nobel laureate Yoshinori Ohsumi on the mechanisms of autophagy has shown that the simplest yeast cell uses exactly the same mechanisms for this process, and exactly the same genes, as the much more complex and organised cells of higher organisms—including human beings. When autophagy developed in evolution it turned out to be so useful that all subsequent life forms acquired it. The development of sight was also so successful that all later life forms able to acquire this attribute did so. The interpretation of electromagnetic radiation in colour is therefore universal. But electromagnetic radiation has no colour—interpretation by intricate nerve cell complexes gives the colour.

We can assert that if there were nothing to perceive and interpret electromagnetic radiation as colour, colour would not exist. Colours are an interpretation of observed information.

4.4.2. The Deceptive Brain

Information is interpreted in specialised structures of the brain as a ‘reality vital to life’.

The senses serve as measuring instruments; they perceive, observe, detect and measure and send the information to the brain to be processed and interpreted. But just as electromagnetic radiation has no colour, so too are smell, taste, sound and feeling in the sense of touch, proprioception and our position in space a product of the brain, with the senses as biochemical measuring instruments.

Touch and proprioception place a living organism in a specific spatial position in its environment. Touch also led to the perception of pain, important for promptly interpreting and moving away from harmful stimuli.

Hearing permitted direct interaction with the environment at a distance. It can also provide information when seeing is no longer possible—in darkness and at night.

The same applies to smells—interaction at a distance. So much information floats in the air—the dangerous smell of an enemy, the pheromones of a potential partner, the attractive smell of something edible. Even flowers invest a lot of energy in their fragrances to attract bees and ensure their diversity.

Flavours, too, were developed not to provide us with culinary pleasure but as an absolute necessity and a factor that enables us to distinguish between edible and harmful.

But just as sight and colours are a by-product of neural interpretation, touch, proprioception, sound, smell and taste have no physical identity but are the product of an apparent world that is vital to life, created by the brain to enable it to interact appropriately with the environment.

Take capsaicin, a product of spicy peppers. The mouth contains temperature sensors, which are cells with temperature-sensitive ion channels, TRPA1 and TRPV1, in their cell membrane. These structures prevent tissue damage from hot food and the ion channels are only opened or activated at temperatures of 43 °C or more—temperatures at which protein denaturation and tissue damage occur. Capsaicin has the property that it also activates these channels, but in a manner completely independent of temperature. As it is the TRPA1 and TRPV1 receptors that transmit the signal, the brain interprets this as—‘danger, be careful, too hot.’ We react even in a cool environment as if we were sitting in a sauna. We perspire and activate all the other body mechanisms that reduce our body temperature. And yet none of this has anything to do with temperature, heat or the vibrational energy of atoms and molecules. Capsaicin triggers a well-defined interpretation by the brain without the underlying physical mechanism.
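The capsaicin argument can be restated as a toy model. The sketch below is purely illustrative and not a physiological simulation: it assumes a single heat-sensing channel that opens either at or above the 43 °C threshold quoted in the text or when capsaicin is present, and an interpreter that sees only whether the channel is open. The names `channel_is_open` and `interpret` are hypothetical.

```python
# Toy model of the capsaicin example: the interpreter receives only the
# channel state, never the physical cause. All names are hypothetical.
HEAT_THRESHOLD_C = 43.0  # activation temperature quoted in the text


def channel_is_open(temperature_c: float, capsaicin_present: bool) -> bool:
    """The channel opens on real heat OR on capsaicin, independent of temperature."""
    return temperature_c >= HEAT_THRESHOLD_C or capsaicin_present


def interpret(channel_open: bool) -> str:
    """The 'brain' maps the signal to a meaning without access to its cause."""
    return "too hot" if channel_open else "no heat warning"


hot_food = interpret(channel_is_open(50.0, capsaicin_present=False))
cool_chilli = interpret(channel_is_open(20.0, capsaicin_present=True))
cool_plain = interpret(channel_is_open(20.0, capsaicin_present=False))
print(hot_food, "|", cool_chilli, "|", cool_plain)
```

In this sketch, hot food and a cool chilli yield the same interpretation because the two causes collapse into one signal before interpretation, which is the point the paragraph makes.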

Smell appears to depend on the molecular structure of odorous molecules. However, molecules with a completely different structure can trigger the same smell sensations, while almost identical structures can smell completely different. Recent research has made it clear that the vibration frequency of an odorous molecule is also a determining factor in neural interpretation. Fruit flies easily follow specific odour cues in a maze, but if they encounter a molecule with a bond that has a characteristic vibration of around 66 terahertz, they turn away disgusted and choose another route. So far, the only proposed explanation for this is that at the appropriate vibration frequency an electron in the smell receptor jumps across by quantum tunnelling and activates a biochemical mechanism that triggers a smell sensation in the brain. The molecular vibration is interpreted in the brain as smell [14].
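To place the 66 terahertz figure on the electromagnetic scale discussed earlier, the frequency can be converted into the units usually used for molecular vibrations. This is a minimal sketch assuming only the relations λ = c/f and wavenumber = f/c; the function names are illustrative and not taken from the cited work.

```python
# Convert a bond vibration frequency into wavelength and spectroscopic
# wavenumber. Function names are illustrative.
LIGHT_C = 299_792_458.0  # speed of light in m/s (exact SI value)


def wavelength_micrometres(frequency_hz: float) -> float:
    """Wavelength in micrometres of radiation with the given frequency."""
    return LIGHT_C / frequency_hz * 1e6


def wavenumber_per_cm(frequency_hz: float) -> float:
    """Spectroscopic wavenumber in cm^-1 for the given frequency."""
    return frequency_hz / (LIGHT_C * 100.0)


bond_frequency_hz = 66e12  # the 66 THz vibration quoted in the text
print(f"{wavelength_micrometres(bond_frequency_hz):.2f} micrometres")
print(f"{wavenumber_per_cm(bond_frequency_hz):.0f} cm^-1")
```

The result, a wavelength of about 4.5 micrometres, lies in the infrared, the region the text associates with the vibrational states of atoms and bonds.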

Scientific research has advanced to the point where it is even possible to individually stimulate various parts of a specific zone of the brain with optogenetics. Light-sensitive proteins in combination with integrated fibre-optic-coupled diode/LED technology can stimulate the brain very precisely and specifically. The taste centre contains separate zones for sweet, sour, salty, bitter and umami. Studies show that these tastes do not have any physical basis but are a product of neural activity and biochemical interpretation. When laboratory animals are given a very unpleasant, bitter-tasting substance to drink, but at the same time the sweet zone of their brain is stimulated, they drink to their heart’s content. But when they receive an extremely pleasant, sweet-tasting drink and at the same time the bitter zone is activated, they begin to shiver violently and immediately stop drinking [45].

Neural interpretation of incoming information therefore does not appear to be based on an objective physical reality. The brain seems to do its best to interact as successfully as possible with the environment. That this approach pays off can be seen from how successful our species is and how diverse life on Earth is.

Nothing is what it seems and nothing seems like what it is.

4.5. Primitive Information

Information about the environment through awareness is essential for life, but just as essential was the development of capabilities for storing information and passing it on to future generations. Life developed a complex molecule for this: DNA, deoxyribonucleic acid—whose chemical structure, a double helix, was not elucidated until 1953 by James Watson and Francis Crick. Life has a biochemical information storage capacity. DNA contains not only all the information on the development and construction of an organism, but also primitive information that is vital for survival—instincts. A fine example of this is sea turtles. The mother turtle lays her fertilised eggs outside her familiar protective environment, the ocean, on land, deep in the sand. When the warmth of the sun and the information in the DNA have transformed the egg into a baby turtle, it knows exactly what to do. All on its own, it crawls out of the sand, aware that this is not its habitat, and hurries towards something it has never experienced—the wild ocean. But ‘something tells’ the little creature that this is not something frightening, but the place where it has to go. All the information on what the animal has to do to survive was already there from its very beginning, in the fertilised egg from which it originated.

Not only the collection of information, but also its storage and the ability to pass it on are basic requirements for life and consciousness.

Primitive information creates a genetic code for how to interpret environmental information.

4.6. Extrasensory Perception

Until the end of the 19th century, the world seemed to be completely graspable; some thorough calculations and we would know exactly how everything worked. Until, that is, the first glimpses of a previously completely hidden world became apparent—the world of the infinitesimal. The macroscopic world as it had been felt and experienced until then was found to consist of microcomponents. And the strange thing about these microcomponents was that they appeared to obey completely unfamiliar laws. None of the familiar, known world of physics and its laws seemed to apply. The laws of the very smallest, of quantum physics, were completely counterintuitive. However, it quickly became apparent that these laws gave a more accurate description of ‘reality’ than classical physics.

The world of the very smallest could only be penetrated by building on existing knowledge and information: through mathematics, another product of endless information gathering and evolution (‘a product of human thought’, as Albert Einstein put it), and through measuring instruments capable of ‘extrasensory perception’. This world turned out to be completely astonishing and bewildering. Reality as it was revealed by experiments and increasingly sophisticated measuring equipment was completely different from the familiar world of experience known to us through our senses. For the first time, the world could be explored without the filter of the brain. We could observe wavelengths that we had never been able to see before. We obtained a direct view of reality without interpretation or modification by the brain.

We were able to penetrate ever deeper into the strange reality that appears to lie behind our familiar world of sensory experience.

4.7. Biochemical Information Projection

John Wheeler predicted it, but it was not until the end of the 20th century that it became technically possible: a double-slit experiment with a delayed ‘choice’ quantum eraser. The results were truly astonishing. This experiment confirms the hypothesis in quantum physics that an observation simultaneously creates the relevant past history [46].

From the very first developments in quantum physics, the importance of perception and observation, of measurement, was evident. Today, some 61 fundamental particles with about 20 different properties are known. The prevailing interpretation of quantum physics is still the interpretation of Niels Bohr and the Copenhagen School: a microscopic property has no value unless it is a measured value, and the outcome depends on the measurement procedure. The wholeness postulate holds that the outcome of an experiment depends on the whole setup of the experiment. John Wheeler: “No microscopic property is a property until it is an observed property” [6].

A closed system remains in its quantum state until it is observed. Regarded in this way, the universe must also be in a superposition of all possible states; that is, until life arose, which required direct interaction with the environment to survive. Information was translated into matter. Matter organised itself into information processing and self-reproducibility. Perception of the immediate environment became possible with accompanying quantum collapse. Consciousness arose in its most primitive form, creating a reality. Paul Davies: “Wave function collapse is projection into a single concrete reality” [47].

As life developed it became a growing source of constant perception, observation and measurement. Progressively, a past developed from this—the sum of the states that the resulting mass particles had occupied until then. Observation creates the past but simultaneously closes off the future. From a state of quantum coherence, the classical laws of causality must at all stages be obeyed—and also those of time, which can be regarded as a well-defined progression of physical processes in a specific order, the extremely precise vibration of caesium atoms.

Life and consciousness continued to develop in ever more complex organisms. It took about 3.6997 billion years before an organism began to evolve that was able to combine information and consciousness into intelligence. This organism was not only in touch with the environment and with itself, but also began to influence the environment and manipulate it to its own advantage. Observation, measurement and perception grew exponentially. Extrasensory perception became possible. Ever larger pieces of the universe were brought out of their superposition state. Today’s sophisticated measuring instruments allow us to look further than ever into the past, which thereby takes form, and to penetrate ever deeper into the world of the smallest, the microscopic world where information plays a central role.

All this via matter, produced by nucleosynthesis, capable of a form of organisation called life: matter with the unique property that it can take part in chemical and biochemical reactions. These chemical and biochemical reactions are completely controlled by quantum processes; they are entirely determined by the interplay of electrons, obeying only the laws of quantum physics [14, 48, 49].

We are matter that has organised itself in such a way that it is able to measure and observe, as well as record, process and store, the information of which it consists and from which it originates, and above all to interpret it as vital concepts that have nothing to do with their underlying reality, as in virtual reality, where a complete 3D world arises in a simulation and objects appear to have real existence. Yet the reality behind it, electrical circuits and software, looks nothing like the reality it creates.

Everything appears to consist of information, of microscopic quantum information, from which a biochemical projector distils a reality in which macroscopic objects and phenomena are experienced as having real existence but are not present on a microscopic scale.

John Wheeler raised the idea ‘The world around us is not at all what it seems’ in Geometrodynamics (Academic Press, 1962), where he refers to ‘law without law’, ‘gravity without gravity’, ‘mass without mass’ and ‘elasticity without elasticity’.

Figuring out the truly physical nature of information and the mathematical foundations behind it, together with its links to neuroscience and the brain, will be a major future challenge.

Anton Zeilinger: “The distinction between reality and our knowledge of reality, between reality and information, cannot be made” [50, 51].

5. Closing Remark

Chains of entangled bits shape the DNA of the universe.

Just as the ones and zeros, the binary information in the cloud, do not mean anything in themselves but need hardware and software to be translated, so the qubits, the quantum information that makes up the universe, do not signify anything concrete but are transformed by biochemistry and projection into apparent reality.

Reality, the physical world as we perceive it, is a neural interpretation of perceived information.

The brain holds a mask in front of reality, behind which the real world, that of information and quanta, lies concealed: “the varnish layer,” Erik Verlinde says, “that we discern through our telescopes” [19]. Markus Müller: “A reality emerging from subjective experiences” [30].

We live, not in a virtual reality, but in a virtuality of the real. As a consequence, we will never be able to detect any extraterrestrial life that may exist, as it would have created a reality of its own, different and separate from ours.

Further readings

D. Eagleman, “Livewired. The Inside Story of the Ever-Changing Brain,” Pantheon (2020).

D. Suhr, “Das Mosaik der Menschwerdung. Vom Aufrechten Gang zur Eroberung der Erde. Humanevolution im Überblick,” Springer Berlin Heidelberg (2018).

A. Akhlesh, “Models and modelers of hydrogen,” World Scientific, London (1996).

V. Simulik, “What is the electron?”, Apeiron, Montreal (2005).