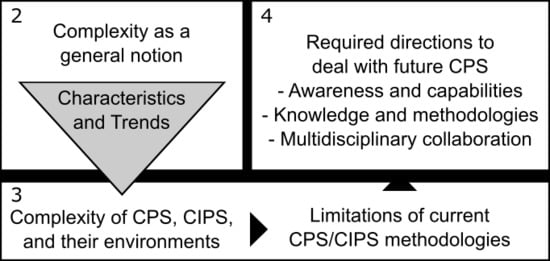

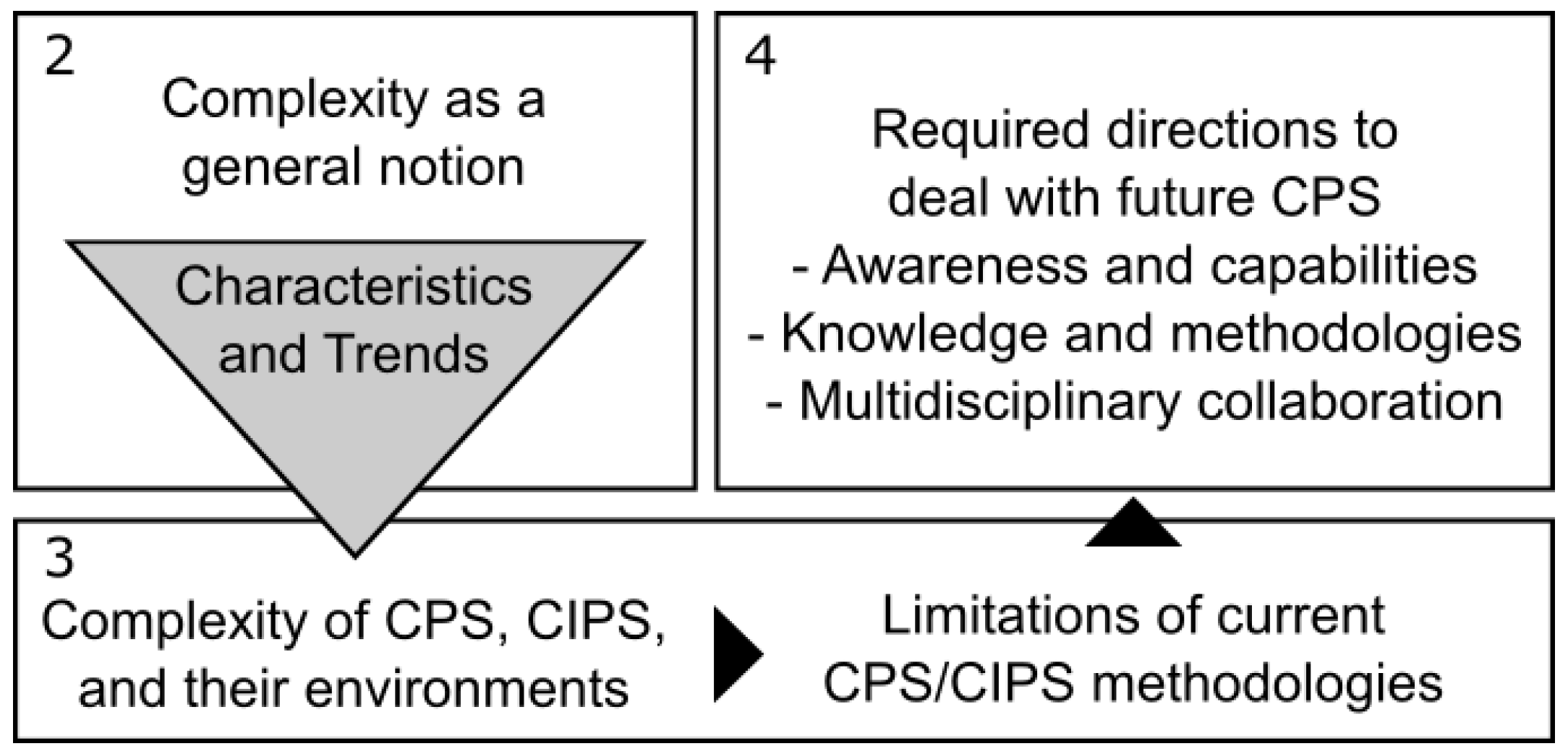

How to Deal with the Complexity of Future Cyber-Physical Systems?

Abstract

1. Introduction

2. Perspectives on Complexity

2.1. Sources of Complexity

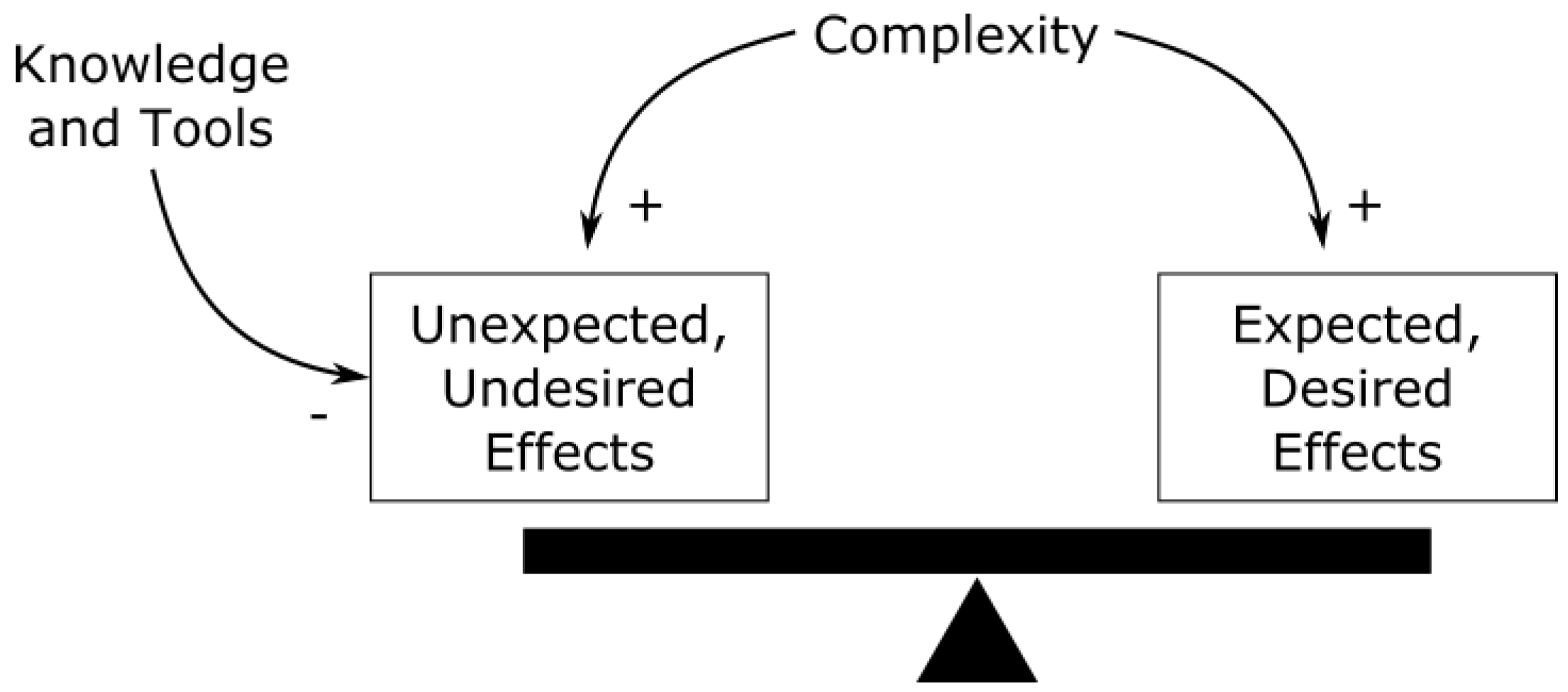

2.2. Effects of Complexity

2.3. Evolution of Complexity

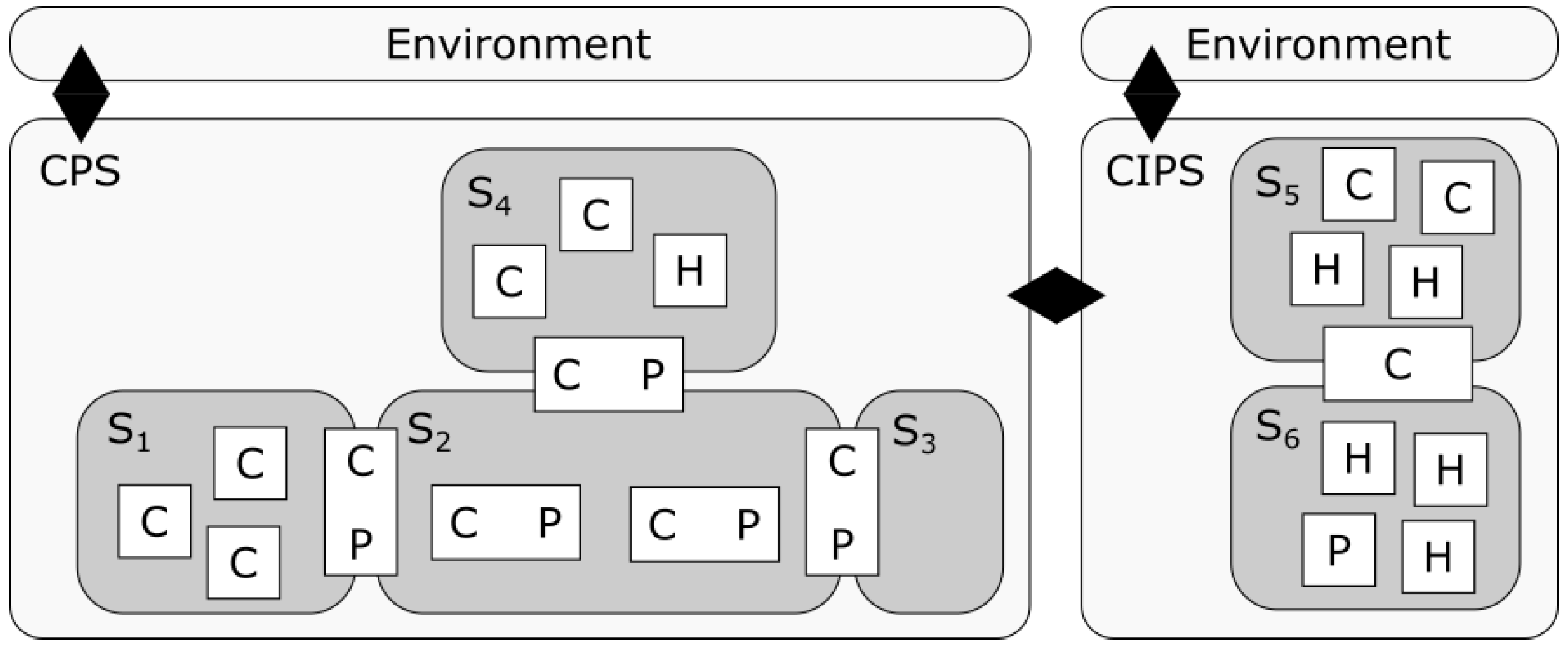

3. Complexity in CPS

3.1. Facets of Complexity

- The environment in which a CPS acts and undertakes tasks corresponding to its functional and extra-functional requirements. Environments and tasks are intimately related to the capabilities of CPS [36].

- The cyber components, where software-defined behaviors and sophisticated electronics platforms lead to very large state-spaces with implications for understanding, maintaining and predicting system behaviors. Unintended effects may arise from behaviors and assumptions that refer to other software components, resource sharing and the CPS environment. Interactions between software and the electronics platforms, and the reuse of legacy components (sometimes black-box or poorly documented/understood), further contribute to complexity. Interactions between components in computer systems include both local and global interactions and exhibit much faster characteristic timescales than physical interactions [10].

- The physical components, where a key source of complexity arises from side effects that can be of the same order of magnitude as the intended behavior (e.g., friction-induced thermal effects between surfaces in contact) [37]. Component interactions and side effects are moreover manifold (e.g., motion, heat, electromagnetism) and are characterized by strong local effects.

- Interactions between the cyber and physical components. Combining cyber and physical components enables feedback and adaptive systems, providing cost-efficient capabilities that would otherwise be impossible, but at the price of more complex behaviors (e.g., hybrid real-time systems), including a multitude of possible faults and failure modes. As a particular characteristic, a CPS has a multiplicity of interfaces and interrelations that encompass both explicit and implicit dependencies among CPS components, and between the CPS and its environment [10]. Correspondingly, a change in one part of a system may affect many others, producing unexpected or undesired side effects because of the close coupling and tight integration. The integration of cyber and physical components moreover requires reconciling different worldviews and traditions (see e.g., [38]). The lack of timing (real-time) abstractions for software systems is a key limitation for predictability [39].
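
To illustrate how a change in one part of a CPS may reach many others through explicit and implicit dependencies, the following minimal sketch computes the set of components potentially affected by a change. The component names and the dependency map are hypothetical and chosen only for illustration; they are not taken from the article.

```python
from collections import deque

# Hypothetical dependency map (an assumption for illustration only).
# An entry A -> [B, ...] means "a change in A may affect B".
DEPENDENTS = {
    "sensor": ["state_estimator"],
    "state_estimator": ["controller", "diagnostics"],
    "controller": ["actuator"],
    "actuator": ["plant"],   # cyber-to-physical interaction
    "plant": ["sensor"],     # feedback loop closes through the physical world
    "diagnostics": [],
    "logger": [],
}


def affected_by(changed, dependents):
    """Return every component reachable from `changed` (breadth-first search).

    The changed component itself is excluded from the result, even when a
    feedback loop leads back to it.
    """
    seen = set()
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for nxt in dependents.get(node, []):
            if nxt != changed and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    for component in ("controller", "logger"):
        print(component, "->", sorted(affected_by(component, DEPENDENTS)))
```

In this toy map, a change to the controller reaches every component on the feedback loop, whereas a change to the isolated logger reaches none. Implicit dependencies (e.g., shared resources or physical side effects) would have to be added as further edges, and these are typically the hardest to identify.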

3.2. Evolution of Future CPS

- Technological areas such as physical, embedded, networked and information (e.g., cloud and edge computing) systems,

- Standalone systems, for example integrating vehicles and infrastructure to form intelligent transportation systems, and

- Life-cycle stages, in particular making data available throughout the life-cycle and enabling software upgrades. These concepts are closely related to so-called DevOps (Development-Operations integration), which is associated with continuous software development, integration, and deployment with feedback from operational systems.

3.3. Limitations of Existing Methodologies

- Systems and components: architecture, design and integration,

- Connectivity and interoperability,

- Safety, security and reliability,

- Computing and storage,

- Electronics process technology, equipment, materials and manufacturing.

- Composability across components, disciplines and aspects. When composing CPS components, multiple technologies need to be integrated (electrical, mechanical, hydraulic, software, etc.) and multiple aspects need to be reconciled, for example properties such as cost, safety and availability. When reasoning about such compositions, multiple theories apply, each focusing on one or more aspects of integration (e.g., logic-interface automata, composing transfer functions, scheduling, overall reliability, etc.). Real and successful composition must consider all these aspects, including the characteristic side effects and dependencies of CPS. No comprehensive theory or methodology exists for this. Current best practices can manage systems of today’s complexity, albeit using heuristics and with questionable cost-efficiency. Beyond composition at design or production time, CPS will be increasingly adaptive and able to reconfigure during run-time. There is thus a need to improve interoperability and to reason about composability over multiple aspects at run-time as well. In a similar vein, with the use of DevOps and learning systems in CPS, the software behaviors of parts of the CPS will change during operation, requiring the ability to monitor and ensure proper operation, see e.g., [1,8].

- Human-CPS integration. Regardless of the system type and level of automation, humans acting as developers, operators, users, and maintainers will increasingly have to deal with and interact with CPS. Increasing levels of automation pose challenges for human-machine interaction, e.g., in cases where humans (e.g., pilots in an aircraft) may still be responsible for acting in emergency situations. We are currently transitioning to increasing levels of automation and smarter systems, and the lessons learned from the history of automation remain highly relevant for improving support for humans interacting with highly capable CPS [53]. As an example of a related challenge in automated driving, leading companies have abandoned the so-called “level 3” of automated driving, moving directly to “level 4” where the automation system is also responsible for fall-back maneuvers, see e.g., [54]. A key notion for human-CPS integration is that of intent, i.e., the understanding of what drives the action or inaction of an agent. There is also a need to identify deviations from the normal behavior of such (human) agents, in particular behavior that leads to decisions or actions of significance for the functioning of CPS [8] (e.g., Chapter 5.3.3).

- AI within CPS. While AI and machine learning technologies enable entirely new types of applications, the use of machine learning, in particular neural networks, raises concerns about how to deal with transparency (black-box behavior), robustness and predictability (e.g., when data lies outside the training set), and how to cost-efficiently verify, validate and assure such systems, see for example [55,56].

- Trustworthiness. Trust involves properties such as privacy, cybersecurity, safety and availability, which affect each other and therefore require new holistic methodologies. Security risks already exist for current CPS and will increase as CPS become even more widely adopted and connected, and as they make increasing use of open source software. Absolute safety and security will not be possible, so on-line measures are necessary to deal with security breaches and safety-related anomalies. Moreover, the increasing deployment and use of CPS increases the importance of their availability, implying that traditional fail-safe solutions are not an option. Future systems must be fault-tolerant while balancing the complexity increase due to the introduction of redundancy, adaptability and fall-back measures. Finally, issues related to liability, ethics and assurance are recognized as essential. Who, if anyone, will or should take responsibility when a complex CPS fails, what are the ethical considerations of decisions made by highly automated systems, and what is required of an assurance case for a future complex CPS? [8]

- CPSoS. Future CPS are likely to form part of cyber-physical systems of systems (CPSoS). Because of their novelty and scale, such systems may also relate to multiple domains and require consideration of a larger set of stakeholders, jurisdictions, regulations and standards [9].
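
The composability item above names overall reliability as one of the theories that apply when composing components, and the trustworthiness item notes that redundancy improves fault tolerance at the price of added complexity. As a minimal sketch of that single aspect, the textbook series and parallel reliability formulas can be written as follows. The sketch assumes statistically independent component failures, which is a strong assumption that the side effects and dependencies of CPS often violate, and the numeric values are hypothetical.

```python
from math import prod


def series(reliabilities):
    """Reliability of a chain in which every component must work.

    Assumes independent failures: R = R_1 * R_2 * ... * R_n.
    """
    return prod(reliabilities)


def parallel(reliabilities):
    """Reliability of a redundant group in which one working component suffices.

    Assumes independent failures: R = 1 - (1 - R_1) * ... * (1 - R_n).
    """
    return 1.0 - prod(1.0 - r for r in reliabilities)


# Hypothetical component reliabilities (illustrative values only).
sensor, controller, actuator = 0.99, 0.999, 0.98

# Single channel: sensor, controller and actuator must all work.
single_channel = series([sensor, controller, actuator])

# Same channel with a duplicated sensor: more parts, higher reliability.
dual_sensor_channel = series([parallel([sensor, sensor]), controller, actuator])

if __name__ == "__main__":
    print(f"single channel:      {single_channel:.6f}")
    print(f"dual-sensor channel: {dual_sensor_channel:.6f}")
```

The calculation covers only one aspect of composition. It says nothing about cost, timing, or common-cause failures introduced by the redundancy itself, which is precisely why the text calls for methodologies that reconcile multiple aspects.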

4. Addressing Limitations to Complexity

- Complexity awareness by people and organizations, as well as improving training, leveraging existing knowledge and best practices,

- Research towards establishing CPS foundations that address the identified limitations of current CPS methodologies,

- Multi-disciplinary collaboration for the above-mentioned educational and research efforts, and also in terms of exchange of best practices across industrial domains and between academia and industry.

5. Discussion and Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Damm, W.; Sztipanovits, J.; Baras, J.S.; Beetz, K.; Bensalem, S.; Broy, M.; Grosu, R.; Krogh, B.H.; Lee, I.; Ruess, H.; et al. Towards a Cross-Cutting Science of Cyber-Physical Systems for Mastering all-Important Engineering Challenges. 2016. Available online: https://cps-vo.org/node/27006 (accessed on 4 August 2018).

- Lee, E.A.; Seshia, S.A. Introduction to Embedded Systems: A Cyber-Physical Systems Approach, 2nd ed.; MIT Press: Cambridge, MA, USA, 2017.

- Cengarle, M.V.; Bensalem, S.; McDermid, J.; Passerone, R.; Sangiovanni-Vincentelli, A.; Törngren, M. Characteristics, Capabilities, Potential Applications of Cyber-Physical Systems: A Preliminary Analysis. 2013. Available online: http://www.cyphers.eu/sites/default/files/D2.1.pdf (accessed on 11 July 2018).

- Cyber. Merriam-Webster Dictionary; Merriam-Webster: Springfield, MA, USA, 2018.

- Wiener, N. Cybernetics. Sci. Am. 1948, 179, 14–19.

- Platforms4CPS. Foundations of CPS—Related Work. 2017. Available online: https://platforum.proj.kth.se/tiki-index.php?page=Foundations+of+CPS+-+Related+Work (accessed on 11 July 2018).

- Schätz, B.; Törngren, M.; Bensalem, S.; Cengarle, M.V.; Pfeifer, H.; McDermid, J.; Passerone, R.; Sangiovanni-Vincentelli, A. Research Agenda and Recommendations for Action. 2015. Available online: http://cyphers.eu/sites/default/files/d6.1+2-report.pdf (accessed on 20 October 2018).

- AENEAS; ARTEMIS Industry Association; EPoSS. Strategic Research Agenda for Electronic Components and Systems. 2018. Available online: https://efecs.eu/publication/download/ecs-sra-2018.pdf (accessed on 20 October 2018).

- Engells, S. European Research Agenda for Cyber-Physical Systems of Systems and Their Engineering Needs. 2015. Available online: http://www.cpsos.eu/wp-content/uploads/2016/06/CPSoS-D3.2-Policy-Proposal-European-Research-Agenda-for-CPSoS-and-their-engineering-needs.pdf (accessed on 10 August 2018).

- Törngren, M.; Sellgren, U. Complexity Challenges in Development of Cyber-Physical Systems. In Principles of Modeling; Lohstroh, M., Derler, P., Sirjani, M., Eds.; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2018; Volume 10760.

- Grogan, P.T.; de Weck, O.L. Collaboration and Complexity: An experiment on the effect of multi-actor coupled design. Res. Eng. Des. 2016, 27, 221–235.

- Ullman, D.G. Robust Decision-making for Engineering Design. J. Eng. Des. 2001, 12, 3–13.

- Snowden, D.J.; Boone, M.E. A Leader’s Framework for Decision Making. Harv. Bus. Rev. 2007, 85, 68–76.

- Jackson, M.; Keys, P. Towards a System of Systems Methodologies. J. Oper. Res. Soc. 1984, 35, 473–486.

- Bashir, H.A.; Thomson, V. Models for estimating design effort and time. Des. Stud. 2001, 22, 141–155.

- Suh, N.P. A theory of complexity, periodicity and the design axioms. Res. Eng. Des. 1999, 11, 116–132.

- Schlindwein, S.L.; Ison, R. Human knowing and perceived complexity: Implications for systems practice. Emerg. Complex. Organ. 2004, 6, 27–32.

- Brooks, F.P. No Silver Bullet: Essence and Accidents of Software Engineering. IEEE Comput. 1987, 20, 10–19.

- Summers, J.D.; Shah, J.J. Mechanical engineering design complexity metrics: Size, coupling, and solvability. J. Mech. Des. 2010, 132, 021004.

- Braha, D.; Maimon, O. A Mathematical Theory of Design: Foundations, Algorithms and Applications; Springer Science & Business Media: Dordrecht, The Netherlands, 1998.

- Arena, M.V.; Younossi, O.; Brancato, K.; Blickstein, I.; Grammich, C.A. Why Has the Cost of Fixed-Wing Aircraft Risen? A Macroscopic Examination of the Trends in U.S. Military Aircraft Costs over the Past Several Decades; Monograph MG-696-NAVY/AF; RAND Corporation: Santa Monica, CA, USA, 2008.

- Albrecht, A.J.; Gaffney, J.E. Software Function, Source Lines of Code, and Development Effort Prediction: A Software Science Validation. IEEE Trans. Softw. Eng. 1983, SE-9, 639–648.

- McCabe, T.J. A Complexity Measure. IEEE Trans. Softw. Eng. 1976, 4, 308–320.

- Sinha, K.; de Weck, O.L. Empirical Validation of Structural Complexity Metric and Complexity Management for Engineering Systems. Syst. Eng. 2016, 19, 193–206.

- Deshmukh, A.V.; Talavage, J.J.; Barash, M.M. Complexity in Manufacturing Systems. Part 1: Analysis of Static Complexity. IIE Trans. 1998, 30, 645–655.

- Simon, H.A. The Steam Engine and the Computer: What Makes Technology Revolutionary. Comput. People 1987, 36, 7–11.

- Koh, H.; Magee, C.L. A functional approach for studying technological progress: Application to information technology. Technol. Forecast. Soc. Chang. 2006, 73, 1061–1083.

- Koh, H.; Magee, C.L. A functional approach for studying technological progress: Extension to energy technology. Technol. Forecast. Soc. Chang. 2008, 75, 735–758.

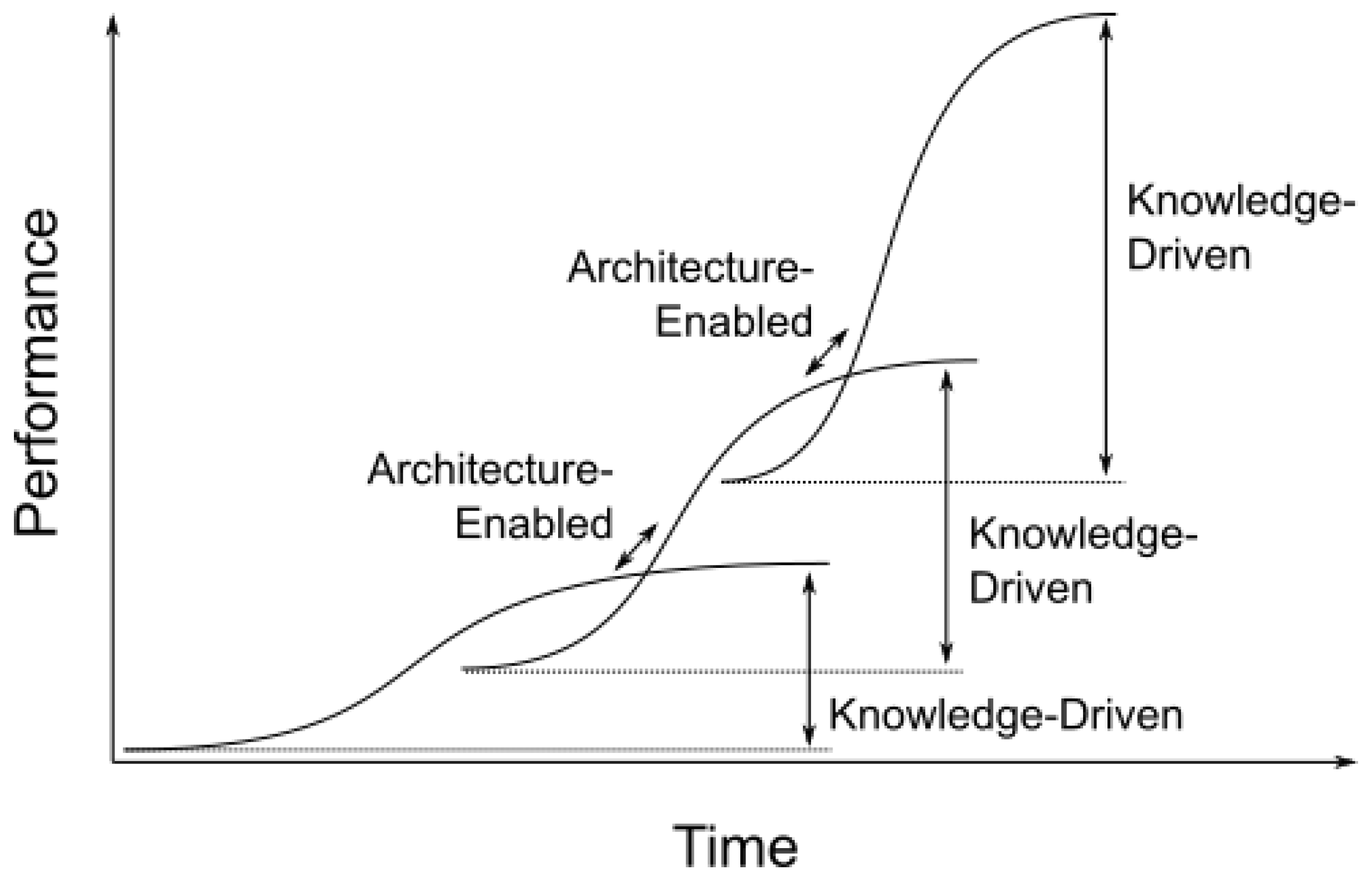

- Christensen, C.M. Exploring the Limits of the Technology S-Curve. Part I: Component Technologies. Prod. Oper. Manag. 1992, 1, 334–357.

- Christensen, C.M. Exploring the Limits of the Technology S-Curve. Part II: Architectural Technologies. Prod. Oper. Manag. 1992, 1, 358–366.

- Kopetz, H. Real-Time Systems: Design Principles for Distributed Embedded Applications, 1st ed.; Kluwer Academic Press: Dordrecht, The Netherlands, 1997.

- Baldwin, C.Y.; Clark, K.B. Design Rules: The Power of Modularity; MIT Press: Cambridge, MA, USA, 2000.

- Doyle, J.C.; Csete, M. Architecture, constraints, and behavior. Proc. Natl. Acad. Sci. USA 2011, 108, 15624–15630.

- Maier, M.W. The Art of Systems Architecting, 3rd ed.; CRC Press: Boca Raton, FL, USA, 2009.

- Eppinger, S.D.; Salminen, V. Patterns of Product Development Interactions. In Proceedings of the International Conference on Engineering Design, Glasgow, UK, 21–23 August 2001.

- Simon, H. The Sciences of the Artificial, 3rd ed.; MIT Press: Cambridge, MA, USA, 1996.

- Whitney, D.E. Why mechanical design cannot be like VLSI design. Res. Eng. Des. 1996, 8, 125–138.

- Derler, P.; Lee, E.A.; Törngren, M.; Tripakis, S. Cyber-Physical System Design Contracts. In Proceedings of the ICCPS ’13: ACM/IEEE 4th International Conference on Cyber-Physical Systems, Philadelphia, PA, USA, 8–11 April 2013.

- Lee, E.A. Computing Needs Time. Commun. ACM 2009, 52, 70–79.

- National Institute of Standards and Technology, Cyber Physical Systems Public Working Group. Framework for Cyber-Physical Systems—Release 1.0. 2016. Available online: https://pages.nist.gov/cpspwg/ (accessed on 4 August 2018).

- Johansson, K.H.; Törngren, M.; Nielsen, L. Vehicle Applications of Controller Area Network. In Handbook of Networked and Embedded Control Systems; Birkhäuser Boston: Boston, MA, USA, 2005; pp. 741–765.

- MacCormack, A.; Baldwin, C.; Rusnak, J. Exploring the duality between product and organizational architecture: A test of the “mirroring” hypothesis. Res. Policy 2012, 41, 1309–1324.

- Adamsson, N. Interdisciplinary Integration in Complex Product Development: Managerial Implications of Embedding Software in Manufactured Goods. Ph.D. Thesis, Department of Machine Design, KTH Royal Institute of Technology, Stockholm, Sweden, 2007.

- Sillitto, H. Architecting Systems: Concepts, Principles and Practice. Volume 6: Systems; College Publications: London, UK, 2014.

- Networking and Information Technology Research and Development Subcommittee. The National Artificial Intelligence Research and Development Strategic Plan; Executive Office of the President of the United States: Washington, DC, USA, 2016.

- Zhang, M.; Selic, B.; Ali, S.; Yue, T.; Okariz, O.; Norgren, R. Understanding Uncertainty in Cyber-Physical Systems: A Conceptual Model. In Proceedings of the 12th European Conference on Modelling Foundations and Applications, Vienna, Austria, 4–8 July 2016; Springer-Verlag: Berlin/Heidelberg, Germany, 2016; Volume 9764, pp. 247–264.

- Rajkumar, R.; Lee, I.; Sha, L.; Stankovic, J. Cyber-physical systems: The next computing revolution. In Proceedings of the 47th Design Automation Conference, Anaheim, CA, USA, 13–18 June 2010; IEEE: Anaheim, CA, USA, 2010.

- Horváth, I.; Rusák, Z.; Li, Y. Order beyond chaos: Introducing the notion of generation to characterize the continuously evolving implementations of cyber-physical systems. In Proceedings of the ASME 2017 International Design Engineering Technical Conferences and Computers and Information in Engineering Conference, Cleveland, OH, USA, 6–9 August 2017; ASME: Cleveland, OH, USA, 2017.

- Satyanarayanan, M. The Emergence of Edge Computing. IEEE Comput. 2017, 50, 30–39.

- Jacobson, I.; Lawson, H. Software and systems. In Software Engineering in the Systems Context: Addressing Frontiers, Practice and Education; College Publications, 2015; Volume 6, Chapter 1.

- National Academies of Sciences, Engineering, and Medicine. A 21st Century Cyber-Physical Systems Education; The National Academies Press: Washington, DC, USA, 2016.

- Acatech National Academy of Science and Engineering. Living in a Networked World. Integrated Research Agenda Cyber-Physical Systems (agendaCPS). 2015. Available online: http://www.cyphers.eu/sites/default/files/acatech_STUDIE_agendaCPS_eng_ANSICHT.pdf (accessed on 4 August 2018).

- Bainbridge, L. Ironies of automation. Automatica 1983, 19, 775–779.

- Waymo. Waymo Safety Report: On the road to Fully Self-Driving. 2018. Available online: https://storage.googleapis.com/sdc-prod/v1/safety-report/Safety%20Report%202018.pdf (accessed on 30 August 2018).

- Wagner, M.; Koopman, P. A Philosophy for Developing Trust in Self-driving cars. In Road Vehicle Automation 2; Meyer, G., Beiker, S., Eds.; Lecture Notes in Mobility; Springer: Cham, Switzerland, 2015; pp. 163–171.

- Amodei, D.; Olah, C.; Steinhardt, J.; Christiano, P.; Schulman, J.; Mané, D. Concrete Problems in AI Safety. arXiv, 2016; arXiv:1606.06565.

- Sheard, S.A.; Mostashari, A. A Complexity Typology for Systems Engineering. In Proceedings of the INCOSE International Symposium, Chicago, IL, USA, 12–15 July 2010.

- Törngren, M.; Grimheden, M.E.; Gustafsson, J.; Birk, W. Strategies and considerations in shaping cyber-physical systems education. ACM SIGBED Rev. 2016, 14, 53–60.

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Törngren, M.; Grogan, P.T. How to Deal with the Complexity of Future Cyber-Physical Systems? Designs 2018, 2, 40. https://doi.org/10.3390/designs2040040