1. Introduction

In everyday life, as laymen, we tend to think of attention as a unitary concept, similar to memory or perception: we recall paying attention to something we saw or heard. Analysis of the concept needs to keep this unity in mind while breaking down its elements. In this article, I discuss paying attention in terms of selection, which guides action by privileging some things at the expense of others (James [1]). It is possible to formalize this idea narrowly in terms of a relationship between input and output; attention discards some inputs and selects others for further processing (Broadbent [2]), although since attention can also attenuate inputs (Treisman and Geffen [3]), it may be graded, not just all-or-none. This paper first offers some general models of selective attention that are, in order of increasing specificity, prescriptive and descriptive, and can be made predictive if sufficiently delimited. Examples are then given in the visual domain. The discussion at the end compares these models to other ways in which attention may potentiate information. I conclude that about half of the current meanings of “attention” can be related to selection, as defined here, but selection alone is not general enough to satisfy lay intuition.

As a mental construct, selective attention (e.g., concentration, focusing, activation, selection) can be distinguished from attention as synthesis (i.e., feature integration; Treisman and Gelade [4]), attention as effort (Kahneman [5]), attention as a processing mode (top-down, bottom-up; endogenous or exogenous; parallel, serial, or hybrid; Palmer [6]; Carrasco [7]), attention as an individual difference (being attentive or distractible; global or analytic; Murphy [8]), attention as “stimulus control” (attending to a stimulus means being controlled by it; e.g., Dinsmoor [9]), attention as a philosophical construct (choice or free will; awareness or consciousness; James [1]), and attention as a naive explanation, as in, “I didn’t look carefully after I stopped paying attention”. Attention can also be studied as a neurological construct (a change in single cell responses or in gross recordings like the EEG, VEP, or BOLD response) and as exemplifying underlying neural processes such as gain control and normalization (Desimone and Duncan [10]; Denison, Carrasco, and Heeger [11]). All of these usages can be found to apply in studies of vision, visual perception, and visual memory. Since they cover such a disparate range of concepts, it seems that no theory of attention can draw all of them into a larger scheme. Here, I will argue that selection, as a basic process, encompasses some of these, consistent with the lay intuition that attention is unitary. Concentrating on visual selective attention allows me to narrow the field to a well-studied area, but the ideas could also be applied to attention to sound and smell. Attention to internal sensations such as pain and pleasure should be included in a comprehensive treatment, but is excluded here.

2. A Prescriptive Model

The purpose of a prescriptive model or “framework” is to define terms sufficiently and precisely so that it can be determined whether or not an event or process meets the requirements of the model. Such a model relies on rules and definitions and is a priori, that is, it has no necessary application. Ideally, a prescriptive model is simple to state, even if complex to apply. To prescribe rules for attentional selection, let $\mathbf{y} = w(\mathbf{x})$, where $\mathbf{x}$ is an input sequence and $\mathbf{y}$ is an output sequence, and the operation $w(\cdot)$ captures the effect of attention (bold-face letters like $\mathbf{x}$ denote arrays; normal letters like $x$ denote elements). In a “snap-shot world”, $\mathbf{x}$ and $\mathbf{y}$ are discrete sequences which can be indexed to denote distinct episodes (times, places, or qualities), such that each episode, indexed by the subscript $t$, is independent of preceding and succeeding episodes, so $y_t = w_t(x_t)$. This postulate needs modification when inputs and actions are continuous changes or flows (Gibson [12]), but it is realistic for discrete tasks such as reading text or bird-spotting. In intermediate cases, each episode may bleed a fraction into the next one, i.e., $y_t = w_t(x_t + \alpha x_{t-1})$, where $0 \le \alpha < 1$, as in attending to phonetic variants in speech (Miller, Green, and Reeves [13]), or to slow changes in color (Callahan-Flintoft, Holcombe, and Wyble [14]). Discreteness leads to the following:

Definition 1. Let $\mathbf{x}$ be an input sequence and $\mathbf{y}$ an output sequence, where $\mathbf{x} = [x_1, x_2, \ldots, x_t, \ldots, x_T]$, with $1 \le t \le T$, denotes successive inputs (indexed by moments or places), and $\mathbf{y} = [y_1, y_2, \ldots, y_t, \ldots, y_T]$ denotes the corresponding outputs, or actions consequent on them.

Not every input generates an output, so let $y_t = [\,]$ be null in the case of no output. Then each sequence has from 1 to $T$ corresponding “episodes”, using the term episode to cover both input and output events.

Attention (A) is assumed to select inputs from $\mathbf{x}$ by weighting them by $\mathbf{w}$, as in the “theory of visual attention” (TVA: Bundesen [15]; Bundesen, Vangkilde, and Petersen [16]), where $\mathbf{w} = [w_1, w_2, \ldots, w_t, \ldots, w_T]$, so the selected input is $\mathbf{x}\mathbf{w}$.

Using a product implies that $\mathbf{w}$ and $\mathbf{x}$ are both numerical, the weights being applied to the strengths of the input events. The strength of each $x_t$ is determined by both sensory and perceptual factors and by top-down factors such as priming or long-term memory effects like word frequency. In TVA, this concept is captured by assuming that bottom-up (feature contrast) and top-down pertinence (priming, memory, feature relevance) factors also multiply together. Here, I do not need this additional assumption because the purpose of selective attention is to select objects or events that already have defined strengths, whether or not these depend on multiplicative factors.

The weight for episode $t$ is applied to both $x_t$ and $\alpha x_{t-1}$, such that $y_t = w_t(x_t + \alpha x_{t-1})$, if bleeding ($\alpha > 0$) occurs at a sensory or perceptual stage prior to the assignment of attentional weights; otherwise, $y_t = w_t x_t + \alpha w_{t-1} x_{t-1}$. In pure all-or-none selection, $\alpha = 0$ and $w_t \in \{0, 1\}$; in graded selection, $0 \le \alpha < 1$ and $w_t < 1.0$, where $w_t$ indicates the extent of attenuation. In the graded case, inputs can be removed by zeroing any $w_t < \beta$, where $\beta$ is a threshold value, to permit some-or-none selection.

Suppression may also occur in the graded case, if $-1 \le w_t < 0$ for some $t$. In this case, an item is selected but then actively rejected (for example, classified as a distractor in visual search, or as a flanker in crowding), yet it interferes with processing, slowing search (Wolfe [17]) or negatively priming later items (Tipper [18]). Truly rejected items, as in the all-or-none case, have $w_t = 0$ and no further role to play, positive or negative.
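The weighting rules above can be illustrated with a minimal Python sketch, assuming numerical input strengths. The function name `select` and its arguments are hypothetical and are not part of the model or of TVA; the flag `bleed_before_weighting` switches between the two placements of α described in the text. One further assumption is made here: the threshold β is applied only to non-negative weights, so that negative (suppressive) weights survive thresholding.

```python
from typing import List


def select(x: List[float], w: List[float], alpha: float = 0.0,
           beta: float = 0.0, bleed_before_weighting: bool = True) -> List[float]:
    """Return outputs y_t for input strengths x_t under attentional weights w_t.

    Hypothetical helper. A rejected episode is returned as 0.0, standing in
    for the null output y_t = [].
    """
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must satisfy 0 <= alpha < 1")
    # Assumption: only weights in [0, beta) are zeroed; suppression (w_t < 0) is kept.
    w_eff = [0.0 if 0.0 <= wt < beta else wt for wt in w]
    y = []
    for t, (xt, wt) in enumerate(zip(x, w_eff)):
        x_prev = x[t - 1] if t > 0 else 0.0
        w_prev = w_eff[t - 1] if t > 0 else 0.0
        if bleed_before_weighting:
            # Bleeding at a sensory stage: y_t = w_t (x_t + alpha x_{t-1})
            y.append(wt * (xt + alpha * x_prev))
        else:
            # Bleeding after weighting: y_t = w_t x_t + alpha w_{t-1} x_{t-1}
            y.append(wt * xt + alpha * w_prev * x_prev)
    return y


# All-or-none selection: the middle episode is rejected.
print(select([1.0, 2.0, 3.0], [1.0, 0.0, 1.0]))
# Graded selection with a threshold and one suppressed item.
print(select([1.0, 1.0, 1.0], [0.9, 0.2, -0.5], beta=0.3))
```

With post-weighting bleed, a rejected episode can still carry a residue of its predecessor, which is one observable difference between the two placements of α.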

Costs. Generating an output $\mathbf{y}$ imposes a computational cost, $\mathbf{c}$, where $\mathbf{c} = [c_1, c_2, \ldots, c_t, \ldots, c_T]$. Beyond the fixed cost $F_c > 0$ of applying a weighting scheme, rejected items impose no further cost, so if $w_t = 0$, or if $0 < w_t < \beta$ in the graded case, then $w_t c_t = 0$. More highly weighted inputs are assumed to require more processing and thus impose proportionally greater costs. The total cost is then $C = \sum_t x_t |w_t| c_t + F_c$, that is, $\mathbf{x}$ and $\mathbf{c}$ multiply the absolute value, $|\mathbf{w}|$, as cost also increases with suppression, if suppression occurs. The assumption that costs increase with weights is prescriptive, since by “selection” is meant that less-attended inputs are treated more superficially than inputs that receive focused attention and are processed more deeply. Requiring a subject to report a feature of one item in a to-be-ignored stream of items could violate the cost assumption and undermine “selection”.
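As a rough illustration of the cost rule, the following Python sketch sums the per-episode costs and adds the fixed cost. The name `total_cost` and the example numbers are assumptions made for illustration only.

```python
from typing import Sequence


def total_cost(x: Sequence[float], w: Sequence[float], c: Sequence[float],
               fixed_cost: float, beta: float = 0.0) -> float:
    """Total cost C = sum_t x_t * |w_t| * c_t + F_c (hypothetical helper).

    Episodes with 0 <= w_t < beta count as rejected and add nothing
    beyond the fixed cost of applying the weighting scheme.
    """
    if not (len(x) == len(w) == len(c)):
        raise ValueError("x, w and c must have the same length")
    if fixed_cost <= 0:
        raise ValueError("the fixed cost F_c must be positive")
    total = fixed_cost
    for xt, wt, ct in zip(x, w, c):
        if 0.0 <= wt < beta:
            continue  # rejected item: no further cost
        total += xt * abs(wt) * ct  # |w_t|: suppression is costly too
    return total


# One attended item, one rejected item, one suppressed item.
print(total_cost([1.0, 2.0, 3.0], [1.0, 0.0, -0.5], [2.0, 2.0, 2.0], fixed_cost=1.0))
```

Using the absolute value of the weight means that actively suppressing an item costs as much as attending to it with the same strength, which matches the prescriptive assumption stated above.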

Error. Let the actor have a definite output goal $\mathbf{y}'$, such that $\mathbf{y}' = [y'_1, y'_2, \ldots, y'_t, \ldots, y'_T]$. The error in approximating each goal is $y_t - y'_t$ and the total error is $E = \sqrt{\sum_t (y_t - y'_t)^2}$. Here, the term “error” refers to missing the goal and does not necessarily imply knowledge of a correct solution, as indicated by the term “mistake”. Rather, it implies that desired elements may be overlooked. If the goal is to satisfy an objective criterion, however, as in tracking a moving target, then $E$ may include mistakes and prediction errors (as noted by a reviewer). Selection implies minimizing the total error and the total cost, i.e., $G = \lambda C + (1 - \lambda)E$, where the parameter $\lambda$ weights the cost (or processing time) versus the error. Speed–accuracy trade-offs (SATOs) were first demonstrated in 1973 by Reed [19]; $d'$ grew as a negatively accelerated function of retrieval delay from 0.5 s to 2 s in a memory experiment with the delay filled to prevent rehearsal. Such SATOs, which have also been shown in visual search (McElree and Carrasco [20]), illustrate variation in $\lambda$. The prescriptive attention model is then

$$M = \{A, \mathbf{x}, \mathbf{w}, \mathbf{y}, \mathbf{y}', \mathbf{c}, \lambda\} \tag{1}$$

where “A” is a place-holder for a detailed model of attention and its control parameters.

If Equation (1) is accepted as a prescriptive model, then selection fails if A is undefined, the subject does not know how to attend to x, or w or y’ or c is unspecified, or there is no output y. Moreover, 0 < λ < 1.0, since if λ = 1, error does not matter and behavior is arbitrary, and if λ = 0, the system need not select at all, as doing so only increases error. Absent or unmotivated responses can coexist with passive awareness, but not with selective attention.
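The trade-off expressed by G can be made concrete with a short Python sketch. The function names and the two candidate strategies are hypothetical; null outputs are assumed to be coded as 0 so that the error is numerical.

```python
import math
from typing import Sequence


def total_error(y: Sequence[float], y_goal: Sequence[float]) -> float:
    """E = sqrt(sum_t (y_t - y'_t)^2), with null outputs coded as 0 (assumption)."""
    if len(y) != len(y_goal):
        raise ValueError("y and y_goal must have the same length")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, y_goal)))


def objective(cost: float, error: float, lam: float) -> float:
    """G = lam * C + (1 - lam) * E, defined only for 0 < lam < 1."""
    if not 0.0 < lam < 1.0:
        raise ValueError("lambda must lie strictly between 0 and 1")
    return lam * cost + (1.0 - lam) * error


# Two hypothetical strategies: 'careful' is costly but exact, 'quick' is cheap but errs.
careful = {"cost": 6.0, "error": total_error([1.0, 0.0, 3.0], [1.0, 0.0, 3.0])}
quick = {"cost": 2.0, "error": total_error([1.0, 0.0, 0.0], [1.0, 0.0, 3.0])}

for lam in (0.2, 0.8):
    g_careful = objective(careful["cost"], careful["error"], lam)
    g_quick = objective(quick["cost"], quick["error"], lam)
    print(lam, "careful" if g_careful < g_quick else "quick")
```

In this toy case a low λ favors the careful strategy and a high λ favors the quick one, and the boundary values λ = 0 and λ = 1 are rejected outright, in line with the constraint 0 < λ < 1.0.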

Walking through rough country is illustrative. Index $t$ points to successive positions; $x_1$ is the start, $x_T$ is the ultimate goal, and $\mathbf{x} = [x_2, \ldots, x_t, \ldots, x_{T-1}]$ are (visible) turning points. Attention selects the next turning point, $x_t$, and the actor walks towards it, tracing out $\mathbf{y}$, until the goal is reached. Each point during a slow or moderate walk (though not a run, a flow) can be attended separately, as required by the assumption of discrete independence. Total error $E$ must be small, of the order of one pace, so minimizing $G$ requires adjusting $\lambda$ downwards, so that error is weighted heavily, and reducing the cost of turning. Failures to set a goal or to restrict error imply inattentive behavior.

Attentional Bandwidth and Capacity

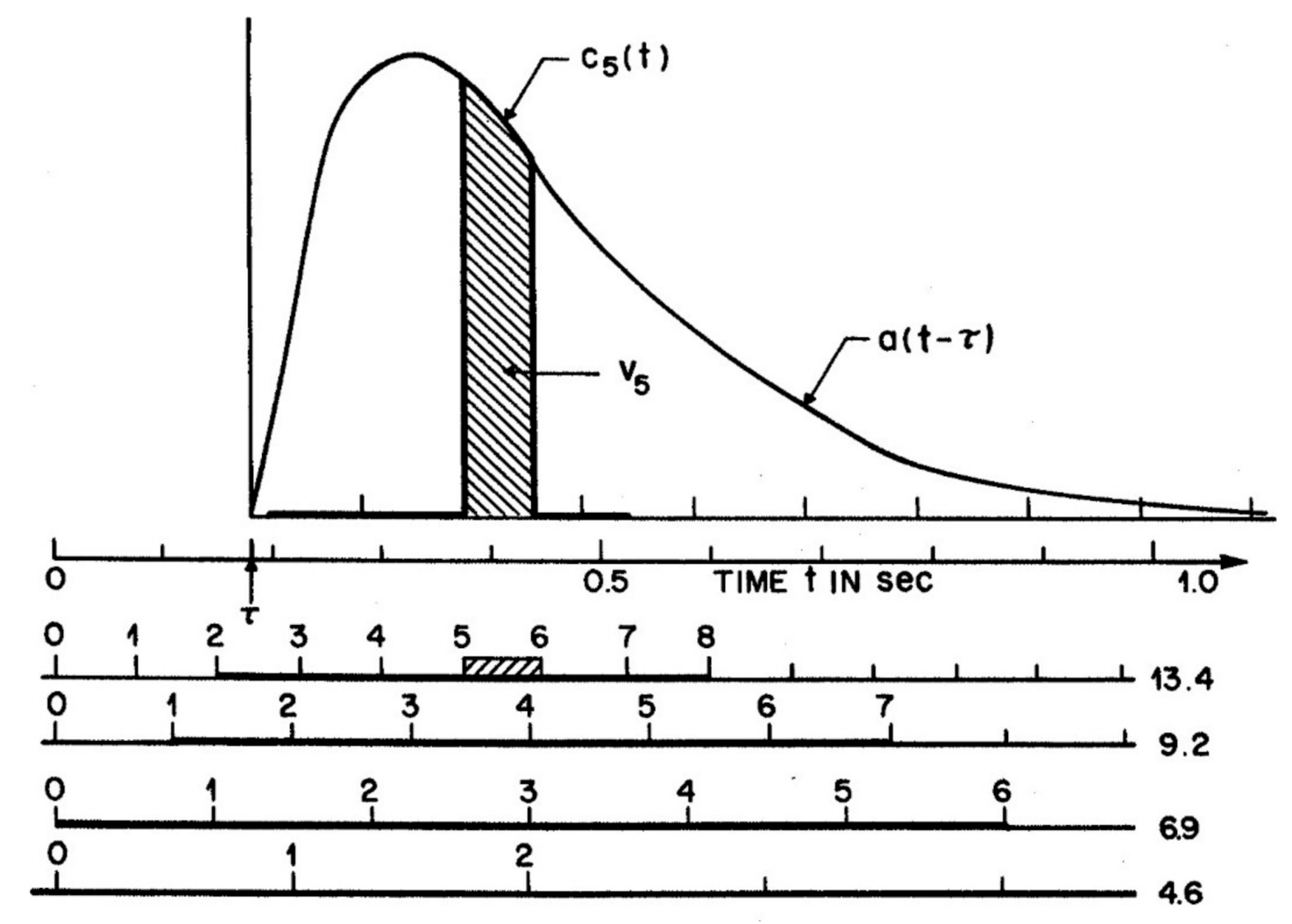

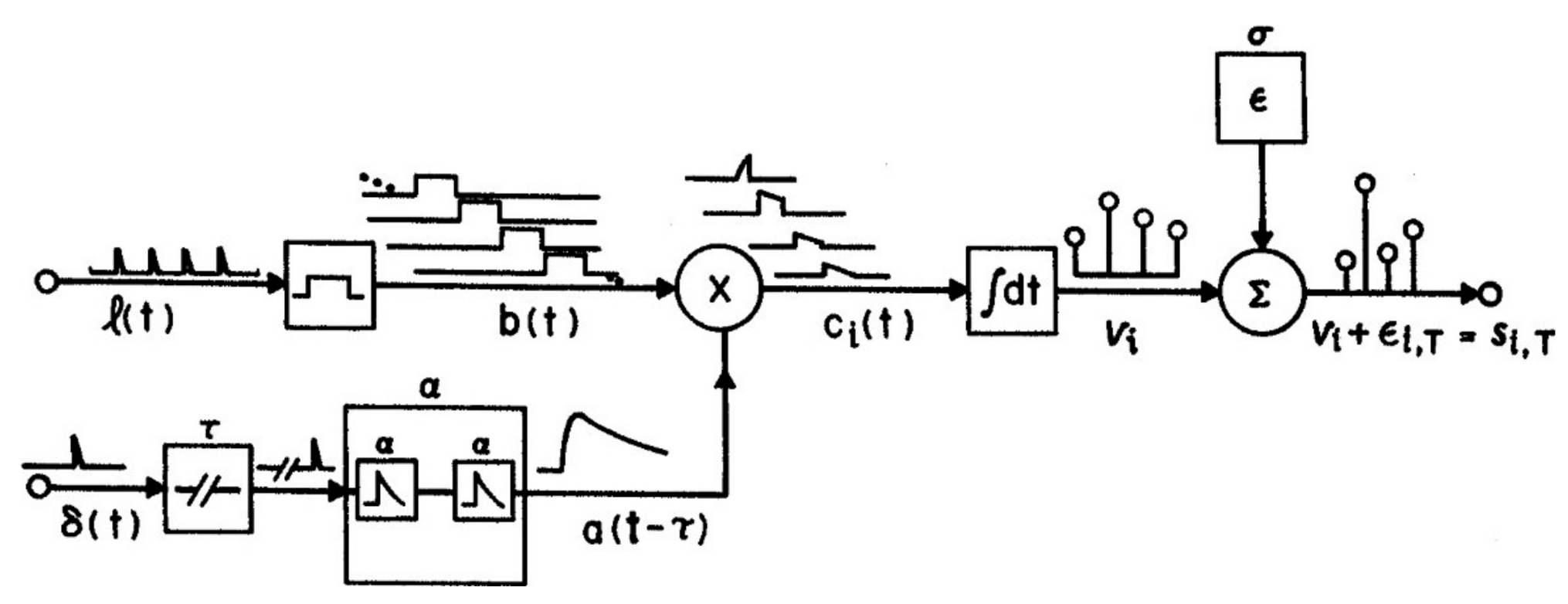

Equation (1) permits the definition of an “attentional bandwidth” $B$, the integral of the product of the weights with the stimuli, that is, $B = \sum_t w_t x_t$. Note that $B$ is implicitly a function of $A(t, s)$, the attention window taken over both time and space, since each weight $w_t$ on $x_t$ can be derived from the integral of $A(t, s)$ over the period $t$ to $t + 1$. (A similar logic applies to spatial positions $s_1, s_2, \ldots$, if the inputs are arranged in space.) Thus $\sum_t w_t x_t$ is computable for any particular combination of $\mathbf{x}$ and $A(t, s)$. Note that the output, $\mathbf{y}$, may overwhelm subsequent processes such as short-term memory (STM) if too many weights are non-zero. For example, if $B > 4$, STM may fail (Sperling [21]; Luck and Vogel [22]), or $B > 7$ for some slowed inputs (Alvarez and Cavanagh [23]). If retrieval is called for, the duration and spatial extent of the attention window, $A(t, s)$, must be curtailed to ensure that the attention bandwidth $B$ does not exceed STM capacity.
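A minimal Python sketch of the bandwidth computation follows. The names `bandwidth` and `curtail_window` are hypothetical, and the curtailing rule used here (dropping the latest episodes first, with non-negative weights) is only one simple assumption about how the window might be shortened; the text does not specify a rule.

```python
from typing import List, Sequence


def bandwidth(x: Sequence[float], w: Sequence[float]) -> float:
    """Attentional bandwidth B = sum_t w_t * x_t."""
    if len(x) != len(w):
        raise ValueError("x and w must have the same length")
    return sum(wt * xt for wt, xt in zip(w, x))


def curtail_window(x: Sequence[float], w: Sequence[float],
                   capacity: float = 4.0) -> List[float]:
    """Zero the latest weights until B no longer exceeds the STM capacity.

    Assumes non-negative weights; the default capacity of 4 follows the
    B > 4 example in the text.
    """
    w_new = list(w)
    t = len(w_new) - 1
    while t >= 0 and bandwidth(x, w_new) > capacity:
        w_new[t] = 0.0  # shorten the attention window from the end
        t -= 1
    return w_new


x = [1.0] * 6          # six unit-strength items
w = [1.0] * 6          # all fully attended
print(bandwidth(x, w))       # exceeds a capacity of 4
print(curtail_window(x, w))  # window shortened to fit
```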

3. A Descriptive Model

Applications of Equation (1) require matching the model to consequences, which, for behavior, refer to costs, speed, and accuracy. In general, neural calculations take time, so the model term $\lambda$ defines a speed–accuracy trade-off (SATO): the higher $\lambda$, the faster but the less accurate; the lower $\lambda$, the converse (Reed [19]). When the SATO is under the actor’s control, he or she can choose whether time or error will dominate. A task for which goals must be met precisely, implying a small $\lambda$, is one that will take a longer time or more energy. If $G$ is to be minimized subject to a tolerable error, then $\lambda$ must be adjusted downwards. Learning implies re-weighting $\mathbf{w}$ over trials so as to reduce $G$. Once a task is learnt, $\mathbf{w}$ will be nearly stationary. Performance should now prioritize speed, so $G$ will be minimized if $\lambda$ is adjusted upwards. Learning a difficult perceptual task requires emphasizing accuracy at the cost of speed early on, and later on, when accuracy is near asymptote, emphasizing speed. Violating this principle by forcing speed too early or too great a precision later on impedes learning.

Suppose that the conditions of the prescriptive model are met ($\mathbf{x}$, $\mathbf{w}$ and $\mathbf{y}'$ are defined). To be useful, a descriptive model then also requires specifying the “linking hypotheses”, which here are the sources of attentional error relatable to observable behaviors (speed, accuracy, energy or load, and confidence). Error can arise in encoding, weighting, and decision. Encoding errors can arise because of poor sensory information or because attention is sloppy, mixing one event with a subsequent event into one attentional window. Errors in weighting include over-weighting irrelevant but salient information (“distraction”) and underweighting and therefore missing relevant information, that is, underestimating the fixed cost ($F_c$) in choosing appropriate weights. Decision errors include poor choices of weighting ($\lambda$) and of goals ($\mathbf{y}'$).

If episodes are spaced far enough apart in time and space that each episode can be separately attended, even though attention is sloppy, then errors at input can only arise in encoding and weighting. Since the selected input is $\mathbf{x}\mathbf{w}$, one may model errors by $(\mathbf{x} + \mathbf{e})\,p(\mathbf{w})$, where $\mathbf{e}$ is a vector of encoding errors and $p(\mathbf{w})$ assigns a random increase or decrease to each element of $\mathbf{w}$. In this case,

$$M = \{A, \mathbf{x} + \mathbf{e}, p(\mathbf{w}), \mathbf{y}, \mathbf{y}', \mathbf{c}, \lambda\} \tag{2}$$

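This model can be developed by defining $\mathbf{e}$ and $p(\mathbf{w})$. For example, $\mathbf{e}$ is a set of normally distributed errors $\mathcal{N}$ with mean 0 and standard deviation $\sigma$, that is, $\mathbf{e} = \mathcal{N}(0, \sigma)$. To ensure that weights remain bounded, let $0 \le f(z) \le 1$ be a monotonically increasing function, e.g., $f(z) = \tan^{-1}(z)/(\pi/2)$ for $z \ge 0$. Then, $p(\mathbf{w}) = f(\mathbf{g} + f^{-1}(\mathbf{w}))$, where $\mathbf{g} = \mathcal{N}(0, \sigma_f)$ is a normal random variable, and the model becomes

$$M = \{A, \mathbf{x} + \mathcal{N}(0, \sigma), f(\mathbf{g} + f^{-1}(\mathbf{w})), \mathbf{y}, \mathbf{y}', \mathbf{c}, \lambda\} \tag{3}$$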

If w is discrete, then p(w) simply switches 0 for 1, or the reverse, with probability P.
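The encoding and weighting errors described above can be simulated with a short Python sketch. Everything here is illustrative: the function names are hypothetical, and the bounding function is assumed to be the arctangent scaled by π/2, which is one monotonic choice that keeps perturbed weights bounded. With this choice a perturbed weight can fall below zero, which is read here as entering the suppression range.

```python
import math
import random
from typing import List, Sequence


def f(z: float) -> float:
    """Assumed bounding function: arctan scaled to [-1, 1] (within [0, 1) for z >= 0)."""
    return math.atan(z) / (math.pi / 2)


def f_inv(u: float) -> float:
    """Inverse of f, mapping a weight back to the unbounded scale."""
    return math.tan(u * math.pi / 2)


def perturb_weight(w: float, sigma_f: float, rng: random.Random) -> float:
    """Graded case: p(w) = f(g + f_inv(w)) with g drawn from N(0, sigma_f)."""
    g = rng.gauss(0.0, sigma_f)
    return f(g + f_inv(w))


def flip_weight(w: int, p_flip: float, rng: random.Random) -> int:
    """Discrete case: switch 0 for 1, or the reverse, with probability p_flip."""
    return 1 - w if rng.random() < p_flip else w


def noisy_selected_input(x: Sequence[float], w: Sequence[float], sigma: float,
                         sigma_f: float, rng: random.Random) -> List[float]:
    """Selected input (x + e) * p(w), with encoding errors e drawn from N(0, sigma)."""
    return [(xt + rng.gauss(0.0, sigma)) * perturb_weight(wt, sigma_f, rng)
            for xt, wt in zip(x, w)]


rng = random.Random(1)  # fixed seed so that a run is repeatable
print(noisy_selected_input([1.0, 1.0, 1.0], [0.9, 0.5, 0.1], 0.1, 0.2, rng))
```

Setting both standard deviations to zero recovers the noiseless selected input, which gives a simple sanity check on any fitted version of the model.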

With goals and costs fixed, the question arises whether the errors, weights, and decision rules are stationary, i.e., stable over episodes. If so, parameters (e, p(w), λ) can be estimated by averaging over the length of one entire run, and if not, only by combining trials from short sequences taken from many runs, each sequence requiring its own parameter set. Stationarity can be tested by checking data for order effects and for drift over time.
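An informal check for drift can be sketched in Python as follows. This is only a descriptive screen, not a formal test of stationarity, and the function names and the example error scores are hypothetical.

```python
from typing import Sequence


def drift_slope(scores: Sequence[float]) -> float:
    """Least-squares slope of per-episode scores against episode index."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two episodes")
    mean_t = (n - 1) / 2
    mean_s = sum(scores) / n
    num = sum((t - mean_t) * (s - mean_s) for t, s in enumerate(scores))
    den = sum((t - mean_t) ** 2 for t in range(n))
    return num / den


def split_half_difference(scores: Sequence[float]) -> float:
    """Mean of the second half of a run minus the mean of the first half."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two episodes")
    half = n // 2
    first, second = scores[:half], scores[n - half:]
    return sum(second) / half - sum(first) / half


errors = [0.0, 0.0, 1.0, 1.0]  # hypothetical per-episode error scores
print(drift_slope(errors), split_half_difference(errors))
```

A slope or split-half difference near zero is compatible with estimating one parameter set over the whole run; a clear trend suggests fitting shorter sequences separately, as described above.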

6. Visual Search

A model such as M should apply to standard methods for assessing selective attention. Although visual search was originally used to define basic features (Treisman and Gelade [4]), it has become a tool used to study attention itself (Wolfe [17]; Bravo and Nakayama [30]; Palmer [6]; and many others). In modeling search with $M = \{A(t_x, \sigma_a), \mathbf{x} + \mathbf{e}, p(\mathbf{w}), \mathbf{y}, \mathbf{y}', \mathbf{c}, \lambda\}$, given $p(\mathbf{w}) = f(\mathbf{w}, \sigma_f)$, the input again consists of an array of items $\mathbf{x} = \{0, 0, \ldots, 1\}$, where 0 represents a distractor and 1 represents a target. The target is either known in advance (“feature search”) or known to be unlike the distractors (“oddity search”). The output $\mathbf{y} = \{[\,], [\,], \ldots, 1\}$ represents successful search on a target-present trial. Here, $[\,]$ denotes a null output for a distractor, so in this case, $\mathbf{y} = \mathbf{y}'$. An output such as $\mathbf{y} = \{[\,], 1, \ldots, [\,]\}$ represents a false alarm to a distractor, $\mathbf{y}$ differing from $\mathbf{y}'$. The cost refers to the shifts of eye position and attention required to find the target. As cost increases with eccentricity (Geisler and Chou [31]), $\mathbf{c}$ also depends on spatial position.

The choice of criterion $\lambda$ has defined almost distinct search literatures. In experiments following Treisman and Gelade [4], subjects are asked to avoid errors and nearly always find the target, at the expense of time, implying that $\lambda$ is held low. In other search experiments, variations in error rate are studied, but time is uncontrolled, implying $\lambda$ is high (e.g., Eckstein [32]; Eckstein et al. [33]). A measure of performance that takes both speed and accuracy into account may be useful. Santhi and Reeves [34] tested a parallel search model in which stimulus signal/noise ratio, which depended on target contrast and distractor noise as well as internal noise, predicted the ratio $(d')^2/T$, a measure of performance derived by Swensson and Thomas [35] assuming that information accumulates over a period, $T$. Performance expressed by this ratio, but not by RT or $d'$ separately, was consistent with the noise variance increasing linearly with the number, $m$, of distractors. We assumed that the signal $S = Tc$, where $c$ is the target contrast and $T = RT - RT_0$ is the “observation interval”, the reaction time RT to find the target minus the motor component, $RT_0$. Given that the range of eccentricities was strictly limited, we could assume constant noise per distractor, $\sigma_E^2$. The total noise is then $N = \sqrt{Tm\sigma_E^2 + T\sigma_I^2}$, which combines the internal noise ($\sigma_I^2$) and the total external noise from the $m$ distractors ($m\sigma_E^2$) during the observation interval. Thus $d' = S/N = Tc/\sqrt{Tm\sigma_E^2 + T\sigma_I^2}$. Squaring and dividing by $T$,

$$\frac{(d')^2}{T} = \frac{c^2}{m\sigma_E^2 + \sigma_I^2} \tag{5}$$

The left-hand side of Equation (5) combines latency and accuracy by expressing performance as information conveyed per unit time, and the right-hand side is the signal/noise ratio determined by the conditions of stimulation. Thus, Equation (5) predicts performance given the stimuli.
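The step from $d' = S/N$ to the performance ratio of Equation (5) can be checked numerically with a small Python sketch. The function names and the parameter values are hypothetical and serve only to confirm the algebra, not to reproduce any data.

```python
import math


def d_prime(T: float, c: float, m: int, var_ext: float, var_int: float) -> float:
    """d' = S / N with S = T*c and N = sqrt(T*m*var_ext + T*var_int)."""
    return T * c / math.sqrt(T * m * var_ext + T * var_int)


def performance_ratio(c: float, m: int, var_ext: float, var_int: float) -> float:
    """Predicted (d')^2 / T = c^2 / (m*var_ext + var_int)."""
    return c ** 2 / (m * var_ext + var_int)


# Hypothetical values: contrast 1, three distractors, unit noise variances.
c, m, var_ext, var_int = 1.0, 3, 1.0, 1.0
for T in (2.0, 4.0):
    d = d_prime(T, c, m, var_ext, var_int)
    print(T, d ** 2 / T, performance_ratio(c, m, var_ext, var_int))
```

Because the ratio does not depend on $T$, $(d')^2$ grows in proportion to the observation interval when the stimuli are held constant, while adding distractors lowers the ratio through the term $m\sigma_E^2$.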

Small changes in accuracy imply large changes in $d'$ when accuracy is high, and, in Santhi and Reeves [34], such changes in $d'$ were of greater weight when $m$ was varied from 1 to 40 than were the changes in RT often used to characterize visual search (e.g., by Wolfe [17]). Conditions classified as “parallel search” using Wolfe’s criterion that RT increases less than 10 ms per distractor may count as serial or hybrid using Equation (5). Note that for constant stimulation, Equation (5) predicts a linear increase in $(d')^2$ as $T$ increases. McElree and Carrasco [20] reported instead that in visual search, $d'$ increases with $T$ at a diminishing rate, with an asymptote depending on display size. However, a linear increase is seen in their data when replotted as $(d')^2$ against $T$, up to the asymptotic level.

An equation for visual search similar to Equation (5) is due to Geisler, Perry and Najemnik [36]. Their equation B1, which expresses search accuracy versus target eccentricity, is as follows:

where $c$ is the target contrast, $E_n$ is the visual noise from the distractors, and $\alpha$ and $\beta$ depend on eccentricity, $k$. The second term in the denominator expresses how the cost increases with eccentricity. Equation (6) is like Equation (5) in predicting $(d')^2$ from target energy (i.e., squared contrast) and noise, although it lacks a term for $T$, the observation period, which they did not record.

9. The Attentional Repulsion Effect

One advantage of a model for selective attention is that it can be applied to other situations in which attention is claimed to act. The Attentional Repulsion Effect occurs when a briefly flashed peripheral cue repulses the perceived position of a subsequently presented foveal probe (Suzuki and Cavanagh [41]). Baumeler et al. [42] review recent studies of the effect and also show that it is not caused by micro-saccades, but represents capture of attention by the cue. Here, the input is [cue, probe] or $\mathbf{x} = [1, 1]$ and the goal is $\mathbf{y}' = [0, 1]$. Strict selection would obviate the cue and leave the probe unaltered, i.e., $\mathbf{y} = [0, 1]$. However, the data indicate that the cue is attenuated, say by $\beta < 1$, such that $\mathbf{w} = [\beta, 1]$. To describe this effect, the model $M$ must include a spatial interaction, for example:

where $t_x$ denotes the temporal sequence of episodes, i.e., cue followed by probe, and $\sigma_a$ denotes the spatial smoothing by the attention window. The cue weight $\beta$ cannot be constant but must vary enough, as determined by $\sigma_f$, to approach zero on the 30% of trials in which repulsion is not observed (Baumeler et al. [42]). For spatial smoothing to alter the perceived position of the probe, the probe–cue distance must be less than $2\sigma_a$. In the published data, the greatest repulsion occurs at 200 ms and 3.5 deg. of separation (Suzuki and Cavanagh [41]). For the model to be predictive, full temporal and spatial tuning curves would be needed to specify $A(t_x, \sigma_a)$. Note that $\lambda$ is likely low, so that the cost function, $\mathbf{c}$, ensures that the error $E$ in spatial position is small, without penalizing tardiness or “variability” in latency (Baumeler et al. [42]). Note that the selection model does not explain why spatial repulsion rather than attraction happened; for this, additional assumptions must be made.

11. Attention as Improved Performance

The preceding discussion of attention as selection conforms to the standard view that paying attention increases performance, for example, by making it more likely that a target is detected and possibly less likely that a distractor or non-target is detected. Indeed, intuition suggests that paying attention should enhance performance; thus, it has been taken as a defining characteristic that items selected by attention will be processed better, and rejected items worse, than neutral items (Posner, Nissen and Ogden [38]; for corresponding neural data, see Kanwisher and Wojciulik [47]; Petersen and Posner [48]). Eriksen and Yeh [49] reported that cued letters are reported more accurately than uncued letters in a canonical experiment in which letters are presented in a ring around fixation to equalize acuity, as if cuing aids spatial attention. Bahcall and Kowler [50] found that letters neighboring a cued location are reported less accurately than other un-cued letters. In an object-cueing procedure, attended objects are typically processed more precisely than un-attended ones (Egly, Driver, and Rafal [51]), even when the locations of attended and unattended objects are identical (Blaser, Pylyshyn, and Holcombe [52]). In the probe-signal method, a “signal” tone presented at an expected frequency is detected better (in $d'$ units) than a “probe” tone presented at an unexpected frequency (Greenberg and Larkin [53]; Scharf, Reeves, and Suciu [54]), and, in a visual application, line segments briefly flashed at an expected orientation (“signals”) are detected more accurately than segments (“probes”) at an unexpected orientation (Kurylo, Reeves, and Scharf [55]). Attention to an object also reduces change blindness produced by inter-woven flashes (Rensink et al. [56]).

Thus signal detection-inspired models have supposed that attention filters out noise, decreases uncertainty about the signal (Lu and Dosher [57,58]), suppresses irrelevant channels while leaving the signal unchanged (Scharf, Chays, and Magnan [59]), or directly enhances the signal (Carrasco, Williams, and Yeshurun [60]). In each case, attention is predicted to improve sensitivity ($d'$) or accuracy measured with a criterion-free procedure (Eckstein et al. [33]), implying that increased accuracy with attention is due to better processing, not variations in response criteria.

A focus on signal detection, rather than on broader organismic factors, is surely justified when subjects are appropriately motivated, that is, not distracted, indifferent, lethargic, unclear about the task, overly aroused, or abnormal. Since attention to the wrong location or the wrong spatial frequency band impairs performance even when the subject is motivated (Yeshurun and Carrasco [61]), the generalization that attention improves performance also presupposes validity, that is, attention is paid to the signal rather than to irrelevant or competing locations or features, and the task instructions and cuing procedures are understood and are not misleading.

However, given appropriate motivation and valid instructions and cues, is the generalization that attention always aids performance right? When the signal is known in advance, its form unvarying, and its spatial location is validly cued, and the method is criterion free, one has an ideal situation for determining whether instructions to focus attention necessarily improve processing. Fine and Reeves ([62]; see Reeves [63]) found conditions in a visual masking experiment in which focusing attention on two of four possible stimulus locations actually increased latency and reduced sensitivity ($d'$) to offset letters, compared to broadly attending to all four locations. The signal was known in advance, unvarying, and validly cued; the method was criterion free; and focusing on optotypes (forward and reversed E’s) in the same conditions did improve performance. I modeled this by assuming that (in the case of letters) focusing attention increased visual noise from the mask to a greater extent than it increased the signal, lowering the signal/noise ratio (Reeves [63]). This result, along with that of Yeshurun and Carrasco [61], implies that although increasing attention normally improves performance, it need not do so, and, therefore, attention should be operationalized by the instruction or by the task, rather than by the outcome, as is (correctly) implied by Equation (1).

12. Generalization to Other Meanings of Attention

Finally, I ask whether a prescriptive model or framework like $M = (A(t_x, s_a), \mathbf{x}, \mathbf{w}, \mathbf{y}, \mathbf{y}', \mathbf{c}, \lambda)$ provides any insight into the other usages of the term “attention”. I suggest that about half of the common usages can be related to the selective model, but the remaining meanings must be treated as distinct. A basic distinction concerning mental processes is that between state and process. Here, the state is “being attentive” and the process is “selecting relevant and discarding irrelevant information”. Some terms in the psychology of attention, like the personality variable of being easily distracted, are best thought of as a state; others, such as visual search, are best characterized as a process; and yet others are mixed.

Table 1 lists various uses of attention with their methods of measurement, adapted from Fine and Reeves [62] and embellished with scores such that +1, −1 and 0 mean that each of the six listed properties applies, does not apply, or is indifferent. Properties obj, state, input, output, WM, and select indicate whether the measurement is objective (+1 means yes), whether a state (+1) or process (−1) is implied, whether some input is required (+1), whether an output needs specification (+1), whether short-term or working memory is implicated (+1), and whether the concept of attention can be taken as “selective” (+1) or not (−1). These properties are scored in columns. The rows of the table refer to the type of attention and its typical method of measurement. Attention can be operationalized as a direct effect of an instruction (e.g., “pay attention”; “concentrate”; “split your attention 80:20” [40]), or as an inference from a trade-off (a loss on task A when task B is concurrent [39]), or as higher sensitivity to an attended or cued stimulus [6,56], or as feature integration [4], or from search rate, or as selective reinforcement [9], or from cell responses (a sharpened receptive field or a speeding up [48]), or from individual differences (e.g., attention deficit disorder). One way to organize these disparate notions is with the three-fold division of Posner (updated in 2012 by Petersen and Posner [48]) into “orienting” or selection, “activation” or effort, and “executive control”, with their associated pathways in the brain.

Here, we consider “selection” to be relevant to feature search, the probe-signal procedure, the balance of top-down versus bottom-up factors ($\mathbf{x}$ versus $\mathbf{w}$), the use of cues, capacity, the processing mode (serial, parallel), and the topics of vigilance and working memory. Only in search, cuing, and probe-signal is selection a defining feature; in the other cases, selection is mostly used as a probe. In the cases marked “0”, such as awareness, selection may occur or may not, as in some forms of meditation. There are other examples in which attention is not selective (−1 in the Table), but refers to a state (e.g., global processing and ADHD as personality variables). Cathexis is also scored as −1 as it involves a drive to associate unconscious ideas, which is out of the subject’s control and so does not meet the definition in Equation (1), even though masked priming experiments demonstrate unconscious selectivity (Marcel [64]). Overall, selection seems relevant to about half of the examples, although with such a plethora of different meanings, it is not easy to be sure.

In each case, if attentional selection changes behavior relative to an appropriate control, one may further ask whether this is due to changes in sensitivity or in the criterion. If due to a change in sensitivity, is this from enhancing targets, from increasing overall activation, from selecting a sub-set of items, or from suppressing distractors (Lu and Dosher [57])? If due to a change in criterion, is this related to perceived pay-offs, and is the change optimal? Finally, does any change due to attention reflect the outcome of a parallel, a serial, or a hybrid mechanism? These questions are complicated but not infinite; each can be answered in turn.