Digitization and Visualization of Folk Dances in Cultural Heritage: A Review

Abstract

1. Introduction

- Promoting cultural diversity;

- making local communities and Indigenous people aware of the richness of their intangible heritage; and

- strengthening cooperation and intercultural dialogue between people, different cultures, and countries.

2. Dance Digitization and Archival

- Preparation—decision about technique and methodology to be adopted, as well as the place of digitization;

- digital recording—main digitization process; and

- data processing and archival—post-processing, modeling, and archival of the digitized dances.

2.1. Dance Digitization Systems

2.1.1. Optical Marker-Based Systems

Active Markers

Passive Markers

2.1.2. Marker-Less Motion Capture Systems

Depth Sensors

2D and 3D Pose Estimation Based on a Single RGB Camera

Multiview RGB-D Systems

2.1.3. Non-Optical Marker-Based Systems

- Acoustic systems;

- mechanical systems;

- magnetic systems; and

- inertial systems.

2.1.4. Comparison of Motion Capture Technologies

- Cost;

- required accuracy;

- requirements for interactivity/real-time performance;

- need for easy calibration/self-calibration;

- number of joints to be tracked;

- weight/size of markers;

- level of restriction to (dancer) movements; and

- environmental constraints (e.g., existence of metallic objects or other noise sources affecting specific techniques).

2.2. Post-Processing

- Direct acquisition; and

- indirect acquisition.

2.3. Archiving and Data Retrieval

3. Visualization

3.1. Types of Visualization and Feedback

3.2. Movement Recognition

4. Performance Evaluation

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- UNESCO. What Is Intangible Cultural Heritage. Available online: https://ich.unesco.org/en/what-is-intangible-heritage-00003 (accessed on 5 June 2018).

- Protopapadakis, E.; Grammatikopoulou, A.; Doulamis, A.; Grammalidis, N. Folk Dance Pattern Recognition over Depth Images Acquired via Kinect Sensor. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, XLII-2/W3, 587–593.

- Hachimura, K.; Kato, H.; Tamura, H. A Prototype Dance Training Support System with Motion Capture and Mixed Reality Technologies. In Proceedings of the 2004 IEEE International Workshop on Robot and Human Interactive Communication Kurashiki, Okayama, Japan, 20–22 September 2004; pp. 217–222.

- Magnenat Thalmann, N.; Protopsaltou, D.; Kavakli, E. Learning How to Dance Using a Web 3D Platform. In Proceedings of the 6th International Conference Edinburgh, Revised Papers, UK, 15–17 August 2007; Leung, H., Li, F., Lau, R., Li, Q., Eds.; Springer: Berlin/Heidelberg, Germany, 2008; pp. 1–12.

- Doulamis, A.; Voulodimos, A.; Doulamis, N.; Soile, S.; Lampropoulos, A. Transforming Intangible Folkloric Performing Arts into Tangible Choreographic Digital Objects: The Terpsichore Approach. In Proceedings of the 12th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2017), Porto, Portugal, 27 February–1 March 2017; Volume 5, pp. 451–460.

- Transforming Intangible Folkloric Performing Arts into Tangible Choreographic Digital Objects. Available online: http://terpsichore-project.eu/ (accessed on 25 June 2018).

- Grammalidis, N.; Dimitropoulos, K.; Tsalakanidou, F.; Kitsikidis, A.; Roussel, P.; Denby, B.; Chawah, P.; Buchman, L.; Dupont, S.; Laraba, S.; et al. The i-Treasures Intangible Cultural Heritage dataset. In Proceedings of the 3rd International Symposium on Movement and Computing (MOCO’16), Thessaloniki, Greece, 5–6 July 2016; ISBN 978-1-4503-4307-7.

- Dimitropoulos, K.; Manitsaris, S.; Tsalakanidou, F.; Denby, B.; Crevier-Buchman, L.; Dupont, S.; Nikolopoulos, S.; Kompatsiaris, Y.; Charisis, V.; Hadjileontiadis, L.; et al. A Multimodal Approach for the Safeguarding and Transmission of Intangible Cultural Heritage: The Case of i-Treasures. IEEE Intell. Syst. 2018.

- Kitsikidis, A.; Dimitropoulos, K.; Ugurca, D.; Baycay, C.; Yilmaz, E.; Tsalakanidou, F.; Douka, S.; Grammalidis, N. A Game-like Application for Dance Learning Using a Natural Human Computer Interface. In Part of HCI International, Proceedings of the 9th International Conference (UAHCI 2015), Los Angeles, CA, USA, 2–7 August 2015; Antona, M., Stephanidis, C., Eds.; Springer International Publishing: Basel, Switzerland, 2015; pp. 472–482.

- Nogueira, P. Motion Capture Fundamentals—A Critical and Comparative Analysis on Real World Applications. In Proceedings of the 7th Doctoral Symposium in Informatics Engineering, Porto, Portugal, 26–27 January 2012; Oliveira, E., David, G., Sousa, A.A., Eds.; Faculdade de Engenharia da Universidade do Porto: Porto, Portugal, 2012; pp. 303–331.

- Tsampounaris, G.; El Raheb, K.; Katifori, V.; Ioannidis, Y. Exploring Visualizations in Real-time Motion Capture for Dance Education. In Proceedings of the 20th Pan-Hellenic Conference on Informatics (PCI’16), Patras, Greece, 10–12 November 2016; ACM: New York, NY, USA, 2016.

- Hachimura, K. Digital Archiving on Dancing. Rev. Natl. Cent. Digit. 2006, 8, 51–60.

- Hong, Y. The Pros and Cons about the Digital Recording of Intangible Cultural Heritage and Some Strategies. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2015, XL-5/W7, 461–464.

- Giannoulakis, S.; Tsapatsoulis, N.; Grammalidis, N. Metadata for Intangible Cultural Heritage—The Case of Folk Dances. In Proceedings of the 13th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications, Funchal, Madeira, 27–29 January 2018; pp. 534–545.

- Pavlidis, G.; Koutsoudis, A.; Arnaoutoglou, F.; Tsioukas, V.; Chamzas, C. Methods for 3D digitization of Cultural Heritage. J. Cult. Herit. 2007, 8, 93–98.

- Sementille, A.C.; Lourenco, L.E.; Brega, J.R.F.; Rodello, I. A Motion Capture System Using Passive Markers. In Proceedings of the 2004 ACM SIGGRAPH International Conference on Virtual Reality Continuum and Its Applications in Industry (VRCAI’04), Singapore, 16–18 June 2004; ACM: New York, NY, USA, 2004; pp. 440–447.

- Stavrakis, E.; Aristidou, A.; Savva, M.; Loizidou Himona, S.; Chrysanthou, Y. Digitization of Cypriot Folk Dances. In Proceedings of the 4th International Conference (EuroMed 2012), Limassol, Cyprus, 29 October–3 November 2012; Ioannides, M., Fritsch, D., Leissner, J., Davies, R., Remondino, F., Caffo, R., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 404–413.

- Johnson, L.M. Redundancy Reduction in Motor Control. Ph.D. Thesis, The University of Texas at Austin, Austin, TX, USA, December 2015.

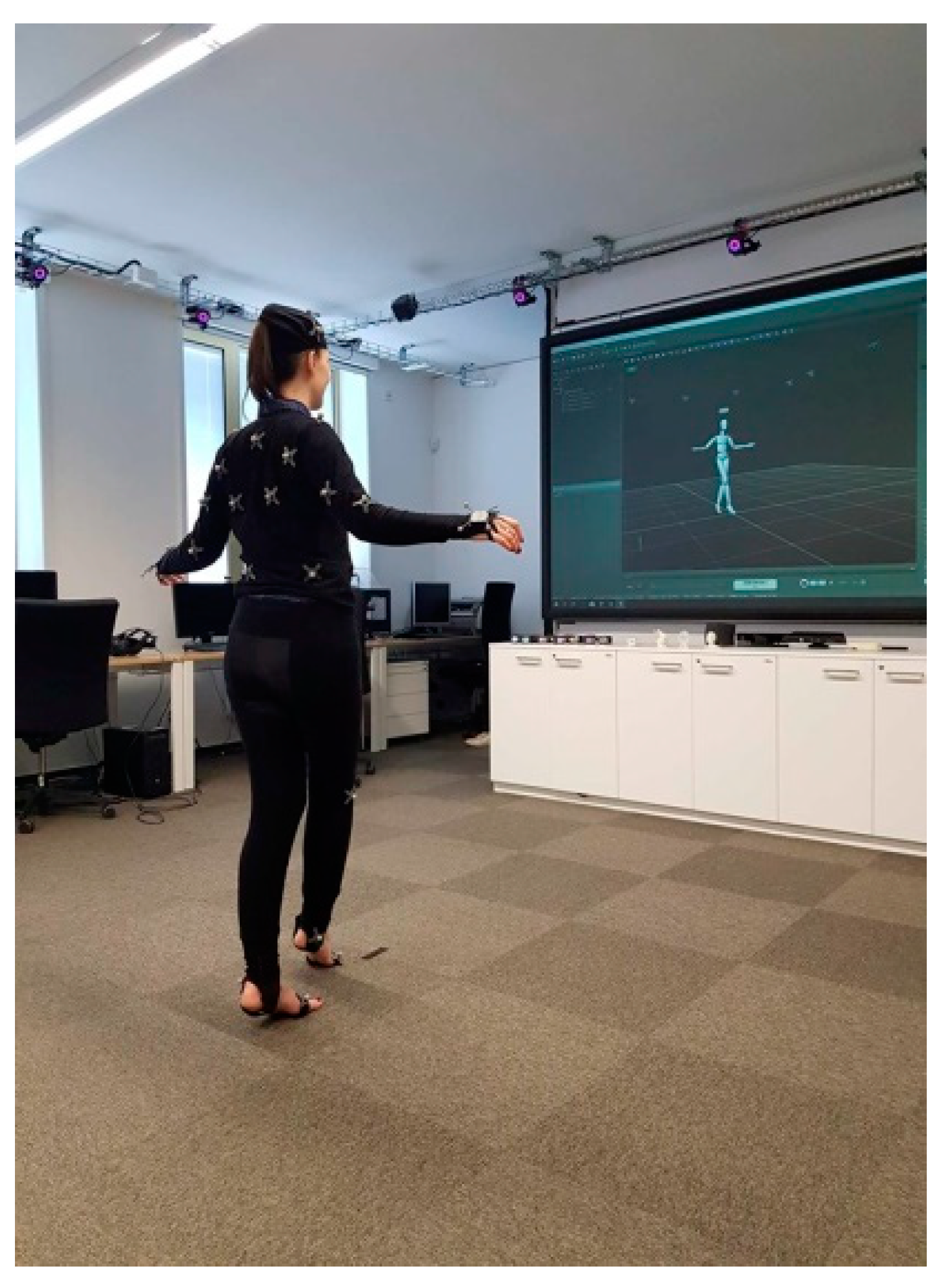

- Matus, H.; Kico, I.; Dolezal, M.; Chmelik, J.; Doulamis, A.; Liarokapis, F. Digitization and Visualization of Movements of Slovak Folk Dances. In Proceedings of the International Conference on Interactive Collaborative Learning (ICL), Kos Island, Greece, 25–28 September 2018.

- Mustaffa, N.; Idris, M.Z. Assessing Accuracy of Structural Performance on Basic Steps in Recording Malay Zapin Dance Movement Using Motion Capture. J. Appl. Environ. Biol. Sci. 2017, 7, 165–173.

- Hegarini, E.; Syakur, A. Indonesian Traditional Dance Motion Capture Documentation. In Proceedings of the 2nd International Conference on Science and Technology-Computer (ICST), Yogyakarta, Indonesia, 27–28 October 2016.

- Pons, J.P.; Keriven, R. Multi-View Stereo Reconstruction and Scene Flow Estimation with a Global Image-Based Matching Score. Int. J. Comput. Vis. 2007, 72, 179–193.

- Li, R.; Sclaroff, S. Multi-scale 3D Scene Flow from Binocular Stereo Sequences. Comput. Vis. Image Underst. 2008, 110, 75–90.

- Chun, C.W.; Jenkins, O.C.; Mataric, M.J. Markerless Kinematic Model and Motion Capture from Volume Sequences. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Madison, WI, USA, 18–20 June 2003.

- Sell, J.; O’Connor, P. The Xbox One System on a Chip and Kinect Sensor. IEEE Micro 2014, 34, 44–53.

- Izadi, S.; Kim, D.; Hilliges, O.; Molyneaux, D.; Newcombe, R.; Kohli, P.; Shotton, J.; Hodges, S.; Freeman, D.; Davison, A.; et al. KinectFusion: Real-time 3D Reconstruction and Interaction Using a Moving Depth Camera. In Proceedings of the 24th Annual ACM Symposium on User Interface Software and Technology (UIST’11), Santa Barbara, CA, USA, 16–19 October 2011; ACM: New York, NY, USA, 2011; pp. 559–568.

- Newcombe, R.A.; Fox, D.; Seitz, S.M. DynamicFusion: Reconstruction and tracking of non-rigid scenes in real-time. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015.

- Shotton, J.; Fitzgibbon, A.; Cook, M.; Sharp, T.; Finocchio, M.; Moore, R.; Blake, A. Real-time human pose recognition in parts from single depth images. In Proceedings of the Computer Vision and Pattern Recognition (CVPR) 2011, Colorado Springs, CO, USA, 20–25 June 2011.

- Kanawong, R.; Kanwaratron, A. Human Motion Matching for Assisting Standard Thai Folk Dance Learning. GSTF J. Comput. 2018, 5, 1–5.

- Laraba, S.; Tilmanne, J. Dance performance evaluation using hidden Markov models. Comput. Animat. Virtual Worlds 2016, 27, 321–329.

- Moeslund, T.B.; Hilton, A.; Kruger, V. A survey of advances in vision-based human motion capture and analysis. Comput. Vis. Image Underst. 2006, 104, 90–126.

- Andriluka, M.; Pishchulin, L.; Gehler, P.; Schiele, B. 2D human pose estimation: New benchmark and state of the art analysis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014.

- Cao, Z.; Simon, T.; Wei, S.E.; Sheikh, Y. Realtime multi-person 2D pose estimation using part affinity fields. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Wei, S.E.; Ramakrishna, V.; Kanade, T.; Sheikh, Y. Convolutional pose machines. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 4724–4732.

- Simon, T.; Joo, H.; Matthews, I.; Sheikh, Y. Hand keypoint detection in single images using multiview bootstrapping. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; Volume 2.

- Zhou, X.; Huang, Q.; Sun, X.; Xue, X.; Wei, Y. Towards 3D human pose estimation in the wild: A weakly-supervised approach. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017.

- Newell, A.; Yang, K.; Deng, J. Stacked hourglass networks for human pose estimation. In Proceedings of the 14th European Conference Computer Vision (ECCV) 2016, Amsterdam, The Netherlands, 11–14 October 2016; Liebe, B., Matas, J., Sebe, N., Welling, M., Eds.; Springer: Cham, Switzerland, 2016; pp. 483–499.

- Mehta, D.; Sridhar, S.; Sotnychenko, O.; Rhodin, H.; Shafiei, M.; Seidel, H.P.; Theobalt, C. VNect: Real-time 3D human pose estimation with a single RGB camera. ACM Trans. Gr. 2017, 36, 44.

- Mehta, D.; Rhodin, H.; Casas, D.; Fua, P.; Sotnychenko, O.; Xu, W.; Theobalt, C. Monocular 3D human pose estimation in the wild using improved CNN supervision. In Proceedings of the 2017 International Conference on 3D Vision (3DV), Qingdao, China, 10–12 October 2017; pp. 506–516.

- Güler, R.A.; Neverova, N.; Kokkinos, I. DensePose: Dense human pose estimation in the wild. In Proceedings of the CVPR, Salt Lake City, UT, USA, 18–22 June 2018.

- Güler, R.A.; Trigeorgis, G.; Antonakos, E.; Snape, P.; Zafeiriou, S.; Kokkinos, I. DenseReg: Fully convolutional dense shape regression in-the-wild. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- He, K.; Gkioxari, G.; Dollar, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017.

- Kanazawa, A.; Black, M.J.; Jacobs, D.W.; Malik, J. End-to-end recovery of human shape and pose. In Proceedings of the Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018.

- Loper, M.; Mahmood, N.; Romero, J.; Pons-Moll, G.; Black, M.J. SMPL: A skinned multi-person linear model. ACM Trans. Gr. 2015, 34, 248.

- Gong, W.; Zhang, X.; Gonzalez, J.; Sobral, A.; Bouwmans, T.; Tu, C.; Zahzah, E. Human Pose Estimation from Monocular Images: A Comprehensive Survey. Sensors 2016, 16, 1996.

- Ke, S.; Thuc, H.L.U.; Lee, Y.J.; Hwang, J.N.; Yoo, J.H.; Choi, K.H. A Review on Video-Based Human Activity Recognition. Computers 2013, 2, 88–131.

- Neverova, N. Deep Learning for Human Motion Analysis. Ph.D. Thesis, Universite de Lyon, Lyon, France, 2016.

- Alexiadis, D.S.; Chatzitofis, A.; Zioulis, N.; Zoidi, O.; Louizis, G.; Zarpalas, D.; Daras, P. An integrated platform for live 3D human reconstruction and motion capturing. IEEE Trans. Circuits Syst. Video Technol. 2017, 27, 798–813.

- Alexiadis, D.S.; Zarpalas, D.; Daras, P. Real-time, full 3-D reconstruction of moving foreground objects from multiple consumer depth cameras. IEEE Trans. Multimed. 2013, 15, 339–358.

- Alexiadis, D.S.; Zarpalas, D.; Daras, P. Real-time, realistic full body 3D reconstruction and texture mapping from multiple Kinects. In Proceedings of the IVMSP 2013, Seoul, Korea, 10–12 June 2013.

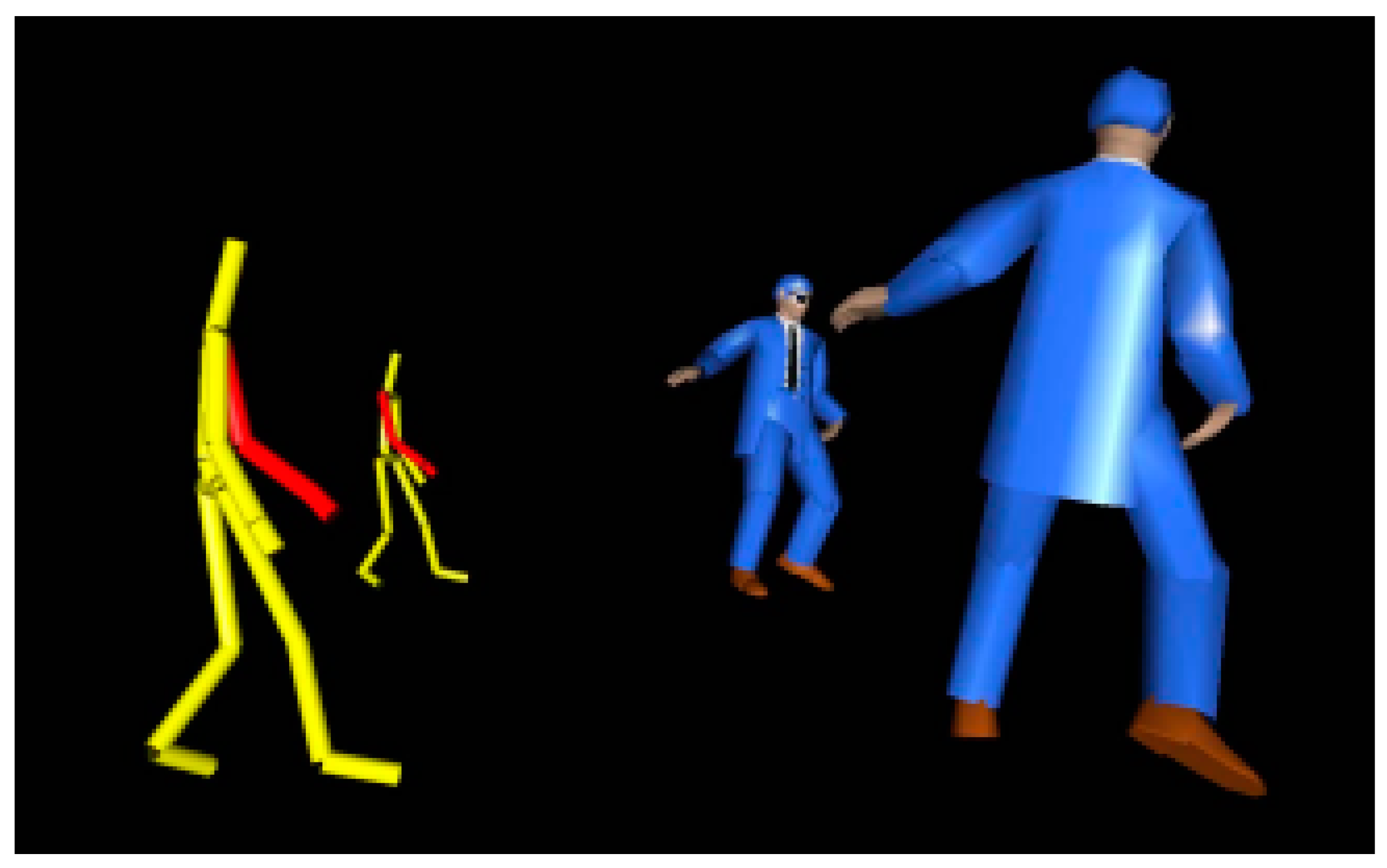

- Kitsikidis, A.; Dimitropoulos, K.; Yilmaz, E.; Douka, S.; Grammalidis, N. Multi-sensor technology and fuzzy logic for dancer’s motion analysis and performance evaluation within a 3D virtual environment. In Part of HCI International 2014, Proceedings of the 8th International Conference (UAHCI 2014), Heraklion, Crete, Greece, 22–27 June 2014; Stephanidis, C., Antona, M., Eds.; Springer International Publishing: Basel, Switzerland, 2014; pp. 379–390.

- Kahn, S.; Keil, J.; Muller, B.; Bockholt, U.; Fellner, D.W. Capturing of Contemporary Dance for Preservation and Presentation of Choreographies in Online Scores. In Proceedings of the 2013 Digital Heritage International Congress, Marseille, France, 28 October–1 November 2013.

- Robertini, N.; Casas, D.; Rhodin, H.; Seidel, H.P.; Theobalt, C. Model-based outdoor performance capture. In Proceedings of the 2016 Fourth International Conference on 3D Vision (3DV), Stanford, CA, USA, 25–28 October 2016.

- Meta Motion. Available online: http://metamotion.com/ (accessed on 10 September 2018).

- Vlasic, D.; Adelsberger, R.; Vannucci, G.; Barnwell, J.; Gross, M.; Matusik, W.; Popovic, J. Practical Motion Capture in Everyday Surroundings. ACM Trans. Gr. 2007, 26.

- Yabukami, S.; Yamaguchi, M.; Arai, K.I.; Takahashi, K.; Itagaki, A.; Wako, N. Motion Capture System of Magnetic Markers Using Three-Axial Magnetic Field Sensor. IEEE Trans. Magn. 2000, 36, 3646–3648.

- Sharma, A.; Agarwal, M.; Sharma, A.; Dhuria, P. Motion Capture Process, Techniques and Applications. Int. J. Recent Innov. Trends Comput. Commun. 2013, 1, 251–257.

- Bodenheimer, B.; Rose, C.; Rosenthal, S.; Pella, J. The Process of Motion Capture: Dealing with the Data. In Proceedings of the Eurographics Workshop, Budapest, Hungary, 2–3 September 1997; Thalmann, D., van de Panne, M., Eds.; Springer: Vienna, Austria, 1997; pp. 3–18.

- Gutemberg, B.G. Optical Motion Capture: Theory and Implementation. J. Theor. Appl. Inform. 2005, 12, 61–89.

- University of Cyprus. Dance Motion Capture Database. Available online: http://www.dancedb.eu/ (accessed on 28 June 2018).

- Carnegie Mellon University Graphics Lab: Motion Capture Database. Available online: http://mocap.cs.cmu.edu (accessed on 25 June 2018).

- Vogele, A.; Kruger, B. HDM12 Dance—Documentation on a Data Base of Tango Motion Capture; Technical Report, No. CG-2016-1; Universitat Bonn: Bonn, Germany, 2016; ISSN 1610-8892.

- Muller, M.; Roder, T.; Clausen, M.; Eberhardt, B.; Kruger, B.; Weber, A. Documentation Mocap Database HDM05; Computer Graphics Technical Reports, No. CG-2007-2; Universitat Bonn: Bonn, Germany, 2007; ISSN 1610-8892.

- ICS Action Database. Available online: http://www.miubiq.cs.titech.ac.jp/action/ (accessed on 25 June 2018).

- Demuth, B.; Roder, T.; Muller, M.; Eberhardt, B. An Information Retrieval System for Motion Capture Data. In Proceedings of the 28th European Conference on Advances in Information Retrieval (ECIR’06), London, UK, 10–12 April 2006; Springer: Berlin/Heidelberg, Germany, 2006; pp. 373–384.

- Feng, T.C.; Gunwardane, P.; Davis, J.; Jiang, B. Motion Capture Data Retrieval Using an Artist’s Doll. In Proceedings of the 2008 19th International Conference on Pattern Recognition, Tampa, FL, USA, 8–11 December 2008.

- Wu, S.; Wang, Z.; Xia, S. Indexing and Retrieval of Human Motion Data by a Hierarchical Tree. In Proceedings of the 16th ACM Symposium on Virtual Reality Software and Technology (VRST’09), Kyoto, Japan, 18–20 November 2009; ACM: New York, NY, USA, 2009.

- Muller, M.; Roder, T.; Clausen, M. Efficient Content-Based Retrieval of Motion Capture Data. ACM Trans. Gr. 2005, 24, 677–685.

- Muller, M.; Roder, T. Motion Templates for Automatic Classification and Retrieval of Motion Capture Data. In Proceedings of the 2006 ACM SIGGRAPH/Eurographics Symposium on Computer Animation, Vienna, Austria, 2–4 September 2006; pp. 137–146.

- Ren, C.; Lei, X.; Zhang, G. Motion Data Retrieval from Very Large Motion Databases. In Proceedings of the 2011 International Conference on Virtual Reality and Visualization, Beijing, China, 4–5 November 2011.

- Muller, M. Information Retrieval for Music and Motion, 1st ed.; Springer: Berlin/Heidelberg, Germany, 2007; ISBN 978-3-540-74048-3.

- Chan, C.P.J.; Leung, H.; Tang, K.T.J.; Komura, T. A Virtual Reality Dance Training System Using Motion Capture Technology. IEEE Trans. Learn. Technol. 2011, 4, 187–195.

- Bakogianni, S.; Kavakli, E.; Karkou, V.; Tsakogianni, M. Teaching Traditional Dance using E-learning tools: Experience from the WebDANCE project. In Proceedings of the 21st World Congress on Dance Research, Athens, Greece, 5–9 September 2007; International Dance Council CID-UNESCO: Paris, France, 2007.

- Aristidou, A.; Stavrakis, E.; Charalambous, P.; Chrysanthou, Y.; Loizidou Himona, S. Folk Dance Evaluation Using Laban Movement Analysis. ACM J. Comput. Cult. Herit. 2015, 8.

- Hamari, J.; Koivisto, J.; Sarsa, H. Does Gamification Work—A Literature Review of Empirical Studies on Gamification. In Proceedings of the 2014 47th Hawaii International Conference on System Science, Waikoloa, HI, USA, 6–9 January 2014.

- Alexiadis, D.; Daras, P.; Kelly, P.; O’Connor, N.E.; Boubekeur, T.; Moussa, M.B. Evaluating a Dancer’s Performance using Kinect-based Skeleton Tracking. In Proceedings of the 19th ACM International Conference on Multimedia (MM’11), Scottsdale, AZ, USA, 28 November–1 December 2011; ACM: New York, NY, USA, 2011; pp. 659–662.

- Kyan, M.; Sun, G.; Li, H.; Zhong, L.; Muneesawang, P.; Dong, N.; Elder, B.; Guan, L. An Approach to Ballet Dance Training through MS Kinect and Visualization in a CAVE Virtual Reality Environment. ACM Trans. Intell. Syst. Technol. 2015, 6.

- Drobny, D.; Borchers, J. Learning Basic Dance Choreographies with Different Augmented Feedback Modalities. In Proceedings of the Extended Abstracts on Human Factors in Computing Systems (CHI’10), Atlanta, GA, USA, 14–15 April 2010; ACM: New York, NY, USA, 2010; pp. 3793–3798.

- Aristidou, A.; Stavrakis, E.; Papaefthimiou, M.; Papagiannakis, G.; Chrysanthou, Y. Style-based Motion Analysis for Dance Composition. Int. J. Comput. Games 2018, 34, 1–13.

- Aristidou, A.; Zeng, Q.; Stavrakis, E.; Yin, K.; Cohen-Or, D.; Chrysanthou, Y.; Chen, B. Emotion Control of Unstructured Dance Movements. In Proceedings of the ACM SIGGRAPH/Eurographics Symposium on Computer Animation (SCA’17), Los Angeles, CA, USA, 28–30 July 2017; ACM: New York, NY, USA, 2017.

- Masurelle, A.; Essid, S.; Richard, G. Multimodal Classification of Dance Movements Using Body Joint Trajectories and Step Sounds. In Proceedings of the 2013 14th International Workshop on Image Analysis for Multimedia Interactive Services (WIAMIS), Paris, France, 3–5 July 2013.

- Rallis, I.; Doulamis, N.; Doulamis, A.; Voulodimos, A.; Vescoukis, V. Spatio-temporal summarization of dance choreographies. Comput. Gr. 2018, 73, 88–101.

- Min, J.; Liu, H.; Chai, J. Synthesis and Editing of Personalized Stylistic Human Motion. In Proceedings of the 2010 ACM SIGGRAPH Symposium on Interactive 3D Graphics and Games (I3D’10), Washington, DC, USA, 19–21 February 2010; ACM: New York, NY, USA, 2010.

- Cho, K.; Chen, X. Classifying and Visualizing Motion Capture Sequences using Deep Neural Networks. In Proceedings of the 9th International Conference on Computer Vision Theory and Applications (VISAPP 2014), Lisbon, Portugal, 5–8 January 2014.

- Protopapadakis, E.; Voulodimos, A.; Doulamis, A.; Camarinopoulos, S.; Doulamis, N.; Miaoulis, G. Dance Pose Identification from Motion Capture Data: A Comparison of Classifiers. Technologies 2018, 6, 31.

- Balazia, M.; Sojka, P. Walker-Independent Features for Gait Recognition from Motion Capture Data. In Structural, Syntactic, and Statistical Pattern Recognition; Robles-Kelly, A., Loog, M., Biggio, B., Escolano, F., Wilson, R., Eds.; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2016; Volume 10029.

- Gait Recognition from Motion Capture Data. Available online: https://gait.fi.muni.cz/ (accessed on 8 September 2018).

- Balazia, M.; Sojka, P. Gait Recognition from Motion Capture Data. ACM Trans. Multimed. Comput. Commun. Appl. 2018, 14.

- Balazia, M.; Sojka, P. Learning Robust Features for Gait Recognition by Maximum Margin Criterion. In Proceedings of the 23rd IEEE/IAPR International Conference on Pattern Recognition (ICPR 2016), Cancun, Mexico, 4–8 September 2016.

- Sedmidubsky, J.; Valcik, J.; Balazia, M.; Zezula, P. Gait Recognition Based on Normalized Walk Cycles. In Advances in Visual Computing; Bebis, G., Ed.; Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2012; Volume 7432.

- Black, J.; Ellis, T.; Rosin, P.L. A Novel Method for Video Tracking Performance Evaluation. In Proceedings of the IEEE International Workshop on Visual Surveillance and Performance Evaluation of Tracking and Surveillance (VS-PETS), Nice, France, 11–12 October 2003.

- Essid, S.; Alexiadis, D.; Tournemenne, R.; Gowing, M.; Kelly, P.; Monaghan, D.; Daras, P.; Dremeau, A.; O’Connor, E.N. An Advanced Virtual Dance Performance Evaluator. In Proceedings of the 2012 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Kyoto, Japan, 25–30 March 2012.

- Wei, Y.; Yan, H.; Bie, R.; Wang, S.; Sun, L. Performance monitoring and evaluation in dance teaching with mobile sensing technology. Pers. Ubiquitous Comput. 2014, 18, 1929–1939.

- Wang, Y.; Baciu, G. Human motion estimation from monocular image sequence based on cross-entropy regularization. Pattern Recognit. Lett. 2003, 24, 315–325.

- Tong, M.; Liu, Y.; Huang, T.S. 3D human model and joint parameter estimation from monocular image. Pattern Recognit. Lett. 2007, 28, 797–805.

- Luo, W.; Yamasaki, T.; Aizawa, K. Cooperative estimation of human motion and surfaces using multiview videos. Comput. Vis. Image Underst. 2013, 117, 1560–1574.

| System | Advantages | Disadvantages | Data Captured/Data Analysis/Real Time (or Not) | References |
|---|---|---|---|---|
| Optical marker-based systems | | | | [4,7,17,20,21] |
| Marker-less systems | | | | [2,7,9,29,30,51] |
| Acoustic systems | | | | [10,16] |
| Mechanical systems | | | | [10,16] |
| Magnetic systems | | | | [10,55,56] |
| Inertial systems | | | | [10,11,16,55] |

| Type of Visualization | Advantages | Disadvantages |
|---|---|---|
| Video | | |
| Virtual reality (VR) environment | | |
| Game-like application (3D game environment) | | |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kico, I.; Grammalidis, N.; Christidis, Y.; Liarokapis, F. Digitization and Visualization of Folk Dances in Cultural Heritage: A Review. Inventions 2018, 3, 72. https://doi.org/10.3390/inventions3040072
