1. Introduction

Seafood production plays a critical role in the manufacture and distribution of a wide variety of food products, and it has established itself as one of the most important global industries [1,2]. However, the productivity of this industry remains limited due to its continued reliance on manual labor and outdated processing equipment. With the growing public interest in seafood consumption, there is increasing demand for enhanced quality control and reduced distribution time to preserve product freshness. To meet these demands and improve overall efficiency, the seafood industry is undergoing a transition from traditional labor-intensive methods to smart, digital technologies. This transformation is essential not only for improving productivity but also for ensuring the consistent and safe production of food. Automation and robotic technologies are being actively adopted to reduce production costs and improve product quality [3,4,5].

Despite this progress, most automation applications in the food industry are still limited to material handling tasks such as box packaging and palletizing. This is mainly because the wide variability in the shape, size, and texture of seafood products makes automated handling and manipulation challenging. As a result, the development of specialized grippers suited to the diverse forms of seafood has become a critical area of research. Recent studies have focused on recognizing objects on high-speed conveyor systems and performing reliable grasping using soft grippers [6].

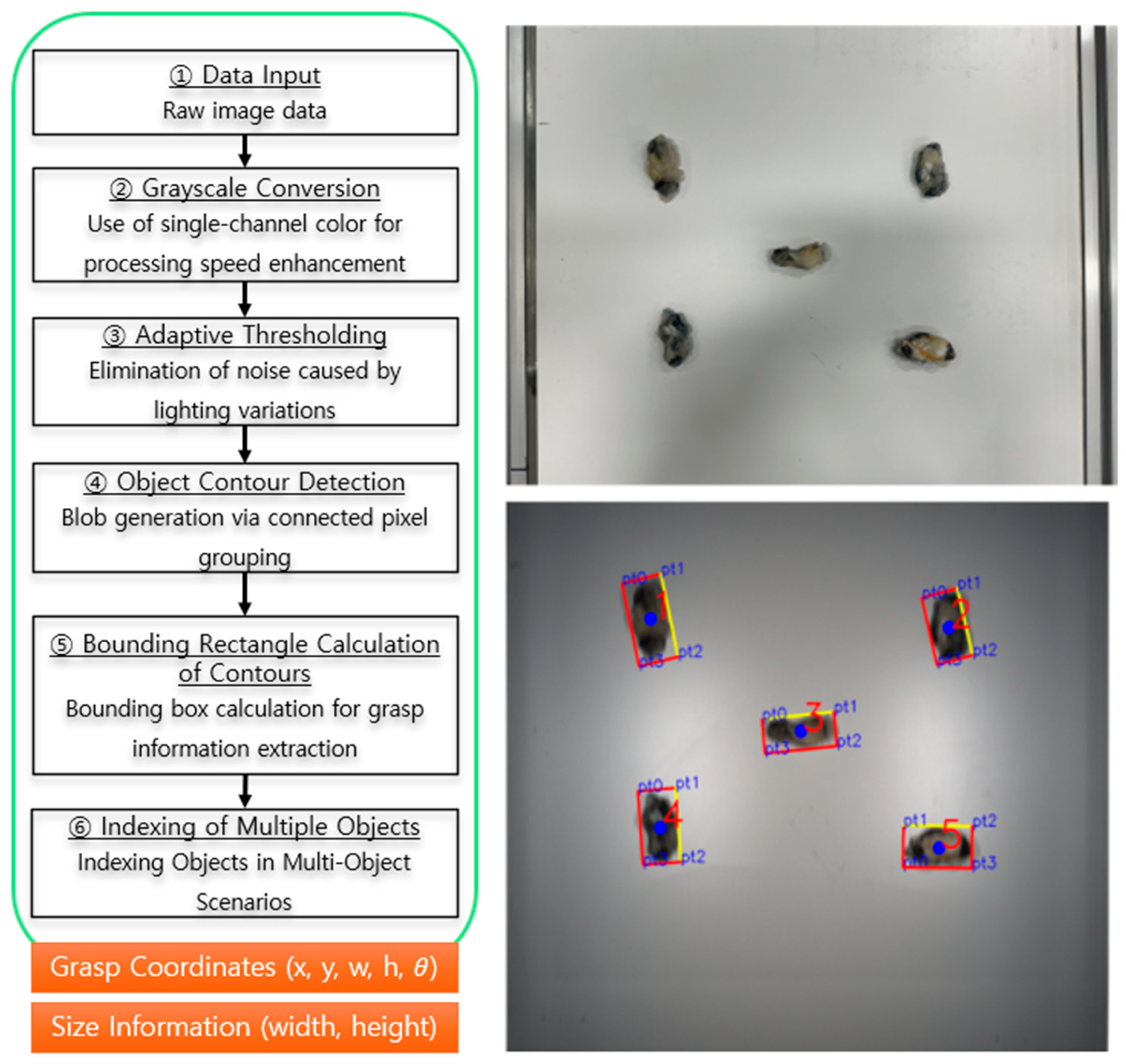

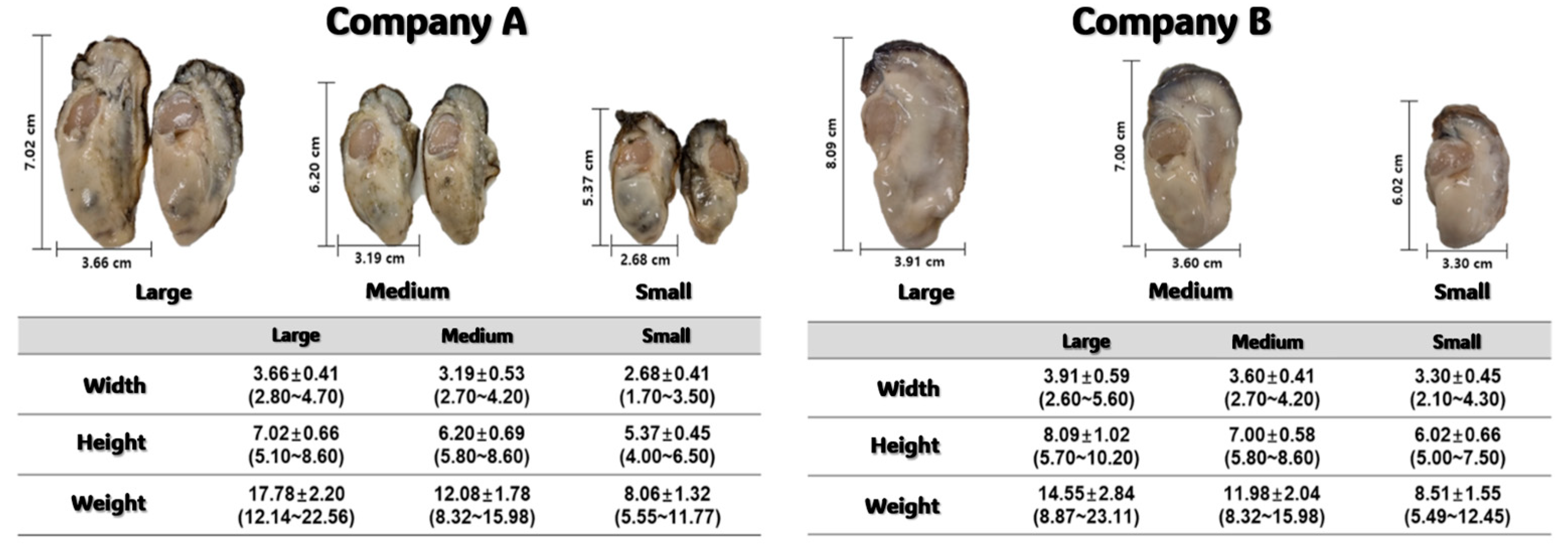

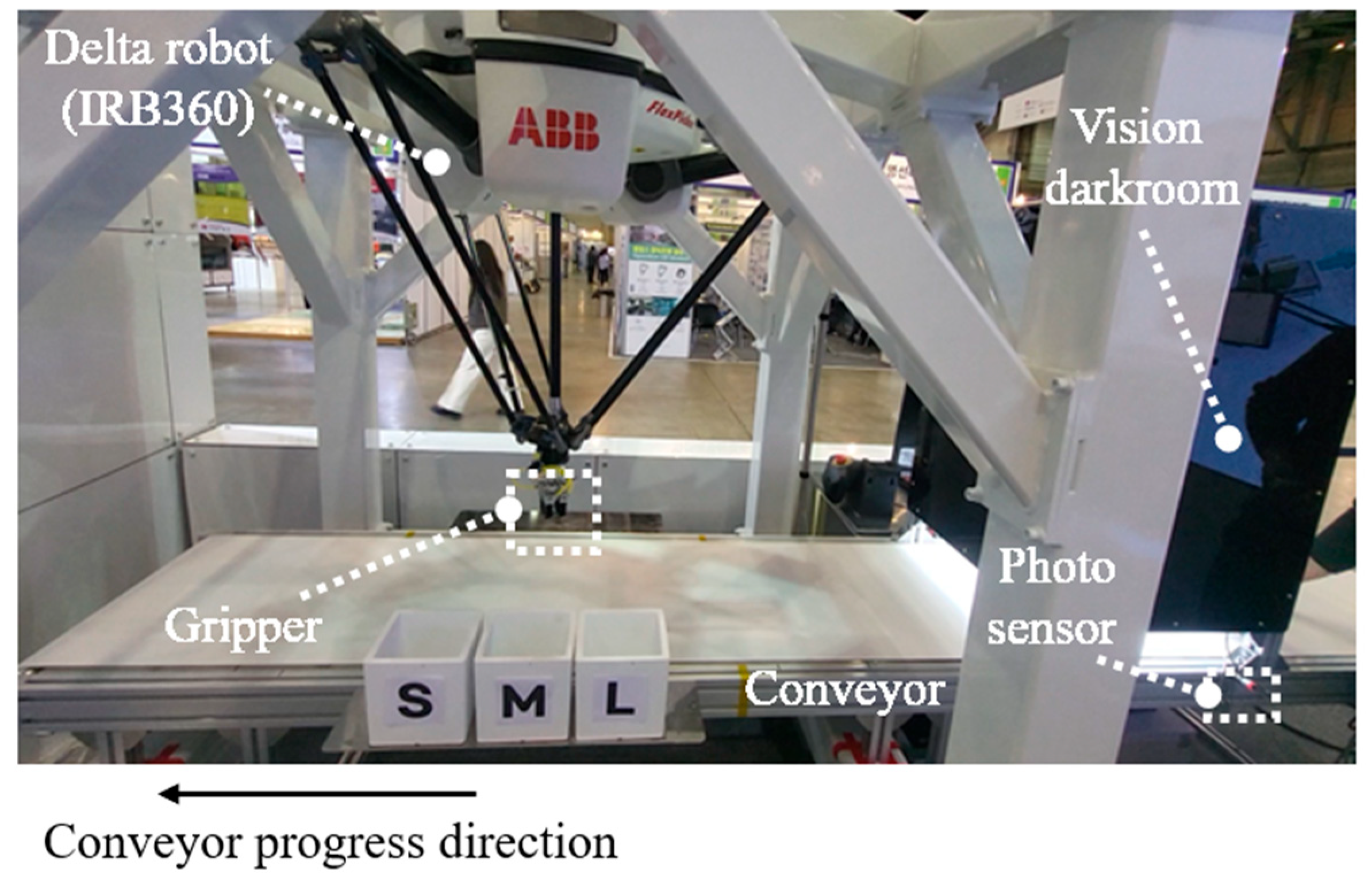

As shown in Figure 1, the conventional oyster classification process relies on electronic scales and the subjective judgment of workers to classify oysters into large, medium, and small categories. The classified oysters are then placed into fixed molds to maintain their shape and prevent physical damage during freezing [7]. After classification, the oysters go through a series of processing steps, including rapid freezing, depanning, glazing, refinement, and final packaging for distribution.

Commercial seafood graders typically perform non-contact size/quality sorting on lanes or flumes with vision inspection and pneumatic or mechanical rejection (e.g., SED Vision Grader, Lizotte, GP Graders), delivering high throughput but without closed-loop grasping or tray placement [

8,

9,

10]. In academia, recent oyster and shrimp studies emphasize deep-learning segmentation or recognition for quality/biomass estimation and counting—such as U-Net–based oyster contour/quality analysis, robotic oyster recognition with motion prediction on conveyors, and camera-based shrimp counting/weight estimation—again focusing on classification rather than gentle pick-and-place [

11,

12,

13,

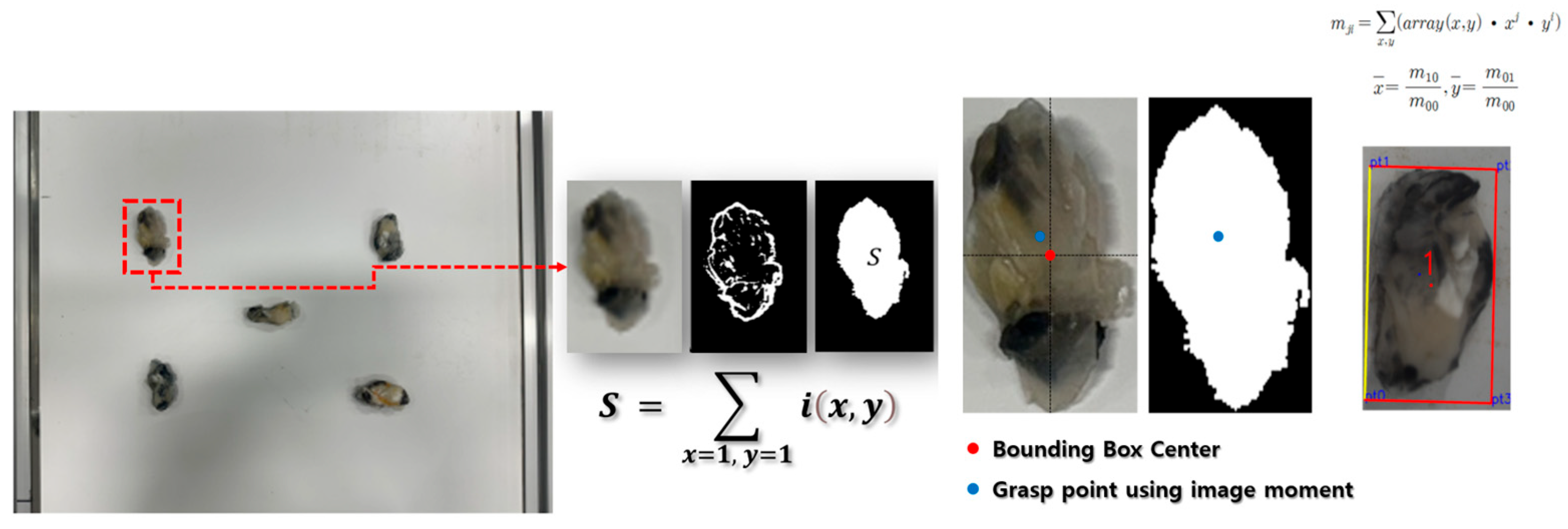

14]. By contrast, our system integrates a lightweight classical vision pipeline (adaptive thresholding with distance-transform watershed and image moments for grasp-point estimation) with a delta-robot (parallel robot) and a soft, drainage-textured gripper, enabling direct manipulation of wet, deformable oysters from a moving belt into trays under partial overlaps—thus obviating dataset collection and labeling, meeting hygiene requirements with a soft, drainage-textured gripper, and enabling end-to-end pick-and-place under mild clutter, using only classical vision.

This study proposes an automated oyster size classification process to replace the conventional manual oyster production method with a smart automation process using a parallel robot. Targeting oysters conveyed after the washing stage, an integrated automation system was developed and analyzed from the perspective of productivity, enabling continuous production through automated size classification and grasping. To achieve this, a vision-based algorithm was proposed as a core technology to accurately detect and grasp oysters in real time, despite their varying shapes and orientations. Using this algorithm, a pick-and-place system employing a parallel robot was controlled to perform precise classification and automatic placement into fixed molds. The performance of the proposed system was validated through experimental evaluations and simulations of the core technologies, demonstrating significant improvements in classification accuracy and operational efficiency compared to manual processing.

2. System Configuration of an Automated Oyster Classification Process

The automated oyster classification system was developed to streamline the process of oyster production by automating the classification of washed oysters by size and loading them into trays in preparation for rapid freezing.

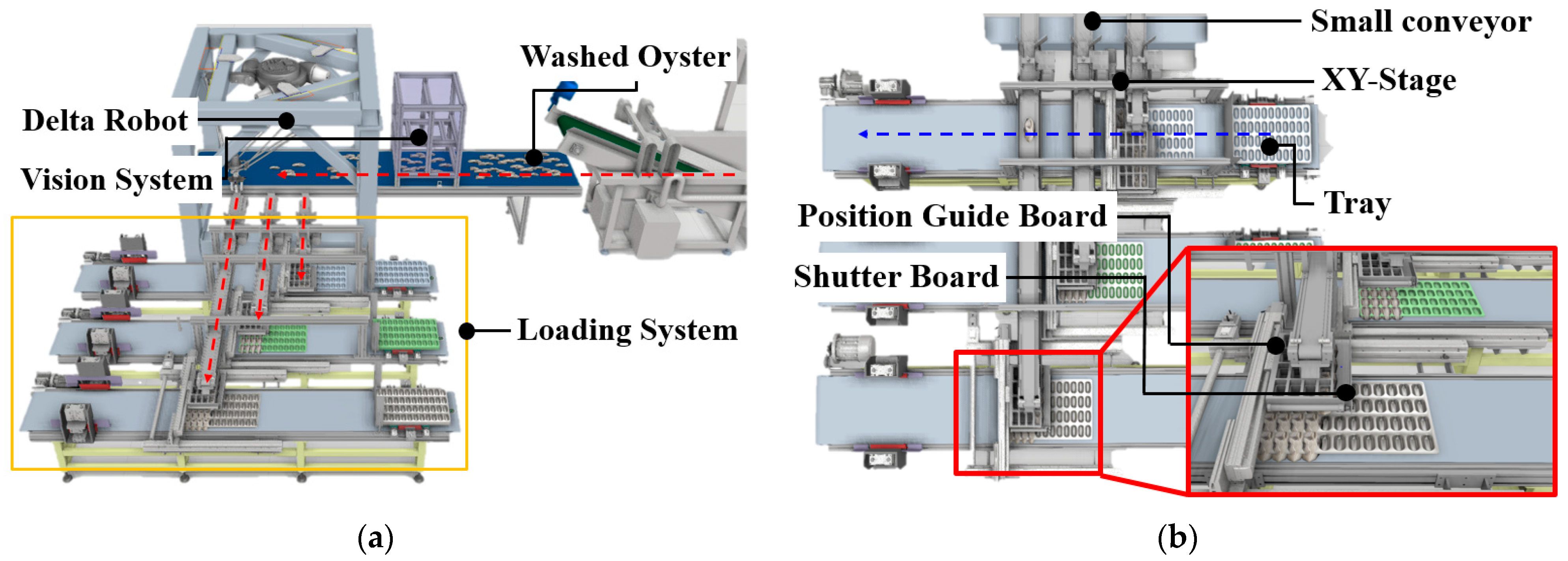

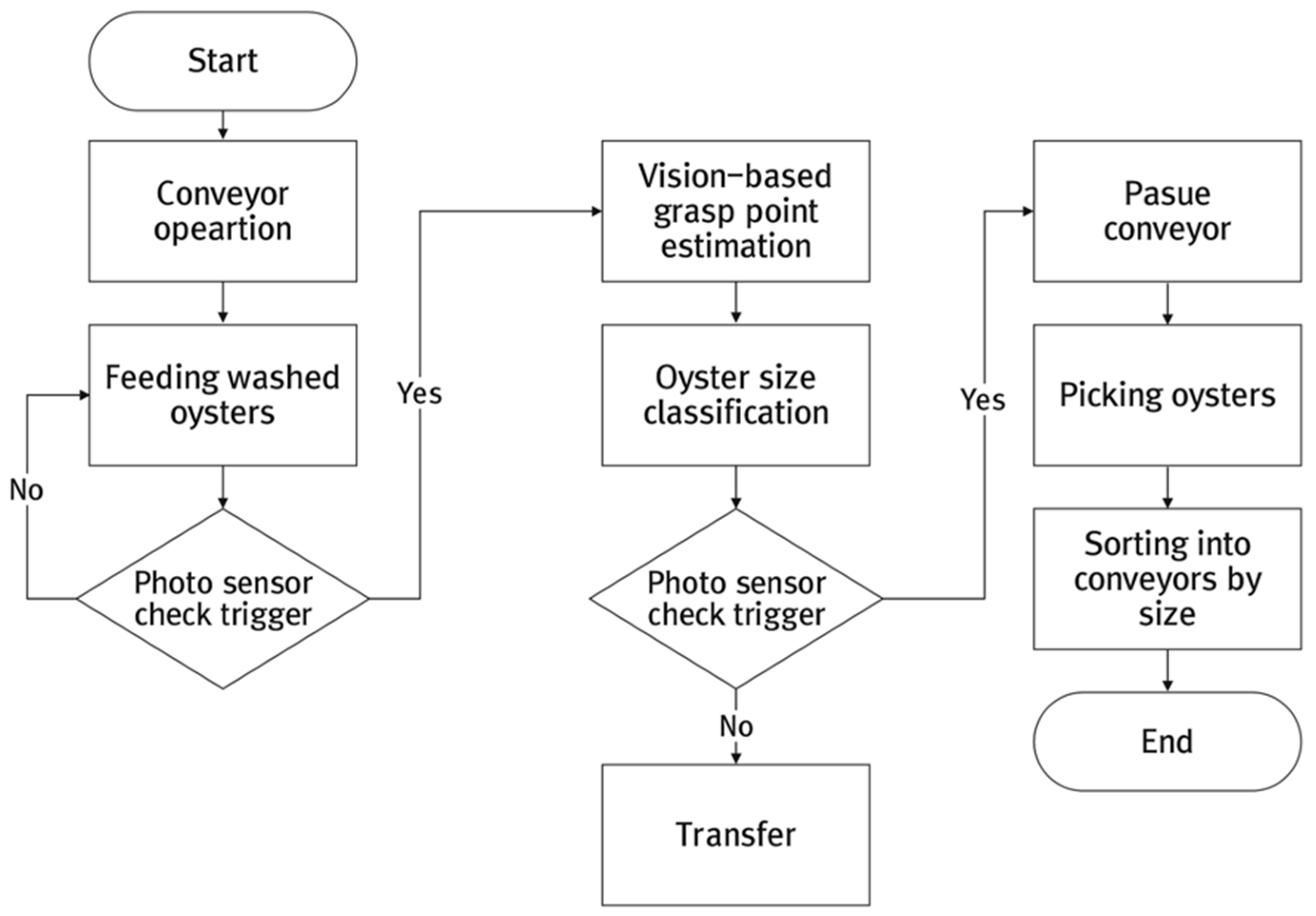

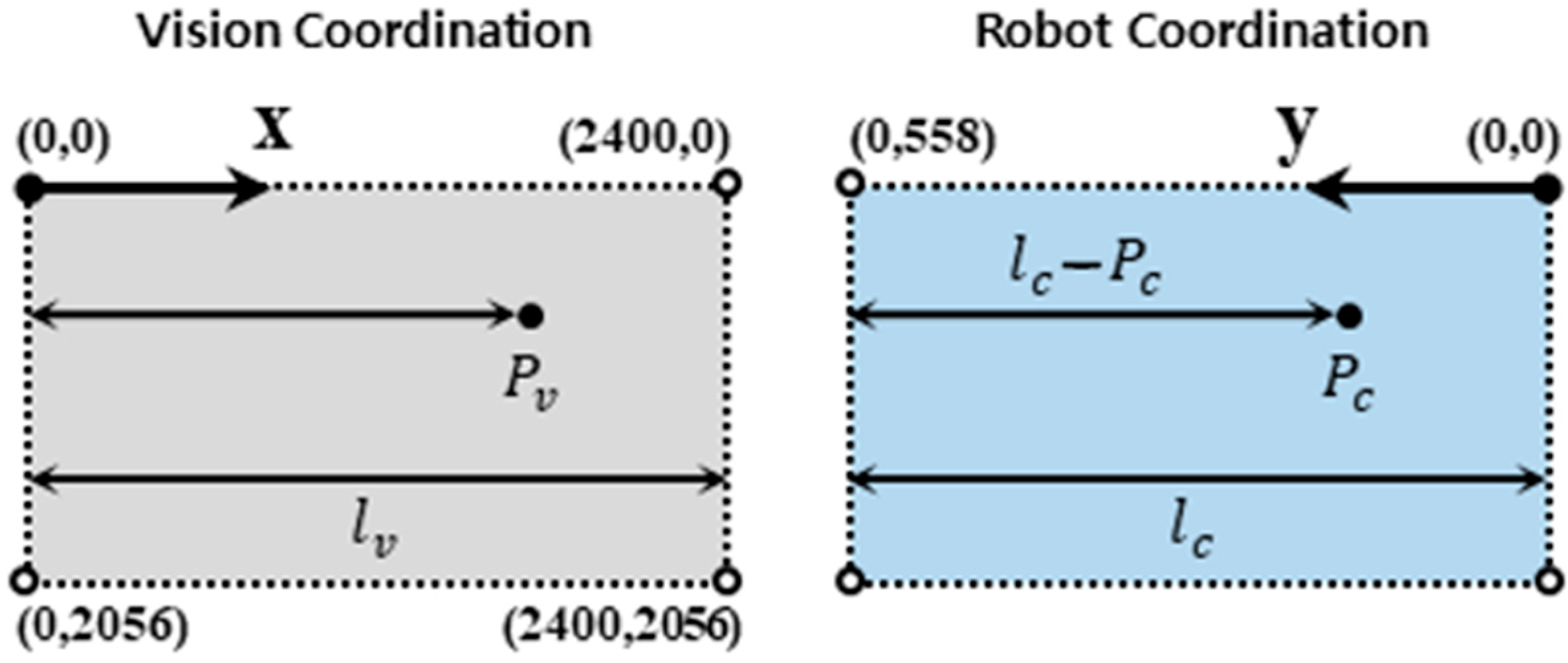

Figure 2a shows the configuration of the developed system, which consists of a vision system, a delta robot (KR3 D1200 HM, KUKA, Augsburg, Germany), and a loading system [15]. After the oysters are washed, they are transported via a conveyor to the vision system, where object detection and coordinate recognition are performed. The vision system classifies each oyster into one of three size categories (large, medium, or small) and transmits the corresponding size and positional data to the delta robot for sorting. The delta robot, equipped with a soft gripper, then picks up the oysters within the designated workspace and places them onto one of the small conveyors assigned to each size category. These small conveyors subsequently transfer the oysters to the loading system. To ensure precise placement, the conveyor is temporarily paused during each robot operation cycle.
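To make the coordinate recognition step more concrete, the following is a minimal sketch of grasp-point estimation from image moments, the technique named in the Introduction, compared with a bounding-box center. It is an illustration only and not the authors' implementation: the function names and the toy binary mask are assumptions, and plain Python lists stand in for a segmented camera image.

```python
from typing import List, Tuple

Mask = List[List[int]]  # binary mask: 1 = object pixel, 0 = background


def moment_centroid(mask: Mask) -> Tuple[float, float]:
    """Return the centroid (x, y) from raw image moments: (m10/m00, m01/m00)."""
    m00 = m10 = m01 = 0
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                m00 += 1   # zeroth moment: object area in pixels
                m10 += x   # first moment along x
                m01 += y   # first moment along y
    if m00 == 0:
        raise ValueError("mask contains no foreground pixels")
    return m10 / m00, m01 / m00


def bbox_center(mask: Mask) -> Tuple[float, float]:
    """Return the center (x, y) of the axis-aligned bounding box."""
    xs = [x for row in mask for x, value in enumerate(row) if value]
    ys = [y for y, row in enumerate(mask) if any(row)]
    if not xs:
        raise ValueError("mask contains no foreground pixels")
    return (min(xs) + max(xs)) / 2, (min(ys) + max(ys)) / 2


# Hypothetical asymmetric shape: a wide body on the left with a thin tail.
toy_mask: Mask = [
    [1, 1, 1, 0, 0, 0, 0],
    [1, 1, 1, 1, 1, 1, 1],
    [1, 1, 1, 0, 0, 0, 0],
]

print("moment centroid:", moment_centroid(toy_mask))
print("bbox center:    ", bbox_center(toy_mask))
```

For this toy shape the bounding-box center lies on the thin tail at x = 3, whereas the moment centroid (x = 27/13, about 2.08) is pulled toward the bulk of the object, which is the intuition behind preferring moments for irregular outlines.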

Figure 2b illustrates the structure of the loading system. It is designed to accurately load the transferred oysters into trays and is composed of an XY-stage, a position guide board, and a shutter board. Both the position guide board and the shutter board are mounted on the XY-stage and can move along the X and Y axes. The system is structured with three separate lines to handle each oyster size category independently, enabling parallel and efficient loading operations.

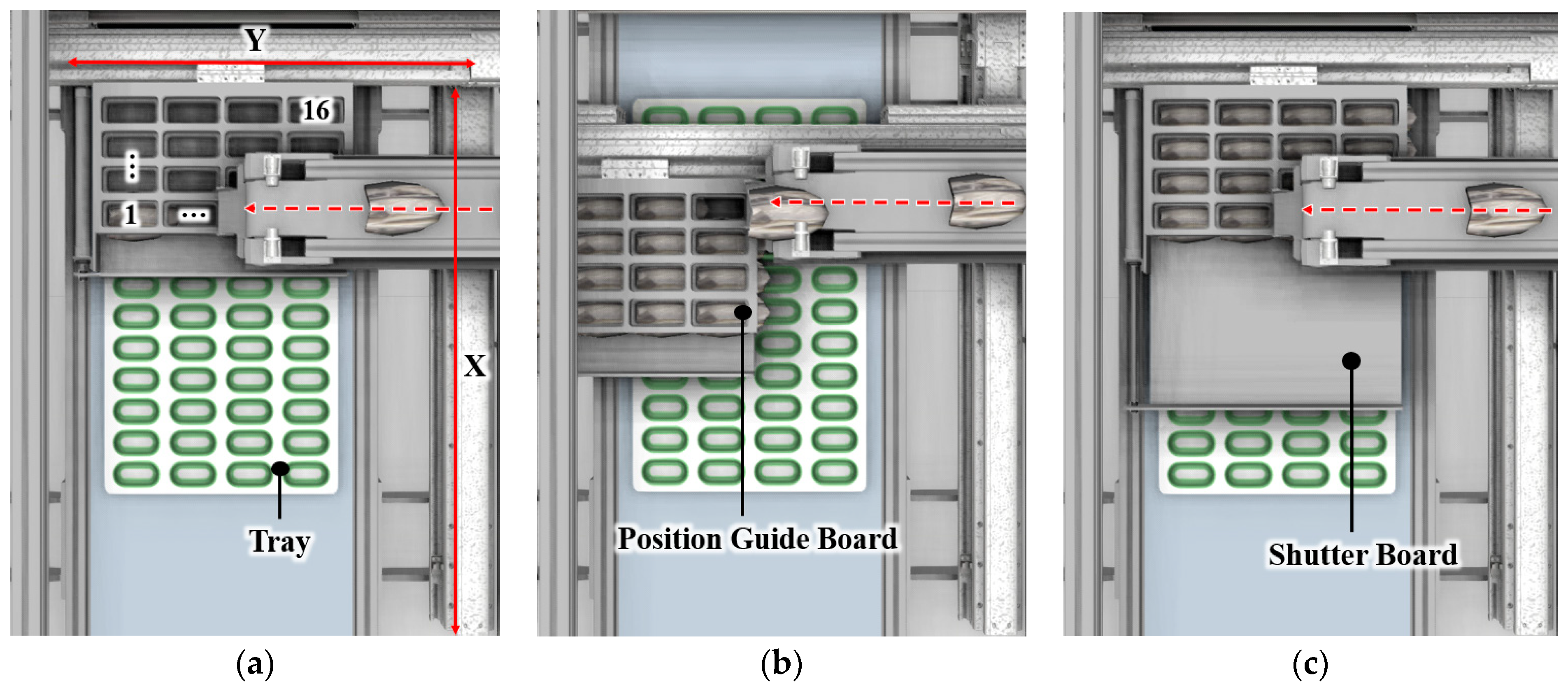

Figure 3 illustrates the operation of the loading system. Its XY-stage, position guide board, and shutter board work together to load the transferred oysters accurately into trays, with both boards mounted on the XY-stage and moving along the X and Y axes. Because the oysters arrive sorted into large, medium, and small categories, the loading system is divided into three separate lines: the Large-line, Medium-line, and Small-line [16].

Figure 3a shows oysters being placed onto the position guide board while the shutter board remains closed. At the same time, an empty fixed tray is positioned beneath the XY-stage via the conveyor.

Figure 3b depicts the position guide board moving along the X and Y axes to arrange 16 oysters in their designated positions.

Figure 3c illustrates the moment when, after all 16 oysters have been placed on the guide board, the shutter board opens to load the oysters into the fixed tray. This operation is repeated three times, and once a total of 48 oysters have been loaded into the tray, the filled tray is discharged via the conveyor.
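The loading cycle described above (16 oysters per guide-board fill, three shutter openings per tray, 48 oysters per discharged tray) can be sketched as a small counter-based state machine. This is a simplified illustration under stated assumptions: the class and method names are hypothetical, XY-stage motion and timing are ignored, and only the counts given in the text are modeled.

```python
from dataclasses import dataclass

OYSTERS_PER_BOARD = 16  # oysters arranged on the guide board before the shutter opens
DROPS_PER_TRAY = 3      # shutter openings needed to fill one tray (3 x 16 = 48)


@dataclass
class LoadingLine:
    """Counts for one size line (hypothetical model, not the real controller)."""
    on_board: int = 0          # oysters currently on the position guide board
    drops_into_tray: int = 0   # shutter openings into the current tray
    trays_discharged: int = 0  # completed trays sent out on the conveyor

    def place_oyster(self) -> None:
        """Place one oyster; open the shutter once the board is full."""
        self.on_board += 1
        if self.on_board == OYSTERS_PER_BOARD:
            self._open_shutter()

    def _open_shutter(self) -> None:
        """Drop the full board into the tray; discharge after the third drop."""
        self.on_board = 0
        self.drops_into_tray += 1
        if self.drops_into_tray == DROPS_PER_TRAY:
            self.drops_into_tray = 0
            self.trays_discharged += 1


def simulate(n_oysters: int) -> LoadingLine:
    """Feed n_oysters into an empty line and return its final state."""
    line = LoadingLine()
    for _ in range(n_oysters):
        line.place_oyster()
    return line


state = simulate(100)
print(state)
```

Feeding 100 oysters into one line yields two discharged trays (96 oysters) with four oysters still waiting on the guide board.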

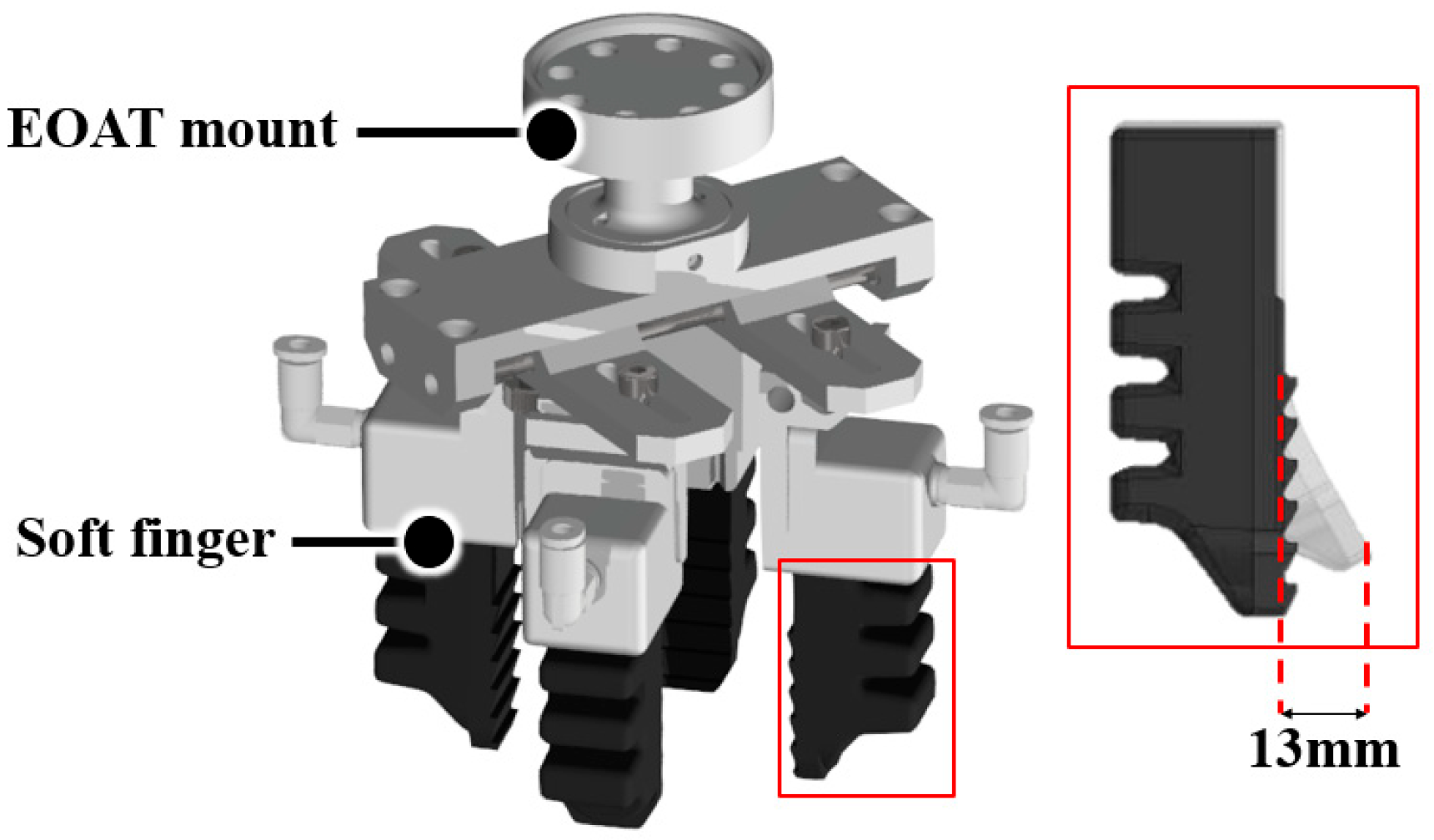

Figure 4 illustrates the soft gripper applied to the system. To grasp oysters effectively, the gripper is equipped with four soft fingers (F-B4T/LS8[P], Rochu, Zhangjiagang, China). When positive pressure is applied, the fingers bend inward and converge toward the center of the gripper to hold the oyster securely. In addition, protrusions on the contact surfaces help prevent the oyster from slipping or sliding downward after grasping [17].

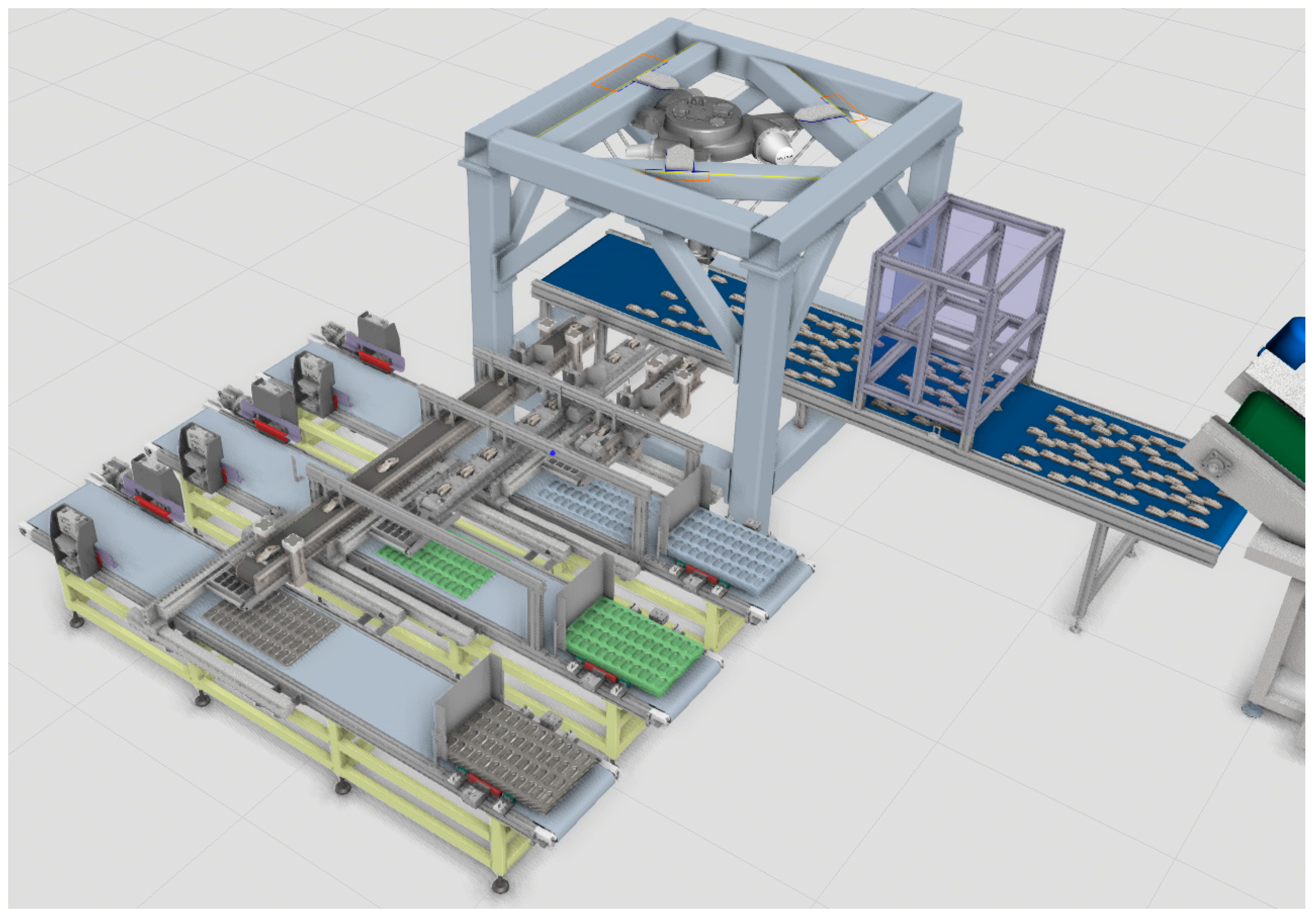

5. Productivity Evaluation Based on Process Simulation

As shown in Figure 12, a process simulation was conducted using the Visual Components software environment to perform a comparative productivity analysis between the conventional manual oyster classification process and the developed automated oyster classification system. The simulation was based on the actual working conditions of Deokyeon Seafood Co., Ltd. in Tongyeong-si, a domestic oyster processing company in South Korea. In the manual process, 60 workers operate for 8 h a day, achieving a daily production volume of 7 tons. Based on harvest data from October to the following May, the distribution of oyster sizes was approximately 30% large, 50% medium, and 20% small, with average weights of 20 g, 15 g, and 10 g, respectively. It was confirmed that, assuming a 100% recognition and grasping success rate, at least seven automated systems would be required to match the production volume of the manual process.

Table 4 summarizes the environmental parameters and values configured in the simulation. Based on the performance evaluation, a recognition and grasping success rate of 99% was applied to the simulation, and the results are shown in Table 5. The 8 h simulation demonstrated that a single automated oyster classification system could produce 1472 filled trays. To match the production volume of the manual process, it was determined that at least seven automated systems would be required. Compared to a theoretical scenario with a 100% recognition and grasping success rate, the number of processed oysters decreased by approximately 715, and the tray output was reduced by about 15 trays.
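The figure of seven systems can be cross-checked with a back-of-the-envelope calculation from the numbers reported in this section. The sketch below is not the Visual Components simulation. It assumes that "tons" means metric tons, that every tray holds 48 oysters as described for the loading system, and that partially filled trays can be neglected. All function and constant names are illustrative.

```python
import math

DAILY_OUTPUT_G = 7 * 1_000_000  # 7 tons/day of manual output (metric tons assumed)
SIZE_MIX = {                    # size: (share of oysters, average weight in g)
    "large": (0.30, 20.0),
    "medium": (0.50, 15.0),
    "small": (0.20, 10.0),
}
OYSTERS_PER_TRAY = 48           # 3 shutter cycles x 16 oysters
TRAYS_AT_99_PERCENT = 1472      # trays per system per 8 h shift (reported result)
TRAYS_AT_100_PERCENT = 1472 + 15  # reported shortfall of about 15 trays added back


def mean_weight_g(mix: dict) -> float:
    """Share-weighted average oyster weight in grams."""
    return sum(share * weight for share, weight in mix.values())


def oysters_per_day(total_g: float, mix: dict) -> float:
    """Number of oysters corresponding to the daily manual output."""
    return total_g / mean_weight_g(mix)


def systems_required(trays_per_system: int) -> int:
    """Smallest number of systems whose combined output matches manual output."""
    capacity = trays_per_system * OYSTERS_PER_TRAY  # oysters per system per shift
    return math.ceil(oysters_per_day(DAILY_OUTPUT_G, SIZE_MIX) / capacity)


print(f"mean weight: {mean_weight_g(SIZE_MIX):.1f} g")
print(f"oysters/day: {oysters_per_day(DAILY_OUTPUT_G, SIZE_MIX):,.0f}")
print("systems at 99%: ", systems_required(TRAYS_AT_99_PERCENT))
print("systems at 100%:", systems_required(TRAYS_AT_100_PERCENT))
```

Under these assumptions the mean oyster weight is 15.5 g, so 7 tons corresponds to roughly 451,600 oysters per day. One system handles 1472 × 48 = 70,656 oysters per shift, so six systems fall short and seven suffice at both the 99% and 100% success rates, in line with the reported result.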

6. Conclusions

In this study, we developed and evaluated an automated oyster classification system capable of classifying oysters by size and placing them into trays for freezing, thereby automating a process traditionally reliant on manual labor. By incorporating a vision-based recognition algorithm and a parallel robot equipped with a soft gripper, the system achieved classification and grasping accuracies of 99% each. Experimental validations confirmed that image moment-based grasp point estimation significantly enhanced grasping precision compared to bounding-box center methods. The simulation showed that one unit could produce 1472 trays in an 8 h shift, and that at least seven units would be needed to match the manual daily output of 7 tons under current performance metrics. Compared to a hypothetical 100% success rate, the difference in production amounted to approximately 715 oysters and 15 trays.

This study provides clear evidence that an automated oyster classification process can significantly enhance efficiency, consistency, and productivity. Future work will focus on enhancing mechanical components, particularly through the optimization of gripper design to reduce slippage of small-sized objects [18,19]. Additionally, system parameters will be refined to further improve overall throughput, operational stability, and process reliability. To further substantiate identification performance in complex environments, we will also evaluate CNN-based approaches (e.g., YOLO) alongside the current classical pipeline and report objective metrics (precision, recall, F1, and mAP) under higher density, occlusion, and illumination variation. The proposed system is designed for scalable deployment via modular units that can be replicated across lanes and integrated at the factory testbed, with OMS-based orchestration supporting parallel, line-level scale-out. Field adaptation is addressed through planned analyses of environmental variability (e.g., moisture, residues) and error sources, with iterative optimization on the testbed prior to rollout with partner companies. Readiness for deployment is supported by a living-lab pathway (on-site verification and applicability evaluation), progression toward TRL-8 commercialization, and program benchmarks (recognition/inspection accuracy > 90% and in-line operation at 6 m/min). Economic feasibility (NPV/IRR/BCR) will guide scale decisions during technology transfer and diffusion.