Intelligent Diagnosis of Fish Behavior Using Deep Learning Method

Abstract

1. Introduction

2. Materials and Methods

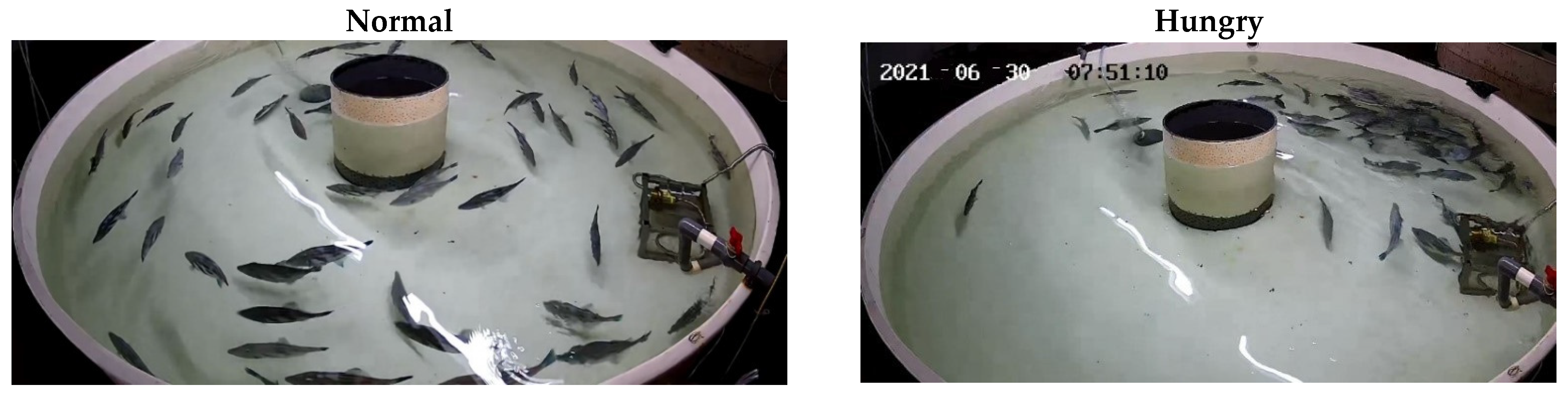

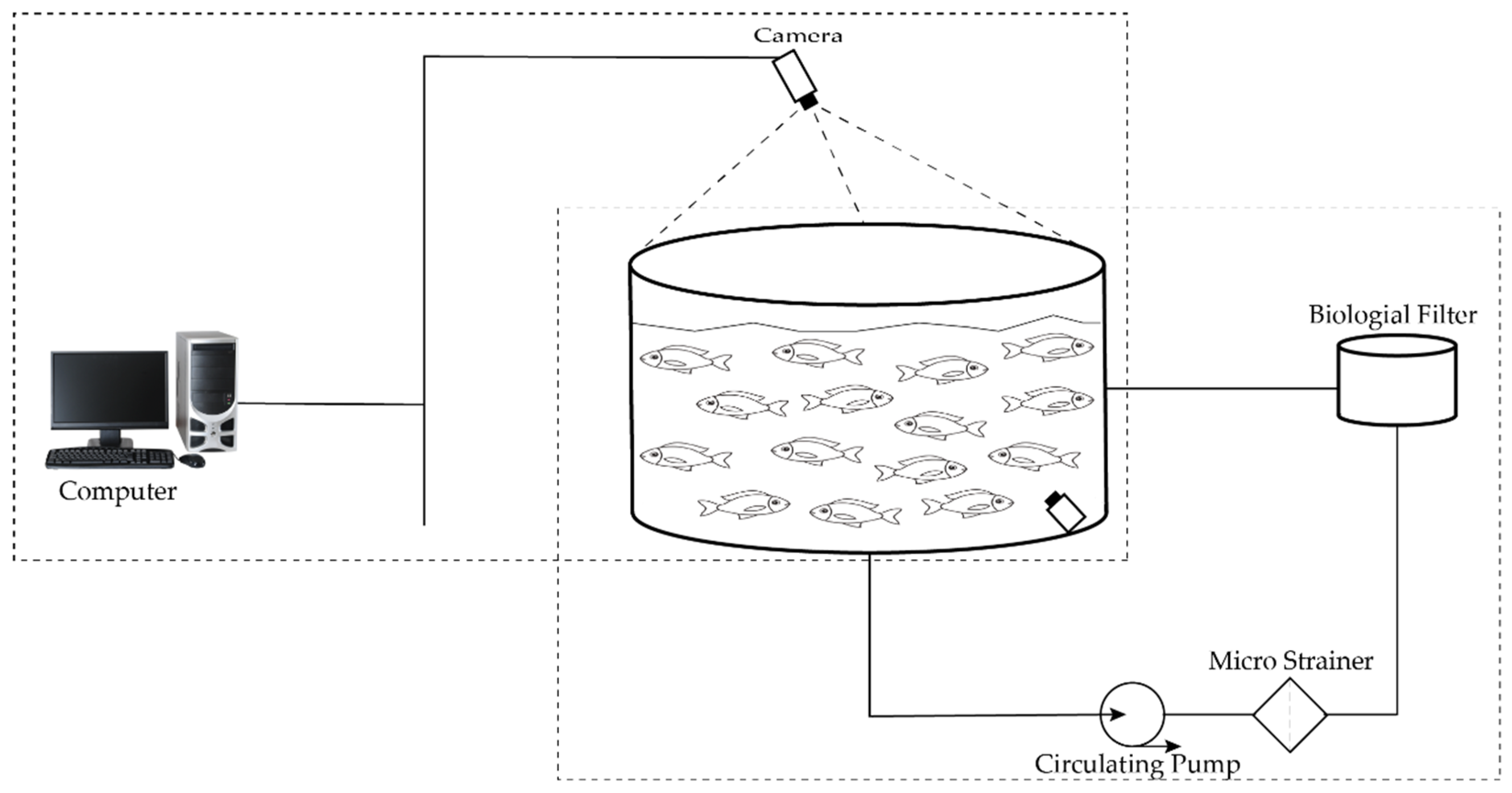

2.1. Fish Samples and Experimental Environment Creation

2.2. Dataset Description

2.3. Training Methodology

2.4. Software and Hardware System Description

2.5. Proposed Convolutional Neural Network (CNN) Model

3. Results

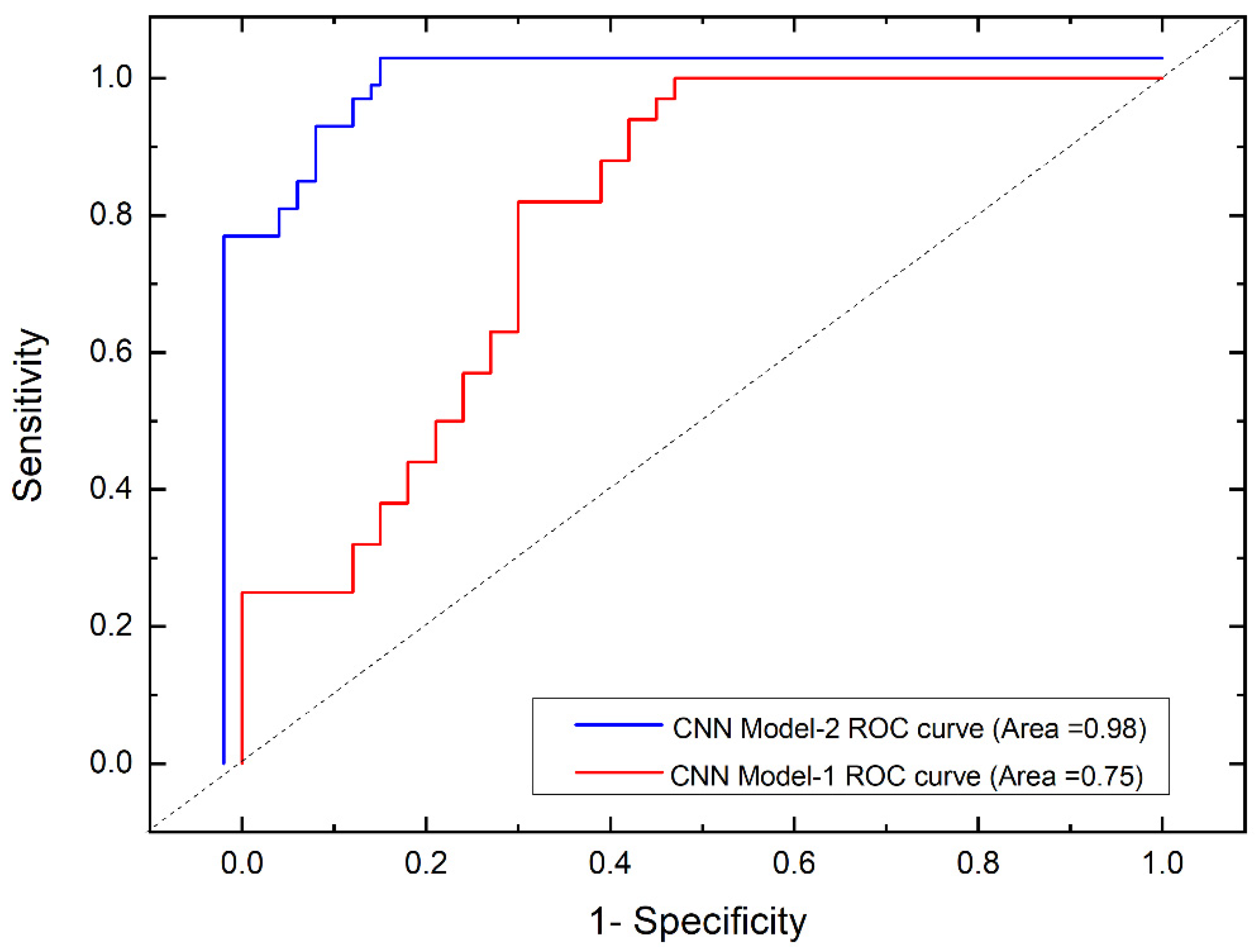

3.1. Evaluation Metrics

3.2. Quantitative Evaluation with Statistical Analysis

3.2.1. Experiment 1: CNN Model-1

3.2.2. Experiment 2: CNN Model-2

4. Discussion

5. Conclusions

- We proposed a state-of-the-art convolutional neural network with an additional fully connected layer as a high-performance detection and classification system.

- Because environmental complexity and the uncertainty of fish behavior are correlated, behavior recognition accuracy is generally low. The proposed method achieved high accuracy despite these substantial challenges.

- The proposed model addressed the poor generalization ability of shallow neural networks and classified the fish images into two categories (starvation and normal) with an accuracy of 98%.

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Behavior Analysis | Test | Train | Total |
|---|---|---|---|
| Starvation | 175 | 560 | 735 |
| Normal | 425 | 840 | 1265 |
| Total | 600 | 1400 | 2000 |
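
The totals and the split ratio in the table above can be checked with a few lines of arithmetic. The following is a minimal sketch: the counts are copied from the table, while the function and variable names are illustrative and do not come from the original work.

```python
# Image counts copied from the dataset table: test and train images per class.
counts = {
    "Starvation": {"test": 175, "train": 560},
    "Normal": {"test": 425, "train": 840},
}


def split_summary(counts):
    """Return per-class totals, overall split sizes, and the train fraction."""
    class_totals = {name: c["test"] + c["train"] for name, c in counts.items()}
    test_total = sum(c["test"] for c in counts.values())
    train_total = sum(c["train"] for c in counts.values())
    total = test_total + train_total
    return {
        "class_totals": class_totals,
        "test_total": test_total,
        "train_total": train_total,
        "total": total,
        "train_fraction": train_total / total,
    }


summary = split_summary(counts)
print(summary["class_totals"])
print(summary["test_total"], summary["train_total"], summary["total"])
print(summary["train_fraction"])
```

The counts correspond to a 70/30 train/test split of 2000 images. The starvation class makes up 40% of the training images (560 of 1400) but about 29% of the test images (175 of 600), so the class ratio differs between the two splits.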

| Metric (%) | Model-1 (Max-Pooling) | Model-1 (Without Max-Pooling) |
|---|---|---|
| Accuracy | 88.9 | 86.02 |
| Error rate | 11.1 | 13.98 |
| Recall | 90.09 | 79 |
| Selectivity | 85.71 | 90 |
| Fall-out | 14.28 | 85 |
| Miss rate | 9.9 | 21 |
| MCC | 73.92 | 66.3 |
| F1-score | 91.91 | 85 |
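
The metrics listed in the table above are all standard functions of a binary confusion matrix. The sketch below shows how they are conventionally computed. The confusion-matrix counts in the example are hypothetical (the tables do not report the underlying counts), and the function name `binary_metrics` is illustrative rather than taken from the original work.

```python
import math


def _ratio(numerator, denominator):
    """Divide safely, returning 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(tp, fp, tn, fn):
    """Compute common binary classification metrics as percentages."""
    recall = _ratio(tp, tp + fn)          # true positive rate (sensitivity)
    selectivity = _ratio(tn, tn + fp)     # true negative rate (specificity)
    precision = _ratio(tp, tp + fp)
    accuracy = _ratio(tp + tn, tp + fp + tn + fn)
    mcc = _ratio(
        tp * tn - fp * fn,
        math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)),
    )
    values = {
        "accuracy": accuracy,
        "error_rate": 1.0 - accuracy,
        "recall": recall,
        "selectivity": selectivity,
        "fall_out": _ratio(fp, fp + tn),   # false positive rate
        "miss_rate": _ratio(fn, fn + tp),  # false negative rate
        "mcc": mcc,
        "f1_score": _ratio(2 * precision * recall, precision + recall),
    }
    return {name: round(100 * value, 2) for name, value in values.items()}


# Hypothetical counts, chosen only to illustrate the calculation.
metrics = binary_metrics(tp=90, fp=20, tn=80, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

By definition, error rate = 100 − accuracy, fall-out = 100 − selectivity, and miss rate = 100 − recall (all in percent), which gives a quick consistency check for any tabulated set of values.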

| Metric (%) | Model-2 (Max-Pooling) | Model-2 (Without Max-Pooling) |
|---|---|---|
| Accuracy | 98 | 93 |
| Error rate | 2 | 7 |
| Recall | 98 | 93.94 |
| Selectivity | 98 | 90.90 |
| Fall-out | 2 | 9.09 |
| Miss rate | 2 | 9.06 |
| MCC | 93 | 83.83 |
| F1-score | 98 | 94.89 |
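
Both result tables compare each model with and without max-pooling. As a reminder of what that operation does, here is a minimal pure-Python sketch of max-pooling on a single 2D feature map. The 2×2 window and stride of 2 are assumptions chosen for illustration; the pooling configuration of the proposed models is not stated in this text, and `max_pool_2d` is a hypothetical helper name.

```python
def max_pool_2d(feature_map, size=2, stride=2):
    """Apply max-pooling (no padding) to a 2D list of numbers."""
    rows, cols = len(feature_map), len(feature_map[0])
    pooled = []
    for r in range(0, rows - size + 1, stride):
        pooled_row = []
        for c in range(0, cols - size + 1, stride):
            # Collect the values inside the current window and keep the largest.
            window = [
                feature_map[r + i][c + j]
                for i in range(size)
                for j in range(size)
            ]
            pooled_row.append(max(window))
        pooled.append(pooled_row)
    return pooled


# A toy 4x4 feature map; the values are arbitrary.
feature_map = [
    [1, 3, 2, 0],
    [4, 6, 1, 2],
    [7, 2, 9, 4],
    [0, 1, 3, 5],
]
pooled = max_pool_2d(feature_map)
print(pooled)  # [[6, 2], [7, 9]]
```

Each 2×2 window is reduced to its largest value, so the 4×4 map shrinks to 2×2. This downsampling reduces the number of activations passed to later layers while keeping the strongest local responses.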

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Iqbal, U.; Li, D.; Akhter, M. Intelligent Diagnosis of Fish Behavior Using Deep Learning Method. Fishes 2022, 7, 201. https://doi.org/10.3390/fishes7040201
