1. Introduction

Human reliability analysis (HRA) is becoming increasingly important as a tool for risk control in activities that have catastrophic potential, such as nuclear power generation and offshore drilling. The main purpose of HRA is to identify and evaluate the key human behaviour-oriented risk factors that concern major accident prevention for any operator-intensive system under different operational modes. An offshore operating company may typically employ HRA during the planning and follow-up of drilling activities to control the blowout risk associated with interactions among service providers [1]. In this case, HRA could be considered critical in assisting an operator to maintain two barriers during drilling operations [2], and thereby to provide an acceptable level of safety as stipulated by society [3]. As an example, there are requirements for the driller to manually activate the blowout preventer (BOP), a main well safety barrier, during operations. The need to activate the BOP may occur relatively often, according to data [4]. HRA therefore helps to identify and evaluate the influences of human and organisational factors in drilling, and may nowadays be considered a prerequisite to risk management.

This article comprises the last part in a trilogy [5] that proposes a new method for probabilistic risk assessment of offshore drilling activities [1]. This final part proposes a further improvement to complete the method procedure; namely, for the procedure to explicitly describe the link between the HRA causal model and the performance of generic task analysis, since every well design is unique from Mother Nature’s side. As such, the objective of this procedure enhancement is to include an explicit link between the collective term of task analysis and the HRA method in order to reduce analyst-to-analyst variability, which remains a prevailing quality assurance issue in HRA [6,7,8,9,10].

HRA critique points to several factors that may compromise HRA quality, and which are also associated with task analysis and procedure. For example, NUREG-1792 [6] describes many HRA methods as merely quantification methods that need to be tailored to specific activity requirements. Even this may not be straightforward, since task requirements vary between different industries and workplace conditions [9]. Notably, different requirements can also be found within an industry, such as risk assessment performed on the installation level versus the well system level [5].

The literature also includes discussions related to: (i) adopting knowledge about human behaviour that may be outdated or only applicable to simple tasks; (ii) the ‘black box’ nature of many causal models, which makes validation difficult; and (iii) use of terminology not particularly suited for proactive human failure analysis [9,11,12,13]. Issues related to terminology may presumably also have links to the many knowledge domains found commingled in HRA methods, notably different human factor concepts in methods such as: (i) organisational and normal (sociotechnical) accidents [14,15]; (ii) heuristics and biases [16]; (iii) perceptual cycle and sensemaking [10,17]; and (iv) situation awareness [18,19].

Table 1 summarizes the literature relevant to categorical task analysis and HRA in the oil and gas industry. As shown, the literature may be classified by causality focus, with each focus organised in an influence structure of one to four levels. The most popular framework in task analysis today, with adaptations also for oil and gas, is the human factor analysis and classification system (HFACS) [20,21], which is based on the energy defence model ([15], Figure 1 and Figure 6). HFACS has been adapted and demonstrated for several applications in the literature, among others in oil refinery accident investigations [22]. HFACS represents a further development of Reason’s hierarchical causal classification scheme for energy defences, which is also adopted in the drilling HRA method [1]. Whereas HFACS considers preconditions for unsafe acts as an extra level within the hierarchy, the drilling HRA includes a separate checklist developed with elements from social and cognitive psychology.

Interestingly, a keyword search in the Table 1 literature produced limited explicit discussion relevant to important offshore barrier management and failure analysis concepts such as performance influences and performance requirements. For example, in the Norwegian oil and gas industry, the safety authorities emphasise the explicit need to define the human, organizational, or technical barrier elements put in place to realise a main safety function in oil and gas activities [2]. The guideline suggests definitions in risk analysis based on a hierarchical breakdown as follows: (i) Main barrier function and subfunctions, which describe what is to be achieved by the barrier. (ii) Barrier elements, which describe equipment, personnel, and operations that are necessary to achieve the functions. (iii) Performance requirements, which describe measurable requirements about element properties. (iv) Performance-influencing factors (PIF), which describe identified conditions that may impair the ability of elements to perform as intended.

The literature review suggests three main practical requirements towards an approach to create a better link between task analyses, i.e., categorical human error analysis, and HRA, i.e., human error probability calculations, as follows:

- (i) Multidisciplinary. Relevant across popular human factors and engineering domains that study technical, organizational, and human factors in safety management.

- (ii) Generic. Relevant across process control technologies and human behavioural constructs with levels for describing human task performance, i.e., relevant to both generic task analysis and to models of causality adopted in the quantification of human error probabilities in HRA.

- (iii) Compliant. Relevant to governing barrier management principles in offshore regulations. An example is the Petroleum Safety Authority Norway (PSA) guideline to barrier management in the Norwegian offshore industry [2].

This article describes research performed to address the quality assurance issues in drilling HRA that may result from poor integration of task analysis in the drilling HRA procedure. The objective of this research is to improve well system safety through the consistent performance of HRA in probabilistic risk assessments of offshore drilling activities.

The structure of the article is as follows:

Section 2 describes the approach developed, which includes selected steps in the procedure for the offshore drilling HRA method proposed [1]. The approach includes clarifications and modifications made to a generic hierarchical task analysis (HTA) framework relevant to the categorical evaluation of human task performance requirements in the HRA procedure. In Section 3, a drilling crew training scenario is used as a case study to realistically demonstrate and discuss an application of the approach. Finally, Section 4 includes concluding remarks from the research and suggestions for further work.

2. Proposed Task Analysis Method in HRA

This article represents the completion of previous work related to developing an explicit integration of generic task analysis within the procedure of the drilling probabilistic risk assessment (DPRA) method, which is proposed for risk control during offshore drilling activities [

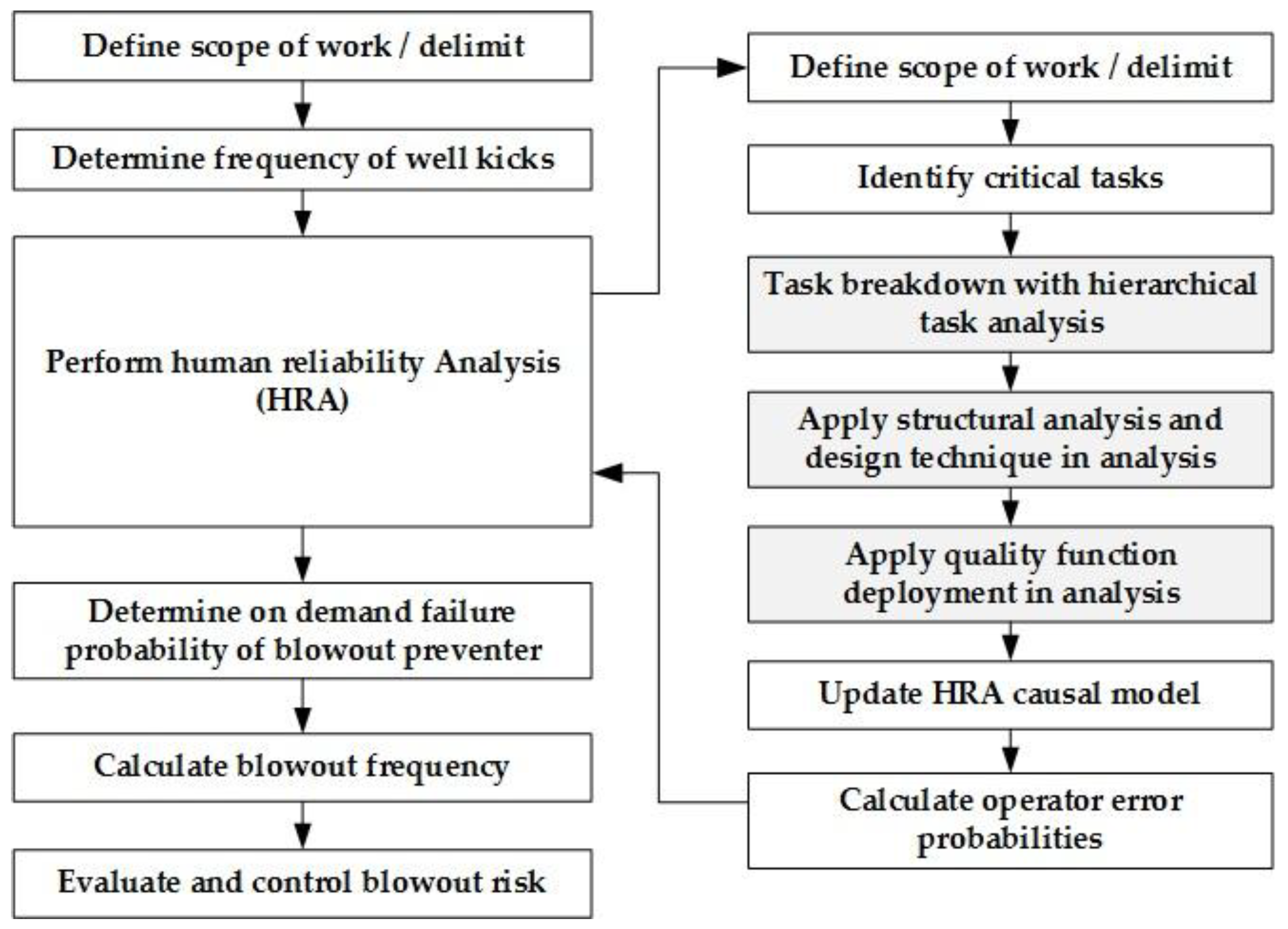

5]. The boxes shown with greyscale in

Figure 1 illustrate the focus of the research presented in this article in the context of the DPRA method procedure [

19]. From

Figure 1, the task analysis follows a task screening process that identifies critical tasks to be analysed, and where the task analysis results are to be further used to update the DPRA causal model [

1,

19]. The adaptations are based on recognized concepts: (i) hierarchical task analysis (HTA) [

39]; (ii) the structured analysis and design technique (SADT) [

40] and basic concepts of failure analysis [

41]; and (iii) quality function deployment (QFD) [

42] and the analytical hierarchy process (AHP) [

43]. A description of the key elements in the approach follows in the next sections.

2.1. Terminology in Task Analysis

A crisp definition of key concepts is crucial to the quality of any multidisciplinary risk analysis. This section introduces the main concepts for task analysis based on the article literature review and previous work on the integration of engineering failure and risk analysis with traditional human factor task analysis [5].

Task analysis may be defined as an analysis of human performance requirements that, if not accomplished in accordance with system requirements, may have adverse effects on system cost, reliability, efficiency, effectiveness, or safety ([44], p. 1). Task analysis aims to describe the manual and mental processes required for one or more operators to perform a required task [45]. The analysis typically results in a hierarchical representation of the steps required to perform a main task, for which there is one or more desired outcomes and some lowest-level action, or interaction, between humans and machines, denoted as the human–machine interface (HMI).

Human (operator) error probability (HEP) and human failure events (HFE) are the main concepts in HRA, which generally refer to basic events in bowtie risk analysis. For example, NUREG/CR-6883 ([7], p. 27), similarly to NUREG/CR-6350 ([29], p. 2–10), states that “HEP is the probability of the HFE”, where HFE is defined as “a basic event that represents a failure or unavailability of a component, system, or function that is caused by human inaction or an inappropriate action”.

Table 2 summarizes terms relevant to task analysis for offshore drilling activity.

2.2. HTA in Task Analysis

HTA is a popular task analysis technique that is considered a central approach in ergonomic studies [

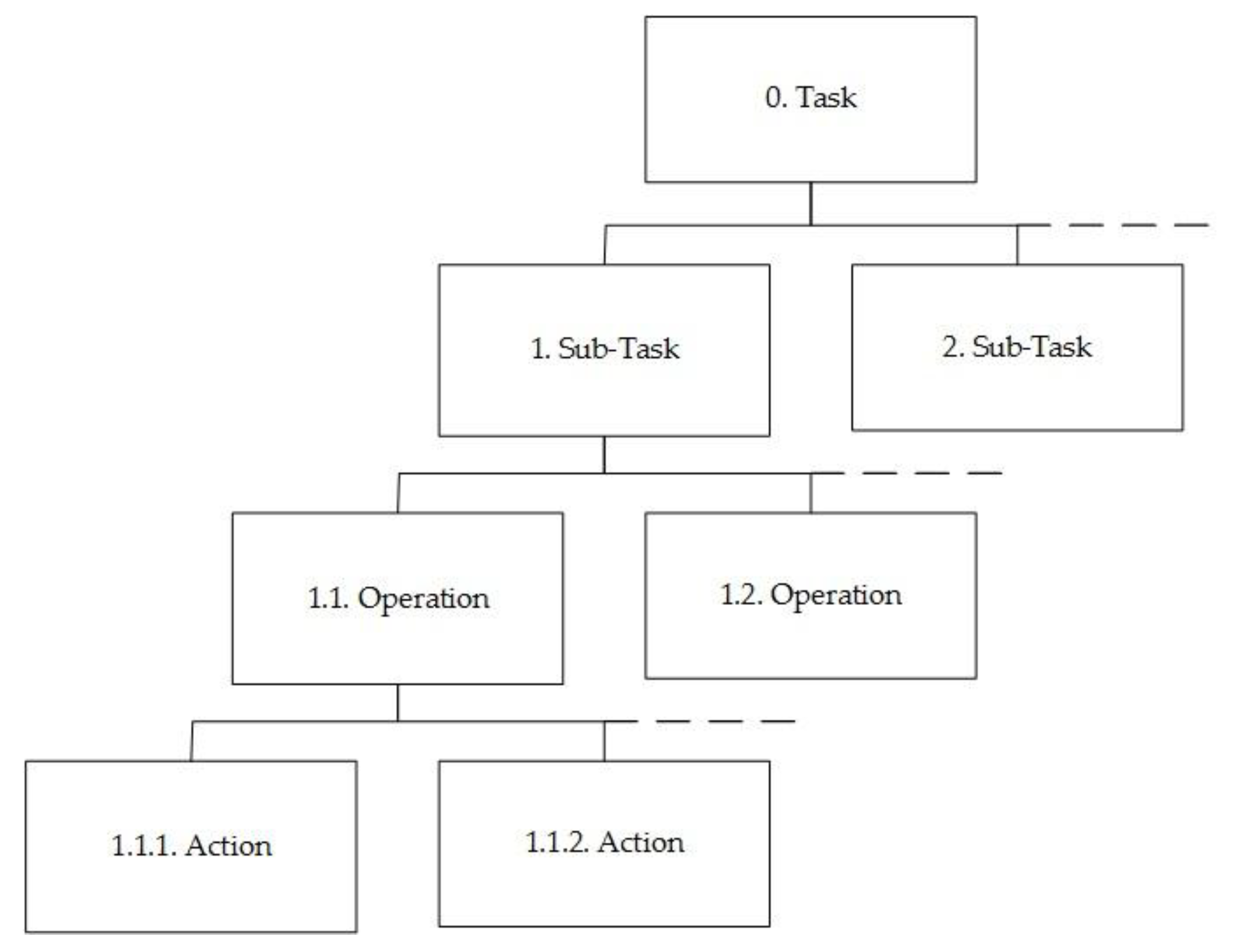

39]. As illustrated in

Figure 2, the HTA produces a description of tasks in a hierarchy, beginning with a task at the highest level consisting of objectives expressed by the goals of the sociotechnical system, which in turn are decomposed into operation subobjectives and lower-level actions [

39]. Actions are defined as the smallest individual specific operation carried out by operators interacting with a technical system or by the system itself, and are often procedural in nature, with an implied or explicit intended sequence.

2.3. SADT in Task Analysis

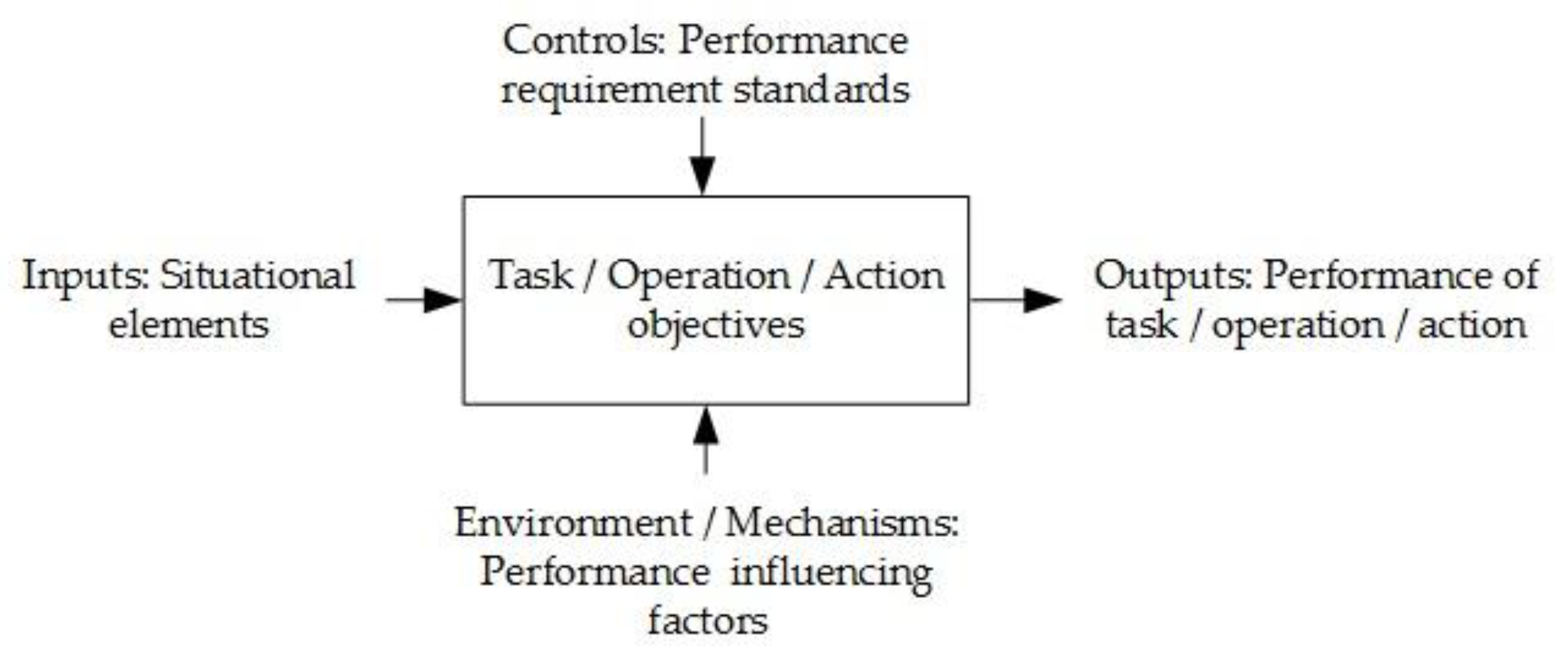

SADT is a popular failure analysis technique that, similarly to HTA, describes technical function objectives at different system breakdown levels. However, the function requirements in SADT are depicted as process blocks, with arrows that describe function level inputs and outputs, as shown in Figure 3 [40]. Input takes the form of the basic energy, materials, and information required to perform the function. Control elements govern or constrain how the function is performed. Mechanism or environment refers to the people, facilities, and equipment necessary to carry out the function.

The HTA in Figure 2 describes three task breakdown levels with parallels in failure analysis [41]: (i) system; (ii) items; and (iii) components. With this structural similarity in mind, we develop the HTA further by adopting concepts from SADT [40] and functional block diagrams [41]. With consideration of the DPRA causal model [1,19], we consider the following HTA-SADT diagram definitions:

- (i) Task, operation, and action objectives are the ‘functions’ stated in the block.

- (ii) Performance requirement standards serve as the ‘control system’.

- (iii) Situational elements provide the ‘inputs’, which may be described in terms of operator perception and focus of attention; for example, a process of hearing, seeing, smelling, tasting, and feeling the vicinity at the action level, and on a higher level as objects, events, people, systems, and environmental factors associated with goals [46].

- (iv) Results from the performance of tasks, operations, and actions are the ‘outputs’.

- (v) PIFs provide the supporting ‘environment and mechanisms’.
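The HTA-SADT definitions above can be read as a small data structure. The following is a minimal sketch in Python, not part of the DPRA method itself: the class name `HtaSadtBlock`, its field names, and the helper `action_blocks` are illustrative assumptions, and the example task, operation, and control entries are hypothetical. Only the mud pit monitoring action is taken from the text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class HtaSadtBlock:
    """One HTA node drawn as an SADT process block (illustrative names)."""
    level: str      # "task", "operation", or "action"
    function: str   # objective stated in the block
    controls: List[str] = field(default_factory=list)    # performance requirement standards
    inputs: List[str] = field(default_factory=list)      # situational elements
    outputs: List[str] = field(default_factory=list)     # results of performing the function
    mechanisms: List[str] = field(default_factory=list)  # PIFs (environment and mechanisms)
    children: List["HtaSadtBlock"] = field(default_factory=list)


def action_blocks(block: HtaSadtBlock) -> List[HtaSadtBlock]:
    """Collect the action-level blocks, i.e., the lowest level of the hierarchy."""
    if block.level == "action":
        return [block]
    found: List[HtaSadtBlock] = []
    for child in block.children:
        found.extend(action_blocks(child))
    return found


# Hypothetical example; only the mud pit action is mentioned in the article.
action = HtaSadtBlock(
    level="action",
    function="Monitor changes in mud pit levels",
    controls=["Well control procedure (hypothetical)"],
    inputs=["Mud pit level readings"],
    outputs=["Kick detected or not detected"],
    mechanisms=["Workplace PIFs (hypothetical)"],
)
operation = HtaSadtBlock(level="operation", function="Detect well kick", children=[action])
task = HtaSadtBlock(level="task", function="Maintain well control", children=[operation])

for block in action_blocks(task):
    print(block.function, "<-", block.controls)
```

Starting from the action-level blocks and their `controls` mirrors the bottom-up direction of the analysis, in which performance requirement standards are identified first at the action level.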

To maintain coherence across the three levels of analysis, it is advised to follow the documentation from the performance requirement standards identified in action-level plans and procedures, tracing upwards in the organisation via relevant work process objectives. As such, the result of combining HTA and SADT is a bottom-up approach to task analysis.

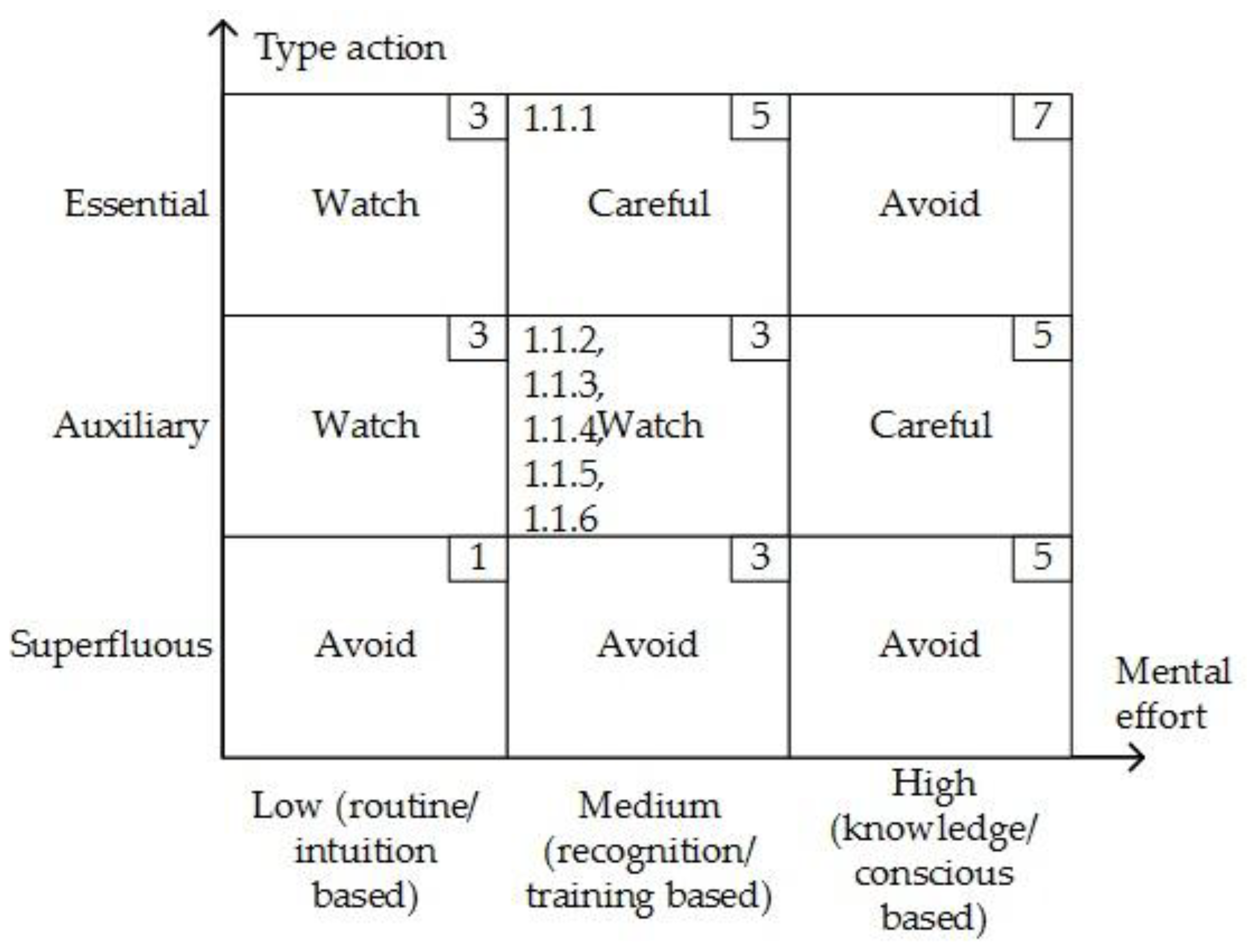

In failure analysis, we assign criticality classifications to actions in the task analysis to help prioritise further efforts according to the matrix shown in Figure 4. For example, monitoring of changes in mud pit levels during drilling is viewed as an essential action in well kick detection. Actions may also be viewed as auxiliary, i.e., introduced in support of essential actions. Examples of auxiliary actions in drilling are typically actions performed to reduce the risk of drilling process upsets, such as stuck pipe incidents. A planned drilling operation may also conceivably include superfluous actions, i.e., actions that are not required for successful task completion. Superfluous actions are undesired, since they may create a high noise-to-signal ratio [14]. For the purpose of the HRA matrix in Figure 4, we also classify the degree of mental and physical effort involved for the operator or crew to perform actions, based on popular levels of human behaviour [47]. Indicated in Figure 4 are the scores assigned to each class (upper right-hand box) and the tag numbers relevant to the classifications made of the actions considered in the case study in the next section.

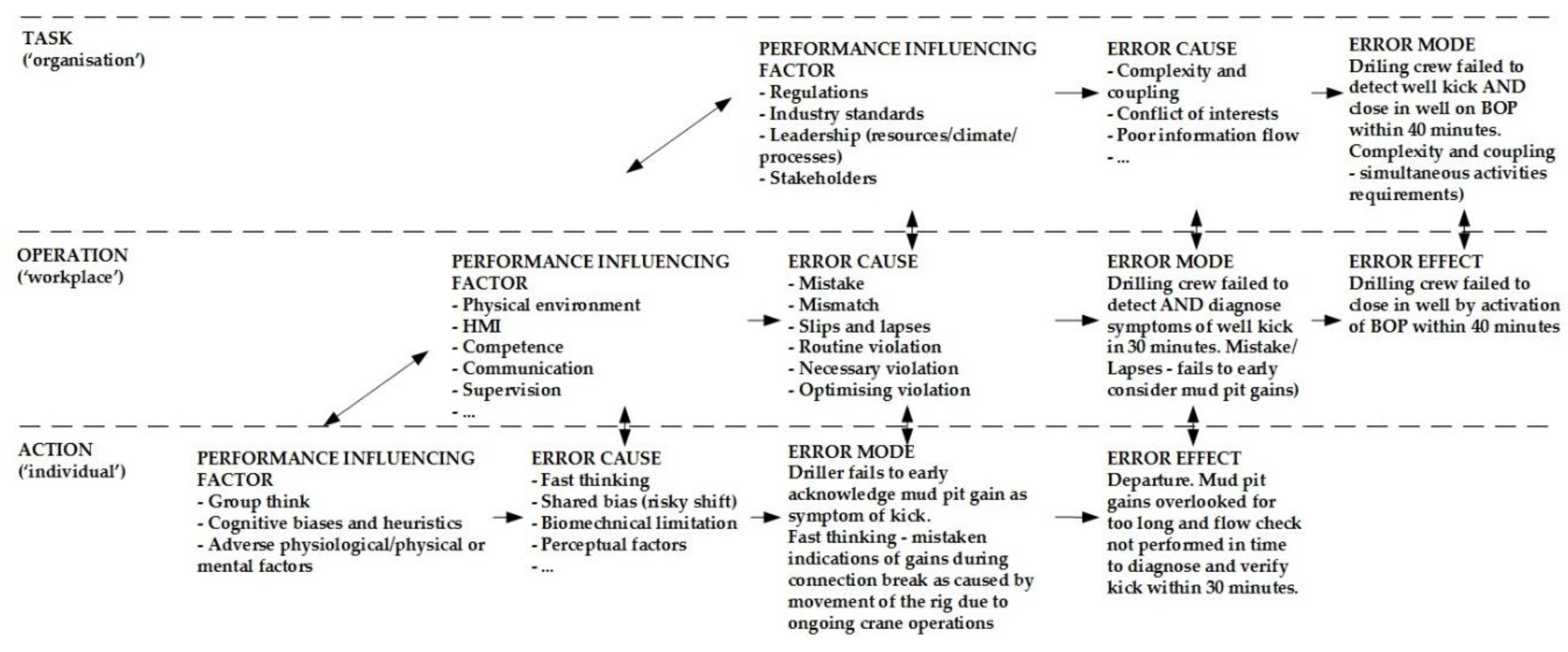

2.4. Causality Classifications in Task Analysis

Figure 5 illustrates the causal classification scheme used for the task analysis. As can be seen, operator error mechanisms are divided into individual, workplace, and organisational PIFs. The PIFs are also associated with other cause categories, shown in boxes with dashed lines below. The scheme reflects operator error as a process of departure that follows as a result of natural exploratory behaviour [47], where PIFs describe an error-forcing context [29] as encountered in a situation with a set of circumstances where workplace factors and latent human error tendencies may easily combine and result in operator error [19].

The categories derive from HFACS and DPRA, which both adopt Reason’s hierarchical energy defence model. The combination in Figure 5 of preconditions from HFACS with existing individual factors defined in DPRA could lead to the introduction of ambiguous terms. We therefore consider the preconditions from HFACS strictly as non-workplace-related error tendencies in the task analysis.

For the purpose of validation, the causal classification scheme has been applied to four well accident sequence descriptions provided in previous work [19]. The results from this exercise are shown in Table 3. The data sources are publicly available reports from well accident investigations. The authors faced challenges in classifying or quantifying explicit contributions from individual causal factors that were documented with limited details.

The Snorre accident may be described as the result of deficient competence, oversight, and information: First, a mistake made by the crew and supervisors in accepting the plan to use the outer casing and openhole as the main barrier. Next, a lack of recovery caused by not noticing the situation and not maintaining the mandatory two well barriers.

The Montara accident may be described as the result of deficient governance, competence, oversight, and information: First, a mistake made by the crew and supervisors in agreeing to move the rig (main barrier) from the well without compensation, presumably motivated in part by cost-saving. Next, a lack of recovery caused by not noticing the situation and not maintaining the mandatory two main barriers.

The Macondo accident may be described as the result of deficient governance, competence, oversight, and information: First, a mistake/violation made by the crew and supervisors who accepted an inconclusive barrier verification test. Next, a lack of recovery caused by not noticing the situation and not maintaining the mandatory two well barriers.

The Gullfaks accident is complex, but may be described as the result of deficient competence and oversight associated with the application of a new technology: First, a mistake/violation made by the crew and supervisors who accepted a revision of the drilling program without formal change management. The intention, presumably, was to follow recognised practices established with older technology, without considering the subtle implications of decisions affecting risk factors such as casing design, casing wear, casing stress, and wellbore stability.

2.5. Apply QFD in Task Analysis

In this section, we apply a familiar formal approach to the task analysis as part of updating the drilling HRA causal model. The approach is based on QFD [42], which is used as a means for generating normalised weights of operational-level PIFs, denoted RIF$_I$s, in the HRA [1]. The QFD concept, with its application of “quality houses”, includes well-known methods and techniques for stakeholder preference elicitation and evaluation in product or process development ([42], Annex A). For example, evaluations may concern relationships between action performance requirements and action error causes, shown with quality house number one to the left in Figure 6.

Figure 6 illustrates the QFD approach with the use of two quality houses that result in an evaluation of priority weights, which corresponds to an evaluation of operation-level PIFs in HTA and HRA. These PIFs correspond to the workplace influences in the generic causal scheme shown in Figure 5 (see also Table 3).

The proposed QFD-based approach consists of two main stages, described respectively by house of quality (HoQ) number one and two in Figure 6. The first stage covers an evaluation of action performance requirements versus action error causes identified in the activity HEP/HFE. In the second stage, the action error causes, with the normalised weights produced in the first stage, are evaluated against the relevant operation-level PIFs in the HRA for the same activity. The resulting normalised weights are used directly as updated weights for PIFs in the HRA causal model.
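The chaining of the two stages can be sketched in a few lines of Python. This is a simplified illustration and not the authors' implementation: it assumes the conventional QFD rule in which a column weight is the sum of row priority scores multiplied by relationship scores, it omits the AHP treatment of the HoQ 1 roof and the subgroup handling, and the function names and all numbers are hypothetical.

```python
def normalise(weights):
    """Scale a list of non-negative weights so that they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def hoq_weights(priority_scores, relationship):
    """Conventional QFD column weights: w_j = sum_i d_i * r_ij, then normalised.

    priority_scores: one score d_i per row of the house of quality.
    relationship:    matrix r_ij with rows matching priority_scores.
    """
    n_cols = len(relationship[0])
    raw = [
        sum(d * row[j] for d, row in zip(priority_scores, relationship))
        for j in range(n_cols)
    ]
    return normalise(raw)


# HoQ 1 (hypothetical numbers): rows are actions, columns are action error causes.
action_scores = [7, 3]          # criticality scores taken from the set (1, 3, 5, 7)
hoq1 = [[5, 1, 3],
        [1, 3, 1]]              # relationship scores: 1 weak, 3 moderate, 5 strong
cause_weights = hoq_weights(action_scores, hoq1)

# HoQ 2 (hypothetical numbers): rows are action error causes, columns are PIFs.
hoq2 = [[5, 1],
        [3, 3],
        [1, 5]]
pif_weights = hoq_weights(cause_weights, hoq2)

print("cause weights:", [round(w, 3) for w in cause_weights])
print("PIF weights:  ", [round(w, 3) for w in pif_weights])
```

The output of the first house is reused as the row priority scores of the second house, which is the essential link between the action level and the operation level.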

The HoQ 1 includes a roof (correlation matrix) that facilitates the orthogonal treatment of the action-level causes, which are handled similarly by the existing HRA procedure on the operational level of HoQ 2. The HoQ 1 correlation matrix is resolved in the approach with the use of AHP. The action-level causes are treated in AHP as three independent subgroups to reduce the effort required to achieve consistent pairwise comparisons. The subgroups are defined according to the classification given for causes under individual influences in Figure 5, and are represented by three submatrices. In practice, the QFD is carried out for an activity according to the following procedure:

Step 1. Define the list of actions in (1, …, m). Assign each action a priority score by adopting the critical importance score assigned in the task analysis (Figure 4); i.e., scores are in (1, 3, 5, 7).

Step 2. Evaluate the three subgroup correlation matrices. Use AHP to determine the normalised weights of the causes defined in each subgroup. Evaluate the correlations with scores in (1 = weak, 3 = moderate, 5 = strong). Check that the consistency ratio is less than 0.1 to validate the judgments made [43].

Step 3. Evaluate the relationship matrix to determine the normalised priority weight of each subgroup submatrix. The relationship between the submatrices and the actions is quantified using scores in (1 = weak, 3 = moderate, 5 = strong). The subgroup priority weight is the sum over all actions of the action priority score multiplied by the corresponding relationship score, and the submatrix normalised priority weight is the subgroup priority weight divided by the sum of the priority weights of all subgroups.

Step 4. Update the weights of the action error causes defined within each submatrix by combining the AHP weight of each cause from Step 2 with the normalised priority weight of its submatrix from Step 3.

Step 5. Define priority scores for the action error causes transferred to HoQ 2. The updated weights from Step 4 are reused here as the priority scores in the listing.

Step 6. Evaluate the relationship matrix to determine the normalised priority weight of each operational-level PIF in the activity, given in (1, …, n). The priority weight of a PIF is the sum over all action error causes of the cause priority score multiplied by the corresponding relationship score, and the normalised priority weight is that weight divided by the sum of the priority weights of all PIFs.
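The AHP consistency check in the procedure can be illustrated with a short sketch. It assumes a reciprocal pairwise comparison matrix in the usual Saaty form and uses the commonly tabulated random index values; the function names and the example matrix are hypothetical and are not taken from the case study.

```python
# Commonly tabulated Saaty random index (RI) values by matrix size n.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}


def ahp_weights(matrix, iterations=100):
    """Approximate the principal eigenvector by power iteration.

    Returns (normalised weights, estimate of the principal eigenvalue).
    """
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(iterations):
        v = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(v)
        w = [x / total for x in v]
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    return w, lambda_max


def consistency_ratio(matrix):
    """CR = CI / RI with CI = (lambda_max - n) / (n - 1); defined as 0 for n < 3."""
    n = len(matrix)
    if n < 3:
        return 0.0
    _, lambda_max = ahp_weights(matrix)
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical pairwise comparison of three causes within one subgroup.
pairwise = [[1.0, 3.0, 5.0],
            [1 / 3, 1.0, 3.0],
            [1 / 5, 1 / 3, 1.0]]

weights, _ = ahp_weights(pairwise)
cr = consistency_ratio(pairwise)
print("weights:", [round(w, 3) for w in weights])
print("consistency ratio below 0.1:", cr < 0.1)
```

Splitting the causes into three small subgroups keeps each comparison matrix small, which makes the 0.1 threshold easier to satisfy than with one large matrix.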

A search of the internet and Scopus indicates that few explicit associations have been made between QFD and HRA in the literature. However, the use of QFD is not new to safety analysis. For example, several basic applications of QFD have been proposed in reliability engineering [49] and for evaluating hazards within occupational safety analysis [49,50,51,52]. The safety analysis literature also includes a more complicated adaptation of QFD, which uses fuzzy set theory to describe uncertainties related to the elicitations and evaluations performed [53]. The implementation of fuzzy set theory or similar to account for uncertainties may also be attractive for further work; for example, the use of triangle-, trapezoid-, or bell-shaped fuzzy numbers may be investigated for the various linguistic evaluations. Alternatively, as a first modification to procedure Step 3 and Step 6, we may simply consider that a priority score defines the probability distribution of a random variable, and introduce the joint probability distribution function of the column entries. The updated impacts of the scores on the priority weights can then be calculated numerically as the sum, over all possible values of the vector of column entries, of the priority weight weighted by its joint probability. For example, Table 4 simply treats the HoQ 1 relationship scores used in the case study in the next section as being representative of independent triangle distributions, defined respectively as: a score of 1 as (1, 1, 3); a score of 3 as (1, 3, 5); and a score of 5 as (3, 5, 5); where (., ., .) denotes the minimum, peak, and maximum triangle values.
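The effect of treating relationship scores as triangle distributions can be explored with a small simulation. The sketch below substitutes Monte Carlo sampling for the exact summation described above, which is an assumption made for brevity; the triangle parameters are those given in the text, while the function names, the priority scores, and the relationship matrix are hypothetical.

```python
import random

# (minimum, peak, maximum) triangle values per linguistic score, as stated in the text.
TRIANGLES = {1: (1, 1, 3), 3: (1, 3, 5), 5: (3, 5, 5)}


def sample_score(score, rng):
    """Draw one value from the triangle distribution assigned to a score."""
    low, peak, high = TRIANGLES[score]
    return rng.triangular(low, high, peak)  # stdlib argument order: low, high, mode


def expected_normalised_weights(priority_scores, relationship,
                                n_samples=10000, seed=0):
    """Monte Carlo estimate of the expected normalised column weights."""
    rng = random.Random(seed)
    n_cols = len(relationship[0])
    totals = [0.0] * n_cols
    for _ in range(n_samples):
        raw = [
            sum(d * sample_score(row[j], rng)
                for d, row in zip(priority_scores, relationship))
            for j in range(n_cols)
        ]
        col_sum = sum(raw)
        for j in range(n_cols):
            totals[j] += raw[j] / col_sum
    return [t / n_samples for t in totals]


# Hypothetical HoQ: two actions (rows) against two columns.
priority_scores = [7, 3]
relationship = [[5, 1],
                [1, 3]]
expected = expected_normalised_weights(priority_scores, relationship)
print([round(w, 3) for w in expected])
```

Because the normalised weight is a ratio of uncertain quantities, its expectation generally differs from the weight computed from the point scores, which is why a numerical evaluation is used here.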

The HoQ approach provides a systematic means for the orthogonal evaluation of PIFs within and between causal levels for the purpose of HRA. However, reliance on the anchored judgment and intuition of single individuals in AHP should be avoided [1]. For example, the Delphi method may be adopted to combine results from multiple expert elicitations [54]. The list of action error causes should also be ordered according to importance in order to reduce any tendency for bias introduced by the typical linear evaluations made with AHP. The ordering of the causes in the case study example follows from the validation of the causal scheme with the accident data in Table 3.

4. Discussion

This section discusses the proposed task analysis method in terms of its broader application to HRA causal evaluations in the nuclear power industry. NUREG-2199 [10] describes a method developed on a cognitive basis [34] in order to secure more consistent HRA among analysts in the nuclear power industry. The NUREG method delimitations suggest a prescriptive application, which is more restrictive than the method proposed here, which considers the harsh physical environment and complex interactions among service providers that characterise offshore drilling activities. The NUREG task analysis procedure focuses on specific requirements for team recovery scenarios based on the given initiating events and diagrams of crew response options during internal, at-power situations.

NUREG-2199 ([10], p. 14) describes the HFE probability estimation based on the following procedure:

- (i) Identify the crew failure mode (CFM) of critical tasks that are part of the HFE defined with an internal at-power accident scenario.

- (ii) Deduce the HEP of each CFM; i.e., apply the decision tree provided in the method, which includes appropriate HEP values based on evaluations made of relevant PIF sets. The HEP estimation follows a predefined one-to-many framework: an accident scenario includes HFEs, HFEs include critical tasks, critical tasks include CFMs, each CFM can be linked to a HEP, and HEPs are linked to sets of traditional PIFs in HRA.
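The one-to-many framework can be pictured as a nested data structure. The following Python sketch is only an illustration of that nesting and does not reproduce the NUREG-2199 decision trees; all class names, labels, and HEP values are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple


@dataclass
class CrewFailureMode:
    name: str
    hep: float  # placeholder; in the method this value is read from a decision tree
    pifs: List[str] = field(default_factory=list)  # PIF set evaluated for this CFM


@dataclass
class CriticalTask:
    name: str
    cfms: List[CrewFailureMode] = field(default_factory=list)


@dataclass
class HumanFailureEvent:
    name: str
    tasks: List[CriticalTask] = field(default_factory=list)


@dataclass
class AccidentScenario:
    name: str
    hfes: List[HumanFailureEvent] = field(default_factory=list)


def iter_heps(scenario: AccidentScenario) -> Iterator[Tuple[str, str, str, float]]:
    """Walk scenario -> HFE -> critical task -> CFM and yield each linked HEP."""
    for hfe in scenario.hfes:
        for task in hfe.tasks:
            for cfm in task.cfms:
                yield hfe.name, task.name, cfm.name, cfm.hep


# Hypothetical content, for illustration only.
scenario = AccidentScenario(
    name="Internal at-power scenario (hypothetical)",
    hfes=[HumanFailureEvent(
        name="HFE-1",
        tasks=[CriticalTask(
            name="Task A",
            cfms=[
                CrewFailureMode("CFM-1", 1e-3, ["PIF set 1"]),
                CrewFailureMode("CFM-2", 2e-3, ["PIF set 2"]),
            ],
        )],
    )],
)

rows = list(iter_heps(scenario))
for row in rows:
    print(row)
```

The sketch deliberately stops at listing the HEPs, since the rule for combining them into an HFE probability is not described in this section.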

The PIF sets are adopted from a cognitive basis [34]. NUREG-2114 [34] presents a consolidation of human performance and cognitive research into a framework for human error causal analysis. The framework comprises five macrocognitive functions associated with CFMs. Teamwork is an example of one such function, and is associated with over forty PIFs. A large PIF list becomes unwieldy in QFD and AHP, but we may note that proximate causes are defined as a means of grouping the PIFs in an evaluation. This is similar to the grouping of action error causes in the method proposed.

The cognitive basis builds on concepts of the perceptual cycle and sensemaking, which may be reasonable for causal analysis of tasks performed by trained experts who diligently follow procedures in the control room during internal, at-power events. These concepts suggest a causality focused on the long-term strategic and educational purpose of situation assessments, which involve recursive cognitive adaptation to familiar control room scenarios ([34], p. 76). Argued differently [18], the situation awareness concept may also consider situation assessments as fast and linear, as a basis for near-future actions directed at a novel, fast-paced, and noisy work environment. This may help explain the different definitions of mental factors noted between the cognitive basis and Figure 5, and indicate a potential need for a different cognitive basis in task analysis tailored to the HRA scope. The implications of workplace conditions in task analysis that follow from the different cognitive concepts used in HRA are not addressed here, but could be of interest as further work. This may also concern performance requirements, which only consider a teamwork setting, since no individual can be made responsible for operating such complex power plants alone. For example, NUREG-2199 ([10], p. 16) only briefly discusses general requirements for task analysis, which are described by terms such as success requirements, cognitive requirements, maximum time requirements, task requirements, resource requirements, and physical requirements. Hence, the proposed method is more robust for task analysis for HRA for offshore drilling.