A Detection Method of Operated Fake-Images Using Robust Hashing

Abstract

1. Introduction

2. Related Work

2.1. Fake-Image Generation

2.2. Fake-Image Detection Methods

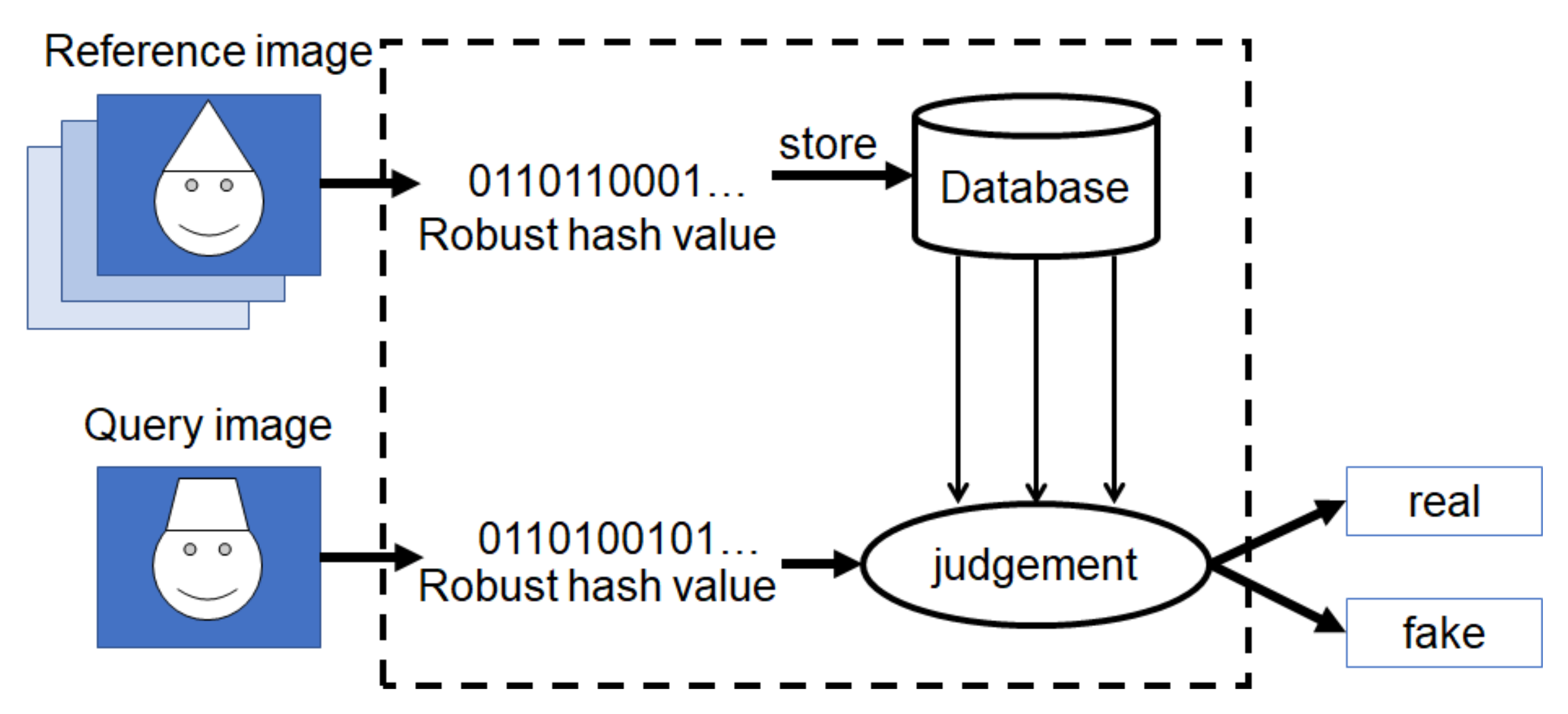

3. Proposed Method with Robust Hashing

3.1. Overview

3.2. Selection of Robust Hashing Methods

3.3. Fake Detection with Robust Hashing

- (a) Resizing images to 128 × 128 pixels prior to feature extraction.

- (b) Performing 5 × 5 Gaussian low-pass filtering with a standard deviation of 1.

- (c) Using features related to the spatial and chromatic characteristics of images.

- (d) Outputting a 120-bit string as a hash value.
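
The preprocessing in steps (a) and (b), and a comparison of two 120-bit hash values, can be sketched as follows. This is a minimal pure-Python illustration, not the authors' implementation: the nearest-neighbour resize, the edge-replicating border handling, the use of the Hamming distance, and the decision rule in `is_fake` (including the direction and strictness of the comparison against the threshold `d`) are all assumptions. The feature extraction of step (c) is omitted, and all function names are hypothetical.

```python
import math


def gaussian_kernel(size=5, sigma=1.0):
    """Return a normalized size x size Gaussian kernel as nested lists."""
    half = size // 2
    raw = [[math.exp(-(x * x + y * y) / (2.0 * sigma * sigma))
            for x in range(-half, half + 1)]
           for y in range(-half, half + 1)]
    total = sum(sum(row) for row in raw)
    return [[v / total for v in row] for row in raw]


def resize_nearest(img, out_h=128, out_w=128):
    """Nearest-neighbour resize of one channel (assumed interpolation)."""
    in_h, in_w = len(img), len(img[0])
    return [[img[r * in_h // out_h][c * in_w // out_w]
             for c in range(out_w)]
            for r in range(out_h)]


def low_pass(img, kernel):
    """Filter one channel with the kernel, replicating edge pixels."""
    h, w = len(img), len(img[0])
    half = len(kernel) // 2
    out = [[0.0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            acc = 0.0
            for dy in range(-half, half + 1):
                rr = min(max(r + dy, 0), h - 1)
                for dx in range(-half, half + 1):
                    cc = min(max(c + dx, 0), w - 1)
                    acc += kernel[dy + half][dx + half] * img[rr][cc]
            out[r][c] = acc
    return out


def hamming_distance(hash_a, hash_b):
    """Count differing positions between two equal-length bit strings."""
    if len(hash_a) != len(hash_b):
        raise ValueError("hash lengths differ")
    return sum(a != b for a, b in zip(hash_a, hash_b))


def is_fake(query_hash, reference_hash, d):
    """Hypothetical rule: flag the query when the distance exceeds d."""
    return hamming_distance(query_hash, reference_hash) > d
```

For a colour image, `resize_nearest` and `low_pass` would be applied to each channel before the features are extracted. With hash values written as strings of `'0'` and `'1'`, `is_fake(query, reference, d)` compares one query against one reference; how the reference is chosen and which value of `d` is used are left open here.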

4. Results of Experiment

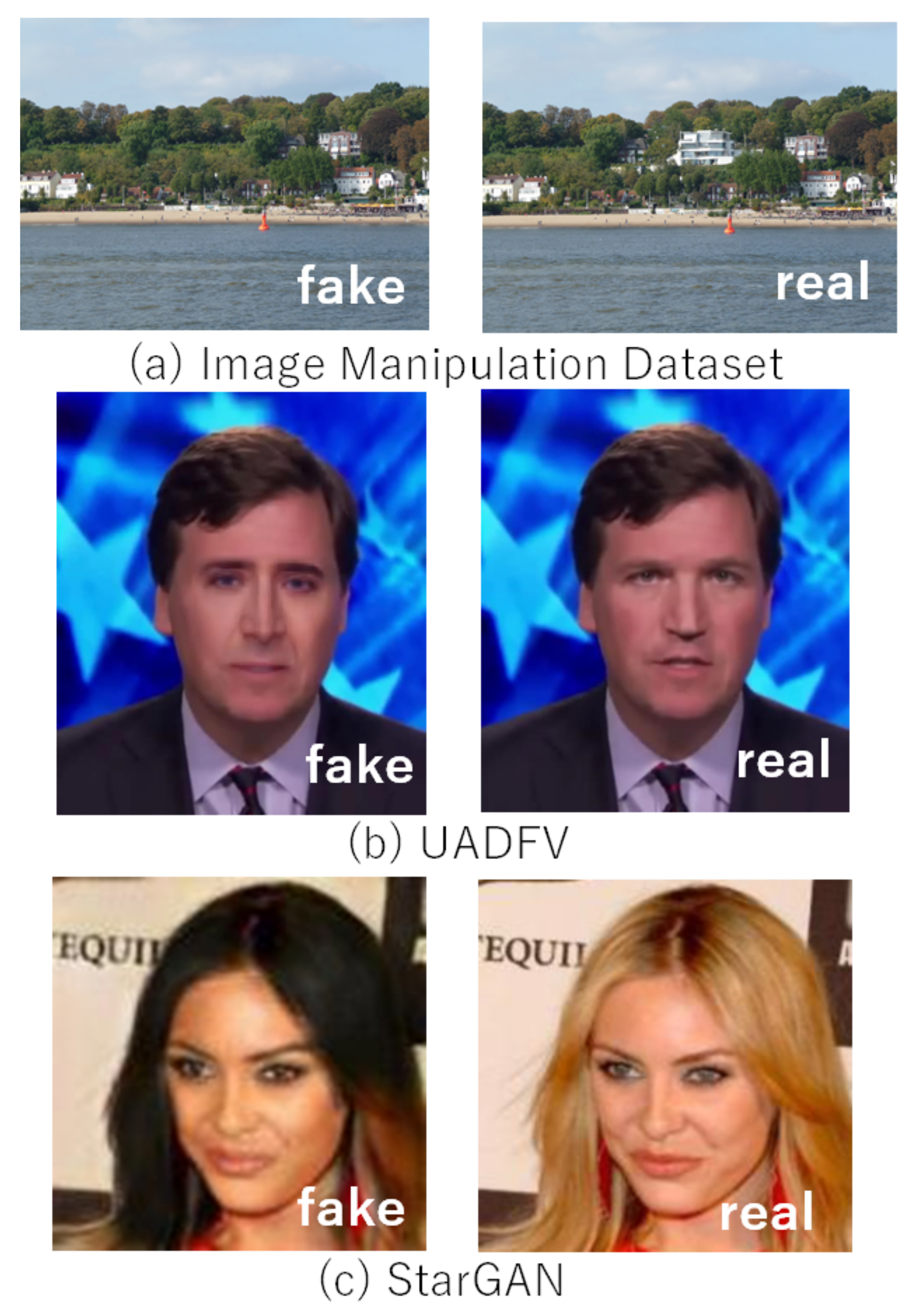

4.1. Experiment Setup

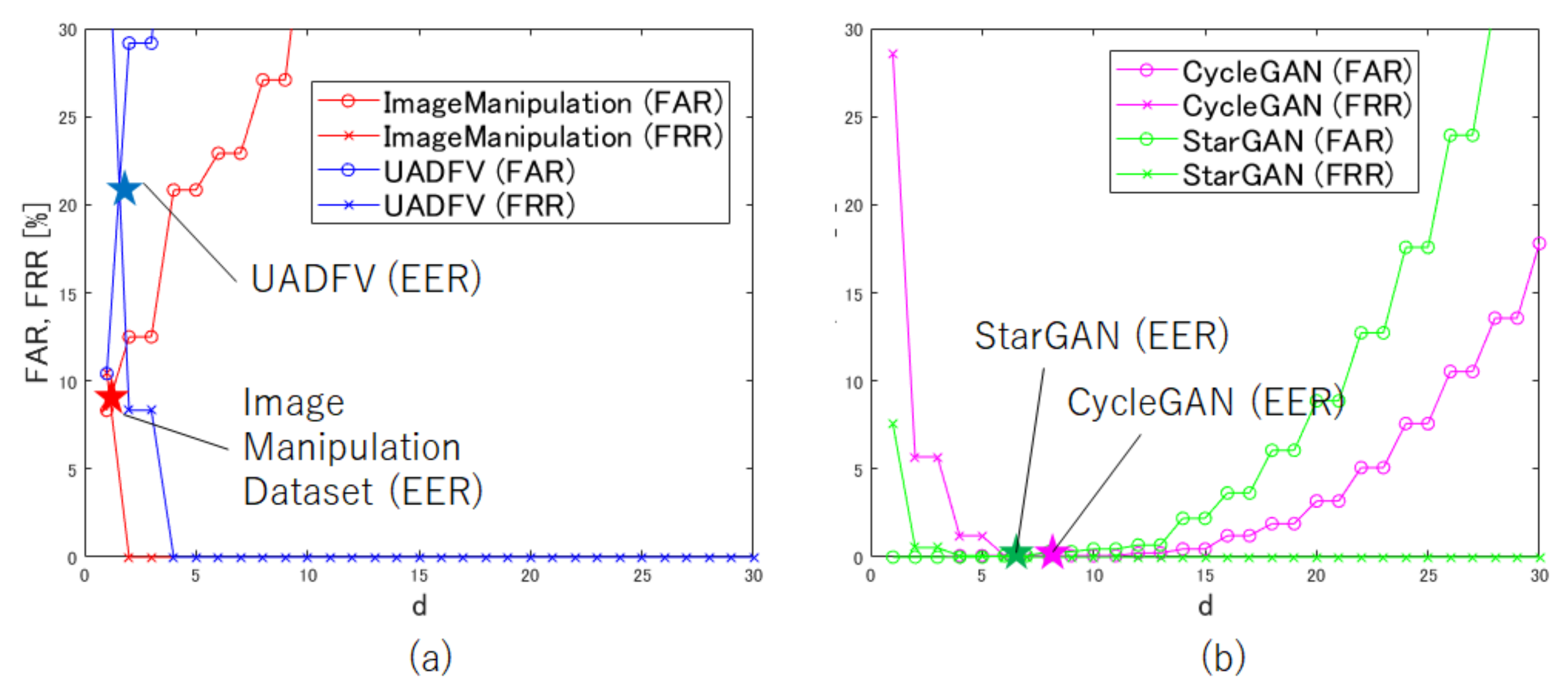

4.2. Selection of Threshold Value d

4.3. Robust Hashing

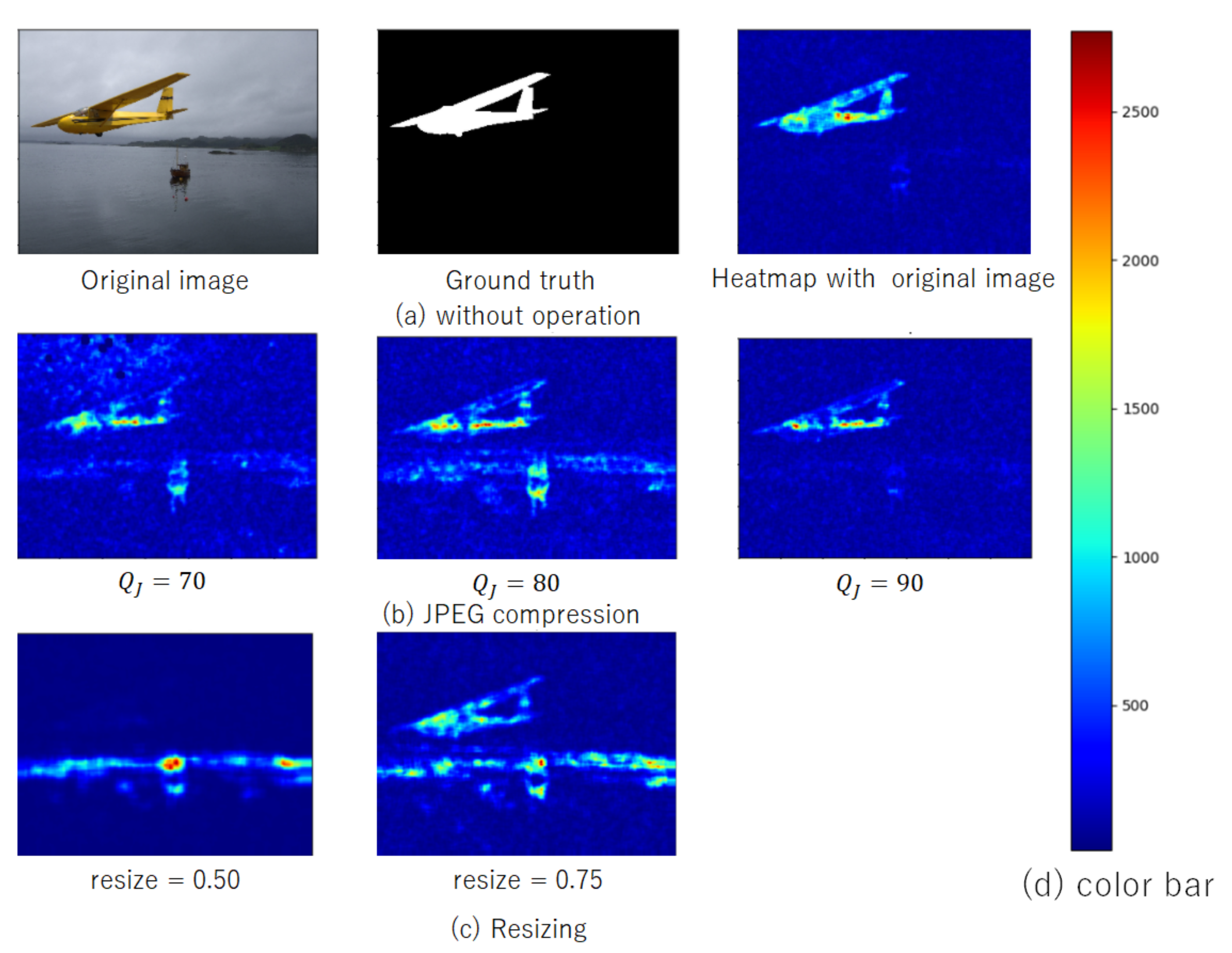

4.4. Suitability of Li et al.’s Method

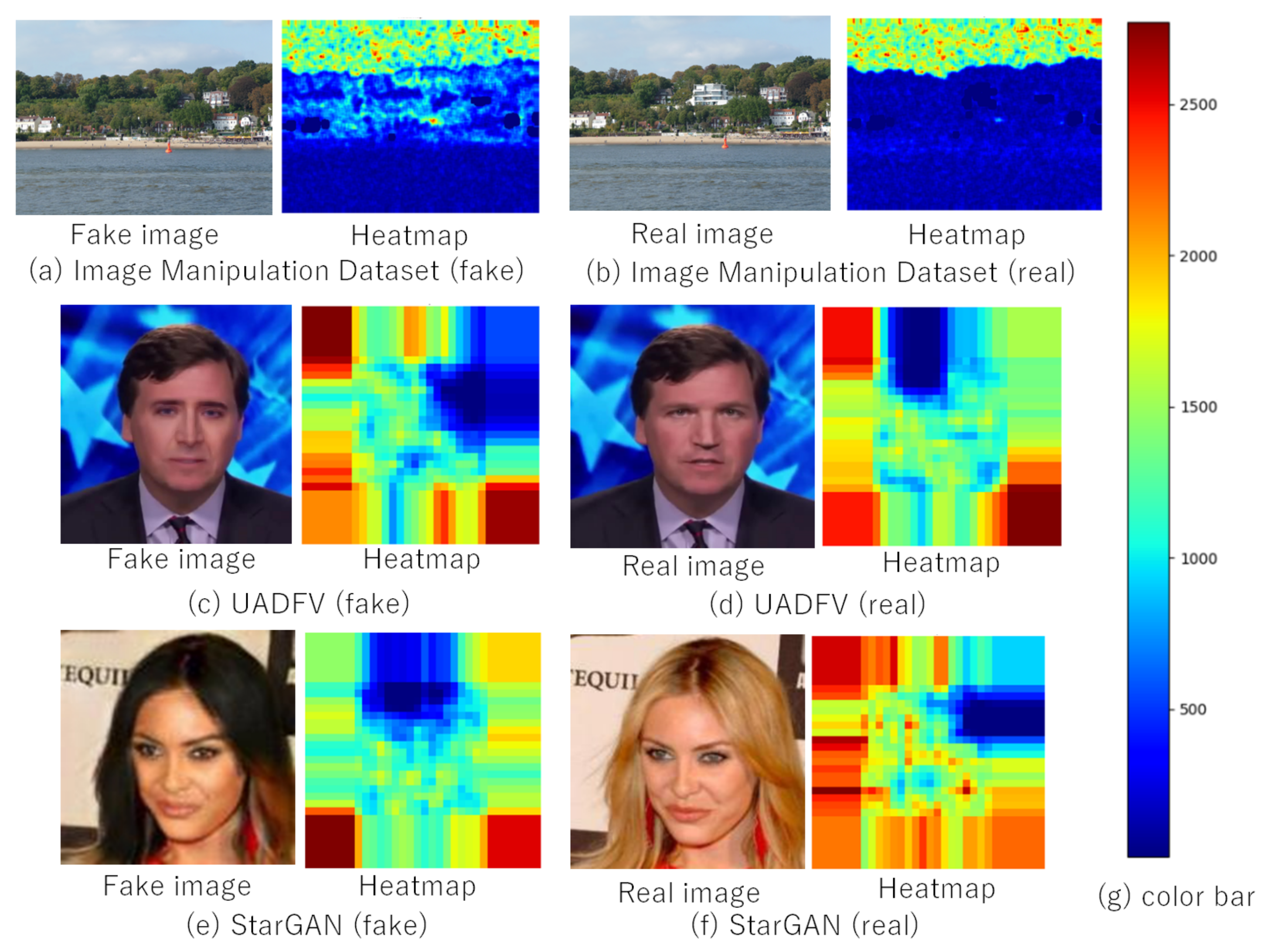

4.5. Results without Additional Operation

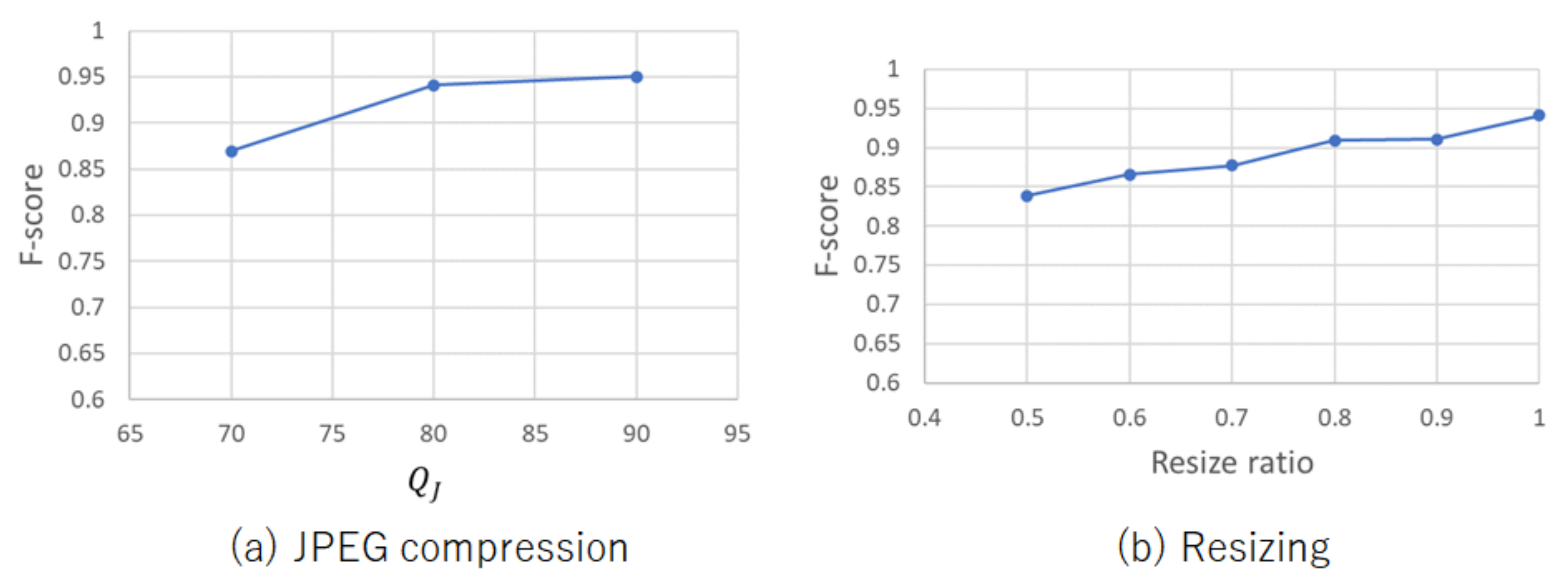

4.6. Results with Additional Operation

4.7. Comparison with Noiseprint Algorithm

4.8. Computational Complexity

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Verdoliva, L. Media Forensics and DeepFakes: An Overview. IEEE J. Sel. Top. Signal Process. 2020, 14, 910–932.

2. Sugawara, Y.; Shiota, S.; Kiya, H. Super-Resolution Using Convolutional Neural Networks Without Any Checkerboard Artifacts. In Proceedings of the IEEE International Conference on Image Processing, Athens, Greece, 7–10 October 2018; pp. 66–70.

3. Sugawara, Y.; Shiota, S.; Kiya, H. Checkerboard artifacts free convolutional neural networks. APSIPA Trans. Signal Inf. Process. 2019, 8, e9.

4. Kinoshita, Y.; Kiya, H. Fixed Smooth Convolutional Layer for Avoiding Checkerboard Artifacts in CNNs. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Barcelona, Spain, 4–8 May 2020; pp. 3712–3716.

5. Osakabe, T.; Tanaka, M.; Kinoshita, Y.; Kiya, H. CycleGAN without checkerboard artifacts for counter-forensics of fake-image detection. Int. Workshop Adv. Imaging Technol. 2021, 11766, 51–55.

6. Chuman, T.; Iida, K.; Sirichotedumrong, W.; Kiya, H. Image Manipulation Specifications on Social Networking Services for Encryption-then-Compression Systems. IEICE Trans. Inf. Syst. 2019, 102, 11–18.

7. Li, Y.N.; Wang, P.; Su, Y.T. Robust Image Hashing Based on Selective Quaternion Invariance. IEEE Signal Process. Lett. 2015, 22, 2396–2400.

8. Adobe Stock, Inc. Stock Photos, Royalty-Free Images, Graphics, Vectors & Videos. Available online: https://stock.adobe.com/ (accessed on 12 July 2021).

9. Shutterstock, Inc. Stock Images, Photos, Vectors, Video, and Music. Available online: https://www.shutterstock.com/ (accessed on 12 July 2021).

10. Stock Images, Royalty-Free Pictures, Illustrations & Videos - iStock. Available online: https://www.istockphoto.com/ (accessed on 12 July 2021).

11. Generated Photos | Unique, Worry-Free Model Photos. Available online: https://generated.photos/# (accessed on 12 July 2021).

12. Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired Image-To-Image Translation Using Cycle-Consistent Adversarial Networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.

13. Choi, Y.; Choi, M.; Kim, M.; Ha, J.W.; Kim, S.; Choo, J. StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018.

14. Thies, J.; Zollhofer, M.; Stamminger, M.; Theobalt, C.; Niessner, M. Face2Face: Real-Time Face Capture and Reenactment of RGB Videos. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016.

15. Nirkin, Y.; Masi, I.; Tran Tuan, A.; Hassner, T.; Medioni, G. On Face Segmentation, Face Swapping, and Face Perception. In Proceedings of the IEEE International Conference on Automatic Face Gesture Recognition, Xi’an, China, 15–19 May 2018; pp. 98–105.

16. Ho, A.T.S.; Zhu, X.; Shen, J.; Marziliano, P. Fragile Watermarking Based on Encoding of the Zeroes of the z-Transform. IEEE Trans. Inf. Forensics Secur. 2008, 3, 567–569.

17. Gong, Z.; Niu, S.; Han, H. Tamper Detection Method for Clipped Double JPEG Compression Image. In Proceedings of the International Conference on Intelligent Information Hiding and Multimedia Signal Processing, Adelaide, Australia, 23–25 September 2015; pp. 185–188.

18. Bianchi, T.; Piva, A. Detection of Nonaligned Double JPEG Compression Based on Integer Periodicity Maps. IEEE Trans. Inf. Forensics Secur. 2012, 7, 842–848.

19. Chen, M.; Fridrich, J.; Goljan, M.; Lukas, J. Determining Image Origin and Integrity Using Sensor Noise. IEEE Trans. Inf. Forensics Secur. 2008, 3, 74–90.

20. Chierchia, G.; Poggi, G.; Sansone, C.; Verdoliva, L. A Bayesian-MRF Approach for PRNU-Based Image Forgery Detection. IEEE Trans. Inf. Forensics Secur. 2014, 9, 554–567.

21. Rao, Y.; Ni, J. A deep learning approach to detection of splicing and copy-move forgeries in images. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Abu Dhabi, United Arab Emirates, 4–7 December 2016; pp. 1–6.

22. Bappy, J.H.; Roy-Chowdhury, A.K.; Bunk, J.; Nataraj, L.; Manjunath, B.S. Exploiting Spatial Structure for Localizing Manipulated Image Regions. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.

23. Zhou, P.; Han, X.; Morariu, V.I.; Davis, L.S. Pros of Learning Rich Features for Image Manipulation Detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018.

24. Wang, S.Y.; Wang, O.; Zhang, R.; Owens, A.; Efros, A.A. CNN-Generated Images Are Surprisingly Easy to Spot …for Now. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 13–19 June 2020.

25. Zhang, X.; Karaman, S.; Chang, S. Detecting and Simulating Artifacts in GAN Fake Images. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Delft, Netherlands, 9–12 December 2019; pp. 1–6.

26. Matern, F.; Riess, C.; Stamminger, M. Exploiting Visual Artifacts to Expose Deepfakes and Face Manipulations. In Proceedings of the IEEE Winter Applications of Computer Vision Workshops, Waikoloa Village, HI, USA, 7–11 January 2019; pp. 83–92.

27. Li, Y.; Chang, M.; Lyu, S. In Ictu Oculi: Exposing AI Created Fake Videos by Detecting Eye Blinking. In Proceedings of the IEEE International Workshop on Information Forensics and Security, Hong Kong, China, 11–13 December 2018; pp. 1–7.

28. Yang, X.; Li, Y.; Qi, H.; Lyu, S. Exposing GAN-Synthesized Faces Using Landmark Locations. In Proceedings of the ACM Workshop on Information Hiding and Multimedia Security, Paris, France, 3–5 July 2019; pp. 113–118.

29. Yang, X.; Li, Y.; Lyu, S. Exposing Deep Fakes Using Inconsistent Head Poses. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing, Brighton, UK, 12–17 May 2019; pp. 8261–8265.

30. Gong, Y.; Lazebnik, S.; Gordo, A.; Perronnin, F. Iterative Quantization: A Procrustean Approach to Learning Binary Codes for Large-Scale Image Retrieval. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 2916–2929.

31. Li, Y.; Wang, P. Robust image hashing based on low-rank and sparse decomposition. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 2154–2158.

32. Iida, K.; Kiya, H. Robust Image Identification with DC Coefficients for Double-Compressed JPEG Images. IEICE Trans. Inf. Syst. 2019, 102, 2–10.

33. Image Manipulation Dataset. Available online: https://www5.cs.fau.de/research/data/image-manipulation/ (accessed on 10 July 2021).

34. Cozzolino, D.; Verdoliva, L. Noiseprint: A CNN-based camera model fingerprint. arXiv 2018, arXiv:1808.08396.

35. Chen, C.; Xiong, Z.; Liu, X.; Wu, F. Camera Trace Erasing. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 14–19 June 2020.

36. Chen, C.; Zhao, X.; Stamm, M.C. Generative Adversarial Attacks Against Deep-Learning-Based Camera Model Identification. IEEE Trans. Inf. Forensics Secur. 2019.

| Dataset | Fake-Image Generation | No. of Real Images | No. of Fake Images |
|---|---|---|---|
| Image Manipulation Dataset [33] | copy-move | 48 | 48 |
| UADFV [27] | face swap | 49 | 49 |
| CycleGAN [12] | GAN | 1320 | 1320 |
| StarGAN [13] | GAN | 1999 | 1999 |

| Dataset / Robust Hash | Li et al.’s Method [7] | Modified Li’s Method [31] | Gong’s Method [30] | Iida’s Method [32] |
|---|---|---|---|---|
| Image Manipulation Dataset | 0.9412 | 0.8348 | 0.7500 | 0.768 |
| UADFV | 0.8302 | 0.6815 | 0.6906 | 0.7934 |

| Dataset | F-Score |
|---|---|
| Greyscale | 0.7869 |
| RGB | 0.9412 |

| Dataset | Wang’s Method [24] AP | Wang’s Method [24] F-Score | Xu’s Method [25] AP | Xu’s Method [25] F-Score | Proposed AP | Proposed F-Score |
|---|---|---|---|---|---|---|
| Image Manipulation Dataset | 0.5185 | 0.0000 | 0.5035 | 0.5192 | 0.9760 | 0.9412 |
| UADFV | 0.5707 | 0.0000 | 0.5105 | 0.6140 | 0.8801 | 0.8302 |
| CycleGAN | 0.9768 | 0.7405 | 0.8752 | 0.7826 | 1.0000 | 0.9708 |
| StarGAN | 0.9594 | 0.7418 | 0.4985 | 0.6269 | 1.0000 | 0.9973 |
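
To help read the F-Score columns, the snippet below sketches how an F-score is commonly computed from detection counts. It assumes the standard F1 definition (harmonic mean of precision and recall); the function name `f_score` and the counts used in the usage note are hypothetical and are not taken from the experiments.

```python
def f_score(tp, fp, fn):
    """Standard F1 score from true-positive, false-positive and
    false-negative counts (assumed definition)."""
    if tp == 0:
        # No true positives: precision and recall are both zero,
        # so the score is defined as 0 here.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```

For example, `f_score(8, 2, 2)` combines a precision of 0.8 with a recall of 0.8. Under this definition, a score of 0.0000 corresponds to a detector that produces no true positives.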

| Additional Operation | Wang’s Method [24] AP | Wang’s Method [24] F-Score | Xu’s Method [25] AP | Xu’s Method [25] F-Score | Proposed AP | Proposed F-Score |
|---|---|---|---|---|---|---|
| None | 0.9833 | 0.7654 | 0.9941 | 0.8801 | 0.9941 | 0.9800 |
| JPEG () | 0.9670 | 0.7407 | 0.8572 | 0.7040 | 0.9922 | 0.8667 |
| resize (0.5) | 0.8264 | 0.3871 | 0.5637 | 0.6666 | 0.9793 | 0.5217 |
| copy-move | 0.9781 | 0.6400 | 0.9798 | 0.8764 | 1.0000 | 1.0000 |
| splicing | 0.9666 | 0.6923 | 0.9801 | 0.8666 | 0.9992 | 1.0000 |

| Component | Specification |
|---|---|
| Processor | Intel Core i7-7700 3.60 GHz |
| Memory | 16 GB |
| OS | Windows 10 Education Insider Preview |
| Software | MATLAB R2020a |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Tanaka, M.; Shiota, S.; Kiya, H. A Detection Method of Operated Fake-Images Using Robust Hashing. J. Imaging 2021, 7, 134. https://doi.org/10.3390/jimaging7080134
