DCNet: Noise-Robust Convolutional Neural Networks for Degradation Classification on Ancient Documents

Abstract

1. Introduction

2. Literature Review

2.1. Degraded Ancient Documents

2.1.1. Uniform Degradation

2.1.2. Bleed-Through

2.1.3. Faint Text and Low Contrast

2.1.4. Smears, Stains, or Spots

2.2. Support Vector Machine (SVM)

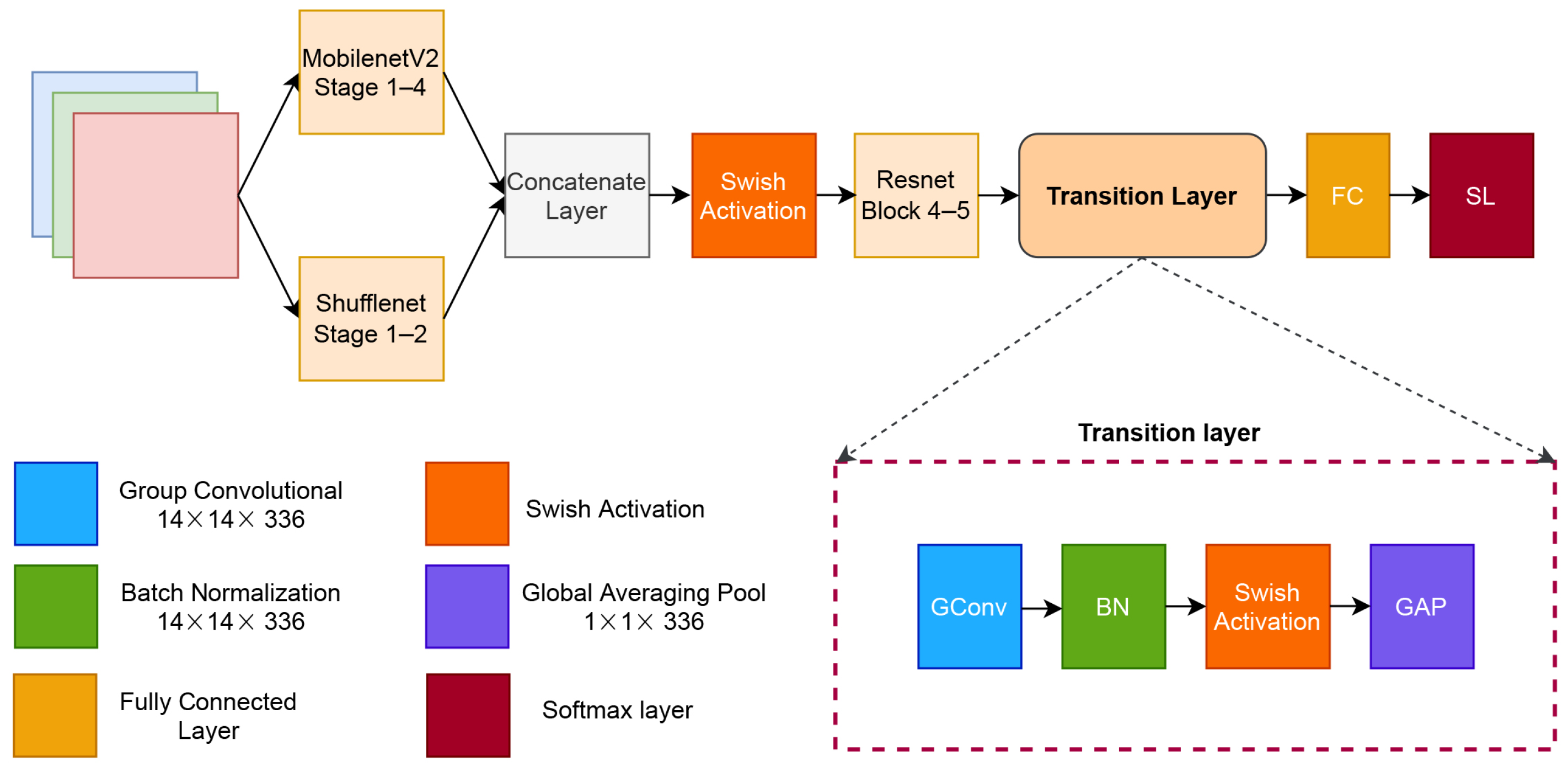

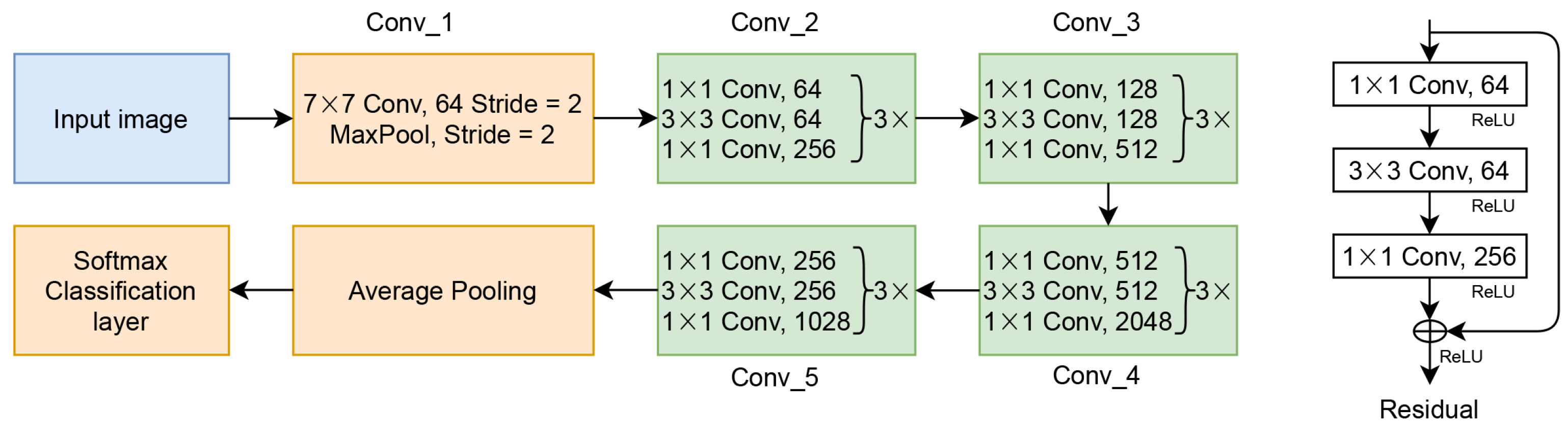

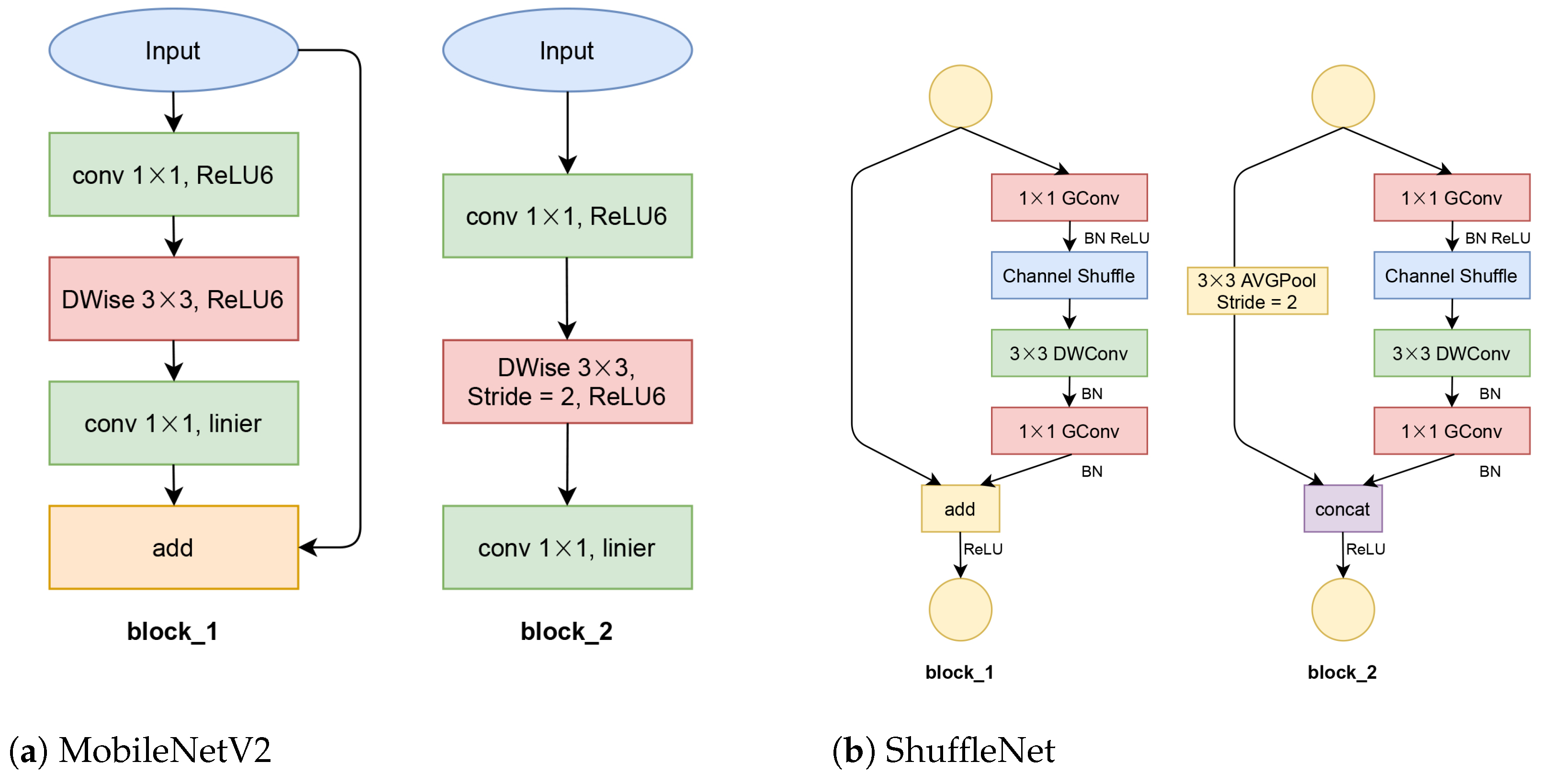

3. The Proposed Method

4. Experimental Setup

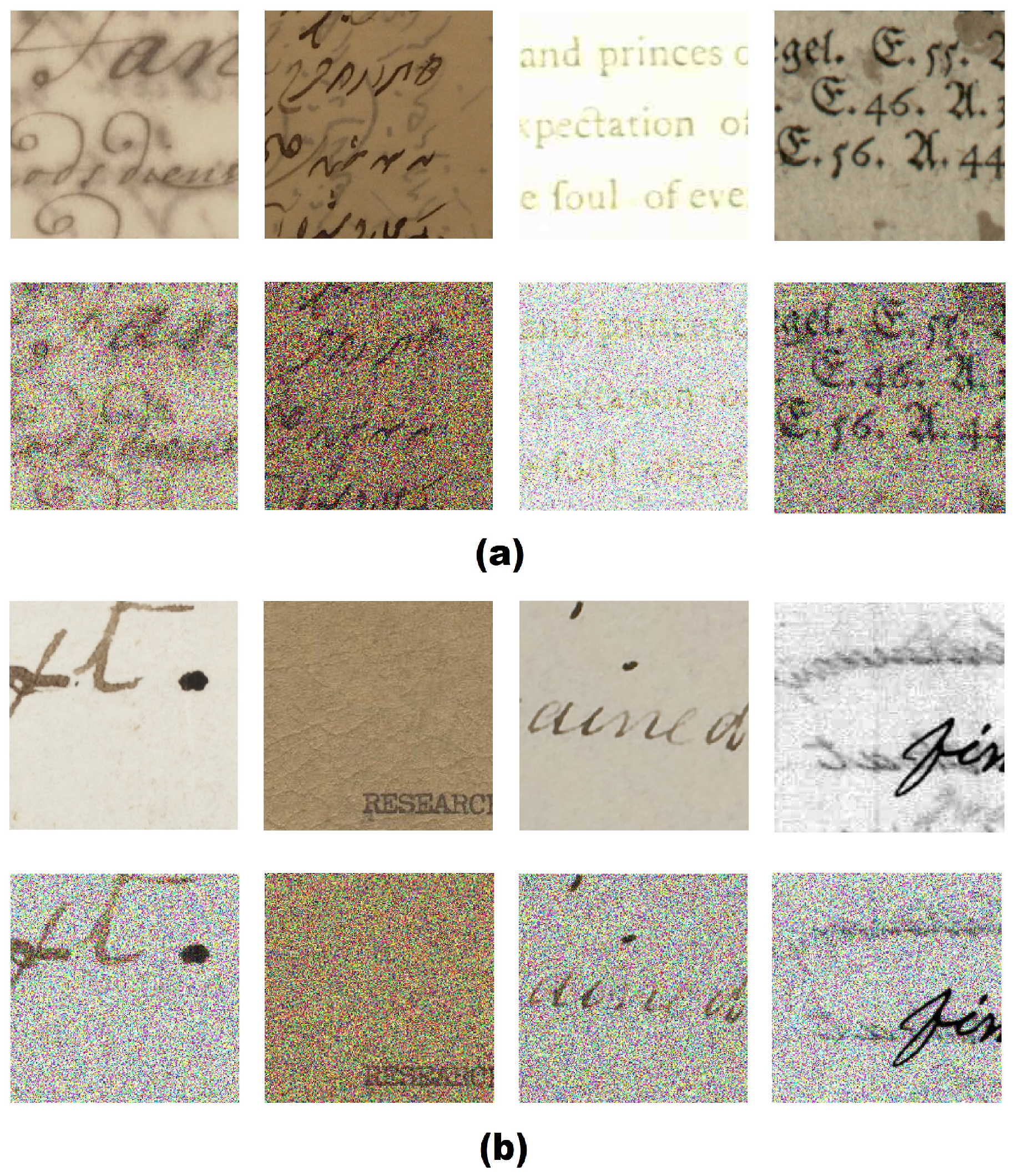

4.1. Datasets

4.2. Parameter Settings

4.3. Simulations

4.4. Evaluation Performance

5. Result and Discussion

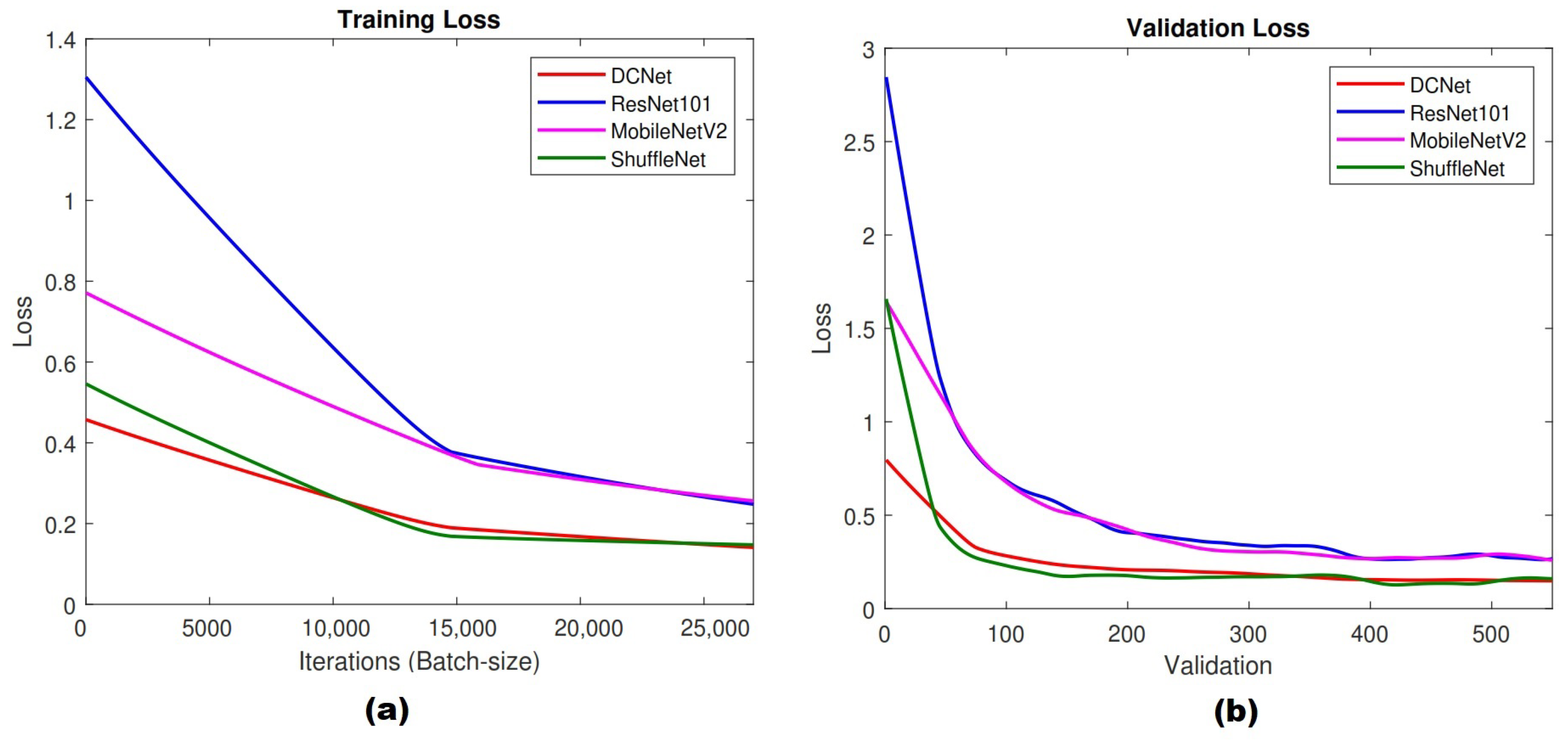

5.1. Training Results

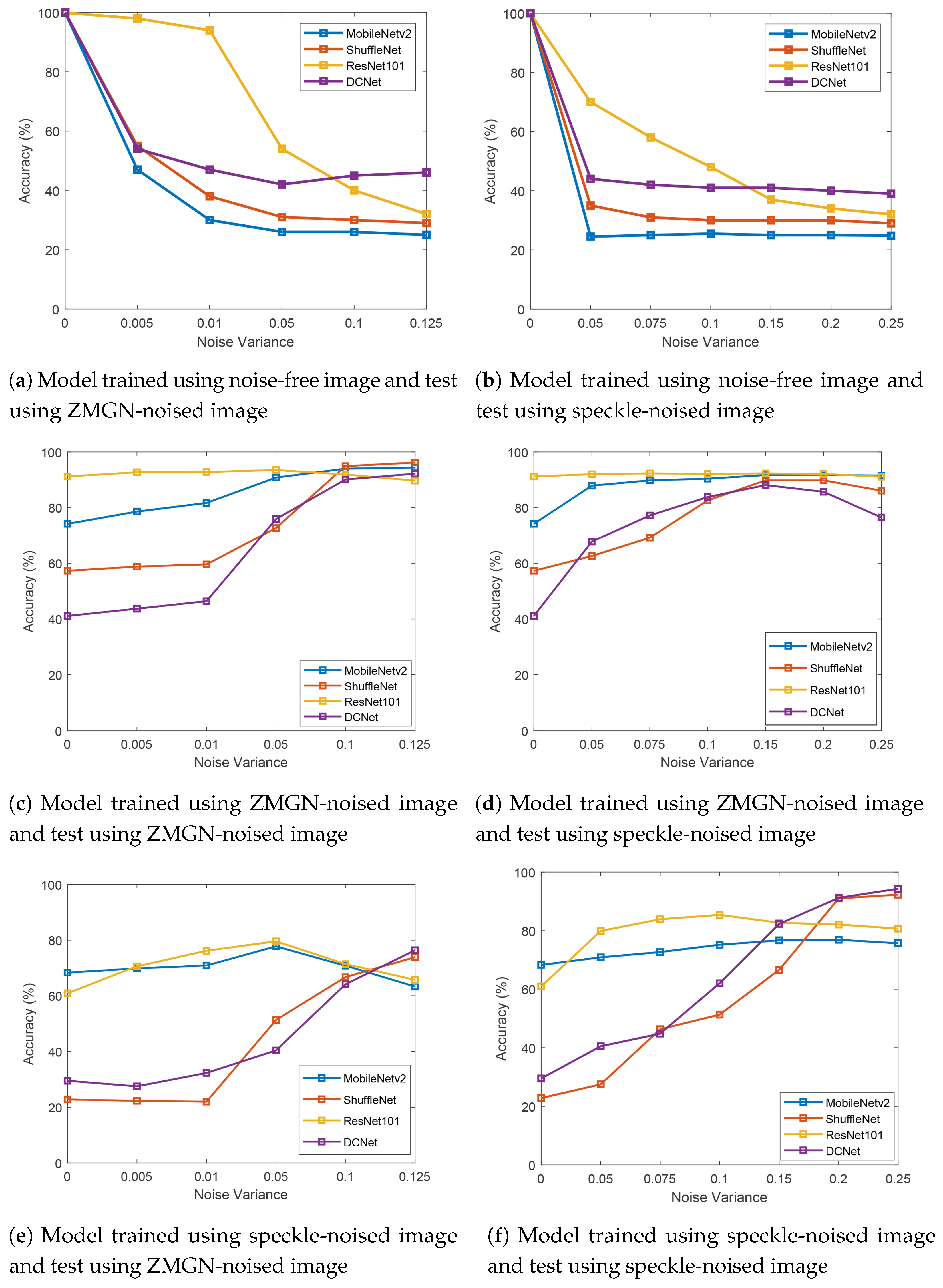

5.2. Results of the Noise-Free Model

5.3. Results of the ZMGN-Noise Model

5.4. Results of the Speckle-Noise Model

5.5. Comparison with Traditional Machine Learning

5.6. Performance of Deep Learning Models in Degradation Classification

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


Testing images with ZMGN noise applied (column headers give the noise level)

Model trained on noise-free images

| Methods | 0.0 | 0.005 | 0.01 | 0.05 | 0.1 | 0.125 |
|---|---|---|---|---|---|---|
| DCNet | 100.0 | 54.00 | 47.0 | 42.00 | 45.00 | 46.00 |
| SVM | 78.00 | 35.60 | 35.00 | 35.57 | 35.77 | 34.03 |
| RF | 70.17 | 27.17 | 27.03 | 27.17 | 26.57 | 28.67 |

Model trained on ZMGN images

| Methods | 0.0 | 0.005 | 0.01 | 0.05 | 0.1 | 0.125 |
|---|---|---|---|---|---|---|
| DCNet | 41.10 | 43.70 | 64.40 | 75.90 | 90.10 | 92.20 |
| SVM | 25.00 | 25.23 | 25.53 | 23.70 | 21.57 | 22.33 |
| RF | 37.37 | 34.32 | 34.13 | 37.70 | 41.33 | 41.17 |

Model trained on speckle images

| Methods | 0.0 | 0.005 | 0.01 | 0.05 | 0.1 | 0.125 |
|---|---|---|---|---|---|---|
| DCNet | 29.50 | 27.50 | 32.30 | 40.40 | 64.10 | 76.40 |
| SVM | 25.00 | 31.73 | 31.67 | 30.63 | 30.23 | 31.50 |
| RF | 64.97 | 34.17 | 34.50 | 36.70 | 36.47 | 35.80 |

Testing images with speckle noise applied (column headers give the noise level)

Model trained on noise-free images

| Methods | 0.0 | 0.05 | 0.075 | 0.1 | 0.15 | 0.2 | 0.25 |
|---|---|---|---|---|---|---|---|
| DCNet | 100.0 | 44.00 | 42.00 | 41.00 | 41.00 | 40.10 | 39.00 |
| SVM | 78.00 | 40.13 | 40.50 | 34.03 | 44.90 | 45.47 | 46.03 |
| RF | 70.17 | 23.57 | 23.83 | 28.67 | 24.37 | 23.47 | 23.67 |

Model trained on ZMGN images

| Methods | 0.0 | 0.05 | 0.075 | 0.1 | 0.15 | 0.2 | 0.25 |
|---|---|---|---|---|---|---|---|
| DCNet | 41.1 | 67.8 | 77.2 | 83.8 | 88.1 | 85.7 | 76.5 |
| SVM | 25.00 | 31.03 | 34.57 | 37.47 | 40.37 | 43.90 | 46.37 |
| RF | 37.37 | 42.40 | 40.90 | 41.83 | 48.63 | 53.05 | 55.83 |

Model trained on speckle images

| Methods | 0.0 | 0.05 | 0.075 | 0.1 | 0.15 | 0.2 | 0.25 |
|---|---|---|---|---|---|---|---|
| DCNet | 29.5 | 40.5 | 44.8 | 62.0 | 82.3 | 91.2 | 94.3 |
| SVM | 25.00 | 40.93 | 46.27 | 53.60 | 66.17 | 71.63 | 72.63 |
| RF | 65.37 | 44.87 | 50.80 | 56.80 | 65.97 | 73.50 | 74.67 |
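The two noise types named in the tables above, zero-mean Gaussian noise (ZMGN) and speckle noise, can be illustrated with a minimal sketch. It assumes that each noise level is the variance of a Gaussian random variable and that pixel intensities are scaled to [0, 1]; neither assumption is confirmed by the tables. The function names `add_zmgn` and `add_speckle` are hypothetical, and speckle is modelled here as multiplicative Gaussian noise, which is one common convention rather than necessarily the one the authors used.

```python
import random

def add_zmgn(pixels, variance, rng):
    """Additive zero-mean Gaussian noise: p + n, with n ~ N(0, variance).

    `pixels` is a flat list of intensities in [0, 1] (assumed scaling).
    """
    sigma = variance ** 0.5
    return [min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in pixels]

def add_speckle(pixels, variance, rng):
    """Multiplicative (speckle) noise: p + p * n, with n ~ N(0, variance).

    The perturbation scales with the pixel intensity, so dark pixels
    are disturbed less than bright ones.
    """
    sigma = variance ** 0.5
    return [min(1.0, max(0.0, p + p * rng.gauss(0.0, sigma))) for p in pixels]

# Hypothetical mid-grey test patch of 64 x 64 pixels, flattened.
rng = random.Random(0)
patch = [0.5] * (64 * 64)
noisy_gaussian = add_zmgn(patch, 0.01, rng)
noisy_speckle = add_speckle(patch, 0.05, rng)
```

Under these assumptions, a noise level of 0.0 leaves the image unchanged, and speckle noise leaves pure-black pixels untouched because the perturbation is proportional to the intensity.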

| Noise level | Model | BLT (%) | FTLC (%) | SSS (%) | UN (%) |
|---|---|---|---|---|---|
| 0.005 | ResNet101 | 98.14 | 99.10 | 98.22 | 97.19 |
| 0.005 | MobileNetV2 | 48.60 | 79.41 | 27.11 | 1.73 |
| 0.005 | ShuffleNet | 50.89 | 43.26 | 79.45 | 49.87 |
| 0.005 | DCNet | 59.26 | 68.00 | 55.49 | 0.00 |
| 0.01 | ResNet101 | 93.36 | 96.05 | 91.83 | 93.96 |
| 0.01 | MobileNetV2 | 45.20 | 25.75 | 10.79 | 0.00 |
| 0.01 | ShuffleNet | 44.33 | 11.32 | 62.35 | 13.11 |
| 0.01 | DCNet | 49.34 | 59.85 | 47.44 | 0.00 |
| 0.05 | ResNet101 | 67.73 | 52.51 | 44.12 | 1.78 |
| 0.05 | MobileNetV2 | 44.72 | 0.00 | 0.20 | 0.38 |
| 0.05 | ShuffleNet | 41.65 | 4.76 | 25.02 | 0.00 |
| 0.05 | DCNet | 32.85 | 51.94 | 42.89 | 0.00 |
| 0.1 | ResNet101 | 33.78 | 43.51 | 25.63 | 0.00 |
| 0.1 | MobileNetV2 | 45.67 | 0.00 | 0.00 | 0.00 |
| 0.1 | ShuffleNet | 40.89 | 2.18 | 0.00 | 0.51 |
| 0.1 | DCNet | 36.65 | 56.37 | 40.82 | 0.00 |
| 0.125 | ResNet101 | 27.14 | 42.52 | 19.98 | 0.00 |
| 0.125 | MobileNetV2 | 45.87 | 0.00 | 0.00 | 0.48 |
| 0.125 | ShuffleNet | 40.67 | 0.00 | 0.00 | 0.00 |
| 0.125 | DCNet | 40.58 | 58.15 | 41.53 | 0.00 |

| Noise level | Model | BLT (%) | FTLC (%) | SSS (%) | UN (%) |
|---|---|---|---|---|---|
| 0.05 | ResNet101 | 87.57 | 66.82 | 83.72 | 35.02 |
| 0.05 | MobileNetV2 | 44.96 | 0.00 | 6.00 | 1.42 |
| 0.05 | ShuffleNet | 43.11 | 6.71 | 47.68 | 2.37 |
| 0.05 | DCNet | 43.42 | 55.48 | 45.56 | 0.00 |
| 0.075 | ResNet101 | 77.34 | 57.85 | 65.32 | 11.08 |
| 0.075 | MobileNetV2 | 44.84 | 0.00 | 4.85 | 1.07 |
| 0.075 | ShuffleNet | 42.02 | 6.63 | 30.94 | 0.00 |
| 0.075 | DCNet | 39.54 | 55.80 | 45.20 | 0.00 |
| 0.1 | ResNet101 | 62.12 | 51.85 | 50.75 | 2.91 |
| 0.1 | MobileNetV2 | 44.95 | 0.00 | 2.54 | 0.53 |
| 0.1 | ShuffleNet | 41.40 | 5.57 | 21.91 | 0.00 |
| 0.1 | DCNet | 37.74 | 55.82 | 44.60 | 0.00 |
| 0.15 | ResNet101 | 37.23 | 45.4 | 27.73 | 0.00 |
| 0.15 | MobileNetV2 | 45.32 | 0 | 1.76 | 0.64 |
| 0.15 | ShuffleNet | 41.14 | 6.39 | 18.68 | 0.00 |
| 0.15 | DCNet | 38.06 | 59.82 | 42.27 | 0.00 |
| 0.2 | ResNet101 | 33.57 | 43.87 | 20.14 | 0 |
| 0.2 | MobileNetV2 | 45.28 | 0 | 0.59 | 0.64 |
| 0.2 | ShuffleNet | 41.14 | 6.2 | 18.84 | 0.00 |
| 0.2 | DCNet | 39.63 | 58.13 | 36.03 | 0.00 |
| 0.25 | ResNet101 | 31.89 | 42.89 | 17.51 | 0 |
| 0.25 | MobileNetV2 | 45.32 | 0 | 0 | 0.64 |
| 0.25 | ShuffleNet | 41.02 | 6.19 | 17.51 | 0 |
| 0.25 | DCNet | 42.38 | 47.26 | 26.09 | 0 |

| Models | Number of Parameters (millions) |
|---|---|
| ResNet101 | 44.6 |
| MobileNetV2 | 3.5 |
| ShuffleNet | 1.4 |
| DCNet | 18.9 |
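As a rough illustration of how a parameter count like those in the table above is obtained, the sketch below sums the learnable weights and biases of a few layers. The layer sizes are hypothetical and are not DCNet's architecture; the helper names `conv2d_params` and `dense_params` are likewise made up for this example. The four outputs of the final layer are an assumption matching the four degradation classes (BLT, FTLC, SSS, UN).

```python
def conv2d_params(in_channels, out_channels, kernel_size, bias=True):
    """Learnable parameters of a standard 2D convolution layer."""
    weights = kernel_size * kernel_size * in_channels * out_channels
    return weights + (out_channels if bias else 0)

def dense_params(in_features, out_features, bias=True):
    """Learnable parameters of a fully connected layer."""
    return in_features * out_features + (out_features if bias else 0)

# Hypothetical toy network: two 3x3 convolutions and a 4-class output layer.
layer_counts = [
    conv2d_params(3, 64, 3),     # RGB input to 64 feature maps
    conv2d_params(64, 128, 3),   # 64 to 128 feature maps
    dense_params(128, 4),        # pooled features to 4 class scores
]
total = sum(layer_counts)
print(f"{total / 1e6:.3f} million parameters")  # prints "0.076 million parameters"
```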

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Arnia, F.; Saddami, K.; Munadi, K. DCNet: Noise-Robust Convolutional Neural Networks for Degradation Classification on Ancient Documents. J. Imaging 2021, 7, 114. https://doi.org/10.3390/jimaging7070114
