The evaluation is performed qualitatively and quantitatively. In particular, we used 12 objective fusion quality assessment metrics to extensively evaluate their results. We also rank the algorithms using the Borda count technique [

73,

74,

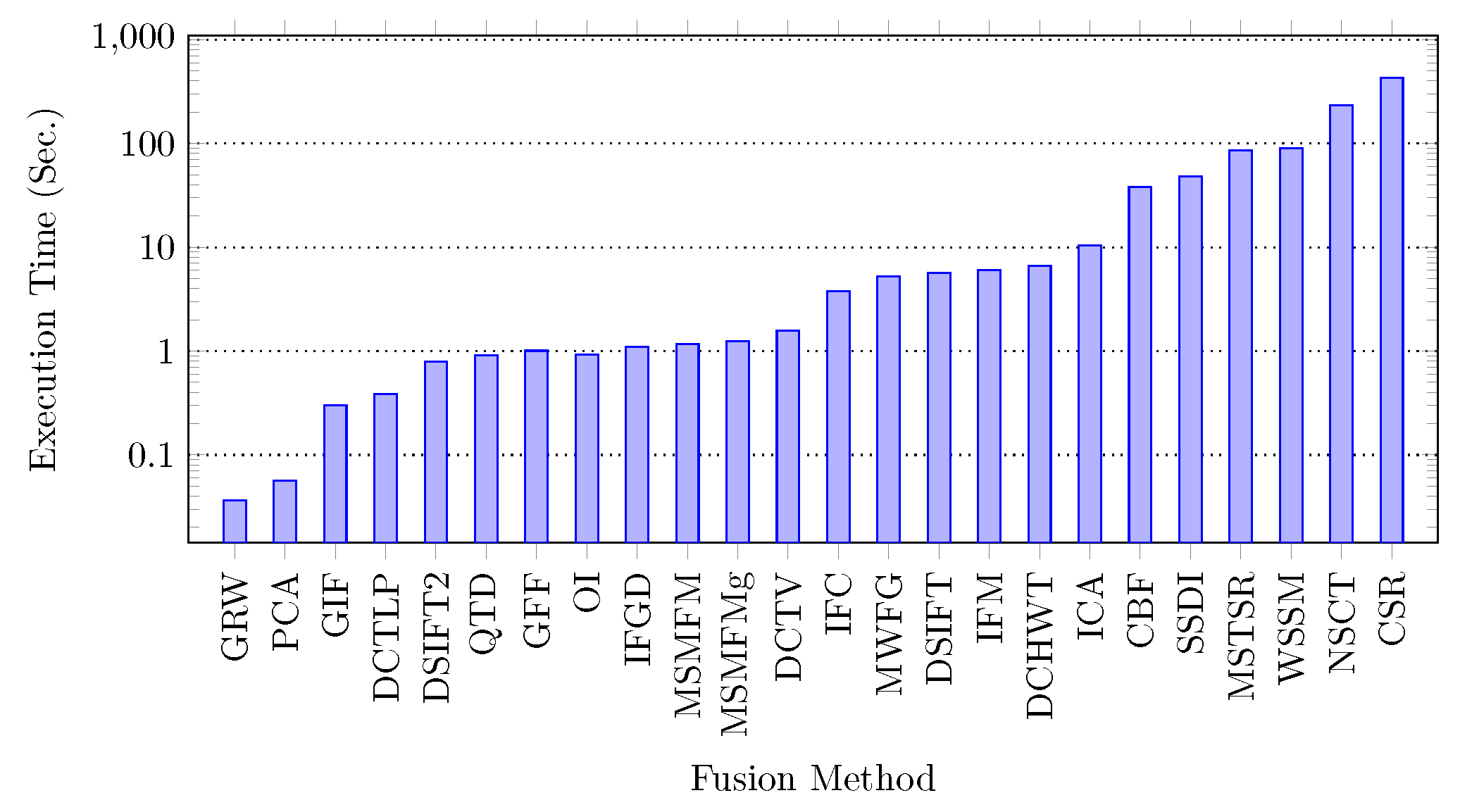

75] to find the top performers. Moreover, an analysis of the fusion quality assessment techniques is also presented. A time complexity analysis is also carried out to measure the overall effectiveness of the algorithm.

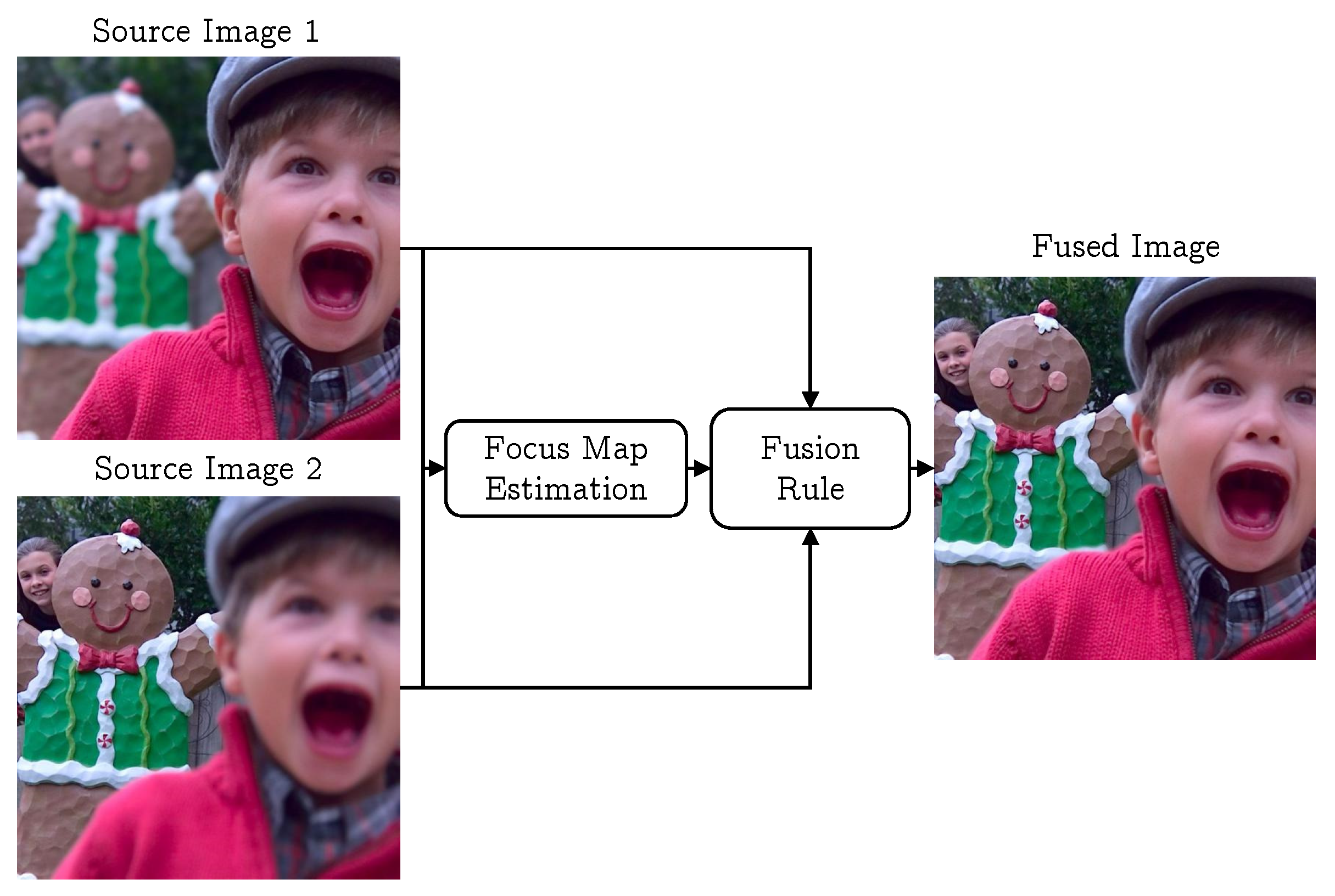

5.2. Qualitative Evaluation

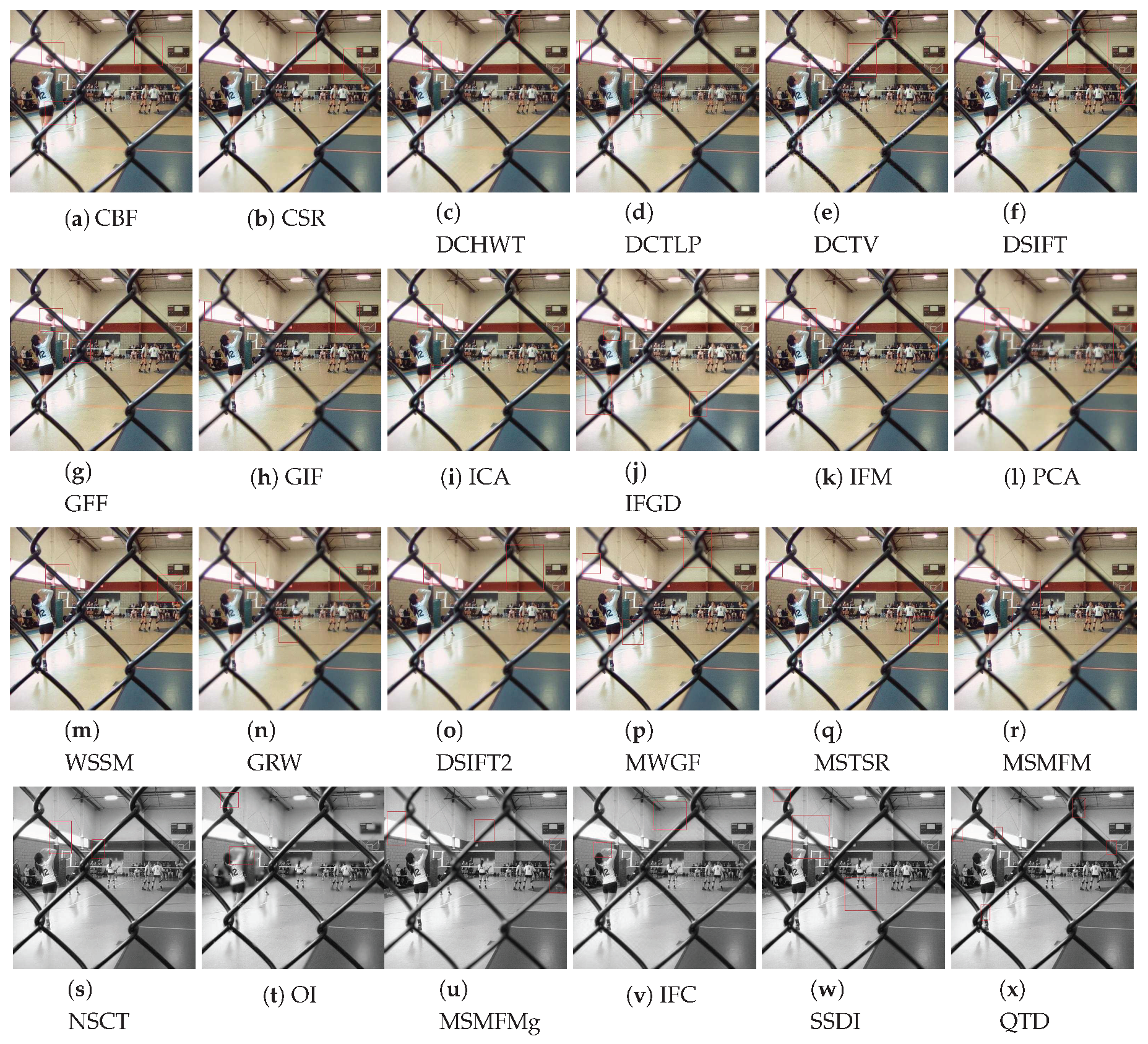

The qualitative evaluation is performed by comparing the visual quality of the fused images. To this end, we carefully examined the results achieved by the compared methods on all test image pairs. For conciseness, however, we chose one representative image set from each dataset and report the results achieved by the competing algorithms on it. From the Lytro dataset, the ‘fence’ image set is selected because the fence has cross-section areas that provide more edge and boundary detail. From the Grayscale dataset, the ‘clock’ image pair is selected, which has been widely used in the image fusion literature for performance evaluation. To better convey the outcomes of the visual inspection, the regions with artifacts are highlighted with red rectangles. Note that the image fusion algorithms QTD, SSDI, MSMFMg, NSCT, and OI use intensity images as input, and therefore their fused images are grayscale.

Figure 5 shows the fusion results achieved by the compared methods on the fence image set. The fused images generated by the MSMFM, MSMFMg, IFGD, and SSDI methods have unfocused fence regions, as highlighted in Figure 5. In the results of CSR, a few parts of the foreground are not as sharp as in the original images. Furthermore, the boundary between the foreground and background regions is slightly blurred, and the floor shows reduced contrast and brightness. In the DCT based approaches, DCHWT, DCTLP, and PCA, misregistration of edges is evident. The fused images look distorted, and the colors do not appear as consistent as in the focused parts of the source images. In addition, ringing artifacts are visible on the wall and around the boundaries of the fence.

In the fusion results of the DCHWT method, rippling artifacts appear near the edges. Moreover, the details are not sharp, e.g., the net’s pillar and the people standing at a distance from the camera are indistinguishable. In the case of DSIFT, the top middle part of the fence is blurred in the fused image. The foreground is not fused well, there are solid lines, and the boundaries between the focused and unfocused regions are over-sharpened. The GFF method produces adequate results and preserves color contrast and brightness, but the fence joints are blurred and the foreground is slightly too bright. The result of ICA exhibits blurriness near the shooter’s head, near the boundaries of the focused and unfocused regions, and near the door. The foreground is transparent near the ball. The IFM results suffer from color distortion on the fence and the ball. Details of the girl’s face are also missing; the face appears to be merged with the wall’s color and contrast.

The fused images created by the PCA algorithm are highly blurred and exhibit the ringing effect. In this example, the edges of the fence are not well defined and the brightness of the image is reduced. The fusion results of the WSSM method show that the edges are not fused perfectly. The fence is distorted and suffers from the ghost effect in a number of regions. Moreover, the fused image has a ringing effect near the wall. In the results of GIF, the fence is not clear; it is transparent and unfocused in some regions. The results of the MWGF and NSCT methods still have minor unfocused regions, as highlighted with red rectangles in Figure 5. The results of the CBF algorithm suffer from over-sharpening of the face, reduced color contrast, transparency, and unfocused regions in different sections. Similar artifacts can be spotted in the fusion results achieved by the DSIFT2 and GRW methods. The results of the DCTV and OI algorithms are very poor: the resultant images have spherical artifacts, unfocused regions, and over-sharpened edges. Overall, the QTD algorithm performs better than the other compared methods; its fusion results are free from most artifacts.

The performance of multi-focus image fusion approaches on the Grayscale dataset is discussed with the help of the clock test image pair. This image pair shows two clocks: in one image the foreground clock is in focus, and in the other the background clock is in focus. The results achieved by the compared methods on this image pair are shown in Figure 6. The results of the MSMFM and MSMFMg techniques exhibit no pixels of undecided focus and no ghost-affected areas. Moreover, the ringing effect does not appear in the resultant images. The SSDI algorithm also shows a good visual result; its only problem is a small unfocused portion at the bottom left boundary of the foreground clock. A number of regions are not in focus in the QTD and IFGD results. The fused image created by the CSR algorithm exhibits distortion on the left boundary of the smaller clock. The result obtained from the DCHWT approach has horizontal and vertical artifacts on the lower and upper sides of the image, respectively. The upper region of the clock is pixelated, and similar horizontal and vertical lines are also visible there.

The fused image generated by the DCTLP algorithm does not retain the color contrast of the original images. The DSIFT fused image exhibits good quality, except that a few regions are grainy and there is blurriness at the boundary between the focused foreground and the background. Blurriness can also be spotted in the fusion results of the GFF, GIF, and IFM techniques. PCA and WSSM do not show convincing results, as can be seen from Figure 6: the PCA fused image is blurry and its color contrast is not appropriate, whereas WSSM and MWGF exhibit structural distortion and ghost regions on the right side of the foreground clock and the upper side of the background clock. The fusion results achieved by the CBF and MSTSR techniques suffer from color distortion and the so-called grainy effect due to imperfect fusion maps. The fusion results of the DCTV and OI techniques are blurry and suffer from structural distortions.

From the visual comparison of the results presented in Figure 5 and Figure 6, we conclude that DSIFT, QTD, GFF, NSCT, and MSTSR are the five best fusion methods for the Lytro dataset, and MSMFM, MSMFMg, DSIFT, QTD, and SSDI are the five best for the Grayscale dataset.

5.3. Quantitative Evaluation

Evaluating the performance of fusion algorithms is not easy. Two practices can be followed to assess effectiveness and performance. The first is to compare the fused image with a ground truth (reference) image; however, in most practical applications, ground truths are not available. For this reason, a second practice emerged, which is to assess the fused image blindly, without using reference images. To validate the performance of an algorithm, objective assessment is necessary along with visual assessment. Therefore, numerous objective assessment models for the evaluation of fusion results have been proposed, e.g., [76,77,78,79,80].

An extensive objective evaluation is performed to assess the fusion quality of the compared multi-focus image fusion algorithms. In particular, we used 12 different objective fusion quality assessment metrics in this evaluation. These metrics use various image characteristics to assess fusion quality and, based on these characteristics, they are divided into four groups [77].

Information theory based metrics measure the quality of the fused image using probability based measures, i.e., mutual information, divergence, and correlation, between the fused image and the source images.

Feature based metrics estimate the quality of a fused image by considering different types of features, such as gradient, edge information, and spatial frequency.

Structural similarity based metrics compare the structural information of the fused image and the source images to estimate the fusion quality.

Human perception based metrics consider the contrast, overlapping regions, misregistration of pixels, and edge information in estimating the quality of the fused image with respect to the source images.

The metrics used in this evaluation, with their respective categories, are listed in Table 2.

The fusion results obtained by each algorithm on both datasets are evaluated using all 12 objective quality assessment metrics listed in Table 2. In this evaluation, the fusion quality assessment library proposed in [77] is used. The detailed evaluation results on the Grayscale dataset are presented in Figure 7 and those on the Lytro dataset are shown in Figure 8. The first information theory based metric evaluates the fused images on the basis of the joint and marginal probability distributions of the fused image and the source images. It ranks MSMFMg, DSIFT, and QTD as the three best algorithms for the Grayscale dataset and QTD, MSMFM, and SSDI for the Lytro dataset. The second metric takes the non-linear correlation coefficient into account while evaluating the fused image. It ranks OI, MSMFM, and QTD as the top algorithms for both datasets. The third metric uses entropy to calculate the quality of fused images; it ranks OI, DSIFT2, and NSCT at the top for the Grayscale dataset and ICA, GFF, and DSIFT2 for the Lytro dataset. VIFF uses different models, such as the Gaussian scale mixture model, to evaluate fused image quality. According to the VIFF metric, IFGD, ICA, and MSTSR are the best performers for the Grayscale dataset, whereas IFGD, MSTSR, and QTD are the best for the Lytro dataset. Table 3 summarizes the evaluation results based on the information theory metrics and presents the best three image fusion algorithms for both datasets.
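A metric driven by the joint and marginal probability distributions of the fused and source images is typically a form of mutual information. The following is only a minimal sketch of that idea, not the implementation from the library in [77]: it estimates the distributions from intensity histograms of flattened images, and the helper name `fusion_mutual_information`, the unnormalized sum over the two sources, and the toy pixel values are all assumptions made for illustration.

```python
import math
from collections import Counter


def mutual_information(x, y):
    """Mutual information, in bits, between two equal-length intensity sequences."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(x)
    joint = Counter(zip(x, y))   # joint histogram of intensity pairs
    hist_x = Counter(x)          # marginal histogram of x
    hist_y = Counter(y)          # marginal histogram of y
    mi = 0.0
    for (a, b), count in joint.items():
        p_ab = count / n
        # p(a, b) / (p(a) * p(b)) simplifies to count * n / (hist_x[a] * hist_y[b])
        mi += p_ab * math.log2(count * n / (hist_x[a] * hist_y[b]))
    return mi


def fusion_mutual_information(fused, source_a, source_b):
    """Hypothetical helper: information the fused image shares with both sources."""
    return mutual_information(fused, source_a) + mutual_information(fused, source_b)


# Toy flattened "images" (made-up values, not data from the paper).
source_a = [0, 0, 1, 1]
source_b = [0, 1, 0, 1]
fused = [0, 0, 1, 1]  # identical to source_a, statistically independent of source_b
score = fusion_mutual_information(fused, source_a, source_b)
```

In this toy case the fused image shares one bit with the first source and nothing with the second. Published metrics of this family usually normalize the sum, for example by the entropies of the images, which this sketch omits.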

The first feature based metric calculates edge preservation using the Sobel edge detection operator as well as orientation information to evaluate the fused image quality. According to this metric, MSMFMg, GIF, and QTD are among the best for the Grayscale dataset and MSMFM, DSIFT, and QTD for the Lytro dataset. The second metric takes into consideration gradient information in four different directions of the fused and source images. In our evaluation, it ranked IFGD, ICA, and DCTV as the best three algorithms for the Grayscale dataset, whereas for the Lytro dataset DSIFT, DCTV, and IFM are top ranked. The third metric uses the phase congruency information of the fused and source images to assess the quality. On the basis of its evaluation, GIF, DSIFT, and MSMFMg are the top performing algorithms on the Grayscale dataset and GFF, GIF, and MSMFM on the Lytro dataset. The fourth metric uses edge information calculated from the low- and high-pass components of the wavelet transform. The results of the feature based metrics are summarized in Table 4, which shows that DSIFT, QTD, and DCTV are among the best algorithms for both the Grayscale and Lytro datasets. The same results are also observable in our visual inspection.
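An edge preservation metric built on the Sobel operator starts from per-pixel edge strength and orientation maps of the source and fused images. The sketch below shows only that first step under simple assumptions: images are plain 2D lists, border pixels are skipped, and the function name `sobel_strength_orientation` is hypothetical. How the maps of the source and fused images are then compared and weighted is specific to each metric and is not reproduced here.

```python
import math

# Standard 3x3 Sobel kernels for horizontal and vertical intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_strength_orientation(img):
    """Return (strength, orientation) maps for the interior pixels of a 2D list."""
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3")
    strength = [[0.0] * (w - 2) for _ in range(h - 2)]
    orientation = [[0.0] * (w - 2) for _ in range(h - 2)]
    for i in range(1, h - 1):
        for j in range(1, w - 1):
            gx = gy = 0.0
            # Correlate the 3x3 neighbourhood with both kernels.
            for di in range(3):
                for dj in range(3):
                    pixel = img[i + di - 1][j + dj - 1]
                    gx += SOBEL_X[di][dj] * pixel
                    gy += SOBEL_Y[di][dj] * pixel
            strength[i - 1][j - 1] = math.hypot(gx, gy)      # edge magnitude
            orientation[i - 1][j - 1] = math.atan2(gy, gx)   # edge angle in radians
    return strength, orientation


# Toy image with a vertical step edge (made-up values).
step = [[0, 0, 1, 1] for _ in range(4)]
edge_strength, edge_orientation = sobel_strength_orientation(step)
```

A fused image that keeps the edges of the focused source would show strength and orientation values close to that source at the same positions, which is the property such a metric rewards.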

The first structural similarity based metric evaluates the fused image quality on the basis of variance. It ranks ICA, DSIFT2, and CBF as the best algorithms for the Grayscale dataset and ICA, CBF, and MSTSR for the Lytro dataset. The second metric additionally takes other statistical features, such as correlation, covariance, and edge dependent information, into account while evaluating the fused image quality. It ranks GIF, MSMFMg, and QTD as the top algorithms for the Grayscale dataset and MSMFM, QTD, and DSIFT for the Lytro images. The results of the structural similarity based metrics are shown in Table 5.

The summary of the performance evaluation using the two human perception based metrics is presented in Table 6. The first metric considers the edge quality, the similarity measurement of local regions, and the global quality measurement of non-overlapping regions in evaluating the fused image quality. This metric ranks IFGD and OI as the best algorithms for both the Lytro and Grayscale datasets. The second metric uses contrast based features to assess the fusion results. It assesses GIF, MSMFMg, and QTD as the best for the Grayscale dataset and MSMFM and DSIFT for the Lytro dataset.

5.4. Borda Count Ranking of Image Fusion Algorithms

The Borda count [73,74,75] is a voting technique that ranks candidates according to voters’ preferences. The preferences are converted to scores; the candidate with the maximum score becomes the winner, the second highest scorer takes the second spot, and so on. We use this technique here to rank the image fusion algorithms based on their ratings determined by the objective image fusion quality assessment metrics. Since there are 24 algorithms being evaluated, each algorithm is assigned an integer score between 1 and 24 based on its performance as measured by an objective quality metric. The scores are assigned in reverse order of rank, that is, the best performing approach is assigned a score of 24 and the worst a score of 1. For each fusion algorithm, these scores are accumulated over the metrics to obtain an aggregated score, which decides its rank.
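The scoring rule can be sketched in a few lines. In this illustration each metric acts as a voter that supplies a best-to-worst ordering of the algorithms; the function names, the four placeholder algorithms A to D, and the alphabetical tie-break are assumptions, since the text does not say how ties are handled.

```python
def borda_scores(rankings):
    """Sum Borda scores over several best-to-worst rankings of the same candidates."""
    totals = {}
    for ranking in rankings:
        n = len(ranking)
        for position, candidate in enumerate(ranking):
            # Best candidate receives n points, the worst receives 1.
            totals[candidate] = totals.get(candidate, 0) + (n - position)
    return totals


def borda_ranking(rankings):
    """Return (candidate, score) pairs, highest aggregated score first."""
    totals = borda_scores(rankings)
    # Assumed tie-break: equal scores are ordered alphabetically by name.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))


# Toy example: three hypothetical metrics ranking four placeholder algorithms.
toy_rankings = [
    ["A", "B", "C", "D"],
    ["B", "A", "D", "C"],
    ["A", "C", "B", "D"],
]
toy_result = borda_ranking(toy_rankings)
# A: 4 + 3 + 4 = 11, B: 3 + 4 + 2 = 9, C: 2 + 1 + 3 = 6, D: 1 + 2 + 1 = 4
```

With 24 algorithms in each ranking, the same function assigns 24 points to the best method and 1 point to the worst for every metric, matching the scheme described above.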

The Borda count scores that each algorithm received on the Grayscale and Lytro datasets are presented in Table 7. The statistics reveal that for the Grayscale dataset the MSMFM, MSMFMg, QTD, DSIFT, and GIF algorithms are the best five, highlighted in bold. Interestingly, these results closely match those obtained from the visual evaluation, with four of the five algorithms in common. On the Lytro dataset, the Borda count rated QTD, DSIFT, MSMFMg, MSMFM, and SSDI as the best five fusion techniques. To get an overall picture of this analysis, the scores obtained by a method on each dataset are aggregated. The algorithms are ranked based on the aggregated scores and the results are presented in Figure 9. The results show that MSMFMg is rated as the best algorithm with an aggregate score of 462, followed by QTD, MSMFM, and DSIFT with very close scores of 459, 454, and 453, respectively.
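To put these aggregate scores in context, the sketch below computes the attainable score range and expresses the four reported scores as a fraction of the maximum. It assumes that each of the 12 metrics casts one vote per dataset, which the text implies but does not state explicitly; the variable names are illustrative.

```python
N_ALGORITHMS = 24   # best score awarded by a single metric
N_METRICS = 12      # assumption: every metric in Table 2 votes
N_DATASETS = 2      # Grayscale and Lytro

# Bounds on the aggregated score of one algorithm.
max_score = N_ALGORITHMS * N_METRICS * N_DATASETS   # ranked first everywhere
min_score = 1 * N_METRICS * N_DATASETS              # ranked last everywhere

# Aggregate scores reported in the text for the four top methods.
reported = {"MSMFMg": 462, "QTD": 459, "MSMFM": 454, "DSIFT": 453}

fraction_of_max = {name: score / max_score for name, score in reported.items()}
spread = max(reported.values()) - min(reported.values())
```

Under this assumption the maximum is 576 points, so the four leading methods each reach roughly 80% of it and are separated by only 9 points, which is consistent with the observation that their scores are very close.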

5.5. Summary

A summary of the findings of the qualitative and quantitative evaluations is presented in Table 8. It shows the results of both evaluations on each dataset as well as an overall assessment. On the Grayscale dataset, the results of the visual and objective evaluations are the same, except that the visual evaluation includes SSDI whereas the objective assessment places GIF among the five best algorithms. On the Lytro dataset, the visual and objective lists of the five best algorithms differ in three entries: the qualitative list includes MSTSR, GIF, and NSCT, whereas the objective list includes MSMFM, SSDI, and MSMFMg. The overall evaluation considering both datasets is the same as that of the Grayscale dataset; that is, the list of the best five algorithms contains approximately the same methods with a slightly different ordering. From the statistics presented in Table 8, it can be noted that the results of the visual and the objective evaluations mostly agree, which supports the results and the effectiveness of the best rated image fusion methods.

Another interesting fact notable from Table 8 is that all the best performing methods are spatial domain based. To investigate this further, in Table 9 we list the five best performing MIF algorithms of each category with their Borda count based rank (Figure 9). The results reveal that the best eight methods among the 24 compared methods are spatial domain based algorithms. Among the transform domain based methods, DCTV performs best and is ranked ninth among the compared methods. Since we grouped the methods in each category into different groups, the best ranked algorithm in each group with its Borda count (BC) ranking is presented in Table 10. These statistics shed further light on the most suitable domain and representation for efficient multi-focus image fusion. The results show that, in the spatial domain, the pixel based MIF algorithms perform better than the other groups. Moreover, in the transform domain, the DCT based method is of particular interest. These are interesting observations that need further investigation to discover the limitations of the frequency domain for multi-focus image fusion. We observed that the better performance of the spatial domain based methods is due to their accurate detection of focused and defocused regions, which leads to crisp fusion results; such precise segmentation is not seen in most frequency domain methods, which suffer from different artifacts, e.g., the ghost effect.