A Prototypical Network-Based Approach for Low-Resource Font Typeface Feature Extraction and Utilization

Abstract

1. Introduction

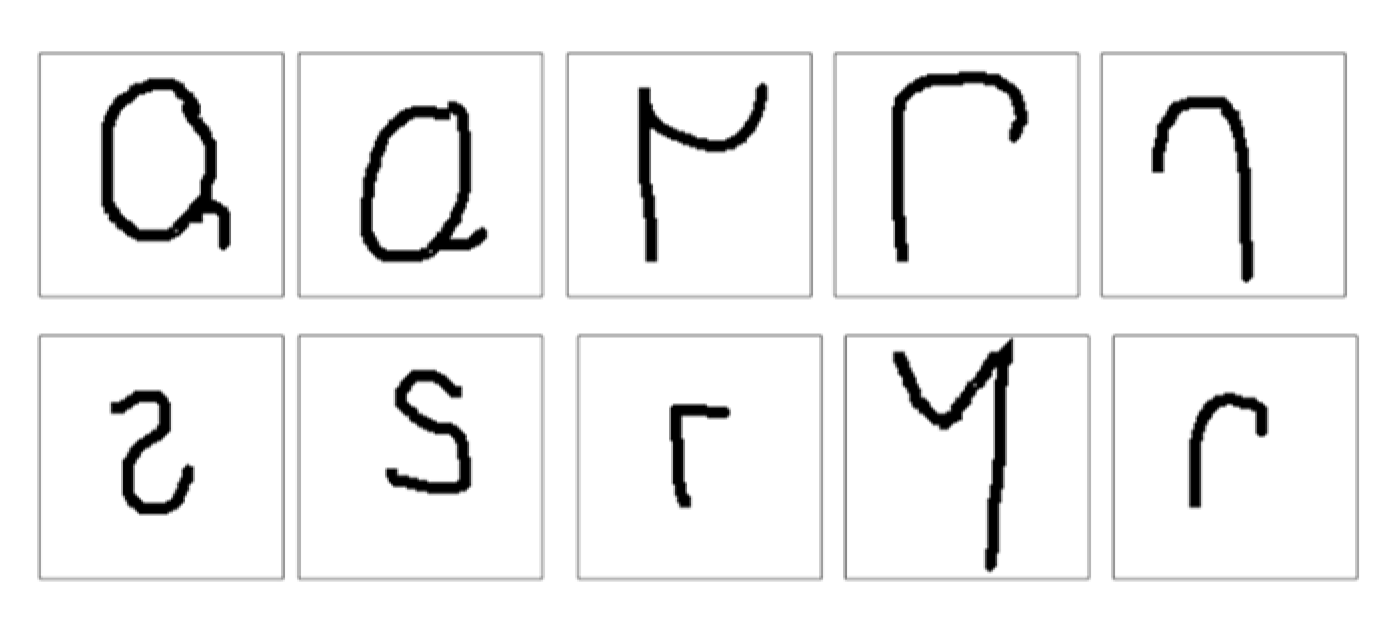

1.1. Shirakawa Font

2. Related Work and State-of-the-Art

2.1. Ancient Character Recognition

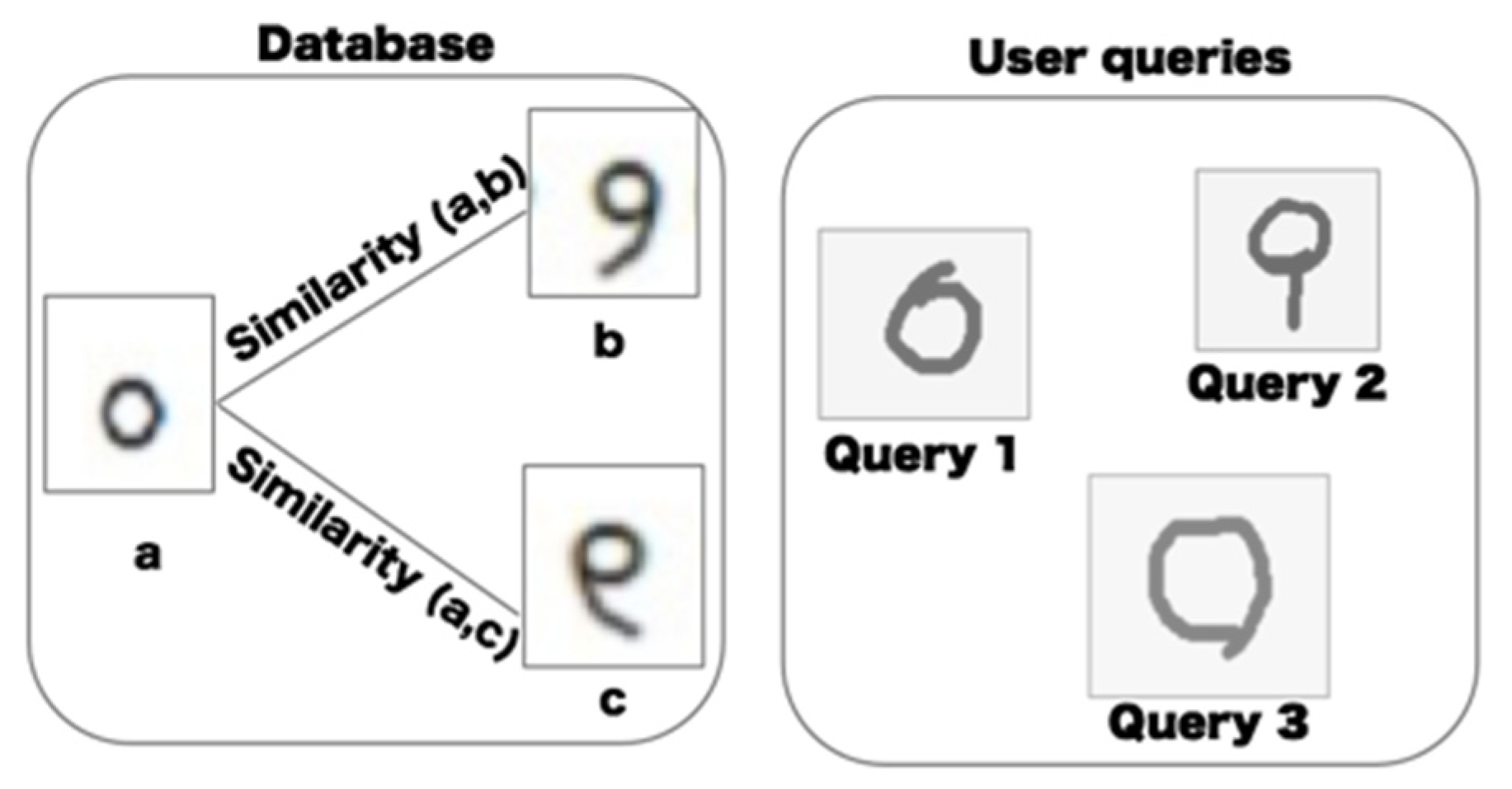

2.2. Sketch-Based Image Retrieval Based on Shape-Matching

2.3. Meta-Learning and Metric-Based Method

3. Main Contributions of This Paper

- (1) From a technical aspect, we present a new model structure based on metric learning that makes use of a low-resource ancient character typeface dataset. It is a new attempt to combine generic handcrafted features with few-shot metric learning in a model that works on low-resource data. The proposed method can therefore obtain more low-dimensional features that are conducive to the retrieval of symbols and ancient characters. Compared with other existing metric-learning-based methods, the features extracted by our proposed method are better at finding the highest-ranked relevant item.

- (2) The model is trained on other sufficiently large public character datasets such as OMNIGLOT and tested on font typeface-based datasets. In this way, it learns to represent typeface images in a vector space that is more appropriate to their geometric properties. The method proposed in this paper also performs better than several other metric-learning-based few-shot learning methods on the cross-domain task using the benchmark datasets.

- (3)

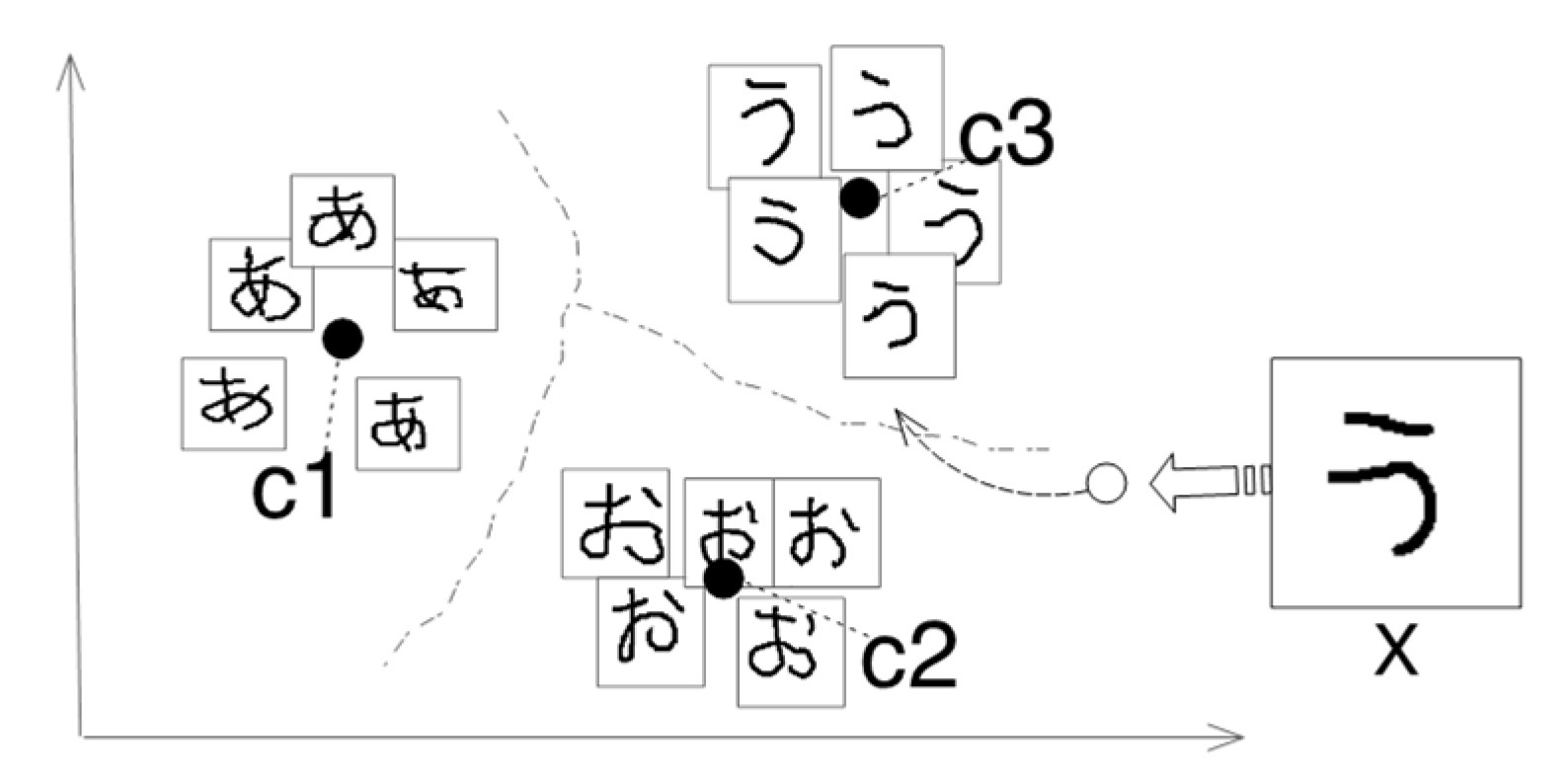

- The calculation of the dynamic mean vector is imported to enhance the robustness of prototypical networks. In the mini-batch training process, we not only select a unique prototypical center but also adapt to the deformation of the support set from test data.

- (4) Our proposed framework consists of several components, i.e., a spatial transformer network, feature fusion, a dynamic prototype distance calculator, and an ensemble-learning-based classifier. Each component contributes to the classification task.

- (5) We apply the proposed method to the retrieval of ancient characters with support for handwritten input, providing a new perspective on the flexible use of low-resource data. This is an innovative effort in one-shot ancient character recognition using the metric-learning method. It provides a reference for the future application of ancient font typeface resources in digital humanities and other fields.

4. Methods

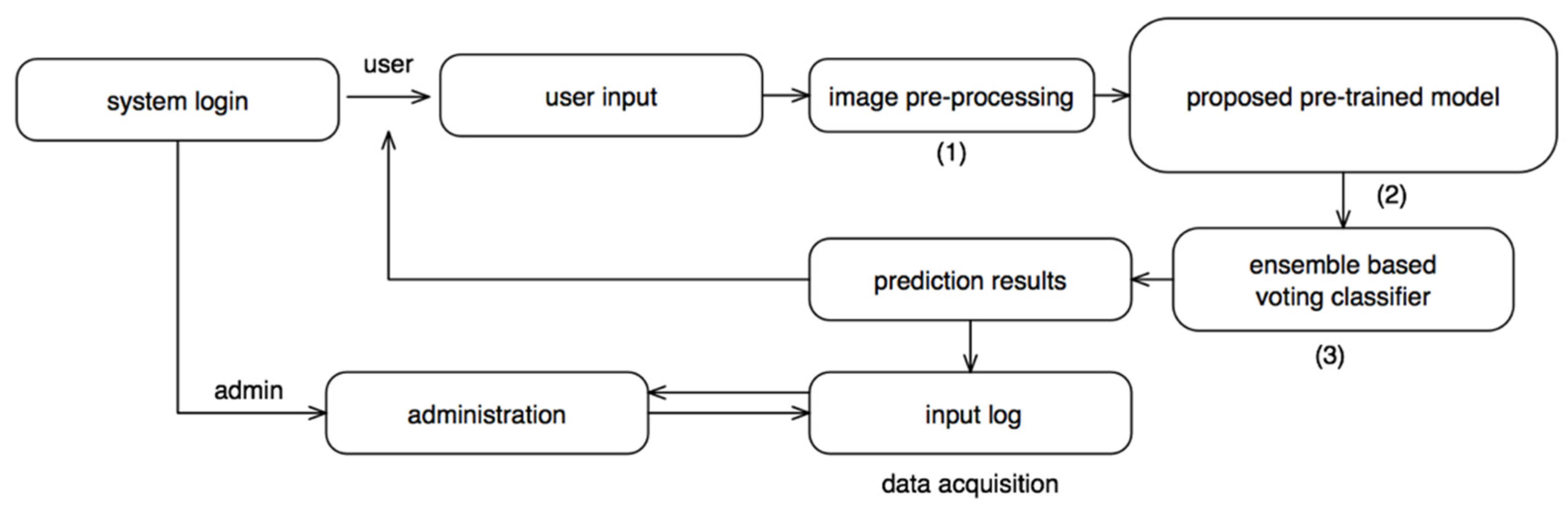

4.1. Framework Structure

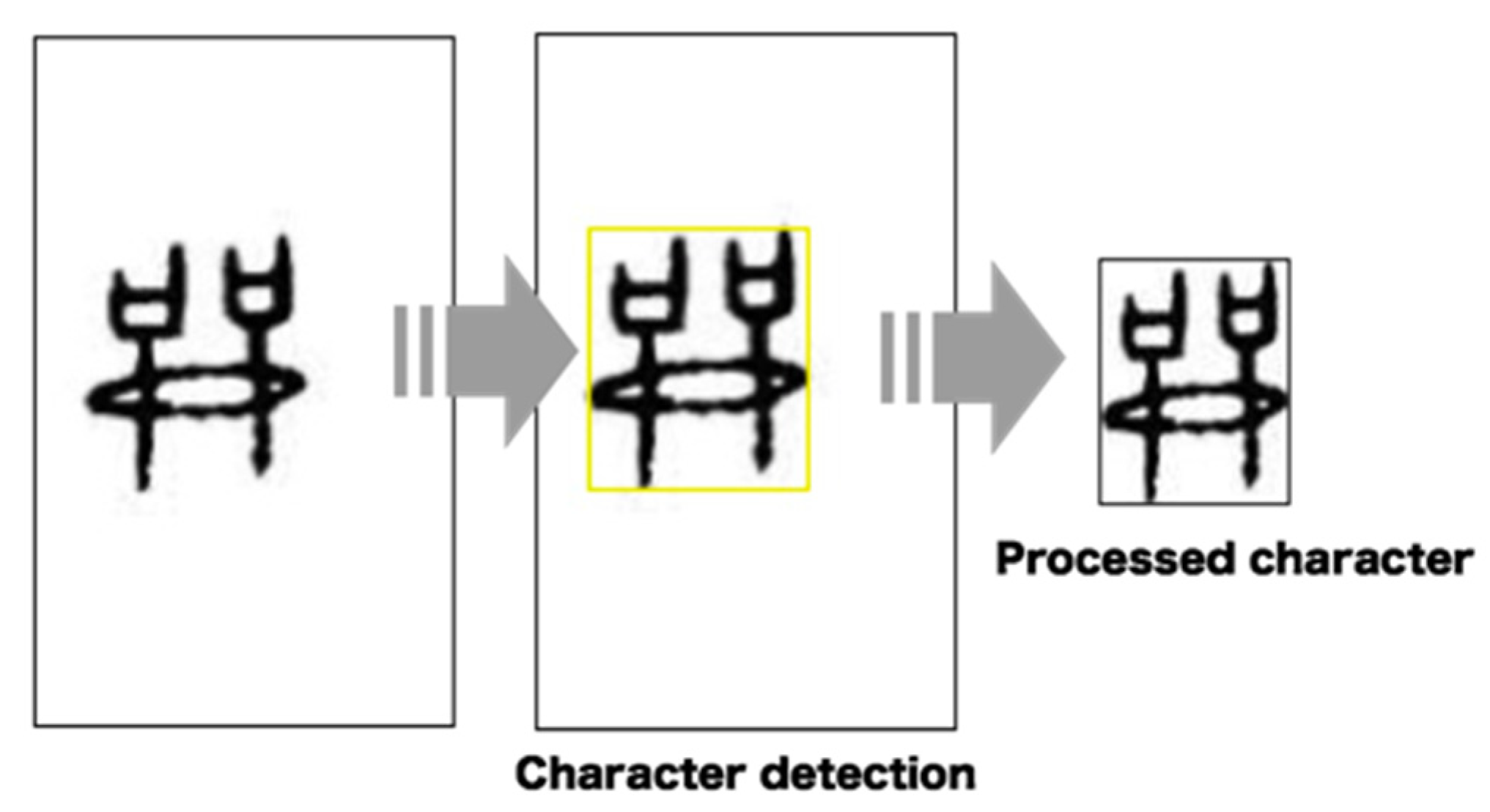

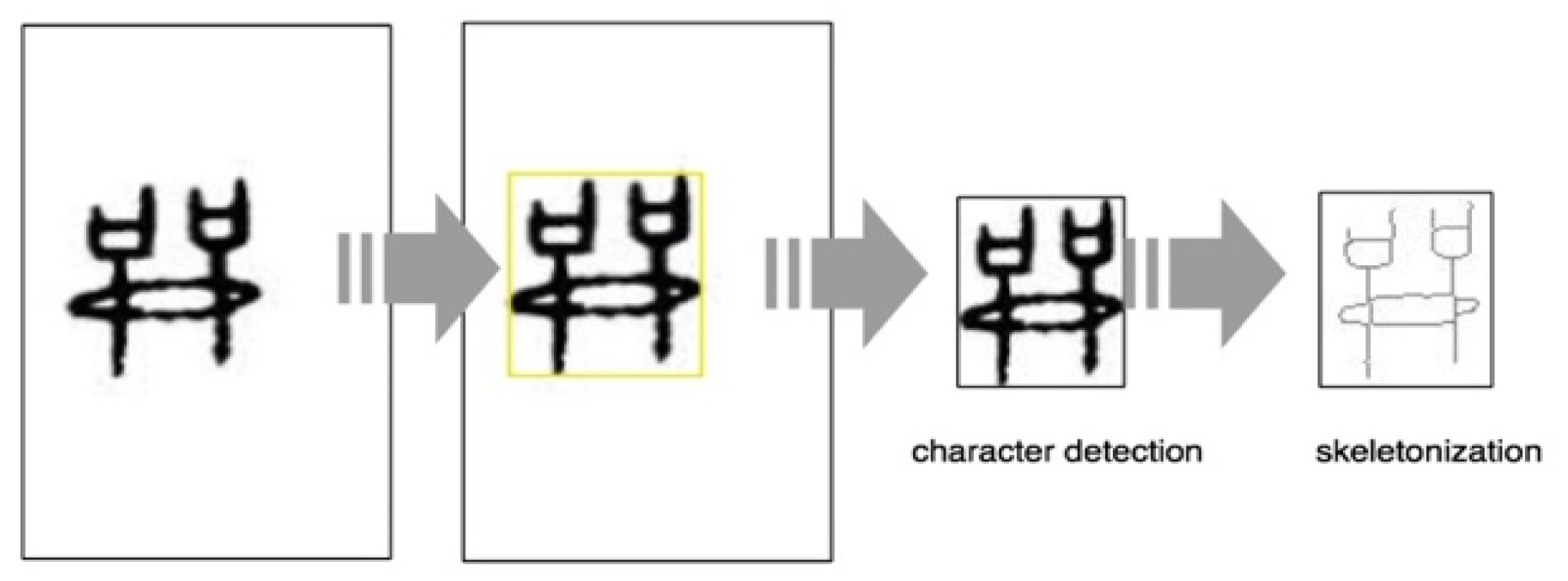

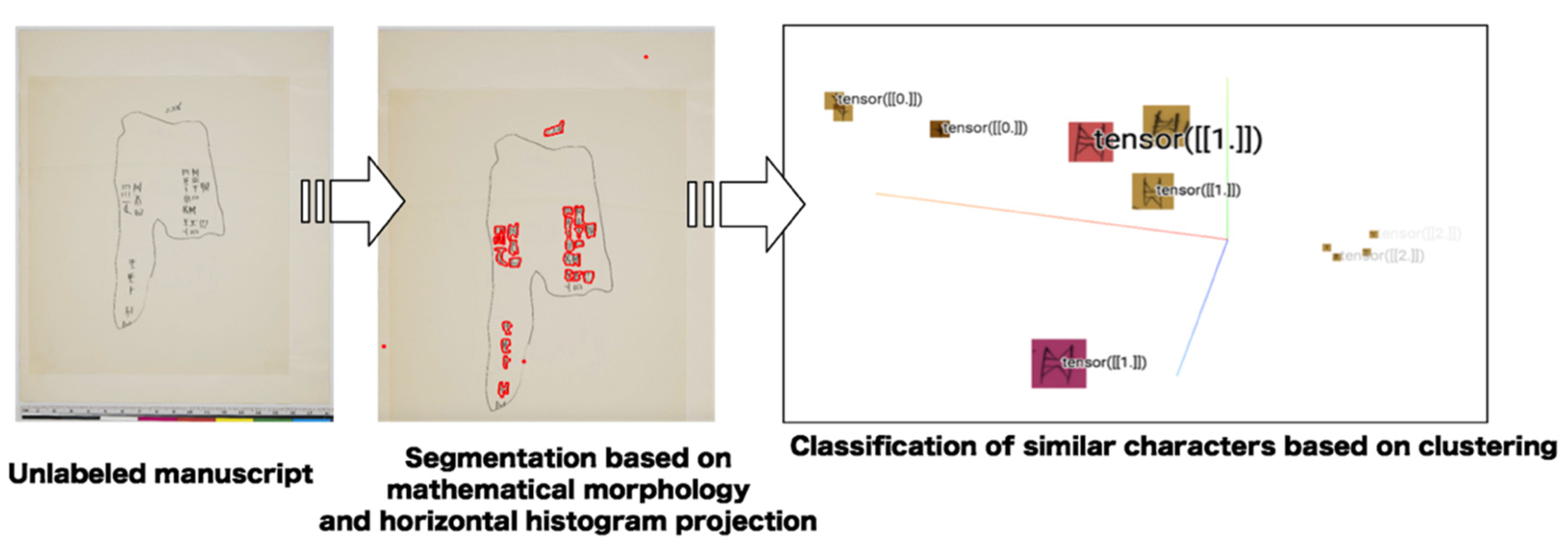

4.2. Image Pre-Processing

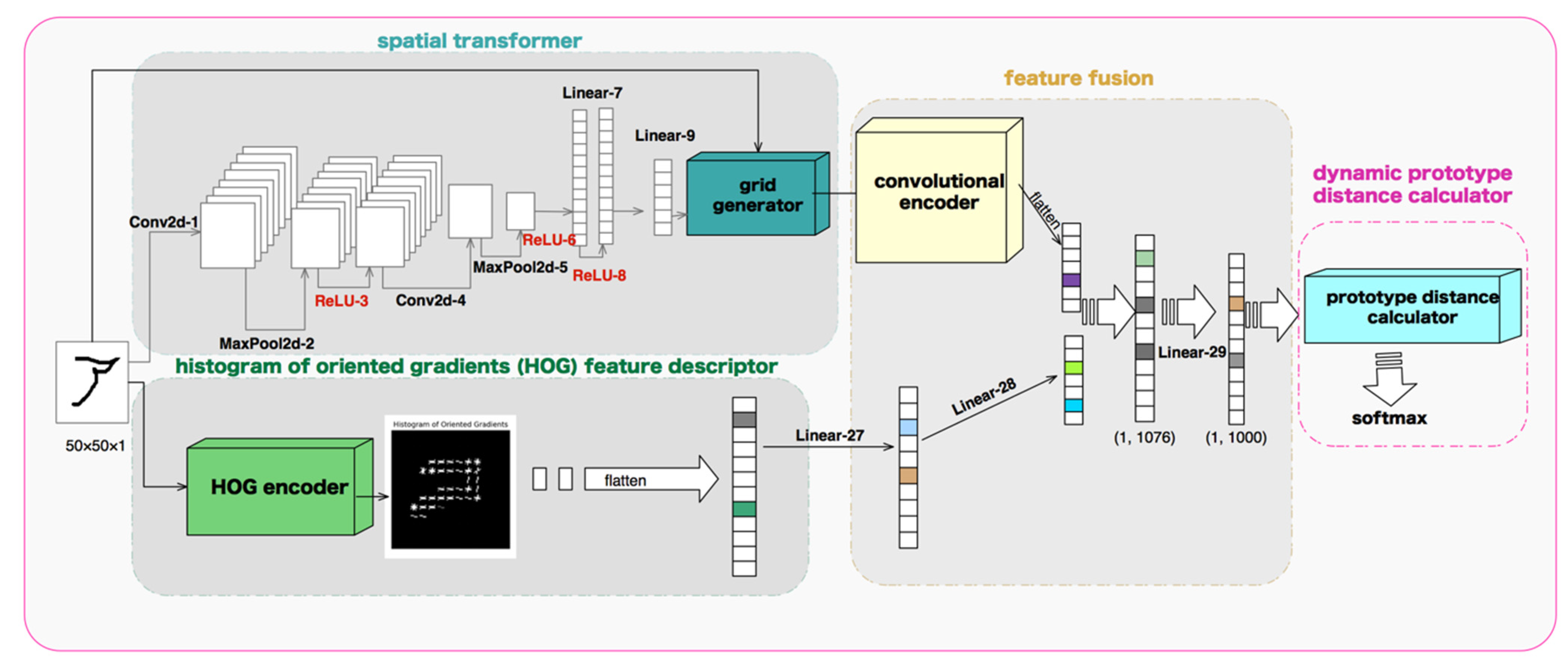

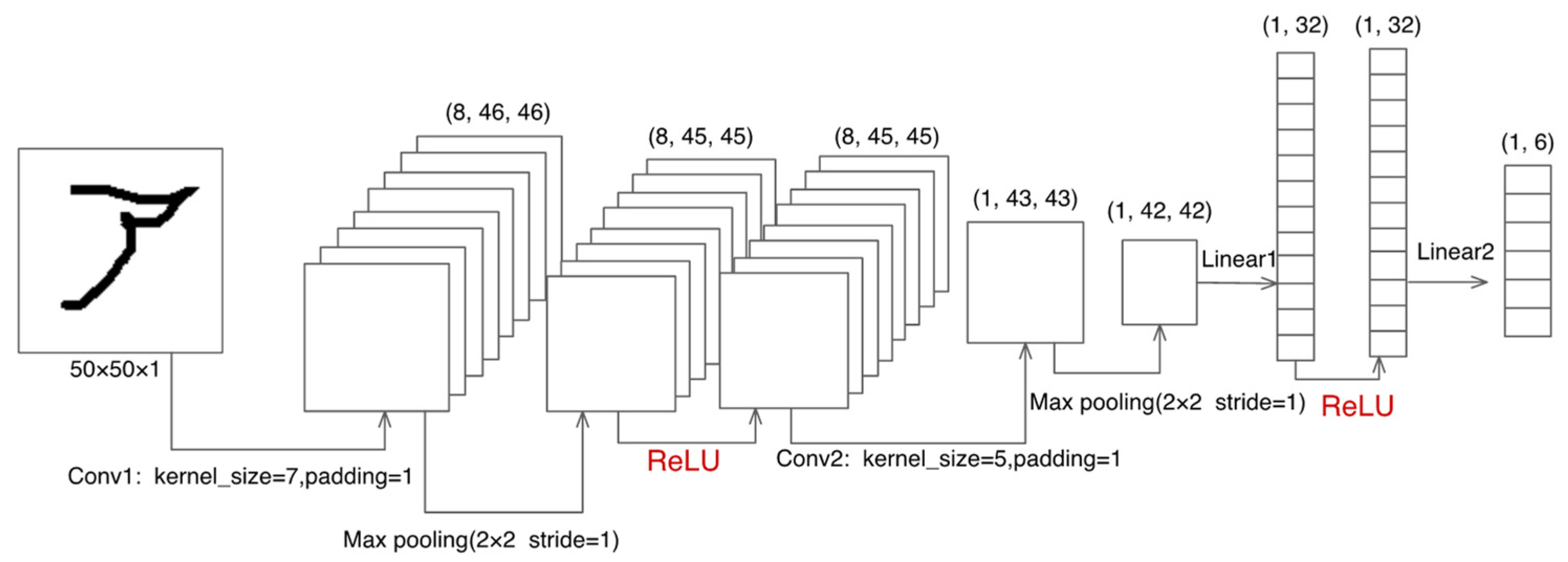

4.3. Model Structure

4.3.1. Spatial Transformer Module

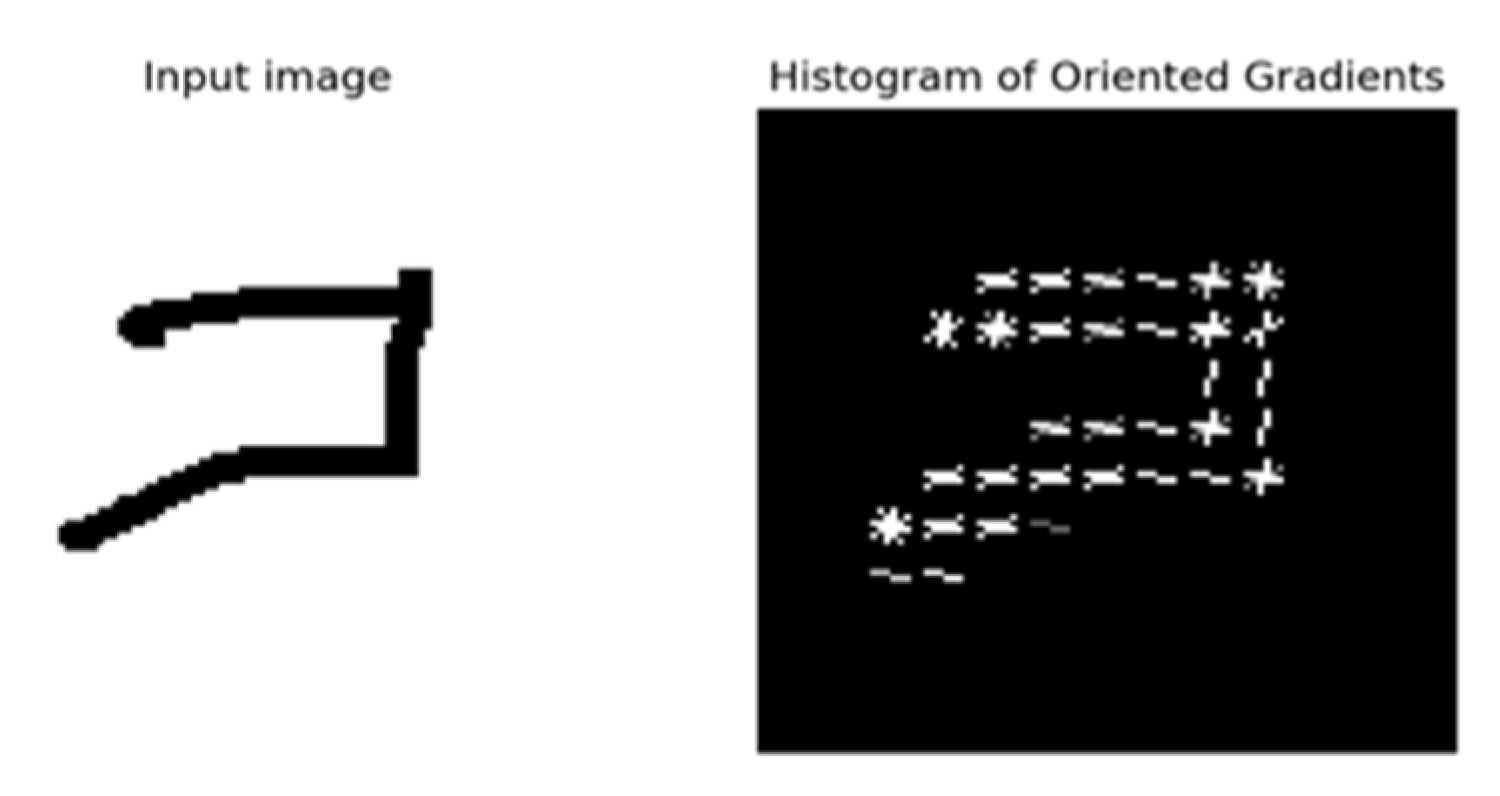

4.3.2. Histogram of Oriented Gradients (HOG) Feature Descriptor Module

4.3.3. Feature Fusion Module

4.3.4. Dynamic Prototype Distance Calculator Module

Algorithm 1. The algorithm to compute the loss in our network. The training set contains the support set S and the query set Q. K is defined as the number of classes per episode. RANDOMSAMPLE(S, n) denotes a set of n elements chosen uniformly at random from the set S, without replacement. The algorithm also uses the number of queries of each class in Q, an embedding function, and a distance function.
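The caption of Algorithm 1 closely matches the episodic loss of prototypical networks [20]. The following is a minimal sketch of that standard pattern in plain Python, not the authors' implementation. It assumes that embeddings have already been computed, that the distance function is the squared Euclidean distance, and that each prototype is the plain class mean (the dynamic mean vector of Section 4.3.4 is not reproduced). The names `sample_episode`, `prototypical_loss`, and the dictionary layout are hypothetical.

```python
import math
import random


def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def squared_euclidean(a, b):
    """Squared Euclidean distance (assumed distance function)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def sample_episode(dataset, n_classes, n_support, n_query, rng):
    """Draw classes and examples uniformly at random, without replacement.

    dataset maps a class label to a list of embedding vectors.
    """
    classes = rng.sample(sorted(dataset), n_classes)
    support, query = {}, {}
    for label in classes:
        picked = rng.sample(dataset[label], n_support + n_query)
        support[label] = picked[:n_support]
        query[label] = picked[n_support:]
    return support, query


def prototypical_loss(support, query):
    """Mean negative log-probability of the true class over all queries."""
    labels = sorted(support)
    prototypes = {label: mean_vector(support[label]) for label in labels}
    total, count = 0.0, 0
    for true_label, vectors in query.items():
        for vector in vectors:
            # Logits are negative distances to every class prototype.
            logits = [-squared_euclidean(vector, prototypes[c]) for c in labels]
            top = max(logits)  # subtract the maximum for numerical stability
            log_norm = top + math.log(sum(math.exp(z - top) for z in logits))
            total += log_norm - logits[labels.index(true_label)]
            count += 1
    return total / count


if __name__ == "__main__":
    rng = random.Random(0)
    # Toy embeddings: class c is clustered around the point (10c, 10c).
    toy = {c: [[10.0 * c + rng.random(), 10.0 * c + rng.random()]
               for _ in range(6)] for c in range(5)}
    s, q = sample_episode(toy, n_classes=3, n_support=2, n_query=3, rng=rng)
    print(prototypical_loss(s, q))
```

When every prototype coincides, the loss reduces to the logarithm of the number of classes, which is a convenient sanity check before training.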

4.3.5. Improvement of the Classification with Ensemble Learning

5. Experiments and Results

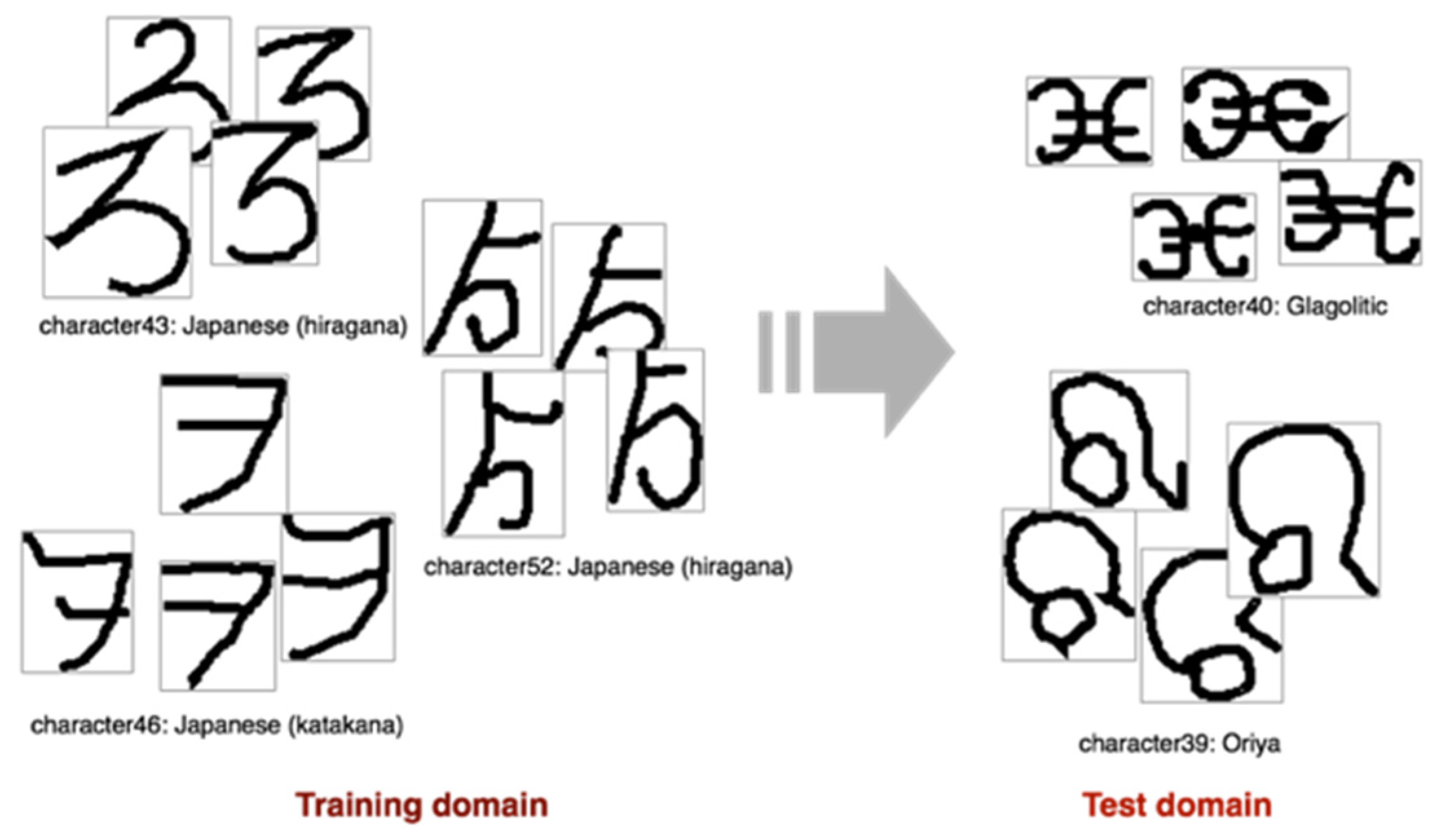

5.1. Datasets and Basic Experimental Setup

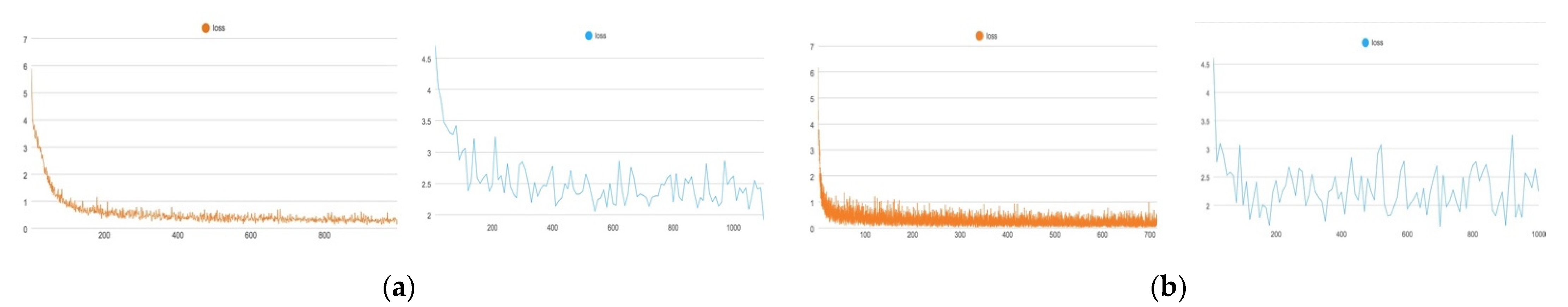

5.2. Cross-Domain, Few-Shot Classification Performance

5.2.1. Cross-Domain, Few-Shot Classification Performance on the ‘OMNIGLOT→EMNIST’ Task

5.2.2. Evaluation of the Ensemble-Learning-Based Classification Method

5.2.3. Performance on the Forty-Way Classification Task

5.2.4. Ablation Experiments

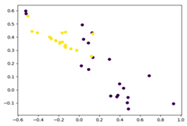

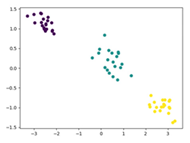

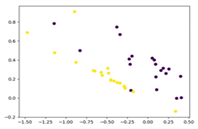

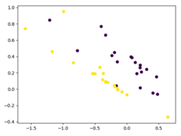

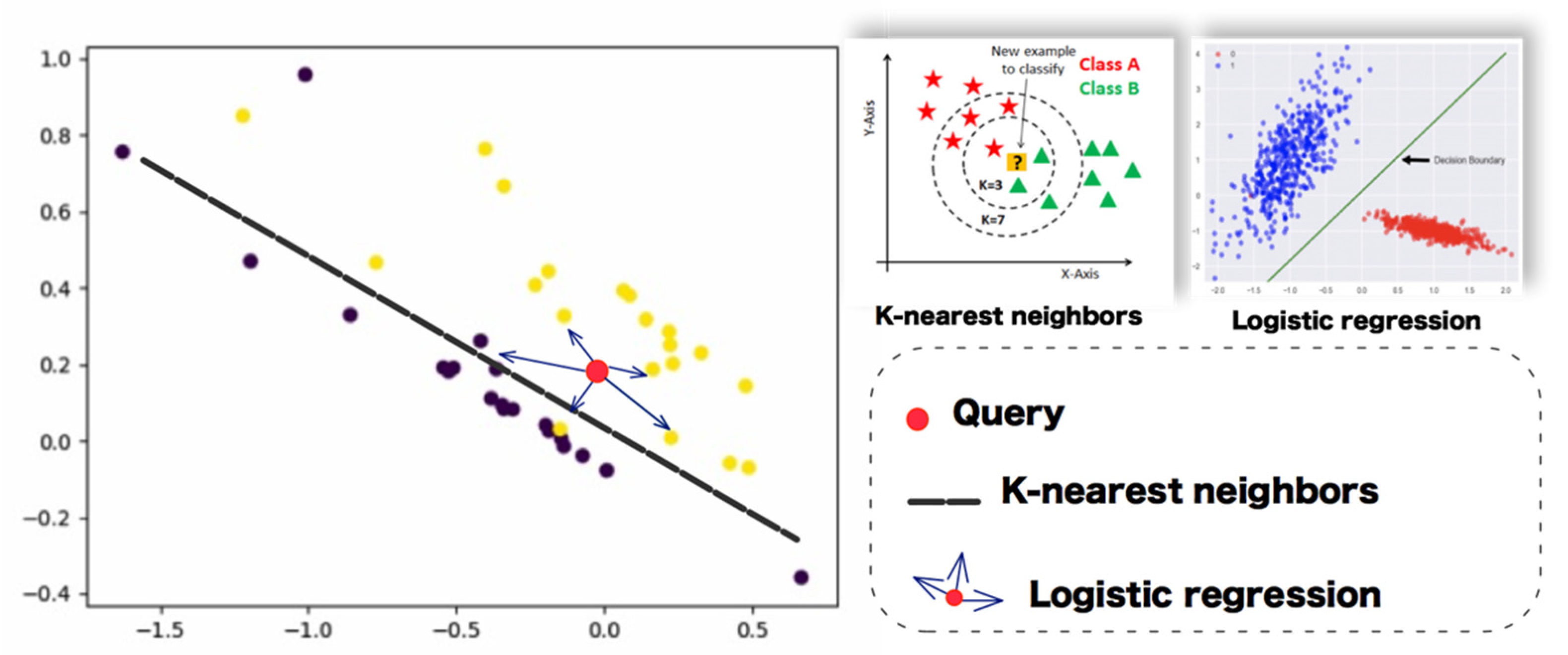

5.3. Contrastive Experiment of Features Extracted from Pre-Trained Models for the Retrieval Task

5.4. Comparison with the State-of-the-Art Methods

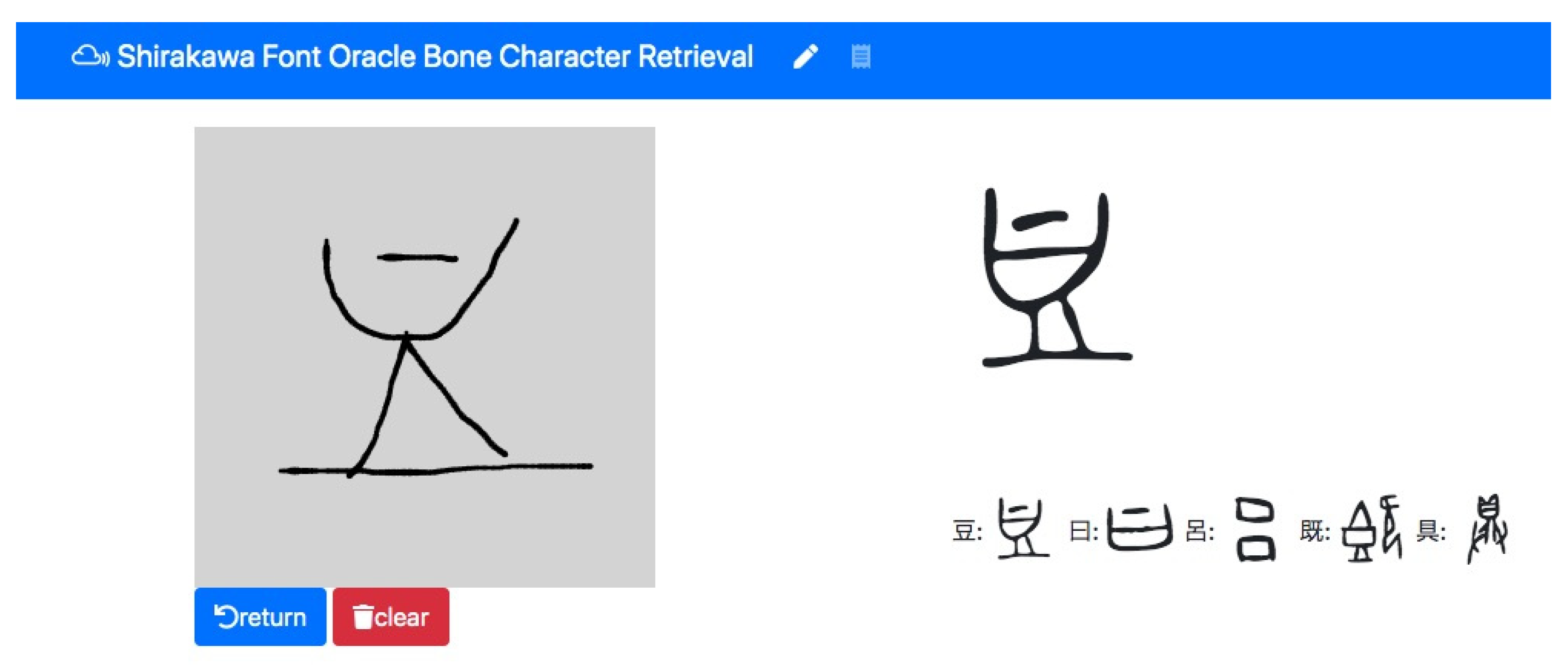

5.5. Demo Application Implementation

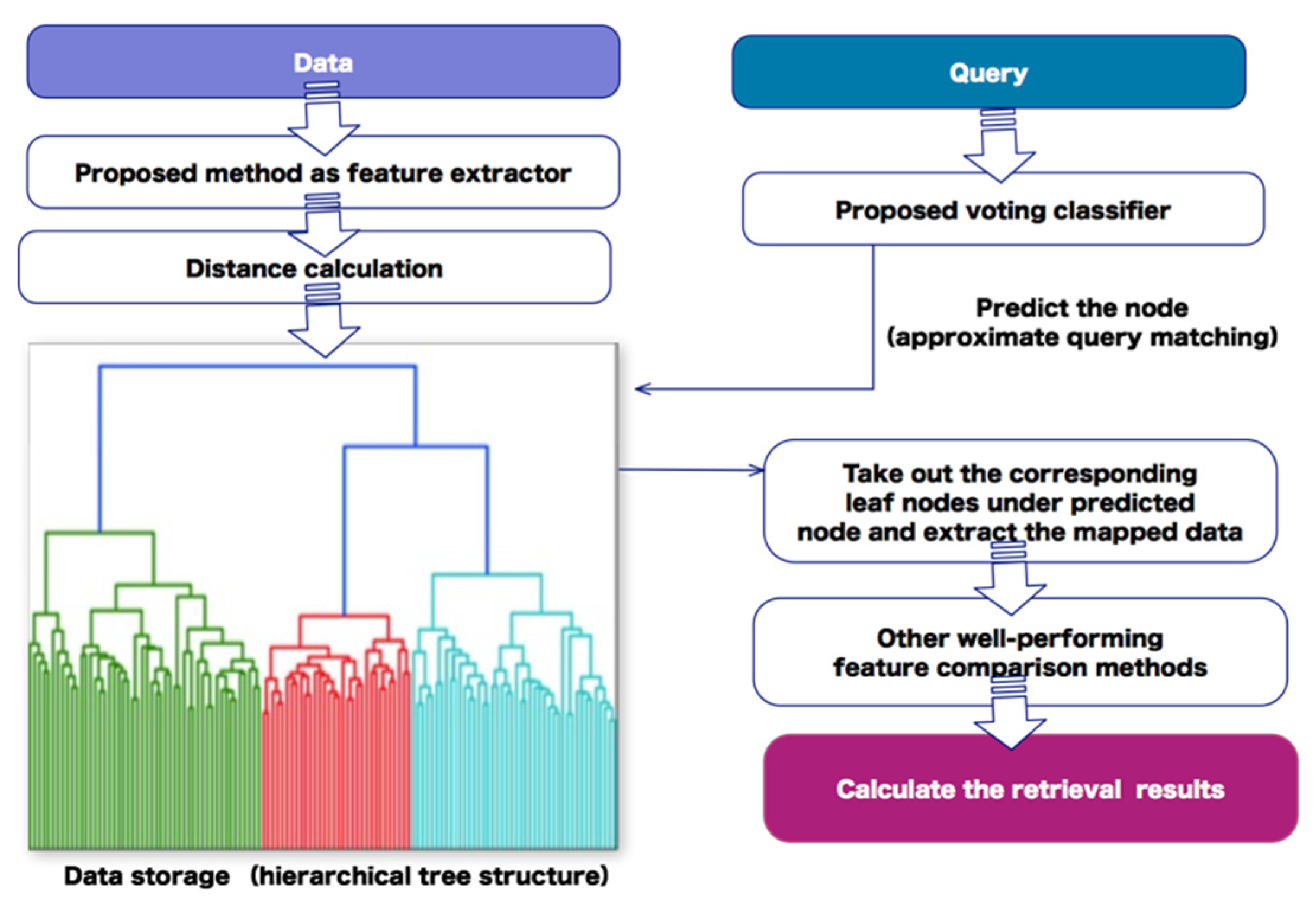

5.6. Other Utilization

6. Discussion

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Layer | Settings |
|---|---|
| Conv2d-10 | kernel_size = (3, 3), stride = (1, 1), padding = (1, 1) |
| Conv2d-11 | kernel_size = (3, 3), stride = (1, 1), padding = (1, 1) |
| ReLU-13 | (-) |
| MaxPool2d-14 | kernel_size = 2, stride = 2, padding = 0, dilation = 1 |
| Conv2d-15 | kernel_size = (3, 3), stride = (1, 1), padding = (1, 1) |
| BatchNorm2d-16 | eps = 1 × 10⁻⁵, momentum = 0.1 |
| ReLU-17 | (-) |
| MaxPool2d-18 | kernel_size = 2, stride = 2, padding = 0, dilation = 1 |
| Conv2d-19 | kernel_size = (3, 3), stride = (1, 1), padding = (1, 1) |
| BatchNorm2d-20 | eps = 1 × 10⁻⁵, momentum = 0.1 |
| ReLU-21 | (-) |
| MaxPool2d-22 | kernel_size = 2, stride = 2, padding = 0, dilation = 1 |
| Conv2d-23 | kernel_size = (3, 3), stride = (1, 1), padding = (1, 1) |
| BatchNorm2d-24 | eps = 1 × 10⁻⁵, momentum = 0.1 |
| ReLU-25 | (-) |
| MaxPool2d-26 | kernel_size = 2, stride = 2, padding = 0, dilation = 1 |
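To make the effect of these settings concrete, the sketch below traces the spatial size of a feature map through the convolution and pooling layers listed above, using the standard floor-mode output-size formula for such layers. The input size of 64 pixels is a hypothetical value chosen for illustration, since the table does not state the input resolution, and channel counts are omitted for the same reason. Batch normalization and ReLU layers do not change the spatial size and are skipped.

```python
def output_size(size, kernel_size, stride=1, padding=0, dilation=1):
    """Spatial output size of a convolution or max-pooling layer (floor mode)."""
    return (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# Layers from the table that affect spatial size, in table order.
CONV = dict(kernel_size=3, stride=1, padding=1)
POOL = dict(kernel_size=2, stride=2, padding=0, dilation=1)
LAYERS = [
    ("Conv2d-10", CONV),
    ("Conv2d-11", CONV),
    ("MaxPool2d-14", POOL),
    ("Conv2d-15", CONV),
    ("MaxPool2d-18", POOL),
    ("Conv2d-19", CONV),
    ("MaxPool2d-22", POOL),
    ("Conv2d-23", CONV),
    ("MaxPool2d-26", POOL),
]


def trace_sizes(input_size):
    """Return the spatial size after each listed layer."""
    sizes = []
    size = input_size
    for name, settings in LAYERS:
        size = output_size(size, **settings)
        sizes.append((name, size))
    return sizes


if __name__ == "__main__":
    # 64 is an assumed input resolution, not a value taken from the paper.
    for name, size in trace_sizes(64):
        print(f"{name}: {size} x {size}")
```

The 3 × 3 convolutions with padding 1 preserve the spatial size, so only the four pooling layers reduce it, each by a factor of two.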

Appendix B

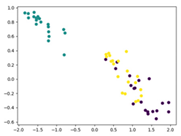

| Epoch | Training Domain | Test Domain |
|---|---|---|
| 1 |  |  |
| 50 |  |  |
| 200 |  |  |
| 500 |  |  |

Appendix C

References

- ETRVSCA Sans-Font Typeface. Available online: https://arro.anglia.ac.uk/id/eprint/705654/ (accessed on 19 September 2021).

- The Shirakawa Shizuka Institute of East Asian Characters and Culture, Shirakawa Font Project. Available online: http://www.ritsumei.ac.jp/acd/re/k-rsc/sio/shirakawa/index.html (accessed on 19 February 2021).

- MERO_HIE Hieroglyphics Font. Available online: https://www.dafont.com/meroitic-hieroglyphics.font (accessed on 9 October 2021).

- Aboriginebats Font. Available online: https://www.dafont.com/aboriginebats.font (accessed on 9 October 2021).

- Narang, S.R.; Jindal, M.K.; Sharma, P. Devanagari ancient character recognition using HOG and DCT features. In Proceedings of the 2018 Fifth International Conference on Parallel, Distributed and Grid Computing (PDGC), Solan, India, 20–22 December 2018; IEEE: Piscataway, NJ, USA, 2019; pp. 215–220.

- Vellingiriraj, E.K.; Balamurugan, M.; Balasubramanie, P. Information extraction and text mining of Ancient Vattezhuthu characters in historical documents using image zoning. In Proceedings of the 2016 International Conference on Asian Language Processing (IALP), Tainan, Taiwan, 21–23 November 2016; IEEE: Piscataway, NJ, USA, 2017; pp. 37–40.

- Das, A.; Patra, G.R.; Mohanty, M.N. LSTM based odia handwritten numeral recognition. In Proceedings of the 2020 International Conference on Communication and Signal Processing (ICCSP), Tamilnadu, India, 28–30 July 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 0538–0541.

- Jayanthi, N.; Indu, S.; Hasija, S.; Tripathi, P. Digitization of ancient manuscripts and inscriptions - A review. In Proceedings of the International Conference on Advances in Computing and Data Sciences, Ghaziabad, India, 11–12 November 2016; pp. 605–612.

- Rajakumar, S.; Bharathi, V.S. Century identification and recognition of ancient Tamil character recognition. Int. J. Comput. Appl. 2011, 26, 32–35.

- Romulus, P.; Maraden, Y.; Purnamasari, P.D.; Ratna, A.A. An analysis of optical character recognition implementation for ancient Batak characters using K-nearest neighbors principle. In Proceedings of the 2015 International Conference on Quality in Research (QiR), Lombok, Indonesia, 1–13 August 2015; IEEE: Piscataway, NJ, USA, 2016; pp. 47–50.

- Yue, J.; Li, Z.; Liu, L.; Fu, Z. Content-based image retrieval using color and texture fused features. Math. Comput. Model. 2011, 54, 1121–1127.

- Chalechale, A.; Naghdy, G.; Mertins, A. Sketch-based image matching using angular partitioning. IEEE Trans. Syst. Man Cybern. 2005, 35, 28–41.

- Riemenschneider, H.; Donoser, M.; Bischof, H. Image retrieval by shape-focused sketching of objects. In Proceedings of the 2011 Computer Vision Winter Workshop (CVWW), Mitterberg, Austria, 2–4 February 2011.

- Bhattacharjee, S.D.; Yuan, J.; Hong, W.; Ruan, X. Query adaptive instance search using object sketches. In Proceedings of the 2016 ACM Multimedia, Amsterdam, The Netherlands, 15–19 October 2016.

- Zhao, W.; Zhou, D.; Qiu, X.; Jiang, W. Compare the performance of the models in art classification. PLoS ONE 2021, 16, e0248414.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16 × 16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.

- Sung, F.; Yang, Y.; Zhang, L.; Xiang, T.; Torr, P.H.; Hospedales, T.M. Learning to compare: Relation network for few-shot learning. In Proceedings of the 2018 Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; IEEE: Piscataway, NJ, USA, 2018.

- Vinyals, O.; Blundell, C.; Lillicrap, T.; Wierstra, D. Matching networks for one shot learning. In Proceedings of the Advances in Neural Information Processing Systems, Barcelona, Spain, 5–10 December 2016.

- Koch, G.; Zemel, R.; Salakhutdinov, R. Siamese neural networks for one-shot image recognition. In Proceedings of the ICML Deep Learning Workshop, Lille, France, 6–11 July 2015.

- Snell, J.; Swersky, K.; Zemel, R.S. Prototypical networks for few-shot learning. arXiv 2017, arXiv:1703.05175.

- Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. In Proceedings of the International Conference on Machine Learning, Sydney, Australia, 6–11 August 2017.

- Patacchiola, M.; Turner, J.; Crowley, E.J.; O’Boyle, M.; Storkey, A. Bayesian meta-learning for the few-shot setting via deep kernels. In Proceedings of the Advances in Neural Information Processing, Online, 6–12 December 2020.

- Pytorch Implementation of DKT. Available online: https://github.com/BayesWatch/deep-kernel-transfer (accessed on 10 November 2021).

- Mikołajczyk, A.; Grochowski, M. Data augmentation for improving deep learning in image classification problem. In Proceedings of the 2018 International Interdisciplinary PhD Workshop (IIPhDW), Świnoujście, Poland, 9–12 May 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 117–122.

- Ono, T.; Hattori, S.; Hasegawa, H.; Akamatsu, S.I. Digital mapping using high resolution satellite imagery based on 2D affine projection model. Int. Arch. Photogramm. Remote Sens. 2000, 33, 672–677.

- Golubitsky, O.; Mazalov, V.; Watt, S.M. Toward affine recognition of handwritten mathematical characters. In Proceedings of the 9th IAPR International Workshop on Document Analysis Systems, Boston, MA, USA, 26–29 July 2020; pp. 35–42.

- Jaderberg, M.; Simonyan, K.; Zisserman, A.; Kavukcuoglu, K. Spatial transformer networks. arXiv 2015, arXiv:1506.02025.

- Iamsa-at, S.; Horata, P. Handwritten character recognition using histograms of oriented gradient features in deep learning of artificial neural network. In Proceedings of the 2013 International Conference on IT Convergence and Security (ICITCS), Macau, China, 16–18 December 2013; IEEE: Piscataway, NJ, USA, 2013; pp. 1–5.

- Dou, T.; Zhang, L.; Zheng, H.; Zhou, W. Local and non-local deep feature fusion for malignancy characterization of hepatocellular carcinoma. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention, Granada, Spain, 16–20 September 2018; Springer: Berlin/Heidelberg, Germany, 2018; pp. 472–479.

- Huang, S.; Xu, H.; Xia, X.; Yang, F.; Zou, F. Multi-feature fusion of convolutional neural networks for fine-grained ship classification. J. Intell. Fuzzy Syst. 2019, 37, 125–135.

- Omniglot - The Encyclopedia of Writing Systems and Languages. Available online: https://omniglot.com/index.htm (accessed on 9 October 2021).

- Emnist. Available online: https://www.tensorflow.org/datasets/catalog/emnist (accessed on 9 October 2021).

- Oracle Bone Script. Available online: https://omniglot.com/chinese/jiaguwen.htm (accessed on 9 October 2021).

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Zhang, T.Y.; Suen, C.Y. A fast parallel algorithm for thinning digital patterns. Commun. ACM 1984, 27, 236–239.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the International Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Shirakawa, S. Kokotsu kinbungaku ronso. In The Collection of Shirakawa Shizuka; Heibonsha: Tokyo, Japan, 2008. (In Japanese)

- Li, K.; Batjargal, B.; Maeda, A. Character segmentation in Asian collector’s seal imprints: An attempt to retrieval based on ancient character typeface. arXiv 2021, arXiv:2003.00831.

| Script | Method | Task and Data Availability |
|---|---|---|
| Devanagari script | Naive Bayes, RBF-SVM, and decision tree using HOG and DCT features [5] | 33 classes and 5484 characters for training; dataset is unavailable |
| Tamil character | Fuzzy median filter for noise removal, followed by a three-layer neural network [9] | Total class number is unknown; dataset is unavailable |
| Batak script | K-Nearest Neighbors [10] | Total class number is unknown; dataset is unavailable |
| Vattezhuthu character | Image Zoning [6] | 237 classes and 5000 characters for training; dataset is unavailable |
| Odia numbers | LSTM [7] | 10 classes and 5166 characters for training; dataset is unavailable |

| Method | Average |
|---|---|
| MatchingNet (Vinyals et al., 2016) [18] | 75.01 |
| ProtoNet (Snell et al., 2017) [20] | 72.77 ± 0.24 |
| RelationNet (Sung et al., 2018) [17] | 75.62 |
| Our proposed method | 74.00 |

| Methods | Number of Samples in Support Set | Average Score (50 Dimensions) | Average Score (500 Dimensions) | Average Score (1000 Dimensions) |
|---|---|---|---|---|
| K-nearest neighbors | one shot | 0.74 | 0.5 | 0.66 |
| K-nearest neighbors | five shots | 0.78 | 0.48 | 0.8 |
| K-nearest neighbors | ten shots | 0.80 | 0.84 | 0.82 |
| Logistic regression | one shot | 0.64 | 0.48 | 0.68 |
| Logistic regression | five shots | 0.78 | 0.74 | 0.92 |
| Logistic regression | ten shots | 0.94 | 0.72 | 0.90 |
| Ensemble learning (proposed method) | one shot | 0.68 | 0.64 | 0.74 |
| Ensemble learning (proposed method) | five shots | 0.76 | 0.72 | 0.83 |
| Ensemble learning (proposed method) | ten shots | 0.88 | 0.82 | 0.86 |

| Number of Classification Categories | P@1 | P@5 | P@10 |
|---|---|---|---|
| 20 | 0.55 | 0.85 | 0.9 |
| 30 | 0.3 | 0.83 | 0.9 |
| 47 * | 0.28 | 0.65 | 0.76 |
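The scores above grow with k, which is consistent with reading P@k as the fraction of queries whose correct class appears among the top k ranked candidates. That reading is an assumption, since the metric definition is not given here. Under it, the score can be computed as in the following sketch. The function name `hit_rate_at_k` and the toy rankings are hypothetical.

```python
def hit_rate_at_k(rankings, targets, k):
    """Fraction of queries whose target label is within the top-k ranked labels.

    rankings: one ranked list of candidate labels per query, best first.
    targets:  the correct label for each query.
    """
    if len(rankings) != len(targets):
        raise ValueError("rankings and targets must have the same length")
    hits = sum(1 for ranked, target in zip(rankings, targets)
               if target in ranked[:k])
    return hits / len(targets)


if __name__ == "__main__":
    # Toy example with three candidate classes and four queries.
    rankings = [
        ["a", "b", "c"],
        ["b", "a", "c"],
        ["c", "a", "b"],
        ["a", "c", "b"],
    ]
    targets = ["a", "a", "b", "b"]
    for k in (1, 2, 3):
        print(k, hit_rate_at_k(rankings, targets, k))
```

Under this definition the score can only stay equal or increase as k grows, which matches the pattern in every row of the table.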

| No. | HOG Encoder 1 | Spatial Transformer 2 | Dynamic 3 | Skeletonization 4 | P@1 | P@5 | P@10 |
|---|---|---|---|---|---|---|---|
| 1 | ✔ | ✖ | ✔ | ✖ | 0.19 | 0.43 | 0.63 |
| 2 | ✖ | ✔ | ✔ | ✖ | 0.24 | 0.61 | 0.73 |
| 3 | ✔ | ✔ | ✖ | ✖ | 0.17 | 0.52 | 0.6 |
| 4 | ✔ | ✔ | ✔ | ✖ | 0.28 | 0.65 | 0.76 |
| 5 | ✔ | ✔ | ✔ | ✔ | 0.20 | 0.26 | 0.41 |

| Model | Feature Dimension | MRR Score |
|---|---|---|
| VGG19 [36] | 1000 | 0.0580 |
| ResNet50 [37] | 1000 | 0.0563 |
| ViT [16] | 1000 | 0.0586 |
| Our proposed method | 1000 | 0.1943 |
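For reference, the mean reciprocal rank (MRR) averages, over all queries, the reciprocal of the rank at which the first relevant item is retrieved. The sketch below implements this standard definition for the case of one relevant item per query. It is an illustration only, and the exact retrieval protocol behind the scores in the table is not reproduced. The function name `mean_reciprocal_rank` and the toy data are hypothetical.

```python
def mean_reciprocal_rank(rankings, targets):
    """Average of 1 / rank of the target item over all queries.

    rankings: one ranked list of retrieved items per query, best first.
    targets:  the single relevant item for each query.
    A query whose target is not retrieved contributes 0.
    """
    if len(rankings) != len(targets):
        raise ValueError("rankings and targets must have the same length")
    total = 0.0
    for ranked, target in zip(rankings, targets):
        if target in ranked:
            total += 1.0 / (ranked.index(target) + 1)  # ranks start at 1
    return total / len(targets)


if __name__ == "__main__":
    rankings = [
        ["a", "b", "c"],  # target "a" at rank 1
        ["b", "a", "c"],  # target "a" at rank 2
        ["c", "a", "b"],  # target "b" at rank 3
        ["a", "c", "b"],  # target "b" at rank 3
    ]
    targets = ["a", "a", "b", "b"]
    print(mean_reciprocal_rank(rankings, targets))
```

Because the reciprocal falls off quickly with rank, MRR mainly rewards placing the relevant item at or near the top of the list.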

| Models: | Our Proposed Method | Pre-Trained ResNet50 |
|---|---|---|
| Query: |  |  |
| Dimensions: | 500 | 1000 |
| Top retrieval result: |  |  |

| Method | One-Shot (Reported) | One-Shot (Our Re-Tested Results) | Five-Shot (Reported) | Five-Shot (Our Re-Tested Results) |
|---|---|---|---|---|
| MAML (Finn et al., 2017) | 72.04 ± 0.83 | 71.12 ± 0.84 | 88.24 ± 0.56 | 88.80 ± 0.24 |
| DKT + BNCosSim (Patacchiola et al., 2020) | 75.40 ± 1.10 | 74.90 ± 0.71 | 90.30 ± 0.49 | 90.11 ± 0.20 |
| DKT + CosSim (Patacchiola et al., 2020) | 73.06 ± 2.36 | 76.00 ± 0.42 | 88.10 ± 0.78 | 89.31 ± 0.19 |
| Our proposed method | (-) | 73.33 ± 2.67 | (-) | 83.31 ± 0.87 |

| Task | Our Proposed Method | Model-Based Meta-Learning Methods | Metric-Learning-Based Methods | CNN-Based and Transformer-Based Pre-Trained Models |
|---|---|---|---|---|
| Five-way classification tasks on benchmark dataset | Performance is below that of the model-based meta-learning methods and is relatively unstable, although the best results are relatively good. | The Bayesian framework Deep Kernel Transfer (DKT) proposed by Patacchiola et al. [22] has the best results but consumes more training resources. | RelationNet (Sung et al., 2018) has good results, but it is not as good as the model-based meta-learning methods on the cross-domain character image classification task. | These models are not a common choice for few-shot learning and were not evaluated in this research. |
| Used as feature extractor for character images | Because it focuses on geometric feature processing and feature extraction, our model performs better on character data when used as a feature extractor. | Because descriptions and cases of feature extraction with such methods are insufficient, this research did not conduct evaluation experiments with them. | Because they learn from image pairs, methods such as RelationNet and MatchingNet are more suitable as feature extractors for verifying the authenticity of handwritten characters. ProtoNet (Snell et al., 2017) is more suitable as a feature extractor for retrieval, but for character data, optimizations that focus on geometric features are recommended. | These models are trained on large-scale datasets such as ImageNet, which is very effective for extracting texture and color features from real-life images. However, their performance needs to be improved when extracting features from character data with obvious geometric features. |

| Model | Feature Dimension | MRR Score |
|---|---|---|
| RelationNet (Sung et al., 2018) | 1600 | 0.1022 |
| MatchingNet (Vinyals et al., 2016) | 1600 | 0.0605 |
| ProtoNet (Snell et al., 2017) | 1600 | 0.0817 |
| Our proposed method | 1000 | 0.1943 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Li, K.; Batjargal, B.; Maeda, A. A Prototypical Network-Based Approach for Low-Resource Font Typeface Feature Extraction and Utilization. Data 2021, 6, 134. https://doi.org/10.3390/data6120134
