Author Contributions

Conceptualization, A.H.; methodology, A.H.; software, A.H.; validation, A.H.; formal analysis, A.H.; investigation, A.H. and G.F.V.; resources, A.H. and O.G.-L.; data curation, A.H. and O.G.-L.; writing—original draft preparation, A.H.; writing—review and editing, A.H., G.F.V., C.D. and O.G.-L.; visualization, A.H.; supervision, A.H.; project administration, A.H.; funding acquisition, O.G.-L. All authors have read and agreed to the published version of the manuscript.

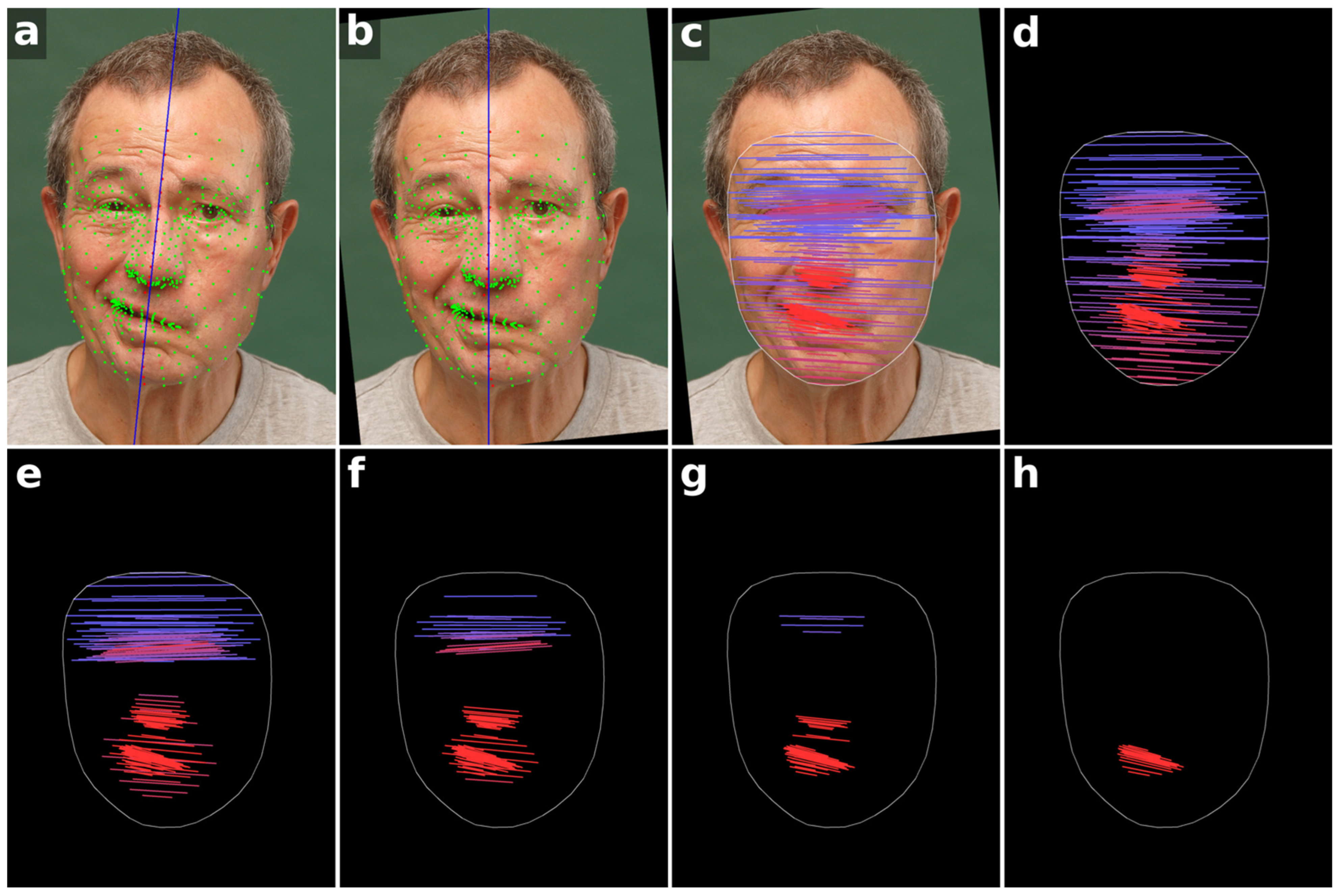

Figure 1.

Workflow of the facial symmetry analysis method. (a) All 478 facial landmarks are initially detected (green and red points). A midline is estimated from the central red landmarks (blue line). (b) The image is rotated so that this midline becomes vertical, aligning the head horizontally. (c) Landmark pairs on the left and right sides of the face relative to the vertical midline are connected by lines, with color indicating the angle relative to the horizontal, transitioning from blue to red as the angle increases. (d) Visualization of the angle map without the patient photo, showing 225 landmark pairs. (e–h) Angle maps with progressively reduced landmark sets of 140, 91, 50, and 21 pairs, highlighting that the most informative features are concentrated in the mouth, nose and eye regions. Written informed consent for publication of these identifiable photographs was obtained from the patient.
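
The geometric steps in panels (a) to (c) can be illustrated with a minimal sketch. It assumes a midline defined by just two points (the caption describes a midline estimated from several central landmarks), uses invented toy coordinates, and summarizes the pair angles by their mean, which is an assumption and not a definition taken from this caption. The function names are hypothetical.

```python
import math

def rotate(point, theta):
    """Rotate a 2D point about the origin by theta radians."""
    x, y = point
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y)

def align_to_midline(pairs, mid_top, mid_bottom):
    """Rotate all landmark pairs so the line mid_top -> mid_bottom becomes vertical."""
    dx = mid_bottom[0] - mid_top[0]
    dy = mid_bottom[1] - mid_top[1]
    theta = math.atan2(dx, dy)  # rotating by theta maps (dx, dy) onto the y-axis
    return [(rotate(left, theta), rotate(right, theta)) for left, right in pairs]

def pair_angles_deg(pairs):
    """Angle of each left-right connecting line relative to the horizontal, in degrees."""
    return [
        math.degrees(math.atan2(abs(r[1] - l[1]), abs(r[0] - l[0])))
        for l, r in pairs
    ]

# Toy face (not study data): the first pair is level, the second has one side
# displaced vertically by 1 unit over a horizontal distance of 4 units.
upright_pairs = [((-3.0, 2.0), (3.0, 2.0)), ((-2.0, 8.0), (2.0, 9.0))]

# Simulate a 10 degree head tilt, then undo it via the midline.
tilt = math.radians(10.0)
tilted_pairs = [(rotate(l, tilt), rotate(r, tilt)) for l, r in upright_pairs]
mid_top, mid_bottom = rotate((0.0, 0.0), tilt), rotate((0.0, 10.0), tilt)

angles = pair_angles_deg(align_to_midline(tilted_pairs, mid_top, mid_bottom))
mean_angle = sum(angles) / len(angles)  # assumed summary value, see lead-in
print([round(a, 2) for a in angles], round(mean_angle, 2))
```

Because the pair angles are measured after the midline has been made vertical, the simulated head tilt does not change them in this idealized setting.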

Figure 2.

Discriminatory power of landmark sets across Stennert index grades. Boxplots show the distribution of ScoreAI for each patient grouped by Stennert index levels at rest (top) and during voluntary movement (bottom) for five different landmark sets (225, 140, 91, 50, 21 pairs). The mean is marked with a blue diamond. The Kruskal–Wallis H-test statistic and associated p-value for each landmark set are indicated above the respective groups, reflecting the ability of each configuration to differentiate clinical severity. Larger H-values correspond to stronger separation between Stennert index grades, highlighting that the 91-pair configuration achieves optimal discriminatory performance.
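
For readers unfamiliar with the statistic shown above each group, the following is a minimal pure-Python sketch of the Kruskal–Wallis H-test with the standard tie correction. The group values are toy numbers, not study data, and the helper names are hypothetical. The p-value helper uses the closed-form chi-square survival function, which holds only for an even number of degrees of freedom; that covers five grades (4 degrees of freedom) and seven grades (6 degrees of freedom).

```python
import math

def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H statistic for a list of samples."""
    pooled = [v for g in groups for v in g]
    n_total = len(pooled)
    ranks = average_ranks(pooled)
    weighted, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        weighted += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n_total * (n_total + 1)) * weighted - 3.0 * (n_total + 1)
    counts = {}
    for v in pooled:
        counts[v] = counts.get(v, 0) + 1
    tie_sum = sum(t ** 3 - t for t in counts.values())
    correction = 1.0 - tie_sum / (n_total ** 3 - n_total)
    if correction == 0:
        raise ValueError("all values are identical; H is undefined")
    return h / correction

def chi2_sf_even_df(x, df):
    """Chi-square survival function, valid only for even df (closed form)."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive even df")
    half = x / 2.0
    term, total = 1.0, 1.0
    for j in range(1, df // 2):
        term *= half / j
        total += term
    return math.exp(-half) * total

# Toy example: three fully separated groups of three values each.
toy_groups = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
h = kruskal_wallis_h(toy_groups)
p = chi2_sf_even_df(h, df=len(toy_groups) - 1)
print(round(h, 2), round(p, 4))
```

A larger H for the same number of groups means the rank sums differ more between groups, which is the sense in which the caption uses H to compare landmark sets.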

Figure 3.

Distribution of ScoreAI across facial expressions and Stennert index levels. Top: Boxplots of ScoreAI for all nine expressions and the overall mean (“all”) for five landmark sets (225, 140, 91, 50, 21 pairs) across all patients, illustrating overall variability, with each set indicated by a different shade of gray. The mean is marked with a blue diamond. Middle: Boxplots for the 91-pair configuration, stratified by Stennert index at rest (levels 0–4), showing the distribution of ScoreAI across the nine expressions and overall mean for patients with available clinical scores. Bottom: Boxplots for the 91-pair configuration, stratified by Stennert index during voluntary movement (levels 0–6), displaying the same information. Facial expressions: (1) neutral, (2) gentle eye closure, (3) forced eye closure, (4) frowning/forehead wrinkling, (5) nose wrinkling, (6) closed-mouth stretch, (7) mouth stretch with teeth visible, (8) lip pursing, and (9) downward movement of the mouth corners.

Figure 4.

Visualization of facial symmetry in a single patient across multiple time points and nine standardized facial expressions. Each row corresponds to one time point, ordered chronologically (a–d), with the ScoreAI of all 91 landmark pairs displayed above each row. Each column represents one facial expression (1–9), and the associated angle map is color-coded from blue (0° deviation) to red (≥5° deviation) to indicate the degree of asymmetry between paired landmarks. Facial expressions: (1) neutral, (2) gentle eye closure, (3) forced eye closure, (4) frowning/forehead wrinkling, (5) nose wrinkling, (6) closed-mouth stretch, (7) mouth stretch with teeth visible, (8) lip pursing, and (9) downward movement of the mouth corners.
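
The color coding saturates at 5°, so every deviation of 5° or more is drawn in the same red. A minimal sketch of such a clamped mapping follows; the linear blue-to-red interpolation in RGB is an assumption, since the caption specifies only the two endpoints, and `angle_to_rgb` is a hypothetical name.

```python
def angle_to_rgb(angle_deg, max_deg=5.0):
    """Map an angular deviation to an RGB triple, blue at 0 and red at >= max_deg."""
    t = min(abs(angle_deg), max_deg) / max_deg  # clamp, then normalize to [0, 1]
    return (t, 0.0, 1.0 - t)

for angle in (0.0, 2.5, 5.0, 12.0):
    print(angle, angle_to_rgb(angle))
```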

Figure 5.

Visualization of facial symmetry across patients with peripheral facial palsy, showing extreme and percentile cases for each of the nine standardized facial expressions. Each row (a–e) represents one target group based on ScoreAI values across 91 landmark pairs: maximum, 75th percentile, median, 25th percentile, and minimum. Columns correspond to the nine facial expressions (1–9). The angle maps are color-coded from blue (0° deviation) to red (≥5° deviation), indicating the degree of asymmetry between paired landmarks. Facial expressions: (1) neutral, (2) gentle eye closure, (3) forced eye closure, (4) frowning/forehead wrinkling, (5) nose wrinkling, (6) closed-mouth stretch, (7) mouth stretch with teeth visible, (8) lip pursing, and (9) downward movement of the mouth corners.

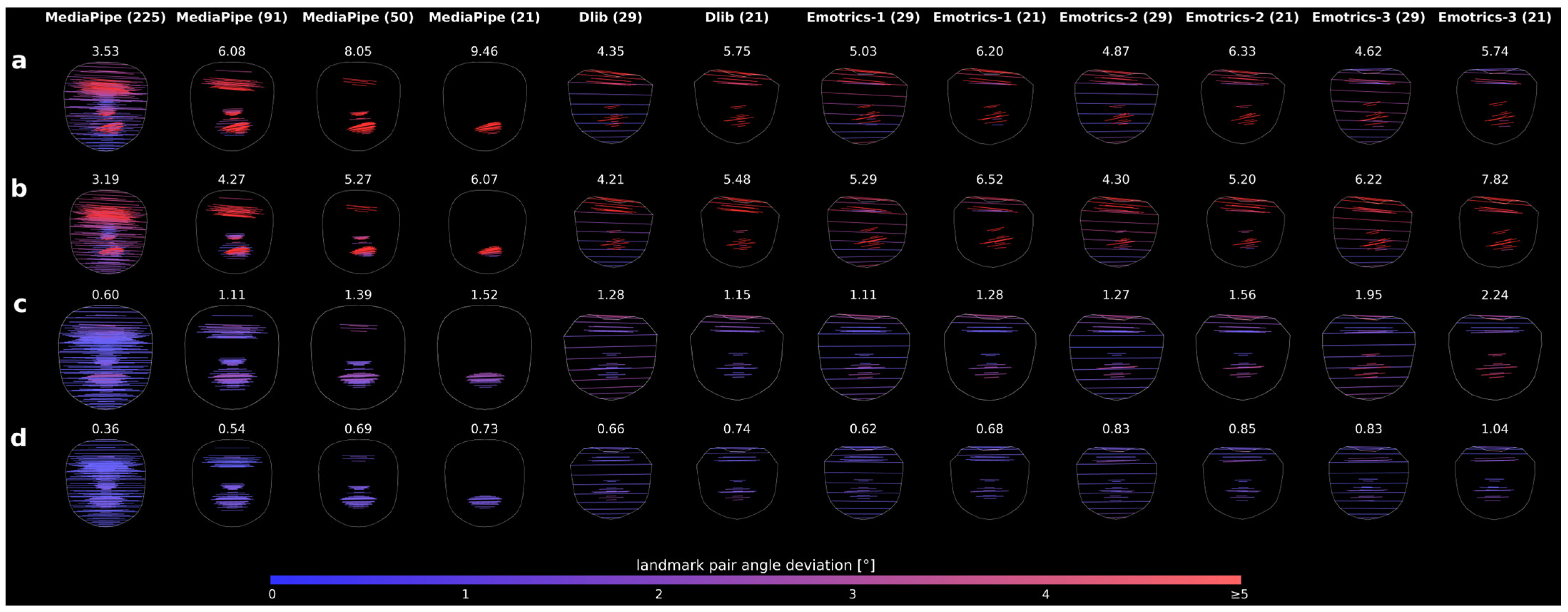

Figure 6.

Comparison of landmark detection AI models using the same original image as in Figure 4, row 7. Rows (a–d) show different time points, and columns show the respective model configurations. The ScoreAI is displayed above each image, and the color bar shows the angular deviation scale from 0° to ≥5°.

Table 1.

Summary statistics of ScoreAI derived from 91 landmark pairs for each of the nine facial expressions and overall average (“all”) across all 405 datasets, as well as region-specific results for the eye, nose and mouth.

| ScoreAI of expressions [°] | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | All |
|---|---|---|---|---|---|---|---|---|---|---|
| mean | 1.24 | 1.26 | 1.61 | 1.36 | 2.33 | 2.27 | 2.21 | 1.61 | 1.55 | 1.72 |
| SD | 0.86 | 0.81 | 1.18 | 0.79 | 1.67 | 1.88 | 1.77 | 0.99 | 1.11 | 0.96 |
| variance | 0.74 | 0.66 | 1.39 | 0.63 | 2.78 | 3.53 | 3.15 | 0.99 | 1.24 | 0.92 |
| median | 0.99 | 1.07 | 1.30 | 1.14 | 1.86 | 1.60 | 1.59 | 1.34 | 1.28 | 1.48 |
| max | 5.31 | 5.93 | 7.86 | 4.25 | 7.95 | 10.15 | 10.16 | 6.16 | 8.11 | 5.75 |
| min | 0.22 | 0.23 | 0.26 | 0.25 | 0.29 | 0.18 | 0.20 | 0.21 | 0.26 | 0.44 |
| region-specific eye analysis (24 landmark pairs) | | | | | | | | | | |
| mean | 1.23 | 1.47 | 1.58 | 1.82 | 1.56 | 1.49 | 1.55 | 1.46 | 1.35 | 1.50 |
| SD | 0.77 | 1.0 | 1.08 | 1.2 | 1.07 | 1.09 | 1.11 | 0.97 | 0.88 | 0.70 |
| region-specific nose analysis (23 landmark pairs) | | | | | | | | | | |
| mean | 0.83 | 0.81 | 1.01 | 0.85 | 1.77 | 1.54 | 1.69 | 1.06 | 1.07 | 1.18 |
| SD | 0.67 | 0.59 | 0.91 | 0.59 | 1.46 | 1.41 | 1.52 | 0.85 | 0.94 | 0.78 |
| region-specific mouth analysis (44 landmark pairs) | | | | | | | | | | |
| mean | 1.45 | 1.38 | 1.94 | 1.38 | 3.04 | 3.07 | 2.85 | 1.99 | 1.92 | 2.12 |
| SD | 1.31 | 1.19 | 1.78 | 1.12 | 2.57 | 2.9 | 2.65 | 1.51 | 1.76 | 1.42 |

Table 2.

ScoreAI (±standard deviation) derived from 91 landmark pairs, stratified by Stennert index for rest (0–4) and movement (0–6) across nine facial expressions. Higher ScoreAI indicates lower facial symmetry between the affected and unaffected sides. A trend of increasing ScoreAI with increasing Stennert index can be observed, particularly in nose wrinkling (expression 5) and mouth-related expressions (expressions 6 and 7) for movement, reflecting the association between higher facial palsy severity and reduced symmetry.

| ScoreAI of expressions [°] | n | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|---|
| Stennert index rest | | | | | | | | | | |
| 0 | 99 | 0.91 ± 0.51 | 1.00 ± 0.50 | 1.09 ± 0.66 | 1.01 ± 0.53 | 1.34 ± 0.86 | 1.14 ± 1.02 | 1.11 ± 0.88 | 1.16 ± 0.60 | 1.10 ± 0.74 |
| 1 | 53 | 1.05 ± 0.54 | 1.09 ± 0.66 | 1.63 ± 1.08 | 1.22 ± 0.61 | 2.23 ± 1.60 | 1.91 ± 1.32 | 1.88 ± 1.21 | 1.46 ± 0.92 | 1.40 ± 0.76 |
| 2 | 59 | 1.33 ± 0.72 | 1.25 ± 0.61 | 1.66 ± 1.18 | 1.51 ± 0.87 | 2.90 ± 1.66 | 2.77 ± 1.71 | 3.00 ± 1.74 | 1.84 ± 0.94 | 1.63 ± 0.90 |
| 3 | 39 | 1.54 ± 0.87 | 1.46 ± 0.62 | 2.03 ± 1.20 | 1.67 ± 0.69 | 3.37 ± 1.39 | 3.62 ± 1.47 | 3.31 ± 1.68 | 1.88 ± 0.90 | 1.85 ± 1.07 |
| 4 | 16 | 2.43 ± 1.49 | 2.37 ± 1.37 | 3.09 ± 1.59 | 2.23 ± 0.99 | 4.22 ± 2.02 | 4.37 ± 2.42 | 3.77 ± 2.05 | 2.25 ± 1.29 | 2.64 ± 1.99 |
| Stennert index movement | | | | | | | | | | |
| 0 | 58 | 0.86 ± 0.48 | 0.91 ± 0.46 | 0.97 ± 0.57 | 0.91 ± 0.50 | 1.05 ± 0.47 | 0.92 ± 0.51 | 0.80 ± 0.43 | 1.06 ± 0.51 | 0.91 ± 0.46 |
| 1 | 27 | 0.96 ± 0.32 | 0.98 ± 0.34 | 1.17 ± 0.59 | 1.13 ± 0.48 | 1.54 ± 0.69 | 1.19 ± 0.55 | 1.15 ± 0.49 | 1.19 ± 0.53 | 1.24 ± 0.69 |
| 2 | 35 | 1.04 ± 0.42 | 1.02 ± 0.63 | 1.23 ± 0.70 | 1.23 ± 0.66 | 1.54 ± 0.95 | 1.12 ± 0.76 | 1.31 ± 0.74 | 1.32 ± 0.70 | 1.10 ± 0.45 |
| 3 | 21 | 1.23 ± 0.74 | 1.35 ± 0.64 | 1.62 ± 0.81 | 1.31 ± 0.66 | 2.27 ± 1.07 | 2.11 ± 1.07 | 2.07 ± 0.87 | 1.49 ± 0.73 | 1.35 ± 0.59 |
| 4 | 39 | 1.41 ± 0.86 | 1.21 ± 0.69 | 1.61 ± 0.93 | 1.66 ± 0.86 | 2.94 ± 1.66 | 2.53 ± 1.70 | 2.59 ± 1.74 | 2.07 ± 1.00 | 1.73 ± 0.95 |
| 5 | 22 | 1.21 ± 0.80 | 1.26 ± 0.65 | 1.63 ± 0.81 | 1.47 ± 0.79 | 2.72 ± 1.31 | 2.82 ± 1.41 | 3.18 ± 1.90 | 1.82 ± 1.10 | 1.76 ± 0.99 |
| 6 | 64 | 1.63 ± 1.09 | 1.66 ± 0.94 | 2.46 ± 1.60 | 1.62 ± 0.86 | 3.79 ± 1.82 | 4.05 ± 1.82 | 3.73 ± 1.58 | 1.85 ± 1.04 | 2.09 ± 1.44 |

Table 3.

Spearman’s rank correlation coefficients and corresponding p-values for the association between the Stennert index (rest and movement) and ScoreAI derived from 91 landmark pairs across nine facial expressions in 266 datasets. Positive correlation coefficients indicate that higher clinical severity (higher Stennert index) is associated with higher ScoreAI, reflecting lower facial symmetry.

| Spearman’s rank correlation coefficients of expressions | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | All |
|---|---|---|---|---|---|---|---|---|---|---|
| rest | 0.38 | 0.35 | 0.44 | 0.42 | 0.58 | 0.63 | 0.61 | 0.38 | 0.38 | 0.46 |
| movement | 0.32 | 0.38 | 0.49 | 0.36 | 0.64 | 0.71 | 0.73 | 0.36 | 0.44 | 0.49 |
| p-value | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 |
| region-specific eye analysis (24 landmark pairs) | | | | | | | | | | |
| rest | 0.24 | 0.33 | 0.41 | 0.45 | 0.22 | 0.35 | 0.39 | 0.28 | 0.24 | 0.32 |
| movement | 0.23 | 0.42 | 0.51 | 0.48 | 0.28 | 0.44 | 0.52 | 0.28 | 0.28 | 0.38 |
| p-value | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 |
| region-specific nose analysis (23 landmark pairs) | | | | | | | | | | |
| rest | 0.26 | 0.15 | 0.32 | 0.15 | 0.54 | 0.59 | 0.52 | 0.34 | 0.35 | 0.36 |
| movement | 0.18 | 0.13 | 0.31 | 0.06 | 0.59 | 0.61 | 0.63 | 0.35 | 0.39 | 0.36 |
| p-value rest | <0.001 | 0.013 | <0.001 | 0.013 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 |
| p-value movement | 0.003 | 0.040 | <0.001 | 0.359 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 |
| region-specific mouth analysis (44 landmark pairs) | | | | | | | | | | |
| rest | 0.36 | 0.24 | 0.32 | 0.28 | 0.55 | 0.63 | 0.6 | 0.27 | 0.29 | 0.39 |
| movement | 0.3 | 0.23 | 0.34 | 0.22 | 0.61 | 0.71 | 0.71 | 0.26 | 0.34 | 0.41 |
| p-value | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 | <0.001 |
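
As a reminder of what the coefficients in Table 3 measure, the following minimal sketch computes Spearman’s rank correlation as the Pearson correlation of average ranks, which handles the many ties that an ordinal grade such as the Stennert index produces. The grades and scores are toy values, not study data, and the function names are hypothetical.

```python
import math

def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)

# Toy data: ordinal grades with ties and a score that mostly rises with grade.
toy_grades = [0, 0, 1, 2, 2, 3]
toy_scores = [0.9, 1.1, 1.0, 1.8, 2.4, 2.2]
rho = spearman_rho(toy_grades, toy_scores)

# A strictly increasing relationship gives rho = 1 regardless of spacing.
rho_monotone = spearman_rho([0, 1, 2, 3, 4], [1.0, 1.2, 5.0, 5.1, 40.0])
print(round(rho, 3), round(rho_monotone, 3))
```

Because only ranks enter the computation, the coefficient reflects how consistently ScoreAI rises with the clinical grade and not whether the rise is linear.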

Table 4.

Comparison of landmark-based methods for facial asymmetry across Stennert index grades. ScoreAI, along with range and slope, is shown for rest (Stennert 0–4) and movement (Stennert 0–6) conditions, with methods ranked by slope to indicate the strength of separation between severity levels.

| Ranked method at rest, Stennert 0–4 (LM pairs) | ScoreAI [°] | Range | Slope | Ranked method during facial movement, Stennert 0–6 (LM pairs) | ScoreAI [°] | Range | Slope |
|---|---|---|---|---|---|---|---|
| MediaPipe (21) | 2.82 ± 0.59 | 3.14 | 0.76 | MediaPipe (21) | 2.24 ± 0.49 | 2.58 | 0.42 |
| MediaPipe (50) | 2.47 ± 1.04 | 2.63 | 0.63 | MediaPipe (50) | 1.99 ± 0.86 | 2.14 | 0.35 |
| Emotrics-2 (21) | 2.95 ± 2.22 | 1.99 | 0.47 | Emotrics-1 (21) | 2.80 ± 2.13 | 1.72 | 0.29 |
| MediaPipe (91) | 1.99 ± 1.21 | 1.95 | 0.47 | Emotrics-2 (21) | 2.59 ± 1.93 | 1.68 | 0.26 |
| Emotrics-1 (21) | 3.10 ± 2.34 | 1.76 | 0.41 | MediaPipe (91) | 1.64 ± 1.00 | 1.61 | 0.26 |
| Dlib (21) | 2.42 ± 1.48 | 1.73 | 0.39 | Emotrics-3 (21) | 2.74 ± 2.14 | 1.40 | 0.25 |
| Emotrics-2 (29) | 2.49 ± 2.11 | 1.57 | 0.37 | Emotrics-1 (29) | 2.32 ± 2.03 | 1.34 | 0.23 |
| MediaPipe (140) | 1.69 ± 1.17 | 1.51 | 0.36 | Dlib (21) | 2.12 ± 1.29 | 1.50 | 0.22 |
| Emotrics-3 (21) | 3.01 ± 2.36 | 1.46 | 0.35 | Emotrics-2 (29) | 2.21 ± 1.83 | 1.32 | 0.21 |
| Dlib (29) | 2.13 ± 1.47 | 1.40 | 0.32 | MediaPipe (140) | 1.41 ± 0.96 | 1.29 | 0.20 |
| Emotrics-1 (29) | 2.56 ± 2.24 | 1.38 | 0.32 | Emotrics-3 (29) | 2.27 ± 2.03 | 1.07 | 0.19 |
| Emotrics-3 (29) | 2.48 ± 2.24 | 1.13 | 0.27 | Dlib (29) | 1.89 ± 1.28 | 1.20 | 0.18 |
| MediaPipe (225) | 1.39 ± 1.07 | 1.10 | 0.26 | MediaPipe (225) | 1.18 ± 0.88 | 0.97 | 0.14 |
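
This section does not define how range and slope are computed. The sketch below works under stated assumptions: the per-grade value is the unweighted mean of the nine expression means from the "Stennert index rest" rows of Table 2, range is the difference between the largest and smallest per-grade value, and slope is the ordinary least-squares slope of the per-grade value against the grade. Under these assumptions the results round to the values listed for MediaPipe (91) at rest (1.99, 1.95, 0.47), which suggests but does not prove that this is how the table was built. The variable and function names are hypothetical.

```python
# Mean ScoreAI per expression (1-9) for each Stennert grade at rest, from Table 2.
rest_means = {
    0: [0.91, 1.00, 1.09, 1.01, 1.34, 1.14, 1.11, 1.16, 1.10],
    1: [1.05, 1.09, 1.63, 1.22, 2.23, 1.91, 1.88, 1.46, 1.40],
    2: [1.33, 1.25, 1.66, 1.51, 2.90, 2.77, 3.00, 1.84, 1.63],
    3: [1.54, 1.46, 2.03, 1.67, 3.37, 3.62, 3.31, 1.88, 1.85],
    4: [2.43, 2.37, 3.09, 2.23, 4.22, 4.37, 3.77, 2.25, 2.64],
}

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

grades = sorted(rest_means)
# Assumption: unweighted average over the nine expressions for each grade.
grade_values = [sum(rest_means[g]) / len(rest_means[g]) for g in grades]

overall = sum(grade_values) / len(grade_values)
value_range = max(grade_values) - min(grade_values)
slope = ols_slope(grades, grade_values)
print(round(overall, 2), round(value_range, 2), round(slope, 2))  # 1.99 1.95 0.47
```

A steeper slope means the per-grade ScoreAI rises more per Stennert grade, which is how the table caption uses it to rank methods by separation between severity levels.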

Table 5.

Absolute deviations of the ScoreAI (derived from the 91-pair asymmetry feature vector) from the original images across selected artificial head rotations for all 405 datasets.

| Image rotation [°] | Mean deviation of ScoreAI from original image [°] | Median deviation of ScoreAI from original image [°] | Image rotation [°] | Mean deviation of ScoreAI from original image [°] | Median deviation of ScoreAI from original image [°] |
|---|---|---|---|---|---|
| 1 | 0.15 ± 0.14 | 0.11 | −1 | 0.15 ± 0.14 | 0.11 |
| 5 | 0.18 ± 0.16 | 0.14 | −5 | 0.18 ± 0.17 | 0.13 |
| 10 | 0.21 ± 0.19 | 0.16 | −10 | 0.21 ± 0.18 | 0.16 |
| 15 | 0.24 ± 0.21 | 0.18 | −15 | 0.23 ± 0.21 | 0.18 |
| 20 | 0.27 ± 0.24 | 0.20 | −20 | 0.26 ± 0.23 | 0.19 |
| 25 | 0.29 ± 0.27 | 0.22 | −25 | 0.29 ± 0.26 | 0.22 |