Reflexive Behaviour: How Publication Pressure Affects Research Quality in Astronomy

Abstract

1. Introduction

2. Theoretical Background: Explanations for Misconduct

- (i) Failures at the level of the individual actor (“impure individuals”);

- (ii) Failures at the institutional level (of a particular university or institute);

- (iii) Failures at the level of the structural system of science.

- (1) Bad apple theories;

- (2) General strain theory;

- (3) Organisational culture theories;

- (4) New public management; and

- (5) Rational choice theory.

“Academic misconduct is considered to be the logical behavioral consequence of output-oriented management practices, based on performance incentives.” [44] (p. 1140).

3. Methods

3.1. Sample Selection and Procedure

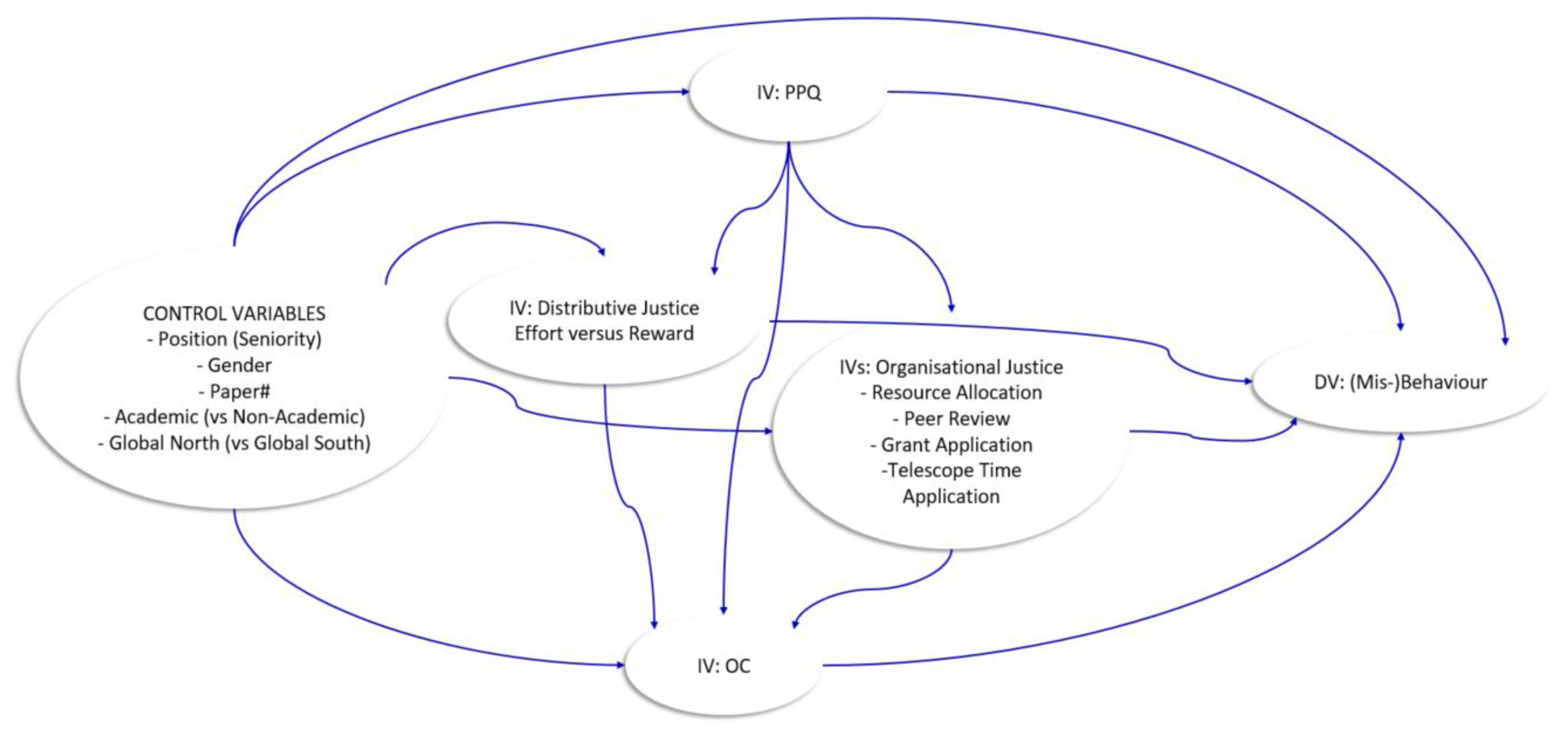

3.2. Instruments

3.2.1. Dependent Variables

- Scientific (Mis-)Behaviour

- Research Quality

3.2.2. Independent Variables

- Perceived Publication Pressure

- Perceived Organisational Justice: Distributive and Procedural Justice

- Perceived Overcommitment

3.2.3. Control Variables

3.3. Research Question and Hypotheses

- (H1): The greater the perceived distributive injustice in astronomy, the greater the likelihood of a scientist observing misbehaviour.

- (H2): The greater the perceived organisational injustice in astronomy, the greater the likelihood of a scientist observing misbehaviour.

- (H3): The greater the perceived publication pressure in astronomy, the greater the likelihood of a scientist observing misbehaviour.

- (H4): Those for whom injustice and publication demands pose a more serious threat to their academic career (e.g., early-career and female researchers in a male-dominated field) will perceive the organisational culture to be more unjust and the publication pressure to be higher, and, consequently, more misbehaviour will occur.

- (H5): Scientific misbehaviour has a negative effect on the research quality in astronomy.

- (H6): The greater the perceived publication pressure in astronomy, the greater the likelihood of a scientist perceiving greater distributive and organisational injustice.

3.4. Statistical Analyses

4. Results

4.1. Descriptive Statistics

4.2. Exploratory Factor Analyses

4.3. Confirmatory Factor Analyses

4.4. Structural Equation Model

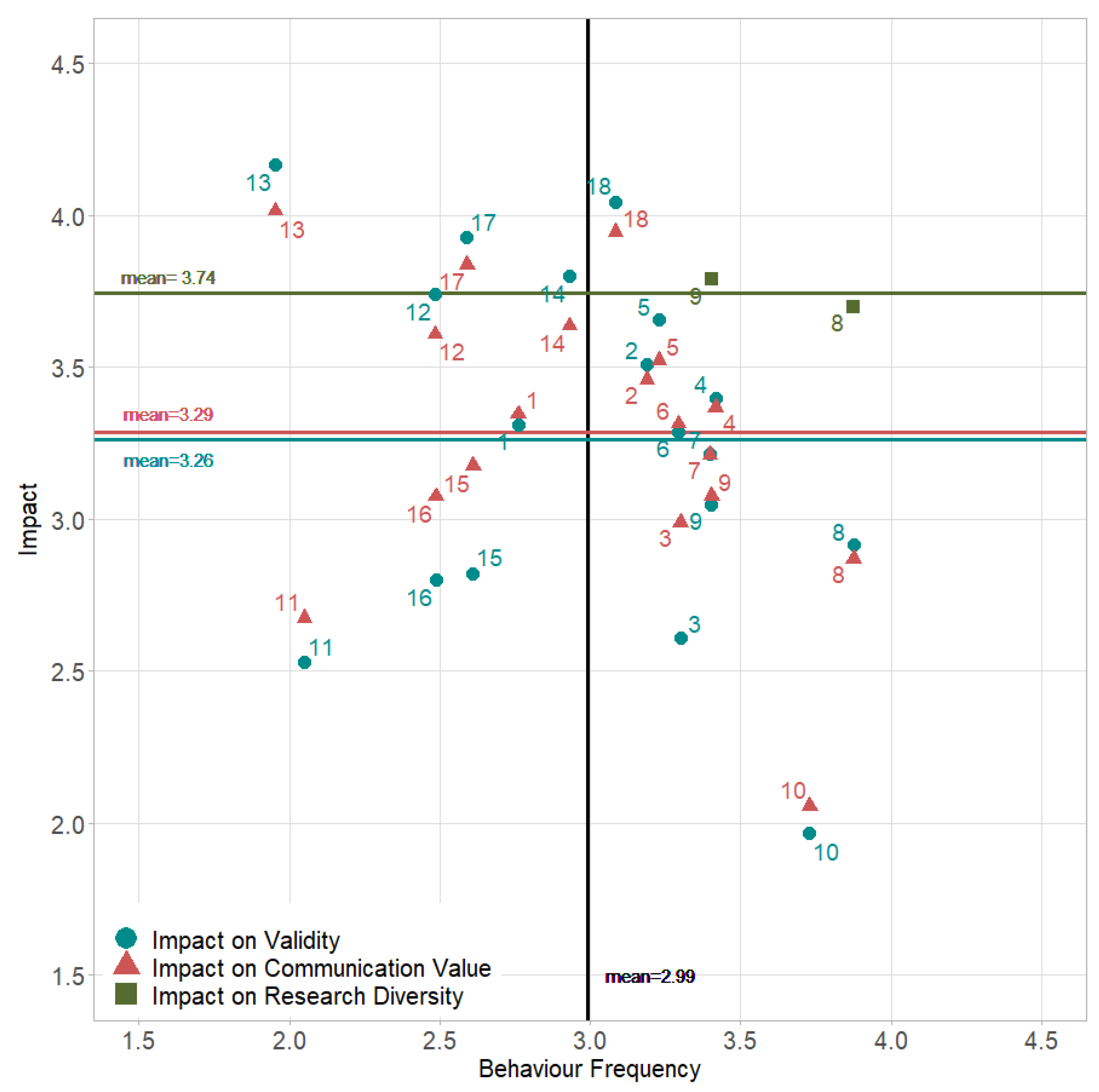

4.5. Perceived Impact on Research Quality

5. Discussion

6. Strengths/Limitations

7. Conclusions, Implications and Outlook for Further Research

Supplementary Materials

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

Conflicts of Interest

References

1. Hesselmann, F.; Wienefoet, V.; Reinhart, M. Measuring Scientific Misconduct—Lessons from Criminology. Publications 2014, 2, 61–70.

2. Stephan, P. How Economics Shapes Science; Harvard University Press: Cambridge, MA, USA, 2012.

3. Laudel, G.; Gläser, J. Beyond breakthrough research: Epistemic properties of research and their consequences for research funding. Res. Policy 2014, 43, 1204–1216.

4. Fochler, M.; De Rijcke, S. Implicated in the Indicator Game? An Experimental Debate. Engag. Sci. Technol. Soc. 2017, 3, 21–40.

5. Desrosières, A. The Politics of Large Numbers—A History of Statistical Reasoning; Harvard University Press: Cambridge, MA, USA, 1998; ISBN 9780674009691.

6. Porter, T. Trust in Numbers; Princeton University Press: Princeton, NJ, USA, 1995.

7. Dahler-Larsen, P. Constitutive Effects of Performance Indicators: Getting beyond unintended consequences. Public Manag. Rev. 2014, 16, 969–986.

8. Dahler-Larsen, P. Quality—From Plato to Performance; Palgrave Macmillan: Cham, Switzerland, 2019.

9. Wouters, P. Bridging the Evaluation Gap. Engag. Sci. Technol. Soc. 2017, 3, 108–118.

10. Heuritsch, J. The Evaluation Gap in Astronomy—Explained through a Rational Choice Framework. arXiv 2021, arXiv:2101.03068.

11. Lorenz, C. If You’re So Smart, Why Are You under Surveillance? Universities, Neoliberalism, and New Public Management. Crit. Inq. 2012, 38, 599–629.

12. Anderson, M.S.; Ronning, E.A.; De Vries, R.; Martinson, B.C. The Perverse Effects of Competition on Scientists’ Work and Relationships. Sci. Eng. Ethics 2007, 13, 437–461.

13. Tijdink, J.K.; Smulders, Y.M.; Vergouwen, A.C.M.; de Vet, H.C.W.; Knol, D.L. The assessment of publication pressure in medical science; validity and reliability of a Publication Pressure Questionnaire (PPQ). Qual. Life Res. 2014, 23, 2055–2062.

14. Moosa, I.A. Publish or Perish—Perceived Benefits Versus Unintended Consequences; Edward Elgar Publishing: Northampton, MA, USA, 2018.

15. Rushforth, A.D.; De Rijcke, S. Accounting for Impact? The Journal Impact Factor and the Making of Biomedical Research in the Netherlands. Minerva 2015, 53, 117–139.

16. U.S. Office of Science and Technology Policy, Executive Office of the President. OSTP, Federal Policy on Research Misconduct. 2000. Available online: http://www.Ostp.Gov/html/001207_3.Html (accessed on 10 September 2021).

17. Martinson, B.C.; Anderson, M.S.; de Vries, R. Scientists behaving badly. Nature 2005, 435, 737–738.

18. Haven, T.L.; van Woudenberg, R. Explanations of Research Misconduct, and How They Hang Together. J. Gen. Philos. Sci. 2021, 19, 1–9.

19. Martinson, B.C.; Anderson, M.S.; Crain, A.L.; De Vries, R. Scientists’ perceptions of organizational justice and self-reported misbehaviors. J. Empir. Res. Hum. Res. Ethics 2006, 1, 51–66.

20. Heuritsch, J. Effects of metrics in research evaluation on knowledge production in astronomy: A case study on Evaluation Gap and Constitutive Effects. In Proceedings of the STS Conference Graz 2019, Graz, Austria, 6–7 May 2019.

21. Crain, L.A.; Martinson, B.C.; Thrush, C.R. Relationships between the Survey of Organizational Research Climate (SORC) and self-reported research practices. Sci. Eng. Ethics 2013, 19, 835–850.

22. Martinson, B.C.; Thrush, C.R.; Crain, A.L. Development and validation of the Survey of Organizational Research Climate (SORC). Sci. Eng. Ethics 2013, 19, 813–834.

23. Wells, J.A.; Thrush, C.R.; Martinson, B.C.; May, T.A.; Stickler, M.; Callahan, E.C. Survey of organizational research climates in three research intensive, doctoral granting universities. J. Empir. Res. Hum. Res. Ethics 2014, 9, 72–88.

24. Martinson, B.C.; Nelson, D.; Hagel-Campbell, E.; Mohr, D.; Charns, M.P.; Bangerter, A. Initial results from the Survey of Organizational Research Climates (SOuRCe) in the U.S. department of veterans affairs healthcare system. PLoS ONE 2016, 11, e0151571.

25. Martinson, B.C.; Crain, A.L.; Anderson, M.S.; De Vries, R. Institutions’ Expectations for Researchers’ Self-Funding, Federal Grant Holding, and Private Industry Involvement: Manifold Drivers of Self-Interest and Researcher Behavior. Acad. Med. 2009, 84, 1491–1499.

26. Martinson, B.C.; Crain, L.A.; De Vries, R.; Anderson, M.S. The importance of organizational justice in ensuring research integrity. J. Empir. Res. Hum. Res. Ethics 2010, 5, 67–83.

27. Haven, T.L.; Bouter, L.M.; Smulders, Y.M.; Tijdink, J.K. Perceived publication pressure in Amsterdam—Survey of all disciplinary fields and academic ranks. PLoS ONE 2019, 14, e0217931.

28. Zuiderwijk, A.; Spiers, H. Sharing and re-using open data: A case study of motivations in astrophysics. Int. J. Inf. Manag. 2019, 49, 228–241.

29. Bedeian, A.; Taylor, S.; Miller, A. Management science on the credibility bubble: Cardinal sins and various misdemeanors. Acad. Manag. Learn. Educ. 2010, 9, 715–725.

30. Bouter, L.M. Commentary: Perverse incentives or rotten apples? Acc. Res. 2015, 22, 148–161.

31. Tijdink, J.K.; Verbeke, R.; Smulders, Y.M. Publication pressure and scientific misconduct in medical scientists. J. Empir. Res. Hum. Res. Ethics 2014, 9, 64–71.

32. Tijdink, J.K.; Vergouwen, A.C.M.; Smulders, Y.M. Publication pressure and burn out among Dutch medical professors: A nationwide survey. PLoS ONE 2013, 8, e73381.

33. Tijdink, J.K.; Schipper, K.; Bouter, L.M.; Pont, P.M.; De Jonge, J.; Smulders, Y.M. How do scientists perceive the current publication culture? A qualitative focus group interview study among Dutch biomedical researchers. BMJ Open 2016, 6, e008681.

34. Miller, A.N.; Taylor, S.G.; Bedeian, A.G. Publish or perish: Academic life as management faculty live it. Career Dev. Int. 2011, 16, 422–445.

35. Van Dalen, H.P.; Henkens, K. Intended and unintended consequences of a publish-or-perish culture: A worldwide survey. J. Am. Soc. Inf. Sci. Technol. 2012, 63, 1282–1293.

36. Haven, T.L.; Tijdink, J.K.; Martinson, B.C.; Bouter, L.; Oort, F. Explaining variance in perceived research misbehaviour. Res. Integr. Peer Rev. 2021, 6, 7.

37. Haven, T.L.; Tijdink, J.K.; De Goede, M.E.E.; Oort, F. Personally perceived publication pressure—Revising the Publication Pressure Questionnaire (PPQ) by using work stress models. Res. Integr. Peer Rev. 2019, 4, 7.

38. Sovacool, B.K. Exploring scientific misconduct: Isolated individuals, impure institutions, or an inevitable idiom of modern science? J. Bioethical Inq. 2008, 5, 271–282.

39. Hackett, E.J. A Social Control Perspective on Scientific Misconduct. J. High. Educ. 1994, 65, 242–260.

40. Agnew, R. Foundation for a general strain theory of crime and delinquency. Criminology 1992, 30, 47–87.

41. Merton, R.K. Social structure and anomie. Am. Sociol. Rev. 1938, 3, 672–682.

42. Espeland, W.N.; Vannebo, B. Accountability, Quantification, and Law. Annu. Rev. Law Soc. Sci. 2008, 3, 21–43.

43. Halffman, W.; Radder, H. The Academic Manifesto: From an Occupied to a Public University. Minerva 2015, 53, 165–187.

44. Overman, S.; Akkerman, A.; Torenvlied, R. Targets for honesty: How performance indicators shape integrity in Dutch higher education. Public Adm. 2016, 94, 1140–1154.

45. Atkinson-Grosjean, J.; Fairley, C. Moral Economies in Science: From Ideal to Pragmatic. Minerva 2009, 47, 147–170.

46. Esser, H. Soziologie. Spezielle Grundlagen. Band 1: Situationslogik und Handeln. KZfSS Kölner Z. Soziologie Soz. 1999, 53, 773.

47. Coleman, J.S. Foundations of Social Theory; Belknap Press of Harvard University Press: Cambridge, MA, USA; London, UK, 1990.

48. Roy, J.R.; Mountain, M. The Evolving Sociology of Ground-Based Optical and Infrared Astronomy at the Start of the 21st Century. In Organizations and Strategies in Astronomy; Heck, A., Ed.; Astrophysics and Space Science Library; Springer: Dordrecht, The Netherlands, 2006; Volume 6, pp. 11–37.

49. Chang, H.-W.; Huang, M.-H. The effects of research resources on international collaboration in the astronomy community. J. Assoc. Inf. Sci. Technol. 2015, 67, 2489–2510.

50. Heidler, R. Cognitive and Social Structure of the Elite Collaboration Network of Astrophysics: A Case Study on Shifting Network Structures. Minerva 2011, 49, 461–488.

51. Bouter, L.M.; Tijdink, J.; Axelsen, N.; Martinson, B.C.; ter Riet, G. Ranking major and minor research misbehaviors: Results from a survey among participants of four World Conferences on Research Integrity. Res. Integr. Peer Rev. 2016, 1, 17.

52. Siegrist, J.; Li, J.; Montano, D. Psychometric Properties of the Effort-Reward Imbalance Questionnaire; Department of Medical Sociology, Faculty of Medicine, Duesseldorf University: Düsseldorf, Germany, 2014.

53. Rosseel, Y. lavaan: An R Package for Structural Equation Modeling. J. Stat. Softw. 2012, 48, 1–36. Available online: https://www.jstatsoft.org/v48/i02/ (accessed on 10 September 2021).

54. Haven, T.L. Towards a Responsible Research Climate: Findings from Academic Research in Amsterdam. 2021. Available online: https://research.vu.nl/en/publications/towards-a-responsible-research-climate-findings-from-academic-res (accessed on 10 September 2021).

55. Kurtz, M.J.; Henneken, E.A. Measuring Metrics—A 40-Year Longitudinal Cross-Validation of Citations, Downloads, and Peer Review in Astrophysics. J. Assoc. Inf. Sci. Technol. 2017, 68, 695–708.

| Baseline Characteristic | n Valid | Frequency | Percent | Valid Percent |
|---|---|---|---|---|
| Gender | 1827 | | | |
| Male | | 1333 | 38.0 | 73.0 |
| Female | | 482 | 13.7 | 26.4 |
| Non-Binary | | 12 | 0.3 | 0.7 |
| Academic Position | 2188 | | | |
| PhD candidate | | 332 | 9.5 | 15.2 |
| Postdoc/Research associate | | 504 | 14.4 | 23.0 |
| Assistant professor | | 186 | 5.3 | 8.5 |
| Associate professor | | 330 | 9.4 | 15.1 |
| Full professor | | 568 | 16.2 | 26.0 |
| Other | | 268 | 7.6 | 12.2 |
| Academic/Non-Academic | 2478 | | | |
| Academic | | 2086 | 59.4 | 84.2 |
| Non-Academic | | 392 | 11.2 | 15.8 |
| Location of Employment | 1624 | | | |
| Global North | | 1377 | 39.2 | 84.8 |
| Global South | | 247 | 7.0 | 15.2 |
| Number of published papers (as first or co-author during the past 5 years) | 2610 | | | |
| 1st paper currently under review | | 54 | 1.5 | 2.1 |
| 0 | | 150 | 4.3 | 5.7 |
| 1–5 | | 828 | 23.6 | 31.7 |
| 6–10 | | 389 | 11.1 | 14.9 |
| 11–20 | | 423 | 12.1 | 16.2 |
| 21–30 | | 173 | 4.9 | 6.6 |
| 31–40 | | 158 | 4.5 | 6.1 |
| 41–50 | | 131 | 3.7 | 5.0 |
| 51–60 | | 61 | 1.7 | 2.3 |
| 61–70 | | 43 | 1.2 | 1.6 |
| 71–80 | | 40 | 1.1 | 1.5 |
| 81–90 | | 31 | 0.9 | 1.2 |
| 91–100 | | 37 | 1.1 | 1.4 |
| >100 | | 92 | 2.6 | 3.5 |
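The Valid Percent column divides each frequency by the number of valid (non-missing) answers to that item, whereas the Percent column appears to use the full sample as its denominator, which is not stated in this section. The short Python check below reproduces the Valid Percent figures for the gender block; the constant and function names are illustrative and do not come from the paper.

```python
from __future__ import annotations

# Gender block of the baseline table: n valid and category frequencies.
GENDER_N_VALID = 1827
GENDER_FREQ = {"Male": 1333, "Female": 482, "Non-Binary": 12}


def valid_percent(freq: dict[str, int], n_valid: int) -> dict[str, float]:
    """Share of each category among valid (non-missing) answers, in percent."""
    if sum(freq.values()) != n_valid:
        raise ValueError("category frequencies must add up to n valid")
    return {category: round(100 * count / n_valid, 1) for category, count in freq.items()}


if __name__ == "__main__":
    print(valid_percent(GENDER_FREQ, GENDER_N_VALID))
```

The same function applies to every block of the table, because each block's frequencies sum exactly to its n valid (e.g., 2188 for academic position).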

| Variable | n Valid | Mean | SD |
|---|---|---|---|
| Independent Variables | | | |
| Publication Pressure (PPQ) | 1949 | 3.16 | 0.77 |
| Distributive Justice: Effort | 1757 | 3.68 | 0.86 |
| Distributive Justice: Reward | 1752 | 3.21 | 0.84 |
| Organisational Justice: Resource Allocation | 1852 | 3.02 | 0.80 |
| Organisational Justice: Peer Review | 1967 | 3.69 | 0.74 |
| Organisational Justice: Grant Application | 1267 | 3.14 | 0.79 |
| Organisational Justice: Telescope Time Application | 1185 | 3.36 | 0.72 |
| Overcommitment | 1755 | 3.39 | 0.84 |
| Dependent Variables | | | |
| Occurrence of Misconduct | 1869 | 2.99 | 0.66 |
| Impact on Quality Criterion 1 | 1868 | 3.26 | 0.62 |
| Impact on Quality Criterion 2 | 1868 | 3.29 | 0.64 |
| Impact on Quality Criterion 3 | 1902 | 3.74 | 0.93 |
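The descriptive table reports a mean and standard deviation for each scale but no measure of uncertainty. As a rough illustration that is not part of the original analysis, a normal-approximation 95% confidence interval for a mean follows from the standard error SE = SD/√n. The constants below are the Publication Pressure (PPQ) row; the 1.96 multiplier assumes an approximately normal sampling distribution, which is reasonable at n ≈ 2000 but ignores any weighting or clustering in the survey design.

```python
from __future__ import annotations

import math

# Publication Pressure (PPQ) row of the descriptive table.
N_VALID, MEAN, SD = 1949, 3.16, 0.77


def mean_ci(mean: float, sd: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Normal-approximation confidence interval for a sample mean."""
    se = sd / math.sqrt(n)  # standard error of the mean
    return mean - z * se, mean + z * se


if __name__ == "__main__":
    lo, hi = mean_ci(MEAN, SD, N_VALID)
    print(f"PPQ mean {MEAN} (95% CI {lo:.3f} to {hi:.3f})")
```

With roughly 2000 respondents the interval is only about ±0.03 scale points wide, so differences between scale means of a tenth of a point are large relative to sampling noise.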

| IV: Publication Pressure | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 3.159 | | | | | |
| Gender: Male | −0.186 | 0.07 | −2.64 | 0.008 * | −0.278 | −0.124 |
| Position: PhD | 0.387 | 0.175 | 2.211 | 0.027 * | 0.58 | 0.106 |
| Position: Postdoc | 0.506 | 0.091 | 5.537 | <0.001 * | 0.757 | 0.313 |
| Position: Assistant Prof. | 0.413 | 0.108 | 3.819 | <0.001 * | 0.618 | 0.194 |
| Position: Associate Prof. | 0.052 | 0.091 | 0.576 | 0.565 | 0.078 | 0.03 |
| Position: Other | 0.251 | 0.104 | 2.415 | 0.016 * | 0.376 | 0.128 |
| Primary Employer: Academic | 0.052 | 0.121 | 0.426 | 0.67 | 0.077 | 0.02 |
| Location: Global North | −0.357 | 0.094 | −3.809 | <0.001 * | −0.534 | −0.181 |
| Papers published: 6–20 | −0.013 | 0.089 | −0.147 | 0.883 | −0.02 | −0.009 |
| Papers published: >20 | −0.182 | 0.086 | −2.109 | 0.035 * | −0.272 | −0.136 |
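The regression tables report dummy-coded predictors but list only some categories: there is no row for full professors, for researchers with five or fewer papers, for non-academic employers, for the Global South, or for non-male respondents. A plausible reading, which this section does not state explicitly, is that these omitted groups serve as reference categories. The Python sketch below encodes one survey response under that assumption; the function `encode` and the category groupings are hypothetical, and how non-binary respondents were coded is not stated in the source.

```python
from __future__ import annotations

# Hypothetical recoding of survey answers into the dummy variables named in the
# regression tables. Reference levels are INFERRED from the rows the tables omit.
POSITION_DUMMIES = {
    "PhD candidate": "Position: PhD",
    "Postdoc/Research associate": "Position: Postdoc",
    "Assistant professor": "Position: Assistant Prof.",
    "Associate professor": "Position: Associate Prof.",
    "Other": "Position: Other",
    # "Full professor" has no row, so it is treated as the reference level.
}
PAPERS_6_20 = {"6–10", "11–20"}
PAPERS_OVER_20 = {"21–30", "31–40", "41–50", "51–60", "61–70",
                  "71–80", "81–90", "91–100", ">100"}
# "1st paper currently under review", "0" and "1–5" fall into the assumed reference group.


def encode(position: str, papers: str, gender: str,
           employer: str = "Academic", location: str = "Global North") -> dict[str, int]:
    """Return 0/1 indicators; a reference level yields all zeros in its block."""
    row = {name: 0 for name in POSITION_DUMMIES.values()}
    if position in POSITION_DUMMIES:
        row[POSITION_DUMMIES[position]] = 1
    row["Papers published: 6–20"] = int(papers in PAPERS_6_20)
    row["Papers published: >20"] = int(papers in PAPERS_OVER_20)
    # Assumption: every non-male answer shares the reference level.
    row["Gender: Male"] = int(gender == "Male")
    row["Primary Employer: Academic"] = int(employer == "Academic")
    row["Location: Global North"] = int(location == "Global North")
    return row


if __name__ == "__main__":
    print(encode("Postdoc/Research associate", "11–20", "Female"))
```

Under this coding, each coefficient is read as a difference from the reference group; for example, "Position: Postdoc" = 0.506 would mean postdocs score about half a scale point higher on publication pressure than full professors, other predictors held constant.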

| IV: Reward (ERI) | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 3.208 | | | | | |
| PPQ | −0.385 | 0.072 | −5.377 | <0.001 * | −0.36 | −0.36 |
| Gender: Male | 0.074 | 0.078 | 0.947 | 0.344 | 0.103 | 0.046 |
| Position: PhD | −0.165 | 0.194 | −0.851 | 0.395 | −0.23 | −0.042 |
| Position: Postdoc | −0.32 | 0.102 | −3.134 | 0.002 * | −0.447 | −0.185 |
| Position: Assistant Prof. | −0.176 | 0.119 | −1.473 | 0.141 | −0.245 | −0.077 |
| Position: Associate Prof. | −0.323 | 0.102 | −3.169 | 0.002 * | −0.451 | −0.171 |
| Position: Other | −0.123 | 0.115 | −1.071 | 0.284 | −0.172 | −0.058 |
| Primary Employer: Academic | −0.015 | 0.134 | −0.111 | 0.912 | −0.021 | −0.005 |
| Location: Global North | −0.116 | 0.103 | −1.126 | 0.26 | −0.163 | −0.055 |
| Papers published: 6–20 | 0.225 | 0.1 | 2.261 | 0.024 * | 0.314 | 0.144 |
| Papers published: >20 | 0.248 | 0.096 | 2.57 | 0.01 * | 0.346 | 0.173 |
| IV: Effort (ERI) | | | | | | |
| Intercept | 3.556 | | | | | |
| PPQ | 0.476 | 0.079 | 6.027 | <0.001 * | 0.414 | 0.414 |
| Gender: Male | −0.226 | 0.088 | −2.575 | 0.01 * | −0.294 | −0.131 |
| Position: PhD | −0.217 | 0.218 | −0.999 | 0.318 | −0.283 | −0.052 |
| Position: Postdoc | −0.277 | 0.113 | −2.449 | 0.014 * | −0.361 | −0.149 |
| Position: Assistant Prof. | −0.121 | 0.134 | −0.901 | 0.368 | −0.157 | −0.049 |
| Position: Associate Prof. | −0.011 | 0.113 | −0.095 | 0.924 | −0.014 | −0.005 |
| Position: Other | −0.292 | 0.129 | −2.253 | 0.024 * | −0.38 | −0.129 |
| Primary Employer: Academic | −0.049 | 0.151 | −0.324 | 0.746 | −0.064 | −0.017 |
| Location: Global North | 0.174 | 0.116 | 1.501 | 0.133 | 0.227 | 0.077 |
| Papers published: 6–20 | −0.092 | 0.111 | −0.83 | 0.406 | −0.12 | −0.055 |
| Papers published: >20 | 0.069 | 0.107 | 0.644 | 0.52 | 0.09 | 0.045 |
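Each row's z-Value is the estimate divided by its standard error, and the p column is the two-sided p-value of that z under a standard normal distribution, i.e., p = 2(1 − Φ(|z|)). The tables appear to flag p < 0.05 with an asterisk (0.048 is starred, 0.053 is not). The Python sketch below reproduces the "Position: Postdoc" row of the Reward table; because the printed estimate and SE are rounded, the recomputed z differs slightly from the reported one, so the reported z is used for the p-value. The function names are illustrative.

```python
from __future__ import annotations

import math


def wald_z(estimate: float, std_err: float) -> float:
    """z statistic for the null hypothesis that the coefficient is zero."""
    return estimate / std_err


def two_sided_p(z: float) -> float:
    """Two-sided p-value under a standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def star(p: float, alpha: float = 0.05) -> str:
    """Mimic the tables' apparent convention of marking p < 0.05 with '*'."""
    return "*" if p < alpha else ""


if __name__ == "__main__":
    # Reward (ERI) table, row "Position: Postdoc": estimate -0.32, SE 0.102, reported z -3.134.
    z = wald_z(-0.32, 0.102)  # about -3.137 because the inputs are rounded
    p = two_sided_p(-3.134)
    print(f"z = {z:.3f}, p = {p:.3f}{star(p)}")
```

The same two functions reproduce the non-significant "Gender: Male" row of that table (z = 0.947, p ≈ 0.344).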

| IV: Organisational Justice (Resource Allocation) | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 3.02 | | | | | |
| PPQ | −0.274 | 0.057 | −4.797 | <0.001 * | −0.298 | −0.298 |
| Gender: Male | 0.118 | 0.062 | 1.883 | 0.06 | 0.191 | 0.085 |
| Position: PhD | 0.359 | 0.156 | 2.294 | 0.022 * | 0.582 | 0.106 |
| Position: Postdoc | 0.198 | 0.081 | 2.439 | 0.015 * | 0.322 | 0.133 |
| Position: Assistant Prof. | 0.003 | 0.095 | 0.036 | 0.972 | 0.005 | 0.002 |
| Position: Associate Prof. | −0.111 | 0.08 | −1.391 | 0.164 | −0.181 | −0.068 |
| Position: Other | 0.071 | 0.092 | 0.773 | 0.44 | 0.115 | 0.039 |
| Primary Employer: Academic | 0.01 | 0.107 | 0.09 | 0.929 | 0.016 | 0.004 |
| Location: Global North | 0.072 | 0.082 | 0.877 | 0.38 | 0.117 | 0.04 |
| Papers published: 6–20 | 0.15 | 0.079 | 1.896 | 0.058 | 0.244 | 0.112 |
| Papers published: >20 | 0.088 | 0.076 | 1.151 | 0.25 | 0.142 | 0.071 |
| IV: Organisational Justice (Peer Review) | | | | | | |
| Intercept | 3.692 | | | | | |
| PPQ | −0.418 | 0.074 | −5.659 | <0.001 * | −0.338 | −0.338 |
| Gender: Male | 0.034 | 0.082 | 0.414 | 0.679 | 0.041 | 0.018 |
| Position: PhD | 0.526 | 0.206 | 2.557 | 0.011 * | 0.638 | 0.117 |
| Position: Postdoc | 0.23 | 0.107 | 2.159 | 0.031 * | 0.279 | 0.115 |
| Position: Assistant Prof. | 0.214 | 0.126 | 1.696 | 0.09 | 0.26 | 0.082 |
| Position: Associate Prof. | 0.066 | 0.106 | 0.62 | 0.535 | 0.08 | 0.03 |
| Position: Other | 0.152 | 0.122 | 1.245 | 0.213 | 0.184 | 0.062 |
| Primary Employer: Academic | 0.088 | 0.142 | 0.618 | 0.536 | 0.106 | 0.028 |
| Location: Global North | −0.447 | 0.111 | −4.041 | <0.001 * | −0.542 | −0.184 |
| Papers published: 6–20 | 0.324 | 0.105 | 3.083 | 0.002 * | 0.393 | 0.18 |
| Papers published: >20 | 0.241 | 0.101 | 2.376 | 0.017 * | 0.292 | 0.146 |
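Assuming the Std.lv and Std.all columns are lavaan's `std.lv` and `std.all` solutions (lavaan is listed in the references), `std.lv` rescales only latent variables to unit variance, while `std.all` also rescales observed variables. For an observed dummy predictor x and a latent outcome η this gives std.lv = b/SD(η) and std.all = b·SD(x)/SD(η), so one row is enough to back out both standard deviations. For the PPQ rows the two columns coincide (e.g., −0.298), which is consistent with both PPQ and the justice scale being latent. The Python sketch below applies the relation to the "Gender: Male" row of the Resource Allocation table; the function name is illustrative.

```python
from __future__ import annotations

import math


def implied_sds(b: float, std_lv: float, std_all: float) -> tuple[float, float]:
    """Back out SD(outcome) and SD(predictor) from one coefficient row.

    Assumes lavaan-style definitions for an observed predictor x and a latent
    outcome eta: std_lv = b / SD(eta) and std_all = b * SD(x) / SD(eta).
    """
    sd_outcome = b / std_lv
    sd_predictor = std_all / std_lv
    return sd_outcome, sd_predictor


if __name__ == "__main__":
    # Resource Allocation table, row "Gender: Male": b 0.118, Std.lv 0.191, Std.all 0.085.
    sd_eta, sd_male = implied_sds(0.118, 0.191, 0.085)
    share_male = 0.73  # valid percent of men in the baseline table (full sample)
    binary_sd = math.sqrt(share_male * (1 - share_male))
    print(f"SD(eta) ~ {sd_eta:.3f}, SD(male dummy) ~ {sd_male:.3f}, "
          f"binary SD at p = 0.73: {binary_sd:.3f}")
```

The implied SD of the male dummy (about 0.445) is close to √(0.73 × 0.27) ≈ 0.444, which supports this reading of the columns; the match is only approximate because the analysis sample need not have exactly the baseline gender split.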

| IV: Organisational Justice (Grant Application) | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 3.143 | | | | | |
| PPQ | −0.473 | 0.081 | −5.818 | <0.001 * | −0.354 | −0.354 |
| Gender: Male | 0.08 | 0.09 | 0.891 | 0.373 | 0.089 | 0.04 |
| Position: PhD | 0.648 | 0.225 | 2.886 | 0.004 * | 0.725 | 0.132 |
| Position: Postdoc | 0.175 | 0.116 | 1.506 | 0.132 | 0.195 | 0.081 |
| Position: Assistant Prof. | 0.175 | 0.138 | 1.272 | 0.203 | 0.196 | 0.062 |
| Position: Associate Prof. | −0.183 | 0.116 | −1.579 | 0.114 | −0.204 | −0.077 |
| Position: Other | 0.128 | 0.133 | 0.966 | 0.334 | 0.143 | 0.049 |
| Primary Employer: Academic | 0.097 | 0.155 | 0.63 | 0.529 | 0.109 | 0.029 |
| Location: Global North | −0.262 | 0.12 | −2.194 | 0.028 * | −0.293 | −0.099 |
| Papers published: 6–20 | 0.202 | 0.114 | 1.769 | 0.077 | 0.226 | 0.103 |
| Papers published: >20 | 0.193 | 0.11 | 1.749 | 0.08 | 0.215 | 0.108 |
| IV: Organisational Justice (Telescope Time Application) | | | | | | |
| Intercept | 3.356 | | | | | |
| PPQ | −0.298 | 0.069 | −4.303 | <0.001 * | −0.255 | −0.255 |
| Gender: Male | 0.121 | 0.081 | 1.501 | 0.133 | 0.155 | 0.069 |
| Position: PhD | 0.078 | 0.2 | 0.391 | 0.696 | 0.1 | 0.018 |
| Position: Postdoc | 0.085 | 0.104 | 0.822 | 0.411 | 0.109 | 0.045 |
| Position: Assistant Prof. | 0.002 | 0.123 | 0.016 | 0.987 | 0.003 | 0.001 |
| Position: Associate Prof. | 0.003 | 0.104 | 0.031 | 0.975 | 0.004 | 0.002 |
| Position: Other | 0.081 | 0.119 | 0.678 | 0.498 | 0.103 | 0.035 |
| Primary Employer: Academic | −0.072 | 0.139 | −0.515 | 0.606 | −0.092 | −0.024 |
| Location: Global North | −0.215 | 0.107 | −1.999 | 0.046 * | −0.274 | −0.093 |
| Papers published: 6–20 | 0.146 | 0.102 | 1.429 | 0.153 | 0.187 | 0.086 |
| Papers published: >20 | 0.106 | 0.099 | 1.076 | 0.282 | 0.136 | 0.068 |

| IV: Overcommitment (ERI) | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 3.433 | | | | | |
| Publication Pressure | 0.117 | 0.059 | 1.978 | 0.048 * | 0.134 | 0.134 |
| Reward (ERI) | −0.071 | 0.074 | −0.964 | 0.335 | −0.087 | −0.087 |
| Effort (ERI) | 0.459 | 0.059 | 7.714 | <0.001 * | 0.602 | 0.602 |
| Organisational Justice (RA) | −0.039 | 0.072 | −0.55 | 0.582 | −0.041 | −0.041 |
| Organisational Justice (PR) | 0.062 | 0.038 | 1.654 | 0.098 | 0.088 | 0.088 |
| Organisational Justice (GA) | −0.001 | 0.037 | −0.026 | 0.98 | −0.001 | −0.001 |
| Organisational Justice (TA) | −0.068 | 0.041 | −1.657 | 0.097 | −0.091 | −0.091 |
| Gender: Male | 0.006 | 0.056 | 0.098 | 0.922 | 0.009 | 0.004 |
| Position: PhD | 0.153 | 0.145 | 1.055 | 0.292 | 0.262 | 0.048 |
| Position: Postdoc | 0.042 | 0.081 | 0.516 | 0.606 | 0.072 | 0.03 |
| Position: Assistant Prof. | −0.076 | 0.087 | −0.878 | 0.38 | −0.13 | −0.041 |
| Position: Associate Prof. | −0.164 | 0.074 | −2.203 | 0.028 * | −0.28 | −0.106 |
| Position: Other | −0.01 | 0.084 | −0.121 | 0.903 | −0.017 | −0.006 |
| Primary Employer: Academic | −0.036 | 0.096 | −0.379 | 0.705 | −0.062 | −0.016 |
| Location: Global North | 0.043 | 0.077 | 0.555 | 0.579 | 0.074 | 0.025 |
| Papers published: 6–20 | 0.006 | 0.072 | 0.087 | 0.931 | 0.011 | 0.005 |
| Papers published: >20 | 0.003 | 0.069 | 0.048 | 0.962 | 0.006 | 0.003 |

| DV: Frequency of Misbehaviour Occurrence | Estimate | Std.Err | z-Value | p (>\|z\|) | Std.lv | Std.all |
|---|---|---|---|---|---|---|
| Intercept | 2.992 | | | | | |
| Publication Pressure | 0.373 | 0.066 | 5.679 | <0.001 * | 0.433 | 0.433 |
| Reward (ERI) | −0.138 | 0.072 | −1.932 | 0.053 | −0.172 | −0.172 |
| Effort (ERI) | −0.021 | 0.057 | −0.366 | 0.714 | −0.028 | −0.028 |
| Organisational Justice (RA) | 0.09 | 0.068 | 1.321 | 0.187 | 0.096 | 0.096 |
| Organisational Justice (PR) | −0.062 | 0.036 | −1.733 | 0.083 | −0.089 | −0.089 |
| Organisational Justice (GA) | −0.004 | 0.035 | −0.112 | 0.911 | −0.006 | −0.006 |
| Organisational Justice (TA) | −0.106 | 0.04 | −2.673 | 0.008 * | −0.144 | −0.144 |
| Overcommitment (ERI) | 0.073 | 0.072 | 1.022 | 0.307 | 0.074 | 0.074 |
| Gender: Male | −0.06 | 0.053 | −1.13 | 0.259 | −0.104 | −0.046 |
| Position: PhD | −0.062 | 0.137 | −0.455 | 0.649 | −0.108 | −0.02 |
| Position: Postdoc | 0.014 | 0.077 | 0.186 | 0.853 | 0.025 | 0.01 |
| Position: Assistant Prof. | 0.052 | 0.082 | 0.641 | 0.522 | 0.091 | 0.029 |
| Position: Associate Prof. | −0.023 | 0.07 | −0.325 | 0.745 | −0.04 | −0.015 |
| Position: Other | −0.054 | 0.079 | −0.677 | 0.498 | −0.093 | −0.032 |
| Primary Employer: Academic | −0.158 | 0.09 | −1.752 | 0.08 | −0.275 | −0.072 |
| Location: Global North | 0.205 | 0.074 | 2.762 | 0.006 * | 0.355 | 0.121 |
| Papers published: 6–20 | 0.005 | 0.067 | 0.071 | 0.943 | 0.008 | 0.004 |
| Papers published: >20 | 0.074 | 0.065 | 1.137 | 0.255 | 0.129 | 0.065 |
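Hypotheses H3 and H6 together suggest that publication pressure may affect misbehaviour both directly and through perceived (in)justice. Assuming all the coefficient tables above belong to one structural equation model, the product-of-coefficients method gives a point estimate a·b for each single-mediator path, where a is PPQ → mediator and b is mediator → misbehaviour. The Python sketch below computes these products from the rounded, unstandardised estimates. It is an illustration, not a result reported in this section: it gives no standard errors (these would need the delta method or bootstrapping), most b paths are not significant, and chained paths such as PPQ → Effort → Overcommitment → misbehaviour are omitted, so the products do not add up to a total indirect effect.

```python
from __future__ import annotations

# Unstandardised estimates copied from the tables above (rounded to 3 decimals).
# a: Publication Pressure (PPQ) -> mediator; b: mediator -> frequency of misbehaviour.
PATHS = {
    "Reward (ERI)": (-0.385, -0.138),
    "Effort (ERI)": (0.476, -0.021),
    "Organisational Justice (RA)": (-0.274, 0.09),
    "Organisational Justice (PR)": (-0.418, -0.062),
    "Organisational Justice (GA)": (-0.473, -0.004),
    "Organisational Justice (TA)": (-0.298, -0.106),
    "Overcommitment (ERI)": (0.117, 0.073),
}
DIRECT_PPQ = 0.373  # direct path PPQ -> misbehaviour


def simple_indirect_effects(paths: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Product-of-coefficients point estimate a*b for each single-mediator path."""
    return {mediator: a * b for mediator, (a, b) in paths.items()}


if __name__ == "__main__":
    for mediator, effect in simple_indirect_effects(PATHS).items():
        print(f"PPQ -> {mediator} -> misbehaviour: {effect:+.3f}")
    print(f"direct PPQ -> misbehaviour: {DIRECT_PPQ:+.3f}")
```

The only mediator whose b path is significant at p < 0.05 is telescope-time justice, and its product (about 0.03) is an order of magnitude smaller than the direct path of 0.373.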

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Heuritsch, J. Reflexive Behaviour: How Publication Pressure Affects Research Quality in Astronomy. Publications 2021, 9, 52. https://doi.org/10.3390/publications9040052