1. Introduction

Fredholm equations of the second kind are integral equations of the form

$$u(x) - \int_a^b k(x,y)\,u(y)\,dy = f(x), \quad x \in [a,b], \tag{1}$$

with $u$ an unknown function in a Banach space $X$. The kernel $k$ and the right-hand side $f$ are given functions.

They appear in different areas of applied mathematics, sometimes as equivalent formulations of boundary value problems for ordinary differential equations, and many problems of mathematical physics are modelled with Fredholm integral equations with different kernels (see, for example, [1, 2, 3]).

The equation may be written

$$(I - K)u = f \tag{2}$$

by defining the operator $K : X \to X$, $(Ku)(x) = \int_a^b k(x,y)\,u(y)\,dy$.

If for the kernel $k$ the operator $K$ is bounded, a sufficient condition to guarantee the existence and uniqueness of a solution of Equation (2) is that $\|K\| < 1$ (see [4], Theorem 2.14, p. 23).

The Petrov–Galerkin method is a projection method often proposed to find approximate numerical solutions to this type of integral equation. The idea is to choose appropriate sequences of finite dimensional subspaces $X_n$ and $Y_n$ of $X$, the trial and test subspaces respectively, onto which the unknown and the data are to be projected.

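In the case of $X$ being a Hilbert space with inner product $\langle \cdot, \cdot \rangle$, the Petrov–Galerkin method looks for $u_n \in X_n$ such that

$$\langle u_n - K u_n, v \rangle = \langle f, v \rangle \quad \text{for all } v \in Y_n, \tag{3}$$

and, as $X_n$ and $Y_n$ are finite dimensional subspaces of the same dimension, solving Equation (3) reduces to solving a square system of linear algebraic equations.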
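The reduction to a linear system can be sketched as follows. The notation is assumed here for illustration only: $\{\varphi_j\}_{j=1}^{N}$ and $\{\psi_i\}_{i=1}^{N}$ denote bases of the trial and test subspaces, $N$ their common dimension, and $c_j$ the unknown coefficients of the approximate solution.

```latex
u_n = \sum_{j=1}^{N} c_j \varphi_j ,
\qquad
\sum_{j=1}^{N} \Big( \langle \varphi_j, \psi_i \rangle
                   - \langle K\varphi_j, \psi_i \rangle \Big)\, c_j
  = \langle f, \psi_i \rangle ,
\quad i = 1, \dots, N .
```

Testing against each basis function of the test subspace is enough because the equation is linear in the test function; the $N \times N$ matrix has entries $\langle \varphi_j, \psi_i \rangle - \langle K\varphi_j, \psi_i \rangle$.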

In [5] it is proved that, if $K$ is a compact linear operator not having 1 as an eigenvalue, and the pair $\{X_n, Y_n\}$ is a regular pair (a concept to be defined in the next section), Equation (3) has, for $n$ large enough, a unique solution $u_n \in X_n$ which satisfies

$$\|u - u_n\| \le C \inf_{x \in X_n} \|u - x\|, \tag{4}$$

where the constant $C$ does not depend on $n$.

Solvability and numerical stability of the approximation scheme are, in this way, assured, and the accuracy of the approximation to the unique solution of Equation (2) does not depend formally on $Y_n$, as can be noted in Equation (4). The goal is to choose test function subspaces that are easy to handle while the quality of convergence of the method is preserved.

In addition, the convergence can even be improved by means of an iteration of the method: once the approximations $u_n$ are obtained, a new sequence of approximate solutions $u_n'$ can be built by means of a simple procedure (see [6, 7, 8]):

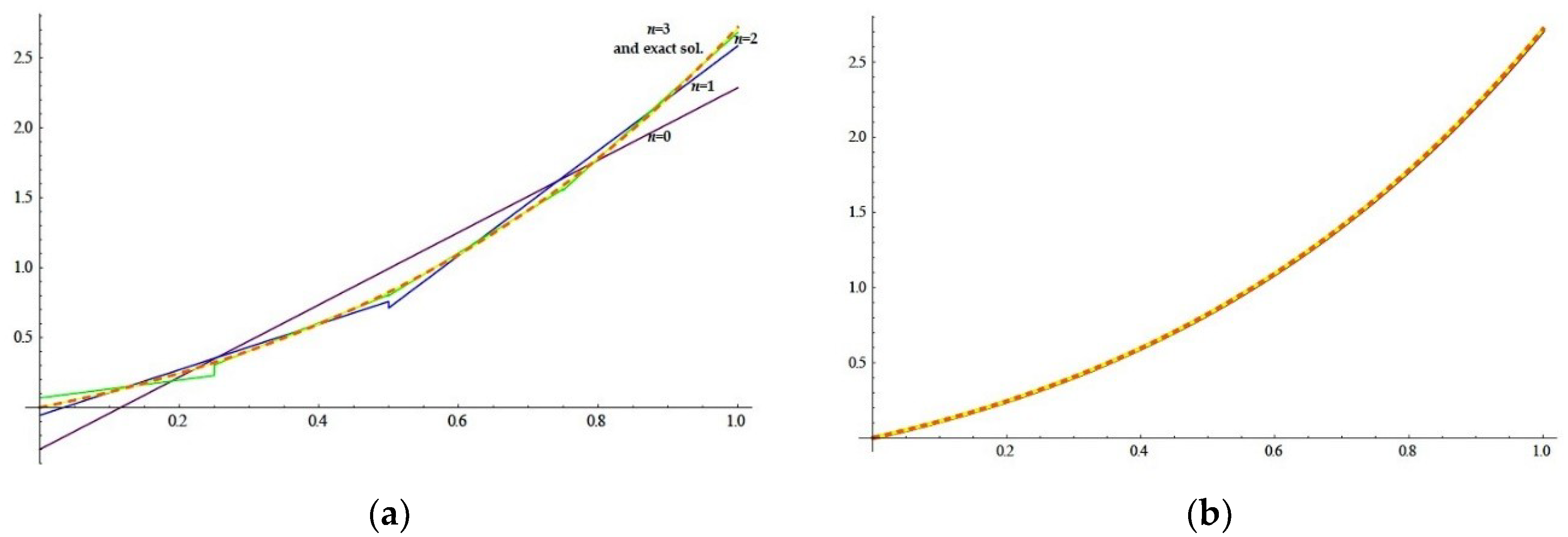

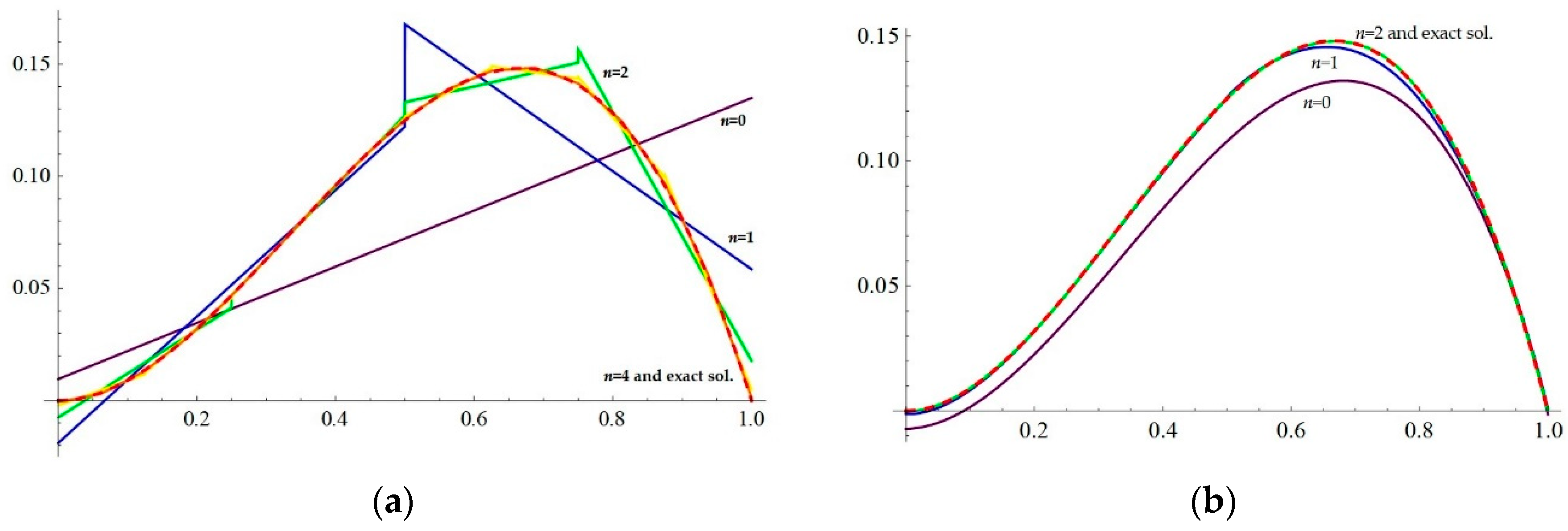

In this work we choose pairs of simple subspaces generated by Legendre polynomials and show the quality of the approximations in two numerical examples with known solutions, one of them having a singular kernel. We then improve the convergence by means of an iteration of the method and show why the approximation is better, even for small values of $n$.

2. Method

Let $X$ be a Hilbert space, $\|\cdot\|$ the associated norm, and $K : X \to X$ a compact linear operator. It is shown in [4] that, if 1 is not an eigenvalue of $K$, there exists a solution $u \in X$ to Equation (2) for $f \in X$ a given function, and it is unique. We are interested in looking for a good approximation to the function $u$ satisfying Equation (2).

For each $n \in \mathbb{N}$ let us consider finite dimensional subspaces $X_n, Y_n \subset X$, with $\dim X_n = \dim Y_n$. The Petrov–Galerkin method for Equation (2) is a numerical method to find $u_n \in X_n$ satisfying Equation (3).

For the method to be useful, it is necessary to establish conditions under which Equation (3) has a unique solution $u_n$ and $u_n \to u$ as $n \to \infty$, for $u$ the unique solution of Equation (2).

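It is easy to show that the condition

$$Y_n \cap X_n^{\perp} = \{0\} \tag{6}$$

ensures the existence of a unique solution $u_n \in X_n$ for Equation (3). From [4] (p. 243), convergence can be expected only if, for each $x \in X$, there exist $x_n \in X_n$ and $y_n \in Y_n$ such that

$$\|x_n - x\| \to 0 \quad \text{and} \quad \|y_n - x\| \to 0 \quad \text{as } n \to \infty, \tag{7}$$

so, from now on, the sequences of subspaces $\{X_n\}$ and $\{Y_n\}$ are both chosen verifying this condition of denseness.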

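Following [5], and denoting by $\{X_n\}$ and $\{Y_n\}$ the sequences of subspaces, the pair $\{X_n, Y_n\}$ is said to be “regular” if there exists a linear surjective operator $\Pi_n : X_n \to Y_n$ satisfying

i. $\|x\| \le C_1 \langle x, \Pi_n x \rangle^{1/2}$ for all $x \in X_n$,

ii. $\|\Pi_n x\| \le C_2 \|x\|$ for all $x \in X_n$,

with constants $C_1, C_2 > 0$ independent of $n$.

It is easy to show that the surjectivity of $\Pi_n$ and (i.) assure the condition of Equation (6).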
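The short argument can be written out as below. It is a sketch under two assumptions taken from the usual statement in [5]: that condition (i.) reads $\|x\| \le C_1 \langle x, \Pi_n x \rangle^{1/2}$ for every $x$ in the trial subspace $X_n$, with $\Pi_n : X_n \to Y_n$ the surjective operator of the definition, and that Equation (6) is $Y_n \cap X_n^{\perp} = \{0\}$.

```latex
\text{Let } y \in Y_n \cap X_n^{\perp}. \text{ By surjectivity, } y = \Pi_n x
\text{ for some } x \in X_n. \text{ Then}
\qquad
\|x\|^2 \;\le\; C_1^2 \,\langle x, \Pi_n x \rangle
        \;=\; C_1^2 \,\langle x, y \rangle \;=\; 0 ,
\qquad
\text{so } x = 0 \text{ and } y = \Pi_n 0 = 0 .
```

The inner product vanishes because $y$ is orthogonal to every element of $X_n$, in particular to $x$.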

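From [5] (p. 411), the following theorem summarizes the conditions for the existence and uniqueness of the solutions of Equation (3) and their convergence to the solution of Equation (2):

Theorem 1. Let $X$ be a Hilbert space and $K : X \to X$ a compact linear operator not having 1 as an eigenvalue. Suppose $X_n$ and $Y_n$ are finite dimensional subspaces of $X$, with $\dim X_n = \dim Y_n$, verifying that $\{X_n, Y_n\}$ is a regular pair and, for each $x \in X$, there exist sequences $x_n \in X_n$ and $y_n \in Y_n$ so that $x_n \to x$ and $y_n \to x$. Then, there exists $N > 0$ such that, for $n \ge N$, the equation $\langle u_n - K u_n, v \rangle = \langle f, v \rangle$ for all $v \in Y_n$ has a unique solution $u_n \in X_n$ for any given $f \in X$, that satisfies $\|u - u_n\| \le C \inf_{x \in X_n} \|u - x\|$, where $u$ is the unique solution of $(I - K)u = f$ and $C$ is a constant not dependent on $n$.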

From [9], the characterization of a regular pair is simple by means of the so-called “correlation matrix”. Let

and

be bases of

and

, respectively, and define the

matrices

,

, the correlation matrix

, and

, for

.

Note that for the real case,

and

are positive definite and

is the symmetric part of the correlation matrix. We have proven (see [10]) the following

Proposition 1. If the symmetric part of the correlation matrix is positive definite, $\{X_n, Y_n\}$ is a regular pair.

For conciseness, we assume from here on.

Let us consider .

For the interval , is the subspace of polynomials of degree less than on each subinterval and . since every continuous function with compact support on can be approximated by step functions on subintervals of the form , and they are dense in . The condition of Equation (7) of denseness is thus satisfied.

As the basis of , we will choose Legendre polynomials of degree less than , adapted to each of the subintervals : with , the Legendre polynomial of degree on and the characteristic function of the subinterval .

We rename to simplify the notation and choose the sequences of subspaces and , with .

Note that the condition of Equation (6), which assures the uniqueness of the solution of Equation (3) for each is fulfilled.

Indeed, suppose that , for between and satisfies that and for every ; then if is even or if is odd, which is impossible, since and for every and every .

Renaming the elements of the basis as

for

odd,

for

even and

, it is easy to show that

is a regular pair, since

is a

matrix with positive definite

blocks on its principal diagonal and 0s everywhere else (for details, see [

10]).
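The block structure can be checked numerically in a small case. The sketch below makes several assumptions that are not taken from the text: the interval is $[0,1]$ split into `N` equal cells, the trial basis on each cell consists of the unnormalized Legendre polynomials of degree 0 and 1, and the test basis consists of the characteristic functions of the two halves of each cell. The function name `correlation_matrix` is hypothetical.

```python
import numpy as np


def correlation_matrix(N):
    """Correlation matrix <trial_i, test_j> for the assumed pair of bases."""
    h = 1.0 / N
    # Rows: Legendre P0 and P1 on one cell; columns: left and right half-cell.
    # Integral of P0 over a half-cell is h/2; integral of P1 is -h/4 or +h/4.
    block = np.array([[h / 2, h / 2],
                      [-h / 4, h / 4]])
    # Basis functions on different cells have disjoint supports,
    # so the full matrix is block diagonal.
    return np.kron(np.eye(N), block)


E = correlation_matrix(4)
S = 0.5 * (E + E.T)              # symmetric part of the correlation matrix
eigs = np.linalg.eigvalsh(S)
print(eigs.min() > 0)            # positive definiteness of the symmetric part
```

Each $2 \times 2$ block of the symmetric part has trace $3h/4$ and determinant $7h^2/64$, so both of its eigenvalues are positive for every cell width $h$.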

Once the approximations $u_n$ are obtained, an almost natural iteration procedure is possible to obtain new approximations of the exact solution $u$. Since the equation being solved is $(I - K)u = f$, or $u = f + Ku$, we can define $u_n' = f + K u_n$. This first iteration, applied to the Galerkin method, has been studied since the 1970s, because, under appropriate conditions of

and

, it reveals an interesting phenomenon called “superconvergence” (see [6, 11], for instance), as the order of convergence can be notably improved.
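A minimal sketch of the scheme and of its iteration is given below. It is not the authors' implementation and relies on assumptions: the interval is $[0,1]$, the trial functions are piecewise linear (Legendre degrees 0 and 1 on each of `N` equal cells), the test functions are characteristic functions of the half-cells, and integrals are computed by Gauss–Legendre quadrature. The test problem (kernel $xy$, solution $e^x$) and the names `petrov_galerkin`, `evaluate` and `iterate` are hypothetical.

```python
import numpy as np
from numpy.polynomial.legendre import leggauss


def petrov_galerkin(kernel, f, N, q=8):
    """Solve u - Ku = f on [0, 1]; returns coefficients c[cell, degree]."""
    t, w = leggauss(q)                                   # nodes/weights on [-1, 1]
    h = 1.0 / N
    # Quadrature points inside each cell (variable y) and half-cell (variable x).
    Y = (np.arange(N)[:, None] + 0.5 * (t + 1.0)) * h            # (N, q)
    X = (np.arange(2 * N)[:, None] + 0.5 * (t + 1.0)) * h / 2    # (2N, q)
    P = np.vstack([np.ones(q), t])                       # P0, P1 at the nodes

    # <K phi_{c,d}, psi_i> by tensor-product Gauss quadrature.
    Kxy = kernel(X[:, :, None, None], Y[None, None, :, :])       # (2N, q, N, q)
    Kphi = np.einsum("ipcr,r,dr->ipcd", Kxy, w * h / 2, P)
    AK = np.einsum("ipcd,p->icd", Kphi, w * h / 4)               # (2N, N, 2)

    # <phi_{c,d}, psi_i>: nonzero only when half-cell i lies inside cell c.
    E = np.zeros((2 * N, N, 2))
    for c in range(N):
        E[2 * c, c] = [h / 2, -h / 4]                    # left half of cell c
        E[2 * c + 1, c] = [h / 2, h / 4]                 # right half of cell c

    A = (E - AK).reshape(2 * N, 2 * N)
    b = (f(X) * (w * h / 4)).sum(axis=1)                 # <f, psi_i>
    return np.linalg.solve(A, b).reshape(N, 2)


def evaluate(c, x):
    """Evaluate the piecewise linear approximation at points x in [0, 1]."""
    N = c.shape[0]
    cell = np.minimum((x * N).astype(int), N - 1)
    s = 2.0 * (x * N - cell) - 1.0                       # local coordinate
    return c[cell, 0] + c[cell, 1] * s


def iterate(kernel, f, c, x, q=8):
    """Iterated approximation f + K u_n evaluated at points x."""
    t, w = leggauss(q)
    N = c.shape[0]
    h = 1.0 / N
    Y = (np.arange(N)[:, None] + 0.5 * (t + 1.0)) * h
    un = c[:, [0]] + c[:, [1]] * t                       # u_n at quadrature points
    Kun = (kernel(x[:, None, None], Y[None]) * un * (w * h / 2)).sum(axis=(1, 2))
    return f(x) + Kun


# Hypothetical test problem: k(x, y) = x*y, exact solution u(x) = exp(x).
kernel = lambda x, y: x * y
f = lambda x: np.exp(x) - x
x = np.linspace(0.0, 1.0, 201)
c = petrov_galerkin(kernel, f, N=4)
err_pg = np.max(np.abs(evaluate(c, x) - np.exp(x)))
err_it = np.max(np.abs(iterate(kernel, f, c, x) - np.exp(x)))
print(err_pg, err_it)
```

In this smooth, separable test case the iterated error is expected to be much smaller than the error of the approximation itself; the printed values let the reader compare the two directly.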

In [11] (p. 42), the existence of a unique solution for Equation (5) and the improvement of the order of convergence of the iterated approximation for any projection method are guaranteed.

In [5] (p. 419), the superconvergence in the Petrov–Galerkin scheme applied to Fredholm equations of the second kind is explained and, under the same conditions as Theorem 1 above, a theorem establishes that

satisfies

for

the unique solution in

of Equation (1), showing that the improvement of the order of convergence by the iteration procedure is due to the approximation of the kernel

by elements

of test subspace

. In our work, the elements of test subspaces

are piecewise constant functions on the dyadic subintervals

.

We will now follow an idea from [

6] (p. 67). For

a Lipschitz function on an interval

, with Lipschitz constant

, let

be the piecewise constant function defined by

for

, with

a regular partition of

with norm

.

For any , if , so .

If the kernel satisfies that is a Lipschitz function with Lipschitz constant for each , it is and, then, for each and, consequently, .

Moreover, if , from Equation (8), , and the approximation is actually improved.
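The piecewise constant approximation of a Lipschitz function can be illustrated numerically. The sketch below assumes that the step function takes the value of the function at the left endpoint of each subinterval of a uniform partition; the text does not say which sample point is used, and any point of the subinterval gives the same bound. The function name `piecewise_constant` and the choice of $\sin$ on $[0,1]$ are hypothetical.

```python
import numpy as np


def piecewise_constant(g, a, b, n):
    """Step function sampling g at the left endpoint of each of n equal cells."""
    h = (b - a) / n

    def g_h(t):
        i = np.minimum(((t - a) / h).astype(int), n - 1)   # cell index of t
        return g(a + i * h)

    return g_h, h


g = np.sin            # Lipschitz on [0, 1] with constant L = 1
L = 1.0
t = np.linspace(0.0, 1.0, 2001)
for n in (4, 8, 16):
    g_h, h = piecewise_constant(g, 0.0, 1.0, n)
    err = np.max(np.abs(g(t) - g_h(t)))
    print(n, err, L * h)   # sup-norm error next to the bound L * h
```

Halving the partition norm halves the bound, which is the mechanism by which a Lipschitz kernel approximated by piecewise constant test functions improves the iterated approximation.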