1. Introduction

Artificial Neural Networks (ANNs) have emerged as a powerful computational model, developed by formulating the way the biological brain processes information as systematic mathematical procedures. ANNs are extensively applied in various computational and prediction tasks, such as pandemic disease analysis [1], pattern recognition [2], logic extraction [3], function approximation [4], and complex analysis [5]. Over the years, researchers have used ANNs to solve complex optimization problems, because ANNs provide alternative ways of performing computation and understanding information compared to conventional statistical methods.

Hopfield and Tank formulated the Hopfield Neural Network (HNN) in 1985 as a network for solving combinatorial problems [6]. HNN is a variant of ANN with feedback, recurrently interconnected neurons and no hidden layers. HNNs exhibit great performance in pattern recognition [7], fault detection [8], and clustering tasks [9]. Distinctive features of HNNs include Content Addressable Memory (CAM), minimization of energy as the neuron states change, and fault tolerance [10]. In addition, HNN complies with the discrete structure of a problem and solves it by minimizing an energy function whose minimum corresponds to the solution of the problem. One of the most relevant challenges faced by the HNN is how to represent the output produced when solving and learning the intended problem. This challenge led to the introduction of symbolic rules that govern the information embedded in the HNN. One of the earliest attempts to represent an ANN in terms of logical rules was made by Abdullah [11]. This work implemented a logical rule in the standard HNN by exploiting the relationship between the cost function and the energy function. Following this paradigm, one may ask: what type of logical rule can be represented in an ANN? Sathasivam [12] proposed Horn Satisfiability (HornSAT) in HNN by implementing nonoscillatory synaptic weights. From this perspective, Kasihmuddin et al. [10] proposed a 2 Satisfiability (2SAT) representation in HNN, and the proposed network achieved more than 90% global minimum solutions during the retrieval phase of HNN. Similar observations were made in [13], where 3 Satisfiability (3SAT) was implemented as the logical rule in HNN. As an extension of the k Satisfiability representation, Maximum Satisfiability [14] became the first unsatisfiable logical rule to be implemented in HNN. Although the cost function obtained is not zero, the performance metrics showed that most of the retrieved states achieved the global minimum energy. Building on these logical rules, the authors of [15] developed a hybrid HNN model that implements 2SAT to verify the properties of the Bezier Curve model. In addition, the work in [16] used an HNN with 3SAT to optimize pattern satisfiability (Pattern-SAT) and showed that the information embedded in 3SAT yielded better results for Pattern-SAT. The work in [17] integrated 3SAT with an HNN to configure a Very Large-Scale Integrated (VLSI) circuit, and the proposed hybrid network achieved more than 90% accuracy in circuit verification. In another development, Hamadneh et al. [18] proposed logic programming in a Radial Basis Function Neural Network (RBFNN), where the logic program is embedded by calculating the width and the centre of the hidden layer. These studies were extended by Alzaeemi et al. [19] and Mansor et al. [20], who proposed 2 Satisfiability in RBFNN. The proposed logical rule reduced the complexity of the network by fixing the values of the parameters involved in the RBFNN. Note that the common denominator of these studies is the implementation of a systematic logical rule in the ANN; there has been no recent effort to implement a nonsystematic logical rule in an ANN.

From a computational intelligence point of view, metaheuristic algorithms are interesting for several reasons. First, computation via metaheuristics can be implemented with a minimum level of bias: the algorithm can search for the optimal solution without complex mathematical derivation. For instance, the Genetic Algorithm (GA) can explore the whole search space without excluding any potentially optimal region. This is due to the capability of metaheuristic algorithms to use both local and global search mechanisms to find the optimal solution. Second, metaheuristic algorithms are commonly used to reduce the computational complexity of other intelligent systems. As the number of constraints grows, a standard standalone ANN becomes computationally burdensome and tends to be trapped in suboptimal solutions. In several studies [21,22,23], metaheuristic algorithms were reported to complement ANNs in solving optimization problems. Extensive empirical studies have been conducted to investigate the effect of metaheuristics in optimizing HNN. Kasihmuddin et al. [10,24] proposed GA and Artificial Bee Colony (ABC) for optimizing 2SAT in HNN, and the proposed hybrid HNN minimizes the cost function of the 2SAT in the HNN. In another development, Mansor et al. [13] proposed the Artificial Immune System (AIS) for optimizing 3SAT integrated in HNN, and the proposed AIS was later implemented for Maximum Satisfiability [25]. The main challenge in finding a suitable metaheuristic for a Satisfiability representation is the structure of the logical rule. In this case, a logical rule that couples first order clauses with clauses of a different order is more difficult to satisfy than a systematic logical rule of higher order.

In practice, an effective metaheuristic algorithm must be able to cover a wide range of solutions and create several independent computations. The Election Algorithm (EA) is a class of socio-political metaheuristics [26] that combines the mechanisms of evolutionary algorithms and swarm optimization. It was introduced in [27], where the algorithm was developed by modelling the presidential election process. The algorithm involves multiple layers of optimization, namely, positive advertisement, negative advertisement, and coalition, which make it suitable as a learning algorithm. Similar to other metaheuristic algorithms such as GA and ABC, EA can be used for both continuous and discrete optimization. The whole process is governed by the campaign process, which improves the eligibility of the candidates (solutions of the constrained optimization problem) [28]. The algorithm performs local search within a partitioned search space. Owing to this way of improving the current solution, the iterative improvement in EA is reported to reduce the probability of converging to a nonimproving (suboptimal) solution. The capacity of EA to find the optimal solution in constrained optimization has led to more robust variants, such as the Chaotic EA. More recently, the authors of [29] proposed a novel Chaotic Election Algorithm for function optimization, evaluated on standard boundary-constrained benchmark functions. Although the Chaotic EA has been reported to be highly successful in finding the optimal solution, the capacity of the basic EA is still worth investigating. In this study, we adopt EA as the learning algorithm in an HNN to generate global minimum solutions for Random k Satisfiability (RANkSAT).

The contributions of the present paper are as follows: (1) a new logical rule, RAN2SAT, is proposed by considering first and second order logic; (2) RAN2SAT is implemented in the HNN by minimizing the cost function and the Lyapunov energy function; and (3) a new EA is proposed to optimize the learning phase of the HNN incorporating RAN2SAT. The effectiveness of EA with RAN2SAT is compared with that of the state-of-the-art GA. By constructing an effective HNN model, the proposed learning method will benefit logic mining [3] and other variants of HNN [30]. The rest of this paper is organized as follows. The new Random k Satisfiability representation is formally described in Section 2. In Section 3, the proposed RAN2SAT is embedded into HNN, and the structure of the cost function and the energy function for RAN2SAT is explained in detail. Section 4 presents the proposed EA and the existing GA for RAN2SAT. Section 5 reports the experimental setup, the performance metrics involved, and the general implementation of the network. The results and discussion are reported in Section 6. Finally, Section 7 concludes the paper with future directions.

2. The Proposed Random k Satisfiability (RANkSAT)

Random k Satisfiability (RANkSAT) is a class of nonsystematic Boolean logic representation. It consists of a random number of literals (which may be negated) per clause. RANkSAT is represented in Conjunctive Normal Form (CNF), where each clause contains a random number of variables connected by OR operators. Unlike the conventional kSAT [17] logical representation, the structure of RANkSAT is not restricted to a fixed clause length. Hence, the general formulation of RANkSAT is given as

$$P_{RANkSAT} = \bigwedge_{i=1}^{NC} C_i^{(k_i)}, \qquad k_i \in \{1, 2, \ldots, k\} \tag{1}$$

where NC is the number of clauses and $k_i$ is the order (length) of the i-th clause. Therefore, each clause in $P_{RANkSAT}$ is defined as

$$C_i^{(k_i)} = \bigvee_{j=1}^{k_i} z_{ij}, \qquad z_{ij} \in \{x_{ij}, \neg x_{ij}\} \tag{2}$$

where $x_{ij}$ is the j-th variable of the i-th clause. In particular, the first and second order clauses are denoted as $C_i^{(1)}$ and $C_i^{(2)}$, respectively. In this study, $P_{RANkSAT}$ is a CNF formula whose clauses are chosen uniformly and independently, without replacement, among all $2^k\binom{n}{k}$ nontrivial clauses of length k over n variables. Note that a variable $x$ exists in a clause $C_i^{(k_i)}$ if the clause contains either $x$ or $\neg x$, and the mapping $x \mapsto \{1, -1\}$ is called a logical interpretation. Following [3], the Boolean values of this mapping are expressed as 1 (TRUE) and −1 (FALSE). In theory, an example of a RANkSAT formula that mixes first and second order clauses is given in Equation (3). Because such a formula is in CNF, its outcome is 1 (TRUE) only when every clause is satisfied; an interpretation that leaves any clause unsatisfied yields −1 (FALSE). In this study, we investigate RAN2SAT, that is, RANkSAT restricted to first and second order clauses.
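To make the CNF semantics and the bipolar interpretation concrete, the following Python sketch evaluates a RAN2SAT formula, counts its satisfied clauses, and enumerates the 2^k · C(n, k) nontrivial clauses of length k from which random clauses would be drawn. The formula `P` is a hypothetical example, not the formula in Equation (3), and the `(variable, polarity)` literal encoding and all function names are illustrative assumptions.

```python
import itertools
import math
from typing import Dict, List, Tuple

# Hypothetical encoding: a literal is (variable, polarity), where polarity
# True means x and False means ¬x. A clause is a list of literals.
Literal = Tuple[str, bool]
Clause = List[Literal]


def clause_satisfied(clause: Clause, interp: Dict[str, int]) -> bool:
    """A clause (disjunction) is TRUE if any literal matches the bipolar value."""
    return any(interp[var] == (1 if pos else -1) for var, pos in clause)


def evaluate(formula: List[Clause], interp: Dict[str, int]) -> Tuple[int, int]:
    """Return (formula value in {1, -1}, number of satisfied clauses)."""
    satisfied = sum(clause_satisfied(c, interp) for c in formula)
    return (1 if satisfied == len(formula) else -1), satisfied


def all_clauses(variables: List[str], k: int) -> List[Clause]:
    """All nontrivial clauses of length k: k distinct variables, 2^k polarities."""
    clauses = []
    for chosen in itertools.combinations(variables, k):
        for pols in itertools.product([True, False], repeat=k):
            clauses.append(list(zip(chosen, pols)))
    return clauses


# Hypothetical RAN2SAT formula: (A ∨ ¬B) ∧ (¬C ∨ D) ∧ E
P = [[("A", True), ("B", False)], [("C", False), ("D", True)], [("E", True)]]

print(evaluate(P, {"A": 1, "B": 1, "C": 1, "D": -1, "E": 1}))   # (-1, 2)
print(evaluate(P, {"A": 1, "B": 1, "C": -1, "D": -1, "E": 1}))  # (1, 3)
print(len(all_clauses(list("ABCD"), 2)) == 2**2 * math.comb(4, 2))  # True
```

Random clauses could then be drawn without replacement with `random.sample(all_clauses(variables, k), m)`; the sketch leaves open whether clauses of different orders may share variables.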

3. RAN2SAT in a Hopfield Neural Network

The fundamental architecture of a Hopfield Neural Network (HNN) consists of discrete, interconnected bipolar neurons without any hidden neurons [31]. The synaptic weights are strictly symmetric ($W_{ij} = W_{ji}$), with no self-connections ($W_{ii} = 0$) among the interconnected neurons. Hence, the Content Addressable Memory (CAM) is studied as a dynamic storage system for the synaptic weights [12]. Given an initial vector that is mapped to the neuron states $S_i$, and without any noise intervention, the HNN converges to an equilibrium that corresponds to a minimum of its energy function [32]. Hence, the final state of the HNN corresponds to the solution of the combinatorial problem. The neurons in HNN are represented in bipolar form, $S_i \in \{1, -1\}$, and conform to the dynamics $S_i \rightarrow \operatorname{sgn}(h_i)$, where $h_i$ is the local field of neuron i. The general asynchronous updating rule of the HNN is given by:

$$S_i = \begin{cases} 1, & \text{if } \sum_{j=1, j \neq i}^{N} W_{ij} S_j \geq \xi \\ -1, & \text{otherwise} \end{cases} \tag{4}$$

where N is the number of neurons, $W_{ij}$ is the synaptic weight matrix of the HNN, which establishes the strength of the connection from neuron j to neuron i, and $\xi$ is a predetermined bias (threshold). In this study, the HNN is implemented as the central network in training $P_{RAN2SAT}$. The formalism of logic programming in HNN does not impose any restriction on the accepted type of clauses as long as the proposed propositional logic is satisfiable [33].
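A minimal sketch of the asynchronous updating rule in Equation (4), assuming a symmetric weight matrix with zero diagonal stored as nested Python lists. The function names, the random update order, and the stopping criterion (a full sweep in which no neuron changes) are illustrative choices, not details taken from the paper.

```python
import random
from typing import List


def local_field(W: List[List[float]], S: List[int], i: int) -> float:
    """Sum over j != i of W[i][j] * S[j]."""
    return sum(W[i][j] * S[j] for j in range(len(S)) if j != i)


def async_update(W: List[List[float]], S: List[int], xi: float = 0.0,
                 max_sweeps: int = 100, seed: int = 0) -> List[int]:
    """Update one neuron at a time (random order) until a sweep changes nothing."""
    rng = random.Random(seed)
    S = list(S)  # do not modify the caller's state
    order = list(range(len(S)))
    for _ in range(max_sweeps):
        changed = False
        rng.shuffle(order)
        for i in order:
            new = 1 if local_field(W, S, i) >= xi else -1
            if new != S[i]:
                S[i], changed = new, True
        if not changed:
            break
    return S


def is_fixed_point(W: List[List[float]], S: List[int], xi: float = 0.0) -> bool:
    """True if no single neuron would change under Equation (4)."""
    return all(S[i] == (1 if local_field(W, S, i) >= xi else -1)
               for i in range(len(S)))


W = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]  # hypothetical symmetric weights, zero diagonal
final = async_update(W, [1, -1, -1])
print(final, is_fixed_point(W, final))
```

Because the weights are symmetric with no self-connections, the asynchronous updates settle into a fixed point; which one is reached here depends on the update order.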

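$P_{RAN2SAT}$ can be embedded into the HNN by assigning each variable to a neuron $S_i$ in the defined cost function. The generalized cost function $E_{P_{RAN2SAT}}$ that governs the combination of HNN and $P_{RAN2SAT}$ is given as

$$E_{P_{RAN2SAT}} = \sum_{i=1}^{NC} \prod_{j=1}^{NV} L_{ij} \tag{5}$$

where NC and NV are the number of clauses and the number of variables in $P_{RAN2SAT}$, respectively, and the product is taken over the literals that appear in clause $C_i$. The inconsistency $L_{ij}$ of clause $C_i$ is given as:

$$L_{ij} = \begin{cases} \frac{1}{2}\left(1 - S_x\right), & \text{if the literal is } x \\ \frac{1}{2}\left(1 + S_x\right), & \text{if the literal is } \neg x \end{cases} \tag{6}$$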
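The cost function can be computed directly for a small formula. The sketch below assumes that the inconsistency of a literal is (1 − S)/2 for x and (1 + S)/2 for ¬x, so that it equals 1 exactly when the literal is false; a clause then contributes 1 to the cost when it is violated and 0 otherwise. The formula and the literal encoding are hypothetical.

```python
from typing import Dict, List, Tuple

Literal = Tuple[str, bool]  # hypothetical encoding: (variable, True for x / False for ¬x)


def inconsistency(lit: Literal, S: Dict[str, int]) -> float:
    """1 if the literal is FALSE under the bipolar state S, 0 if TRUE."""
    var, positive = lit
    return 0.5 * (1 - S[var]) if positive else 0.5 * (1 + S[var])


def cost(formula: List[List[Literal]], S: Dict[str, int]) -> float:
    """Sum over clauses of the product of literal inconsistencies."""
    total = 0.0
    for clause in formula:
        product = 1.0
        for lit in clause:
            product *= inconsistency(lit, S)
        total += product  # 1 for an unsatisfied clause, 0 otherwise
    return total


# Hypothetical RAN2SAT formula: (A ∨ ¬B) ∧ (¬C ∨ D) ∧ E
P = [[("A", True), ("B", False)], [("C", False), ("D", True)], [("E", True)]]
print(cost(P, {"A": 1, "B": 1, "C": 1, "D": -1, "E": 1}))   # 1.0 (one clause violated)
print(cost(P, {"A": 1, "B": 1, "C": -1, "D": -1, "E": 1}))  # 0.0 (all satisfied)
```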

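The value of $E_{P_{RAN2SAT}}$ is proportional to the number of "inconsistencies" of the clauses $C_i$: the more clauses are unsatisfied, the higher the value of $E_{P_{RAN2SAT}}$. The minimum of $E_{P_{RAN2SAT}}$ (zero when every clause is satisfied) corresponds to the "most consistent" selection of $S_i$. Hence, the updating rule for $P_{RAN2SAT}$ in HNN is defined as:

$$h_i(t) = \sum_{j=1, j \neq i}^{N} W_{ij}^{(2)} S_j + W_i^{(1)} \tag{7}$$

$$S_i(t+1) = \begin{cases} 1, & \text{if } h_i(t) \geq 0 \\ -1, & \text{if } h_i(t) < 0 \end{cases} \tag{8}$$

where $W_{ij}^{(2)}$ and $W_i^{(1)}$ are the second and first order synaptic weights of the embedded $P_{RAN2SAT}$. Equations (7) and (8) are important to ensure that the neurons $S_i$ always converge to an equilibrium. The quality of the retrieved $S_i$ can be evaluated by employing the Lyapunov energy function $H_{P_{RAN2SAT}}$, defined as:

$$H_{P_{RAN2SAT}} = -\frac{1}{2}\sum_{i=1}^{N}\sum_{j=1, j \neq i}^{N} W_{ij}^{(2)} S_i S_j - \sum_{i=1}^{N} W_i^{(1)} S_i \tag{9}$$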
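The Wan Abdullah method obtains the weights by comparing the expanded cost function with the energy function. As a sketch, assume the inconsistency (1 − σS)/2 with σ = +1 for x and σ = −1 for ¬x. A second order clause then expands to (1 − σ_a S_a − σ_b S_b + σ_a σ_b S_a S_b)/4, so matching terms against the energy function gives W_ab = −σ_a σ_b/4 and adds σ_a/4 to W_a; a first order clause (1 − σ_a S_a)/2 adds σ_a/2 to W_a. With these weights, the energy equals the cost minus (NC2/4 + NC1/2). The function names and the formula below are hypothetical.

```python
from typing import Dict, List, Tuple

Literal = Tuple[str, bool]  # hypothetical encoding: (variable, True for x / False for ¬x)


def wan_abdullah_weights(formula: List[List[Literal]]):
    """Return (W2, W1) by matching the expanded cost with the energy function."""
    W2: Dict[Tuple[str, str], float] = {}
    W1: Dict[str, float] = {}
    for clause in formula:
        sig = [(var, 1 if pos else -1) for var, pos in clause]
        if len(sig) == 1:  # cost (1 - s_a S_a) / 2
            (a, sa), = sig
            W1[a] = W1.get(a, 0.0) + sa / 2
        elif len(sig) == 2:  # cost (1 - s_a S_a - s_b S_b + s_a s_b S_a S_b) / 4
            (a, sa), (b, sb) = sig
            for pair in ((a, b), (b, a)):  # symmetric, no self-connection
                W2[pair] = W2.get(pair, 0.0) - sa * sb / 4
            W1[a] = W1.get(a, 0.0) + sa / 4
            W1[b] = W1.get(b, 0.0) + sb / 4
        else:
            raise ValueError("RAN2SAT has only first and second order clauses")
    return W2, W1


def lyapunov_energy(W2, W1, S: Dict[str, int]) -> float:
    """H = -1/2 * sum_{i != j} W_ij S_i S_j - sum_i W_i S_i."""
    second = sum(w * S[i] * S[j] for (i, j), w in W2.items())
    first = sum(w * S[i] for i, w in W1.items())
    return -0.5 * second - first


# Hypothetical RAN2SAT formula: (A ∨ ¬B) ∧ (¬C ∨ D) ∧ E
P = [[("A", True), ("B", False)], [("C", False), ("D", True)], [("E", True)]]
W2, W1 = wan_abdullah_weights(P)
print(lyapunov_energy(W2, W1, {"A": 1, "B": 1, "C": -1, "D": -1, "E": 1}))  # -1.0
```

Each violated clause raises the energy by exactly 1 above its minimum, which is why the satisfied state above sits at −(2/4 + 1/2) = −1.0.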

The structure of Equation (9) is valid for the RAN2SAT logical representation, which contains only first and second order clauses. Under the updating rule in Equation (7), the energy $H_{P_{RAN2SAT}}$ always decreases monotonically. The value of $H_{P_{RAN2SAT}}$ indicates the energy relative to the absolute minimum energy $H_{P_{RAN2SAT}}^{min}$ attainable by $P_{RAN2SAT}$ [11]. Hence, $H_{P_{RAN2SAT}}^{min}$ can be computed using the following formula:

$$H_{P_{RAN2SAT}}^{min} = -\left(\frac{NC^{(2)}}{4} + \frac{NC^{(1)}}{2}\right) \tag{10}$$

where $NC^{(2)}$ and $NC^{(1)}$ are the numbers of second and first order clauses in $P_{RAN2SAT}$, respectively. Hence, the quality of the final neuron state can be properly examined by checking the following condition:

$$\left| H_{P_{RAN2SAT}} - H_{P_{RAN2SAT}}^{min} \right| \leq Tol \tag{11}$$

where Tol is the predetermined tolerance value. Note that if the final state of the embedded $P_{RAN2SAT}$ does not satisfy Equation (11), it is trapped in a local minimum solution. It should be mentioned that $W_{ij}^{(2)}$ and $W_i^{(1)}$ can be effectively obtained using the Wan Abdullah method [11], whereas Hebbian learning was reported to produce oscillating neuron states that result in a suboptimal value of $H_{P_{RAN2SAT}}$. In this paper, the implementation of $P_{RAN2SAT}$ in HNN is denoted as the HNN-RAN2SAT model.
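Under the Wan Abdullah weights, each satisfied second order clause contributes −1/4 to the energy and each satisfied first order clause −1/2, so the global minimum is H_min = −(NC2/4 + NC1/2). The sketch below applies the tolerance test |H − H_min| ≤ Tol to a batch of final energies and reports the fraction of retrieved states that reached the global minimum. The function names, the default tolerance of 0.001, and the energy values are assumptions for illustration.

```python
from typing import Iterable


def minimum_energy(n_second: int, n_first: int) -> float:
    """H_min = -(NC2 / 4 + NC1 / 2) for NC2 second and NC1 first order clauses."""
    return -(n_second / 4 + n_first / 2)


def reached_global_minimum(h_final: float, n_second: int, n_first: int,
                           tol: float = 1e-3) -> bool:
    """Tolerance test: |H - H_min| <= Tol."""
    return abs(h_final - minimum_energy(n_second, n_first)) <= tol


def global_minimum_fraction(energies: Iterable[float], n_second: int,
                            n_first: int, tol: float = 1e-3) -> float:
    """Fraction of retrieved final states that pass the tolerance test."""
    energies = list(energies)
    hits = sum(reached_global_minimum(h, n_second, n_first, tol) for h in energies)
    return hits / len(energies)


# Hypothetical final energies for a formula with NC2 = 2 and NC1 = 1
print(minimum_energy(2, 1))                                     # -1.0
print(global_minimum_fraction([-1.0, 0.0, -1.0, 1.0], 2, 1))    # 0.5
```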

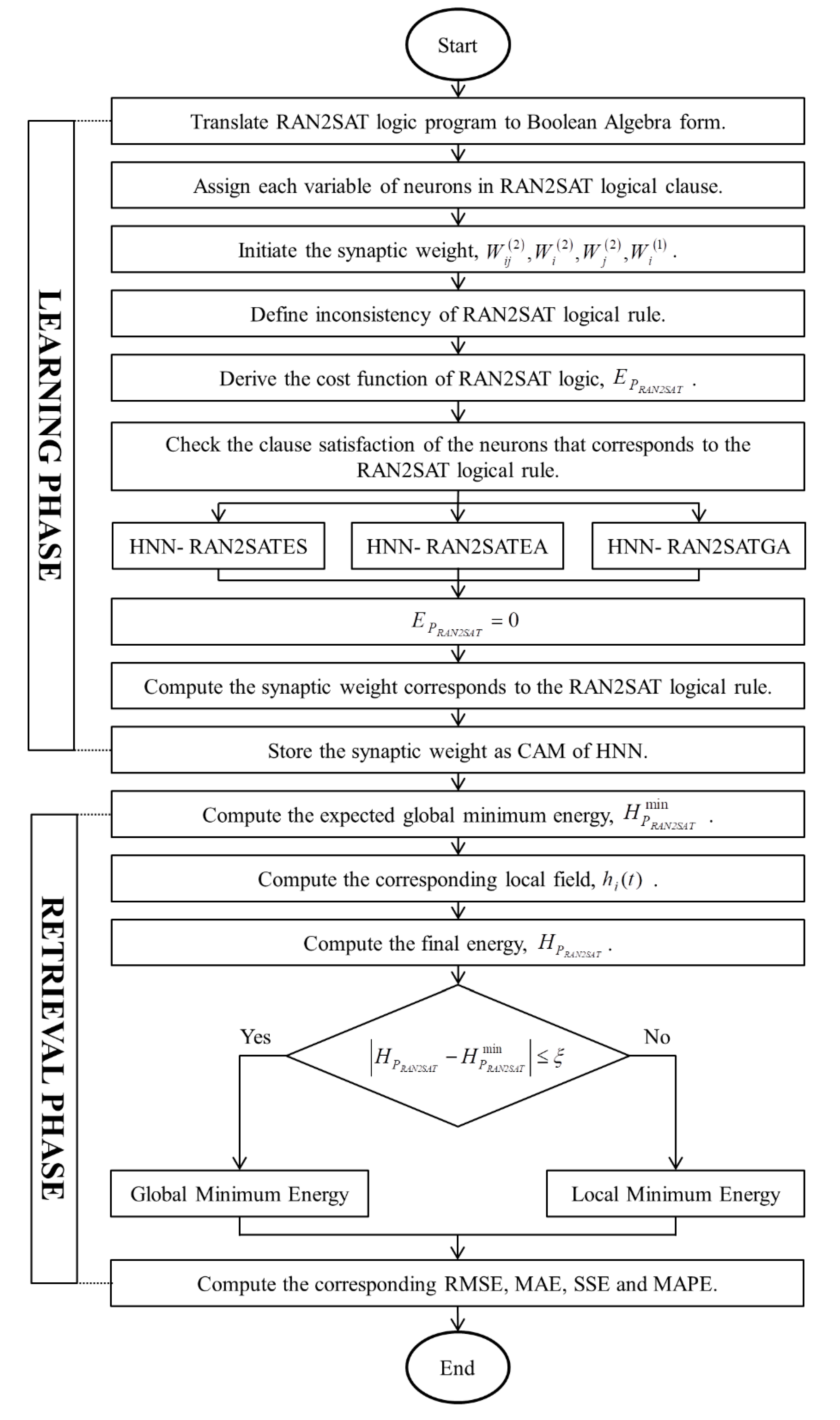

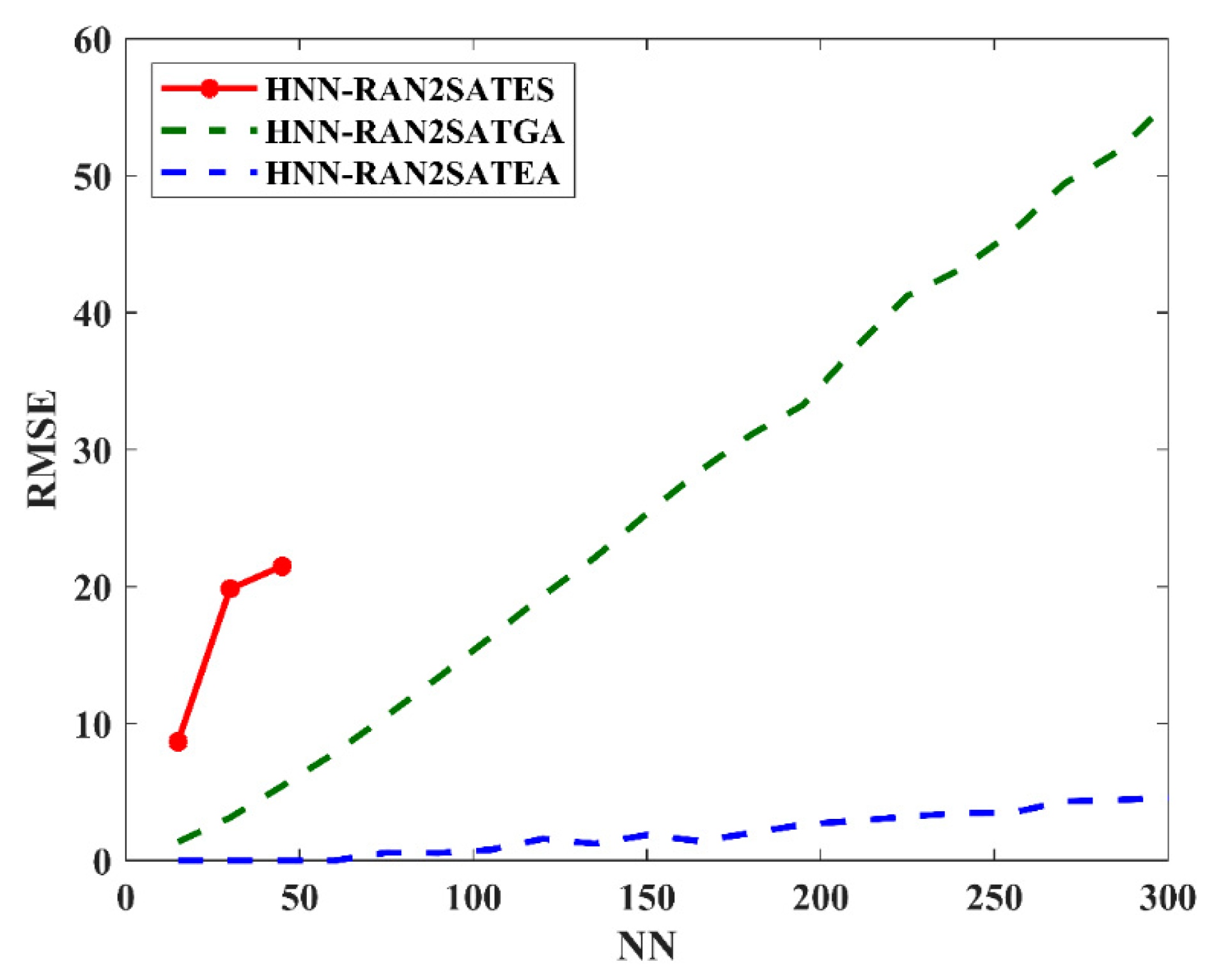

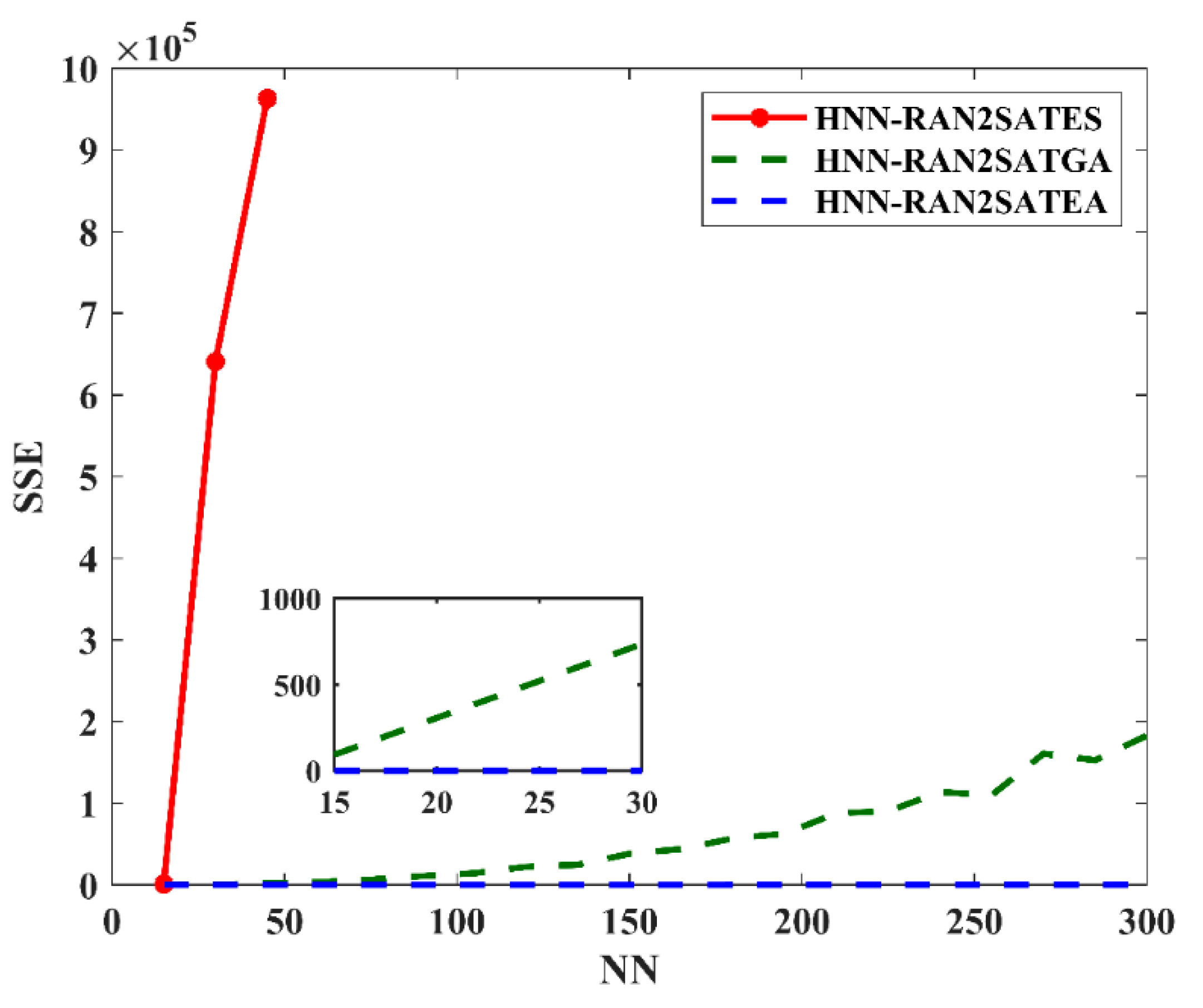

6. Results and Discussion

Figures 2, 3, 4, and 5 demonstrate the performance of HNN-RAN2SAT in terms of RMSE, MAE, SSE, and MAPE, respectively. Based on Figures 2 to 5, the RMSE, MAE, SSE, and MAPE values for HNN-RAN2SAT generally increase with the number of neurons. This increase in error reflects the growing complexity of the RAN2SAT neuron states. Based on the RMSE and MAE evaluations during the learning phase, the proposed method, HNN-RAN2SATEA, achieves errors 1200% lower than those of HNN-RAN2SATGA. The main reason is that the optimization layers in EA partition the solution space more effectively, so that a satisfying interpretation can be reached in fewer iterations. According to the SSE analysis, HNN-RAN2SATEA recorded a lower SSE, about 2150% lower than that of HNN-RAN2SATGA. This demonstrates the capability of EA to reduce the sensitivity of the model to error by minimizing the number of iterations.
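The four error measures can be illustrated with a short sketch. It assumes the standard forms of RMSE, MAE, SSE, and MAPE computed between a target value and the value obtained in each run (for instance, the maximum fitness, i.e., the number of clauses, versus the fitness reached); the exact quantities and normalizations used in the experiments may differ, and the numbers below are made up.

```python
import math
from typing import Dict, Sequence


def error_metrics(target: Sequence[float], obtained: Sequence[float]) -> Dict[str, float]:
    """Standard RMSE, MAE, SSE and MAPE (%) between target and obtained values."""
    if len(target) != len(obtained) or not target:
        raise ValueError("target and obtained must be non-empty and equally long")
    n = len(target)
    diffs = [t - o for t, o in zip(target, obtained)]
    sse = sum(d * d for d in diffs)
    return {
        "RMSE": math.sqrt(sse / n),
        "MAE": sum(abs(d) for d in diffs) / n,
        "SSE": sse,
        # MAPE assumes every target value is nonzero
        "MAPE": 100.0 / n * sum(abs(d / t) for d, t in zip(diffs, target)),
    }


# Made-up example: target fitness 3 (all clauses satisfied) in four runs
print(error_metrics([3, 3, 3, 3], [3, 2, 3, 1]))
```

SSE grows with the number of runs, whereas RMSE and MAE are averaged, which is one reason the relative differences reported for SSE and for RMSE/MAE need not match.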

In addition, the MAPE of HNN-RAN2SATEA is 26% lower than that of HNN-RAN2SATGA; MAPE expresses the error of each model as a percentage. To sum up, Figures 2 to 5 show that HNN-RAN2SATEA retrieves more accurate final states than HNN-RAN2SATGA and HNN-RAN2SATES. Meanwhile, ES employs an 'exhaustive' trial and error search and only functions up to a limited number of neurons. This is due to the nature of ES, which suffers from neuron oscillation and computational burden, especially for inconsistent interpretations as the number of neurons increases. Thus, the RMSE, MAE, SSE, and MAPE analyses for HNN-RAN2SATES are stopped at that number of neurons because of the ineffectiveness of the learning algorithm; beyond it, the solutions were trapped at local minima due to neuron oscillations. From Figures 2 to 5, it is clear that HNN-RAN2SATEA outperformed the other two models, HNN-RAN2SATGA and HNN-RAN2SATES, in optimizing the global minimum solutions based on the RAN2SAT logical representation.

The effectiveness of HNN-RAN2SATEA can be explained from two perspectives: the logical representation (RAN2SAT) and the learning algorithm (EA). The randomized structure of RAN2SAT diversifies the logical structure during the learning phase, which in turn diversifies the final states produced by the model. In addition, dynamic exchanges of solutions occur in EA, so the chance of attaining diversified solutions is much higher. Hence, HNN-RAN2SATEA generates more variations of clauses that can be satisfied. In contrast, the nature of ES in HNN-RAN2SATES causes problems for inconsistent interpretations. Additionally, the mechanism of GA yields lower diversification of solutions, as the early solutions are typically nonfit and require optimization operators such as cloning, crossover, and mutation before they satisfy the formula. The use of EA deals effectively with the higher learning complexity of $P_{RAN2SAT}$ as the number of neurons increases during the simulation. This indicates the robustness of the global and local search procedures in HNN-RAN2SATEA.

The capability of HNN-RAN2SATEA to generate global solutions is related to the effectiveness of the global and local search of EA, which acts as the learning algorithm. The local search in EA is more promising during the early stage than that of GA and ES. This implies that the better optimization operators in EA facilitate the learning process for the logical representation. Leader selection (candidate eligibility) requires an optimization operator that accelerates the process of obtaining the best leader (solution).

HNN-RAN2SATEA employs a more diversified optimization scheme consisting of three layers to improve the solution within each partition of the solution space [27]. The first optimization layer, known as positive advertisement, improves the solutions within each party. Second, negative advertisement allows a party to take voters from another party. Third, coalition has a strong optimization impact in obtaining the most voters (more solutions), which in our case means attaining global solutions. The coalition process forms a unified party with greater eligibility within a shorter timeframe [28]. These features of EA allow HNN-RAN2SAT to reduce the number of iterations needed during the learning phase, ensuring minimum error at the end of the simulations.
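The three layers can be illustrated with a simplified, discrete election-style search over bipolar neuron states, in which the eligibility of an individual is its number of satisfied clauses. This is a sketch, not the authors' EA: the four parties, the bit-copying form of positive advertisement, the single random flip, moving the weakest voter during negative advertisement, and merging the two weakest parties every 25 iterations are all assumptions chosen for brevity.

```python
import random
from typing import List, Tuple

Literal = Tuple[int, bool]  # hypothetical encoding: (neuron index, True for x / False for ¬x)
Formula = List[List[Literal]]


def eligibility(formula: Formula, S: List[int]) -> int:
    """Number of satisfied clauses; equals len(formula) exactly when E_P = 0."""
    return sum(any(S[v] == (1 if pos else -1) for v, pos in clause)
               for clause in formula)


def election_search(formula: Formula, n_vars: int, pop_size: int = 40,
                    n_parties: int = 4, max_iter: int = 200,
                    copy_rate: float = 0.5, coalition_period: int = 25,
                    seed: int = 0) -> Tuple[List[int], int]:
    """Simplified election-style search; returns (best state, iterations used)."""
    if pop_size < n_parties:
        raise ValueError("need at least one individual per party")
    rng = random.Random(seed)

    def fit(s: List[int]) -> int:
        return eligibility(formula, s)

    target = len(formula)
    pop = [[rng.choice([1, -1]) for _ in range(n_vars)] for _ in range(pop_size)]
    parties = [pop[i::n_parties] for i in range(n_parties)]  # partitioned space

    for it in range(max_iter):
        for party in parties:
            party.sort(key=fit, reverse=True)
            candidate = party[0]  # the most eligible member leads the party
            if fit(candidate) == target:
                return candidate, it
            # Positive advertisement: each voter copies bits from its candidate
            # and flips one random bit (an assumption, for diversity); the move
            # is kept only if eligibility does not drop.
            for k in range(1, len(party)):
                voter = party[k]
                moved = [c if rng.random() < copy_rate else v
                         for v, c in zip(voter, candidate)]
                j = rng.randrange(n_vars)
                moved[j] = -moved[j]
                if fit(moved) >= fit(voter):
                    party[k] = moved
        # Negative advertisement: the strongest party takes the weakest voter
        # of the weakest party.
        parties.sort(key=lambda p: max(map(fit, p)), reverse=True)
        weakest = parties[-1]
        if len(parties) > 1 and len(weakest) > 1:
            weakest.sort(key=fit)
            parties[0].append(weakest.pop(0))
        # Coalition: periodically merge the two weakest parties into one.
        if len(parties) > 1 and (it + 1) % coalition_period == 0:
            parties.append(parties.pop() + parties.pop())

    best = max((s for p in parties for s in p), key=fit)
    return best, max_iter


# Hypothetical RAN2SAT formula over 5 neurons: (S0 ∨ ¬S1) ∧ (¬S2 ∨ S3) ∧ S4
P = [[(0, True), (1, False)], [(2, False), (3, True)], [(4, True)]]
best, iters = election_search(P, n_vars=5)
print(best, eligibility(P, best) == len(P), iters)
```

Keeping several parties that are improved separately mirrors the partitioned solution space discussed in the text, while the coalition step gradually concentrates the population on the most eligible candidates.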

The systematic partition of the solution space in HNN-RAN2SATEA improves the global and local search for global solutions, because it allows the model to search effectively within every defined space. Specifically, the solution space of HNN-RAN2SATEA is divided into four spaces. In contrast, HNN-RAN2SATGA adopts a single partition of the overall solution space, which results in nonfit solutions during the early stages of the model. Similarly, HNN-RAN2SATES operates on only one solution space, and its search relies on a trial and error mechanism, which requires more iterations to obtain the global solution.

EA was implemented only as the learning algorithm, without direct intervention in the retrieval phase. A different approach could be employed to optimize the retrieval phase of HNN-RAN2SATEA. Different types of Hopfield Neural Networks, such as the Mutation Hopfield Neural Network [30], the Mean Field Theory Hopfield Network [46], the Boltzmann Hopfield Network [47], and the Kernel Hopfield Network [48], drive local minimum solutions toward the global minimum solution in different ways. More performance metrics can be investigated to validate our results. Similarity indices, such as Jaccard's Index [49], the Sokal-Sneath 2 Index [50], and the Variation Index [50], can be employed to assess the similarity between the final states obtained by the model. In addition, the Symmetric Mean Absolute Percentage Error (SMAPE) [51], the Median Absolute Percentage Error [48], the fitness energy landscape [52], computation time [53], and specificity analysis [54] can be adopted.