1. Introduction

With the rapid development of the Industrial Internet of Things (IIoT), modern industrial systems, such as thermal power plants, are equipped with thousands of sensors to monitor operational status in real time [1, 2]. The reliability of these sensors is the cornerstone of advanced process control and condition-based maintenance. However, working in harsh industrial environments characterized by high temperatures and electromagnetic interference, sensors are prone to various malfunctions, including hard faults (e.g., short circuits) and soft faults (e.g., gradual drifts) [3]. If undetected, these anomalies can lead to suboptimal system performance, severe safety hazards, and catastrophic economic losses [4]. Therefore, developing highly accurate and robust multivariate sensor anomaly detection methods has become a crucial research focus in industrial prognostics and health management (PHM) [5]. Because sensors serve as the ‘antennae’ through which control systems perceive the external environment, their degradation not only undermines the stability of closed-loop control but can also trigger cascading system failures [6]. Early fault detection typically relied on quantitative model-based methods [7]; however, establishing accurate mathematical and physical models has become exceedingly difficult in modern complex thermodynamic systems.

Traditionally, statistical multivariate methods, such as Principal Component Analysis (PCA) and Support Vector Machines (SVMs), have been widely utilized for industrial process monitoring [8, 9]. However, these shallow learning methods often struggle to capture the highly non-linear dynamics of complex thermodynamic equipment like boilers and turbines [10].

Compared to abrupt hard faults, incipient soft faults, such as gradual sensor drift, are notoriously difficult to detect because their initial deviation amplitudes are often submerged in background noise [11]. Furthermore, sensors in complex industrial equipment (e.g., boilers and turbines) are not isolated; they are governed by underlying thermodynamic and physical processes [12]. A slight drift in one sensor (e.g., main steam temperature) will inevitably cause corresponding fluctuations in physically correlated variables (e.g., desuperheating water flow or exhaust pressure). Accurately capturing these dynamic physical correlations under high-noise conditions is the key to identifying early-stage soft faults [13].

In recent years, deep learning-based reconstruction models, particularly Autoencoders (AEs) and their sequence-to-sequence variants like Long Short-Term Memory Autoencoders (LSTM-AE), have achieved remarkable success in time-series anomaly detection [14, 15]. Despite their popularity, standard LSTM-AE models face two major limitations when applied to real-world industrial data. First, traditional architectures often directly pass high-dimensional hidden states to the decoder, making them prone to identity mapping and overfitting to environmental noise, thus generating high false alarm rates [16]. Second, conventional autoencoders rely solely on minimizing the Mean Squared Error (MSE) during training. MSE focuses exclusively on point-wise numerical differences, completely ignoring the structural synchronization and physical coupling relationships among multivariable temporal signals [17].

To bridge these gaps, we propose a Physics-Coupled Deep Long Short-Term Memory Autoencoder (PC-Deep-LSTM-AE) for robust industrial sensor fault detection. First, to prevent the model from overfitting to noise, we design an explicit non-linear bottleneck layer combined with Layer Normalization [18]. This structural design acts as a powerful information filter, forcing the deep LSTM network to discard high-frequency disturbances and extract only the essential steady-state temporal patterns. More importantly, inspired by the recent advances in Physics-Informed Neural Networks (PINNs) [19, 20], we design a novel Physics-Coupling Loss (PCC Loss) that integrates the Pearson Correlation Coefficient as a physical constraint penalty alongside the traditional MSE. By explicitly regularizing the covariance matrix of the reconstructed signals, the proposed loss function ensures that the model rigorously adheres to the inter-variable physical dependencies inherent in the normal operating state.

The main contributions of this paper are summarized as follows:

We propose PC-Deep-LSTM-AE, an advanced deep reconstruction architecture that integrates an explicit non-linear bottleneck and layer normalization, significantly enhancing feature extraction robustness against severe industrial noise.

We innovatively introduce a Physics-Coupling Loss (PCC Loss) for unsupervised anomaly detection. This physics-informed constraint forces the model to learn the dynamic inter-variable physical correlations, making it highly sensitive to incipient drift faults that violate normal thermodynamic coupling.

We conduct comprehensive experiments on a real-world thermal power plant dataset under intensive noise injection. The results show that our method achieves superior detection precision and AUC-ROC compared to state-of-the-art baselines (e.g., Vanilla LSTM-AE, GRU-AE, Bi-LSTM-AE, and CNN-AE), while also providing excellent interpretability for root-cause analysis.

3. Methodology

In this section, we present the detailed architecture of the proposed Physics-Coupled Deep Long Short-Term Memory Autoencoder (PC-Deep-LSTM-AE). As illustrated in Figure 1, the overall framework is driven by two synergistic mechanisms: a robust feature extraction network and a physics-informed constraining module. The extraction network employs a deep LSTM encoder-decoder architecture connected by an explicit non-linear bottleneck (comprising Layer Normalization and a Linear projection layer) to filter out high-frequency environmental noise. During gradient-based optimization, the model is guided by a joint objective function that minimizes both the numerical Mean Squared Error $\mathcal{L}_{MSE}$ and a novel Physics-Coupling Loss $\mathcal{L}_{PCC}$. Specifically, $\mathcal{L}_{PCC}$ acts as a thermodynamic constraint by calculating the covariance matrices and penalizing the structural discrepancies between the original and reconstructed Pearson correlation coefficients, thereby ensuring the model adheres to the inherent physical dynamics of the industrial system.

3.1. Problem Formulation

In modern industrial systems, sensors continuously generate multivariate time-series data. Let $X = \{x_1, x_2, \ldots, x_T\} \in \mathbb{R}^{T \times N}$ denote the collected dataset, where $T$ is the total number of timestamps and $N$ is the number of sensor variables. To capture the temporal dependencies, we employ a sliding window approach to segment the continuous data into sequences of fixed length $L$. The $i$-th input sequence is defined as $X_i = \{x_i, x_{i+1}, \ldots, x_{i+L-1}\} \in \mathbb{R}^{L \times N}$. The sliding window technique is a standard and effective practice in time-series analysis to transform continuous data streams into sequential inputs suitable for deep learning models [28].

The objective of our unsupervised autoencoder model is to learn a mapping function $f: X_i \mapsto \hat{X}_i$, such that the reconstructed sequence $\hat{X}_i$ is as close to the input $X_i$ as possible under normal operating conditions. Anomalies are subsequently detected by measuring the reconstruction deviation.
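The windowing step described above can be illustrated with a minimal NumPy sketch. The helper name `sliding_windows` and the toy array sizes are assumptions made for illustration; only the window length of 30 and the strides of 1 (training) and 30 (evaluation) come from the experimental setup reported later in the paper.

```python
import numpy as np

def sliding_windows(data, length, stride=1):
    """Segment a (T, N) array into windows of shape (length, N)."""
    data = np.asarray(data, dtype=float)
    if data.ndim != 2:
        raise ValueError("expected a 2-D array of shape (T, N)")
    n_steps = data.shape[0]
    if n_steps < length:
        # Not enough timestamps for even one window.
        return np.empty((0, length, data.shape[1]))
    starts = range(0, n_steps - length + 1, stride)
    return np.stack([data[s:s + length] for s in starts])

# Toy data: T = 100 timestamps, N = 4 sensors (made-up sizes).
X = np.arange(400, dtype=float).reshape(100, 4)

train_windows = sliding_windows(X, length=30, stride=1)   # overlapping
eval_windows = sliding_windows(X, length=30, stride=30)   # non-overlapping
```

With a stride of 1 the toy series yields 71 heavily overlapping windows, while a stride of 30 yields only 3 disjoint ones, which is why the two settings are kept separate for training and evaluation.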

3.2. Deep LSTM Encoder-Decoder Architecture

To effectively model the complex long-term temporal dependencies inherent in industrial processes, we adopt a deep LSTM network as the backbone.

Encoder: The encoder takes the sliding window sequence $X_i$ as input. We utilize a two-layer LSTM structure to extract hierarchical temporal features. For a given time step $t$, the hidden state $h_t$ and cell state $c_t$ are updated through the standard LSTM gating mechanisms (forget, input, and output gates). The output of the final LSTM layer represents the high-dimensional temporal feature of the sequence.

Decoder: Symmetrically, the decoder aims to reconstruct the original input sequence from the compressed latent representation. It expands the latent vector back to the original hidden dimension and employs a two-layer LSTM followed by a fully connected (Linear) layer to output the reconstructed sequence $\hat{X}_i$.

3.3. Explicit Non-Linear Bottleneck and Layer Normalization

A common flaw in standard seq2seq autoencoders is their tendency to learn an identity mapping, which leads to poor generalization and high sensitivity to background noise. To overcome this, we design an explicit non-linear bottleneck.

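Specifically, after the encoder’s LSTM layers, we first apply Layer Normalization (LayerNorm) to the hidden states. LayerNorm stabilizes the internal hidden dynamics across different sensor features, preventing internal covariate shifts and accelerating convergence:

$$\mathrm{LN}(h) = \gamma \odot \frac{h - \mu_h}{\sqrt{\sigma_h^2 + \epsilon}} + \beta$$

where $\mu_h$ and $\sigma_h^2$ are the mean and variance of the hidden vector $h$ computed over its feature dimension, $\gamma$ and $\beta$ are learnable scale and shift parameters, and $\epsilon$ is a small constant for numerical stability.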

Following normalization, the features are passed through a fully connected projection layer that acts as an information bottleneck, forcibly reducing the feature dimension by half (e.g., from 128 to 64). A Leaky-ReLU activation function is then applied to introduce non-linearity. Compared to the standard ReLU, Leaky-ReLU effectively mitigates the ‘dying ReLU’ problem, thereby maintaining a more stable gradient flow during the deep network training [29]. This explicit compression forces the network to discard high-frequency environmental noise and retain only the most robust, underlying steady-state physical representations.
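The bottleneck path (LayerNorm, linear projection from 128 to 64, Leaky-ReLU) can be sketched as a plain NumPy forward pass. This is only an illustration under stated assumptions: the function names, the random stand-in for the encoder output, the weight initialization, and the negative slope of 0.01 are not taken from the paper, and the actual model is implemented in PyTorch with learned parameters.

```python
import numpy as np

def layer_norm(h, gain, bias, eps=1e-5):
    """Normalize each hidden vector over its feature dimension."""
    mean = h.mean(axis=-1, keepdims=True)
    var = h.var(axis=-1, keepdims=True)
    return gain * (h - mean) / np.sqrt(var + eps) + bias

def leaky_relu(x, negative_slope=0.01):
    """Keep positive values, scale negative values by a small slope (assumed 0.01)."""
    return np.where(x > 0, x, negative_slope * x)

def bottleneck(h, params):
    """LayerNorm -> linear projection to half the width -> Leaky-ReLU."""
    normed = layer_norm(h, params["gain"], params["bias"])
    projected = normed @ params["W"] + params["b"]
    return leaky_relu(projected)

rng = np.random.default_rng(0)
hidden_dim, latent_dim = 128, 64
params = {
    "gain": np.ones(hidden_dim),
    "bias": np.zeros(hidden_dim),
    # Random weights stand in for learned ones (illustrative only).
    "W": rng.normal(scale=hidden_dim ** -0.5, size=(hidden_dim, latent_dim)),
    "b": np.zeros(latent_dim),
}

# Eight fake encoder outputs with a non-zero mean and non-unit scale.
h = rng.normal(loc=3.0, scale=2.0, size=(8, hidden_dim))
z = bottleneck(h, params)
```

Because the projection halves the width, the decoder only ever sees a 64-dimensional summary of each window, which is the mechanism the text relies on to discourage identity mapping.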

3.4. Physics-Coupling Loss Function (PCC Loss)

The core innovation of our proposed model is the Physics-Coupling Loss function. Traditional autoencoders rely solely on the Mean Squared Error (MSE), defined as $\mathcal{L}_{MSE} = \frac{1}{LN} \sum_{t=1}^{L} \sum_{j=1}^{N} (x_{t,j} - \hat{x}_{t,j})^2$, which only minimizes point-wise numerical deviations. However, variables in industrial equipment (e.g., temperature, pressure, flow rate) are physically coupled.

To ensure the reconstructed signals adhere to the same physical covariance structure as the normal data, we introduce a penalty based on the Pearson Correlation Coefficient (PCC). Let $R_X$ and $R_{\hat{X}}$ represent the $N \times N$ correlation matrices of the input sequence $X_i$ and the reconstructed sequence $\hat{X}_i$ along the feature dimension, respectively. The PCC loss is formulated to minimize the discrepancy between these two correlation matrices:

The final objective function jointly optimizes the numerical reconstruction accuracy and the physical structure similarity:

$$\mathcal{L}_{total} = \lambda_1 \mathcal{L}_{MSE} + \lambda_2 \mathcal{L}_{PCC}$$

where $\lambda_1$ and $\lambda_2$ are hyper-parameters balancing the two terms. In this study, they were empirically set to 1.0 and 0.5, respectively, based on a grid search over the validation set to maximize the F1-score. It is worth noting the statistical reliability of calculating the correlation matrix within a sliding window. With a window length of $L = 30$ and $N$ variables, the sample size (30) strictly exceeds the feature dimension, so the sample covariance matrix is generally full-rank and numerically stable. Physically, a 30-step window (representing 5 h of continuous operation at a 10 min sampling interval) is sufficient to capture the localized thermodynamic steady-state correlations without being overly sensitive to transient noise.
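The correlation penalty and the joint objective described above can be sketched in NumPy as follows. The exact discrepancy measure is an assumption here: the sketch uses the mean squared difference between the two correlation matrices, which is one plausible choice rather than the confirmed formulation. The function names and the toy three-channel signal are likewise illustrative; the weights 1.0 and 0.5 are the values reported in the text.

```python
import numpy as np

def correlation_matrix(window, eps=1e-8):
    """Pearson correlation between the N columns of an (L, N) window."""
    centered = window - window.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / (window.shape[0] - 1)
    std = np.sqrt(np.diag(cov))
    # eps guards against division by zero for constant channels.
    return cov / (np.outer(std, std) + eps)

def pcc_loss(x, x_hat):
    """Assumed form: mean squared difference of the two correlation matrices."""
    diff = correlation_matrix(x) - correlation_matrix(x_hat)
    return float(np.mean(diff ** 2))

def joint_loss(x, x_hat, w_mse=1.0, w_pcc=0.5):
    """Weighted sum of point-wise MSE and the correlation penalty."""
    mse = float(np.mean((x - x_hat) ** 2))
    return w_mse * mse + w_pcc * pcc_loss(x, x_hat)

rng = np.random.default_rng(0)
L, N = 30, 3
base = rng.normal(size=(L, 1))
# Three strongly coupled toy channels plus a little noise.
x = np.hstack([base, 0.8 * base, -0.5 * base]) + 0.05 * rng.normal(size=(L, N))

# Shuffle channel 0 in time: its values are unchanged, its coupling is destroyed.
x_decoupled = x.copy()
x_decoupled[:, 0] = rng.permutation(x[:, 0])

perfect = joint_loss(x, x)
decoupled_penalty = pcc_loss(x, x_decoupled)
```

In a training loop the same computation would be written with differentiable tensor operations so that gradients flow through the correlation matrices; the NumPy version only shows what quantity is being penalized.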

3.5. Anomaly Scoring and Dynamic Thresholding

During the inference phase, the anomaly score for a new observation is computed based on its reconstruction error. For the sequence at time $t$, the anomaly score $S_t$ is calculated as the average squared error across all variables.

Instead of using a hard-coded threshold, we employ a statistical dynamic thresholding strategy. Using a purely normal validation set, we calculate the mean $\mu$ and standard deviation $\sigma$ of the anomaly scores. The threshold $\tau$ is determined according to the empirical $3\sigma$-rule:

$$\tau = \mu + 3\sigma$$

If the anomaly score satisfies $S_t > \tau$, an anomaly is flagged. This adaptive thresholding ensures robust detection tailored to the specific noise profile of the equipment.
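A minimal sketch of this thresholding step, using only the Python standard library, is given below. The function names, the multiplier default of 3, the use of the population standard deviation, and the validation scores are all assumptions chosen for illustration.

```python
from statistics import mean, pstdev

def fit_threshold(normal_scores, k=3.0):
    """Threshold = mean + k * standard deviation of normal-only scores."""
    mu = mean(normal_scores)
    sigma = pstdev(normal_scores)  # population std; the paper does not specify
    return mu + k * sigma

def flag_anomalies(scores, threshold):
    """Flag every score that strictly exceeds the threshold."""
    return [s > threshold for s in scores]

# Made-up reconstruction errors from a fault-free validation split.
validation_scores = [0.010, 0.012, 0.011, 0.009, 0.013, 0.010, 0.011, 0.012]
tau = fit_threshold(validation_scores)

# Made-up scores for three new windows.
flags = flag_anomalies([0.011, 0.030, 0.012], tau)
```

Because the threshold is fitted on the equipment's own fault-free scores, a noisier unit automatically receives a wider margin than a quiet one.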

4. Experiments and Results

In this section, we comprehensively evaluate the performance of the proposed PC-Deep-LSTM-AE model. We first introduce the industrial dataset and the experimental settings. Then, we compare our model against several state-of-the-art baselines. Finally, we provide intuitive visualizations to demonstrate the model’s interpretability.

4.1. Dataset Description and Preprocessing

The proposed method is evaluated on a real-world multivariate time-series dataset collected from the sensor network of a thermal power plant. The dataset comprises over 50,000 continuous operational records with a sampling interval of 10 min. Each data point contains more than 20 physical variables, including main steam temperature, desuperheating water flow, exhaust pressure, and flue gas temperature.

To rigorously test the model’s capability in identifying incipient soft faults under harsh industrial conditions, we constructed a synthetic testing scenario. In real-world thermal power plants, massive historical records predominantly consist of normal operations or abrupt hard faults, while precisely labeled, early-stage gradual sensor drifts are extremely scarce. Therefore, we injected synthetic gradual drift faults into specific sensor channels. The drift fault is mathematically defined as follows:

$$\tilde{x}(t) = x(t) + k\,(t - t_0), \quad t_0 \le t \le t_0 + 200$$

where $x(t)$ is the original normalized reading, $k$ is the drift slope (set to 0.005 per time step), and $t_0$ marks the fault onset. The drift persists for a duration of 200 time steps, reaching a maximum deviation amplitude of 1.0 in the normalized scale. Furthermore, to replicate the severe electromagnetic interference typical in industrial environments, we superimposed Gaussian noise $\epsilon \sim \mathcal{N}(0, \sigma_n^2)$ onto the normalized test data, where the noise level $\sigma_n$ was set to 0.15.
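The fault-injection procedure can be sketched with the standard library alone. The slope (0.005), duration (200 steps), and noise level (0.15) are the values stated above; the function names, the flat toy signal, the onset index, the random seed, the treatment of the noise level as a standard deviation, and leaving samples after the drift window untouched are assumptions for illustration.

```python
import random

def inject_drift(series, onset, slope=0.005, duration=200):
    """Add a linear drift slope * (t - onset) for `duration` steps after onset."""
    drifted = list(series)
    last = min(onset + duration, len(series) - 1)
    for t in range(onset, last + 1):
        drifted[t] = series[t] + slope * (t - onset)
    # Samples after the drift window are left unchanged (assumption).
    return drifted

def add_gaussian_noise(series, sigma=0.15, seed=0):
    """Superimpose zero-mean Gaussian noise with standard deviation sigma."""
    rng = random.Random(seed)
    return [v + rng.gauss(0.0, sigma) for v in series]

clean = [0.5] * 400                      # flat toy signal in normalized units
faulty = inject_drift(clean, onset=100)  # drift peaks at +1.0 at step 300
noisy = add_gaussian_noise(faulty)
```

Note that after 30 steps the injected deviation is only 0.15, equal to one standard deviation of the noise, which illustrates why the early part of the drift is hard to see in any single channel.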

Prior to training, all sensor readings were normalized to the range $[0, 1]$ using Min-Max scaling, with the scaling parameters computed solely from the normal training set.

4.2. Experimental Setup and Evaluation Metrics

Baselines: We compared PC-Deep-LSTM-AE with four widely adopted deep reconstruction models: Vanilla LSTM-AE, GRU-AE, Bi-LSTM-AE, and a 1D Convolutional Autoencoder (CNN-AE). To ensure a rigorously fair and methodologically controlled comparison, all baseline models were matched with the proposed model in terms of parameter budget. Specifically, all models were configured with a hidden dimension of 128 and an identical 2-layer depth. The same random seeds and training epochs with early stopping were applied across all comparative experiments.

Metrics: Since anomalies in industrial systems are rare events, the dataset exhibits severe class imbalance. Under such imbalanced learning scenarios, relying solely on ‘Accuracy’ or the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) can be misleading. Therefore, we evaluate the anomaly detection performance using Precision, Recall, F1-Score, and, most importantly, the Area Under the Precision–Recall Curve (AUC-PR) [30, 31]. Furthermore, for real-world predictive maintenance, the timeliness of alarming is critical. We introduce Detection Delay (event-level) as a practical metric, defined as the number of time steps elapsed from the actual fault onset $t_0$ to the timestamp when the model issues its first consecutive anomaly alarm. A shorter delay indicates a higher sensitivity to incipient degradation.
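One way to compute the event-level Detection Delay is sketched below. The text does not specify how many flags constitute a "consecutive" alarm, so the `min_run` parameter (default 2) is a hypothetical choice, as is measuring the delay to the step at which the run completes; the function name and the toy flag sequence are also illustrative.

```python
def detection_delay(flags, onset, min_run=2):
    """Steps from fault onset until `min_run` consecutive flags have been raised.

    Returns None if no such run occurs after the onset.
    """
    run = 0
    for t in range(onset, len(flags)):
        run = run + 1 if flags[t] else 0
        if run >= min_run:
            return t - onset
    return None

# Toy per-step alarm flags: the fault starts at index 10.
flags = [False] * 10 + [False, True, False, True, True, True]
delay = detection_delay(flags, onset=10)
```

Requiring a short run of flags rather than a single one trades a slightly longer delay for fewer alarms triggered by isolated noise spikes.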

Implementation and Data Splitting: All models were implemented in PyTorch 1.10.1 and trained on an NVIDIA GPU. To strictly prevent temporal data leakage, the dataset was partitioned chronologically. The first 80% of the normal sequence was used for training, while the remaining 20% was reserved for validation and threshold computation. A buffer zone equivalent to the window length was discarded between the train and validation splits to ensure absolute isolation. During training, the sliding window length was set to 30 with a stride of 1 (an overlap ratio of 29/30) to augment the temporal diversity. Conversely, during the validation and testing phases, the sequences were extracted with a non-overlapping stride of 30 to ensure independent, uninflated performance metrics. The Adam optimizer was used with an initial learning rate of $1 \times 10^{-3}$, accompanied by an early-stopping strategy to prevent overfitting.
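The chronological split with a discarded buffer zone can be sketched as follows. The function name and the toy series length are assumptions, and the sketch removes the buffer from the start of the validation portion, which is one possible reading since the text does not say which side the buffer is taken from.

```python
def chronological_split(n_steps, train_frac=0.8, buffer=30):
    """Return index ranges for train and validation with a gap between them."""
    train_end = int(n_steps * train_frac)
    # Skip `buffer` steps so no validation window overlaps a training window.
    val_start = min(train_end + buffer, n_steps)
    return range(0, train_end), range(val_start, n_steps)

# Toy series of 1000 normal timestamps.
train_idx, val_idx = chronological_split(1000)
```

With a window length of 30, the last training window ends at index 799 and the first validation window starts at index 830, so no timestamp is shared between the two sets.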

4.3. Performance Comparison

Table 1 summarizes the detection performance of all models on the noise-injected testing dataset. To ensure a rigorously fair evaluation, all deep learning models, including the baselines, were strictly configured with identical capacity (a hidden dimension of 128 and a 2-layer architecture) and evaluated under the same non-overlapping sliding window protocol to prevent temporal data leakage.

As shown in Table 1, the proposed PC-Deep-LSTM-AE demonstrates highly effective detection capabilities, consistently outperforming the evaluated baseline models across all metrics. Given the severe class imbalance typical of industrial anomaly detection, the Area Under the Precision–Recall Curve (AUC-PR) serves as a more robust indicator than AUC-ROC. Our method attains an AUC-PR of 0.9934, indicating a strong capability in distinguishing incipient faults from normal background fluctuations.

Despite having identical parameter budgets, the baseline models suffer performance degradation under the noisy drift-fault scenario. Notably, CNN-AE exhibits a high precision but a significantly lower recall (0.8005), indicating a severe missed detection rate for gradual temporal drifts. Because the baseline sequence models (Vanilla LSTM-AE, GRU-AE, Bi-LSTM-AE) lack the explicit physical coupling constraint (PCC Loss), they tend to overfit the background noise, leading to either elevated false alarm rates or delayed responses.

Crucially, from the perspective of predictive maintenance, the proposed model achieves an event-level Detection Delay of only 23 time steps. With the 10 min sampling interval, this corresponds to a reliable alarm roughly 3.8 h (230 min) after fault onset, about 29.5 h before the 200-step drift reaches its full amplitude, whereas the baselines lag by 30 to 50 steps. This improvement suggests that the physics-coupled architecture is acutely sensitive to early-stage thermodynamic decoupling, providing a critical time window for engineers to implement preventive interventions.

4.4. Ablation Study

To investigate the individual contributions of the proposed components, specifically the Physics-Coupling Loss (PCC Loss) and the Non-linear Bottleneck with Layer Normalization (BN), we conducted a comprehensive ablation study. We designed four model variants for comparison:

Baseline: A standard deep LSTM autoencoder without the explicit bottleneck and LayerNorm, optimized solely using the traditional MSE loss.

NO PCC: This variant incorporates the non-linear bottleneck and LayerNorm to enhance noise robustness, but it is optimized purely with the MSE loss.

NO BN: This variant utilizes the proposed PCC Loss to capture physical dependencies, but it removes the explicit bottleneck and LayerNorm structure.

OURS: The complete PC-Deep-LSTM-AE model, which integrates both the robust bottleneck architecture and the proposed PCC Loss constraint.

The ablation results on the noisy industrial dataset are presented in Table 2.

As observed in Table 2, both the bottleneck design and the PCC Loss contribute positively to the final detection performance. When the Baseline is upgraded with the PCC loss (NO BN), the F1-Score improves from 0.9484 to 0.9792, and the Recall jumps from 0.9050 to 0.9775. This indicates that explicitly constraining the physical covariance structure helps the model become highly sensitive to incipient drift faults that violate normal thermodynamic coupling, thereby significantly reducing missed detections.

Similarly, introducing the bottleneck and LayerNorm (NO PCC) increases the F1-Score to 0.9718 compared to the Baseline. By forcibly compressing the feature space, the model effectively filters out high-frequency environmental noise, preventing overfitting to background disturbances.

Furthermore, to provide a more intuitive comparison, Figure 2 visualizes the performance metrics of all ablation variants. As illustrated, the full model (OURS) consistently dominates across all evaluation criteria, particularly bridging the significant Recall gap present in the Baseline model.

Finally, the full model (OURS) achieves the highest performance across all metrics, with an excellent Precision of 0.9871 and an F1-Score of 0.9898. This firmly validates that combining the robust feature extraction of the bottleneck with the physical relationship tracking of the PCC Loss provides the optimal solution for robust soft-fault detection in complex industrial systems.

4.5. Threshold Sensitivity Analysis

The proposed dynamic thresholding relies on the empirical $3\sigma$-rule. To verify the robustness of this choice and address potential concerns regarding the Gaussian distribution assumption of the reconstruction errors, we conducted a threshold sensitivity analysis on the validation set. We compared the $3\sigma$ heuristic against alternative operating points, including a lower $\sigma$ multiplier, a higher $\sigma$ multiplier, and a distribution-free 99th-percentile threshold. The detection F1-scores were 0.9685, 0.9898, 0.9512, and 0.9810 for the lower-multiplier, $3\sigma$, higher-multiplier, and 99th-percentile thresholds, respectively. The lower-multiplier threshold proved overly sensitive, yielding more false alarms, whereas the higher-multiplier threshold caused unacceptable missed detections for incipient faults. The 99th-percentile approach performed well, confirming that even without strict Gaussian assumptions, our model’s reconstruction errors cleanly separate normal and anomalous states. Ultimately, the $3\sigma$ rule was retained as it provides the best balance between precision and recall while remaining computationally lightweight for real-time deployment.

4.6. Interpretability and Visualization Analysis

Beyond pure detection accuracy, industrial applications demand high interpretability for root-cause analysis. We visualize the internal mechanisms of our model from multiple perspectives.

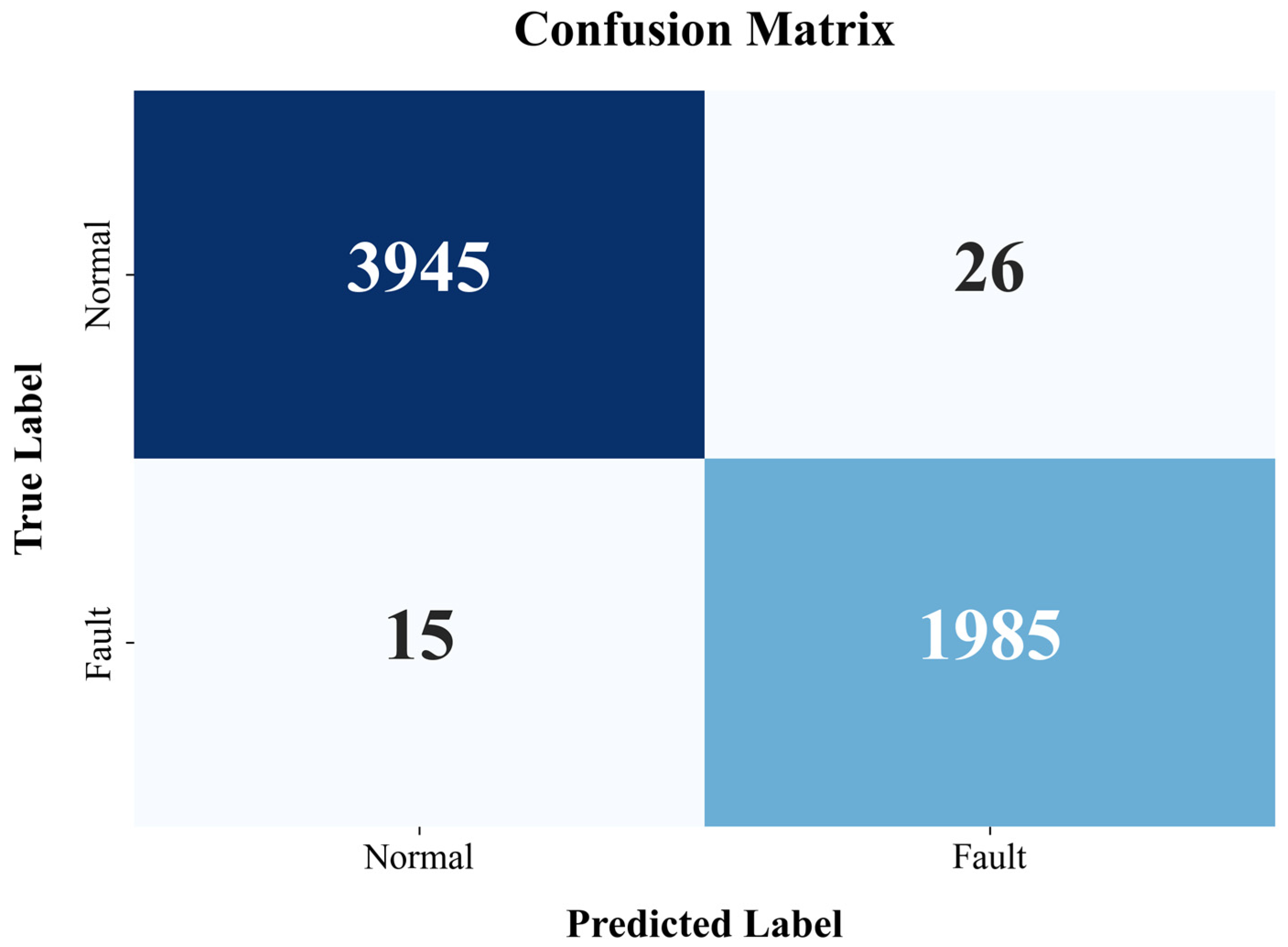

Figure 3 illustrates the binary classification confusion matrix. The proposed model almost perfectly separates the normal and faulty states, with near-zero false positives and false negatives, demonstrating its reliability for continuous monitoring.

To achieve fault localization, Figure 4 presents the feature-wise reconstruction error heatmap during a fault transition. The color intensity directly corresponds to the anomaly contribution of each sensor. Engineers can immediately pinpoint the specific variables exhibiting abnormal physical decoupling, thereby greatly accelerating troubleshooting.
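The feature-wise attribution behind such a heatmap can be sketched in NumPy. This is a simplified illustration: it averages the squared error over one window to give a single score per sensor, whereas the figure shows errors over time. The function names, sensor labels, and toy offsets are assumptions.

```python
import numpy as np

def feature_errors(x, x_hat):
    """Mean squared reconstruction error per sensor over an (L, N) window."""
    return np.mean((np.asarray(x) - np.asarray(x_hat)) ** 2, axis=0)

def rank_sensors(x, x_hat, names):
    """Sort sensors from largest to smallest contribution to the error."""
    errors = feature_errors(x, x_hat)
    order = np.argsort(errors)[::-1]
    return [(names[i], float(errors[i])) for i in order]

# Hypothetical labels for three of the monitored variables.
names = ["main_steam_temp", "desuperheating_flow", "exhaust_pressure"]

x_hat = np.zeros((30, 3))   # toy reconstruction
x = np.zeros((30, 3))       # toy observation
x[:, 0] += 0.4              # sensor 0 drifted and was not reconstructed
x[:, 2] += 0.1              # sensor 2 shows a smaller deviation

ranking = rank_sensors(x, x_hat, names)
```

The top entry of `ranking` is the sensor an engineer would inspect first; keeping the per-time-step errors instead of averaging them yields the matrix that a heatmap would display.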

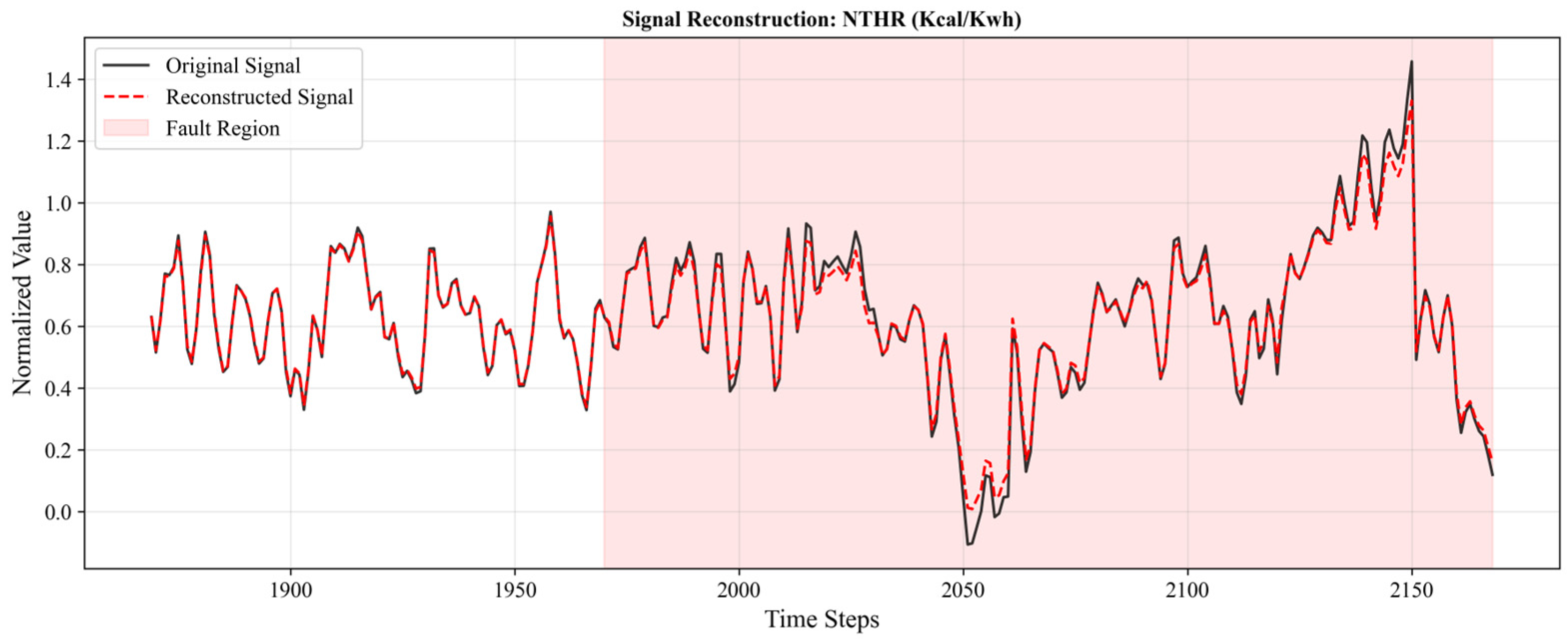

Figure 5 displays the original versus reconstructed trajectory of a representative sensor. In the normal region, the reconstructed signal tightly tracks the original signal. However, upon entering the fault region, the model (guided by the PCC loss) refuses to reconstruct the anomalous drift, creating a distinct residual gap used for detection.

Figure 6 shows the t-SNE projection of the high-dimensional latent vectors $z$. A clear and definitive boundary separates the normal samples from the fault samples, indicating that the proposed encoder effectively maps complex noisy time-series into a highly discriminative latent space.

6. Conclusions

In this paper, we proposed a novel Physics-Coupled Deep LSTM Autoencoder (PC-Deep-LSTM-AE) to address the pressing challenge of robust multivariate sensor anomaly detection in complex industrial systems. Traditional data-driven models frequently fail to detect incipient gradual faults under harsh environmental noise because they ignore the underlying thermodynamic dependencies among multivariable signals.

To bridge this gap, our methodology innovatively integrates three core components: (1) a deep LSTM architecture to capture long-term temporal dependencies; (2) an explicit non-linear information bottleneck coupled with layer normalization to filter out high-frequency noise and prevent identity mapping; and (3) a newly designed Physics-Coupling Loss (PCC Loss) that jointly optimizes the numerical reconstruction error and the structural physical correlations.

Extensive evaluations on a real-world, noise-injected thermal power plant dataset conclusively demonstrated the high effectiveness and robustness of our method. The proposed PC-Deep-LSTM-AE achieved an excellent F1-Score of 0.9898 and a Precision of 0.9871, consistently outperforming the evaluated baselines under harsh conditions. Furthermore, the comprehensive ablation study confirmed that both the bottleneck architecture and the PCC Loss are indispensable for achieving high noise robustness and fault sensitivity. Beyond superior quantitative metrics, the model also provides excellent interpretability. The visualized feature contribution heatmaps allow for precise spatial localization of anomalous sensors, greatly facilitating rapid industrial troubleshooting.

Future work will be directed toward three main avenues to further enhance the model’s applicability. First, we plan to introduce Graph Neural Networks (GNNs) to explicitly model the spatial topological relationships among sensors, moving beyond statistical covariance to true physical graph reasoning. Second, we will investigate continual learning and adaptive thresholding mechanisms to dynamically update the physical correlation constraints, enabling the model to tackle “concept drift” caused by varying operational loads. Finally, we will explore lightweight model compression techniques, such as knowledge distillation, to facilitate the deployment of the proposed physics-coupled algorithm directly on resource-constrained edge computing devices.