Rate of Penetration Prediction in Steeply Dipping Coal Seams Using Data-Driven Modeling and Feature Engineering

Abstract

1. Introduction

2. Data Processing and Feature Analysis

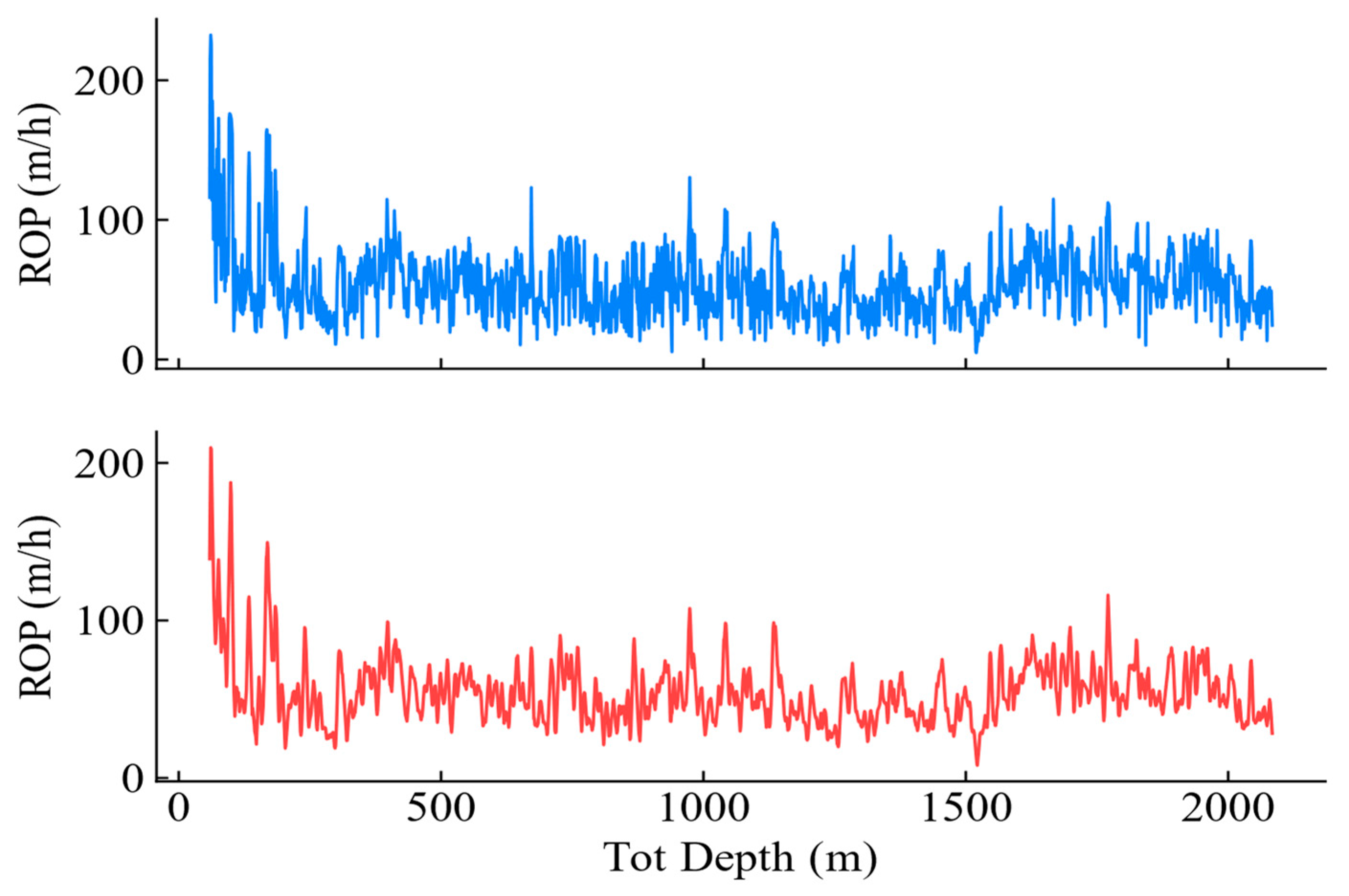

2.1. Data Composition

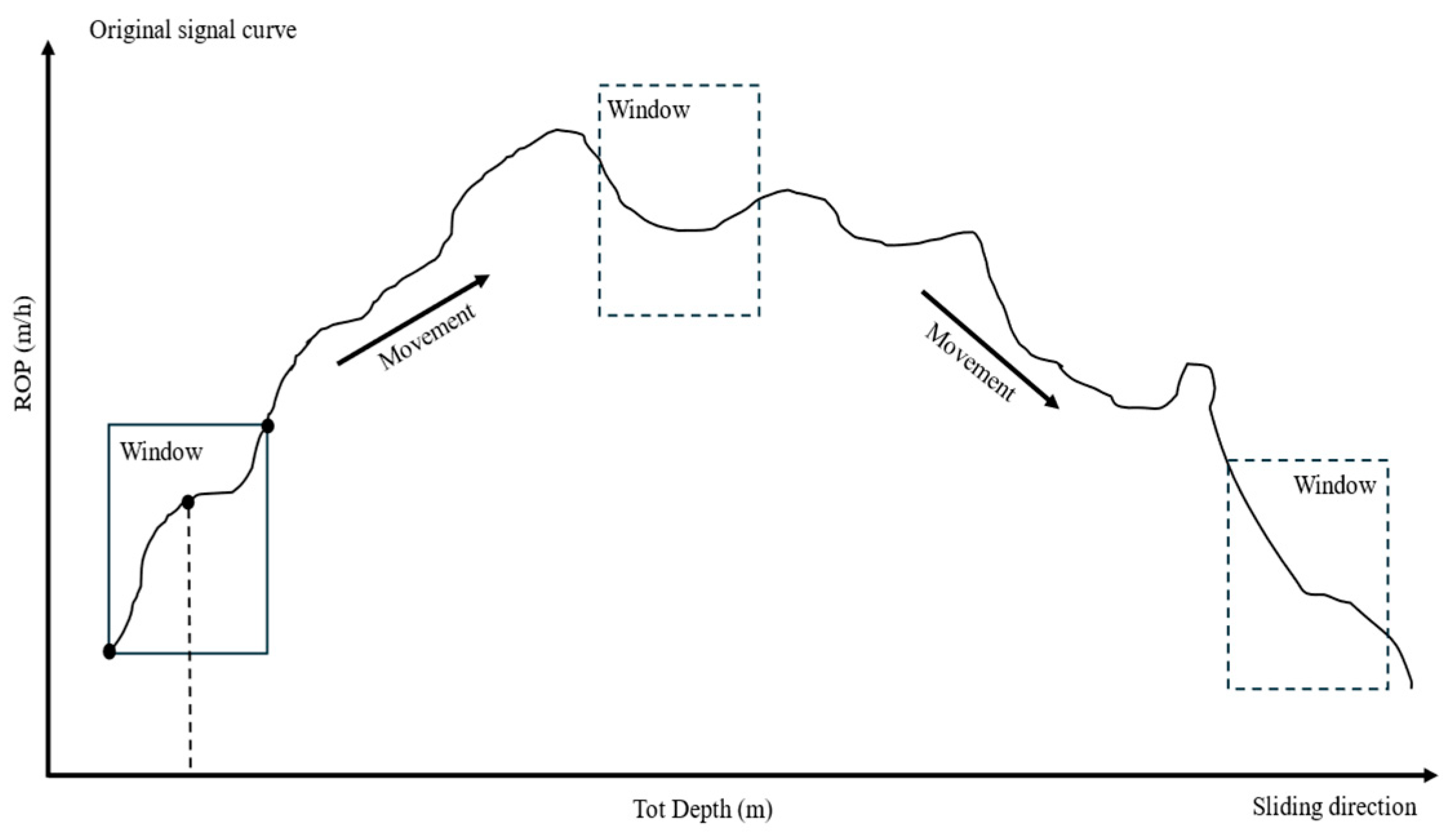

2.2. Data Processing

2.3. Feature Analysis

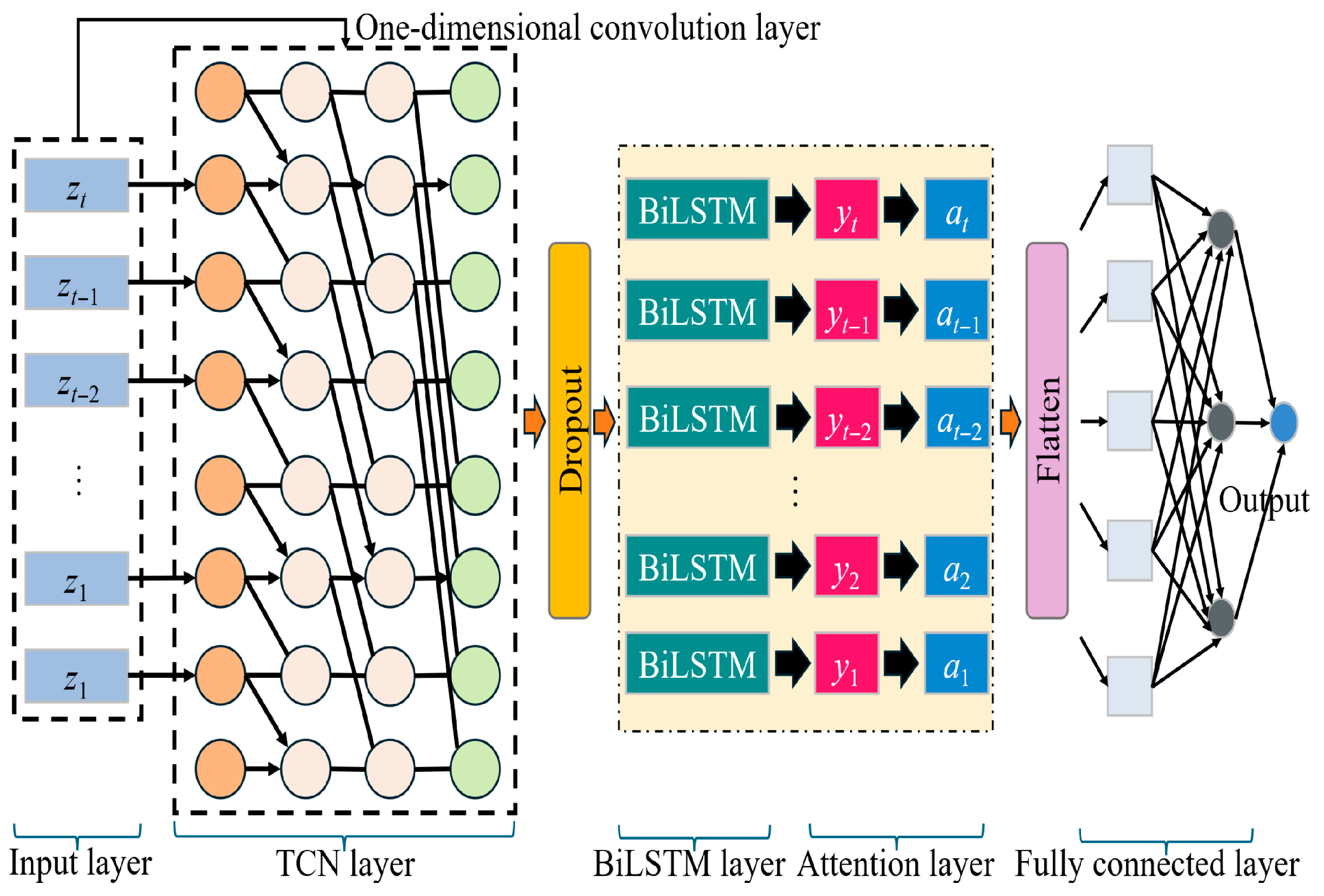

3. Data-Driven ROP Prediction

3.1. Overall Framework

3.2. TCN-BiLSTM-Attention Model Theory

3.3. Model Evaluation Metrics

4. ROP Prediction Results

4.1. Hyperparameter Optimization

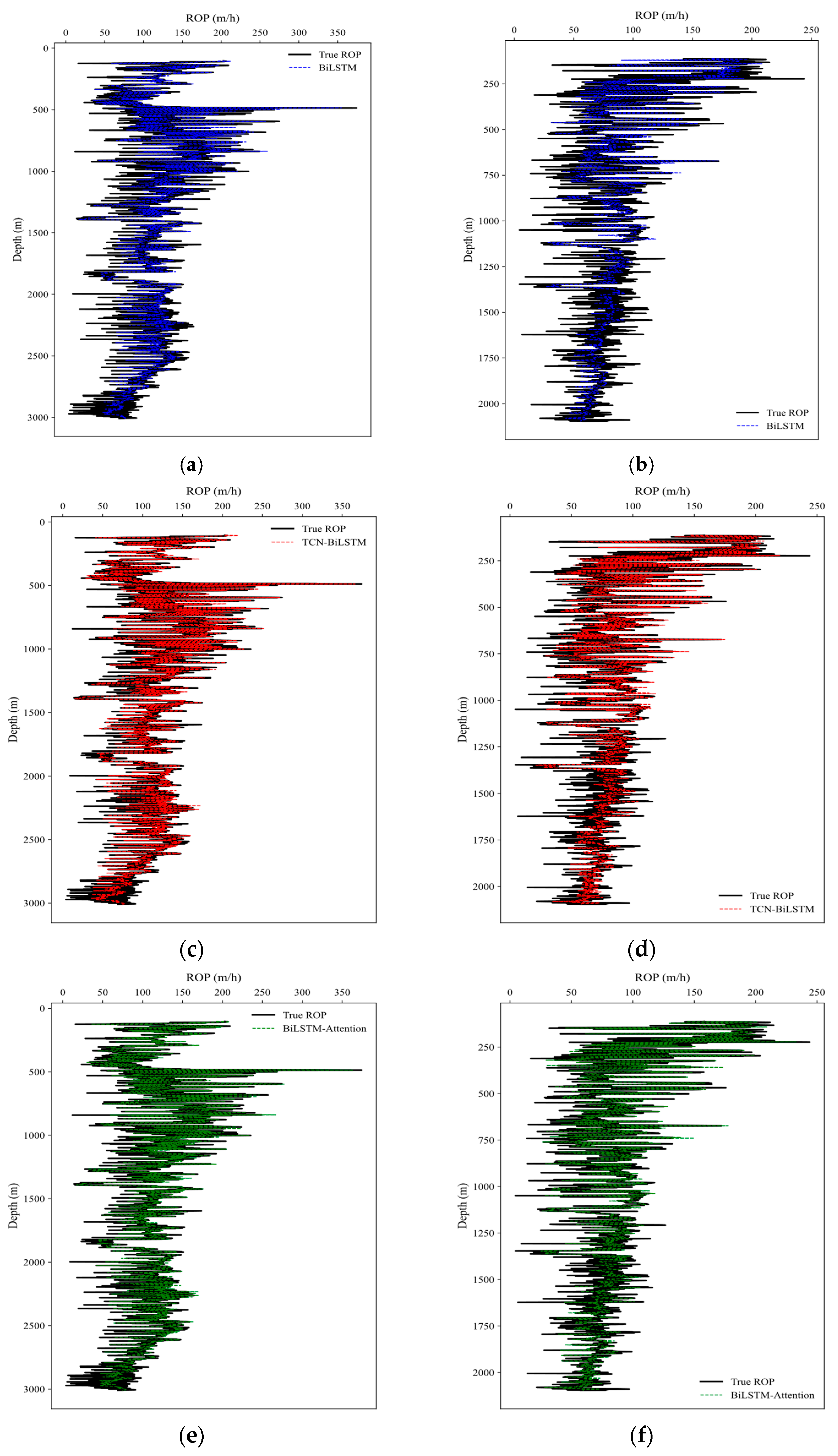

4.2. Model Comparison

5. Conclusions and Future Work

- (1) Model input features were screened through linear and nonlinear analysis using Pearson correlation, Spearman correlation, and mutual information. Savitzky–Golay (SG) window widths and polynomial orders were compared, and SG smoothing with a window width of 11 and a polynomial order of 3 was found to denoise the data effectively.

- (2) Based on engineering parameter features and geological features, a TCN-BiLSTM-Attention model was constructed under well depth constraints, integrating temporal and geological parameters. The model accounts for the combined effects of engineering parameters and geological features during drilling operations, providing an efficient technical solution for accurate ROP prediction.

- (3) The proposed model was compared with the BiLSTM, BiLSTM-Attention, and TCN-BiLSTM models. For the X1 and X2 well sections in Block L, Xinjiang, it achieved the lowest RMSE and MAE and the highest R² of the four models (R² of 0.9561 for X1 and 0.9314 for X2).

- (4) To understand the influence of the input variables on ROP, this study employs model interpretability methods. Based on the explanatory analysis provided by the SHAP method, the contribution of the feature variables is ranked in ascending order as follows: TVD, RPM, WOB, SPP, FLWpmps, BT, and TORQUE.
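Conclusion (1) reports SG smoothing with a window width of 11 and a polynomial order of 3. The following is a minimal sketch of that filter in plain Python, using the standard tabulated 11-point convolution weights for a quadratic/cubic fit. The function name `sg_smooth`, the edge handling (repeating the end values), and the synthetic trace are assumptions made for illustration and are not taken from the study.

```python
import random

# Standard 11-point Savitzky-Golay smoothing weights for a cubic (order-3) local fit.
SG_WEIGHTS_11_3 = [w / 429 for w in (-36, 9, 44, 69, 84, 89, 84, 69, 44, 9, -36)]


def sg_smooth(values, weights=SG_WEIGHTS_11_3):
    """Smooth a 1-D sequence by convolving it with the SG weights.

    Assumption: edges are padded by repeating the first and last samples.
    """
    half = len(weights) // 2
    padded = [values[0]] * half + list(values) + [values[-1]] * half
    return [
        sum(w * padded[i + k] for k, w in enumerate(weights))
        for i in range(len(values))
    ]


# Synthetic noisy trace (hypothetical data, not from the wells in the study).
rng = random.Random(0)
noisy = [20.0 + 0.1 * i + rng.gauss(0.0, 2.0) for i in range(60)]
smoothed = sg_smooth(noisy)
```

Away from the edges, an order-3 filter of this kind reproduces any cubic trend exactly, which is why it can suppress noise without flattening genuine local changes in the signal.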

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ROP | Rate of Penetration |
| SG | Savitzky–Golay |
| TCN | Temporal Convolutional Network |
| BiLSTM | Bidirectional Long Short-Term Memory Neural Network |
| ML | Machine Learning |
| SVM | Support Vector Machine |
| XGBoost | Extreme Gradient Boosting |
| LSTM | Long Short-Term Memory |
| GRU | Gated Recurrent Unit |
| BPNN | Backpropagation Neural Network |
| WOH | Weight On Hook |
| TVD | True Vertical Depth |
| WOB | Weight on Bit |
| RPM | Revolutions Per Minute |
| SPP | Standpipe Pressure |
| PT | Pump Time |
| HKH | Hook Height |
| TO | Flow Temperature |
| MWO | Mud Weight |
| FO | Fluid Out |
| BT | Bit Time |
| MSE | Mean Squared Error |
| CNN | Convolutional Neural Network |
| RMSE | Root Mean Squared Error |
| MAE | Mean Absolute Error |
| R² | Coefficient of Determination |
| DBO | Dung Beetle Optimization |
| PSO | Particle Swarm Optimization |
| SHAP | SHapley Additive exPlanations |


| Category | Input Parameters |
|---|---|
| Construction parameters | Weight On Hook (WOH), Total Depth, True Vertical Depth (TVD), TORQUE, Weight on Bit (WOB), Revolutions Per Minute (RPM), Standpipe Pressure (SPP), FLWpmps, Pump Time (PT), Hook Height (HKH) |
| Drilling fluid parameters | Flow Temperature (TO), Mud Weight (MWO), Fluid Out (FO) |
| Drill bit parameters | Drill model, drill diameter, drill type |
| Other parameters | Stratigraphic information, Bit Time (BT), Construction unit |

| Construction Contractor_1 | Construction Contractor_2 | Construction Contractor_3 | Construction Contractor_4 | Construction Contractor_5 | … | Drill Bit Type_1 | Drill Bit Type_2 | Drill Bit Type_3 | Drill Bit Type_4 | … | Stratigraphic Information_1 | Stratigraphic Information_2 | Stratigraphic Information_3 | Stratigraphic Information_4 | Stratigraphic Information_5 | Stratigraphic Information_6 | … |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 0 | 1 | 0 | 0 | … | 0 | 0 | 0 | 1 | … | 0 | 0 | 0 | 0 | 1 | 0 | … |
| 0 | 0 | 0 | 0 | 1 | … | 0 | 0 | 1 | 0 | … | 0 | 1 | 0 | 0 | 0 | 0 | … |
| 0 | 1 | 0 | 0 | 0 | … | 0 | 1 | 0 | 0 | … | 0 | 0 | 0 | 1 | 0 | 0 | … |
| 1 | 0 | 0 | 0 | 0 | … | 1 | 0 | 0 | 0 | … | 1 | 0 | 0 | 0 | 0 | 0 | … |
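The table above shows categorical fields (construction contractor, drill bit type, stratigraphic information) expanded into 0/1 indicator columns with `_1`, `_2`, ... suffixes. A minimal sketch of that one-hot encoding follows. The function name `one_hot_encode`, the field name `bit_type`, and the category labels in the sample records are hypothetical; the source does not list the actual category values or state how the columns were ordered.

```python
def one_hot_encode(records, column):
    """Replace one categorical field with 0/1 indicator fields.

    Categories are numbered in sorted order (an assumption), giving
    column names such as "<column>_1", "<column>_2", ...
    """
    categories = sorted({record[column] for record in records})
    encoded = []
    for record in records:
        row = {key: value for key, value in record.items() if key != column}
        for index, category in enumerate(categories, start=1):
            row[f"{column}_{index}"] = 1 if record[column] == category else 0
        encoded.append(row)
    return encoded, categories


# Hypothetical sample records; labels are placeholders, not values from the study.
samples = [
    {"depth_m": 1200.0, "bit_type": "type_C"},
    {"depth_m": 1210.0, "bit_type": "type_A"},
    {"depth_m": 1220.0, "bit_type": "type_B"},
]
encoded_rows, bit_categories = one_hot_encode(samples, "bit_type")
```

Each encoded row contains exactly one `1` among the indicator columns for a given field, which matches the pattern in the table rows.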

| Serial Number | Characteristic Parameter | Pearson | Spearman | Mutual Information |
|---|---|---|---|---|
| 1 | TVD | √ | √ | √ |
| 2 | WOB | √ | √ | √ |
| 3 | RPM | √ | √ | √ |
| 4 | SPP | × | × | |
| 5 | FLWpmps | × | × | √ |
| 6 | MWO | × | × | × |
| 7 | TO | × | × | × |
| 8 | WOH | × | × | × |
| 9 | PT | × | × | × |
| 10 | BT | √ | √ | √ |
| 11 | TORQUE | √ | √ | √ |
| 12 | HKH | × | × | × |
| 13 | FO | × | × | × |
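The feature selection table combines a linear measure (Pearson) with a rank-based measure (Spearman) and mutual information. The sketch below computes the first two in plain Python to show why they can disagree on a nonlinear but monotonic relationship. The function names and the synthetic data are assumptions for illustration; mutual information is omitted because estimating it requires binning or neighbor-based choices that the source does not specify here.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Synthetic monotonic but nonlinear relationship (hypothetical data).
feature = [float(i) for i in range(1, 11)]
response = [math.exp(0.8 * v) for v in feature]
linear_score = pearson(feature, response)
rank_score = spearman(feature, response)
```

On this synthetic example the rank-based score is 1 because the ordering is perfectly preserved, while the linear score is lower. Using several measures side by side, as in the table, reduces the chance of discarding a feature whose relationship to ROP is strong but not linear.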

| Parameter | Unit | Mean | Standard Deviation | Min | Max |
|---|---|---|---|---|---|
| TVD | m | 1401.55 | 833.91 | 18.99 | 2850.69 |
| WOB | t | 6.63 | 2.16 | 0 | 16.30 |
| RPM | r/min | 51.24 | 19.38 | 0 | 132 |
| SPP | MPa | 15.92 | 5.15 | 1.99 | 25.41 |
| FLWpmps | L/h | 2742.81 | 3851.72 | 2988.01 | 4298.97 |
| BT | h | 14.47 | 13.48 | 0 | 86.74 |
| TORQUE | kN·m | 14.98 | 6.93 | 0 | 35.16 |

| Parameter | Maximum Value | Minimum Value |
|---|---|---|
| TCN layers | 5 | 1 |
| TCN convolution kernel size | 5 | 2 |
| LSTM hidden layer | 512 | 32 |
| Number of LSTM layers | 6 | 2 |
| Initial learning rate | 0.1 | 0.0001 |
| Dropout | 0.5 | 0.1 |
| Sliding window size | 30 | 5 |
| Batch size | 1024 | 256 |
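The search ranges above can be written down as a bounded search space. The sketch below shows only how candidate configurations might be drawn within those bounds, as a population-based optimizer such as DBO or PSO (both listed among the abbreviations) would need for its initial population; it does not implement either optimizer. The key names, the log-uniform sampling of the learning rate, and the helper names are assumptions, since this section does not describe how the search was run.

```python
import math
import random

# Bounds copied from the search-range table: (minimum, maximum, kind).
SEARCH_SPACE = {
    "tcn_layers": (1, 5, "int"),
    "tcn_kernel_size": (2, 5, "int"),
    "lstm_hidden_size": (32, 512, "int"),
    "lstm_layers": (2, 6, "int"),
    "learning_rate": (0.0001, 0.1, "log"),  # log-uniform is an assumption
    "dropout": (0.1, 0.5, "float"),
    "window_size": (5, 30, "int"),
    "batch_size": (256, 1024, "int"),
}


def sample_candidate(rng):
    """Draw one hyperparameter configuration inside the bounds."""
    candidate = {}
    for name, (low, high, kind) in SEARCH_SPACE.items():
        if kind == "int":
            candidate[name] = rng.randint(low, high)
        elif kind == "log":
            value = math.exp(rng.uniform(math.log(low), math.log(high)))
            candidate[name] = min(max(value, low), high)  # guard against rounding
        else:
            candidate[name] = rng.uniform(low, high)
    return candidate


def in_bounds(candidate):
    """Check that every value lies within its search range."""
    return all(
        SEARCH_SPACE[name][0] <= value <= SEARCH_SPACE[name][1]
        for name, value in candidate.items()
    )


rng = random.Random(0)
population = [sample_candidate(rng) for _ in range(5)]
```

Sampling the learning rate on a logarithmic scale is a common choice when a range spans several orders of magnitude, as it does here (0.0001 to 0.1), but a uniform draw would also respect the stated bounds.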

| Parameter | Optimum Value | Parameter | Optimum Value |
|---|---|---|---|
| TCN layers | 2 | Initial learning rate | 0.001 |
| TCN convolution kernel size | 4 | Dropout | 0.3 |
| LSTM hidden layer | 512 | Sliding window size | 8 |
| Number of LSTM layers | 4 | Batch size | 256 |
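The optimum sliding window size of 8 and batch size of 256 determine how the depth-ordered records are presented to a sequence model. The sketch below builds fixed-length input windows and groups them into batches. It assumes that each window of 8 consecutive records is used to predict the ROP of the record immediately after it; the source does not state the exact target alignment, and the function names and toy data are hypothetical.

```python
def make_windows(features, target, window_size=8):
    """Pair each run of `window_size` consecutive rows with the next target value.

    Assumption: the target is the value that follows the window (one step ahead).
    """
    inputs, outputs = [], []
    for end in range(window_size, len(features)):
        inputs.append(features[end - window_size:end])
        outputs.append(target[end])
    return inputs, outputs


def iterate_batches(inputs, outputs, batch_size=256):
    """Yield consecutive (inputs, outputs) batches; the last one may be smaller."""
    for start in range(0, len(inputs), batch_size):
        yield inputs[start:start + batch_size], outputs[start:start + batch_size]


# Toy data: 20 records with 2 features each (hypothetical values).
toy_features = [[float(i), 2.0 * i] for i in range(20)]
toy_rop = [10.0 + i for i in range(20)]
windows, labels = make_windows(toy_features, toy_rop, window_size=8)
first_batch = next(iterate_batches(windows, labels, batch_size=256))
```

With 20 records and a window of 8, this produces 12 samples, each of shape 8 by 2, which is the kind of three-dimensional input (samples, time steps, features) that convolutional and recurrent layers typically expect.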

| Model | Well Section | RMSE | MAE | R² |
|---|---|---|---|---|
| BiLSTM | X1 | 16.9416 | 12.1842 | 0.8301 |
| BiLSTM-Attention | X1 | 13.4011 | 9.5337 | 0.8937 |
| TCN-BiLSTM | X1 | 13.6465 | 9.7094 | 0.8898 |
| TCN-BiLSTM-Attention | X1 | 8.6151 | 6.1536 | 0.9561 |
| BiLSTM | X2 | 16.2312 | 11.6502 | 0.7701 |
| BiLSTM-Attention | X2 | 12.4140 | 8.8078 | 0.8655 |
| TCN-BiLSTM | X2 | 12.0388 | 8.6520 | 0.8735 |
| TCN-BiLSTM-Attention | X2 | 8.8668 | 6.4939 | 0.9314 |
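The comparison above is reported in terms of RMSE, MAE, and the coefficient of determination. For reference, the standard definitions of these three metrics can be sketched in plain Python as follows. The function names and the small example arrays are illustrative assumptions and are unrelated to the well data.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Illustrative values only (hypothetical measurements and predictions).
measured = [1.0, 2.0, 3.0, 4.0]
predicted = [1.0, 2.0, 3.0, 5.0]
scores = (rmse(measured, predicted), mae(measured, predicted), r2_score(measured, predicted))
```

RMSE and MAE are expressed in the units of ROP and are better when lower, whereas the coefficient of determination is unitless and better when closer to 1, which is why the table reads as an improvement when the first two fall and the third rises.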

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Xue, J.; Mao, L.; Xing, X.; Sun, Y.; Mo, R.; Pang, Z. Rate of Penetration Prediction in Steeply Dipping Coal Seams Using Data-Driven Modeling and Feature Engineering. Processes 2026, 14, 1174. https://doi.org/10.3390/pr14071174
