1. Introduction

Foundational work in industrial systems modeling established that production, warehousing, and supply chain operations are dynamic, interdependent, and event-driven, motivating formal modeling and intelligent control. Agent-based systems combined with Colored Petri Nets (CPNs) were shown to capture this complexity effectively, improving coordination in warehouse order picking and resource allocation under demand uncertainty [1]. These approaches were extended to multi-echelon supply chains, where hierarchical CPNs enabled the analysis of inventory propagation, order variability, and disruption effects across tiers [2]. Empirical studies further demonstrated that inventory inaccuracy significantly degrades service levels and amplifies upstream instability, highlighting the need for system-wide coordination [3]. Reinforcement learning integrated with timed CPNs enabled adaptive manufacturing scheduling under stochastic disturbances, reinforcing the view of supply chains as networked decision systems with cascading effects [4,5].

Building on this trajectory, modern supply chains are increasingly modeled as large-scale networks of suppliers, manufacturers, distributors, and retailers, where efficiency, resilience, and sustainability are critical. AI-based process modeling has therefore become central to supply chain optimization. In this context, Graph Neural Networks (GNNs) are particularly suitable, as supply chains can be naturally represented as graphs with entities as nodes and material, informational, or financial dependencies as edges [6]. Empirical evidence shows that GNN-based models outperform conventional statistical and deep learning baselines on tasks such as forecasting and classification, often achieving substantial improvements by learning non-linear structural dependencies and propagation effects [6].

Attention-based graph models further enhance supply chain learning. Graph Attention Networks (GATs) assign non-uniform weights to neighboring nodes during aggregation, enabling models to emphasize critical supplier–customer relationships while suppressing less relevant interactions [7]. Beyond predictive gains, attention mechanisms improve interpretability by revealing influential dependencies, and recent GAT-based resilience models demonstrate strong disruption-classification performance while exposing structural vulnerabilities through learned attention patterns [8].

Despite these advances, real-world deployment is constrained by data decentralization and confidentiality. Supply chain data are distributed across organizations and are rarely shareable due to commercial, contractual, and regulatory restrictions, rendering centralized GNN training impractical in multi-enterprise settings [9]. Federated learning (FL) addresses these constraints by enabling collaborative model training without exchanging raw data. The foundational Federated Averaging approach demonstrated that high-quality neural models can be trained across decentralized, non-IID data while retaining data locally [10]. In industrial ecosystems, FL enables data-limited manufacturers and logistics partners to benefit from collective learning under confidentiality constraints [11].

A further driver shaping supply chain analytics is the need to integrate sustainability objectives. Supply chains contribute significantly to greenhouse gas emissions and resource use, motivating sustainability-aware AI that incorporates metrics such as carbon emissions or energy intensity directly into learning and decision-making. AI-driven logistics and operational optimization have been associated with measurable environmental benefits, including substantial CO₂ reductions [12]. In adjacent domains, federated learning combined with control and optimization (such as reinforcement learning in energy systems) has achieved simultaneous cost and emissions reductions through decentralized coordination [13]. Nonetheless, most supply chain ML systems remain predominantly single-objective, and explicit sustainability integration during training is still uncommon, particularly under decentralization constraints.

Consequently, important gaps remain. Centralized GNNs struggle with data silos and governance, while conventional FL is typically designed for tabular data and does not naturally accommodate graph-structured dependencies spanning organizational boundaries. Only recently has federated graph learning been applied to supply chain visibility, risk modeling, and demand–inventory prediction, including early attempts to integrate sustainability considerations [14,15]. These studies demonstrate feasibility but also underscore the absence of a unified framework jointly addressing decentralization, graph-based interdependence modeling, and sustainability-aware optimization.

Despite recent advances in federated graph learning, existing approaches remain insufficient for sustainability-critical supply chain modeling for three primary reasons. First, most federated GNN frameworks optimize predictive objectives without incorporating an explicit and controllable sustainability bias within the message-passing mechanism. Second, they provide limited governance-oriented interpretability, offering no structured means to regulate the trade-off between predictive accuracy and environmental alignment. Third, sustainability signals are typically treated as auxiliary features rather than as structural modifiers of relational influence.

To address these limitations, we propose a federated sustainability-aware graph attention framework in which transport-related environmental signals directly modulate attention weights during aggregation. A tunable parameter λ enables explicit control over the strength of sustainability penalization, thereby introducing a governance-relevant trade-off between predictive performance and environmental alignment. This transforms sustainability from a passive feature into an active structural constraint within decentralized graph learning.

The remainder of the article is organized as follows. Section 2 reviews related work on GNNs, federated learning, sustainability-aware AI, and federated graph learning. Section 3 presents the methodology and system architecture. Section 4 reports experimental results. Section 5 discusses implications and limitations, and Section 6 concludes with directions for future research.

2. Related Work

To contextualize our proposed framework, we survey the relevant literature in four key areas. First, we discuss how Graph Neural Networks have been applied in supply chain modeling and optimization, highlighting their benefits over traditional methods. Next, we review federated learning approaches in industrial domains, focusing on how privacy-preserving collaboration has been enabled in manufacturing and supply chain scenarios. Third, we examine research on sustainability-aware AI methods that integrate environmental objectives into model training or decision-making, including multi-objective optimization techniques. Finally, we cover the relatively few works that have attempted to combine GNNs with federated learning, noting their achievements and limitations.

2.1. Graph Neural Networks in Supply Chain Management

Supply chains are inherently graph-structured systems, with nodes representing firms or facilities and edges encoding material, contractual, transportation, or information dependencies. This structure makes Graph Neural Networks (GNNs) particularly suitable, as they learn over non-Euclidean data by propagating information across network topology. Unlike independent-sample models, GNNs capture higher-order interdependencies and cascading effects, enabling them to model how local disruptions propagate across multiple supply chain tiers through message passing [6].

Empirical evidence consistently shows that GNNs outperform classical time-series and conventional machine learning approaches in supply chain tasks such as demand forecasting, inventory control, risk assessment, anomaly detection, and network reconstruction. Wasi et al. report performance gains typically in the range of 10–30%, attributable to GNNs’ ability to exploit shared structural context (e.g., common upstream suppliers) and enable cross-entity generalization [6]. Industrial studies further confirm these advantages, particularly under disruption scenarios where structural dependencies dominate system behavior, as demonstrated in automotive supply networks by Gupta et al. [16].

Beyond accuracy, GNNs provide structural interpretability, which is increasingly critical for decision support, risk governance, and regulatory compliance. Graph Attention Networks (GATs) enhance this capability by learning adaptive attention weights over neighbors, allowing models to emphasize influential entities and relationships. Attention-based graph models have been shown to improve both predictive performance and interpretability in large-scale supply networks, disruption prediction, and manufacturing resilience applications [17,18,19].

GNNs are also effective for uncovering latent or incomplete supply chain structure. Prior work formulates supply chain visibility as a link-prediction problem, showing that GNNs can infer hidden supplier relationships and indirect dependencies absent from enterprise records [20]. Extensions that integrate graph embeddings with heterogeneous data sources, such as textual risk signals, further improve systemic risk identification in ICT and critical infrastructure supply chains [21].

Despite these advances, most GNN-based supply chain studies assume access to a centralized and fully observable supply chain graph. In practice, this assumption is often invalid due to confidentiality, data-protection regulation (e.g., GDPR), contractual restrictions, and competition law, which collectively limit cross-enterprise data sharing. Additionally, existing GNN applications predominantly optimize predictive or operational performance, while sustainability objectives—such as emissions intensity or energy use—are typically treated as ex-post evaluation criteria rather than embedded learning targets.

These limitations motivate decentralized graph learning approaches that operate under partial visibility and organizational boundaries, while supporting multi-objective optimization aligned with emerging sustainability and regulatory requirements. This gap is addressed in subsequent sections through the integration of federated learning, graph attention mechanisms, and sustainability-aware modeling objectives.

2.2. Federated Learning in Industrial and Supply Chain Settings

Federated learning (FL), introduced by McMahan et al. through Federated Averaging, enables the effective training of deep neural networks across decentralized and non-IID data without sharing raw data [10]. Subsequent surveys identify FL as particularly suitable for cross-silo industrial environments characterized by strong heterogeneity in scale, data quality, and processes [22]. In Industrial IoT settings, hierarchical and two-stage aggregation strategies improve convergence stability and reduce communication overhead, supporting factory-scale deployment [23].

Within Industry 4.0 and Industry 5.0 frameworks, FL is increasingly regarded as a core enabler of privacy-preserving collaborative intelligence. Surveys in smart manufacturing and product lifecycle management demonstrate its applicability to quality control, predictive maintenance, and process optimization while maintaining data locality [24]. Empirical studies further show that FL enables data-limited manufacturers to achieve performance comparable to centralized learning without exposing proprietary data, as demonstrated in additive manufacturing condition monitoring [25]. These properties align with emerging trustworthy-AI principles emphasizing privacy, auditability, and human-centric governance [26].

In supply chain management, FL applications remain limited but are expanding. Nguyen et al. propose a federated framework for delivery-delay prediction across textile suppliers, demonstrating feasible privacy-preserving collaboration and improved generalization relative to single-organization baselines [27]. The same study shows that federated models can rival or exceed centralized performance by mitigating overfitting across heterogeneous participants [27]. Related work extends FL to cross-border logistics, enabling early-warning systems for disruption risk while respecting jurisdiction-specific data-sovereignty constraints [28]. Comparable federated demand-forecasting architectures in retail and agri-food supply chains rely on encrypted model updates and align with emerging sectoral data-governance frameworks [29,30].

Despite its promise, cross-silo FL introduces substantial system and governance challenges, including coordination across autonomous organizations, heterogeneous compute resources and data schemas, and exposure to inference attacks. Secure aggregation and cryptographic mechanisms are therefore required in adversarial or semi-honest settings [22]. Tang et al. address these challenges by integrating FL with Graph Neural Networks for privacy-preserving supply chain data sharing [31]. At the organizational level, FL deployments must also comply with data-protection, trade-secret, and competition regulations, which may restrict even indirect information leakage.

Overall, FL is increasingly recognized as a cornerstone technology for collaborative industrial AI, yet supply chain adoption remains largely exploratory. Existing applications predominantly rely on conventional neural architectures and tabular representations, despite the inherently relational structure of supply chains. This gap motivates the integration of FL with graph-based and attention-driven models to enable privacy-preserving, structure-aware learning across multi-enterprise supply networks.

2.3. Sustainability-Aware Artificial Intelligence

As sustainability targets intensify, AI research increasingly distinguishes between AI-for-sustainability, which seeks to reduce emissions, energy use, and resource intensity in operational systems, and “green” AI, which focuses on minimizing the environmental footprint of AI itself through efficient training, inference, and reporting. This work primarily addresses the former by embedding environmental objectives directly into AI models for supply chain process management, while accounting for computational and communication costs.

In supply chain management, sustainability has become a central operational concern, as supply chain activities contribute significantly to greenhouse gas emissions and resource use, requiring the joint optimization of environmental, cost, and service objectives [32]. Accordingly, indicators such as carbon footprint, energy intensity, waste, and circularity are increasingly treated as decision variables rather than ex-post reporting metrics. AI methods are particularly suited to this setting, as high-dimensional operational data and complex constraints limit analytical optimization. Empirical evidence shows that AI-driven analytics can improve green supply chain process integration and environmental performance when aligned with operational governance [32], while systematic reviews identify logistics optimization, demand–inventory coordination, and risk-aware planning as the most mature application areas, where emissions and energy use are tightly coupled to operational decisions [33].

A core methodological principle in sustainability-aware AI is treating environmental performance as a first-class learning objective. This includes integrating environmental impacts into loss functions, enforcing emissions constraints, or learning policies that explicitly trade off operational and environmental KPIs under uncertainty. In supply chains, Abushaega et al. formalize this approach through a multi-objective framework combining federated learning and Graph Neural Networks to jointly optimize operational and environmental objectives [15]. Related work in energy systems demonstrates that decentralized learning can yield simultaneous cost and emissions reductions, including empirically observed savings under federated reinforcement learning for building energy management [13]. Additional studies show that federated sequence models support sustainability optimization beyond centralized deployments [34,35].

Sustainability-aware AI also encompasses measurement and accountability of AI’s own environmental footprint. The literature emphasizes standardized green evaluation metrics for comparability across models and deployments. Borraccia et al. propose hybrid metrics for assessing energy–carbon impacts of AI pipelines [36], while bibliometric analyses document rapid growth in sustainability-oriented machine learning research alongside a persistent gap between methodological advances and deployment-level impact [37,38]. Organizational studies further indicate that effective AI-for-sustainability outcomes depend on governance structures and integration into decision workflows, not solely on model design [39].

Finally, sustainability benefits from AI in supply chains are not guaranteed and may be offset by rebound effects, misaligned incentives, or increased computational burden. Practitioner reports often claim large emissions reductions from AI-driven logistics and planning interventions [40], but the research literature stresses rigorous evaluation, transparent baselines, and robust measurement to distinguish genuine environmental improvements from confounded operational effects [36,41]. While the feasibility of sustainability-aware AI is well supported, most supply chain ML systems still prioritize operational accuracy, treating sustainability as an external constraint. The novelty of our approach lies in explicitly encoding sustainability signals within a decentralized federated graph learning framework, enabling balanced optimization of operational and environmental KPIs under realistic data-governance constraints.

2.4. Combining Graph Neural Networks and Federated Learning

Bringing together GNNs and FL poses both conceptual and system-level challenges, and only a limited body of work has explored this intersection. Standard FL formulations implicitly assume that data points are independent and identically distributed across clients. Graph-structured data violate these assumptions in two fundamental ways: (i) observations (nodes and edges) are relationally coupled, and (ii) the graph topology itself may be partitioned across organizational boundaries. In federated settings, this implies that informative dependencies can span clients, while each participant observes only a local subgraph. These properties complicate gradient aggregation, convergence, and representation learning. Despite these difficulties, early studies demonstrate that federated optimization can be extended to graph-based models, giving rise to the paradigm of Federated Graph Neural Networks (FedGNN).

The earliest line of work has been driven by privacy-sensitive applications such as recommender systems and financial networks. Wu et al. introduced FedGNN for privacy-preserving recommendation, training GNNs over decentralized user–item interaction graphs while applying local privacy mechanisms to gradients and structural signals [35]. Their results show that a federated GNN can achieve recommendation quality comparable to centralized baselines, while significantly reducing information leakage under realistic threat models. This finding is important because it establishes that graph-based representation learning remains viable under strict data-locality constraints, even when the global graph never materializes.

Subsequent work extends federated graph learning to spatio-temporal domains. Meng et al. propose a cross-node federated GNN for traffic forecasting, where each client manages a regional subgraph and the global model captures dependencies that span geographic boundaries [35]. Their framework improves forecasting accuracy relative to purely local models and introduces mechanisms for mitigating non-IID graph distributions. Complementary research addresses vertical graph partitioning, in which different clients hold disjoint feature sets or edge views for the same nodes. Chen et al. propose a vertically federated GNN (VFGNN) that enables privacy-preserving node classification when features, edges, and labels are distributed across parties [36]. These works collectively demonstrate that both horizontal and vertical graph partitioning can be accommodated within federated optimization, albeit with additional architectural and cryptographic complexity.

Our framework distinguishes itself by introducing a Graph Attention Network within a federated learning architecture tailored to multi-enterprise supply chains. Attention is particularly valuable in federated graph settings because local subgraphs differ in topology, density, and informational relevance. An attention-based aggregator can dynamically weight inter-node and inter-client dependencies, allowing the global model to adapt to heterogeneous structural contexts more flexibly than fixed-weight GCNs. Moreover, we explicitly integrate sustainability objectives into the federated GNN training process, an element largely absent from the existing FedGNN literature, which typically prioritizes predictive performance and privacy guarantees alone. While Abushaega et al. take an important step toward multi-objective federated graph learning for supply chains [15], their architecture does not exploit attention mechanisms and does not explicitly address the interpretability and cross-client structural asymmetries inherent in real-world networks.

As summarized in Table 1, prior work on supply chain sustainability primarily operates either within centralized graph learning frameworks [8,14,17,20,21] or federated learning settings without sustainability-aware aggregation [9,10,24,31]. Recent AI-driven decarbonization efforts [12,32,33] focus on predictive optimization but lack federated graph structures. Although Federated Graph Neural Networks have been proposed for privacy-preserving supply chain data sharing [9,31], they do not introduce explicit environmental bias within the attention mechanism.

The proposed framework uniquely integrates federated graph learning with sustainability-modulated attention. By embedding environmental signals directly into message-passing weights and introducing λ as an explicit governance parameter, the method enables controllable trade-offs between predictive performance and environmental alignment in decentralized supply chain networks.

3. Methodology and Experimental Setup

This section describes the experimental design used to evaluate the proposed sustainability-aware federated graph attention framework. The setup is deliberately constructed to reflect key structural and data-governance characteristics of real industrial supply chains, while remaining fully reproducible and analytically controlled.

The proposed model is trained through an iterative sustainability-aware federated optimization process that preserves data locality at each client while enabling coordinated global learning. Unlike conventional federated graph training, sustainability modulation is embedded directly within the local attention mechanism, and the server additionally aggregates privacy-preserving alignment diagnostics to monitor environmental bias during training. Communication proceeds through repeated rounds between the central server and distributed clients, where model parameters are exchanged but no raw graph data or labels are shared. Algorithm 1 presents the complete governance-aware federated training workflow.

Algorithm 1. Sustainability-Aware Federated Graph Attention Training

Input: initial global parameters; number of communication rounds; clients, each holding a local subgraph with node features and labels; an emission attribute for each edge; the sustainability control parameter λ (fixed or scheduled); number of local epochs; aggregation weights (e.g., proportional to local subgraph size or to the number of local training nodes).

Output: final global parameters.

Initialize (server): The server initializes the model parameters, selects the sustainability policy (a fixed λ or a schedule for λ), and broadcasts both to all clients.

For each communication round:

- (a) Client-side sustainability-aware local training (in parallel for each client):
  - Receive the current global parameters.
  - Train the GAT on the local subgraph for the configured number of local epochs using sustainability-modulated attention.
  - For each incoming edge, reweight the attention coefficient by an emission penalty after softmax; the penalty depends on the edge's normalized transport emissions, and λ governs sustainability strength.
  - Compute the local model update (or the updated weights).
  - Compute local alignment diagnostics (no raw data shared), e.g., an alignment statistic over local edges and the fraction of attention mass allocated to high-emission edges (top quantile).
  - Send the model update and the diagnostics to the server.
- (b) Server-side aggregation with sustainability monitoring:
  - Aggregate the model updates using the aggregation weights.
  - Aggregate the diagnostics.
  - Governance step (optional): update λ according to a predefined policy (not tuned post hoc), e.g., a monotonic schedule or a target-alignment rule. (Under a fixed policy, λ is left unchanged.)
- (c) Broadcast: the server broadcasts the updated global parameters to all clients.

Return: final global model parameters.
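The reweighting, diagnostic, and aggregation steps of Algorithm 1 can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the exponential penalty `exp(-lam * e)` followed by renormalization is an assumed form (the text only states that coefficients are reweighted by an emission penalty after softmax), and the function names and the 0.75 quantile threshold are hypothetical.

```python
import numpy as np

def reweight_attention(alpha, emissions, lam):
    """Penalize post-softmax attention over one node's incoming edges.

    ASSUMPTION: exponential penalty exp(-lam * e) with renormalization;
    the source does not give the exact functional form.
    """
    alpha = np.asarray(alpha, dtype=float)
    emissions = np.asarray(emissions, dtype=float)
    penalized = alpha * np.exp(-lam * emissions)
    return penalized / penalized.sum()

def high_emission_mass(alpha, emissions, quantile=0.75):
    """Fraction of attention mass on edges at or above an emission quantile."""
    alpha = np.asarray(alpha, dtype=float)
    emissions = np.asarray(emissions, dtype=float)
    threshold = np.quantile(emissions, quantile)
    return float(alpha[emissions >= threshold].sum() / alpha.sum())

def fedavg(client_params, client_weights):
    """Weighted average of per-client parameter vectors (FedAvg-style)."""
    w = np.asarray(client_weights, dtype=float)
    w = w / w.sum()  # normalize aggregation weights to sum to one
    stacked = np.stack([np.asarray(p, dtype=float) for p in client_params])
    return (w[:, None] * stacked).sum(axis=0)

# Toy example: four incoming edges, the last one emission-heavy.
alpha = [0.25, 0.25, 0.25, 0.25]
emissions = [0.0, 0.0, 0.0, 1.0]
alpha_green = reweight_attention(alpha, emissions, lam=2.0)
```

Under this assumed form, setting `lam = 0` leaves the attention weights unchanged, while larger values shift attention mass away from high-emission edges, which is the trade-off the governance parameter is meant to expose.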

3.1. Synthetic Supply Chain Simulation

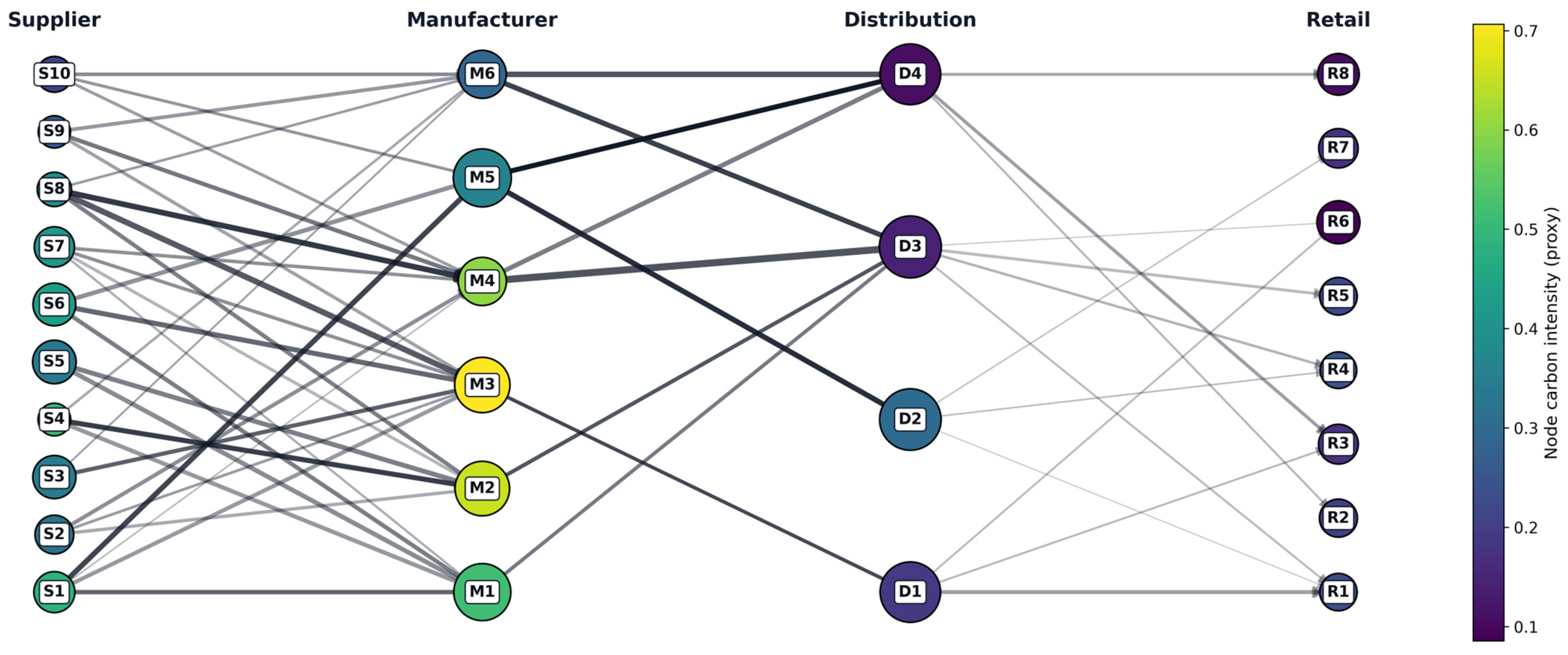

We evaluate the proposed Federated GAT on a realistic synthetic multi-tier supply chain case study. The supply network (illustrated schematically in Figure 1) is modeled as a directed graph G = (V, E) with multiple echelons including suppliers, manufacturers, distribution centers, and retailers. Each node represents an entity (e.g., a factory, warehouse, or retail outlet) and each directed edge represents a supply relationship or material flow between entities. Nodes are assigned to tiers by operational role (upstream suppliers in Tier 1, intermediate manufacturers in Tier 2, distribution centers in Tier 3, and retailers in Tier 4), creating a layered structure analogous to a consumer goods supply chain.

To ensure structural transparency, we report descriptive statistics of the generated graphs. For the baseline configuration (N = 28 nodes, 41 edges), the mean in-degree and out-degree distributions, as well as transport-emissions statistics, are summarized within the main text. Client partition statistics further quantify intra- and inter-client edge cuts, clarifying the degree of structural fragmentation introduced by federated partitioning. These statistics demonstrate that the synthetic network preserves tiered supply chain structure while enabling the controlled evaluation of decentralized learning effects.

In the implementation, tier sizes are explicitly defined as 10 suppliers (S1–S10), 6 manufacturers (M1–M6), 4 distribution centers (D1–D4), and 8 retailers (R1–R8), for a total of N = 28 nodes. Directed edges are generated only between adjacent tiers (S→M, M→D, D→R), producing a multi-tier dependency structure that naturally yields converging flows (multiple suppliers feeding a manufacturer) and diverging flows (a distributor feeding multiple retailers). Concretely, the stochastic edge generator draws an out-degree for each source node and then connects that node to a uniformly sampled subset of downstream nodes of that size. This construction yields sparse, tier-constrained connectivity consistent with hierarchical supply networks.

To ensure that downstream tiers are not disconnected from upstream supply, we enforce a weak connectivity constraint: every node in tiers 2–4 must have at least one inbound edge. If a node has no inbound edge after edge generation, a random inbound edge is added from the upstream tier. This prevents isolated nodes and ensures that message passing is well-defined for most nodes in the node-regression task.
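The generation and repair steps described above can be sketched as follows. This is an illustrative reconstruction only: the out-degree distribution is not specified in the text, so a uniform draw between `min_out` and `max_out` is assumed, and the function and variable names are hypothetical. The resulting edge count will therefore generally differ from the 41 edges of the baseline graph.

```python
import random

TIERS = {"S": 10, "M": 6, "D": 4, "R": 8}  # tier sizes stated in the text
ORDER = ["S", "M", "D", "R"]               # upstream to downstream

def build_supply_graph(seed=0, min_out=1, max_out=3):
    """Generate tier-constrained directed edges with an in-degree repair step.

    ASSUMPTION: out-degrees are drawn uniformly from [min_out, max_out].
    """
    rng = random.Random(seed)
    nodes = {t: [f"{t}{i}" for i in range(1, n + 1)] for t, n in TIERS.items()}
    edges = set()
    for up, down in zip(ORDER, ORDER[1:]):
        # Each source node connects to a uniformly sampled downstream subset.
        for src in nodes[up]:
            k = min(rng.randint(min_out, max_out), len(nodes[down]))
            for dst in rng.sample(nodes[down], k):
                edges.add((src, dst))
        # Repair: every downstream node needs at least one inbound edge.
        has_inbound = {dst for _, dst in edges}
        for dst in nodes[down]:
            if dst not in has_inbound:
                edges.add((rng.choice(nodes[up]), dst))
    return nodes, sorted(edges)

nodes, edges = build_supply_graph(seed=0)
```

Because edges are only ever created between consecutive entries of `ORDER`, the adjacent-tier constraint (S→M, M→D, D→R) holds by construction.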

Each node is assigned operational and sustainability proxy attributes drawn from tier-specific Gaussian distributions, namely capacity, CO₂ intensity, and energy intensity. These distributions are explicitly encoded, inducing systematic heterogeneity across tiers (e.g., manufacturers have higher mean carbon intensity than retailers) while maintaining stochastic variability under a fixed random seed. Each edge is parameterized by flow and distance (also positive truncated normal draws) and by a deterministic transport-emissions proxy. This proxy is used consistently both for feature construction and for sustainability-aware attention modulation.

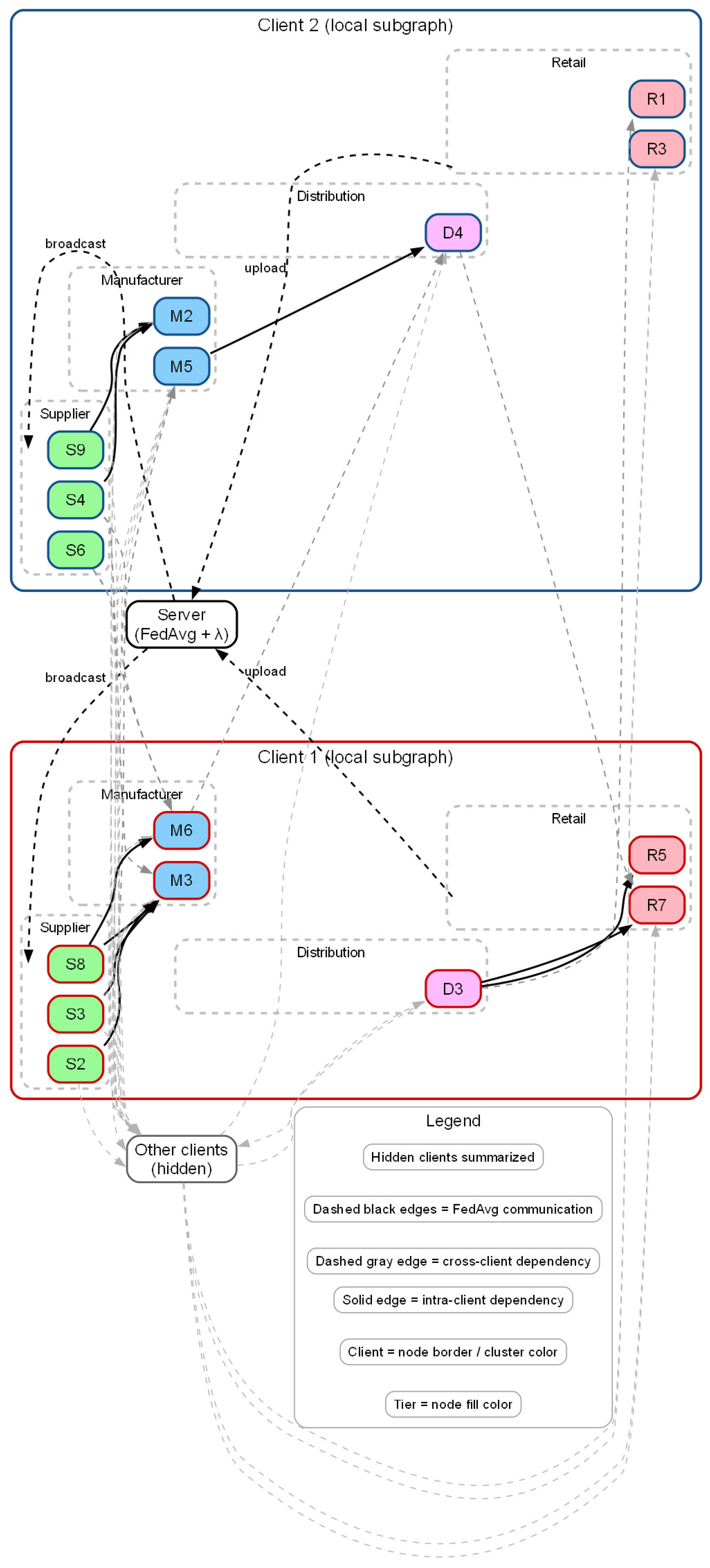

To simulate federated data partitioning, we divided the global supply chain graph into subgraphs held by distinct client organizations. Figure 2 illustrates this partitioning: each company (or region) in the supply chain acts as a federated client that observes only its portion of the graph. In the implementation, 4 federated clients are created.

Table 2 quantifies the federated partitioning illustrated in Figure 2 for the baseline graph (N = 28). Clients contain between 6 and 8 nodes, indicating moderate size imbalance. Intra-client connectivity is sparse (0–3 edges per client), whereas the number of cross-client (cut) edges is substantially larger (16–19 per client). This confirms that most relational dependencies span client boundaries. Consequently, each client observes only a highly fragmented local subgraph, and global supply chain dependencies cannot be directly reconstructed during local message passing. This validates the structural decentralization assumed in the federated learning setup.

Client assignment is performed independently within each tier using a seeded shuffle followed by round-robin allocation. This produces tier-balanced client memberships but non-identical local structures. Edges that cut across clients represent inter-company links (e.g., a supplier shipping to a manufacturer in a different organization); these links exist in the overall graph, but no single client can exploit them during local training. This setup reflects real-world data silos: each enterprise has visibility into its local operations and direct partners, but not the full end-to-end supply chain.

Critically, the federated training loop enforces strict locality: each client trains on its induced subgraph, consisting only of the nodes it owns and the intra-client edges among them. Formally, client $k$ with node set $V_k \subseteq V$ observes $G_k = (V_k, E_k)$, where $E_k = \{(u, v) \in E : u \in V_k,\ v \in V_k\}$. This is implemented by filtering the global edge list. Hence, cross-client dependencies exist globally but are missing locally, creating a non-IID, partial-observability regime.
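A minimal sketch of the tier-wise client assignment and the edge filtering is given below. It assumes that the round-robin restarts at client 0 in every tier, which the text does not state; the helper names are hypothetical.

```python
import random

def assign_clients(nodes_by_tier, num_clients=4, seed=0):
    """Seeded shuffle within each tier, then round-robin over clients.

    ASSUMPTION: allocation restarts at client 0 for every tier.
    """
    rng = random.Random(seed)
    owner = {}
    for tier in sorted(nodes_by_tier):
        members = list(nodes_by_tier[tier])
        rng.shuffle(members)
        for i, node in enumerate(members):
            owner[node] = i % num_clients
    return owner

def local_edges(edges, owner, client):
    """Edges of the induced subgraph: both endpoints owned by the client."""
    return [(u, v) for u, v in edges if owner[u] == client and owner[v] == client]

def cut_edges(edges, owner, client):
    """Cross-client edges: exactly one endpoint owned by the client."""
    return [(u, v) for u, v in edges if (owner[u] == client) != (owner[v] == client)]

nodes_by_tier = {t: [f"{t}{i}" for i in range(1, n + 1)]
                 for t, n in {"S": 10, "M": 6, "D": 4, "R": 8}.items()}
owner = assign_clients(nodes_by_tier)
sizes = sorted(sum(1 for c in owner.values() if c == k) for k in range(4))
```

With the stated tier sizes and four clients, this allocation yields client sizes of 6, 6, 8, and 8 nodes regardless of the seed, in line with the 6 to 8 nodes per client reported for the baseline partition.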

3.2. Data Generation and Sustainability Labeling

We generate synthetic operational data on the supply chain graph to train and evaluate the models. Each node is assigned a feature vector representing its operational state and sustainability profile, and each edge carries logistics attributes, including transport emissions. In the code, the node-level feature vector is explicitly defined as a 5-dimensional descriptor whose last two components summarize upstream dependency and upstream logistics footprint. This construction makes the prediction problem structurally dependent: the feature vector is not purely local but contains aggregated information from incoming edges, which graph-based learners can exploit more naturally than independent baselines.

The feature matrix is standardized using dataset-level z-scoring (each feature column is centered by its mean and scaled by its standard deviation) and converted into a tensor for training on CPU or GPU, depending on availability.
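As a brief illustration of dataset-level z-scoring, the sketch below standardizes each feature column over all nodes. The small constant `eps` guarding against zero variance is an assumption added for safety, not something stated in the text, and the function name is hypothetical.

```python
import numpy as np

def zscore(X, eps=1e-8):
    """Standardize each feature column using dataset-level statistics.

    ASSUMPTION: eps is added to the standard deviation to avoid division
    by zero for constant columns.
    """
    X = np.asarray(X, dtype=float)
    mu = X.mean(axis=0)
    sigma = X.std(axis=0)
    return (X - mu) / (sigma + eps)

# Toy example: 4 nodes, 2 features on very different scales.
X = np.array([[100.0, 0.1], [200.0, 0.2], [300.0, 0.3], [400.0, 0.4]])
Z = zscore(X)
```

After standardization both columns have approximately zero mean and unit variance, so features measured on different scales (e.g., capacity versus intensity) contribute comparably to the attention logits.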

Using these data, we formulate a synthetic target variable at each node called the “stress score.” This node-level stress score is designed as a composite outcome that increases under capacity scarcity, upstream dependency, and carbon-intensive logistics. In the implementation, the pre-normalized stress score is constructed as a weighted combination of interpretable drivers, with a small constant used only for numerical stability. The value is min–max scaled to [0, 1] and perturbed with Gaussian noise of standard deviation 0.05.

By construction, this target is influenced by both operational dynamics (capacity, inbound dependency) and environmental factors (carbon/energy intensity and transport emissions), aligning with the objective of sustainability-aware modeling.
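The final two steps of the label construction (min–max scaling followed by Gaussian noise with standard deviation 0.05) can be sketched as follows. The raw scores here are placeholder values, since the weighted combination itself is not reproduced; the placement of `eps` in the denominator and the absence of clipping after the noise is added are assumptions.

```python
import random


def minmax_with_noise(raw, noise_std=0.05, eps=1e-8, seed=0):
    """Scale raw scores to [0, 1], then add zero-mean Gaussian noise.

    Assumptions: eps sits in the denominator for numerical stability, and
    the noisy values are not clipped back to [0, 1].
    """
    rng = random.Random(seed)
    lo, hi = min(raw), max(raw)
    scaled = [(s - lo) / (hi - lo + eps) for s in raw]
    return [v + rng.gauss(0.0, noise_std) for v in scaled]


raw_scores = [2.0, 4.0, 6.0]                  # hypothetical pre-normalized scores
clean = minmax_with_noise(raw_scores, noise_std=0.0)
noisy = minmax_with_noise(raw_scores, noise_std=0.05, seed=1)
```

Because the noise is added after scaling, individual labels can fall slightly outside [0, 1] unless they are clipped afterwards.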

We split the dataset into training, validation, and test sets by randomly partitioning the node set (not multiple temporal snapshots). Specifically, a random permutation of node indices is drawn and masks are created using train ratio = 0.7 and validation ratio = 0.15, with the test set comprising the remaining nodes. This yields a transductive node-regression setting where the graph structure and all nodes are present during training, but only training nodes contribute to the supervised loss, while validation and test nodes are used strictly for evaluation.
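A minimal sketch of the node-level split described above is given below. The ratios 0.7 and 0.15 come from the text; the function name `split_masks`, the use of Python's `random` module, and truncation via `int()` are assumptions made for illustration.

```python
import random


def split_masks(num_nodes, train_ratio=0.7, val_ratio=0.15, seed=0):
    """Randomly partition node indices into train/validation/test sets."""
    perm = list(range(num_nodes))
    random.Random(seed).shuffle(perm)          # random permutation of node indices
    n_train = int(train_ratio * num_nodes)     # assumption: truncate, not round
    n_val = int(val_ratio * num_nodes)
    train_idx = set(perm[:n_train])
    val_idx = set(perm[n_train:n_train + n_val])
    test_idx = set(perm[n_train + n_val:])     # test set takes the remainder
    return train_idx, val_idx, test_idx


train_idx, val_idx, test_idx = split_masks(100)
```

In the transductive setting, all three index sets refer to nodes of the same graph; only `train_idx` would enter the supervised loss.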

3.3. Model Configurations and Training Procedure

We compare four model configurations in our experiments, corresponding to baseline approaches and our proposed method.

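1. Centralized GAT: A Graph Attention Network model that has access to the entire supply chain graph during training. It represents an ideal pooled-data scenario and provides an upper-bound reference under full observability. The implemented GAT uses a single attention layer with 32 hidden dimensions and 2 heads. Message passing is performed over incoming neighborhoods. For head $h$, node projections are computed as

$$z_i^{(h)} = W^{(h)} x_i,$$

and attention logits for an incoming edge $(j \to i)$ are computed using concatenated projected states and edge features $e_{ij}$ (flow and transport emissions, min–max normalized over global edges):

$$s_{ij}^{(h)} = \sigma\!\left(a^{(h)\top}\left[z_i^{(h)} \,\|\, z_j^{(h)} \,\|\, e_{ij}\right]\right),$$

where $\sigma$ denotes the pointwise nonlinearity applied to the logits. Normalization is performed by softmax over the incoming neighborhood $\mathcal{N}_{\text{in}}(i)$:

$$\alpha_{ij}^{(h)} = \frac{\exp\!\left(s_{ij}^{(h)}\right)}{\sum_{l \in \mathcal{N}_{\text{in}}(i)} \exp\!\left(s_{il}^{(h)}\right)}.$$

The head outputs are aggregated by weighted neighbor sums and concatenated before a linear readout.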

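2. Federated GAT (FedAvg): A standard federated version of the GAT model trained with synchronous Federated Averaging. Each client trains on its local induced subgraph $G_k$ for 10 local epochs in each of 40 rounds. After each round, client models are aggregated using node-count weights:

$$\theta^{(t+1)} = \sum_{k=1}^{K} \frac{n_k}{\sum_{j=1}^{K} n_j}\, \theta_k^{(t)},$$

where $n_k$ is the number of nodes owned by client $k$ and $\theta_k^{(t)}$ denotes its locally updated parameters in round $t$. This baseline isolates the impact of partial observability and non-IID graph partitioning without additional sustainability mechanisms.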

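3. Federated Sustainability-Aware GAT: Our proposed model, which extends the Federated GAT with a sustainability-aware attention mechanism. In the implementation, sustainability is incorporated directly into the attention coefficients. After computing $\alpha_{ij}^{(h)}$ by softmax, the model applies an exponential penalty based on normalized transport emissions $\hat{e}_{ij}$ (the second edge-feature component),

$$\tilde{\alpha}_{ij}^{(h)} = \alpha_{ij}^{(h)} \exp\!\left(-\lambda\, \hat{e}_{ij}\right), \tag{15}$$

followed by re-normalization over the incoming neighborhood $\mathcal{N}_{\text{in}}(i)$,

$$\alpha_{ij}^{\prime\,(h)} = \frac{\tilde{\alpha}_{ij}^{(h)}}{\sum_{l \in \mathcal{N}_{\text{in}}(i)} \tilde{\alpha}_{il}^{(h)}}. \tag{16}$$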

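The supervised objective is defined as the mean squared error for node stress prediction, i.e.,

$$\mathcal{L}_k = \frac{1}{|T_k|} \sum_{i \in T_k} \left(\hat{y}_i - y_i\right)^2,$$

where $\hat{y}_i$ and $y_i$ are the predicted and true stress scores and $T_k$ are the client’s training nodes defined by the global train mask restricted to $V_k$, the node set of client $k$.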
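The sustainability-aware attention described for the third configuration can be illustrated for a single target node. This is a sketch under stated assumptions, not the authors' implementation: the function names, the toy logits, and the value λ = 2.0 are hypothetical, and only the softmax, exponential penalty, and renormalization steps follow the text.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one incoming neighborhood."""
    m = max(logits)
    exps = [math.exp(s - m) for s in logits]
    total = sum(exps)
    return [e / total for e in exps]


def emission_penalized_attention(logits, emissions, lam):
    """Softmax attention, scaled by exp(-lam * emission), then renormalized."""
    alpha = softmax(logits)
    penalized = [a * math.exp(-lam * e) for a, e in zip(alpha, emissions)]
    total = sum(penalized)
    return [p / total for p in penalized]


# One target node with three incoming edges (hypothetical values).
logits = [0.2, 0.2, 0.2]          # identical logits isolate the penalty effect
emissions = [0.0, 0.5, 1.0]       # normalized transport emissions in [0, 1]
base = emission_penalized_attention(logits, emissions, lam=0.0)
green = emission_penalized_attention(logits, emissions, lam=2.0)
```

With λ = 0 the penalty factor equals 1 and the standard attention is recovered, so the plain Federated GAT can be viewed as the λ = 0 case of this scheme. With λ > 0, attention mass moves toward the lower-emission edges while still summing to one.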

All models were implemented in Python 3.11.9 using PyTorch 2.9.0 and trained with the Adam optimizer using learning rate LR = 10⁻³ and weight decay WD = 10⁻⁴. Centralized models are trained for 250 epochs. Federated models are trained for 40 rounds with 10 local epochs per round, yielding 400 local epochs per client in total, while global validation RMSE is monitored each round by evaluating the aggregated model on the full graph and the global validation mask.
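The node-count-weighted aggregation used after each federated round can be sketched in plain Python. Representing parameters as dictionaries of flat lists and the name `fedavg` are simplifications assumed here for readability; an actual PyTorch implementation would operate on model state dictionaries.

```python
def fedavg(client_params, client_node_counts):
    """Average client parameters, weighting each client by its node count."""
    total = sum(client_node_counts)
    names = client_params[0].keys()
    return {
        name: [
            sum(n / total * params[name][i]
                for params, n in zip(client_params, client_node_counts))
            for i in range(len(client_params[0][name]))
        ]
        for name in names
    }


# Two hypothetical clients owning 10 and 30 nodes respectively.
params_a = {"w": [1.0, 2.0]}
params_b = {"w": [3.0, 6.0]}
global_params = fedavg([params_a, params_b], [10, 30])
```

The larger client contributes three quarters of the average in this example, which is the intended effect of node-count weighting under unequal partitions.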

3.4. Evaluation Metrics

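We evaluate model performance on the held-out test nodes using standard regression metrics, root mean squared error (RMSE) and mean absolute error (MAE), between the predicted and true stress scores. For a test set $T_{\text{test}}$ with predictions $\hat{y}_i$ and true scores $y_i$, these are:

$$\text{RMSE} = \sqrt{\frac{1}{|T_{\text{test}}|} \sum_{i \in T_{\text{test}}} \left(\hat{y}_i - y_i\right)^2}, \qquad \text{MAE} = \frac{1}{|T_{\text{test}}|} \sum_{i \in T_{\text{test}}} \left|\hat{y}_i - y_i\right|.$$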

In addition to the prediction error, a key evaluation in our study is the attention–emission relationship. Since the GAT explicitly outputs per-edge attention values, we can quantify sustainability alignment by pairing each learned attention coefficient with its corresponding normalized edge emission. Concretely, the head-averaged attention coefficient for each edge is computed as:

$$\bar{\alpha}_{ij} = \frac{1}{H} \sum_{h=1}^{H} \alpha_{ij}^{(h)},$$

where $H$ is the number of attention heads. With normalized emissions denoted by $\hat{e}_{ij}$, we compute rank-based associations (e.g., Spearman correlation) between $\bar{\alpha}_{ij}$ and $\hat{e}_{ij}$, and complementary diagnostics such as the fraction of attention mass assigned to the highest-emission quantile of incoming edges. These measures are defined at the setup level here, while empirical values and comparative analysis are reported in the results section.
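The two alignment diagnostics can be sketched without external libraries. The function names, the pooled (rather than per-neighborhood) treatment of edges, and the 0.75 quantile cutoff are assumptions for illustration; the text does not specify which quantile defines a high-emission edge.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg = (pos + end) / 2 + 1
        for idx in order[pos:end + 1]:
            ranks[idx] = avg
        pos = end + 1
    return ranks


def spearman(x, y):
    """Spearman correlation as the Pearson correlation of the ranks.

    Undefined (division by zero) if either input is constant.
    """
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5


def high_emission_mass(attention, emissions, quantile=0.75):
    """Share of total attention placed on edges at or above the emission cutoff."""
    cutoff = sorted(emissions)[int(quantile * (len(emissions) - 1))]
    high = sum(a for a, e in zip(attention, emissions) if e >= cutoff)
    return high / sum(attention)


# Hypothetical head-averaged attention and normalized emissions for four edges.
emissions = [0.1, 0.4, 0.7, 0.9]
attention = [0.4, 0.3, 0.2, 0.1]
rho = spearman(emissions, attention)
mass = high_emission_mass(attention, emissions)
```

A strongly negative `rho` together with a small `mass` would indicate that attention is concentrated on low-emission edges.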

4. Results

4.1. Predictive Performance Comparison

Table 3 reports the predictive performance of the evaluated graph-based models on the held-out test node subset, using root mean squared error (RMSE) and mean absolute error (MAE). Reported values correspond to the mean and standard deviation over five independent random seeds, with model selection performed using validation RMSE. For centralized training, validation performance is tracked per epoch, while for federated training it is tracked per communication round.

The centralized GAT achieves the lowest prediction error across both metrics (RMSE = 0.2133 ± 0.1141, MAE = 0.1873 ± 0.1063), reflecting its unrestricted access to the full supply chain graph. In this setting, the model can exploit complete neighborhood structure and edge attributes across all tiers. Given that the synthetic node-level stress signal depends on both node-local features and aggregated upstream quantities (e.g., total inbound flow and transport emissions), centralized message passing enables coherent propagation of relational information throughout the network.

The predictive advantage of centralized graph learning can be attributed to two complementary architectural properties. First, relational aggregation over incoming neighborhoods smooths node representations by incorporating upstream information, mitigating label noise through structural correlation. Second, edge-aware attention weighting allows differential emphasis on upstream connections based on both node embeddings and normalized edge attributes, enabling the model to identify structurally influential pathways for downstream stress propagation.

Both federated graph attention models exhibit higher prediction error than the centralized baseline, highlighting the intrinsic challenges of decentralized graph learning under strict locality constraints. The standard Federated GAT (FedAvg) attains RMSE = 0.2606 ± 0.1124 and MAE = 0.2235 ± 0.1059. In this setting, each client trains exclusively on its induced subgraph and cross-client edges are unavailable during message passing. Consequently, global relational dependencies spanning multiple clients cannot be directly exploited during local updates. The global model obtained via weighted FedAvg reconciles fragmented client views only indirectly through parameter aggregation, leading to predictable performance degradation relative to centralized training.

The Federated Sustainability-Aware GAT achieves RMSE = 0.2593 ± 0.1131 and MAE = 0.2195 ± 0.1075, statistically indistinguishable from the standard federated baseline. A paired sign test on RMSE across seeds yields p = 1.000, indicating no statistically significant difference between the two federated variants. Similar behavior is observed for MAE. These results indicate that embedding sustainability-aware modulation directly within the attention mechanism does not measurably degrade federated predictive performance.
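To make the paired sign test concrete, the sketch below computes an exact two-sided p-value from per-seed errors. The per-seed RMSE values are invented for illustration and are not the paper's results; the function name `paired_sign_test` and the convention of dropping ties are assumptions.

```python
from math import comb


def paired_sign_test(a, b):
    """Exact two-sided sign test on paired samples; tied pairs are dropped."""
    diffs = [x - y for x, y in zip(a, b) if x != y]
    n = len(diffs)
    if n == 0:
        return 1.0
    pos = sum(d > 0 for d in diffs)
    k = min(pos, n - pos)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical per-seed RMSE values for five seeds (not the paper's data).
rmse_fedavg = [0.26, 0.41, 0.15, 0.22, 0.27]
rmse_sust = [0.25, 0.42, 0.14, 0.23, 0.26]
p_value = paired_sign_test(rmse_fedavg, rmse_sust)

# Most extreme case with five pairs: one model wins on every seed.
p_min = paired_sign_test([1, 2, 3, 4, 5], [0, 0, 0, 0, 0])
```

With five seeds, a 3-versus-2 split of wins gives p = 1.0, and even a 5-versus-0 split gives p = 0.0625. A sign test on five pairs therefore cannot fall below the 0.05 level, so a non-significant result here is best read as an absence of evidence for a difference, not as proof of equivalence.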

Importantly, sustainability is not introduced via an auxiliary loss term but is embedded directly into the attention mechanism through an emission-aware bias applied after softmax normalization (Equations (15) and (16)). With λ > 0, high-emission transport edges are systematically down-weighted during message passing. While this mechanism imposes an explicit inductive bias, it preserves predictive accuracy relative to conventional federated graph learning.

From an applied perspective, the results highlight a structured trade-off landscape. Centralized graph learning represents a best-case scenario for predictive accuracy but is often infeasible due to data-sharing and privacy constraints. Standard federated learning preserves decentralization but incurs performance loss when global relational dependencies are critical. The Sustainability-Aware Federated GAT occupies an intermediate position: it remains fully decentralized while embedding environmental preferences directly into relational inference, achieving comparable predictive performance alongside structured sustainability alignment examined in the subsequent λ-ablation analysis.

Before examining training dynamics and sustainability–accuracy trade-offs, we assess whether the sustainability-aware formulation introduces any circularity or label-dependent bias.

4.2. Construct Validity and Label Sensitivity Analysis

A potential methodological concern in synthetic experimental settings is circularity: if sustainability-related variables contribute directly to the target label, a sustainability-aware model may appear to perform well simply because its inductive bias aligns with the label construction. In such a case, improvements in predictive performance could reflect label leakage rather than genuine modeling advantages. To address this concern rigorously, we conduct a structured label sensitivity analysis designed to decouple sustainability-aware attention from the specific stress formulation used in the baseline experiments.

Specifically, we evaluate five distinct label-generation regimes by systematically varying the contribution of sustainability-related components in the synthetic stress definition:

Full sustainability weighting: Original configuration.

Reduced sustainability weighting: Sustainability coefficients scaled by 0.5.

No sustainability contribution: All sustainability-related terms removed.

Emissions-only contribution: Only transport emissions included.

Node-level sustainability only: Only node carbon intensity and energy intensity included.

For each regime, the complete multi-seed federated evaluation protocol is repeated. This ensures that any observed behavior reflects model robustness rather than incidental alignment between attention bias and label structure.

The most critical test is the No sustainability contribution regime, where all sustainability variables are removed from the stress definition. In this setting, the standard Federated GAT achieves RMSE = 0.2758, while the Sustainability-Aware Federated GAT achieves RMSE = 0.2740 (Δ = −0.00177). The negligible difference indicates that the sustainability-aware attention mechanism does not rely on label-specific environmental components for predictive performance. Rather, its inductive bias remains stable even when sustainability plays no role in the target variable.

Across all five regimes, predictive differences between the two federated variants remain statistically insignificant. A paired sign test on RMSE across seeds yields p = 1.0 in each configuration, and similar patterns are observed for MAE. These results demonstrate that the sustainability-aware formulation does not artificially inflate performance through circular label construction.

Importantly, while predictive accuracy remains statistically comparable, the sustainability-aware model continues to exhibit systematically stronger environmental alignment in attention weights (as shown in the λ-ablation analysis). This confirms that the model’s environmental behavior arises from structured attention modulation rather than from overfitting to label components.

Taken together, the label sensitivity study provides strong evidence of construct validity. The sustainability-aware attention mechanism functions as an inductive bias embedded within decentralized message passing, not as a proxy for explicit label encoding. Consequently, concerns of circular performance inflation are empirically mitigated.

4.3. Training Dynamics and Convergence

Figure 3 presents the validation RMSE trajectories of the centralized and federated graph attention models, averaged over multiple random seeds, with shaded regions indicating one standard deviation. To ensure comparability across training paradigms, all curves are reported over a unified horizontal axis of 40 evaluation steps. For federated models, each step corresponds to one communication round, while for centralized training the validation trajectory is resampled from the full 250-epoch optimization history. The horizontal axis therefore represents aligned reporting checkpoints rather than equivalent optimization time.
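One simple way to align a 250-epoch validation history with 40 reporting checkpoints is evenly spaced index selection, sketched below. The text does not state the resampling rule that was actually used, so this scheme and the name `resample_history` are assumptions.

```python
def resample_history(history, num_points=40):
    """Pick num_points evenly spaced entries, including the first and last."""
    last = len(history) - 1
    idx = [round(i * last / (num_points - 1)) for i in range(num_points)]
    return [history[j] for j in idx]


# Hypothetical validation curve: one value per centralized epoch.
epoch_rmse = [1.0 / (epoch + 1) for epoch in range(250)]
checkpoints = resample_history(epoch_rmse, num_points=40)
```

Under this scheme, each plotted centralized checkpoint summarizes roughly six epochs of optimization, which is why the shared axis reflects reporting steps rather than equal training effort.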

The centralized GAT exhibits smooth and stable convergence, with a consistent reduction in validation RMSE and comparatively narrow variability across seeds. This behavior reflects the advantages of centralized graph learning, where full access to the global supply chain topology enables uninterrupted message passing across all tiers. At each optimization step, gradients are computed using complete neighborhood information and edge attributes, yielding low-variance updates and reliable convergence.

Both federated graph attention models converge more slowly and display wider uncertainty bands. This behavior is characteristic of decentralized graph learning under non-identically distributed (non-IID) subgraph partitions. Each client optimizes a local objective defined on its induced subgraph, where neighborhood structure, edge-attribute distributions, and structural roles within the supply chain differ across clients. These heterogeneous local optima are periodically reconciled through weighted parameter aggregation, which can introduce non-smooth global parameter updates and contributes to the increased variance observed in federated training.

By construction, the sustainability-aware model reshapes the message-passing process through an emission-aware attention bias that penalizes high-emission transport edges prior to renormalization. Consequently, information propagation is progressively concentrated on lower-emission pathways, leading to a more selective and structured flow of information during training. From an optimization standpoint, this mechanism introduces a controlled inductive bias that affects convergence speed but enhances consistency in how sustainability considerations are incorporated into the learned representations. In federated settings, where each client observes only a fragment of the global graph, unconstrained attention can overemphasize locally predictive but environmentally undesirable edges. The sustainability-aware formulation counteracts this tendency by steering optimization toward solutions that balance predictive performance with environmental alignment.

The modest elevation in validation RMSE relative to the standard federated baseline should therefore be interpreted as the explicit cost of embedding sustainability constraints directly into the inference pathway, rather than as evidence of instability or inefficient training. Notably, the overlap of uncertainty bands in Figure 3 indicates that the sustainability-aware model remains stable across random initializations and does not exhibit pathological convergence behavior.

Overall, Figure 3 demonstrates that sustainability-aware federated graph learning converges reliably under strict locality constraints, while embedding environmental priorities directly into the message-passing mechanism. This positions the proposed model as a principled extension of federated graph learning for sustainability-critical supply chain applications, where predictive accuracy must be balanced against environmentally informed decision-making.

4.4. Sustainability-Aware Attention Patterns

A key objective of the proposed Sustainability-Aware Federated GAT is to reduce reliance on carbon-intensive transport links during message passing. This behavior can be directly examined by analyzing learned attention coefficients extracted during evaluation. For each directed edge (j → i), attention weights are averaged across heads to obtain a single scalar $\bar{\alpha}_{ij}$.

Figure 4 shows the relationship between normalized transport emissions and mean attention weights for the standard Federated GAT (λ = 0) and the sustainability-aware variant (λ > 0). In the unconstrained model, attention weights are widely dispersed across the full emissions range, with no systematic dependence on emissions. Several high-emission edges receive moderate or high attention, reflecting their predictive utility under the synthetic stress generation mechanism, which explicitly incorporates inbound emissions.

In contrast, the sustainability-aware model exhibits a clear shift in attention allocation. High-emission edges are systematically down-weighted, while low- and medium-emission edges retain greater attention mass. This pattern is induced by the multiplicative emissions penalty applied within the attention mechanism, followed by neighborhood renormalization. The resulting behavior reflects a soft inductive bias: carbon-intensive links are discouraged but not entirely suppressed, allowing predictive relevance to partially offset the sustainability penalty when necessary.

From an interpretability standpoint, the learned attention distributions provide graph-native explanations of information flow. The observed reduction in attention assigned to high-emission edges confirms that sustainability considerations are embedded directly into the inference pathway, yielding representations that balance predictive performance with environmentally informed decision-making.

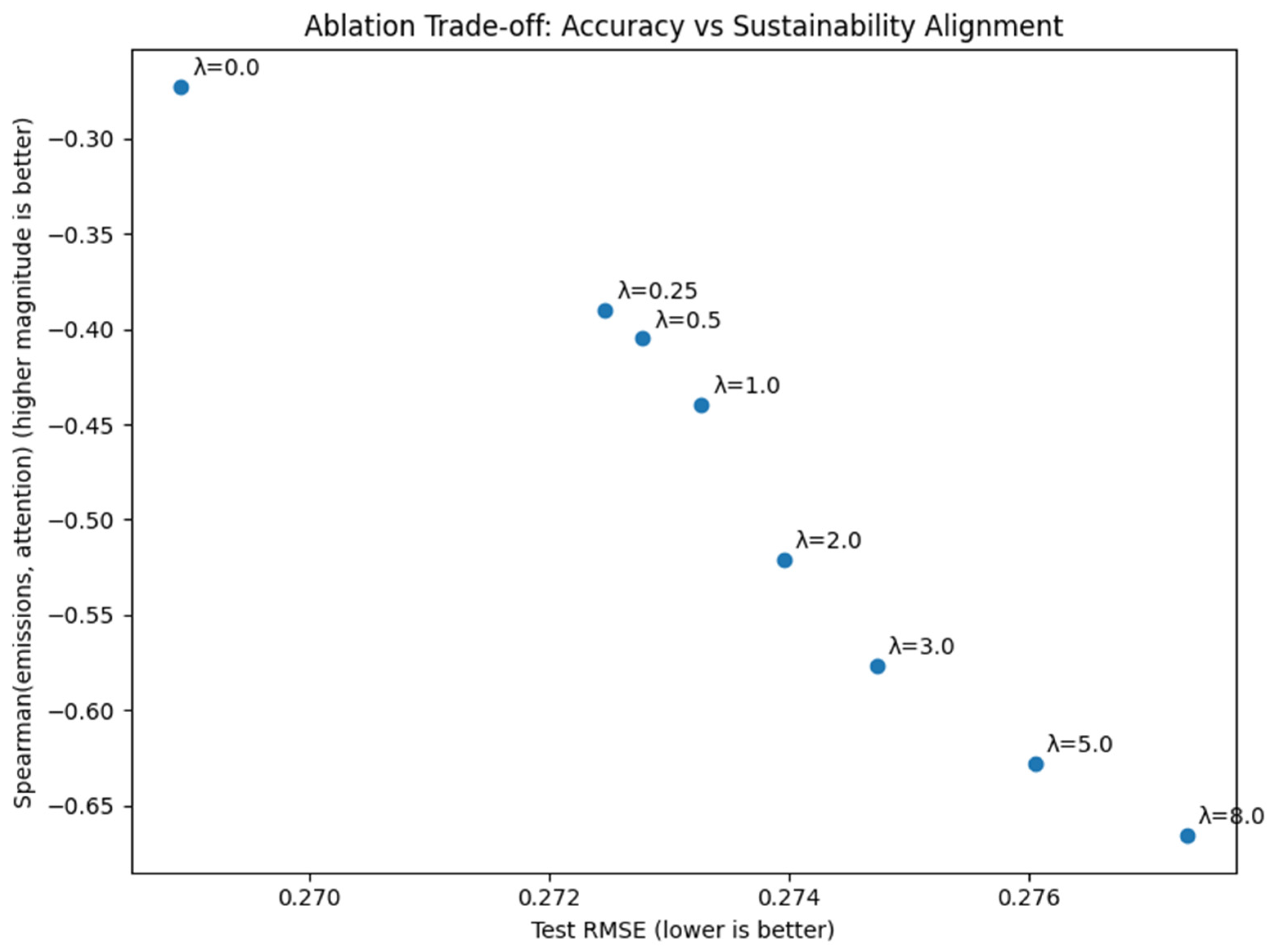

4.5. Ablation Study: Impact of Sustainability Weight (λ)

To systematically assess how the strength of sustainability bias influences the behavior of the proposed attention mechanism, we conduct a λ-ablation study over a predefined and fixed grid,

with all configurations evaluated across multiple random seeds. The λ values are specified a priori and are not tuned post hoc, ensuring a transparent and reproducible evaluation protocol. While predictive performance is summarized separately, this subsection focuses specifically on how sustainability alignment evolves as a function of λ, isolating the effect of the attention penalty on message-passing behavior.

Figure 5 reports two complementary sustainability alignment diagnostics as functions of λ, shown as mean ± standard deviation over seeds:

- (i) The Spearman rank correlation between normalized transport emissions and learned attention weights;
- (ii) The fraction of incoming attention mass allocated to high-emission edges (top emission quantile).

Both metrics exhibit smooth and monotonic trends as λ increases. The Spearman correlation becomes progressively more negative, indicating that high-emission edges receive progressively less attention. In parallel, the share of attention mass assigned to carbon-intensive links decreases steadily. These consistent patterns confirm that the proposed exponential attention penalty induces a systematic and stable reorientation of message passing away from high-emission transport links, rather than producing noisy or irregular effects.

Notably, the variability across random seeds decreases at higher λ values, suggesting that stronger sustainability bias leads to more reproducible alignment behavior under federated training conditions. This indicates that the mechanism functions as a stable inductive bias, rather than introducing additional instability into the learning process.

Figure 6 summarizes the ablation results by plotting predictive error against sustainability alignment, yielding a trade-off frontier across λ values. Each point corresponds to a distinct operating regime of the Sustainability-Aware Federated GAT, with sustainability alignment measured as the negative Spearman correlation between emissions and attention (higher values indicate stronger de-emphasis of high-emission edges).

The resulting frontier reveals a clear and structured trade-off. As λ increases, sustainability alignment improves monotonically, while predictive error increases gradually. Importantly, the degradation in accuracy is smooth rather than abrupt, indicating that the attention penalty suppresses high-emission links in a soft and continuous manner rather than eliminating large portions of the graph structure.

Intermediate λ values (approximately λ ≈ 2–3) occupy favorable operating regimes near the knee of the trade-off curve. In this range, substantial reductions in emission–attention coupling are achieved while predictive degradation remains limited, representing practical compromises between environmental alignment and predictive fidelity.

Overall, this analysis reframes λ not as a hyperparameter to be optimized solely for accuracy, but as a policy-relevant control variable. Adjusting λ directly governs how strongly sustainability considerations shape the model’s inference pathway, enabling controlled, interpretable, and reproducible trade-offs in sustainability-critical federated supply chain settings.

4.6. Scalability and Communication Analysis

To evaluate scalability, we extend experiments to larger synthetic supply chain graphs (N = 28, 100, and 500 nodes) and vary the number of federated clients (K ∈ {2, 4, 8}). Results for representative configurations are shown in Table 4.

Runtime increases with graph size, as expected from attention-based aggregation. The sustainability-aware model introduces moderate computational overhead (≈15–20%) but remains stable at larger scales.

Communication overhead is determined by model size (517 parameters; 2068 bytes in float32). Per-round communication scales linearly with K: 8272 bytes (K = 2), 16,544 bytes (K = 4), and 33,088 bytes (K = 8), which equals 2 × K × 2068 bytes and is consistent with one model download and one upload per client per round. Across 40 rounds, total communication stays at or below approximately 1.32 MB (1,323,520 bytes at K = 8), and the sustainability-aware formulation introduces no additional transmission cost.
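The reported figures can be checked with a short calculation. The parameter layout below is an assumption: a bias-free 5-to-32 projection per head, one attention vector per head over two 32-dimensional projected states plus two edge features, and a linear readout with bias over the concatenated heads. It is shown because it reproduces the stated 517 parameters, not because the text confirms it. The factor of 2 per client (download plus upload) is likewise inferred from the byte counts.

```python
def gat_param_count(in_dim=5, hidden=32, heads=2, edge_dim=2):
    """Parameter count under an assumed single-layer edge-aware GAT layout."""
    projection = heads * in_dim * hidden           # bias-free W per head
    attention = heads * (2 * hidden + edge_dim)    # attention vector a per head
    readout = heads * hidden + 1                   # linear readout with bias
    return projection + attention + readout


def round_bytes(num_params, num_clients, bytes_per_param=4):
    """Bytes per round if every client downloads and uploads the model once."""
    return 2 * num_clients * num_params * bytes_per_param


num_params = gat_param_count()
model_bytes = num_params * 4                       # float32 storage
per_round = {k: round_bytes(num_params, k) for k in (2, 4, 8)}
total_k8 = 40 * per_round[8]                       # 40 communication rounds
```

Because the emission penalty adds no trainable parameters, the same counts apply to both federated variants.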

5. Discussion

This study examined whether sustainability-aware relational learning can be embedded within a federated graph learning framework without compromising predictive reliability under strict data-locality constraints. The findings yield four principal insights.

First, the performance hierarchy confirms expected structural properties of decentralized graph learning. The centralized GAT provides an upper-bound benchmark (RMSE = 0.2133 ± 0.1141), reflecting full access to global topology. Under federated optimization, performance degrades to RMSE = 0.2606 ± 0.1124, quantifying the structural cost of decentralization and cross-client edge fragmentation. This degradation is consistent with partial observability rather than model instability.

Second, embedding sustainability directly into the attention mechanism does not degrade predictive performance relative to the standard federated baseline. The Sustainability-Aware Federated GAT achieves RMSE = 0.2593 ± 0.1131, and a paired sign test across multiple random seeds yields p = 1.000. This indicates no statistically significant predictive deterioration due to the sustainability-aware modulation.

Third, structured label-sensitivity analysis mitigates concerns of circularity. When sustainability components are entirely removed from the synthetic stress definition (“no_sust” regime), the sustainability-aware model remains statistically indistinguishable from the baseline (ΔRMSE ≈ −0.0018). This confirms that environmental modulation functions as a structural inductive bias within message passing rather than exploiting label construction.

Fourth, λ-ablation experiments demonstrate a smooth and controllable trade-off between predictive accuracy and environmental alignment. As λ increases, attention allocation to high-emission links systematically decreases while prediction error rises only moderately. This monotonic behavior suggests that sustainability modulation acts as a stable structural regularizer. Interpreting λ as a governance parameter enables the mapping of different parameter intervals to distinct regulatory or corporate sustainability strategies, strengthening managerial relevance.

Scalability experiments further demonstrate predictable computational behavior. Increasing graph size from 28 nodes to 500 nodes increases federated training time from 2.64 s to 49.52 s (K = 2), while sustainability modulation introduces only modest additional overhead and no extra communication complexity. These results indicate computational feasibility for medium-scale decentralized networks.

A dedicated methodological clarification is necessary regarding the synthetic nature of the benchmark. The supply chain graphs and stress signals are procedurally generated to ensure controlled experimentation, reproducibility, and structured sensitivity analysis. The synthetic construction preserves the tiered structure, cross-client fragmentation, and heterogeneous degree patterns, allowing isolation of decentralization and governance effects. However, the present study should be interpreted as a controlled methodological validation rather than a fully empirical industrial deployment. Real-world supply chains exhibit dynamic topology, incomplete information, contractual asymmetries, and regulatory heterogeneity that extend beyond the current benchmark. Empirical validation on operational multi-enterprise datasets remains a critical direction for future work.

Overall, the findings demonstrate that sustainability-aware federated graph learning is statistically robust, structurally interpretable, and computationally scalable under controlled conditions.

6. Conclusions

This paper introduced a sustainability-aware federated graph attention framework for decentralized supply chain process modeling. The proposed method integrates Graph Attention Networks with federated optimization and embeds transport-related environmental signals directly into the message-passing mechanism through an emission-weighted attention modulation governed by parameter λ.

Multi-seed evaluation confirms that federated learning incurs a predictable decentralization cost relative to centralized training, while sustainability-aware modulation preserves predictive performance without statistically significant degradation. Label-sensitivity analysis further mitigates concerns of circularity, indicating that environmental bias operates as an inductive structural constraint rather than as label-aligned leakage.

The λ-ablation study establishes a controllable accuracy–sustainability frontier, positioning λ as an explicit governance-relevant control parameter. Scalability experiments demonstrate predictable computational scaling and limited additional overhead due to sustainability modulation.

The study should be understood as a controlled methodological validation conducted on a structurally realistic but synthetic supply chain benchmark. Its primary contribution lies in demonstrating the feasibility, statistical robustness, interpretability, and scalability of sustainability-modulated federated graph learning under analytically transparent conditions. Future work should extend this framework to dynamic real-world datasets, incorporate secure aggregation mechanisms, and evaluate multi-objective environmental indicators in operational multi-enterprise settings.

By unifying decentralization, relational modeling, and sustainability-aware optimization within a single architecture, this work provides a principled foundation for privacy-preserving and environmentally aligned AI in supply chain networks.