Domain-Adaptive Multimodal Large Language Models for Photovoltaic Fault Diagnosis via Dynamic LoRA Routing

Abstract

1. Introduction

- We develop a multimodal large model for PV fault analysis by designing a novel fine-tuning method and constructing a dedicated dataset. To the best of our knowledge, this is the first multimodal large model specifically developed for PV fault analysis.

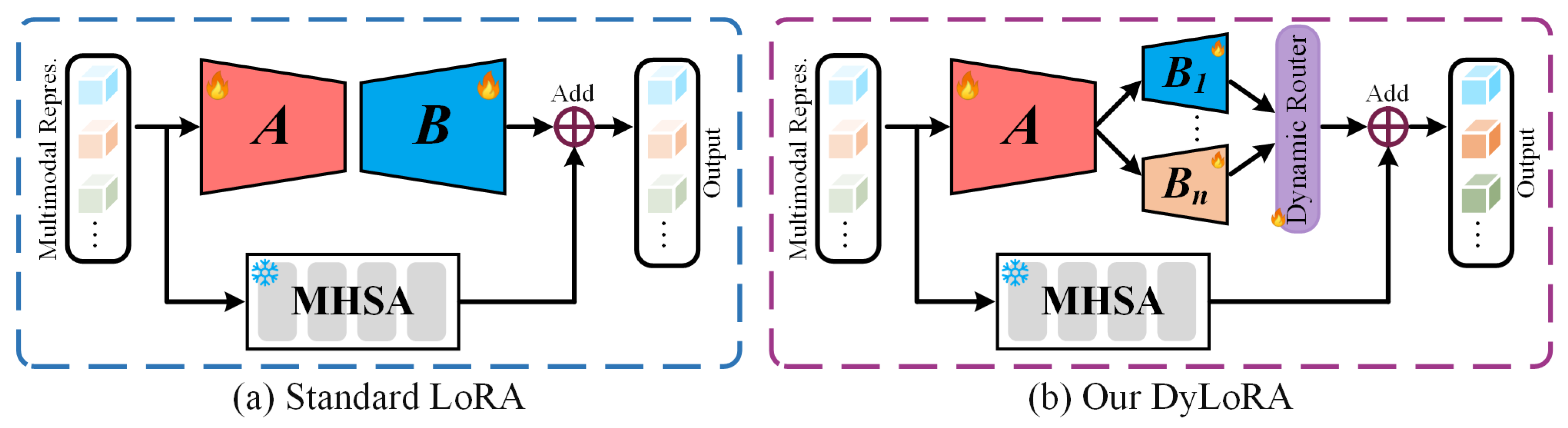

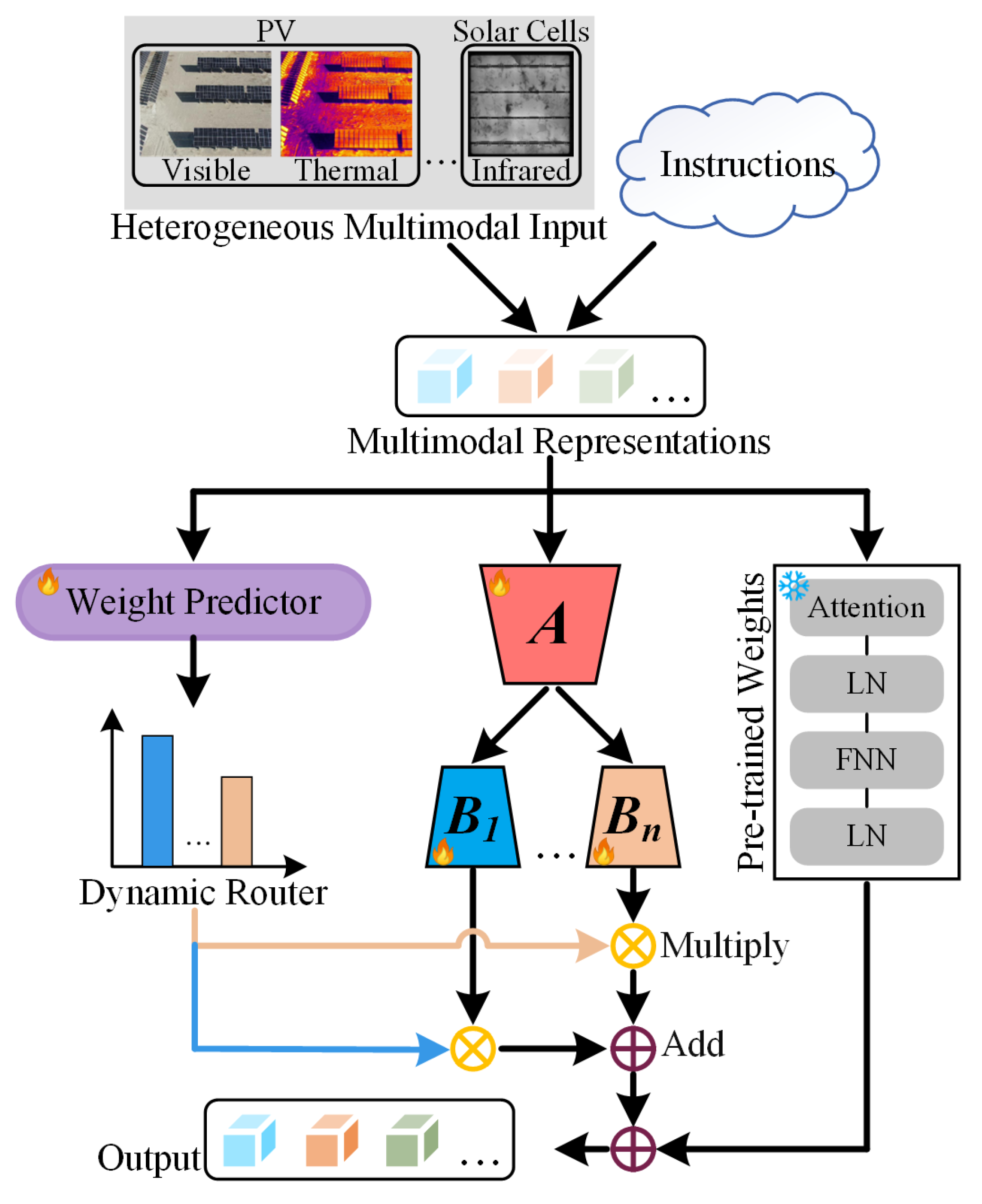

- We propose DyLoRA, a dynamic expert routing mechanism that enables input-aware adaptation. It extends standard LoRA by combining a shared low-rank matrix with multiple expert-specific branches, improving model flexibility and robustness in complex multimodal PV scenarios.

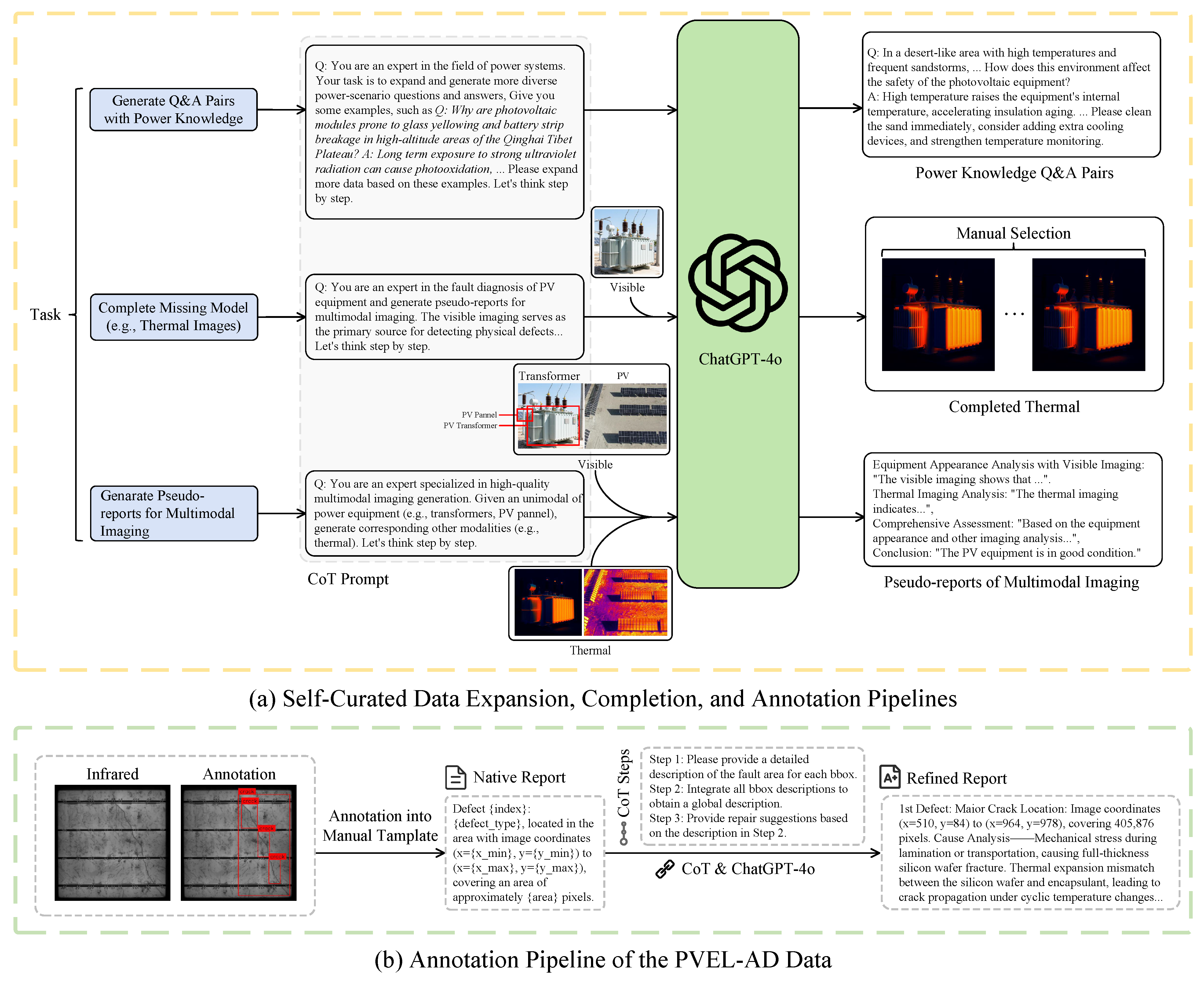

- We construct a PV fault dataset of over 35,000 samples with chain-of-thought (CoT)-based pseudo-annotations and multimodal inputs.
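
The routing idea in the DyLoRA contribution (a shared low-rank matrix combined with several expert-specific branches, mixed per input) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the class name `RoutedLoRALinear`, the softmax router, the zero initialization of the expert branches, and the `alpha / rank` scaling are all assumptions borrowed from common LoRA and mixture-of-experts practice.

```python
import numpy as np


def softmax(z, axis=-1):
    """Numerically stable softmax."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


class RoutedLoRALinear:
    """Hypothetical sketch: frozen linear layer + shared down-projection
    + per-expert up-projections mixed by an input-dependent router."""

    def __init__(self, d_in, d_out, rank=4, n_experts=3, alpha=8.0, seed=0):
        rng = np.random.default_rng(seed)
        self.W = rng.normal(0.0, 0.02, (d_in, d_out))       # frozen pretrained weight
        self.A = rng.normal(0.0, 0.02, (d_in, rank))        # shared low-rank matrix
        self.B = np.zeros((n_experts, rank, d_out))         # expert-specific branches (zero init, assumed)
        self.Wr = rng.normal(0.0, 0.02, (d_in, n_experts))  # router weights (assumed linear router)
        self.scale = alpha / rank                           # LoRA-style scaling (assumed)

    def gates(self, x):
        """Per-input mixing weights over experts, shape (batch, n_experts)."""
        return softmax(x @ self.Wr)

    def __call__(self, x):
        h = x @ self.A                                        # (batch, rank), shared projection
        per_expert = np.einsum("br,kro->bko", h, self.B)      # (batch, n_experts, d_out)
        delta = np.einsum("bk,bko->bo", self.gates(x), per_expert)  # gate-weighted sum
        return x @ self.W + self.scale * delta


layer = RoutedLoRALinear(d_in=8, d_out=5)
x = np.random.default_rng(1).normal(size=(2, 8))
print(layer(x).shape)                # (2, 5)
print(layer.gates(x).sum(axis=-1))   # each row sums to 1 up to floating-point error
```

With the expert branches initialized to zero, the layer initially reproduces the frozen base output, which is the usual LoRA starting point; only the shared matrix, the expert branches, and the router would be trained.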

2. Related Work

2.1. Multimodal Large Language Models

2.2. Parameter-Efficient Fine-Tuning

2.3. Power System with MLLM

3. Method

3.1. Overview

3.2. Dynamic Expert Routing with LoRA

3.3. Dataset Curation

3.4. Loss Function

3.4.1. Pre-Training for Feature Alignment

3.4.2. Fine-Tuning on Fault Analysis

4. Experiments

4.1. MLLMs for Fault Analysis

4.2. Datasets

4.3. Evaluation Metrics

- Accuracy evaluates whether the generated report correctly identifies the fault type and cause. It compares the set of key diagnostic elements (e.g., fault types, causes) in the reference report with the corresponding set extracted from the generated report, and is scored by the overlap between the two sets.

- Completeness measures whether the generated report includes all necessary information that appears in the ground truth. It compares the set of expected components and analysis items in the reference report with the set found in the generated report, using the same set-overlap formulation as Accuracy.

- Practicality assesses whether the report provides clear and actionable maintenance instructions. It compares the set of expected repair actions in the reference report with those extracted from the generated report and, like Accuracy, is computed from the overlap between expected and generated repair instructions.

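- Average Score summarizes the report’s overall quality as the arithmetic mean of the four criteria reported in the results tables (Acc., Cla., Com., Pra.): $\text{Avg} = (\text{Acc} + \text{Cla} + \text{Com} + \text{Pra}) / 4$.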
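
The set-overlap idea behind these metrics and the averaging step can be sketched as follows. The function names, the normalization by the size of the reference set, and the example items are assumptions made for illustration; the source does not specify how the overlap is mapped to the score scale used in the results tables.

```python
def overlap_ratio(reference, generated):
    """Fraction of reference items that also appear in the generated report.

    Normalizing by the reference set size is an assumption, not the paper's
    stated formula.
    """
    reference, generated = set(reference), set(generated)
    if not reference:
        return 0.0
    return len(reference & generated) / len(reference)


def average_score(acc, cla, com, pra):
    """Arithmetic mean of the four per-criterion scores."""
    return (acc + cla + com + pra) / 4


# Hypothetical diagnostic elements, for illustration only.
reference_items = {"hot spot", "bypass diode failure"}
generated_items = {"hot spot", "cell crack"}
print(overlap_ratio(reference_items, generated_items))  # 0.5

# Per-criterion scores of the full model from the ablation table.
print(f"{average_score(4.45, 4.26, 4.55, 4.39):.4f}")
```

Applied to the ablation rows, the mean of the four criterion columns agrees with the reported Avg. column to within rounding.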

4.4. Implementation Details

4.5. Baselines

4.6. Reasoning Analysis

4.7. Comparative Results

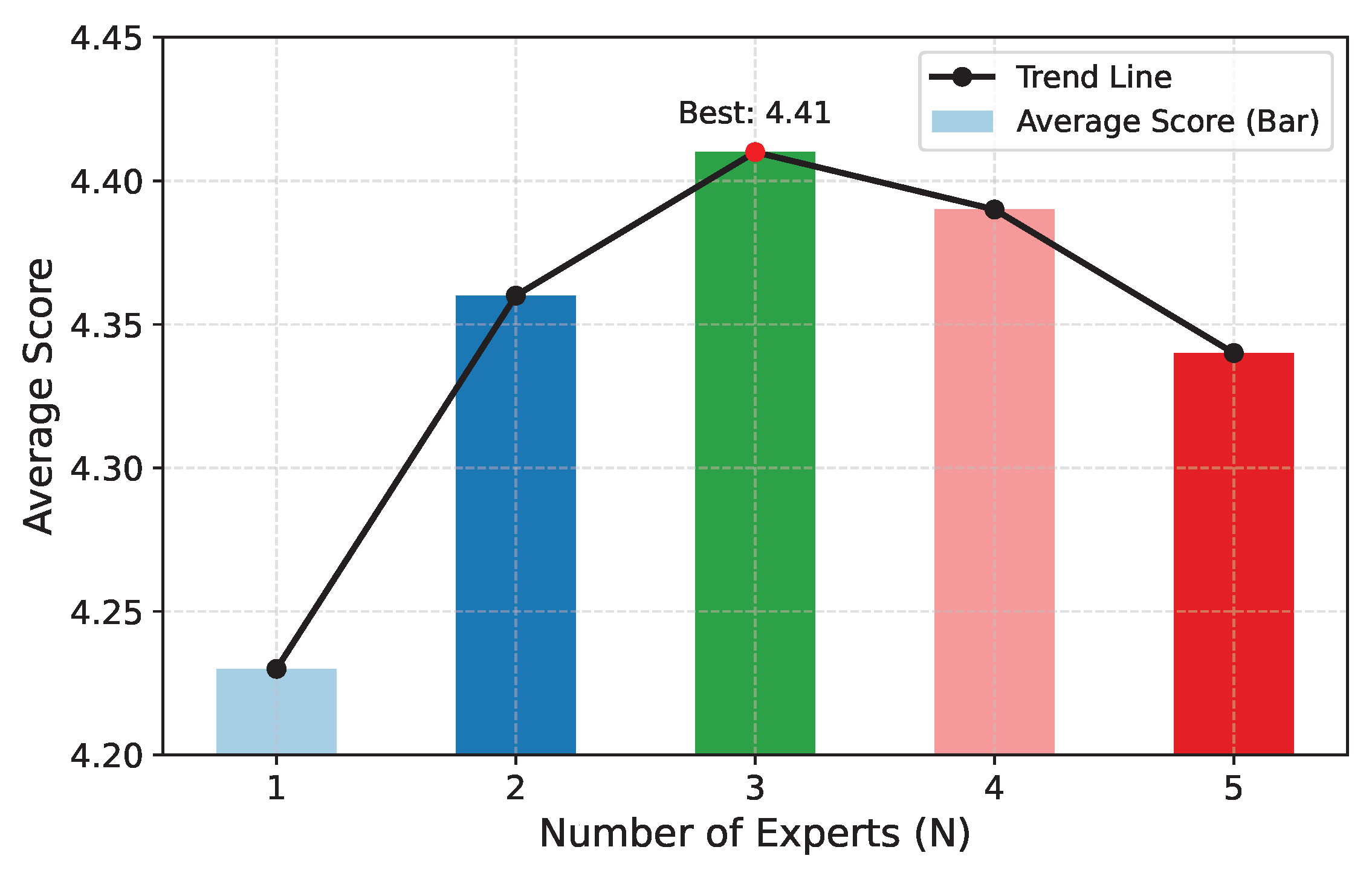

4.8. Ablation Study

4.9. Computational Efficiency

5. Conclusions

6. Limitations

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Osmani, K.; Haddad, A.; Lemenand, T.; Castanier, B.; Ramadan, M. A review on maintenance strategies for PV systems. Sci. Total Environ. 2020, 746, 141753.

2. Aram, M.; Zhang, X.; Qi, D.; Ko, Y. A state-of-the-art review of fire safety of photovoltaic systems in buildings. J. Clean. Prod. 2021, 308, 127239.

3. Shafiullah, M.; Ahmed, S.D.; Al-Sulaiman, F.A. Grid integration challenges and solution strategies for solar PV systems: A review. IEEE Access 2022, 10, 52233–52257.

4. Kheirrouz, M.; Melino, F.; Ancona, M.A. Fault detection and diagnosis methods for green hydrogen production: A review. Int. J. Hydrogen Energy 2022, 47, 27747–27774.

5. Chang, Z.; Han, T. Prognostics and health management of photovoltaic systems based on deep learning: A state-of-the-art review and future perspectives. Renew. Sustain. Energy Rev. 2024, 205, 114861.

6. Li, Y.; Cao, Y.; Cui, X.; Zhang, Y.; Mukhopadhyay, S.C.; Li, Y.; Cui, L.; Liu, Z.; Li, S. Semantic consistency guided hybrid-invariant transformer for domain adaptation in multi-view echo quality assessment. IEEE Trans. Instrum. Meas. 2025, 74, 4008519.

7. Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. Gpt-4 technical report. arXiv 2023, arXiv:2303.08774.

8. Anthropic. Claude 3 Haiku: Our Fastest Model Yet. 2024. Available online: https://www.anthropic.com/news/claude-3-haiku (accessed on 30 July 2025).

9. Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual instruction tuning. In Proceedings of the 37th International Conference on Neural Information Processing Systems, New Orleans, LA, USA, 10–16 December 2023.

10. Wang, P.; Bai, S.; Tan, S.; Wang, S.; Fan, Z.; Bai, J.; Chen, K.; Liu, X.; Wang, J.; Ge, W.; et al. Qwen2-VL: Enhancing Vision-Language Model’s Perception of the World at Any Resolution. arXiv 2024, arXiv:2409.12191.

11. Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, S.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. In Proceedings of the International Conference on Learning Representations, Virtual, 25–29 April 2022.

12. Liang, J.; Huang, W.; Wan, G.; Yang, Q.; Ye, M. LoRASculpt: Sculpting LoRA for Harmonizing General and Specialized Knowledge in Multimodal Large Language Models. In Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR), Nashville, TN, USA, 11–15 June 2025.

13. Xue, F.; Shi, Z.; Wei, F.; Lou, Y.; Liu, Y.; You, Y. Go wider instead of deeper. In Proceedings of the AAAI Conference on Artificial Intelligence, Online, 22 February–1 March 2022.

14. Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 2022, 35, 24824–24837.

15. OpenAI. ChatGPT-4o. 2025. Available online: https://chat.openai.com/ (accessed on 30 July 2025).

16. Li, J.; Li, D.; Xiong, C.; Hoi, S. Blip: Bootstrapping language-image pre-training for unified vision-language understanding and generation. In Proceedings of the International Conference on Machine Learning (PMLR), Baltimore, MD, USA, 17–23 July 2022.

17. Zhu, D.; Ye, M.; Shen, X.; Li, X.; Elhoseiny, M. MiniGPT-4: Enhancing Vision-Language Understanding with Advanced Large Language Models. arXiv 2023, arXiv:2304.10592.

18. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning, PMLR, Virtual, 18–24 July 2021.

19. Fang, Y.; Wang, W.; Xie, B.; Sun, Q.; Wu, L.; Wang, X.; Huang, T.; Wang, X.; Cao, Y. Eva: Exploring the limits of masked visual representation learning at scale. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 18–22 June 2023.

20. Chiang, W.L.; Li, Z.; Lin, Z.; Sheng, Y.; Wu, Z.; Zhang, H.; Zheng, L.; Zhuang, S.; Zhuang, Y.; Gonzalez, J.E.; et al. Vicuna: An Open-Source Chatbot Impressing GPT-4 with 90%* ChatGPT Quality. 2023. Available online: https://lmsys.org/blog/2023-03-30-vicuna/ (accessed on 14 April 2023).

21. Touvron, H.; Martin, L.; Stone, K.; Albert, P.; Almahairi, A.; Babaei, Y.; Bashlykov, N.; Batra, S.; Bhargava, P.; Bhosale, S.; et al. Llama 2: Open foundation and fine-tuned chat models. arXiv 2023, arXiv:2307.09288.

22. Zhu, K.; Wang, Y.; Sun, Y.; Chen, Q.; Liu, J.; Zhang, G.; Wang, J. Continual sft matches multimodal rlhf with negative supervision. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 11–15 June 2025.

23. Sun, Y.; Zhang, H.; Chen, Q.; Zhang, X.; Sang, N.; Zhang, G.; Wang, J.; Li, Z. Improving multi-modal large language model through boosting vision capabilities. arXiv 2024, arXiv:2410.13733.

24. Chen, Z.; Wu, J.; Wang, W.; Su, W.; Chen, G.; Xing, S.; Zhong, M.; Zhang, Q.; Zhu, X.; Lu, L.; et al. Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–22 June 2024.

25. Wang, Q.; Zhang, J.; Du, J.; Zhang, K.; Li, R.; Zhao, F.; Zou, L.; Xie, C. A fine-tuned multimodal large model for power defect image-text question-answering. Signal Image Video Process. 2024, 18, 9191–9203.

26. Mu, J.; Wang, W.; Liu, W.; Yan, T.; Wang, G. Multimodal Large Language Model with LoRA Fine-Tuning for Multimodal Sentiment Analysis. ACM Trans. Intell. Syst. Technol. 2024, 16, 139.

27. Han, Z.; Gao, C.; Liu, J.; Zhang, J.; Zhang, S.Q. Parameter-efficient fine-tuning for large models: A comprehensive survey. arXiv 2024, arXiv:2403.14608.

28. Wang, J.; Song, Q.; Qian, L.; Li, H.; Peng, Q.; Zhang, J. SubstationAI: Multimodal Large Model-Based Approaches for Analyzing Substation Equipment Faults. arXiv 2024, arXiv:2412.17077.

29. Biderman, D.; Portes, J.; Ortiz, J.J.G.; Paul, M.; Greengard, P.; Jennings, C.; King, D.; Havens, S.; Chiley, V.; Frankle, J.; et al. Lora learns less and forgets less. arXiv 2024, arXiv:2405.09673.

30. Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; Morrone, B.; De Laroussilhe, Q.; Gesmundo, A.; Attariyan, M.; Gelly, S. Parameter-efficient transfer learning for NLP. In Proceedings of the International Conference on Machine Learning, PMLR, Long Beach, CA, USA, 9–15 June 2019.

31. He, J.; Zhou, C.; Ma, X.; Berg-Kirkpatrick, T.; Neubig, G. Towards a unified view of parameter-efficient transfer learning. arXiv 2021, arXiv:2110.04366.

32. Li, X.L.; Liang, P. Prefix-tuning: Optimizing continuous prompts for generation. arXiv 2021, arXiv:2101.00190.

33. Liu, X.; Ji, K.; Fu, Y.; Du, Z.; Yang, Z.; Tang, J. P-Tuning v2: Prompt Tuning Can Be Comparable to Fine-tuning Universally Across Scales and Tasks. arXiv 2021, arXiv:2110.07602.

34. Jia, M.; Tang, L.; Chen, B.C.; Cardie, C.; Belongie, S.; Hariharan, B.; Lim, S.N. Visual prompt tuning. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; Springer: Cham, Switzerland, 2022.

35. Das, S.S.S.; Zhang, R.H.; Shi, P.; Yin, W.; Zhang, R. Unified low-resource sequence labeling by sample-aware dynamic sparse finetuning. arXiv 2023, arXiv:2311.03748.

36. Lawton, N.; Kumar, A.; Thattai, G.; Galstyan, A.; Steeg, G.V. Neural architecture search for parameter-efficient fine-tuning of large pre-trained language models. arXiv 2023, arXiv:2305.16597.

37. Zhang, Q.; Chen, M.; Bukharin, A.; Karampatziakis, N.; He, P.; Cheng, Y.; Chen, W.; Zhao, T. Adalora: Adaptive budget allocation for parameter-efficient fine-tuning. arXiv 2023, arXiv:2303.10512.

38. Mao, Y.; Mathias, L.; Hou, R.; Almahairi, A.; Ma, H.; Han, J.; Yih, W.t.; Khabsa, M. Unipelt: A unified framework for parameter-efficient language model tuning. arXiv 2021, arXiv:2110.07577.

39. Chen, S.; Jie, Z.; Ma, L. Llava-mole: Sparse mixture of lora experts for mitigating data conflicts in instruction finetuning mllms. arXiv 2024, arXiv:2401.16160.

40. Sheng, Y.; Cao, S.; Li, D.; Hooper, C.; Lee, N.; Yang, S.; Chou, C.; Zhu, B.; Zheng, L.; Keutzer, K.; et al. S-lora: Serving thousands of concurrent lora adapters. arXiv 2023, arXiv:2311.03285.

41. Lopes, F.; Rocha, P.; Coelho, A. Towards Automated Visual Inspection of Electrical Grid Assets for the Smart Grid - An Application to HV Insulators. In Proceedings of the 2024 IEEE International Conference on Communications, Control, and Computing Technologies for Smart Grids (SmartGridComm), Oslo, Norway, 17–20 September 2024.

42. Zheng, J.; Wu, H.; Zhang, H.; Wang, Z.; Xu, W. Insulator-Defect Detection Algorithm Based on Improved YOLOv7. Sensors 2022, 22, 8801.

43. Merkelbach, S.; Diedrich, A.; Sztyber-Betley, A.; Travé-Massuyès, L.; Chanthery, E.; Niggemann, O.; Dumitrescu, R. Using Multi-Modal LLMs to Create Models for Fault Diagnosis (Short Paper). In Proceedings of the 35th International Conference on Principles of Diagnosis and Resilient Systems (DX 2024), Vienna, Austria, 4–7 November 2024; Schloss Dagstuhl–Leibniz-Zentrum für Informatik: Wadern, Germany, 2024.

44. Wang, H.; Li, C.; Li, Y.F.; Tsung, F. An Intelligent Industrial Visual Monitoring and Maintenance Framework Empowered by Large-Scale Visual and Language Models. IEEE Trans. Ind. Cyber-Phys. Syst. 2024, 2, 166–175.

45. Jin, H.; Kim, K.; Kwon, J. GridMind: LLMs-Powered Agents for Power System Analysis and Operations. In Proceedings of the SC’25 Workshops of the International Conference for High Performance Computing, Networking, Storage and Analysis, St. Louis, MO, USA, 16–21 November 2025.

46. Wen, Y.; Chen, X. X-GridAgent: An LLM-Powered Agentic AI System for Assisting Power Grid Analysis. arXiv 2025, arXiv:2512.20789.

47. He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015.

48. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Adv. Neural Inf. Process. Syst. 2017, 30, 5998–6008.

49. Su, B.; Zhou, Z.; Chen, H. PVEL-AD: A large-scale open-world dataset for photovoltaic cell anomaly detection. IEEE Trans. Ind. Inform. 2022, 19, 404–413.

50. GB/T 38335-2019; Code of Operation for Photovoltaic Power Station. Standards Press of China: Beijing, China, 2019.

51. Papineni, K.; Roukos, S.; Ward, T.; Zhu, W.J. Bleu: A method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, Philadelphia, PA, USA, 7–12 July 2002.

52. Lin, C.Y. Rouge: A package for automatic evaluation of summaries. In Proceedings of the Text Summarization Branches Out, Barcelona, Spain, 25–26 July 2004.

53. Lynch, S.; Savary-Bataille, K.; Leeuw, B.; Argyle, D. Development of a questionnaire assessing health-related quality-of-life in dogs and cats with cancer. Vet. Comp. Oncol. 2011, 9, 172–182.

54. Lahat, A.; Sharif, K.; Zoabi, N.; Shneor Patt, Y.; Sharif, Y.; Fisher, L.; Shani, U.; Arow, M.; Levin, R.; Klang, E. Assessing generative pretrained transformers (GPT) in clinical decision-making: Comparative analysis of GPT-3.5 and GPT-4. J. Med. Internet Res. 2024, 26, e54571.

55. Zhang, H.; Chen, J.; Jiang, F.; Yu, F.; Chen, Z.; Li, J.; Chen, G.; Wu, X.; Zhang, Z.; Xiao, Q.; et al. Huatuogpt, towards taming language model to be a doctor. arXiv 2023, arXiv:2305.15075.

56. Tiffin, P.A.; Finn, G.M.; McLachlan, J.C. Evaluating professionalism in medical undergraduates using selected response questions: Findings from an item response modelling study. BMC Med. Educ. 2011, 11, 43.

57. Ghafourian, Y.; Hanbury, A.; Knoth, P. Readability measures as predictors of understandability and engagement in searching to learn. In Linking Theory and Practice of Digital Libraries, Proceedings of the 27th International Conference on Theory and Practice of Digital Libraries, TPDL 2023, Zadar, Croatia, 26–29 September 2023; Springer: Cham, Switzerland, 2023; pp. 173–181.

58. Karunaratne, S.; Dharmarathna, D. A review of comprehensiveness, user-friendliness, and contribution for sustainable design of whole building environmental life cycle assessment software tools. Build. Environ. 2022, 212, 108784.

59. Bai, Y.; Ying, J.; Cao, Y.; Lv, X.; He, Y.; Wang, X.; Yu, J.; Zeng, K.; Xiao, Y.; Lyu, H.; et al. Benchmarking foundation models with language-model-as-an-examiner. Adv. Neural Inf. Process. Syst. 2023, 36, 78142–78167.

60. Li, Z.; Xu, X.; Shen, T.; Xu, C.; Gu, J.C.; Lai, Y.; Tao, C.; Ma, S. Leveraging large language models for NLG evaluation: Advances and challenges. arXiv 2024, arXiv:2401.07103.

61. Bavaresco, A.; Bernardi, R.; Bertolazzi, L.; Elliott, D.; Fernández, R.; Gatt, A.; Ghaleb, E.; Giulianelli, M.; Hanna, M.; Koller, A.; et al. Llms instead of human judges? a large scale empirical study across 20 nlp evaluation tasks. arXiv 2024, arXiv:2406.18403.

62. Bai, Y.; Jones, A.; Ndousse, K.; Askell, A.; Chen, A.; DasSarma, N.; Drain, D.; Fort, S.; Ganguli, D.; Henighan, T.; et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv 2022, arXiv:2204.05862.

63. Ziegler, D.M.; Stiennon, N.; Wu, J.; Brown, T.B.; Radford, A.; Amodei, D.; Christiano, P.; Irving, G. Fine-tuning language models from human preferences. arXiv 2019, arXiv:1909.08593.

64. Askell, A.; Bai, Y.; Chen, A.; Drain, D.; Ganguli, D.; Henighan, T.; Jones, A.; Joseph, N.; Mann, B.; DasSarma, N.; et al. A general language assistant as a laboratory for alignment. arXiv 2021, arXiv:2112.00861.

65. Liu, H.; Li, C.; Li, Y.; Lee, Y.J. Improved baselines with visual instruction tuning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–22 June 2024.

66. Du, Z.; Qian, Y.; Liu, X.; Ding, M.; Qiu, J.; Yang, Z.; Tang, J. Glm: General language model pretraining with autoregressive blank infilling. arXiv 2021, arXiv:2103.10360.

67. Ding, M.; Yang, Z.; Hong, W.; Zheng, W.; Zhou, C.; Yin, D.; Lin, J.; Zou, X.; Shao, Z.; Yang, H.; et al. Cogview: Mastering text-to-image generation via transformers. Adv. Neural Inf. Process. Syst. 2021, 34, 19822–19835.

68. Bai, J.; Bai, S.; Yang, S.; Wang, S.; Tan, S.; Wang, P.; Lin, J.; Zhou, C.; Zhou, J. Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond. arXiv 2023, arXiv:2308.12966.

69. Chen, J.; Zhu, D.; Shen, X.; Li, X.; Liu, Z.; Zhang, P.; Krishnamoorthi, R.; Chandra, V.; Xiong, Y.; Elhoseiny, M. Minigpt-v2: Large language model as a unified interface for vision-language multi-task learning. arXiv 2023, arXiv:2310.09478.

70. Xue, Y.; Xu, T.; Rodney Long, L.; Xue, Z.; Antani, S.; Thoma, G.R.; Huang, X. Multimodal recurrent model with attention for automated radiology report generation. In Medical Image Computing and Computer Assisted Intervention – MICCAI 2018: 21st International Conference, Granada, Spain, 16–20 September 2018, Proceedings, Part I; Springer: Cham, Switzerland, 2018; pp. 457–466.

71. Chen, Z.; Song, Y.; Chang, T.H.; Wan, X. Generating radiology reports via memory-driven transformer. arXiv 2020, arXiv:2010.16056.

72. Yang, D.; Wei, J.; Xiao, D.; Wang, S.; Wu, T.; Li, G.; Li, M.; Wang, S.; Chen, J.; Jiang, Y.; et al. Pediatricsgpt: Large language models as chinese medical assistants for pediatric applications. Adv. Neural Inf. Process. Syst. 2024, 37, 138632–138662.

73. Hinton, G.; Vinyals, O.; Dean, J. Distilling the knowledge in a neural network. arXiv 2015, arXiv:1503.02531.

74. Sanh, V.; Debut, L.; Chaumond, J.; Wolf, T. DistilBERT, a distilled version of BERT: Smaller, faster, cheaper and lighter. arXiv 2019, arXiv:1910.01108.

75. Marafioti, A.; Zohar, O.; Farré, M.; Noyan, M.; Bakouch, E.; Cuenca, P.; Zakka, C.; Allal, L.B.; Lozhkov, A.; Tazi, N.; et al. Smolvlm: Redefining small and efficient multimodal models. arXiv 2025, arXiv:2504.05299.

76. Zhou, B.; Hu, Y.; Weng, X.; Jia, J.; Luo, J.; Liu, X.; Wu, J.; Huang, L. Tinyllava: A framework of small-scale large multimodal models. arXiv 2024, arXiv:2402.14289.

| Method | Acc. | Cla. | Com. | Pra. | Avg. |
|---|---|---|---|---|---|
| MRG [70] | / | / | / | / | / |
| MDTransformer [71] | / | / | / | / | / |
| GPT-4 [7] | / | / | / | / | / |
| Claude-3 [8] | / | / | / | / | / |
| LLaVA1.5-7B [9] | / | / | / | / | / |
| VisualGLM-6B [66] | / | / | / | / | / |
| Qwen2-VL-7B [10] | / | / | / | / | / |
| MiniGPT-4 [69] | / | / | / | / | / |
| LLaVA1.5-7B † | / | / | / | / | / |
| PV-FaultExpert † (ours) | / | / | / | / | / |

| Method | Acc. | Cla. | Com. | Pra. | Avg. |
|---|---|---|---|---|---|
| PV-FaultExpert † (DyLoRA) | 4.45 | 4.26 | 4.55 | 4.39 | 4.41 |
| ↪ (a) w/o Fusion | 4.01 | 3.82 | 4.02 | 3.87 | 3.93 |
| ↪ (b) w/o Router | 3.88 | 3.70 | 3.85 | 3.66 | 3.77 |
| ↪ (c) w/o MoE | 3.62 | 3.48 | 3.61 | 3.40 | 3.53 |

| Modalities | Expert #1 | Expert #2 | Expert #3 |
|---|---|---|---|
| Infrared + Text | | | |
| RGB + Text | | | |
| Thermal + Text | | | |
| RGB + Thermal + Text | | | |

| Method | GFLOPs | Runtime (s) | Memory (GB) |
|---|---|---|---|
| MRG (CNN with LSTM) [70] | 5.5 | 0.0048 | 2.60 |
| MDTransformer [71] | 20.6 | 0.2832 | 3.55 |
| LLaVA1.5-7B [9] | 578.4 | 0.6091 | 15.62 |
| MiniGPT-4 [69] | 2107.9 | 0.7574 | 12.67 |
| PV-FaultExpert † (ours) | 584.2 | 0.6219 | 15.74 |
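
As a worked reading of the efficiency table above, the short sketch below computes the relative overhead of PV-FaultExpert with respect to the LLaVA1.5-7B row. Treating LLaVA1.5-7B as the reference point is an assumption made here for illustration; the dictionary keys and the helper `relative_overhead` are hypothetical names.

```python
# Values copied from the efficiency table.
llava = {"gflops": 578.4, "runtime_s": 0.6091, "memory_gb": 15.62}
pv_fault_expert = {"gflops": 584.2, "runtime_s": 0.6219, "memory_gb": 15.74}


def relative_overhead(new, base):
    """Percentage increase of each quantity in `new` relative to `base`."""
    return {key: (new[key] - base[key]) / base[key] * 100 for key in base}


overhead = relative_overhead(pv_fault_expert, llava)
for key, pct in overhead.items():
    print(f"{key}: +{pct:.2f}%")
```

Under this comparison the added cost is roughly 1.0% in GFLOPs, 2.1% in runtime, and 0.8% in memory.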

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Wu, J.; Chen, Y.; Min, Q.; Chen, M.; Zhao, J.; Ye, M. Domain-Adaptive Multimodal Large Language Models for Photovoltaic Fault Diagnosis via Dynamic LoRA Routing. Processes 2026, 14, 653. https://doi.org/10.3390/pr14040653
