Improved YOLOv9 with Dual Convolution and LSKA Attention for Robust Small Defect Detection in Textiles

Abstract

1. Introduction

2. Improved YOLOv9 Fabric Defect Detection Algorithm

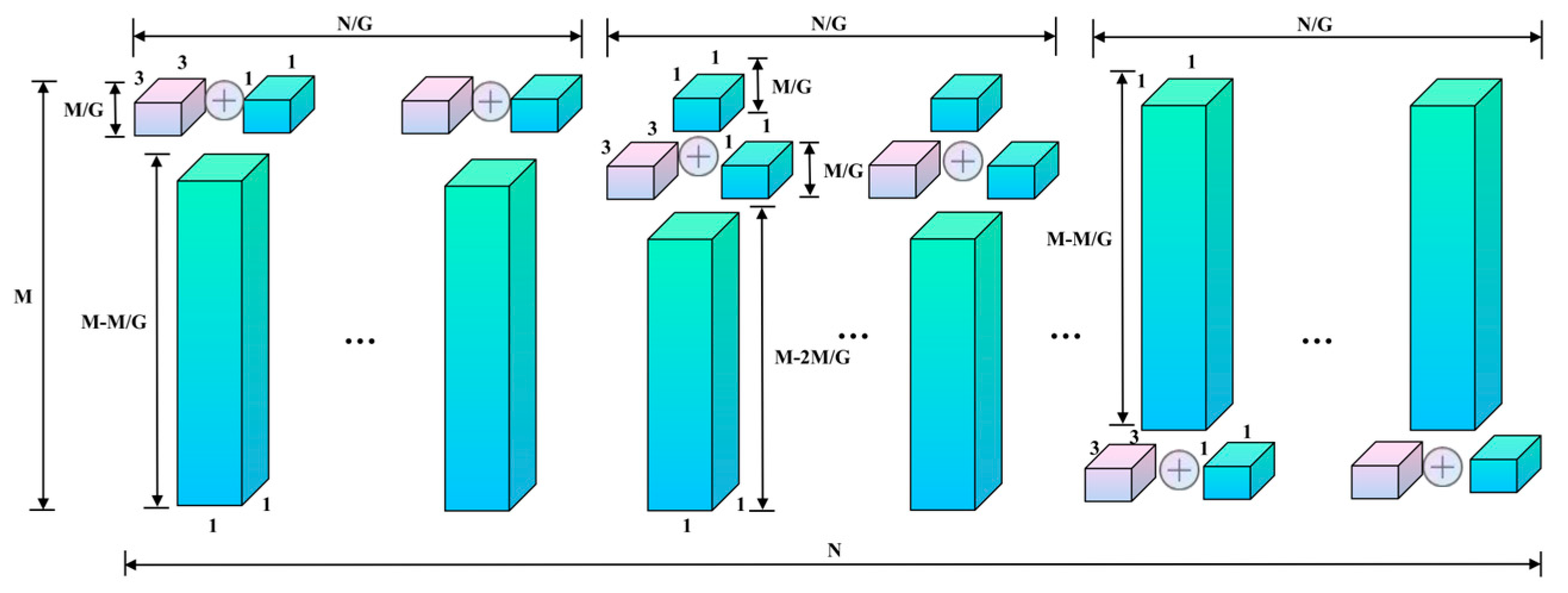

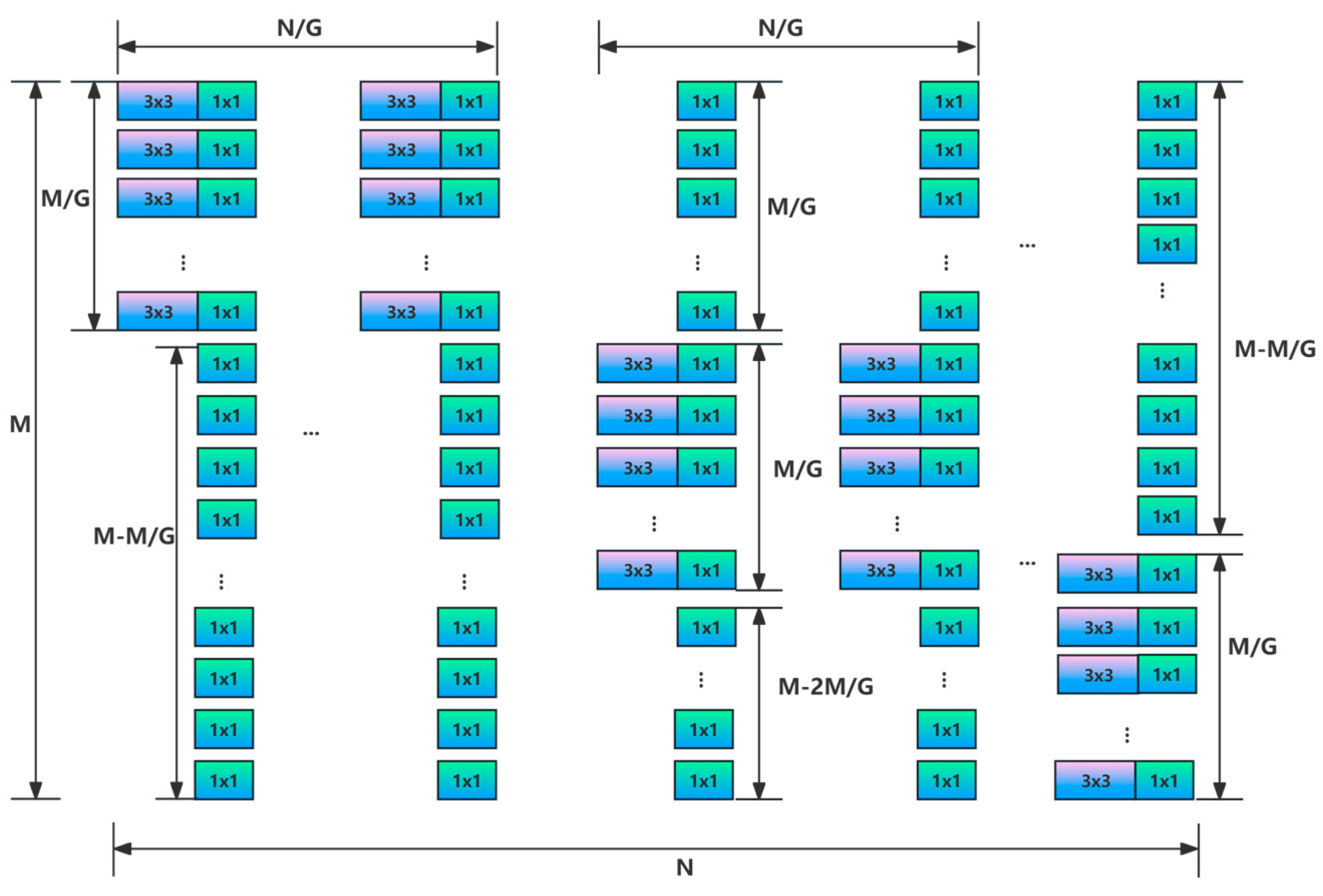

2.1. Subsection

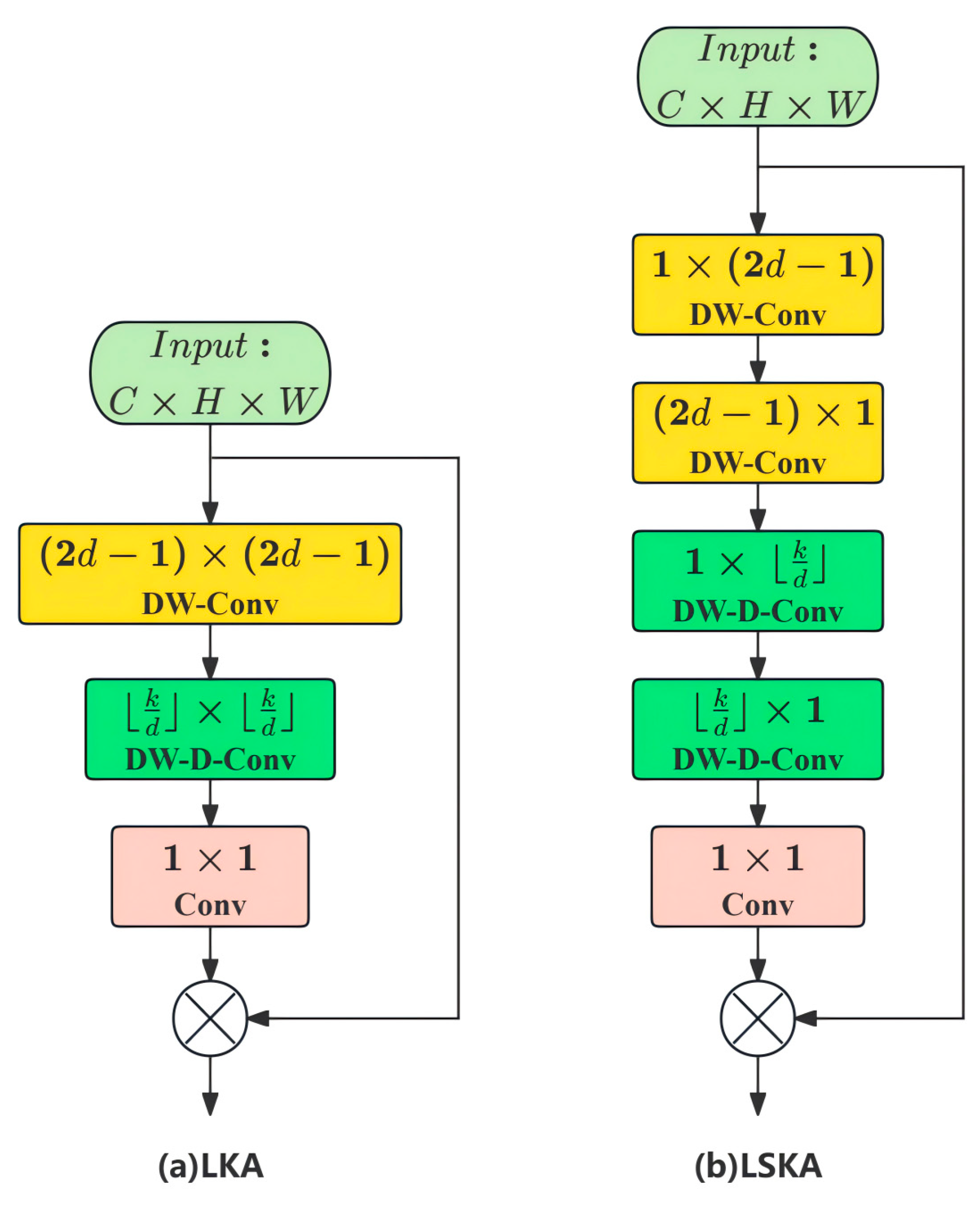

2.2. Adding Large Separable Kernel Attention Mechanism (LSKA)

2.3. Feature Extraction Using Focal Modulation Networks

2.3.1. Focus Contextualization

2.3.2. Contextual Aggregation

2.3.3. Element-Wise Affine Transformation

2.4. Modification of the Feature Fusion Module Structure Using BiFPN

- Bidirectional feature fusion: In the bidirectional feature pyramid network (BiFPN), information in each feature network layer flows and fuses in both the top-down and bottom-up directions. The resulting simplified bidirectional network strengthens feature fusion and lets the model use information from different scales more effectively, which improves detection performance without additional computational cost.

- Weighted fusion mechanism: The weighted fusion mechanism is designed to make feature fusion more effective. Traditional feature pyramid networks typically treat all input features equally and simply add features of different resolutions, ignoring that they contribute unequally to the output. To address this issue, BiFPN assigns an additional learnable weight to each input feature, so that the network learns the relative importance of each one. The weighted fusion mechanism is expressed as shown in Equation (17):

- Structural optimization: The number of layers is determined with a compound scaling method under different resource constraints, which improves accuracy while maintaining efficiency. The structural optimizations include:

  - Simplified bidirectional network: Nodes with only one input edge are removed, which reduces the number of nodes in the network.

  - Adding additional edges: Additional edges are introduced between the input and output nodes at the same level to fuse more features without significantly increasing the computational cost.

  - Reusing bidirectional paths: Each bidirectional (top-down and bottom-up) path is treated as one feature network layer, and this layer can be repeated multiple times to enable more sophisticated feature fusion.
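The bidirectional flow and weighted fusion described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes the fast normalized fusion form from the EfficientDet paper, O = Σ_i w_i · I_i / (ε + Σ_j w_j) with w_i ≥ 0, and it assumes every feature level has already been resized to the same shape (here, flat lists of floats). The names `fast_normalized_fusion` and `bifpn_layer`, the three-level pyramid, and the weight values are hypothetical.

```python
from typing import List, Sequence

Feature = List[float]


def fast_normalized_fusion(features: Sequence[Feature],
                           weights: Sequence[float],
                           eps: float = 1e-4) -> Feature:
    """Fuse same-shaped features using non-negative, normalized weights."""
    if len(features) != len(weights):
        raise ValueError("one weight per input feature is required")
    # Clamp at zero (ReLU) so that every weight is non-negative.
    w = [max(0.0, x) for x in weights]
    total = eps + sum(w)
    length = len(features[0])
    return [sum(wi * f[k] for wi, f in zip(w, features)) / total
            for k in range(length)]


def bifpn_layer(levels: Sequence[Feature],
                w_td: Sequence[Sequence[float]],
                w_out: Sequence[Sequence[float]]) -> List[Feature]:
    """One simplified bidirectional pass; levels are ordered fine -> coarse."""
    n = len(levels)
    # Top-down path: start at the coarsest level and move toward the finest.
    td = [list(levels[-1])]
    for i in range(n - 2, -1, -1):
        td.insert(0, fast_normalized_fusion([levels[i], td[0]], w_td[i]))
    # Bottom-up path: the finest output is the top-down result itself.
    out = [td[0]]
    for i in range(1, n):
        if i == n - 1:
            # Coarsest level has no intermediate node (single-input node removed).
            inputs = [levels[i], out[-1]]
        else:
            # Extra same-level edge: the original input is fused again here.
            inputs = [levels[i], td[i], out[-1]]
        out.append(fast_normalized_fusion(inputs, w_out[i - 1]))
    return out


# Hypothetical three-level pyramid with equal initial weights.
levels = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
w_td = [[1.0, 1.0], [1.0, 1.0]]
w_out = [[1.0, 1.0, 1.0], [1.0, 1.0]]
fused = bifpn_layer(levels, w_td, w_out)
```

In a trained network the weights would be learnable parameters and the inputs would be resized feature maps rather than short lists. Calling `bifpn_layer` repeatedly on its own output corresponds to stacking the bidirectional path several times.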

2.5. Using Shape-IoU to Optimize the Loss Function

3. Experiment Result and Analysis

3.1. Construction of the Experiment Environment

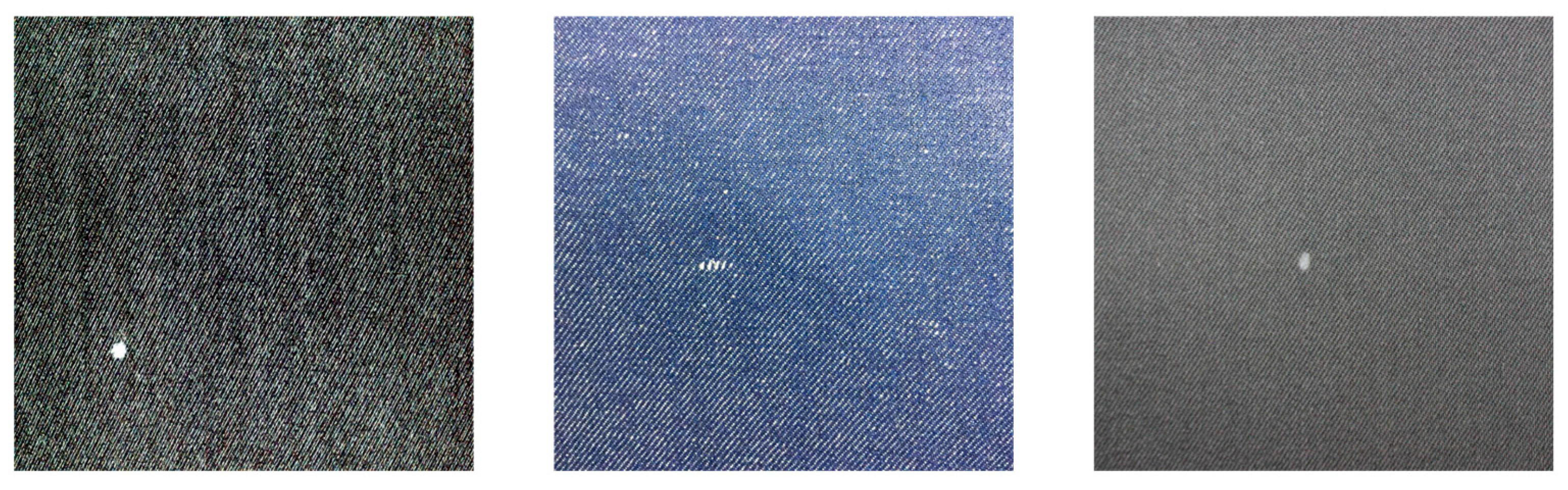

3.2. Textile Defect Dataset

3.3. Experiment Results and Analysis

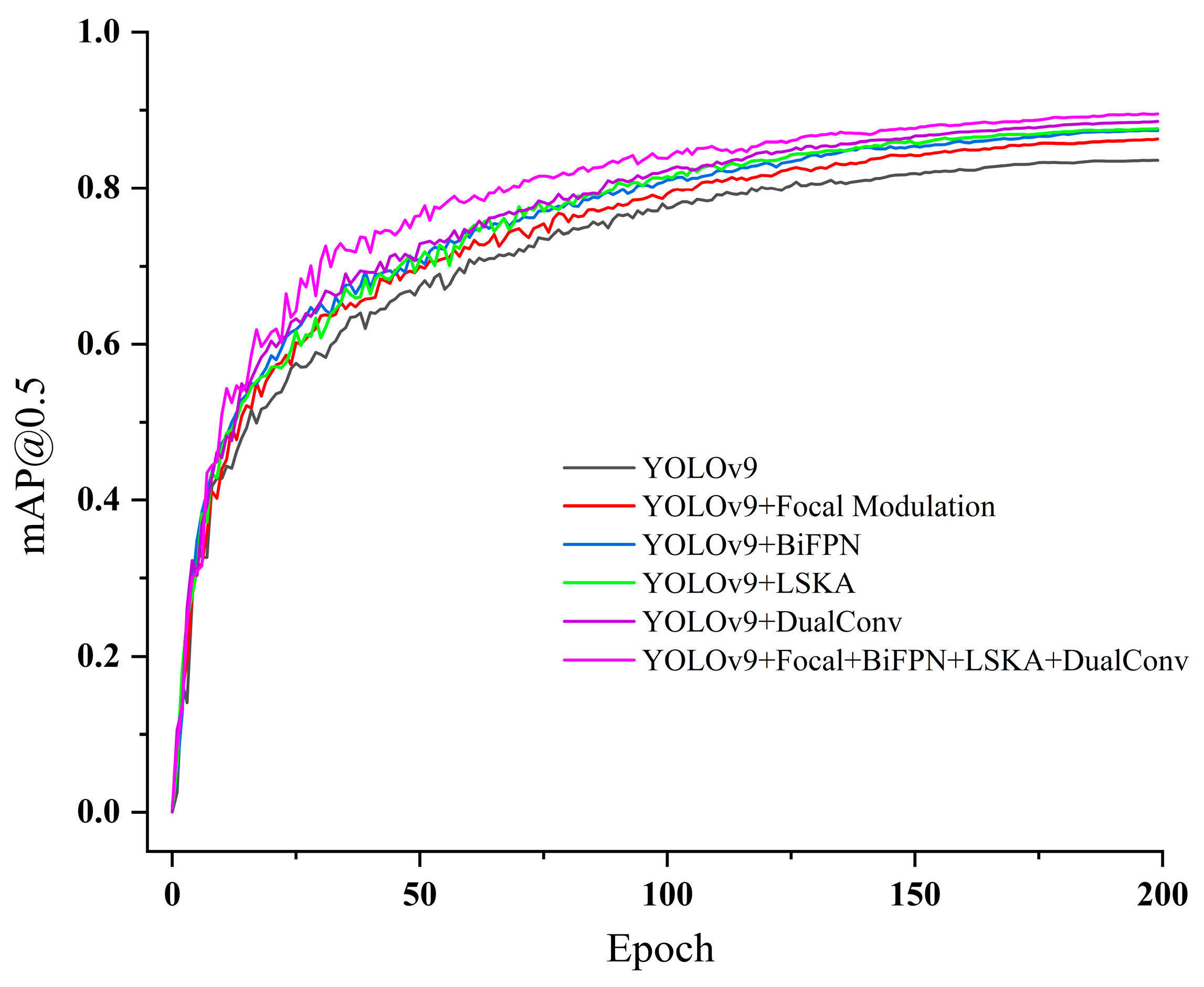

3.3.1. Single Improvement Effectiveness Comparative Experiment

3.3.2. Loss Function Comparison Test

3.3.3. Model Feature Visualization

3.3.4. Ablation Experiment

3.3.5. Multi-Model Comparison Experiment

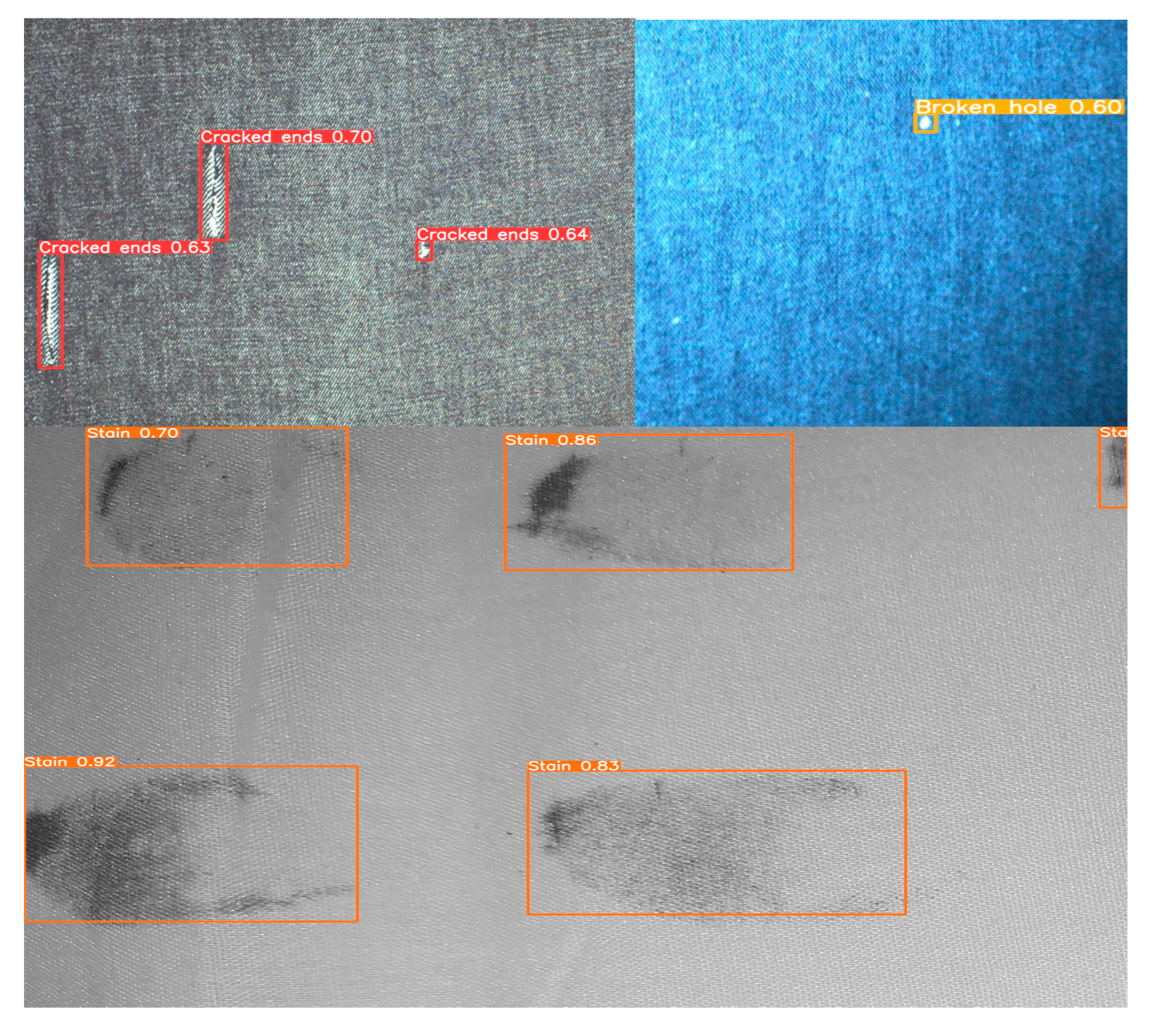

3.3.6. Model Improvement Detection Effect Experiment

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Environment Component | Configuration (Version) |
|---|---|
| Operating system | Windows 10 |
| CPU | AMD Ryzen 5700X3D 8-Core Processor, 3.00 GHz |
| GPU | NVIDIA GeForce RTX 4070 Ti SUPER (16 GB) × 1 |
| Programming language | Python 3.8 |
| Deep learning framework | PyTorch 1.13.1 |
| Acceleration module | CUDA Toolkit 11.7 |

| Parameter | Explanation | Parameter Value |
|---|---|---|
| Image size | Input image size (pixels) | 640 × 640 |
| Batch size | Number of images per batch | 16 |
| Epochs | Number of training epochs | 200 |
| lr | Learning rate | 0.001 |
| Momentum | Momentum coefficient | 0.937 |
| Weight_decay | Weight decay rate | 0.0005 |

| Model | P | R | mAP@0.5 | mAP@0.5–0.95 | FLOPs | Np/10⁶ |
|---|---|---|---|---|---|---|
| YOLOv9 | 0.857 | 0.797 | 0.839 | 0.468 | 20.7 | 4.54 |
| YOLOv9 + DualConv | 0.879 | 0.814 | 0.885 | 0.499 | 18.1 | 3.88 |
| YOLOv9 + LSKA | 0.867 | 0.808 | 0.876 | 0.496 | 19.0 | 4.34 |
| YOLOv9 + Focal Modulation | 0.869 | 0.802 | 0.863 | 0.479 | 18.9 | 4.14 |
| YOLOv9 + BiFPN | 0.866 | 0.804 | 0.873 | 0.485 | 18.5 | 4.06 |
| YOLOv9 + DualConv + LSKA + Focal Modulation + BiFPN + Shape-IoU | 0.905 | 0.828 | 0.896 | 0.537 | 17.7 | 3.73 |

| Loss function | P | R | mAP@0.5 | mAP@0.5–0.95 |
|---|---|---|---|---|
| GIoU | 0.887 | 0.819 | 0.879 | 0.488 |
| CIoU | 0.895 | 0.824 | 0.881 | 0.492 |
| Shape-IoU | 0.905 | 0.828 | 0.896 | 0.537 |
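For orientation on the loss functions compared above, the sketch below computes IoU and GIoU for two axis-aligned boxes. It is an illustration only: the `(x1, y1, x2, y2)` box format and the function names are assumptions, and the additional penalty terms used by CIoU and Shape-IoU are not reproduced here.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # assumed format: (x1, y1, x2, y2)


def box_area(b: Box) -> float:
    """Area of an axis-aligned box; zero if the box is degenerate."""
    return max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])


def iou_and_giou(a: Box, b: Box) -> Tuple[float, float]:
    """Return (IoU, GIoU) for two boxes with positive total area."""
    # Intersection rectangle.
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = box_area(a) + box_area(b) - inter
    if union <= 0.0:
        raise ValueError("boxes must have positive area")
    iou = inter / union
    # Smallest box enclosing both inputs.
    enclose = ((max(a[2], b[2]) - min(a[0], b[0]))
               * (max(a[3], b[3]) - min(a[1], b[1])))
    # GIoU subtracts the fraction of the enclosing box not covered by the union.
    giou = iou - (enclose - union) / enclose
    return iou, giou


def giou_loss(a: Box, b: Box) -> float:
    """GIoU regression loss, 1 - GIoU."""
    return 1.0 - iou_and_giou(a, b)[1]
```

Unlike plain IoU, GIoU stays informative for boxes that do not overlap: it becomes negative and decreases as the boxes move apart, so the loss still provides a gradient signal.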

| YOLOv9 | DualConv | LSKA | Focal Modulation | BiFPN | Shape-IoU | mAP@0.5 | mAP@0.5–0.95 | Np/10⁶ |
|---|---|---|---|---|---|---|---|---|
| √ | | | | | | 0.839 | 0.468 | 4.54 |
| √ | √ | | | | | 0.885 | 0.499 | 3.88 |
| √ | √ | √ | | | | 0.889 | 0.501 | 3.88 |
| √ | √ | √ | √ | | | 0.891 | 0.510 | 3.80 |
| √ | √ | √ | √ | √ | | 0.895 | 0.533 | 3.76 |
| √ | √ | √ | √ | √ | √ | 0.896 | 0.537 | 3.73 |

| Model | mAP@0.5 | mAP@0.5–0.95 | FPS (frames/s) | FLOPs | Np/10⁶ |
|---|---|---|---|---|---|
| YOLOv5 | 0.801 | 0.351 | 43.5 | 16.5 | 7.2 |
| Faster R-CNN | 0.821 | 0.398 | 24.1 | 240 | 45.2 |
| YOLOv8n | 0.832 | 0.461 | 60.2 | 8.9 | 3.2 |
| YOLOv9 | 0.839 | 0.468 | 66.4 | 20.7 | 4.54 |
| YOLOv9-SPD | 0.852 | 0.511 | 58.3 | 18.56 | 4.10 |
| EfficientDet-Lite | 0.837 | 0.498 | 62.1 | 18 | 17 |
| YOLOv10 | 0.864 | 0.492 | 64.7 | 16.8 | 4.92 |
| YOLOv11 | 0.871 | 0.503 | 63.8 | 17.2 | 5.41 |
| This research model | 0.896 | 0.537 | 55.8 | 17.7 | 3.73 |
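The trade-offs in the comparison above can be made explicit with a little arithmetic. The sketch below uses only the YOLOv9 and proposed-model rows from the table; the helper name `relative_change` and the dictionary keys are hypothetical.

```python
def relative_change(baseline: float, new: float) -> float:
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100.0


# Values copied from the YOLOv9 row and the proposed-model row of the table.
baseline = {"map50": 0.839, "map50_95": 0.468, "fps": 66.4,
            "flops": 20.7, "params_m": 4.54}
proposed = {"map50": 0.896, "map50_95": 0.537, "fps": 55.8,
            "flops": 17.7, "params_m": 3.73}

# Relative change for every metric, in percent.
changes = {k: relative_change(baseline[k], proposed[k]) for k in baseline}

# Absolute gain in mAP@0.5, in percentage points.
map50_gain_points = (proposed["map50"] - baseline["map50"]) * 100.0

for name, pct in changes.items():
    print(f"{name}: {pct:+.1f}%")
```

On these numbers, the proposed model gains about 5.7 points of mAP@0.5 over YOLOv9 with roughly 18% fewer parameters and 14% fewer FLOPs, at the cost of about 16% lower FPS.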

| Model | Hole (mAP@0.5) | Cracked Ends (mAP@0.5) | Stains (mAP@0.5) |
|---|---|---|---|
| YOLOv9 | 0.825 | 0.833 | 0.842 |
| This research model | 0.896 | 0.894 | 0.899 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Xuan, C.; Shi, W.; Sun, L.; Wu, J.; Zhang, Y.; Tu, J. Improved YOLOv9 with Dual Convolution and LSKA Attention for Robust Small Defect Detection in Textiles. Processes 2026, 14, 149. https://doi.org/10.3390/pr14010149
