Abstract

Maritime navigation safety relies on high-precision perception systems. However, hazy weather often compromises system performance, reducing image quality and increasing navigational risks. Although image dehazing techniques provide an effective solution, the lack of dedicated overwater dehazing datasets limits the generalization of dehazing algorithms. To overcome this problem, we present a large-scale overwater paired image dehazing dataset: Overwater-Haze. The dataset contains 27,100 synthetic overwater hazy images generated with the atmospheric scattering model (ASM), categorized into Mist, Moderate, and Dense subsets according to haze concentration, and 500 real overwater hazy images, which form the Real-Test portion of the test set. To meet the requirements for background interference mitigation, image diversity, and high quality, we performed extensive data augmentation and developed a comprehensive dataset creation pipeline. Our evaluation of five dehazing algorithms shows that, on real overwater scenes, models trained on Overwater-Haze achieve 9.96% and 10.47% lower Natural Image Quality Evaluator (NIQE) and Blind/Referenceless Image Spatial Quality Evaluator (BRISQUE) scores, respectively, than the pre-trained models (lower is better for both metrics), demonstrating the value of Overwater-Haze in assessing algorithm performance in overwater environments.

1. Introduction

Driven by the global trend toward intelligent shipping, the rapid development of intelligent ships has raised critical concerns regarding navigational safety, making it an important topic of research [1]. To achieve safe and efficient maritime operations, intelligent ships depend heavily on accurate perception and robust decision-making systems, where visual sensors serve as core components for environmental awareness [2]. However, the overwater environment is inherently complex and dynamic. In particular, hazy weather conditions severely impact visual perception in overwater environments. They significantly reduce visibility and cause onboard cameras to capture degraded images with low contrast, limited clarity, and substantial information loss. These visual impairments directly compromise the performance of high-level computer vision tasks, such as image segmentation [3,4,5] and object detection [6,7,8]. As a result, maritime transport efficiency is reduced, and the risk of navigation-related accidents is considerably increased. To address the degradation of visual perception systems under hazy conditions, image dehazing technologies have emerged as a key approach for enhancing visual performance, improving navigation efficiency, and ensuring navigational safety.

In recent years, single image dehazing has attracted increasing attention from the research community, with the goal of restoring clear visual information from hazy images [9,10,11,12,13,14]. Among existing approaches, data-driven deep learning methods have become the mainstream solution for this task. These methods typically rely on paired datasets composed of hazy images and their corresponding clear ground truths. Representative examples include RESIDE [15], a large-scale dataset that contains both indoor and outdoor hazy scenes; HazeSpace2M [16], designed with haze-level stratification and scene classification; and I-Haze [17] and O-Haze [18], which were captured in real scenes using haze machines to simulate real-world hazy conditions.

However, these datasets are primarily constructed for terrestrial scenes and, therefore, fail to adequately represent the visual characteristics of overwater environments. Unlike land-based settings, overwater scenes are affected by complex wave-induced reflections, spatially non-uniform haze distributions, and broader variations in illumination. Such factors introduce significant domain shifts, leading to suboptimal performance when dehazing models trained on terrestrial datasets are applied to overwater scenarios. To the best of our knowledge, there is currently no publicly available, standardized paired dataset specifically designed for hazy overwater scenes. In addition, publicly accessible real-world hazy overwater images for testing purposes are lacking, which makes it challenging to perform fair and consistent benchmarking of dehazing algorithms. This absence of standardized evaluation data has become a major obstacle to the development, comparison, and validation of dehazing algorithms in overwater environments.

To fill this gap and support research on overwater image dehazing, we present the Overwater-Haze dataset. Inspired by the RESIDE benchmark and recent research [19,20,21,22] in overwater image dehazing, we adopt a synthetic data generation pipeline based on the ASM [23] to create paired hazy and clear image samples. Capturing real-world hazy images in non-coastal areas involves high costs and logistical challenges, including heavy labor, resource demands, and the unpredictability of haze occurrence. To alleviate these difficulties, we leverage an existing, high-quality ship detection dataset as the base for haze synthesis. Although object detection and image dehazing are fundamentally different tasks, both are built upon overwater visual scenes that share similar semantic content and visual characteristics. This insight enables an efficient reuse of annotated resources for dehazing purposes. We believe this strategy holds great potential, and our experiments confirm its effectiveness in practice. In addition, we collected a large number of real-world hazy overwater images from online sources, followed by a careful data cleaning and augmentation process. These real images are incorporated into the test set to evaluate the generalization capability of dehazing algorithms under authentic overwater haze conditions.

The Overwater-Haze dataset consists of 27,100 synthetically generated overwater hazy images and an additional 500 real-world hazy overwater images. We applied a haze-level stratification strategy to the synthetic portion of the dataset, resulting in three distinct subsets: Mist, Moderate, and Dense. This design enables comprehensive evaluation of dehazing models under varying haze concentrations. The real-world images are organized into a separate subset named Real-Test, which serves as a complementary test set. We conduct extensive benchmarking of existing single image dehazing algorithms from both subjective and objective perspectives. We also performed inference on the Real-Test subset using the official pre-trained models of the evaluated algorithms and compared these results with those obtained from the models trained on the synthetic haze subsets. Through this comparative analysis, we systematically examined the effectiveness and limitations of existing dehazing algorithms in overwater scenarios, while also highlighting the positive impact of Overwater-Haze in advancing dehazing performance for such specific environments. In summary, our main contributions are as follows:

- We construct the first publicly available large-scale paired image dehazing dataset specifically designed for overwater scenes: Overwater-Haze. We provide a dedicated resource for dehazing research in maritime and navigation-related applications.

- Our dataset is carefully structured into three subsets corresponding to different haze intensities, effectively addressing the limitations of insufficient data quantity and poor data quality, and providing a reliable foundation for the development and evaluation of dehazing algorithms.

- Based on the Overwater-Haze dataset, we perform extensive comparisons and analyses of representative dehazing algorithms. By employing multiple evaluation metrics, we systematically assess the performance of these methods in overwater environments.

- We validate the effectiveness of the Overwater-Haze dataset in enhancing algorithm adaptation to overwater scenes, highlighting the dataset’s unique value in advancing dehazing research for maritime environments.

2. Related Work

2.1. Dehazing Datasets

In image dehazing, the pairing relationship between images plays a critical role in determining the learning paradigm adopted by deep learning algorithms. Specifically, the correspondence between clear and hazy images directly influences whether supervised, semi-supervised, or unsupervised approaches can be applied during training. However, acquiring paired hazy and haze-free images from real-world scenes remains highly challenging, as it is nearly impossible to capture two images of exactly the same scene, one hazy and one haze-free, under otherwise identical conditions.

To address this, the vast majority of previous works have constructed paired datasets either by synthesizing hazy images based on the ASM or by creating hazy images manually. Representative datasets are summarized in Table 1.

Table 1.

Comparison of image dehazing datasets. The “Paired” column indicates the pairing relationship between images, the “Availability” column denotes whether the dataset is publicly accessible, and the “Scene” column specifies the types of scenes included in the dataset, where “C” represents City, “I” represents Indoor, and “O” represents Overwater.

For example, the Fattal dataset [24], one of the earliest and most widely used dehazing datasets, contains a mixture of synthetic and real hazy images. It provides 12 synthetic hazy images and 31 real-world hazy images captured under natural conditions. The FRIDA dataset [25] was generated using the SiVIC™ simulation software, which emulates the viewpoint of a virtual onboard camera in driving scenarios. It initially contained 90 synthetic hazy images and was later extended to 330 images in the follow-up version FRIDA2 [26]. These datasets were among the most commonly used benchmarks in early dehazing research. In more recent studies [33,34], researchers have continued to synthesize hazy datasets using game engines or image processing software, following the same principle of simulated atmospheric effects. Among these, the RESIDE dataset [15] has become the most widely adopted and standardized benchmark in the field of single image dehazing. It is a large-scale synthetic dataset consisting of five subsets: the Indoor Training Set (ITS), the Synthetic Objective Testing Set (SOTS), the Hybrid Subjective Testing Set (HSTS), the Outdoor Training Set (OTS), and the Real-world Task-driven Testing Set (RTTS). The ITS subset is generated from 1399 haze-free indoor images sourced from the NYU2 and Middlebury stereo datasets, with 10 synthetic hazy versions created for each clear image. The SOTS subset contains 500 synthetic hazy images, with white scenes and dense haze also generated from NYU2 data and intended for objective testing. HSTS includes 10 synthetic outdoor hazy images and 10 real-world hazy images for subjective evaluation. To complement training data for outdoor scenarios, the OTS subset includes 72,135 synthetic hazy images. Additionally, RESIDE provides the RTTS subset, consisting of 4322 real-world hazy images, which are annotated and intended for task-driven evaluation of dehazing performance. 
Notably, each subset in the RESIDE dataset has been extensively used and validated across a wide range of image dehazing studies.

Recent studies have begun to focus on constructing subsets with varying haze intensities and expanding the scale of dehazing datasets. LMHaze [31] includes paired hazy and clear images captured in diverse indoor and outdoor environments, covering multiple scene types and haze levels. The dataset contains over 5000 high-resolution image pairs, offering a more comprehensive benchmark for evaluating dehazing algorithms across different conditions. HazeSpace2M [16] represents one of the largest single image dehazing datasets in recent years. It comprises over 2 million images and is specifically designed to enhance dehazing performance through haze-type classification. The dataset captures a wide range of hazy conditions, characterized by fog, clouds, and ambient haze, and includes scenes annotated across 10 distinct haze intensity levels.

In addition, several researchers have explored the use of artificially generated haze to simulate various scenes and haze densities. The I-Haze dataset [17] contains 35 pairs of indoor hazy and haze-free images. The hazy images were produced using a professional haze machine, ensuring realistic haze effects under strictly controlled conditions with consistent lighting for each image pair. Similarly, the O-Haze dataset [18] consists of 45 outdoor scenes, each with a corresponding pair of real hazy and haze-free images. Like I-Haze, the haze in O-Haze was also generated using a professional haze machine to maintain visual realism. Dense-Haze [28] includes 33 pairs of real-world hazy and clear images, specifically designed to evaluate dehazing algorithms under dense haze conditions. The NH-Haze dataset [29] introduces scenes with non-uniform haze distribution and consists of 55 pairs of real outdoor hazy and haze-free images, again created using a haze machine to simulate more complex atmospheric conditions. Although these high-quality datasets have significantly contributed to the development of dehazing algorithms, they are typically limited in scale or constrained to indoor and terrestrial environments. As a result, their applicability to overwater scenarios is limited due to substantial domain discrepancies, making them insufficient for addressing the unique challenges of dehazing in overwater environments.

To mitigate the lack of overwater dehazing datasets, some researchers have attempted to construct dedicated hazy image datasets specifically for overwater scenes. For example, ref. [27] introduced the HazyWater Dataset, an unpaired real-world dataset consisting of 4531 images collected via Google search, including 2090 hazy images and 2441 clear images. This dataset was used to support the training and evaluation of OWI-DehazeGAN [27] for dehazing in overwater settings. Similarly, REMIDE [30] was built by crawling 2047 unpaired images from Google as the training set, along with 51 real-world hazy images sourced from the RESIDE dataset as the testing set, to support the development of the MID-GAN architecture [30]. In another effort, ref. [32] proposed the MORHL dataset to improve maritime object detection under hazy conditions. This dataset includes 13,280 annotated images spanning six object categories, with haze conditions classified into three levels: light, moderate, and heavy. In [35], the authors used a combination of existing synthetic paired datasets, such as RESIDE-OTS [15], SMD [36], and Seaships [37], to train their proposed all-in-one visual perception enhancement network AiOENet for maritime low-visibility conditions.

While these datasets have contributed to advancing dehazing research for overwater imagery, their accessibility remains limited. Furthermore, unpaired datasets lack a one-to-one correspondence between hazy and clear images, which makes it challenging for models to directly learn the mapping between the two domains. This also complicates the objective evaluation of dehazing performance, thereby hindering large-scale and consistent benchmarking of overwater image dehazing algorithms.

2.2. Dehazing Algorithm

In recent years, driven by the rapid advancement of deep learning methods and the availability of large-scale image datasets, significant progress has been made in image dehazing technology. Existing single image dehazing algorithms can generally be categorized into traditional methods and data-driven methods [38]. The former includes strategies based on image enhancement and image restoration, while the latter primarily relies on deep neural networks to learn the mapping relationship between hazy and clear images.

Among traditional methods, the Dark Channel Prior (DCP) [39] is one of the most representative approaches. It estimates atmospheric light and transmission maps based on statistical observations. However, this method performs poorly when dealing with regions containing large white backgrounds or where atmospheric light is close to the background brightness of the image. Similarly, other manually defined prior-based methods, such as Color Attenuation Prior (CAP) [40] and haze-lines [41], may fail when the scene does not conform to these priors. Additionally, image enhancement techniques, such as multi-scale Retinex [42], histogram equalization [43], and Gamma correction [44], although they highlight certain image details, generally lack a physical model for haze and are unable to effectively remove dense haze.
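As a rough illustration of how a prior-based method such as DCP operates, the sketch below computes a dark channel, a simple atmospheric light estimate, and a coarse transmission map with NumPy. The function names, the patch size, the value `omega = 0.95`, and the choice to average the brightest 0.1% of dark-channel pixels are assumptions made for this example (common defaults or simplifications), and the refinement step used in practice is omitted.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def dark_channel(image: np.ndarray, patch: int = 15) -> np.ndarray:
    """Minimum over the color channels, then a patch-wise minimum filter.

    image: H x W x 3 float array in [0, 1]; patch is assumed to be odd.
    """
    min_rgb = image.min(axis=2)
    pad = patch // 2
    padded = np.pad(min_rgb, pad, mode="edge")
    windows = sliding_window_view(padded, (patch, patch))
    return windows.min(axis=(2, 3))


def estimate_atmospheric_light(image, dark, top_fraction=0.001):
    """Average the input pixels with the largest dark-channel values.

    Simplification: averaging is used here instead of picking a single pixel.
    """
    count = max(1, int(dark.size * top_fraction))
    brightest = np.argsort(dark.ravel())[-count:]
    return image.reshape(-1, 3)[brightest].mean(axis=0)


def estimate_transmission(image, atmospheric_light, omega=0.95, patch=15):
    """Coarse transmission: t = 1 - omega * dark_channel(I / A)."""
    normalized = image / np.maximum(atmospheric_light, 1e-6)
    return 1.0 - omega * dark_channel(normalized, patch)


if __name__ == "__main__":
    # A uniformly white region has a dark channel of 1 everywhere.
    white = np.ones((20, 20, 3))
    dark = dark_channel(white, patch=5)
    light = estimate_atmospheric_light(white, dark)
    transmission = estimate_transmission(white, light, patch=5)
    print(float(transmission.min()), float(transmission.max()))
```

In this toy case the white region receives a transmission of about 0.05, so it is treated as if it were covered by dense haze, which is consistent with the weakness on large white backgrounds noted above.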

With the rise of Convolutional Neural Networks (CNN) and Generative Adversarial Networks (GAN), many end-to-end learning methods have been proposed. For instance, DehazeNet [45] and MSCNN (Multi-Scale Convolutional Neural Networks) [46] are pioneers in applying convolutional neural networks to image dehazing. These methods learn the mapping between hazy images and their corresponding transmission maps to achieve dehazing. AOD-Net (All-in-One Dehazing Network) [9] further unifies the estimation of the transmission map and atmospheric light into a single model, reducing the cumulative error in atmospheric scattering model estimation and thus improving the simplicity and inference speed of the model. Many subsequent works [47,48,49,50] have continued this lightweight approach, attempting to strike a balance between dehazing efficiency and adaptability. Furthermore, unsupervised learning methods have gained increasing attention. CycleDehaze [10] builds on the CycleGAN structure and augments the cycle-consistency loss with a perceptual loss, demonstrating dehazing ability without paired data. D4 [12] estimates the haze density and scene depth underlying the transmission map and uses re-rendered hazy images as training input, achieving promising dehazing results. To improve detail restoration during dehazing, attention mechanisms have been applied within models to enhance feature representation capabilities. EAA-Net (Effective Edge Assisted Attention Network) [13] constructs an edge-assisted attention network with dehazing, edge, and feature fusion residual branches, which preserves image details while maintaining color fidelity. FFA-Net (Feature Fusion Attention Network) [14] incorporates both channel and spatial attention mechanisms, providing additional flexibility in handling different features and pixels.

In more recent work, Transformer models have also been introduced to the image dehazing task, demonstrating strong competitiveness. For example, Dehamer [51] modulates CNN features by learning modulation matrices based on Transformer features, rather than using simple feature addition or concatenation. By incorporating a prior related to haze density into the Transformer, the model exhibits excellent performance under dense haze conditions. DehazeFormer [52] identifies LayerNorm and GELU as the primary reasons behind the poor performance of Vision Transformers in dehazing tasks. By improving the model based on the Swin-Transformer architecture, it achieves outstanding performance with minimal computational cost on the RESIDE-SOTS dataset.

In the preceding sections, we have reviewed existing dehazing datasets and mainstream dehazing algorithms. These algorithms, developed and optimized based on land-centric data, have indeed achieved impressive results in handling haze in terrestrial scenes. However, although they have demonstrated strong performance on standard datasets, like RESIDE, NH-Haze, O-Haze, and I-Haze, the lack of dedicated overwater dehazing datasets means their applicability and generalization ability in overwater scenarios have not been systematically studied or evaluated. To tackle this problem, we introduce the Overwater-Haze dataset, the first large-scale image dehazing dataset specifically designed for overwater scenes. Leveraging this dataset, we conduct a systematic evaluation of mainstream single-image dehazing algorithms, covering methods ranging from traditional prior-based models to the latest Transformer-based approaches. Our work not only reveals the performance bottlenecks of these algorithms in overwater environments but also provides essential data support and performance baselines for the future design of dehazing algorithms that are better adapted to overwater environments.

3. Dataset

Overwater-Haze is a large-scale overwater image dehazing dataset consisting of 27,600 images. It comprises three synthetic subsets: Mist, Moderate, and Dense, corresponding to different haze intensities, as well as a real-world test set, Real-Test, composed of 500 authentic overwater hazy images. To the best of our knowledge, Overwater-Haze is the first publicly available paired image dehazing dataset specifically designed for overwater environments. It fills a critical gap in the current literature, where the lack of dedicated benchmark datasets for overwater scenes has posed a major limitation in advancing dehazing research. The Overwater-Haze dataset is publicly available at https://github.com/xie-xyh/Overwater-Haze-Dataset (accessed on 17 July 2025).

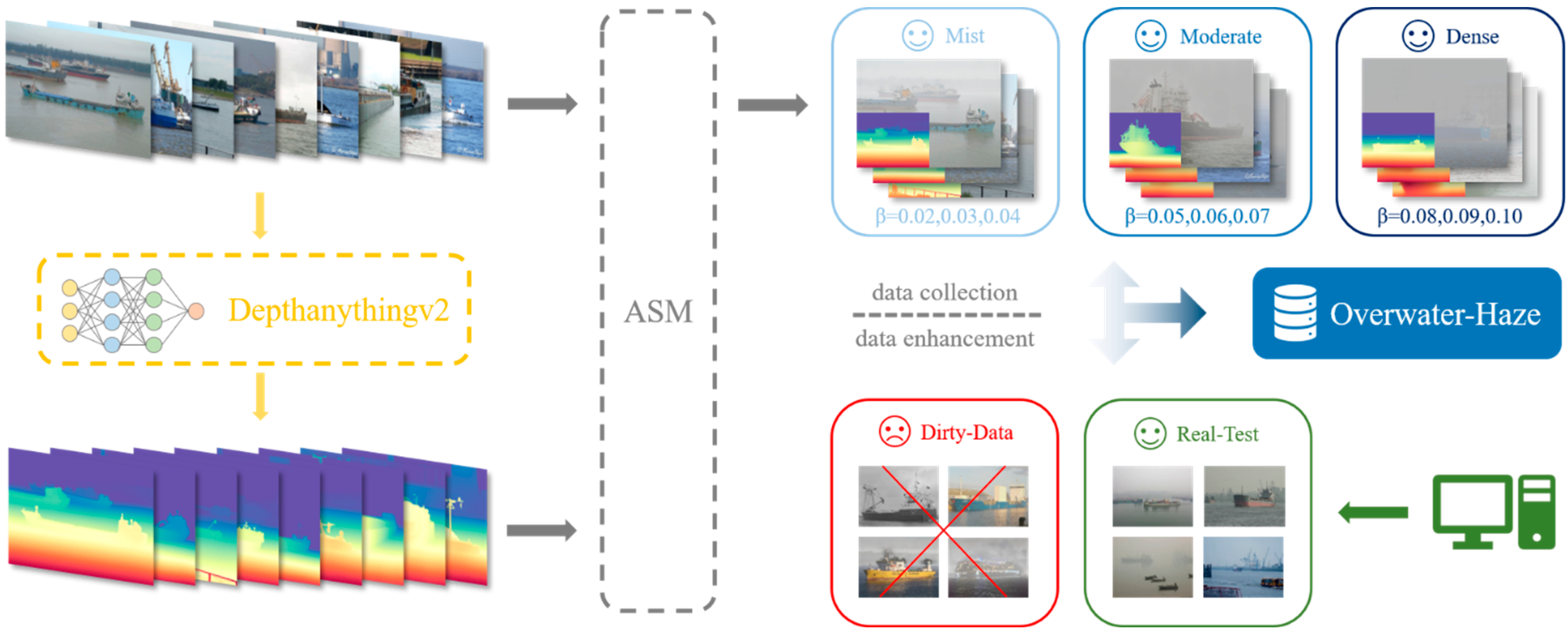

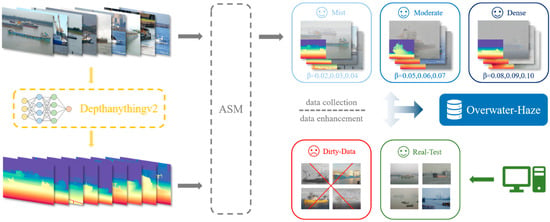

The construction of Overwater-Haze follows a systematic pipeline, as illustrated in Figure 1. High-quality clear overwater images are first sourced from existing ship detection datasets; synthetic hazy images are generated by using depth maps from DepthAnythingv2 [53], combined with the ASM [23] under controlled haze intensities to form the three synthetic subsets. Finally, data diversity is enhanced through augmentation, low-quality samples are filtered out, and real-world hazy images are curated to constitute the Real-Test set for comprehensive evaluation. In the following sections, we provide a detailed explanation of the dataset construction process and data preprocessing methodology.

Figure 1.

Construction pipeline of the Overwater-Haze dataset.

3.1. Data Collection

To construct the Overwater-Haze dataset, we first address the unique challenges of acquiring paired overwater hazy and clear images. Land-based scenes allow for systematic data collection through controlled haze generation, such as via fog machines [17,18,28]. In contrast, overwater environments involve inherent complexities: dynamic factors, including varying illumination, wave-induced reflections, and surface fluctuations, disrupt the temporal and spatial alignment of image pairs. Additionally, haze formation over water is driven by instantaneous and hard-to-predict environmental factors, such as temperature, humidity, wind speed, and water-air thermal differences, and is therefore highly variable in space and time [54]. This makes manual collection of diverse, standardized hazy images time-consuming, cost-prohibitive, and insufficient for large-scale supervised training.

Inspired by the RESIDE [15] dataset, we adopt an ASM-based synthesis pipeline to obtain a sufficient number of synthetic hazy images as training samples. However, the accuracy and diversity of synthetic images still depend on the quality of the ground truth data. To ensure that the synthetic images align with real-world environments and to reduce the substantial human and material resources required for manual data collection, we systematically organized and optimized existing ship detection datasets, as shown in Table 2. Some of these datasets already cover a rich variety of overwater scenes, which are highly relevant to the background information needed for the dehazing task. Through in-depth comparison and analysis of these datasets, we identified several issues related to background confusion. Datasets such as Seaships [37], MID [55], and WaterScenes [56] primarily rely on cameras placed at a limited number of locations. As a result, the synthetic hazy images derived from them differ only by minor pixel-level discrepancies, which can lead to overfitting in models. Given that the core task of dehazing algorithms is to restore high-frequency details from hazy images, we prioritized overwater scenes with rich texture information for dataset construction. These scenes provide more diverse feature information, which helps train more robust dehazing models. Among the candidates, MVDD13 [57] stands out: it covers overwater scenes from multiple perspectives, weather conditions, time periods, and ship types, and contains 35,474 high-quality images, thus providing a solid foundation for generating high-quality synthetic hazy overwater images. This data collection approach mitigates the limitations of real-world data acquisition.

Table 2.

Ship object detection datasets.

In addition to synthetic images, to evaluate the performance of different dehazing algorithms under real haze conditions, we curated a collection of hazy images labeled with “Haze” from existing publicly available ship detection datasets [36,56]. To further enrich the variety of our test set, we manually collected a large number of real overwater hazy images from the internet, featuring diverse resolutions and complex degradation issues, as illustrated in Figure 1. These images were used to construct our real-world overwater hazy image test set: Real-Test. During the collection of these real images, we adhered to the following strict selection criteria:

- Avoiding images generated by generative AI: We ensured that all collected images were sourced from real-world scenes rather than artificially generated by AI, thereby avoiding unnatural textures or unrealistic visual effects that may arise from synthetic images.

- Excluding heavily edited landscape photos: We specifically excluded images that had been excessively retouched or optimized, particularly those that were artificially enhanced or beautified, to ensure that the test images accurately reflected real-world haze effects.

- Verifying image resolution to ensure high-quality images: Each image was carefully checked for resolution to ensure that the collected images possessed sufficient detail and clarity. We prioritized images that exhibited complex degradation issues, ensuring that the test set fully represented the challenges faced in dehazing tasks.

Through these stringent selection standards, our Real-Test dataset comprises a wide range of representative real overwater hazy images, covering diverse haze scenarios, different weather conditions, and various degradation issues. This dataset provides a reliable and challenging benchmark for evaluating the performance of dehazing algorithms in real-world settings.

3.2. Data Pre-Processing

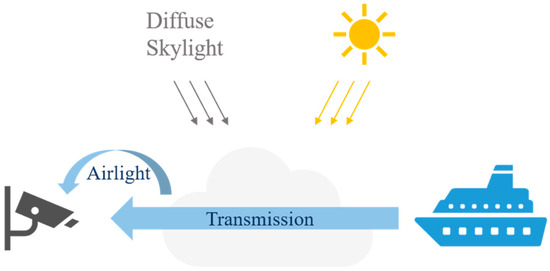

3.2.1. Atmospheric Scattering Model

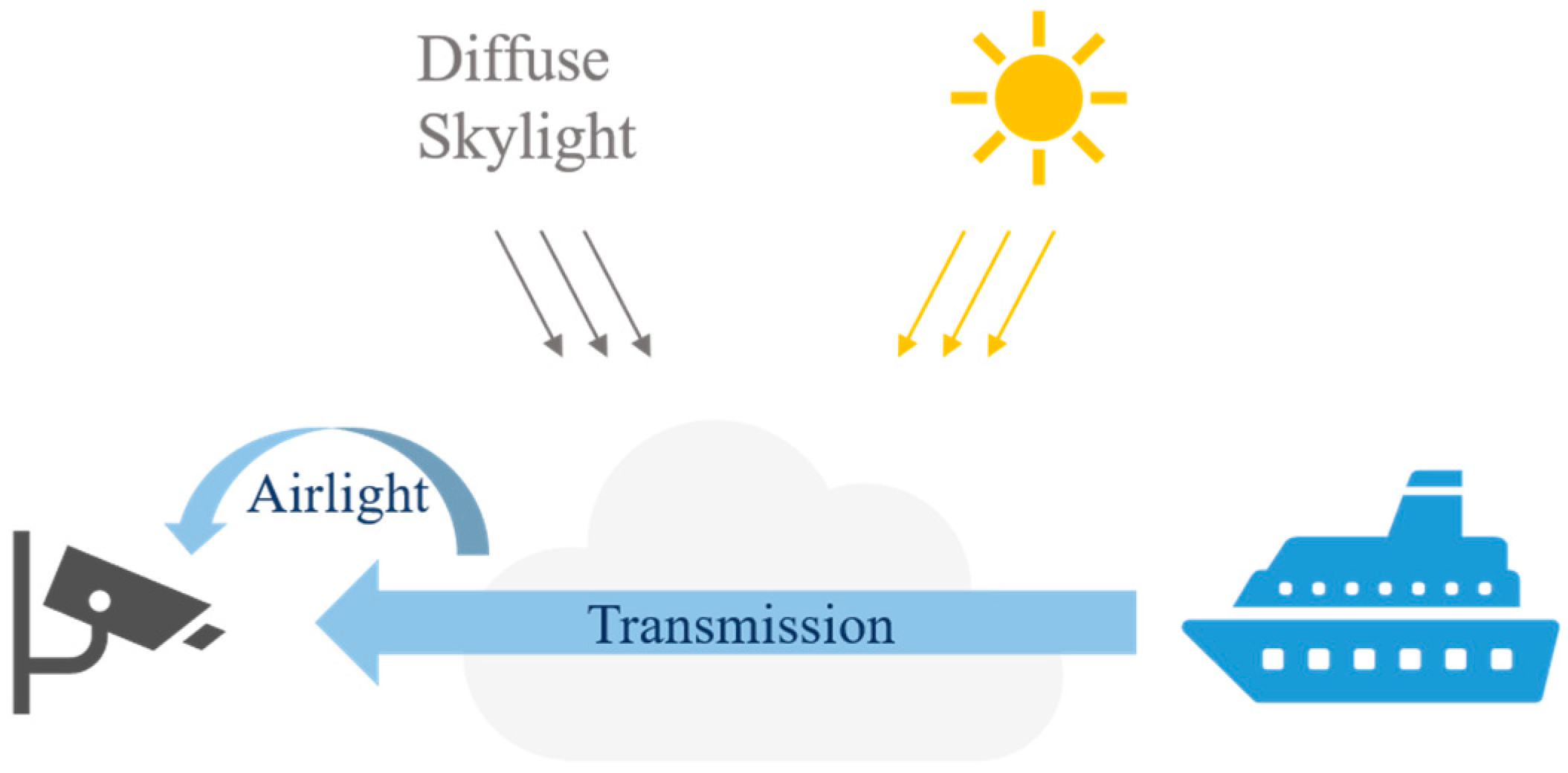

Currently, the most commonly used optical model for fog imaging is the ASM [23], established by Srinivasa G. Narasimhan and colleagues. This model explains the imaging process of foggy images and the elements that contribute to their formation, and it is widely used in computer vision to describe how hazy images form, as illustrated in Figure 2.

Figure 2.

Atmospheric scattering model (ASM).

The model is based on the phenomenon of light scattering in the atmosphere and describes how the color and brightness of distant objects are influenced by haze particles in the atmospheric scattering layer under hazy conditions. Its mathematical formulation is as follows:

$$I(x) = J(x)\,t(x) + A\,\bigl(1 - t(x)\bigr) \tag{1}$$

where $I(x)$ represents the observed hazy image, $J(x)$ represents the haze-free image to be recovered, $A$ denotes the atmospheric light intensity, and $t(x)$ represents the transmission matrix. When the atmospheric density is uniform, $t(x)$ can be expressed as:

$$t(x) = e^{-\beta d(x)} \tag{2}$$

where $\beta$ denotes the atmospheric scattering coefficient. This indicates that the scene radiance decays exponentially with scene depth $d(x)$. In simple terms, a larger $\beta$ corresponds to a higher haze concentration.

To recover the clear image from the observed hazy image, we need to solve Equation (3):

$$J(x) = \frac{I(x) - A\,\bigl(1 - t(x)\bigr)}{t(x)} \tag{3}$$
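As a quick sanity check of the model, consider a single pixel with illustrative values that are chosen for this example only and are not taken from the dataset: haze-free intensity $J = 0.2$, atmospheric light $A = 0.9$, scattering coefficient $\beta = 1$, and depth $d = 1$. Writing $t$ for the transmission and $I$ for the observed hazy intensity, the forward model and its inversion give:

$$
\begin{aligned}
t &= e^{-\beta d} = e^{-1} \approx 0.3679,\\
I &= J\,t + A\,(1 - t) \approx 0.2 \times 0.3679 + 0.9 \times 0.6321 \approx 0.6425,\\
J &= \frac{I - A\,(1 - t)}{t} \approx \frac{0.6425 - 0.5689}{0.3679} \approx 0.200 .
\end{aligned}
$$

The hazy value is pulled from 0.2 toward the atmospheric light, and the inversion returns the original intensity. Because the inversion divides by $t$, it becomes numerically sensitive as $t$ approaches zero, that is, for distant scene points or dense haze.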

3.2.2. Data Synthesis

As discussed in detail in Section 3.2.1, the imaging principle of haze is commonly modeled using ASM. In fact, the majority of existing image dehazing datasets [15,16,31,33] are constructed by synthesizing hazy images through parameterized implementations of the ASM. Typically, the haze synthesis pipeline consists of the following steps:

- Estimate the transmission map from a haze-free image.

- Estimate the atmospheric light using empirical methods.

- Compute the hazy image based on the atmospheric scattering model.

Under this framework, the problem of haze synthesis is reduced to determining the parameters $d(x)$, $A$, and $\beta$.

With the recent advancements in monocular depth estimation, it is now possible to infer high-quality depth information from a single image, which significantly improves the realism of haze synthesis. Unlike traditional approaches that rely on depth sensors or multi-view data, modern monocular depth estimation methods offer accurate and generalizable depth prediction across diverse scenes [60]. Leveraging this, we adopt the state-of-the-art depth estimation model DepthAnythingv2 [53], which provides robust and scalable depth predictions for arbitrary images in any environment. This model demonstrates strong performance, particularly in applications requiring precise absolute depth values. By incorporating the predicted depth maps from DepthAnythingv2, we enhance the haze generation process, enabling the creation of more realistic and diverse synthetic hazy images.
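The three synthesis steps listed above can be sketched in a few lines of NumPy, assuming a depth map is already available (for instance, from a monocular depth estimator). The function names, the min-max depth normalization, and the toy inputs are assumptions made for illustration and are not the pipeline's actual code. Note also that some depth estimators return relative or inverse depth, so a conversion to a "larger means farther" convention may be needed before this step.

```python
import numpy as np


def normalize_depth(depth: np.ndarray) -> np.ndarray:
    """Rescale a depth map to [0, 1]; a constant map becomes all zeros."""
    d_min, d_max = float(depth.min()), float(depth.max())
    if d_max == d_min:
        return np.zeros_like(depth, dtype=np.float64)
    return (depth - d_min) / (d_max - d_min)


def synthesize_haze(clear, depth, beta, atmospheric_light):
    """Apply the atmospheric scattering model to a clear image.

    clear: H x W x 3 float array in [0, 1]
    depth: H x W float array (larger value = farther from the camera)
    beta: scattering coefficient; atmospheric_light: scalar in [0, 1]
    """
    # Step 1: transmission from depth, t(x) = exp(-beta * d(x)).
    transmission = np.exp(-beta * depth)
    # Step 2: the atmospheric light is supplied by the caller.
    t = transmission[..., np.newaxis]  # broadcast over the color channels
    # Step 3: compose the hazy image, I = J * t + A * (1 - t).
    hazy = clear * t + atmospheric_light * (1.0 - t)
    return np.clip(hazy, 0.0, 1.0), transmission


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    clear_image = rng.random((4, 6, 3))
    # Toy depth map that increases from left to right.
    toy_depth = normalize_depth(np.tile(np.arange(6, dtype=float), (4, 1)))
    hazy_image, t_map = synthesize_haze(
        clear_image, toy_depth, beta=1.5, atmospheric_light=0.9
    )
    print(hazy_image.shape, float(t_map.min()), float(t_map.max()))
```

With zero depth the output equals the clear image, and with very large depth it converges to the atmospheric light, which matches the two limiting cases of the model.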

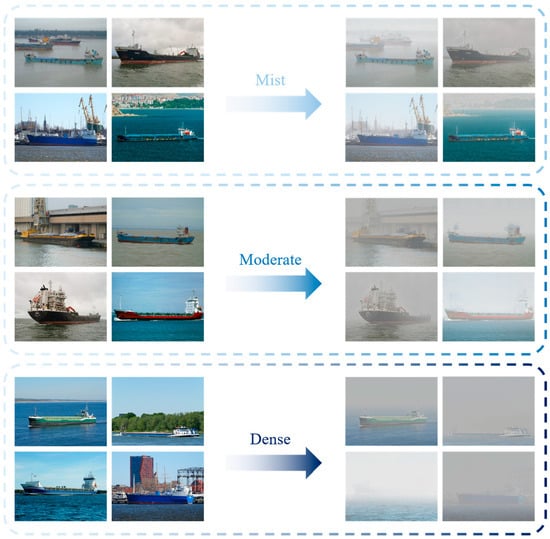

In addition, to systematically differentiate between various haze densities, we implement a haze-level stratification strategy. Specifically, we divide the atmospheric scattering coefficient into three intervals, , , and , corresponding to Mist, Moderate, and Dense subsets, respectively. Within each interval, values are uniformly sampled to control the haze intensity during synthesis. The dataset is evenly partitioned across these three subsets to ensure a balanced distribution. Furthermore, following the atmospheric light estimation method proposed in RESIDE, we define a set of atmospheric light values to modulate the overall brightness of hazy images. These values, combined with the estimated depth and transmission maps, are used in the atmospheric scattering model to generate the final synthetic hazy images.
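The stratification strategy can be illustrated with a short sampling sketch. The interval bounds and the candidate atmospheric light values below are hypothetical placeholders chosen only for this example (they are not the values used to build the dataset), and the function names are likewise illustrative.

```python
import random

# Hypothetical placeholder bounds, NOT the dataset's actual intervals.
BETA_INTERVALS = {
    "Mist": (0.5, 1.0),
    "Moderate": (1.0, 1.5),
    "Dense": (1.5, 2.0),
}
# Hypothetical candidate atmospheric light values.
ATMOSPHERIC_LIGHTS = (0.8, 0.85, 0.9, 0.95, 1.0)


def assign_levels(num_images):
    """Cycle through the levels so subset sizes differ by at most one."""
    levels = list(BETA_INTERVALS)
    return [levels[i % len(levels)] for i in range(num_images)]


def sample_parameters(level, rng):
    """Draw beta uniformly from the level's interval and pick one light value."""
    low, high = BETA_INTERVALS[level]
    return rng.uniform(low, high), rng.choice(ATMOSPHERIC_LIGHTS)


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so the run is repeatable
    for level in assign_levels(6):
        beta, light = sample_parameters(level, rng)
        print(f"{level:8s} beta={beta:.3f} A={light}")
```

Keeping the intervals in a single mapping makes the haze level of every synthetic image traceable to the range its scattering coefficient was drawn from.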

3.3. Data Enhancement

Although monocular depth estimation from RGB images is a relatively convenient way to obtain depth, its inherent limitations, coupled with the long-standing challenges of outdoor depth estimation [61], leave current depth estimation methods with noticeable shortcomings in generalization performance. To ensure the high quality of our synthesized hazy images, we regard data cleaning as an indispensable step in the dataset construction process.

Specifically, to guarantee that the synthesized hazy data meet quality standards, we established the following four criteria to filter out low-quality synthetic images:

- Images with discontinuous or uneven haze distribution caused by errors in 2D depth estimation.

- Distorted haze images affected by nighttime perspective effects due to strong light sources.

- Synthesized images that violate basic physical principles.

- Low-resolution or grayscale images that severely degrade visual quality.

We conducted large-scale manual cleaning of all data to ensure dataset quality. To enhance the accuracy and consistency of this process, we invited three researchers in the field of computer vision to jointly participate in the data verification and decision-making process. This collaborative approach helped ensure the reliability of the final dataset.

As a result, we obtained a dataset consisting of 27,100 synthetic hazy images. Specifically, the Mist subset contains 8913 images, the Moderate subset includes 9140 images, and the Dense subset comprises 9047 images. Representative samples from the dataset are shown in Figure 3.

Figure 3.

Synthesis of hazy image.

In addition, within the Real-Test set, we observed that some images were extracted as frames from videos recorded under real hazy weather conditions. As a result, a significant number of highly similar images were present, which made it difficult to ensure the diversity and representativeness of the evaluation set. To address this issue, we applied a perceptual hashing algorithm for image deduplication. By abstracting the image content into compact feature encodings, perceptual hashing enables efficient measurement of content similarity [62]. We set a threshold on the similarity of the generated hash codes to identify and remove near-duplicate images. After this filtering process, a total of 500 unique images were retained in the Real-Test set. Representative examples are shown in Figure 4.

Figure 4.

Real hazy image.
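The deduplication idea can be illustrated with a small sketch. The text does not specify which perceptual hash or threshold was used, so the example below assumes an average hash over small grayscale thumbnails and a Hamming-distance threshold; the function names, the threshold of 2 bits, and the toy frames are all hypothetical.

```python
def average_hash(gray):
    """Return a tuple of bits: 1 where a pixel is brighter than the image mean.

    gray: 2D list of grayscale values, assumed already resized to a small
    fixed thumbnail so that all hashes have the same length.
    """
    pixels = [p for row in gray for p in row]
    mean = sum(pixels) / len(pixels)
    return tuple(1 if p > mean else 0 for p in pixels)


def hamming(hash_a, hash_b):
    """Count the bit positions where two equal-length hashes differ."""
    return sum(a != b for a, b in zip(hash_a, hash_b))


def deduplicate(images, threshold):
    """Keep an image only if its hash is more than `threshold` bits away
    from the hash of every image kept so far."""
    kept_names, kept_hashes = [], []
    for name, gray in images:
        h = average_hash(gray)
        if all(hamming(h, k) > threshold for k in kept_hashes):
            kept_names.append(name)
            kept_hashes.append(h)
    return kept_names


# Toy 4x4 thumbnails: frame_b is a slightly noisy copy of frame_a,
# frame_c has a different layout.
frame_a = [[10, 10, 200, 200] for _ in range(4)]
frame_b = [[12, 11, 198, 205] for _ in range(4)]
frame_c = [[200, 200, 10, 10] for _ in range(4)]

unique = deduplicate(
    [("a", frame_a), ("b", frame_b), ("c", frame_c)], threshold=2
)
```

In this toy case, `frame_b` hashes identically to `frame_a` and is dropped, while `frame_c` is retained.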

After the above processing steps, we finalized the construction of our dataset. Detailed statistics of the dataset are presented in Table 3.

Table 3.

Overview of the Overwater-Haze dataset.

4. Results and Discussion

4.1. Implementation Details

4.1.1. Experimental Environment and Parameter Settings

We deployed the fully processed Overwater-Haze dataset on a Linux-based system equipped with an Intel Core i9-14900K CPU and an NVIDIA GeForce RTX 3090 GPU. Based on this dataset, we conducted a detailed evaluation of five representative single-image dehazing algorithms: DCP [39], AOD-Net [9], GridDehazeNet [63], FFA-Net [14], and Dehamer [51]. To ensure the fairness of the evaluation, all hyperparameters of the tested algorithms followed the settings published in their original papers. Each algorithm was independently trained on the three synthetic subsets (Mist, Moderate, and Dense). We then applied both the trained models and the official pre-trained models of each algorithm to the Real-Test subset for inference, aiming to evaluate their generalization capabilities under real-world haze conditions and verify the effectiveness of the Overwater-Haze dataset. Additionally, under the same hardware conditions, we analyzed the computational efficiency of the different algorithms.

4.1.2. Evaluation Algorithm Description

DCP is a classic traditional method based on the observation of natural scene statistics. Its core principle is that most non-sky regions in outdoor images contain low-intensity pixels in at least one color channel. For a clear image $J$, its dark channel is defined as:

$$J^{dark}(x) = \min_{y \in \Omega(x)} \left( \min_{c \in \{r, g, b\}} J^{c}(y) \right)$$

where $\Omega(x)$ denotes the local region centered at $x$, and $c$ represents the color channel.

Based on this, DCP estimates the parameters in Equation (5). The transmission map $t(x)$ is derived from the hazy image $I$ by:

$$t(x) = 1 - \omega \min_{y \in \Omega(x)} \left( \min_{c} \frac{I^{c}(y)}{A^{c}} \right)$$

where $\omega$ is a constant that retains a slight amount of haze to ensure a natural appearance, and $A^{c}$ is the atmospheric light in channel $c$. Atmospheric light is selected from the brightest 0.1% of pixels in the dark channel. The clear image $J(x)$ is then restored from the estimated $t(x)$ and $A$.
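To make the two DCP steps concrete, the following sketch computes a dark channel and the corresponding transmission estimate on a tiny RGB image stored as nested lists. It is an illustration under stated assumptions rather than the implementation evaluated here: the atmospheric light is passed in directly instead of being estimated, the default `omega=0.95` is the value suggested in the original DCP paper, and the function names and window radius are hypothetical.

```python
def dark_channel(img, radius):
    """Minimum over color channels, then over a square window around each pixel.

    img: H x W list of (r, g, b) tuples with values in [0, 1].
    """
    height, width = len(img), len(img[0])
    min_rgb = [[min(px) for px in row] for row in img]
    dark = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            window = [
                min_rgb[yy][xx]
                for yy in range(max(0, y - radius), min(height, y + radius + 1))
                for xx in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            dark[y][x] = min(window)
    return dark


def estimate_transmission(hazy, airlight, omega=0.95, radius=1):
    """t(x) = 1 - omega * dark_channel(I / A), with A given per channel."""
    normalized = [
        [tuple(c / a for c, a in zip(px, airlight)) for px in row]
        for row in hazy
    ]
    dark = dark_channel(normalized, radius)
    return [[1.0 - omega * d for d in row] for row in dark]


# Toy 3x3 image: every pixel equals the atmospheric light (pure haze)
# except the center, which has one dark channel and so looks haze-free.
hazy = [[(0.8, 0.8, 0.8)] * 3 for _ in range(3)]
hazy[1][1] = (0.8, 0.0, 0.4)
airlight = (0.8, 0.8, 0.8)

t_pixelwise = estimate_transmission(hazy, airlight, radius=0)
t_windowed = estimate_transmission(hazy, airlight, radius=1)
```

With `radius=0` only the center pixel receives a high transmission, whereas with `radius=1` the single dark pixel dominates every window. This also hints at why bright, haze-colored objects can mislead the prior.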

AOD-Net simplifies the dehazing process by unifying the estimation of $t(x)$ and $A$ into a single learnable parameter $K(x)$ through a lightweight CNN architecture. It modifies Equation (3) as follows:

$$J(x) = K(x)\,I(x) - K(x) + b$$

where $K(x)$ is expressed as:

$$K(x) = \frac{\frac{1}{t(x)}\left(I(x) - A\right) + \left(A - b\right)}{I(x) - 1}$$

Here, $b$ is a constant bias. AOD-Net does not estimate $t(x)$ and $A$ separately. Instead, it directly minimizes the reconstruction error in the pixel domain to learn $K(x)$ and then calculates $J(x)$ using the simplified formula. This design reduces the cumulative error from multi-step estimation and accelerates inference while retaining the physical basis of atmospheric scattering.
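The algebra behind this reformulation can be checked numerically. The sketch below assumes the standard atmospheric scattering model $I = J\,t + A\,(1 - t)$ and the AOD-Net form $J = K\,I - K + b$ with $K = \left(\frac{1}{t}(I - A) + (A - b)\right) / (I - 1)$ and $b = 1$. It computes $K$ from known $t$ and $A$ for one pixel only to verify the identity. In the actual network, $K(x)$ is predicted by the CNN rather than computed this way, and the function names and scalar values here are hypothetical.

```python
def asm_hazy(j, t, a):
    """Forward atmospheric scattering model for a single pixel value."""
    return j * t + a * (1.0 - t)


def k_from_t_and_a(i, t, a, b=1.0):
    """Closed-form K for a pixel, valid when the hazy value i is not 1."""
    return ((i - a) / t + (a - b)) / (i - 1.0)


def aod_restore(i, k, b=1.0):
    """AOD-Net style restoration: J = K * I - K + b."""
    return k * i - k + b


# One-pixel round trip: haze a known clear value, then restore it through K.
j_true, t, a = 0.3, 0.6, 0.9
i = asm_hazy(j_true, t, a)
k = k_from_t_and_a(i, t, a)
j_restored = aod_restore(i, k)
```

The restored value matches `j_true` up to floating-point error, which shows that folding $t(x)$ and $A$ into $K(x)$ loses no information under the model.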

GridDehazeNet adopts an end-to-end learning paradigm, directly modeling the mapping from hazy images to clear images, rather than estimating $t(x)$ and $A$ independently. Its architecture comprises three key components: a preprocessing module that extracts multi-scale low-level features from the hazy image $I(x)$; a backbone GridNet [64] with a three-row, six-column structure, which captures local details and global context through skip connections and grid attention to adapt to non-uniform haze distributions; and a postprocessing module that refines high-level features and outputs the dehazed image $\hat{J}(x)$. The training process is guided by a joint loss function consisting of a smooth L1 loss and a perceptual loss. This function minimizes the pixel-level difference between $\hat{J}(x)$ and the ground truth, maintains high-level semantic consistency, and ensures both quantitative accuracy and visual naturalness.

FFA-Net enhances feature representation by integrating channel attention and spatial attention mechanisms, enabling adaptive weighting of haze-related regions. Its core design addresses the challenge of non-uniform haze: channel attention emphasizes features sensitive to haze density, while spatial attention highlights regions with dense haze, allocating more computational resources to these areas. The network fuses multi-scale attention-weighted features through encoder-decoder blocks and directly outputs the clear image $J(x)$.

Dehamer combines the global context modeling capability of the Transformer with the local feature extraction advantages of CNNs and incorporates haze density priors to enhance performance in dense haze scenarios. It leverages a feature modulation mechanism in which the Transformer learns modulation matrices from the global features of the hazy image $I(x)$ to dynamically adjust CNN features, addressing the inconsistency between global context and local details. Meanwhile, it integrates haze density priors by embedding haze density into the positional encoding of the Transformer, guiding the model to prioritize the processing of dense haze regions. By jointly modeling global haze trends and local details, Dehamer achieves accurate dehazing under extreme conditions and adapts to complex atmospheric scattering patterns through data-driven learning.

4.2. Evaluation Metrics

In deep learning-based image dehazing, evaluation metrics serve as the foundation for measuring the performance of dehazing algorithms. In our experiments, we adopt Peak Signal-to-Noise Ratio (PSNR) [65] and Structural Similarity Index Measure (SSIM) [65] as the objective metrics to assess image quality.

PSNR is one of the most widely used evaluation metrics in the image dehazing field. It quantifies image quality based on the pixel-wise error between the dehazed image and the ground truth. The metric is formally defined as follows:

$$\mathrm{PSNR} = 10 \log_{10} \left( \frac{MAX_I^{2}}{MSE} \right)$$

where $MAX_I$ denotes the maximum possible pixel value of the image, and $MSE$ represents the mean squared error between the dehazed image and the ground truth. A higher PSNR value indicates greater fidelity of the algorithm in preserving the original scene.
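As a small worked example of the PSNR definition, $10 \log_{10}(MAX_I^2 / MSE)$, the sketch below evaluates it on a toy 2x2 grayscale pair. It assumes 8-bit images (a maximum value of 255); the function names and pixel values are illustrative only.

```python
import math


def mse(img_a, img_b):
    """Mean squared error over all pixels of two equally sized 2D lists."""
    squared = [
        (a - b) ** 2
        for row_a, row_b in zip(img_a, img_b)
        for a, b in zip(row_a, row_b)
    ]
    return sum(squared) / len(squared)


def psnr(dehazed, ground_truth, max_value=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(dehazed, ground_truth)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / err)


# Every pixel is off by 10 gray levels, so the MSE is exactly 100.
ground_truth = [[100, 150], [200, 250]]
dehazed = [[110, 140], [190, 240]]
psnr_value = psnr(dehazed, ground_truth)
```

Because the error enters through a logarithm, halving the per-pixel error raises the PSNR by roughly 6 dB.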

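In contrast, SSIM simulates human visual perception and measures the structural similarity between images. The SSIM value ranges from 0 to 1, where a value closer to 1 indicates better dehazing performance. The metric is defined as follows:

$$\mathrm{SSIM}(x, y) = \frac{\left(2\mu_x \mu_y + C_1\right)\left(2\sigma_{xy} + C_2\right)}{\left(\mu_x^{2} + \mu_y^{2} + C_1\right)\left(\sigma_x^{2} + \sigma_y^{2} + C_2\right)}$$

where $\mu_x$ and $\mu_y$ denote the mean values of the images, $\sigma_x^{2}$ and $\sigma_y^{2}$ represent the variances of the images, and $\sigma_{xy}$ is the covariance between the images. In general, the hyperparameters are $C_1 = (0.01L)^{2}$ and $C_2 = (0.03L)^{2}$, where $L$ is the dynamic range of the pixel values [65].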
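The SSIM formula can be illustrated with a simplified, single-window version that uses global image statistics. This is a sketch only: standard SSIM implementations average the index over local sliding windows. The stabilizing constants are assumed to take the commonly used values $C_1 = (0.01L)^2$ and $C_2 = (0.03L)^2$ for dynamic range $L$, and the function name and pixel values are hypothetical.

```python
def ssim_global(x, y, dynamic_range=255.0, k1=0.01, k2=0.03):
    """SSIM computed once from whole-image mean, variance and covariance."""
    xs = [p for row in x for p in row]
    ys = [p for row in y for p in row]
    n = len(xs)
    mu_x = sum(xs) / n
    mu_y = sum(ys) / n
    var_x = sum((p - mu_x) ** 2 for p in xs) / n
    var_y = sum((q - mu_y) ** 2 for q in ys) / n
    cov_xy = sum((p - mu_x) * (q - mu_y) for p, q in zip(xs, ys)) / n
    c1 = (k1 * dynamic_range) ** 2  # stabilizes the luminance term
    c2 = (k2 * dynamic_range) ** 2  # stabilizes the contrast/structure term
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


ground_truth = [[100, 150], [200, 250]]
dehazed = [[110, 140], [190, 240]]
ssim_same = ssim_global(ground_truth, ground_truth)
ssim_diff = ssim_global(dehazed, ground_truth)
```

Identical images give a score of 1, and any mismatch in brightness, contrast, or structure lowers it.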

Additionally, to assess the generalization performance of the model under real-world haze conditions, we employ two no-reference image quality metrics: Natural Image Quality Evaluator (NIQE) [66] and Blind/Referenceless Image Spatial Quality Evaluator (BRISQUE) [67]. NIQE evaluates image quality by measuring the statistical difference between the image and natural scene statistics, while BRISQUE assesses perceptual image quality based on local structural distortions. By utilizing these two no-reference metrics, we can perform a comprehensive quantitative analysis of the dehazing results from a perceptual quality perspective, allowing for a more accurate evaluation of the model’s performance in complex real-world environments.

4.3. Results on Synthetic Images

4.3.1. Subjective Comparison

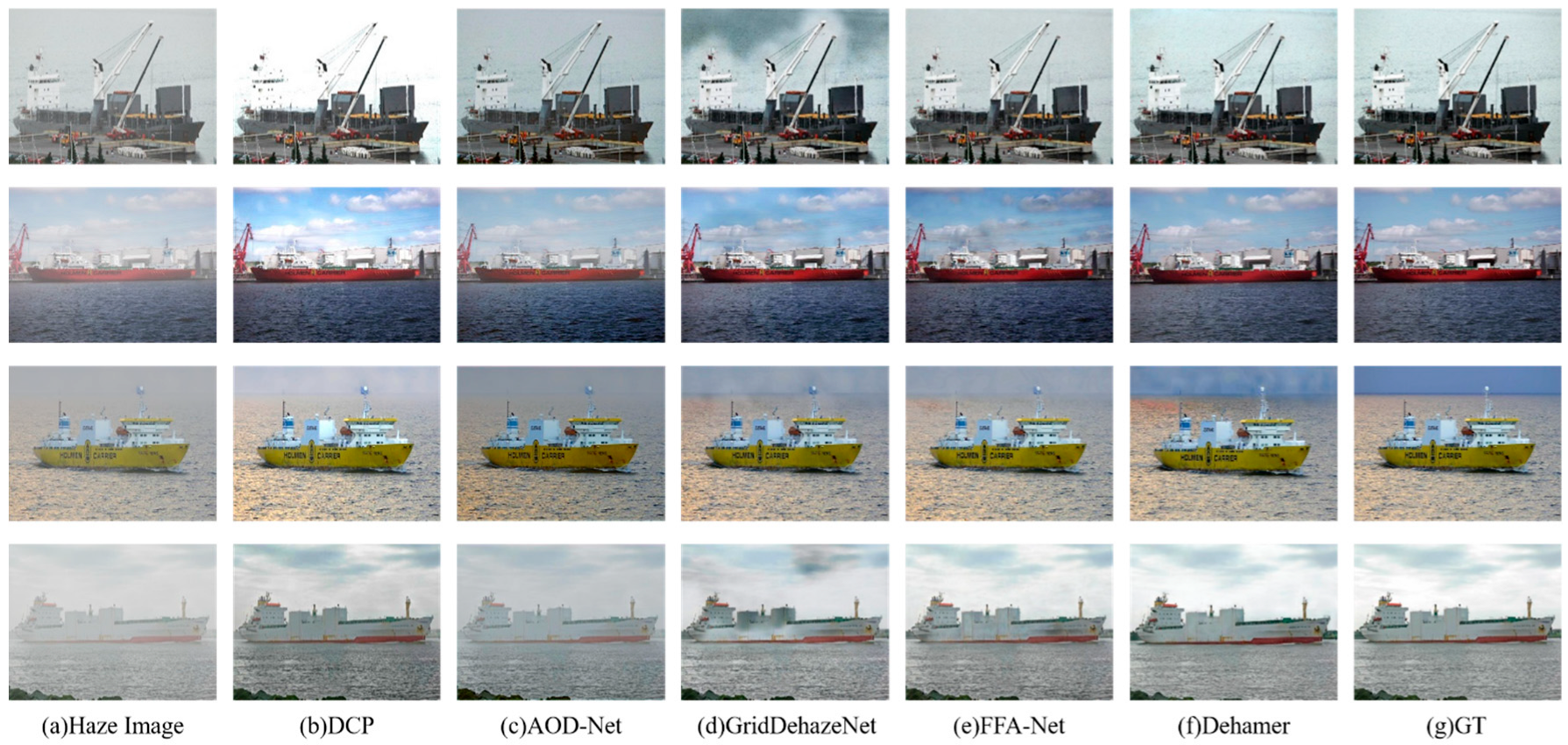

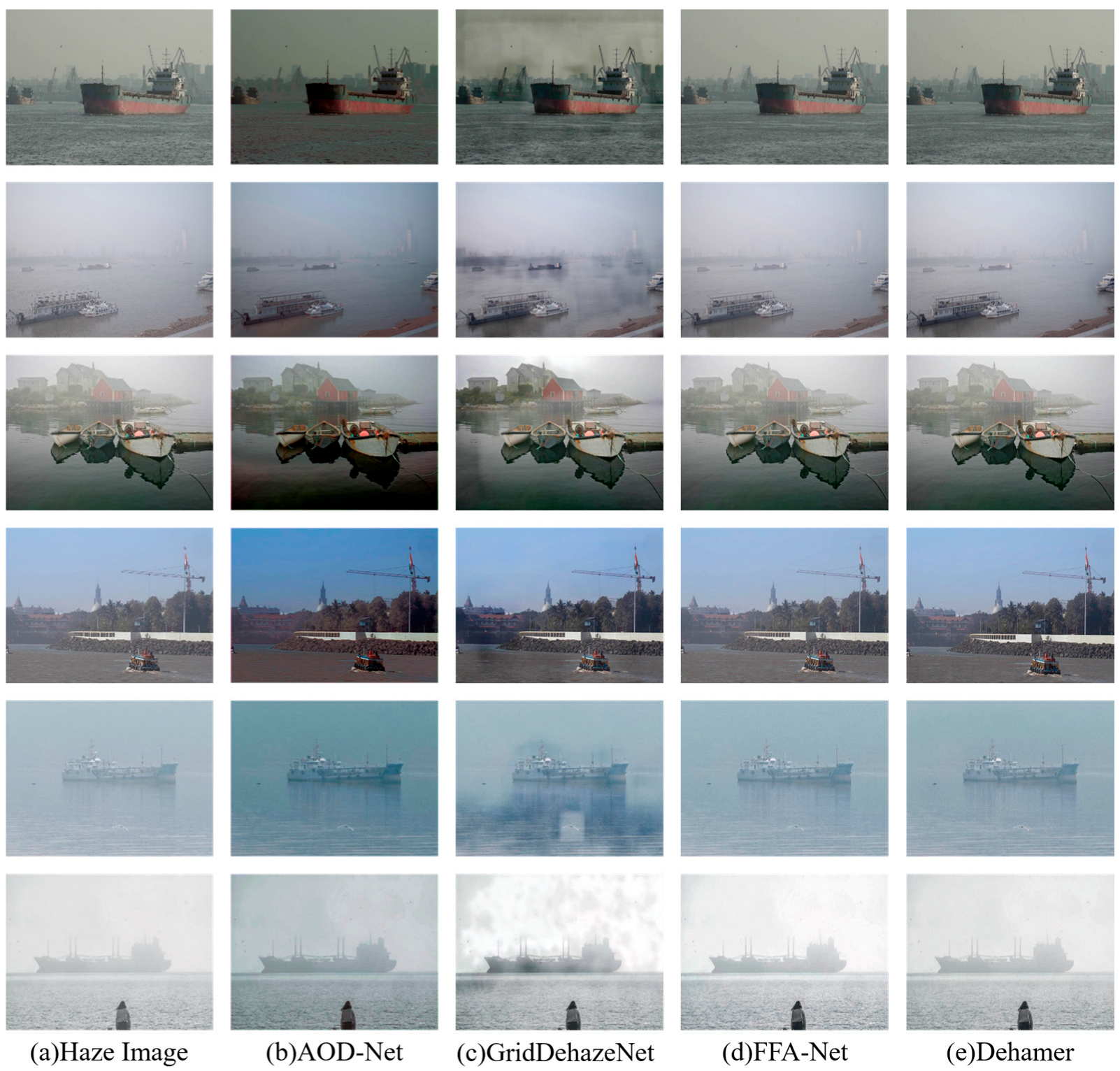

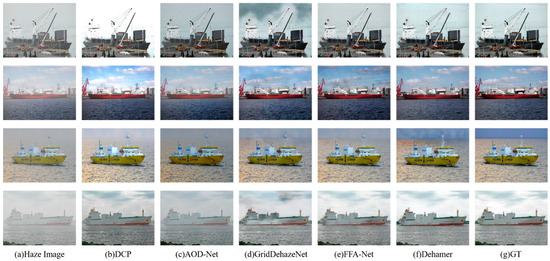

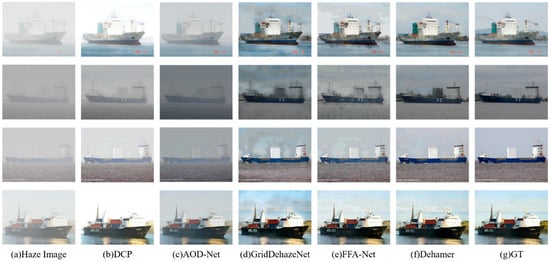

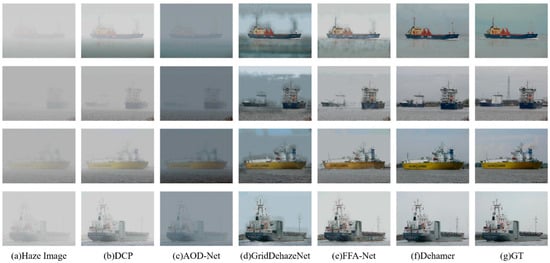

We evaluated and compared several representative dehazing algorithms on the three synthetic subsets of the Overwater-Haze dataset. The evaluation results are presented in Figure 5, Figure 6 and Figure 7. Experimental findings indicate that as the haze density increases, the performance of the dehazing algorithms generally declines, highlighting the limitations of different algorithms when handling complex haze conditions.

Figure 5.

Benchmarking results for the Mist subset.

Figure 6.

Benchmarking results for the Moderate subset.

Figure 7.

Benchmarking results for the Dense subset.

Specifically, DCP proved effective on the Mist subset thanks to the dark channel prior, as shown in Figure 5. It removes light haze effectively and even outperforms some deep learning methods on certain images. However, in some scenes, noticeable whitening occurred during recovery. Additionally, under high haze concentrations, particularly when scene objects have a brightness similar to the atmospheric light, DCP estimates the transmission map inaccurately. This leads to poor differentiation between haze and image details, resulting in severe dehazing distortion. These findings reflect the limitations of prior-based methods in image dehazing.

AOD-Net and GridDehazeNet, which rely on CNN for local feature extraction, also performed well on the lighter Mist subset, achieving good dehazing results. However, when applied to the higher haze density subsets, Moderate and Dense, the restored images exhibited incomplete and uneven dehazing, as shown in Figure 6c,d and Figure 7c,d. Their dehazing performance is limited under complex conditions.

In contrast, Dehamer integrates haze density and relative position as priors into the Transformer model. Benefiting from the global context modeling ability of Transformers and the local representation power of CNN, Dehamer outperforms the other algorithms at all haze intensity levels. It handles varying levels of haze more effectively, producing images with clearer contours and better visual effects. Particularly on the Dense subset, it accurately restores image details, demonstrating strong performance.

4.3.2. Objective Comparison

To further quantify the performance of different algorithms on the overwater image dehazing task, we present the average PSNR and SSIM values for each algorithm across the test sets of the various subsets in Table 4. Although DCP shows good visual results on some images, the overall performance is still inferior to deep learning-based methods. In comparison, AOD-Net and GridDehazeNet achieve PSNR and SSIM values of 18.905 and 0.864, and 23.395 and 0.916, respectively, on the Mist subset, showing an improvement over DCP. However, their dehazing performance on the Moderate and Dense subsets remains suboptimal, particularly for AOD-Net, which is significantly lower than the other algorithms.

Table 4.

Testing results for the synthetic hazy image subsets. The arrows indicate that higher values of these metrics are better.

Consistent with the visual results, Dehamer performs excellently across all subsets, especially on the Dense subset, where it demonstrates a significant performance advantage with PSNR and SSIM values of 25.235 and 0.877, respectively. This highlights Dehamer’s superior recovery capability under high-concentration haze conditions and showcases its robustness in handling complex image restoration tasks in overwater scenes with dense fog.

On the other hand, FFA-Net performed well on the Mist subset but shows poor results on the Dense subset, with PSNR and SSIM values of only 17.300 and 0.816, respectively. This indicates that dense fog in overwater environments remains a major challenge for dehazing algorithms. Particularly in dynamic and complex environments, improving the adaptability and robustness of algorithms is still an open problem.

4.4. Results on Real Hazy Images

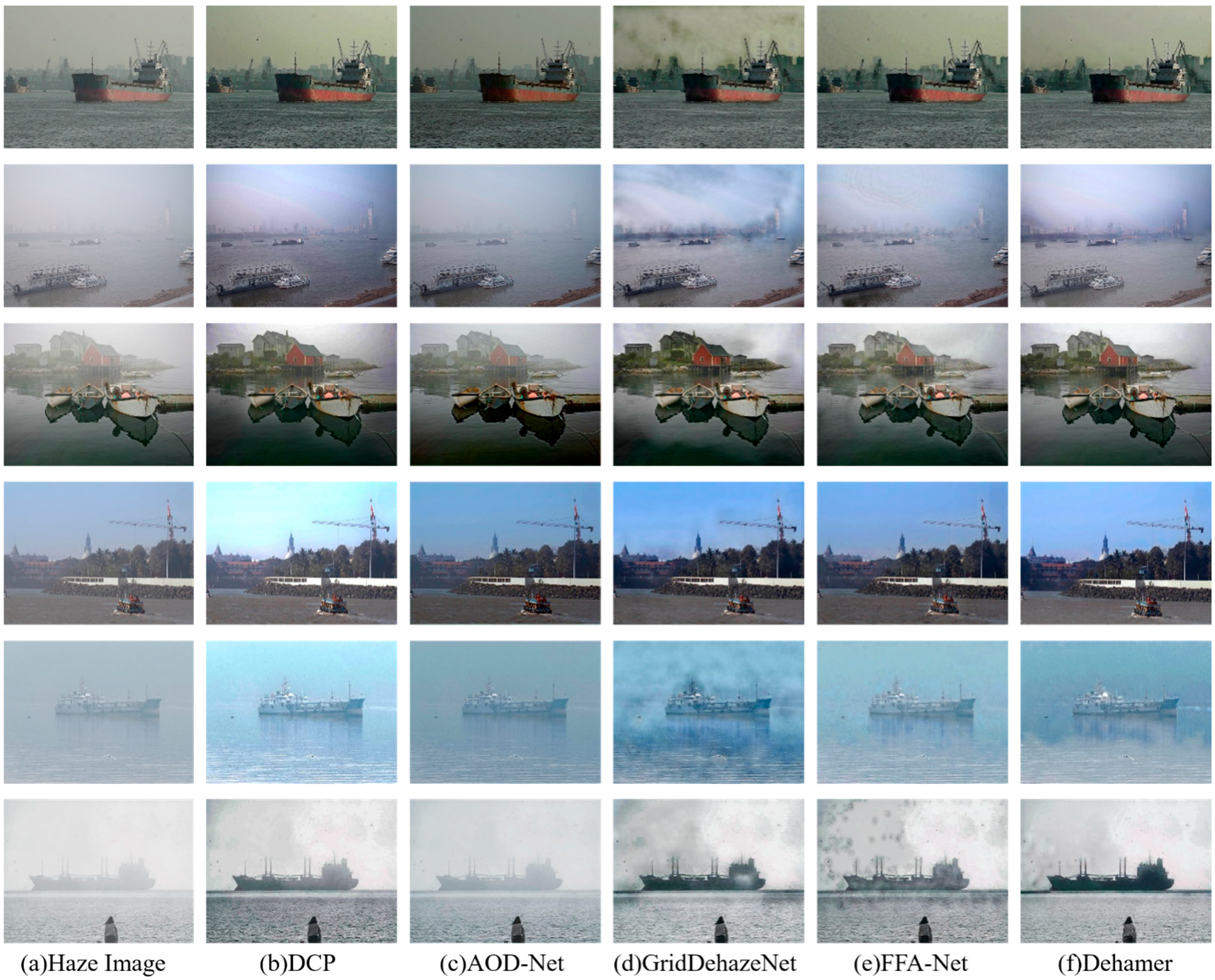

4.4.1. Subjective Comparison

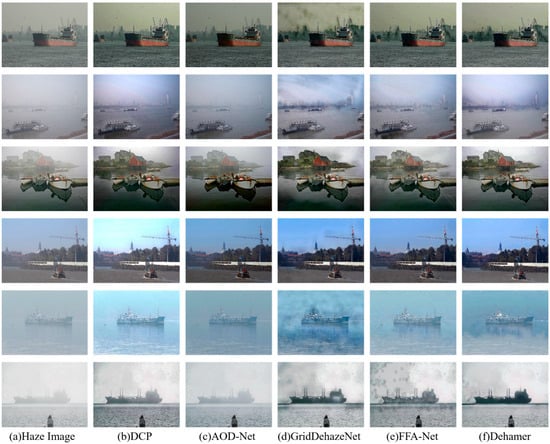

In addition, to comprehensively evaluate the performance of each algorithm in real overwater haze environments, we tested the generalization ability of the algorithms on real hazy overwater images. The experimental results, as shown in Figure 8, demonstrate the restoration performance of different algorithms in real-world scenarios. Compared to their performance on synthetic images, the complex backgrounds and varying lighting conditions of real images pose a greater challenge for dehazing algorithms.

Figure 8.

Benchmarking results for the Real-Test subset.

In these tests, DCP maintained the strength of its prior theory and demonstrated good adaptability. However, for complex scenes, issues such as a blue hue in the sky region and uneven dehazing results were observed, which are also attributed to the limitations of the prior-based theory. While AOD-Net showed good dehazing performance on synthetic images, its generalization ability under real hazy conditions was poor, with subjective differences barely noticeable to the human eye. Similarly, GridDehazeNet’s performance was affected by haze density in real-world scenes, leading to uneven dehazing results.

FFA-Net and Dehamer exhibited strong recovery capabilities. They effectively suppressed haze in the water surface and distant regions, restored image details, and produced clearer visual effects. However, some localized uneven dehazing was observed in certain scenes. These experimental results indicate that although each dehazing algorithm performs differently on synthetic images, they are challenged by more complex backgrounds and dynamic lighting conditions in real overwater haze environments. This further demonstrates that the generalization performance of existing image dehazing algorithms still requires further optimization.

To further verify the targeted improvement effect of the Overwater-Haze dataset on dehazing tasks for overwater scenes, we selected four deep learning algorithms excluding DCP and conducted comparative inference experiments on the Real-Test subset using their official pre-trained models and models trained on the Overwater-Haze dataset. The results are shown in Figure 9.

Figure 9.

Pre-trained models’ results for the Real-Test subset.

Compared with the results in Figure 8, the official pre-trained models generally exhibit significant adaptability limitations in overwater scenes. The output of AOD-Net has a noticeably dark overall tone and still shows little dehazing. GridDehazeNet left more uneven haze areas in its results; this pattern is consistent with the model trained on Overwater-Haze, but the haze removal is significantly worse. Although FFA-Net and Dehamer demonstrated strong dehazing performance in indoor and general outdoor scenes in their original papers, their pre-trained models were almost ineffective in overwater scenes.

In contrast, the models trained on the Overwater-Haze dataset demonstrate superior visual performance in real overwater scenes, as shown in Figure 8. Although the dehazing effects vary due to the inherent characteristics of the different algorithms, they all achieve targeted removal of haze in different regions and perform especially well in restoring the contour clarity and detail integrity of the main ship body. This comparison confirms that the Overwater-Haze dataset helps models learn the haze features of overwater scenes, thereby significantly enhancing the adaptability of the algorithms to complex overwater scenes.

4.4.2. Objective Comparison

Unlike synthetic hazy images, real hazy images lack corresponding ground truth images, making it impossible to directly quantify their quality using traditional image quality metrics. We used two no-reference image quality metrics, NIQE and BRISQUE, to effectively quantify and evaluate the performance of the algorithms in real-world environments, encompassing both models retrained on the Overwater-Haze dataset and their official pre-trained models, as shown in Table 5.

Table 5.

Testing results for the real hazy subsets. The arrows for NIQE and BRISQUE indicate that lower values are better, and the arrows next to the numbers show the percentage decrease of the retrained model results relative to the pre-trained models.

Due to its simpler network structure and fewer parameters, AOD-Net produced the worst results among the tested algorithms, with NIQE and BRISQUE scores of 5.380 and 46.700, respectively. Despite its metrics being worse than those of DCP, a traditional method based on prior theories, this does not imply that deep learning methods are ineffective. In contrast, the performance of other deep learning-based methods surpassed that of DCP, with Dehamer in particular achieving the best results on real hazy images, with NIQE and BRISQUE scores of 4.827 and 38.253, respectively. These results highlight the superiority of deep learning methods, particularly in terms of naturalness, structural quality, and relatively low distortion, demonstrating the feasibility of data-driven approaches over traditional methods like DCP.

Notably, compared with pre-trained models, the models trained on the Overwater-Haze dataset have shown significantly improved performance, with the NIQE and BRISQUE metrics decreasing by an average of 9.96% and 10.47%, respectively. This quantitative result fully confirms the positive role of the Overwater-Haze dataset in adapting models to overwater scenes. Among them, Dehamer exhibits particularly prominent performance improvement: its pre-trained model achieved NIQE and BRISQUE scores of 5.581 and 43.990 on the Real-Test subset, while after training on the Overwater-Haze dataset, the two metrics decreased by 13.51% and 13.04%, respectively. These experimental results not only demonstrate the generalization potential of existing algorithms in the field of overwater image dehazing but also strongly highlight the critical effectiveness of the Overwater-Haze dataset in enhancing the adaptability of algorithms to real-world overwater haze environments.
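The reported relative improvements follow from a simple percentage-decrease calculation. The sketch below reproduces the Dehamer figures from the scores quoted in the text (pre-trained: 5.581 and 43.990; trained on Overwater-Haze: 4.827 and 38.253). The function name is illustrative.

```python
def percent_decrease(pretrained, retrained):
    """Relative decrease of a lower-is-better score, in percent."""
    return (pretrained - retrained) / pretrained * 100.0


# Dehamer on Real-Test: pre-trained scores vs. scores after training
# on Overwater-Haze, as quoted in the text.
niqe_drop = percent_decrease(5.581, 4.827)
brisque_drop = percent_decrease(43.990, 38.253)

print(f"NIQE decrease: {niqe_drop:.2f}%")
print(f"BRISQUE decrease: {brisque_drop:.2f}%")
```

Rounded to two decimals, these give 13.51% and 13.04%, matching the values stated above.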

4.5. Computational Efficiency

For onboard equipment, computational efficiency is a key factor in achieving real-time dehazing. Therefore, we conducted a comprehensive evaluation of the computational performance of these algorithms. For deep learning algorithms, we calculated the number of model parameters and computational FLOPs. Additionally, we computed the average computation time of all testing methods for Real-Test, as shown in Table 6.

Table 6.

Computational efficiency for Real-Test.

As a traditional physics-based prior method, DCP relies on CPU performance and pixel-by-pixel computation. As a result, its average processing time reaches 1.41 s per image, which cannot meet real-time requirements. Among the deep learning algorithms, AOD-Net is the most lightweight, with only 0.002 million parameters and 0.458 G FLOPs, which gives it a significant speed advantage: its average computation time per image is only 0.11 s. However, despite its efficiency, its dehazing performance under real haze conditions is still limited, especially when dealing with complex scenes and high-intensity haze. GridDehazeNet, with 0.956 million parameters and 85.715 G FLOPs, achieves an average processing time of 0.32 s per image, striking a moderate balance between efficiency and performance. In contrast, FFA-Net and Dehamer, while delivering excellent dehazing results, have higher computational complexity. FFA-Net has 4.456 million parameters and 701.985 G FLOPs, resulting in an average processing time of 0.97 s per image. Dehamer comprises 29.443 million parameters and 237.853 G FLOPs, with an average processing time of 0.52 s per image.
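To relate these per-image times to real-time operation, it can help to convert them into throughput. The sketch below does this for the average times quoted in the text. The dictionary and function names are illustrative, and no specific frame-rate requirement is assumed, since the text does not state one.

```python
# Average processing time per Real-Test image, in seconds, as quoted in the text.
SECONDS_PER_IMAGE = {
    "DCP": 1.41,
    "AOD-Net": 0.11,
    "GridDehazeNet": 0.32,
    "FFA-Net": 0.97,
    "Dehamer": 0.52,
}


def frames_per_second(seconds_per_image):
    """Throughput implied by an average per-image latency."""
    return 1.0 / seconds_per_image


fps = {name: frames_per_second(t) for name, t in SECONDS_PER_IMAGE.items()}
fastest = max(fps, key=fps.get)

# Print the methods from fastest to slowest.
for name, value in sorted(fps.items(), key=lambda item: -item[1]):
    print(f"{name}: {value:.2f} images/s")
```

On this hardware, even the fastest method stays below ten images per second, which supports the case for lighter models on onboard devices.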

5. Conclusions

In this work, we introduce the Overwater-Haze dataset, a large-scale paired image dehazing resource designed to address the lack of overwater scene datasets and the insufficient adaptability of dehazing models to such scenes. The dataset consists of three synthetic hazy subsets with varying haze intensities, alongside real overwater hazy images for performance evaluation. We also detail the generation pipeline for the synthetic images, including data sourcing, processing, and augmentation. Our benchmarking and comparative experiments on existing dehazing algorithms enable a systematic evaluation of their performance and further validate the effectiveness of Overwater-Haze in enhancing model adaptability to hazy overwater scenes. Experimental results show that the performance of current dehazing algorithms fluctuates across overwater haze scenes of varying intensities, and that some existing algorithms with relatively high complexity are difficult to deploy on resource-constrained onboard edge devices.

To address these challenges, future work will focus on three key directions. We plan to expand our dataset by collecting more real-world hazy images to supplement the existing test data, thereby enhancing its applicability across various maritime scenarios. Additionally, we will explore the optimization of dehazing algorithms to achieve high quality results under different haze intensities while maintaining a balance between model efficiency and complexity. Furthermore, to improve ship perception capabilities under foggy conditions and thus meet the needs of safe navigation, we aim to develop lightweight models that can deliver high performance with low computational requirements, ensuring real-time processing on resource-constrained onboard edge devices.

Author Contributions

Conceptualization, Y.X. and H.W.; methodology, Y.X.; software, Y.X. and M.L.; validation, Y.X. and M.L.; formal analysis, Y.X.; investigation, Y.X. and S.W.; resources, H.W.; data curation, Y.X. and S.W.; writing—original draft preparation, Y.X. and M.L.; writing—review and editing, Y.X. and H.W.; visualization, Y.X. and S.W.; supervision, H.W.; project administration, H.W.; funding acquisition, H.W. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported by grants from the Innovation Fund of the Maritime Defense Technology Innovation Center, China (JJ-2022-702-01) and the Laboratory of Science and Technology on Marine Navigation and Control, China State Shipbuilding Corporation (2023010302).

Data Availability Statement

The dataset created in this study is publicly available at https://github.com/xie-xyh/Overwater-Haze-Dataset (accessed on 17 July 2025).

Acknowledgments

The authors sincerely thank the editors and reviewers.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Öztürk, Ü.; Akdağ, M.; Ayabakan, T. A Review of Path Planning Algorithms in Maritime Autonomous Surface Ships: Navigation Safety Perspective. Ocean Eng. 2022, 251, 111010. [Google Scholar] [CrossRef]

- Wang, C.; Cai, X.; Li, Y.; Zhai, R.; Wu, R.; Zhu, S.; Guan, L.; Luo, Z.; Zhang, S.; Zhang, J. Research and Application of Panoramic Visual Perception-Assisted Navigation Technology for Ships. J. Mar. Sci. Eng. 2024, 12, 1042. [Google Scholar] [CrossRef]

- Hu, K.; Zeng, Q.; Wang, J.; Huang, J.; Yuan, Q. A Method for Defogging Sea Fog Images by Integrating Dark Channel Prior with Adaptive Sky Region Segmentation. J. Mar. Sci. Eng. 2024, 12, 1255. [Google Scholar] [CrossRef]

- Sun, Y.; Su, L.; Luo, Y.; Meng, H.; Zhang, Z.; Zhang, W.; Yuan, S. IRDCLNet: Instance Segmentation of Ship Images Based on Interference Reduction and Dynamic Contour Learning in Foggy Scenes. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 6029–6043. [Google Scholar] [CrossRef]

- Fu, C.; Li, M.; Zhang, B.; Wang, H. TBiSeg: A Transformer-Based Network with Bi-Level Routing Attention for Inland Waterway Segmentation. Ocean Eng. 2024, 311, 119011. [Google Scholar] [CrossRef]

- Zhang, Q.; Wang, L.; Meng, H.; Zhang, Z.; Yang, C. Ship Detection in Maritime Scenes under Adverse Weather Conditions. Remote Sens. 2024, 16, 1567. [Google Scholar] [CrossRef]

- Liang, S.; Liu, X.; Yang, Z.; Liu, M.; Yin, Y. Offshore Ship Detection in Foggy Weather Based on Improved YOLOv8. J. Mar. Sci. Eng. 2024, 12, 1641. [Google Scholar] [CrossRef]

- Sun, H.; Zhang, W.; Yang, S.; Wang, H. Lightweight Single-Stage Ship Object Detection Algorithm for Unmanned Surface Vessels Based on Improved YOLOv5. Sensors 2024, 24, 5603. [Google Scholar] [CrossRef]

- Li, B.; Peng, X.; Wang, Z.; Xu, J.; Feng, D. AOD-Net: All-in-One Dehazing Network. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 4780–4788. [Google Scholar] [CrossRef]

- Engin, D.; Genc, A.; Ekenel, H.K. Cycle-Dehaze: Enhanced CycleGAN for Single Image Dehazing. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Salt Lake City, UT, USA, 18–22 June 2018; pp. 938–9388. [Google Scholar] [CrossRef]

- Wang, Y.; Yan, X.; Wang, F.L.; Xie, H.; Yang, W.; Zhang, X.-P.; Qin, J.; Wei, M. UCL-Dehaze: Toward Real-World Image Dehazing via Unsupervised Contrastive Learning. IEEE Trans. Image Process. 2024, 33, 1361–1374. [Google Scholar] [CrossRef]

- Yang, Y.; Wang, C.; Liu, R.; Zhang, L.; Guo, X.; Tao, D. Self-Augmented Unpaired Image Dehazing via Density and Depth Decomposition. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 2027–2036. [Google Scholar] [CrossRef]

- Wang, C.; Shen, H.-Z.; Fan, F.; Shao, M.-W.; Yang, C.-S.; Luo, J.-C.; Deng, L.-J. EAA-Net: A Novel Edge Assisted Attention Network for Single Image Dehazing. Knowl.-Based Syst. 2021, 228, 107279. [Google Scholar] [CrossRef]

- Qin, X.; Wang, Z.; Bai, Y.; Xie, X.; Jia, H. FFA-Net: Feature Fusion Attention Network for Single Image Dehazing. AAAI 2020, 34, 11908–11915. [Google Scholar] [CrossRef]

- Li, B.; Ren, W.; Fu, D.; Tao, D.; Feng, D.; Zeng, W.; Wang, Z. Benchmarking Single-Image Dehazing and Beyond. IEEE Trans. Image Process. 2018, 28, 492–505. [Google Scholar] [CrossRef] [PubMed]

- Islam, M.T.; Rahim, N.; Anwar, S.; Saqib, M.; Bakshi, S.; Muhammad, K. HazeSpace2M: A Dataset for Haze Aware Single Image Dehazing. In Proceedings of the 32nd ACM International Conference on Multimedia, Melbourne, VIC, Australia, 28 October–1 November 2024; pp. 9155–9164. [Google Scholar] [CrossRef]

- Ancuti, C.; Ancuti, C.O.; Timofte, R.; De Vleeschouwer, C. I-HAZE: A Dehazing Benchmark with Real Hazy and Haze-Free Indoor Images. In Proceedings of the International Conference on Advanced Concepts for Intelligent Vision Systems, Poitiers, France, 24–27 September 2018; pp. 620–631. [Google Scholar] [CrossRef]

- Ancuti, C.O.; Ancuti, C.; Timofte, R.; De Vleeschouwer, C. O-HAZE: A Dehazing Benchmark with Real Hazy and Haze-Free Outdoor Images. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Salt Lake City, UT, USA, 18–22 June 2018; pp. 867–8678. [Google Scholar] [CrossRef]

- Liu, T.; Zhang, Z.; Lei, Z.; Huo, Y.; Wang, S.; Zhao, J.; Zhang, J.; Jin, X.; Zhang, X. An Approach to Ship Target Detection Based on Combined Optimization Model of Dehazing and Detection. Eng. Appl. Artif. Intell. 2024, 127, 107332. [Google Scholar] [CrossRef]

- Zhou, W.; Gao, X. Sat-DehazeGAN: An Efficient Dehazing Model in Water-Sky Background for River-Sea Transport. Multimed. Syst. 2025, 31, 9. [Google Scholar] [CrossRef]

- Wang, N.; Wang, Y.; Feng, Y.; Wei, Y. MDD-ShipNet: Math-Data Integrated Defogging for Fog-Occlusion Ship Detection. IEEE Trans. Intell. Transp. Syst. 2024, 25, 15040–15052. [Google Scholar] [CrossRef]

- Moosbauer, S.; Konig, D.; Jakel, J.; Teutsch, M. A Benchmark for Deep Learning Based Object Detection in Maritime Environments. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Long Beach, CA, USA, 16–17 June 2019; pp. 916–925. [Google Scholar] [CrossRef]

- Nayar, S.K.; Narasimhan, S.G. Vision in Bad Weather. In Proceedings of the Seventh IEEE International Conference on Computer Vision, Kerkyra, Greece, 20–27 September 1999; Volume 2, pp. 820–827. [Google Scholar] [CrossRef]

- Fattal, R. Single Image Dehazing. ACM Trans. Graph. 2008, 27, 1–9. [Google Scholar] [CrossRef]

- Tarel, J.-P.; Hautiere, N.; Cord, A.; Gruyer, D.; Halmaoui, H. Improved Visibility of Road Scene Images under Heterogeneous Fog. In Proceedings of the 2010 IEEE Intelligent Vehicles Symposium, La Jolla, CA, USA, 21–24 June 2010; pp. 478–485. [Google Scholar] [CrossRef]

- Tarel, J.-P.; Hautiere, N.; Caraffa, L.; Cord, A.; Halmaoui, H.; Gruyer, D. Vision Enhancement in Homogeneous and Heterogeneous Fog. IEEE Intell. Transport. Syst. Mag. 2012, 4, 6–20. [Google Scholar] [CrossRef]

- Zheng, S.; Sun, J.; Liu, Q.; Qi, Y.; Yan, J. Overwater Image Dehazing via Cycle-Consistent Generative Adversarial Network. Electronics 2020, 9, 1877. [Google Scholar] [CrossRef]

- Ancuti, C.O.; Ancuti, C.; Sbert, M.; Timofte, R. Dense-Haze: A Benchmark for Image Dehazing with Dense-Haze and Haze-Free Images. In Proceedings of the 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019; pp. 1014–1018. [Google Scholar] [CrossRef]

- Ancuti, C.O.; Ancuti, C.; Timofte, R. NH-HAZE: An Image Dehazing Benchmark with Non-Homogeneous Hazy and Haze-Free Images. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 14–19 June 2020; pp. 1798–1805. [Google Scholar] [CrossRef]

- He, X.; Ji, W. Single Maritime Image Dehazing Using Unpaired Adversarial Learning. SIViP 2023, 17, 593–600. [Google Scholar] [CrossRef]

- Zhang, R.; Yang, H.; Yang, Y.; Fu, Y.; Pan, L. LMHaze: Intensity-Aware Image Dehazing with a Large-Scale Multi-Intensity Real Haze Dataset. In Proceedings of the 6th ACM International Conference on Multimedia in Asia, Auckland, New Zealand, 3–6 December 2024; p. 1. [Google Scholar] [CrossRef]

- Mo, Y.; Li, C.; Ren, W.; Wang, W. Spotlighting on Objects: Prior Knowledge-Driven Maritime Image Dehazing and Object Detection Framework. IEEE J. Ocean. Eng. 2025, 50, 1978–1992. [Google Scholar] [CrossRef]

- Xie, Y.; Wei, H.; Liu, Z.; Wang, X.; Ji, X. SynFog: A Photorealistic Synthetic Fog Dataset Based on End-to-End Imaging Simulation for Advancing Real-World Defogging in Autonomous Driving. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 21763–21772. [Google Scholar] [CrossRef]

- Zhang, L.; Zhu, A.; Zhao, S.; Zhou, Y. Simulation of Atmospheric Visibility Impairment. IEEE Trans. Image Process. 2021, 30, 8713–8726. [Google Scholar] [CrossRef]

- Liu, R.W.; Lu, Y.; Guo, Y.; Ren, W.; Zhu, F.; Lv, Y. AiOENet: All-in-One Low-Visibility Enhancement to Improve Visual Perception for Intelligent Marine Vehicles Under Severe Weather Conditions. IEEE Trans. Intell. Veh. 2024, 9, 3811–3826. [Google Scholar] [CrossRef]

- Prasad, D.K.; Rajan, D.; Rachmawati, L.; Rajabally, E.; Quek, C. Video Processing from Electro-Optical Sensors for Object Detection and Tracking in a Maritime Environment: A Survey. IEEE Trans. Intell. Transport. Syst. 2017, 18, 1993–2016. [Google Scholar] [CrossRef]

- Shao, Z.; Wu, W.; Wang, Z.; Du, W.; Li, C. SeaShips: A Large-Scale Precisely Annotated Dataset for Ship Detection. IEEE Trans. Multimed. 2018, 20, 2593–2604. [Google Scholar] [CrossRef]

- Dwivedi, P.; Chakraborty, S. A Comprehensive Qualitative and Quantitative Survey on Image Dehazing Based on Deep Neural Networks. Neurocomputing 2024, 610, 128582. [Google Scholar] [CrossRef]

- He, K.; Sun, J.; Tang, X. Single Image Haze Removal Using Dark Channel Prior. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 1956–1963. [Google Scholar] [CrossRef]

- Zhu, Q.; Mai, J.; Shao, L. A Fast Single Image Haze Removal Algorithm Using Color Attenuation Prior. IEEE Trans. Image Process. 2015, 24, 3522–3533.

- Berman, D.; Treibitz, T.; Avidan, S. Single Image Dehazing Using Haze-Lines. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 42, 720–734.

- Wang, J.; Lu, K.; Xue, J.; He, N.; Shao, L. Single Image Dehazing Based on the Physical Model and MSRCR Algorithm. IEEE Trans. Circuits Syst. Video Technol. 2018, 28, 2190–2199.

- Galdran, A. Image Dehazing by Artificial Multiple-Exposure Image Fusion. Signal Process. 2018, 149, 135–147.

- Ju, M.; Ding, C.; Zhang, D.; Guo, Y.J. Gamma-Correction-Based Visibility Restoration for Single Hazy Images. IEEE Signal Process. Lett. 2018, 25, 1084–1088.

- Cai, B.; Xu, X.; Jia, K.; Qing, C.; Tao, D. DehazeNet: An End-to-End System for Single Image Haze Removal. IEEE Trans. Image Process. 2016, 25, 5187–5198.

- Ren, W.; Liu, S.; Zhang, H.; Pan, J.; Cao, X.; Yang, M.-H. Single Image Dehazing via Multi-Scale Convolutional Neural Networks. In Lecture Notes in Computer Science; Springer International Publishing: Cham, Switzerland, 2016; pp. 154–169. ISBN 978-3-319-46474-9.

- Ma, L.; Feng, Y.; Zhang, Y.; Liu, J.; Wang, W.; Chen, G.-Y.; Xu, C.; Su, Z. CoA: Towards Real Image Dehazing via Compression-and-Adaptation. In Proceedings of the Computer Vision and Pattern Recognition Conference, Nashville, TN, USA, 10–17 June 2025; pp. 11197–11206.

- Su, X.; Li, S.; Cui, Y.; Cao, M.; Zhang, Y.; Chen, Z.; Wu, Z.; Wang, Z.; Zhang, Y.; Yuan, X. Prior-Guided Hierarchical Harmonization Network for Efficient Image Dehazing. In Proceedings of the AAAI Conference on Artificial Intelligence, Philadelphia, PA, USA, 25 February–4 March 2025; Volume 39, pp. 7042–7050.

- Chen, Y.; Wen, Z.; Wang, C.; Gong, L.; Yi, Z. PriorNet: A Novel Lightweight Network with Multidimensional Interactive Attention for Efficient Image Dehazing. arXiv 2024, arXiv:2404.15638.

- Ullah, H.; Muhammad, K.; Irfan, M.; Anwar, S.; Sajjad, M.; Imran, A.S.; de Albuquerque, V.H.C. Light-DehazeNet: A Novel Lightweight CNN Architecture for Single Image Dehazing. IEEE Trans. Image Process. 2021, 30, 8968–8982.

- Guo, C.; Yan, Q.; Anwar, S.; Cong, R.; Ren, W.; Li, C. Image Dehazing Transformer with Transmission-Aware 3D Position Embedding. In Proceedings of the 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 5802–5810.

- Song, Y.; He, Z.; Qian, H.; Du, X. Vision Transformers for Single Image Dehazing. IEEE Trans. Image Process. 2023, 32, 1927–1941.

- Yang, L.; Kang, B.; Huang, Z.; Xu, X.; Feng, J.; Zhao, H. Depth Anything: Unleashing the Power of Large-Scale Unlabeled Data. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 16–22 June 2024; pp. 10371–10381.

- Koračin, D.; Dorman, C.E.; Lewis, J.M.; Hudson, J.G.; Wilcox, E.M.; Torregrosa, A. Marine Fog: A Review. Atmos. Res. 2014, 143, 142–175.

- Liu, J.; Li, H.; Luo, J.; Xie, S.; Sun, Y. Efficient Obstacle Detection Based on Prior Estimation Network and Spatially Constrained Mixture Model for Unmanned Surface Vehicles. J. Field Robot. 2021, 38, 212–228.

- Yao, S.; Guan, R.; Wu, Z.; Ni, Y.; Huang, Z.; Liu, R.W.; Yue, Y.; Ding, W.; Lim, E.G.; Seo, H.; et al. WaterScenes: A Multi-Task 4D Radar-Camera Fusion Dataset and Benchmarks for Autonomous Driving on Water Surfaces. IEEE Trans. Intell. Transp. Syst. 2024, 25, 16584–16598.

- Wang, N.; Wang, Y.; Wei, Y.; Han, B.; Feng, Y. Marine Vessel Detection Dataset and Benchmark for Unmanned Surface Vehicles. Appl. Ocean Res. 2024, 142, 103835.

- Kristan, M.; Sulić Kenk, V.; Kovačič, S.; Perš, J. Fast Image-Based Obstacle Detection From Unmanned Surface Vehicles. IEEE Trans. Cybern. 2016, 46, 641–654.

- Žust, L.; Perš, J.; Kristan, M. LaRS: A Diverse Panoptic Maritime Obstacle Detection Dataset and Benchmark. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France, 2–3 October 2023; pp. 20304–20314.

- Rajapaksha, U.; Sohel, F.; Laga, H.; Diepeveen, D.; Bennamoun, M. Deep Learning-Based Depth Estimation Methods from Monocular Image and Videos: A Comprehensive Survey. ACM Comput. Surv. 2024, 56, 1–51.

- Arampatzakis, V.; Pavlidis, G.; Mitianoudis, N.; Papamarkos, N. Monocular Depth Estimation: A Thorough Review. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 2396–2414.

- Zhou, Y.; Li, X.; Xiong, C.; Yao, H.; Qin, C. A Survey of Perceptual Hashing for Multimedia. ACM Trans. Multimed. Comput. Commun. Appl. 2025, 21, 1–28.

- Liu, X.; Ma, Y.; Shi, Z.; Chen, J. GridDehazeNet: Attention-Based Multi-Scale Network for Image Dehazing. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 7314–7323.

- Fourure, D.; Emonet, R.; Fromont, E.; Muselet, D.; Tremeau, A.; Wolf, C. Residual Conv-Deconv Grid Network for Semantic Segmentation. arXiv 2017, arXiv:1707.07958.

- Hore, A.; Ziou, D. Image Quality Metrics: PSNR vs. SSIM. In Proceedings of the 2010 20th International Conference on Pattern Recognition, Istanbul, Turkey, 23–26 August 2010; pp. 2366–2369.

- Mittal, A.; Soundararajan, R.; Bovik, A.C. Making a “Completely Blind” Image Quality Analyzer. IEEE Signal Process. Lett. 2013, 20, 209–212.

- Mittal, A.; Moorthy, A.K.; Bovik, A.C. No-Reference Image Quality Assessment in the Spatial Domain. IEEE Trans. Image Process. 2012, 21, 4695–4708.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).