1. Introduction

Optimization is the process of finding the settings of a system’s design parameters that minimize or maximize a fitness function while satisfying all constraints [1,2]. Optimization problems arise in every industry, academic discipline, and area of study. Exact algorithms are one type of optimization strategy, whereas heuristic and metaheuristic algorithms are another [3,4,5]. Because it requires fewer sophisticated calculations, the former category takes less time to complete, but it may be less useful and practical. In contrast, the second class of algorithms (metaheuristics) exhibits some random/stochastic behavior and makes an “informed search choice” for some “wise areas” [6].
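As a point of reference, the verbal definition above is commonly written as a constrained minimization problem. The formulation below is a generic sketch rather than one taken from this paper; the symbols $f$, $g_i$, $h_j$, $\mathbf{l}$, $\mathbf{u}$, $D$, $m$, and $p$ are assumed here purely for illustration.

```latex
\begin{aligned}
\min_{\mathbf{x} \in \mathbb{R}^{D}} \quad & f(\mathbf{x}) \\
\text{subject to} \quad & g_i(\mathbf{x}) \le 0, \quad i = 1, \dots, m, \\
& h_j(\mathbf{x}) = 0, \quad j = 1, \dots, p, \\
& \mathbf{l} \le \mathbf{x} \le \mathbf{u}.
\end{aligned}
```

Here $\mathbf{x}$ collects the $D$ design parameters, $f$ is the fitness function, and $\mathbf{l}$ and $\mathbf{u}$ are lower and upper bounds. A maximization problem fits the same form by minimizing $-f(\mathbf{x})$.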

These characteristics motivate researchers to develop new algorithms and improve existing ones. Since optimization arises in many fields, including cloud computing [7], face identification [8], power systems [9,10], and engineering problems [11], it has recently attracted a lot of attention from researchers. According to the No Free Lunch (NFL) theorem [12], no algorithm can identify the best solution in all cases, which is one reason so many optimization algorithms have been published. In other words, an algorithm that succeeds in finding the best answer to one kind of problem does not necessarily succeed in solving another.

Because metaheuristic algorithms rely on a form of random search, they cannot be guaranteed to always find the global optimum. However, because their solutions are close to the global optimum, these solutions are regarded as quasi-optimal [13]. To find a good solution, metaheuristic algorithms need strong search capabilities at both the global and local levels of the problem-solving space. Global search, which embodies the idea of exploration, improves the algorithm’s capacity to find the region containing the main optimum and to escape local optima. Local search, which embodies the idea of exploitation, improves the algorithm’s capacity to converge on potentially better solutions in promising areas [14]. Metaheuristic algorithms perform best when they balance exploration and exploitation while searching for an optimal solution. Thus, when the performance of several metaheuristic algorithms is compared on an optimization problem, the algorithm that better balances exploration and exploitation provides a better quasi-optimal solution [15]. Many metaheuristic algorithms have been developed to improve the quality of results obtained for optimization problems.

Optimization methods can be categorized as either deterministic or stochastic. Deterministic techniques, both gradient-based and non-gradient-based, are well suited to linear, convex, continuous, differentiable, and low-dimensional optimization problems [16]. Optimization problems that are non-linear, non-convex, discontinuous, non-differentiable, and/or high-dimensional are generally beyond the reach of deterministic techniques. On such problems, deterministic methods give poor results because they become trapped in local optima [17].
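The trapping behavior described above can be illustrated with a small sketch. The example below is not taken from this paper: it assumes a one-dimensional Rastrigin-type test function, a fixed learning rate, and a starting point of 3.2, all chosen only for illustration. Plain gradient descent started there settles in the nearest local minimum rather than the global minimum at x = 0.

```python
import math


def rastrigin_1d(x: float) -> float:
    """One-dimensional Rastrigin-type function; global minimum f(0) = 0."""
    return x * x + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))


def rastrigin_1d_grad(x: float) -> float:
    """Analytical derivative of rastrigin_1d."""
    return 2.0 * x + 20.0 * math.pi * math.sin(2.0 * math.pi * x)


def gradient_descent(x0: float, lr: float = 0.001, steps: int = 5000) -> float:
    """Fixed-step gradient descent; lr and steps are illustrative choices."""
    x = x0
    for _ in range(steps):
        x -= lr * rastrigin_1d_grad(x)
    return x


# Start in the basin of the local minimum near x = 3 (assumed start point).
x_final = gradient_descent(3.2)
print(f"converged to x = {x_final:.4f}, f(x) = {rastrigin_1d(x_final):.4f}")
print(f"global minimum:  x = 0.0000, f(x) = {rastrigin_1d(0.0):.4f}")
```

The run is fully deterministic: the same start always ends in the same local minimum, which is the limitation that motivates adding stochastic operators.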

Because such optimization problems are notoriously challenging to solve with deterministic methods, researchers have turned to stochastic methods. Metaheuristic algorithms, one of the most popular stochastic approaches, perform an effective random search of the problem-solving space using random operators and trial-and-error procedures [18]. Metaheuristic algorithms have gained popularity for handling optimization problems due to their effectiveness in solving problems that are non-linear, non-convex, discontinuous, non-differentiable, NP-hard, complex, and high-dimensional. They also require no derivative information about the objective function or constraints and do not depend on the problem type [19].

Given the many metaheuristic algorithms that have already been developed, the key question that drives metaheuristic algorithm research is whether there is still a need to introduce more of them. The NFL theorem [20] answers this question by showing that there is no universally superior metaheuristic method for optimization. Even if a metaheuristic algorithm performs well on one set of optimization problems, it does not follow that it works equally well on another set. In other words, an algorithm may succeed in addressing one optimization problem while failing to solve a different one. Consequently, the success of a metaheuristic algorithm on a given optimization problem cannot be assumed in advance. As a result, the NFL theorem motivates researchers to create new metaheuristic algorithms that can solve optimization problems more efficiently.

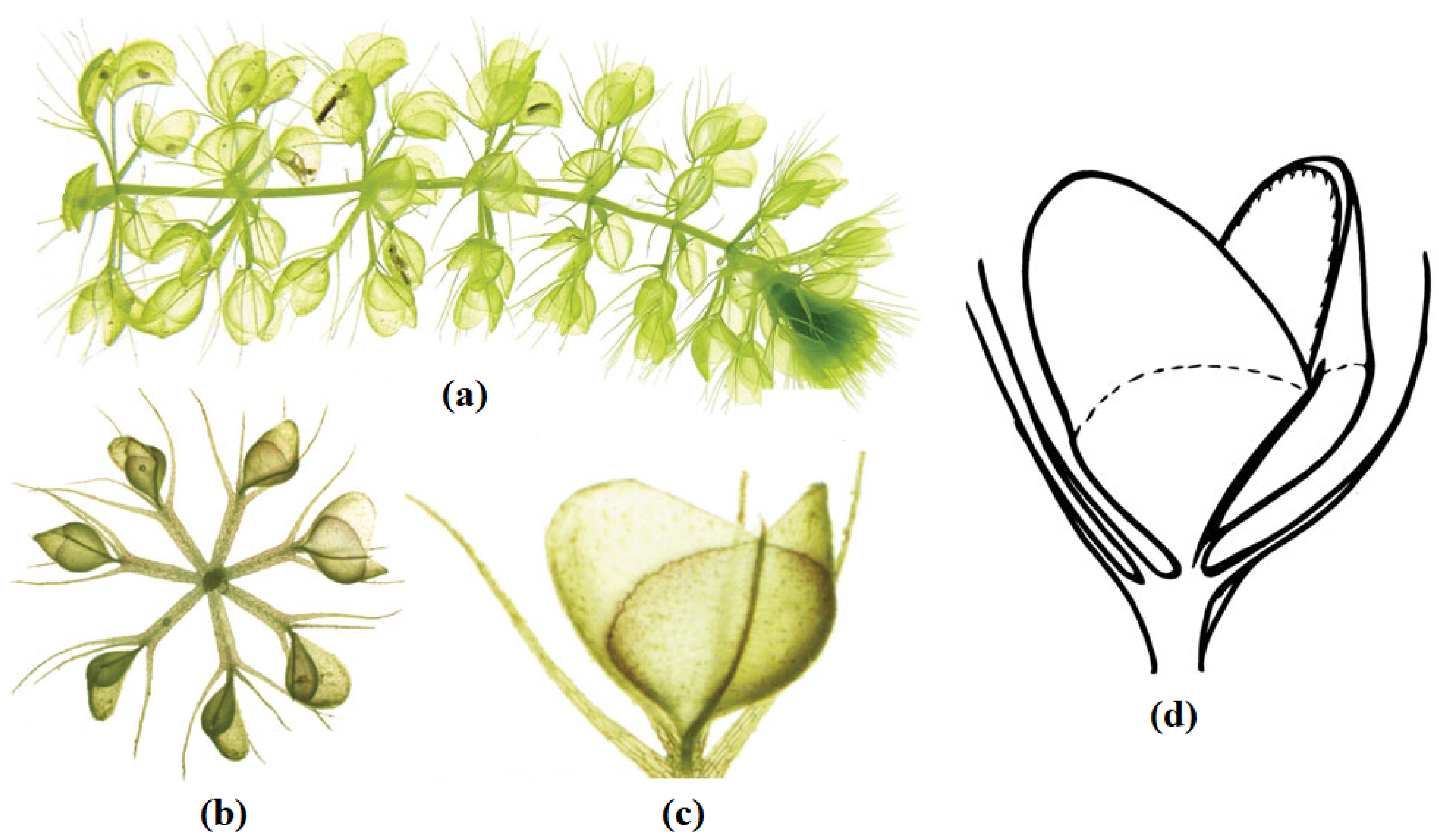

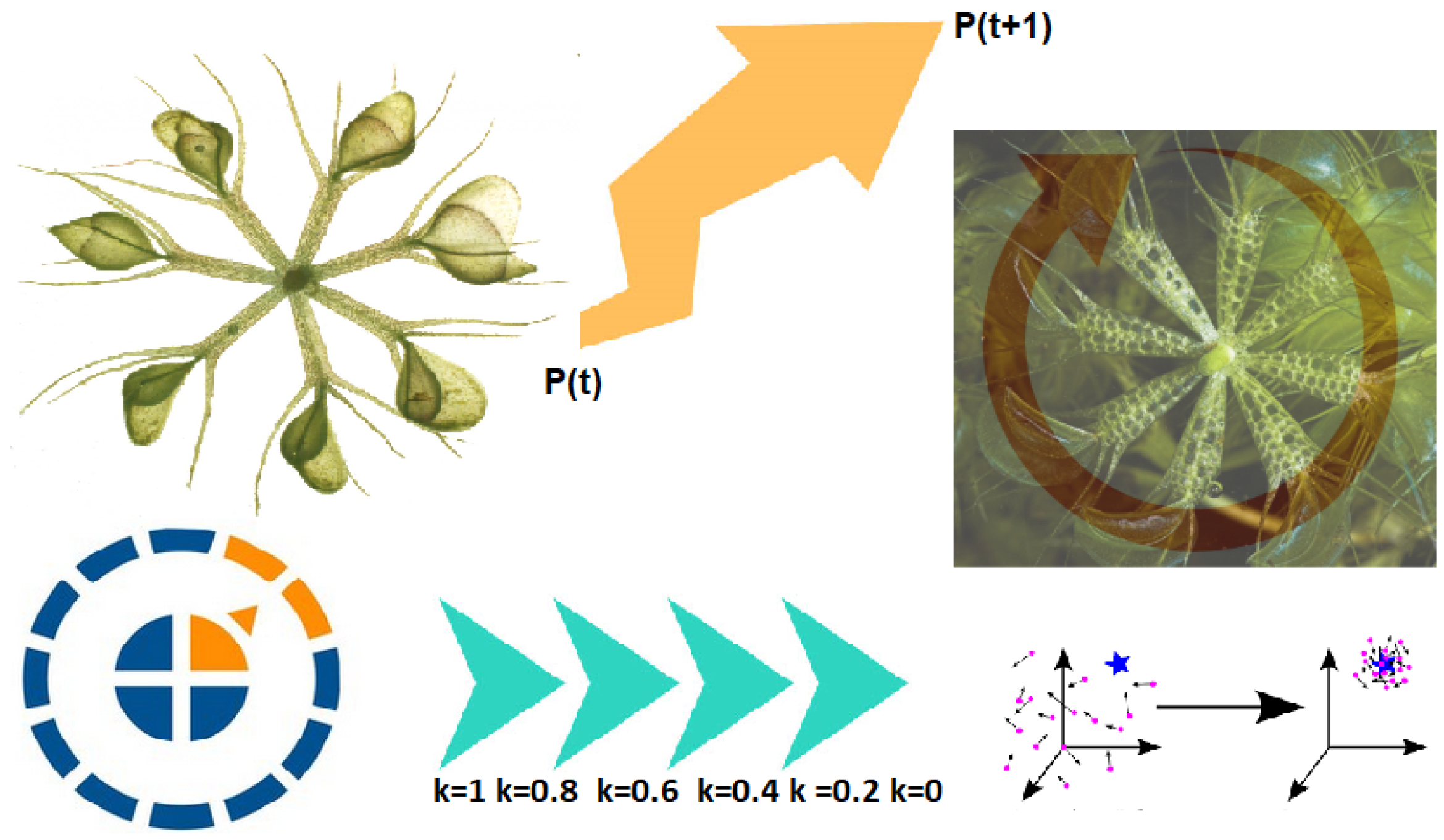

The main contribution of this paper is the design of a new metaheuristic algorithm for addressing optimization problems in various scientific disciplines, called the Waterwheel Plant Algorithm (WWPA). The following are the most significant contributions of this work:

Modeling the natural behavior of waterwheel plants inspired the development of WWPA.

The method used by waterwheel plants to locate their insect food, capture it, and then move it to a more convenient location before devouring it inspired the essential idea of WWPA.

We provide a mathematical model of the WWPA implementation processes throughout the two exploration and exploitation stages.

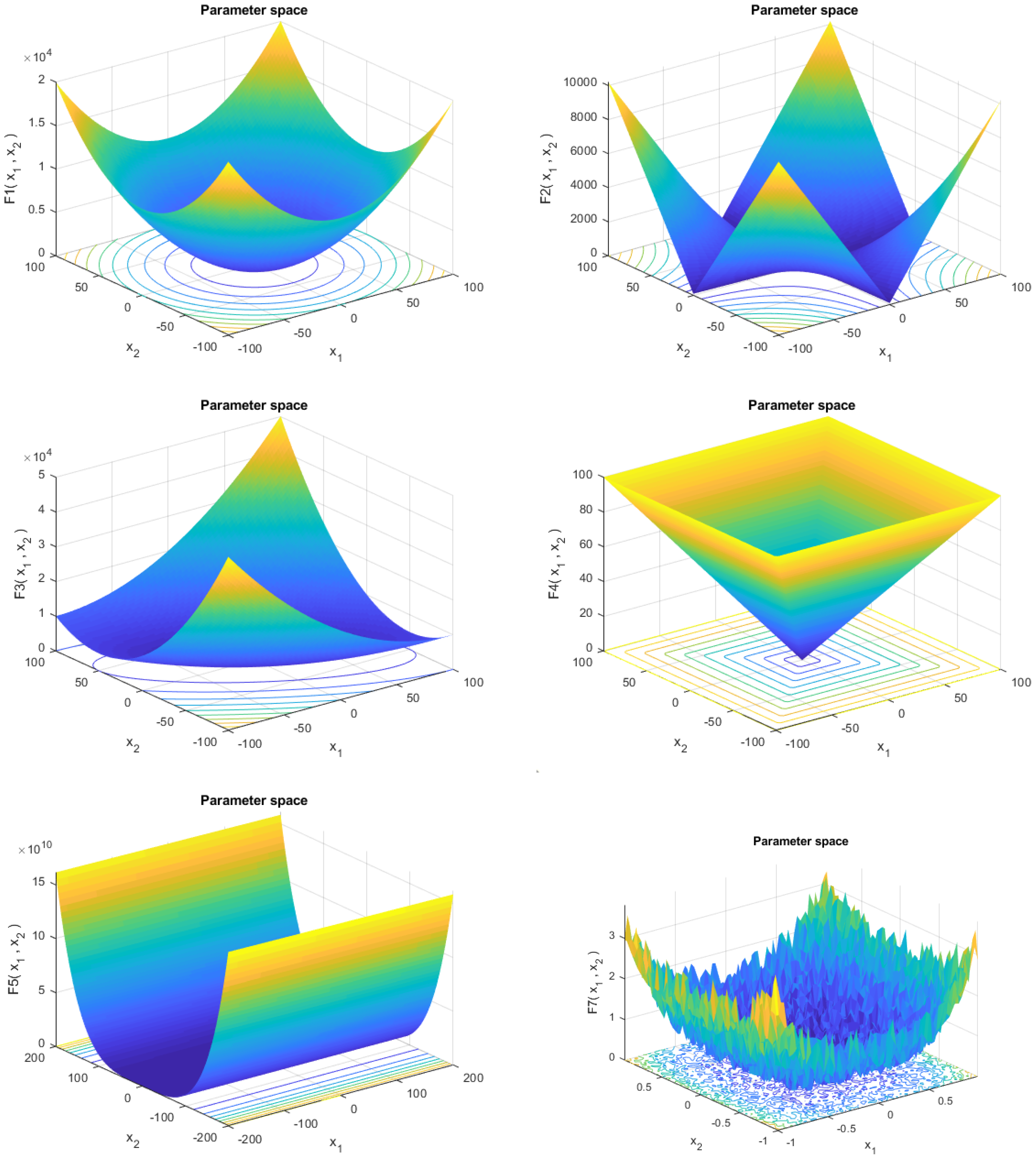

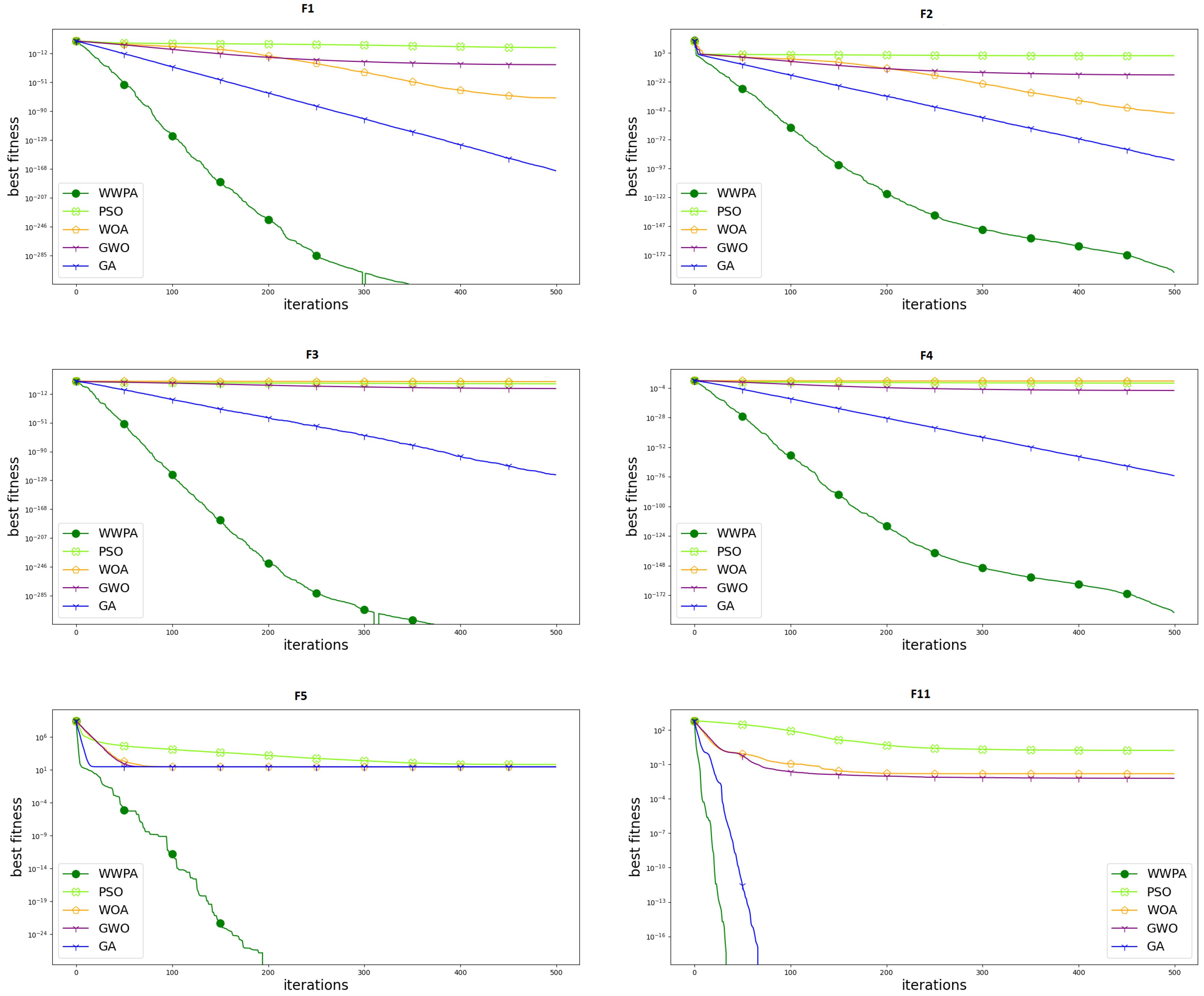

Twenty-three benchmark functions were used to measure WWPA’s efficiency in various optimization tasks.

Three engineering problems were considered in evaluating the effectiveness of the proposed WWPA.

Well-known algorithms were used as benchmarks against which the proposed WWPA method was evaluated.

A statistical analysis was performed to confirm the significant difference of the proposed approach when compared with the other competitor algorithms.

The rest of the paper is organized as follows: Section 2 presents the literature review. Section 3 introduces the proposed Waterwheel Plant Algorithm (WWPA) and its mathematical model. Section 4 describes the simulation and effectiveness studies on optimization problems and assesses how well the proposed WWPA handles practical problems. Section 5 summarizes the results and offers suggestions for further research.

2. Literature Review

Practical problems typically have a complicated search space with a large number of local optima. As a result, an optimization method is more likely to converge prematurely and become trapped in a local optimum. Many optimization algorithms attempt to address this problem by employing methods that increase the diversity of the population. These methods help to avoid local optima, although convergence performance may suffer. Consequently, creating a powerful metaheuristic algorithm for optimization requires striking a balance between exploration and exploitation. With this balance, the algorithm’s convergence speed is improved and the search space is explored more thoroughly, allowing local optima to be avoided. Metaheuristic algorithms draw inspiration from various sources, including evolutionary processes, natural phenomena, animal life in nature, biological sciences, physics, game rules, and human relationships.

Natural swarming phenomena, such as those seen in insects, fish, birds, mammals, and plants, have inspired the development of new metaheuristic algorithms that use swarm intelligence to solve problems. Metaheuristic algorithms can be categorized into five classes based on the source of inspiration used in their development: swarm-based, evolutionary-based, physics-based, human-based, and game-based. The most well-known swarm-based algorithms include Particle Swarm Optimization (PSO) [21], Ant Colony Optimization (ACO) [22], and Artificial Bee Colony (ABC) [23,24]. The design of PSO is based on the analogy of animal flocks foraging for food. The ability of ants to find the shortest route from their colony to a food source inspired the development of ACO. The design of ABC is based on a simulation of the behavior of foraging bee colonies. Other swarm-based algorithms include Golden Jackal Optimization (GJO) [25], Coati Optimization Algorithm (COA) [26], Marine Predator Algorithm (MPA) [27], and Mountain Gazelle Optimizer (MGO) [28].

The biological sciences, genetics, Darwin’s theory of evolution, survival of the fittest, and natural selection inspired the development of evolutionary-based metaheuristic algorithms. Some of the most well-known evolutionary-based methods are Genetic Algorithm (GA) [29] and Differential Evolution (DE) [30]. These approaches are built on models of the reproductive process and use the stochastic operators of selection, crossover, and mutation. Artificial Immune Systems (AISs) are designed using models of how the human immune system fights off infections and microorganisms [31]. Cultural Algorithm (CA) [32], Evolution Strategy (ES) [33], and Genetic Programming (GP) [32] are further examples of evolutionary-based metaheuristic algorithms [34,35].

Physics-based metaheuristic algorithms are motivated by physical phenomena, forces, laws, and other concepts. One of the most well-known physics-based strategies is Simulated Annealing (SA) [36]. SA was developed by modeling the metal annealing process, in which the metal is melted under heat and then slowly cooled to form a perfect crystal. Several algorithms that take their inspiration from Newton’s laws of motion and physical forces have also been developed. These include Spring Search Algorithm (SSA) [37], which uses the tension force of a spring and Hooke’s law; Momentum Search Algorithm (MSA) [38]; and Gravitational Search Algorithm (GSA) [39].
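The annealing analogy behind SA can be made concrete with a short sketch. The code below is a generic textbook form of SA rather than the formulation of [36]: it accepts a worse candidate with the Metropolis probability exp(-delta / T) and lowers the temperature T geometrically. The function names, the cooling factor, the step size, and the quadratic test objective are all assumptions made for illustration.

```python
import math
import random


def acceptance_probability(delta: float, temperature: float) -> float:
    """Metropolis rule: always accept improvements, sometimes accept worse moves."""
    if delta <= 0.0:
        return 1.0
    return math.exp(-delta / temperature)


def simulated_annealing(objective, x0, t0=10.0, cooling=0.95,
                        steps=2000, step_size=0.5, seed=0):
    """Minimize a 1-D objective; all default parameters are illustrative."""
    rng = random.Random(seed)
    x, fx = x0, objective(x0)
    best_x, best_fx = x, fx
    temperature = t0
    for _ in range(steps):
        candidate = x + rng.uniform(-step_size, step_size)
        f_candidate = objective(candidate)
        if rng.random() < acceptance_probability(f_candidate - fx, temperature):
            x, fx = candidate, f_candidate
            if fx < best_fx:
                best_x, best_fx = x, fx
        # Geometric cooling; the floor avoids division by zero.
        temperature = max(temperature * cooling, 1e-12)
    return best_x, best_fx


def shifted_quadratic(x: float) -> float:
    """Hypothetical test objective with its minimum at x = 2."""
    return (x - 2.0) ** 2


best_x, best_fx = simulated_annealing(shifted_quadratic, x0=-5.0)
print(f"best x = {best_x:.3f}, f(best x) = {best_fx:.5f}")
```

At high temperature almost any move is accepted, which favors exploration; as the temperature falls, the rule becomes nearly greedy, which favors exploitation.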

Water Cycle Algorithm (WCA) was developed to simulate the many physical changes in the natural water cycle [40]. Multi-Verse Optimizer (MVO) [41], Archimedes Optimization Algorithm (AOA) [42], Equilibrium Optimizer (EO) [43], Electro-Magnetism Optimization (EMO) [44], Nuclear Reaction Optimization (NRO) [45], and Lichtenberg Algorithm (LA) [46] are some well-known metaheuristics proposed in recent years.

Human-based metaheuristic algorithms take their cues from human behavior in areas such as communication, thinking, and social interaction. The most popular human-based strategy is Teaching–Learning-Based Optimization (TLBO) [47]. The design inspiration for TLBO came from observing classroom interactions between teachers and their students. The central concept of Poor and Rich Optimization (PRO) is that economically disadvantaged and privileged social groups can work together to improve their economic standing [48,49].

Examples of other human-based metaheuristic algorithms include Gaining–Sharing Knowledge-based algorithm (GSK) [50], War Strategy Optimization (WSO) [51], Teamwork Optimization Algorithm (TOA) [52], Coronavirus Herd Immunity Optimizer (CHIO) [53], Driving Training-Based Optimization (DTBO) [54], and Ali Baba and the Forty Thieves (AFT) [55,56]. The strategies of players, coaches, and officials, as well as the regulations of various games, have inspired the creation of game-based metaheuristic algorithms. Volleyball Premier League (VPL) [57,58] and Football Game-Based Optimization (FGBO) [59] are examples of algorithms whose central idea is the mathematical modeling of competitions in various game leagues.

Multiple metaheuristic algorithms have been proposed in recent years, with each employing a unique strategy for overcoming these problems. A contemporary example of a nature-inspired metaheuristic is Butterfly Optimization Algorithm (BOA) [60]. BOA mimics the behavior of butterflies searching for food and mates. BOA’s exploration and exploitation methods are relatively straightforward. In BOA, a butterfly can either move randomly in the search space to accomplish exploration or move directly toward the best butterfly to accomplish exploitation. A switch probability determines the relative weights of exploration and exploitation. BOA was validated on standard benchmark functions and engineering design problems, and its results and performance were positive overall.

Stochastic Fractal Search (SFS) is a relatively new metaheuristic that takes its cues from fractals in nature [61]. During the optimization phase, SFS primarily uses diffusion and update processes. The diffusion process ensures that the search space is exploited, whereas the update process broadens the search. In addition, SFS employs Levy flight and Gaussian methods to generate new particles [62,63]. These methods can speed up the algorithm’s convergence rate. SFS showed good performance and robust exploratory capabilities in tests on both constrained and unconstrained standard benchmark functions.

Whale Optimization Algorithm (WOA) [64] employs several methods to accomplish exploration and exploitation. Some solutions move around a randomly chosen solution to enhance exploration, whereas others spiral toward the best solution to achieve exploitation. WOA relies on two adaptive parameters to balance exploration and exploitation. WOA has been rigorously tested and verified on standard benchmark functions and constrained engineering design problems.
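The switch-probability idea described above for BOA can be sketched in a few lines. The code below is a deliberately simplified illustration and not the BOA update rule of [60], which also involves a fragrance term omitted here. The function `switch_step` and its parameters `switch_prob` and `step_size` are hypothetical names introduced for this sketch.

```python
import random


def switch_step(position, best, switch_prob, step_size, rng):
    """Return a new position for one agent (simplified, assumed update rule).

    With probability switch_prob the agent moves a random fraction of the way
    toward the best-known position (exploitation); otherwise it takes a small
    uniform random step around its current position (exploration).
    """
    if rng.random() < switch_prob:
        fraction = rng.random()
        return [x + fraction * (b - x) for x, b in zip(position, best)]
    return [x + rng.uniform(-step_size, step_size) for x in position]


rng = random.Random(1)
position = [0.0, 4.0]
best = [1.0, 2.0]

exploited = switch_step(position, best, switch_prob=1.0, step_size=0.5, rng=rng)
explored = switch_step(position, best, switch_prob=0.0, step_size=0.5, rng=rng)
print("exploitation move:", exploited)
print("exploration move: ", explored)
```

Setting `switch_prob` close to 1 makes the search greedy, while values close to 0 turn it into a random walk; intermediate values trade one behavior off against the other.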

Stochastic Paint Optimizer (SPO) [65] is an optimization technique inspired by art. SPO is a population-based optimizer that draws inspiration from the beauty of color and the process of painting. To identify the ideal color, SPO treats the search space as a canvas and applies several color combinations. Four straightforward color combination rules, which require no internal parameters, provide strong exploration and exploitation. Well-known mathematical benchmark functions were used to assess the algorithm’s performance, and the results were compared with those of recent, well-studied methods to confirm the accuracy of the findings. In [66], the authors developed a multi-objective version of this method for global engineering problems. Features such as an external archive of a specified size set the suggested method apart from the original SPO. Moreover, this method provides a leader selection function for performing multi-objective optimization. Adding chaos to the framework of metaheuristic algorithms is an effective way to increase their performance. In [67], ten chaotic maps are used to introduce chaos into SPO. The primary contributions of that research are the proposal of chaotic versions of SPO and the identification of the best one. The analysis of certain mathematical and engineering problems revealed that some chaotic SPO variants outperform the standard SPO.

In addition, several extensions of WOA have been developed and used for a wide range of optimization problems. Harris Hawks Optimization (HHO) [68] is a recent optimizer that takes its cues from the hunting behavior of hawks. HHO employs four tactics to ambush its target during the exploitation stage. During the exploration stage, it imitates the way hawks perch in various places, waiting for the right moment to strike. HHO uses an adaptive equation similar to WOA’s to switch between the exploration and exploitation phases. To verify its reliability, HHO was rigorously tested on various benchmark functions and constrained engineering design problems. HHO was shown to be both competitive and promising.

Many researchers have recently developed hybrid optimization algorithms, which combine the best features of two or more optimization techniques to address the shortcomings of using only one [69]. For example, in [70,71], a novel hybrid optimizer called PSOSCA was developed by combining the PSO algorithm with Sine Cosine Algorithm and the Levy flight technique. The Levy flight strategy uses random walks to expand the search area. These random walks ensure that a large part of the search space is covered and that local optima are avoided more effectively. Sine Cosine Algorithm (SCA) [72,73] improves PSO’s ability to explore and exploit new areas through its position update equations. Test results show that PSOSCA outperforms most PSO variants. Standard benchmark functions and real-world constrained engineering problems were used to verify the efficacy of PSOSCA.
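To make the Levy flight idea concrete, the sketch below draws heavy-tailed step lengths with Mantegna's algorithm, a common way of approximating Levy-stable steps. The source does not state how PSOSCA generates its Levy steps, so both the choice of Mantegna's algorithm and the stability index beta = 1.5 are assumptions made here for illustration.

```python
import math
import random


def mantegna_sigma(beta: float) -> float:
    """Scale of the numerator Gaussian in Mantegna's algorithm."""
    numerator = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    denominator = (math.gamma((1.0 + beta) / 2.0) * beta
                   * 2.0 ** ((beta - 1.0) / 2.0))
    return (numerator / denominator) ** (1.0 / beta)


def levy_step(beta: float, rng: random.Random) -> float:
    """Draw one step: u / |v|^(1/beta), u ~ N(0, sigma_u^2), v ~ N(0, 1)."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # Guard against a (vanishingly unlikely) zero divisor.
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1.0 / beta)


rng = random.Random(42)
steps = [levy_step(1.5, rng) for _ in range(1000)]
print(f"sigma_u for beta = 1.5: {mantegna_sigma(1.5):.4f}")
print(f"largest |step| in the sample: {max(abs(s) for s in steps):.2f}")
```

Most steps drawn this way are small, but occasional very large jumps occur, which is what lets a Levy-flight search leave the neighborhood of a local optimum.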

In addition to the previous optimization algorithms, recent efforts have led to the emergence of more advanced algorithms. These algorithms include Keshtel Algorithm (KA) [74] and its application in [75], Social Engineering Optimizer (SEO) [76] and its application in [77], Red Deer Algorithm (RDA) [78] and its application in [79], and the tabu search-based hybrid metaheuristic approach [80]. Despite the promising performance achieved by these algorithms, according to the No Free Lunch theorem, there is an opportunity to develop more algorithms to improve the overall performance of optimizing machine learning models for various applications.

An examination of current optimization techniques reveals that no metaheuristic algorithm is based on simulating the natural behavior of waterwheel plants. The hunting behavior of these plants has been studied, and the results indicate that it is an intelligent process with significant potential for use in developing a new optimizer. To fill this gap, this study develops a new swarm-based metaheuristic method, presented in the next section, that mathematically simulates the natural behaviors of waterwheel plants. The proposed approach, Waterwheel Plant Algorithm (WWPA), takes its cues from the coordinated efforts of swarms of individual organisms working toward a common objective. WWPA seeks a middle ground between guaranteeing rapid convergence and avoiding stagnation in local optima. Methods for improving exploitation performance, striking a healthy balance between exploration and exploitation, expanding the search space, and diversifying the current population all contribute to this goal. The primary contribution of this paper is therefore a novel optimization algorithm that offers a fresh perspective on the problem space of optimization. Preliminary research indicates that WWPA is competitive with other swarm-based and evolutionary-based algorithms and can even outperform them. The efficacy of the proposed algorithm was further tested and confirmed on real-world constrained engineering design problems.

5. Conclusions and Future Perspectives

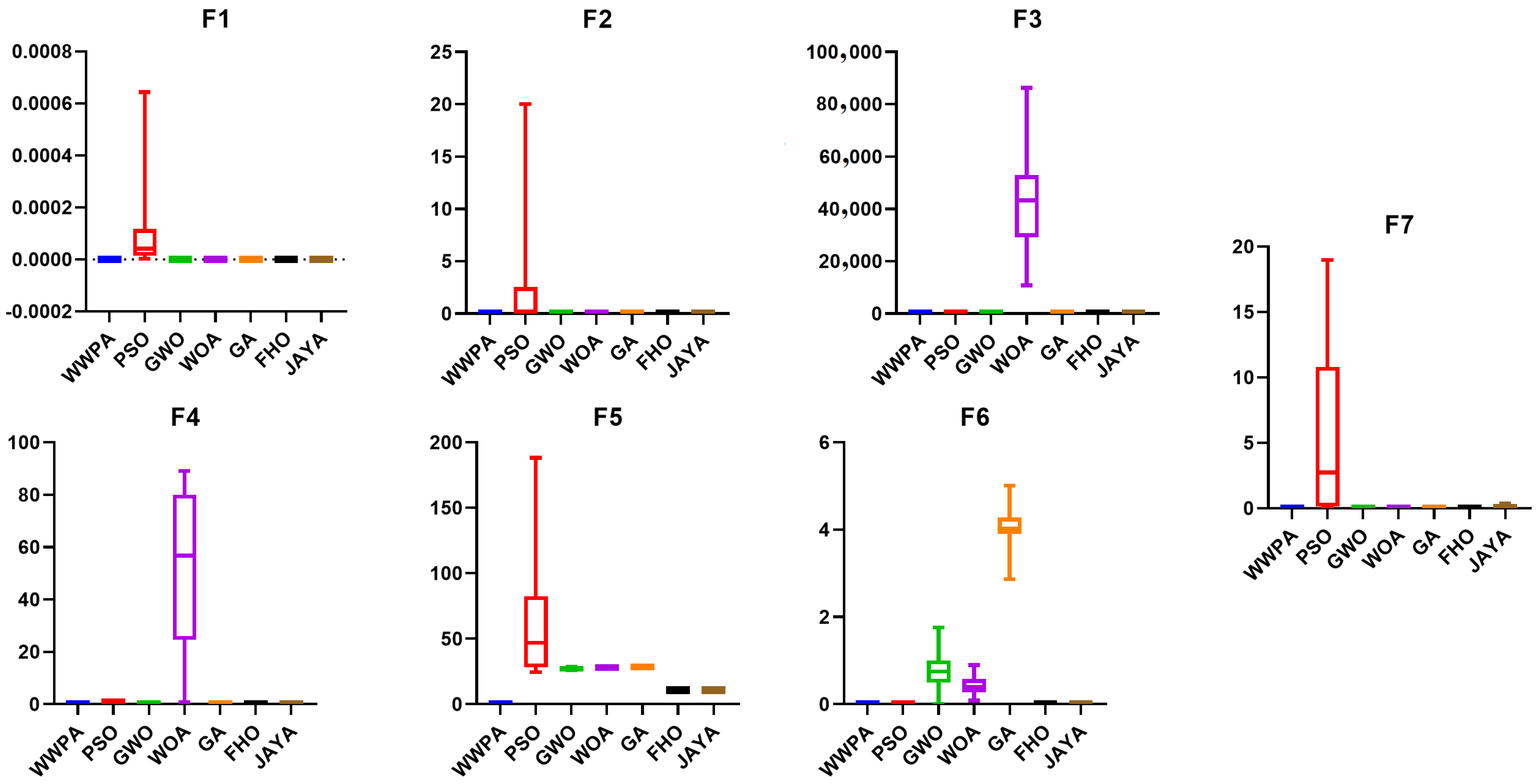

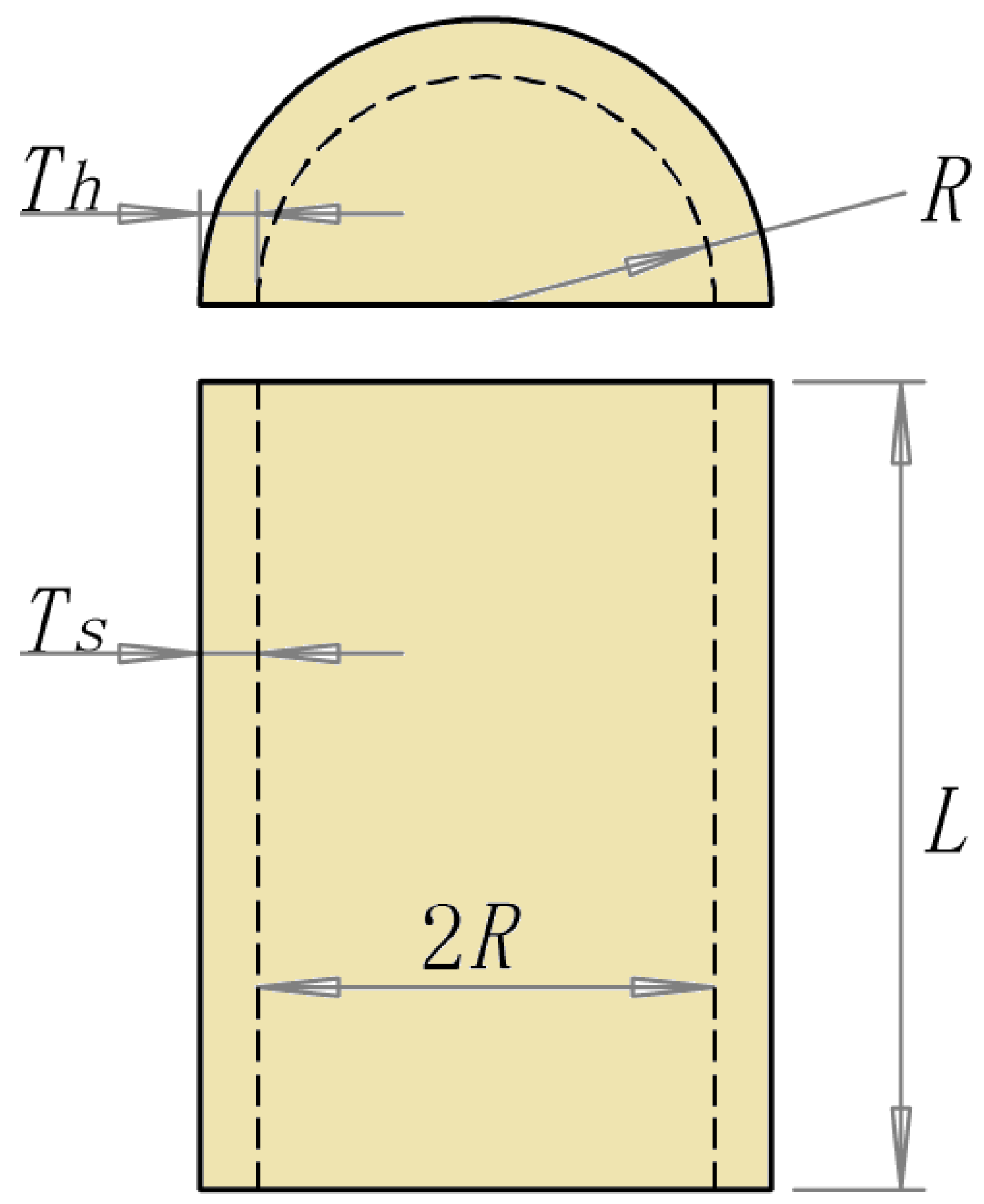

In this study, we introduced the Waterwheel Plant Algorithm (WWPA), a novel swarm-based optimization technique. The proposed WWPA draws heavily on the tactics and actions of waterwheel plants when they hunt. Following an explanation of how WWPA works, a mathematical model that can be used to solve optimization problems was presented. Twenty-three objective functions from the categories of unimodal, high-dimensional multimodal, and fixed-dimensional multimodal were used to evaluate the effectiveness of the proposed method. The capabilities of the proposed algorithm were further examined by comparing the optimization results obtained by WWPA with those provided by seven other well-known algorithms: PSO, DE, WOA, GWO, GA, FHO, and JAYA. The optimization results for the unimodal functions show that the proposed WWPA has strong exploitation ability in converging to the global optimal solution. The simulation results for these functions demonstrate that WWPA outperformed the seven other algorithms by a large margin on unimodal problems. The simulation results for the multimodal functions show that the proposed WWPA has strong exploration capability, allowing it to scan the search space and efficiently locate the optimal region. WWPA was also superior to the seven competing algorithms on multimodal optimization problems. Overall, the simulation results show that the proposed WWPA outperformed the other methods by a wide margin in solving optimization problems. We also used WWPA to solve the design problems of a pressure vessel, a speed reducer, a welded beam, and a tension/compression spring. The simulation results demonstrate that WWPA performed well on these real-world design problems.

The authors suggest several avenues for future investigation. The proposed methodology could pave the way for binary and multi-objective variants of WWPA, among other areas of study. In addition, future research could use WWPA to address optimization problems in a wide range of scientific disciplines and real-world contexts. Feature selection, data mining, COVID-19 modeling, big data, artificial intelligence, power systems, machine learning, signal denoising, wireless sensor networks, image processing, and other benchmark tasks are just some of the many areas where this approach could be applied. New optimizers that perform better than WWPA in some real-world applications may be created in the future; this is a drawback shared by all stochastic optimization approaches, including the proposed WWPA. In addition, because of the stochastic nature of the solution approach, the solutions obtained with WWPA cannot be guaranteed to be exactly equal to the global optimum.