5. Research Methodology

Our research question is whether interaction time and usability differ between controllers and hand tracking in VR medical training. We designed the experiment around this question and built the VR application with both interaction methods so that they could be compared on interaction time and usability.

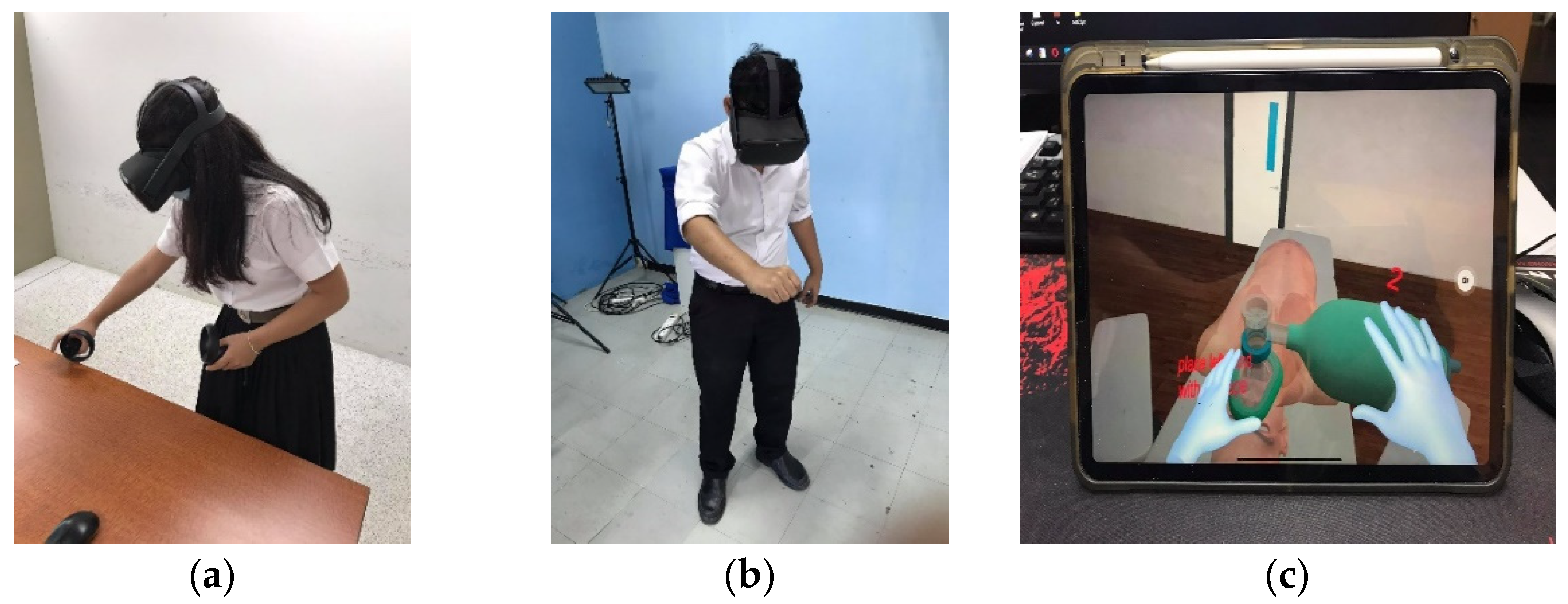

In our experiment, 30 medical students volunteered and participated in the study after providing informed consent. Twenty-eight participants had never used a VR headset before, and two had used one a few times. All were third-year undergraduate medical students taking part in clinical training at Walailak University, Nakhon Si Thammarat, Thailand. We divided the participants into two groups of 15 students each: a VR controller group and a VR hand-tracking group. The protocol was the same for both groups; only the interaction method differed. The VR controller group used the controllers for interactions, while the VR hand-tracking group used hand gestures.

For the selection process, we scheduled the hand-tracking group on one Wednesday afternoon and the controller group on another Wednesday afternoon, with 15 time slots each. Next, we demonstrated how to perform the VR training with both methods to all 48 third-year medical students. According to their curriculum, they had enough knowledge and skills to undertake intubation training but had not yet been trained on this subject. Then, these 48 students could each select one of the 30 time slots according to their preference and availability. Sign-up for each group closed once all 15 of its slots had been taken on a first-come, first-served basis.
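The sign-up procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Session` class, the `enroll` function, the student identifiers, and the arrival order are all hypothetical and are not taken from the study.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """One afternoon session for a single interaction method (hypothetical model)."""
    interaction: str
    capacity: int = 15                      # 15 time slots per group, as in the text
    booked: list = field(default_factory=list)

    def sign_up(self, student_id: str) -> bool:
        """Book the next free slot; return False once the session is full."""
        if len(self.booked) >= self.capacity:
            return False
        self.booked.append(student_id)
        return True


def enroll(requests, sessions):
    """Process (student_id, interaction) requests strictly in arrival order."""
    enrolled = set()
    for student_id, interaction in requests:
        if student_id in enrolled:
            continue                        # each student takes at most one slot
        if sessions[interaction].sign_up(student_id):
            enrolled.add(student_id)
    return sessions


sessions = {
    "hand_tracking": Session("hand_tracking"),
    "controller": Session("controller"),
}
# Hypothetical arrival order: 48 students alternating between the two sessions.
requests = [
    (f"S{i:02d}", "controller" if i % 2 else "hand_tracking")
    for i in range(1, 49)
]
enroll(requests, sessions)
print({name: len(s.booked) for name, s in sessions.items()})
```

In this sketch each session stops accepting students at 15, so 30 of the 48 students end up enrolled, and group membership follows the students' own choice of time slot.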

The interaction time of each procedure was measured by a timekeeper in the VR application and displayed to the user when the procedure was completed, allowing us to assess the differences between the two VR interactions. Usability and satisfaction were assessed using the System Usability Scale (SUS) [31,32] and the USE Questionnaire (USEQ) [33,34], both administered as 5-point Likert-scale questionnaires. The SUS was used to evaluate the usability of VR applications, while the USEQ was used to assess usefulness, ease of use, ease of learning, and satisfaction.
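For readers unfamiliar with SUS scoring, the following minimal sketch shows the standard calculation: each odd-numbered item contributes its rating minus 1, each even-numbered item contributes 5 minus its rating, and the sum is multiplied by 2.5 to give a score from 0 to 100. The function name `sus_score` and the example ratings are hypothetical; the ratings are not data from this study, and the sketch is not necessarily the exact scoring script used by the authors.

```python
def sus_score(ratings):
    """Return the 0-100 SUS score for one participant's ten 1-5 ratings."""
    if len(ratings) != 10 or any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("SUS needs ten ratings, each an integer from 1 to 5")
    total = 0
    for item_number, rating in enumerate(ratings, start=1):
        if item_number % 2 == 1:
            total += rating - 1     # odd items are positively worded
        else:
            total += 5 - rating     # even items are negatively worded
    return total * 2.5


# Hypothetical ratings for a single participant (illustration only).
example = [4, 2, 5, 1, 4, 2, 4, 2, 5, 1]
print(sus_score(example))  # prints 85.0
```

A group-level SUS value would then typically be the mean of the per-participant scores in that group.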

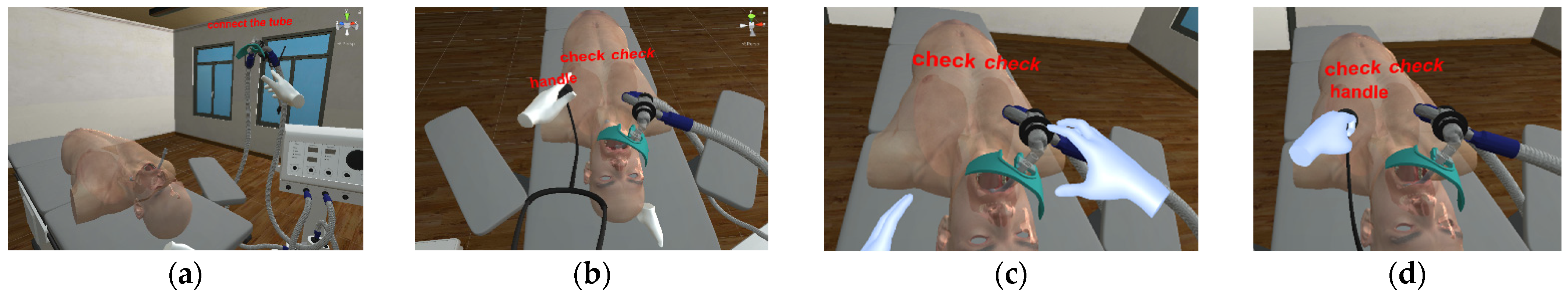

Before training in VR, the basic commands of the VR application were introduced to all participants. The VR controller group learned how to use a VR headset with controllers, while the other group learned how to use hand gestures for interactions. At the beginning of the experiment, all participants in both groups watched a video on endotracheal intubation for approximately 10 min to understand the basic training procedures. Then, each group was tested with the same procedures in VR intubation training, using either controllers or hand tracking. After a participant finished all procedures, the interaction time measurements were automatically recorded to a database. All participants then completed the evaluation by answering the SUS and USEQ questionnaires.
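The timing and recording step can be illustrated with a short Python sketch. It assumes a monotonic clock for the timekeeper and an SQLite table for storage; the class `ProcedureTimer`, the functions `open_log` and `record`, the table layout, and the identifiers are hypothetical and are not the implementation of the VR application.

```python
import sqlite3
import time


class ProcedureTimer:
    """Measures the elapsed time of one procedure (hypothetical timekeeper)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock          # injectable clock, monotonic by default
        self._start = None

    def start(self):
        self._start = self._clock()

    def stop(self):
        """Return the seconds elapsed since start() and reset the timer."""
        if self._start is None:
            raise RuntimeError("stop() called before start()")
        elapsed = self._clock() - self._start
        self._start = None
        return elapsed


def open_log(path=":memory:"):
    """Open the database and create the (assumed) interaction_time table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS interaction_time ("
        "participant_id TEXT, interaction TEXT, "
        "procedure_name TEXT, seconds REAL)"
    )
    return conn


def record(conn, participant_id, interaction, procedure_name, seconds):
    """Store one measurement; the with-block commits the insert."""
    with conn:
        conn.execute(
            "INSERT INTO interaction_time VALUES (?, ?, ?, ?)",
            (participant_id, interaction, procedure_name, seconds),
        )


conn = open_log()
timer = ProcedureTimer()
timer.start()
# ... the participant performs one procedure here ...
seconds = timer.stop()
record(conn, "P01", "controller", "example_procedure", seconds)
```

Storing one row per participant and procedure keeps the interaction label next to each measurement, which makes a later comparison between the two groups straightforward.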

Finally, each student was interviewed individually by our experiment crew for about 10 min. The interview consisted of 19 questions concerning emotional, instrumental, and motivational experiences. The questions asked about feelings and opinions on the experiment as well as suggestions for the development of VR medical training in general. The interviews provided information for further development and explored factors affecting VR usability and satisfaction beyond those covered by the questionnaires.

Author Contributions

Conceptualization, C.K. and F.N.; methodology, C.K. and V.V.; software, C.K.; validation, C.K. and V.V.; formal analysis, C.K.; investigation, P.P. and W.H.; resources, C.K., P.P. and W.H.; data curation, C.K., P.P. and W.H.; writing—original draft preparation, C.K. and V.V.; writing—review and editing, C.K., V.V. and F.N.; visualization, C.K., P.P. and W.H.; supervision, F.N.; project administration, C.K.; funding acquisition, C.K. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Walailak University Research Fund, contract number WU62245.

Institutional Review Board Statement

The study was conducted according to the guidelines of the Declaration of Helsinki, and approved by the Human Research Ethics Committee of Walailak University (approval number WUEC-20-031-01 on 28 January 2021).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author, upon reasonable request.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Kamińska, D.; Sapiński, T.; Wiak, S.; Tikk, T.; Haamer, R.E.; Avots, E.; Helmi, A.; Ozcinar, C.; Anbarjafari, G. Virtual reality and its applications in education: Survey. Information 2019, 10, 318.

- Radianti, J.; Majchrzak, T.A.; Fromm, J.; Wohlgenannt, I. A systematic review of immersive virtual reality applications for higher education: Design elements, lessons learned, and research agenda. Comput. Educ. 2020, 147, 103778.

- Khundam, C.; Nöel, F. A Study of Physical Fitness and Enjoyment on Virtual Running for Exergames. Int. J. Comput. Games Technol. 2021, 2021, 1–16.

- Taketomi, T.; Uchiyama, H.; Ikeda, S. Visual SLAM algorithms: A survey from 2010 to 2016. IPSJ Trans. Comput. Vis. Appl. 2017, 9, 1–11.

- Tracking Technology Explained: LED Matching [Internet]. Oculus.com. Available online: https://developer.oculus.com/blog/tracking-technology-explained-led-matching/ (accessed on 1 September 2021).

- Tran, D.S.; Ho, N.H.; Yang, H.J.; Baek, E.T.; Kim, S.H.; Lee, G. Real-time hand gesture spotting and recognition using RGB-D camera and 3D convolutional neural network. Appl. Sci. 2020, 10, 722.

- Wang, J.; Mueller, F.; Bernard, F.; Sorli, S.; Sotnychenko, O.; Qian, N.; Otaduy, M.A.; Casas, D.; Theobalt, C. Rgb2hands: Real-time tracking of 3d hand interactions from monocular rgb video. ACM Trans. Graph. (TOG) 2020, 39, 1–16.

- Yuan, S.; Garcia-Hernando, G.; Stenger, B.; Moon, G.; Chang, J.Y.; Lee, K.M.; Molchanov, P.; Kautz, J.; Honari, S.; Kim, T.K.; et al. Depth-based 3d hand pose estimation: From current achievements to future goals. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 2636–2645.

- Tagliasacchi, A.; Schröder, M.; Tkach, A.; Bouaziz, S.; Botsch, M.; Pauly, M. Robust articulated-icp for real-time hand tracking. In Computer Graphics Forum; Wiley: Hoboken, NJ, USA, 2015; Volume 34, pp. 101–114.

- Aditya, K.; Chacko, P.; Kumari, D.; Kumari, D.; Bilgaiyan, S. Recent trends in HCI: A survey on data glove, LEAP motion and microsoft kinect. In Proceedings of the 2018 IEEE International Conference on System, Computation, Automation and Networking (ICSCA), Pondicherry, India, 6–7 July 2018; pp. 1–5.

- Buckingham, G. Hand tracking for immersive virtual reality: Opportunities and challenges. arXiv 2021, arXiv:2103.14853.

- Angelov, V.; Petkov, E.; Shipkovenski, G.; Kalushkov, T. Modern virtual reality headsets. In Proceedings of the 2020 International Congress on Human-Computer Interaction, Optimization and Robotic Applications (HORA), Ankara, Turkey, 26–27 June 2020; pp. 1–5.

- Oudah, M.; Al-Naji, A.; Chahl, J. Hand gesture recognition based on computer vision: A review of techniques. J. Imaging 2020, 6, 73.

- Lin, W.; Du, L.; Harris-Adamson, C.; Barr, A.; Rempel, D. Design of hand gestures for manipulating objects in virtual reality. In Proceedings of the International Conference on Human-Computer Interaction, Vancouver, BC, Canada, 9–14 July 2017; pp. 584–592.

- Anthes, C.; García-Hernández, R.J.; Wiedemann, M.; Kranzlmüller, D. State of the art of virtual reality technology. In Proceedings of the 2016 IEEE Aerospace Conference, Big Sky, MT, USA, 5–12 March 2016; pp. 1–19.

- Guna, J.; Jakus, G.; Pogačnik, M.; Tomažič, S.; Sodnik, J. An analysis of the precision and reliability of the leap motion sensor and its suitability for static and dynamic tracking. Sensors 2014, 14, 3702–3720.

- Wozniak, P.; Vauderwange, O.; Mandal, A.; Javahiraly, N.; Curticapean, D. Possible applications of the LEAP motion controller for more interactive simulated experiments in augmented or virtual reality. In Optics Education and Outreach IV; International Society for Optics and Photonics: Washington, DC, USA, 2016; Volume 9946, p. 99460P.

- Pulijala, Y.; Ma, M.; Ayoub, A. VR surgery: Interactive virtual reality application for training oral and maxillofacial surgeons using oculus rift and leap motion. In Serious Games and Edutainment Applications; Springer: London, UK, 2017; pp. 187–202.

- Pulijala, Y.; Ma, M.; Pears, M.; Peebles, D.; Ayoub, A. An innovative virtual reality training tool for orthognathic surgery. Int. J. Oral Maxillofac. Surg. 2018, 47, 1199–1205.

- Pulijala, Y.; Ma, M.; Pears, M.; Peebles, D.; Ayoub, A. Effectiveness of immersive virtual reality in surgical training—A randomized control trial. J. Oral Maxillofac. Surg. 2018, 76, 1065–1072.

- Wang, Z.R.; Wang, P.; Xing, L.; Mei, L.P.; Zhao, J.; Zhang, T. Leap Motion-based virtual reality training for improving motor functional recovery of upper limbs and neural reorganization in subacute stroke patients. Neural Regeneration Res. 2017, 12, 1823.

- Vasylevska, K.; Podkosova, I.; Kaufmann, H. Teaching virtual reality with HTC Vive and Leap Motion. In Proceedings of the SIGGRAPH Asia 2017 Symposium on Education, Bangkok, Thailand, 27–30 November 2017; pp. 1–8.

- Obrero-Gaitán, E.; Nieto-Escamez, F.; Zagalaz-Anula, N.; Cortés-Pérez, I. An Innovative Approach for Online Neuroanatomy and Neuropathology Teaching Based on 3D Virtual Anatomical Models Using Leap Motion Controller during COVID-19 Pandemic. Front. Psychol. 2021, 12, 1853.

- Liu, H.; Zhang, Z.; Xie, X.; Zhu, Y.; Liu, Y.; Wang, Y.; Zhu, S.C. High-fidelity grasping in virtual reality using a glove-based system. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 5180–5186.

- Besnea, F.; Cismaru, S.I.; Trasculescu, A.C.; Resceanu, I.C.; Ionescu, M.; Hamdan, H.; Bizdoaca, N.G. Integration of a Haptic Glove in a Virtual Reality-Based Environment for Medical Training and Procedures. Acta Tech. Napoc.–Ser. Appl. Math. Mech. Eng. 2021, 64, 281–290.

- Fahmi, F.; Tanjung, K.; Nainggolan, F.; Siregar, B.; Mubarakah, N.; Zarlis, M. Comparison study of user experience between virtual reality controllers, leap motion controllers, and senso glove for anatomy learning systems in a virtual reality environment. In Proceedings of the IOP Conference Series: Materials Science and Engineering, Chennai, India, 16–17 September 2020; Volume 851, p. 012024.

- Gunawardane, H.; Medagedara, N.T. Comparison of hand gesture inputs of leap motion controller & data glove in to a soft finger. In Proceedings of the 2017 IEEE International Symposium on Robotics and Intelligent Sensors (IRIS), Ottawa, ON, Canada, 5–7 October 2017; pp. 62–68.

- LaViola, J.J., Jr.; Kruijff, E.; McMahan, R.P.; Bowman, D.; Poupyrev, I.P. 3D User Interfaces: Theory and Practice; Addison-Wesley Professional: Boston, MA, USA, 2017.

- Map Controllers [Internet]. Oculus.com. Available online: https://developer.oculus.com/documentation/unity/unity-ovrinput (accessed on 1 September 2021).

- Hand Tracking in Unity [Internet]. Oculus.com. Available online: https://developer.oculus.com/documentation/unity/unity-handtracking/ (accessed on 1 September 2021).

- Bangor, A.; Kortum, P.T.; Miller, J.T. An empirical evaluation of the system usability scale. Int. J. Hum.-Comput. Interact. 2008, 24, 574–594.

- Brooke, J. SUS: A retrospective. J. Usability Stud. 2013, 8, 29–40.

- Lund, A.M. Measuring usability with the USE questionnaire. Usability Interface 2001, 8, 3–6.

- Gil-Gómez, J.A.; Manzano-Hernández, P.; Albiol-Pérez, S.; Aula-Valero, C.; Gil-Gómez, H.; Lozano-Quilis, J.A. USEQ: A short questionnaire for satisfaction evaluation of virtual rehabilitation systems. Sensors 2017, 17, 1589.

- Webster, R.; Dues, J.F. System Usability Scale (SUS): Oculus Rift® DK2 and Samsung Gear VR®. In Proceedings of the 2017 ASEE Annual Conference & Exposition, Columbus, OH, USA, 25–28 June 2017.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).