Parental Attitudes toward Artificial Intelligence-Driven Precision Medicine Technologies in Pediatric Healthcare

Abstract

1. Introduction

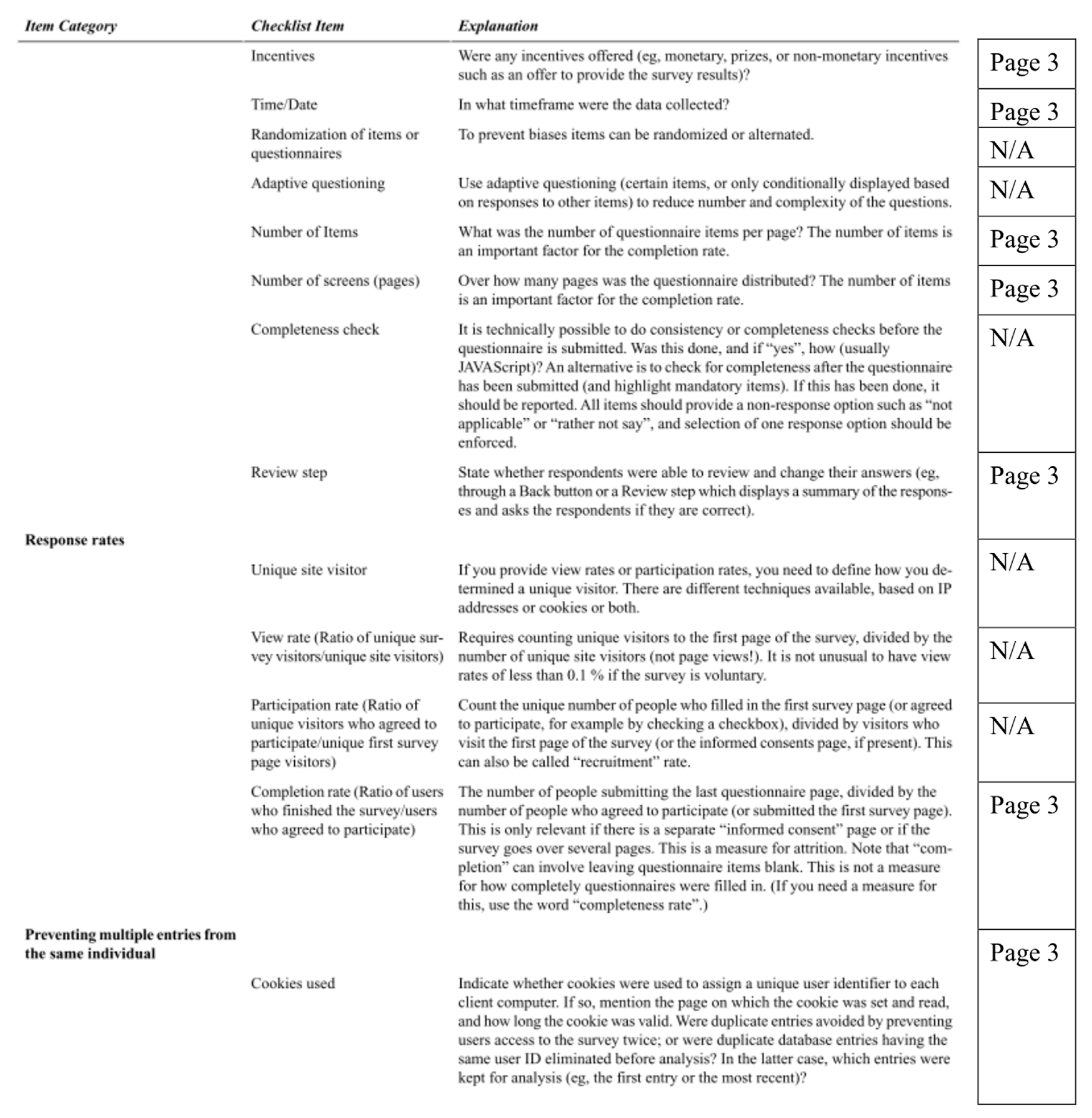

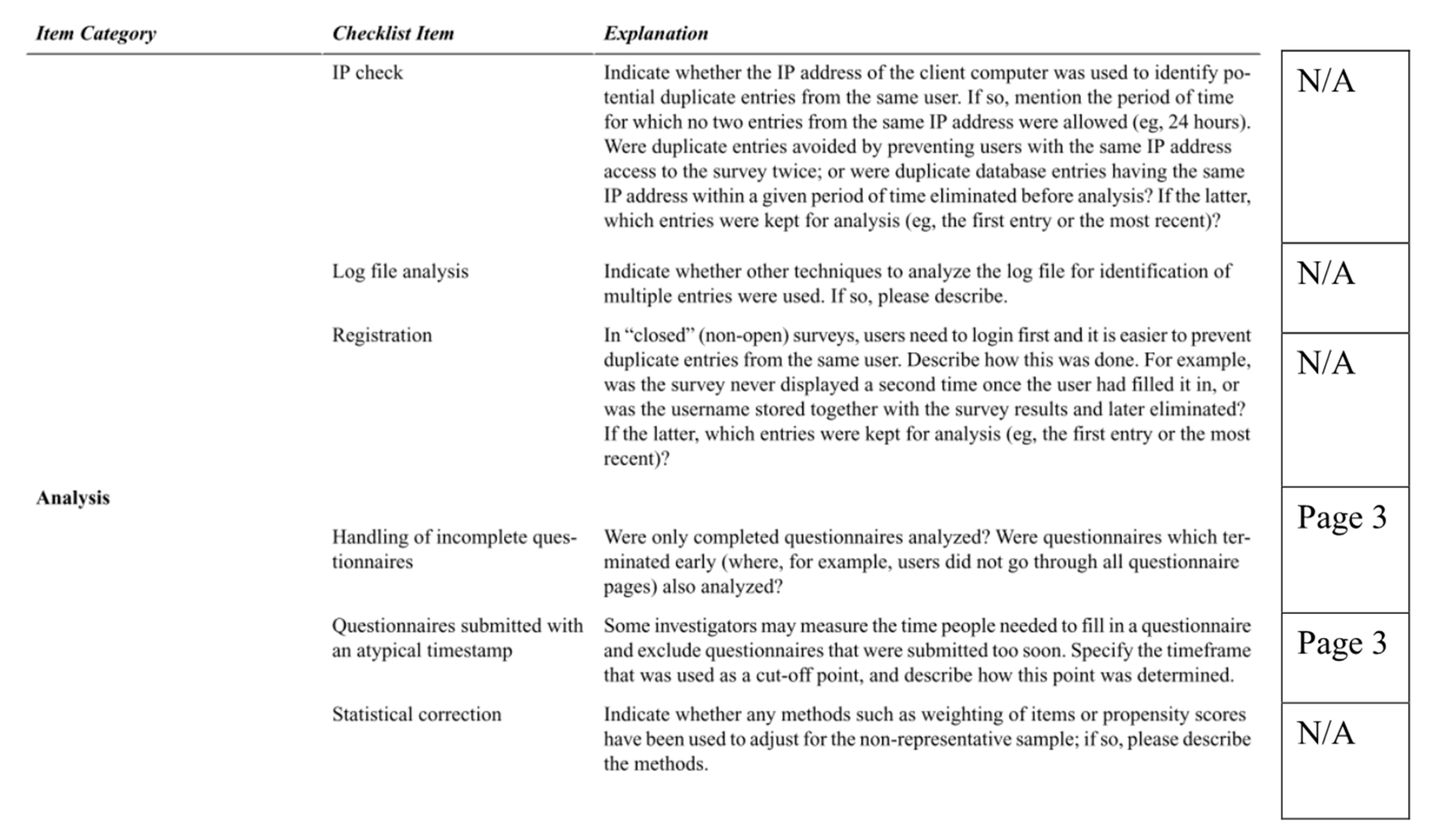

2. Materials and Methods

2.1. Item Writing

2.2. Survey Participants

2.3. Validity and Psychometric Properties of AAIH-P Measure

2.3.1. AAIH-P Openness Scale

2.3.2. Exploratory Factor Analysis

2.3.3. Confirmatory Factor Analysis

2.3.4. Measures of Sociodemographic Attributes, Attitudes, and Personality Traits

2.4. Statistical Analyses

3. Results

3.1. Participant Characteristics

3.2. Openness to AI-Driven Healthcare Interventions and Parental Concerns

3.3. Relationships between Openness on AAIH-P and Parent Concerns and Characteristics

4. Discussion

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

Appendix B. Full AAIH-P Measure with Factors Labeled

| How Open Are You to Allowing Computer Programs to Do the Following Things? | Please Rate Your Response for Each Statement | | | | |
|---|---|---|---|---|---|
| (1) Determine if your child has an ear infection. | 1 | 2 | 3 | 4 | 5 |
| (2) Determine if your child broke a bone. | 1 | 2 | 3 | 4 | 5 |
| (3) Determine if your child has cancer. | 1 | 2 | 3 | 4 | 5 |
| (4) Predict your child’s risk of developing obesity in the future. | 1 | 2 | 3 | 4 | 5 |
| (5) Predict your child’s risk of developing depression in the future. | 1 | 2 | 3 | 4 | 5 |
| (6) Predict your child’s chances of developing a disease that cannot be cured. | 1 | 2 | 3 | 4 | 5 |
| (7) Decide on the best treatment for your child’s lung infection. | 1 | 2 | 3 | 4 | 5 |
| (8) Decide how often your child needs pain medication after surgery. | 1 | 2 | 3 | 4 | 5 |
| (9) Decide the best treatment for your child’s diabetes. | 1 | 2 | 3 | 4 | 5 |
| (10) Give your child feedback on how to prevent anxiety attacks. | 1 | 2 | 3 | 4 | 5 |
| (11) Recommend if you should bring your child to the hospital after an injury. | 1 | 2 | 3 | 4 | 5 |
| (12) Give you advice on how to prevent your child’s asthma attacks. | 1 | 2 | 3 | 4 | 5 |

Response scale: 1 = Not at all open, 2 = Somewhat open, 3 = Open, 4 = Very open, 5 = Extremely open.

| When You Think of Using These New Devices, How Important Are the Following Details to You? | Please Rate Your Response for Each Statement | | | | |
|---|---|---|---|---|---|
| (1) Whether these devices help you to weigh the risks and benefits of different treatment options for your child’s illness. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (2) Whether companies that develop these devices share your child’s information with other companies. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (3) Whether these devices are available to low income families. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (4) Whether these devices allow your child’s doctor to spend more time with you during clinic visits. (Human Element) | 1 | 2 | 3 | 4 | 5 |
| (5) Whether these devices reduce medical errors. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (6) Whether you might save money by using these devices in your child’s medical care. (Cost) | 1 | 2 | 3 | 4 | 5 |
| (7) Whether these devices make it harder to have a personal relationship with your child’s doctor. (Human Element) | 1 | 2 | 3 | 4 | 5 |
| (8) Whether these devices provide information that is easy to understand. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (9) Whether families without health insurance can access these devices. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (10) Whether families in rural areas can access these devices. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (11) Whether you will have to pay extra to use these devices for your child’s medical care. (Cost) | 1 | 2 | 3 | 4 | 5 |
| (12) Whether these devices reduce the wait time to schedule an appointment with your child’s doctor. (Convenience) | 1 | 2 | 3 | 4 | 5 |
| (13) Whether these devices make it easier to have a personal relationship with your child’s doctor. (Human Element) | 1 | 2 | 3 | 4 | 5 |
| (14) Whether your child’s doctor asks you for permission before using these devices in your child’s medical care. (Shared Decision Making) | 1 | 2 | 3 | 4 | 5 |
| (15) Whether the doctor asks your opinion before prescribing the treatments recommended by these devices. (Shared Decision Making) | 1 | 2 | 3 | 4 | 5 |
| (16) Whether these devices are accurate. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (17) Whether you know how your child’s medical information will be used by the company that owns this technology. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (18) Whether you are better able to prevent your child from getting sick because of these devices. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (19) Whether you can get your child’s medical test results more quickly because of these devices. (Convenience) | 1 | 2 | 3 | 4 | 5 |
| (20) Whether your insurance costs will increase to pay for these devices. (Cost) | 1 | 2 | 3 | 4 | 5 |
| (21) Whether these devices are widely available to everyone. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (22) Whether these devices will store pictures of your child in an online database. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (23) Whether these devices can answer your healthcare questions. (Quality and Accuracy) | 1 | 2 | 3 | 4 | 5 |
| (24) Whether these devices will increase costs to the overall healthcare system. (Cost) | 1 | 2 | 3 | 4 | 5 |
| (25) Whether people living with disabilities can access these devices. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (26) Whether computer hackers could steal your child’s medical information from these devices. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (27) Whether these devices are equally accurate when used for people of different races and ethnicities. (Social Justice) | 1 | 2 | 3 | 4 | 5 |
| (28) Whether the company collects more information than it needs about your child. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (29) Whether your child’s medical information is kept private. (Privacy) | 1 | 2 | 3 | 4 | 5 |
| (30) Whether your child’s doctor tells you if these devices are used in your child’s care. (Shared Decision Making) | 1 | 2 | 3 | 4 | 5 |
| (31) Whether these devices help your child get medical care with fewer doctor visits. (Convenience) | 1 | 2 | 3 | 4 | 5 |
| (32) Whether the doctor orders the treatments recommended by these devices without first asking your opinion. (Shared Decision Making) | 1 | 2 | 3 | 4 | 5 |
| (33) Whether a computer will answer most of your questions instead of people. (Human Element) | 1 | 2 | 3 | 4 | 5 |

Response scale: 1 = Not Important, 2 = Somewhat Important, 3 = Important, 4 = Very Important, 5 = Extremely Important.
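The factor labels attached to the 33 concern items can be turned into subscale scores. The sketch below is illustrative only: the item-to-factor mapping is transcribed from the labels in Appendix B, but scoring each subscale as the unweighted mean of its items, with no reverse-scoring, is an assumption, and the function name `score_concerns` and the example respondent are hypothetical.

```python
from statistics import mean

# Item-to-factor mapping transcribed from the factor labels in Appendix B.
FACTOR_ITEMS = {
    "Quality and Accuracy": [1, 5, 8, 16, 18, 23],
    "Privacy": [2, 17, 22, 26, 28, 29],
    "Social Justice": [3, 9, 10, 21, 25, 27],
    "Human Element": [4, 7, 13, 33],
    "Cost": [6, 11, 20, 24],
    "Convenience": [12, 19, 31],
    "Shared Decision Making": [14, 15, 30, 32],
}


def score_concerns(responses):
    """Return the mean 1-5 importance rating for each concern factor.

    responses maps item number (1-33) to a rating (1-5).
    Assumption: subscale score = unweighted item mean, no reverse-scoring.
    """
    for item, rating in responses.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"item {item}: rating {rating} is outside 1-5")
    return {
        factor: mean(responses[item] for item in items)
        for factor, items in FACTOR_ITEMS.items()
    }


# Hypothetical respondent: rates every item 3, except the Privacy items at 5.
responses = {item: 3 for item in range(1, 34)}
for item in FACTOR_ITEMS["Privacy"]:
    responses[item] = 5

scores = score_concerns(responses)
for factor, value in scores.items():
    print(f"{factor}: {value}")
```

Keeping the mapping in one dictionary makes it easy to check that every item belongs to exactly one factor before any scores are computed.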

References

1. Aronson, S.J.; Rehm, H.L. Building the foundation for genomics in precision medicine. Nature 2015, 526, 336–342.

2. Sankar, P.L.; Parker, L.S. The Precision Medicine Initiative’s All of Us Research Program: An agenda for research on its ethical, legal, and social issues. Genet. Med. Off. J. Am. Coll. Med. Genet. 2017, 19, 743–750.

3. Fenech, M.; Strukelj, N.; Buston, O. Ethical, Social, and Political Challenges of Artificial Intelligence in Health; Future Advocacy: London, UK, 2018.

4. Jiang, F.; Jiang, Y.; Zhi, H.; Dong, Y.; Li, H.; Ma, S.; Wang, Y.; Dong, Q.; Shen, H.; Wang, Y. Artificial intelligence in healthcare: Past, present and future. Stroke Vasc. Neurol. 2017, 2, 230.

5. Rajkomar, A.; Dean, J.; Kohane, I. Machine Learning in Medicine. N. Engl. J. Med. 2019, 380, 1347–1358.

6. Burgess, M. Now DeepMind’s AI can spot eye disease just as well as your doctor. WIRED, 13 August 2018.

7. Dolins, S.B.; Kero, R.E. The role of AI in building a culture of partnership between patients and providers. In Proceedings of the AAAI Spring Symposium—Technical Report, Stanford, CA, USA, 27–29 March 2006; pp. 47–51.

8. Li, D.; Kulasegaram, K.; Hodges, B.D. Why We Needn’t Fear the Machines: Opportunities for Medicine in a Machine Learning World. Acad. Med. 2019, 94, 623–625.

9. Topol, E.J. High-performance medicine: The convergence of human and artificial intelligence. Nat. Med. 2019, 25, 44–56.

10. Chung, W.K.; Erion, K.; Florez, J.C.; Hattersley, A.T.; Hivert, M.F.; Lee, C.G.; McCarthy, M.I.; Nolan, J.J.; Norris, J.M.; Pearson, E.R.; et al. Precision Medicine in Diabetes: A Consensus Report From the American Diabetes Association (ADA) and the European Association for the Study of Diabetes (EASD). Diabetes Care 2020, 43, 1617–1635.

11. Perez-Garcia, J.; Herrera-Luis, E.; Lorenzo-Diaz, F.; González, M.; Sardón, O.; Villar, J.; Pino-Yanes, M. Precision Medicine in Childhood Asthma: Omic Studies of Treatment Response. Int. J. Mol. Sci. 2020, 21, 2908.

12. Vo, K.T.; Parsons, D.W.; Seibel, N.L. Precision Medicine in Pediatric Oncology. Surg. Oncol. Clin. N. Am. 2020, 29, 63–72.

13. Verghese, A.; Shah, N.H.; Harrington, R.A. What This Computer Needs Is a Physician: Humanism and Artificial Intelligence. JAMA 2018, 319, 19–20.

14. Eysenbach, G. Improving the quality of Web surveys: The Checklist for Reporting Results of Internet E-Surveys (CHERRIES). J. Med. Internet Res. 2004, 6, e34.

15. Luxton, D.D. Recommendations for the ethical use and design of artificial intelligent care providers. Artif. Intell. Med. 2014, 62, 1–10.

16. Char, D.S.; Shah, N.H.; Magnus, D. Implementing Machine Learning in Health Care—Addressing Ethical Challenges. N. Engl. J. Med. 2018, 378, 981–983.

17. Vayena, E.; Blasimme, A.; Cohen, I.G. Machine learning in medicine: Addressing ethical challenges. PLoS Med. 2018, 15, e1002689.

18. McDougall, R.J. Computer knows best? The need for value-flexibility in medical AI. J. Med. Ethics 2019, 45, 156–160.

19. Reddy, S.; Allan, S.; Coghlan, S.; Cooper, P. A governance model for the application of AI in health care. J. Am. Med. Inf. Assoc. 2019.

20. Peterson, C.H.; Peterson, N.A.; Powell, K.G. Cognitive Interviewing for Item Development: Validity Evidence Based on Content and Response Processes. Meas. Eval. Couns. Dev. 2017, 50, 217–223.

21. Dworkin, J.; Hessel, H.; Gliske, K.; Rudi, J.H. A Comparison of Three Online Recruitment Strategies for Engaging Parents. Fam. Relat. 2016, 65, 550–561.

22. Clark, L.A.; Watson, D. Constructing validity: Basic issues in objective scale development. Psychol. Assess. 1995, 7, 309–319.

23. Ferryman, K.; Winn, R.A. Artificial Intelligence Can Entrench Disparities-Here’s What We Must Do; The Cancer Letter: Washington, DC, USA, 2018.

24. Gianfrancesco, M.A.; Tamang, S.; Yazdany, J.; Schmajuk, G. Potential Biases in Machine Learning Algorithms Using Electronic Health Record Data. JAMA Intern. Med. 2018, 178, 1544–1547.

25. Nordling, L. A fairer way forward for AI in health care. Nature 2019, 573, S103–S105.

26. Adamson, A.S.; Smith, A. Machine Learning and Health Care Disparities in Dermatology. JAMA Dermatol. 2018, 154, 1247–1248.

27. Carter, S.M.; Rogers, W.; Win, K.T.; Frazer, H.; Richards, B.; Houssami, N. The ethical, legal and social implications of using artificial intelligence systems in breast cancer care. Breast 2020, 49, 25–32.

28. Shaw, J.; Rudzicz, F.; Jamieson, T.; Goldfarb, A. Artificial Intelligence and the Implementation Challenge. J. Med. Internet Res. 2019, 21, e13659.

29. Yu, K.H.; Kohane, I.S. Framing the challenges of artificial intelligence in medicine. BMJ Qual. Saf. 2019, 28, 238–241.

30. Mukherjee, S. A.I. Versus M.D. Available online: http://www.medi.io/blog/2017/4/ai-versus-md-new-yorker (accessed on 18 September 2020).

31. Emanuel, E.J.; Wachter, R.M. Artificial Intelligence in Health Care: Will the Value Match the Hype? JAMA J. Am. Med. Assoc. 2019, 321, 2281–2282.

32. Maddox, T.M.; Rumsfeld, J.S.; Payne, P.R.O. Questions for Artificial Intelligence in Health Care. JAMA J. Am. Med. Assoc. 2019, 321, 31–32.

33. Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-level classification of skin cancer with deep neural networks. Nature 2017, 542, 115–118.

34. Lopez-Garnier, S.; Sheen, P.; Zimic, M. Automatic diagnostics of tuberculosis using convolutional neural networks analysis of MODS digital images. PLoS ONE 2019, 14.

35. Uthoff, R.D.; Song, B.; Sunny, S.; Patrick, S.; Suresh, A.; Kolur, T.; Keerthi, G.; Spires, O.; Anbarani, A.; Wilder-Smith, P.; et al. Point-of-care, smartphone-based, dual-modality, dual-view, oral cancer screening device with neural network classification for low-resource communities. PLoS ONE 2018, 13.

36. Tran, V.-T.; Riveros, C.; Ravaud, P. Patients’ views of wearable devices and AI in healthcare: Findings from the ComPaRe e-cohort. NPJ Digit. Med. 2019, 2, 53.

37. Tsay, D.; Patterson, C. From Machine Learning to Artificial Intelligence Applications in Cardiac Care. Circulation 2018, 138, 2569–2575.

38. Balthazar, P.; Harri, P.; Prater, A.; Safdar, N.M. Protecting Your Patients’ Interests in the Era of Big Data, Artificial Intelligence, and Predictive Analytics. J. Am. Coll. Radiol. 2018, 15, 580–586.

39. Price, W.N. Big data and black-box medical algorithms. Sci. Transl. Med. 2018, 10.

40. Price, W.N. Artificial intelligence in Health Care: Applications and Legal Implications. Scitech Lawyer 2017, 14.

41. Price, W.N.; Cohen, I.G. Privacy in the age of medical big data. Nat. Med. 2019, 25, 37–43.

42. Reddy, S.; Fox, J.; Purohit, M.P. Artificial intelligence-enabled healthcare delivery. J. R. Soc. Med. 2019, 112, 22–28.

43. Fujisawa, Y.; Otomo, Y.; Ogata, Y.; Nakamura, Y.; Fujita, R.; Ishitsuka, Y.; Watanabe, R.; Okiyama, N.; Ohara, K.; Fujimoto, M. Deep-learning-based, computer-aided classifier developed with a small dataset of clinical images surpasses board-certified dermatologists in skin tumour diagnosis. Br. J. Dermatol. 2019, 180, 373–381.

44. Haenssle, H.A.; Fink, C.; Schneiderbauer, R.; Toberer, F.; Buhl, T.; Blum, A.; Kalloo, A.; Ben Hadj Hassen, A.; Thomas, L.; Enk, A.; et al. Man against Machine: Diagnostic performance of a deep learning convolutional neural network for dermoscopic melanoma recognition in comparison to 58 dermatologists. Ann. Oncol. 2018, 29, 1836–1842.

45. Ruamviboonsuk, P.; Krause, J.; Chotcomwongse, P.; Sayres, R.; Raman, R.; Widner, K.; Campana, B.J.L.; Phene, S.; Hemarat, K.; Tadarati, M.; et al. Deep learning versus human graders for classifying diabetic retinopathy severity in a nationwide screening program. NPJ Digit. Med. 2019, 2, 25.

46. Urban, G.; Tripathi, P.; Alkayali, T.; Mittal, M.; Jalali, F.; Karnes, W.; Baldi, P. Deep Learning Localizes and Identifies Polyps in Real Time With 96% Accuracy in Screening Colonoscopy. Gastroenterology 2018, 155, 1069–1078.e8.

47. Buhrmester, M.; Kwang, T.; Gosling, S.D. Amazon’s Mechanical Turk: A New Source of Inexpensive, Yet High-Quality, Data? Perspect. Psychol. Sci. 2011, 6, 3–5.

48. Platt, J.E.; Jacobson, P.D.; Kardia, S.L.R. Public Trust in Health Information Sharing: A Measure of System Trust. Health Serv. Res. 2018, 53, 824–845.

49. McKnight, D.H.; Choudhury, V.; Kacmar, C. Developing and Validating Trust Measures for e-Commerce: An Integrative Typology. Inf. Sys. Res. 2002, 13, 334–359.

50. Cabitza, F.; Rasoini, R.; Gensini, G.F. Unintended Consequences of Machine Learning in Medicine. JAMA 2017, 318, 517–518.

51. Rigby, M.J. Ethical Dimensions of Using Artificial Intelligence in Health Care. AMA J. Ethics 2019, 21, E121–E124.

52. Weaver, M.S.; October, T.; Feudtner, C.; Hinds, P.S. “Good-Parent Beliefs”: Research, Concept, and Clinical Practice. Pediatrics 2020.

53. Hill, D.L.; Faerber, J.A.; Li, Y.; Miller, V.A.; Carroll, K.W.; Morrison, W.; Hinds, P.S.; Feudtner, C. Changes Over Time in Good-Parent Beliefs Among Parents of Children With Serious Illness: A Two-Year Cohort Study. J. Pain Symptom Manag. 2019, 58, 190–197.

54. Feudtner, C.; Walter, J.K.; Faerber, J.A.; Hill, D.L.; Carroll, K.W.; Mollen, C.J.; Miller, V.A.; Morrison, W.E.; Munson, D.; Kang, T.I.; et al. Good-parent beliefs of parents of seriously ill children. JAMA Pediatr. 2015, 169, 39–47.

55. October, T.W.; Fisher, K.R.; Feudtner, C.; Hinds, P.S. The parent perspective: “being a good parent” when making critical decisions in the PICU. Pediatr. Crit. Care Med. 2014, 15, 291–298.

56. Hinds, P.S.; Oakes, L.L.; Hicks, J.; Powell, B.; Srivastava, D.K.; Baker, J.N.; Spunt, S.L.; West, N.K.; Furman, W.L. Parent-clinician communication intervention during end-of-life decision making for children with incurable cancer. J. Palliat. Med. 2012, 15, 916–922.

57. Yeh, V.M.; Bergner, E.M.; Bruce, M.A.; Kripalani, S.; Mitrani, V.B.; Ogunsola, T.A.; Wilkins, C.H.; Griffith, D.M. Can Precision Medicine Actually Help People Like Me? African American and Hispanic Perspectives on the Benefits and Barriers of Precision Medicine. Ethn. Dis. 2020, 30, 149–158.

58. Geneviève, L.D.; Martani, A.; Shaw, D.; Elger, B.S.; Wangmo, T. Structural racism in precision medicine: Leaving no one behind. BMC Med. Ethics 2020, 21, 17.

| Parental Concerns | Description |
|---|---|
| Social justice | Concerns about how these new technologies might affect the distribution of the benefits and burdens related to AI use in healthcare [3,9,16,17,19,23,24,25,26,27,28,29]. |
| Human element of care | Concerns about the effect of these technologies on the interaction or relationship between clinicians and patients/families [3,30]. |
| Cost | Concerns about whether these technologies will affect individual or societal costs [31,32]. |
| Convenience | Concerns about the ease with which an individual can access and utilize these technologies [33,34,35,36,37]. |
| Shared decision making | Concerns about parental involvement and authority in deciding whether and how these technologies are utilized in their child’s care. |
| Privacy | Concerns about loss of control over the child’s personal information, who has access to this information, and how this information might be used [3,17,36,38,39,40,41,42]. |
| Quality and accuracy | Concerns about the effectiveness and fidelity of these technologies [43,44,45,46]. |

| Participant Characteristics (N = 804) | n (%), Except Where Specified |
|---|---|
| Age of parent, Mean (SD) | 38.9 years (8.0) |
| Female sex | 470 (59%) |
| Race * | |
| White | 689 (86%) |
| Black or African American | 77 (10%) |
| Asian | 44 (6%) |
| American Indian or Alaska Native | 20 (3%) |
| Native Hawaiian or Pacific Islander | 2 (<1%) |
| Hispanic ethnicity | 53 (7%) |
| Employment status | |
| Full-time | 560 (70%) |
| Part-time (not a full-time student) | 48 (6%) |
| Full-time student | 2 (<1%) |
| Self-employed | 61 (8%) |
| Caregiver or homemaker | 93 (11%) |
| Other | 40 (5%) |
| Household income | |
| Less than 23,000 | 42 (5%) |
| 23,001–45,000 | 150 (19%) |
| 45,001–75,000 | 263 (32%) |
| 75,001–112,000 | 208 (26%) |
| Greater than 112,001 | 138 (17%) |
| Level of education | |
| Some high school | 5 (<1%) |
| High school graduate | 61 (8%) |
| Some college | 141 (18%) |
| Associate’s degree | 116 (14%) |
| Bachelor’s degree | 343 (42%) |
| Master’s or doctoral degree | 138 (17%) |
| Place of residence | |
| Urban | 176 (22%) |
| Suburban | 460 (57%) |
| Rural | 168 (21%) |
| Health insurance status | |
| Private | 595 (74%) |
| Medicaid | 116 (14%) |
| Medicare/Medicare Advantage | 47 (6%) |
| No health insurance | 30 (4%) |
| Other | 16 (2%) |
| Number of children, median (IQR) | 2 (1 to 2) |
| Child visited doctor in past 12 months | 709 (88%) |
| Number of doctor visits, median (IQR) | 8 (6 to 10) |
| Child hospitalized in past 12 months | 27 (3%) |
| Number of hospitalizations, median (IQR) | 1 (1 to 2) |

| Variable (Cronbach’s Alpha) | Correlation (p Value) |
|---|---|
| Concerns | |
| Social justice (0.86) | 0.22 (<0.001) |
| Human element (0.72) | 0.02 (0.49) |
| Cost (0.81) | 0.25 (0.001) |
| Convenience (0.69) | 0.31 (0.001) |
| Shared decision making (0.72) | −0.01 (0.84) |
| Privacy (0.87) | −0.03 (0.45) |
| Quality (0.71) | 0.34 (<0.001) |
| Attitudes, Beliefs, and Personality Scales | |
| Platt fidelity (0.71) | 0.11 (0.002) |
| Platt competency (0.78) | 0.21 (<0.001) |
| Platt trust (0.95) | 0.23 (<0.001) |
| Platt integrity (0.85) | 0.20 (<0.001) |
| TIPI extroversion (0.80) | 0.13 (<0.001) |
| TIPI openness (0.59) | 0.13 (<0.001) |
| TIPI agreeableness (0.46) | 0.06 (0.10) |
| TIPI conscientiousness (0.73) | 0.06 (0.08) |
| TIPI emotional stability (0.83) | 0.10 (0.01) |
| Faith in technology (0.87) | 0.36 (<0.001) |
| Trust in technology (0.91) | 0.28 (<0.001) |
| PANAS–Positive affect (0.91) | 0.17 (<0.001) |
| PANAS–Negative affect (0.91) | 0.01 (0.81) |
| Democrat/lean Democrat political alignment | 0.09 (0.015) |
| Demographic Variables | |
| Female sex | −0.13 (<0.001) |
| Age | −0.04 (0.294) |
| Race (White vs Person of Color) * | 0.04 (0.238) |
| Ethnicity (Hispanic vs non-Hispanic) | −0.04 (0.248) |
| Worked in healthcare field | −0.01 (0.780) |
| Income | 0.03 (0.357) |
| Highest level of education | 0.03 (0.468) |
| Number of children | −0.05 (0.149) |
| Number of children’s doctor visits ** | −0.05 (0.773) |
| Child hospitalization | <0.01 (0.889) |
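The table above pairs each scale's Cronbach's alpha with its correlation with AAIH-P openness. As a rough illustration of how such values are typically computed, the sketch below implements the textbook alpha formula and a Pearson correlation with the Python standard library. It is not the authors' analysis code: the table does not say which correlation coefficient was used, so Pearson is an assumption, p values are not computed, and the function names and toy data are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a scale.

    item_scores: one row per respondent, each row a list of k item ratings.
    alpha = k / (k - 1) * (1 - sum of item variances / variance of total score)
    """
    k = len(item_scores[0])
    item_variances = [variance(column) for column in zip(*item_scores)]
    total_variance = variance([sum(row) for row in item_scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mean_x, mean_y = mean(x), mean(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / sqrt(ss_x * ss_y)


# Toy data (hypothetical): five respondents, a three-item concern subscale,
# and each respondent's mean openness score.
subscale_items = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 4, 3],
    [1, 2, 2],
]
openness = [3.5, 2.0, 4.5, 3.0, 1.5]

subscale_means = [mean(row) for row in subscale_items]
print("alpha:", round(cronbach_alpha(subscale_items), 2))
print("r with openness:", round(pearson_r(subscale_means, openness), 2))
```

Alpha rises as items covary more strongly relative to their individual variances, which is why short scales with heterogeneous items, such as two-item personality subscales, often show lower values.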

| Variable | Standardized β Coefficient (95% CI) | p Value |
|---|---|---|
| Concerns | | |
| Quality | 0.23 (0.16 to 0.31) | <0.001 |
| Convenience | 0.16 (0.09 to 0.23) | <0.001 |
| Cost | 0.11 (0.04 to 0.17) | 0.001 |
| Shared decision making | −0.16 (−0.23 to −0.10) | <0.001 |
| Attitudes, Beliefs, and Personality Scales | | |
| Faith in technology | 0.23 (0.17 to 0.29) | <0.001 |
| Platt trust | 0.12 (0.06 to 0.18) | <0.001 |
| Democrat/lean Democrat | 0.09 (0.03 to 0.15) | 0.002 |
| TIPI extroversion | 0.04 (0.01 to 0.07) | 0.015 |
| Demographic Variables | | |
| Female sex | −0.12 (−0.18 to −0.06) | <0.001 |
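Standardized β coefficients such as those in the table above express each predictor's association with the outcome in standard-deviation units, holding the other predictors constant. A minimal sketch of the general idea, assuming ordinary least squares on z-scored variables (the modeling details are not given in this excerpt), is shown below. The helper names and toy data are hypothetical, and confidence intervals and p values are not computed.

```python
from statistics import mean, stdev


def zscore(values):
    """Rescale values to mean 0 and sample standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def standardized_betas(predictors, outcome):
    """OLS coefficients after z-scoring every variable.

    predictors: list of columns, one list of values per predictor.
    No intercept is needed because z-scored variables have mean 0.
    """
    zx = [zscore(column) for column in predictors]
    zy = zscore(outcome)
    xtx = [[sum(p * q for p, q in zip(ci, cj)) for cj in zx] for ci in zx]
    xty = [sum(p * q for p, q in zip(ci, zy)) for ci in zx]
    return solve(xtx, xty)


# Toy data (hypothetical): two uncorrelated predictors and an outcome
# constructed as outcome = 2 * x1 + 3 * x2.
x1 = [1, 2, 3, 4]
x2 = [1, -1, -1, 1]
outcome = [2 * a + 3 * b for a, b in zip(x1, x2)]

betas = standardized_betas([x1, x2], outcome)
print([round(b, 3) for b in betas])
```

Because every variable is on the same scale after z-scoring, the resulting coefficients can be compared directly across predictors measured in different units, which is what makes a table of standardized β values readable at a glance.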

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sisk, B.A.; Antes, A.L.; Burrous, S.; DuBois, J.M. Parental Attitudes toward Artificial Intelligence-Driven Precision Medicine Technologies in Pediatric Healthcare. Children 2020, 7, 145. https://doi.org/10.3390/children7090145
